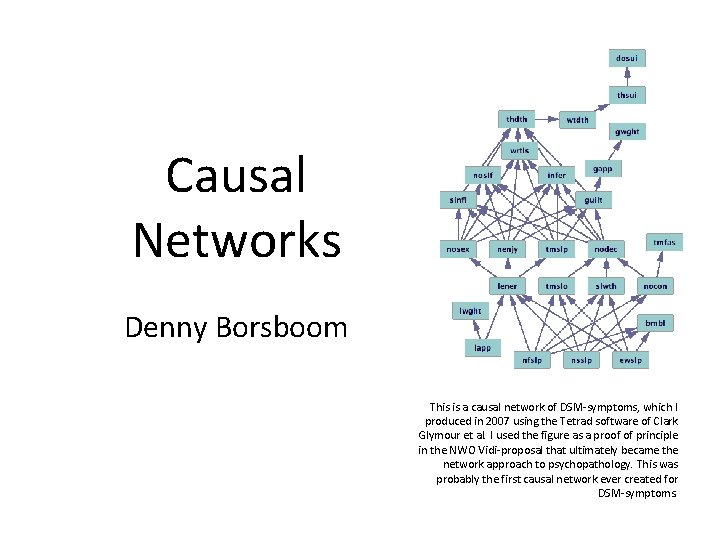

Causal Networks
Denny Borsboom

This is a causal network of DSM symptoms, which I produced in 2007 using the Tetrad software of Clark Glymour et al. I used the figure as a proof of principle in the NWO Vidi proposal that ultimately became the network approach to psychopathology. This was probably the first causal network ever created for DSM symptoms.

Overview
• The causal relation
• Conditional independence
• Causal networks
• Blocking and d-separation
• Exercise

The causal relation
• What constitutes the “secret connexion” of causality is one of the big questions of philosophy
• Philosophical proposals:
– A causes B means that…
  • B invariably follows A (David Hume)
  • A is an Insufficient but Nonredundant part of an Unnecessary but Sufficient condition for B (INUS condition; John Mackie)
  • B counterfactually depends on A: if A had not happened, B would not have happened (David Lewis)
  • …
David Hume (1711-1776), portrait by Allan Ramsay, 1766

Disadvantage of philosophical accounts
• Can’t cope well with noisy data (i.e., can’t cope with data)
• Almost all causal relations are observed through statistical analysis: probabilities
• Probabilities didn’t sit well with the philosophical analyses, and neither did data
• For a long time, causal inference was therefore done in a theoretical vacuum

An alternative
• Recently, Judea Pearl suggested an alternative approach based on the statistical method of structural relations
• He argues that causal relations should be framed in terms of interventions on a model: given a causal model, what would happen to B if we changed A?
• This is a simple idea, but it turned out to be very powerful
Judea Pearl (1936-)

Pearl’s approach (I)
• In a now legendary text, Pearl noted that statistics has no words to express that A causes B
• He asks: how can we statistically express the fact that rain causes the pavement to be wet?
• He gets no further than a variant of “rain and wet pavements are correlated, and it rained first”
• Solution: create a syntax and semantics for causality
Wet pavements do not cause rain

Pearl’s approach (II)
• A causal relation is encoded in a structural equation that says how B would change if A were changed
• This can be coded with the do operator
• “Do(A=a)” represents a natural or experimental intervention that fixes variable A to the value a
• “E(B|Do(A=a))” then expresses the expectation of B, given an intervention on A
• Note that “E(B|Do(A=a))” is not the same as the conventional statistical expectation E(B|A), which Pearl denotes “E(B|See(A=a))”
• Doing is not the same as seeing!
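The difference between doing and seeing can be made concrete with a small simulation. This is only a sketch under assumed numbers: the hidden common cause Z, the probabilities 0.8/0.2 and the coefficients in the equation for B are all invented for illustration and do not come from Pearl or from these slides.

```python
import random

def sample(rng, do_a=None):
    """Draw one (A, B) pair from a toy model Z -> A, Z -> B, A -> B (hypothetical)."""
    z = rng.random() < 0.5                      # hidden common cause
    if do_a is None:
        a = rng.random() < (0.8 if z else 0.2)  # seeing: A follows its natural cause Z
    else:
        a = bool(do_a)                          # doing: A is set by hand, Z -> A is cut
    b = rng.random() < 0.1 + 0.3 * a + 0.5 * z  # structural equation for B
    return a, b

def mean(values):
    values = list(values)
    return sum(values) / len(values)

rng = random.Random(0)
n = 200_000

observed = [sample(rng) for _ in range(n)]
e_b_see = mean(b for a, b in observed if a)     # estimate of E(B | See(A=1))

intervened = [sample(rng, do_a=1) for _ in range(n)]
e_b_do = mean(b for _, b in intervened)         # estimate of E(B | Do(A=1))

print(f"E(B | See(A=1)) ~ {e_b_see:.2f}")
print(f"E(B | Do(A=1))  ~ {e_b_do:.2f}")
```

Under these assumed numbers the two expectations differ (about 0.80 when seeing, about 0.65 when doing), because observing A=1 also tells us something about Z, whereas setting A=1 does not.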

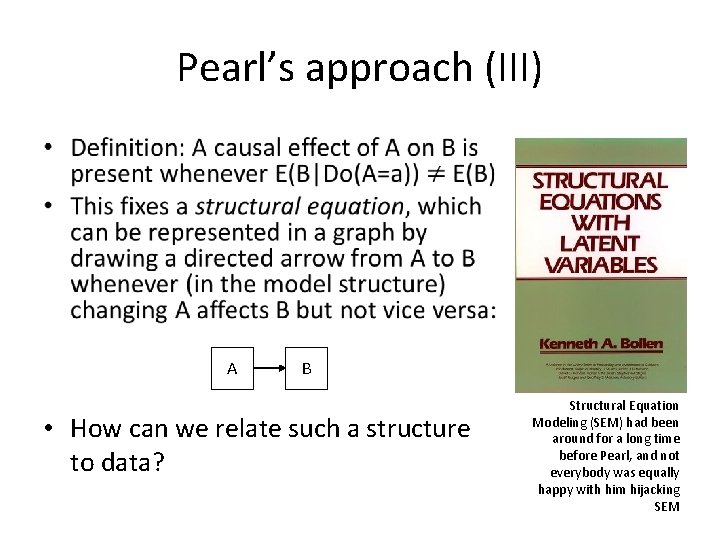

Pearl’s approach (III)
• A -> B
• How can we relate such a structure to data?
Structural Equation Modeling (SEM) had been around for a long time before Pearl, and not everybody was equally happy with him hijacking SEM

Pearl’s approach (IV)
• The classic problem of induction now presents itself as a statistical identification problem
• Given only two variables, it is not possible to deduce from the data whether A->B or B->A (or some other structure) generated the dependence: all are equally consistent with the data
• If temporal precedence distinguishes A->B from B->A, then the skeptic may argue that this is all there is to know (really hardcore skeptics generalize to experiments)
• This is the root of the platitude that “correlation does not equal causation”
The Humean skeptic turns out to have an identification problem
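One way to see the identification problem is through the product rule of probability, which holds for any joint distribution of two variables. The link to the arrows (reading each factorization as one causal direction) is an interpretive gloss added here, not a formula from the slides.

```latex
P(A, B) \;=\; P(A)\,P(B \mid A) \;=\; P(B)\,P(A \mid B)
```

The first factorization matches a model in which A is generated first and B depends on A (A->B); the second matches B->A. Since both reproduce exactly the same joint distribution, data on A and B alone cannot favor one over the other.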

Pearl’s approach (V)
• However, where there’s correlational smoke, there is often a causal fire…
• How to identify that fire?
• 20th century statistics struggled with this issue; at the end of the 20th century many had given up
• Pearl and Glymour et al. then simultaneously developed the insight that not correlations or conditional probabilities but conditional independence relations are key to the identification of causal structure
Pittsburgh philosopher Clark Glymour (1942-…)

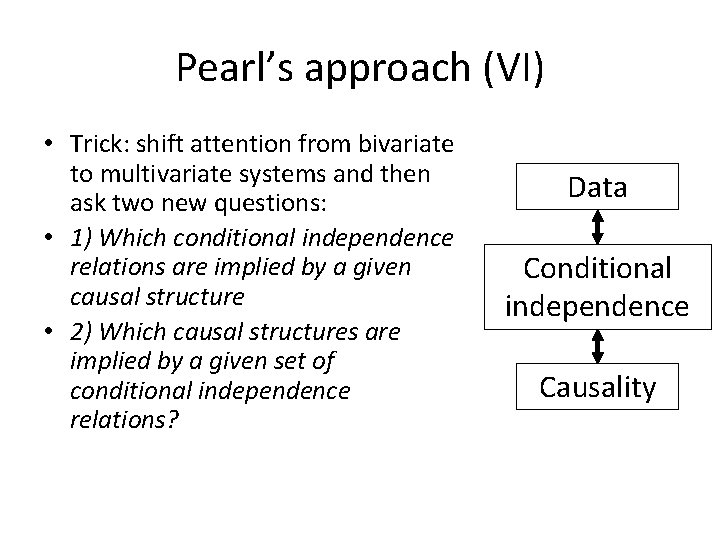

Pearl’s approach (VI)
• Trick: shift attention from bivariate to multivariate systems and then ask two new questions:
• 1) Which conditional independence relations are implied by a given causal structure?
• 2) Which causal structures are implied by a given set of conditional independence relations?
Diagram labels: Data, Conditional independence, Causality

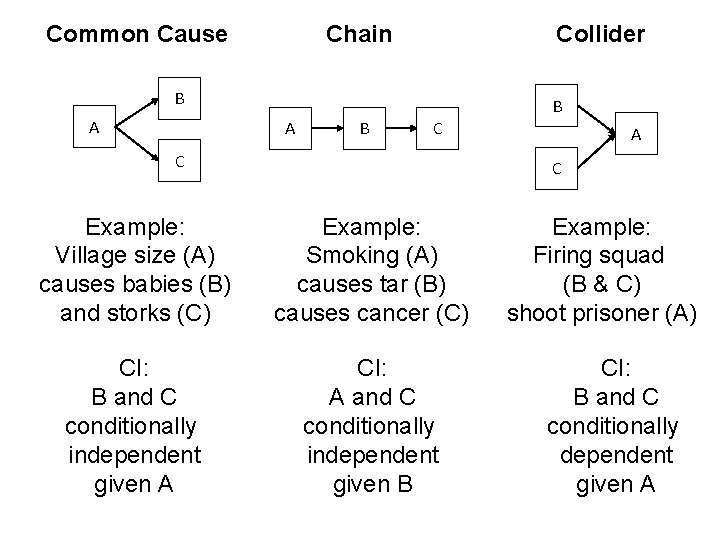

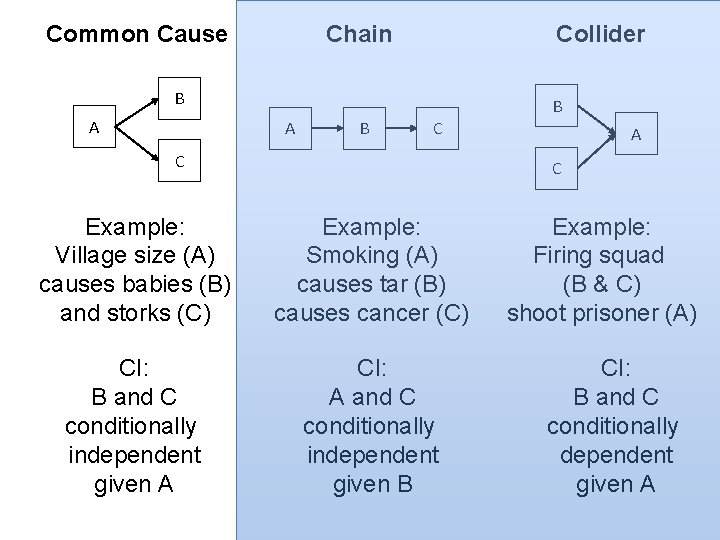

Common Cause: B <- A -> C
Example: Village size (A) causes babies (B) and storks (C)
CI: B and C conditionally independent given A

Chain: A -> B -> C
Example: Smoking (A) causes tar (B) causes cancer (C)
CI: A and C conditionally independent given B

Collider: B -> A <- C
Example: Firing squad (B & C) shoot prisoner (A)
CI: B and C conditionally dependent given A
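The three patterns can be checked numerically. The sketch below assumes linear relations with standard normal noise and unit coefficients (choices made here for illustration, not taken from the slides), and uses partial correlation as a stand-in for conditional dependence, which is only justified under that linear-Gaussian assumption.

```python
import random
from math import sqrt

def corr(x, y):
    """Pearson correlation of two equally long lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def partial_corr(x, y, z):
    """Correlation of x and y after linearly controlling for z."""
    rxy, rxz, ryz = corr(x, y), corr(x, z), corr(y, z)
    return (rxy - rxz * ryz) / sqrt((1 - rxz ** 2) * (1 - ryz ** 2))

rng = random.Random(1)
n = 20_000

def noise():
    return [rng.gauss(0, 1) for _ in range(n)]

# Common cause: B <- A -> C
a = noise()
b = [x + e for x, e in zip(a, noise())]
c = [x + e for x, e in zip(a, noise())]
common_cause = (corr(b, c), partial_corr(b, c, a))

# Chain: A -> B -> C
a = noise()
b = [x + e for x, e in zip(a, noise())]
c = [x + e for x, e in zip(b, noise())]
chain = (corr(a, c), partial_corr(a, c, b))

# Collider: B -> A <- C
b, c = noise(), noise()
a = [x + y + e for x, y, e in zip(b, c, noise())]
collider = (corr(b, c), partial_corr(b, c, a))

for name, (marginal, conditional) in [("common cause", common_cause),
                                      ("chain", chain),
                                      ("collider", collider)]:
    print(f"{name:13s} marginal r = {marginal:+.2f}, partial r = {conditional:+.2f}")
```

In the common cause and chain cases the marginal correlation is clearly nonzero and the partial correlation is close to zero; in the collider case it is the other way around: conditioning on the common effect creates a dependence that was not there before.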

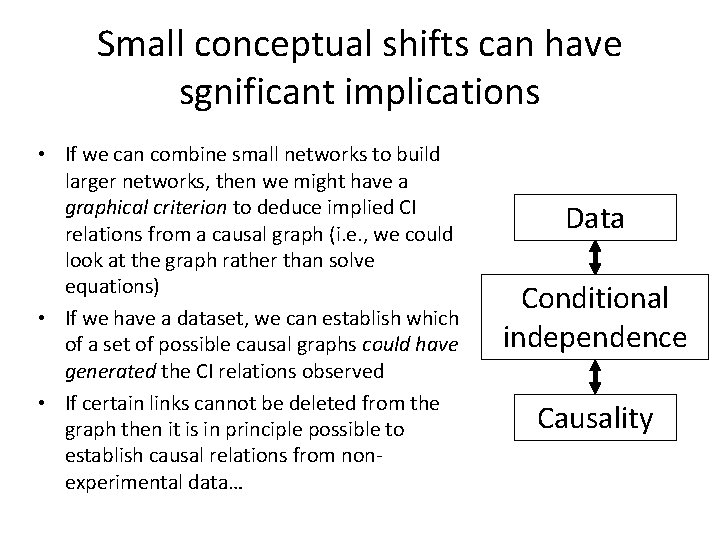

Small conceptual shifts can have significant implications
• If we can combine small networks to build larger networks, then we might have a graphical criterion to deduce implied CI relations from a causal graph (i.e., we could look at the graph rather than solve equations)
• If we have a dataset, we can establish which of a set of possible causal graphs could have generated the CI relations observed
• If certain links cannot be deleted from the graph, then it is in principle possible to establish causal relations from nonexperimental data…
Diagram labels: Data, Conditional independence, Causality

To work!

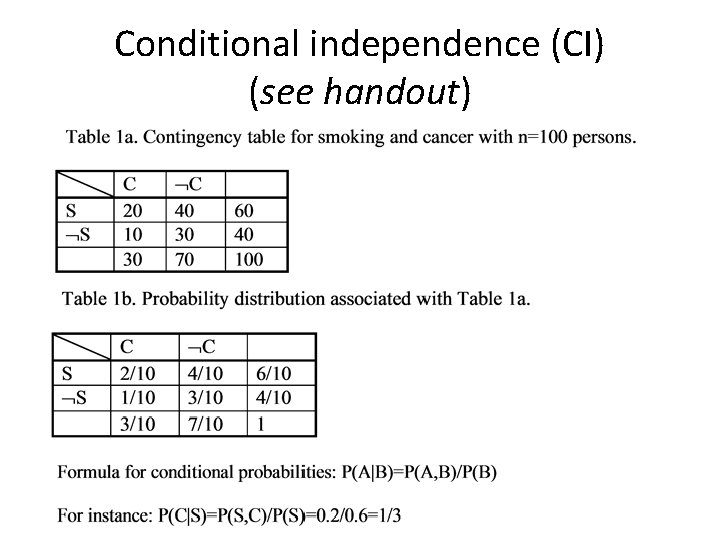

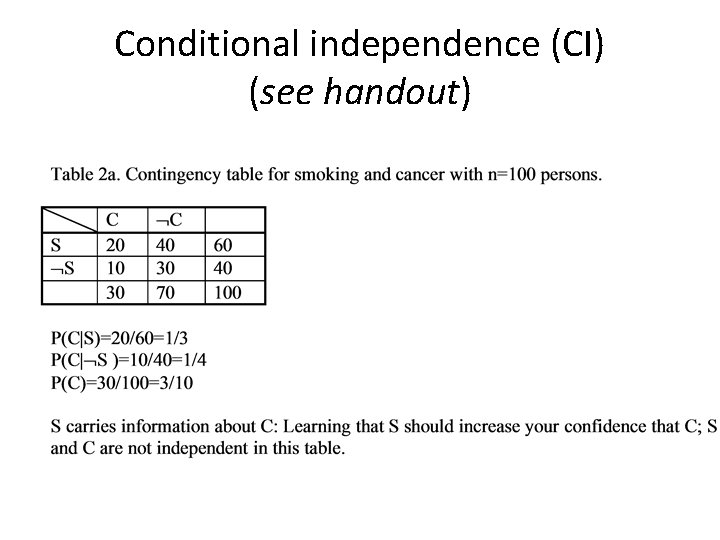

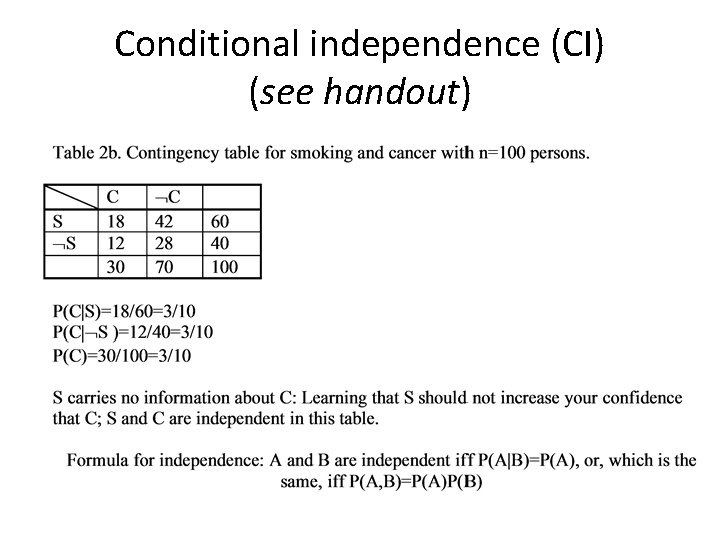

Conditional independence (CI) (see handout)
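The handout is not reproduced in these slides. For reference, the standard textbook definition of conditional independence (assumed here to match the handout) can be written as:

```latex
A \perp B \mid C
\quad\Longleftrightarrow\quad
P(A, B \mid C) = P(A \mid C)\,P(B \mid C)
```

Equivalently, whenever P(B, C) > 0, we have P(A | B, C) = P(A | C): once C is known, learning B tells us nothing further about A.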

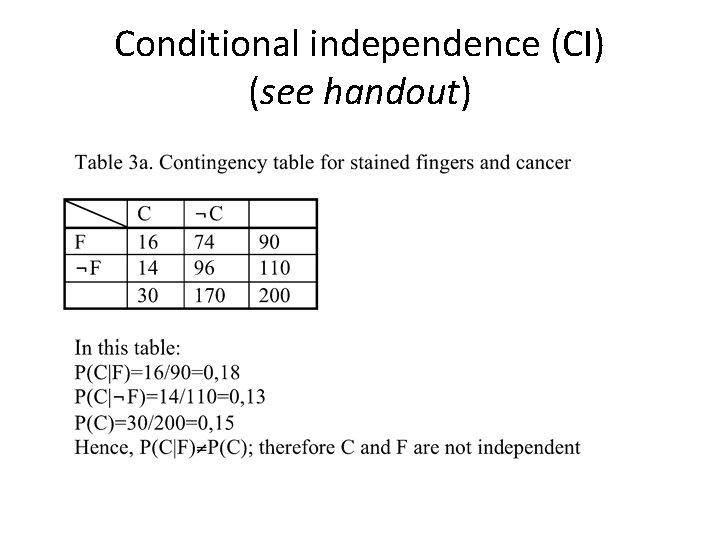

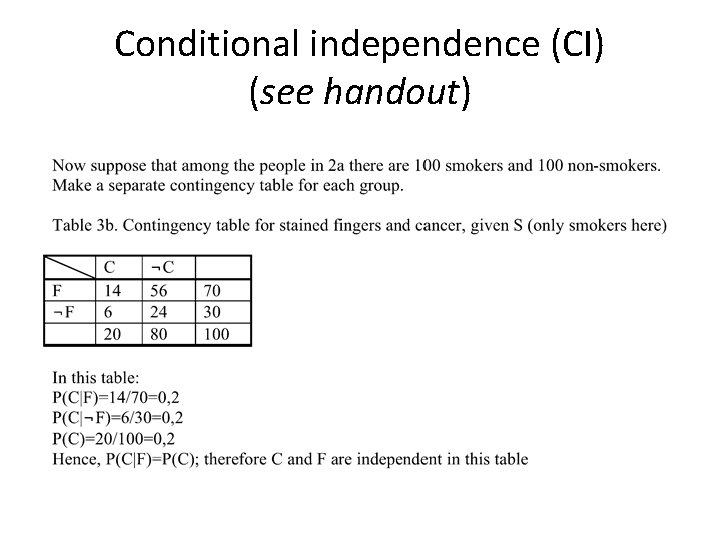

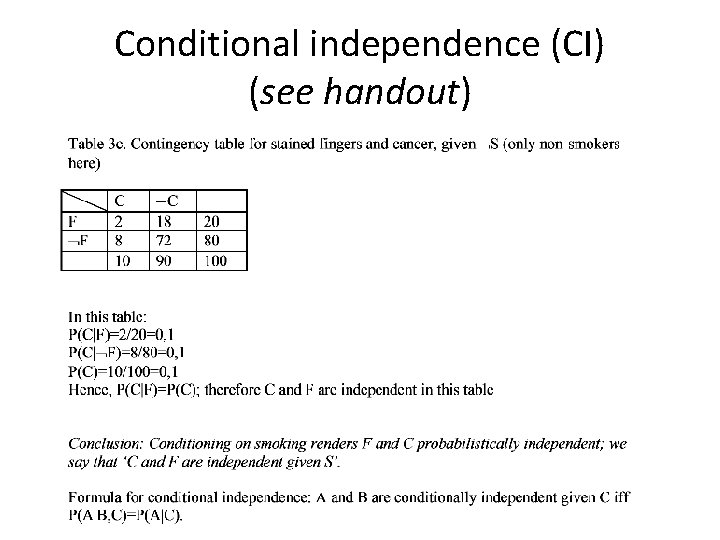

Therefore
• Now suppose we are prepared to make some causal assumptions, most importantly:
– there are no omitted variables that generate dependencies, and
– all causal relations are necessary to establish the pattern of CIs
• Then we can deduce causal relations from correlational data (at least in principle)
• Quite a nice result!

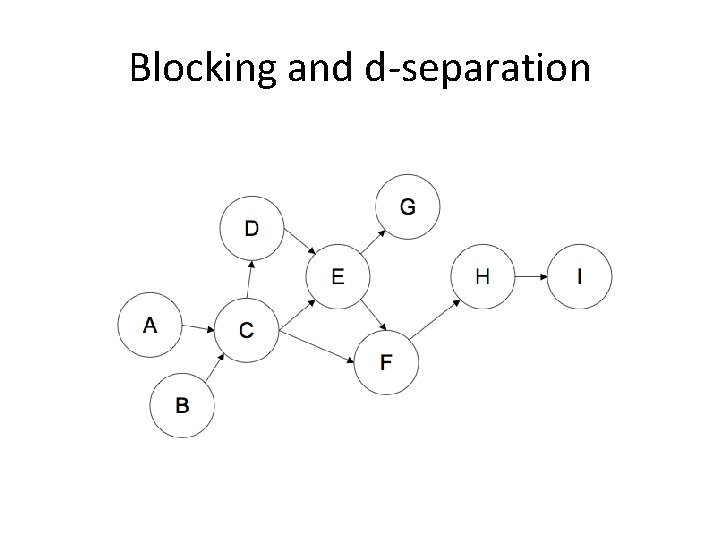

Blocking and d-separation

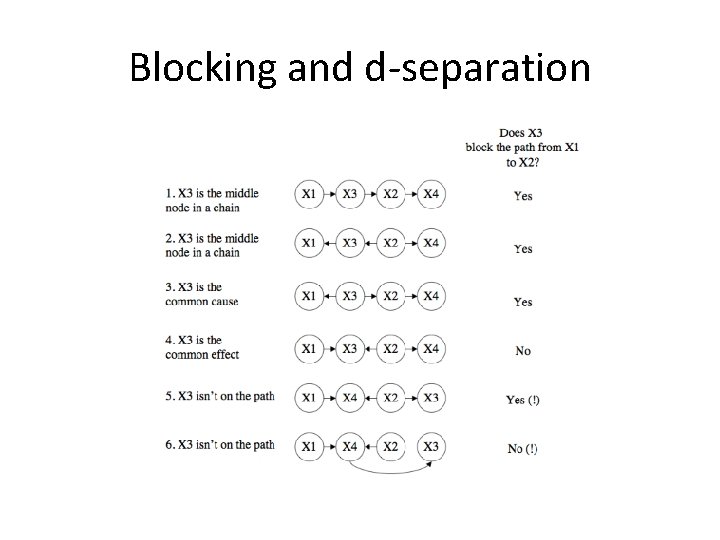

Blocking and d-separation
• It would be nice if we could just look at the graph and see which CI relations it entails
• This turns out to be possible
• Rule: if you want to know whether in a directed acyclic graph two variables A and B are independent given C, see if they are d-separated
• For this you have to (a) check all the paths between A and B, and (b) see if they are all blocked
• If all paths are blocked by C, then C d-separates A and B, and you can predict that A is independent of B given C

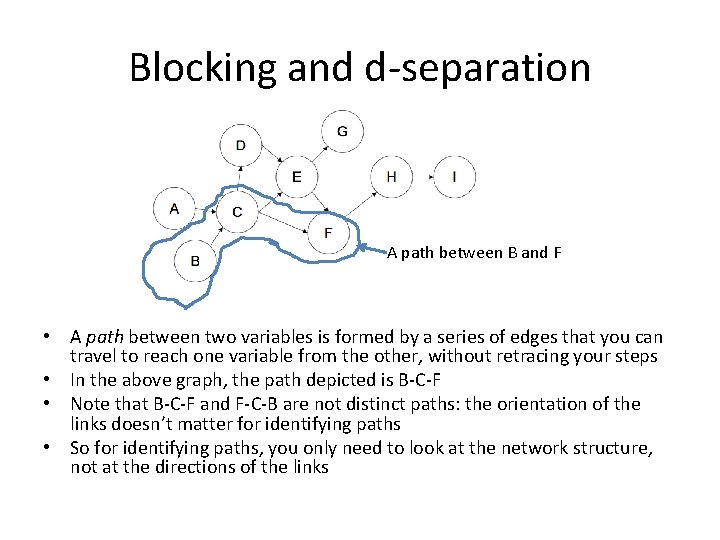

Blocking and d-separation
A path between B and F
• A path between two variables is formed by a series of edges that you can travel to reach one variable from the other, without retracing your steps
• In the above graph, the path depicted is B-C-F
• Note that B-C-F and F-C-B are not distinct paths: the orientation of the links doesn’t matter for identifying paths
• So for identifying paths, you only need to look at the network structure, not at the directions of the links
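Listing paths by hand gets error-prone in larger graphs, so a small helper can do it. The sketch below reads “without retracing your steps” as “never visiting the same node twice” (an interpretation made here), and the edge list is a hypothetical graph, not the one in the figure.

```python
def simple_paths(edges, start, goal):
    """List every path from start to goal that never revisits a node,
    ignoring the direction of the edges."""
    neighbours = {}
    for parent, child in edges:
        # Store each edge in both directions: orientation is irrelevant for paths.
        neighbours.setdefault(parent, set()).add(child)
        neighbours.setdefault(child, set()).add(parent)

    paths = []

    def walk(path):
        if path[-1] == goal:
            paths.append(path)
            return
        for nxt in sorted(neighbours.get(path[-1], ())):
            if nxt not in path:          # do not retrace our steps
                walk(path + [nxt])

    walk([start])
    return paths

# Hypothetical directed graph, written as (cause, effect) pairs.
edges = [("A", "B"), ("B", "C"), ("C", "F"), ("B", "D"), ("F", "D")]
paths = simple_paths(edges, "B", "F")
print(paths)
```

For this example graph there are two paths between B and F, namely B-C-F and B-D-F; asking for the paths from F to B returns the same two paths read backwards.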

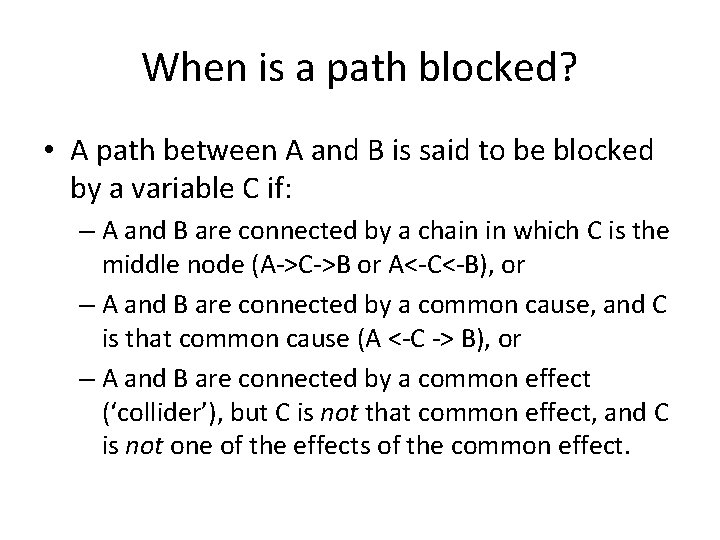

When is a path blocked?
• A path between A and B is said to be blocked by a variable C if:
– A and B are connected by a chain in which C is the middle node (A -> C -> B or A <- C <- B), or
– A and B are connected by a common cause, and C is that common cause (A <- C -> B), or
– A and B are connected by a common effect (‘collider’), but C is not that common effect, and C is not one of the effects of the common effect.
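The three blocking rules can be written as one small function for a single three-node connection. This is a sketch: the function names, the `given` argument (the variable we condition on, C in the slide) and the example graphs are all hypothetical.

```python
def descendants(edges, node):
    """All nodes reachable from node by following arrows forward."""
    found, stack = set(), [node]
    while stack:
        current = stack.pop()
        for parent, child in edges:
            if parent == current and child not in found:
                found.add(child)
                stack.append(child)
    return found

def triple_blocked(edges, left, middle, right, given):
    """Is the connection left - middle - right blocked by the variable `given`?"""
    arrows = set(edges)
    is_collider = (left, middle) in arrows and (right, middle) in arrows
    if is_collider:
        # Blocked as long as we condition neither on the collider
        # nor on one of its effects.
        return given != middle and given not in descendants(edges, middle)
    # Chain or common cause: blocked only when we condition on the middle node.
    return given == middle

# Hypothetical example graphs, written as (cause, effect) pairs.
chain = [("A", "C"), ("C", "B")]
fork = [("C", "A"), ("C", "B")]
collider = [("A", "M"), ("B", "M"), ("M", "D"), ("X", "A")]

print(triple_blocked(chain, "A", "C", "B", given="C"))     # chain, conditioned on C
print(triple_blocked(fork, "A", "C", "B", given="C"))      # common cause, conditioned on C
print(triple_blocked(collider, "A", "M", "B", given="X"))  # collider left alone
print(triple_blocked(collider, "A", "M", "B", given="D"))  # conditioned on an effect of the collider
```

The first three calls report a blocked connection; the last one does not, because D is an effect of the collider M and conditioning on it opens the path.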

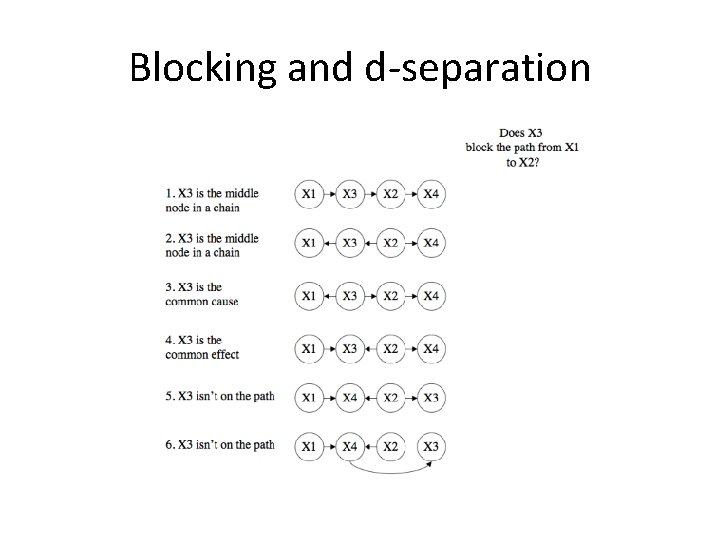

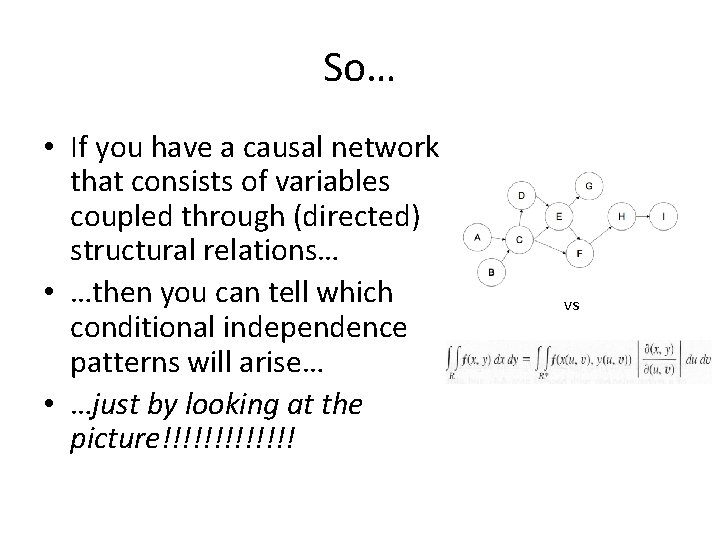

So…
• If you have a causal network that consists of variables coupled through (directed) structural relations…
• …then you can tell which conditional independence patterns will arise…
• …just by looking at the picture!

So…
• And in the other direction: if you have a set of conditional independencies, you can search for the causal network that could have produced them
• This has become an important tool in machine learning
• This is material Sacha will cover later in the course

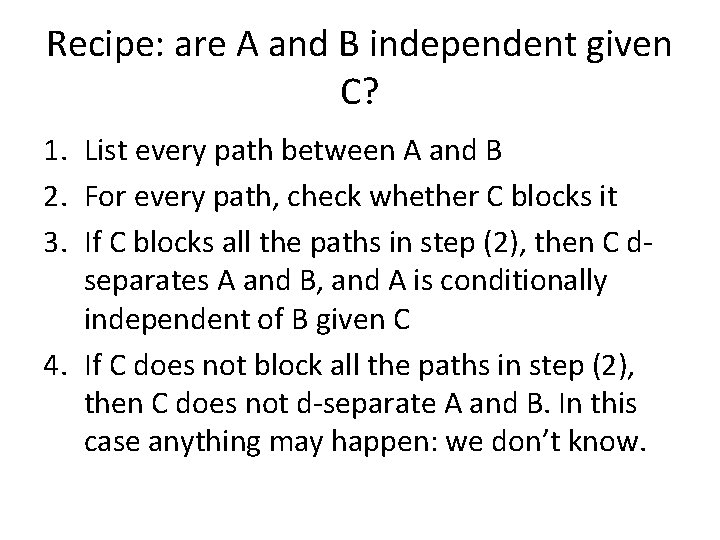

Recipe: are A and B independent given C?
1. List every path between A and B
2. For every path, check whether C blocks it
3. If C blocks all the paths in step (2), then C d-separates A and B, and A is conditionally independent of B given C
4. If C does not block all the paths in step (2), then C does not d-separate A and B. In this case anything may happen: we don’t know.
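The recipe translates almost line by line into code. The sketch below handles a single conditioning variable C, as in the slides, and treats a longer path as blocked as soon as one of its three-node segments is blocked (the standard d-separation convention, stated here as an assumption since the slides only spell out the three-node case). The function name and the example graph are hypothetical.

```python
def d_separated(edges, a, b, c):
    """Return True if the single variable c d-separates a and b in the DAG
    given by `edges`, a list of (cause, effect) pairs."""
    arrows = set(edges)
    nodes = {n for edge in edges for n in edge}
    neighbours = {n: set() for n in nodes}
    for parent, child in edges:
        neighbours[parent].add(child)
        neighbours[child].add(parent)

    def descendants(node):
        found, stack = set(), [node]
        while stack:
            current = stack.pop()
            for parent, child in arrows:
                if parent == current and child not in found:
                    found.add(child)
                    stack.append(child)
        return found

    def paths(path):
        # Step 1: every path from a to b, ignoring arrow direction.
        if path[-1] == b:
            yield path
            return
        for nxt in neighbours[path[-1]]:
            if nxt not in path:
                yield from paths(path + [nxt])

    def blocked(path):
        # Step 2: a path is blocked if any three-node segment on it is blocked.
        for left, mid, right in zip(path, path[1:], path[2:]):
            if (left, mid) in arrows and (right, mid) in arrows:
                if mid != c and c not in descendants(mid):
                    return True      # collider that is not conditioned on
            elif mid == c:
                return True          # chain or common cause, conditioned on
        return False

    # Steps 3 and 4: d-separated only if every path is blocked.
    return all(blocked(p) for p in paths([a]))

# Hypothetical graph: Z is a common cause of X and Y, W is their common effect.
edges = [("Z", "X"), ("Z", "Y"), ("X", "W"), ("Y", "W")]
print(d_separated(edges, "X", "Y", "Z"))   # True: Z blocks X-Z-Y, and X-W-Y is a closed collider
print(d_separated(edges, "X", "Y", "W"))   # False: X-Z-Y stays open
```

A result of True licenses the prediction that A is independent of B given C. A result of False corresponds to step 4: the graph alone does not tell us what will happen.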

Practice!
