
Carnegie Mellon

Kalman and Kalman-Bucy @ 50: Distributed and Intermittency

José M. F. Moura
Joint work with Soummya Kar

Advanced Network Colloquium, University of Maryland, College Park, MD
November 04, 2011

Acknowledgements: NSF under grants CCF-1011903 and CCF-1018509, and AFOSR grant FA 95501010291

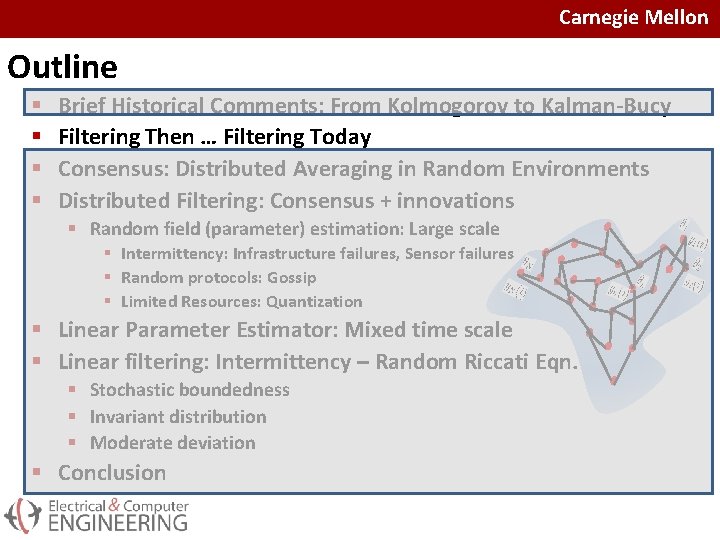

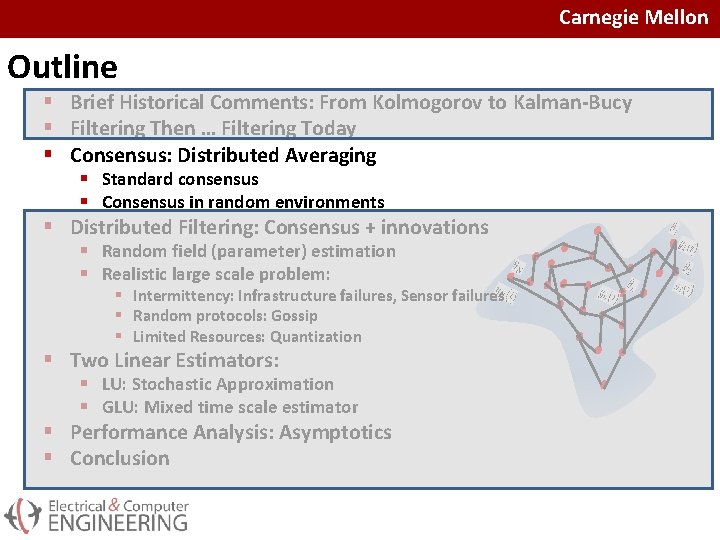

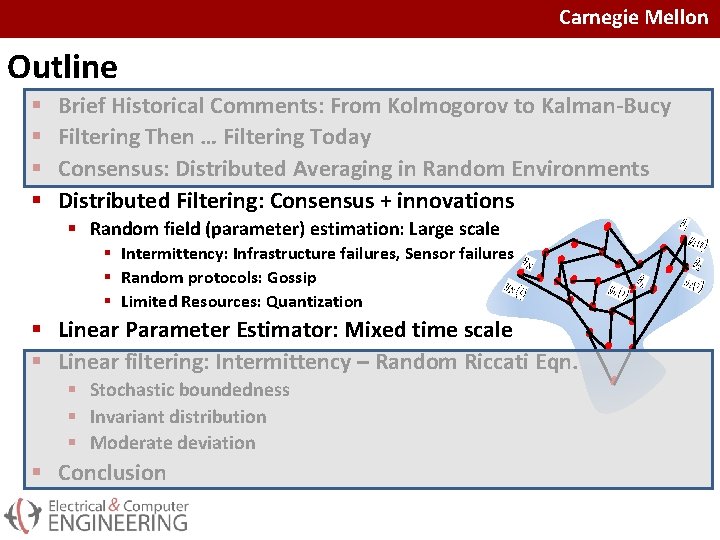

Outline

- Brief Historical Comments: From Kolmogorov to Kalman-Bucy
- Filtering Then … Filtering Today
- Consensus: Distributed Averaging in Random Environments
- Distributed Filtering: Consensus + innovations
  - Random field (parameter) estimation: Large scale
    - Intermittency: Infrastructure failures, Sensor failures
    - Random protocols: Gossip
    - Limited Resources: Quantization
  - Linear Parameter Estimator: Mixed time scale
- Linear filtering: Intermittency – Random Riccati Eqn.
  - Stochastic boundedness
  - Invariant distribution
  - Moderate deviation
- Conclusion


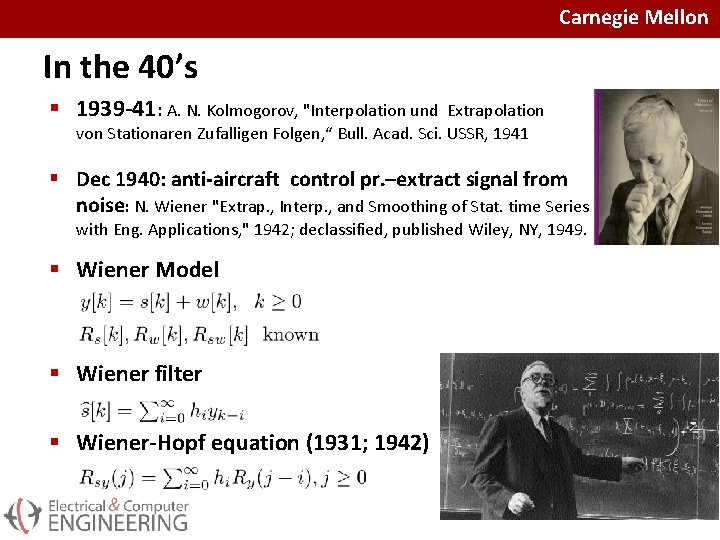

In the 40’s

- 1939-41: A. N. Kolmogorov, "Interpolation und Extrapolation von stationären zufälligen Folgen," Bull. Acad. Sci. USSR, 1941
- Dec 1940: anti-aircraft control problem, extracting signal from noise: N. Wiener, "Extrapolation, Interpolation, and Smoothing of Stationary Time Series with Engineering Applications," 1942; declassified, published by Wiley, NY, 1949
  - Wiener model
  - Wiener filter
  - Wiener-Hopf equation (1931; 1942)

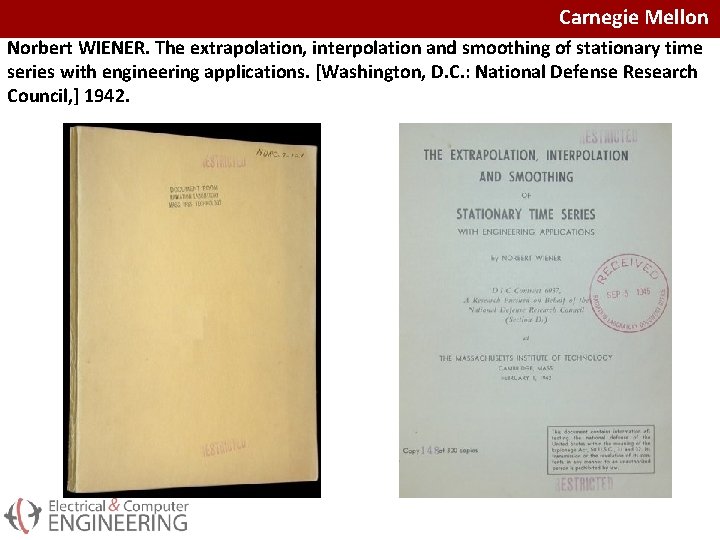

Norbert WIENER. The extrapolation, interpolation and smoothing of stationary time series with engineering applications. [Washington, D.C.: National Defense Research Council,] 1942.

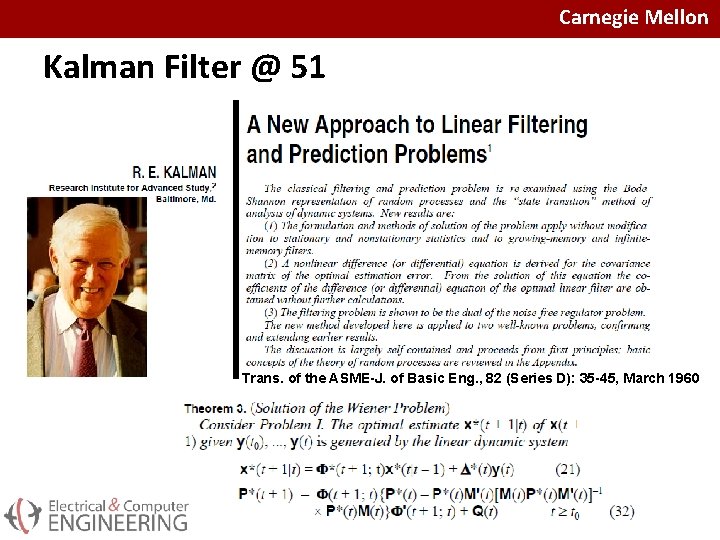

Kalman Filter @ 51

Trans. of the ASME-J. of Basic Eng., 82 (Series D): 35-45, March 1960

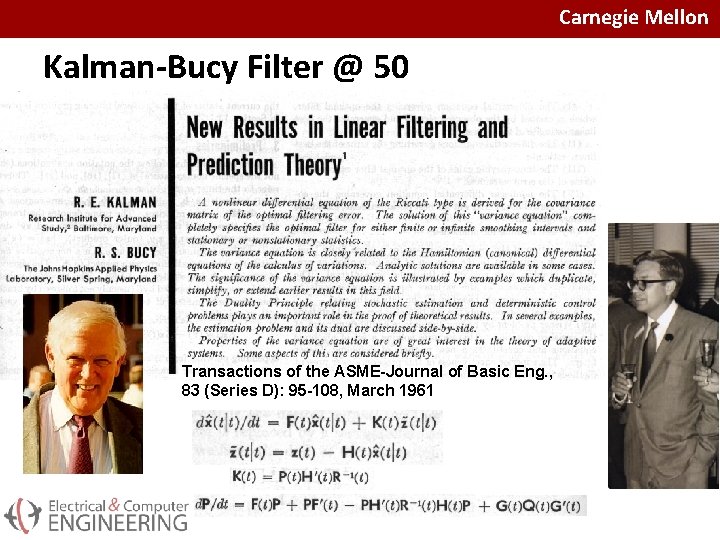

Kalman-Bucy Filter @ 50

Transactions of the ASME-Journal of Basic Eng., 83 (Series D): 95-108, March 1961


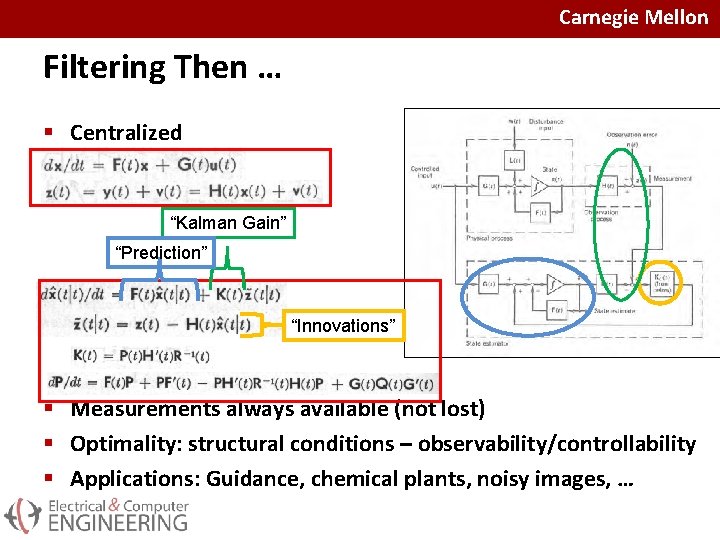

Filtering Then …

- Centralized filter: “Prediction” plus “Kalman Gain” times “Innovations”
- Measurements always available (not lost)
- Optimality: structural conditions (observability/controllability)
- Applications: guidance, chemical plants, noisy images, …
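The three labels on this slide (prediction, Kalman gain, innovations) match the steps of the textbook discrete-time Kalman recursion. The slide's own equation is not recoverable from the text, so the following is only a minimal sketch under an assumed linear model x(k+1) = A x(k) + w(k), z(k) = C x(k) + v(k). The function name `kalman_step` and the symbols `A`, `C`, `Q`, `R` are illustrative choices, not notation taken from the talk.

```python
import numpy as np

def kalman_step(x_est, P, z, A, C, Q, R):
    """One predict/update cycle of a textbook discrete-time Kalman filter.

    Assumed model (not from the slides): x(k+1) = A x(k) + w, z(k) = C x(k) + v,
    with process noise covariance Q and measurement noise covariance R.
    """
    # "Prediction": propagate the estimate and its covariance through the model.
    x_pred = A @ x_est
    P_pred = A @ P @ A.T + Q
    # "Innovations": the part of the measurement the prediction does not explain.
    innovation = z - C @ x_pred
    S = C @ P_pred @ C.T + R
    # "Kalman gain": how strongly the innovations correct the prediction.
    K = P_pred @ C.T @ np.linalg.inv(S)
    x_new = x_pred + K @ innovation
    P_new = (np.eye(len(x_est)) - K @ C) @ P_pred
    return x_new, P_new, K, innovation
```

Every measurement `z` is assumed to arrive at a single processor, which is the "centralized, measurements always available" setting that the rest of the talk relaxes.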

Filtering Today: Distributed Solution

- Local communications
  - Agents communicate with neighbors
  - No central collection of data
- Cooperative solution
  - In isolation: myopic view and knowledge
  - Cooperation: better understanding/global knowledge
- Iterative solution
- Realistic problem: Intermittency
  - Sensors fail
  - Local communication channels fail
- Limited resources: Structural Random Failures
  - Noisy sensors
  - Noisy communications
  - Limited bandwidth (quantized communications)
- Optimality:
  - Asymptotically
  - Convergence rate

Outline

- Brief Historical Comments: From Kolmogorov to Kalman-Bucy
- Filtering Then … Filtering Today
- Consensus: Distributed Averaging
  - Standard consensus
  - Consensus in random environments
- Distributed Filtering: Consensus + innovations
  - Random field (parameter) estimation
  - Realistic large scale problem:
    - Intermittency: Infrastructure failures, Sensor failures
    - Random protocols: Gossip
    - Limited Resources: Quantization
  - Two Linear Estimators:
    - LU: Stochastic Approximation
    - GLU: Mixed time scale estimator
  - Performance Analysis: Asymptotics
- Conclusion

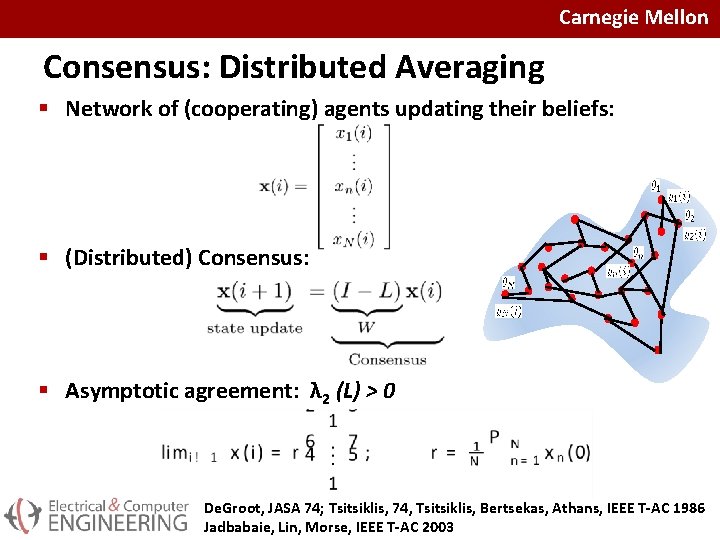

Consensus: Distributed Averaging

- Network of (cooperating) agents updating their beliefs:
- (Distributed) Consensus:
- Asymptotic agreement: λ₂(L) > 0

DeGroot, JASA 74; Tsitsiklis, 84; Tsitsiklis, Bertsekas, Athans, IEEE T-AC 1986; Jadbabaie, Lin, Morse, IEEE T-AC 2003
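The agreement condition λ₂(L) > 0 can be checked numerically. The sketch below assumes the common linear consensus iteration x(i+1) = (I - αL) x(i) with a constant weight α in (0, 2/λ_max(L)); the slide's own update equation is not in the extracted text, and the helper names `graph_laplacian` and `run_consensus` are hypothetical.

```python
import numpy as np

def graph_laplacian(num_nodes, edges):
    """Laplacian L = D - A of an undirected graph given as a list of edges."""
    L = np.zeros((num_nodes, num_nodes))
    for a, b in edges:
        L[a, a] += 1.0
        L[b, b] += 1.0
        L[a, b] -= 1.0
        L[b, a] -= 1.0
    return L

def run_consensus(x0, L, alpha, steps):
    """Iterate x <- (I - alpha * L) x; each agent only uses its neighbors' values."""
    x = np.asarray(x0, dtype=float)
    W = np.eye(len(x)) - alpha * L
    for _ in range(steps):
        x = W @ x
    return x

# Path graph on 4 agents: connected, so the second-smallest eigenvalue is positive.
L = graph_laplacian(4, [(0, 1), (1, 2), (2, 3)])
eigvals = np.linalg.eigvalsh(L)          # returned in ascending order
lambda_2, lambda_max = eigvals[1], eigvals[-1]
alpha = 1.0 / lambda_max                 # assumed weight, inside (0, 2/lambda_max)
x_final = run_consensus([1.0, 5.0, 3.0, 7.0], L, alpha, steps=200)
print(lambda_2 > 0, x_final)
```

With a connected graph every agent's value approaches the average of the initial values. Removing an edge so that the graph splits makes λ₂(L) zero, and the two components then agree only among themselves.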

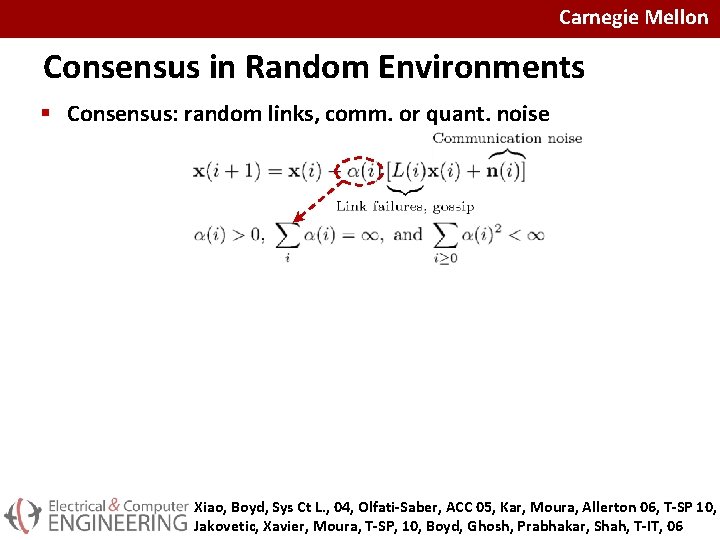

Consensus in Random Environments

- Consensus: random links, communication or quantization noise
- Consensus (reinterpreted): a.s. convergence to unbiased random variable θ:

Xiao, Boyd, Sys. Ctrl. Lett., 04; Olfati-Saber, ACC 05; Kar, Moura, Allerton 06, T-SP 10; Jakovetic, Xavier, Moura, T-SP 10; Boyd, Ghosh, Prabhakar, Shah, T-IT 06
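To illustrate the random-link case only, here is a small sketch in which each link of a fixed graph is independently active with some probability at every step. It is a simplified, noise-free setting chosen for illustration: the link model, the constant weight `alpha`, and the function name `random_link_consensus` are assumptions, not the talk's formulation. Handling communication or quantization noise would typically need decaying weights, which this sketch does not attempt.

```python
import numpy as np

def random_link_consensus(x0, edges, link_up_prob, alpha, steps, seed=0):
    """Consensus over a graph whose links fail independently at each step.

    Assumption for illustration: each edge is active with probability
    link_up_prob, independently across edges and time, and there is no noise.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    for _ in range(steps):
        x_next = x.copy()
        for a, b in edges:
            if rng.random() < link_up_prob:   # link (a, b) is up at this step
                diff = x[a] - x[b]
                x_next[a] -= alpha * diff     # symmetric exchange keeps the sum fixed
                x_next[b] += alpha * diff
        x = x_next
    return x

ring = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0)]
x0 = [2.0, -1.0, 4.0, 0.0, 5.0]
x_final = random_link_consensus(x0, ring, link_up_prob=0.5, alpha=0.3, steps=500)
print(x_final, np.mean(x0))
```

Because the exchanges are symmetric, the sum of the states never changes, so in this noise-free sketch the agents agree on the exact initial average even though the graph at any single step may be disconnected. What matters is connectivity on average.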


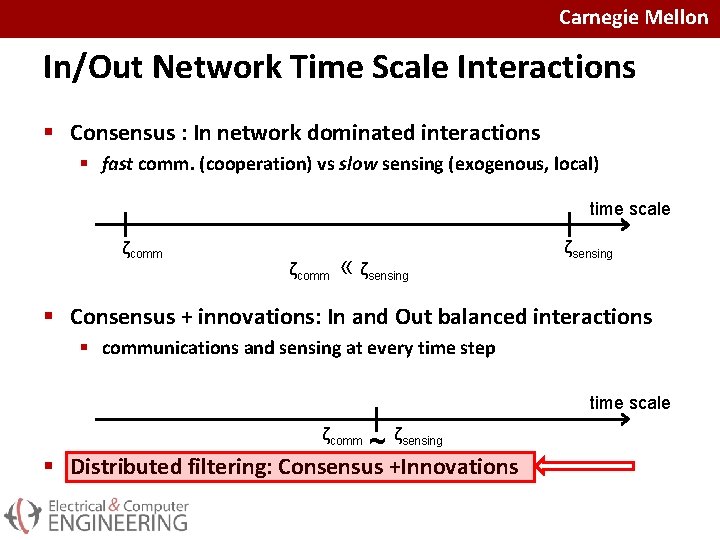

In/Out Network Time Scale Interactions

- Consensus: in-network dominated interactions
  - fast communication (cooperation) vs slow sensing (exogenous, local)
  - time scale: ζcomm ≪ ζsensing
- Consensus + innovations: in and out balanced interactions
  - communications and sensing at every time step
  - time scale: ζcomm ~ ζsensing
- Distributed filtering: Consensus + Innovations

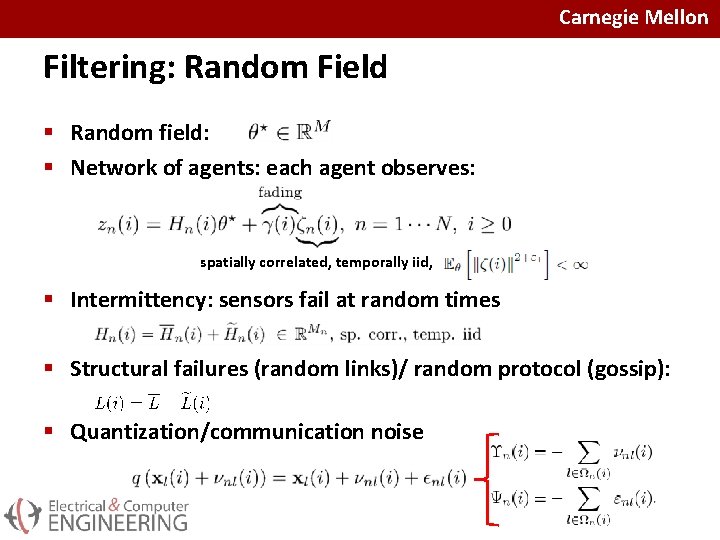

Filtering: Random Field

- Random field:
- Network of agents: each agent observes:
  - spatially correlated, temporally iid
- Intermittency: sensors fail at random times
- Structural failures (random links) / random protocol (gossip):
- Quantization/communication noise

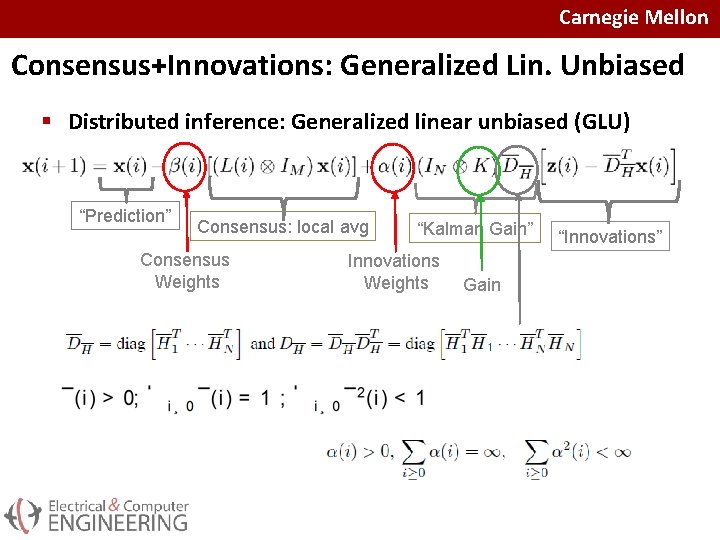

Consensus+Innovations: Generalized Linear Unbiased

- Distributed inference: Generalized linear unbiased (GLU)
  - “Prediction”: consensus (local average), with consensus weights
  - “Kalman Gain”: innovations weights and gain
  - “Innovations”

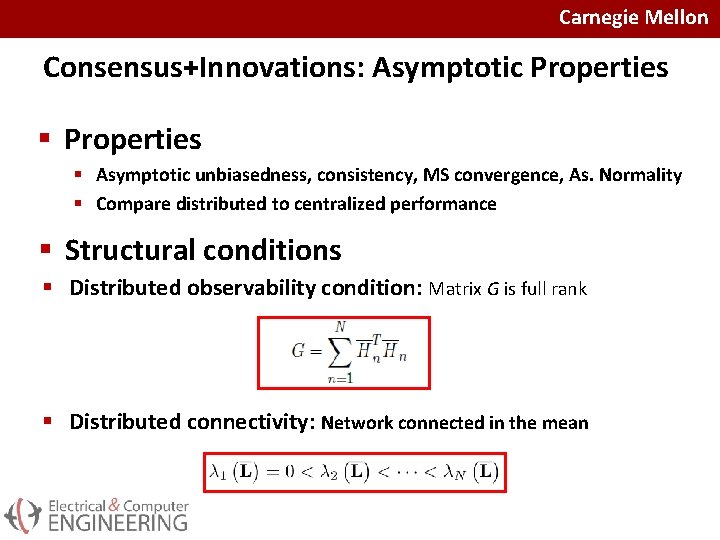

Consensus+Innovations: Asymptotic Properties

- Asymptotic unbiasedness, consistency, MS convergence, asymptotic normality
- Compare distributed to centralized performance
- Structural conditions
  - Distributed observability condition: matrix G is full rank
  - Distributed connectivity: network connected in the mean

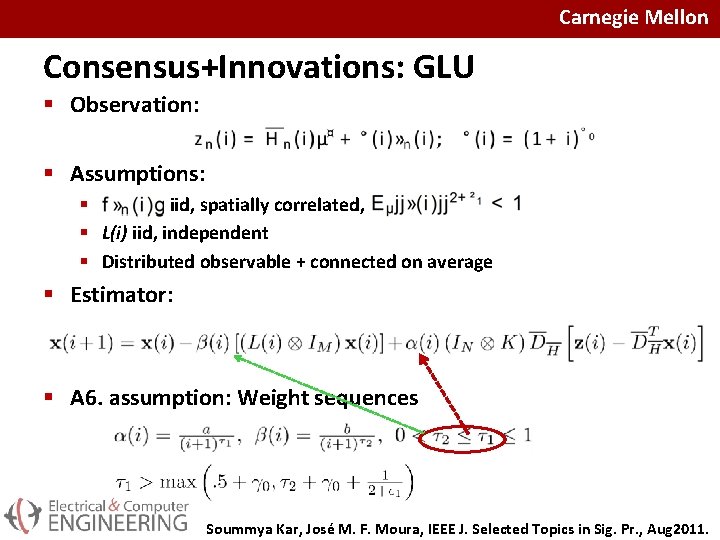

Consensus+Innovations: GLU

- Observation:
- Assumptions:
  - iid, spatially correlated
  - L(i) iid, independent
  - Distributed observable + connected on average
- Estimator:
- A6 assumption: weight sequences

Soummya Kar, José M. F. Moura, IEEE J. Selected Topics in Sig. Proc., Aug 2011.
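The estimator equation itself is missing from the extracted slide, so the following is a hedged sketch of a generic consensus + innovations update, not the GLU estimator of the cited paper. It assumes each sensor n observes z_n(i) = H_n θ + noise and updates x_n(i+1) = x_n(i) - β(i) Σ_l (x_n(i) - x_l(i)) + α(i) H_nᵀ (z_n(i) - H_n x_n(i)), with the sum over neighbors l. The weight sequences α(i) = a/(i + i0) and β(i) = b/(i + i0)^τ, the constants, and the name `consensus_innovations` are all illustrative assumptions, and the additional gain mentioned on the earlier slide is omitted.

```python
import numpy as np

def consensus_innovations(theta, H, L, sigma, steps,
                          a=3.0, b=0.3, tau=0.5, i0=5, seed=0):
    """Simplified consensus + innovations parameter estimation (illustrative only).

    theta : true parameter, used here only to simulate the observations
    H     : list of per-sensor observation matrices H_n
    L     : graph Laplacian of the (fixed) communication network
    """
    rng = np.random.default_rng(seed)
    N, M = len(H), len(theta)
    X = np.zeros((N, M))                  # row n is sensor n's estimate of theta
    for i in range(steps):
        alpha = a / (i + i0)              # innovations weight (assumed form)
        beta = b / (i + i0) ** tau        # consensus weight, decays more slowly
        consensus_term = L @ X            # row n: sum over neighbors l of (x_n - x_l)
        innov_term = np.zeros_like(X)
        for n in range(N):
            noise = sigma * rng.standard_normal(H[n].shape[0])
            z = H[n] @ theta + noise      # local noisy observation
            innov_term[n] = H[n].T @ (z - H[n] @ X[n])
        X = X - beta * consensus_term + alpha * innov_term
    return X

theta = np.array([1.0, -2.0, 0.5])
H = [np.eye(3)[[n]] for n in range(3)]    # sensor n sees only component n of theta
L = 3.0 * np.eye(3) - np.ones((3, 3))     # Laplacian of the complete graph on 3 nodes
X = consensus_innovations(theta, H, L, sigma=0.5, steps=5000)
print(X)
```

In this example no single sensor can identify θ on its own, since each one observes a single component. The sum of H_nᵀH_n over sensors is the identity, which is full rank, and the graph is connected, so the setup is in the spirit of the structural conditions listed in the slides: cooperation lets every row of `X` approach θ.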

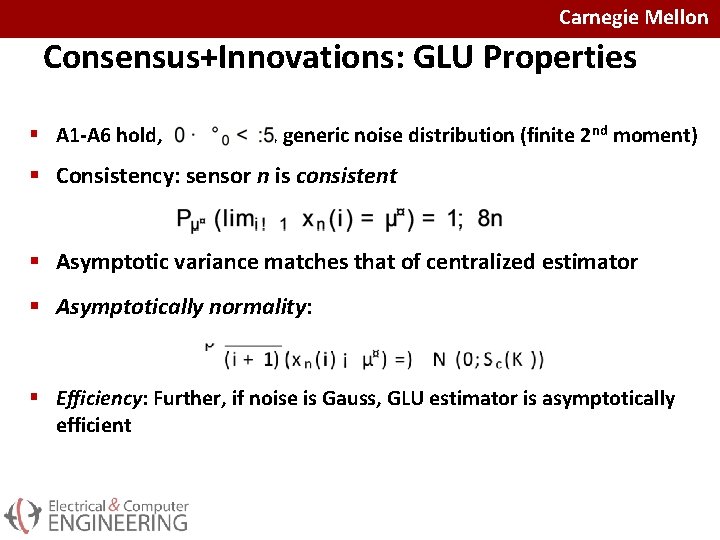

Consensus+Innovations: GLU Properties

- A1-A6 hold, generic noise distribution (finite 2nd moment)
- Consistency: sensor n is consistent
- Asymptotic variance matches that of centralized estimator
- Asymptotic normality:
- Efficiency: further, if noise is Gaussian, GLU estimator is asymptotically efficient

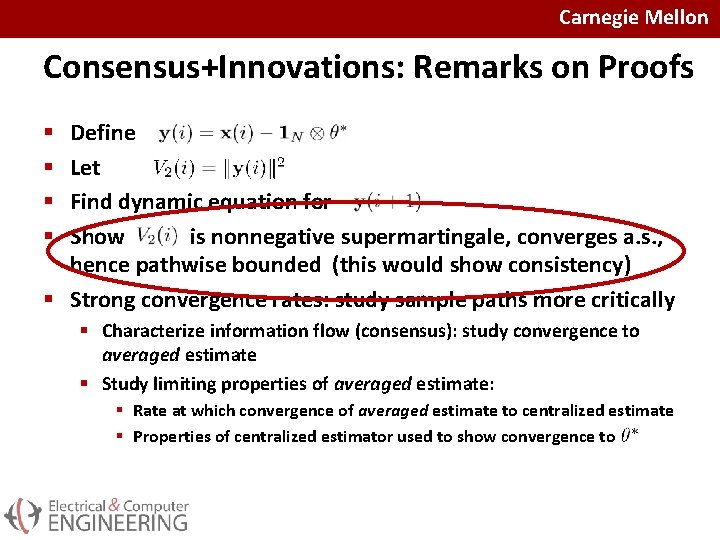

Consensus+Innovations: Remarks on Proofs

- Define
- Let
- Find dynamic equation for
- Show is nonnegative supermartingale, converges a.s., hence pathwise bounded (this would show consistency)
- Strong convergence rates: study sample paths more critically
- Characterize information flow (consensus): study convergence to averaged estimate
- Study limiting properties of averaged estimate:
  - Rate of convergence of averaged estimate to centralized estimate
  - Properties of centralized estimator used to show convergence to

Outline

- Intermittency: networked systems, packet loss
- Random Riccati Equation: stochastic boundedness
- Random Riccati Equation: invariant distribution
- Random Riccati Equation: moderate deviation principle
  - Rate of decay of probability of rare events
  - Scalar numerical example
- Conclusions

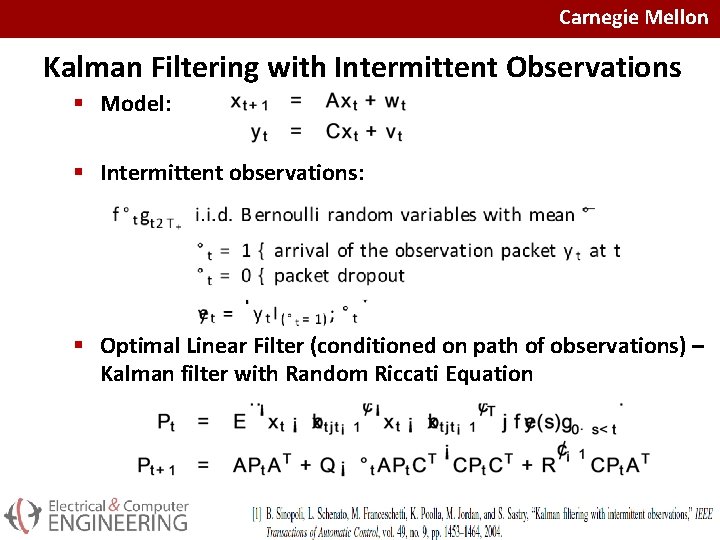

Kalman Filtering with Intermittent Observations

- Model:
- Intermittent observations:
- Optimal linear filter (conditioned on path of observations) – Kalman filter with Random Riccati Equation


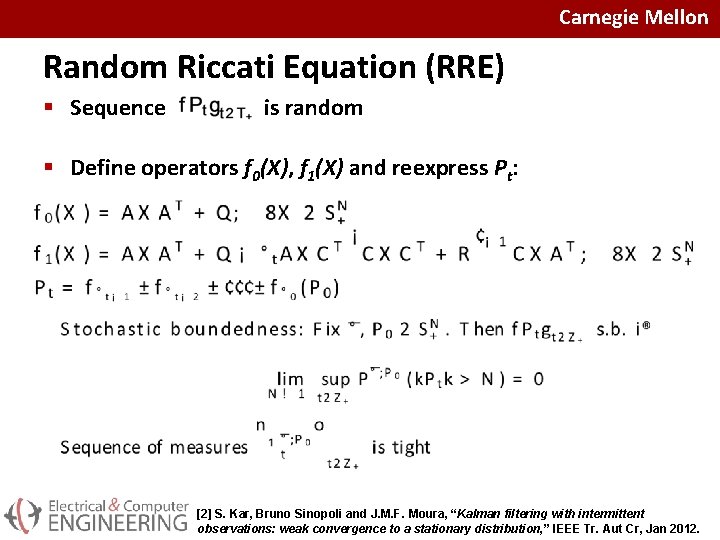

Random Riccati Equation (RRE)

- Sequence is random
- Define operators f₀(X), f₁(X) and reexpress Pₜ:

[2] S. Kar, Bruno Sinopoli and J. M. F. Moura, “Kalman filtering with intermittent observations: weak convergence to a stationary distribution,” IEEE Tr. Aut. Ctrl., Jan 2012.
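The operator definitions are not in the extracted text. A minimal sketch, assuming the usual forms for intermittent-observation filtering: a Lyapunov operator f0(X) = A X Aᵀ + Q when the observation is lost, and a Riccati operator f1(X) = A X Aᵀ + Q - A X Cᵀ (C X Cᵀ + R)⁻¹ C X Aᵀ when it arrives, with arrivals modelled as iid Bernoulli. The model matrices, the arrival model, and the name `simulate_rre` are assumptions made for illustration.

```python
import numpy as np

def f0(X, A, Q):
    """Lyapunov operator: covariance update when the observation is lost."""
    return A @ X @ A.T + Q

def f1(X, A, C, Q, R):
    """Riccati operator: covariance update when the observation arrives."""
    S = C @ X @ C.T + R
    return A @ X @ A.T + Q - A @ X @ C.T @ np.linalg.solve(S, C @ X @ A.T)

def simulate_rre(P0, A, C, Q, R, arrival_prob, steps, seed=0):
    """Sample one path of the random Riccati equation.

    Assumption: observations arrive independently with probability arrival_prob.
    """
    rng = np.random.default_rng(seed)
    P = np.array(P0, dtype=float)
    path = []
    for _ in range(steps):
        arrived = rng.random() < arrival_prob
        P = f1(P, A, C, Q, R) if arrived else f0(P, A, Q)
        path.append(P)
    return path

# Scalar unstable system, chosen only as an example.
A = np.array([[1.2]])
C = np.array([[1.0]])
Q = np.array([[1.0]])
R = np.array([[1.0]])
path = simulate_rre(np.array([[1.0]]), A, C, Q, R, arrival_prob=0.8, steps=1000)
print(max(P[0, 0] for P in path))
```

Setting `arrival_prob=1.0` recovers the deterministic Riccati iteration, whose limit is the fixed point written P* in the later slides. With losses, the covariance path is random and makes excursions away from P* during runs of missing observations.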


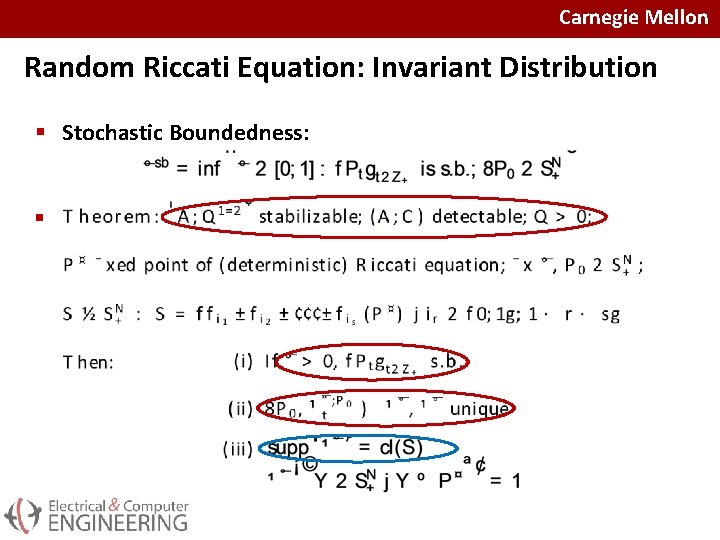

Random Riccati Equation: Invariant Distribution

- Stochastic boundedness:

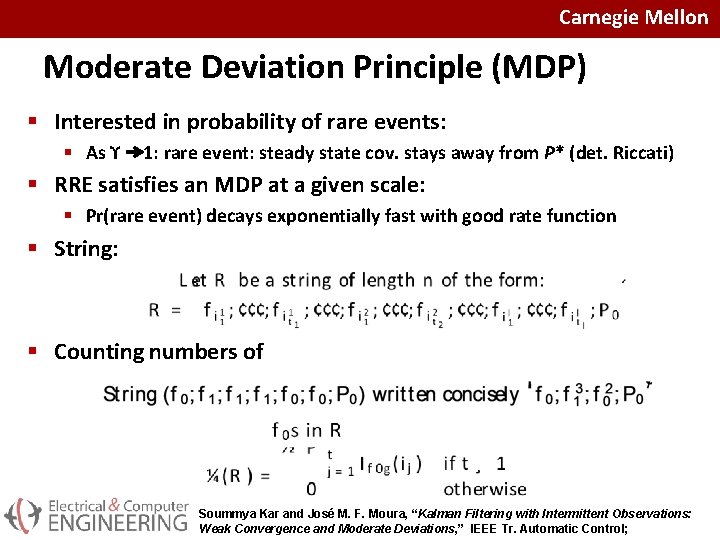

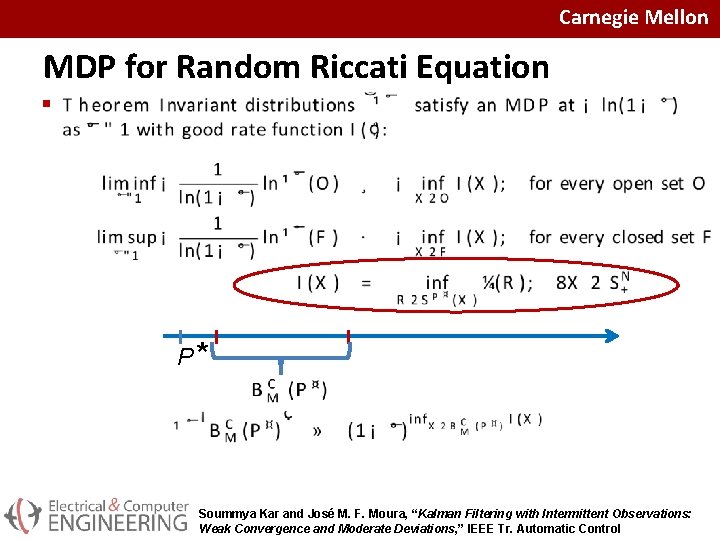

Moderate Deviation Principle (MDP)

- Interested in probability of rare events:
  - As γ → 1: rare event: steady state covariance stays away from P* (deterministic Riccati)
- RRE satisfies an MDP at a given scale:
  - Pr(rare event) decays exponentially fast with good rate function
- String:
  - Counting numbers of

Soummya Kar and José M. F. Moura, “Kalman Filtering with Intermittent Observations: Weak Convergence and Moderate Deviations,” IEEE Tr. Automatic Control

MDP for Random Riccati Equation

- P*

Soummya Kar and José M. F. Moura, “Kalman Filtering with Intermittent Observations: Weak Convergence and Moderate Deviations,” IEEE Tr. Automatic Control


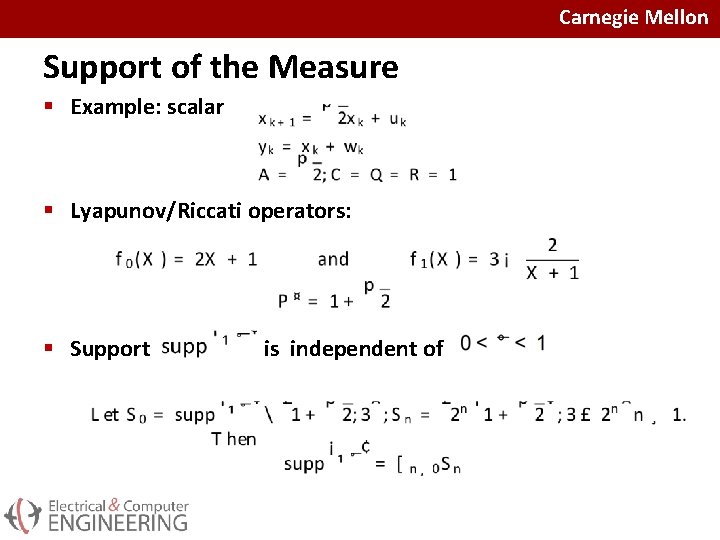

Support of the Measure

- Example: scalar
- Lyapunov/Riccati operators:
- Support is independent of

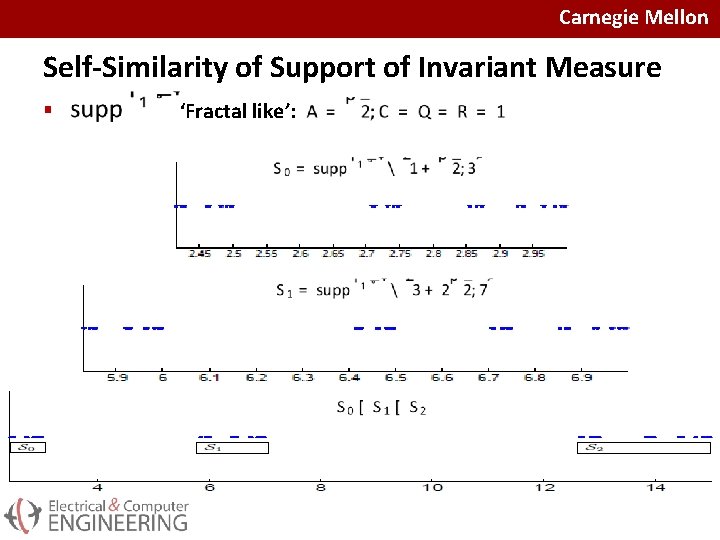

Self-Similarity of Support of Invariant Measure

- ‘Fractal like’:

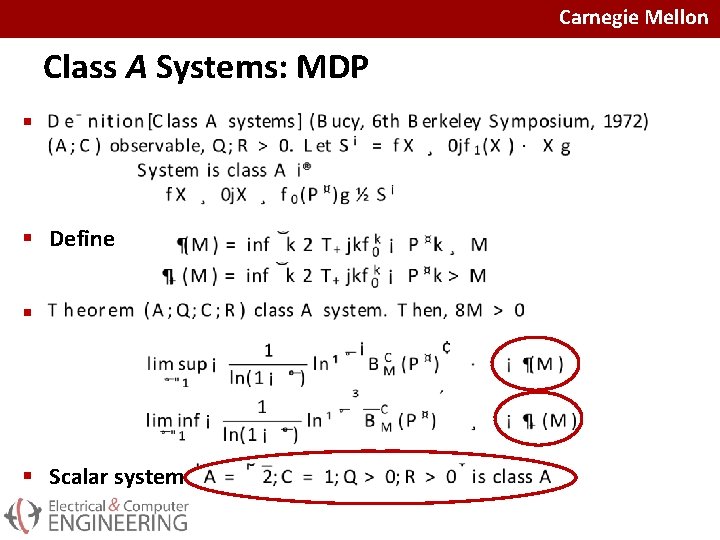

Class A Systems: MDP

- Define
- Scalar system

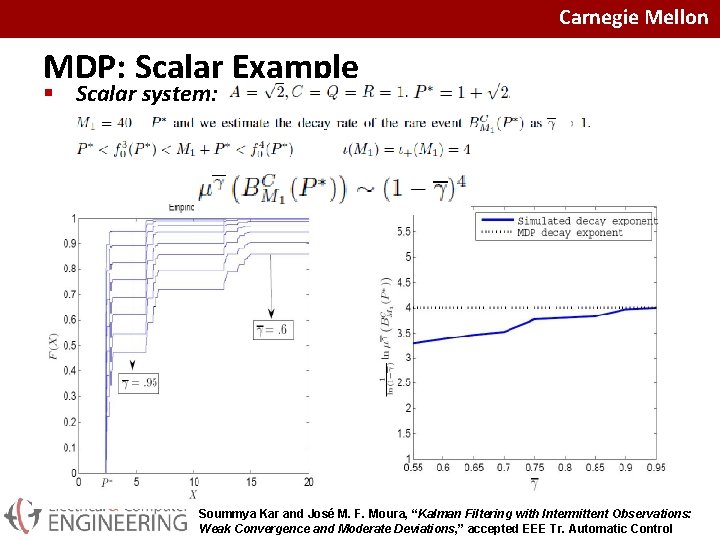

MDP: Scalar Example

- Scalar system:

Soummya Kar and José M. F. Moura, “Kalman Filtering with Intermittent Observations: Weak Convergence and Moderate Deviations,” accepted, IEEE Tr. Automatic Control


Conclusion

- Filtering 50 years after Kalman and Kalman-Bucy:
  - Consensus+innovations: large scale distributed networked agents
    - Intermittency: sensors fail; communication links fail
    - Gossip: random protocol
    - Limited power: quantization
    - Observation noise
  - Linear estimators:
    - Interleave consensus and innovations
    - Single scale: stochastic approximation
    - Mixed scale: can optimize rate of convergence and limiting covariance
  - Structural conditions: distributed observability + mean connectivity
  - Asymptotic properties: distributed as good as centralized
    - Unbiased, consistent, normal; mixed scale converges to optimal centralized

Conclusion

- Intermittency: packet loss
  - Stochastically bounded as long as rate of measurements is strictly positive
- Random Riccati Equation: probability measure of random covariance is invariant to initial condition
  - Support of invariant measure is ‘fractal like’
- Moderate Deviation Principle: rate of decay of probability of ‘bad’ (rare) events, where the covariance stays away from P*, as rate of measurements grows to 1
- All is computable

Thanks

Questions?

- Slides: 38