
CAPRI: A Common Architecture for Autonomous, Distributed Internet Fault Diagnosis using Probabilistic Relational Models

George J. Lee <gjl@mit.edu>
Advanced Network Architecture Group
Computer Science and Artificial Intelligence Lab
Massachusetts Institute of Technology
1/15/2022

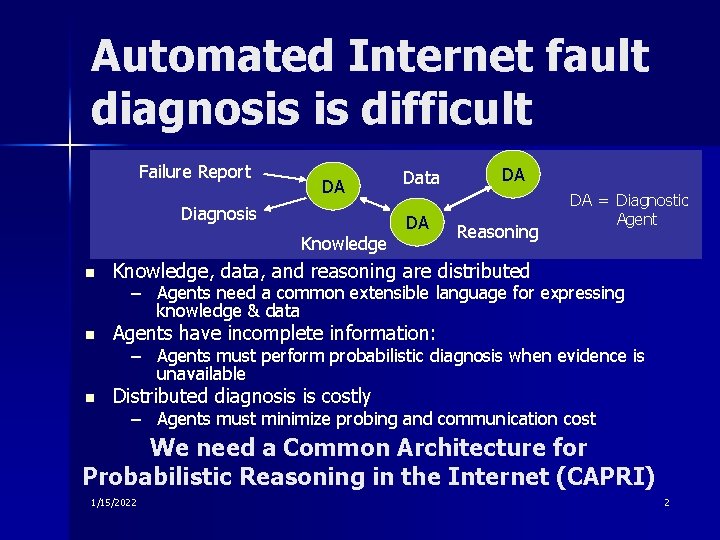

Automated Internet fault diagnosis is difficult

[Diagram: a failure report enters a network of diagnostic agents and a diagnosis is returned; knowledge, data, and reasoning are spread across the agents. DA = Diagnostic Agent]

- Knowledge, data, and reasoning are distributed
  - Agents need a common extensible language for expressing knowledge and data
- Agents have incomplete information
  - Agents must perform probabilistic diagnosis when evidence is unavailable
- Distributed diagnosis is costly
  - Agents must minimize probing and communication cost

We need a Common Architecture for Probabilistic Reasoning in the Internet (CAPRI)

Overview

- An extensible language for expressing diagnostic data and knowledge
  - Based on Bayes nets and Probabilistic Relational Models
- Distributed probabilistic reasoning while minimizing probing and communication cost
  - Trading off accuracy and cost
  - Incorporating past evidence
  - Propagating evidence to other agents
  - Simulations: accuracy vs. cost
- Learning diagnostic knowledge for real-world diagnosis
  - Passive diagnosis of HTTP proxy connections
  - Evaluation: accuracy using learned knowledge

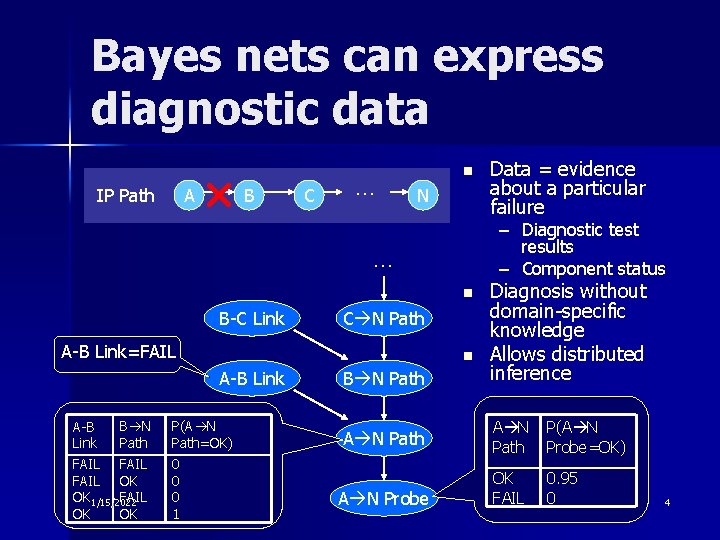

Bayes nets can express diagnostic data

[Diagram: IP path A → B → C → … → N, with Bayes net nodes A-B Link, B-C Link, …, C→N Path, B→N Path, A→N Path, and A→N Probe; evidence: A-B Link = FAIL]

- Data = evidence about a particular failure
  - Diagnostic test results
  - Component status
- Diagnosis without domain-specific knowledge
- Allows distributed inference

| A-B Link | B→N Path | P(A→N Path=OK) |
|---|---|---|
| FAIL | FAIL | 0 |
| FAIL | OK | 0 |
| OK | FAIL | 0 |
| OK | OK | 1 |

| A→N Path | P(A→N Probe=OK) |
|---|---|
| OK | 0.95 |
| FAIL | 0 |
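As a minimal sketch of how an agent might use the two tables above, the following enumerates the joint distribution of a three-node fragment (A-B Link, B→N Path, A→N Probe) and computes the posterior that the A-B link failed given a probe result. The 0.99 priors and the names `joint` and `posterior_ab_fail` are assumptions made for illustration; only the AND table and the 0.95 probe probability come from the slide.

```python
from itertools import product

# Assumed priors for illustration; the slide does not state them for this net.
P_AB_LINK_OK = 0.99
P_BN_PATH_OK = 0.99
# From the slide: P(A->N Probe = OK | A->N Path status).
P_PROBE_OK_GIVEN_PATH_OK = {True: 0.95, False: 0.0}


def joint(ab_ok, bn_ok, probe_ok):
    """Joint probability of one full assignment (True means OK)."""
    p = P_AB_LINK_OK if ab_ok else 1 - P_AB_LINK_OK
    p *= P_BN_PATH_OK if bn_ok else 1 - P_BN_PATH_OK
    # The A->N path is OK only if both of its parts are OK (the AND table).
    path_ok = ab_ok and bn_ok
    p_probe_ok = P_PROBE_OK_GIVEN_PATH_OK[path_ok]
    p *= p_probe_ok if probe_ok else 1 - p_probe_ok
    return p


def posterior_ab_fail(probe_ok):
    """P(A-B Link = FAIL | probe result), by summing out the other variable."""
    numerator = sum(joint(False, bn_ok, probe_ok) for bn_ok in (True, False))
    evidence = sum(
        joint(ab_ok, bn_ok, probe_ok)
        for ab_ok, bn_ok in product((True, False), repeat=2)
    )
    return numerator / evidence
```

Under these assumed priors, a failed probe raises the belief that the A-B link is down from 0.01 to roughly 0.145, while a successful probe rules the failure out entirely.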

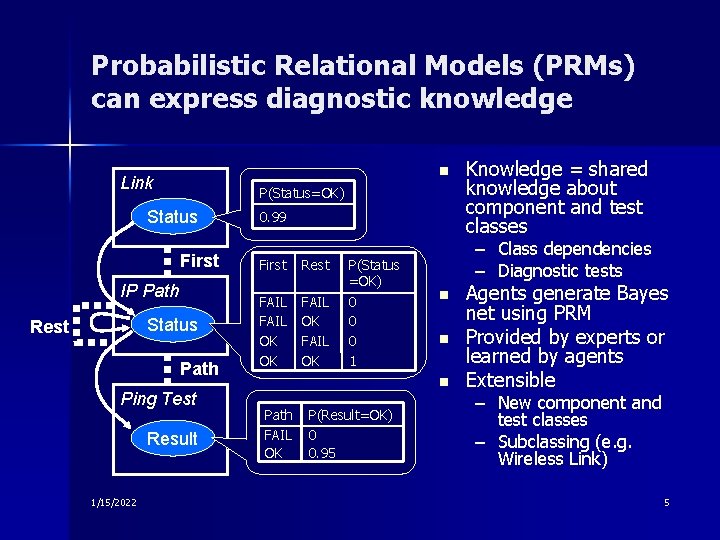

Probabilistic Relational Models (PRMs) can express diagnostic knowledge

[Diagram: three classes. Link (attribute: Status); IP Path (attributes: Status, First, Rest); Ping Test (attributes: Path, Result)]

- Knowledge = shared knowledge about component and test classes
  - Class dependencies
  - Diagnostic tests
- Agents generate Bayes net using PRM
- Provided by experts or learned by agents
- Extensible
  - New component and test classes
  - Subclassing (e.g. Wireless Link)

Link: P(Status=OK) = 0.99

IP Path:

| First | Rest | P(Status=OK) |
|---|---|---|
| FAIL | FAIL | 0 |
| FAIL | OK | 0 |
| OK | FAIL | 0 |
| OK | OK | 1 |

Ping Test:

| Path | P(Result=OK) |
|---|---|
| FAIL | 0 |
| OK | 0.95 |
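One way to picture "agents generate Bayes net using PRM" is a small generator that stamps out one node per object and copies the class-level table onto it. This is a sketch under assumptions, not CAPRI's actual data model: the `Node` type, the node naming scheme, and the choice to treat a single-hop path as just its link are all hypothetical.

```python
from dataclasses import dataclass

# Class-level knowledge taken from the slide.
P_LINK_OK = 0.99
PATH_CPT = {  # (First OK?, Rest OK?) -> P(Status = OK)
    (False, False): 0.0, (False, True): 0.0,
    (True, False): 0.0, (True, True): 1.0,
}
PING_CPT = {(False,): 0.0, (True,): 0.95}  # (Path OK?,) -> P(Result = OK)


@dataclass
class Node:
    """Hypothetical Bayes net node: parent names plus a table of P(node = OK)."""
    name: str
    parents: tuple
    cpt: dict


def build_path_net(hops):
    """Instantiate Link, IP Path, and Ping Test nodes for hops[0] -> ... -> hops[-1]."""
    nodes = {}

    def add_link(a, b):
        name = f"{a}-{b} Link"
        nodes[name] = Node(name, (), {(): P_LINK_OK})
        return name

    def add_path(seq):
        if len(seq) == 2:
            # Assumption: a single-hop path is represented by its link alone.
            return add_link(seq[0], seq[1])
        first = add_link(seq[0], seq[1])
        rest = add_path(seq[1:])
        name = f"{seq[0]}->{seq[-1]} Path"
        nodes[name] = Node(name, (first, rest), dict(PATH_CPT))
        return name

    top = add_path(list(hops))
    ping = f"{hops[0]}->{hops[-1]} Ping"
    nodes[ping] = Node(ping, (top,), dict(PING_CPT))
    return nodes
```

Calling `build_path_net(["A", "B", "C", "N"])` yields three link nodes, two path nodes, and one ping node, with every path node sharing the same class-level table. A subclass such as Wireless Link could be sketched the same way by swapping in a different prior.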

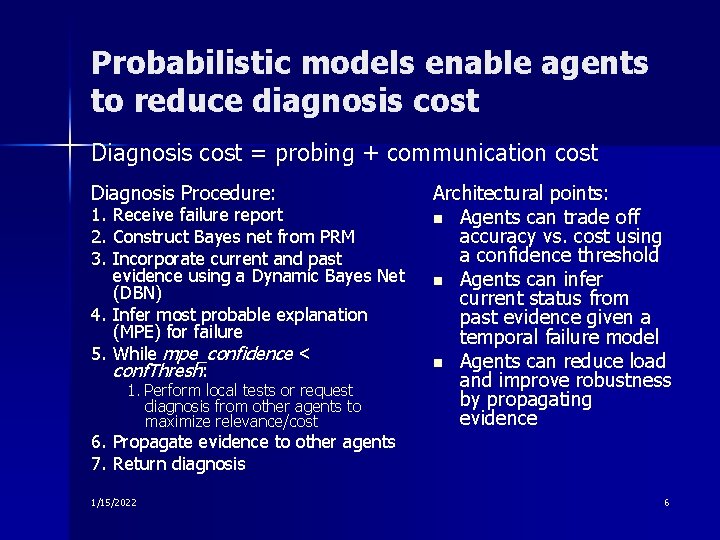

Probabilistic models enable agents to reduce diagnosis cost

Diagnosis cost = probing + communication cost

Diagnosis procedure:

1. Receive failure report
2. Construct Bayes net from PRM
3. Incorporate current and past evidence using a Dynamic Bayes Net (DBN)
4. Infer most probable explanation (MPE) for failure
5. While mpe_confidence < confThresh:
   1. Perform local tests or request diagnosis from other agents to maximize relevance/cost
6. Propagate evidence to other agents
7. Return diagnosis

Architectural points:

- Agents can trade off accuracy vs. cost using a confidence threshold
- Agents can infer current status from past evidence given a temporal failure model
- Agents can reduce load and improve robustness by propagating evidence
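The loop in step 5 can be sketched as follows. This is a deliberately simplified stand-in for the Bayes net inference on the slide: it assumes exactly one failed component, perfect tests, and uses the current belief that a component failed as the "relevance" of testing it. The function names and the `run_test` callback are hypothetical.

```python
def normalize(belief):
    """Scale beliefs to sum to 1 (left unchanged if they sum to 0)."""
    total = sum(belief.values())
    if total == 0:
        return dict(belief)
    return {c: p / total for c, p in belief.items()}


def diagnose(priors, costs, run_test, conf_thresh):
    """Greedy diagnosis under a single-fault assumption.

    priors:      component -> prior failure probability
    costs:       component -> cost of testing it
    run_test(c): returns True if component c is OK (assumed perfect test)
    Returns (most probable failed component, its confidence, total cost spent).
    """
    belief = normalize(priors)
    untested = set(priors)
    total_cost = 0.0
    while True:
        mpe = max(sorted(belief), key=belief.get)
        if belief[mpe] >= conf_thresh or not untested:
            return mpe, belief[mpe], total_cost
        # Choose the test with the highest relevance per unit cost.
        target = max(sorted(untested), key=lambda c: belief[c] / costs[c])
        untested.remove(target)
        total_cost += costs[target]
        if run_test(target):
            belief[target] = 0.0  # component is OK, so it is ruled out
        else:
            belief = {c: 1.0 if c == target else 0.0 for c in belief}
        belief = normalize(belief)
```

With a low threshold the agent answers immediately from its priors at zero cost and may be wrong; with a high threshold it keeps testing until the evidence supports the answer, which is the accuracy versus cost trade-off named in the architectural points.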

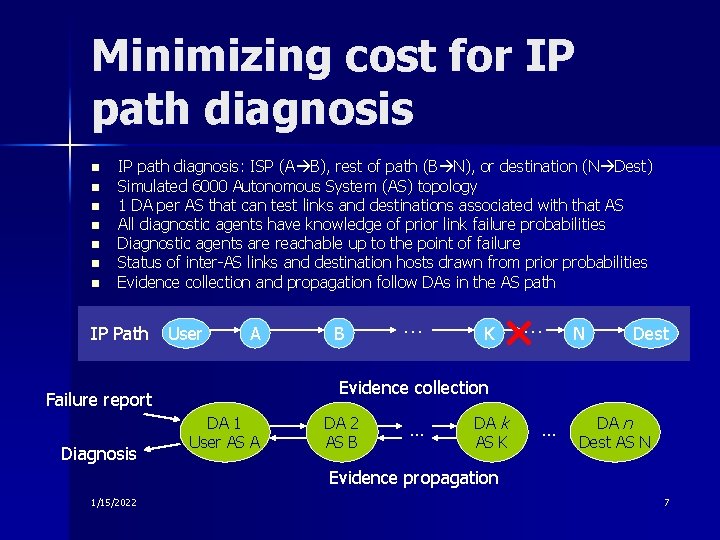

Minimizing cost for IP path diagnosis

- IP path diagnosis: ISP (A→B), rest of path (B→N), or destination (N→Dest)
- Simulated topology of 6000 Autonomous Systems (ASes)
- 1 DA per AS that can test links and destinations associated with that AS
- All diagnostic agents have knowledge of prior link failure probabilities
- Diagnostic agents are reachable up to the point of failure
- Status of inter-AS links and destination hosts drawn from prior probabilities
- Evidence collection and propagation follow DAs in the AS path

[Diagram: IP path User → A → B → … → K → … → N → Dest, with diagnostic agents DA 1 (User AS A), DA 2 (AS B), …, DA k (AS K), …, DA n (Dest AS N); arrows labeled failure report, evidence collection, diagnosis, and evidence propagation]

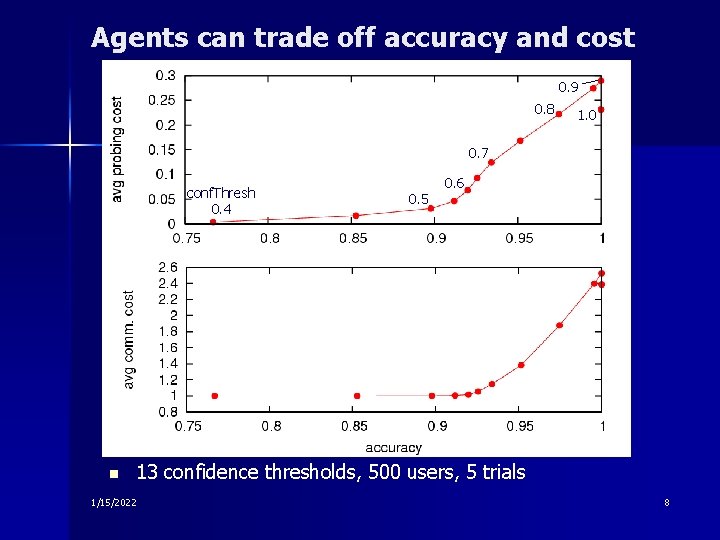

Agents can trade off accuracy and cost

[Figure: data points labeled by confThresh, from 0.4 to 1.0]

- 13 confidence thresholds, 500 users, 5 trials

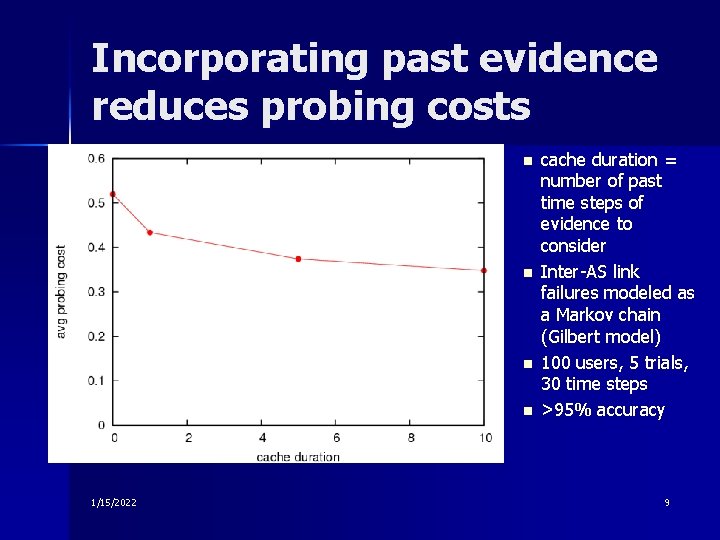

Incorporating past evidence reduces probing costs

- cache duration = number of past time steps of evidence to consider
- Inter-AS link failures modeled as a Markov chain (Gilbert model)
- 100 users, 5 trials, 30 time steps
- >95% accuracy

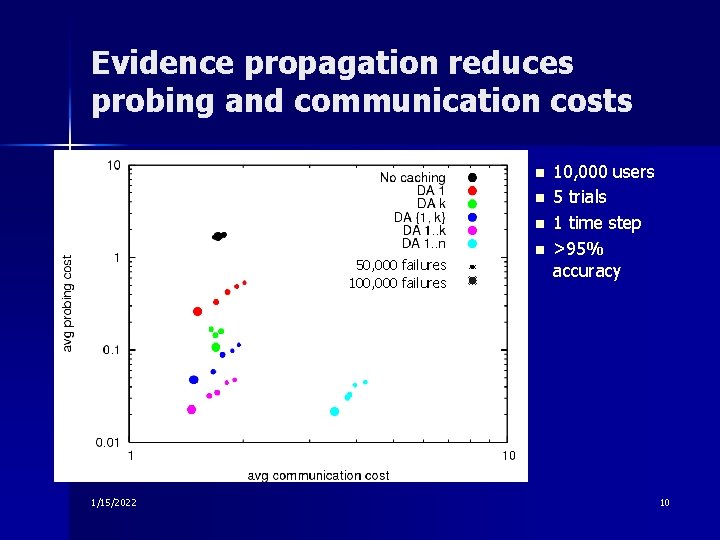

Evidence propagation reduces probing and communication costs

[Figure annotations: 50,000 failures; 100,000 failures]

- 10,000 users
- 5 trials
- 1 time step
- >95% accuracy

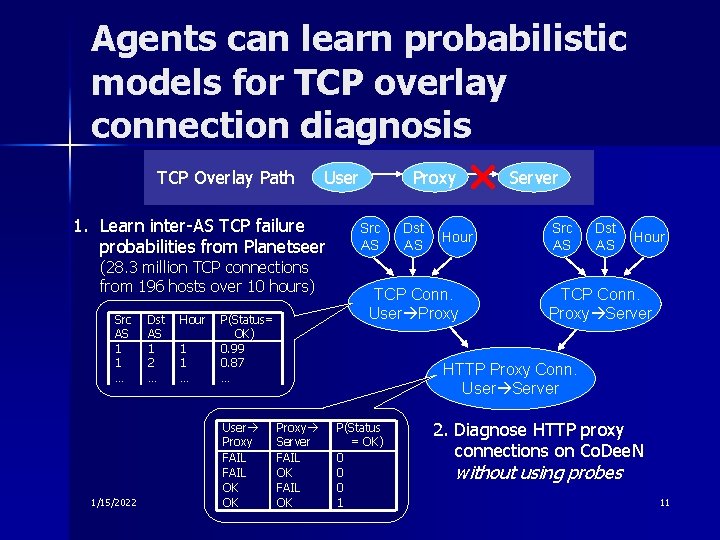

Agents can learn probabilistic models for TCP overlay connection diagnosis

[Diagram: TCP overlay path User → Proxy → Server. Classes: TCP Conn. User-Proxy (Src AS, Dst AS, Hour), TCP Conn. Proxy-Server (Src AS, Dst AS, Hour), and HTTP Proxy Conn. User-Server]

1. Learn inter-AS TCP failure probabilities from PlanetSeer (28.3 million TCP connections from 196 hosts over 10 hours)

| Src AS | Dst AS | Hour | P(Status=OK) |
|---|---|---|---|
| 1 | 1 | 1 | 0.99 |
| 1 | 2 | 1 | 0.87 |
| … | … | … | … |

| User-Proxy | Proxy-Server | P(Status=OK) |
|---|---|---|
| FAIL | FAIL | 0 |
| FAIL | OK | 0 |
| OK | FAIL | 0 |
| OK | OK | 1 |

2. Diagnose HTTP proxy connections on CoDeeN without using probes
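The two steps on this slide can be sketched as frequency counting followed by a Bayes-rule update. The record layout `(src_as, dst_as, hour, ok)`, the function names, the lack of smoothing, and the assumption that the two TCP legs fail independently are all illustrative choices, not details taken from the PlanetSeer or CoDeeN data.

```python
from collections import defaultdict


def learn_ok_probs(records):
    """Estimate P(Status = OK | src AS, dst AS, hour) by relative frequency.

    records: iterable of (src_as, dst_as, hour, ok) tuples (hypothetical layout).
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [ok count, total count]
    for src_as, dst_as, hour, ok in records:
        entry = counts[(src_as, dst_as, hour)]
        entry[0] += int(ok)
        entry[1] += 1
    return {key: ok / total for key, (ok, total) in counts.items()}


def blame(p_user_proxy_ok, p_proxy_server_ok):
    """Posterior that each TCP leg failed, given the HTTP proxy connection failed.

    Assumes the legs fail independently and that the proxy connection is OK
    only if both legs are OK (the AND table on the slide). The two posteriors
    can sum to more than 1 because both legs may have failed.
    """
    p_conn_fail = 1 - p_user_proxy_ok * p_proxy_server_ok
    return {
        "user-proxy": (1 - p_user_proxy_ok) / p_conn_fail,
        "proxy-server": (1 - p_proxy_server_ok) / p_conn_fail,
    }
```

Because the diagnosis uses only the learned table and the observed failure, no active probe is needed; the agent simply looks up the entries for the two legs and compares the posteriors.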

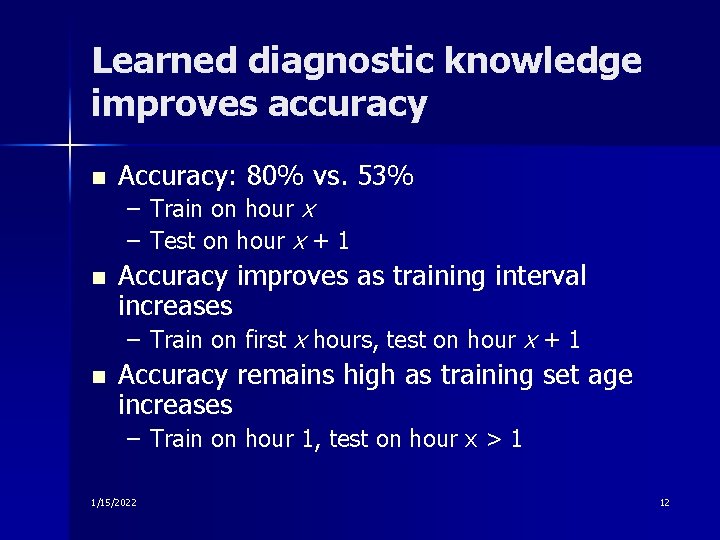

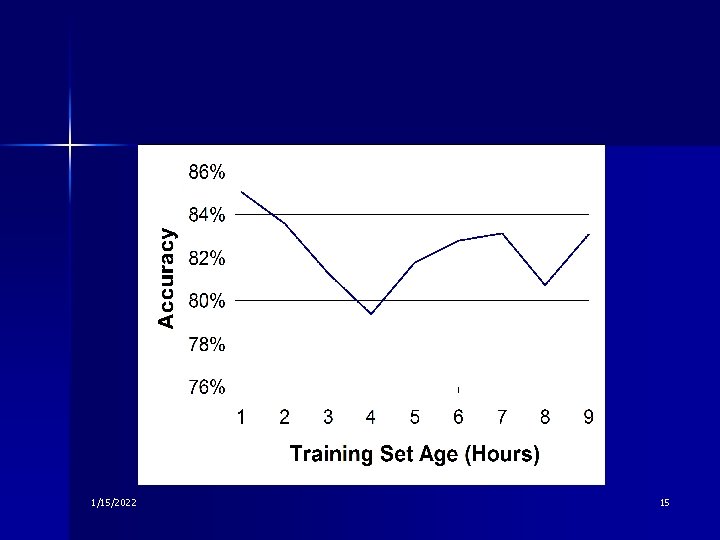

Learned diagnostic knowledge improves accuracy

- Accuracy: 80% vs. 53%
  - Train on hour x
  - Test on hour x + 1
- Accuracy improves as training interval increases
  - Train on first x hours, test on hour x + 1
- Accuracy remains high as training set age increases
  - Train on hour 1, test on hour x > 1

Benefits of CAPRI

- An extensible language for diagnostic data and knowledge
  - Based on Bayes nets and PRMs
- Distributed diagnosis while minimizing probing and communication cost
  - accuracy/cost tradeoff
  - incorporating past evidence
  - evidence propagation
- Robustness to missing data
  - probabilistic inference using cached data
- Ability to learn diagnostic knowledge
  - learn conditional failure probabilities using PRMs

Future Work

- Costs and incentives
  - Learning the true network costs of diagnostic tests
  - Dynamically adjusting cost
  - Incentives for agents to reveal evidence
- Intelligent routing of diagnostic queries
- Temporal failure models
  - Learning temporal failure models
  - Predicting failure duration
- Diagnosis using data from end users


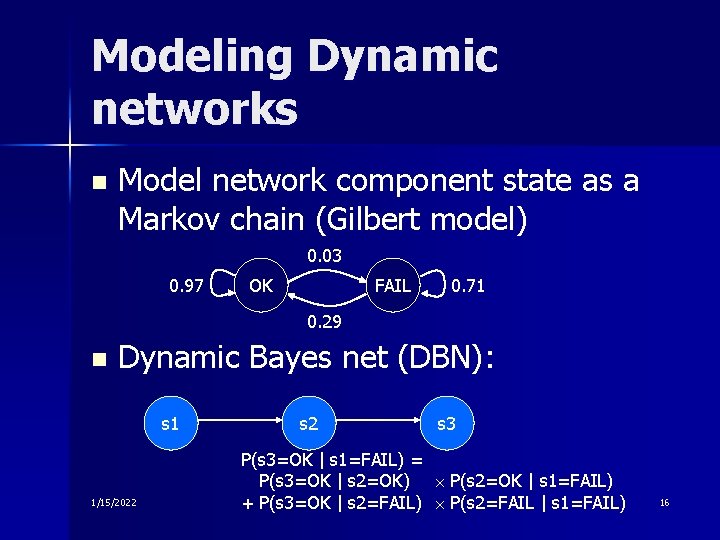

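Modeling dynamic networks

- Model network component state as a Markov chain (Gilbert model)

[Diagram: two-state Markov chain with states OK and FAIL; edge labels 0.03, 0.97, 0.71, 0.29]

- Dynamic Bayes net (DBN) over states s_1, s_2, s_3:

$$
P(s_3{=}\mathrm{OK} \mid s_1{=}\mathrm{FAIL}) = P(s_3{=}\mathrm{OK} \mid s_2{=}\mathrm{OK})\,P(s_2{=}\mathrm{OK} \mid s_1{=}\mathrm{FAIL}) + P(s_3{=}\mathrm{OK} \mid s_2{=}\mathrm{FAIL})\,P(s_2{=}\mathrm{FAIL} \mid s_1{=}\mathrm{FAIL})
$$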
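The sum over the intermediate state s_2 generalizes to any number of time steps by repeating a one-step update. The sketch below is illustrative only: the extracted slide does not make clear which edge each probability labels, so the reading used in the usage note (an OK component stays OK with probability 0.97, and a failed one recovers with probability 0.29) is an assumption, as are the function names.

```python
def step(p_ok, p_ok_given_ok, p_ok_given_fail):
    """One Markov step: P(next = OK) from P(current = OK)."""
    return p_ok * p_ok_given_ok + (1 - p_ok) * p_ok_given_fail


def p_ok_after(n_steps, start_ok, p_ok_given_ok, p_ok_given_fail):
    """P(state = OK) n_steps after a known starting state."""
    p_ok = 1.0 if start_ok else 0.0
    for _ in range(n_steps):
        p_ok = step(p_ok, p_ok_given_ok, p_ok_given_fail)
    return p_ok
```

Under the assumed reading, `p_ok_after(2, False, 0.97, 0.29)` evaluates the right-hand side of the equation above as 0.97 × 0.29 + 0.29 × 0.71 = 0.4872. If the diagram instead labels recovery with 0.71, the same call with `p_ok_given_fail=0.71` applies.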

- Slides: 16