California K-12 Assessment Update: California Education Research Association

- Slides: 48

California K-12 Assessment Update

California Education Research Association, November 30, 2012

- Patrick Traynor, Ph.D., Director, Assessment Development and Administration Division
- Eric Zilbert, Ph.D., Administrator, ADAD Psychometric Unit

CALIFORNIA DEPARTMENT OF EDUCATION
Tom Torlakson, State Superintendent of Public Instruction

Presentation Overview

- Recent and Upcoming SBAC Developments
- Scoring Technologies
- Alternate Assessment Participation (1% Population)
- Transitional Activities

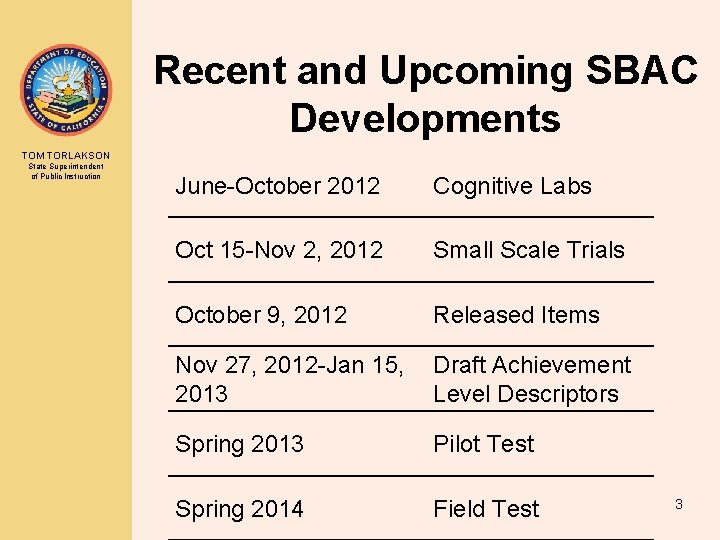

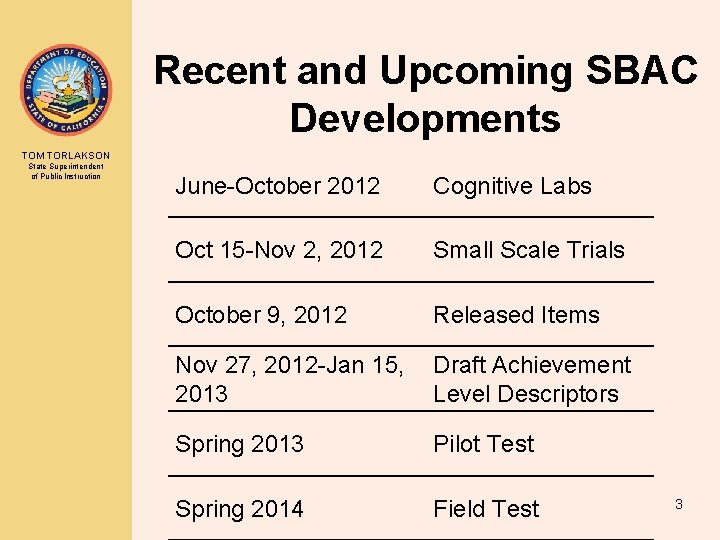

Recent and Upcoming SBAC Developments

- June–October 2012: Cognitive Labs
- October 15 – November 2, 2012: Small Scale Trials
- October 9, 2012: Released Items
- November 27, 2012 – January 15, 2013: Draft Achievement Level Descriptors
- Spring 2013: Pilot Test
- Spring 2014: Field Test

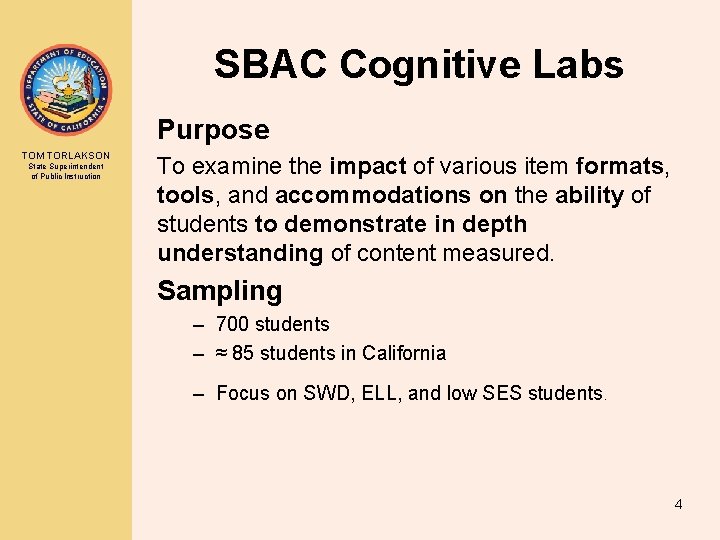

SBAC Cognitive Labs

Purpose: To examine the impact of various item formats, tools, and accommodations on the ability of students to demonstrate in-depth understanding of the content measured.

Sampling:

- 700 students
- ≈ 85 students in California
- Focus on students with disabilities (SWD), English language learners (ELL), and low socioeconomic status (SES) students

SBAC Cognitive Labs

Methodology:

- Research questions related to technology-based assessment
- A trained facilitator
  - administers test items
  - conducts an interview (≈ 90–120 minutes)
- Computer provided by the facilitator
- Labs (two approaches)
  - Think aloud while working through an item
  - First solve, then respond to questions about the approach

SBAC Small Scale Trials

Purpose: To inform automated and human scoring.

Sampling:

- Stratified random sampling
  - ≈ 900 schools across member states
  - ≈ 230 schools in California
- Goal: to be representative of SBAC students
- Schools
  - randomly select one to two classrooms
  - are assigned a grade (4, 7, or 11)
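The sampling design on this slide can be illustrated with a minimal Python sketch. It is not the Consortium's actual procedure: the slide does not name the strata, so using state as the stratum is an assumption, and the roster, the sampling fraction, and the function names `stratified_school_sample` and `assign_classrooms` are all hypothetical.

```python
import random
from collections import defaultdict

def stratified_school_sample(schools, fraction, seed=0):
    """Draw the same fraction of schools from every stratum (assumed here: state)."""
    rng = random.Random(seed)
    by_stratum = defaultdict(list)
    for school in schools:
        by_stratum[school["state"]].append(school)

    sample = []
    for state in sorted(by_stratum):
        group = by_stratum[state]
        # Keep at least one school per stratum so no state drops out entirely.
        k = max(1, round(len(group) * fraction))
        sample.extend(rng.sample(group, k))
    return sample

def assign_classrooms(school, rng, grades=(4, 7, 11)):
    """Assign one grade to the school and pick one or two classrooms at random."""
    grade = rng.choice(grades)
    n_rooms = min(len(school["classrooms"]), rng.choice((1, 2)))
    return grade, rng.sample(school["classrooms"], n_rooms)

# Hypothetical roster: 40 schools in each of three states.
schools = [
    {"id": f"{state}-{i}", "state": state, "classrooms": ["A", "B", "C"]}
    for state in ("CA", "OR", "WA")
    for i in range(40)
]
sample = stratified_school_sample(schools, fraction=0.25)
```

Sampling within each stratum separately is what keeps every group represented in proportion to its size, which a single simple random draw across all schools would not guarantee.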

SBAC Small Scale Trials

Methodology:

- Each assessment
  - 15–18 selected-response and constructed-response items
  - ≈ 60–90 minutes of student time
- School computers
- Each participating school designates a school coordinator who is trained to administer the assessment
- Schools are not required to assess all selected students at the same time or on the same day

Smarter Balanced Pilot Testing

- Smarter Balanced will conduct a Pilot computer-based administration of its assessment system beginning in February 2013.
- Items will be aligned to the Common Core State Standards and will include selected-response items, constructed-response items, and performance tasks.
- Participation in the Pilot Test will be open to all schools in the Consortium, and the test will be administered to students in grades 3–8 and grade 11.

Smarter Balanced Pilot Testing

- The Pilot Test entails two approaches (or components) in its implementation:
  1) A “Volunteer” component that is open to all schools in Smarter Balanced states and will ensure that all schools have the opportunity to experience the basic functionality of the system
  2) A “Scientific” component that targets a representative sample of schools and yields critical data about the items developed to date, as well as how the system is functioning

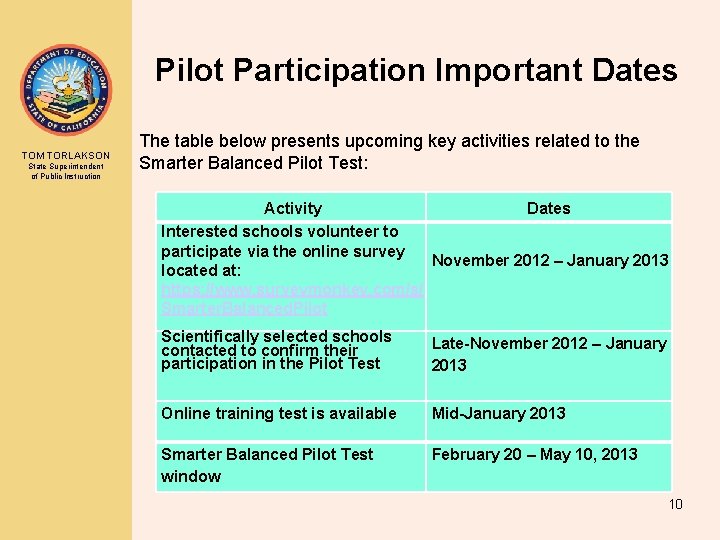

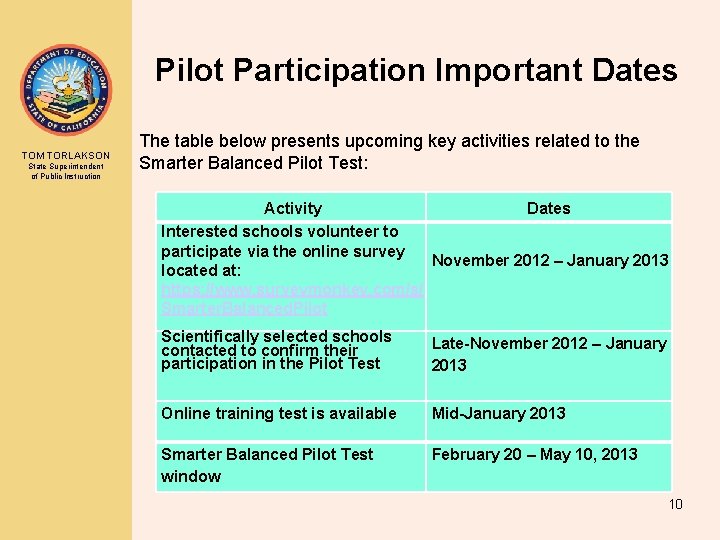

Pilot Participation Important Dates

The table below presents upcoming key activities related to the Smarter Balanced Pilot Test:

| Activity | Dates |
| --- | --- |
| Interested schools volunteer to participate via the online survey located at https://www.surveymonkey.com/s/SmarterBalancedPilot | November 2012 – January 2013 |
| Scientifically selected schools contacted to confirm their participation in the Pilot Test | Late November 2012 – January 2013 |
| Online training test is available | Mid-January 2013 |
| Smarter Balanced Pilot Test window | February 20 – May 10, 2013 |

Smarter Balanced Draft Achievement Level Descriptors (ALDs)

- Describe four levels of achievement: “deep command,” “sufficient command,” “partial command,” or “minimal command” of knowledge, skills, and processes in both English language arts/literacy and mathematics
- First draft ALDs open for public comment from November 27, 2012 through January 15, 2013
- A full description of the ALDs and an online survey for providing feedback are available on the Smarter Balanced achievement level descriptors Web page at http://www.smarterbalanced.org/achievement-level-descriptors-and-college-readiness/

Smarter Balanced Sample Items and Performance Tasks

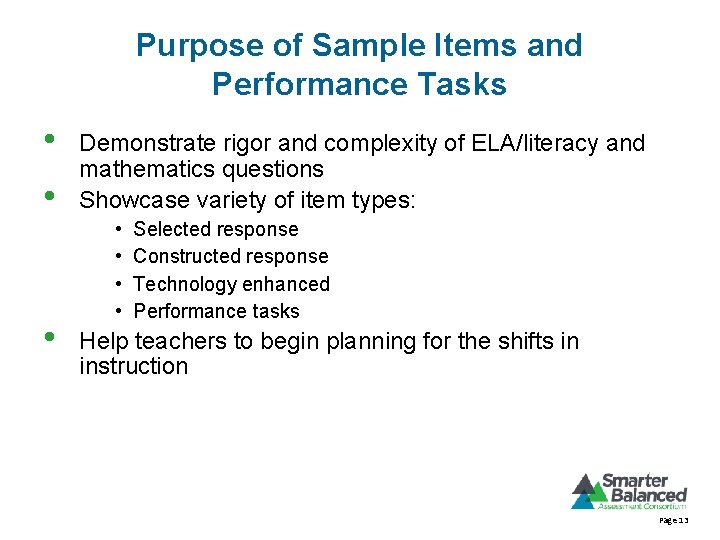

Purpose of Sample Items and Performance Tasks

- Demonstrate rigor and complexity of ELA/literacy and mathematics questions
- Showcase variety of item types:
  - Selected response
  - Constructed response
  - Technology enhanced
  - Performance tasks
- Help teachers to begin planning for the shifts in instruction

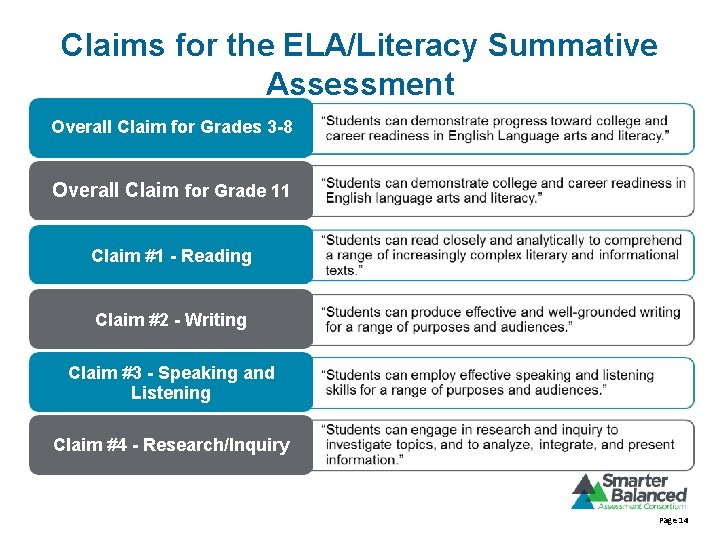

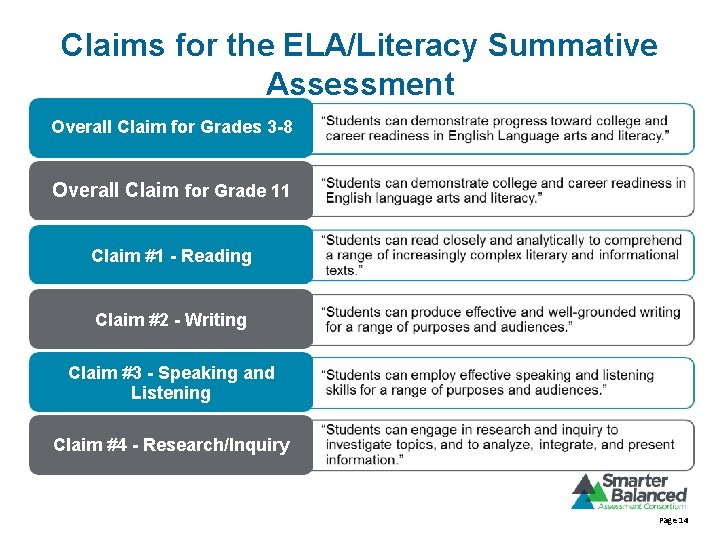

Claims for the ELA/Literacy Summative Assessment

- Overall Claim for Grades 3–8
- Overall Claim for Grade 11
- Claim #1: Reading
- Claim #2: Writing
- Claim #3: Speaking and Listening
- Claim #4: Research/Inquiry

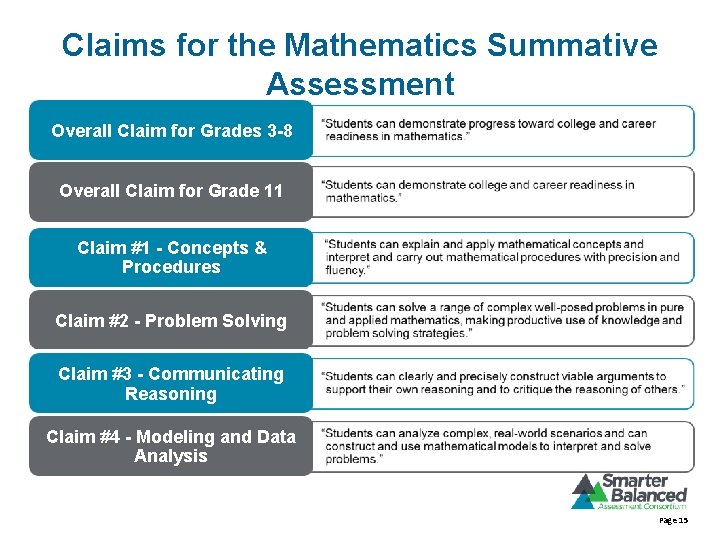

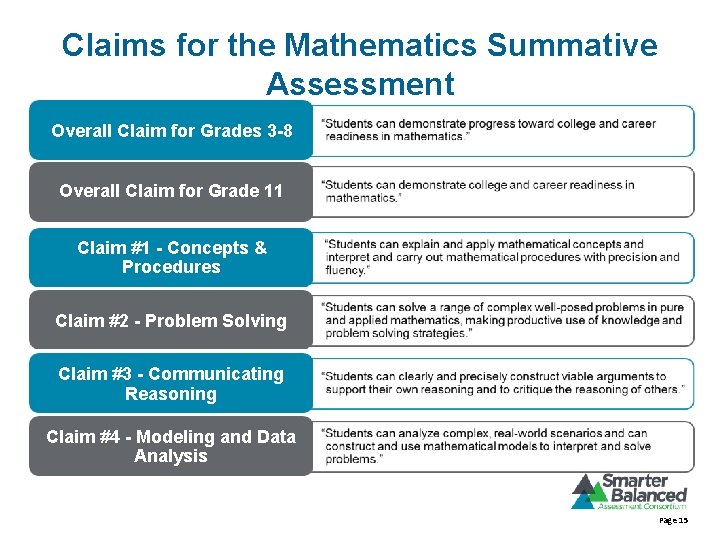

Claims for the Mathematics Summative Assessment

- Overall Claim for Grades 3–8
- Overall Claim for Grade 11
- Claim #1: Concepts & Procedures
- Claim #2: Problem Solving
- Claim #3: Communicating Reasoning
- Claim #4: Modeling and Data Analysis

Exploring the Sample Items

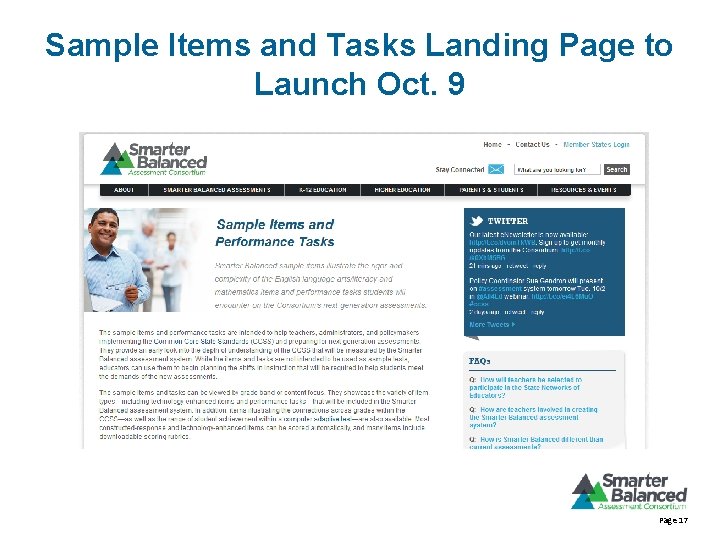

Sample Items and Tasks: Landing Page to Launch Oct. 9

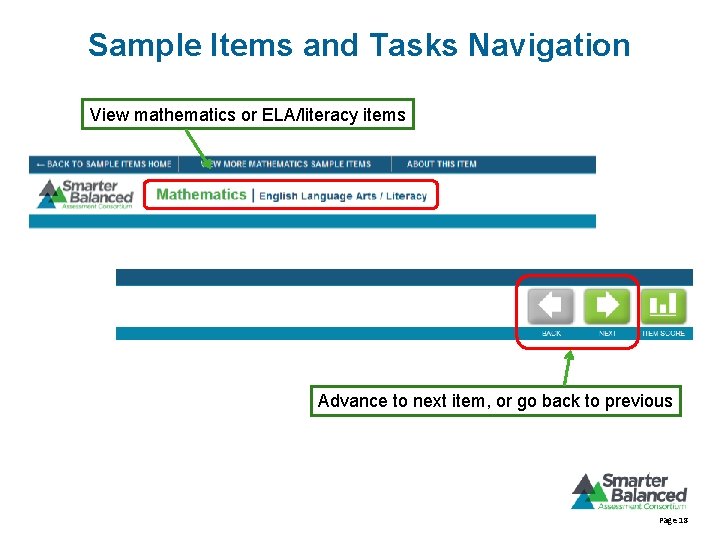

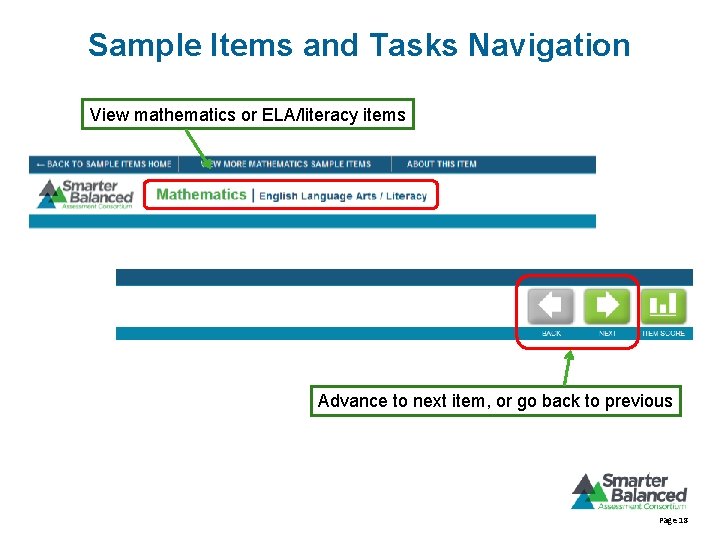

Sample Items and Tasks Navigation

- View mathematics or ELA/literacy items
- Advance to next item, or go back to previous

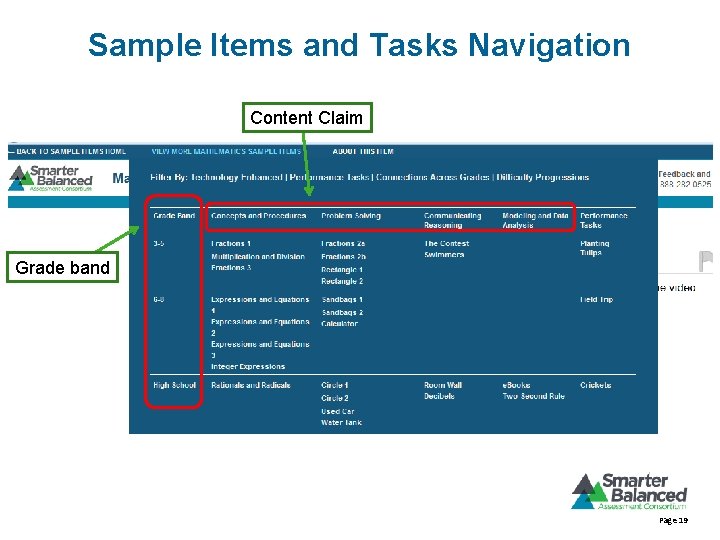

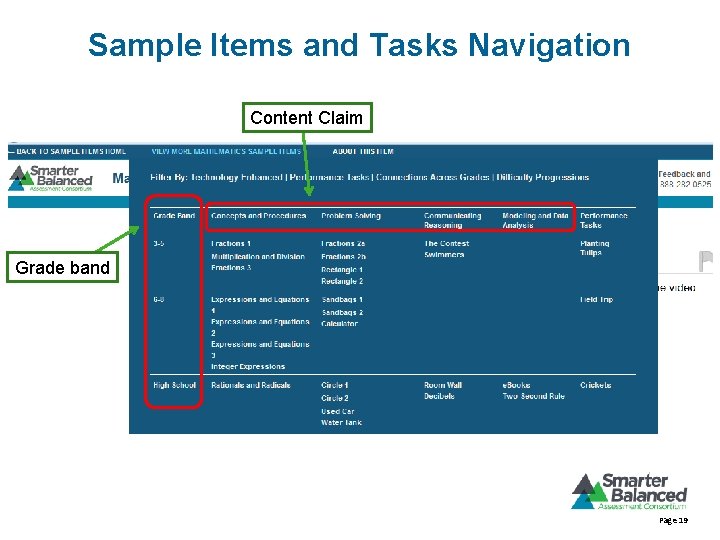

Sample Items and Tasks Navigation Content Claim Grade band

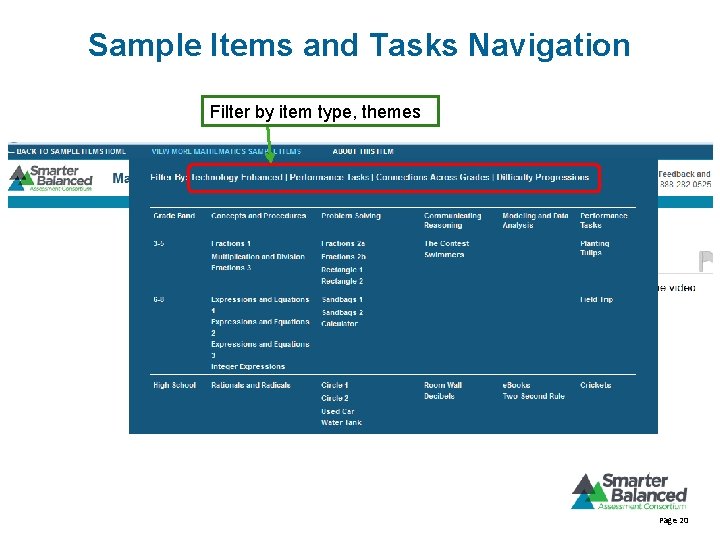

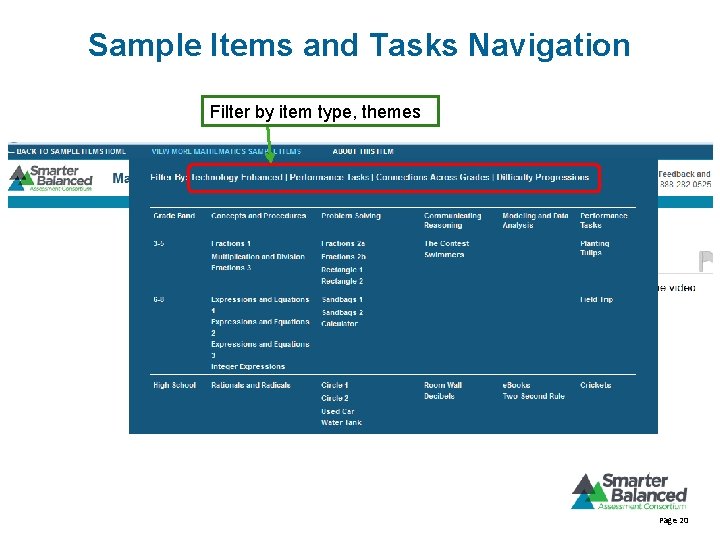

Sample Items and Tasks Navigation: Filter by item type, themes

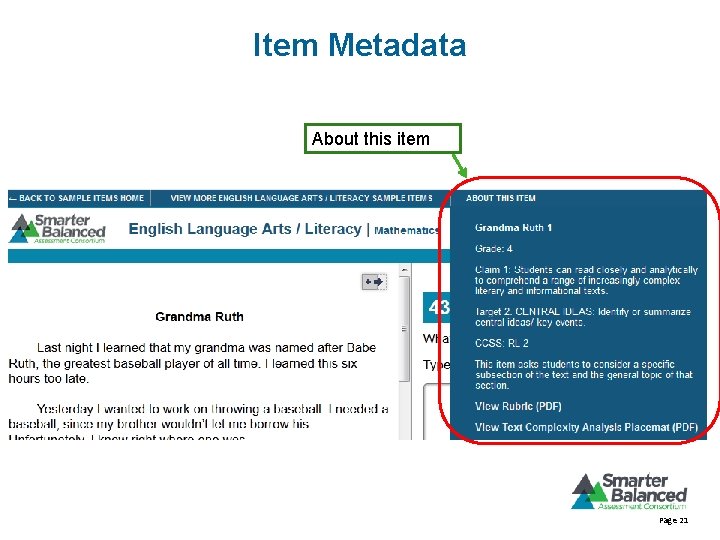

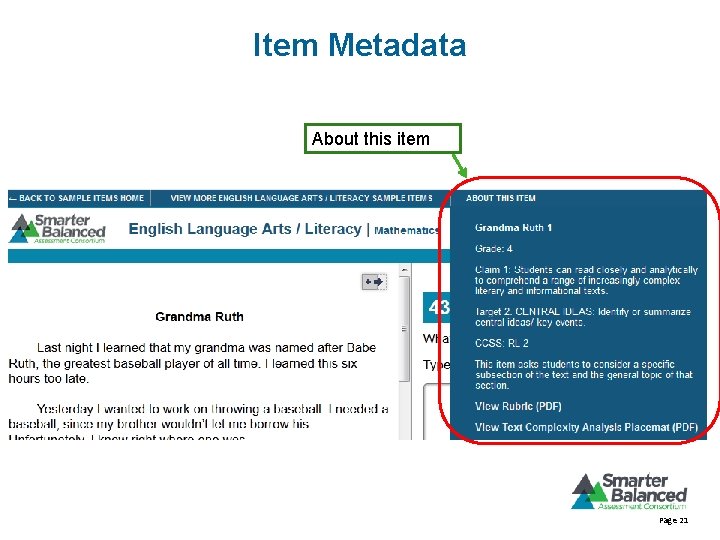

Item Metadata: About this item

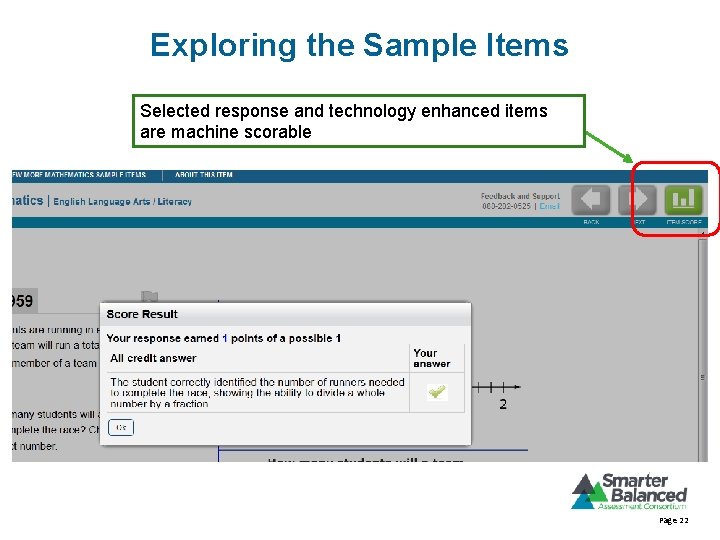

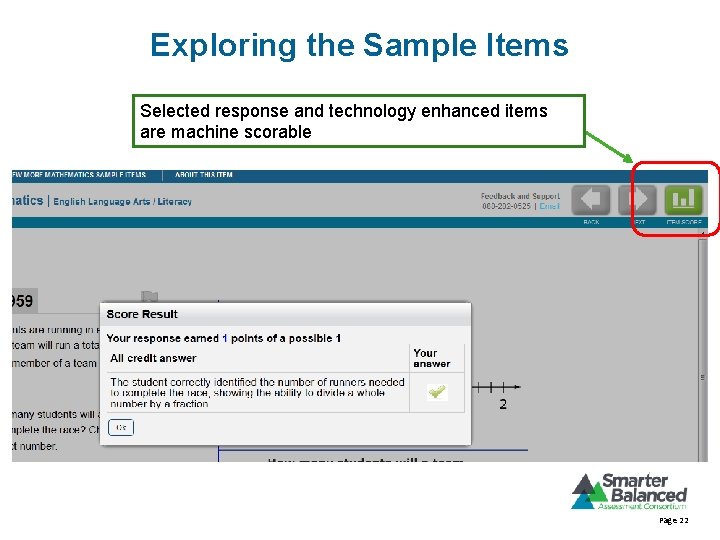

Exploring the Sample Items

Selected-response and technology-enhanced items are machine scorable.

Accessibility and Accommodations

- Sample items do not include accessibility and accommodations features.
- As part of the development of accessibility and accommodations policies, the Consortium commissioned research on best practices for assessing English language learners and students with disabilities.
- When fully operational, Smarter Balanced will provide translation accommodation options for all math items.
- For the Pilot, Smarter Balanced will provide full Spanish translations and translated Spanish pop-up glossaries that are customized at the item level for a specific form for each of three grades (almost 70 items each). Each school level (elementary, middle, and high school) will have one grade with the special form.
- The Consortium will need ELLs to participate in the Pilot to make sure they are represented in the student sample.

Accessibility and Accommodations

- Full range of accessibility tools and accommodations options under development, guided by:
  - Magda Chia, Ph.D., Director of Support for Under-Represented Students
  - Accessibility and Accommodations Work Group
  - Students with Disabilities Advisory Committee (Chair: Martha Thurlow, NCEO)
  - English Language Learners Advisory Committee
- These teams of experts will ensure that the assessments provide valid, reliable, and fair measures of achievement and growth for both English learners and students with disabilities.
- Smarter Balanced will also continue working with educators and experts in the field to design and test the assessment system.
- Learn more online: http://www.smarterbalanced.org/parents-students/support-for-under-represented-students/

Scoring Technology

- Templates
- Optical Scanning
  - Scantron
  - Electronic image-based scoring (e.g., Pearson e-Pen)
  - Scan to Score
- Traditional machine scoring
  - Dichotomous (correct/incorrect) scoring most common
  - Exact word, number, or grid matches
  - No partial credit
- Automated Scoring
  - Allows scoring of short answer and essay questions
  - Requires a set of human-scored papers to develop the scoring model
  - Can give partial credit or multiple-point scores
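Traditional machine scoring as described on this slide can be sketched in a few lines of Python. This is an illustration only: the answer key, the item identifiers, and the helper names `normalize`, `score_dichotomous`, and `score_form` are hypothetical, and ignoring case and surrounding whitespace is an assumption about what counts as an exact match.

```python
def normalize(response):
    """Collapse whitespace and ignore case so trivial differences do not matter."""
    return " ".join(str(response).split()).lower()

def score_dichotomous(response, key):
    """Return 1 for an exact match with the answer key, otherwise 0."""
    return 1 if normalize(response) == normalize(key) else 0

def score_form(responses, answer_key):
    """Score every item on a form; unanswered items earn 0."""
    return {
        item: score_dichotomous(responses.get(item, ""), key)
        for item, key in answer_key.items()
    }

# Hypothetical three-item form.
answer_key = {"q1": "B", "q2": "42", "q3": "photosynthesis"}
responses = {"q1": "b", "q2": "42.0", "q3": " Photosynthesis "}
scores = score_form(responses, answer_key)
# q2 earns 0: "42.0" is not an exact match for "42", and there is no partial credit.
```

The `q2` case shows the limitation the slide points to: a response that a human would accept still scores zero unless the key anticipates its exact form.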

How Automated Scoring Works

- Uses a set of human-scored examples to develop a statistical model used to analyze answers
- Generally examines overall form and specific combinations of words
- Has an extensive library of possible meanings for words
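The general idea of learning from human-scored examples can be shown with a toy Python sketch. It does not represent how any vendor's engine works: it swaps in a simple similarity-weighted average over the nearest scored examples, uses word and word-pair counts as a stand-in for "combinations of words," and omits the library of word meanings entirely. The class name `SimilarityScorer` and the training responses are hypothetical.

```python
import math
from collections import Counter

def features(text):
    """Count single words plus adjacent word pairs (a crude view of word combinations)."""
    words = text.lower().split()
    return Counter(words) + Counter(zip(words, words[1:]))

def cosine(a, b):
    """Cosine similarity between two feature-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SimilarityScorer:
    """Toy scorer: predicts from the k most similar human-scored responses."""

    def __init__(self, scored_examples):
        self.examples = [(features(text), score) for text, score in scored_examples]

    def score(self, response, k=2):
        f = features(response)
        nearest = sorted(
            ((cosine(f, ex_f), s) for ex_f, s in self.examples),
            key=lambda pair: pair[0],
            reverse=True,
        )[:k]
        total = sum(sim for sim, _ in nearest)
        if total == 0:
            # Nothing in common with any example; a real system would likely
            # route such a response to a human reader instead.
            return 0
        return round(sum(sim * s for sim, s in nearest) / total)

# Hypothetical human-scored responses on a 0-2 rubric.
training = [
    ("plants use sunlight water and carbon dioxide to make sugar and oxygen", 2),
    ("plants use sunlight to make food", 1),
    ("plants grow in soil", 0),
    ("i do not know", 0),
]
scorer = SimilarityScorer(training)
predicted = scorer.score("plants use sunlight and water to make sugar")
```

Because the prediction is an average of rubric scores, it can land on intermediate points, which is how a model of this kind can award partial credit.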

What can be scored?

- Written responses
  - Prompt-specific essays
  - Prompt-independent essays
  - Short answers
  - Summaries
- Spoken language
  - Correctness
  - Fluency
- Responses to simulations
  - Diagnosis of a patient’s illness
  - Landing a plane

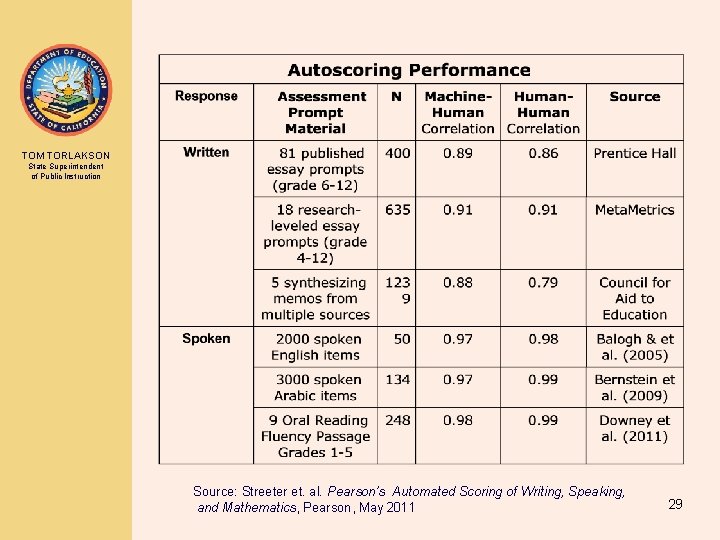

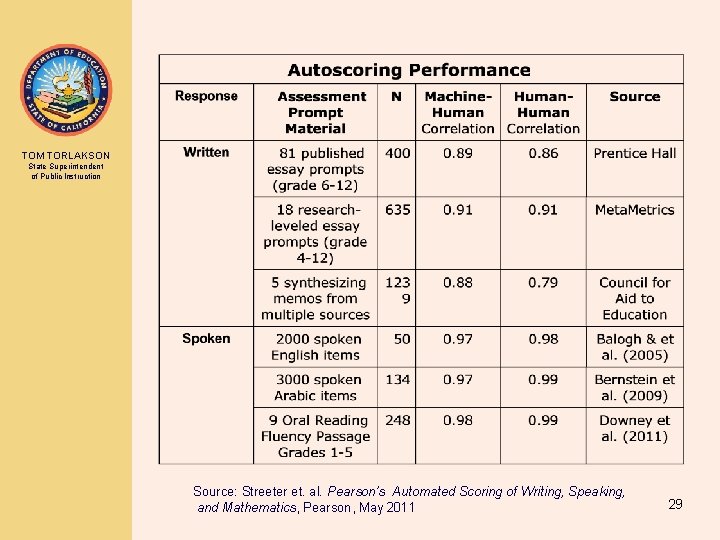

How good is automated scoring?

According to ETS, Pearson, and the College Board in the recent report “Automated Scoring for the Common Core Standards”:

- Consistent with the scores from expert human graders
- The way automated scores are produced is understandable and meaningful
- Fair
- Validated against external measures in the same way as is done with human scoring
- The impact of automated scoring on reported scores is understood
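Consistency with human graders is often summarized with exact and adjacent agreement rates. The Python sketch below illustrates those two statistics only; the slide does not say which measures the cited report uses, and the score lists and the function name `agreement_rates` are hypothetical.

```python
def agreement_rates(human, machine):
    """Return (exact, adjacent) agreement between two equal-length score lists.

    Exact: the two scores are identical.
    Adjacent: the two scores differ by at most one rubric point.
    """
    if len(human) != len(machine) or not human:
        raise ValueError("score lists must be non-empty and the same length")
    n = len(human)
    exact = sum(h == m for h, m in zip(human, machine)) / n
    adjacent = sum(abs(h - m) <= 1 for h, m in zip(human, machine)) / n
    return exact, adjacent

# Hypothetical scores for eight essays on a 0-4 rubric.
human = [0, 1, 2, 3, 2, 1, 4, 3]
machine = [0, 1, 2, 2, 2, 1, 2, 3]
exact, adjacent = agreement_rates(human, machine)
# exact is 0.75 (6 of 8 match); adjacent is 0.875 (7 of 8 within one point).
```

Running the same calculation on two human readers gives a natural baseline against which the human-machine rates can be compared.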

Source: Streeter et al., Pearson’s Automated Scoring of Writing, Speaking, and Mathematics, Pearson, May 2011

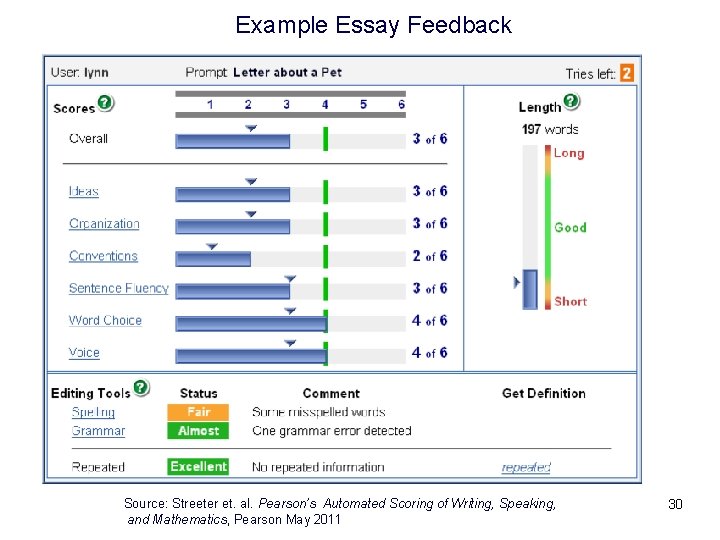

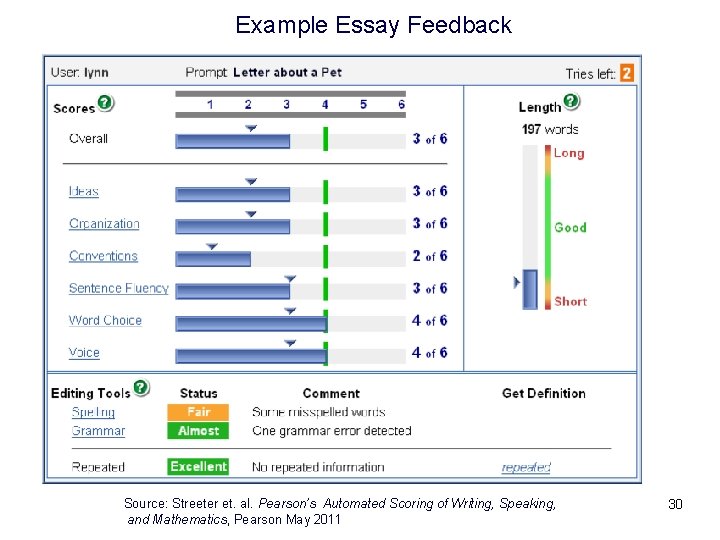

Example Essay Feedback

Source: Streeter et al., Pearson’s Automated Scoring of Writing, Speaking, and Mathematics, Pearson, May 2011

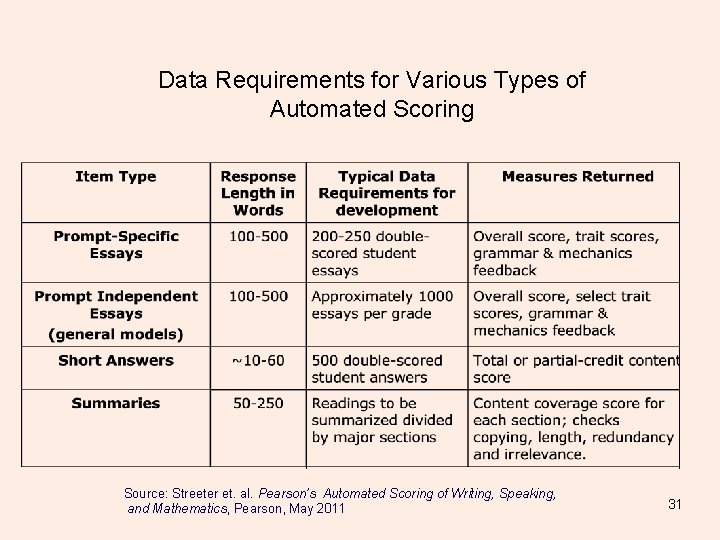

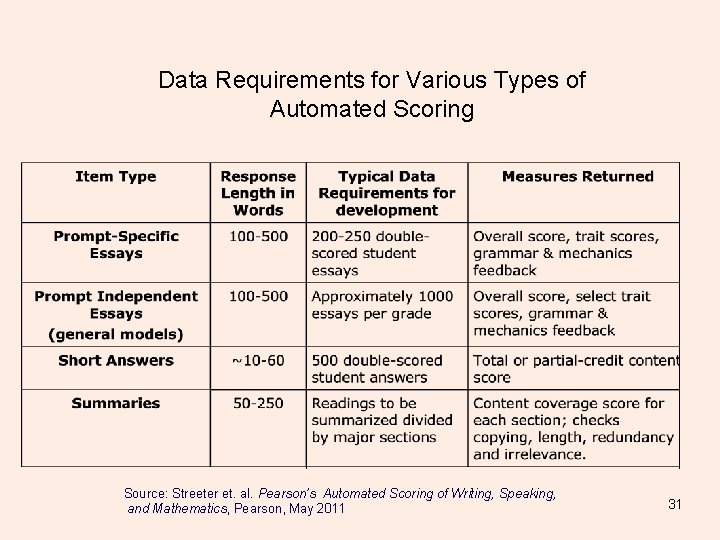

Data Requirements for Various Types of Automated Scoring

Source: Streeter et al., Pearson’s Automated Scoring of Writing, Speaking, and Mathematics, Pearson, May 2011

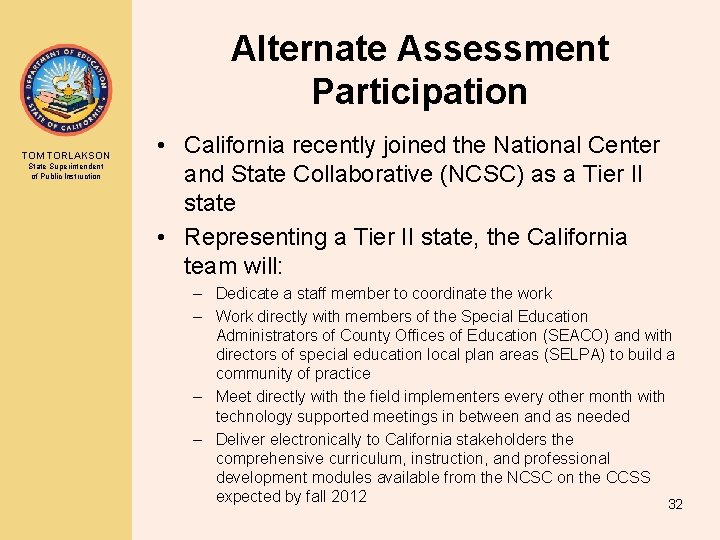

Alternate Assessment Participation

- California recently joined the National Center and State Collaborative (NCSC) as a Tier II state.
- Representing a Tier II state, the California team will:
  - Dedicate a staff member to coordinate the work
  - Work directly with members of the Special Education Administrators of County Offices of Education (SEACO) and with directors of special education local plan areas (SELPA) to build a community of practice
  - Meet directly with the field implementers every other month, with technology-supported meetings in between and as needed
  - Deliver electronically to California stakeholders the comprehensive curriculum, instruction, and professional development modules on the CCSS available from the NCSC, expected by fall 2012

STAR In-Transition Activities

- Considerations
  - SBAC
  - CAT
  - ELA and Math Only
  - System
  - Formative, Interim, and Summative
- Specific Planned Activities

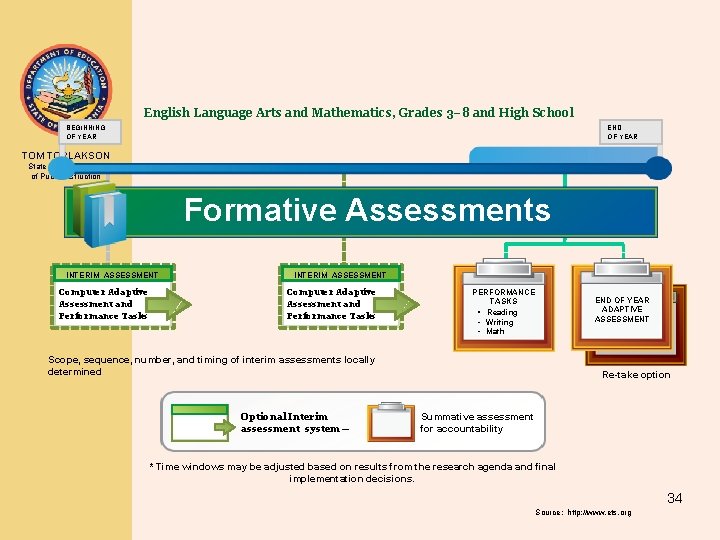

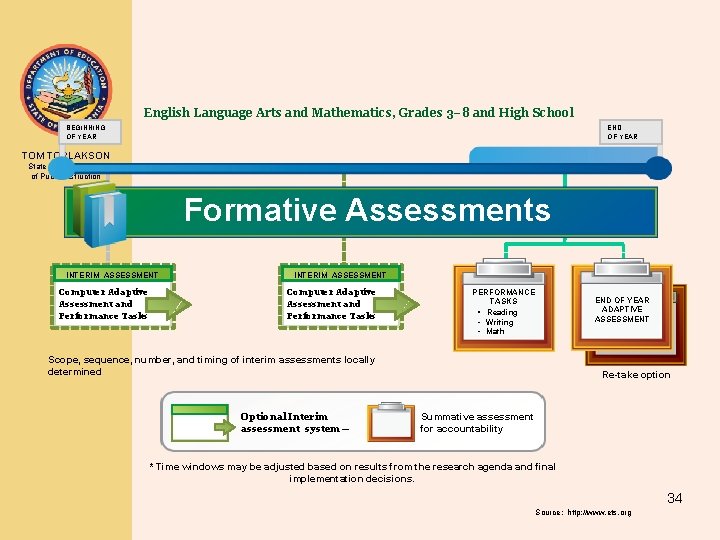

English Language Arts and Mathematics, Grades 3–8 and High School

[Diagram: assessment system components across the school year, from BEGINNING OF YEAR to END OF YEAR]

- Formative Assessments: DIGITAL CLEARINGHOUSE of formative tools, processes and exemplars; released items and tasks; model curriculum units; educator training; professional development tools and resources; scorer training modules; and teacher collaboration tools.
- Optional Interim assessment system: INTERIM ASSESSMENT (Computer Adaptive Assessment and Performance Tasks). Scope, sequence, number, and timing of interim assessments locally determined.
- Summative assessment for accountability (last 12 weeks of year*): PERFORMANCE TASKS (Reading, Writing, Math) and END OF YEAR ADAPTIVE ASSESSMENT, with a re-take option.

\* Time windows may be adjusted based on results from the research agenda and final implementation decisions.

Source: http://www.ets.org

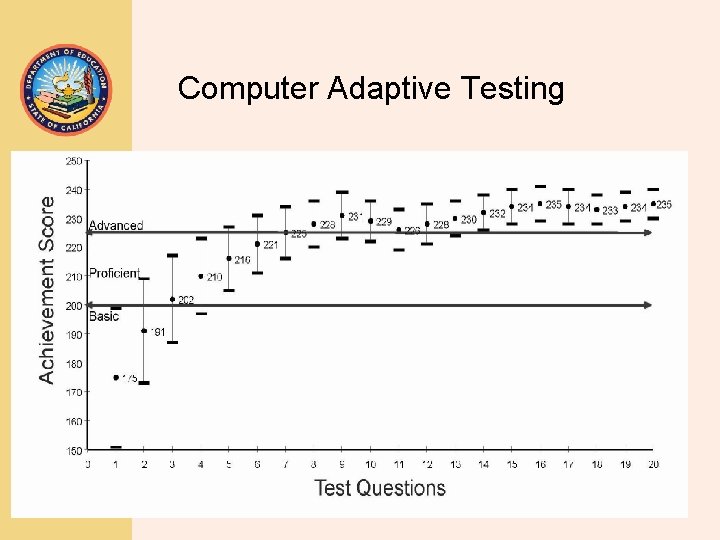

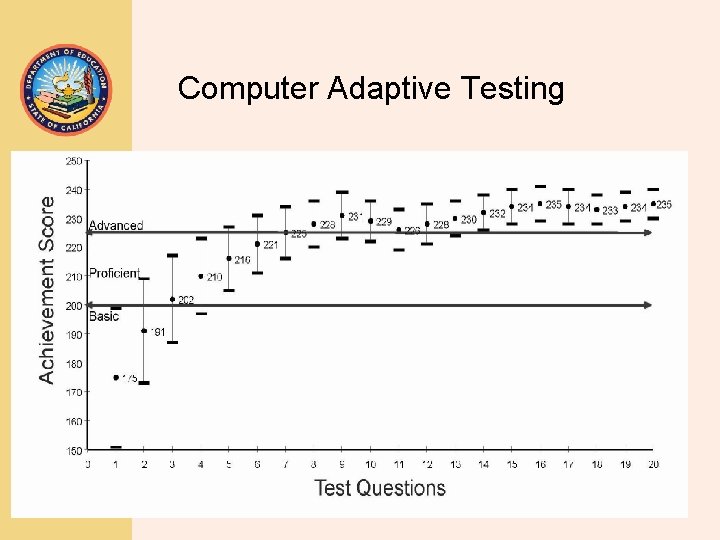

Computer Adaptive Testing

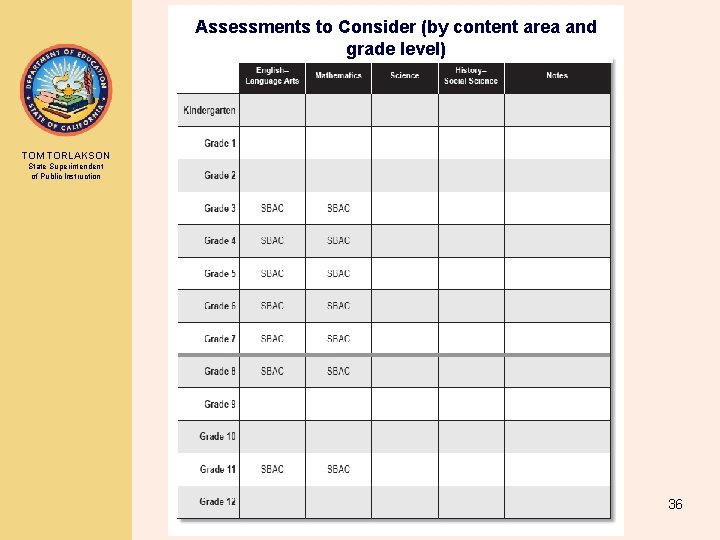

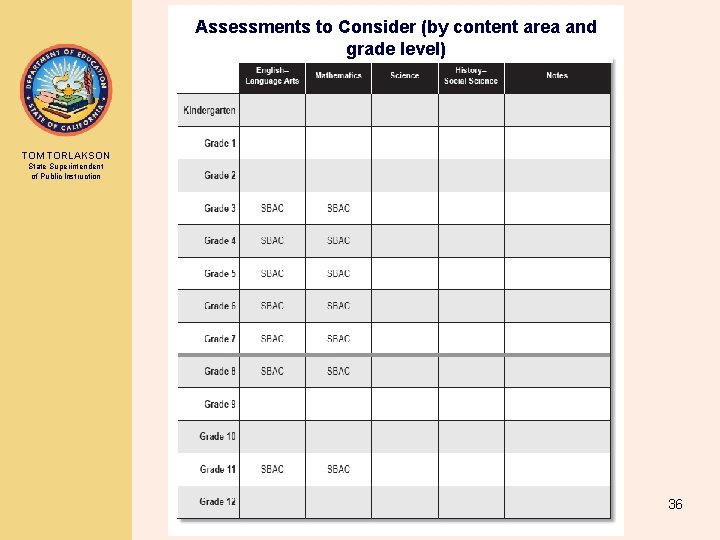

Assessments to Consider (by content area and grade level)

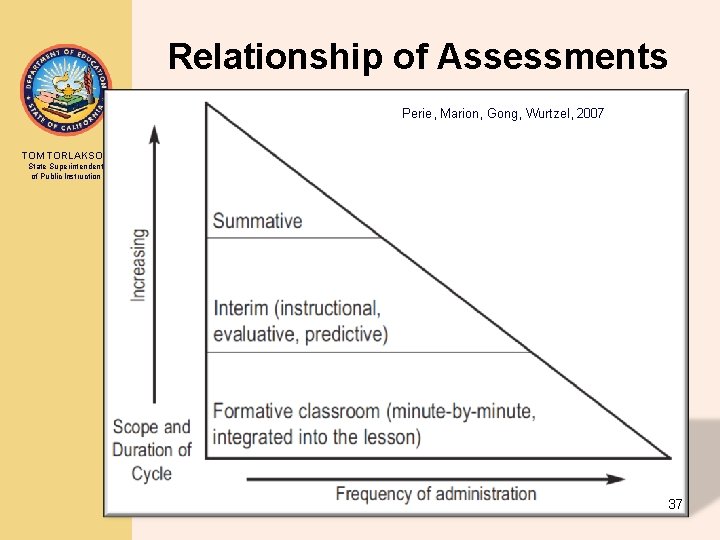

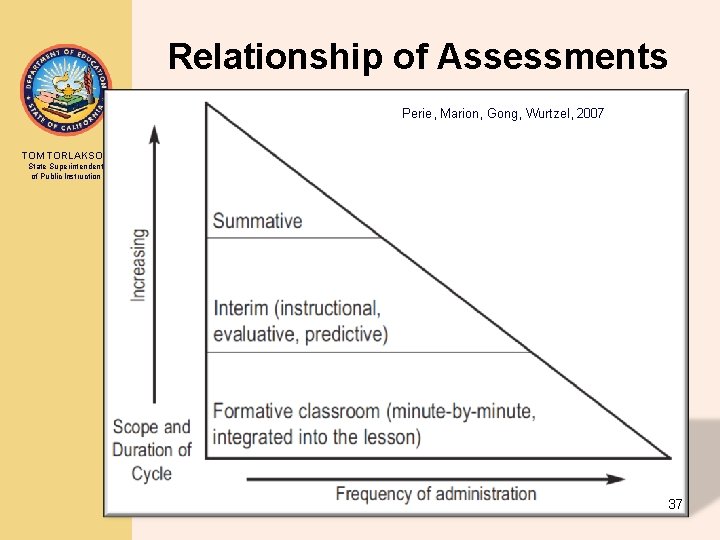

Relationship of Assessments (Perie, Marion, Gong, Wurtzel, 2007)

STAR In-Transition Activities

Alignment to Common Core State Standards:

- Planning to provide CCSS-aligned results on STAR Student Reports
- Planning to align CST released test questions (RTQs) with California’s Common Core State Standards (CCSS)

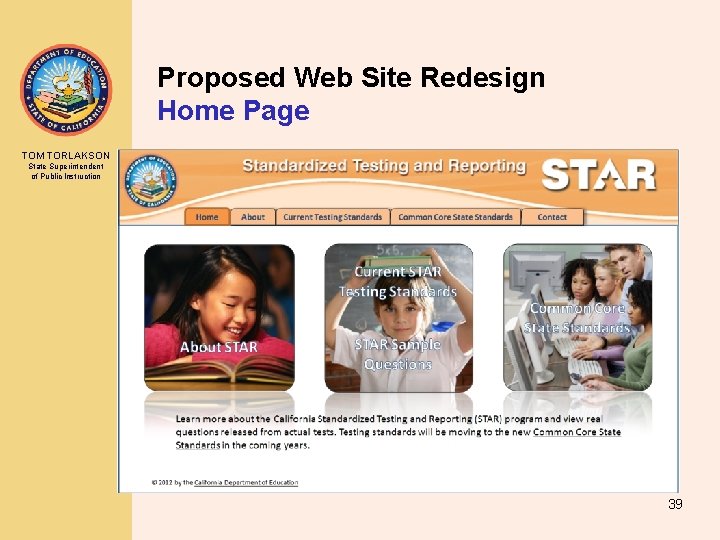

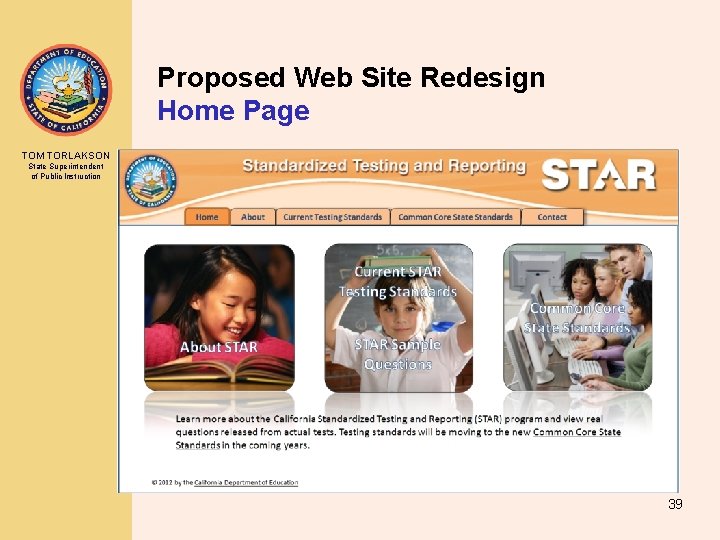

Proposed Web Site Redesign: Home Page

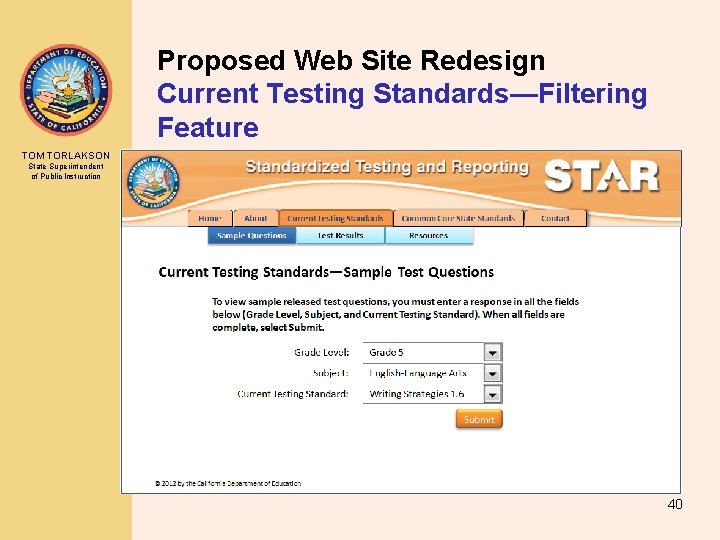

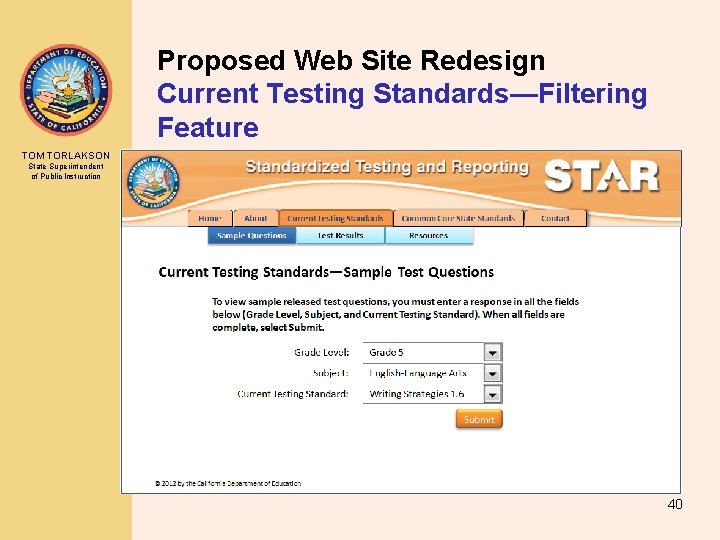

Proposed Web Site Redesign: Current Testing Standards (Filtering Feature)

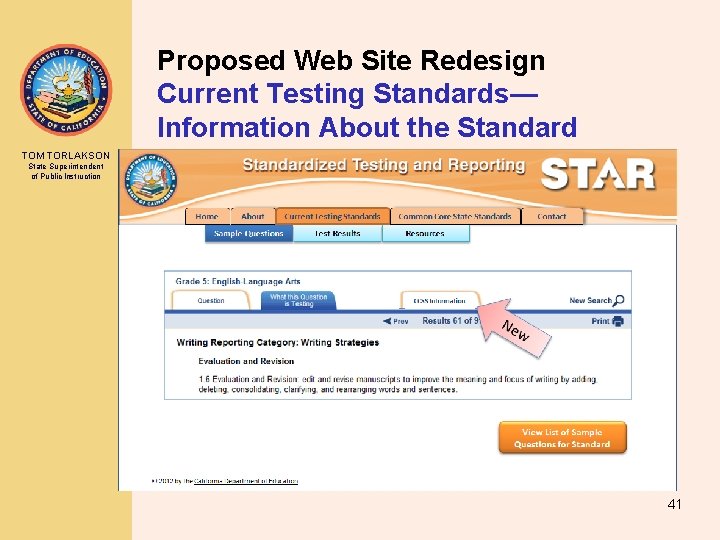

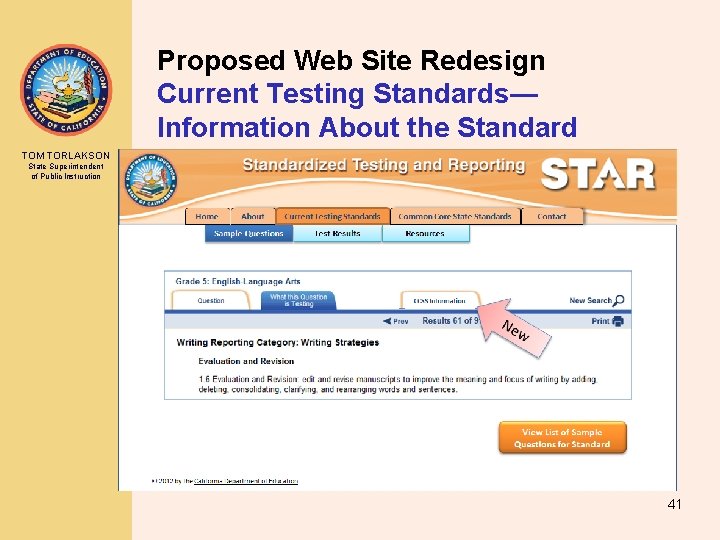

Proposed Web Site Redesign: Current Testing Standards (Information About the Standard)

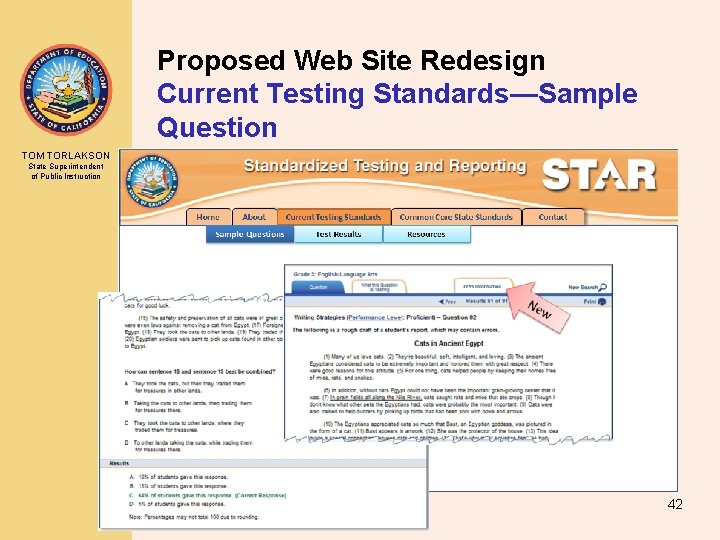

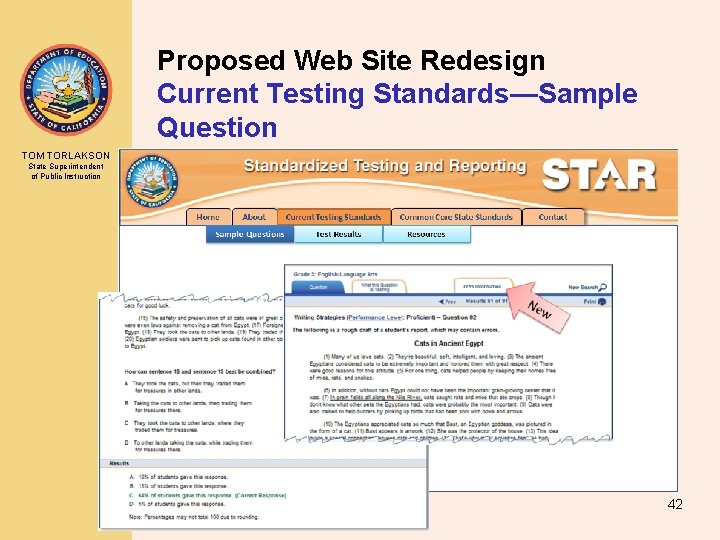

Proposed Web Site Redesign: Current Testing Standards (Sample Question)

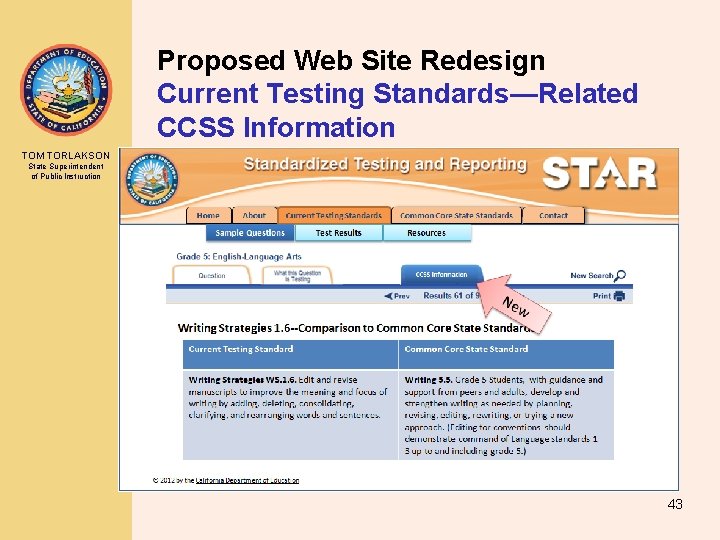

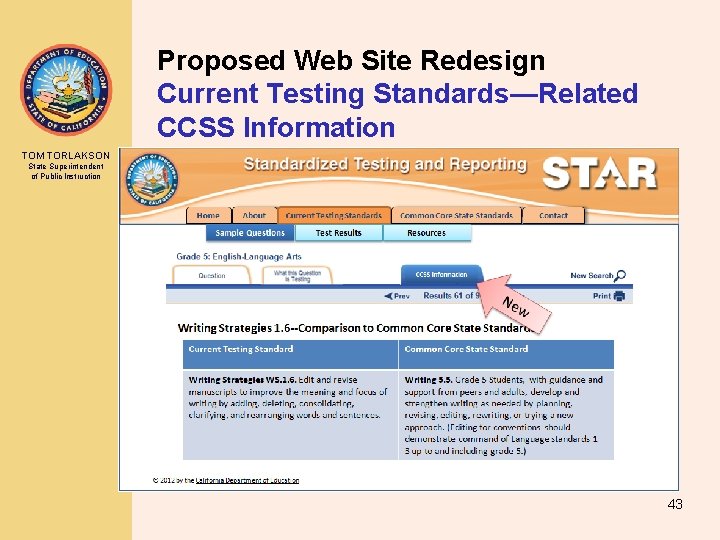

Proposed Web Site Redesign: Current Testing Standards (Related CCSS Information)

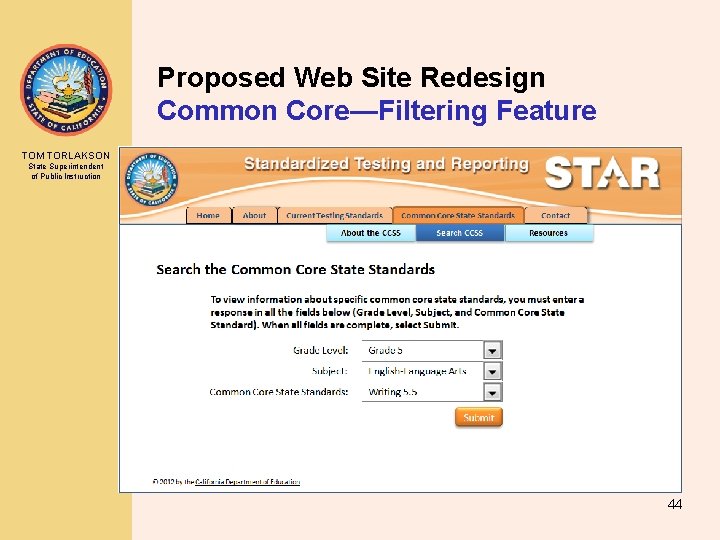

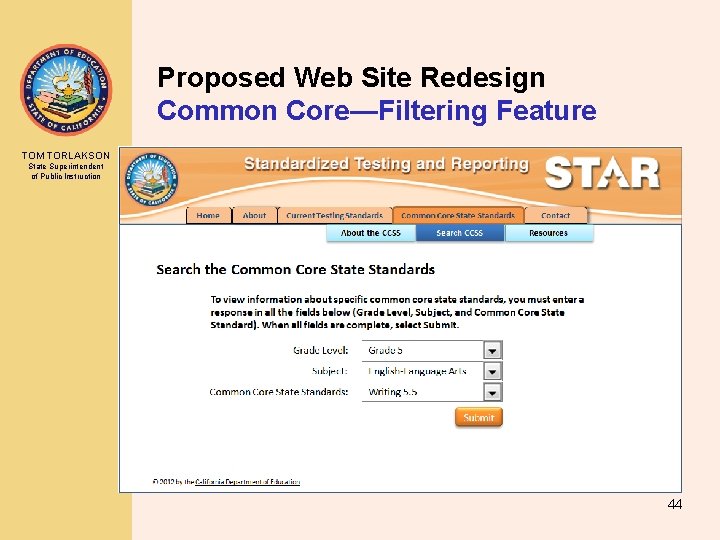

Proposed Web Site Redesign: Common Core (Filtering Feature)

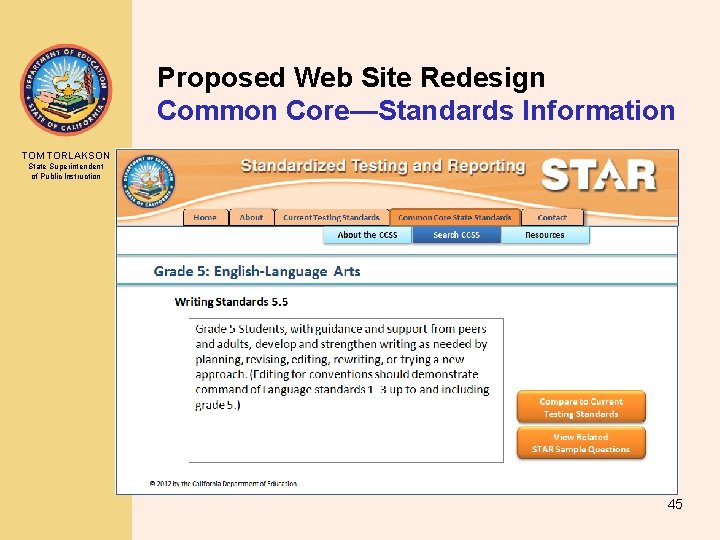

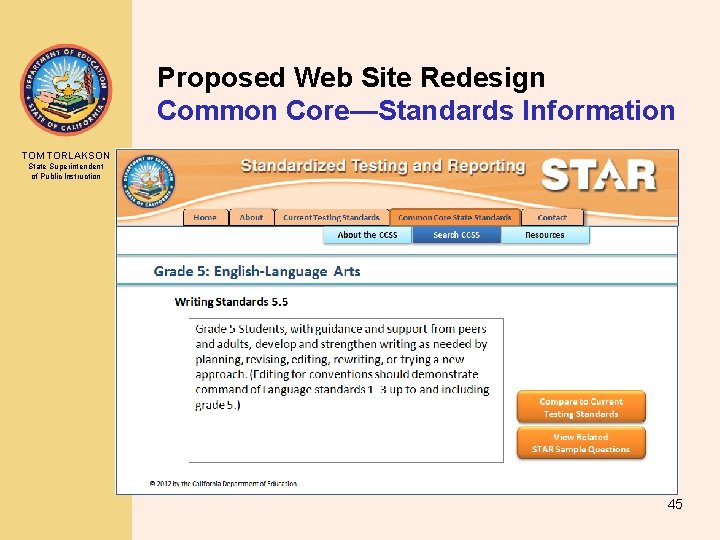

Proposed Web Site Redesign: Common Core (Standards Information)

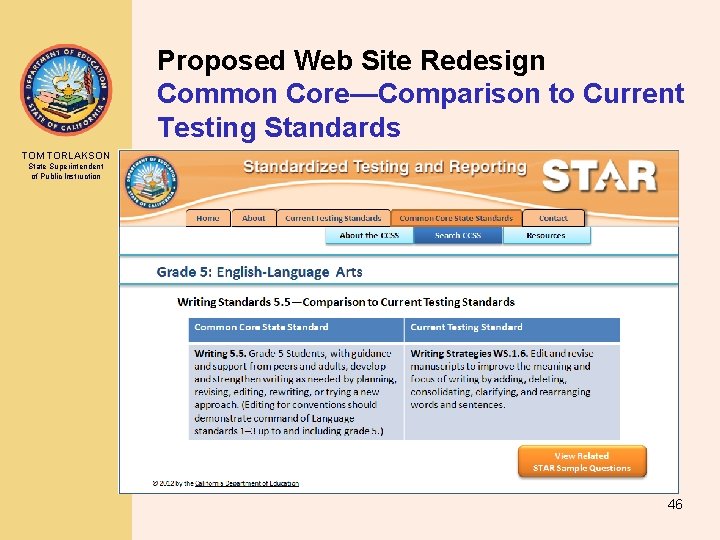

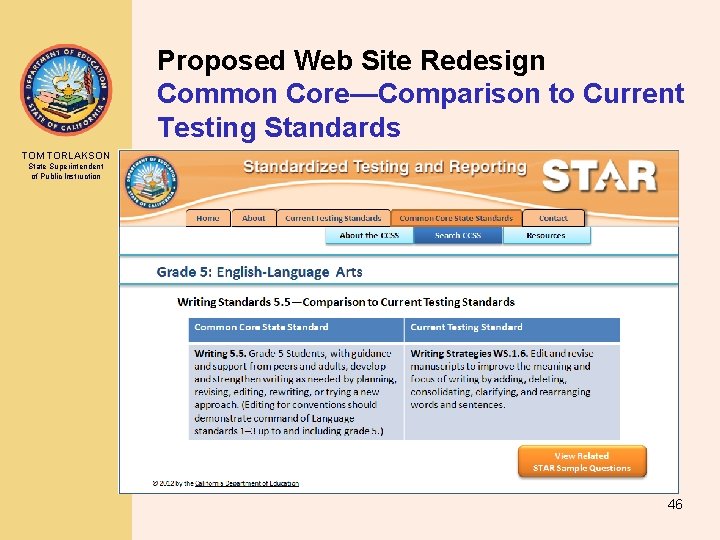

Proposed Web Site Redesign: Common Core (Comparison to Current Testing Standards)

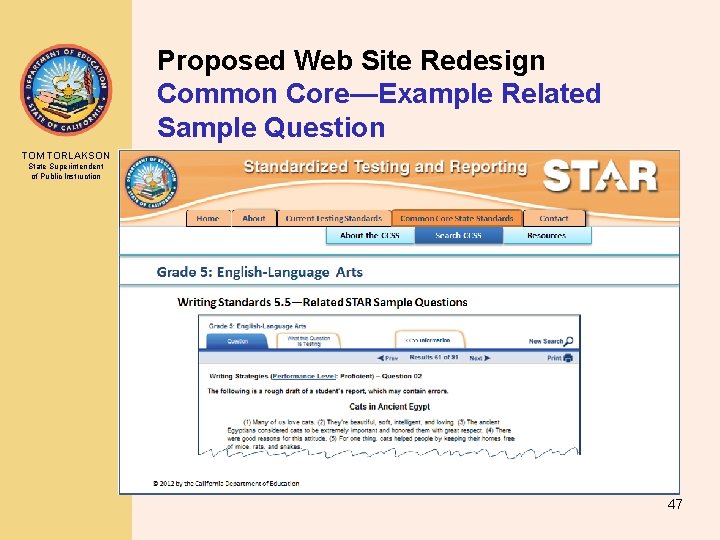

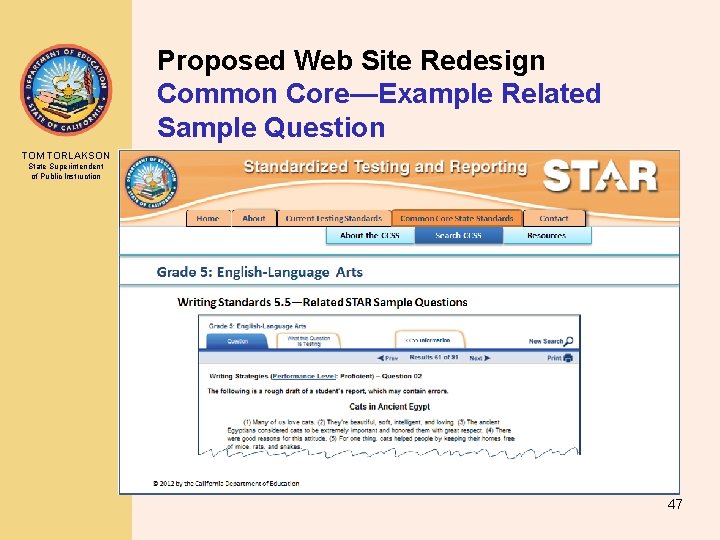

Proposed Web Site Redesign: Common Core (Example Related Sample Question)

Contact Information

- Patrick Traynor, Ph.D., Director, Assessment Development and Administration Division
  - E-mail: ptraynor@cde.ca.gov
- Jessica Valdez, Administrator, Transition Office, Assessment Development and Administration Division
  - Phone: 916-319-0332
  - E-mail: jvaldez@cde.ca.gov
- Jessica Barr, SBAC and Reauthorization Lead Consultant, Transition Office, Assessment Development and Administration Division
  - Phone: 916-319-0364
  - E-mail: jbarr@cde.ca.gov