
Calibration

Camera Calibration

- Geometric
  - Intrinsics: focal length, principal point, distortion
  - Extrinsics: position, orientation
- Radiometric
  - Mapping between pixel value and scene radiance
  - Can be nonlinear at a pixel (gamma, etc.)
  - Can vary between pixels (vignetting, $\cos^4$ falloff, etc.)
  - Dynamic range (calibrate shutter speed, etc.)

Geometric Calibration Issues

- Camera model
  - Orthogonal axes?
  - Square pixels?
  - Distortion?
- Calibration target
  - Known 3D points, noncoplanar
  - Known 3D points, coplanar
  - Unknown 3D points (structure from motion)
  - Other features (e.g., known straight lines)

Geometric Calibration Issues

- Optimization method
  - Depends on camera model, available data
  - Linear vs. nonlinear model
  - Closed form vs. iterative
  - Intrinsics only vs. extrinsics only vs. both
  - Need initial guess?

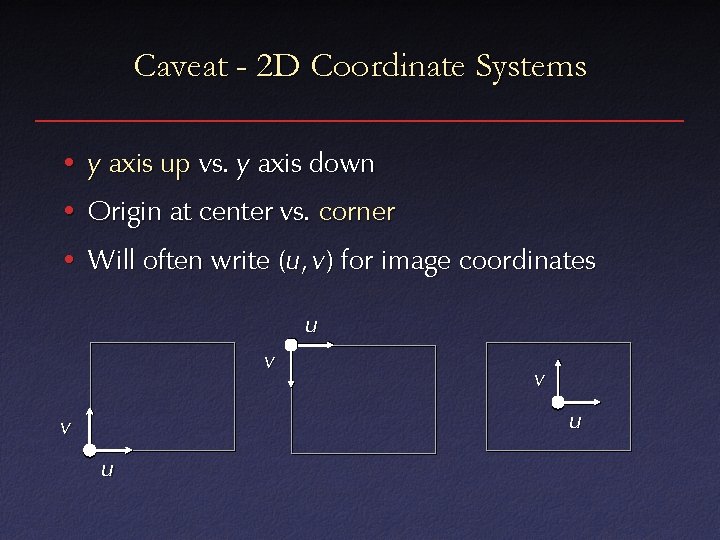

Caveat: 2D Coordinate Systems

- y axis up vs. y axis down
- Origin at center vs. corner
- Will often write $(u, v)$ for image coordinates
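A minimal sketch of converting between the two conventions named above, assuming an image of `width` by `height` pixels and ignoring half-pixel offsets. The function names are hypothetical and only illustrate the bookkeeping.

```python
def center_up_to_corner_down(u, v, width, height):
    """Origin at image center, y up  ->  origin at top-left corner, y down."""
    return u + width / 2.0, height / 2.0 - v


def corner_down_to_center_up(u, v, width, height):
    """Origin at top-left corner, y down  ->  origin at image center, y up."""
    return u - width / 2.0, height / 2.0 - v


# The image center maps to the middle of a 640x480 pixel grid.
center_px = center_up_to_corner_down(0.0, 0.0, 640, 480)
# Converting there and back returns the starting point.
round_trip = corner_down_to_center_up(
    *center_up_to_corner_down(12.5, -7.0, 640, 480), 640, 480
)
```

Mixing up these conventions typically shows up as a flipped or shifted principal point, so it is worth fixing one convention before writing any calibration code.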

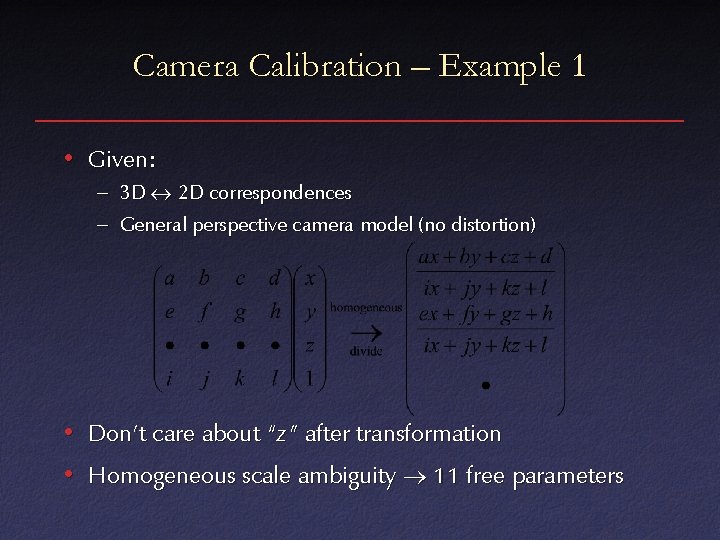

Camera Calibration – Example 1

- Given:
  - 3D-to-2D correspondences
  - General perspective camera model (no distortion)
- Don’t care about “z” after transformation
- Homogeneous scale ambiguity: the 12 entries of the 3×4 projection matrix are defined only up to scale, leaving 11 free parameters
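The scale ambiguity can be checked numerically. The sketch below assumes the general perspective model is a 3×4 matrix `M` applied to homogeneous 3D points; the matrix entries and test points are made-up values for illustration.

```python
import numpy as np


def project(M, pts3d):
    """Project Nx3 points with a 3x4 matrix and divide out the homogeneous scale."""
    pts_h = np.hstack([pts3d, np.ones((len(pts3d), 1))])  # N x 4 homogeneous points
    uvw = pts_h @ M.T                                      # N x 3
    return uvw[:, :2] / uvw[:, 2:3]                        # N x 2 image coordinates


# Illustrative values only.
M = np.array([[800.0, 0.0, 320.0, 10.0],
              [0.0, 800.0, 240.0, -5.0],
              [0.0, 0.0, 1.0, 2.0]])
pts = np.array([[0.1, -0.2, 3.0],
                [0.5, 0.4, 4.0]])

uv = project(M, pts)
uv_scaled = project(2.5 * M, pts)  # any nonzero multiple of M gives the same image points
```

Because `M` and `2.5 * M` produce identical image coordinates, only 11 of the 12 entries can be recovered from correspondences.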

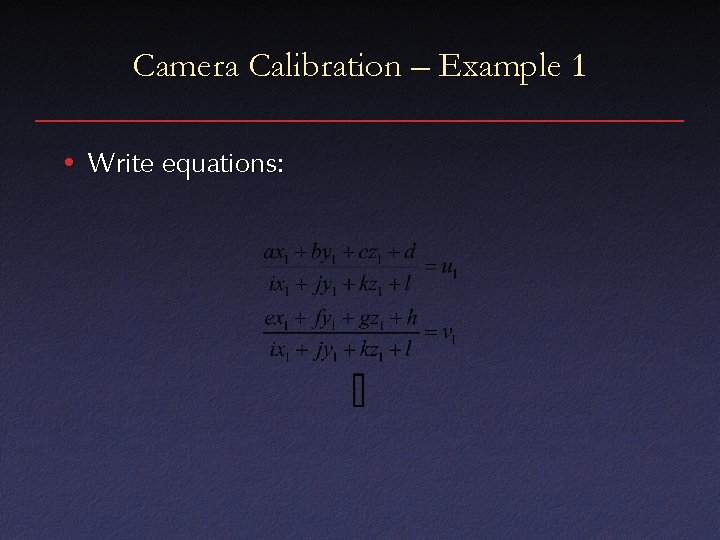

Camera Calibration – Example 1 • Write equations:
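The equations on this slide survive only as an image. A sketch of the standard form they usually take, assuming the 3×4 projection matrix has entries $m_{jk}$ (a naming chosen here, not taken from the slide) and one correspondence $(x_i, y_i, z_i) \leftrightarrow (u_i, v_i)$:

```latex
u_i = \frac{m_{11} x_i + m_{12} y_i + m_{13} z_i + m_{14}}
           {m_{31} x_i + m_{32} y_i + m_{33} z_i + m_{34}},
\qquad
v_i = \frac{m_{21} x_i + m_{22} y_i + m_{23} z_i + m_{24}}
           {m_{31} x_i + m_{32} y_i + m_{33} z_i + m_{34}}

% Multiplying through by the denominator gives two equations per point,
% each linear in the unknown entries m_{jk}:
m_{11} x_i + m_{12} y_i + m_{13} z_i + m_{14}
  - u_i \,(m_{31} x_i + m_{32} y_i + m_{33} z_i + m_{34}) = 0

m_{21} x_i + m_{22} y_i + m_{23} z_i + m_{24}
  - v_i \,(m_{31} x_i + m_{32} y_i + m_{33} z_i + m_{34}) = 0
```

Stacking these rows for all correspondences yields a homogeneous system $Ax = 0$ in the 12 unknowns.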

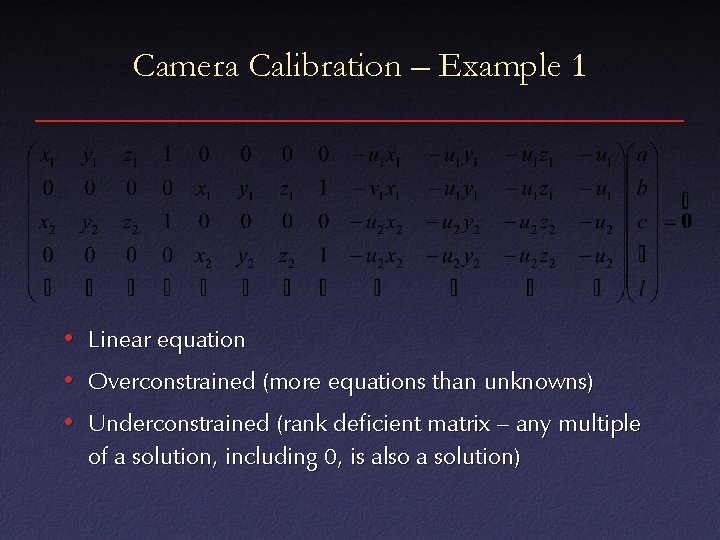

Camera Calibration – Example 1

- Linear equation
- Overconstrained (more equations than unknowns)
- Underconstrained (rank-deficient matrix: any multiple of a solution, including 0, is also a solution)

Camera Calibration – Example 1

- Standard linear least squares methods for $Ax = 0$ will give the solution $x = 0$
- Instead, look for a solution with $|x| = 1$
- That is, minimize $|Ax|^2$ subject to $|x|^2 = 1$

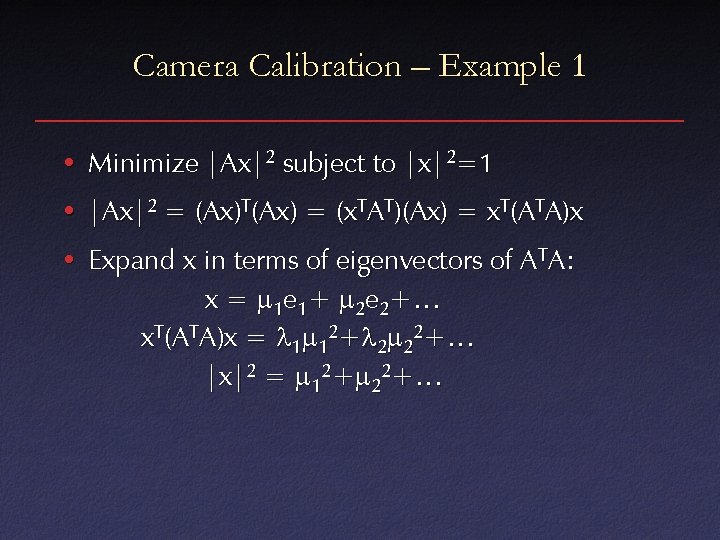

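Camera Calibration – Example 1

- Minimize $|Ax|^2$ subject to $|x|^2 = 1$
- $|Ax|^2 = (Ax)^T (Ax) = (x^T A^T)(Ax) = x^T (A^T A)\, x$
- Expand $x$ in terms of the orthonormal eigenvectors $e_i$ of $A^T A$, with eigenvalues $\lambda_i$:

$$x = \mu_1 e_1 + \mu_2 e_2 + \dots$$

$$x^T (A^T A)\, x = \lambda_1 \mu_1^2 + \lambda_2 \mu_2^2 + \dots$$

$$|x|^2 = \mu_1^2 + \mu_2^2 + \dots$$

Camera Calibration – Example 1

- To minimize $\lambda_1 \mu_1^2 + \lambda_2 \mu_2^2 + \dots$ subject to $\mu_1^2 + \mu_2^2 + \dots = 1$, set $\mu_{\min} = 1$ (the coefficient of the eigenvector with the smallest eigenvalue) and all other $\mu_i = 0$
- Thus, the least squares solution is the eigenvector corresponding to the minimum (non-zero) eigenvalue of $A^T A$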
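The eigenvector recipe above can be sketched in a few lines of NumPy. This is an illustrative implementation, not the course's code: it assumes each correspondence contributes the usual two rows to $A$, and the function name `calibrate_dlt` and the test data are made up. In practice the SVD of $A$ is usually preferred over forming $A^T A$, since it is better conditioned.

```python
import numpy as np


def calibrate_dlt(pts3d, pts2d):
    """Estimate a 3x4 projection matrix (up to scale) from 3D-2D correspondences."""
    rows = []
    for (x, y, z), (u, v) in zip(pts3d, pts2d):
        X = [x, y, z, 1.0]
        rows.append(X + [0.0] * 4 + [-u * c for c in X])  # row from the u equation
        rows.append([0.0] * 4 + X + [-v * c for c in X])  # row from the v equation
    A = np.array(rows)
    # eigh returns eigenvalues in ascending order, so column 0 minimizes |Ax|^2 with |x| = 1.
    _, eigvecs = np.linalg.eigh(A.T @ A)
    return eigvecs[:, 0].reshape(3, 4)


def normalized(M):
    """Remove the scale and sign ambiguity so two estimates can be compared."""
    M = M / np.linalg.norm(M)
    return M if M[2, 3] > 0 else -M


# Synthetic, noise-free test data (illustrative values only).
M_true = np.array([[1.0, 0.0, 0.2, 0.1],
                   [0.0, 1.0, 0.1, -0.2],
                   [0.0, 0.0, 1.0, 4.0]])
rng = np.random.default_rng(0)
pts3d = rng.uniform(-1.0, 1.0, size=(12, 3))        # noncoplanar points
uvw = np.hstack([pts3d, np.ones((12, 1))]) @ M_true.T
pts2d = uvw[:, :2] / uvw[:, 2:3]

M_est = calibrate_dlt(pts3d, pts2d)
```

With noise-free data, `normalized(M_est)` matches `normalized(M_true)` to numerical precision; with noisy data the smallest eigenvalue is no longer zero and the result is the least squares estimate.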

Camera Calibration – Example 2

- Incorporating additional constraints into camera model
  - No shear ($u$, $v$ axes orthogonal)
  - Square pixels
  - etc.
- Doing minimization in image space
- All of these impose nonlinear constraints on camera parameters

Camera Calibration – Example 2

- Option 1: nonlinear least squares
  - Usually “gradient descent” techniques
  - e.g., Levenberg-Marquardt
- Option 2: solve for general perspective model, find closest solution that satisfies constraints
  - Use closed-form solution as initial guess for iterative minimization

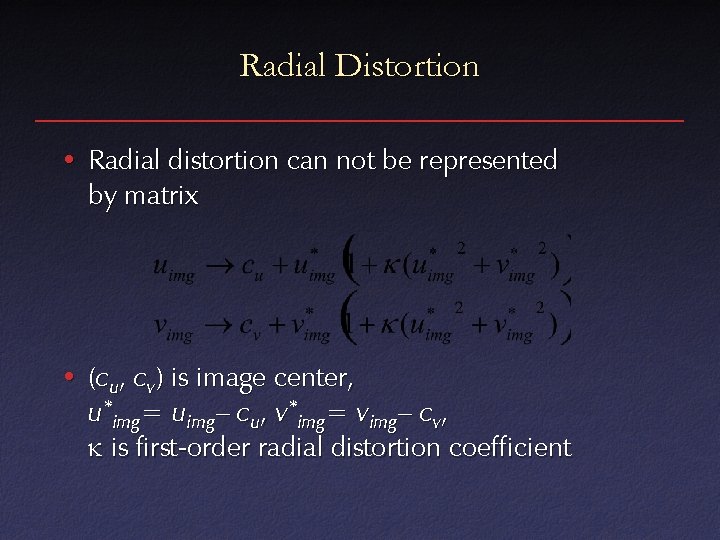

Radial Distortion

- Radial distortion cannot be represented by a matrix
- $(c_u, c_v)$ is the image center, $u^*_{img} = u_{img} - c_u$, $v^*_{img} = v_{img} - c_v$, and $k$ is the first-order radial distortion coefficient
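The distortion formula itself is only in the slide image. The sketch below assumes the common first-order form, in which the centered coordinates are scaled by $1 + k\,(u^{*2}_{img} + v^{*2}_{img})$; both that form and the function name `distort` are assumptions rather than something the text confirms.

```python
def distort(u_img, v_img, cu, cv, k):
    """Apply first-order radial distortion about the image center (cu, cv)."""
    us, vs = u_img - cu, v_img - cv          # centered coordinates u*, v*
    scale = 1.0 + k * (us * us + vs * vs)    # depends only on distance from the center
    return cu + us * scale, cv + vs * scale


# A point 100 pixels right of the center moves outward by 1% when k = 1e-6.
u_d, v_d = distort(420.0, 240.0, 320.0, 240.0, 1e-6)
```

The scale factor depends on the point's own position, which is why no single matrix can reproduce it.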

Camera Calibration – Example 3

- Incorporating radial distortion
- Option 1:
  - Find distortion first (e.g., straight lines in calibration target)
  - Warp image to eliminate distortion
  - Run (simpler) perspective calibration
- Option 2: nonlinear least squares
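Option 2 can be sketched as a small nonlinear least squares fit. Several things here are assumptions: the extrinsics are taken as known (points are given in camera coordinates), the intrinsics are a single focal length `f` with square pixels and no shear, distortion uses the first-order form $1 + k\,r^2$ on centered pixel coordinates, and plain Gauss-Newton stands in for Levenberg-Marquardt to keep the code short.

```python
import numpy as np


def project(params, pts_cam):
    """Pinhole projection with first-order radial distortion; params = (f, cu, cv, k)."""
    f, cu, cv, k = params
    us = f * pts_cam[:, 0] / pts_cam[:, 2]   # centered, undistorted pixel coordinates
    vs = f * pts_cam[:, 1] / pts_cam[:, 2]
    scale = 1.0 + k * (us**2 + vs**2)
    return np.column_stack([cu + us * scale, cv + vs * scale])


def jacobian(params, pts_cam):
    """Analytic derivatives of the projected coordinates with respect to (f, cu, cv, k)."""
    f, _, _, k = params
    xn = pts_cam[:, 0] / pts_cam[:, 2]
    yn = pts_cam[:, 1] / pts_cam[:, 2]
    r2n = xn**2 + yn**2
    J = np.zeros((len(pts_cam), 2, 4))
    J[:, 0, 0] = xn * (1.0 + 3.0 * k * f**2 * r2n)
    J[:, 1, 0] = yn * (1.0 + 3.0 * k * f**2 * r2n)
    J[:, 0, 1] = 1.0
    J[:, 1, 2] = 1.0
    J[:, 0, 3] = f**3 * xn * r2n
    J[:, 1, 3] = f**3 * yn * r2n
    return J.reshape(-1, 4)                  # rows ordered u0, v0, u1, v1, ...


def gauss_newton(params0, pts_cam, observed, iters=10):
    """Minimize the image-space reprojection error by repeated linearization."""
    p = np.asarray(params0, dtype=float)
    for _ in range(iters):
        r = (project(p, pts_cam) - observed).ravel()
        J = jacobian(p, pts_cam)
        col = np.linalg.norm(J, axis=0)      # column scaling: k has very different units
        p = p + np.linalg.lstsq(J / col, -r, rcond=None)[0] / col
    return p


# Synthetic, noise-free data (illustrative values only).
rng = np.random.default_rng(0)
pts_cam = np.column_stack([rng.uniform(-1, 1, 40),
                           rng.uniform(-1, 1, 40),
                           rng.uniform(2, 4, 40)])
true_params = np.array([800.0, 320.0, 240.0, -2e-7])
observed = project(true_params, pts_cam)

estimate = gauss_newton([500.0, 300.0, 200.0, 0.0], pts_cam, observed)
```

The initial guess plays the role of the closed-form solution mentioned for Example 2; with real, noisy detections a damped method such as Levenberg-Marquardt is the more robust choice.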

Calibration Targets

- Full 3D (nonplanar)
  - Can calibrate with one image
  - Difficult to construct
- 2D (planar)
  - Can be made more accurate
  - Need multiple views
  - Better constrained than full SFM problem

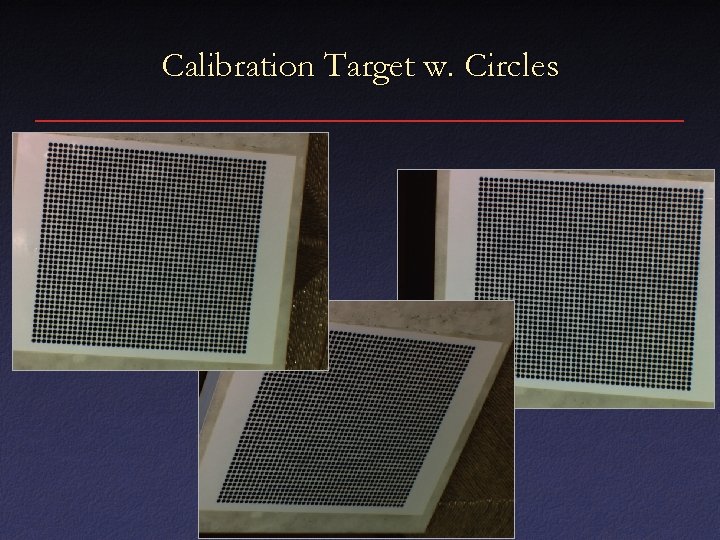

Calibration Targets

- Identification of features
  - Manual
  - Regular array, manually seeded
  - Regular array, automatically seeded
  - Color coding, patterns, etc.
- Subpixel estimation of locations
  - Circle centers
  - Checkerboard corners

Calibration Target with Circles

3D Target with Circles

![Planar Checkerboard Target [Bouguet]](http://slidetodoc.com/presentation_image_h/8805957a20f31816582c3e1232ccd2a0/image-20.jpg)

Planar Checkerboard Target [Bouguet]

![Coded Circles [Marschner et al.]](http://slidetodoc.com/presentation_image_h/8805957a20f31816582c3e1232ccd2a0/image-21.jpg)

Coded Circles [Marschner et al.]

![Concentric Coded Circles [Gortler et al.]](http://slidetodoc.com/presentation_image_h/8805957a20f31816582c3e1232ccd2a0/image-22.jpg)

Concentric Coded Circles [Gortler et al.]

![Color Coded Circles [Culbertson]](http://slidetodoc.com/presentation_image_h/8805957a20f31816582c3e1232ccd2a0/image-23.jpg)

Color Coded Circles [Culbertson]

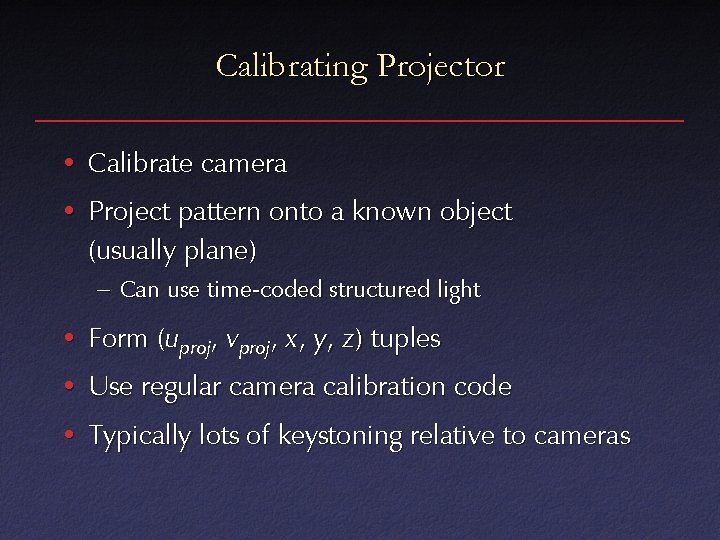

Calibrating Projector

- Calibrate camera
- Project pattern onto a known object (usually a plane)
  - Can use time-coded structured light
- Form $(u_{proj}, v_{proj}, x, y, z)$ tuples
- Use regular camera calibration code
- Typically lots of keystoning relative to cameras

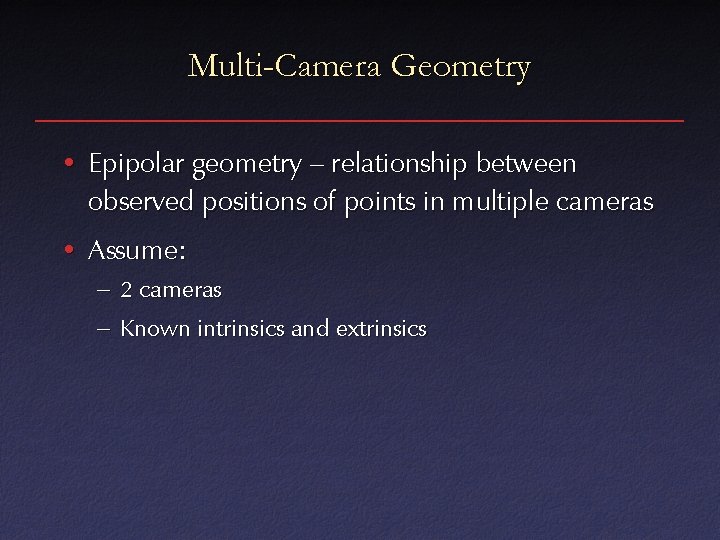

Multi-Camera Geometry

- Epipolar geometry: relationship between observed positions of points in multiple cameras
- Assume:
  - 2 cameras
  - Known intrinsics and extrinsics

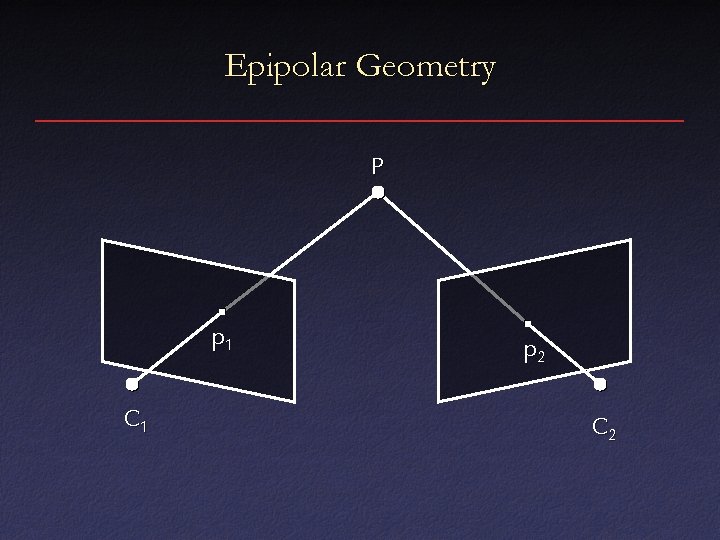

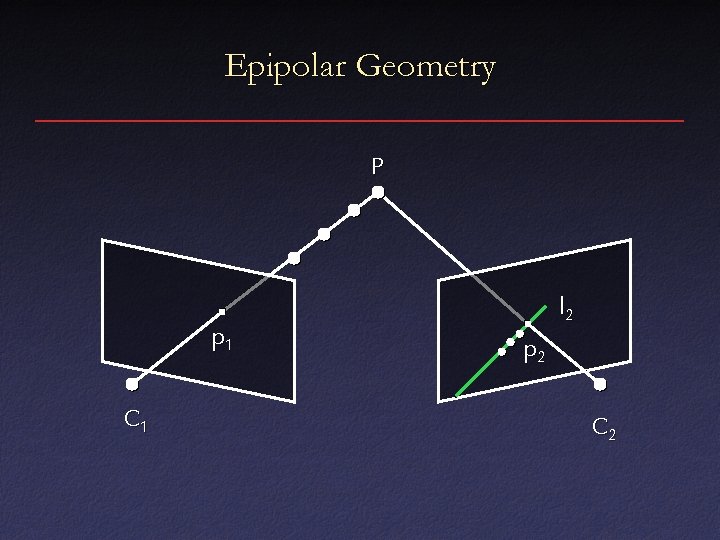

Epipolar Geometry

[Figure: scene point $P$, its image points $p_1$ and $p_2$, and camera centers $C_1$ and $C_2$]

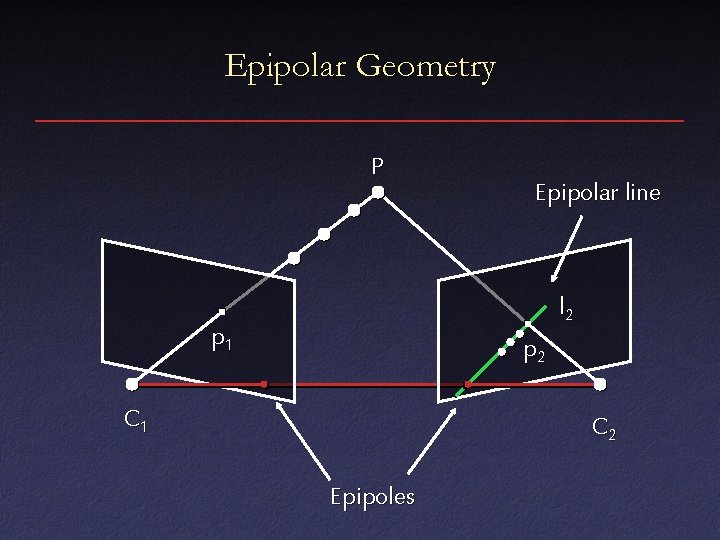

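Epipolar Geometry

[Figure: scene point $P$, image points $p_1$ and $p_2$, camera centers $C_1$ and $C_2$, and line $l_2$ in the second image]

Epipolar Geometry

[Figure: epipolar line $l_2$ and the epipoles, with $P$, $p_1$, $p_2$, $C_1$, $C_2$]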

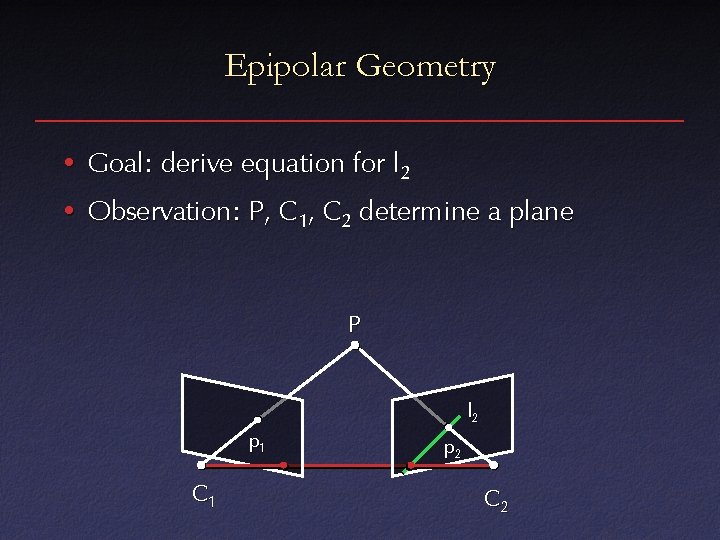

Epipolar Geometry

- Goal: derive equation for $l_2$
- Observation: $P$, $C_1$, $C_2$ determine a plane

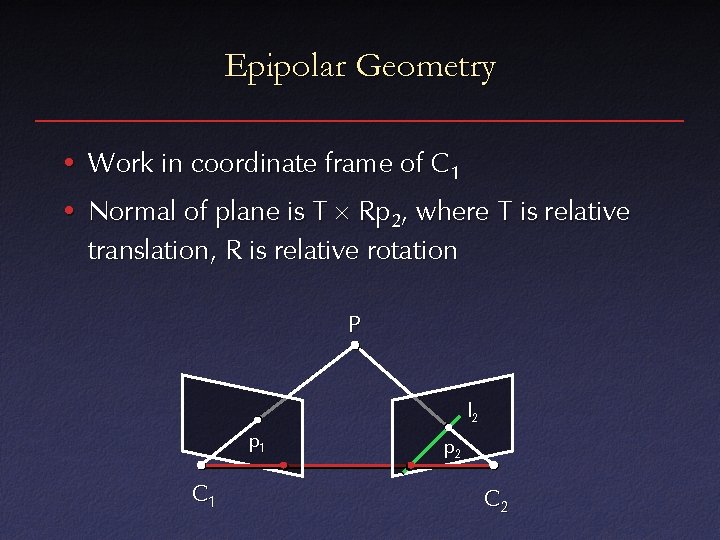

Epipolar Geometry

- Work in coordinate frame of $C_1$
- Normal of plane is $T \times R p_2$, where $T$ is relative translation, $R$ is relative rotation

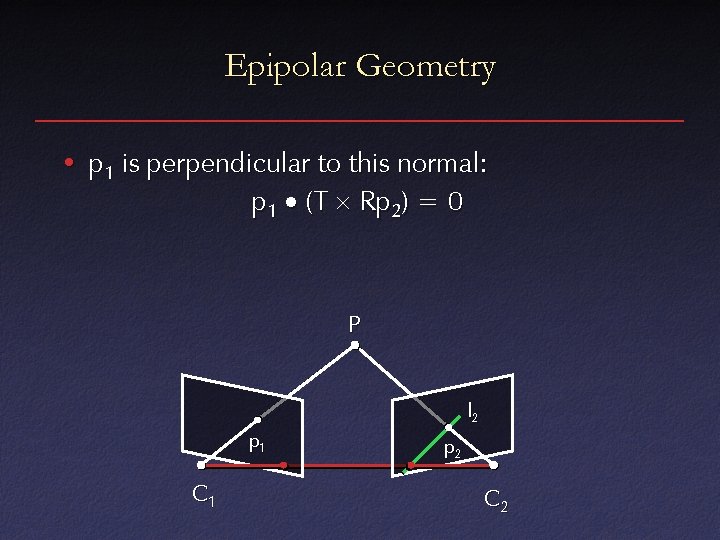

Epipolar Geometry

- $p_1$ is perpendicular to this normal: $p_1 \cdot (T \times R p_2) = 0$

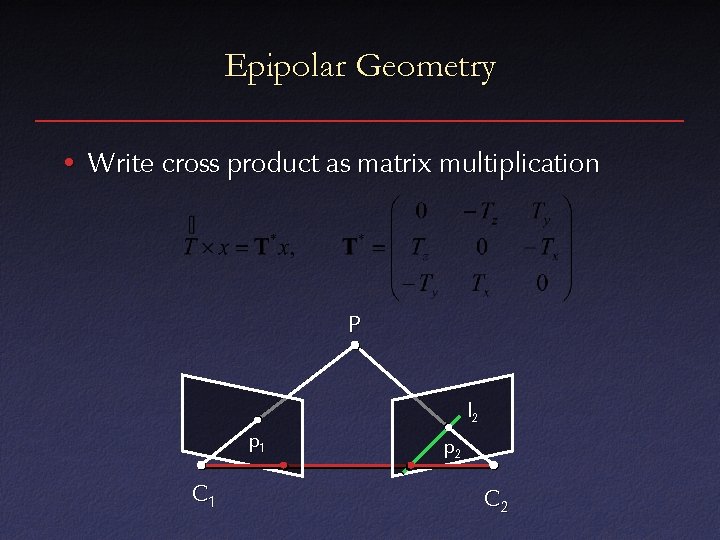

Epipolar Geometry

- Write cross product as matrix multiplication

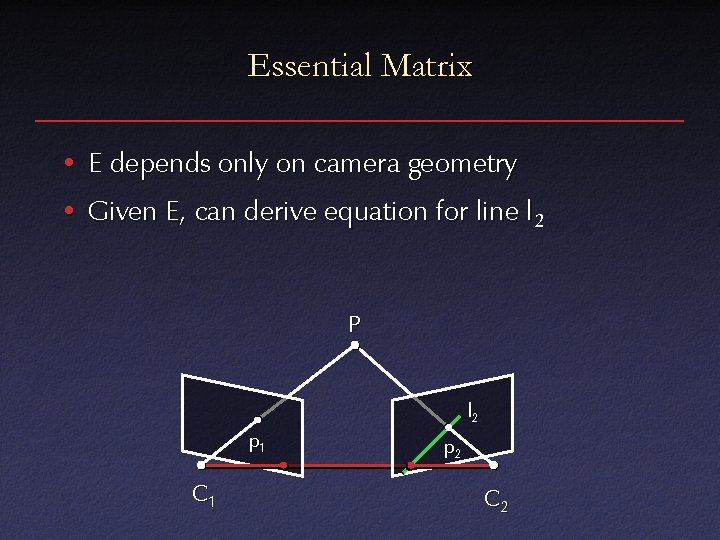

Epipolar Geometry

- $p_1 \cdot T^{*} R\, p_2 = 0 \;\Rightarrow\; p_1^T E\, p_2 = 0$, where $T^{*}$ is the cross-product matrix of $T$ and $E = T^{*} R$
- $E$ is the essential matrix

Essential Matrix

- $E$ depends only on camera geometry
- Given $E$, can derive equation for line $l_2$
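A small numerical check of the derivation, assuming the convention that a point with coordinates $X_2$ in camera 2's frame has coordinates $X_1 = R X_2 + T$ in camera 1's frame. The rotation, translation, and test point below are made-up values, and `skew` is a helper name chosen here.

```python
import numpy as np


def skew(t):
    """Cross-product matrix: skew(t) @ x equals np.cross(t, x)."""
    return np.array([[0.0, -t[2], t[1]],
                     [t[2], 0.0, -t[0]],
                     [-t[1], t[0], 0.0]])


# Illustrative relative pose: small rotation about the y axis plus a translation.
c, s = np.cos(0.1), np.sin(0.1)
R = np.array([[c, 0.0, s],
              [0.0, 1.0, 0.0],
              [-s, 0.0, c]])
T = np.array([1.0, 0.0, 0.2])
E = skew(T) @ R                      # essential matrix

X2 = np.array([0.3, -0.2, 5.0])      # scene point in camera 2's frame
X1 = R @ X2 + T                      # same point in camera 1's frame
p1 = X1 / X1[2]                      # camera coordinates of the two image points
p2 = X2 / X2[2]

residual = p1 @ E @ p2               # epipolar constraint, should be ~0
l2 = E.T @ p1                        # coefficients of the epipolar line in image 2
```

Since $p_1^T E\, p_2 = (E^T p_1)^T p_2$, the vector `l2` holds the line coefficients $(a, b, c)$ with $a x + b y + c = 0$ for every candidate $p_2 = (x, y, 1)$ that can match $p_1$.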

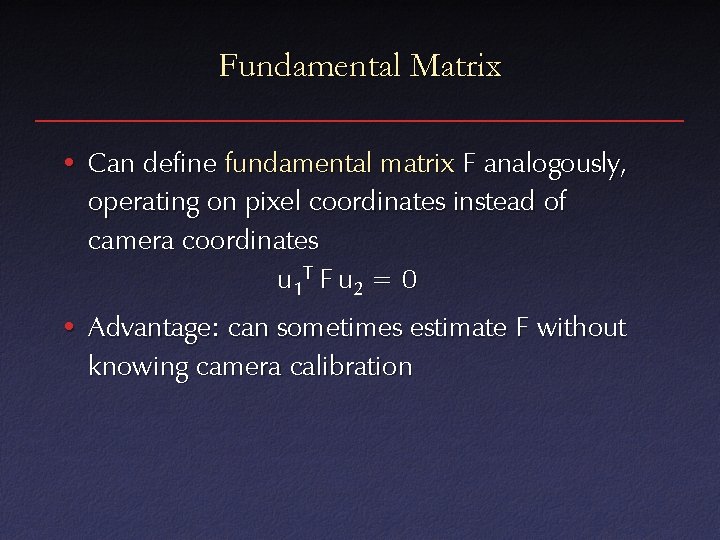

Fundamental Matrix

- Can define fundamental matrix $F$ analogously, operating on pixel coordinates instead of camera coordinates: $u_1^T F u_2 = 0$
- Advantage: can sometimes estimate $F$ without knowing camera calibration
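One way to see how $F$ relates to $E$, under the assumption (not stated on the slide) that each camera maps camera coordinates to homogeneous pixel coordinates through an invertible intrinsic matrix $K_i$, so that $u_i \sim K_i p_i$:

```latex
p_1^T E\, p_2 = 0, \qquad p_i \sim K_i^{-1} u_i
\;\;\Rightarrow\;\;
u_1^T \left( K_1^{-T} E\, K_2^{-1} \right) u_2 = 0
\;\;\Rightarrow\;\;
F = K_1^{-T} E\, K_2^{-1}
```

When the $K_i$ are unknown, $F$ can still be fit directly to pixel correspondences, which is the advantage noted above.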

- Slides: 35