Calibrated imputation of numerical data under linear edit restrictions

Jeroen Pannekoek, Natalie Shlomo, Ton de Waal

Missing data

- Data may be missing from collected data sets
- Unit non-response
  - Data from entire units are missing
  - Often dealt with by means of weighting
- Item non-response
  - Some items from units are missing
  - Usually dealt with by means of imputation

Linear edit restrictions

- Data often have to satisfy edit restrictions
- For numerical data most edits are linear
  - Balance equations: $a_1 x_1 + a_2 x_2 + \dots + a_n x_n + b = 0$
  - Inequalities: $a_1 x_1 + a_2 x_2 + \dots + a_n x_n + b \ge 0$

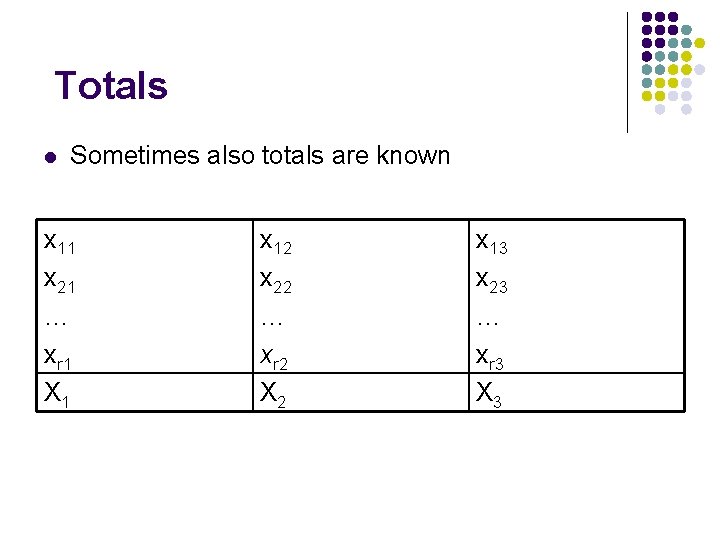

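Totals

- Sometimes totals are also known

$$
\begin{array}{cccc|c}
x_{11} & x_{21} & \dots & x_{r1} & X_1 \\
x_{12} & x_{22} & \dots & x_{r2} & X_2 \\
x_{13} & x_{23} & \dots & x_{r3} & X_3
\end{array}
$$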

Eliminating balance equations

- We can “eliminate balance equations”
- Example: set of edits
  - net + tax – gross = 0
  - net ≥ tax
  - net ≥ 0
- Eliminating the balance equation
  - net = gross – tax
  - gross – tax ≥ tax
  - gross – tax ≥ 0

Eliminating balance equations

- By eliminating all balance equations we only have to deal with inequality edits
- If we sequentially impute variables, we only have to ensure that imputed values lie in an interval
  - $L_i \le x_i \le U_i$
- We can now focus on satisfying totals
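
As a small illustration of the interval idea, the sketch below applies the example edits (net + tax – gross = 0, net ≥ tax, net ≥ 0) to a record where gross is known and tax is to be imputed. It additionally assumes tax ≥ 0, which the example does not state; without it tax has no lower bound. The function names are hypothetical.

```python
def tax_interval(gross):
    """Feasible interval [L, U] for tax after eliminating net = gross - tax.

    gross - tax >= tax  gives  tax <= gross / 2
    gross - tax >= 0    gives  tax <= gross
    tax >= 0 is an extra assumption made for this sketch.
    """
    lower = 0.0
    upper = min(gross / 2.0, gross)
    if upper < lower:
        raise ValueError("no feasible value for tax given this gross")
    return lower, upper


def net_from_balance(gross, tax):
    """Once tax is imputed, net follows from the balance equation."""
    return gross - tax
```

Any value of tax drawn inside this interval, combined with `net_from_balance`, yields a record that satisfies all three example edits.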

Imputation methods

- Adjusted predicted mean imputation
  - with random residuals
- MCMC approach

Adjusted predicted mean imputation

- We use sequential imputation
- All missing values for a variable (the target variable) are imputed simultaneously
  - We impute target column $x_t$
  - We use the model $x_t = \beta_0 + \beta x_p + e$
  - We impute $x_t = \beta_0 + \beta x_p$
- These imputed values generally satisfy neither the edits nor the totals
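
A minimal sketch of plain predicted mean imputation with one predictor, assuming missing target values are marked with `None`. The function names are hypothetical, and the closed-form simple regression is used only to keep the example dependency-free.

```python
def fit_simple_ols(x, y):
    """Least-squares intercept and slope for y = beta0 + beta * x + e."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    beta = sxy / sxx
    beta0 = mean_y - beta * mean_x
    return beta0, beta


def impute_predicted_mean(x_p, x_t):
    """Fill None entries of x_t with beta0 + beta * x_p (no adjustment)."""
    observed = [(p, t) for p, t in zip(x_p, x_t) if t is not None]
    beta0, beta = fit_simple_ols([p for p, _ in observed],
                                 [t for _, t in observed])
    return [t if t is not None else beta0 + beta * p
            for p, t in zip(x_p, x_t)]
```

Nothing in this step looks at the edits or the known totals, which is why the imputed values need further adjustment.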

Satisfying totals

- The totals of missing data for target variable ($X_{t,\mathrm{mis}}$) as well as predictor ($X_{p,\mathrm{mis}}$) are known
- We construct the following model for observed data
  - $x_{t,\mathrm{obs}} = \beta_0 + \beta x_{p,\mathrm{obs}} + e$
  - $X_{t,\mathrm{mis}} = \beta_1 m + \beta X_{p,\mathrm{mis}}$
  - $m$ is the number of missing values
- We apply OLS to estimate model parameters
- We impute $x_{t,\mathrm{mis}} = \beta_1 + \beta x_{p,\mathrm{mis}}$
- Sum of imputed values then equals known value of this total
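
The sketch below shows one way to read this model, assuming the totals equation is stacked as one extra row under the observed-data rows in a single least-squares fit. Because $\beta_1$ appears only in that extra row, least squares fits the row exactly, so $\beta_0$ and $\beta$ are the ordinary OLS estimates on the observed data and $\beta_1 = (X_{t,\mathrm{mis}} - \beta X_{p,\mathrm{mis}}) / m$. The function name is hypothetical.

```python
def fit_benchmarked(xp_obs, xt_obs, total_t_mis, total_p_mis, m):
    """Return (beta0, beta1, beta) for the benchmarked model (sketch)."""
    n = len(xp_obs)
    mean_p = sum(xp_obs) / n
    mean_t = sum(xt_obs) / n
    sxx = sum((p - mean_p) ** 2 for p in xp_obs)
    sxy = sum((p - mean_p) * (t - mean_t) for p, t in zip(xp_obs, xt_obs))
    beta = sxy / sxx                      # slope from the observed records
    beta0 = mean_t - beta * mean_p        # intercept for the observed records
    # Intercept for the missing records, chosen so the totals row holds exactly.
    beta1 = (total_t_mis - beta * total_p_mis) / m
    return beta0, beta1, beta


# Illustrative numbers only (not from the study).
xp_mis = [3.0, 5.0]
beta0, beta1, beta = fit_benchmarked([1.0, 2.0, 4.0], [3.0, 5.0, 9.0],
                                     total_t_mis=20.0,
                                     total_p_mis=sum(xp_mis),
                                     m=len(xp_mis))
imputed = [beta1 + beta * p for p in xp_mis]
```

Summing the imputed values gives $m \beta_1 + \beta X_{p,\mathrm{mis}}$, which equals $X_{t,\mathrm{mis}}$ by construction.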

Satisfying totals and intervals (edits)

- We impute $x_{t,\mathrm{mis}} = \beta_1 + \beta x_{p,\mathrm{mis}} + a_t$
- The $a_{t,i}$ are chosen in such a way that
  - Imputed values lie in their feasible intervals
  - $\sum_i a_{t,i} = 0$
- Appropriate values for $a_{t,i}$ can be found by means of an operations research technique
- For a simple alternative technique, see paper
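
To make the two conditions on the adjustments concrete, here is an illustrative heuristic; it is not the operations research technique or the alternative from the paper. It clips each predicted value into its interval and then spreads the resulting shortfall or excess over the records in proportion to their remaining room. The function name and the proportional rule are assumptions of this sketch.

```python
def adjustments_for_intervals(pred, lower, upper):
    """Return adjustments a_i with sum zero such that pred_i + a_i is in [L_i, U_i]."""
    total = sum(pred)  # the predictions are assumed to already sum to the known total
    if not (sum(lower) <= total <= sum(upper)):
        raise ValueError("the total cannot be met inside the intervals")
    # Step 1: move every prediction into its interval.
    y = [min(max(p, lo), hi) for p, lo, hi in zip(pred, lower, upper)]
    # Step 2: restore the total using whatever room is left in each interval.
    gap = total - sum(y)
    if gap > 0:
        room = [hi - v for v, hi in zip(y, upper)]
    else:
        room = [v - lo for v, lo in zip(y, lower)]
    total_room = sum(room)
    if total_room > 0:
        y = [v + gap * r / total_room for v, r in zip(y, room)]
    return [v - p for v, p in zip(y, pred)]
```

Because the absolute gap never exceeds the total room when the intervals are jointly feasible, no value is pushed past its bound in the second step.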

Satisfying totals and intervals (edits)

- Alternatively, draw $m$ residuals by acceptance/rejection sampling from a normal distribution (zero mean and residual variance of the regression model) that satisfy interval constraints
- Adjust random residuals to meet the sum constraints as carried out for $a_{t,i}$
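
A sketch of the drawing step only, assuming the predicted values, the intervals and the residual standard deviation `sigma` are already available. The function names and the `max_tries` guard are assumptions; the subsequent adjustment to the sum constraint is not shown.

```python
import random


def draw_residual(lo, hi, sigma, rng, max_tries=10000):
    """Acceptance/rejection: redraw from N(0, sigma^2) until lo <= e <= hi."""
    for _ in range(max_tries):
        e = rng.gauss(0.0, sigma)
        if lo <= e <= hi:
            return e
    raise RuntimeError("no residual accepted; the interval may be too narrow")


def draw_residuals(pred, lower, upper, sigma, rng):
    """One residual per record, kept only if pred_i + e_i stays in [L_i, U_i]."""
    return [draw_residual(lo - p, hi - p, sigma, rng)
            for p, lo, hi in zip(pred, lower, upper)]
```

For example, `draw_residuals([2.0, 3.0], [0.0, 0.0], [4.0, 4.0], 1.0, random.Random(0))` returns two residuals that keep both imputed values between 0 and 4.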

MCMC approach

- Start with pre-imputed consistent dataset
- Randomly select two records
- We select a variable in these records. Note that we know the sum of the two values of this variable for the two records

MCMC approach

- We then apply the following two steps
  1. We determine intervals for the two values
  2. We then draw a value for one missing value. The other value then immediately follows
- Now, repeat Steps 1 and 2 until “convergence”
- In Step 2 we draw a value from a posterior predictive distribution implied by a linear regression model under an uninformative prior, conditional on the fact that it has to lie inside the corresponding interval
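
The two steps can be sketched for a single pair of records as follows. This is a simplification: a normal draw with a given mean and standard deviation stands in for the posterior predictive distribution, and rejection sampling enforces the interval. The function names, the `max_tries` guard and the fallback of keeping the current values are assumptions.

```python
import random


def pair_interval(s, lo_i, hi_i, lo_j, hi_j):
    """Step 1: interval for x_i such that x_j = s - x_i also stays in [lo_j, hi_j]."""
    return max(lo_i, s - hi_j), min(hi_i, s - lo_j)


def mcmc_pair_step(x_i, x_j, bounds_i, bounds_j, mean_i, sd, rng, max_tries=10000):
    """Step 2: redraw x_i inside its interval; x_j follows from the fixed sum."""
    s = x_i + x_j  # the sum is preserved, so the known total is preserved
    lo, hi = pair_interval(s, *bounds_i, *bounds_j)
    for _ in range(max_tries):
        new_i = rng.gauss(mean_i, sd)
        if lo <= new_i <= hi:
            return new_i, s - new_i
    return x_i, x_j  # keep the current values if no draw was accepted
```

Since each step leaves the pair sum unchanged and keeps both values inside their intervals, a data set that starts consistent stays consistent throughout the iterations.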

Evaluation study: methods

- Evaluated imputation methods:
  - UPMA: unbenchmarked simple predictive mean imputation with adjustments to imputations that satisfy interval constraints
  - BPMA: benchmarked predictive mean imputation with adjustments to imputations that satisfy interval constraints and totals
  - MCMC: BPMA with adjustments was used as pre-imputed data set for MCMC approach

Evaluation study: data set

- 11,907 individuals aged 15 and over who responded to all questions in the 2005 Israel Income Survey and earned more than 1000 Israeli shekels in monthly gross income
- Item non-response was introduced randomly to income variables
  - 20% of records were selected randomly and their net income variable deleted
  - 20% of records were selected randomly and their tax variable deleted, with 10% of those records also having their net income variable missing
- Totals of each of the income variables are known

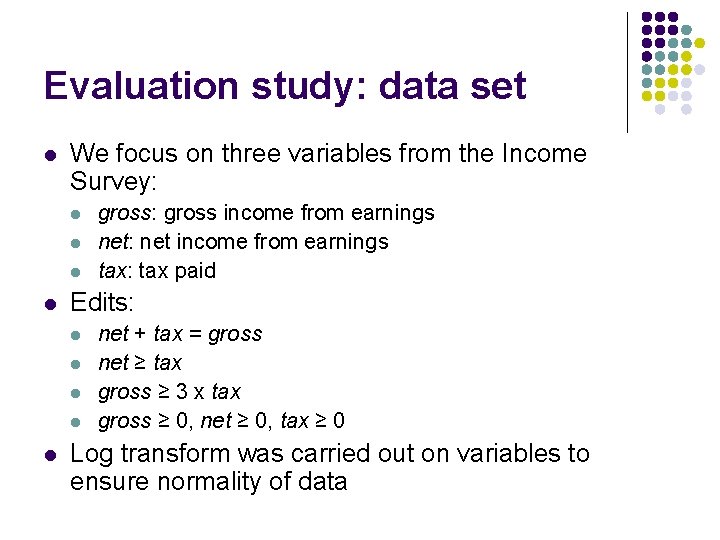

Evaluation study: data set

- We focus on three variables from the Income Survey:
  - gross: gross income from earnings
  - net: net income from earnings
  - tax: tax paid
- Edits:
  - net + tax = gross
  - net ≥ tax
  - gross ≥ 3 × tax
  - gross ≥ 0, net ≥ 0, tax ≥ 0
- Log transform was carried out on variables to ensure normality of data
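
A minimal sketch of these edits as a record check on the original (untransformed) scale, plus the interval for tax that follows once net is eliminated through the balance equation. The function names and the tolerance `tol` for the balance equation are assumptions.

```python
def satisfies_edits(gross, net, tax, tol=1e-9):
    """True if a record satisfies all edits listed for the evaluation study."""
    return (abs(net + tax - gross) <= tol
            and net >= tax
            and gross >= 3 * tax
            and gross >= 0 and net >= 0 and tax >= 0)


def tax_bounds(gross):
    """Interval for tax given gross, with net = gross - tax eliminated.

    net >= tax        gives  tax <= gross / 2
    gross >= 3 * tax  gives  tax <= gross / 3
    tax >= 0          gives  the lower bound
    """
    return 0.0, min(gross / 2.0, gross / 3.0)
```

For non-negative gross the binding upper bound is gross / 3, so the edit gross ≥ 3 × tax makes net ≥ tax redundant in this particular set.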

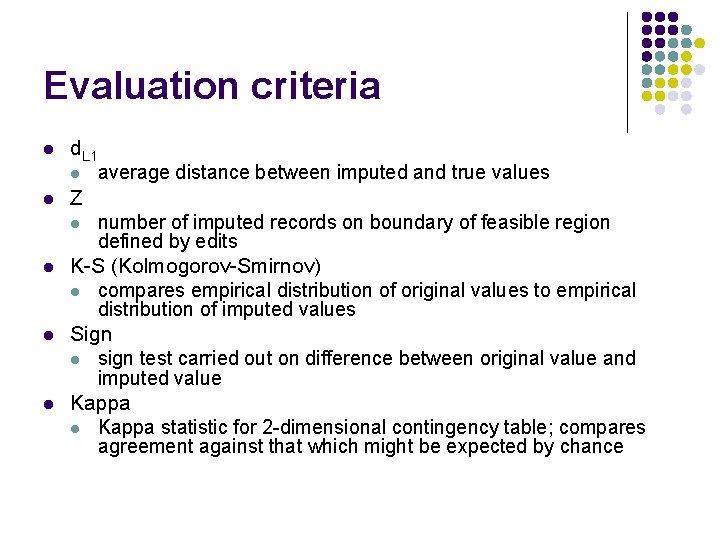

Evaluation criteria

- $d_{L1}$: average distance between imputed and true values
- Z: number of imputed records on boundary of feasible region defined by edits
- K-S (Kolmogorov-Smirnov): compares empirical distribution of original values to empirical distribution of imputed values
- Sign: sign test carried out on difference between original value and imputed value
- Kappa: Kappa statistic for 2-dimensional contingency table; compares agreement against that which might be expected by chance
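
Three of these criteria can be sketched in a few lines. The function names are hypothetical, the boundary check is simplified to per-value intervals, and `ks_distance` returns the unscaled maximum gap between the two empirical distribution functions; the slides do not say how the reported K-S values are scaled.

```python
from bisect import bisect_right


def d_l1(imputed, true):
    """Average absolute distance between imputed and true values."""
    return sum(abs(a - b) for a, b in zip(imputed, true)) / len(imputed)


def count_on_boundary(values, lower, upper, tol=1e-9):
    """Number of imputed values sitting on an end point of their interval."""
    return sum(1 for v, lo, hi in zip(values, lower, upper)
               if abs(v - lo) <= tol or abs(v - hi) <= tol)


def ks_distance(sample_a, sample_b):
    """Largest gap between the two empirical distribution functions."""
    a, b = sorted(sample_a), sorted(sample_b)
    points = sorted(set(a) | set(b))
    return max(abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
               for x in points)
```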

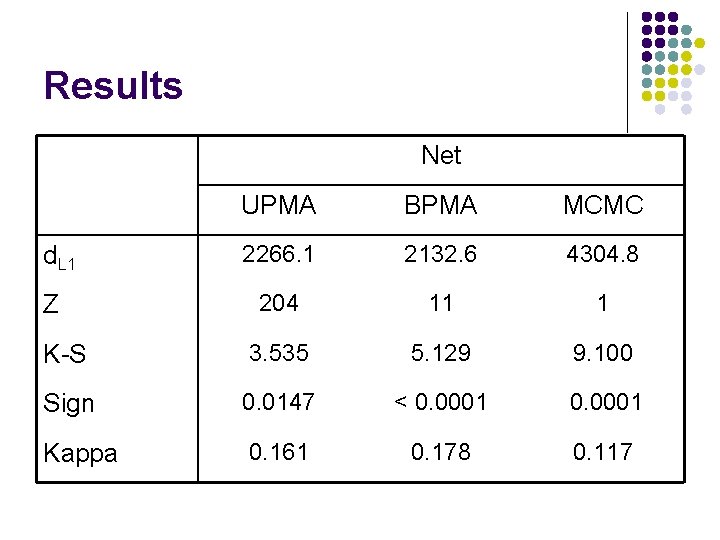

Results: Net

| | UPMA | BPMA | MCMC |
|---|---|---|---|
| $d_{L1}$ | 2266.1 | 2132.6 | 4304.8 |
| Z | 204 | 11 | 1 |
| K-S | 3.535 | 5.129 | 9.100 |
| Sign | 0.0147 | < 0.0001 | |
| Kappa | 0.161 | 0.178 | 0.117 |

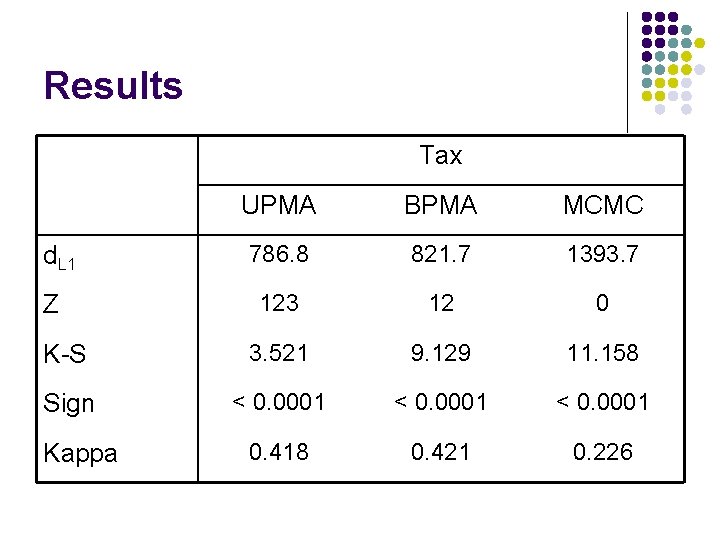

Results: Tax

| | UPMA | BPMA | MCMC |
|---|---|---|---|
| $d_{L1}$ | 786.8 | 821.7 | 1393.7 |
| Z | 123 | 12 | 0 |
| K-S | 3.521 | 9.129 | 11.158 |
| Sign | < 0.0001 | | |
| Kappa | 0.418 | 0.421 | 0.226 |

Conclusions

- The MCMC approach does worse than the other methods on all criteria except the number of records that lie on the boundary
  - However, MCMC allows multiple imputation in order to take imputation uncertainty into account in variance estimation
- BPMA appears to be slightly better than UPMA, except for the K-S statistic
- The number of records that lie on the boundary for UPMA is cause for concern
  - The MCMC approach does slightly better than the BPMA approach in this respect

Future research

- Improving MCMC approach
- Carrying out multiple imputation using MCMC approach to obtain proper variance estimation
- In our study a log transformation was carried out on variables to ensure normality of data
  - Correction factor was introduced into constant term of regression model to correct for this log transformation
  - Better approach to this problem will be investigated
- Extending problem to situations where one has non-equal sampling weights

- Slides: 22