Caching – Chapter 7 • Basics (7.1, 7.2) • Cache Writes (7.2, p. 483-485) • DRAM configurations (7.2, p. 487-491) • Performance (7.3) • Associative caches (7.3, p. 496-504) • Multilevel caches (7.3, p. 505-510)

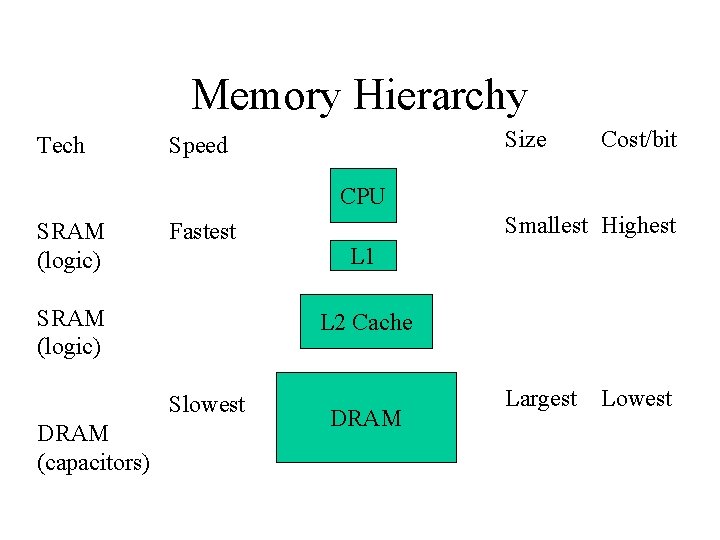

Memory Hierarchy: CPU → L1 → L2 Cache → DRAM

| Level | Tech | Size | Speed | Cost/bit |
|---|---|---|---|---|
| L1, L2 Cache | SRAM (logic) | Smallest | Fastest | Highest |
| DRAM | DRAM (capacitors) | Largest | Slowest | Lowest |

Program Characteristics • Temporal Locality • Spatial Locality

Locality • Programs tend to exhibit spatial & temporal locality. Just a fact of life. • How can we use this knowledge of program behavior to design a cache?

What does that mean?!? • 1. Architect: Design cache that _______ spatial & temporal locality • 2. Programmer: When you program, _____________________ locality – Java - difficult to do – C - more control over data placement • Note: Caches exploit locality. Programs have varying degrees of locality. Caches do not have locality!

Exploiting Spatial & Temporal Locality • 1. Cache: • 2. Program:

What do we put in cache? • Temporal Locality • Spatial Locality

Where do we put data in cache? • Searching whole cache takes time & power • Direct-mapped – Limit each piece of data to one possible position • Search is quick and simple

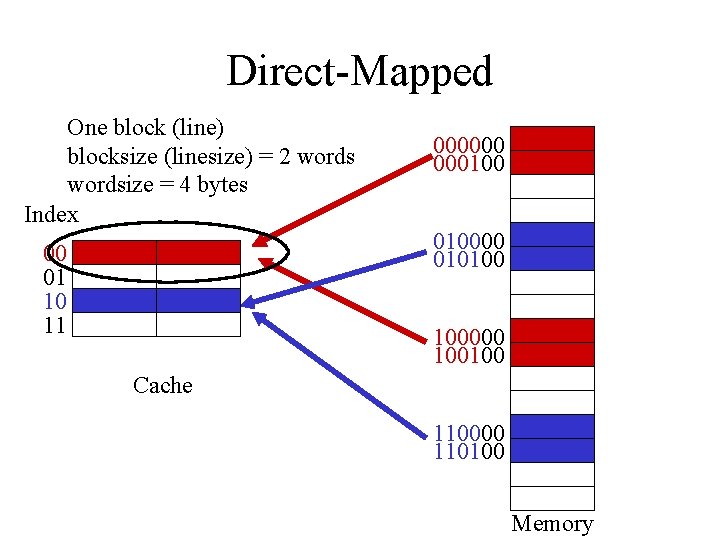

Direct-Mapped • One block (line): blocksize (linesize) = 2 words, wordsize = 4 bytes • [Figure: memory blocks at byte addresses 000000 through 110100 map onto the four cache lines with Index 00, 01, 10, 11]

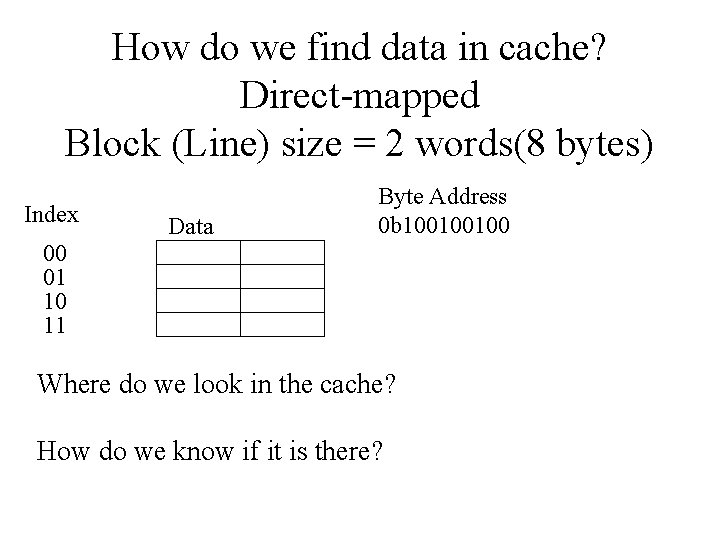

How do we find data in cache? • Direct-mapped, Block (Line) size = 2 words (8 bytes) • Byte Address: 0b100100100 • Cache lines: Index 00, 01, 10, 11, each holding Data • Where do we look in the cache? • How do we know if it is there?

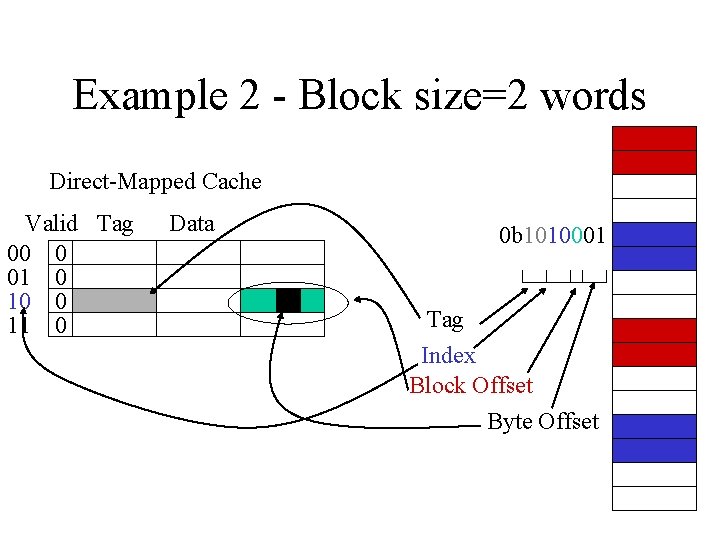

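Example 2 - Block size = 2 words, Direct-Mapped Cache

| Index | Valid | Tag | Data |
|---|---|---|---|
| 00 | 0 | | |
| 01 | 0 | | |
| 10 | 0 | | |
| 11 | 0 | | |

Address: 0b1010001. Fields to identify: Tag, Index, Block Offset, Byte Offset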
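The field split for the address in this example can be sketched in a few lines of Python. This is only an illustration under the slide's parameters (4 cache lines, 2 words per block, 4 bytes per word); the function name `split_address` and its keyword arguments are hypothetical, not from the slides.

```python
def split_address(addr, index_bits=2, block_offset_bits=1, byte_offset_bits=2):
    """Split a byte address into (tag, index, block_offset, byte_offset).

    Defaults assume the slide's cache: 4 lines (2 index bits),
    2 words per block (1 block-offset bit), 4 bytes per word (2 byte-offset bits).
    """
    byte_offset = addr & ((1 << byte_offset_bits) - 1)    # lowest bits: byte within word
    addr >>= byte_offset_bits
    block_offset = addr & ((1 << block_offset_bits) - 1)  # next bits: word within block
    addr >>= block_offset_bits
    index = addr & ((1 << index_bits) - 1)                # next bits: line within cache
    tag = addr >> index_bits                              # whatever is left is the tag
    return tag, index, block_offset, byte_offset


tag, index, block_offset, byte_offset = split_address(0b1010001)
print(f"tag={tag:02b} index={index:02b} "
      f"block_offset={block_offset:b} byte_offset={byte_offset:02b}")
```

Reading the fields from the low-order end first keeps the code independent of the total address width, since the tag is simply whatever bits remain.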

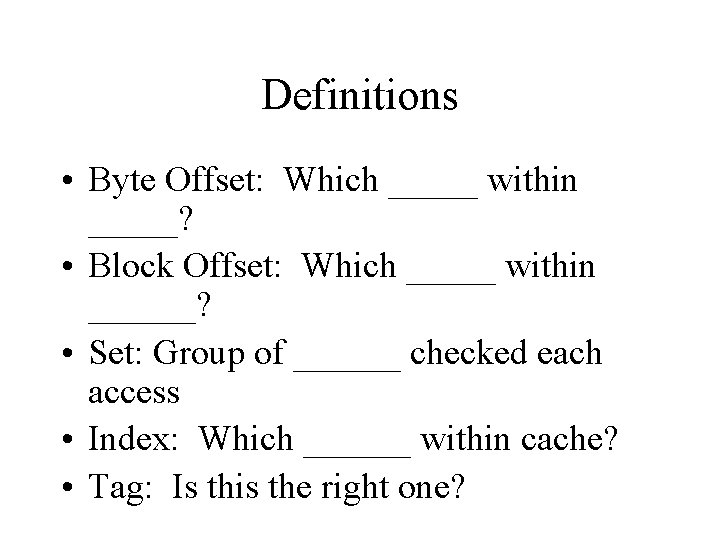

Definitions • Byte Offset: Which _____ within _____? • Block Offset: Which _____ within ______? • Set: Group of ______ checked each access • Index: Which ______ within cache? • Tag: Is this the right one?

Definitions • Block (Line) • Hit • Miss • Hit time / Access time • Miss Penalty

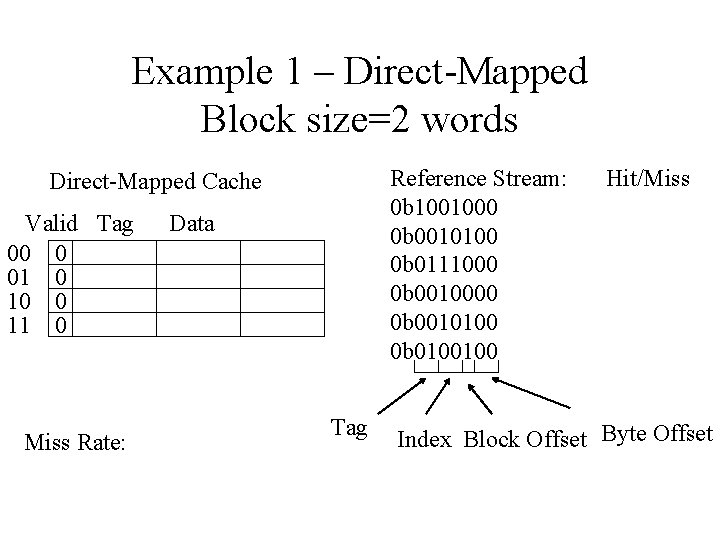

Example 1 – Direct-Mapped, Block size = 2 words

Reference Stream: 0b1001000, 0b0010100, 0b0111000, 0b0010100, 0b0100100

| Index | Valid | Tag | Data |
|---|---|---|---|
| 00 | 0 | | |
| 01 | 0 | | |
| 10 | 0 | | |
| 11 | 0 | | |

For each reference, find: Tag, Index, Block Offset, Byte Offset, Hit/Miss. Miss Rate:
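One way to check a hand-worked answer for this reference stream is a small simulation. The sketch below assumes the cache starts empty (all valid bits are 0, as on the slide) and uses 4 lines with 8-byte blocks; `simulate_direct_mapped` is a hypothetical helper name.

```python
def simulate_direct_mapped(addresses, index_bits=2, offset_bits=3):
    """Return 'hit'/'miss' for each byte address in a direct-mapped cache.

    offset_bits covers block offset + byte offset (8-byte blocks -> 3 bits).
    The cache is assumed to start empty.
    """
    lines = {}      # index -> tag of the block currently stored (absent = invalid)
    results = []
    for addr in addresses:
        block = addr >> offset_bits                 # drop the offset bits
        index = block & ((1 << index_bits) - 1)     # which line to look in
        tag = block >> index_bits                   # identifies the block in that line
        hit = lines.get(index) == tag
        if not hit:
            lines[index] = tag                      # fetch the block, replacing the old one
        results.append("hit" if hit else "miss")
    return results


stream = [0b1001000, 0b0010100, 0b0111000, 0b0010100, 0b0100100]
results = simulate_direct_mapped(stream)
miss_rate = results.count("miss") / len(results)
print(results, f"miss rate = {miss_rate:.0%}")
```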

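Implementation • [Figure: the byte address 0b100100100 is split into Tag, Index, Block offset, and Byte offset. The Index selects one of the lines 00-11 (Valid, Tag, Data); a comparator (=) checks the stored Tag against the address Tag to produce Hit?; a MUX selects the Data word.]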

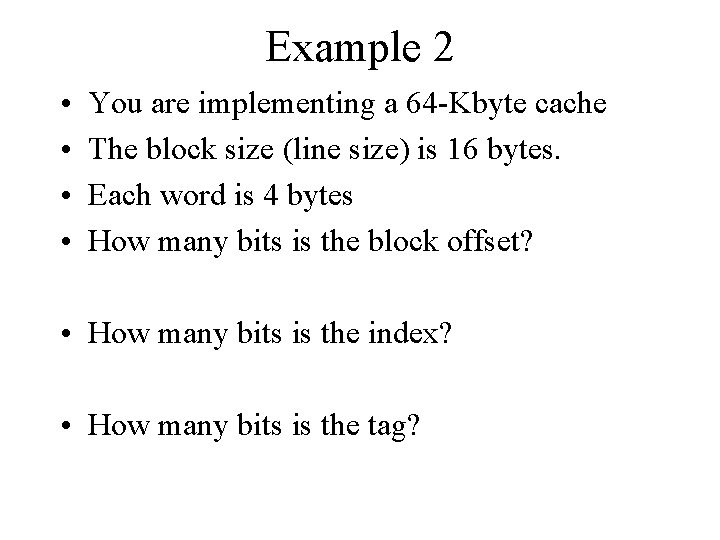

Example 2 • You are implementing a 64-Kbyte cache • The block size (line size) is 16 bytes. Each word is 4 bytes • How many bits is the block offset? • How many bits is the index? • How many bits is the tag?
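The bit counts can be worked out mechanically. The sketch below makes two assumptions the slide does not state: the cache is direct-mapped, and addresses are 32 bits wide. The tag width in particular depends on that address width.

```python
cache_bytes = 64 * 1024
block_bytes = 16
word_bytes = 4
address_bits = 32          # ASSUMPTION: not given on the slide


def log2_exact(n):
    """log2 of a power of two, using integer arithmetic only."""
    assert n > 0 and n & (n - 1) == 0, "expected a power of two"
    return n.bit_length() - 1


byte_offset_bits = log2_exact(word_bytes)                   # byte within word
block_offset_bits = log2_exact(block_bytes // word_bytes)   # word within block
num_blocks = cache_bytes // block_bytes                     # lines in the cache
index_bits = log2_exact(num_blocks)                         # ASSUMPTION: direct-mapped
tag_bits = address_bits - index_bits - block_offset_bits - byte_offset_bits

print(f"byte offset: {byte_offset_bits}, block offset: {block_offset_bits}, "
      f"index: {index_bits}, tag: {tag_bits}")
```

With a different associativity the index would shrink by one bit per doubling of the number of ways, and the tag would grow by the same amount.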

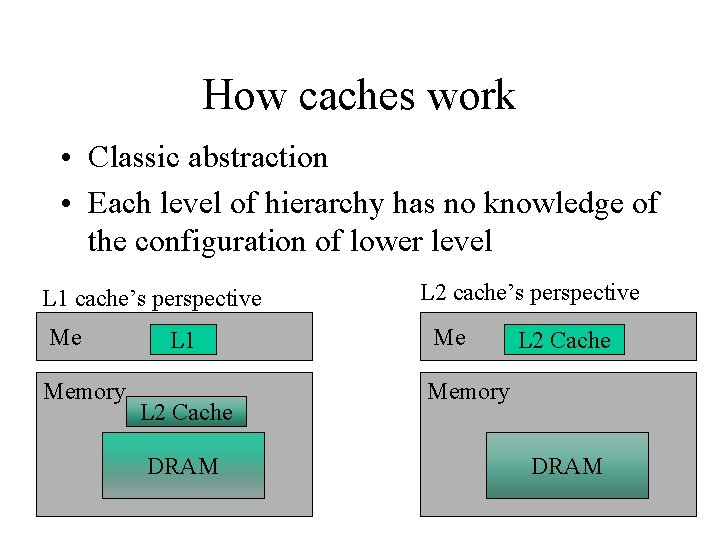

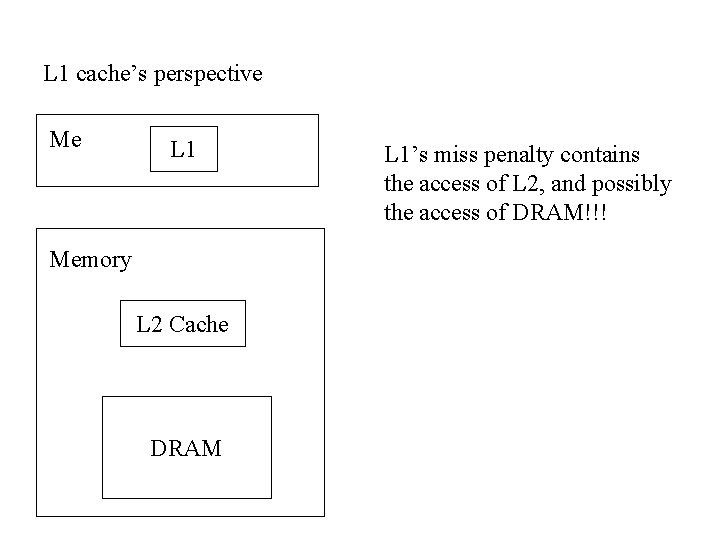

How caches work • Classic abstraction • Each level of hierarchy has no knowledge of the configuration of lower level • L1 cache’s perspective: Me → L1 → Memory (L2 Cache and DRAM together) • L2 cache’s perspective: Me → L2 Cache → Memory (DRAM)

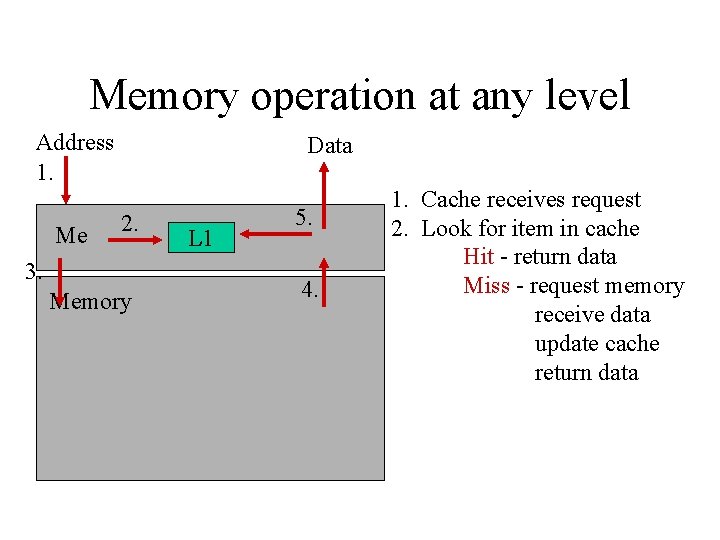

Memory operation at any level • [Figure: Me sends an Address to L1 and receives Data; L1 talks to Memory; the arrows are numbered 1-5] • 1. Cache receives request • 2. Look for item in cache – Hit: return data – Miss: request memory, receive data, update cache, return data

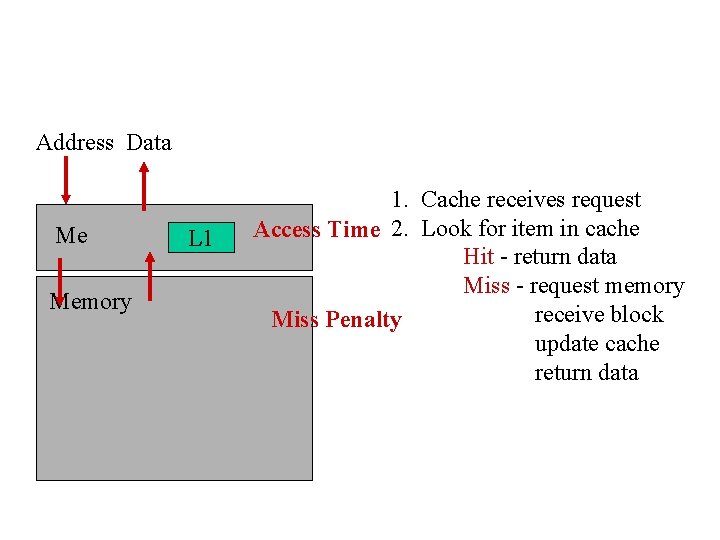

[Figure: Me sends an Address to L1 and receives Data; L1 talks to Memory] • 1. Cache receives request • 2. Look for item in cache (steps 1-2: Access Time) – Hit: return data – Miss: request memory, receive block (Miss Penalty), update cache, return data
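The request flow above can be sketched as a small class in which each level only knows about "the memory below it". Everything here is illustrative: the class names, the cycle counts (1, 10, 100), and the simplification that a block is a single address with unlimited capacity are assumptions, not part of the slides.

```python
class MainMemory:
    """Bottom of the hierarchy: always has the data."""

    def __init__(self, access_time):
        self.access_time = access_time

    def read(self, address):
        return f"data@{address:#x}", self.access_time


class CacheLevel:
    """One cache level; it sees everything below it as plain 'memory'."""

    def __init__(self, access_time, lower):
        self.access_time = access_time
        self.lower = lower
        self.contents = {}          # simplified: address -> data, never evicts

    def read(self, address):
        # Steps 1-2: receive the request and look for the item (access time).
        if address in self.contents:
            return self.contents[address], self.access_time      # hit
        # Miss: request memory, receive the data, update cache, return data.
        data, miss_penalty = self.lower.read(address)
        self.contents[address] = data
        return data, self.access_time + miss_penalty


dram = MainMemory(access_time=100)        # hypothetical latencies, in cycles
l2 = CacheLevel(access_time=10, lower=dram)
l1 = CacheLevel(access_time=1, lower=l2)

_, first_latency = l1.read(0x40)    # misses in L1 and L2, goes to DRAM
_, second_latency = l1.read(0x40)   # now hits in L1
print(first_latency, second_latency)
```

Because `CacheLevel.read` only calls `self.lower.read`, L1's miss penalty automatically includes the L2 access and, when L2 also misses, the DRAM access.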

Performance • Hit: latency = ______ • Miss: latency = ___________ • Goal: minimize misses!!!

Cache Writes • There are multiple copies of the data lying around – L1 cache, L2 cache, DRAM • Do we write to all of them? • Do we wait for the write to complete before the processor can proceed?

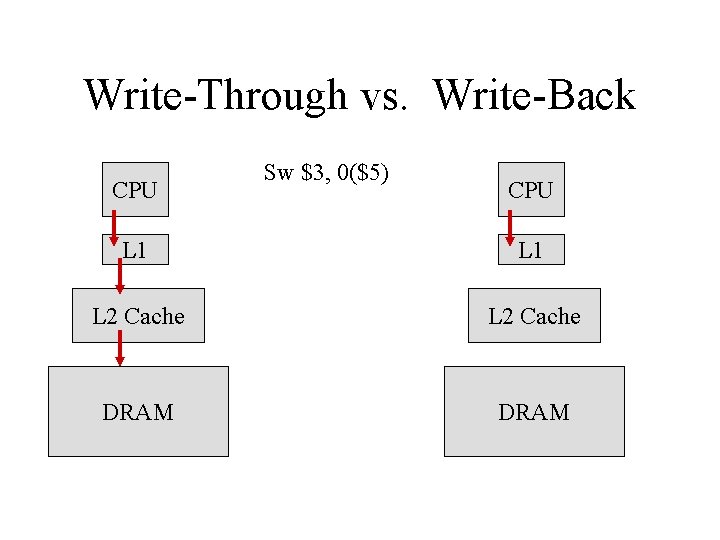

Do we write to all of them? • Write-through • Write-back – creates _____ data: different values for same item in cache and DRAM. – This data is referred to as _____

Write-Through vs. Write-Back • Store instruction: sw $3, 0($5) • [Figure: CPU, L1, L2 Cache, DRAM]

Write-through vs Write-back • Which performs the write faster? • Which has faster evictions from a cache? • Which causes more bus traffic?
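To get a feel for the bus-traffic question, the sketch below counts how many writes reach the next level under each policy. It is a deliberately small model with assumptions beyond the slides: a direct-mapped cache of one-word blocks, write-allocate on a store miss, and dirty blocks still in the cache at the end are not counted. The function name is hypothetical.

```python
def count_lower_level_writes(stores, policy, num_lines=4):
    """Count writes sent to the next level for a sequence of store addresses.

    policy is "write-through" or "write-back".
    """
    lines = {}      # index -> (address, dirty)
    writes = 0
    for addr in stores:
        index = addr % num_lines
        resident = lines.get(index)
        # Write-back pays on eviction: a dirty block must be written out first.
        if resident is not None and resident[0] != addr and resident[1]:
            writes += 1
        if policy == "write-through":
            writes += 1                       # every store also goes to the next level
            lines[index] = (addr, False)      # cache and next level stay consistent
        else:
            lines[index] = (addr, True)       # only the cache copy is updated
    return writes


stores = [0, 0, 0, 4, 0]    # addresses 0 and 4 conflict in a 4-line cache
wt = count_lower_level_writes(stores, "write-through")
wb = count_lower_level_writes(stores, "write-back")
print(f"write-through: {wt} writes, write-back: {wb} writes")
```

In this model the write-back cache only generates traffic when a dirty block is evicted, which is also why its evictions are the slower ones.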

Does processor wait for write? • Write buffer – Any loads must check write buffer in parallel with cache access. – Buffer values are more recent than cache values.

Challenge • DRAM is designed for _______, not _____ • DRAM is _______ than the bus • We are allowed to change the width, the number of DRAMs, and the bus protocol, but the access latency stays slow. • Widening anything increases the cost by quite a bit.

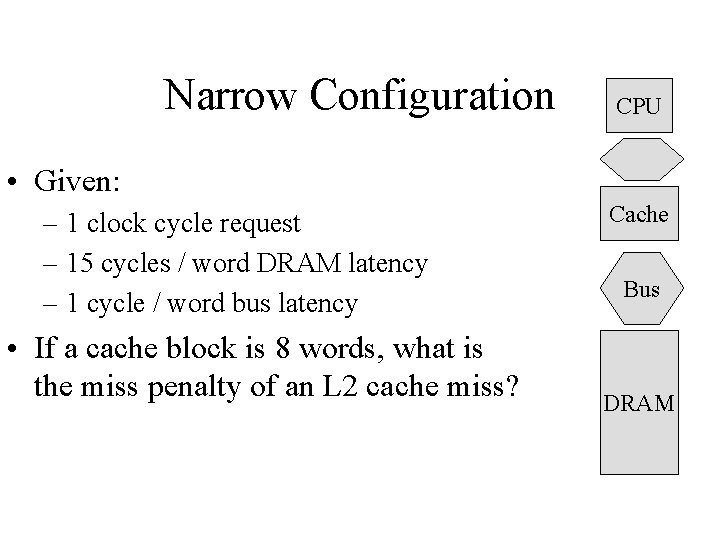

Narrow Configuration • [Figure: CPU, Cache, Bus, DRAM] • Given: – 1 clock cycle request – 15 cycles / word DRAM latency – 1 cycle / word bus latency • If a cache block is 8 words, what is the miss penalty of an L2 cache miss?

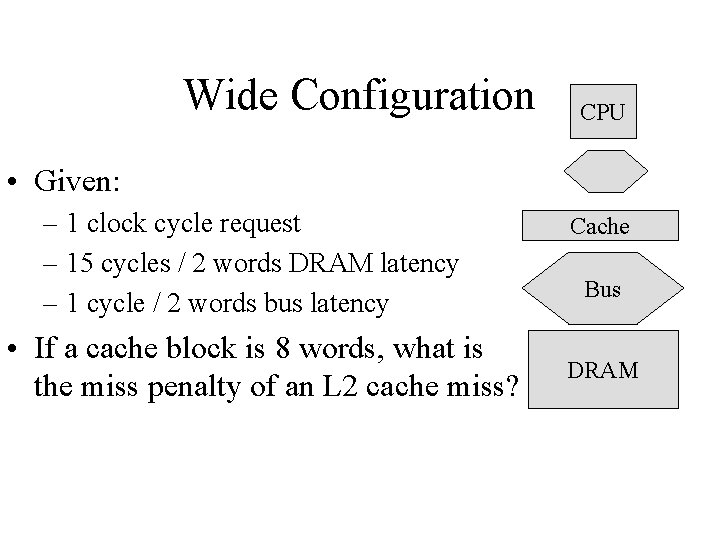

Wide Configuration • [Figure: CPU, Cache, Bus, DRAM] • Given: – 1 clock cycle request – 15 cycles / 2 words DRAM latency – 1 cycle / 2 words bus latency • If a cache block is 8 words, what is the miss penalty of an L2 cache miss?

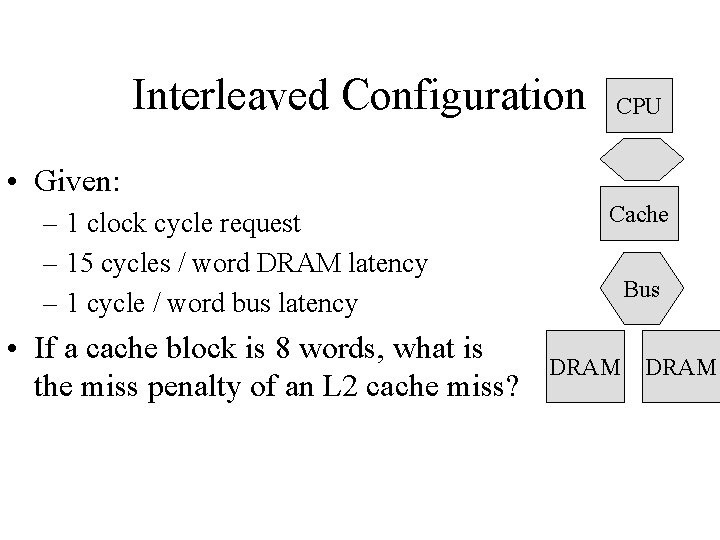

Interleaved Configuration • [Figure: CPU, Cache, Bus, DRAM] • Given: – 1 clock cycle request – 15 cycles / word DRAM latency – 1 cycle / word bus latency • If a cache block is 8 words, what is the miss penalty of an L2 cache miss?
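The three configurations can be compared with a short calculation. This is a sketch under stated assumptions: DRAM accesses and bus transfers do not overlap in the narrow and wide cases, and the interleaved case has at least one bank per word of the block, so all DRAM accesses proceed in parallel and only the bus transfers are serialized. The slides do not give the number of banks, and the function names are hypothetical.

```python
def narrow_miss_penalty(block_words, request=1, dram=15, bus=1):
    """One word at a time: each word pays a DRAM access and a bus transfer."""
    return request + block_words * (dram + bus)


def wide_miss_penalty(block_words, width_words=2, request=1, dram=15, bus=1):
    """width_words at a time: fewer (DRAM access + bus transfer) rounds."""
    transfers = block_words // width_words
    return request + transfers * (dram + bus)


def interleaved_miss_penalty(block_words, request=1, dram=15, bus=1):
    """ASSUMES one bank per word: DRAM accesses overlap, bus transfers do not."""
    return request + dram + block_words * bus


block_words = 8
narrow = narrow_miss_penalty(block_words)
wide = wide_miss_penalty(block_words)
interleaved = interleaved_miss_penalty(block_words)
print(f"narrow={narrow}, wide={wide}, interleaved={interleaved} cycles")
```

With fewer banks than words per block, the interleaved figure would grow by one extra DRAM latency per additional round of bank accesses.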

Recent DRAM trends • Fewer, bigger DRAMs • New bus protocols (RAMBUS) • Small DRAM caches (page mode) • SDRAM (synchronous DRAM) – one request & length nets several continuous responses.

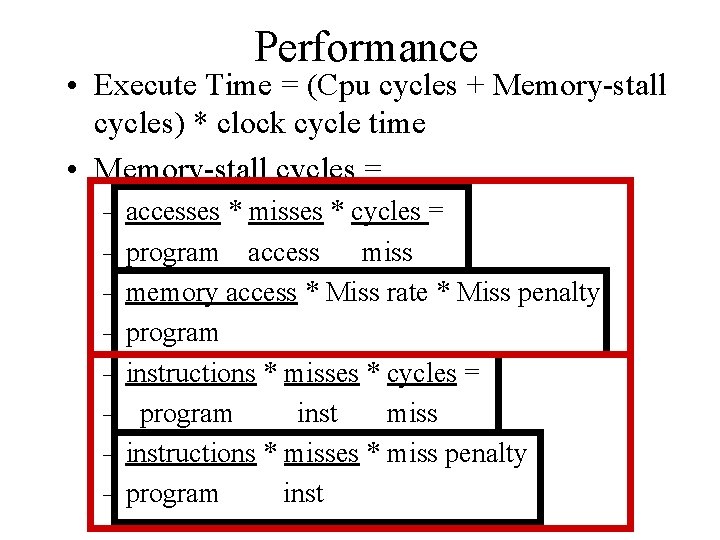

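Performance • Execute Time = (CPU cycles + Memory-stall cycles) × clock cycle time • Memory-stall cycles, counted per memory access:

$$\frac{\text{accesses}}{\text{program}} \times \frac{\text{misses}}{\text{access}} \times \frac{\text{cycles}}{\text{miss}} = \frac{\text{memory accesses}}{\text{program}} \times \text{miss rate} \times \text{miss penalty}$$

• Memory-stall cycles, counted per instruction:

$$\frac{\text{instructions}}{\text{program}} \times \frac{\text{misses}}{\text{instruction}} \times \frac{\text{cycles}}{\text{miss}} = \frac{\text{instructions}}{\text{program}} \times \frac{\text{misses}}{\text{instruction}} \times \text{miss penalty}$$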

Example 1 • Instruction cache miss rate: 2% • Data cache miss rate: 3% • Miss penalty: 50 cycles • ld/st instructions are 25% of instructions • CPI with perfect cache is 2.3 • How much faster is the computer with a perfect cache?

Example 2 • Double the clock rate from Example 1. What is the ideal speedup when taking into account the memory system? • How long is the miss penalty now?

Example 1 Work

$$\frac{\text{misses}}{\text{instr}} = \text{Iacc} \times \text{Imr} + \text{Dacc} \times \text{Dmr}$$
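The arithmetic for Example 1, and for the clock-doubling question in Example 2, can be sketched as follows. The reading of the symbols is an assumption: Iacc is taken as one instruction fetch per instruction, Dacc as the ld/st fraction, and the perfect-cache CPI as 2.3. For Example 2 the sketch also assumes memory latency in nanoseconds is unchanged, so the miss penalty doubles when counted in the faster clock's cycles.

```python
i_acc, i_mr = 1.0, 0.02      # instruction fetches per instruction, I-cache miss rate
d_acc, d_mr = 0.25, 0.03     # data accesses per instruction (ld/st), D-cache miss rate
miss_penalty = 50            # cycles
base_cpi = 2.3               # CPI with a perfect cache (as read from the slide)

# Example 1: misses per instruction, then stall cycles per instruction.
misses_per_instr = i_acc * i_mr + d_acc * d_mr
real_cpi = base_cpi + misses_per_instr * miss_penalty
speedup_with_perfect_cache = real_cpi / base_cpi

# Example 2: double the clock rate. ASSUMPTION: memory is no faster in ns,
# so the same miss now costs twice as many (shorter) cycles.
fast_miss_penalty = 2 * miss_penalty
fast_cpi = base_cpi + misses_per_instr * fast_miss_penalty
# Execution time is CPI x cycle time; the fast machine's cycle time is halved.
speedup_from_faster_clock = real_cpi / (fast_cpi / 2)

print(f"misses/instr = {misses_per_instr:.4f}")
print(f"CPI = {real_cpi:.3f}, perfect cache is {speedup_with_perfect_cache:.2f}x faster")
print(f"2x clock: CPI = {fast_cpi:.2f}, speedup = {speedup_from_faster_clock:.2f}x")
```

The doubled clock yields well under a 2x speedup because the stall cycles per instruction double along with it.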

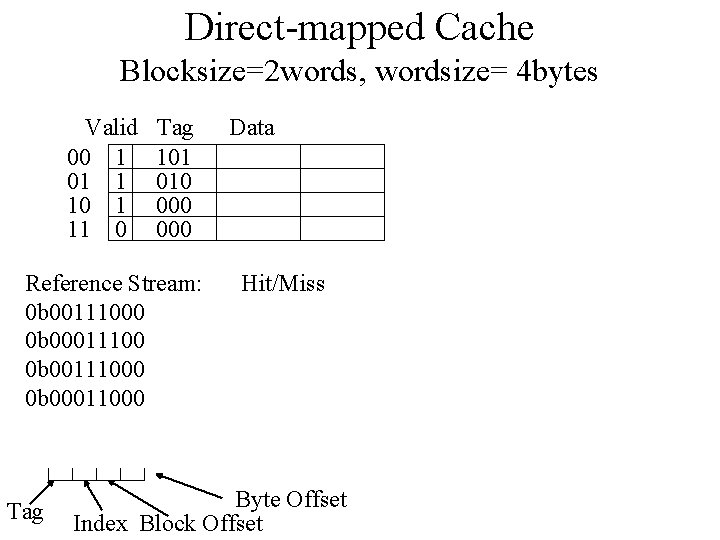

Direct-mapped Cache, Blocksize = 2 words, wordsize = 4 bytes

| Index | Valid | Tag | Data |
|---|---|---|---|
| 00 | 1 | 101 | |
| 01 | 1 | 010 | |
| 10 | 1 | 000 | |
| 11 | 0 | | |

Reference Stream: 0b00111000, 0b00011100, 0b00111000, 0b00011000

For each reference, find: Tag, Index, Block Offset, Byte Offset, Hit/Miss
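A quick simulation starting from the cache state shown here illustrates how addresses that share an index keep evicting each other. The sketch assumes 8-bit addresses split as 3 tag bits, 2 index bits, and 3 offset bits; the helper name is hypothetical.

```python
def run_direct_mapped(addresses, lines, index_bits=2, offset_bits=3):
    """Simulate a direct-mapped cache starting from a given state.

    lines maps index -> tag for every valid line; invalid lines are absent.
    """
    lines = dict(lines)          # do not modify the caller's initial state
    results = []
    for addr in addresses:
        block = addr >> offset_bits
        index = block & ((1 << index_bits) - 1)
        tag = block >> index_bits
        if lines.get(index) == tag:
            results.append("hit")
        else:
            results.append("miss")
            lines[index] = tag   # the new block replaces whatever was there
    return results


# Initial state from the slide: lines 00, 01, 10 valid; line 11 invalid.
initial = {0b00: 0b101, 0b01: 0b010, 0b10: 0b000}
stream = [0b00111000, 0b00011100, 0b00111000, 0b00011000]
conflict_results = run_direct_mapped(stream, initial)
print(conflict_results)
```

Every reference in this stream maps to index 11 while alternating between two tags, so the other three lines sit unused.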

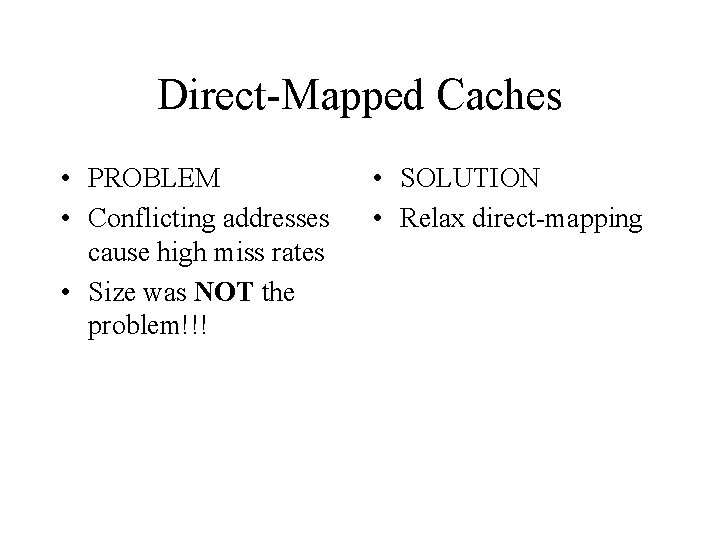

Direct-Mapped Caches • PROBLEM • Conflicting addresses cause high miss rates • Size was NOT the problem!!! • SOLUTION • Relax direct-mapping

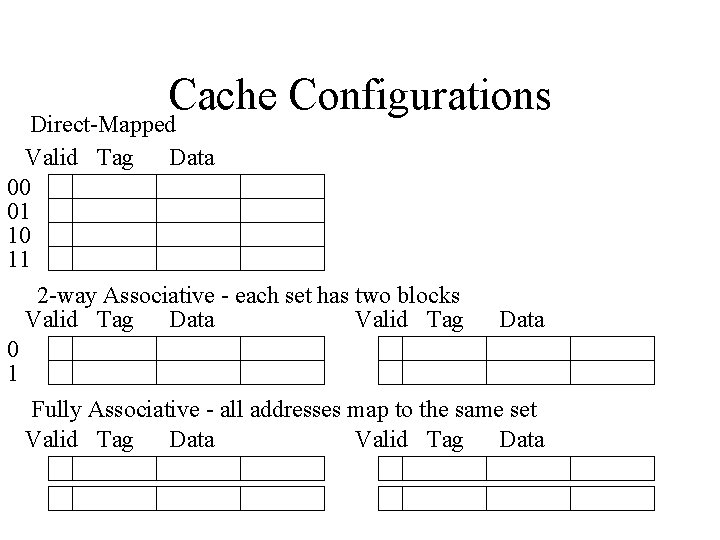

Cache Configurations • Direct-Mapped: lines 00, 01, 10, 11, each with Valid, Tag, Data • 2-way Associative: each set has two blocks (sets 0 and 1, each block with Valid, Tag, Data) • Fully Associative: all addresses map to the same set (each block with Valid, Tag, Data)

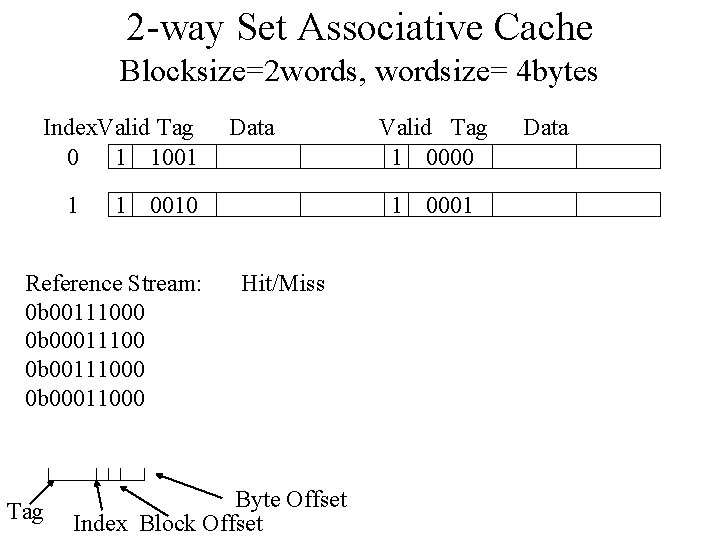

2-way Set Associative Cache, Blocksize = 2 words, wordsize = 4 bytes

| Index | Valid | Tag | Data | Valid | Tag | Data |
|---|---|---|---|---|---|---|
| 0 | 1 | 1001 | | 1 | 0000 | |
| 1 | 1 | 0010 | | 1 | 0001 | |

Reference Stream: 0b00111000, 0b00011100, 0b00111000, 0b00011000

For each reference, find: Tag, Index, Block Offset, Byte Offset, Hit/Miss

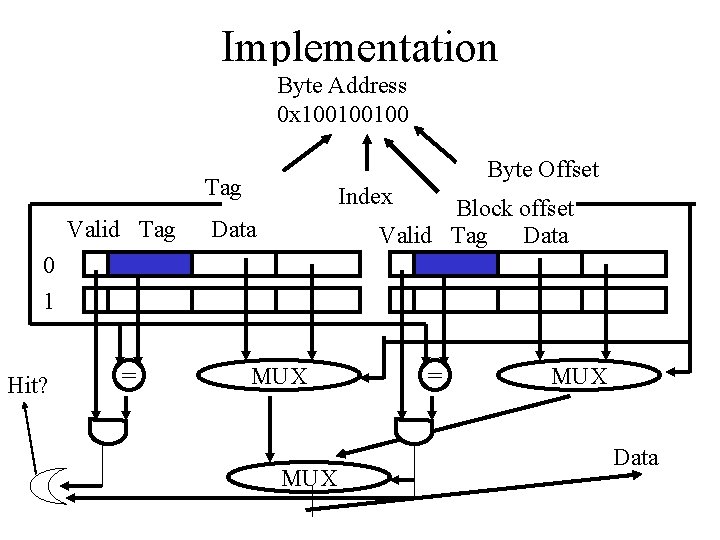

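Implementation • [Figure: the byte address 0b100100100 is split into Tag, Index, Block offset, and Byte offset. The Index selects set 0 or 1; each of the two blocks in the set has its own Valid, Tag, Data and its own comparator (=); the comparator results produce Hit? and drive the MUX that selects the Data.]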

Performance Implications • Increasing associativity increases/decreases hit rate • Increasing associativity increases/decreases access time • Increasing associativity increases/decreases miss penalty

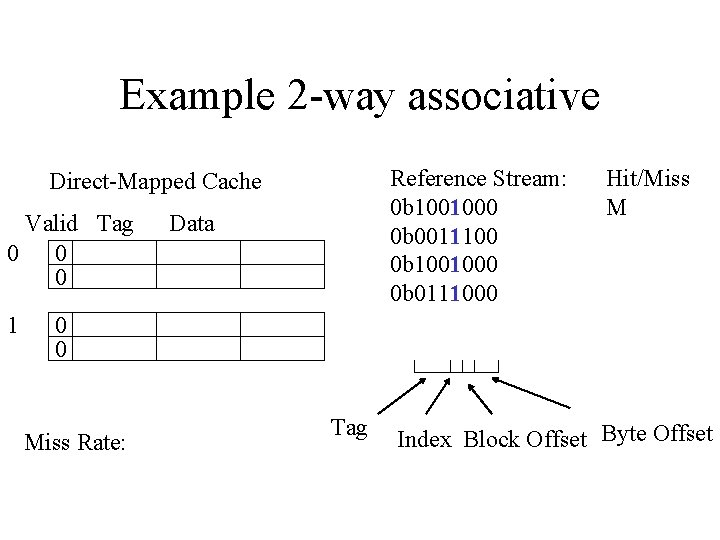

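Example: 2-way associative

Reference Stream: 0b1001000, 0b0011100, 0b1001000, 0b0111000

| Index | Valid | Tag | Data | Valid | Tag | Data |
|---|---|---|---|---|---|---|
| 0 | 0 | | | 0 | | |
| 1 | 0 | | | 0 | | |

For each reference, find: Tag, Index, Block Offset, Byte Offset, Hit/Miss (the first reference is a miss, M). Miss Rate: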

Which block to replace? • 0b1001000 • 0b001100

Replacement Algorithms • LRU & FIFO simple conceptually, but implementation difficult for high assoc. • LRU & FIFO must be approximated with high associativity • Random sometimes better than approximated LRU/FIFO • Tradeoff between accuracy, implementation cost
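For low associativity, exact LRU is easy to model in software. The sketch below runs the 2-way example's reference stream (0b1001000, 0b0011100, 0b1001000, 0b0111000) through an initially empty cache with 2 sets and 8-byte blocks. It keeps each set as a list ordered from least to most recently used, which is a convenience of the model and not how hardware tracks recency. The function name is hypothetical.

```python
def simulate_lru(addresses, num_sets=2, ways=2, offset_bits=3):
    """Set-associative cache with exact LRU replacement, starting empty.

    Returns (results, evicted): the hit/miss outcome per access, and the tag
    evicted on each access (None when nothing was evicted).
    """
    sets = [[] for _ in range(num_sets)]    # per set: tags, LRU first, MRU last
    results, evicted = [], []
    for addr in addresses:
        block = addr >> offset_bits
        index = block % num_sets
        tag = block // num_sets
        tags = sets[index]
        victim = None
        if tag in tags:
            tags.remove(tag)                # will be re-appended as most recent
            results.append("hit")
        else:
            if len(tags) == ways:
                victim = tags.pop(0)        # set is full: evict least recently used
            results.append("miss")
        tags.append(tag)
        evicted.append(victim)
    return results, evicted


stream = [0b1001000, 0b0011100, 0b1001000, 0b0111000]
lru_results, lru_evicted = simulate_lru(stream)
print(lru_results, lru_evicted)
```

Under LRU the final miss evicts the block brought in by 0b0011100, because the block for 0b1001000 was touched more recently. FIFO would instead evict the block for 0b1001000, since it was loaded first.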

L1 cache’s perspective • [Figure: Me → L1 → Memory, where Memory is L2 Cache and DRAM together] • L1’s miss penalty contains the access of L2, and possibly the access of DRAM!!!

Multi-level Caches • Base CPI 1.0, 500 MHz clock • Main memory: 100 cycles, L2: 10 cycles • L1 miss rate per instruction: 5% • With L2, 2% of instructions go to DRAM • What is the speedup with the L2 cache? • There is a typo in the book for this example!

Multi-level Caches • CPI = 1 + memory stalls / instruction
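The speedup question from the multi-level example can be worked through with this formula. The sketch assumes that every L1 miss pays the 10-cycle L2 access, and that the 2% of instructions that also miss in L2 pay the 100-cycle main-memory access on top of that. The variable names are illustrative.

```python
base_cpi = 1.0
l1_miss_rate = 0.05          # L1 misses per instruction
dram_rate = 0.02             # instructions per instruction that go to DRAM with an L2
l2_cycles = 10
memory_cycles = 100

# Without L2: every L1 miss goes straight to main memory.
cpi_without_l2 = base_cpi + l1_miss_rate * memory_cycles

# With L2: every L1 miss pays the L2 access; some also pay main memory.
cpi_with_l2 = base_cpi + l1_miss_rate * l2_cycles + dram_rate * memory_cycles

speedup = cpi_without_l2 / cpi_with_l2
print(f"CPI without L2 = {cpi_without_l2:.1f}, with L2 = {cpi_with_l2:.1f}, "
      f"speedup = {speedup:.2f}x")
```

The clock rate cancels out of the ratio because both configurations run at the same 500 MHz.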

Summary • Direct-mapped – simple – _____ access time – _______ hit rate • Variable block size – still simple – _______ access time

Summary • Associative caches – ____ the access time – ____ the hit rate – associativity above ___ has little to no gain • Multi-level caches – _____ potential miss penalty – _____ average miss penalty
