By: MOSES CHARIKAR, CHANDRA CHEKURI, TOMAS FEDER, AND RAJEEV MOTWANI

Presented By: Sarah Hegab

Outline:

• Motivation
• Main Problem
• Hierarchical Agglomerative Clustering
• A Model: Incremental Clustering
• Different incremental algorithms
• Lower Bounds for incremental algorithms
• Dual Problem

I. Main Problem

The clustering problem is as follows: given $n$ points in a metric space $M$, partition the points into $k$ clusters so as to minimize the maximum cluster diameter.
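To make the objective concrete, here is a minimal sketch of how the cost of a given partition could be evaluated. The function name `max_cluster_diameter` and the sample points on the line are illustrative assumptions, not part of the original slides.

```python
from itertools import combinations


def max_cluster_diameter(clusters, dist):
    """Return the largest diameter over all clusters.

    The diameter of a cluster is the largest pairwise distance between
    its points; a singleton cluster has diameter 0.
    """
    return max(
        (dist(p, q) for cluster in clusters for p, q in combinations(cluster, 2)),
        default=0.0,
    )


def line_dist(p, q):
    """Distance between two points on the real line."""
    return abs(p - q)


# Two candidate 2-clusterings of the same four points (hypothetical data).
split_a = [[0.0, 1.0], [5.0, 6.5]]
split_b = [[0.0], [1.0, 5.0, 6.5]]
cost_a = max_cluster_diameter(split_a, line_dist)
cost_b = max_cluster_diameter(split_b, line_dist)
```

Under this measure `split_a` is the better clustering of the two, since its largest cluster diameter is smaller.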

1. Greedy Incremental Clustering

a) Center-Greedy
b) Diameter-Greedy

a) Center-Greedy

The center-greedy algorithm associates a center with each cluster and merges the two clusters whose centers are closest. The center of the old cluster with the larger radius becomes the new center.

Theorem: The center-greedy algorithm's performance ratio has a lower bound of $2k - 1$.
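The merge rule described above can be sketched as follows. This is only an illustration under stated assumptions: each arriving point starts as its own cluster, a merge is triggered whenever more than $k$ clusters exist, and ties are broken by list order. The names `center_greedy` and `radius` are hypothetical.

```python
def radius(cluster, dist):
    """Largest distance from the cluster's center to any of its points."""
    return max(dist(cluster["center"], p) for p in cluster["points"])


def center_greedy(points, k, dist):
    """Sketch of center-greedy: keep at most k clusters as points arrive."""
    clusters = []  # each cluster is {"center": point, "points": [points]}
    for p in points:
        # Assumption: a new point first forms a singleton cluster.
        clusters.append({"center": p, "points": [p]})
        if len(clusters) <= k:
            continue
        # Find the two clusters whose centers are closest.
        pairs = (
            (i, j)
            for i in range(len(clusters))
            for j in range(i + 1, len(clusters))
        )
        a, b = min(
            pairs,
            key=lambda ij: dist(clusters[ij[0]]["center"], clusters[ij[1]]["center"]),
        )
        ca, cb = clusters[a], clusters[b]
        # The center of the old cluster with the larger radius survives
        # (ties go to the earlier cluster; that tie rule is an assumption).
        keep = ca if radius(ca, dist) >= radius(cb, dist) else cb
        merged = {"center": keep["center"], "points": ca["points"] + cb["points"]}
        clusters = [c for idx, c in enumerate(clusters) if idx not in (a, b)]
        clusters.append(merged)
    return clusters


result = center_greedy([0, 1, 10, 11, 12], 2, lambda p, q: abs(p - q))
```

On this small, well-separated input the sketch recovers the two natural groups; the lower bound in the theorem comes from adversarial inputs, not from inputs like this one.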

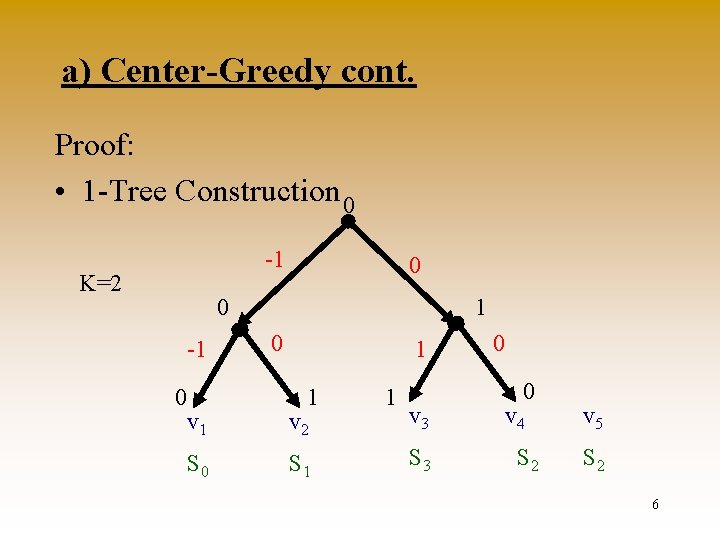

a) Center-Greedy cont.

Proof:

• 1. Tree construction. [Figure: example tree for $K = 2$, with vertices $v_i$ and sets $S_0, \dots, S_3$.]

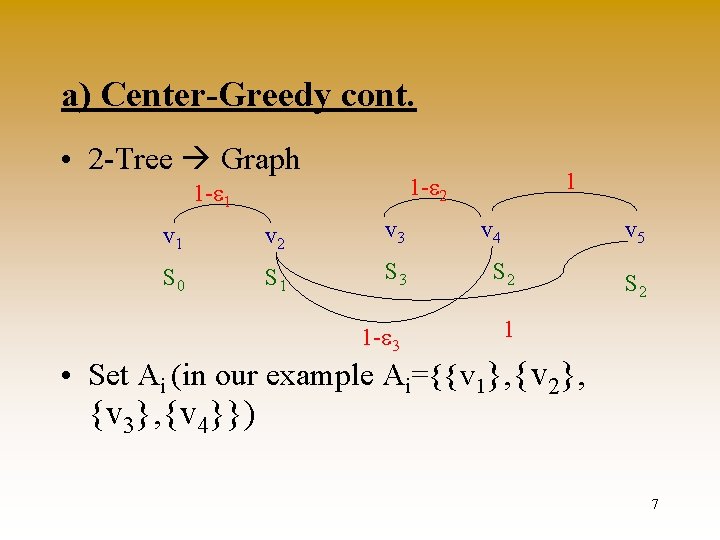

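a) Center-Greedy cont.

• 2. Tree graph. [Figure: graph on the vertices $v_i$ and sets $S_0, \dots, S_3$, with edge weights $1$, $1 - \varepsilon_1$, $1 - \varepsilon_2$, and $1 - \varepsilon_3$.]
• Set $A_i$ (in our example $A_i = \{\{v_1\}, \{v_2\}, \{v_3\}, \{v_4\}\}$)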

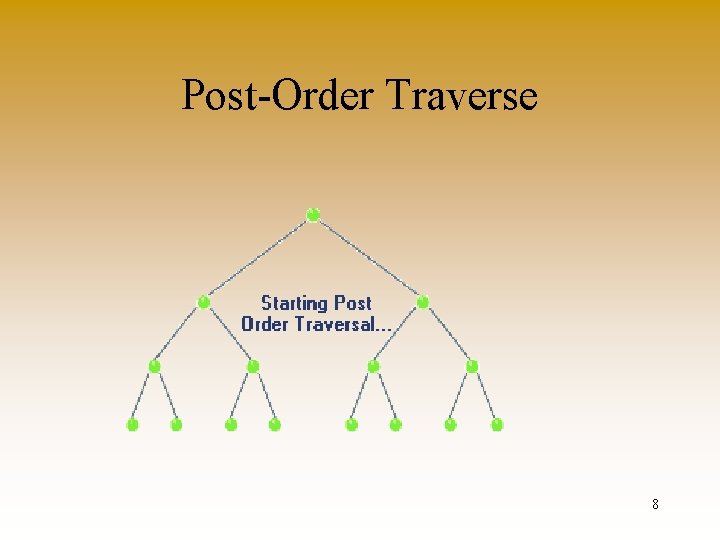

Post-Order Traversal

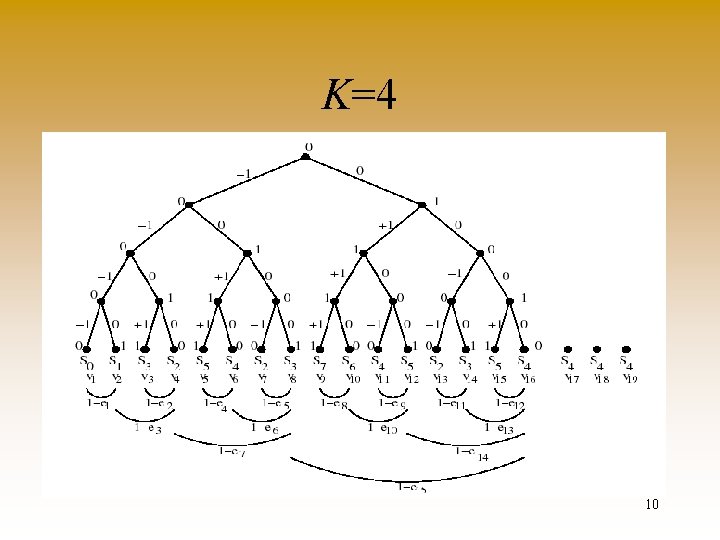

a) Center-Greedy cont.

Claims:

• For $1 \le i \le 2k - 1$, $A_i$ is the set of clusters of center-greedy which contain more than one vertex after the $k + i$ vertices $v_1, \dots, v_{k+i}$ are given.
• There is a $k$-clustering of $G$ of diameter 1. The clustering which achieves this diameter is $\{S_0 \cup S_1, \dots, S_{2k-2} \cup S_{2k-1}\}$.

[Figure: example with $K = 4$]

Competitiveness of Center-Greedy

Theorem: The center-greedy algorithm has a performance ratio of $2k - 1$ in any metric space.

b) Diameter-Greedy

The diameter-greedy algorithm always merges those two clusters which minimize the diameter of the resulting merged cluster.

Theorem: The diameter-greedy algorithm's performance ratio is $\Omega(\log k)$, even on the line.
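A minimal sketch of this merge rule follows, under the same assumptions as for any incremental greedy scheme here: each new point starts as a singleton cluster, a merge happens whenever more than $k$ clusters exist, and ties are broken by list order. The function names are hypothetical.

```python
from itertools import combinations


def diameter(points, dist):
    """Largest pairwise distance within a set of points (0 for a singleton)."""
    return max((dist(p, q) for p, q in combinations(points, 2)), default=0.0)


def diameter_greedy(points, k, dist):
    """Sketch of diameter-greedy: keep at most k clusters as points arrive."""
    clusters = []  # each cluster is a plain list of points
    for p in points:
        # Assumption: a new point first forms a singleton cluster.
        clusters.append([p])
        if len(clusters) <= k:
            continue
        # Merge the pair whose union has the smallest diameter.
        i, j = min(
            combinations(range(len(clusters)), 2),
            key=lambda ij: diameter(clusters[ij[0]] + clusters[ij[1]], dist),
        )
        merged = clusters[i] + clusters[j]
        clusters = [c for idx, c in enumerate(clusters) if idx not in (i, j)]
        clusters.append(merged)
    return clusters


result = diameter_greedy([0, 3, 4, 20, 21], 2, lambda p, q: abs(p - q))
```

Unlike center-greedy, this rule needs no centers, but each step evaluates the diameter of every candidate union, which this sketch does by brute force.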

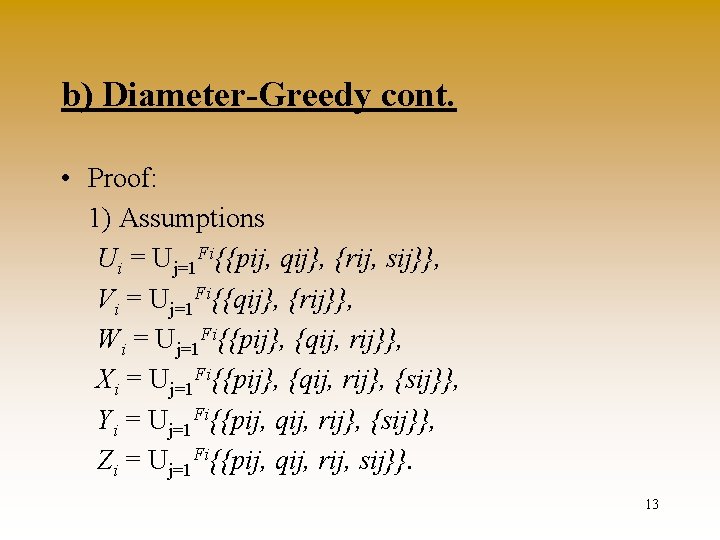

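b) Diameter-Greedy cont.

• Proof: 1) Assumptions

$$
\begin{aligned}
U_i &= \bigcup_{j=1}^{F_i} \{\{p_{ij}, q_{ij}\}, \{r_{ij}, s_{ij}\}\}, \\
V_i &= \bigcup_{j=1}^{F_i} \{\{q_{ij}\}, \{r_{ij}\}\}, \\
W_i &= \bigcup_{j=1}^{F_i} \{\{p_{ij}\}, \{q_{ij}, r_{ij}\}\}, \\
X_i &= \bigcup_{j=1}^{F_i} \{\{p_{ij}\}, \{q_{ij}, r_{ij}\}, \{s_{ij}\}\}, \\
Y_i &= \bigcup_{j=1}^{F_i} \{\{p_{ij}, q_{ij}, r_{ij}\}, \{s_{ij}\}\}, \\
Z_i &= \bigcup_{j=1}^{F_i} \{\{p_{ij}, q_{ij}, r_{ij}, s_{ij}\}\}.
\end{aligned}
$$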

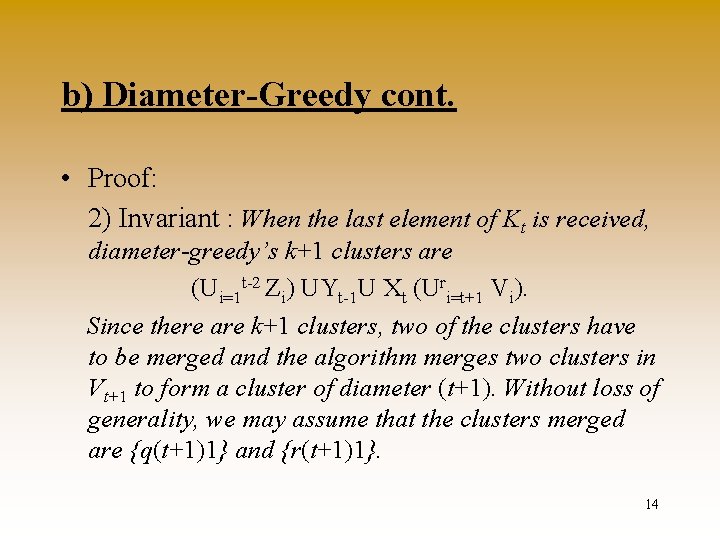

b) Diameter-Greedy cont.

• Proof: 2) Invariant: When the last element of $K_t$ is received, diameter-greedy's $k + 1$ clusters are $\left(\bigcup_{i=1}^{t-2} Z_i\right) \cup Y_{t-1} \cup X_t \cup \left(\bigcup_{i=t+1}^{r} V_i\right)$. Since there are $k + 1$ clusters, two of the clusters have to be merged, and the algorithm merges two clusters in $V_{t+1}$ to form a cluster of diameter $(t + 1)$. Without loss of generality, we may assume that the clusters merged are $\{q_{(t+1)1}\}$ and $\{r_{(t+1)1}\}$.

Competitiveness of Diameter-Greedy

Theorem: For $k = 2$, the diameter-greedy algorithm has a performance ratio of 3 in any metric space.

2. Doubling Algorithm

a) Deterministic
b) Randomized
c) Oblivious
d) Randomized Oblivious

a) Deterministic doubling algorithm

• The algorithm works in phases.
• At the start of phase $i$ it has $k + 1$ clusters.
• It uses two parameters $\alpha$ and $\beta$ such that $\alpha/(\alpha - 1) \le \beta$.

At the start of phase $i$ the following is assumed:

1. For each cluster $C_j$, the radius of $C_j$, defined as $\max_{p \in C_j} d(c_j, p)$, is at most $\alpha d_i$.
2. For each pair of clusters $C_j$ and $C_l$, the intercenter distance satisfies $d(c_j, c_l) \ge d_i$.
3. $d_i \le \mathrm{OPT}$.

a) Deterministic doubling algorithm

• Each phase has two stages:

1. Merging stage, in which the algorithm reduces the number of clusters by merging certain pairs.
2. Update stage, in which the algorithm accepts new updates and tries to maintain at most $k$ clusters without increasing the radius of the clusters or violating the invariants.

A phase ends when the number of clusters exceeds $k$.

a) Deterministic doubling algorithm

• Definition: The $t$-threshold graph on a set of points $P = \{p_1, p_2, \dots, p_n\}$ is the graph $G = (P, E)$ such that $(p_i, p_j) \in E$ if and only if $d(p_i, p_j) \le t$.
• The merging stage sets $d_{i+1} = \beta d_i$ and defines $G$ as the $d_{i+1}$-threshold graph on the centers $c_1, \dots, c_{k+1}$.
• Merging yields new clusters $C'_1, \dots, C'_m$. If $m = k + 1$, phase $i$ ends at this point.
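The threshold-graph definition translates directly into code. The sketch below builds the graph as an adjacency dictionary over point indices; the function name `threshold_graph` and the sample points are illustrative assumptions.

```python
from itertools import combinations


def threshold_graph(points, t, dist):
    """Return the t-threshold graph as {index: set of neighbor indices}.

    Two points are adjacent exactly when their distance is at most t.
    """
    adj = {i: set() for i in range(len(points))}
    for i, j in combinations(range(len(points)), 2):
        if dist(points[i], points[j]) <= t:
            adj[i].add(j)
            adj[j].add(i)
    return adj


# Points on the line with threshold t = 2 (hypothetical data).
adj = threshold_graph([0, 1, 3, 10], 2, lambda p, q: abs(p - q))
```

In the merging stage the same construction would be applied to the $k + 1$ cluster centers with $t = d_{i+1}$.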

a) Deterministic doubling algorithm

• Lemma: The pairwise distance between cluster centers after the merging stage of phase $i$ is at least $d_{i+1}$.
• Lemma: The radius of the clusters after the merging stage of phase $i$ is at most $d_{i+1} + \alpha d_i \le \alpha d_{i+1}$.
• The update stage continues while the number of clusters is at most $k$. During it, no cluster's radius may exceed the bound $\alpha d_{i+1}$. Then phase $i$ ends.
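The inequality in the radius lemma is where the constraint on the two parameters comes from. The short derivation below is a worked check using only $d_{i+1} = \beta d_i$ and assuming $\alpha > 1$; the instance $\alpha = \beta = 2$ is an illustrative choice.

```latex
\begin{aligned}
d_{i+1} + \alpha d_i \le \alpha d_{i+1}
  &\iff \beta d_i + \alpha d_i \le \alpha \beta d_i \\
  &\iff \alpha + \beta \le \alpha \beta \\
  &\iff \alpha \le \beta(\alpha - 1) \\
  &\iff \frac{\alpha}{\alpha - 1} \le \beta \qquad (\alpha > 1).
\end{aligned}
% Example: \alpha = \beta = 2 gives 2/(2 - 1) = 2 \le 2, so the bound holds with equality.
```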

a) Deterministic doubling algorithm

• Initialization: the algorithm waits until $k + 1$ points have arrived and then enters phase 1, with each point as the center of a cluster containing just itself, and with $d_1$ set to the distance between the closest pair of points.

a) Deterministic doubling algorithm

• Lemma: The $k + 1$ clusters at the end of the $i$th phase satisfy the following conditions:

1. The radius of the clusters is at most $\alpha d_{i+1}$.
2. The pairwise distance between the cluster centers is at least $d_{i+1}$.
3. $d_{i+1} \le \mathrm{OPT}$, where OPT is the diameter of the optimal clustering for the current set of points.

Theorem: The doubling algorithm has a performance ratio of 8 in any metric space.
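The phases described above can be put together in a short sketch. Several details here are assumptions rather than statements from the slides: the merging stage picks a remaining center in list order and absorbs all its neighbors in the threshold graph, the update stage puts a new point into the first cluster whose center is within $\alpha d_{i+1}$ and otherwise opens a new cluster, the defaults $\alpha = \beta = 2$ are one choice satisfying $\alpha/(\alpha - 1) \le \beta$, and the input is assumed to contain at least $k + 1$ distinct points. The name `doubling_clustering` is hypothetical.

```python
from itertools import combinations, islice

_DONE = object()  # sentinel marking the end of the input stream


def doubling_clustering(points, k, dist, alpha=2.0, beta=2.0):
    """Sketch of the deterministic doubling algorithm.

    Returns (clusters, d): clusters is a list of [center, members] and d is
    the threshold in force when the input ended.
    Assumes at least k + 1 distinct input points.
    """
    stream = iter(points)
    # Initialization: the first k + 1 points are singleton clusters and
    # d_1 is the distance between the closest pair.
    clusters = [[p, [p]] for p in islice(stream, k + 1)]
    d = min(dist(a[0], b[0]) for a, b in combinations(clusters, 2))
    while True:
        # Merging stage: d_{i+1} = beta * d_i, then merge along the
        # d_{i+1}-threshold graph on the centers.
        d *= beta
        remaining, clusters = clusters, []
        while remaining:
            head, rest = remaining[0], remaining[1:]
            near = [c for c in rest if dist(head[0], c[0]) <= d]
            far = [c for c in rest if dist(head[0], c[0]) > d]
            members = head[1] + [p for c in near for p in c[1]]
            clusters.append([head[0], members])
            remaining = far
        # Update stage: skipped entirely if merging left k + 1 clusters.
        while len(clusters) <= k:
            p = next(stream, _DONE)
            if p is _DONE:
                return clusters, d
            for c in clusters:
                if dist(c[0], p) <= alpha * d:
                    c[1].append(p)
                    break
            else:
                clusters.append([p, [p]])
        # More than k clusters now exist, so the phase ends and the loop
        # starts the next merging stage.


line = lambda p, q: abs(p - q)
small, d_small = doubling_clustering([0, 1, 2, 10, 11], 2, line)
larger, d_larger = doubling_clustering([0, 1, 2, 10, 11, 30], 2, line)
```

In the second call the far point 30 forces several consecutive merging stages with no update stage in between, which is the $m = k + 1$ case from the merging-stage description.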

a) Deterministic doubling algorithm

Example showing that the analysis is tight: let $k \ge 3$. The input consists of $k + 3$ points $p_1, \dots, p_{k+3}$. The points $p_1, \dots, p_{k+1}$ are at pairwise distance 1; $p_{k+2}$ and $p_{k+3}$ are at distance 4 from the others and at distance 8 from each other.

b) Randomized doubling algorithm

• Choose a random value $r$ from $[1/e, 1]$ according to the probability density function $1/r$.
• Let $x$ be the minimum pairwise distance among the first $k + 1$ points, and set $d_1 = rx$.
• $\beta = e$, $\alpha = e/(e - 1)$.
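Drawing $r$ with density $1/r$ on $[1/e, 1]$ can be done by inverse-transform sampling: the CDF is $F(r) = \ln r + 1$, so $r = e^{u-1}$ for $u$ uniform on $[0, 1)$. The sketch below shows this; the function names `sample_r` and `initial_threshold` are hypothetical.

```python
import math
import random


def sample_r(rng=random):
    """Draw r from [1/e, 1) with density 1/r via the inverse CDF.

    F(r) = ln(r) + 1 on [1/e, 1], so r = exp(u - 1) for uniform u.
    """
    u = rng.random()  # uniform on [0, 1)
    return math.exp(u - 1.0)


def initial_threshold(x, rng=random):
    """Return d_1 = r * x, where x is the minimum pairwise distance
    among the first k + 1 points."""
    return sample_r(rng) * x


rng = random.Random(0)  # fixed seed only to make the example repeatable
samples = [sample_r(rng) for _ in range(20000)]
```

Since the density is $1/r$, the mean of $r$ is $\int_{1/e}^{1} r \cdot \frac{1}{r}\,dr = 1 - 1/e$, which gives a quick sanity check on the sampler.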

b) Randomized doubling algorithm

Theorem: The randomized doubling algorithm has an expected performance ratio of $2e$ in any metric space. The same bound is also achieved for the radius measure.

c) Oblivious clustering algorithm

• Does not need to know $k$.
• Assume we have an upper bound of 1 on the maximum distance between points.
• Points are maintained in a tree.

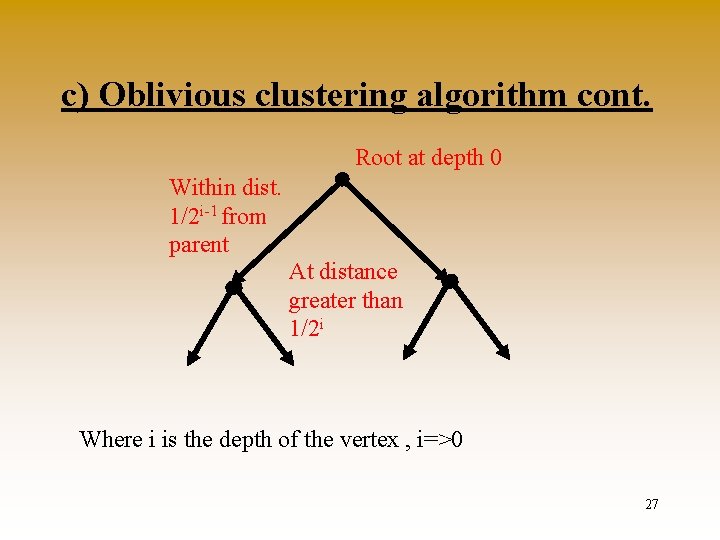

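c) Oblivious clustering algorithm cont.

• The root is at depth 0.
• A vertex at depth $i$ is within distance $1/2^{i-1}$ of its parent.
• A vertex at depth $i$ is at distance greater than $1/2^i$ from the other vertices at depth $i$.

Here $i \ge 0$ is the depth of the vertex.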

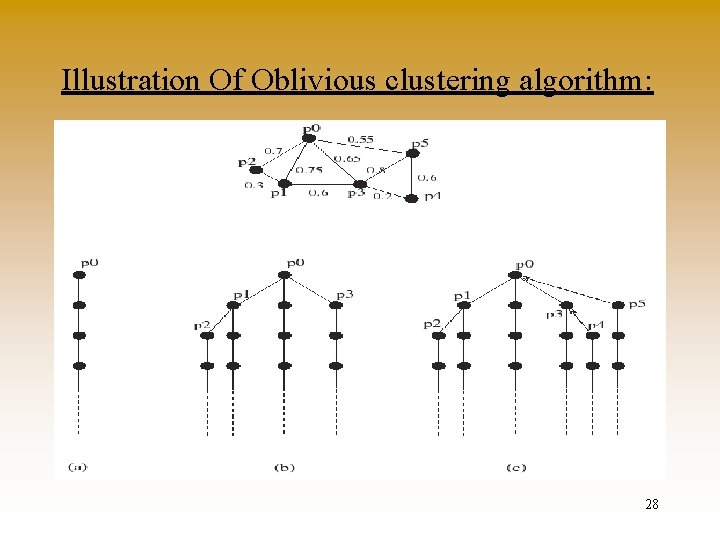

Illustration of the oblivious clustering algorithm:

c) Oblivious clustering algorithm cont.

• How do we obtain the $k$ clusters from the tree?
• Given $k$, let $i$ be the greatest depth containing at most $k$ points.
• These points are the cluster centers. The subtrees of the vertices at depth $i$ are the clusters.
• As points are added, the number of vertices at depth $i$ increases. If it goes beyond $k$, we change $i$ to $i - 1$, collapsing certain clusters; otherwise, the new point is inserted into one of the existing clusters.

c) Oblivious clustering algorithm cont.

Theorem: The algorithm that outputs the $k$ clusters obtained from the tree construction has a performance ratio of 8 for the diameter measure and the radius measure.

• Let the optimal diameter $d$ satisfy $1/2^{i+1} < d \le 1/2^i$.
• Then points at depth $i$ are in different optimal clusters, so there are at most $k$ of them.
• Let $j \ge i$ be the greatest depth containing at most $k$ points.
• The points of each subtree rooted at depth $j$ are within distance $1/2^j + 1/2^{j+1} + 1/2^{j+2} + \cdots \le 1/2^{j-1} < 4d$ of the subtree's root.
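The last step of the argument is a geometric series combined with the two facts $j \ge i$ and $d > 1/2^{i+1}$. Written out as a worked check (a reading of the bound above, not an additional claim from the slides):

```latex
\sum_{m=j}^{\infty} \frac{1}{2^{m}}
  = \frac{1}{2^{j-1}}
  \le \frac{1}{2^{i-1}}
  = 4 \cdot \frac{1}{2^{i+1}}
  < 4d .
% Every point of a cluster is therefore within 4d of the cluster's center,
% so the cluster radius is below 4d and, by the triangle inequality,
% the cluster diameter is below 8d.
```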

d) Randomized Oblivious

• The distance threshold for depth $i$ is $r/e^i$.
• $r$ is chosen once at random from $[1, e]$, according to the probability density function $1/r$.
• The expected diameter is at most $2e \cdot \mathrm{OPT}$, where OPT is the optimal diameter.

Lower Bounds

Theorem 1: For $k = 2$, there is a lower bound of $2$ and $2 - 1/2^{k/2}$ on the performance ratio of deterministic and randomized algorithms, respectively, for incremental clustering on the line.

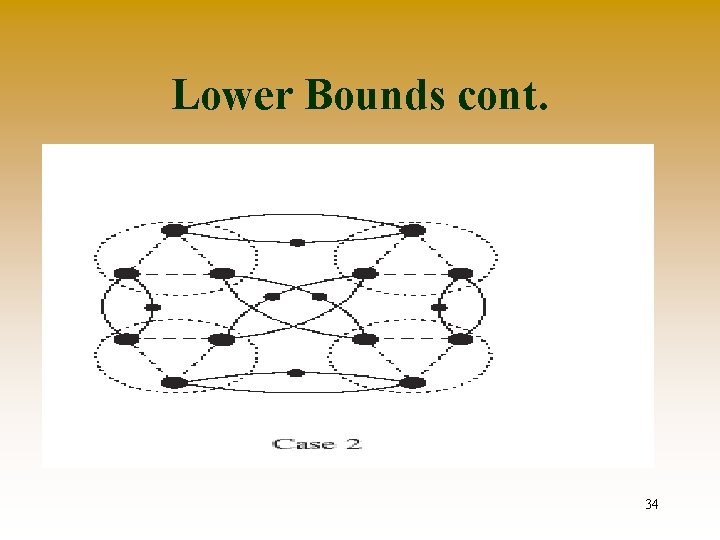

Lower Bounds cont.

Theorem 2: There is a lower bound of $1 + \sqrt{2}$ on the performance ratio of any deterministic incremental clustering algorithm for arbitrary metric spaces.

Lower Bounds cont.

Lower Bounds cont.

Theorem 3: For any $\varepsilon > 0$ and $k \ge 2$, there is a lower bound of $2 - \varepsilon$ on the performance ratio of any randomized incremental algorithm.

Lower Bounds cont.

Theorem 4: For the radius measure, no deterministic incremental clustering algorithm has a performance ratio better than 3, and no randomized algorithm has a ratio better than $3 - \varepsilon$ for any fixed $\varepsilon > 0$.

II. Dual Problem

For a sequence of points $p_1, p_2, \dots, p_n \in \mathbb{R}^d$, cover each point with a unit ball in $\mathbb{R}^d$ as it arrives, so as to minimize the total number of balls used.

II. Dual Problem

Rogers' Theorem: $\mathbb{R}^d$ can be covered by any convex shape with covering density $O(d \log d)$.

Theorem: For the dual clustering problem in $\mathbb{R}^d$, there is an incremental algorithm with performance ratio $O(2^d d \log d)$.

Theorem: For the dual clustering problem in $\mathbb{R}^d$, any incremental algorithm must have performance ratio $\Omega((\log d)/(\log \log d))$.
