Bulk Data Copy Generalizations (some DMI/JSDL overlap)

Bulk Copying: Recursive file/dir copying between multiple sources and sinks (potentially a draft straw-man proposal for a ‘Bulk Copy Document’?)

david.meredith@stfc.ac.uk

Data Copy Activity Descriptions (JSDL and DMI)

• Some overlap between JSDL Data Staging and DMI DEPRs.
• The Source/Target <jsdl:DataStaging/> element is largely similar to the Source/Sink <dmi:DEPR/> element.
• Both capture the source/target URI and credentials.
• At present, neither JSDL DS nor DMI fully captures our requirements (this is not a criticism; each is intended to address its existing use cases, which only partially overlap with the requirements for a bulk data copy activity!).

Other
• Condor Stork - based on Condor Class-Ads
• Not sure if Globus has/intends a similar definition in its new developments (e.g. SaaS), anyone?

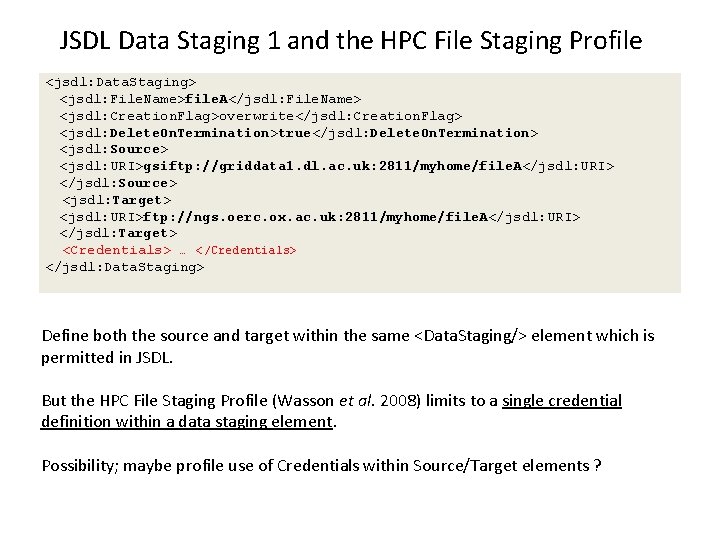

JSDL Data Staging 1 and the HPC File Staging Profile

    <jsdl:DataStaging>
      <jsdl:FileName>fileA</jsdl:FileName>
      <jsdl:CreationFlag>overwrite</jsdl:CreationFlag>
      <jsdl:DeleteOnTermination>true</jsdl:DeleteOnTermination>
      <jsdl:Source>
        <jsdl:URI>gsiftp://griddata1.dl.ac.uk:2811/myhome/fileA</jsdl:URI>
      </jsdl:Source>
      <jsdl:Target>
        <jsdl:URI>ftp://ngs.oerc.ox.ac.uk:2811/myhome/fileA</jsdl:URI>
      </jsdl:Target>
      <Credentials> … </Credentials>
    </jsdl:DataStaging>

Both the source and target are defined within the same <DataStaging/> element, which is permitted in JSDL. However, the HPC File Staging Profile (Wasson et al. 2008) permits only a single credential definition within a data staging element.

Possibility: maybe profile the use of Credentials within Source/Target elements?

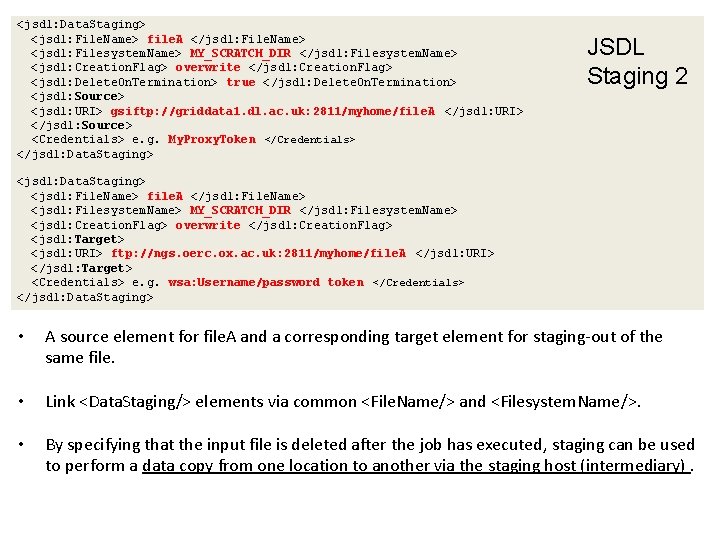

JSDL Staging 2

    <jsdl:DataStaging>
      <jsdl:FileName>fileA</jsdl:FileName>
      <jsdl:FilesystemName>MY_SCRATCH_DIR</jsdl:FilesystemName>
      <jsdl:CreationFlag>overwrite</jsdl:CreationFlag>
      <jsdl:DeleteOnTermination>true</jsdl:DeleteOnTermination>
      <jsdl:Source>
        <jsdl:URI>gsiftp://griddata1.dl.ac.uk:2811/myhome/fileA</jsdl:URI>
      </jsdl:Source>
      <Credentials> e.g. MyProxyToken </Credentials>
    </jsdl:DataStaging>

    <jsdl:DataStaging>
      <jsdl:FileName>fileA</jsdl:FileName>
      <jsdl:FilesystemName>MY_SCRATCH_DIR</jsdl:FilesystemName>
      <jsdl:CreationFlag>overwrite</jsdl:CreationFlag>
      <jsdl:Target>
        <jsdl:URI>ftp://ngs.oerc.ox.ac.uk:2811/myhome/fileA</jsdl:URI>
      </jsdl:Target>
      <Credentials> e.g. wsa:Username/password token </Credentials>
    </jsdl:DataStaging>

• A source element for fileA and a corresponding target element for staging-out of the same file.
• Link <DataStaging/> elements via common <FileName/> and <FilesystemName/>.
• By specifying that the input file is deleted after the job has executed, staging can be used to perform a data copy from one location to another via the staging host (intermediary).
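
The linking rule above (a Source and a Target that share the same <FileName/> and <FilesystemName/>) can be sketched in Python. This is only an illustration under assumptions: the function name `implied_copies`, the `example.org` hosts and the trimmed-down document are hypothetical, and the JSDL 1.0 namespace URI is assumed to be the one used.

```python
import xml.etree.ElementTree as ET

# Assumed JSDL 1.0 namespace; adjust if your documents use another one.
NS = {"jsdl": "http://schemas.ggf.org/jsdl/2005/11/jsdl"}

# Hypothetical, trimmed-down document: one stage-in and one stage-out of fileA.
DOC = """
<jsdl:JobDescription xmlns:jsdl="http://schemas.ggf.org/jsdl/2005/11/jsdl">
  <jsdl:DataStaging>
    <jsdl:FileName>fileA</jsdl:FileName>
    <jsdl:FilesystemName>MY_SCRATCH_DIR</jsdl:FilesystemName>
    <jsdl:Source><jsdl:URI>gsiftp://source.example.org/myhome/fileA</jsdl:URI></jsdl:Source>
  </jsdl:DataStaging>
  <jsdl:DataStaging>
    <jsdl:FileName>fileA</jsdl:FileName>
    <jsdl:FilesystemName>MY_SCRATCH_DIR</jsdl:FilesystemName>
    <jsdl:Target><jsdl:URI>ftp://sink.example.org/myhome/fileA</jsdl:URI></jsdl:Target>
  </jsdl:DataStaging>
</jsdl:JobDescription>
"""


def implied_copies(xml_text):
    """Return (source URI, target URI) pairs linked by FileName + FilesystemName."""
    root = ET.fromstring(xml_text)
    sources, targets = {}, {}
    for ds in root.findall("jsdl:DataStaging", NS):
        key = (
            ds.findtext("jsdl:FileName", default="", namespaces=NS).strip(),
            ds.findtext("jsdl:FilesystemName", default="", namespaces=NS).strip(),
        )
        src = ds.findtext("jsdl:Source/jsdl:URI", namespaces=NS)
        tgt = ds.findtext("jsdl:Target/jsdl:URI", namespaces=NS)
        if src:
            sources.setdefault(key, []).append(src.strip())
        if tgt:
            targets.setdefault(key, []).append(tgt.strip())
    # A copy is implied wherever a staged-in file is also staged out.
    return [(s, t) for key in sources for s in sources[key] for t in targets.get(key, [])]


copies = implied_copies(DOC)
```

Here `copies` holds the single source-to-target pair that the two staging elements imply; the staging host itself never appears in the result, which is the sense in which it acts only as an intermediary.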

Using Staging for Bulk Copying

• In the context of bulk copying, the file staging host (intermediary) is redundant:
  – We don’t need to uniquely name and aggregate files on the staging host.
• No equivalent of <dmi:DataLocations/> for defining alternative locations for a source and sink (a nice feature of DMI).
• JSDL is designed to describe a single activity which is atomic from the perspective of an external user (staging is part of this atomic activity). In bulk copying, we need to identify and report on the status of each copy operation.
• Some additional (proprietary?) elements are required (e.g. <dmi:TransferRequirements/>, <other:FileSelector/>, abstract URI connection properties for different URI schemes, e.g. iRODS/SRB).

OGSA DMI

• The OGSA Data Movement Interface (DMI) (Antonioletti et al. 2008) defines a number of elements for describing and interacting with a data transfer activity.
• The data source and destination are each described separately with a Data End Point Reference (DEPR), which is a specialized form of WS-Address element (Box et al. 2004).
• In contrast to the JSDL data staging model, a DEPR facilitates the definition of one or more <Data/> elements within a <DataLocations/> element. This is used to define alternative locations for the data source and/or sink.
• An implementation can select between its supported protocols and select/retry different source/sink combinations (this improves resilience and the likelihood of performing a successful copy).
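
The select/retry behaviour described in the last bullet can be sketched as follows. This is a minimal illustration, not the DMI algorithm: the function `copy_with_alternatives`, the dictionary layout, the protocol labels and the `fake_copy` stand-in are all assumptions made for the example.

```python
from itertools import product


def copy_with_alternatives(sources, sinks, supported, attempt_copy, max_attempts=1):
    """Try source/sink alternatives in order; return the first pair that succeeds.

    sources/sinks: lists of {"protocol": ..., "url": ...} alternatives
    supported:     set of protocol labels this implementation can handle
    attempt_copy:  callable(source_url, sink_url) -> bool (hypothetical transfer hook)
    """
    usable_sources = [d for d in sources if d["protocol"] in supported]
    usable_sinks = [d for d in sinks if d["protocol"] in supported]
    for src, snk in product(usable_sources, usable_sinks):
        for _ in range(max_attempts):
            if attempt_copy(src["url"], snk["url"]):
                return src["url"], snk["url"]
    return None  # every supported combination failed


# Two alternative source locations, one sink location (illustrative URLs).
sources = [
    {"protocol": "gridftp", "url": "gsiftp://example.org/name/of/the/dir/"},
    {"protocol": "srm", "url": "srm://example.org/name/of/the/dir/"},
]
sinks = [{"protocol": "gridftp", "url": "gsiftp://sink.example.org/dir/"}]


def fake_copy(source_url, sink_url):
    """Stand-in for a real transfer: pretend only the SRM source is reachable."""
    return source_url.startswith("srm://")


chosen = copy_with_alternatives(sources, sinks, {"gridftp", "srm"}, fake_copy)
```

With these inputs the GridFTP source fails and the SRM alternative is chosen, which is the resilience gain that alternative data locations are meant to provide.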

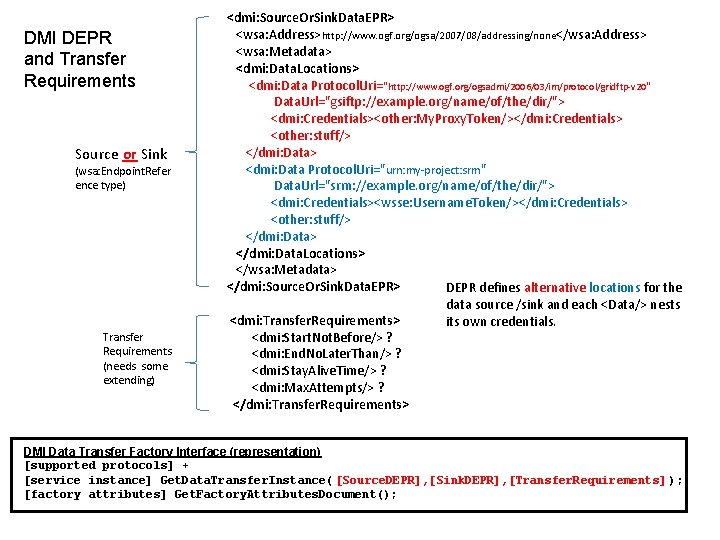

DMI DEPR and Transfer Requirements

Source or Sink (wsa:EndpointReference type):

    <dmi:SourceOrSinkDataEPR>
      <wsa:Address>http://www.ogf.org/ogsa/2007/08/addressing/none</wsa:Address>
      <wsa:Metadata>
        <dmi:DataLocations>
          <dmi:Data ProtocolUri="http://www.ogf.org/ogsadmi/2006/03/im/protocol/gridftp-v20"
                    DataUrl="gsiftp://example.org/name/of/the/dir/">
            <dmi:Credentials><other:MyProxyToken/></dmi:Credentials>
            <other:stuff/>
          </dmi:Data>
          <dmi:Data ProtocolUri="urn:my-project:srm"
                    DataUrl="srm://example.org/name/of/the/dir/">
            <dmi:Credentials><wsse:UsernameToken/></dmi:Credentials>
            <other:stuff/>
          </dmi:Data>
        </dmi:DataLocations>
      </wsa:Metadata>
    </dmi:SourceOrSinkDataEPR>

DEPR defines alternative locations for the data source/sink and each <Data/> nests its own credentials.

Transfer Requirements (needs some extending):

    <dmi:TransferRequirements>
      <dmi:StartNotBefore/> ?
      <dmi:EndNoLaterThan/> ?
      <dmi:StayAliveTime/> ?
      <dmi:MaxAttempts/> ?
    </dmi:TransferRequirements>

DMI Data Transfer Factory Interface (representation)

    [supported protocols] + [service instance] GetDataTransferInstance([SourceDEPR], [SinkDEPR], [TransferRequirements]);
    [factory attributes] GetFactoryAttributesDocument();

Current DMI Limitations for Bulk Copying (for multiple sources and sinks)

• DMI is intended to describe only a single data copy operation between one source and one sink (this is by design). To perform several transfers, a client must invoke the DMI service factory multiple times, creating multiple DMI service instances.
• We require a single message packet that wraps multiple transfers into a single ‘atomic’ activity rather than having to repeatedly invoke the DMI service factory (broadly similar to defining multiple JSDL data staging elements).
• Some of the existing functional spec elements require extension / slight modification (in particular the addition of <xsd:any/> extension points to embed proprietary info).

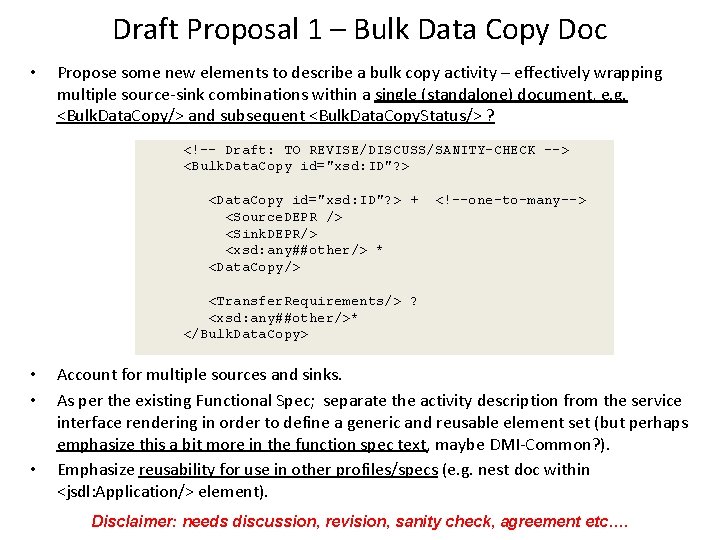

Draft Proposal 1 – Bulk Data Copy Doc

• Propose some new elements to describe a bulk copy activity, effectively wrapping multiple source-sink combinations within a single (standalone) document, e.g. <BulkDataCopy/> and subsequent <BulkDataCopyStatus/>?

    <!-- Draft: TO REVISE/DISCUSS/SANITY-CHECK -->
    <BulkDataCopy id="xsd:ID"?>
      <DataCopy id="xsd:ID"?> +   <!--one-to-many-->
        <SourceDEPR/>
        <SinkDEPR/>
        <xsd:any##other/> *
      </DataCopy>
      <TransferRequirements/> ?
      <xsd:any##other/> *
    </BulkDataCopy>

• Account for multiple sources and sinks.
• As per the existing Functional Spec: separate the activity description from the service interface rendering in order to define a generic and reusable element set (but perhaps emphasize this a bit more in the functional spec text, maybe DMI-Common?).
• Emphasize reusability for use in other profiles/specs (e.g. nest the doc within the <jsdl:Application/> element).

Disclaimer: needs discussion, revision, sanity check, agreement etc.
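
To make the proposed structure concrete, the following Python sketch assembles a document of that shape. It is only an illustration of the draft: the helper `build_bulk_data_copy` is hypothetical, the `DataUrl` attribute is placed directly on the DEPR elements as a simplification (a real DEPR would nest <DataLocations/>), and the URLs are placeholders.

```python
import xml.etree.ElementTree as ET


def build_bulk_data_copy(doc_id, copies, max_attempts=None):
    """Build a draft <BulkDataCopy/> element.

    copies: iterable of (copy id, source URL, sink URL) tuples, one per <DataCopy/>.
    """
    root = ET.Element("BulkDataCopy", {"id": doc_id})
    for copy_id, source_url, sink_url in copies:
        copy = ET.SubElement(root, "DataCopy", {"id": copy_id})
        # Simplification: the URL sits directly on the DEPR element.
        ET.SubElement(copy, "SourceDEPR", {"DataUrl": source_url})
        ET.SubElement(copy, "SinkDEPR", {"DataUrl": sink_url})
    if max_attempts is not None:
        # One optional <TransferRequirements/> for the whole document.
        requirements = ET.SubElement(root, "TransferRequirements")
        ET.SubElement(requirements, "MaxAttempts").text = str(max_attempts)
    return root


doc = build_bulk_data_copy(
    "bulk1",
    [
        ("copy1", "gsiftp://a.example.org/dir1/", "srm://b.example.org/dir1/"),
        ("copy2", "gsiftp://a.example.org/dir2/", "srm://b.example.org/dir2/"),
    ],
    max_attempts=3,
)
xml_text = ET.tostring(doc, encoding="unicode")
```

The resulting `xml_text` is one standalone message carrying both copies, so a service would need only one invocation rather than one per source-sink pair.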

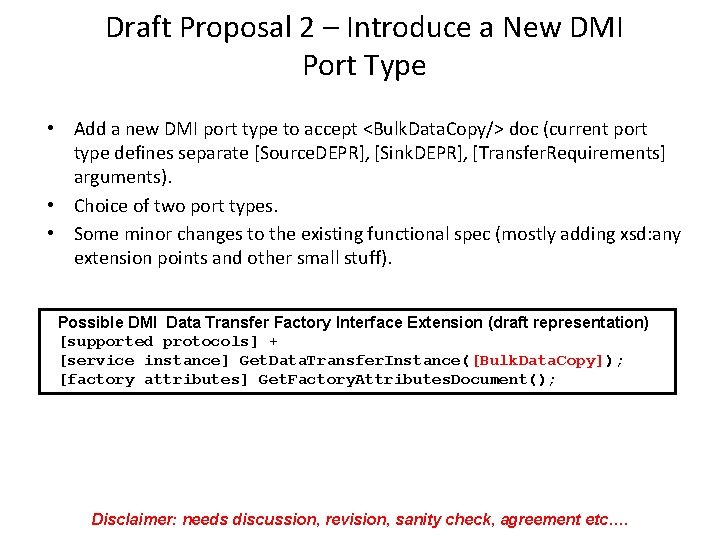

Draft Proposal 2 – Introduce a New DMI Port Type

• Add a new DMI port type to accept the <BulkDataCopy/> doc (the current port type defines separate [SourceDEPR], [SinkDEPR], [TransferRequirements] arguments).
• Choice of two port types.
• Some minor changes to the existing functional spec (mostly adding xsd:any extension points and other small stuff).

Possible DMI Data Transfer Factory Interface Extension (draft representation)

    [supported protocols] + [service instance] GetDataTransferInstance([BulkDataCopy]);
    [factory attributes] GetFactoryAttributesDocument();

Disclaimer: needs discussion, revision, sanity check, agreement etc.

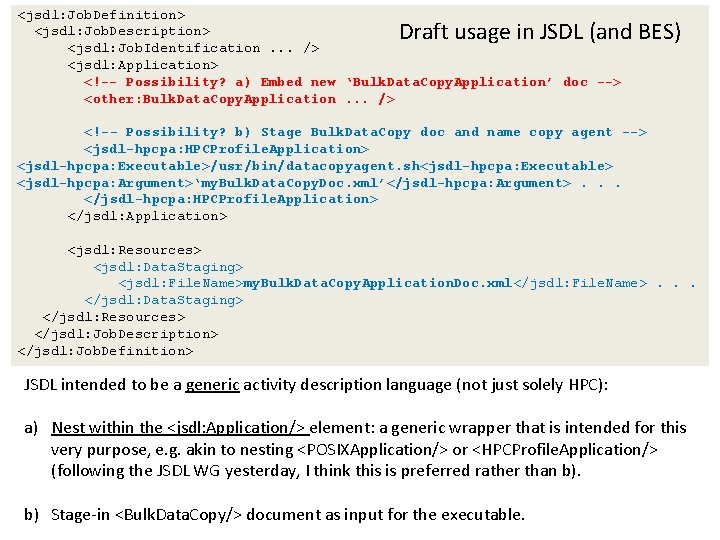

Draft usage in JSDL (and BES)

    <jsdl:JobDefinition>
      <jsdl:JobDescription>
        <jsdl:JobIdentification ... />
        <jsdl:Application>
          <!-- Possibility? a) Embed new ‘BulkDataCopyApplication’ doc -->
          <other:BulkDataCopyApplication ... />

          <!-- Possibility? b) Stage BulkDataCopy doc and name copy agent -->
          <jsdl-hpcpa:HPCProfileApplication>
            <jsdl-hpcpa:Executable>/usr/bin/datacopyagent.sh</jsdl-hpcpa:Executable>
            <jsdl-hpcpa:Argument>‘myBulkDataCopyDoc.xml’</jsdl-hpcpa:Argument> ...
          </jsdl-hpcpa:HPCProfileApplication>
        </jsdl:Application>
        <jsdl:Resources>
          <jsdl:DataStaging>
            <jsdl:FileName>myBulkDataCopyApplicationDoc.xml</jsdl:FileName> ...
          </jsdl:DataStaging>
        </jsdl:Resources>
      </jsdl:JobDescription>
    </jsdl:JobDefinition>

JSDL is intended to be a generic activity description language (not solely for HPC):

a) Nest within the <jsdl:Application/> element: a generic wrapper that is intended for this very purpose, e.g. akin to nesting <POSIXApplication/> or <HPCProfileApplication/> (following the JSDL WG yesterday, I think this is preferred rather than b).
b) Stage-in the <BulkDataCopy/> document as input for the executable.

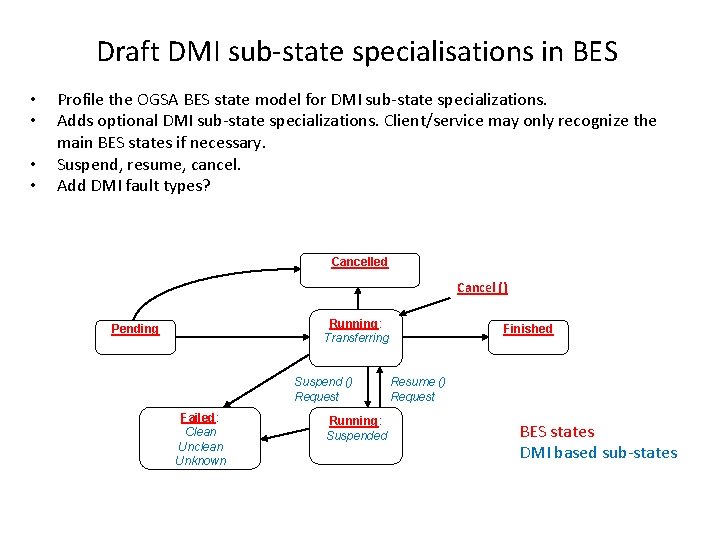

Draft DMI sub-state specialisations in BES

• Profile the OGSA BES state model for DMI sub-state specializations.
• Adds optional DMI sub-state specializations. Client/service may only recognize the main BES states if necessary.
• Suspend, resume, cancel.
• Add DMI fault types?

[State diagram. BES states: Pending, Running, Finished, Failed, Cancelled. DMI-based sub-states: Running:Transferring, Running:Suspended, Failed:Clean, Failed:Unclean, Failed:Unknown. Requests: Suspend(), Resume(), Cancel().]
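
The point that a client or service may recognize only the main BES states can be sketched as a simple mapping from a specialized state to its parent state. This assumes the `Main:Sub` naming shown on the slide; the function name `main_bes_state` is hypothetical.

```python
# Main BES states as listed on the slide.
BES_STATES = {"Pending", "Running", "Finished", "Failed", "Cancelled"}


def main_bes_state(state):
    """Reduce a state such as 'Running:Transferring' to its main BES state."""
    main, _, _sub = state.partition(":")
    if main not in BES_STATES:
        raise ValueError(f"not a BES state or sub-state: {state!r}")
    return main


# A client that ignores DMI sub-states still sees a valid BES state.
seen = [main_bes_state(s) for s in ("Running:Transferring", "Failed:Unclean", "Finished")]
```

Because the sub-state is carried as a refinement of the main state, dropping it loses detail but never produces a state that a plain BES client would not understand.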

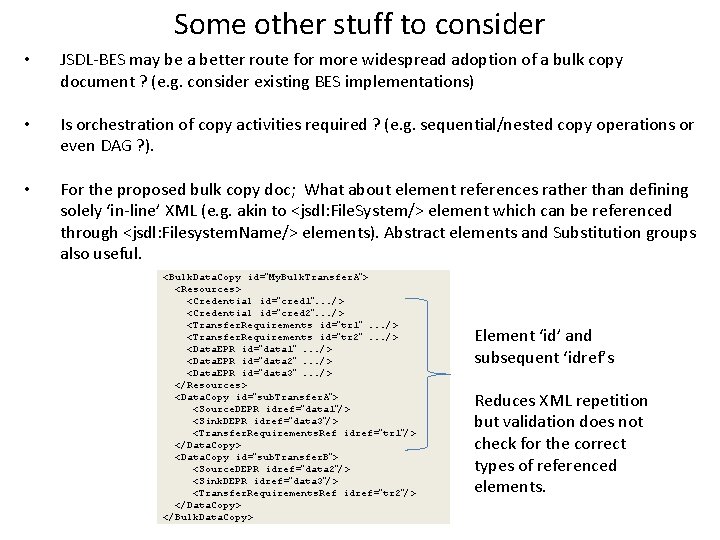

Some other stuff to consider

• JSDL-BES may be a better route for more widespread adoption of a bulk copy document? (e.g. consider existing BES implementations)
• Is orchestration of copy activities required? (e.g. sequential/nested copy operations or even a DAG?)
• For the proposed bulk copy doc: what about element references rather than defining solely ‘in-line’ XML (e.g. akin to the <jsdl:FileSystem/> element, which can be referenced through <jsdl:FilesystemName/> elements)? Abstract elements and substitution groups are also useful.

    <BulkDataCopy id="MyBulkTransferA">
      <Resources>
        <Credential id="cred1" ... />
        <Credential id="cred2" ... />
        <TransferRequirements id="tr1" ... />
        <TransferRequirements id="tr2" ... />
        <DataEPR id="data1" ... />
        <DataEPR id="data2" ... />
        <DataEPR id="data3" ... />
      </Resources>
      <DataCopy id="subTransferA">
        <SourceDEPR idref="data1"/>
        <SinkDEPR idref="data3"/>
        <TransferRequirementsRef idref="tr1"/>
      </DataCopy>
      <DataCopy id="subTransferB">
        <SourceDEPR idref="data2"/>
        <SinkDEPR idref="data3"/>
        <TransferRequirementsRef idref="tr2"/>
      </DataCopy>
    </BulkDataCopy>

Element ‘id’ and subsequent ‘idref’s: reduces XML repetition, but validation does not check for the correct types of referenced elements.
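
Since schema validation of ‘id’/‘idref’ does not check what kind of element a reference points at, an implementation would need its own check. The sketch below shows one way to do that in Python; the `EXPECTED_TARGET` table and the function `check_idrefs` are assumptions for illustration, and the sample documents are cut-down versions of the example above.

```python
import xml.etree.ElementTree as ET

# Assumed rule set: which element type each referencing element must point at.
EXPECTED_TARGET = {
    "SourceDEPR": "DataEPR",
    "SinkDEPR": "DataEPR",
    "TransferRequirementsRef": "TransferRequirements",
}


def check_idrefs(xml_text):
    """Return a list of problems found when resolving idref attributes."""
    root = ET.fromstring(xml_text)
    # Map every declared id to the tag of the element that carries it.
    by_id = {el.get("id"): el.tag for el in root.iter() if el.get("id")}
    problems = []
    for el in root.iter():
        ref = el.get("idref")
        if ref is None:
            continue
        expected = EXPECTED_TARGET.get(el.tag)
        actual = by_id.get(ref)
        if actual is None:
            problems.append(f"{el.tag} idref='{ref}' points at no element")
        elif expected is not None and actual != expected:
            problems.append(f"{el.tag} idref='{ref}' points at <{actual}>, expected <{expected}>")
    return problems


GOOD = (
    '<BulkDataCopy id="MyBulkTransferA"><Resources>'
    '<Credential id="cred1"/><TransferRequirements id="tr1"/>'
    '<DataEPR id="data1"/><DataEPR id="data3"/></Resources>'
    '<DataCopy id="subTransferA"><SourceDEPR idref="data1"/>'
    '<SinkDEPR idref="data3"/><TransferRequirementsRef idref="tr1"/>'
    '</DataCopy></BulkDataCopy>'
)
# Same document, but the sink wrongly references a credential.
BAD = GOOD.replace('<SinkDEPR idref="data3"/>', '<SinkDEPR idref="cred1"/>')

good_problems = check_idrefs(GOOD)
bad_problems = check_idrefs(BAD)
```

The `BAD` document would pass ID/IDREF validation, because `cred1` is a declared id, yet `check_idrefs` reports it because a sink must reference a <DataEPR/>.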

Supplementary slides OTHER STUFF / EXTRA SLIDES….

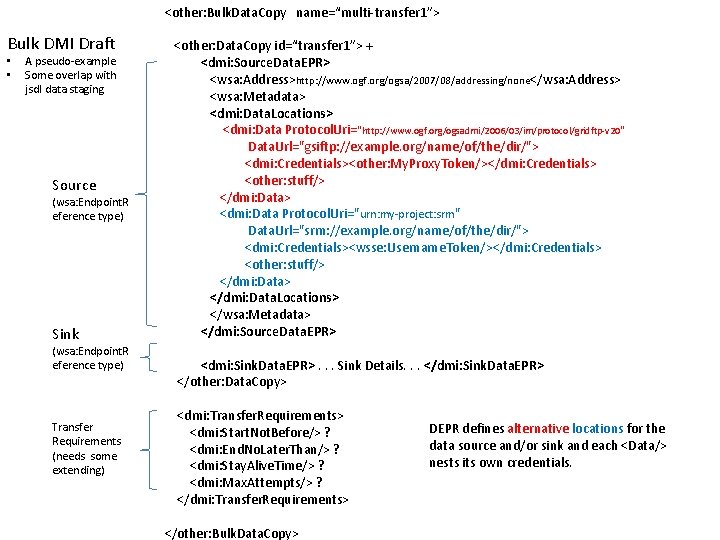

Bulk DMI Draft

• A pseudo-example
• Some overlap with JSDL data staging

    <other:BulkDataCopy name="multi-transfer1">
      <other:DataCopy id="transfer1"> +
        <dmi:SourceDataEPR>
          <wsa:Address>http://www.ogf.org/ogsa/2007/08/addressing/none</wsa:Address>
          <wsa:Metadata>
            <dmi:DataLocations>
              <dmi:Data ProtocolUri="http://www.ogf.org/ogsadmi/2006/03/im/protocol/gridftp-v20"
                        DataUrl="gsiftp://example.org/name/of/the/dir/">
                <dmi:Credentials><other:MyProxyToken/></dmi:Credentials>
                <other:stuff/>
              </dmi:Data>
              <dmi:Data ProtocolUri="urn:my-project:srm"
                        DataUrl="srm://example.org/name/of/the/dir/">
                <dmi:Credentials><wsse:UsernameToken/></dmi:Credentials>
                <other:stuff/>
              </dmi:Data>
            </dmi:DataLocations>
          </wsa:Metadata>
        </dmi:SourceDataEPR>
        <dmi:SinkDataEPR> ... Sink Details ... </dmi:SinkDataEPR>
      </other:DataCopy>
      <dmi:TransferRequirements>
        <dmi:StartNotBefore/> ?
        <dmi:EndNoLaterThan/> ?
        <dmi:StayAliveTime/> ?
        <dmi:MaxAttempts/> ?
      </dmi:TransferRequirements>
    </other:BulkDataCopy>

• <dmi:SourceDataEPR>: Source (wsa:EndpointReference type)
• <dmi:SinkDataEPR>: Sink (wsa:EndpointReference type)
• <dmi:TransferRequirements>: Transfer Requirements (needs some extending)

DEPR defines alternative locations for the data source and/or sink and each <Data/> nests its own credentials.

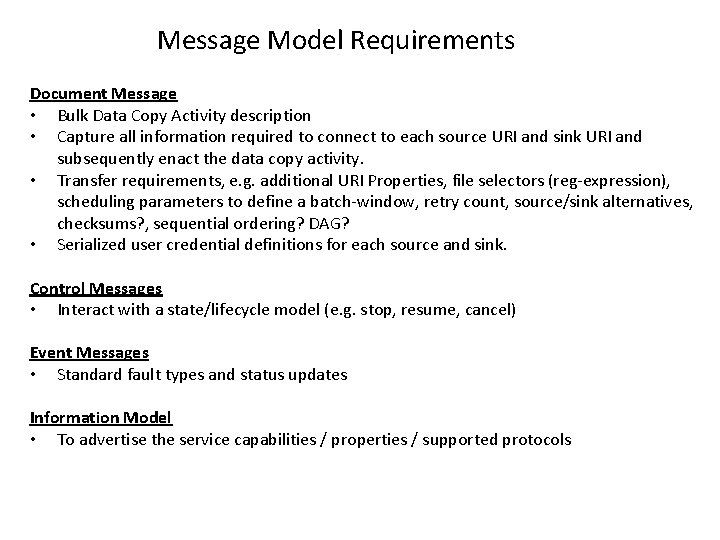

Message Model Requirements

Document Message
• Bulk Data Copy Activity description
• Capture all information required to connect to each source URI and sink URI and subsequently enact the data copy activity.
• Transfer requirements, e.g. additional URI properties, file selectors (reg-expression), scheduling parameters to define a batch-window, retry count, source/sink alternatives, checksums?, sequential ordering?, DAG?
• Serialized user credential definitions for each source and sink.

Control Messages
• Interact with a state/lifecycle model (e.g. stop, resume, cancel)

Event Messages
• Standard fault types and status updates

Information Model
• To advertise the service capabilities / properties / supported protocols

In-Scope

1. Job Submission Description Language (JSDL)
   • An activity description language for generic compute applications.
2. OGSA Data Movement Interface (DMI)
   • Low-level schema for defining the transfer of bytes between a single source and sink.
3. JSDL HPC File Staging Profile (HPCFS)
   • Designed to address file staging, not bulk copying.
4. OGSA Basic Execution Service (BES)
   • Defines a basic framework for defining and interacting with generic compute activities: JSDL + extensible state and information models.
5. Others that I am sure that I have missed! (… ByteIO)

• None of these fully captures our requirements (not a criticism; they are designed to address their own use cases, which only partially overlap with the requirements for our bulk data copy activity).

Other
• Condor Stork - based on Condor Class-Ads
• Not sure if Globus has/intends a similar definition in its new developments (e.g. SaaS), anyone?
  – I believe Ravi was originally supportive of a DMI for data transfers between multiple sources/sinks

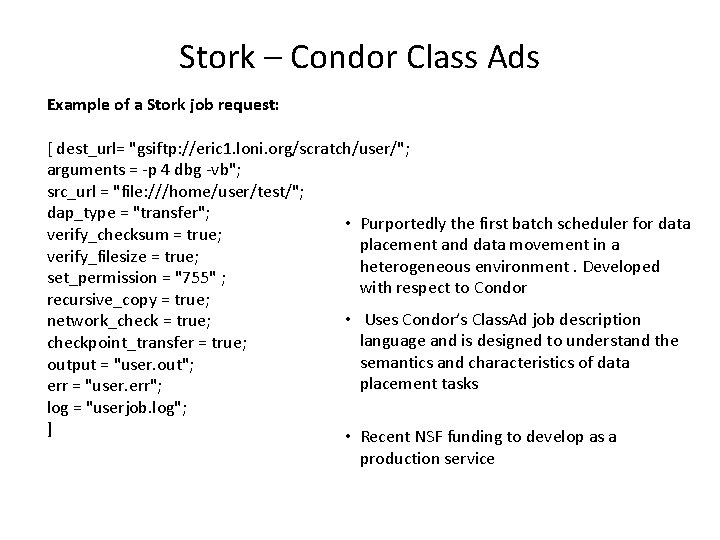

Stork – Condor Class Ads

• Purportedly the first batch scheduler for data placement and data movement in a heterogeneous environment. Developed with respect to Condor.
• Uses Condor’s ClassAd job description language and is designed to understand the semantics and characteristics of data placement tasks.
• Recent NSF funding to develop as a production service.

Example of a Stork job request:

    [
      dest_url = "gsiftp://eric1.loni.org/scratch/user/";
      arguments = "-p 4 dbg -vb";
      src_url = "file:///home/user/test/";
      dap_type = "transfer";
      verify_checksum = true;
      verify_filesize = true;
      set_permission = "755";
      recursive_copy = true;
      network_check = true;
      checkpoint_transfer = true;
      output = "user.out";
      err = "user.err";
      log = "userjob.log";
    ]

- Slides: 18