Building Evaluation Capacity: Presentation Slides for Participatory Evaluation Essentials: An Updated Guide for Non-Profit Organizations and Their Evaluation Partners (2010)
Anita M. Baker, Ed.D.
Bruner Foundation, Rochester, New York

How to Use the Bruner Foundation Guide & PowerPoint Slides
Evaluation Essentials: A Guide for Nonprofit Organizations and Their Evaluation Partners (the Guide) and these slides are organized to help an evaluation trainee walk through the process of designing an evaluation and collecting and analyzing evaluation data. The Guide also provides information about writing an evaluation report. The slides allow for easy presentation of the content, and each section of the Guide includes activities that provide practice opportunities. The Guide has a detailed table of contents for each section and includes an evaluation bibliography. Also included are comprehensive appendices, which can be pulled out for easy reference. The appendices offer brief presentations of special topics not covered in the main sections, sample logic models, completed interviews that can be used for training activities, and a sample observation protocol.
For the Bruner Foundation-sponsored REP project, we worked through all the information up front in a series of comprehensive training sessions. Each session included a short presentation of information, hands-on activities about the session topic, opportunities for discussion and questions, and homework for trainees to try on their own. By the end of the training sessions, trainees had developed their own evaluation designs, which they later implemented as part of REP. We then provided an additional 10 months of evaluation coaching and review while trainees conducted the evaluations they had designed, and we worked through several of the additional training topics presented in the appendix. At the end of their REP experience, trainees from non-profit organizations summarized and presented the findings from the evaluations they had designed and conducted.
The REP non-profit partners agreed that the up-front training helped prepare them to do solid evaluation work and provided opportunities for them to increase participation in evaluation within their organizations. The slides were first used in 2006-07 in a similar training project sponsored by the Hartford Foundation for Public Giving. We recommend the comprehensive approach for those who are interested in building evaluation capacity. Whether you are a trainee or a trainer, and whether you use the Guide to fully prepare for and conduct an evaluation or just to look up specific information about evaluation-related topics, we hope that the materials provided here will support your efforts.
Bruner Foundation, Rochester, New York

These materials are for the benefit of any 501(c)(3) organization. They MAY be used in whole or in part provided that credit is given to the Bruner Foundation. They may NOT be sold or redistributed in whole or in part for a profit. Copyright © 2010 by the Bruner Foundation.
* Please see the previous slide for further information about how to use the available materials.

Important Terminology
Bruner Foundation, Rochester, New York
Anita M. Baker, Evaluation Services

Building Evaluation Capacity Session 1 Evaluation Basics Anita M. Baker Evaluation Services Bruner Foundation Rochester, New York

Working Definition of Program Evaluation
The practice of evaluation involves thoughtful, systematic collection and analysis of information about the activities, characteristics, and outcomes of programs, for use by specific people, to reduce uncertainties, improve effectiveness, and make decisions.

Working Definition of Participatory Evaluation
Participatory evaluation involves trained evaluation personnel and practice-based decision-makers working in partnership. P.E. brings together seasoned evaluators with seasoned program staff to:
• Address training needs
• Design, conduct and use results of program evaluation

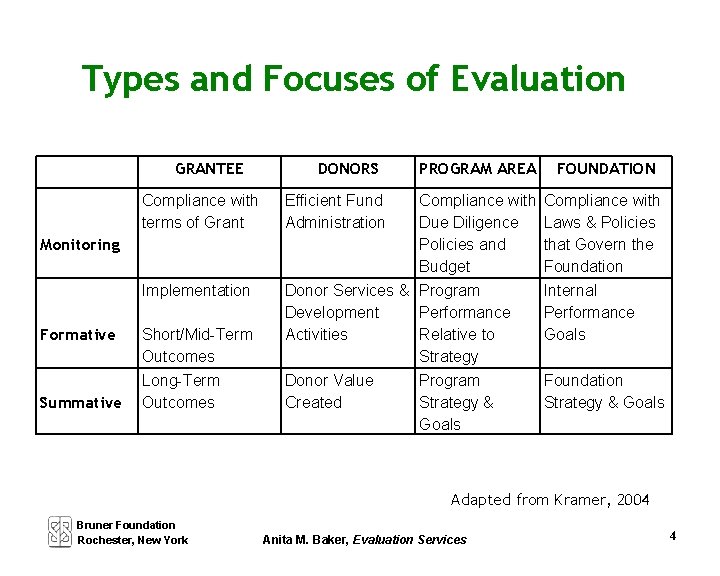

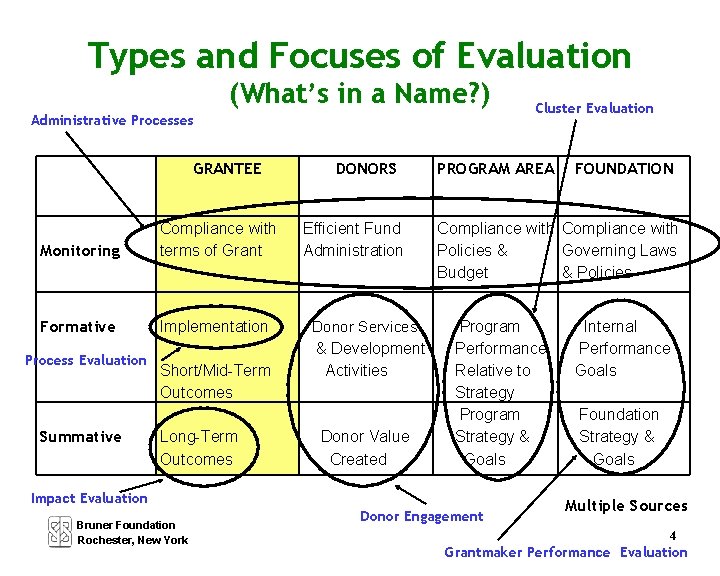

Types of Evaluation
1. Monitoring: Tracking progress through regular reporting. Usually focuses on activities and/or expenditures.
2. Formative Evaluation: An evaluation that is carried out while a project is underway. Often focuses on process and implementation and/or on more immediate or intermediate outcomes.
3. Summative Evaluation: An evaluation that assesses overall outcomes or impact of a project after it ends.

Types and Focuses of Evaluation
GRANTEE. Monitoring: Compliance with terms of Grant. Formative: Implementation. Summative: Short/Mid-Term Outcomes; Long-Term Outcomes.
DONORS. Monitoring: Efficient Fund Administration. Formative: Donor Services & Development Activities. Summative: Donor Value Created.
PROGRAM AREA. Monitoring: Compliance with Due Diligence Policies and Budget. Formative: Program Performance Relative to Strategy. Summative: Program Strategy & Goals.
FOUNDATION. Monitoring: Compliance with Laws & Policies that Govern the Foundation. Formative: Internal Performance Goals. Summative: Foundation Strategy & Goals.
Adapted from Kramer, 2004

Types and Focuses of Evaluation (What’s in a Name?)
GRANTEE. Monitoring: Compliance with terms of Grant. Formative: Implementation. Summative: Short/Mid-Term Outcomes; Long-Term Outcomes.
DONORS: Efficient Fund Administration; Donor Services & Development Activities; Donor Value Created.
PROGRAM AREA: Compliance with Budget & Policies; Program Performance Relative to Strategy; Program Strategy & Goals.
FOUNDATION: Compliance with Policies & Governing Laws; Internal Performance Goals; Foundation Strategy & Goals.
Other labels shown on the slide: Administrative Processes, Process Evaluation, Impact Evaluation, Cluster Evaluation, Donor Engagement, Grantmaker Performance Evaluation.
Multiple Sources

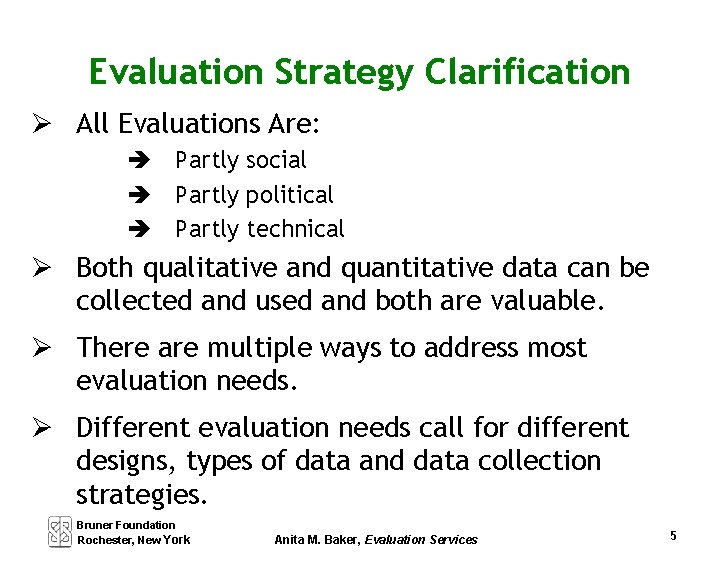

Evaluation Strategy Clarification
All evaluations are:
• Partly social
• Partly political
• Partly technical
Both qualitative and quantitative data can be collected and used, and both are valuable. There are multiple ways to address most evaluation needs. Different evaluation needs call for different designs, types of data and data collection strategies.

Purposes of Evaluation
Evaluations are conducted to:
• Render judgment
• Facilitate improvements
• Generate knowledge
Evaluation purpose must be specified at the earliest stages of evaluation planning and with input from multiple stakeholders.

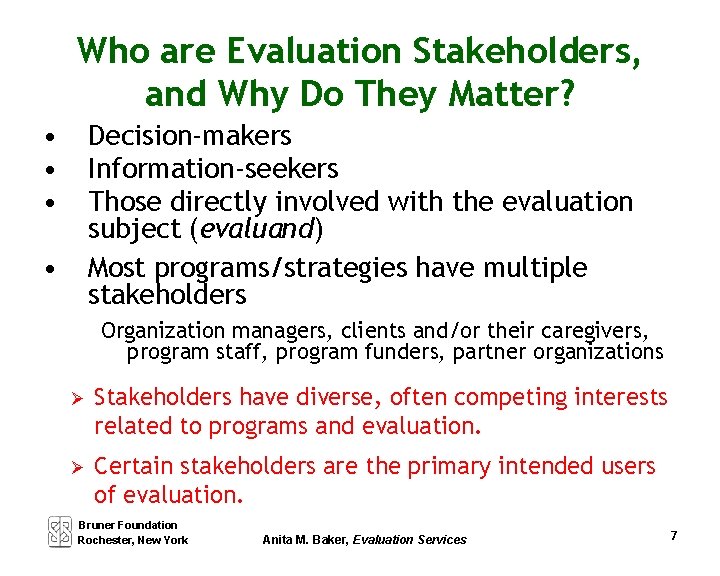

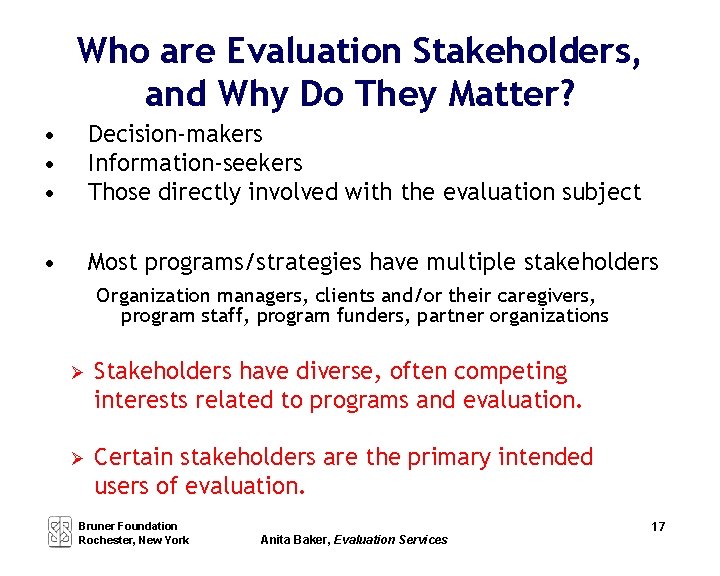

Who are Evaluation Stakeholders, and Why Do They Matter?
• Decision-makers
• Information-seekers
• Those directly involved with the evaluation subject (evaluand)
Most programs/strategies have multiple stakeholders:
• Organization managers, clients and/or their caregivers, program staff, program funders, partner organizations
Stakeholders have diverse, often competing interests related to programs and evaluation. Certain stakeholders are the primary intended users of evaluation.

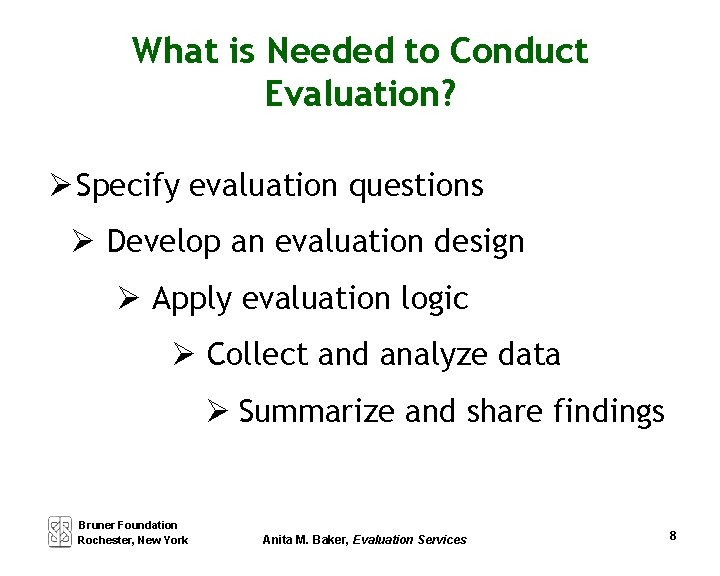

What is Needed to Conduct Evaluation?
• Specify evaluation questions
• Develop an evaluation design
• Apply evaluation logic
• Collect and analyze data
• Summarize and share findings

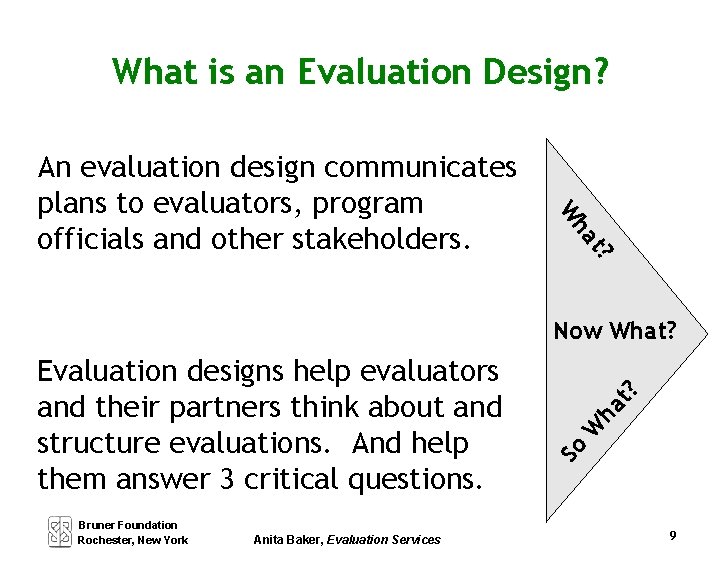

What is an Evaluation Design?
An evaluation design communicates plans to evaluators, program officials and other stakeholders.
Evaluation designs help evaluators and their partners think about and structure evaluations, and help them answer 3 critical questions: What? So What? Now What?

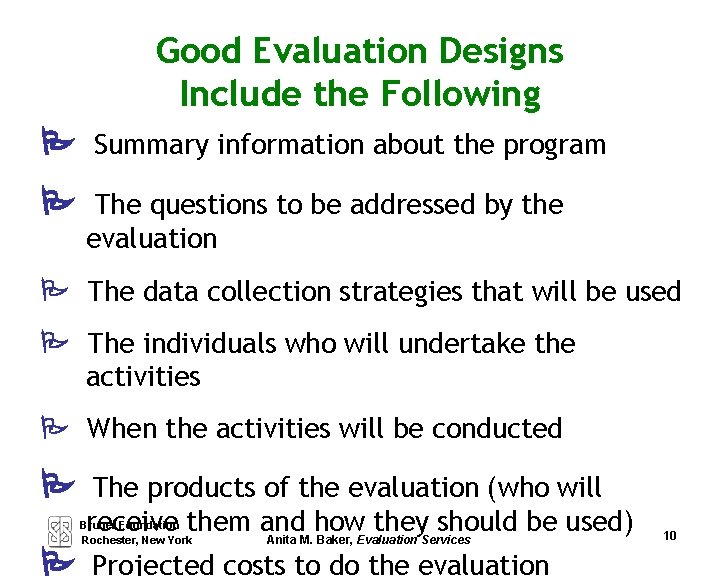

Good Evaluation Designs Include the Following
• Summary information about the program
• The questions to be addressed by the evaluation
• The data collection strategies that will be used
• The individuals who will undertake the activities
• When the activities will be conducted
• The products of the evaluation (who will receive them and how they should be used)
• Projected costs to do the evaluation

Evaluation Questions Get You Started
• Focus and drive the evaluation.
• Should be carefully specified and agreed upon in advance of other evaluation work.
• Generally represent a critical subset of information that is desired.

Evaluation Questions: Criteria
• It is possible to obtain data to address the questions.
• There is more than one possible “answer” to the question.
• The information to address the questions is wanted and needed.
• It is known how resulting information will be used internally (and externally).
• The questions are aimed at changeable aspects of programmatic activity.

Evaluation Questions: Advice
Limit the number of questions. Between two and five is optimal. Keep it manageable.
PAGE 12
• What are staff and participant perceptions of the program?
• How and to what extent are participants progressing toward desired outcomes?

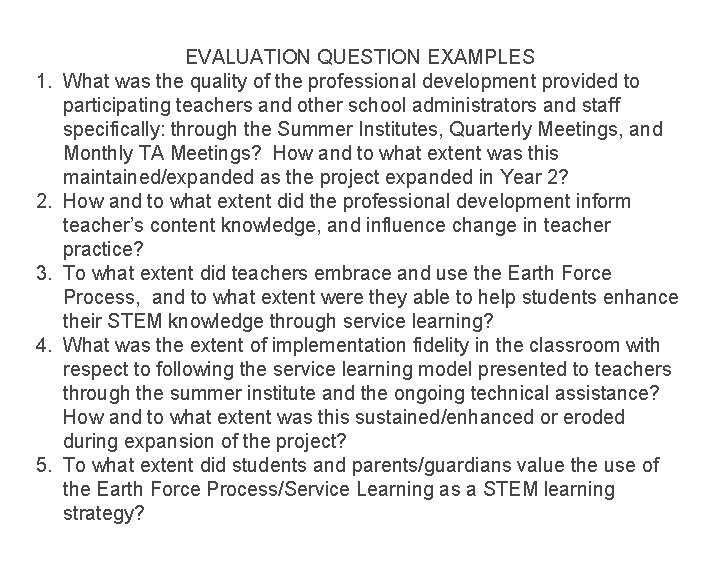

EVALUATION QUESTION EXAMPLES
1. What was the quality of the professional development provided to participating teachers and other school administrators and staff, specifically through the Summer Institutes, Quarterly Meetings, and Monthly TA Meetings? How and to what extent was this maintained/expanded as the project expanded in Year 2?
2. How and to what extent did the professional development inform teachers’ content knowledge and influence change in teacher practice?
3. To what extent did teachers embrace and use the Earth Force Process, and to what extent were they able to help students enhance their STEM knowledge through service learning?
4. What was the extent of implementation fidelity in the classroom with respect to following the service learning model presented to teachers through the summer institute and the ongoing technical assistance? How and to what extent was this sustained/enhanced or eroded during expansion of the project?
5. To what extent did students and parents/guardians value the use of the Earth Force Process/Service Learning as a STEM learning strategy?

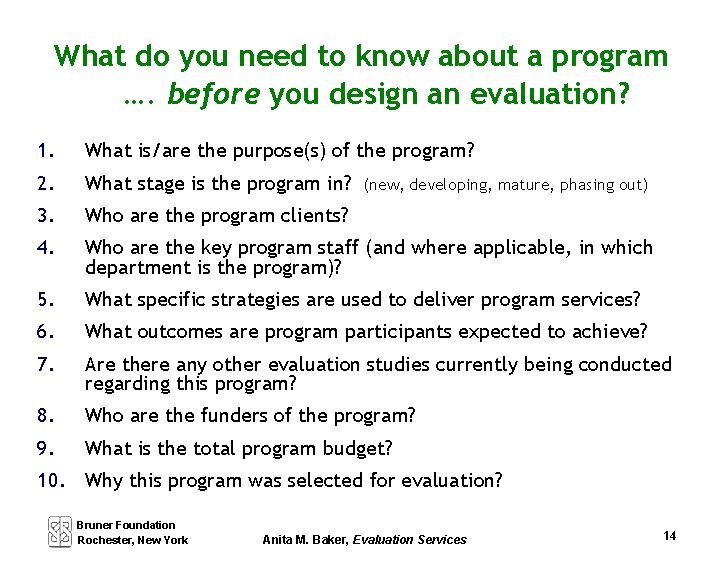

What do you need to know about a program before you design an evaluation?
1. What is/are the purpose(s) of the program?
2. What stage is the program in? (new, developing, mature, phasing out)
3. Who are the program clients?
4. Who are the key program staff (and where applicable, in which department is the program)?
5. What specific strategies are used to deliver program services?
6. What outcomes are program participants expected to achieve?
7. Are there any other evaluation studies currently being conducted regarding this program?
8. Who are the funders of the program?
9. What is the total program budget?
10. Why was this program selected for evaluation?

What is Evaluative Thinking?
Evaluative Thinking is a type of reflective practice that incorporates use of systematically collected data to inform organizational decisions and other actions.

What Are Key Components of Evaluative Thinking?
1. Asking questions of substance
2. Determining data needed to address questions
3. Gathering appropriate data in systematic ways
4. Analyzing data and sharing results
5. Developing strategies to act on findings

Building Evaluation Capacity Session 2 Evaluation Logic Anita M. Baker Evaluation Services Bruner Foundation Rochester, New York

How is Evaluative Thinking Related to Organizational Effectiveness?
Organizational effectiveness = the ability of an organization to fulfill its mission through sound management, strong governance, and persistent rededication to achieving results (Grantmakers for Effective Organizations).

What Are Key Components of Evaluative Thinking?
1. Asking questions of substance
2. Determining data needed to address questions
3. Gathering appropriate data in systematic ways
4. Analyzing data and sharing results
5. Developing strategies to act on findings

How is Evaluative Thinking Related to Organizational Effectiveness?
To determine effectiveness, an organization MUST have evaluative capacity:
• evaluation capacity/skills
• the ability to transfer those skills to organizational competencies (i.e., evaluative thinking in multiple areas)
Organizational capacity areas where evaluative thinking is less evident are also the capacity areas that usually need to be strengthened.
Organizational capacity areas: Mission, Strategic Planning, Leadership, Governance, Finance, Fund Raising/Fund Development, Business Venture Development, Technology Acquisition & Training, Client Interaction, Marketing & Communications, Program Development, Staff Development, Human Resources, Alliances & Collaboration

What is Needed to Conduct Evaluation?
• Specify evaluation questions
• Develop an evaluation design
• Apply evaluation logic
• Collect and analyze data
• Summarize and share findings

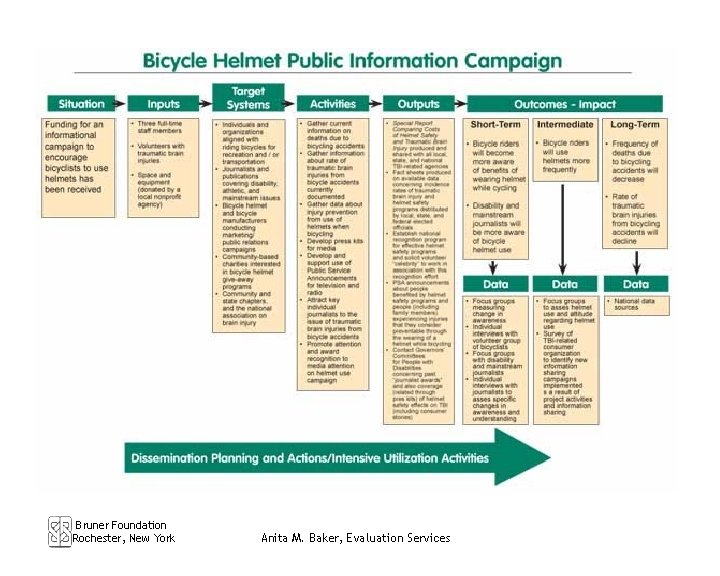

Logic Model Overview

What is a Logic Model?
A Logic Model is a simple description of how a program is understood to work to achieve outcomes for participants. It is a process that helps you to identify your vision, the rationale behind your program, and how your program will work.

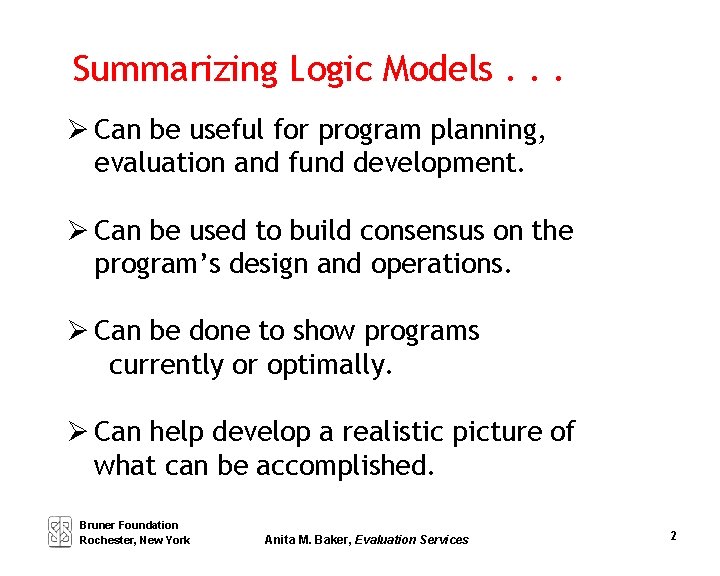

Summarizing Logic Models...
• Can be useful for program planning, evaluation and fund development.
• Can be used to build consensus on the program’s design and operations.
• Can be done to show programs as they are currently or as they would be optimally.
• Can help develop a realistic picture of what can be accomplished.

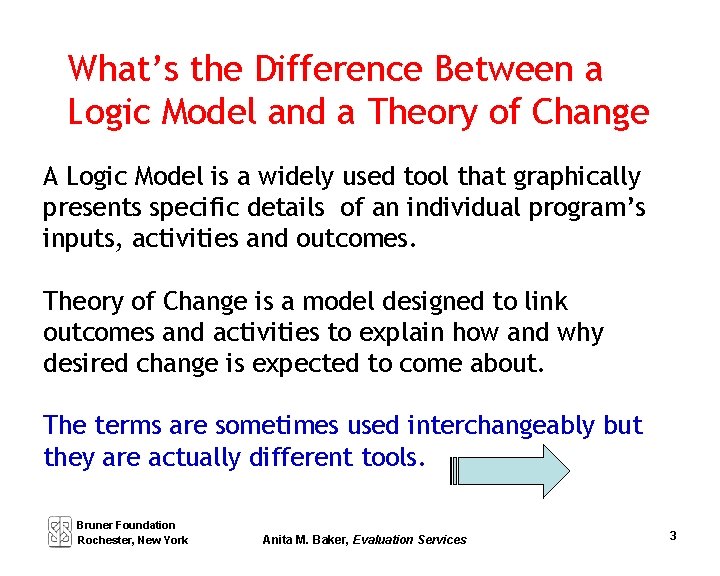

What’s the Difference Between a Logic Model and a Theory of Change?
A Logic Model is a widely used tool that graphically presents specific details of an individual program’s inputs, activities and outcomes.
A Theory of Change is a model designed to link outcomes and activities to explain how and why desired change is expected to come about.
The terms are sometimes used interchangeably, but they are actually different tools.

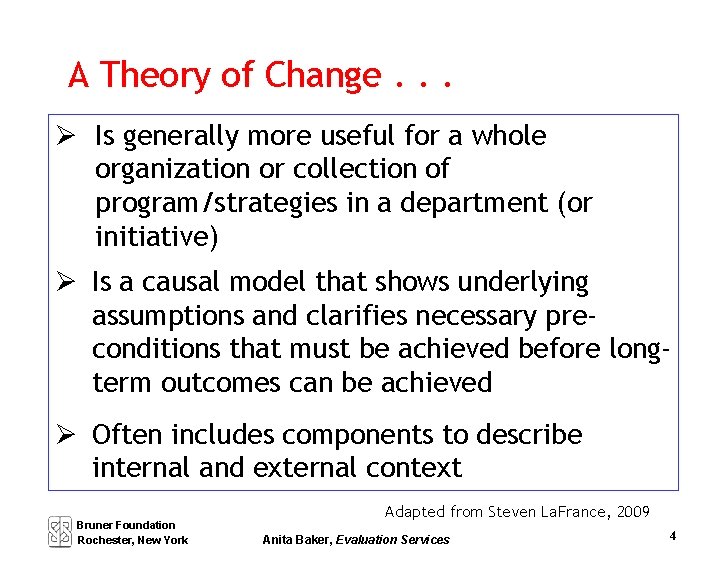

A Theory of Change...
• Is generally more useful for a whole organization or a collection of programs/strategies in a department (or initiative)
• Is a causal model that shows underlying assumptions and clarifies necessary preconditions that must be achieved before long-term outcomes can be achieved
• Often includes components to describe internal and external context
Adapted from Steven LaFrance, 2009

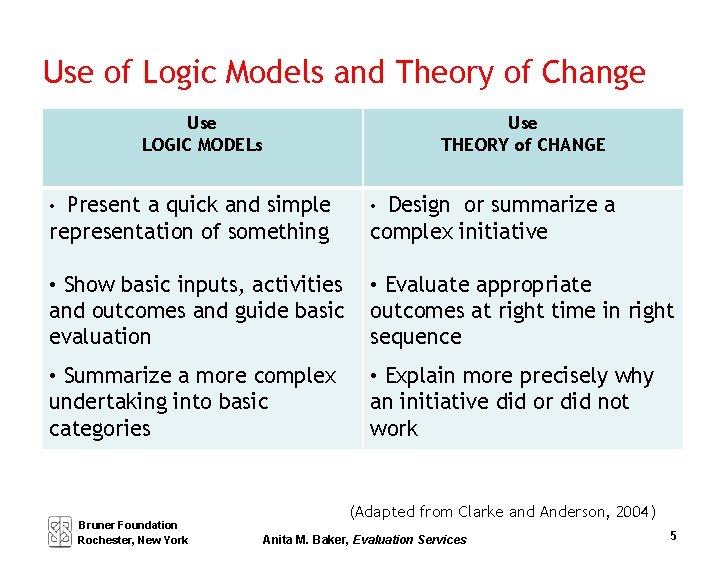

Use of Logic Models and Theory of Change
Use LOGIC MODELS to:
• Present a quick and simple representation of something
• Show basic inputs, activities and outcomes and guide basic evaluation
• Summarize a more complex undertaking into basic categories
Use THEORY of CHANGE to:
• Design or summarize a complex initiative
• Evaluate appropriate outcomes at right time in right sequence
• Explain more precisely why an initiative did or did not work
(Adapted from Clarke and Anderson, 2004)

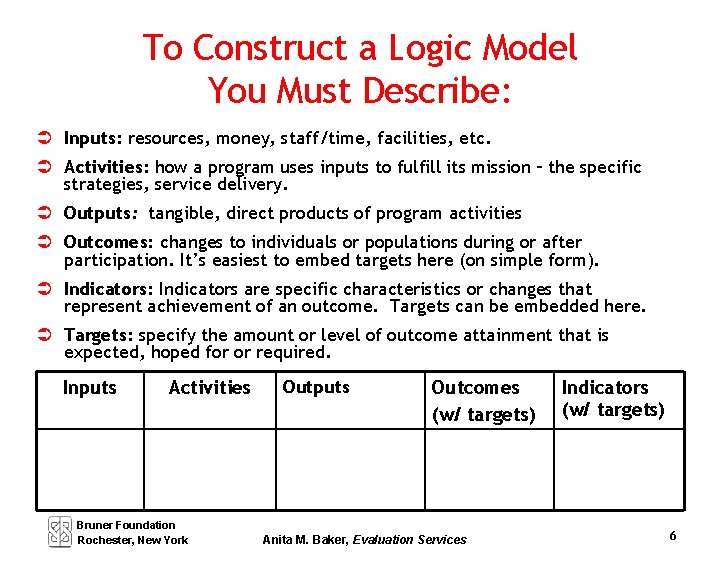

To Construct a Logic Model You Must Describe:
Inputs: resources, money, staff/time, facilities, etc.
Activities: how a program uses inputs to fulfill its mission (the specific strategies, service delivery).
Outputs: tangible, direct products of program activities.
Outcomes: changes to individuals or populations during or after participation. It’s easiest to embed targets here (on simple form).
Indicators: specific characteristics or changes that represent achievement of an outcome. Targets can be embedded here.
Targets: specify the amount or level of outcome attainment that is expected, hoped for or required.
Simple form columns: Inputs, Activities, Outputs, Outcomes (w/ targets), Indicators (w/ targets)
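One way to keep these components together while drafting is to record them in a small data structure. The Python sketch below is only an illustration, not part of the Guide: the class names, the `missing_parts` helper, and the input and output entries are hypothetical. The activity and outcome wording is borrowed from the job-training example that appears later in these slides.

```python
from dataclasses import dataclass, field


@dataclass
class Outcome:
    description: str
    indicators: list = field(default_factory=list)  # indicators may carry targets
    target: str = ""                                 # or the target can be embedded here


@dataclass
class LogicModel:
    inputs: list
    activities: list
    outputs: list
    outcomes: list  # list of Outcome objects

    def missing_parts(self):
        """Return the components that still need to be described."""
        gaps = [name for name in ("inputs", "activities", "outputs", "outcomes")
                if not getattr(self, name)]
        gaps += ["indicators for: " + o.description
                 for o in self.outcomes if not o.indicators]
        return gaps


model = LogicModel(
    inputs=["staff time", "classroom space"],             # hypothetical entries
    activities=["Provide 6 weekly soft skills classes"],
    outputs=["classes delivered"],                         # hypothetical entry
    outcomes=[Outcome("Participants learn specific marketable skills")],
)
print(model.missing_parts())
```

A check like this only confirms that each box has been filled in. Whether the model is logical, clear, and comprehensive still requires review by stakeholders.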

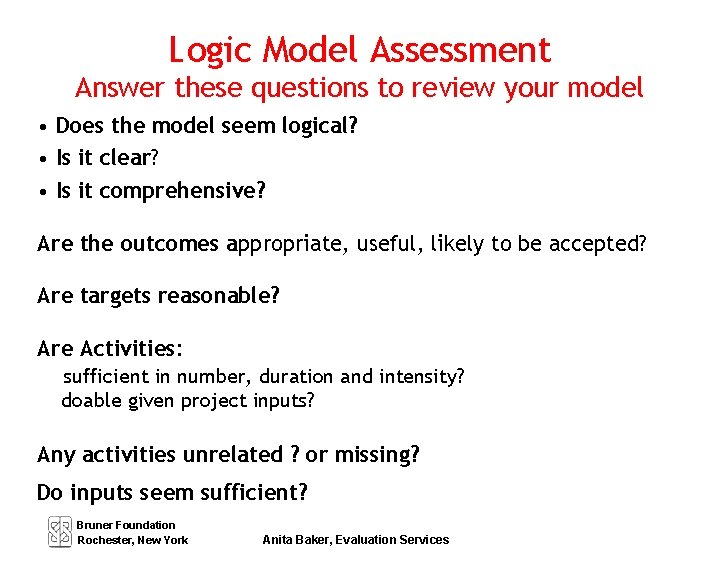

Logic Model Assessment
Answer these questions to review your model:
• Does the model seem logical?
• Is it clear?
• Is it comprehensive?
• Are the outcomes appropriate, useful, and likely to be accepted?
• Are targets reasonable?
• Are the activities sufficient in number, duration and intensity? Are they doable given project inputs? Are any activities unrelated or missing?
• Do inputs seem sufficient?

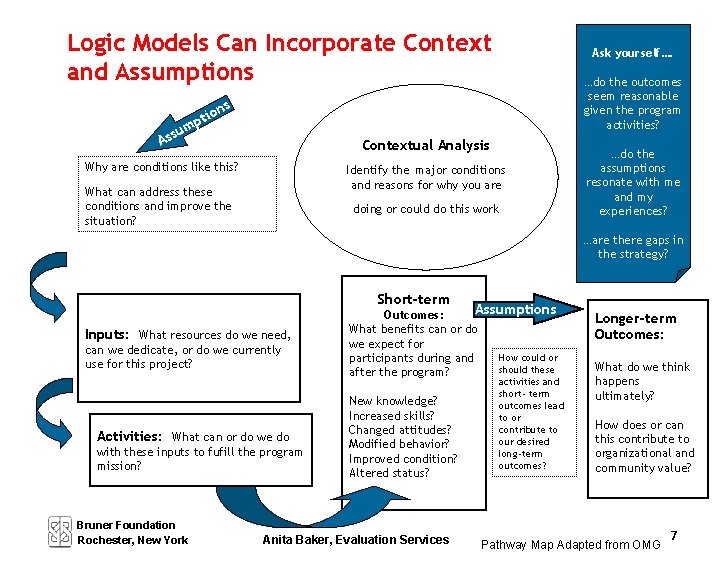

Logic Models Can Incorporate Context and Assumptions
Ask yourself…
…do the outcomes seem reasonable given the program activities?
…do the assumptions resonate with me and my experiences?
…are there gaps in the strategy?
Assumptions: Why are conditions like this? What can address these conditions and improve the situation?
Contextual Analysis: Identify the major conditions and reasons for why you are doing or could do this work.
Inputs: What resources do we need, can we dedicate, or do we currently use for this project?
Activities: What can or do we do with these inputs to fulfill the program mission?
Short-term Outcomes: What benefits can or do we expect for participants during and after the program? New knowledge? Increased skills? Changed attitudes? Modified behavior? Improved condition? Altered status?
Assumptions: How could or should these activities and short-term outcomes lead to or contribute to our desired long-term outcomes?
Longer-term Outcomes: What do we think happens ultimately? How does or can this contribute to organizational and community value?
Pathway Map Adapted from OMG

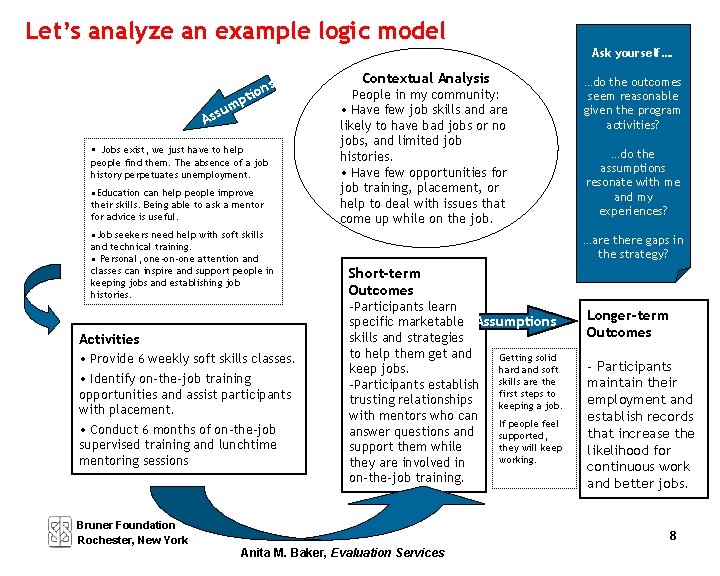

Let’s analyze an example logic model
Ask yourself…
…do the outcomes seem reasonable given the program activities?
…do the assumptions resonate with me and my experiences?
…are there gaps in the strategy?
Assumptions
• Jobs exist, we just have to help people find them. The absence of a job history perpetuates unemployment.
• Education can help people improve their skills. Being able to ask a mentor for advice is useful.
• Job seekers need help with soft skills and technical training.
• Personal, one-on-one attention and classes can inspire and support people in keeping jobs and establishing job histories.
Contextual Analysis
People in my community:
• Have few job skills and are likely to have bad jobs or no jobs, and limited job histories.
• Have few opportunities for job training, placement, or help to deal with issues that come up while on the job.
Activities
• Provide 6 weekly soft skills classes.
• Identify on-the-job training opportunities and assist participants with placement.
• Conduct 6 months of on-the-job supervised training and lunchtime mentoring sessions.
Short-term Outcomes
• Participants learn specific marketable skills and strategies to help them get and keep jobs.
• Participants establish trusting relationships with mentors who can answer questions and support them while they are involved in on-the-job training.
Assumptions
• Getting solid hard and soft skills are the first steps to keeping a job.
• If people feel supported, they will keep working.
Longer-term Outcomes
• Participants maintain their employment and establish records that increase the likelihood for continuous work and better jobs.

Important Things to Remember
• There are several different approaches and formats for logic models.
• Not all programs lend themselves easily to summarization in a logic model format.
• The relationships between inputs, activities and outcomes are not one to one.

Important Things to Remember
• Logic models are best used in conjunction with other descriptive information or as part of a conversation.
• When used for program planning, it is advisable to start with outcomes and then determine what activities will be appropriate and what inputs are needed.
• It is advisable to have one or two key project officials summarize the logic model.
• It is advisable to have multiple stakeholders review the logic model (LM) and agree upon what is included and how.

Outcomes, Indicators and Targets

Logical Considerations - Planning
1. Think about the results you want.
2. Decide what strategies will help you achieve those results.
3. Think about what inputs you need to conduct the desired strategies.
4. Specify outcomes, identify indicators and targets.** DECIDE IN ADVANCE HOW GOOD IS GOOD ENOUGH.
5. Document how services are delivered.
6. Evaluate actual results.
**use caution with these terms

Logical Considerations - Evaluation
4. Identify outcomes, indicators and targets.** DECIDE IN ADVANCE HOW GOOD IS GOOD ENOUGH.
5a. Collect descriptive information about participants.
5b. Document service delivery.
6. Evaluate actual results.
**use caution with these terms

Outcomes
Changes in attitudes, behavior, skills, knowledge, condition or status. Must be:
• Realistic and attainable
• Related to core business
• Within program’s sphere of influence

Outcomes: Reminders
• Time-sensitive
• Programs have more influence on more immediate outcomes
• Usually more than one way to get an outcome
• Closely related to program design; program changes usually = outcome changes
• Positive outcomes are not always improvements (maintenance, prevention)

Indicators
Specific, measurable characteristics or changes that represent achievement of an outcome. Indicators are:
• Directly related to the outcome, help define it
• Specific, measurable, observable, seen, heard, or read

Indicator: Reminders
• Most outcomes have more than one indicator
• Identify the set of indicators that accurately signal achievement of an outcome (get stakeholder input)
• When measuring prevention, identify meaningful segments of time, check indicators during that time
• Specific, Measurable, Achievable, Relevant, Time-bound (SMART)

Targets
Specify the amount or level of outcome attainment expected, hoped for or required. Targets can be set based on:
• External standards (when available)
• Past performance/similar programs
• Professional hunches

Target: Reminders
• Targets should be specified in advance, require buy-in, and may be different for different subgroups.
• Carefully word targets so they are not over- or under-ambitious, make sense, and are in sync with time frames.
• If a target indicates change in magnitude, be sure to specify initial levels and what is positive.
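The last reminder can be made concrete with a short calculation. The Python sketch below is a hypothetical illustration: the function name and all numbers are assumptions, not drawn from the Guide. It shows why a change target needs both an initial level and a stated positive direction before it can be checked.

```python
def change_target_met(baseline, follow_up, min_change, higher_is_better=True):
    """Check a target stated as a change from a known initial level.

    min_change is the size of improvement required, in the same units
    as the measurements. higher_is_better states which direction counts
    as positive for this indicator.
    """
    change = follow_up - baseline
    improvement = change if higher_is_better else -change
    return improvement >= min_change


# Hypothetical employee turnover rates (percent): lower is better.
print(change_target_met(40, 32, 5, higher_is_better=False))

# Hypothetical job-satisfaction scores: higher is better.
print(change_target_met(60, 63, 5))
```

The same 8-point drop would count as a failure if the direction were left unspecified and assumed to be "higher is better", which is why the direction belongs in the target wording itself.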

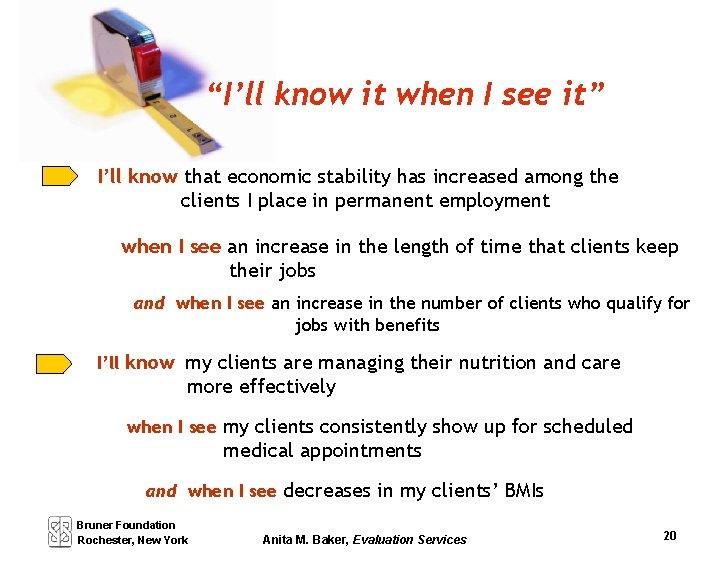

Let’s “break it down”: Use the “I’ll know it when I see it” rule
The BIG question is: what evidence do we need to see to be convinced that things are changing or improving?
The “I’ll know it (outcome) when I see it (indicator)” rule in action, some examples:
I’ll know that retention has increased among home health aides involved in a career ladder program
when I see a reduction in the employee turnover rate among aides involved in the program
and when I see survey results that indicate that aides are experiencing increased job satisfaction.

“I’ll know it when I see it”
I’ll know that economic stability has increased among the clients I place in permanent employment
when I see an increase in the length of time that clients keep their jobs
and when I see an increase in the number of clients who qualify for jobs with benefits.
I’ll know my clients are managing their nutrition and care more effectively
when I see my clients consistently show up for scheduled medical appointments
and when I see decreases in my clients’ BMIs.

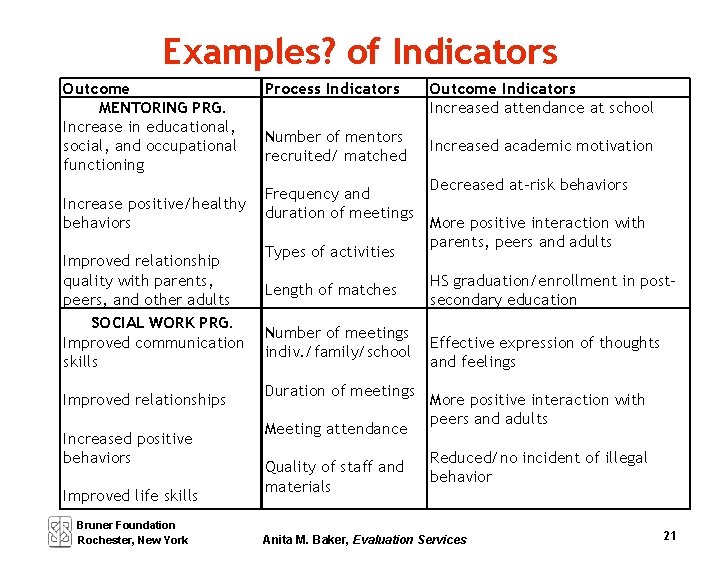

Examples of Indicators
MENTORING PRG.
Outcomes: Increase in educational, social, and occupational functioning; Increase positive/healthy behaviors; Improved relationship quality with parents, peers, and other adults.
Process Indicators: Number of mentors recruited/matched; Frequency and duration of meetings; Types of activities; Length of matches.
Outcome Indicators: Increased attendance at school; Increased academic motivation; Decreased at-risk behaviors; More positive interaction with parents, peers and adults; HS graduation/enrollment in postsecondary education.
SOCIAL WORK PRG.
Outcomes: Improved communication skills; Improved relationships; Increased positive behaviors; Improved life skills.
Process Indicators: Number of meetings (indiv./family/school); Duration of meetings; Meeting attendance; Quality of staff and materials.
Outcome Indicators: Effective expression of thoughts and feelings; More positive interaction with peers and adults; Reduced/no incident of illegal behavior.

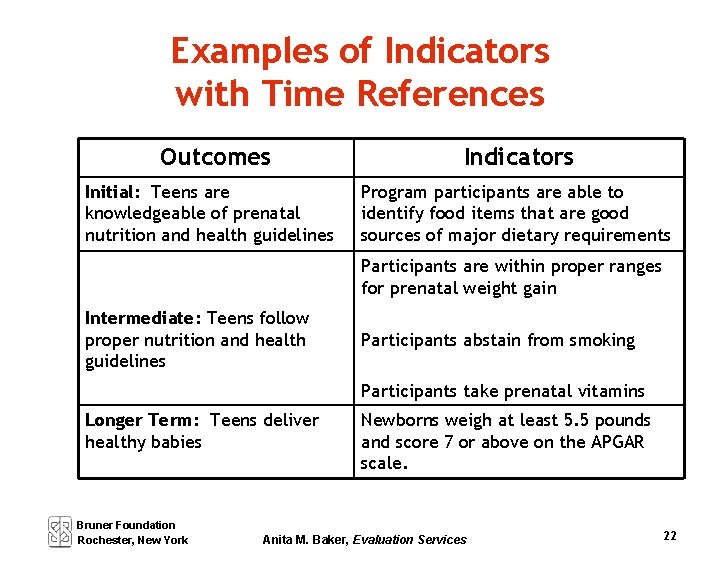

Examples of Indicators with Time References
Initial Outcome: Teens are knowledgeable of prenatal nutrition and health guidelines.
Indicator: Program participants are able to identify food items that are good sources of major dietary requirements.
Intermediate Outcome: Teens follow proper nutrition and health guidelines.
Indicators: Participants are within proper ranges for prenatal weight gain; Participants abstain from smoking; Participants take prenatal vitamins.
Longer Term Outcome: Teens deliver healthy babies.
Indicator: Newborns weigh at least 5.5 pounds and score 7 or above on the APGAR scale.

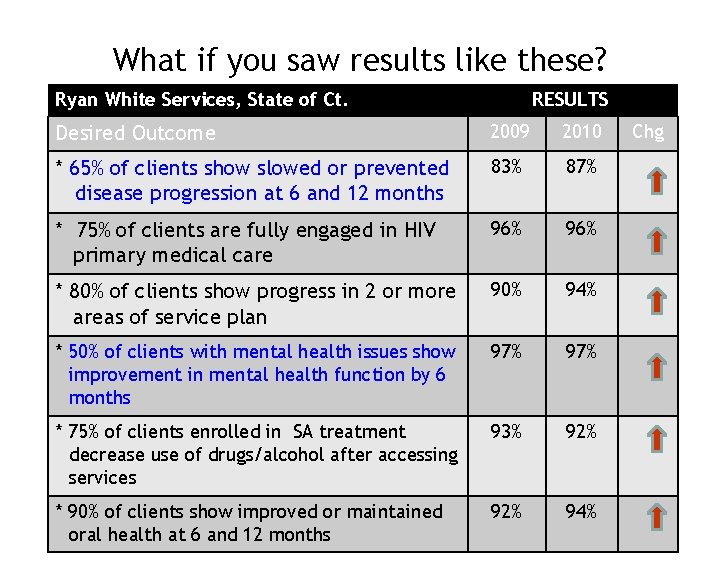

What if you saw results like these?
Ryan White Services, State of Ct.
RESULTS (columns: Desired Outcome, 2009, 2010, Chg)
* 65% of clients show slowed or prevented disease progression at 6 and 12 months: 83% (2009), 87% (2010)
* 75% of clients are fully engaged in HIV primary medical care: 96%
* 80% of clients show progress in 2 or more areas of service plan: 90% (2009), 94% (2010)
* 50% of clients with mental health issues show improvement in mental health function by 6 months: 97%
* 75% of clients enrolled in SA treatment decrease use of drugs/alcohol after accessing services: 93% (2009), 92% (2010)
* 90% of clients show improved or maintained oral health at 6 and 12 months: 92% (2009), 94% (2010)
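Results like these can be reviewed against their targets with a few lines of arithmetic. The Python sketch below is an illustration only: the function name is hypothetical, and it uses just the four outcomes for which the slide lists two figures, reading the first as 2009 and the second as 2010 (an assumption based on the column order).

```python
def review_outcome(target, first_year, second_year):
    """Compare two years of results (percent of clients) with a target."""
    return {
        "met_first_year": first_year >= target,
        "met_second_year": second_year >= target,
        "change": second_year - first_year,  # percentage points
    }


# (short label, target %, first figure, second figure) as shown on the slide
results = [
    ("slowed or prevented disease progression", 65, 83, 87),
    ("progress in 2 or more areas of service plan", 80, 90, 94),
    ("decreased use of drugs/alcohol", 75, 93, 92),
    ("improved or maintained oral health", 90, 92, 94),
]

for label, target, y2009, y2010 in results:
    print(label, review_outcome(target, y2009, y2010))
```

Every target here is exceeded in both years, some by more than 15 percentage points, which invites the question the slide is asking: were the targets set at a meaningful level?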

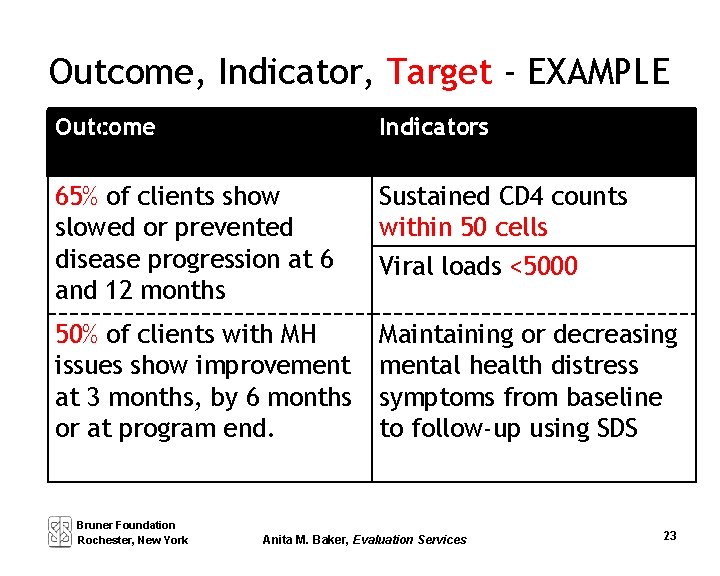

Outcome, Indicator, Target - EXAMPLE
Outcome: 65% of clients show slowed or prevented disease progression at 6 and 12 months.
Indicators: Sustained CD4 counts within 50 cells; Viral loads <5000.
Outcome: 50% of clients with MH issues show improvement at 3 months, by 6 months or at program end.
Indicator: Maintaining or decreasing mental health distress symptoms from baseline to follow-up using SDS.

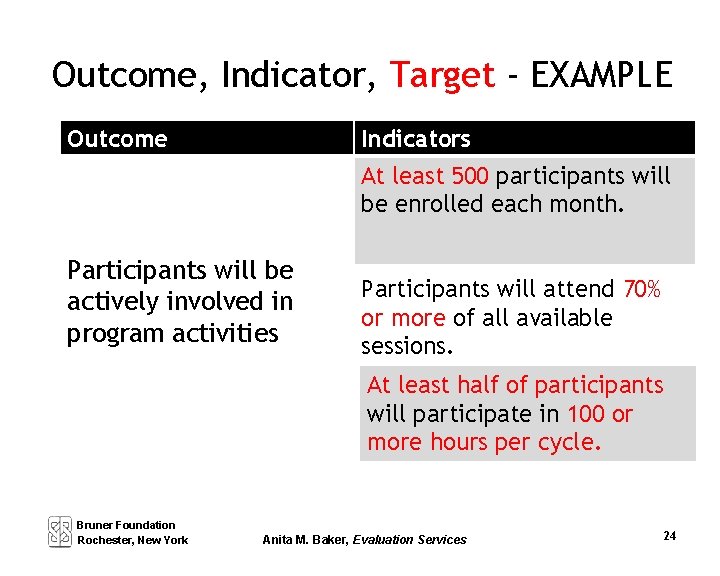

Outcome, Indicator, Target - EXAMPLE
Outcome: Participants will be actively involved in program activities.
Indicators:
• At least 500 participants will be enrolled each month.
• Participants will attend 70% or more of all available sessions.
• At least half of participants will participate in 100 or more hours per cycle.
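Indicators with embedded targets like these can be checked directly from program records. The Python sketch below is a minimal illustration under stated assumptions: the helper name and every number in the sample records are hypothetical, and a real program would draw these values from its own enrollment and attendance data.

```python
def share_at_or_above(values, threshold):
    """Return the fraction of values that are at or above the threshold."""
    if not values:
        return 0.0
    return sum(1 for v in values if v >= threshold) / len(values)


# Hypothetical records for one program cycle.
monthly_enrollment = 512
session_attendance = [0.90, 0.75, 0.60, 0.80]  # share of sessions attended, per participant
hours_per_cycle = [120, 95, 100, 40]           # hours of participation, per participant

checks = {
    "at least 500 enrolled this month": monthly_enrollment >= 500,
    "share attending 70% or more of sessions": share_at_or_above(session_attendance, 0.70),
    "at least half with 100+ hours": share_at_or_above(hours_per_cycle, 100) >= 0.5,
}

for name, value in checks.items():
    print(name, value)
```

The attendance indicator is reported as a share rather than a yes/no result because the slide does not say what proportion of participants must reach 70%. That proportion is a target the stakeholders would need to agree on in advance.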

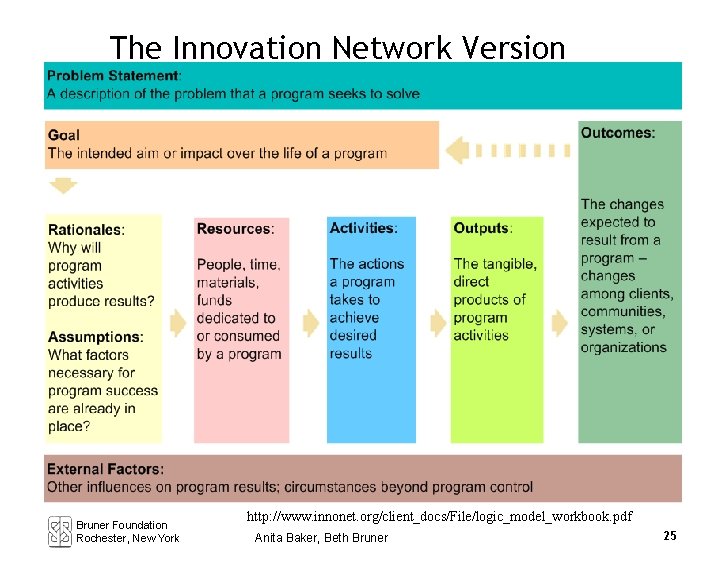

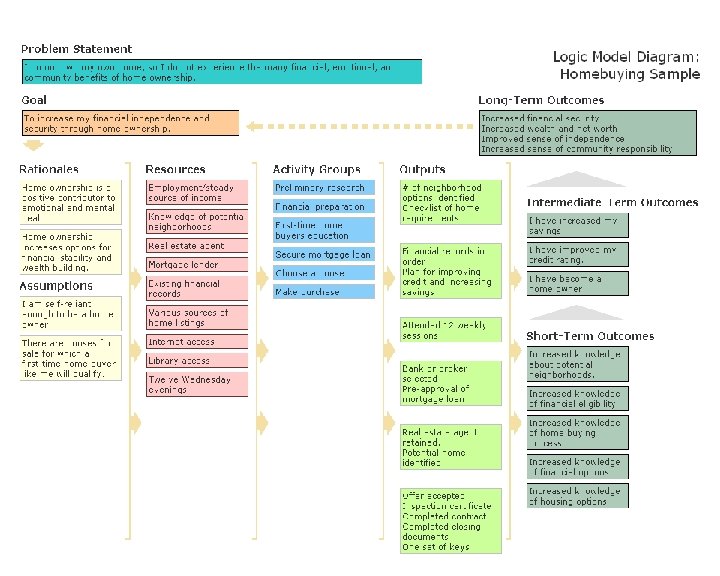

The Innovation Network Version

http://www.innonet.org/client_docs/File/logic_model_workbook.pdf

Anita Baker, Beth Bruner


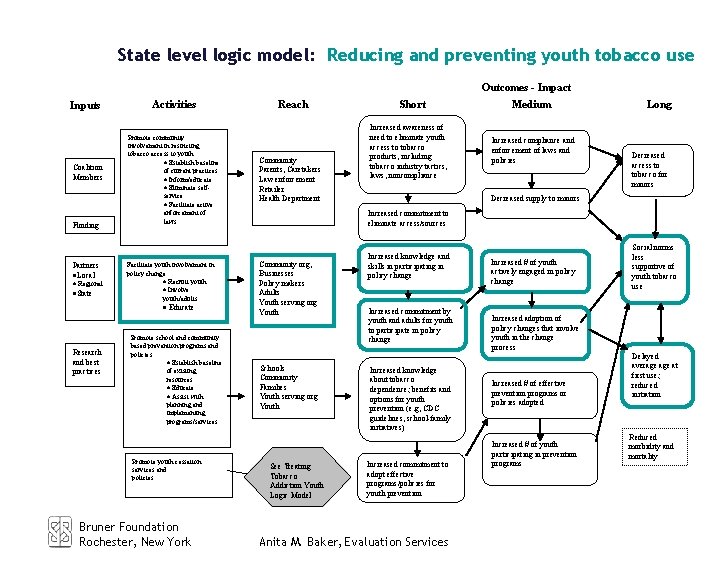

State level logic model: Reducing and preventing youth tobacco use

Inputs
• Coalition Members
• Funding
• Partners: Local, Regional, State
• Research and best practices

Activities
• Promote community involvement in restricting tobacco access to youth
  – Establish baseline of current practices
  – Inform/educate
  – Eliminate self-service
  – Facilitate active enforcement of laws
• Facilitate youth involvement in policy change
  – Recruit youth
  – Involve youth/adults
  – Educate
• Promote school and community based prevention programs and policies
  – Establish baseline of existing resources
  – Educate
  – Assist with planning and implementing programs/services
• Promote youth cessation services and policies (See Treating Tobacco Addiction Youth Logic Model)

Reach
• Community; Parents, Caretakers; Law enforcement; Retailer; Health Department
• Community org, Businesses; Policy makers; Adults; Youth serving org; Youth
• Schools; Community; Families; Youth serving org; Youth

Outcomes - Impact: Short
• Increased awareness of need to eliminate youth access to tobacco products, including tobacco industry tactics, laws, noncompliance
• Increased commitment to eliminate access/sources
• Increased knowledge and skills in participating in policy change
• Increased commitment by youth and adults for youth to participate in policy change
• Increased knowledge about tobacco dependence; benefits and options for youth prevention (e.g., CDC guidelines, school-family initiatives)
• Increased commitment to adopt effective programs/policies for youth prevention

Outcomes - Impact: Medium
• Increased compliance and enforcement of laws and policies
• Increased # of youth actively engaged in policy change
• Increased adoption of policy changes that involve youth in the change process
• Increased # of effective prevention programs or policies adopted
• Increased # of youth participating in prevention programs

Outcomes - Impact: Long
• Decreased access to tobacco for minors
• Decreased supply to minors
• Social norms less supportive of youth tobacco use
• Delayed average at first use; reduced initiation
• Reduced morbidity and mortality

Building Evaluation Capacity Session 3 DOCUMENTING SERVICE DELIVERY DATA COLLECTION OVERVIEW SURVEY DEVELOPMENT Anita M. Baker Evaluation Services Bruner Foundation Rochester, New York

What is Needed to Conduct Evaluation?

• Specify evaluation questions
• Develop an evaluation design
• Apply evaluation logic
• Collect and analyze data
• Summarize and share findings

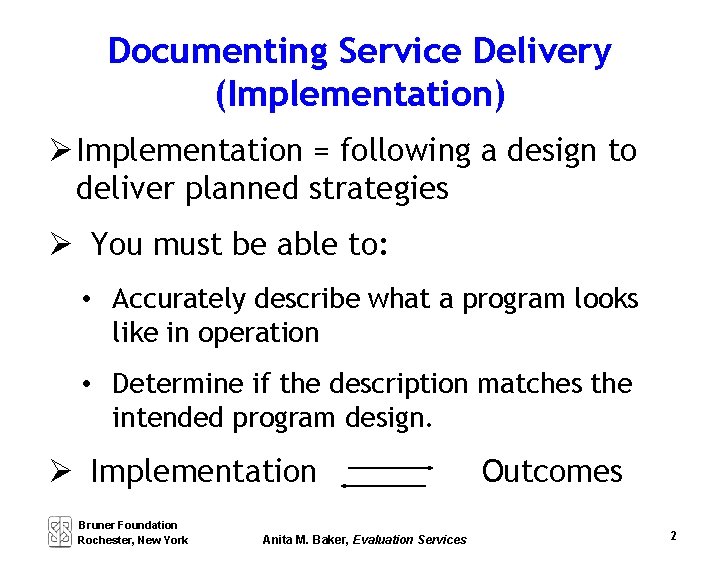

Documenting Service Delivery (Implementation)

Implementation = following a design to deliver planned strategies

You must be able to:
• Accurately describe what a program looks like in operation
• Determine if the description matches the intended program design.

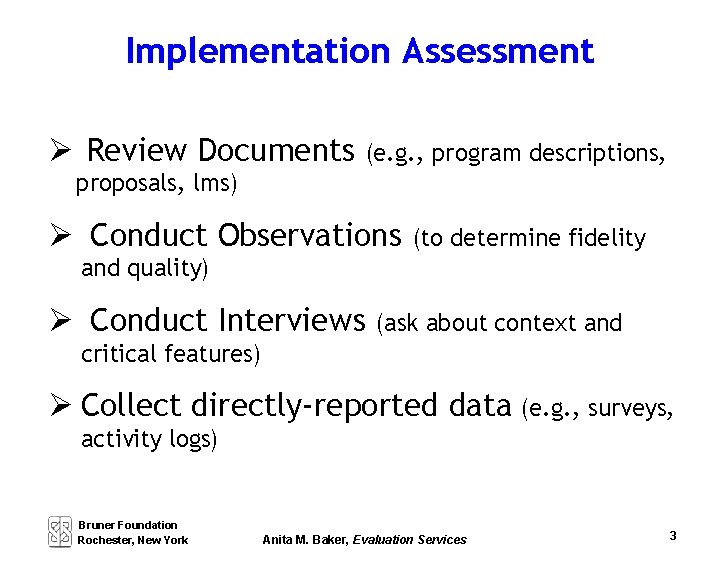

Implementation Assessment

• Review Documents (e.g., program descriptions, proposals, lms)
• Conduct Observations (to determine fidelity and quality)
• Conduct Interviews (ask about context and critical features)
• Collect directly-reported data (e.g., surveys, activity logs)

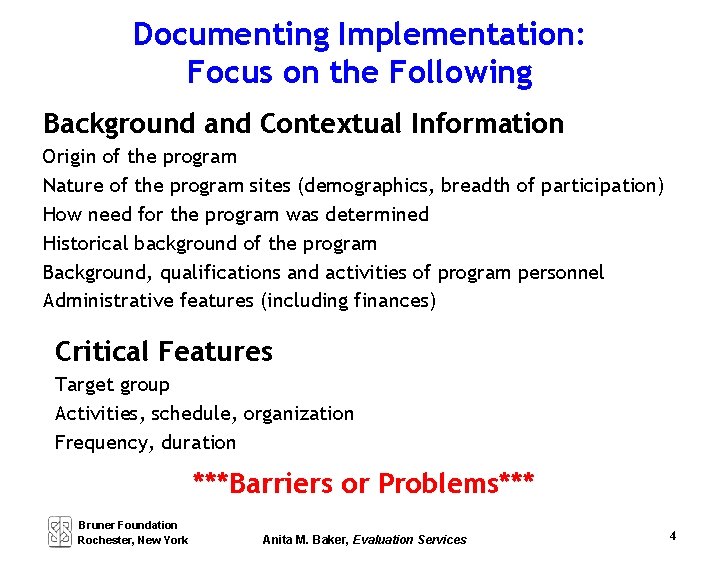

Documenting Implementation: Focus on the Following

Background and Contextual Information
• Origin of the program
• Nature of the program sites (demographics, breadth of participation)
• How need for the program was determined
• Historical background of the program
• Background, qualifications and activities of program personnel
• Administrative features (including finances)

Critical Features
• Target group
• Activities, schedule, organization
• Frequency, duration

***Barriers or Problems***

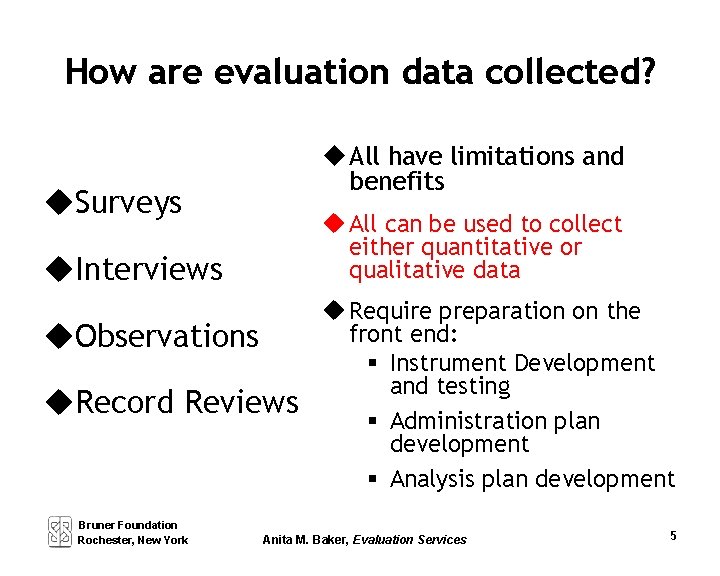

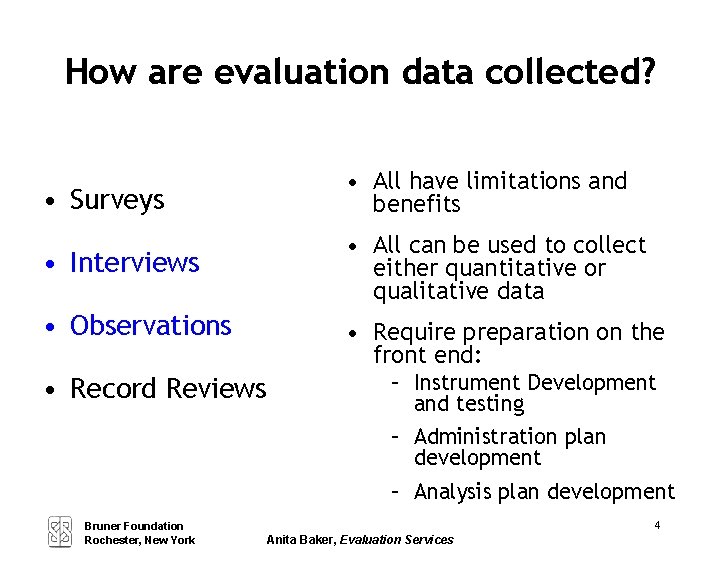

How are evaluation data collected?

• Surveys
• Interviews
• Observations
• Record Reviews

• All have limitations and benefits
• All can be used to collect either quantitative or qualitative data
• Require preparation on the front end:
  – Instrument Development and testing
  – Administration plan development
  – Analysis plan development

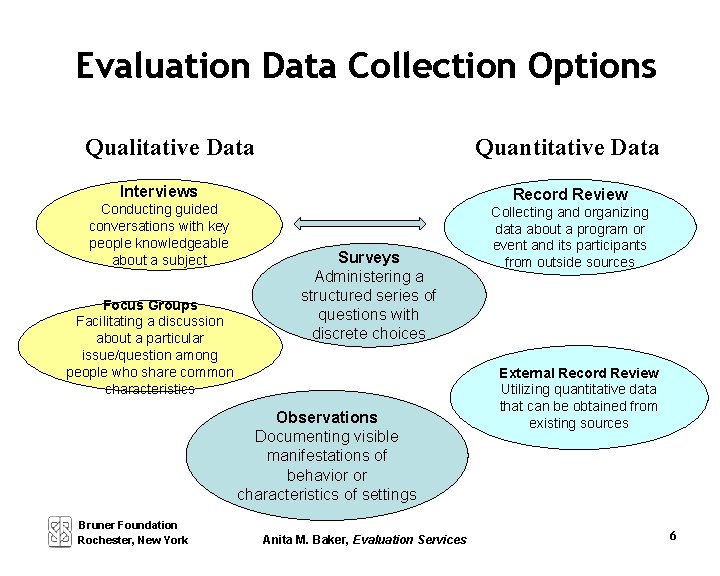

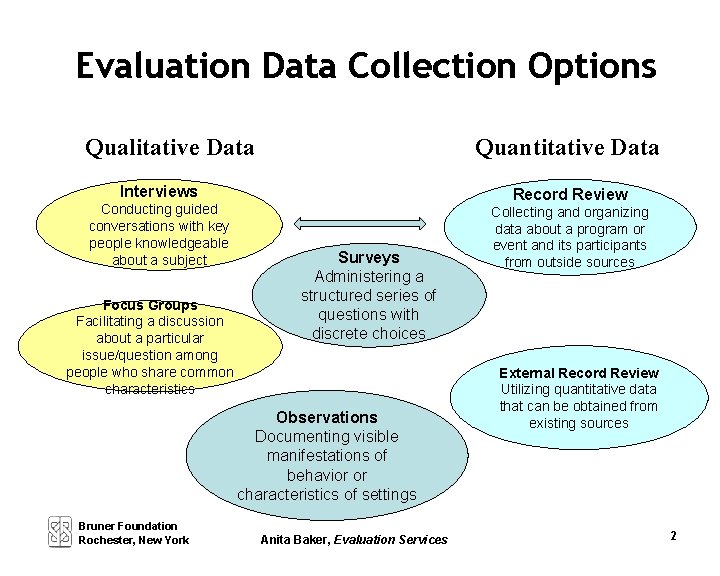

Evaluation Data Collection Options

Qualitative Data / Quantitative Data

• Interviews: Conducting guided conversations with key people knowledgeable about a subject
• Focus Groups: Facilitating a discussion about a particular issue/question among people who share common characteristics
• Surveys: Administering a structured series of questions with discrete choices
• Observations: Documenting visible manifestations of behavior or characteristics of settings
• Record Review: Collecting and organizing data about a program or event and its participants from outside sources
• External Record Review: Utilizing quantitative data that can be obtained from existing sources

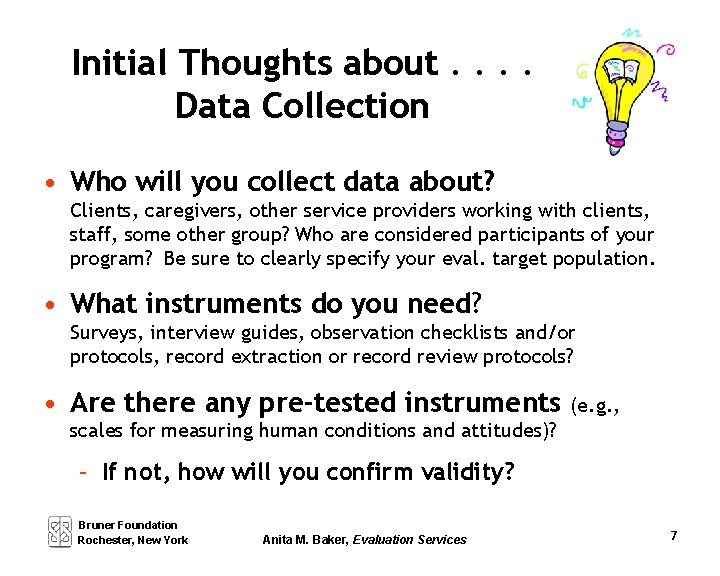

Initial Thoughts about... Data Collection

• Who will you collect data about? Clients, caregivers, other service providers working with clients, staff, some other group? Who are considered participants of your program? Be sure to clearly specify your eval. target population.
• What instruments do you need? Surveys, interview guides, observation checklists and/or protocols, record extraction or record review protocols?
• Are there any pre-tested instruments (e.g., scales for measuring human conditions and attitudes)?
  – If not, how will you confirm validity?

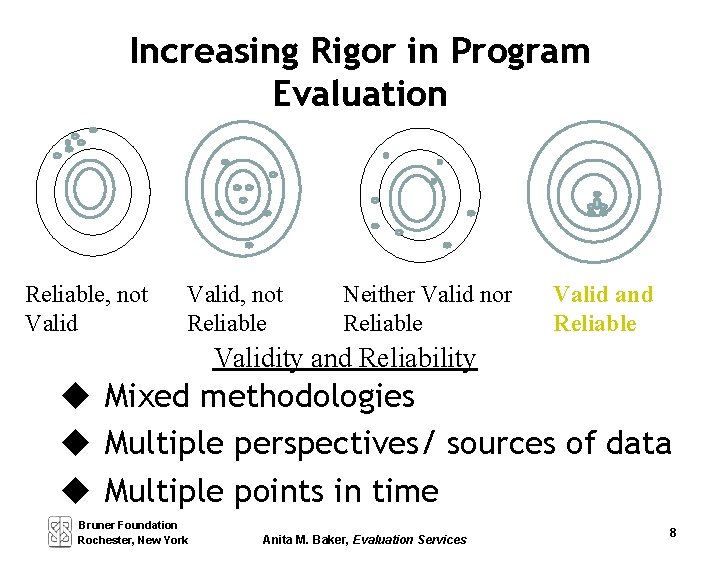

Increasing Rigor in Program Evaluation

Validity and Reliability

[Diagram panels: Reliable, not Valid; Valid, not Reliable; Neither Valid nor Reliable; Valid and Reliable]

• Mixed methodologies
• Multiple perspectives/sources of data
• Multiple points in time

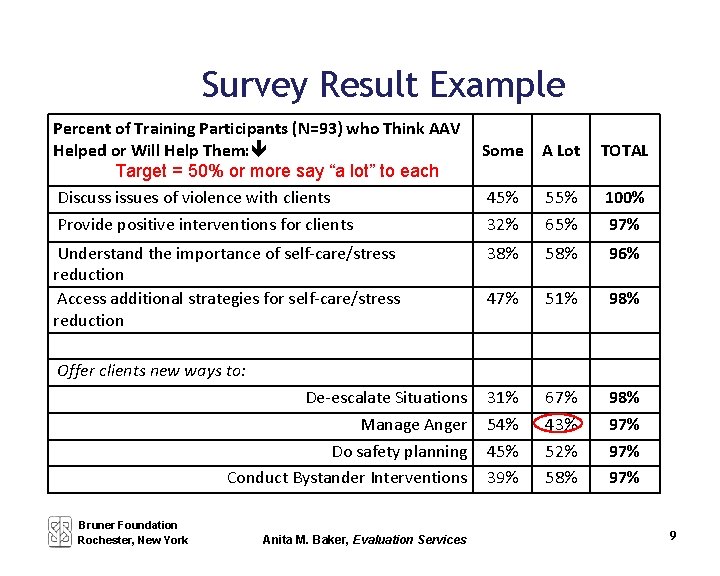

Survey Result Example

Percent of Training Participants (N=93) who Think AAV Helped or Will Help Them (Target = 50% or more say “a lot” to each)

| | Some | A Lot | TOTAL |
|---|---|---|---|
| Discuss issues of violence with clients | 45% | 55% | 100% |
| Provide positive interventions for clients | 32% | 65% | 97% |
| Understand the importance of self-care/stress reduction | 38% | 58% | 96% |
| Access additional strategies for self-care/stress reduction | 47% | 51% | 98% |
| Offer clients new ways to: | | | |
| De-escalate Situations | 31% | 67% | |
| Manage Anger | 54% | 43% | |
| Do safety planning | 45% | 52% | |
| Conduct Bystander Interventions | 39% | 58% | |

TOTAL values shown for the last four rows: 97%, 97%.

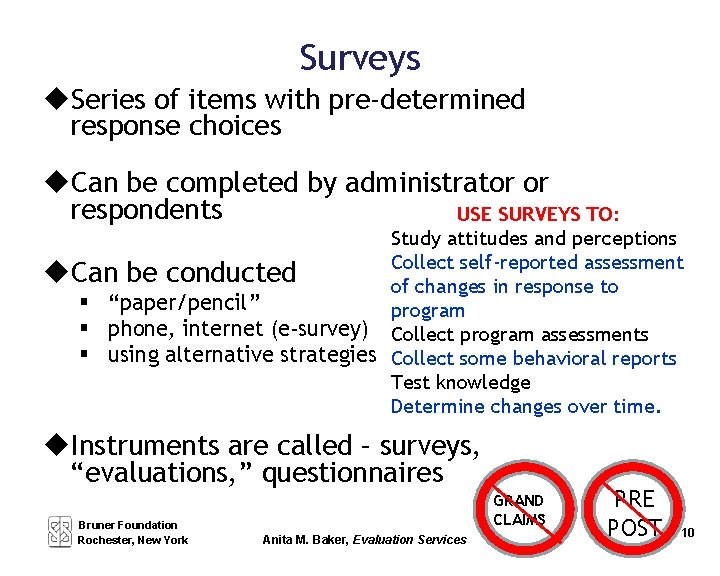

Surveys

• Series of items with pre-determined response choices
• Can be completed by administrator or respondents
• Can be conducted
  – “paper/pencil”
  – phone, internet (e-survey)
  – using alternative strategies
• Instruments are called – surveys, “evaluations,” questionnaires

USE SURVEYS TO:
• Study attitudes and perceptions
• Collect self-reported assessment of changes in response to program
• Collect program assessments
• Collect some behavioral reports
• Test knowledge
• Determine changes over time.

[Graphic labels: PRE, POST, GRAND CLAIMS]

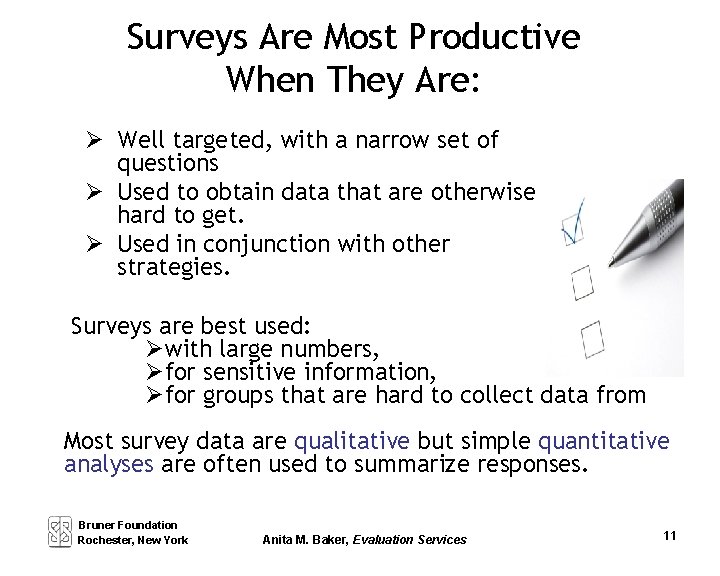

Surveys Are Most Productive When They Are:

• Well targeted, with a narrow set of questions
• Used to obtain data that are otherwise hard to get.
• Used in conjunction with other strategies.

Surveys are best used: with large numbers, for sensitive information, for groups that are hard to collect data from.

Most survey data are qualitative but simple quantitative analyses are often used to summarize responses.

Surveys can be administered and analyzed quickly when...

• pre-validated instruments are used
• sampling is simple or not required
• the topic is narrowly focused
• the number of questions (and respondents*) is relatively small
• the need for disaggregation is limited

Benefits of Surveys

• Can be used for a variety of reasons such as exploring ideas or getting sensitive information.
• Can provide information about a large number and wide variety of participants.
• Analysis can be simple. Computers are not required.
• Results are compelling, have broad appeal and are easy to present.

Drawbacks of Surveys

• Designing surveys is complicated and time consuming, but use of existing instruments is limited.
• The intervention effect can lead to false responses, or it can be overlooked.
• Broad questions and open-ended responses can be difficult to use.
• Analyses and presentations can require a great deal of work. You MUST be selective.

Developing Survey Instruments

• Identify key issues or topics.
• Review available literature, other surveys.
• Convert key issues into questions, identify answer choices.
• Determine what other data are needed, add questions accordingly.
• Determine how questions will be ordered and formatted.
• ADD DIRECTIONS.
• Have survey instrument reviewed.

For Survey Items, Remember:

1) State questions in specific terms, use appropriate language.
2) Use multiple questions to sufficiently cover topics.
3) Avoid “double-negatives.”
4) Avoid asking multiple questions in one item (and).
5) Be sure response categories match the question, are exhaustive and don’t overlap.
6) Be sure to include directions, check numbering, formatting etc.

Assessing Survey Instruments

• Are questions comprehensive without duplication, exhaustive without being exhausting?
• Do answer choices match question stem, provide coverage, avoid overlap?
• Are other data needs (e.g., characteristics of respondent) addressed?
• Do order and formatting facilitate response? Are directions clear?
• Does the survey have face validity?

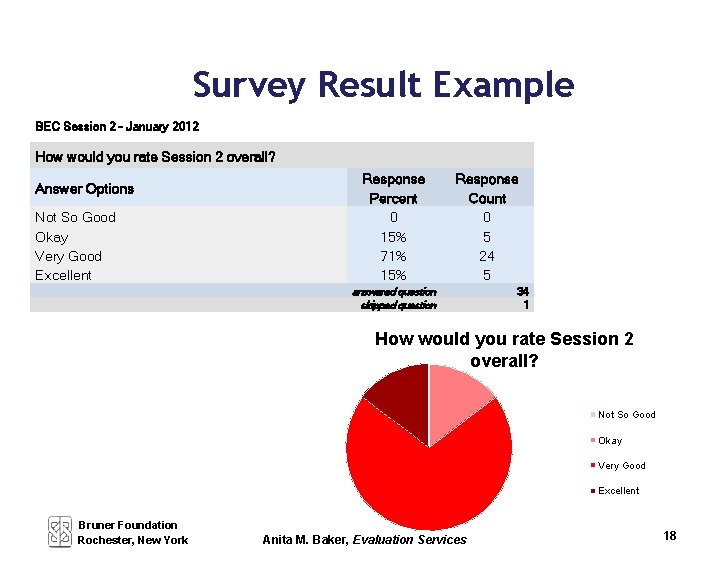

Survey Result Example

BEC Session 2 - January 2012: How would you rate Session 2 overall?

| Answer Options | Response Percent | Response Count |
|---|---|---|
| Not So Good | 0 | 0 |
| Okay | 15% | 5 |
| Very Good | 71% | 24 |
| Excellent | 15% | 5 |

answered question: 34; skipped question: 1

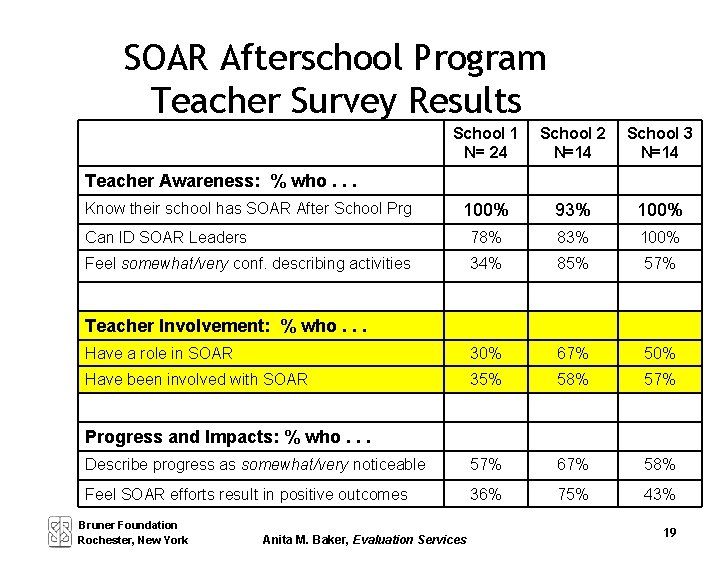

SOAR Afterschool Program Teacher Survey Results

| | School 1 N=24 | School 2 N=14 | School 3 N=14 |
|---|---|---|---|
| Teacher Awareness: % who... | | | |
| Know their school has SOAR After School Prg | 100% | 93% | 100% |
| Can ID SOAR Leaders | 78% | 83% | 100% |
| Feel somewhat/very conf. describing activities | 34% | 85% | 57% |
| Teacher Involvement: % who... | | | |
| Have a role in SOAR | 30% | 67% | 50% |
| Have been involved with SOAR | 35% | 58% | 57% |
| Progress and Impacts: % who... | | | |
| Describe progress as somewhat/very noticeable | 57% | 67% | 58% |
| Feel SOAR efforts result in positive outcomes | 36% | 75% | 43% |

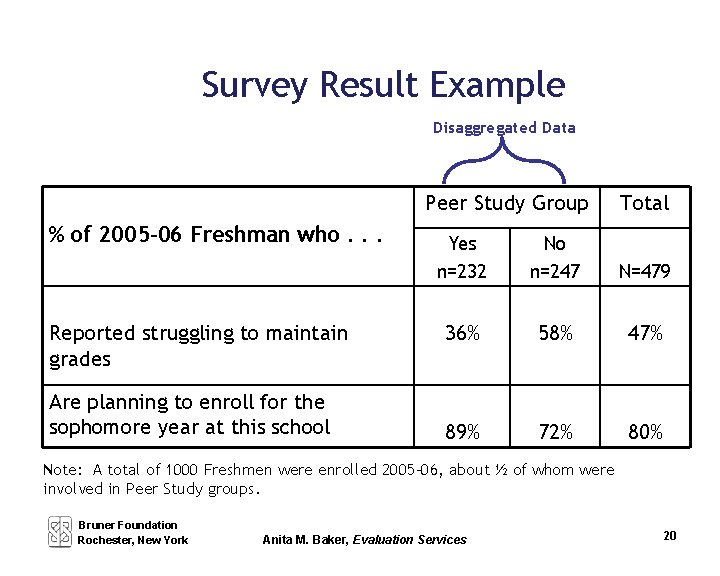

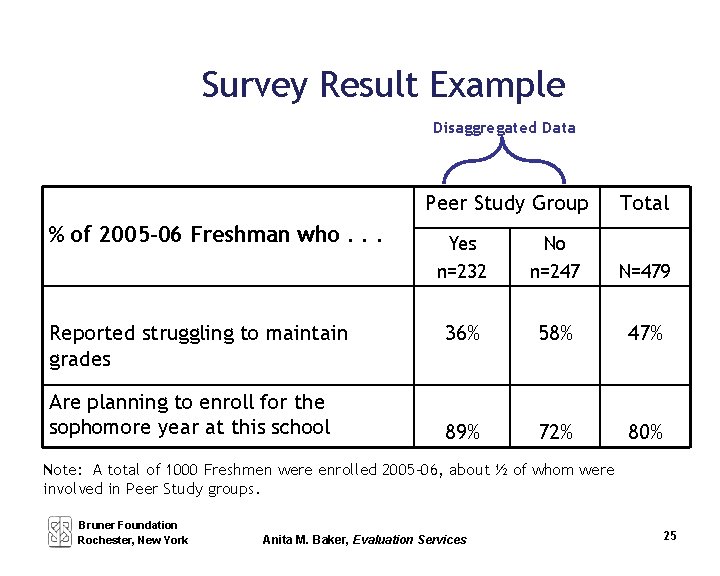

Survey Result Example: Disaggregated Data

| % of 2005-06 Freshmen who... | Peer Study Group: Yes (n=232) | Peer Study Group: No (n=247) | Total (N=479) |
|---|---|---|---|
| Reported struggling to maintain grades | 36% | 58% | 47% |
| Are planning to enroll for the sophomore year at this school | 89% | 72% | 80% |

Note: A total of 1000 Freshmen were enrolled 2005-06, about ½ of whom were involved in Peer Study groups.
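The Total column in this example can be checked from the two subgroup columns: the overall percentage is the n-weighted average of the Yes and No percentages. The sketch below is only an illustration of that arithmetic; the function name `pooled_percent` is made up for this example and is not part of the Guide.

```python
def pooled_percent(groups):
    """Return the n-weighted overall percent for a list of (percent, n) pairs."""
    total_n = sum(n for _, n in groups)
    return sum(pct * n for pct, n in groups) / total_n

# Subgroup figures taken from the slide: Peer Study Group Yes (n=232), No (n=247).
struggling = pooled_percent([(36, 232), (58, 247)])
returning = pooled_percent([(89, 232), (72, 247)])

print(round(struggling), round(returning))
```

Both rounded values agree with the Total column on the slide (47% and 80%), which is a quick way to confirm that disaggregated results and overall results came from the same data.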

Types of Surveys

• Mail Surveys (must have correct addresses and return instructions, must conduct tracking and follow-up). Response is typically low.
• Electronic Surveys (must be sure respondents have access to internet, must have a host site that is recognizable or used by respondents; must have current email addresses). Response is often better.
• Web + (combining mail and e-surveys). Data input required, analysis is harder.

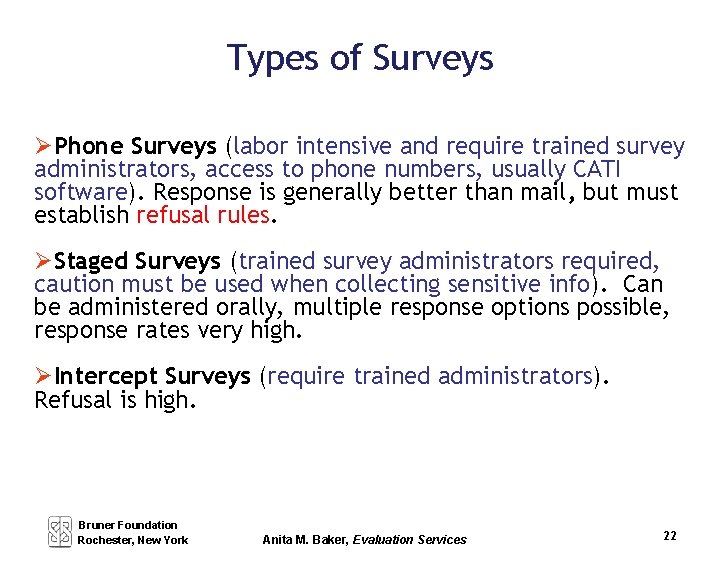

Types of Surveys

• Phone Surveys (labor intensive and require trained survey administrators, access to phone numbers, usually CATI software). Response is generally better than mail, but must establish refusal rules.
• Staged Surveys (trained survey administrators required, caution must be used when collecting sensitive info). Can be administered orally, multiple response options possible, response rates very high.
• Intercept Surveys (require trained administrators). Refusal is high.

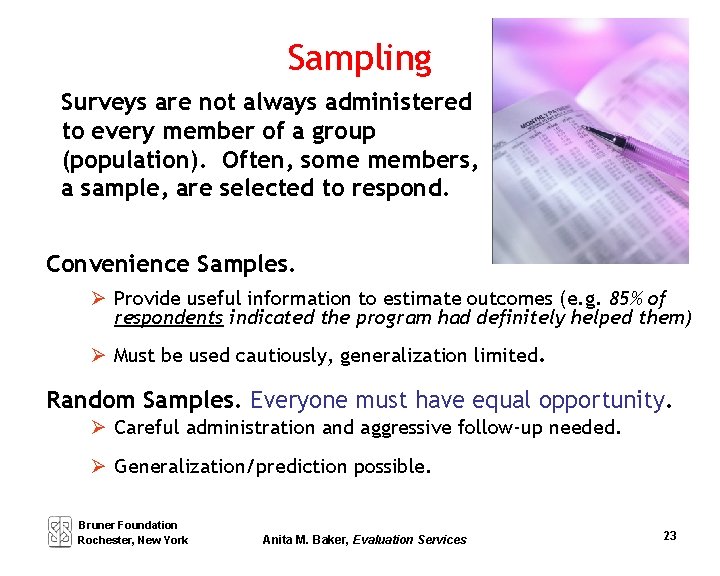

Sampling

Surveys are not always administered to every member of a group (population). Often, some members, a sample, are selected to respond.

• Convenience Samples. Provide useful information to estimate outcomes (e.g., 85% of respondents indicated the program had definitely helped them). Must be used cautiously, generalization limited.
• Random Samples. Everyone must have equal opportunity. Careful administration and aggressive follow-up needed. Generalization/prediction possible.
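One way to give every member of a population an equal opportunity of selection is to draw a simple random sample from a complete roster. The sketch below assumes a hypothetical roster of participant IDs and uses Python's standard `random` module; the roster, the sample size of 50 and the seed are all illustrative choices, not values from the slides.

```python
import random

def simple_random_sample(population, n, seed=None):
    """Draw n members without replacement; each member is equally likely to be chosen."""
    rng = random.Random(seed)  # a fixed seed makes the draw repeatable for documentation
    return rng.sample(list(population), n)

# Hypothetical roster of 232 participant IDs (P001 ... P232).
roster = [f"P{i:03d}" for i in range(1, 233)]
sample = simple_random_sample(roster, 50, seed=2010)

print(len(sample), sample[:5])
```

Recording the seed alongside the roster lets someone else reproduce exactly which participants were selected.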

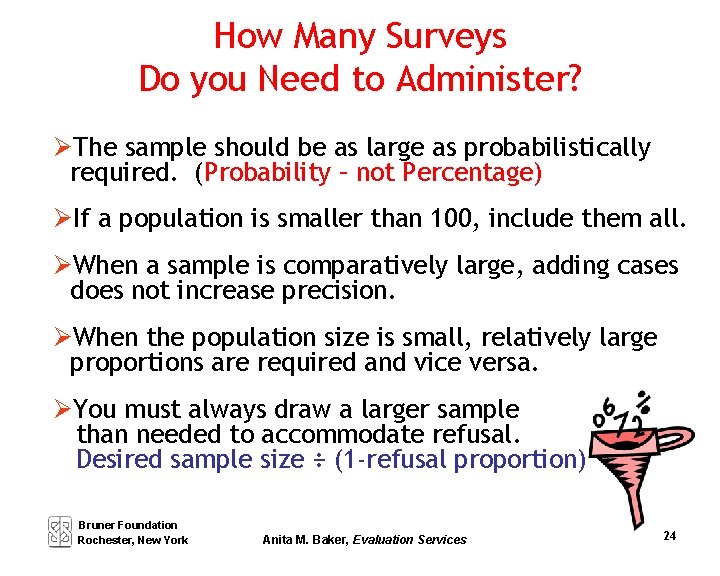

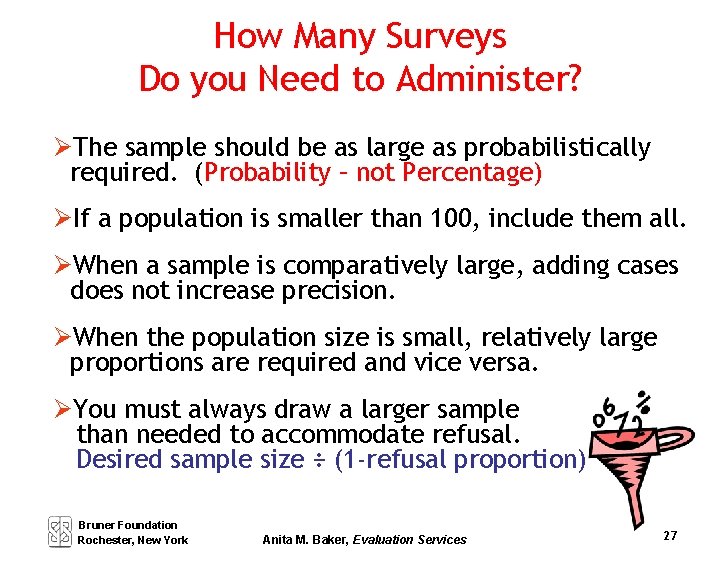

How Many Surveys Do You Need to Administer?

• The sample should be as large as probabilistically required. (Probability – not Percentage)
• If a population is smaller than 100, include them all.
• When a sample is comparatively large, adding cases does not increase precision.
• When the population size is small, relatively large proportions are required and vice versa.
• You must always draw a larger sample than needed to accommodate refusal: Desired sample size ÷ (1 – refusal proportion)
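The refusal adjustment on this slide is a one-line calculation. The sketch below applies it with illustrative numbers (a desired sample of 200 and an expected refusal proportion of 0.25, both assumed for the example) and rounds up, since a fraction of a survey cannot be administered.

```python
import math

def surveys_to_administer(desired_sample_size, refusal_proportion):
    """Desired sample size divided by (1 - refusal proportion), rounded up."""
    if not 0 <= refusal_proportion < 1:
        raise ValueError("refusal proportion must be at least 0 and less than 1")
    return math.ceil(desired_sample_size / (1 - refusal_proportion))

# Illustrative values: 200 completed surveys wanted, 25% expected to refuse.
needed = surveys_to_administer(200, 0.25)
print(needed)
```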

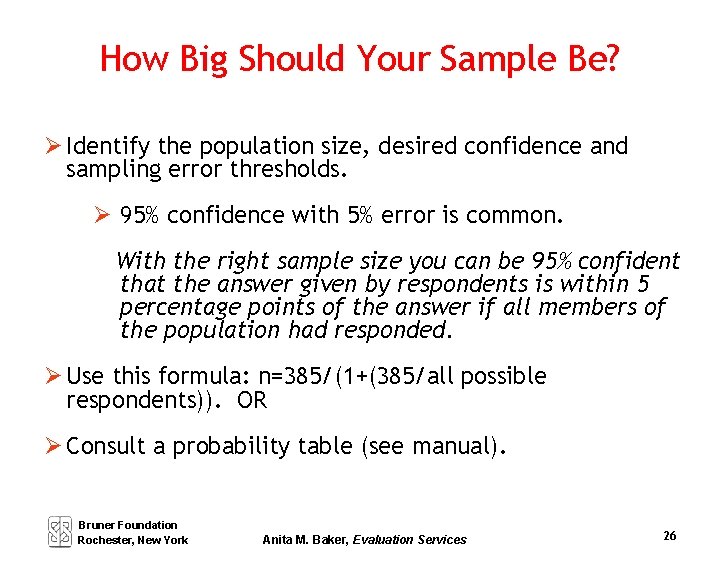

How Big Should Your Sample Be?

• Identify the population size, desired confidence and sampling error thresholds. 95% confidence with 5% error is common. With the right sample size you can be 95% confident that the answer given by respondents is within 5 percentage points of the answer if all members of the population had responded.
• Use this formula: n = 385 / (1 + (385 / all possible respondents)), OR consult a probability table (see manual).
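The formula on this slide can be sketched in a few lines. The constant 385 appears to be the usual large-population sample size for 95% confidence and 5% error with a 50/50 response split (1.96² × 0.25 ÷ 0.05² ≈ 384.16, rounded up); that reading is an assumption, since the slide does not derive it. The function names and the example population sizes are illustrative.

```python
import math

def base_sample_size(z=1.96, p=0.5, error=0.05):
    """Large-population sample size for a proportion; defaults give 385."""
    return math.ceil(z ** 2 * p * (1 - p) / error ** 2)

def sample_size(all_possible_respondents, base=385):
    """Slide formula: n = base / (1 + base / population), rounded up."""
    return math.ceil(base / (1 + base / all_possible_respondents))

for population in (100, 500, 1000):
    print(population, sample_size(population))
```

Note how the required share of the population shrinks as the population grows, which matches the earlier point that small populations need relatively large proportions sampled.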

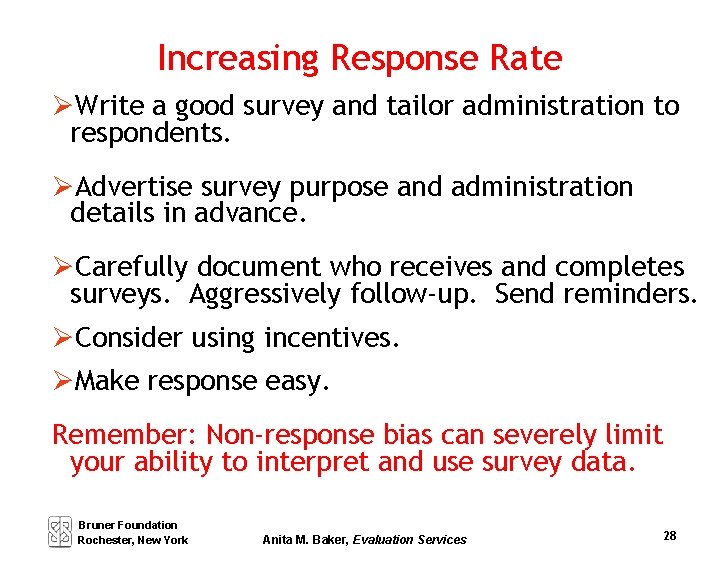

Increasing Response Rate

• Write a good survey and tailor administration to respondents.
• Advertise survey purpose and administration details in advance.
• Carefully document who receives and completes surveys.
• Aggressively follow up. Send reminders.
• Consider using incentives.
• Make response easy.

Remember: Non-response bias can severely limit your ability to interpret and use survey data.

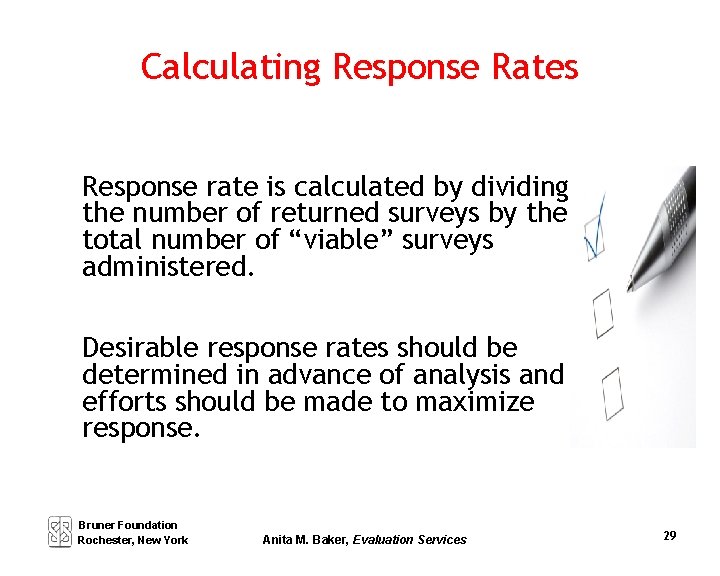

Calculating Response Rates

Response rate is calculated by dividing the number of returned surveys by the total number of “viable” surveys administered.

Desirable response rates should be determined in advance of analysis and efforts should be made to maximize response.
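A minimal sketch of the response rate calculation follows. The slide does not define “viable”; here it is assumed to mean surveys administered minus those that could never have been answered (for example, returned as undeliverable). The counts are invented for illustration.

```python
def response_rate(returned, administered, not_viable=0):
    """Percent of viable surveys that were returned.

    Assumption: viable = administered - not_viable (e.g., undeliverable surveys).
    """
    viable = administered - not_viable
    if viable <= 0:
        raise ValueError("there must be at least one viable survey")
    return 100 * returned / viable

# Illustrative counts: 200 mailed, 10 undeliverable, 120 returned.
rate = response_rate(returned=120, administered=200, not_viable=10)
print(f"{rate:.1f}%")
```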

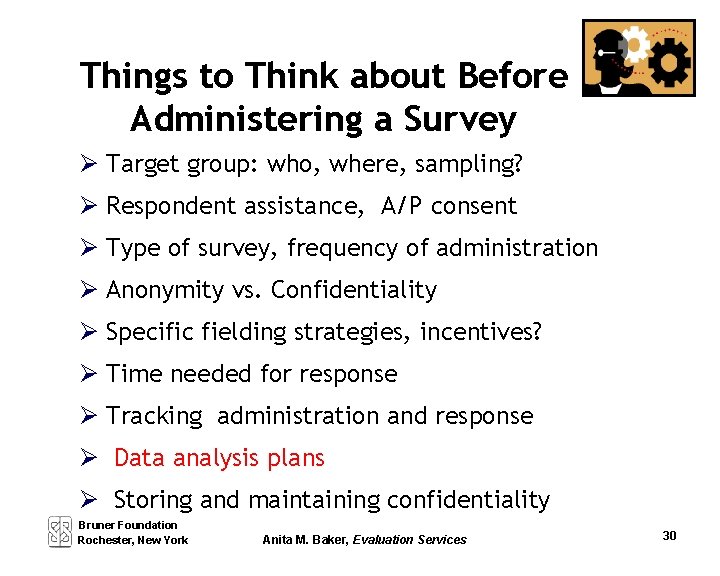

Things to Think about Before Administering a Survey

• Target group: who, where, sampling?
• Respondent assistance, A/P consent
• Type of survey, frequency of administration
• Anonymity vs. Confidentiality
• Specific fielding strategies, incentives?
• Time needed for response
• Tracking administration and response
• Data analysis plans
• Storing and maintaining confidentiality

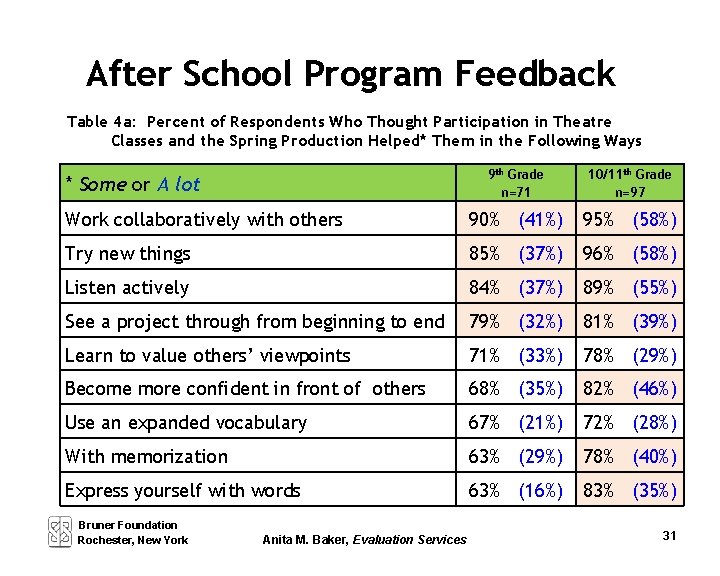

After School Program Feedback

Table 4a: Percent of Respondents Who Thought Participation in Theatre Classes and the Spring Production Helped* Them in the Following Ways

| | 9th Grade n=71 | 10/11th Grade n=97 |
|---|---|---|
| Work collaboratively with others | 90% (41%) | 95% (58%) |
| Try new things | 85% (37%) | 96% (58%) |
| Listen actively | 84% (37%) | 89% (55%) |
| See a project through from beginning to end | 79% (32%) | 81% (39%) |
| Learn to value others’ viewpoints | 71% (33%) | 78% (29%) |
| Become more confident in front of others | 68% (35%) | 82% (46%) |
| Use an expanded vocabulary | 67% (21%) | 72% (28%) |
| With memorization | 63% (29%) | 78% (40%) |
| Express yourself with words | 63% (16%) | 83% (35%) |

* Some or A lot

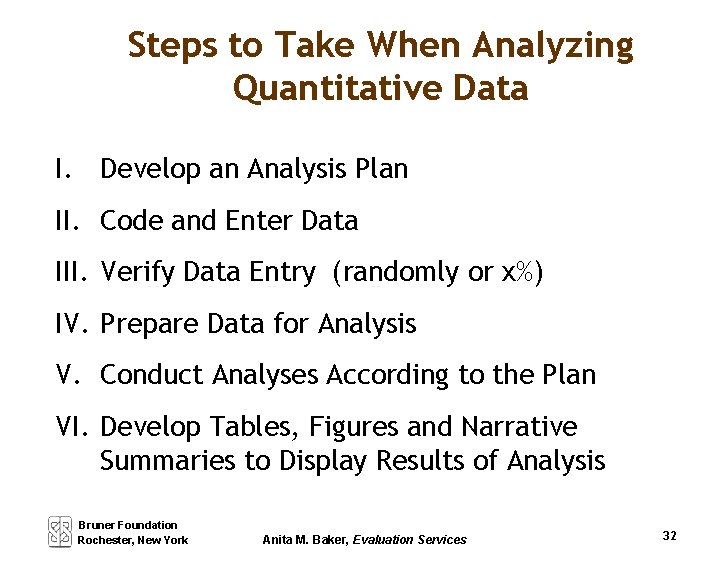

Steps to Take When Analyzing Quantitative Data

I. Develop an Analysis Plan
II. Code and Enter Data
III. Verify Data Entry (randomly or x%)
IV. Prepare Data for Analysis
V. Conduct Analyses According to the Plan
VI. Develop Tables, Figures and Narrative Summaries to Display Results of Analysis
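Step III can be done by re-checking a random share of entered cases against the original forms. The sketch below picks that share; the 10% fraction, the seed and the case IDs are assumptions made for the example, not figures from the Guide.

```python
import math
import random

def cases_to_verify(case_ids, fraction=0.10, seed=None):
    """Pick a random fraction of case IDs (at least one) to re-check against the forms."""
    ids = list(case_ids)
    if not ids:
        return []
    rng = random.Random(seed)
    k = max(1, math.ceil(len(ids) * fraction))
    return sorted(rng.sample(ids, k))

# Hypothetical data set of 93 entered surveys, numbered 1 to 93.
to_check = cases_to_verify(range(1, 94), fraction=0.10, seed=7)
print(to_check)
```

If the spot check turns up entry errors, a common response is to widen the check or re-verify the whole file before moving on to Step IV.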

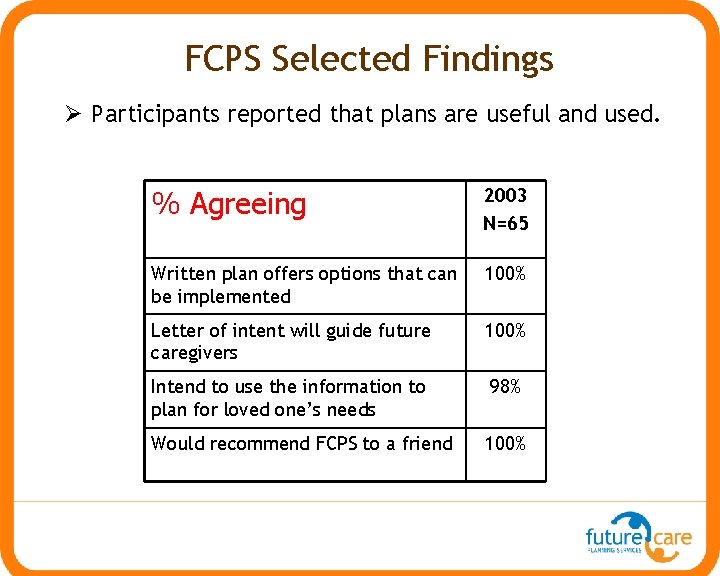

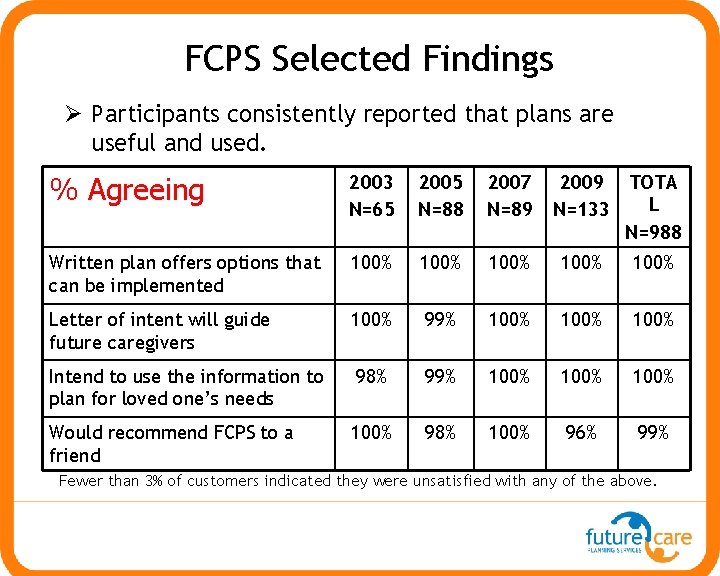

FCPS Selected Findings

Participants reported that plans are useful and used.

| % Agreeing | 2003 N=65 |
|---|---|
| Written plan offers options that can be implemented | 100% |
| Letter of intent will guide future caregivers | 100% |
| Intend to use the information to plan for loved one’s needs | 98% |
| Would recommend FCPS to a friend | 100% |

FCPS Selected Findings

Participants consistently reported that plans are useful and used.

% Agreeing, by year: 2003 (N=65), 2005 (N=88), 2007 (N=89), 2009 (N=133), TOTAL (N=988)

• Written plan offers options that can be implemented: 100%, 100%
• Letter of intent will guide future caregivers: 100%, 99%, 100%
• Intend to use the information to plan for loved one’s needs: 98%, 99%, 100%
• Would recommend FCPS to a friend: 100%, 98%, 100%, 96%, 99%

Fewer than 3% of customers indicated they were unsatisfied with any of the above.

Building Evaluation Capacity Session 4 Surveys (e-Surveys), Record Reviews and Quantitative Analysis Anita M. Baker Evaluation Services Bruner Foundation Rochester, New York

Things to Think about Before Administering a Survey

• Target group: who, where, sampling?
• Respondent assistance, A/P consent
• Type of survey, frequency of administration
• Anonymity vs. Confidentiality
• Specific fielding strategies, incentives?
• Time needed for response
• Tracking administration and response
• Data analysis plans
• Storing and maintaining confidentiality

E-Surveys – Primary Uses

• Collecting survey data
• Alternative Administration – Increases ease of access for some
• Generating hard copy surveys
• Entering and analyzing data

Acct: BECAccount Password: Evalentine

E-Surveys – Key Decisions

• What Question types do you need?
• How will they be displayed?
• Do you need an “other” field?
• Should they be “required?”
• How will you reach respondents?
• How will you conduct follow-up?

Record Reviews:

• Accessing existing internal information, or information collected for other purposes.
• Can be focused on
  – own records
  – records of other orgs
  – adding questions to existing docs
• Instruments are called – protocols

USE REC REVIEW TO:
• Collect some behavioral reports
• Conduct tests, collect test results
• Verify self-reported data
• Determine changes over time

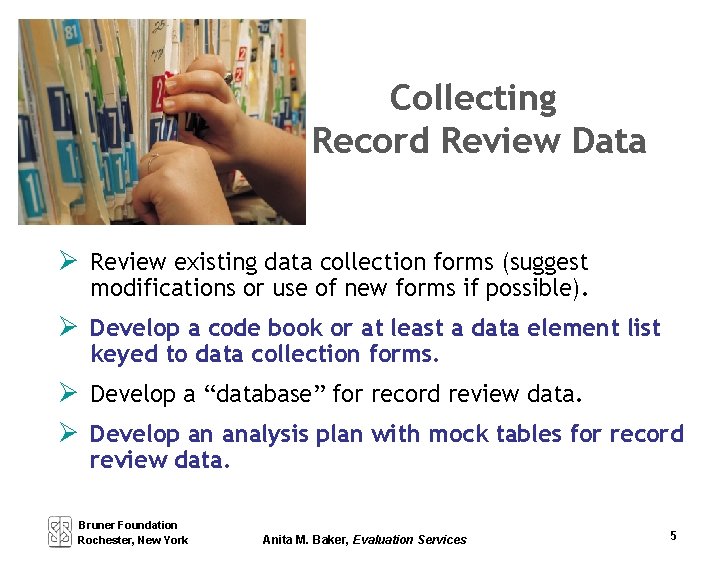

Collecting Record Review Data

• Review existing data collection forms (suggest modifications or use of new forms if possible).
• Develop a code book or at least a data element list keyed to data collection forms.
• Develop a “database” for record review data.
• Develop an analysis plan with mock tables for record review data.

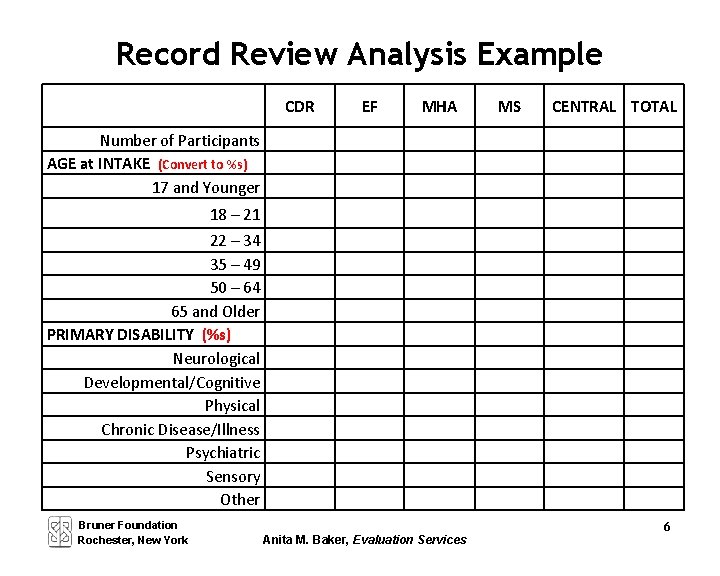

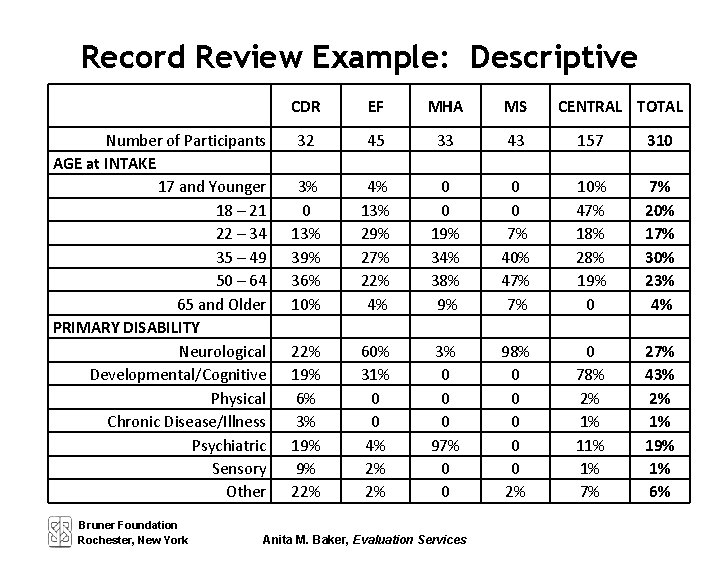

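Record Review Analysis Example

| | CDR | EF | MHA | MS | CENTRAL | TOTAL |
|---|---|---|---|---|---|---|
| Number of Participants | | | | | | |
| AGE at INTAKE (Convert to %s) | | | | | | |
| 17 and Younger | | | | | | |
| 18 – 21 | | | | | | |
| 22 – 34 | | | | | | |
| 35 – 49 | | | | | | |
| 50 – 64 | | | | | | |
| 65 and Older | | | | | | |
| PRIMARY DISABILITY (%s) | | | | | | |
| Neurological | | | | | | |
| Developmental/Cognitive | | | | | | |
| Physical | | | | | | |
| Chronic Disease/Illness | | | | | | |
| Psychiatric | | | | | | |
| Sensory | | | | | | |
| Other | | | | | | |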

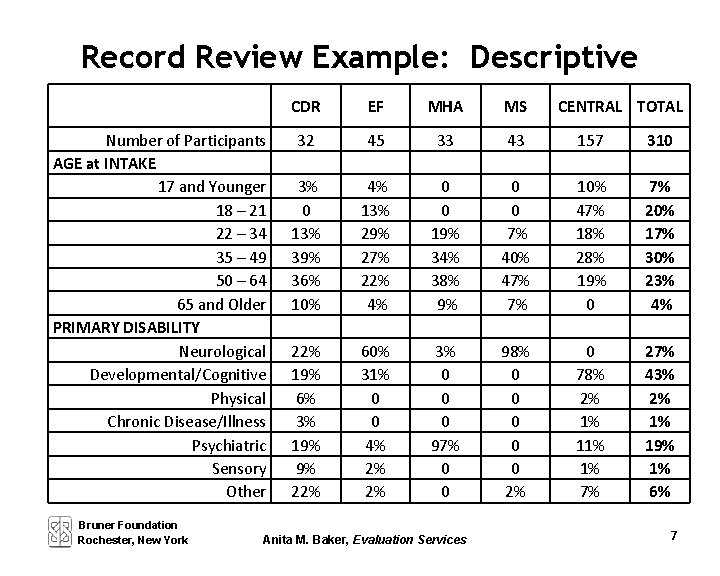

Record Review Example: Descriptive

| | CDR | EF | MHA | MS | CENTRAL | TOTAL |
|---|---|---|---|---|---|---|
| Number of Participants | 32 | 45 | 33 | 43 | 157 | 310 |
| AGE at INTAKE | | | | | | |
| 17 and Younger | 3% | 4% | 0 | 0 | 10% | 7% |
| 18 – 21 | 0 | 13% | 0 | 0 | 47% | 20% |
| 22 – 34 | 13% | 29% | 19% | 7% | 18% | 17% |
| 35 – 49 | 39% | 27% | 34% | 40% | 28% | 30% |
| 50 – 64 | 36% | 22% | 38% | 47% | 19% | 23% |
| 65 and Older | 10% | 4% | 9% | 7% | 0 | 4% |

| PRIMARY DISABILITY | CDR | EF | MHA |
|---|---|---|---|
| Neurological | 22% | 60% | 3% |
| Developmental/Cognitive | 19% | 31% | 0 |
| Physical | 6% | 0 | 0 |
| Chronic Disease/Illness | 3% | 0 | 0 |
| Psychiatric | 19% | 4% | 97% |
| Sensory | 9% | 2% | 0 |
| Other | 22% | 2% | 0 |

PRIMARY DISABILITY values for MS, CENTRAL and TOTAL (in source order; cells cannot be separated): 98% 0 0 0 2% 0 78% 2% 1% 1% 7% 27% 43% 2% 1% 19% 1% 6%

Record Review Example: Evaluative

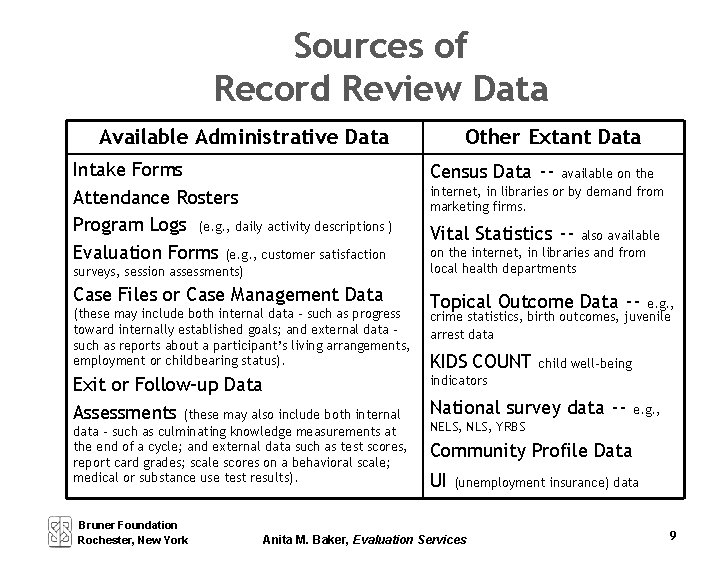

Sources of Record Review Data

Available Administrative Data
• Intake Forms
• Attendance Rosters
• Program Logs (e.g., daily activity descriptions)
• Evaluation Forms (e.g., customer satisfaction surveys, session assessments)
• Case Files or Case Management Data (these may include both internal data – such as progress toward internally established goals; and external data – such as reports about a participant’s living arrangements, employment or childbearing status).
• Exit or Follow-up Data
• Assessments (these may also include both internal data – such as culminating knowledge measurements at the end of a cycle; and external data such as test scores, report card grades; scale scores on a behavioral scale; medical or substance use test results).

Other Extant Data
• Census Data -- available on the internet, in libraries or by demand from marketing firms.
• Vital Statistics -- also available on the internet, in libraries and from local health departments
• Topical Outcome Data -- e.g., crime statistics, birth outcomes, juvenile arrest data
• KIDS COUNT child well-being indicators
• National survey data -- e.g., NELS, NLS, YRBS
• Community Profile Data
• UI (unemployment insurance) data

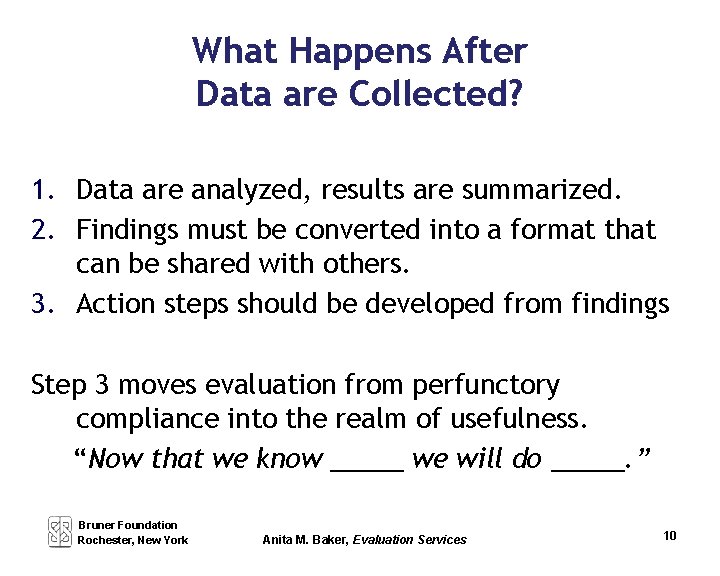

What Happens After Data are Collected?

1. Data are analyzed, results are summarized.
2. Findings must be converted into a format that can be shared with others.
3. Action steps should be developed from findings.

Step 3 moves evaluation from perfunctory compliance into the realm of usefulness.

“Now that we know _____ we will do _____.”

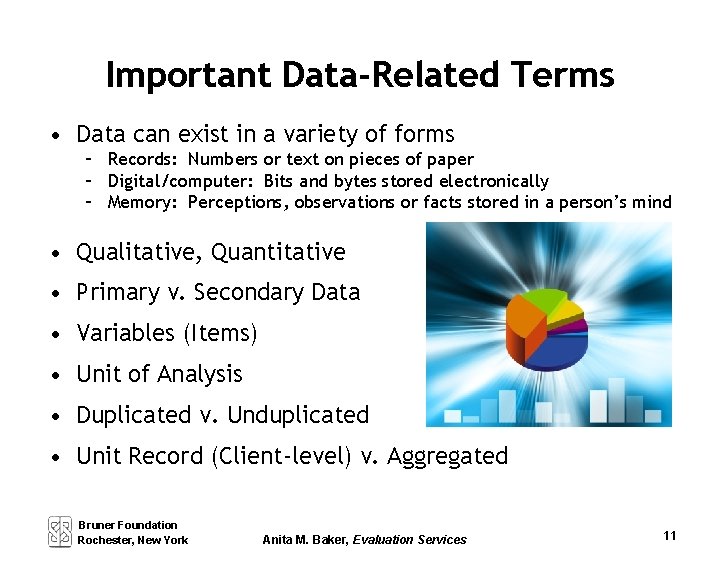

Important Data-Related Terms

• Data can exist in a variety of forms
  – Records: Numbers or text on pieces of paper
  – Digital/computer: Bits and bytes stored electronically
  – Memory: Perceptions, observations or facts stored in a person’s mind
• Qualitative, Quantitative
• Primary v. Secondary Data
• Variables (Items)
• Unit of Analysis
• Duplicated v. Unduplicated
• Unit Record (Client-level) v. Aggregated

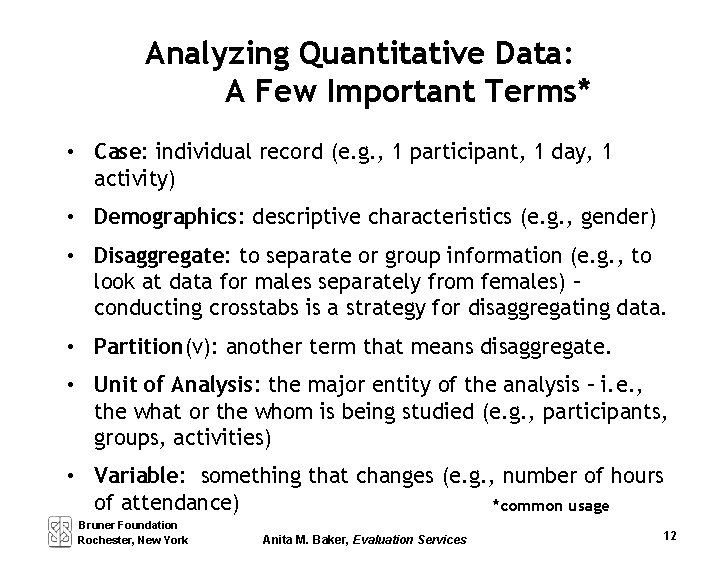

Analyzing Quantitative Data: A Few Important Terms*

• Case: individual record (e.g., 1 participant, 1 day, 1 activity)
• Demographics: descriptive characteristics (e.g., gender)
• Disaggregate: to separate or group information (e.g., to look at data for males separately from females) – conducting crosstabs is a strategy for disaggregating data.
• Partition (v): another term that means disaggregate.
• Unit of Analysis: the major entity of the analysis – i.e., the what or the whom is being studied (e.g., participants, groups, activities)
• Variable: something that changes (e.g., number of hours of attendance)

*common usage

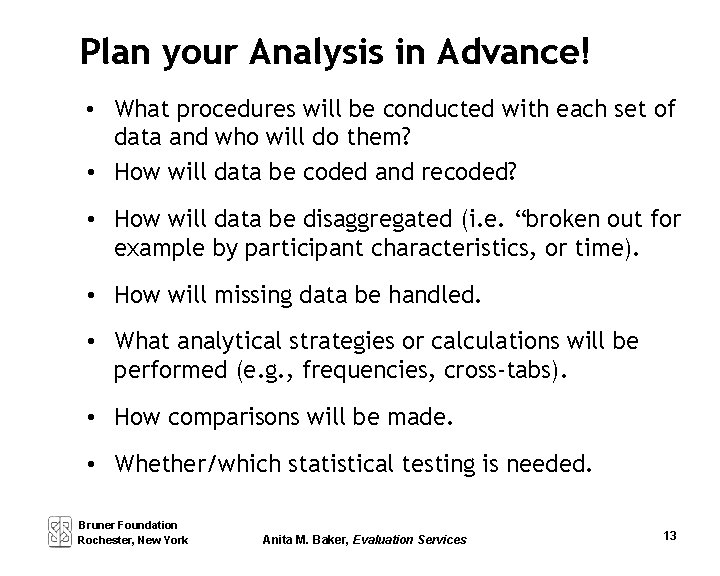

Plan your Analysis in Advance!

• What procedures will be conducted with each set of data and who will do them?
• How will data be coded and recoded?
• How will data be disaggregated (i.e., “broken out,” for example by participant characteristics or time)?
• How will missing data be handled?
• What analytical strategies or calculations will be performed (e.g., frequencies, cross-tabs)?
• How will comparisons be made?
• Is statistical testing needed and, if so, which tests?

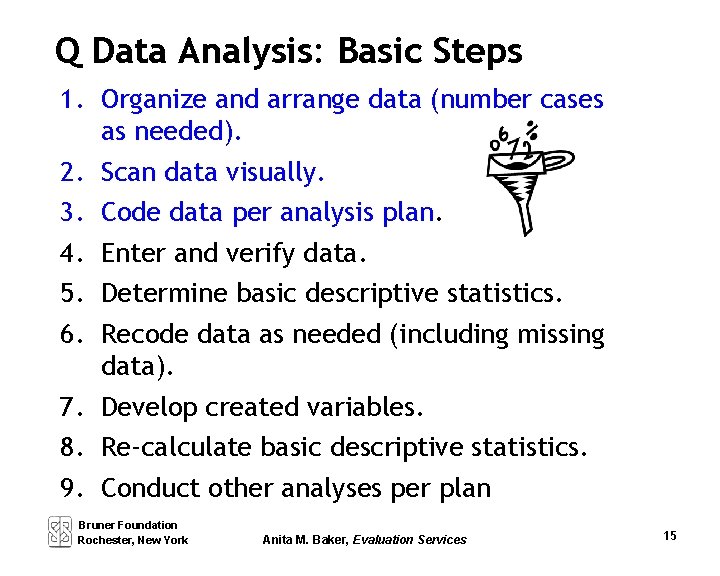

Q Data Analysis: Basic Steps

1. Organize and arrange data (number cases as needed).
2. Scan data visually.
3. Code data per analysis plan.
4. Enter and verify data.
5. Determine basic descriptive statistics.
6. Recode data as needed (including missing data).
7. Develop created variables.
8. Re-calculate basic descriptive statistics.
9. Conduct other analyses per plan.

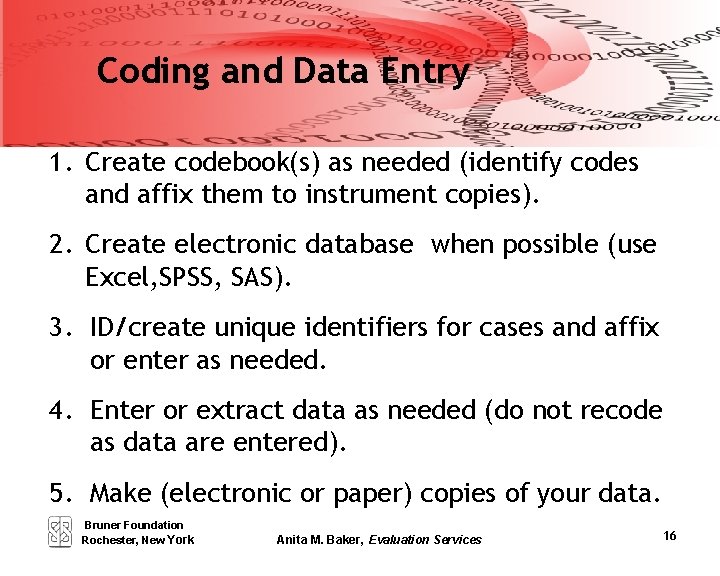

Coding and Data Entry

1. Create codebook(s) as needed (identify codes and affix them to instrument copies).
2. Create electronic database when possible (use Excel, SPSS, SAS).
3. ID/create unique identifiers for cases and affix or enter as needed.
4. Enter or extract data as needed (do not recode as data are entered).
5. Make (electronic or paper) copies of your data.
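The coding steps above can be sketched with a small codebook and a plain CSV file as the “electronic database.” Everything named here is hypothetical: the response labels, the numeric codes, the use of 9 as a missing-data code, and the question names `q1` and `q2` are assumptions for illustration only.

```python
import csv
import io

# Hypothetical codebook: response text -> numeric code.
CODEBOOK = {"Not at all": 0, "Some": 1, "A lot": 2}
MISSING = 9  # assumed convention for blank answers

def code_response(text):
    """Apply the codebook exactly as written; blanks get the missing code, no recoding."""
    if text is None or text.strip() == "":
        return MISSING
    return CODEBOOK[text.strip()]

# Three completed paper forms, in the order they were received.
raw_forms = [
    {"q1": "A lot", "q2": "Some"},
    {"q1": "", "q2": "Not at all"},
    {"q1": "Some", "q2": "A lot"},
]

# Assign a unique case ID to each form as it is entered.
rows = [
    {"case_id": case_id, "q1": code_response(f["q1"]), "q2": code_response(f["q2"])}
    for case_id, f in enumerate(raw_forms, start=1)
]

# Write the coded data as CSV text (a file on disk would work the same way).
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["case_id", "q1", "q2"])
writer.writeheader()
writer.writerows(rows)
print(buffer.getvalue())
```

Keeping the entered codes untouched and doing any recoding later, in a separate step, makes it possible to trace every value back to the original form by its case ID.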

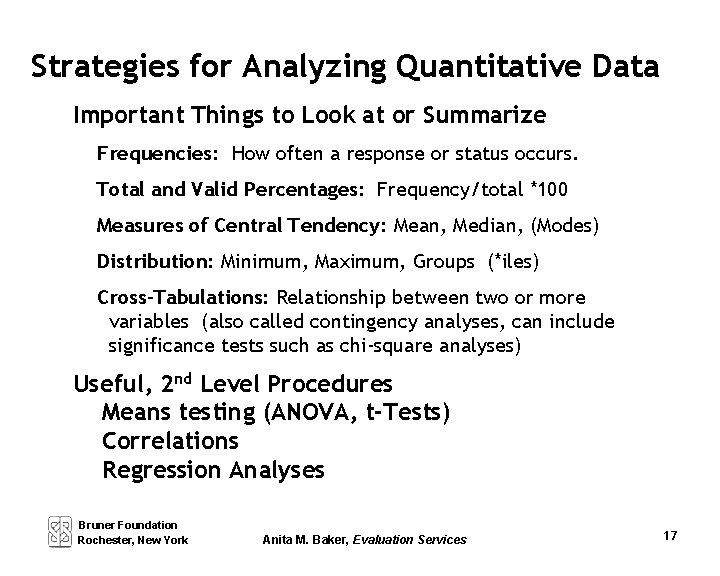

Strategies for Analyzing Quantitative Data

Important Things to Look at or Summarize
• Frequencies: How often a response or status occurs.
• Total and Valid Percentages: Frequency/total * 100
• Measures of Central Tendency: Mean, Median, (Modes)
• Distribution: Minimum, Maximum, Groups (*iles)
• Cross-Tabulations: Relationship between two or more variables (also called contingency analyses, can include significance tests such as chi-square analyses)

Useful, 2nd Level Procedures
• Means testing (ANOVA, t-Tests)
• Correlations
• Regression Analyses
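The first-level summaries listed above can be produced with very little tooling. The sketch below uses only the Python standard library on a made-up data set of six cases; the field names (`site`, `helped`, `hours`) and values are hypothetical. It treats a total percentage as based on all cases and a valid percentage as based only on cases with a non-missing answer, which is the common convention but is an assumption about what the slide intends.

```python
from collections import Counter
from statistics import mean, median

# Hypothetical unit-record data: one dictionary per case; None marks a missing answer.
records = [
    {"site": "A", "helped": "A lot", "hours": 10},
    {"site": "A", "helped": "Some", "hours": 4},
    {"site": "A", "helped": None, "hours": 2},
    {"site": "B", "helped": "A lot", "hours": 5},
    {"site": "B", "helped": "A lot", "hours": 4},
    {"site": "B", "helped": "Not at all", "hours": 5},
]

# Frequencies: how often each response occurs (missing answers excluded).
freq = Counter(r["helped"] for r in records if r["helped"] is not None)

# Total percentages use all cases; valid percentages use only answered cases.
total_n = len(records)
valid_n = sum(freq.values())
total_pct = {answer: 100 * count / total_n for answer, count in freq.items()}
valid_pct = {answer: 100 * count / valid_n for answer, count in freq.items()}

# Central tendency and distribution for a numeric variable.
hours = [r["hours"] for r in records]
summary = {"mean": mean(hours), "median": median(hours), "min": min(hours), "max": max(hours)}

# Cross-tabulation: counts for each (site, response) combination.
crosstab = Counter((r["site"], r["helped"]) for r in records if r["helped"] is not None)

print(freq, total_pct, valid_pct, summary, crosstab, sep="\n")
```

The second-level procedures on the slide (ANOVA, t-tests, correlations, regression) generally call for a statistics package and are not sketched here.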

Building Evaluation Capacity Session 5 Interviews, Observations, Analysis of Qualitative Data Anita M. Baker Evaluation Services Bruner Foundation Rochester, New York

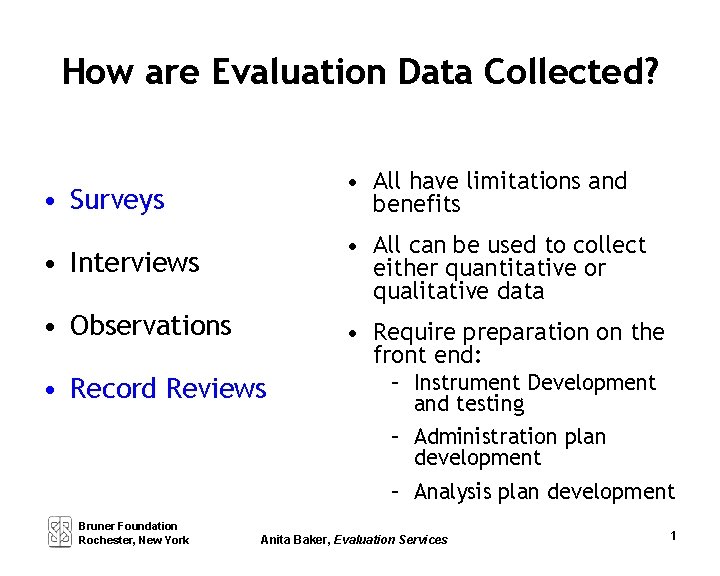

How are Evaluation Data Collected?

• Surveys
• Interviews
• Observations
• Record Reviews

• All have limitations and benefits
• All can be used to collect either quantitative or qualitative data
• Require preparation on the front end:
  – Instrument Development and testing
  – Administration plan development
  – Analysis plan development

Evaluation Data Collection Options

Qualitative Data / Quantitative Data

• Interviews: Conducting guided conversations with key people knowledgeable about a subject
• Focus Groups: Facilitating a discussion about a particular issue/question among people who share common characteristics
• Surveys: Administering a structured series of questions with discrete choices
• Observations: Documenting visible manifestations of behavior or characteristics of settings
• Record Review: Collecting and organizing data about a program or event and its participants from outside sources
• External Record Review: Utilizing quantitative data that can be obtained from existing sources

What are Qualitative Data?

Qualitative data come from surveys, interviews, observations and sometimes record reviews. They consist of:
– descriptions of situations, events, people, interactions, and observed behaviors;
– direct quotations and ratings from people about their experiences, attitudes, beliefs, thoughts or assessments;
– excerpts or entire passages from documents, correspondence, records, case histories, field notes.

Collecting and analyzing qualitative data permit study of selected issues in depth and detail and help to answer the “why questions.”

Qualitative data are just as valid as quantitative data!

Observations are conducted to view and hear actual program activities so that they can be described thoroughly and carefully.

• Observations can be focused on programs overall or participants in programs.
• Users of observation reports will know what has occurred and how it has occurred.
• Observation data are collected in the field, where the action is, as it happens.
• Instruments are called protocols, guides, sometimes checklists.

Use Observations:

• To document implementation.
• To witness levels of skill/ability, program practices, behaviors.
• To determine changes over time.

Trained Observers Can:

• See things that may escape awareness of others
• Learn about things that others may be unwilling or unable to talk about
• Move beyond the selective perceptions of others
• Present multiple perspectives

Other Advantages

• The observer’s knowledge and direct experience can be used as resources to aid in assessment
• Feelings of the observer become part of the observation data

OBSERVER’S REACTIONS are data, but they MUST BE KEPT SEPARATE

Let’s try it...

Methodological Decisions: Observations. What should be observed and how will you structure your protocol? (individual, event, setting, practice) How will you choose what to see? Will you ask for a “performance” or just attend a regular session, or both? Strive for “typicalness.”

Methodological Decisions: Observations. Will your presence be known, or unannounced? Who should know? How much will you disclose about the purpose of your observation? How much detail will you seek? (checklist vs. comprehensive) How long and how often will the observations be?

Conducting and Recording Observations: Before. Clarify the purpose for conducting the observation. Specify the methodological decisions you have made. Collect background information about the subject (if possible/necessary). Develop a specific protocol to guide your observation.

Conducting and Recording Observations: During. Use the protocol to guide your observation and record observation data. BE DESCRIPTIVE (keep observer impressions separate from descriptions of actual events). Inquire about the “typical-ness” of the session/event.

Conducting and Recording Observations: After. Review observation notes and make clarifications where necessary: – clarify abbreviations – elaborate on details – transcribe if feasible or appropriate. Evaluate results of the observation. Record whether: – the session went well – the focus was covered – there were any barriers to observation – there is a need for follow-up.

Observation Protocols. Comprehensive: • Setting • Beginning, ending and chronology of events • Interactions • Decisions • Nonverbal behaviors • Program activities and participant behaviors, response of participants. Checklist: “best” or expected practices.

Analyzing Observation Data. Make summary statements about trends in your observations, for example: “Every time we visited the program, the majority of the children were involved in a literacy development activity such as reading, illustrating a story they had read or written, or practicing reading aloud.” Include “snippets” or excerpts from field notes to illustrate summary points. Take a minute to read slide 14 in your notes!

Interviews. An interview is a one-sided conversation between an interviewer and a respondent. Questions are (mostly) pre-determined, but open-ended. Interviews can be structured or semi-structured. Respondents are expected to answer using their own terms. Interviews can be conducted in person, via phone, one-on-one or in groups. Focus groups are specialized group interviews. Instruments are called protocols, interview schedules or guides.

Use Interviews: To study attitudes and perceptions using respondent’s own language. To collect self-reported assessment of changes in response to program. To collect program assessments. To document program implementation. To determine changes over time.

Methodological Decisions: Interviews. What type of interview should you conduct? • Unstructured • Semi-structured • Structured • Intercept. What should you ask? How will you word and sequence the questions? What time frame will you use (past, present, future, mixed)?

Interviews: More About Methodological Decisions. How much detail will you seek, and how long will each interview be? Who are the respondents? (Is translation necessary?) How many interviews, on what schedule? Will the interviews be conducted in person, by phone, on or off site? Are group interviews possible/useful?

Conducting and Recording Interviews: Before. Clarify purpose for the interview. Specify answers to the methodological decisions. Select potential respondents (sampling). Collect background information about respondents. Develop a specific protocol to guide your interview (develop an abbreviation strategy for recording answers).

Conducting and Recording Interviews: During. Use the protocol (device) to record responses. Use probes and follow-up questions as necessary for depth and detail. Ask singular questions. Ask clear and truly open-ended questions.

Conducting and Recording Interviews: After. Review interview responses, clarify notes, decide about transcription. Record observations about the interview. Evaluate how it went and determine follow-up needs. Identify and summarize some key findings.

Tips for Effective Interviewing: Communicate clearly about what information is desired, why it’s important, what will happen to it. Remember to ask single questions and use clear and appropriate language. Avoid leading questions. Check (or summarize) occasionally. Let the respondent know how the interview is going, how much longer, etc. Understand the difference between a depth interview and an interrogation. Observe while interviewing. Practice Interviewing – Develop Your Skills!

More Tips: Recognize when the respondent is not clearly answering and press for a full response. Maintain control of the interview and neutrality toward the content of response. Treat the respondent with respect. (Don’t share your opinions or knowledge. Don’t interrupt unless the interview is out of hand.) Practice Interviewing – Develop Your Skills!

Analyzing Interview Data: 1) Read/review completed sets of interviews. 2) Record general summaries. 3) Where appropriate, encode responses. 4) Summarize coded data. 5) Pull quotes to illustrate findings.

Analyze interviews pp. 116 - 119

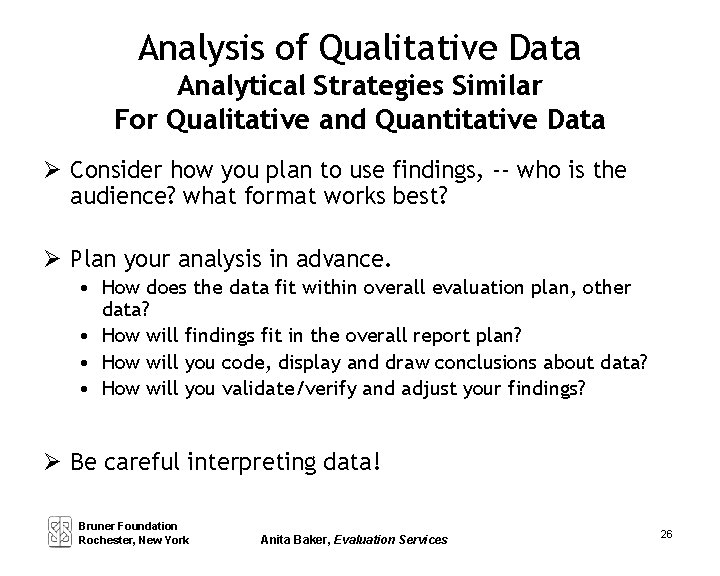

Analysis of Qualitative Data. Analytical strategies are similar for qualitative and quantitative data. Consider how you plan to use findings: Who is the audience? What format works best? Plan your analysis in advance. • How do the data fit within the overall evaluation plan and with other data? • How will findings fit in the overall report plan? • How will you code, display and draw conclusions about data? • How will you validate/verify and adjust your findings? Be careful interpreting data!

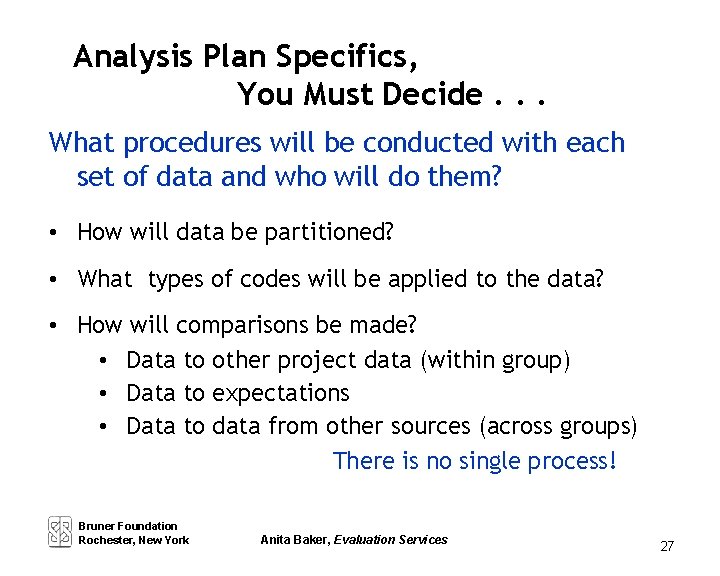

Analysis Plan Specifics: You Must Decide... What procedures will be conducted with each set of data and who will do them? • How will data be partitioned? • What types of codes will be applied to the data? • How will comparisons be made? – Data to other project data (within group) – Data to expectations – Data to data from other sources (across groups). There is no single process!

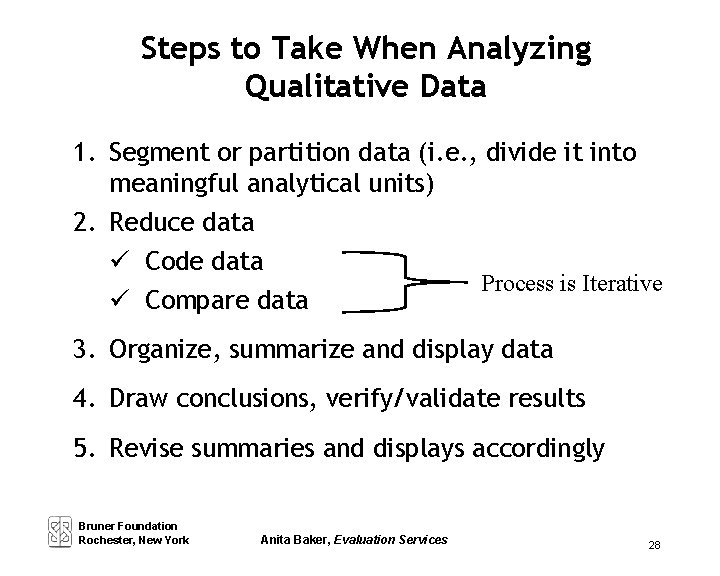

Steps to Take When Analyzing Qualitative Data: 1. Segment or partition data (i.e., divide it into meaningful analytical units). 2. Reduce data: – Code data – Compare data. 3. Organize, summarize and display data. 4. Draw conclusions, verify/validate results. 5. Revise summaries and displays accordingly. The process is iterative.

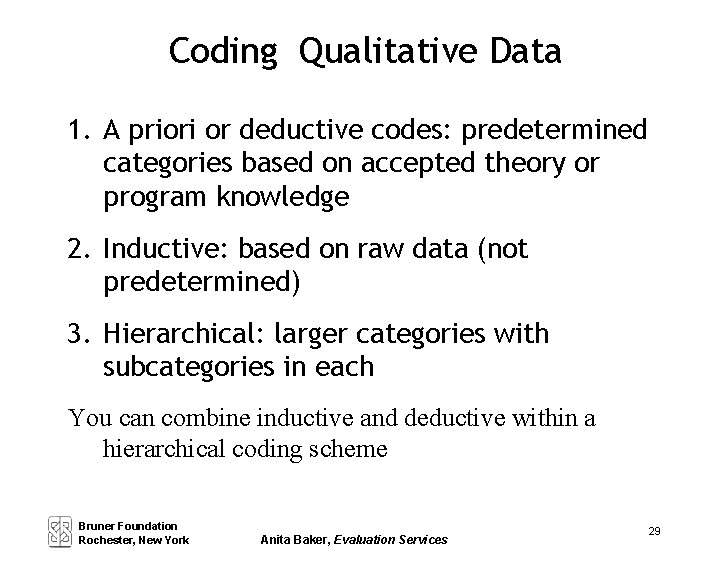

Coding Qualitative Data: 1. A priori or deductive codes: predetermined categories based on accepted theory or program knowledge. 2. Inductive codes: based on raw data (not predetermined). 3. Hierarchical codes: larger categories with subcategories in each. You can combine inductive and deductive codes within a hierarchical coding scheme.
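One way to picture a hierarchical scheme that mixes a priori and inductive codes is as a small tree in which every code records where it came from. The sketch below is only an illustration: the `Code` class, the `flatten` helper, and the code names (`program_benefits`, `skills_gained`, `new_friendships`) are hypothetical and do not come from the Guide.

```python
from dataclasses import dataclass, field


@dataclass
class Code:
    """One code in a hierarchical coding scheme (hypothetical structure)."""
    name: str
    origin: str  # "a_priori" (predetermined) or "inductive" (emerged from raw data)
    subcodes: list = field(default_factory=list)


def flatten(code, prefix=""):
    """Return (path, origin) pairs for a code and all of its subcodes."""
    path = f"{prefix}/{code.name}" if prefix else code.name
    rows = [(path, code.origin)]
    for sub in code.subcodes:
        rows.extend(flatten(sub, path))
    return rows


# Hypothetical example: one larger a priori category with two subcategories,
# one predetermined and one that emerged while reading the interviews.
codebook = Code("program_benefits", "a_priori", [
    Code("skills_gained", "a_priori"),
    Code("new_friendships", "inductive"),
])

master_list = flatten(codebook)
for path, origin in master_list:
    print(f"{path:40s} {origin}")
```

Flattening the tree gives a simple master list in which a priori and inductive codes stay distinguishable.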

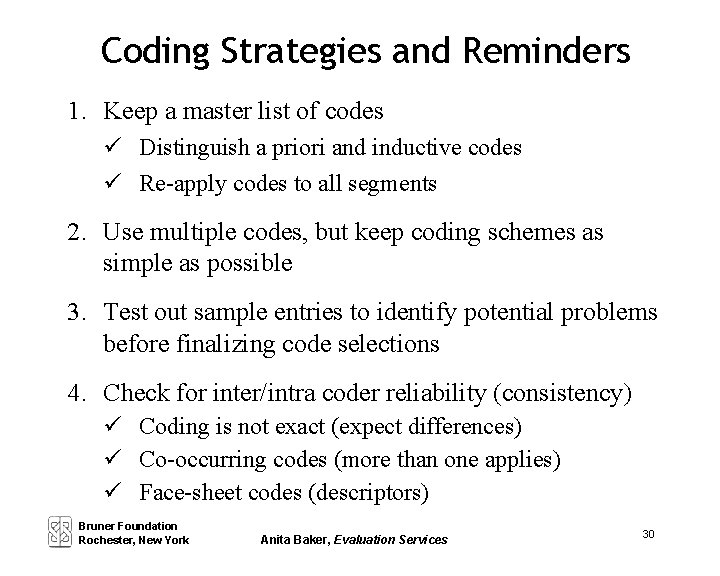

Coding Strategies and Reminders. Strategies: 1. Keep a master list of codes: – Distinguish a priori and inductive codes – Re-apply codes to all segments. 2. Use multiple codes, but keep coding schemes as simple as possible. 3. Test out sample entries to identify potential problems before finalizing code selections. 4. Check for inter/intra-coder reliability (consistency). Reminders: – Coding is not exact (expect differences) – Co-occurring codes (more than one applies) – Face-sheet codes (descriptors).
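The slide does not say how to check inter-coder consistency. As one possible approach, the sketch below computes simple percent agreement and Cohen's kappa (a standard chance-corrected agreement statistic, chosen here as an assumption rather than taken from the Guide) for two coders who each assigned one code to the same six segments. The coder data are invented for illustration.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of segments to which both coders assigned the same code."""
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Agreement corrected for the agreement expected by chance."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a = Counter(coder_a)
    counts_b = Counter(coder_b)
    # Chance agreement: product of each coder's marginal proportions per code.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two coders to the same six interview segments.
coder_a = ["positive", "positive", "negative", "neutral", "positive", "negative"]
coder_b = ["positive", "neutral", "negative", "neutral", "positive", "positive"]

agreement = percent_agreement(coder_a, coder_b)
kappa = cohens_kappa(coder_a, coder_b)
print(f"Percent agreement: {agreement:.2f}")
print(f"Cohen's kappa:     {kappa:.2f}")
```

Because coding is not exact, some disagreement is expected; the segments where coders differ are usually the ones worth discussing before finalizing code definitions.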

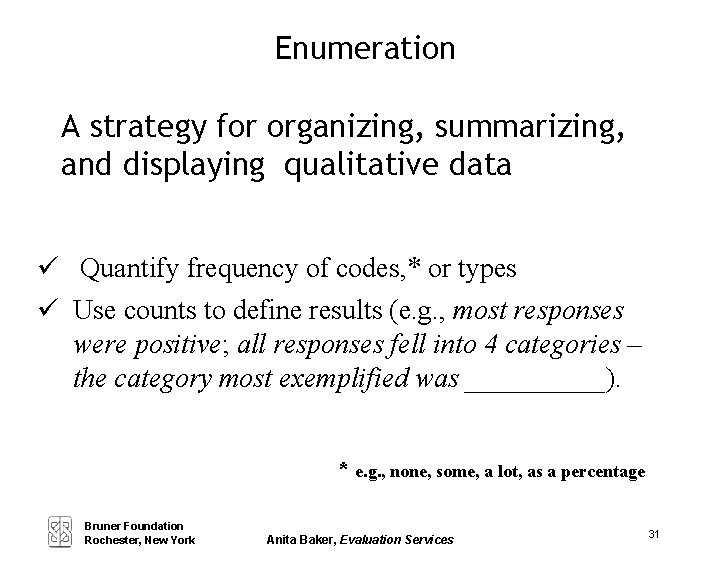

Enumeration: a strategy for organizing, summarizing, and displaying qualitative data. Quantify frequency of codes* or types. Use counts to define results (e.g., most responses were positive; all responses fell into 4 categories – the category most exemplified was _____). * e.g., none, some, a lot, as a percentage.
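Enumeration amounts to counting how often each code appears and reporting the counts or percentages. A minimal sketch, assuming each response has already been assigned a single code (the responses below are invented for illustration):

```python
from collections import Counter


def enumerate_codes(codes):
    """Return (code, count, percent) tuples, most frequent code first."""
    counts = Counter(codes)
    total = len(codes)
    return [(code, n, round(100 * n / total, 1))
            for code, n in counts.most_common()]


# Hypothetical codes applied to eight open-ended survey responses.
coded_responses = [
    "positive", "positive", "neutral", "positive",
    "negative", "positive", "neutral", "positive",
]

summary = enumerate_codes(coded_responses)
for code, n, pct in summary:
    print(f"{code:10s} {n:2d}  {pct:5.1f}%")

top_code = summary[0][0]
print(f"All responses fell into {len(summary)} categories; "
      f"the category most exemplified was '{top_code}'.")
```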

Building Evaluation Capacity, Session 6. Putting It All Together: Projecting Level of Effort and Cost (Stakeholders, Reporting, Evaluative Thinking). Anita M. Baker, Evaluation Services. Bruner Foundation, Rochester, New York

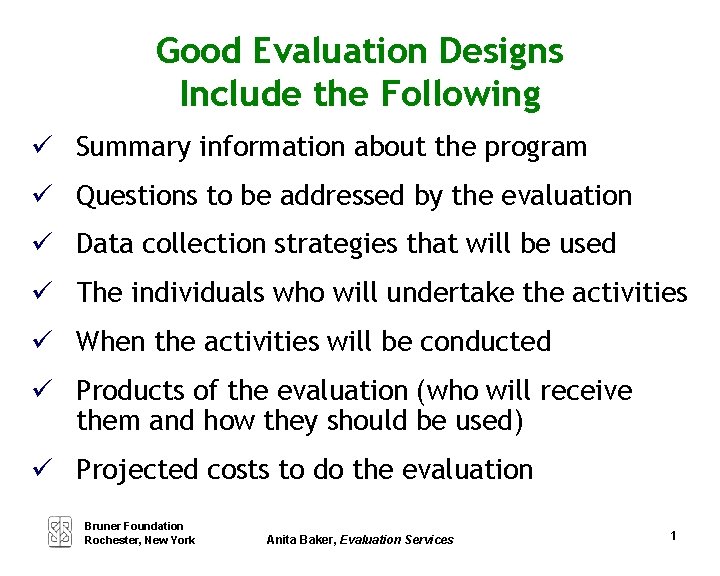

Good Evaluation Designs Include the Following: • Summary information about the program • Questions to be addressed by the evaluation • Data collection strategies that will be used • The individuals who will undertake the activities • When the activities will be conducted • Products of the evaluation (who will receive them and how they should be used) • Projected costs to do the evaluation

Increasing Rigor in Program Evaluation: • Mixed methodologies • Multiple sources of data • Multiple points in time

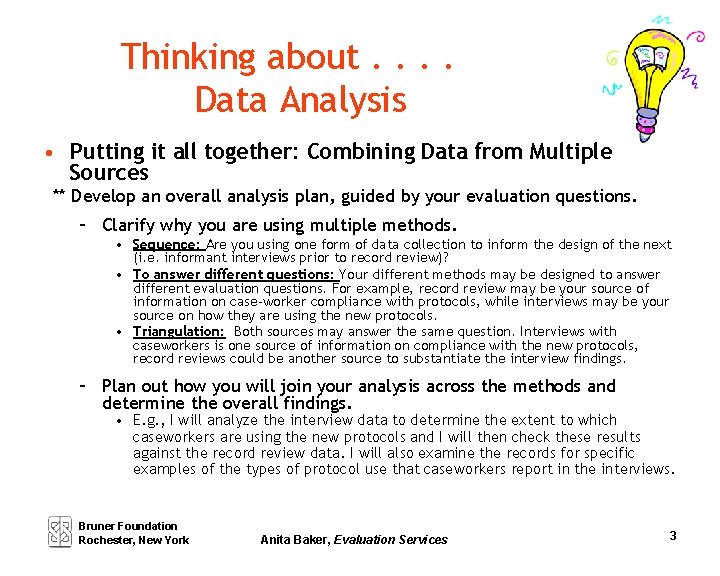

Thinking About Data Analysis. Putting it all together: combining data from multiple sources. Develop an overall analysis plan, guided by your evaluation questions. – Clarify why you are using multiple methods. • Sequence: Are you using one form of data collection to inform the design of the next (i.e., informant interviews prior to record review)? • To answer different questions: Your different methods may be designed to answer different evaluation questions. For example, record review may be your source of information on caseworker compliance with protocols, while interviews may be your source on how they are using the new protocols. • Triangulation: Both sources may answer the same question. Interviews with caseworkers are one source of information on compliance with the new protocols; record reviews could be another source to substantiate the interview findings. – Plan out how you will join your analysis across the methods and determine the overall findings. • E.g., I will analyze the interview data to determine the extent to which caseworkers are using the new protocols and I will then check these results against the record review data. I will also examine the records for specific examples of the types of protocol use that caseworkers report in the interviews.
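The triangulation step described above (checking interview results against record review data) can be sketched as a comparison of two sources keyed by the same unit. Everything in this example is hypothetical: the caseworker IDs, the yes/no compliance values, and the `triangulate` helper are illustrations, not part of the Guide.

```python
def triangulate(source_a, source_b):
    """Compare two sources on the units they share.

    Returns (agree, disagree): lists of unit IDs where the sources
    match and where they conflict.
    """
    shared = sorted(set(source_a) & set(source_b))
    agree = [unit for unit in shared if source_a[unit] == source_b[unit]]
    disagree = [unit for unit in shared if source_a[unit] != source_b[unit]]
    return agree, disagree


# Hypothetical data: does each caseworker use the new protocols?
interview_reports = {"cw01": True, "cw02": True, "cw03": False, "cw04": True}
record_review = {"cw01": True, "cw02": False, "cw03": False, "cw04": True}

agree, disagree = triangulate(interview_reports, record_review)
print("Sources agree for:   ", agree)
print("Follow up on cases:  ", disagree)
```

Cases where the sources conflict are not automatically errors; they mark where to look in the records for specific examples or where to ask follow-up questions.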

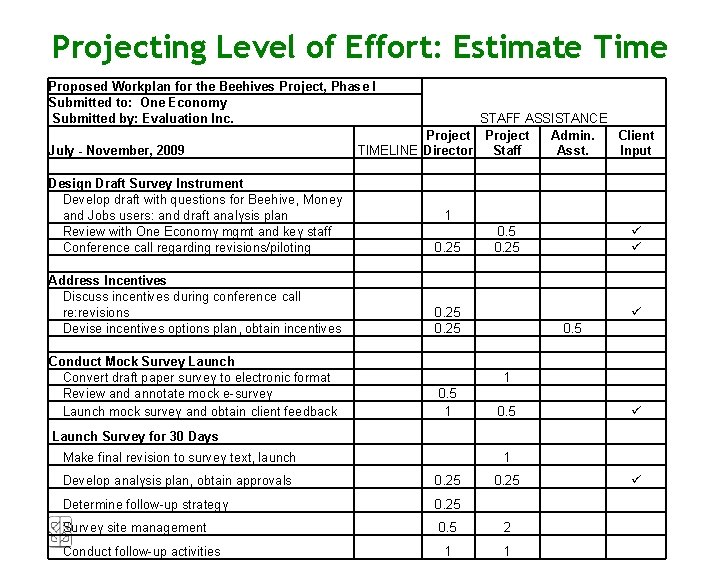

Projecting Level of Effort. LOE projections are often summarized in a table or spreadsheet. To estimate labor and time: • List all evaluation tasks • Determine who will conduct each task • Estimate time required to complete each task in day or half-day increments (see page 77 in your manual).
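The three steps above map naturally onto a small table of (task, person, days) rows that can be totaled per person. The sketch below uses made-up tasks and day estimates purely for illustration; they are not the figures from the manual or from any actual workplan.

```python
from collections import defaultdict


def total_days_by_person(tasks):
    """Sum estimated days for each person across all tasks."""
    totals = defaultdict(float)
    for _task, person, days in tasks:
        totals[person] += days
    return dict(totals)


# Hypothetical level-of-effort rows: (task, who conducts it, estimated days).
tasks = [
    ("Draft survey instrument", "Project Director", 1.0),
    ("Draft survey instrument", "Staff", 0.5),
    ("Convert survey to electronic format", "Admin. Asst.", 0.5),
    ("Survey site management", "Staff", 2.0),
]

totals = total_days_by_person(tasks)
for person, days in totals.items():
    print(f"{person:18s} {days:4.1f} days")
print(f"{'Total':18s} {sum(totals.values()):4.1f} days")
```

The same rows could be kept in a spreadsheet; the point is that each task carries both a person and a time estimate so that totals per person fall out directly.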

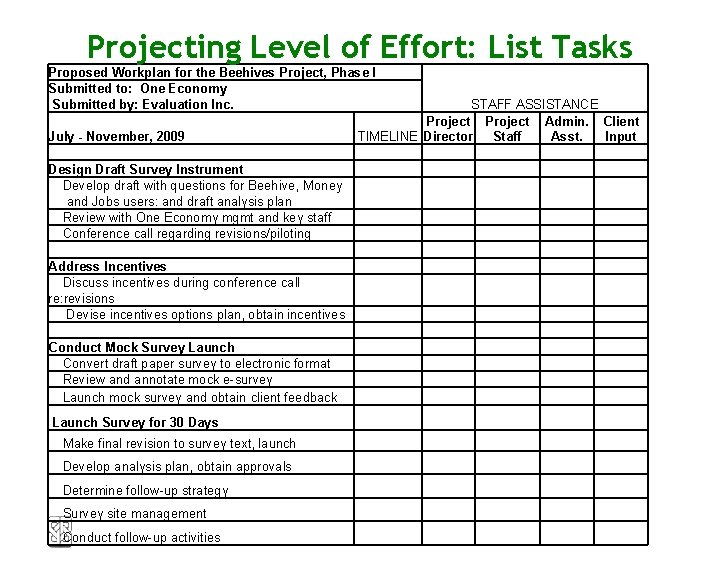

Projecting Level of Effort: List Tasks
Proposed Workplan for the Beehives Project, Phase I. Submitted to: One Economy. Submitted by: Evaluation Inc. July - November, 2009
Column headings: STAFF ASSISTANCE (Project Director, Staff, Admin. Asst., Client Input) and TIMELINE
Design Draft Survey Instrument
– Develop draft with questions for Beehive, Money and Jobs users, and draft analysis plan
– Review with One Economy mgmt and key staff
– Conference call regarding revisions/piloting
Address Incentives
– Discuss incentives during conference call re: revisions
– Devise incentives options plan, obtain incentives
Conduct Mock Survey Launch
– Convert draft paper survey to electronic format
– Review and annotate mock e-survey
– Launch mock survey and obtain client feedback
Launch Survey for 30 Days
– Make final revision to survey text, launch
– Develop analysis plan, obtain approvals
– Determine follow-up strategy
– Survey site management
– Conduct follow-up activities

Projecting Level of Effort: Estimate Time. Proposed Workplan for the Beehives Project, Phase I. Submitted to: One Economy. Submitted by: Evaluation Inc. July - November, 2009. Column headings: STAFF ASSISTANCE (Project Director, Staff, Admin. Asst., Client Input) and TIMELINE. Design Draft Survey Instrument Develop draft with questions for Beehive, Money and Jobs users, and draft analysis plan Review with One Economy mgmt and key staff Conference call regarding revisions/piloting 0.25 Address Incentives Discuss incentives during conference call re: revisions Devise incentives options plan, obtain incentives 0.25 Conduct Mock Survey Launch Convert draft paper survey to electronic format Review and annotate mock e-survey Launch mock survey and obtain client feedback 1 0.5 0.25 0.5 1 0.5 Launch Survey for 30 Days 1 Make final revision to survey text, launch Develop analysis plan, obtain approvals 0.25 Determine follow-up strategy 0.25 Survey site management 0.5 2 1 1 Conduct follow-up activities 0.25

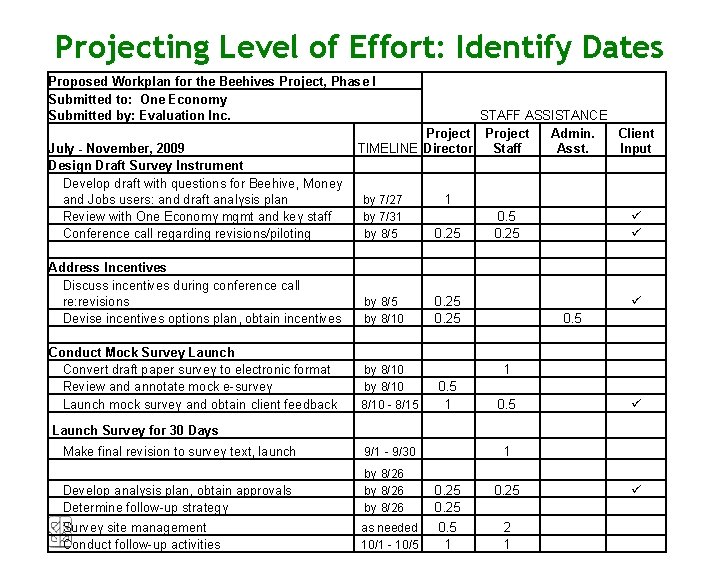

Projecting Timelines. Timelines can be constructed separately or embedded in an LOE chart (see example pp. 77-78). To project timelines: • Assign dates to your level of effort, working backward from overall timeline requirements. • Be sure the number of days required for a task and when it must be completed are in sync and feasible. • Check to make sure the evaluation calendar is in alignment with the program calendar: – Don’t plan to do a lot of data collecting around program holidays – Don’t expect to collect data only between 9 and 5
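Working backward from a final deadline can be sketched with simple date arithmetic: the last task ends on the deadline, and each earlier task must finish the day before the next one starts. The task names, durations, and dates below are hypothetical, and the sketch counts calendar days for simplicity; a real plan would also account for weekends, holidays, and the program calendar.

```python
from datetime import date, timedelta


def schedule_backward(tasks, final_deadline):
    """Assign (start, due) dates to ordered tasks, working back from the deadline.

    tasks: list of (name, calendar_days_required) in the order they are done.
    Returns a list of (name, start, due) in the same order.
    """
    schedule = []
    due = final_deadline
    for name, days in reversed(tasks):
        start = due - timedelta(days=days - 1)
        schedule.append((name, start, due))
        due = start - timedelta(days=1)  # the prior task must end the day before
    schedule.reverse()
    return schedule


def is_feasible(schedule, project_start):
    """True if the first task does not need to start before the project does."""
    return schedule[0][1] >= project_start


# Hypothetical tasks and durations in calendar days.
tasks = [("Draft survey", 5), ("Pilot survey", 3), ("Launch survey", 30)]
schedule = schedule_backward(tasks, final_deadline=date(2009, 9, 30))

for name, start, due in schedule:
    print(f"{name:15s} {start.isoformat()} to {due.isoformat()}")
print("Feasible:", is_feasible(schedule, project_start=date(2009, 7, 1)))
```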

Projecting Level of Effort: Identify Dates. Proposed Workplan for the Beehives Project, Phase I. Submitted to: One Economy. Submitted by: Evaluation Inc. July - November, 2009. Design Draft Survey Instrument Develop draft with questions for Beehive, Money and Jobs users, and draft analysis plan Review with One Economy mgmt and key staff Conference call regarding revisions/piloting Address Incentives Discuss incentives during conference call re: revisions Devise incentives options plan, obtain incentives Conduct Mock Survey Launch Convert draft paper survey to electronic format Review and annotate mock e-survey Launch mock survey and obtain client feedback. Column headings: STAFF ASSISTANCE (Project Director, Staff, Admin. Asst., Client Input) and TIMELINE. 1 by 7/27 by 7/31 by 8/5 0.25 by 8/10 8/10 - 8/15 0.25 0.5 1 0.5 Launch Survey for 30 Days Make final revision to survey text, launch 9/1 - 9/30 Develop analysis plan, obtain approvals Determine follow-up strategy by 8/26 Survey site management Conduct follow-up activities as needed 10/1 - 10/5 1 0.25 0.5 1 2 1

Clearly Identify Audience, Decide on Format. Who is your audience? Staff? Funders? Board? Participants? Multiple? What presentation strategies work best? • PowerPoint • Newsletter • Fact sheet • Oral presentation • Visual displays • Video • Storytelling • Press releases • Report: full report, executive summary, stakeholder-specific report?

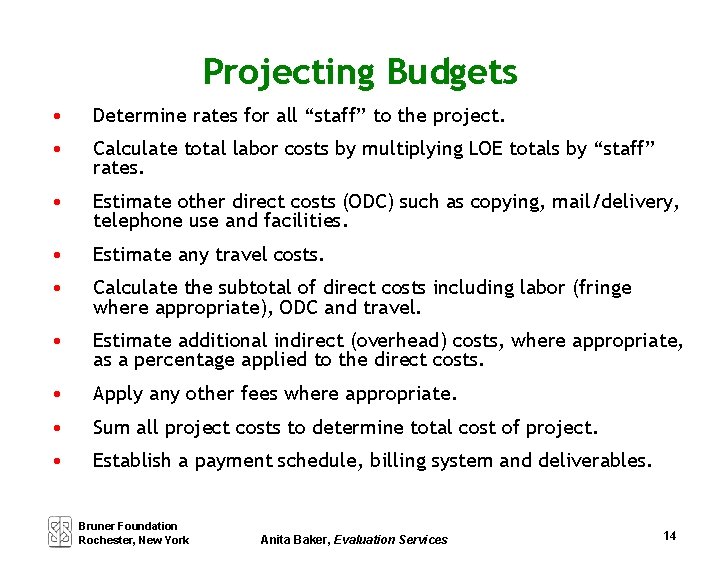

Think About Communication Strategies. Are there natural opportunities for sharing (preliminary) findings with stakeholders? • At a special convening • At regular or pre-planned meetings • During regular work interactions (e.g., clinical supervision, staff meetings, board meetings) • Via informal discussions