Building Cyberinfrastructure into a University Culture EDUCAUSE Live

Building Cyberinfrastructure into a University Culture
EDUCAUSE Live! March 30, 2010
Curt Hillegas, Director, TIGRESS HPC Center, Princeton University

Context
As a world-renowned research university, Princeton seeks to achieve the highest levels of distinction in the discovery and transmission of knowledge and understanding. At the same time, Princeton is distinctive among research universities in its commitment to undergraduate teaching.
www.Princeton.EDU

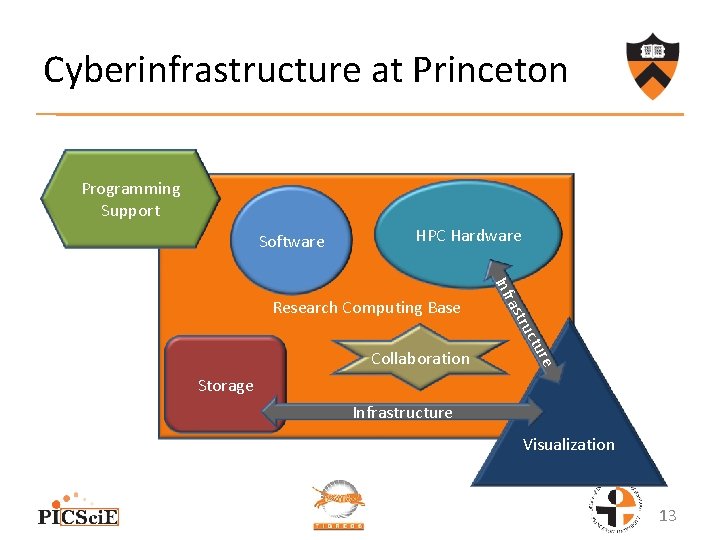

Cyberinfrastructure consists of computational systems, data and information management, advanced instruments, visualization environments, and people, all linked together by software and advanced networks to improve scholarly productivity and enable knowledge breakthroughs and discoveries not otherwise possible.
Source: Developing a Coherent Cyberinfrastructure from Local Campus to National Facilities: Challenges and Strategies. A Workshop Report and Recommendations

Contents
• Yesterday
• Tomorrow
• Lessons

2002 - The Beginning
• OIT, Academic Services
  – Computational Science and Engineering Support group (CSES)
• The Princeton Institute for Computational Science and Engineering (PICSciE)
• Research Computing Advisory Group (RCAG)

Trust
• AdrOIT – small Beowulf cluster
• Princeton Software Repository
• Maintenance of old systems
• Elders image – rebuilt RHEL distribution

Relationships
• Hire new faculty
• New PICSciE Director, Prof. Jerry Ostriker
• 1st annual RCAG Presentation
• Wow!!! Let’s buy something big together
  – Collaborative selection process
  – Cobble together funding

Partnerships
• 64 processor SGI Altix BX2, Hecate
• 1024 node IBM Blue Gene/L, Orangena
  – Faculty
  – OIT
  – Development
  – Facilities
• Collaborative administration (without fees)
• Housed in central Data Center (without fees)
• 256 node Dell Beowulf cluster, Della
• HPC Steering Committee

Storage
• Hire a pair of junior faculty
  – 192 node Beowulf cluster including startup
• 35 TB
• Fees to recover 50% of capital cost
• 10% utilization within the first 6 months
• No fees!!!
• 95% utilization within 4 months

Hierarchical Storage Management
• 96 node SGI Altix ICE, Artemis
• RCAG
  – Need a scalable storage system that provides appropriate performance and availability for aging data
• OIT proposal to Provost’s office
• 1 PB total
  – IBM DS4800, GPFS HSM, TSM HSM
  – Added benefit: free backups!!!
• All systems have high performance access

Success Brings New Challenges
• Senior faculty hire
• 448 node Dell cluster
• New scheduling policies
• (Supercomputing|Scientific Computing) Administrators Meeting – SCAM
• DataSpace
• New PICSciE Director – Prof. Jeroen Tromp

Visualization
• Visualization Expert
• Sony SRX-S110 Projector
  – 8,847,360 pixels (4096 x 2160)
  – Rear projection ultra wide angle fabric screen
  – 9’ 3” (H) x 16’ 6” (W)
• Open Source and Proprietary software
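The pixel count on this slide follows directly from the quoted resolution. A minimal check, with variable names chosen here purely for illustration:

```python
# Resolution quoted on the slide for the Sony SRX-S110 projector.
width_px = 4096
height_px = 2160

# Total pixel count is simply width times height.
total_pixels = width_px * height_px

print(f"{total_pixels:,} pixels")  # 8,847,360 pixels
```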

Cyberinfrastructure at Princeton
[Diagram labels: Programming Support, Software, HPC Hardware, Collaboration, Research Computing Base, Storage, Infrastructure, Visualization]

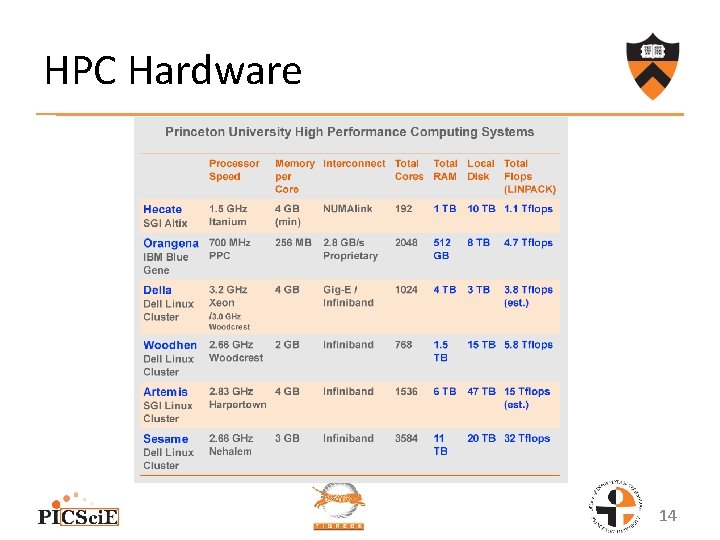

HPC Hardware

Collaboration
• PICSciE
• RCAG
• TIGRESS Steering Committee
• TIGRESS Users
• SCAM
• PLUG

Future
• New Data Center
• Coordinated supervision of departmental scientific/Linux system administrators
• Collaboration with the Library
• Lifecycle management
• New technologies
  – GPGPU
  – POWER7
  – x86_64 based single image
• Participation in Virtual Organizations

Lessons Learned
• Research is driven by the faculty
• Trust, relationships, and partnerships are essential to success
• Research computing relies on the complete cyberinfrastructure of the University
• Avoid fees
• Change is a constant
• Start small, do things well, and growth will follow
• It’s all about the people

- Slides: 17