Bucketing Using MXNet

Cyrus M. Vahid, Principal Solutions Architect @ AWS Deep Learning, cyrusmv@amazon.com, June 2017
© 2017, Amazon Web Services, Inc. or its Affiliates. All rights reserved.

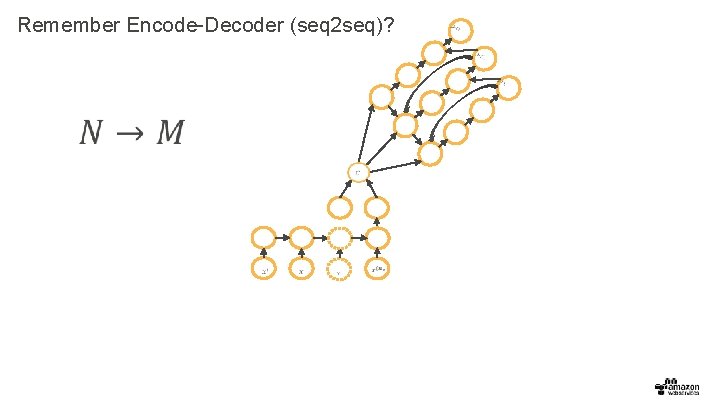

Remember Encoder-Decoder (seq2seq)?

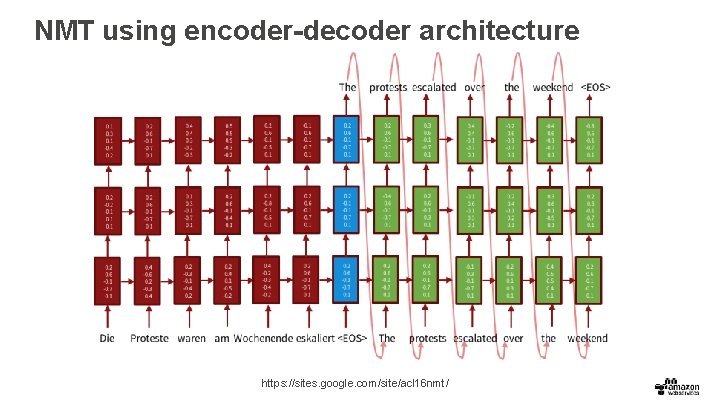

NMT using encoder-decoder architecture: https://sites.google.com/site/acl16nmt/

Word Embedding

English-German sentence pairs of varying length; each `-` marks a padding position.

| English | German | Lengths |
|---|---|---|
| As we were told the story , we were vexed . | Als uns die Geschichte erzählt wurde , waren wir grau . | 11, 10 |
| This is a cat . - - - | Das ist eine Katze . - - - | 5, 5 |
| Lucy used to be the oldest known human-like creature . - | Lucy war früher die älteste bekannte menschliche Kreatur . | 10, 10 |
| My uncle bought me a watch . - - | Mein Onkel hat mir eine Uhr gekauft . - - | 6, 7 |
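Padding sentences to a common length can be sketched in a few lines of Python. This is a minimal sketch, assuming whitespace tokenisation and a hypothetical `pad_batch` helper; it pads every sentence to the longest one in the batch, so its padding counts need not match the dashes drawn on the slide.

```python
# Hypothetical padding token; the slide draws padding positions as "-".
PAD = "-"


def pad_batch(sentences, pad=PAD):
    """Right-pad whitespace-tokenised sentences to the longest one in the batch."""
    tokenised = [s.split() for s in sentences]
    max_len = max(len(t) for t in tokenised)
    return [t + [pad] * (max_len - len(t)) for t in tokenised]


batch = [
    "This is a cat .",
    "My uncle bought me a watch .",
    "Lucy used to be the oldest known human-like creature .",
]
for row in pad_batch(batch):
    print(" ".join(row))
```

Every row comes out with the same number of tokens, which is what lets the batch be stored as one dense array.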

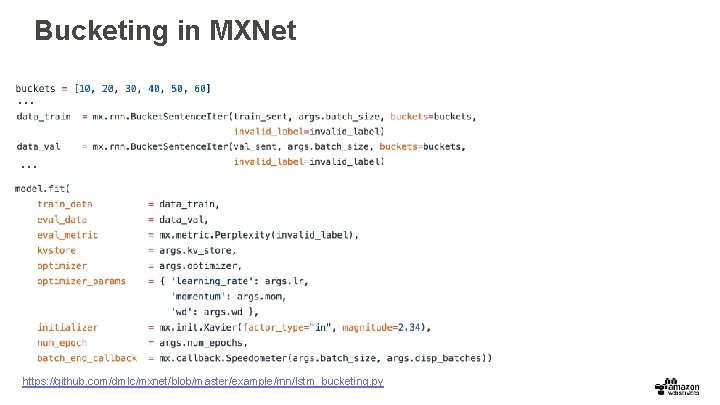

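Bucketing and Padding
• To avoid building a separate model for every combination of source length n and target length m, use bucketing and padding.
• A naïve approach is to build one model for the longest sequence and pad every sentence to that length. Short sentences then consist mostly of padding, which results in sparse inputs with large memory requirements.
• Instead, bucketing groups sequences of similar length into buckets and draws each mini-batch from a single bucket, padding it only up to that bucket's length. We can then unroll one instance of the network per bucket size, with all instances sharing the same parameters.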
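The bucketing idea can be sketched in plain Python. This is a minimal sketch, assuming hypothetical helpers `choose_bucket`, `bucketize`, `make_batches` and `count_padding`, a made-up `<pad>` token, and bucket sizes 5, 10 and 20 chosen only for illustration. For brevity it buckets on a single sequence length; a seq2seq model would bucket on the pair (n, m). It compares the padding the naïve approach needs with the padding needed when each sequence is only padded up to its bucket's size.

```python
from collections import defaultdict

PAD = "<pad>"  # hypothetical padding token


def choose_bucket(length, buckets):
    """Return the smallest bucket size that can hold a sequence of `length` tokens."""
    for size in sorted(buckets):
        if length <= size:
            return size
    raise ValueError(f"sequence of length {length} exceeds the largest bucket")


def bucketize(sequences, buckets, pad=PAD):
    """Group token lists by bucket and pad each one only up to its bucket size."""
    grouped = defaultdict(list)
    for seq in sequences:
        size = choose_bucket(len(seq), buckets)
        grouped[size].append(seq + [pad] * (size - len(seq)))
    return dict(grouped)


def make_batches(grouped, batch_size):
    """Yield (bucket_size, mini_batch) pairs; every mini-batch comes from one bucket."""
    for size, seqs in sorted(grouped.items()):
        for i in range(0, len(seqs), batch_size):
            yield size, seqs[i:i + batch_size]


def count_padding(batches):
    """Count padding tokens across all mini-batches."""
    return sum(tok == PAD for _, batch in batches for seq in batch for tok in seq)


lengths = [3, 4, 9, 10, 5, 20]
sequences = [["w"] * n for n in lengths]
grouped = bucketize(sequences, buckets=[5, 10, 20])
batches = list(make_batches(grouped, batch_size=2))

# Naive approach: pad everything to the longest sequence.
naive_padding = sum(max(lengths) - n for n in lengths)
print(naive_padding, count_padding(batches))  # 69 4
```

With these illustrative lengths, padding everything to 20 tokens adds 69 padding tokens, while bucketing adds only 4.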

Bucketing in MXNet
… … …
https://github.com/dmlc/mxnet/blob/master/example/rnn/lstm_bucketing.py
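In MXNet, `BucketingModule` takes a `sym_gen` callback that builds the unrolled network for a given bucket key, and the unrolled networks share one set of parameters. The sketch below imitates that idea in plain NumPy rather than calling MXNet: the local `sym_gen` function, the parameter names and the sizes are assumptions for illustration, and the cell is a vanilla tanh RNN instead of the LSTM used in `lstm_bucketing.py`.

```python
import numpy as np

rng = np.random.default_rng(0)
HIDDEN, EMBED = 8, 4  # illustrative sizes

# One parameter set shared by every unrolled instance (all buckets).
params = {
    "W_xh": rng.standard_normal((EMBED, HIDDEN)) * 0.1,
    "W_hh": rng.standard_normal((HIDDEN, HIDDEN)) * 0.1,
    "b_h": np.zeros(HIDDEN),
}


def sym_gen(bucket_key):
    """Return a forward function that unrolls a vanilla RNN for `bucket_key` steps.

    Loosely mirrors the role of the sym_gen callback passed to MXNet's
    BucketingModule; this is plain NumPy, not the MXNet API.
    """
    def forward(x):  # x: (batch, bucket_key, EMBED)
        assert x.shape[1] == bucket_key, "batch must be padded to the bucket size"
        h = np.zeros((x.shape[0], HIDDEN))
        for t in range(bucket_key):  # explicit unroll over the bucket's time steps
            h = np.tanh(x[:, t] @ params["W_xh"] + h @ params["W_hh"] + params["b_h"])
        return h
    return forward


# Build one unrolled instance per bucket size; all of them read the same `params`.
unrolled = {key: sym_gen(key) for key in (5, 10, 20)}

batch = rng.standard_normal((2, 10, EMBED))  # a mini-batch from the 10-token bucket
final_state = unrolled[10](batch)
print(final_state.shape)  # (2, 8)
```

A real model would also mask the padded time steps so they do not affect the final state; this sketch leaves masking out.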

Thank you!
Cyrus M. Vahid, cyrusmv@amazon.com

- Slides: 7