BTH’s Research in NV, NFV and Cloud Networking

GENI Nordic Meeting, Stockholm, 2014-09-15
Kurt Tutschku (kurt.tutschku@bth.se)
With Patrik Arlos, Anders Carlsson, Dragos Ilie and Markus Fiedler
Blekinge Institute of Technology (BTH), Faculty of Computing, Department of Communication Systems (DIKO)

Capacities at BTH
• Blekinge Institute of Technology
– 7200 students; 500 staff
– emphasis on applied information technology and innovation for sustainable growth in industry and society
– strong industrial environment in the communication industry, both legacy (Ericsson, Telenor Sverige) and startup (CompuVerde, Hyper Island, City Network, …)
– network: 1 Gbit/s; upgrade to full 10 Gbit/s (in 2015)

Capacities at BTH’s DIKO Department
• Department of Communication Systems (DIKO)
– focus on future network/FI architectures and technologies, Quality of Experience, Cloud computing, performance evaluation, wireless communications, Internet of Things and security
– currently four professors, four senior lecturers, two university adjuncts and 10 PhD students
• Current and past involvements in Future Internet projects
– Current FI projects: XIFI (eXperimental Infrastructures for the Future Internet, EU), Queen (EU, Celtic plus), ETSI’s Industry Specification Group (ISG) for Network Function Virtualization (NFV), FI-PPP FI-STAR (EU), ENGENSEC (EU), BTH’s CloudLab
– Selected past contributions to FI projects: Akari (J), G-Lab (Ger), Mevico (Celtic, EU), PlanetLab Europe, Future Internet Assembly, FI-PPP setup (AT representative)

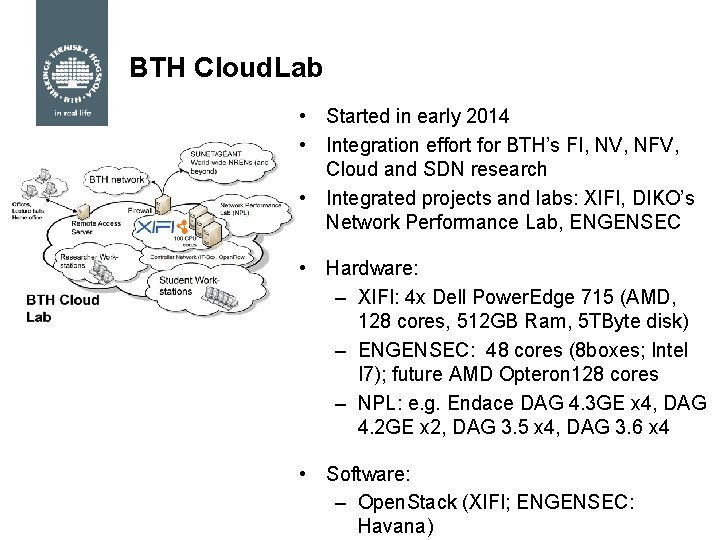

BTH CloudLab
• Started in early 2014
• Integration effort for BTH’s FI, NV, NFV, Cloud and SDN research
• Integrated projects and labs: XIFI, DIKO’s Network Performance Lab (NPL), ENGENSEC
• Hardware:
– XIFI: 4 x Dell PowerEdge 715 (AMD, 128 cores, 512 GB RAM, 5 TByte disk)
– ENGENSEC: 48 cores (8 boxes; Intel i7); future AMD Opteron 128 cores
– NPL: e.g. Endace DAG 4.3GE x 4, DAG 4.2GE x 2, DAG 3.5 x 4, DAG 3.6 x 4
• Software:
– OpenStack (XIFI; ENGENSEC: Havana)

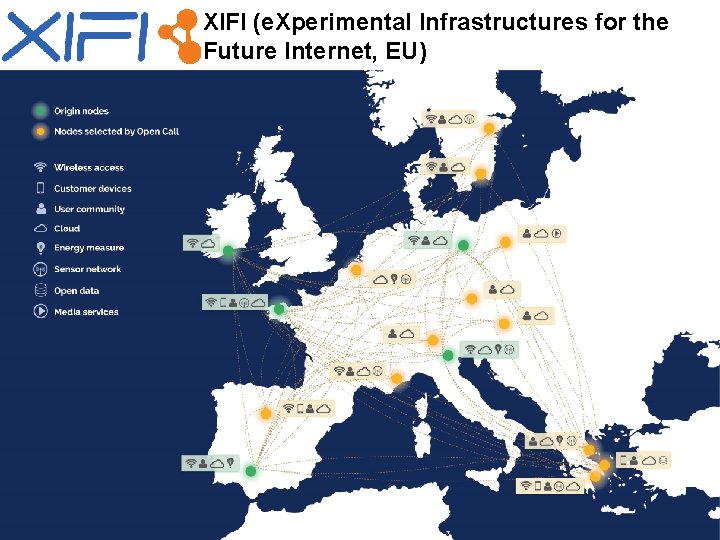

XIFI (eXperimental Infrastructures for the Future Internet, EU)

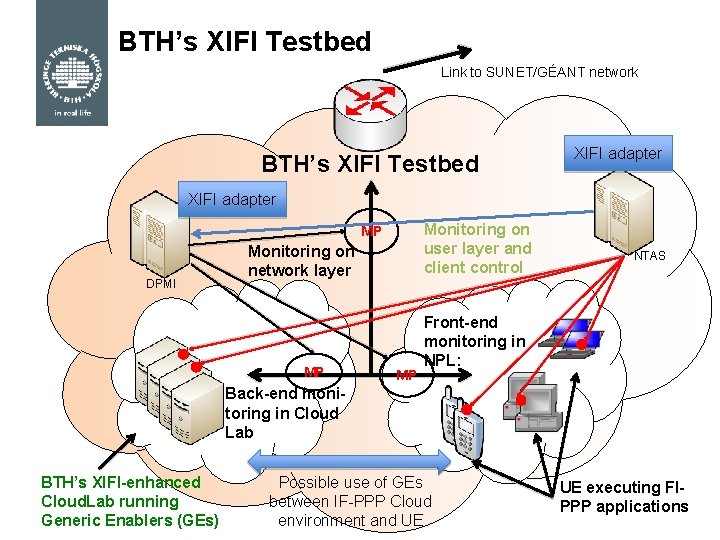

BTH’s XIFI Testbed
[Figure: BTH’s XIFI testbed. Labels: link to SUNET/GÉANT network; XIFI adapter; monitoring on user layer and client control; MPs with DPMI for monitoring on network layer; NTAS; front-end monitoring in NPL; back-end monitoring in CloudLab; BTH’s XIFI-enhanced CloudLab running Generic Enablers (GEs); possible use of GEs between FI-PPP cloud environment and UE; UE executing FI-PPP applications]

Educating the Next generation experts in Cyber Security (ENGENSEC)
• Objective: create a new Master’s program in IT Security in response to current and emerging cybersecurity threats by educating next-generation experts
• Funding organization: EU Tempus program
• Number of participants: 21
• Participating countries: Sweden (coordinator), Poland, Latvia, Greece, Germany, Ukraine, Russia
• Project activities:
– Defining the framework of the joint Master’s program
– Cloud-based security lab development
– Development of the joint course curriculum
– Develop new and further develop existing courses
– Teacher training
– Effective quality control and project management
– Dissemination of the benefits of the new Master’s program
– Give pilot courses in a summer school
– Prepare for participating Universities to

Direct Involvement of BTH in FI-PPP
• FI-STAR = one out of five Call-2 FI-PPP use cases
• BTH’s role
– Major Swedish participant (with significant labs)
– Requirement engineering (co-chair of FI-STAR WP 1)
– Validation (co-chair of FI-STAR WP 6)
  • Functional testing
  • Quality of Service (QoS) measurements
  • Quality of Experience (QoE) assessment
  • Health Technology Assessment
• BTH’s work is strongly focused on Generic Enablers (GE) and their performance
Synergy with XIFI: hosting would provide full control and unique QoS measurement facilities

A Very Brief View on Network Function Virtualization (NFV)
Kurt Tutschku
Blekinge Institute of Technology (BTH), Faculty of Computing, Department of Communication Systems (DIKO)

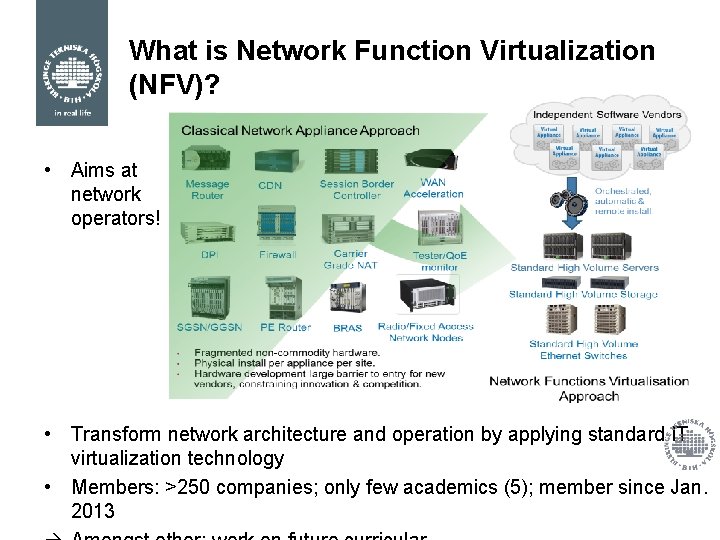

What is Network Function Virtualization (NFV)?
• Aimed at network operators!
• Transforms network architecture and operation by applying standard IT virtualization technology
• Members: >250 companies; only a few academic members (5); member since Jan. 2013

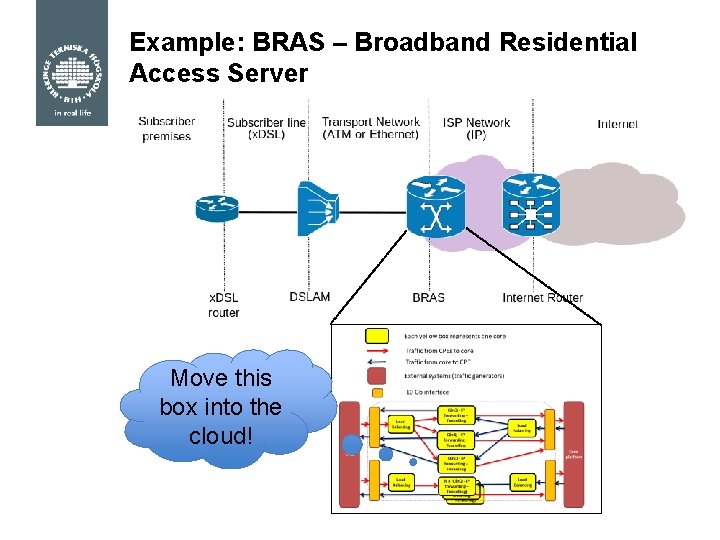

Example: BRAS – Broadband Remote Access Server
Move this box into the cloud!

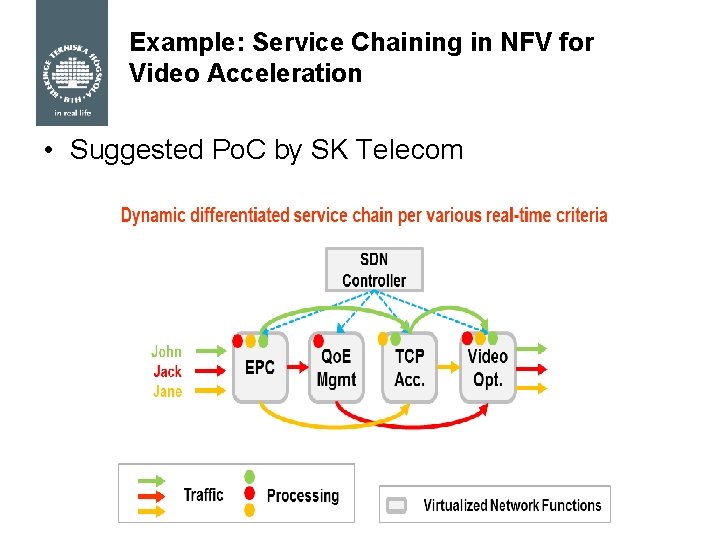

Example: Service Chaining in NFV for Video Acceleration
• Suggested PoC by SK Telecom

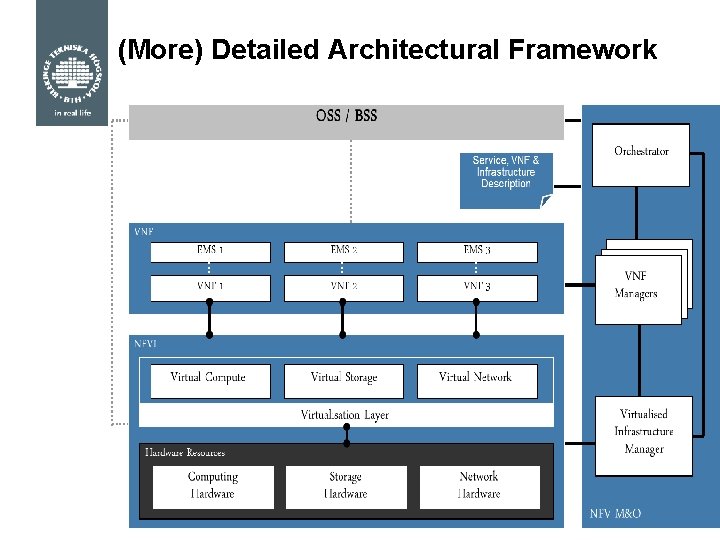

(More) Detailed Architectural Framework

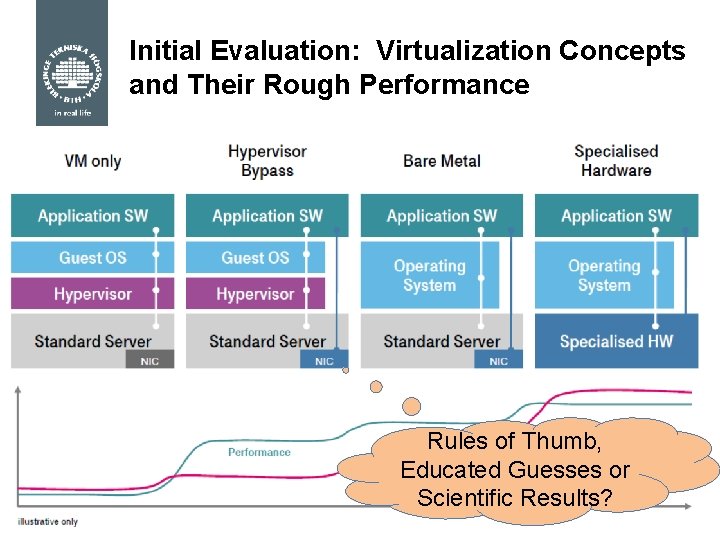

Initial Evaluation: Virtualization Concepts and Their Rough Performance
Rules of Thumb, Educated Guesses or Scientific Results?

A Metric for Isolation and Transparency of Virtual Elements
Kurt Tutschku
Blekinge Institute of Technology (BTH), Faculty of Computing, Department of Communication Systems (DIKO)
With acknowledgements to the definitions and descriptions of M. Fiedler (BTH) and D. Stezenbach (University of Vienna)

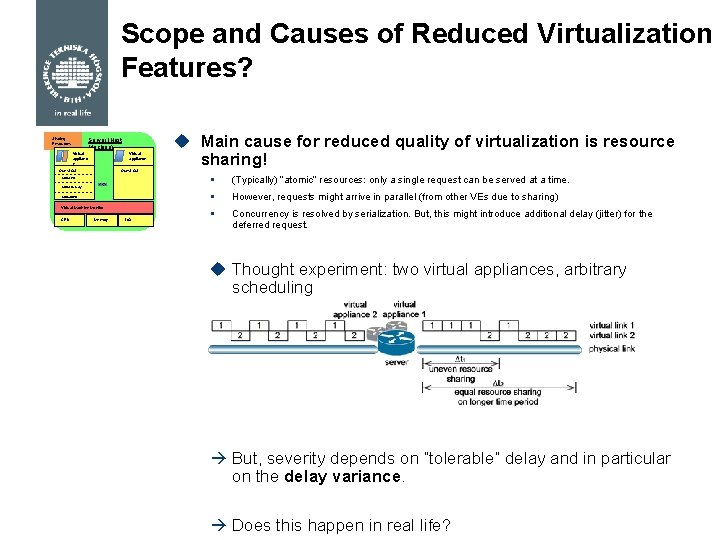

Scope and Causes of Reduced Virtualization Features?
[Figure: Sharing resources. A server (host machine) runs virtual appliances; each virtual appliance sits on a guest OS in a virtual machine with virtual CPU, virtual memory and virtual I/O; the virtual machine monitor maps these onto the physical CPU, memory and I/O]
• Main cause for reduced quality of virtualization is resource sharing!
– Resources are (typically) “atomic”: only a single request can be served at a time.
– However, requests might arrive in parallel (from other virtual elements (VEs) due to sharing).
– Concurrency is resolved by serialization. But this might introduce additional delay (jitter) for the deferred request.
• Thought experiment: two virtual appliances, arbitrary scheduling
But severity depends on the “tolerable” delay and in particular on the delay variance.
Does this happen in real life?
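The thought experiment can be sketched as a small simulation. This is an illustrative model only, not the set-up used in the talk: the appliance names, the periodic arrival pattern, the service time and the first-come-first-served rule are all assumptions chosen to show how serialization on an atomic resource turns a constant delay into a variable one.

```python
import random
import statistics

def serialize(arrivals, service_time):
    """Serve requests one at a time in arrival order (assumed FCFS).

    arrivals: list of (arrival_time, appliance_id) tuples.
    Returns {appliance_id: [delay, ...]}, where delay is completion time
    minus arrival time, so it includes waiting behind other requests.
    """
    delays = {}
    free_at = 0.0  # time at which the atomic resource becomes idle again
    for t, appliance in sorted(arrivals):
        start = max(t, free_at)  # deferred if the resource is still busy
        free_at = start + service_time
        delays.setdefault(appliance, []).append(free_at - t)
    return delays

def periodic(appliance, period, count):
    """Hypothetical appliance issuing one request every `period` time units."""
    return [(i * period, appliance) for i in range(count)]

SERVICE_TIME = 2.0  # assumed time the resource needs per request

# Appliance A alone: every request sees exactly the service time.
alone = serialize(periodic("A", 10.0, 1000), SERVICE_TIME)

# Appliance A sharing the resource with B, which arrives at arbitrary times.
rng = random.Random(1)
b_arrivals = [(rng.uniform(0.0, 10000.0), "B") for _ in range(1000)]
shared = serialize(periodic("A", 10.0, 1000) + b_arrivals, SERVICE_TIME)

jitter_alone = statistics.pstdev(alone["A"])    # delay variation without sharing
jitter_shared = statistics.pstdev(shared["A"])  # delay variation with sharing
```

Without sharing, appliance A's delay is constant, so its standard deviation is zero. With sharing, some of A's requests wait behind B's, and the delay variation becomes non-zero even though A's own behaviour has not changed.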

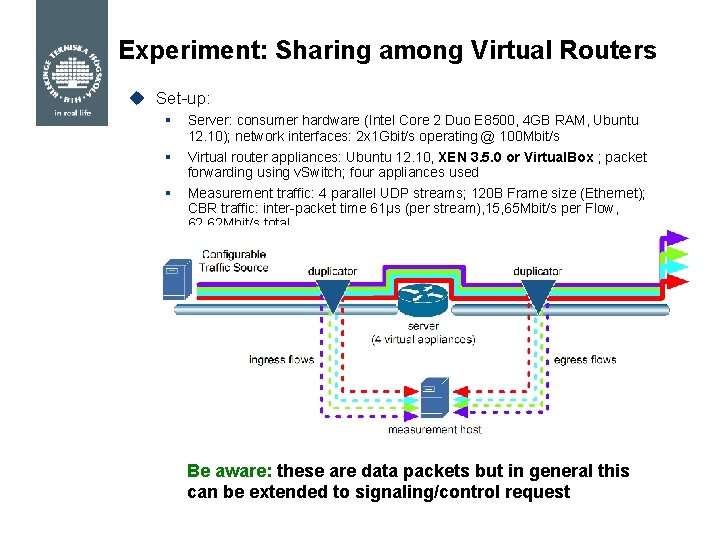

Experiment: Sharing among Virtual Routers
• Set-up:
– Server: consumer hardware (Intel Core 2 Duo E8500, 4 GB RAM, Ubuntu 12.10); network interfaces: 2 x 1 Gbit/s operating at 100 Mbit/s
– Virtual router appliances: Ubuntu 12.10, Xen 3.5.0 or VirtualBox; packet forwarding using vSwitch; four appliances used
– Measurement traffic: 4 parallel UDP streams; 120 B frame size (Ethernet); CBR traffic: inter-packet time 61 µs (per stream), 15.65 Mbit/s per flow, 62.62 Mbit/s total
Be aware: these are data packets, but in general this can be extended to signaling/control requests
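The traffic figures in the set-up can be cross-checked with a few lines of arithmetic. The sketch below assumes the rate is counted over the 120 B frame only, without preamble or inter-frame gap; the function names are illustrative. Under that assumption, 61 µs gives about 15.74 Mbit/s per flow, while the stated 15.65 Mbit/s corresponds to a gap of about 61.3 µs, so the 61 µs on the slide is presumably a rounded value.

```python
FRAME_BYTES = 120  # Ethernet frame size from the set-up
FLOWS = 4          # parallel UDP streams

def rate_mbit_s(frame_bytes, gap_us):
    """CBR rate in Mbit/s for one frame every gap_us microseconds.

    bits per microsecond is numerically equal to Mbit/s.
    """
    return frame_bytes * 8 / gap_us

def gap_us_for_rate(frame_bytes, mbit_s):
    """Inter-packet time in microseconds implied by a given CBR rate."""
    return frame_bytes * 8 / mbit_s

per_flow = rate_mbit_s(FRAME_BYTES, 61.0)            # rate at exactly 61 µs
total = FLOWS * per_flow                             # all four streams together
implied_gap = gap_us_for_rate(FRAME_BYTES, 15.65)    # gap implied by 15.65 Mbit/s

print(f"per flow: {per_flow:.2f} Mbit/s, total: {total:.2f} Mbit/s")
print(f"gap implied by 15.65 Mbit/s: {implied_gap:.2f} µs")
```

Either way, the aggregate load stays well below the 100 Mbit/s at which the interfaces operate.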

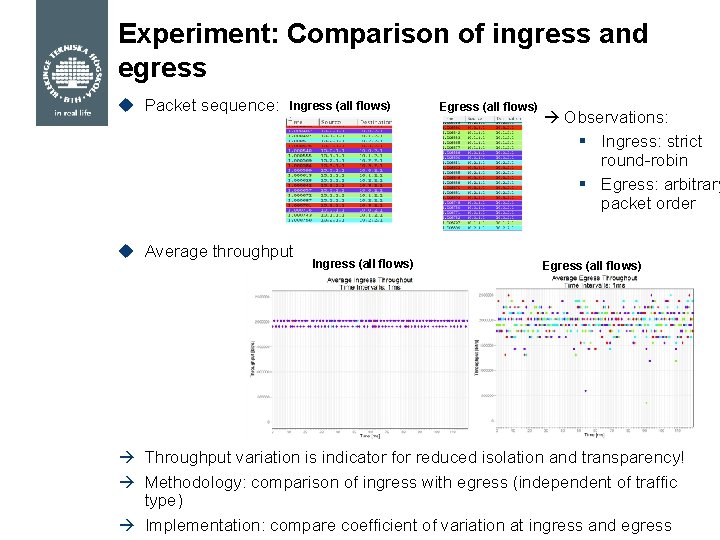

Experiment: Comparison of Ingress and Egress
[Figure panels: packet sequence at ingress (all flows) and at egress (all flows); average throughput at ingress (all flows) and at egress (all flows)]
Observations:
– Ingress: strict round-robin
– Egress: arbitrary packet order
Throughput variation is an indicator for reduced isolation and transparency!
Methodology: comparison of ingress with egress (independent of traffic type)
Implementation: compare coefficient of variation at ingress and egress
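A minimal sketch of the comparison step, assuming throughput has already been sampled per time window at both measurement points. The sample values are made up purely for illustration and are not measurement results, and expressing the comparison as a difference of the two coefficients is an assumption; the slides only state that the coefficients are compared.

```python
import statistics

def coefficient_of_variation(samples):
    """Standard deviation divided by mean (dimensionless)."""
    mean = statistics.fmean(samples)
    if mean == 0:
        raise ValueError("mean throughput is zero; CoV is undefined")
    return statistics.pstdev(samples) / mean

# Hypothetical per-window throughput samples in Mbit/s for one flow.
ingress = [15.65, 15.65, 15.65, 15.65]  # ideal CBR source: no variation
egress = [15.2, 16.1, 15.4, 15.9]       # same flow after the virtual router

cov_ingress = coefficient_of_variation(ingress)
cov_egress = coefficient_of_variation(egress)

# Assumed comparison: variation added between ingress and egress.
added_variation = cov_egress - cov_ingress
print(f"CoV ingress={cov_ingress:.4f} egress={cov_egress:.4f}")
```

Because the coefficient of variation is normalized by the mean, the same comparison can be applied to flows with different rates, which fits the stated goal of being independent of traffic type.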

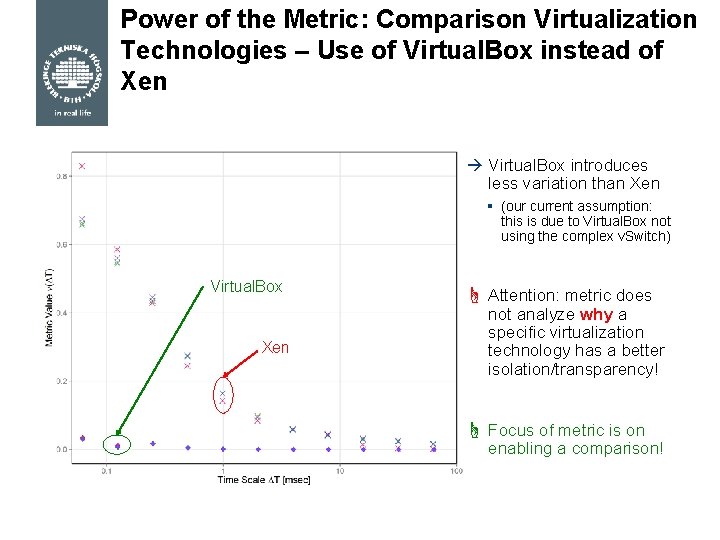

Power of the Metric: Comparison of Virtualization Technologies
– Use of VirtualBox instead of Xen
VirtualBox introduces less variation than Xen
– (our current assumption: this is due to VirtualBox not using the complex vSwitch)
[Figure panels: VirtualBox; Xen]
☝ Attention: the metric does not analyze why a specific virtualization technology has better isolation/transparency!
☝ Focus of the metric is on enabling a comparison!

Thank you very much! Questions?
