Broadband Protocols WP 1.2.1: IP protocols, Lambda switching, multicasting

Richard Hughes-Jones, The University of Manchester

www.hep.man.ac.uk/~rich/ then “Talks”

FABRIC Meeting, Poznan, Poland, 25 Sep 2006, R. Hughes-Jones, Manchester

Protocols Document

Protocols Document 1

- “Protocol Investigation for e-VLBI Data Transfer”
  - Document JRA-WP 1.2.1.001
  - Jodrell & Manchester folks with hard work from Matt
  - Completed and on the EXPReS WIKI
- Introduces e-VLBI and its Networking Requirements
  - Continuously streamed data
  - Individual packets are not particularly valuable
  - Maintenance of the data rate is important
  - Quite different from applications where bit-wise correct transmission is required, e.g. file transfer
  - Forms a valuable use case for GGF GHPN-RG
- Presents the actions required in order to make an informed decision and to implement suitable protocols in the European VLBI Network
- Strategy document

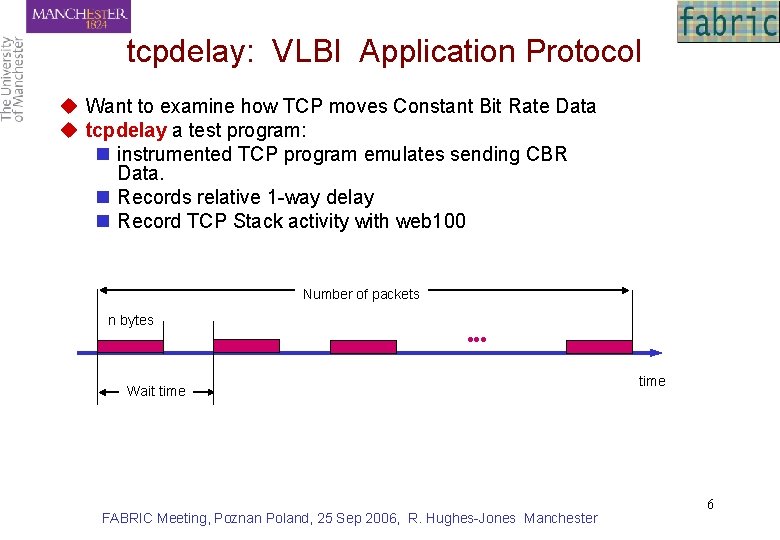

Protocols Document 2

- Protocols considered for investigation include:
  - TCP/IP
  - UDP/IP
  - DCCP/IP
  - VSI-E RTP/UDP/IP
  - Remote Direct Memory Access
  - TCP Offload Engines
- Very useful discussions at Haystack VLBI meeting
  - Agreement to make joint tests Haystack-Jodrell
  - Use of ESLEA 1 Gbit transatlantic link
- Work in progress – Links to ESLEA UK e-science
  - Vlbi-udp – Simon: UDP/IP stability & the effect of packet loss on correlations
  - Tcpdelay – Stephen: TCP/IP and CBR data

tcpdelay

tcpdelay: VLBI Application Protocol

- Want to examine how TCP moves Constant Bit Rate Data
- tcpdelay: a test program
  - Instrumented TCP program emulates sending CBR Data
  - Records relative 1-way delay
  - Records TCP Stack activity with web100

Diagram labels: Number of packets, n bytes, Wait time, time

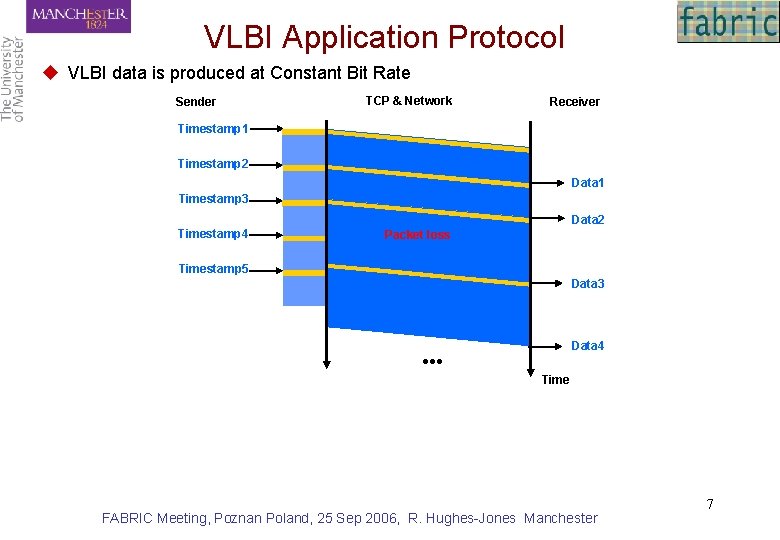

VLBI Application Protocol

- VLBI data is produced at Constant Bit Rate

Diagram labels: Sender, TCP & Network, Receiver; Timestamp 1, Data 1, Timestamp 2, Data 2, Timestamp 3, Data 3, Timestamp 4, Data 4, Timestamp 5; Packet loss; Time
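
The timestamped messages above lend themselves to a relative one-way delay calculation. The following is a minimal sketch of one common approach, not necessarily what tcpdelay itself does: it assumes the sender and receiver clocks differ by an unknown constant offset, which is removed by subtracting the smallest observed difference. The function name and the sample timestamps are hypothetical.

```python
def relative_one_way_delay(send_times, recv_times):
    """Return each message's one-way delay relative to the smallest observed delay.

    Assumption: the two clocks differ by a constant offset, so subtracting
    the minimum raw difference cancels that offset.
    """
    if not send_times or len(send_times) != len(recv_times):
        raise ValueError("need matching, non-empty timestamp lists")
    raw = [r - s for s, r in zip(send_times, recv_times)]
    base = min(raw)
    return [d - base for d in raw]


# Hypothetical data: messages sent every 22 us, receiver clock offset by about 5 s.
# The third and fourth messages arrive late, as they would after a retransmit.
send = [0.0, 22e-6, 44e-6, 66e-6]
recv = [5.0135, 5.013522, 5.040044, 5.040066]
delays = relative_one_way_delay(send, recv)
```

With these made-up numbers the first two messages show essentially zero relative delay and the last two show roughly 26.5 ms, which is the kind of step the arrival-time plots are looking for.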

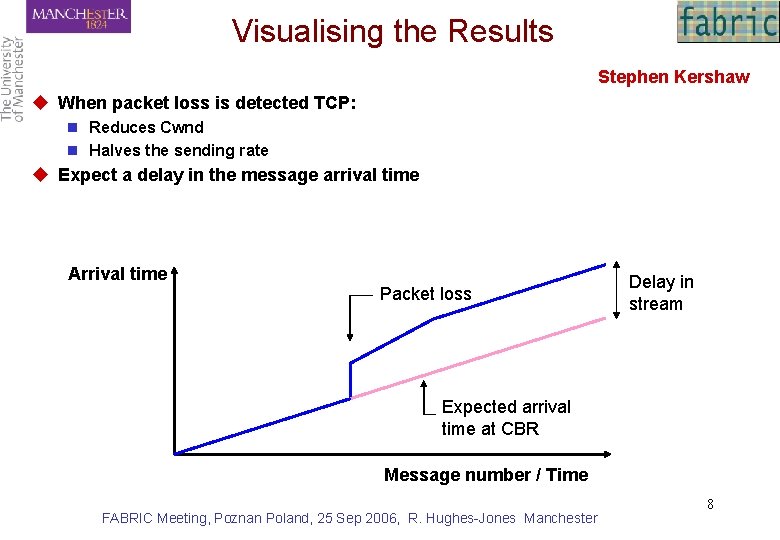

Visualising the Results (Stephen Kershaw)

- When packet loss is detected TCP:
  - Reduces Cwnd
  - Halves the sending rate
- Expect a delay in the message arrival time

Plot labels: Arrival time vs Message number / Time; Expected arrival time at CBR; Packet loss; Delay in stream

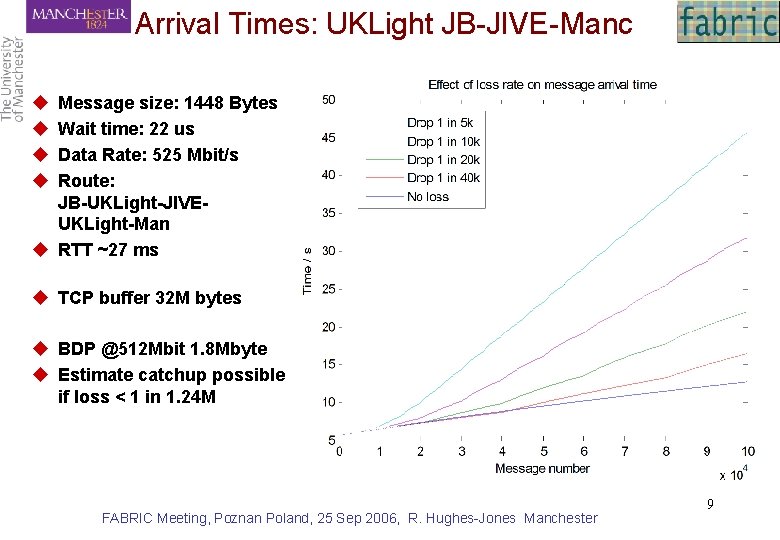

Arrival Times: UKLight JB-JIVE-Manc

- Message size: 1448 Bytes
- Wait time: 22 us
- Data Rate: 525 Mbit/s
- Route: JB-UKLight-JIVE-UKLight-Man
- RTT ~27 ms
- TCP buffer 32 Mbytes
- BDP @512 Mbit/s: 1.8 Mbyte
- Estimate catchup possible if loss < 1 in 1.24 M
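
As a quick check of the figures on this slide, the data rate and bandwidth-delay product can be worked out directly from the message size, wait time and RTT. This is only a sketch: the function names are made up for illustration, and protocol header overhead is ignored.

```python
def cbr_rate_bits_per_s(message_bytes, wait_s):
    """Data rate when one message of message_bytes is sent every wait_s seconds."""
    return message_bytes * 8 / wait_s


def bdp_bytes(rate_bits_per_s, rtt_s):
    """Bandwidth-delay product: bytes in flight needed to keep the path full."""
    return rate_bits_per_s * rtt_s / 8


rate = cbr_rate_bits_per_s(1448, 22e-6)  # about 526.5 Mbit/s
bdp = bdp_bytes(512e6, 27e-3)            # about 1.73 Mbyte
```

The rate agrees with the quoted 525 Mbit/s. The simple product with the rounded inputs gives about 1.73 Mbyte, a little under the 1.8 Mbyte quoted, so the slide presumably used slightly different inputs or rounding.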

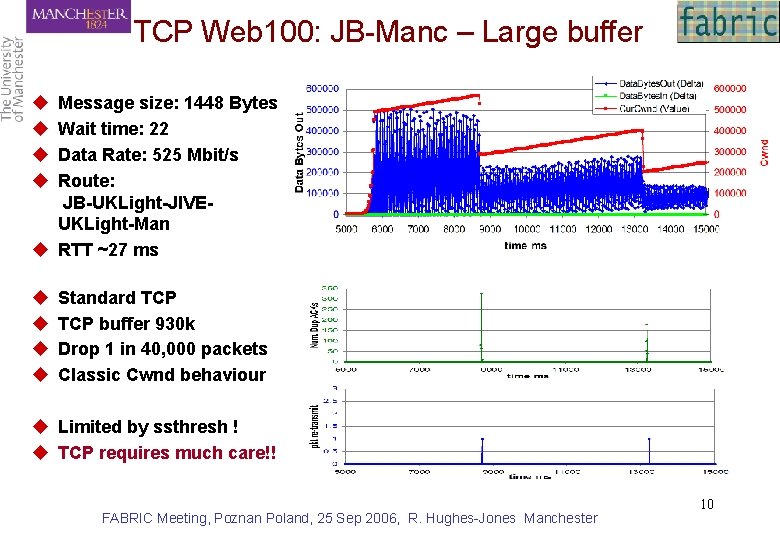

TCP Web100: JB-Manc – Large buffer

- Message size: 1448 Bytes
- Wait time: 22 us
- Data Rate: 525 Mbit/s
- Route: JB-UKLight-JIVE-UKLight-Man
- RTT ~27 ms
- Standard TCP buffer 930 k
- Drop 1 in 40,000 packets
- Classic Cwnd behaviour
- Limited by ssthresh!
- TCP requires much care!!
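
The "classic Cwnd behaviour" can be illustrated with a deliberately simplified model of congestion avoidance: the window grows by one segment per RTT and halves on a loss. This is a sketch under those assumptions only; it ignores slow start, ssthresh, delayed ACKs and any kernel-specific congestion control, and the function names are hypothetical.

```python
def aimd_cwnd_trace(start_cwnd, max_cwnd, loss_rtts, n_rtts):
    """Congestion window (in segments) at the start of each RTT.

    Additive increase of 1 segment per RTT, capped at max_cwnd;
    multiplicative decrease (halving) in any RTT listed in loss_rtts.
    """
    cwnd = start_cwnd
    trace = []
    for rtt in range(n_rtts):
        trace.append(cwnd)
        if rtt in loss_rtts:
            cwnd = max(cwnd // 2, 1)
        else:
            cwnd = min(cwnd + 1, max_cwnd)
    return trace


def rtts_to_recover(cwnd_before_loss):
    """RTTs of additive increase needed to climb from cwnd/2 back to cwnd."""
    return cwnd_before_loss - cwnd_before_loss // 2


trace = aimd_cwnd_trace(start_cwnd=10, max_cwnd=10, loss_rtts={2}, n_rtts=6)

# Window needed to fill the path, using the 1.8 Mbyte BDP and 1448 byte messages.
window_segments = int(1.8e6 / 1448)
recovery_s = rtts_to_recover(window_segments) * 27e-3
```

Under this model a single loss at full window costs several hundred RTTs of reduced rate (roughly 17 s at 27 ms RTT), which gives a feel for why a CBR stream falls behind after a loss.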

iBOB

Prototype iBOB with two sampler boards attached

FPGA based signal processing board from UC Berkeley

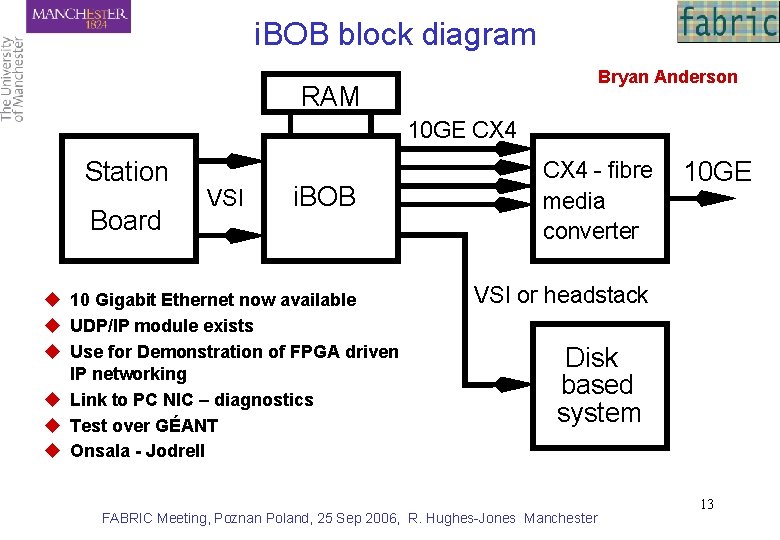

iBOB block diagram (Bryan Anderson)

- 10 Gigabit Ethernet now available
- UDP/IP module exists
- Use for Demonstration of FPGA driven IP networking
- Link to PC NIC – diagnostics
- Test over GÉANT
- Onsala - Jodrell

Diagram labels: RAM, 10 GE CX4, Station Board, VSI, iBOB, CX4 - fibre media converter, 10 GE, VSI or headstack, Disk based system

Multi-Gigabit Trials on GÉANT: Collaboration with Dante

- What is inside GÉANT2
- Why is the collaboration interesting?
  - 10 Gigabit Ethernet
  - UDP memory-2-memory flows
  - TCP flows with allocated Bandwidth
- Options using GÉANT Development Network
  - 10 Gbit SDH Network
- Options Using the GÉANT LightPath Service
  - PoP Location for Network tests

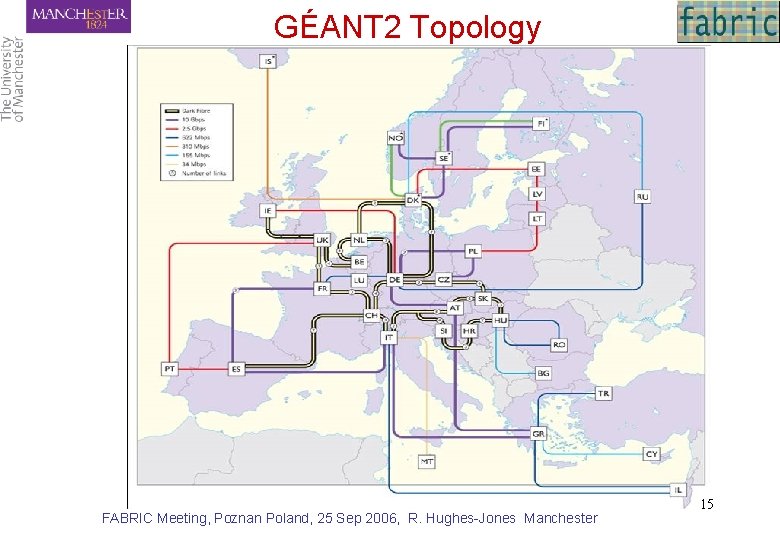

GÉANT2 Topology

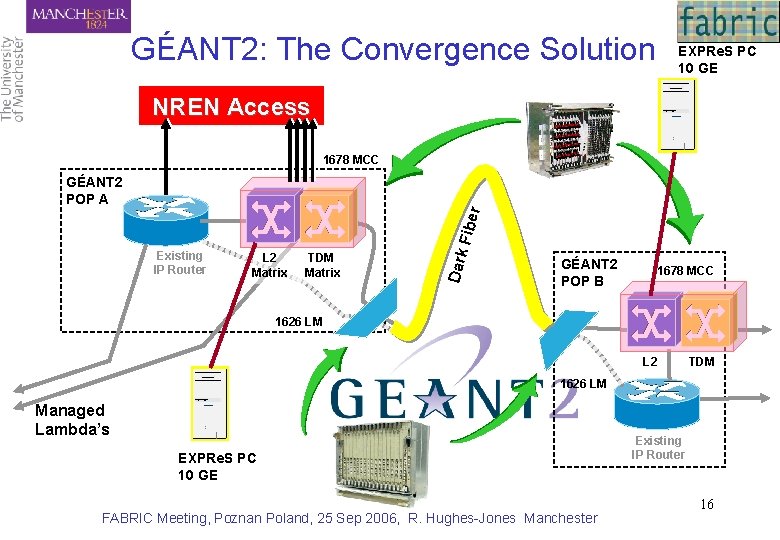

GÉANT2: The Convergence Solution

Diagram labels: EXPReS PC, 10 GE, NREN Access, Existing IP Router, 1678 MCC, L2 Matrix, TDM Matrix, 1626 LM, Dark Fibre, Managed Lambdas, GÉANT2 POP A, GÉANT2 POP B

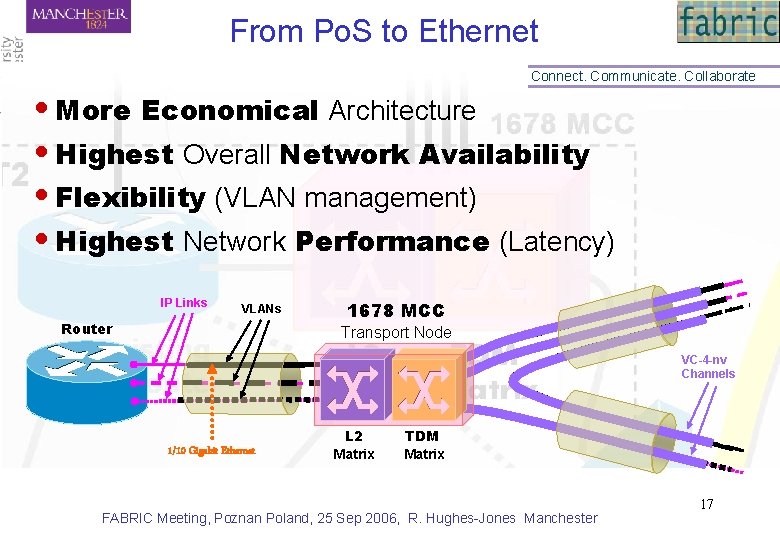

From PoS to Ethernet

Connect. Communicate. Collaborate

- More Economical Architecture
- Highest Overall Network Availability
- Flexibility (VLAN management)
- Highest Network Performance (Latency)

Diagram labels: IP Links, VLANs, Router, 1678 MCC Transport Node, VC-4-nv Channels, 1/10 Gigabit Ethernet, L2 Matrix, TDM Matrix

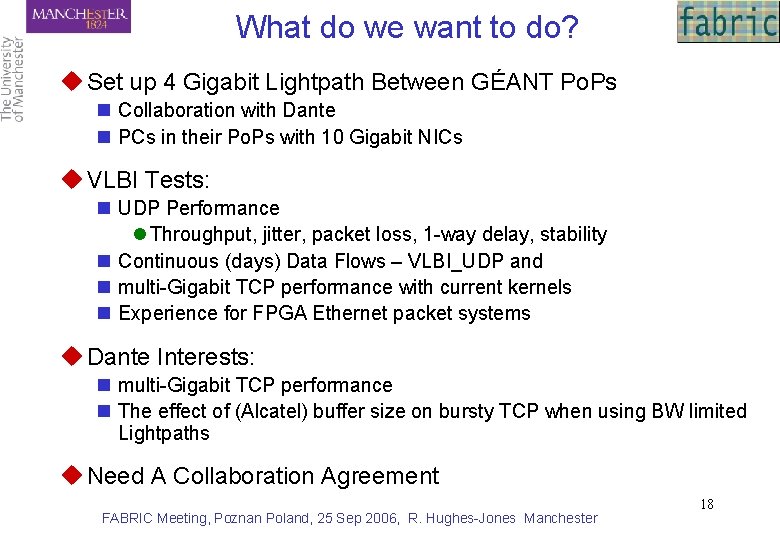

What do we want to do?

- Set up 4 Gigabit Lightpath Between GÉANT PoPs
  - Collaboration with Dante
  - PCs in their PoPs with 10 Gigabit NICs
- VLBI Tests:
  - UDP Performance
    - Throughput, jitter, packet loss, 1-way delay, stability
  - Continuous (days) Data Flows – VLBI_UDP and
  - multi-Gigabit TCP performance with current kernels
  - Experience for FPGA Ethernet packet systems
- Dante Interests:
  - multi-Gigabit TCP performance
  - The effect of (Alcatel) buffer size on bursty TCP when using BW limited Lightpaths
- Need A Collaboration Agreement
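
The UDP performance quantities listed above (throughput, jitter, packet loss) can all be derived from a receiver-side log of sequence numbers and arrival times. The sketch below is an illustration only and is not taken from VLBI_UDP: the record format, the function name, and the choice of jitter as the mean absolute deviation of inter-arrival gaps are all assumptions, and other jitter definitions are in common use.

```python
from statistics import mean


def udp_stream_stats(records, packets_sent, payload_bytes):
    """Summarise a received UDP stream.

    records: list of (sequence_number, arrival_time_s) for packets that arrived.
    packets_sent: how many packets the sender transmitted.
    payload_bytes: user data bytes per packet.
    """
    if len(records) < 2:
        raise ValueError("need at least two received packets")
    times = sorted(t for _, t in records)
    duration = times[-1] - times[0]
    if duration <= 0:
        raise ValueError("arrival times must span a positive interval")
    received = len({seq for seq, _ in records})  # duplicates counted once
    gaps = [b - a for a, b in zip(times, times[1:])]
    mean_gap = mean(gaps)
    return {
        "loss_fraction": 1 - received / packets_sent,
        # Packets after the first one, delivered over the observed interval.
        "throughput_bits_per_s": (len(times) - 1) * payload_bytes * 8 / duration,
        # Assumed definition: mean absolute deviation of inter-arrival gaps.
        "jitter_s": mean([abs(g - mean_gap) for g in gaps]),
    }


# Hypothetical log: 4 packets sent, packet 2 lost.
stats = udp_stream_stats([(0, 0.0), (1, 0.001), (3, 0.003)],
                         packets_sent=4, payload_bytes=1000)
```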

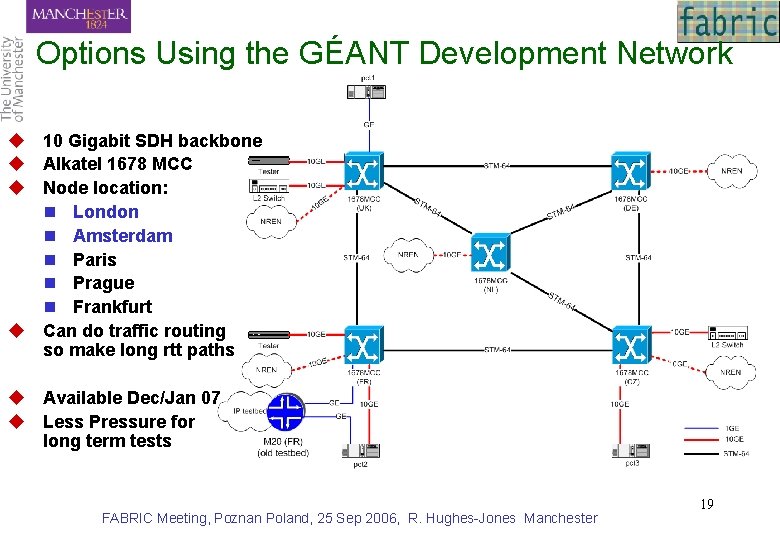

Options Using the GÉANT Development Network

- 10 Gigabit SDH backbone
- Alcatel 1678 MCC
- Node location:
  - London
  - Amsterdam
  - Paris
  - Prague
  - Frankfurt
- Can do traffic routing so make long rtt paths
- Available Dec/Jan 07
- Less Pressure for long term tests

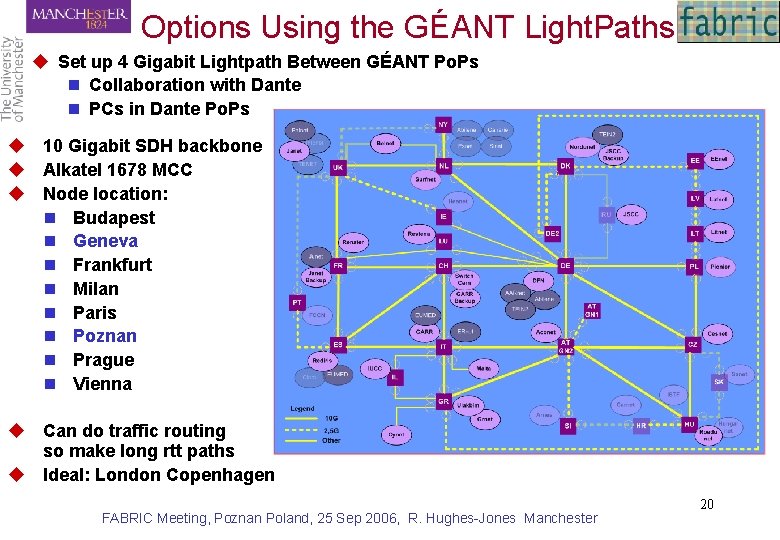

Options Using the GÉANT LightPaths

- Set up 4 Gigabit Lightpath Between GÉANT PoPs
  - Collaboration with Dante
  - PCs in Dante PoPs
- 10 Gigabit SDH backbone
- Alcatel 1678 MCC
- Node location:
  - Budapest
  - Geneva
  - Frankfurt
  - Milan
  - Paris
  - Poznan
  - Prague
  - Vienna
- Can do traffic routing so make long rtt paths
- Ideal: London - Copenhagen

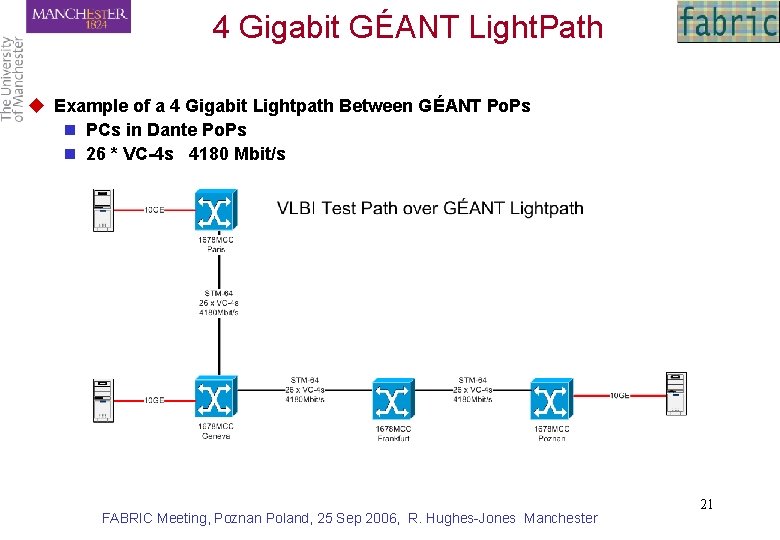

4 Gigabit GÉANT LightPath

- Example of a 4 Gigabit Lightpath Between GÉANT PoPs
  - PCs in Dante PoPs
  - 26 * VC-4s: 4180 Mbit/s

PCs and Current Tests

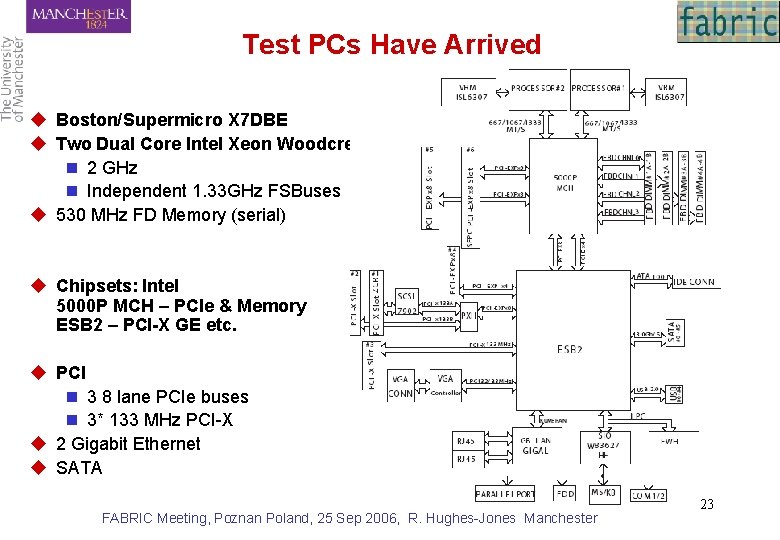

Test PCs Have Arrived

- Boston/Supermicro X7DBE
- Two Dual Core Intel Xeon Woodcrest 5130
  - 2 GHz
  - Independent 1.33 GHz FSBuses
- 530 MHz FD Memory (serial)
- Chipsets:
  - Intel 5000P MCH – PCIe & Memory
  - ESB2 – PCI-X, GE etc.
- PCI
  - 3 8-lane PCIe buses
  - 3 * 133 MHz PCI-X
- 2 Gigabit Ethernet
- SATA

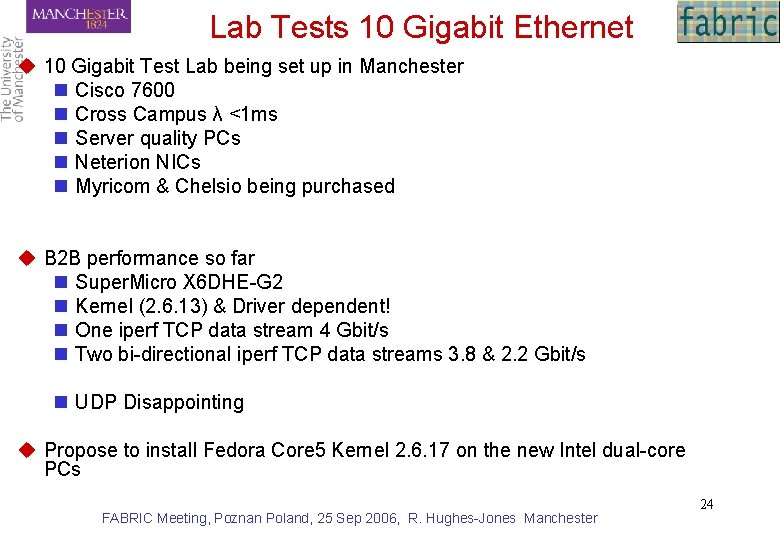

Lab Tests 10 Gigabit Ethernet

- 10 Gigabit Test Lab being set up in Manchester
  - Cisco 7600
  - Cross Campus λ <1 ms
  - Server quality PCs
  - Neterion NICs
  - Myricom & Chelsio being purchased
- B2B performance so far
  - SuperMicro X6DHE-G2
  - Kernel (2.6.13) & Driver dependent!
  - One iperf TCP data stream 4 Gbit/s
  - Two bi-directional iperf TCP data streams 3.8 & 2.2 Gbit/s
  - UDP Disappointing
- Propose to install Fedora Core 5 Kernel 2.6.17 on the new Intel dual-core PCs

Any Questions?

Backup Slides

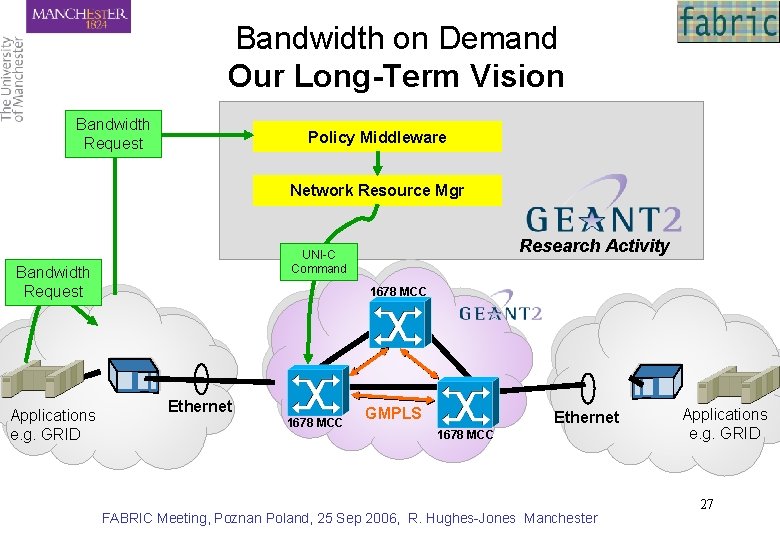

Bandwidth on Demand: Our Long-Term Vision

Diagram labels: Applications e.g. GRID, Research Activity, Bandwidth Request, Policy Middleware, Network Resource Mgr, UNI-C Command, GMPLS, 1678 MCC, Ethernet

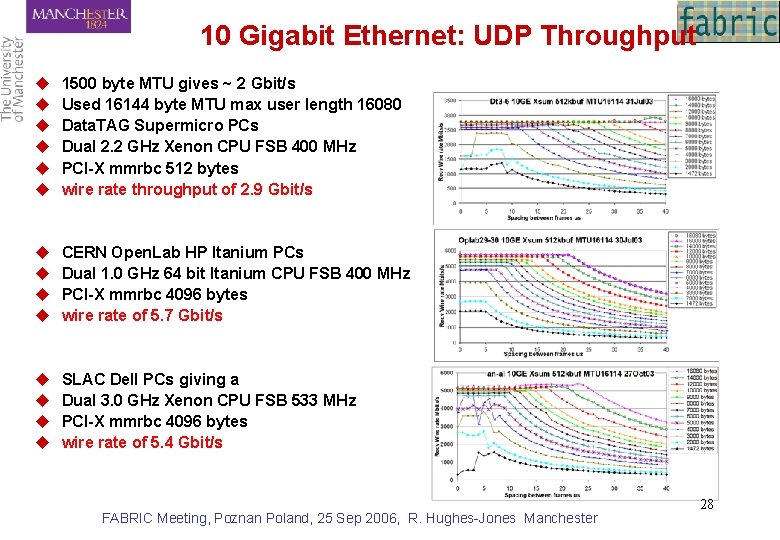

10 Gigabit Ethernet: UDP Throughput

- 1500 byte MTU gives ~2 Gbit/s
- Used 16144 byte MTU, max user length 16080
- DataTAG Supermicro PCs
  - Dual 2.2 GHz Xeon CPU, FSB 400 MHz
  - PCI-X mmrbc 512 bytes
  - Wire rate throughput of 2.9 Gbit/s
- CERN OpenLab HP Itanium PCs
  - Dual 1.0 GHz 64 bit Itanium CPU, FSB 400 MHz
  - PCI-X mmrbc 4096 bytes
  - Wire rate of 5.7 Gbit/s
- SLAC Dell PCs
  - Dual 3.0 GHz Xeon CPU, FSB 533 MHz
  - PCI-X mmrbc 4096 bytes
  - Wire rate of 5.4 Gbit/s

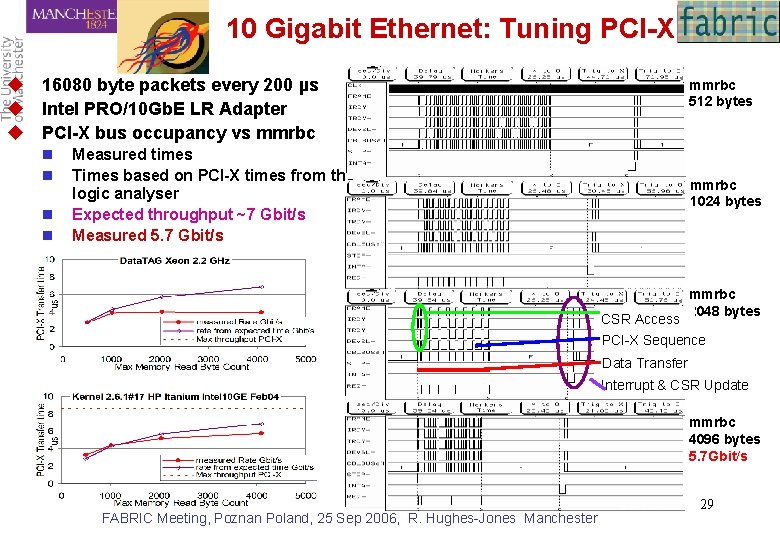

10 Gigabit Ethernet: Tuning PCI-X

- 16080 byte packets every 200 µs
- Intel PRO/10GbE LR Adapter
- PCI-X bus occupancy vs mmrbc
  - Measured times
  - Times based on PCI-X times from the logic analyser
  - Expected throughput ~7 Gbit/s
  - Measured 5.7 Gbit/s

Figure panels: mmrbc 512 bytes, mmrbc 1024 bytes, mmrbc 2048 bytes, mmrbc 4096 bytes (5.7 Gbit/s). Trace annotations: CSR Access, PCI-X Sequence, Data Transfer, Interrupt & CSR Update

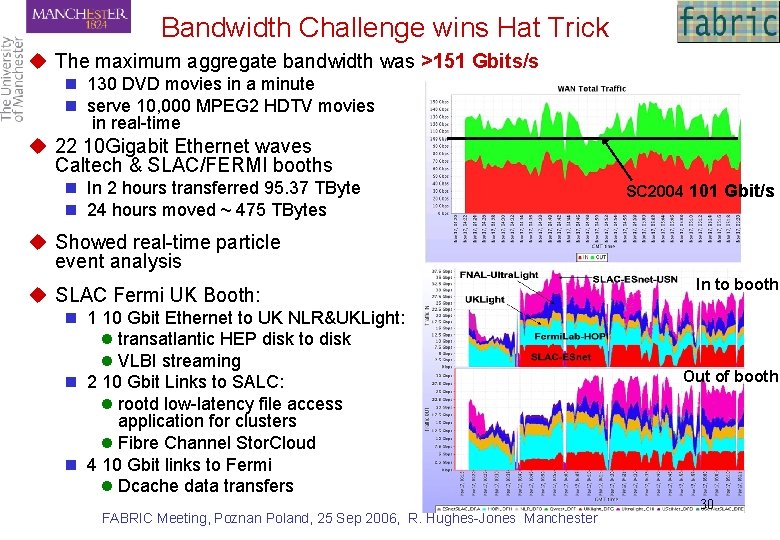

Bandwidth Challenge wins Hat Trick

- The maximum aggregate bandwidth was >151 Gbits/s
  - 130 DVD movies in a minute
  - Serve 10,000 MPEG2 HDTV movies in real-time
- 22 10 Gigabit Ethernet waves, Caltech & SLAC/FERMI booths
  - In 2 hours transferred 95.37 TByte
  - In 24 hours moved ~475 TBytes
- Showed real-time particle event analysis
- SLAC Fermi UK Booth:
  - 1 10 Gbit Ethernet to UK NLR & UKLight:
    - Transatlantic HEP disk to disk
    - VLBI streaming
  - 2 10 Gbit Links to SLAC:
    - rootd low-latency file access application for clusters
    - Fibre Channel StorCloud
  - 4 10 Gbit links to Fermi
    - Dcache data transfers

Plot labels: SC2004 101 Gbit/s; In to booth; Out of booth

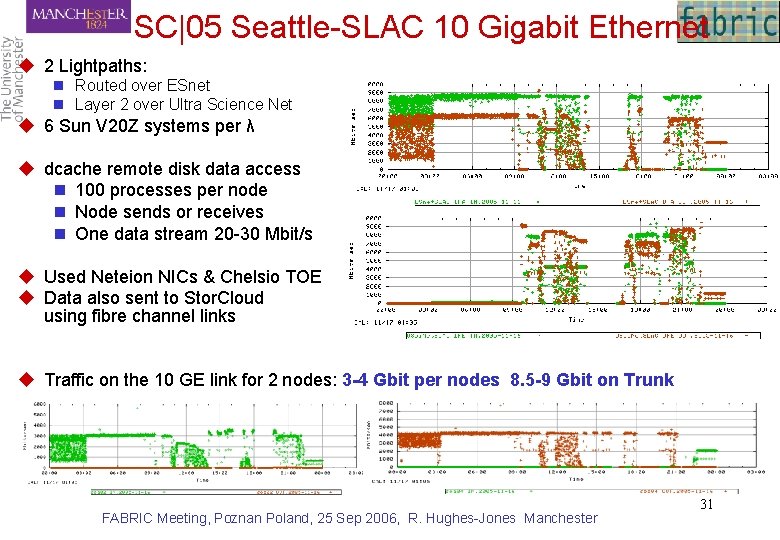

SC|05 Seattle-SLAC 10 Gigabit Ethernet

- 2 Lightpaths:
  - Routed over ESnet
  - Layer 2 over Ultra Science Net
- 6 Sun V20Z systems per λ
- dcache remote disk data access
  - 100 processes per node
  - Node sends or receives
  - One data stream 20-30 Mbit/s
- Used Neterion NICs & Chelsio TOE
- Data also sent to StorCloud using fibre channel links
- Traffic on the 10 GE link for 2 nodes: 3-4 Gbit per node, 8.5-9 Gbit on Trunk

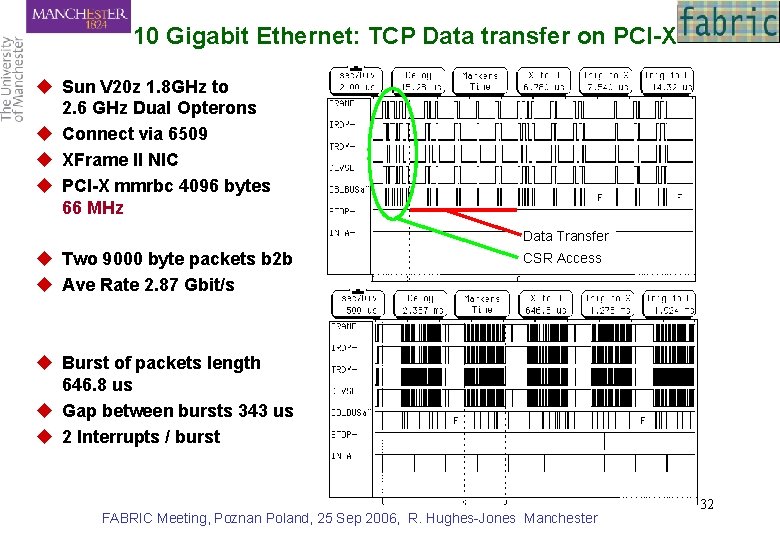

10 Gigabit Ethernet: TCP Data transfer on PCI-X

- Sun V20z 1.8 GHz to 2.6 GHz Dual Opterons
- Connect via 6509
- XFrame II NIC
- PCI-X mmrbc 4096 bytes, 66 MHz
- Two 9000 byte packets b2b
- Ave Rate 2.87 Gbit/s
- Burst of packets length 646.8 us
- Gap between bursts 343 us
- 2 Interrupts / burst

Trace annotations: Data Transfer, CSR Access

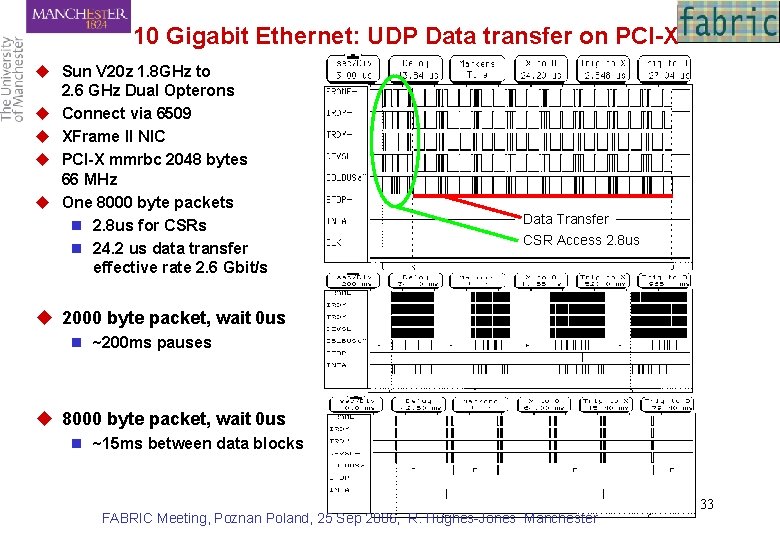

10 Gigabit Ethernet: UDP Data transfer on PCI-X

- Sun V20z 1.8 GHz to 2.6 GHz Dual Opterons
- Connect via 6509
- XFrame II NIC
- PCI-X mmrbc 2048 bytes, 66 MHz
- One 8000 byte packet
  - 2.8 us for CSRs
  - 24.2 us data transfer, effective rate 2.6 Gbit/s
- 2000 byte packet, wait 0 us
  - ~200 ms pauses
- 8000 byte packet, wait 0 us
  - ~15 ms between data blocks

Trace annotations: Data Transfer, CSR Access 2.8 us
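
The effective rate quoted for the 8000 byte packet follows from the packet size and the measured bus times. The sketch below reproduces that arithmetic. The function name is made up, and the comparison against a bus peak assumes a 64-bit wide PCI-X bus at the 66 MHz shown on the slide; the bus width is an assumption, not something the slide states.

```python
def transfer_rate_gbit_per_s(payload_bytes, transfer_time_s):
    """Rate achieved when payload_bytes cross the bus in transfer_time_s."""
    return payload_bytes * 8 / transfer_time_s / 1e9


# Data transfer phase only: matches the ~2.6 Gbit/s on the slide.
data_only = transfer_rate_gbit_per_s(8000, 24.2e-6)

# Including the 2.8 us of CSR access per packet.
with_csr = transfer_rate_gbit_per_s(8000, 24.2e-6 + 2.8e-6)

# Assumed bus peak: 64 bits per clock at 66 MHz (width is an assumption).
assumed_bus_peak = 64 * 66e6 / 1e9
bus_utilisation = data_only / assumed_bus_peak
```

The data phase alone gives about 2.64 Gbit/s, and counting the CSR accesses brings the per-packet rate down to about 2.37 Gbit/s.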
