Bringing Grids to University Campuses
Paul Avery
University of Florida
avery@phys.ufl.edu
International ICFA Workshop on HEP, Networking & Digital Divide Issues for Global e-Science
Daegu, Korea
May 27, 2005
Campus Grids (May 27, 2005) Paul Avery 1

Examples Discussed Here
- Three campuses, in different states of readiness
  - University of Wisconsin: GLOW
  - University of Michigan: MGRID
  - University of Florida: UF Research Grid
- Not complete, by any means
  - Goal is to illustrate factors that go into creating campus Grid facilities

Grid Laboratory of Wisconsin
- 2003 Initiative funded by NSF/UW: Six GLOW Sites
  - Computational Genomics, Chemistry
  - Amanda, IceCube, Physics/Space Science
  - High Energy Physics/CMS, Physics
  - Materials by Design, Chemical Engineering
  - Radiation Therapy, Medical Physics
  - Computer Science
- Deployed in two Phases

http://www.cs.wisc.edu/condor/glow/

Condor/GLOW Ideas
- Exploit commodity hardware for high throughput computing
  - The base hardware is the same at all sites
  - Local configuration optimization as needed (e.g., CPU vs storage)
  - Must meet global requirements (very similar configurations now)
- Managed locally at 6 sites
  - Shared globally across all sites
  - Higher priority for local jobs

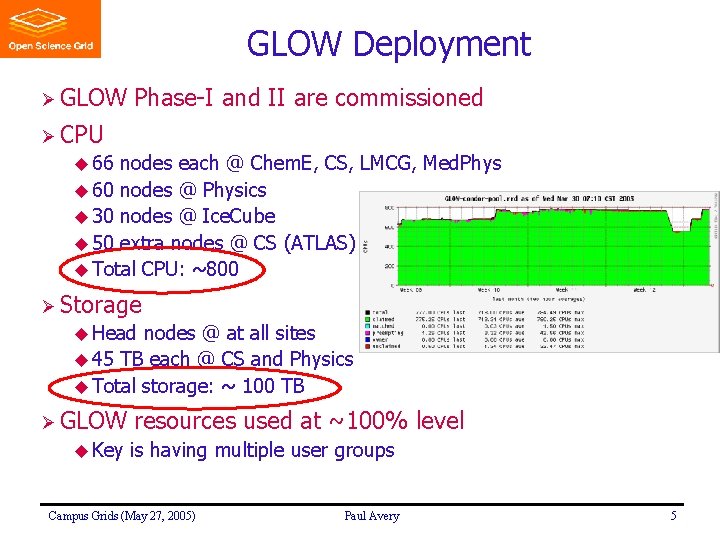

GLOW Deployment
- GLOW Phase-I and II are commissioned
- CPU
  - 66 nodes each @ ChemE, CS, LMCG, MedPhys
  - 60 nodes @ Physics
  - 30 nodes @ IceCube
  - 50 extra nodes @ CS (ATLAS)
  - Total CPU: ~800
- Storage
  - Head nodes @ all sites
  - 45 TB each @ CS and Physics
  - Total storage: ~100 TB
- GLOW resources used at ~100% level
  - Key is having multiple user groups
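
The deployment totals can be cross-checked with a short tally. This is a minimal sketch: the per-site node counts come from the slide, while the figure of two CPUs per node is an assumption (it is not stated here) chosen because it would reconcile the node count with the quoted "~800" CPUs.

```python
# Node counts per site, as listed on the slide.
nodes = {
    "ChemE": 66, "CS": 66, "LMCG": 66, "MedPhys": 66,
    "Physics": 60, "IceCube": 30, "CS (ATLAS)": 50,
}
CPUS_PER_NODE = 2  # assumption: dual-CPU nodes (not stated on the slide)

total_nodes = sum(nodes.values())
total_cpus = total_nodes * CPUS_PER_NODE

# Storage explicitly listed: 45 TB each at CS and Physics.
listed_storage_tb = 2 * 45

print(total_nodes, total_cpus, listed_storage_tb)  # 404 808 90
```

Under that assumption the tally gives 808 CPUs, consistent with "~800". The 90 TB listed at CS and Physics accounts for most of the ~100 TB total. The slide does not say where the remainder sits.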

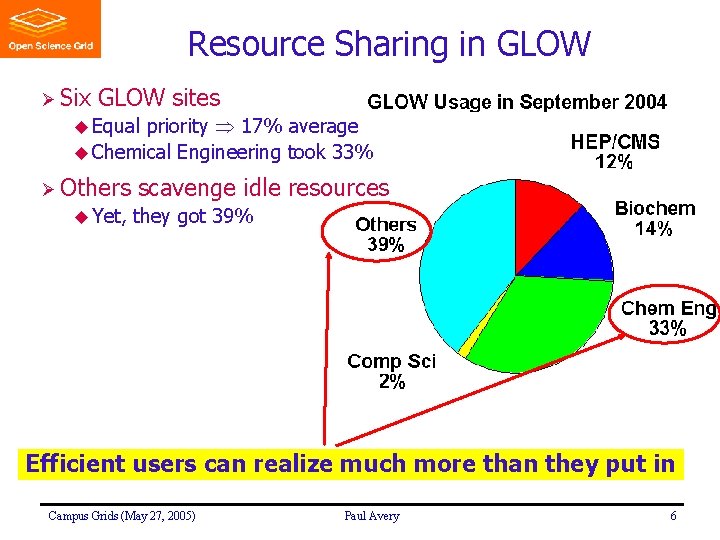

Resource Sharing in GLOW
- Six GLOW sites
  - Equal priority: 17% average
  - Chemical Engineering took 33%
- Others scavenge idle resources
  - Yet, they got 39%

Efficient users can realize much more than they put in
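
The "17% average" follows from splitting priority equally among six sites. A minimal sketch of that arithmetic, treating 17% as each site's equal entitlement (an interpretation of the slide, not something it states explicitly):

```python
N_SITES = 6
equal_share = 100 / N_SITES    # percent of cycles per site under equal priority
cheme_used = 33.0              # percent of cycles Chemical Engineering took

# How much more than its equal share a busy site obtained.
gain = cheme_used / equal_share

print(f"equal share: {equal_share:.1f}%")   # equal share: 16.7%
print(f"ChemE gain: {gain:.2f}x")           # ChemE gain: 1.98x
```

On this reading, Chemical Engineering obtained roughly twice its equal share by using cycles that other sites left idle.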

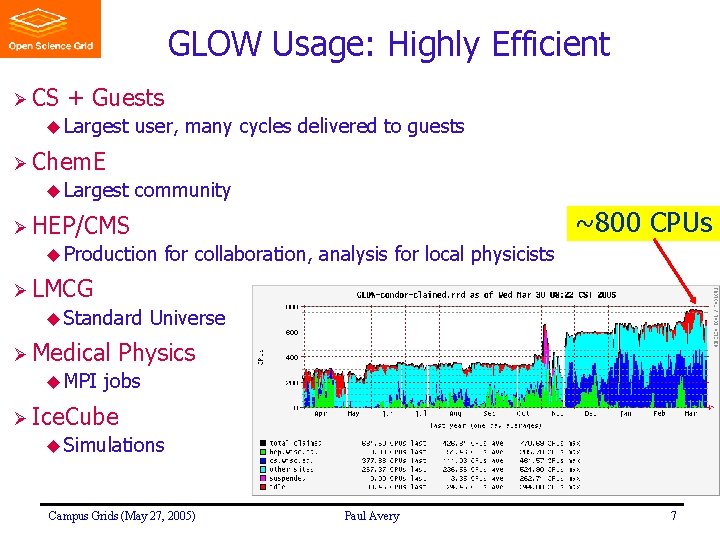

GLOW Usage: Highly Efficient
- CS + Guests
  - Largest user, many cycles delivered to guests
- ChemE
  - Largest community
- HEP/CMS
  - Production for collaboration, analysis for local physicists
- LMCG
  - Standard Universe
- Medical Physics
  - MPI jobs
- IceCube
  - Simulations

~800 CPUs

Adding New GLOW Members
- Proposed minimum involvement
  - One rack with about 50 CPUs
- Identified system support person who joins GLOW-tech
- PI joins the GLOW-exec
- Adhere to current GLOW policies
- Sponsored by existing GLOW members
  - ATLAS group and Condensed Matter group were proposed by CMS and CS, and were accepted as new members
    - ATLAS using 50% of GLOW cycles (housed @ CS)
    - New machines of CM Physics group being commissioned
  - Expressions of interest from other groups

GLOW & Condor Development
- GLOW presents CS researchers with an ideal laboratory
  - Real users with diverse requirements
  - Early commissioning and stress testing of new Condor releases in an environment controlled by Condor team
  - Results in robust releases for world-wide Condor deployment
- New features in Condor Middleware (examples)
  - Group-wise or hierarchical priority setting
  - Rapid response with large resources for short periods of time for high-priority interrupts
  - Hibernating shadow jobs instead of total preemption
  - MPI use (Medical Physics)
  - Condor-G (High Energy Physics)

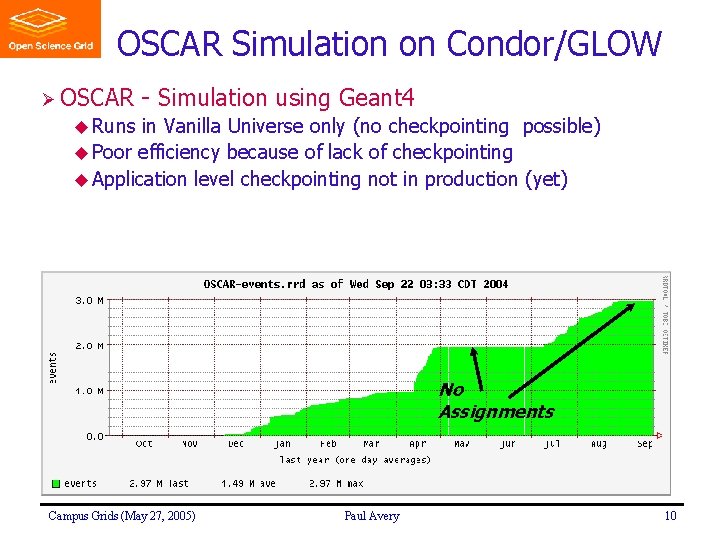

OSCAR Simulation on Condor/GLOW
- OSCAR - Simulation using Geant4
  - Runs in Vanilla Universe only (no checkpointing possible)
  - Poor efficiency because of lack of checkpointing
  - Application-level checkpointing not in production (yet)

No Assignments
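
Why the lack of checkpointing hurts efficiency can be illustrated with a toy model. This is a sketch under stated assumptions, not a description of Condor internals: the function name `wall_hours`, the 10-hour job, and the preemption pattern are all hypothetical, and checkpointing is idealized as losing no progress.

```python
def wall_hours(work, slots, checkpointing):
    """Hours consumed until a job needing `work` CPU-hours finishes.

    `slots` lists how long each run attempt lasts before the machine is
    reclaimed. Returns None if the job never finishes within the given slots.
    """
    done = 0.0   # progress that survives preemption
    used = 0.0   # hours consumed so far, including lost work
    for slot in slots:
        remaining = work - done
        if slot >= remaining:
            return used + remaining
        used += slot
        if checkpointing:
            done += slot  # ideal checkpoint: no progress is lost
    return None

slots = [6, 8, 12]  # hypothetical preemption pattern, in hours
no_ckpt = wall_hours(10, slots, checkpointing=False)
with_ckpt = wall_hours(10, slots, checkpointing=True)
print(f"efficiency without checkpointing: {10 / no_ckpt:.0%}")  # 42%
print(f"efficiency with checkpointing: {10 / with_ckpt:.0%}")   # 100%
```

In this example the job without checkpointing is restarted from scratch twice and consumes 24 hours for 10 hours of useful work. The longer a job runs relative to the typical time between preemptions, the worse this gets.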

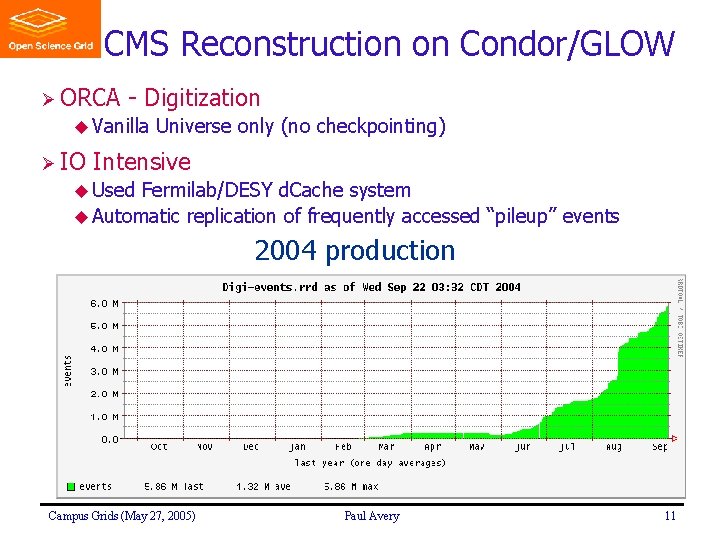

CMS Reconstruction on Condor/GLOW
- ORCA - Digitization
  - Vanilla Universe only (no checkpointing)
- IO Intensive
  - Used Fermilab/DESY dCache system
  - Automatic replication of frequently accessed “pileup” events

2004 production

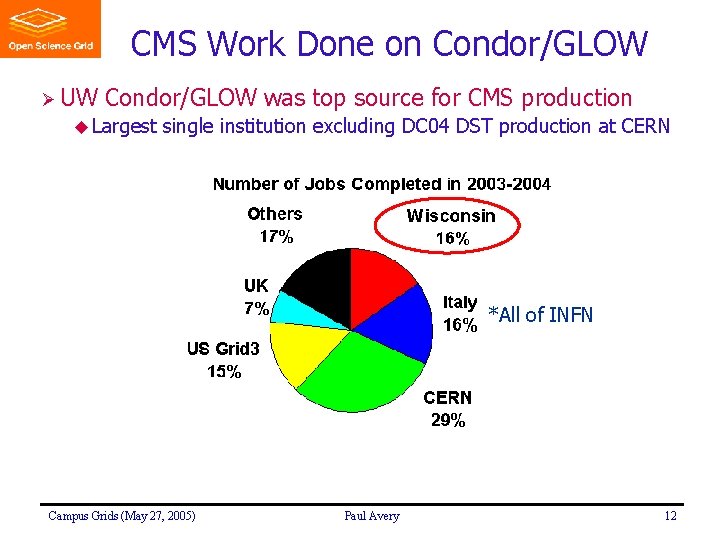

CMS Work Done on Condor/GLOW
- UW Condor/GLOW was top source for CMS production
  - Largest single institution excluding DC04 DST production at CERN

*All of INFN

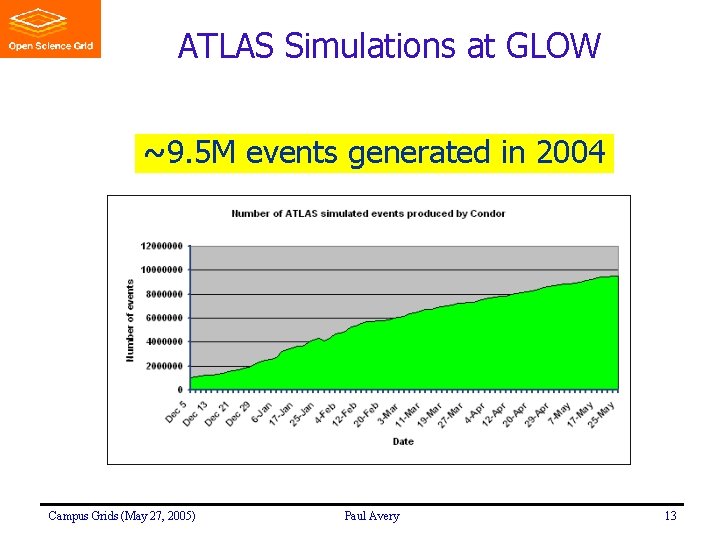

ATLAS Simulations at GLOW
~9.5 M events generated in 2004

MGRID at Michigan
- MGRID
  - Michigan Grid Research and Infrastructure Development
  - Develop, deploy, and sustain an institutional grid at Michigan
  - Group started in 2002 with initial U Michigan funding
- Many groups across the University participate
  - Compute/data/network-intensive research grants
  - ATLAS, NPACI, NEESGrid, Visible Human, NFSv4, NMI

http://www.mgrid.umich.edu

MGRID Center
- Central core of technical staff (3 FTEs, new hires)
- Faculty and staff from participating units
- Exec. committee from participating units & provost office
- Collaborative grid research and development with technical staff from participating units

MGRID Research Project Partners
- College of LS&A (Physics) (www.lsa.umich.edu)
- Center for Information Technology Integration (www.citi.umich.edu)
- Michigan Center for BioInformatics (www.ctaalliance.org)
- Visible Human Project (vhp.med.umich.edu)
- Center for Advanced Computing (cac.engin.umich.edu)
- Mental Health Research Institute (www.med.umich.edu/mhri)
- ITCom (www.itcom.itd.umich.edu)
- School of Information (si.umich.edu)

MGRID: Goals
- For participating units
  - Knowledge, support and framework for deploying Grid technologies
  - Exploitation of Grid resources both on campus and beyond
  - A context for the University to invest in computing resources
- Provide test bench for existing, emerging Grid technologies
- Coordinate activities within the national Grid community
  - GGF, GlobusWorld, etc.
- Make significant contributions to general grid problems
  - Sharing resources among multiple VOs
  - Network monitoring and QoS issues for grids
  - Integration of middleware with domain-specific applications
  - Grid filesystems

MGRID Authentication
- Developed a KX509 module that bridges two technologies
  - Globus public key cryptography (X509 certificates)
  - UM Kerberos user authentication
- MGRID provides step-by-step instructions on web site
  - “How to Grid-Enable Your Browser”

MGRID Authorization
- MGRID uses Walden: fine-grained authorization engine
  - Leveraging open-source XACML implementation from Sun
- Walden allows interesting granularity of authorization
  - Definition of authorization user groups
  - Each group has a different level of authority to run a job
  - Authority level depends on conditions (job queue, time of day, CPU load, …)
- Resource owners still have complete control over user membership within these groups
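
The idea of group-based authority that varies with conditions can be sketched in a few lines. This is an illustration only: it is not Walden's API and does not use XACML, and the group names, queue names, and thresholds below are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Request:
    group: str       # authorization group of the user (hypothetical names)
    queue: str       # job queue requested
    hour: int        # local hour of day, 0-23
    cpu_load: float  # current load fraction on the resource, 0.0-1.0

def may_run(req: Request) -> bool:
    """Hypothetical policy: authority depends on group and on conditions."""
    if req.group == "owners":
        return True  # the owners' group may always run
    if req.group == "campus":
        # campus users: any queue, but only while the resource is not saturated
        return req.cpu_load < 0.9
    if req.group == "guests":
        # guests: low-priority queue, off-hours, lightly loaded resource
        off_hours = req.hour < 8 or req.hour >= 18
        return req.queue == "low" and off_hours and req.cpu_load < 0.5
    return False  # unknown groups are denied by default

print(may_run(Request("guests", "low", 22, 0.1)))  # True
print(may_run(Request("guests", "low", 12, 0.1)))  # False
```

A real policy engine would express such rules declaratively rather than in code, so that resource owners can change group membership and conditions without redeploying anything.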

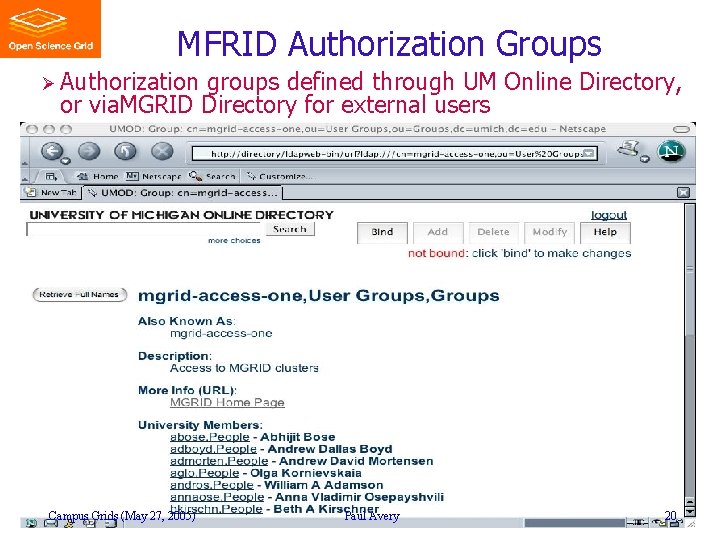

MGRID Authorization Groups
- Authorization groups defined through UM Online Directory, or via MGRID Directory for external users

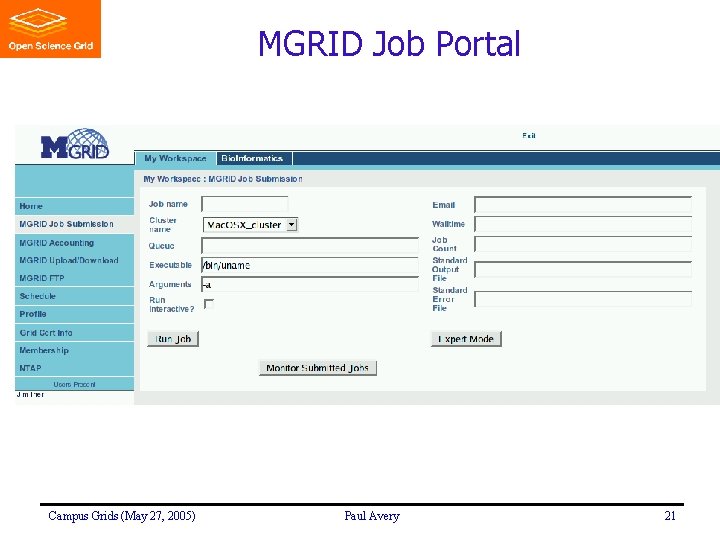

MGRID Job Portal

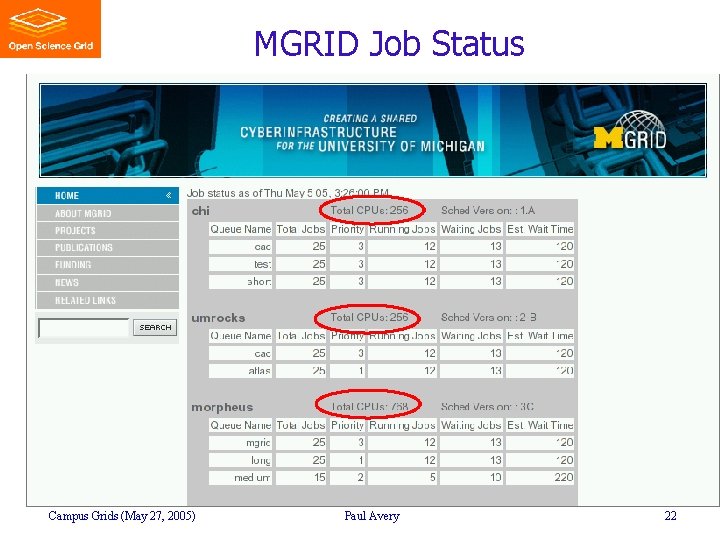

MGRID Job Status

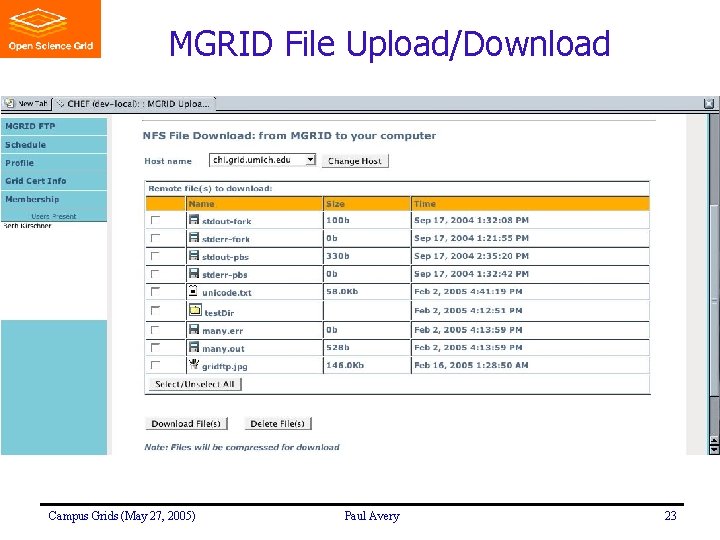

MGRID File Upload/Download

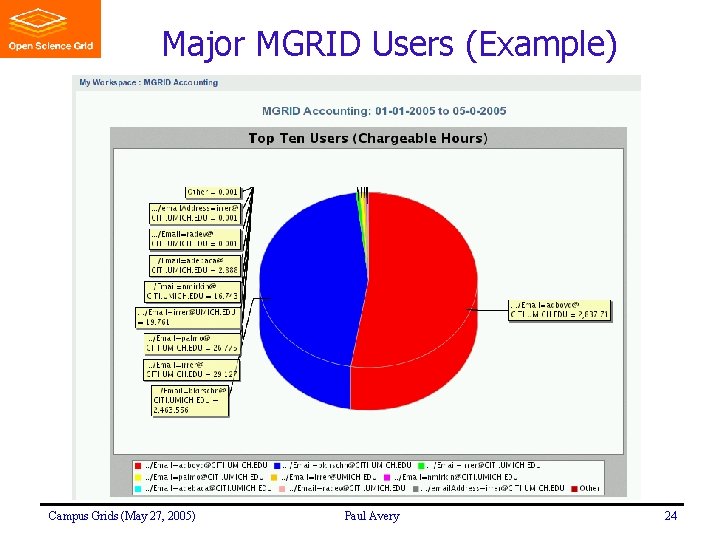

Major MGRID Users (Example)

University of Florida Research Grid
- High Performance Computing Committee: April 2001
  - Created by Provost & VP for Research
  - Currently has 16 members from around campus
- Study in 2001-2002
  - UF Strength: Faculty expertise and reputation in HPC
  - UF Weakness: Infrastructure lags well behind AAU public peers
- Major focus
  - Create campus Research Grid with HPC Center as kernel
  - Expand research in HPC-enabled applications areas
  - Expand research in HPC infrastructure research
  - Enable new collaborations, visibility, external funding, etc.

http://www.hpc.ufl.edu/CampusGrid/

UF Grid Strategy
- A campus-wide, distributed HPC facility
  - Multiple facilities, organization, resource sharing
  - Staff, seminars, training
- Faculty-led, research-driven, investor-oriented approach
  - With administrative cost-matching & buy-in by key vendors
- Build basis for new multidisciplinary collaborations in HPC
  - HPC as a key common denominator for multidisciplinary research
- Expand research opportunities for broad range of faculty
  - Including those already HPC-savvy and those new to HPC
- Build Grid facility in 3 phases
  - Phase I: Investment by College of Arts & Sciences (in operation)
  - Phase II: Investment by College of Engineering (in development)
  - Phase III: Investment by Health Science Center (in 2006)

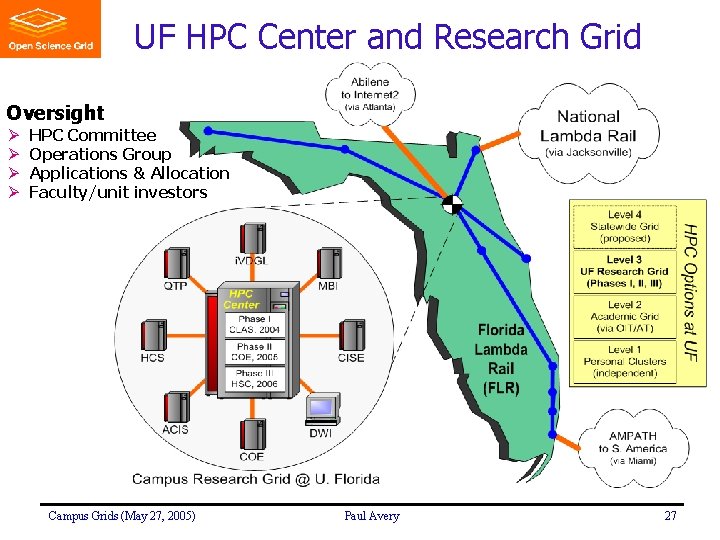

UF HPC Center and Research Grid Oversight
- HPC Committee
- Operations Group
- Applications & Allocation
- Faculty/unit investors

Phase I (Coll. of Arts & Sciences Focus)
- Physics
  - $200K for equipment investment
- College of Arts and Sciences
  - $100K for equipment investment, $70K/yr systems engineer
- Provost’s office
  - $300K matching for equipment investment
  - ~$80K/yr Sr. HPC systems engineer
  - ~$75K for physics computer room renovation
  - ~$10K for an open account for various HPC Center supplies
- Now deployed (see next slides)

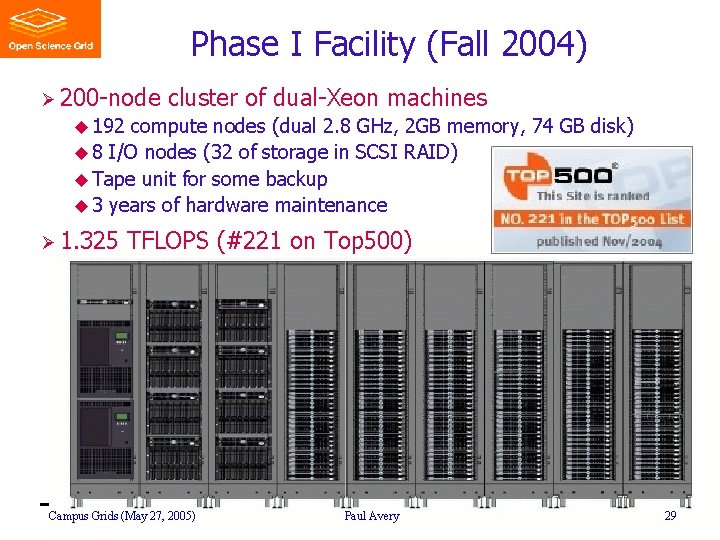

Phase I Facility (Fall 2004)
- 200-node cluster of dual-Xeon machines
  - 192 compute nodes (dual 2.8 GHz, 2 GB memory, 74 GB disk)
  - 8 I/O nodes (32 of storage in SCSI RAID)
  - Tape unit for some backup
  - 3 years of hardware maintenance
- 1.325 TFLOPS (#221 on Top 500)
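
The quoted 1.325 TFLOPS can be put in context with a rough theoretical-peak estimate for the compute nodes. This is a sketch under one labeled assumption: each CPU retires 2 double-precision floating-point operations per clock cycle, which the slide does not state. The slide also does not say whether 1.325 TFLOPS is a measured benchmark result.

```python
COMPUTE_NODES = 192
CPUS_PER_NODE = 2      # "dual-Xeon"
CLOCK_GHZ = 2.8
FLOPS_PER_CYCLE = 2    # assumption: 2 double-precision flops/cycle per CPU

# GFLOPS summed over all CPUs, converted to TFLOPS.
peak_tflops = COMPUTE_NODES * CPUS_PER_NODE * CLOCK_GHZ * FLOPS_PER_CYCLE / 1000
quoted_tflops = 1.325
ratio = quoted_tflops / peak_tflops

print(f"{peak_tflops:.2f} TFLOPS peak, quoted figure is {ratio:.0%} of it")
# 2.15 TFLOPS peak, quoted figure is 62% of it
```

If the quoted number is a measured result, a value around 60% of theoretical peak would be plausible for a commodity cluster of this kind, but that reading is an inference rather than something the slide confirms.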

Phase I HPC Use
- Early period (2-3 months) of severe underuse
  - Not “discovered”
  - Lack of documentation
  - Need for early adopters
- Currently enjoying high level of use (> 90%)
  - CMS production simulations
  - Other Physics
  - Quantum Chemistry
  - Other chemistry
  - Health sciences
  - Several engineering apps

Phase I HPC Use (cont)
- Still primitive, in many respects
  - Insufficient monitoring & display
  - No accounting yet
  - Few services (compared to Condor, MGRID)
- Job portals
  - PBS is currently main job portal
  - New In-VIGO portal being developed (http://invigo.acis.ufl.edu/)
  - Working with TACC (Univ. of Texas) to deploy GridPort
- Plan to leverage tools & services from others
  - Other campuses: GLOW, MGRID, TACC, Buffalo
  - Open Science Grid

New HPC Resources
- Recent NSF/MRI proposal for networking infrastructure
  - $600K: 20 Gb/s network backbone
  - High performance storage (distributed)
- Recent funding of UltraLight and DISUN proposals
  - UltraLight ($700K): Advanced uses for optical networks
  - DISUN ($2.5M): CMS, bring advanced IT to other sciences
- Special vendor relationships
  - Dell, Cisco, Ammasso

UF Research Network (20 Gb/s)
Funded by NSF-MRI grant

Resource Allocation Strategy
- Faculty/unit investors are first preference
  - Top-priority access commensurate with level of investment
  - Shared access to all available resources
- Cost-matching by administration offers many benefits
  - Key resources beyond computation (storage, networks, facilities)
  - Support for broader user base than simply faculty investors
- Economy of scale advantages with broad HPC Initiative
  - HPC vendor competition, strategic relationship, major discounts
  - Facilities savings (computer room space, power, cooling, staff)
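
"Top-priority access commensurate with level of investment" can be read as a proportional split. The sketch below assumes exactly that rule, which the talk does not spell out, and the helper name `priority_shares` is hypothetical. It also assumes the Provost's matching funds support the broader user base and therefore do not count as an investor share.

```python
def priority_shares(investments):
    """Split priority access in proportion to each investor's contribution."""
    total = sum(investments.values())
    return {unit: amount / total for unit, amount in investments.items()}

# Phase I equipment investments quoted in this talk, in $K.
phase1 = {"Physics": 200, "College of Arts and Sciences": 100}
shares = priority_shares(phase1)

for unit, share in shares.items():
    print(f"{unit}: {share:.0%} of priority access")
# Physics: 67% of priority access
# College of Arts and Sciences: 33% of priority access
```

Priority shares of this kind only govern who goes first when the facility is contended. Idle capacity remains available to all users under the shared-access rule.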

Phase II (Engineering Focus)
- Funds being collected now from Engineering faculty
  - Electrical and Computer Engineering
  - Mechanical Engineering
  - Material Sciences
  - Chemical Engineering (possible)
- Matching funds (including machine room & renovations)
  - Engineering departments
  - College of Engineering
  - Provost
- Equipment expected in Phase II facility (Fall 2005)
  - ~400 dual nodes
  - ~100 TB disk
  - High-speed switching fabric
  - (20 Gb/s network backbone)

Phase III (Health Sciences Focus)
- Planning committee formed by HSC in Dec ’04
  - Submitting recommendations to HSC administration in May
- Defining HPC needs of Health Science
  - Not only computation; heavy needs in comm. and storage
  - Need support with HPC applications development and use
- Optimistic for major investments in 2006
  - Phase I success & use by Health Sciences are major motivators
  - Process will start in Fall 2005, before Phase II complete

- Slides: 36