Bridging AIC and BIC: a new information criterion

Jie Ding

Sept. 28, 2017

Joint work with:
- Vahid Tarokh, School of Engineering and Applied Sciences, Harvard University
- Yuhong Yang, School of Statistics, University of Minnesota

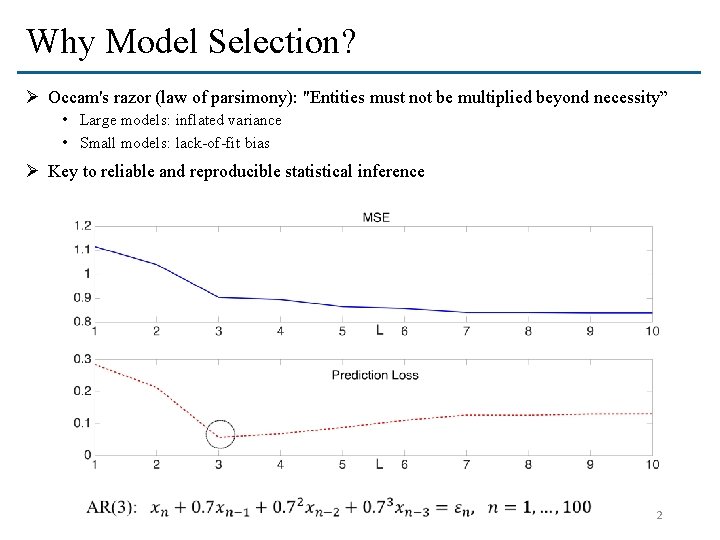

Why Model Selection?

- Occam's razor (law of parsimony): "Entities must not be multiplied beyond necessity"
  - Large models: inflated variance
  - Small models: lack-of-fit bias
- Key to reliable and reproducible statistical inference

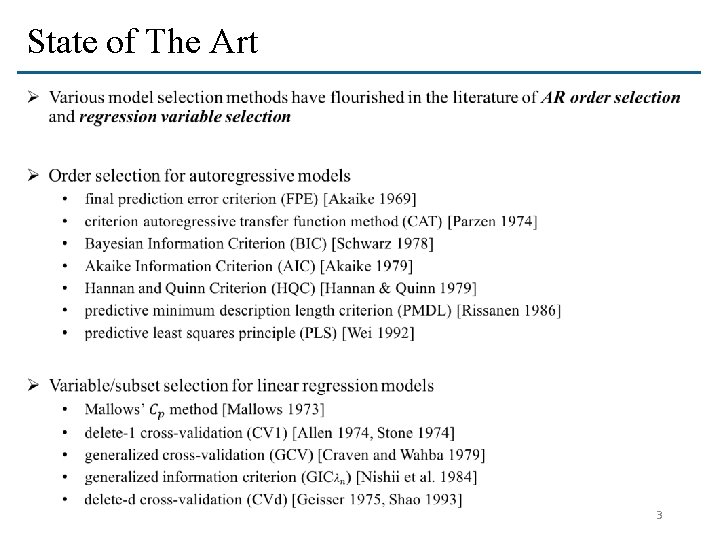

State of the Art

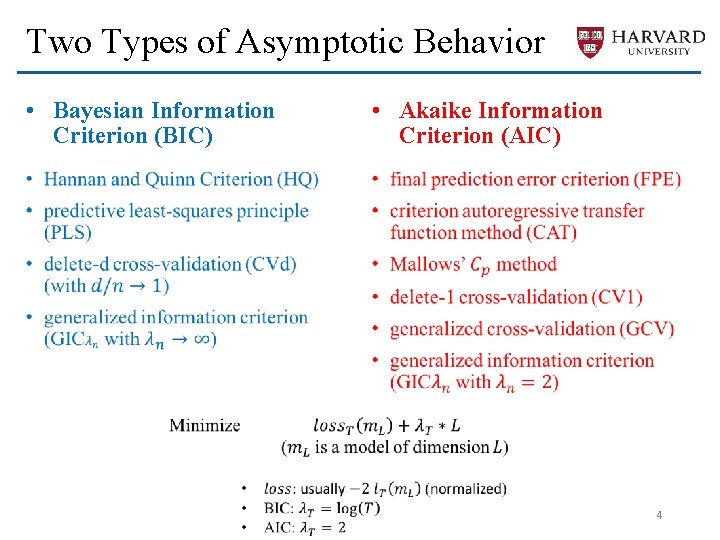

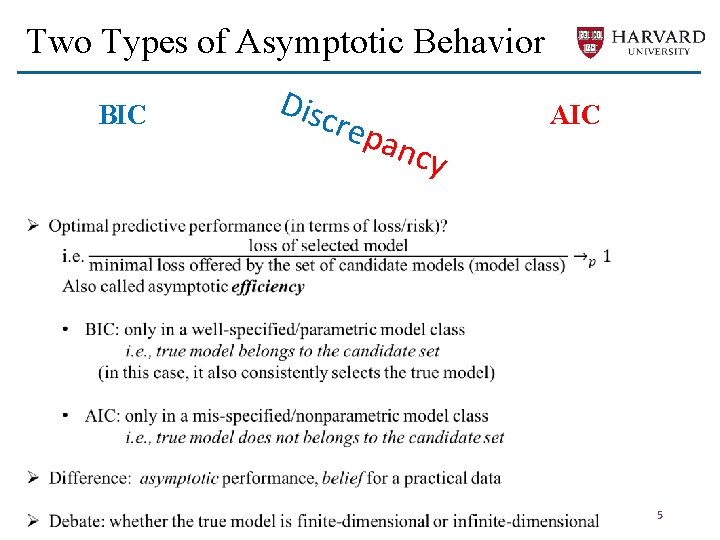

Two Types of Asymptotic Behavior

- Bayesian Information Criterion (BIC)
- Akaike Information Criterion (AIC)
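For reference, the two criteria in their standard textbook forms are written out below. The slide's own formulas did not survive extraction, so the notation here ($\hat{L}_k$ for the maximized likelihood of the candidate with $k$ parameters, $\hat{e}_k$ for the one-step prediction error of a fitted AR($k$) model, $n$ for the sample size) is an assumption, not the authors' notation.

```latex
\begin{align*}
\mathrm{AIC}(k) &= -2\log \hat{L}_k + 2k, \\
\mathrm{BIC}(k) &= -2\log \hat{L}_k + k\log n. \\
\intertext{For Gaussian autoregression, dividing by $n$ gives the equivalent forms}
\mathrm{AIC}(k) &= \log \hat{e}_k + \frac{2k}{n}, \\
\mathrm{BIC}(k) &= \log \hat{e}_k + \frac{k\log n}{n}.
\end{align*}
```

The two types of behavior follow from the penalty size: BIC's heavier penalty makes it consistent when the true model is among finitely many candidates, while AIC's lighter penalty makes it asymptotically efficient when no finite candidate is correct.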

Two Types of Asymptotic Behavior

[Figure labels: BIC, Discrepancy, AIC]

Challenges: an Illustration

- Case 1: eventually converge to the true dimension (if it exists)

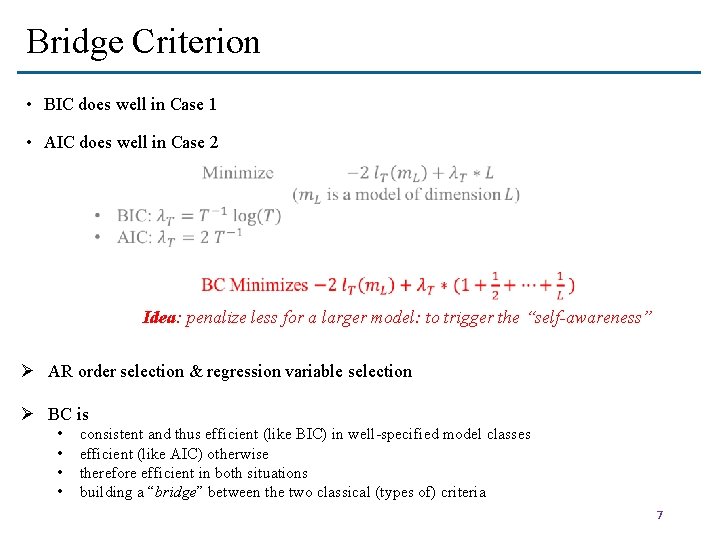

Bridge Criterion

- BIC does well in Case 1
- AIC does well in Case 2

Idea: penalize less for a larger model, to trigger the "self-awareness"

- AR order selection & regression variable selection
- BC is
  - consistent and thus efficient (like BIC) in well-specified model classes
  - efficient (like AIC) otherwise

  and is therefore efficient in both situations, building a "bridge" between the two classical (types of) criteria

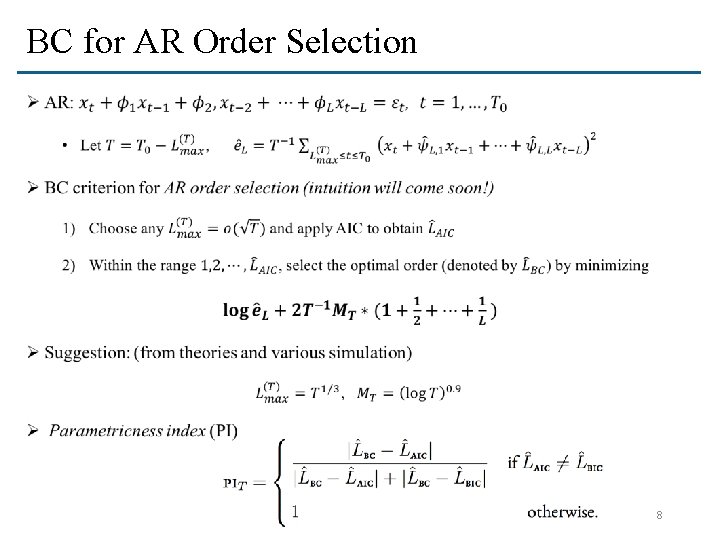
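The "penalize less for a larger model" idea can be made concrete by comparing per-order penalty increments. The sketch below assumes the BC penalty takes the form (2 M_n / n) (1 + 1/2 + ... + 1/k), which is recalled from the autoregression paper cited at the end of the talk rather than read off this slide. The function names and the choice M_n = 10 for n = 1000 are illustrative.

```python
import math

def aic_penalty(k, n):
    """AIC penalty for order k (normalized by n): 2k / n."""
    return 2.0 * k / n

def bic_penalty(k, n):
    """BIC penalty for order k (normalized by n): k log(n) / n."""
    return k * math.log(n) / n

def bc_penalty(k, n, m_n):
    """Assumed BC penalty: (2 * m_n / n) * (1 + 1/2 + ... + 1/k)."""
    return (2.0 * m_n / n) * sum(1.0 / j for j in range(1, k + 1))

def increment(penalty, k, *args):
    """Extra penalty paid for moving from order k - 1 to order k."""
    return penalty(k, *args) - penalty(k - 1, *args)

n, m_n = 1000, 10  # m_n is roughly n ** (1/3); an illustrative choice
for k in (1, 2, 5, 10):
    print(
        k,
        round(increment(aic_penalty, k, n), 5),
        round(increment(bic_penalty, k, n), 5),
        round(increment(bc_penalty, k, n, m_n), 5),
    )
```

Under this assumed form the BC increment shrinks like 1/k: it starts above BIC's constant increment for small orders and falls to AIC's increment at k = M_n.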

BC for AR Order Selection

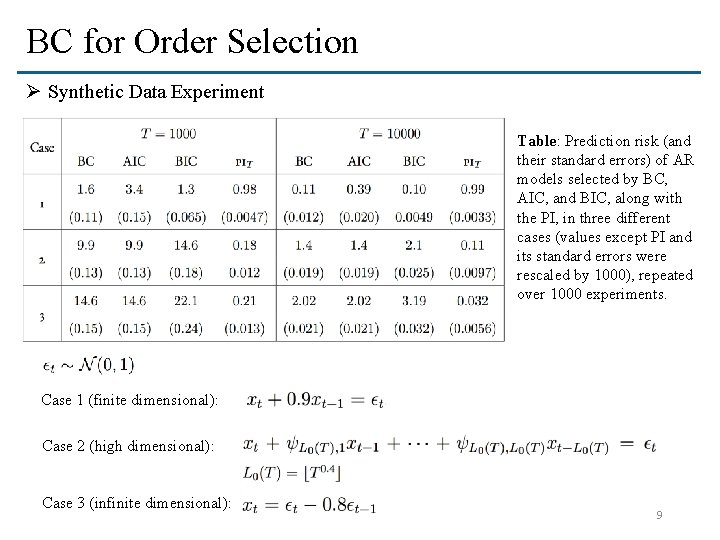
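A minimal sketch of AR order selection by penalized least squares follows. It is not the authors' implementation: the least-squares fit, the helper names (`simulate_ar`, `ar_residual_variance`, `select_order`), the AR(2) test signal, and the BC penalty form (2 M_n / n) (1 + 1/2 + ... + 1/k) with M_n near n^(1/3) are all assumptions made for illustration.

```python
import numpy as np

def simulate_ar(coefs, n, rng, burn_in=200):
    """Simulate x_t = sum_j coefs[j-1] * x_{t-j} + noise_t with N(0, 1) noise."""
    p = len(coefs)
    total = n + burn_in
    x = np.zeros(total)
    noise = rng.standard_normal(total)
    for t in range(p, total):
        past = x[t - p:t][::-1]  # x_{t-1}, ..., x_{t-p}
        x[t] = np.dot(coefs, past) + noise[t]
    return x[burn_in:]

def ar_residual_variance(x, k, max_order):
    """Mean squared one-step residual of a least-squares AR(k) fit.

    Every order is scored on the same n - max_order targets so that
    residual variances are comparable across k.
    """
    n = len(x)
    y = x[max_order:]
    # Column j - 1 holds lag j of the series, aligned with y.
    lags = np.column_stack([x[max_order - j:n - j] for j in range(1, k + 1)])
    coef, *_ = np.linalg.lstsq(lags, y, rcond=None)
    return float(np.mean((y - lags @ coef) ** 2))

def select_order(x, max_order, penalty):
    """Return the order in 1..max_order minimizing log(e_k) + penalty(k, n)."""
    n = len(x)
    scores = [
        np.log(ar_residual_variance(x, k, max_order)) + penalty(k, n)
        for k in range(1, max_order + 1)
    ]
    return 1 + int(np.argmin(scores))

n = 2000
m_n = int(round(n ** (1 / 3)))  # largest candidate order (assumed choice)
penalties = {
    "AIC": lambda k, n: 2.0 * k / n,
    "BIC": lambda k, n: k * np.log(n) / n,
    # Assumed BC form: harmonic-sum penalty scaled by 2 * m_n / n.
    "BC": lambda k, n: (2.0 * m_n / n) * np.sum(1.0 / np.arange(1, k + 1)),
}

rng = np.random.default_rng(0)
x = simulate_ar([0.5, -0.3], n, rng)  # hypothetical AR(2) example
orders = {name: select_order(x, m_n, pen) for name, pen in penalties.items()}
print(orders)
```

Scoring all candidate orders on a common set of targets is a design choice that keeps the residual variances nested, so that larger orders never fit worse.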

BC for Order Selection

- Synthetic Data Experiment

Table: Prediction risks (with standard errors) of AR models selected by BC, AIC, and BIC, along with the PI, in three different cases, over 1000 repeated experiments. Values except the PI and its standard errors were rescaled by 1000.

- Case 1 (finite dimensional)
- Case 2 (high dimensional)
- Case 3 (infinite dimensional)

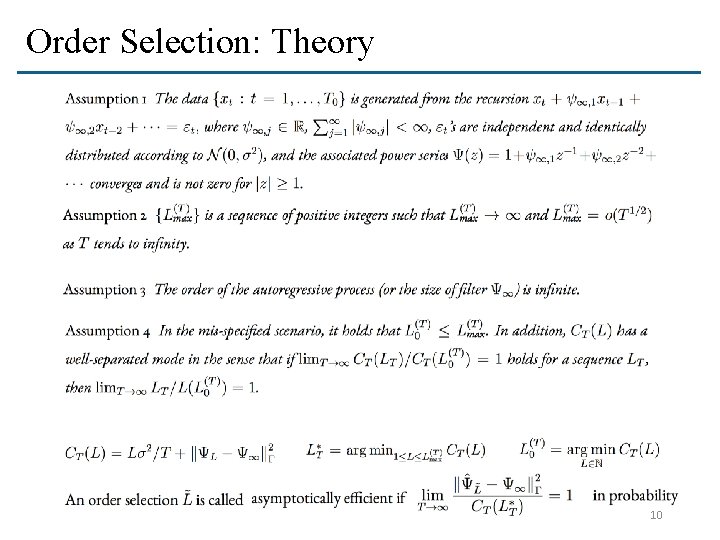

Order Selection: Theory

Order Selection: Theory

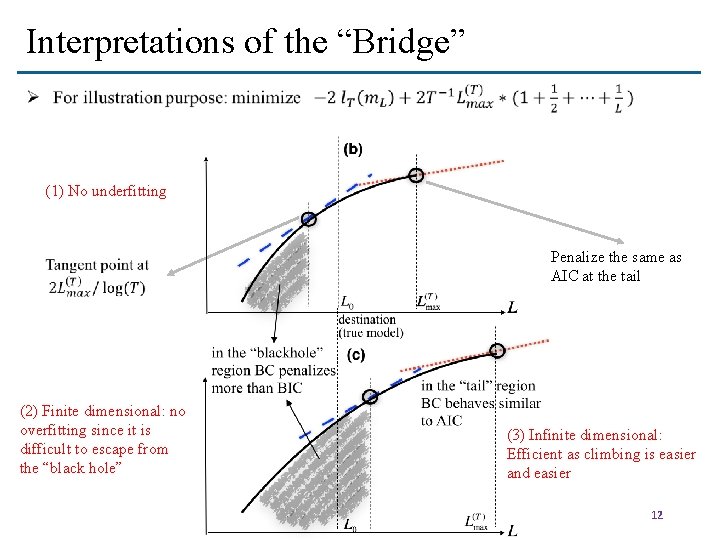

Interpretations of the "Bridge"

- (1) No underfitting
- (2) Finite dimensional: no overfitting, since it is difficult to escape from the "black hole"
- (3) Infinite dimensional: efficient, as climbing is easier and easier

Penalize the same as AIC at the tail

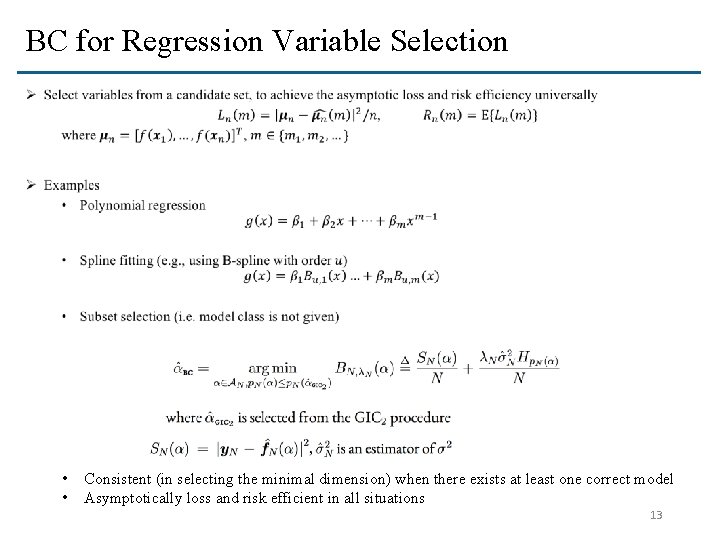
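These interpretations can be checked against the per-order penalty increments. The BC form below is recalled from the autoregression paper cited at the end of the talk and should be treated as an assumption here; $\hat{e}_k$ denotes the prediction error of the fitted AR($k$) model and $M_n$ the largest candidate order.

```latex
\begin{align*}
\mathrm{BC}(k) &= \log \hat{e}_k + \frac{2M_n}{n}\sum_{j=1}^{k}\frac{1}{j},
  \qquad 1 \le k \le M_n, \\
\Delta_{\mathrm{BC}}(k) &= \frac{2M_n}{nk}, \qquad
\Delta_{\mathrm{AIC}}(k) = \frac{2}{n}, \qquad
\Delta_{\mathrm{BIC}}(k) = \frac{\log n}{n}, \\
\Delta_{\mathrm{BC}}(k) \ge \Delta_{\mathrm{BIC}}(k)
  &\iff k \le \frac{2M_n}{\log n}, \qquad
\Delta_{\mathrm{BC}}(M_n) = \Delta_{\mathrm{AIC}}(M_n).
\end{align*}
```

Here $\Delta(k)$ is the extra penalty for going from order $k-1$ to order $k$. At small orders the increment is at least as heavy as BIC's, which is what makes overfitting hard in the finite-dimensional case. It decays like $1/k$, so each further step costs less, and at $k = M_n$ it coincides with AIC's increment.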

BC for Regression Variable Selection

- Consistent (in selecting the minimal dimension) when there exists at least one correct model
- Asymptotically loss and risk efficient in all situations

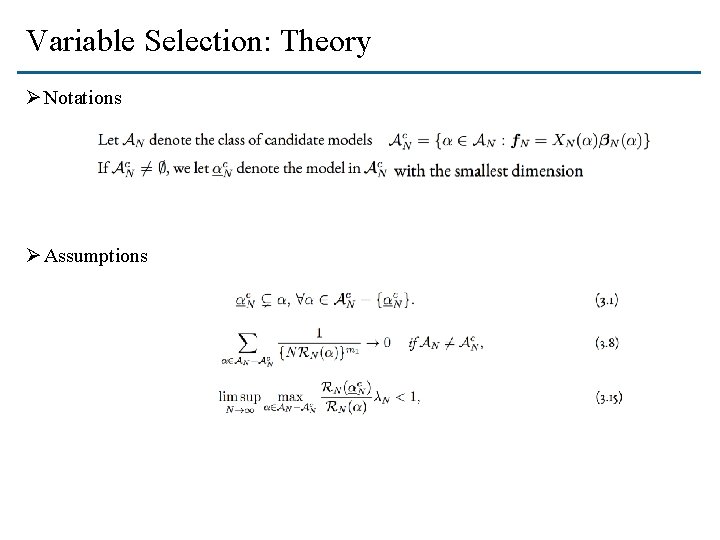

Variable Selection: Theory

- Notations
- Assumptions

Variable Selection: Theory

Extensions to High Dimension

- Two definitions of "high dimension"
  - Model size grows with data size
  - Model size is much larger than data size
- How to extend BC to the second case?
  - A byproduct: it is applicable when candidate subsets are not prescribed
- Extension of BC:
  - Prescreening (dimension reduction)
  - Subset weighting (to address high-dimensional instability)
  - BC applied to nested subsets (according to ordered variables)
  - Outperformed LASSO, SCAD, MCP, ESCV, etc. in our experiments
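The "nested subsets according to ordered variables" step can be sketched as follows. The ordering rule used here (absolute marginal correlation) is a stand-in, since the slides do not say how variables are ordered, and the subset-weighting step is omitted. The penalty is pluggable; BIC is used in the demo only because the regression form of BC is not given in the text. All names and the synthetic data are hypothetical.

```python
import numpy as np

def order_by_marginal_correlation(X, y):
    """Rank columns of X by absolute correlation with y (assumed ordering rule)."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    scores = np.abs(Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) + 1e-12)
    return np.argsort(-scores)

def select_nested_subset(X, y, order, max_size, penalty):
    """Score the nested subsets order[:1], order[:2], ... and return the best one.

    Each subset is fit by least squares (no intercept) and scored by
    n * log(RSS / n) + penalty(k, n).
    """
    n = len(y)
    best_score, best_k = np.inf, 1
    for k in range(1, max_size + 1):
        cols = order[:k]
        coef, *_ = np.linalg.lstsq(X[:, cols], y, rcond=None)
        rss = float(np.sum((y - X[:, cols] @ coef) ** 2))
        score = n * np.log(rss / n) + penalty(k, n)
        if score < best_score:
            best_score, best_k = score, k
    return order[:best_k]

def bic_penalty(k, n):
    """BIC penalty, used here as a placeholder for the criterion."""
    return k * np.log(n)

# Hypothetical data: 500 variables, 200 observations, 3 active variables.
rng = np.random.default_rng(0)
n_obs, n_vars = 200, 500
X = rng.standard_normal((n_obs, n_vars))
y = 3.0 * X[:, 0] - 3.0 * X[:, 1] + 3.0 * X[:, 2] + rng.standard_normal(n_obs)

order = order_by_marginal_correlation(X, y)
selected = select_nested_subset(X, y, order, max_size=20, penalty=bic_penalty)
print(sorted(selected.tolist()))
```

Restricting the search to nested subsets reduces the number of candidates from exponential in the number of variables to at most `max_size`, which is what makes an information criterion usable when there are more variables than observations.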

Extensions to High Dimension

Comparison in terms of risk, underfitted dimension, and overfitted dimension on 3 models

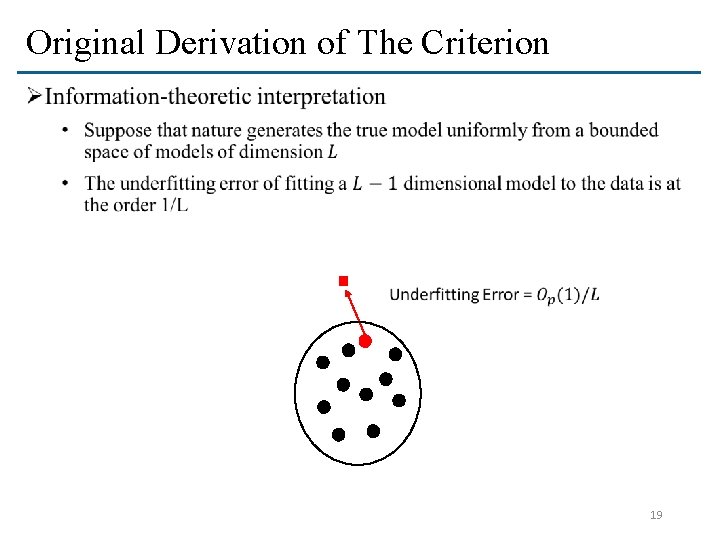

Original Derivation of the Criterion

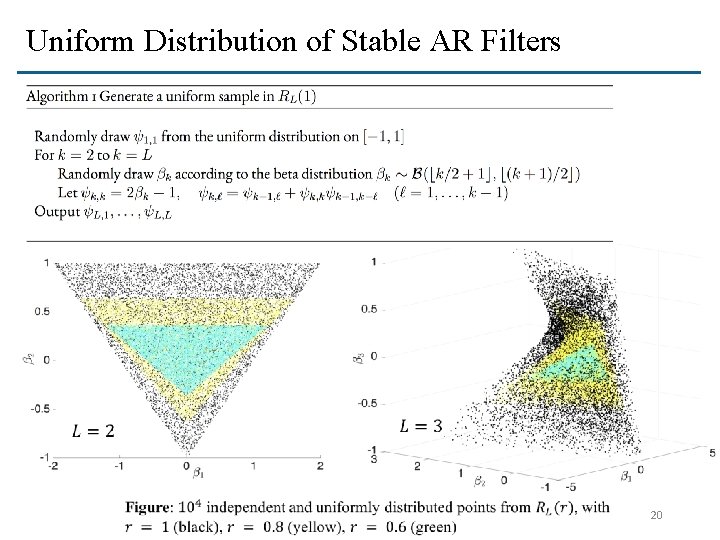

Uniform Distribution of Stable AR Filters
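One simple way to draw AR coefficient vectors uniformly from the stability region is rejection sampling, sketched below. This is not necessarily the construction used in the talk, whose details are not in the extracted text. It relies on two facts: an AR(p) filter x_t = a_1 x_{t-1} + ... + a_p x_{t-p} + e_t is stable exactly when all roots of z^p - a_1 z^(p-1) - ... - a_p lie strictly inside the unit circle, and in that case |a_j| is at most the binomial coefficient C(p, j). The function names are hypothetical.

```python
import math
import numpy as np

def is_stable(coefs):
    """True if all roots of z^p - a_1 z^(p-1) - ... - a_p are inside the unit circle."""
    poly = np.concatenate(([1.0], -np.asarray(coefs, dtype=float)))
    return bool(np.all(np.abs(np.roots(poly)) < 1.0))

def sample_stable_ar(order, rng, max_tries=100_000):
    """Draw one coefficient vector uniformly from the AR(order) stability region.

    Candidates are uniform on the box |a_j| <= C(order, j), which contains the
    stability region, so accepted draws are uniform on that region.
    """
    bounds = np.array([math.comb(order, j) for j in range(1, order + 1)], dtype=float)
    for _ in range(max_tries):
        candidate = rng.uniform(-bounds, bounds)
        if is_stable(candidate):
            return candidate
    raise RuntimeError("no stable filter found; try a smaller order")

rng = np.random.default_rng(0)
samples = np.array([sample_stable_ar(2, rng) for _ in range(200)])
print(samples[:3])
```

The acceptance rate falls quickly as the order grows, because the stability region occupies a shrinking fraction of the bounding box, so this sketch is only practical for small orders.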

Summary

- A new information criterion that achieves universal optimality
- A bridge between well-specified and misspecified settings, or between finite and infinite dimensions
- Future work: extension to broader statistical tasks

Thank you

Contact: jieding@fas.harvard.edu

References

- Jie Ding, Vahid Tarokh, and Yuhong Yang, "Bridging AIC and BIC: A New Criterion for Autoregression," IEEE Transactions on Information Theory
- Jie Ding, Vahid Tarokh, and Yuhong Yang, "Optimal Variable Selection in Regression Models," available at http://jding.org/jie-uploads/2017/03/variableselection.pdf

- Slides: 22