
Breaking the Commandments of Survey Design: Cognitive Testing of a Commonly Used Scale of Subjective Religious Experiences
Jessica LeBlanc
Center for Survey Research, University of Massachusetts Boston

Acknowledgments
• Funded by the Society for the Scientific Study of Religion, Jack Shand Research Award
• Philip Brenner and Carol Cosenza, Center for Survey Research, UMass Boston
• Andrea Leverentz, Department of Sociology, UMass Boston

Designing Survey Questions
In general, survey questions should meet some basic standards:
• Avoid vague terms or concepts
• Avoid double-barreled questions (asking two questions at once)
• Avoid embedded assumptions (“When you drive your car…”)
• Provide time frames (“In the past week…”)
• Provide response options that are mutually exclusive and exhaustive
Questions that meet these standards are more consistently understood by respondents (Fowler and Cosenza 2008).

Designing Survey Questions
• Despite these well-documented standards, not all survey questions follow the rules.
• The data from such questions are often taken at face value as “truth,” without considering how accurately the questions measure what they are intended to measure.

Presentation Objective
• Present the results of cognitive testing of selected questions from a commonly used survey scale that measures subjective religious experiences:
  • Daily Spiritual Experience Scale (DSES)
• By examining these results, we can better understand:
  • How do respondents interpret and answer questions that disregard design standards?
  • How might these interpretations affect the quality of the data collected?

Daily Spiritual Experience Scale
• 16-item scale used to measure the “inner experience of spiritual feelings” (Underwood 2006)
• Frequently used scale for measuring subjective aspects of religiosity
  • Originally developed for health outcomes studies
  • Increasingly used in the social sciences across a variety of topics (including in the GSS)
• Scale items are combined into index scores or used as individual measures
• Used in over 200 published studies since 2002 and translated into over 40 languages (dsescale.org)

Why the DSES?
• Existing work has evaluated error in survey questions that measure objective, quantifiable aspects of religiosity, such as church attendance and frequency of prayer
• Less work has evaluated questions that measure more subjective aspects of religiosity, such as the frequency of feelings or emotional experiences
• Topic salience: church attendance and frequency of prayer are only a small part of religious identity (Underwood 2006)
• DSES items are widely used

Methodology
• 25 cognitive interviews with religious Christians between March and July 2014
• Interviewer-administered survey with retrospective probing
• Diverse group in terms of age, race/ethnicity, and educational attainment
• The goal of cognitive testing was to evaluate 15 common survey questions used to measure various aspects of religiosity/spirituality:
  • Six items from the DSES (our focus)
  • Items from the General Social Survey, the American National Election Studies, and Pew Religion Studies

Methodology
• All interviews were transcribed by a professional transcription company
• Transcripts were coded for common patterns in respondent narration (responses to cognitive probes and information volunteered throughout the interview)
• Various themes emerged about respondents’ comprehension of questions
• Coding and analysis were done using NVivo software
• Specific quotes are used to highlight findings

DSES Items Tested
The list that follows includes items you may or may not experience. Please consider if and how often you have these experiences and try to disregard whether you feel you should or should not have them. A number of items use the word “God.” If this word is not a comfortable one, please substitute another idea to mean the divine or holy for you.
I find strength in my religion or spirituality
☐ Many times a day
☐ Every day
☐ Most days
☐ Some days
☐ Once in a while
☐ Never or almost never

DSES Items Tested
• I feel God’s presence
• I find comfort in my religion or spirituality
• During worship, or at other times when connecting with God, I feel joy, which lifts me out of my daily concerns
• I ask for God’s help in the midst of daily activities
• I feel guided by God in the midst of daily activities
☐ Many times a day
☐ Every day
☐ Most days
☐ Some days
☐ Once in a while
☐ Never or almost never

Findings
• Patterns in the transcripts show that the DSES breaks many of the “commandments” of survey design.
• These design issues affect the way respondents understand and respond to questions.
• The findings below describe how respondents handled the tested items with respect to:
  • Lack of a specified time frame
  • Lack of mutually exclusive response categories
  • Double-barreled questions
  • Unclear terms/words (response categories)

Lack of Time Frame
• Items in the DSES do not give respondents a specific period of time to reference, such as “in the past week” or “in the past month.”
• Respondents must make their own judgments about what to include.
• The lack of a time frame also assumes that these experiences follow a regular, consistent pattern.

Lack of Time Frame
• Questions were easiest to answer for respondents whose experiences were consistent from day to day and had not changed much over time.
• It was more difficult for those with less regular experiences to decide on an answer.
• For example, when asked how often she “feels guided by God in the midst of daily activities,” one respondent said: “Jeez. This – I – this all depends on what day it is, really. So let me go with once in a while.”

Lack of Time Frame
• Some respondents stretched the time frame to include experiences from as far back as a couple of years ago, while others thought only about the recent past.
• In addition, respondents based their answers on what was going on in their lives at a particular time, which varies from day to day.
• Respondents described how “some days are tougher than others,” which made it difficult for them to decide on an answer.

Lack of Time Frame
• For example, one respondent answered “every day” to feeling guided by God in the midst of daily activities.
• She explained that on some days she feels guided in making decisions many times a day, while on other days she does not need guidance at all.
• Her response of “every day” was a way of averaging out these inconsistent experiences.
• This problem stems partly from the lack of a specified time frame and partly from the lack of mutually exclusive response categories.

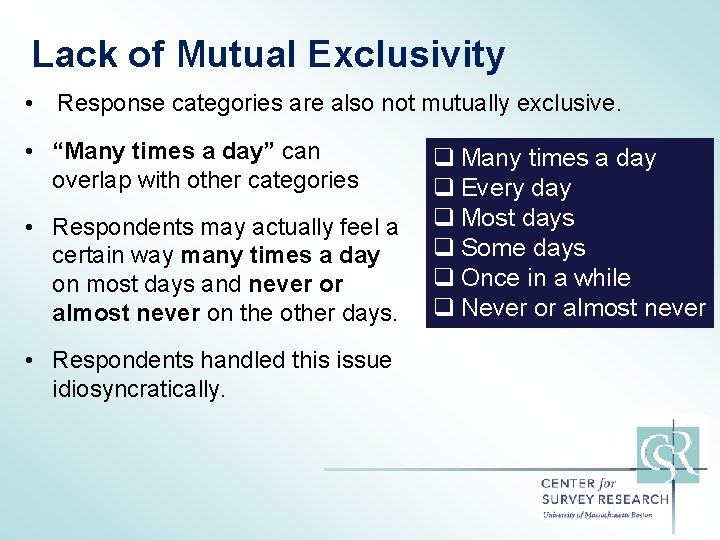

Lack of Mutual Exclusivity
• The response categories are also not mutually exclusive.
• “Many times a day” can overlap with other categories.
• Respondents may actually feel a certain way many times a day on most days and never or almost never on other days.
• Respondents handled this issue idiosyncratically.
☐ Many times a day
☐ Every day
☐ Most days
☐ Some days
☐ Once in a while
☐ Never or almost never

Lack of Mutual Exclusivity
• For example, one respondent stated that on some days she feels joy from connecting with God “many times a day,” but that on other days it does not happen at all. She chose “some days” as her answer.
• Her response was a way of averaging out the two options, but the answer does not accurately reflect her experience.
• Other respondents with the same pattern (“many times a day,” but only on some days) simply chose “many times a day.”

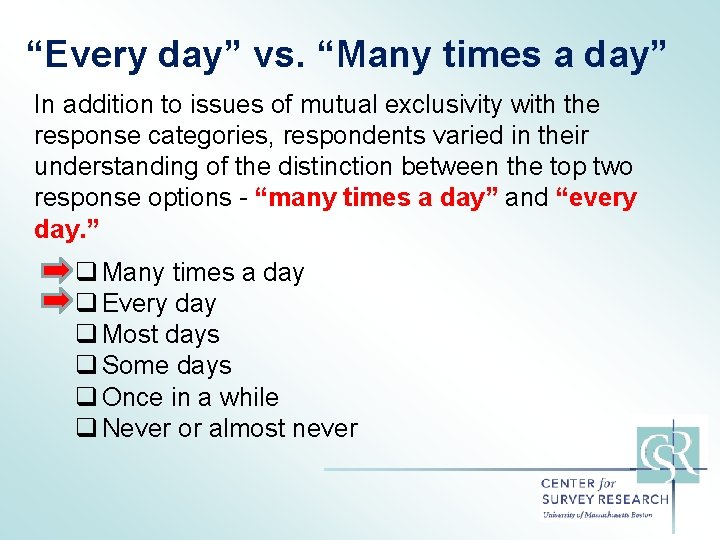

“Every day” vs. “Many times a day”
In addition to the lack of mutual exclusivity among the response categories, respondents varied in how they understood the distinction between the top two response options: “many times a day” and “every day.”
☐ Many times a day
☐ Every day
☐ Most days
☐ Some days
☐ Once in a while
☐ Never or almost never

“Every day” vs. “Many times a day”
• To some, “many times a day” meant “all the time,” “near-constant,” “constant,” or “throughout the day.”
• Others interpreted “every day” as “constant” or “near-constant,” while “many times a day” meant separate, discrete experiences throughout the day.
• Still other respondents used “every day” to mean only once per day, and “many times a day” to mean more than once a day.

“Every day” vs. “Many times a day”
• The order of the response categories was sometimes at odds with respondents’ interpretations:
“The ‘many times a day’ and the ‘every day’ – the ‘many times a day’ is more than just the ‘every day,’ right? Because it seems like almost the same.”
• After some discussion, this respondent explained that she thought “every day” meant “so much more” than “many times a day,” but that the response ordering confused her (“I noticed it goes down”).

Double-Barreled Questions
“During worship or at other times when connecting with God, I feel joy which lifts me out of my daily concerns”
• The question asks how often the respondent feels joy.
• However, this frequency is not measured across every moment of every day, as the other questions imply, but rather as how often the emotion is felt while engaging in a distinct activity (“worship” or “connecting with God”). In effect, the item combines two questions: how often the respondent worships or connects with God, and how often that experience brings joy.
• The response categories nonetheless ask about frequency on a day-to-day basis, ranging from “many times a day” to “never or almost never.”

“Okay, do you mean like when I do it, no matter if I do it once a year, whatever, how I feel when I do it? Or do you mean if I do it on a daily basis, what – how do I feel?”
• This respondent ultimately chose “every day,” explaining that on every day she connects with God, she feels this joy.
• However, she does not connect with God every day; she explained that it is much less often than that (maybe closer to once a week).
• As a result, the data record an answer of “every day” for someone who actually feels this way about once a week.

Now let’s compare two respondents who both answered “some days” to this question:
“During worship or at other times when connecting with God, I feel joy which lifts me out of my daily concerns”
• One respondent said “some days” because although he “worships or connects with God” multiple times a day, he feels a sense of “joy” from it only “about less than half of the time.”
• Another respondent said “some days” because she feels “joy” on some of the days she attends worship services (about once a week or less often).
• Two respondents with very different experiences end up with the same response.

Discussion
• The findings discussed here are only a small selection of the results of my interviews.
• Analysis also found variation in how respondents interpreted question concepts, such as what it meant to find “strength” or “comfort.”
• Some of this variation seems to stem from the topic area and the personal, complex nature of these “inner experiences of religiosity.”
• However, the results suggest that many interpretation problems arise because the scale does not follow standard survey question design practices.

Discussion
“During worship, or at other times when connecting with God, I feel joy which lifts me out of my daily concerns”
• The question was difficult not only because “joy” and “connecting with God” have very personal interpretations, but also because of problems with the overall question construction.
• As written, the question assumes that respondents feel the same way every time they worship or connect with God.
• The problems with the question stem were made worse by response options that were inconsistently interpreted and not mutually exclusive.

Discussion
• Given inconsistent interpretations of “every day” vs. “many times a day,” can we categorize respondents as “more” or “less” religious based on these categories?
• “Many times a day” was intended to measure a higher frequency than “every day,” but many respondents think the opposite.
• If one respondent says “some days” for about twice a month, while another says “some days” for about 5 to 6 times a week, are these scores an accurate way to compare respondents’ levels of religiosity?

Limitations
• While the sample was diverse in some respects, it was limited in geographic location and religious background
  • Not able to interview outside of Boston
  • Necessary to limit to Christian respondents because of other questions in the interview
• Only a 6-item subsample of the full DSES was tested
• Broader scope: design issues may be exacerbated by the unique topic area

Conclusions
• A key to good question design is that questions should be consistently understood.
• The best way to achieve this is to follow standards that make clear what the respondent is being asked about.
• This study provides evidence that the DSES items are not consistently understood, despite the DSES being a “validated” scale that is frequently used in published research.
• The findings call into question whether these scores are accurate measures for comparing respondents with one another.

Conclusions
• While this study focused on the DSES, we can draw reasonable conclusions about how respondents interpret and respond to other questions that similarly break design standards.
• Researchers who use the DSES or other similarly constructed questions should be wary of what the data are really measuring.

Thank you! Jessica.LeBlanc@umb.edu
