Bounding the error of misclassification Usman Roshan


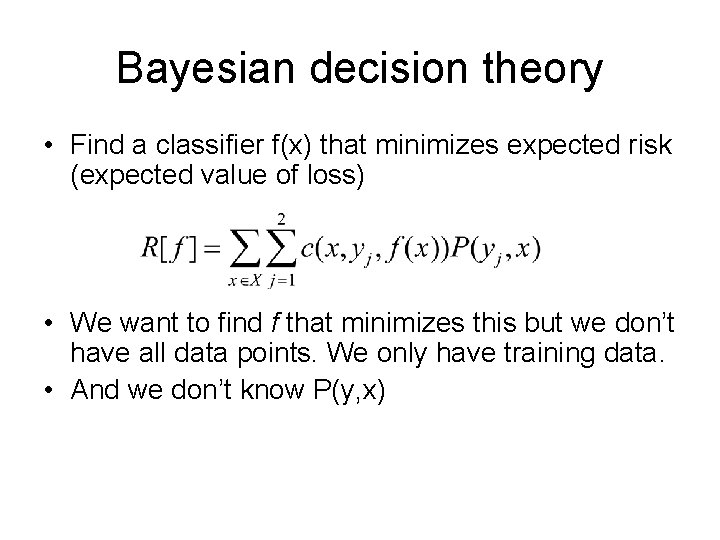

Bayesian decision theory
• Find a classifier f(x) that minimizes the expected risk (the expected value of the loss).
• We want to find the f that minimizes this, but we don’t have all data points. We only have training data.
• And we don’t know P(x, y).
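For reference, the expected risk can be written in standard notation as below. The symbol L for the loss function is an assumption here, since the slide's own formula lives in the image and may use different notation.

```latex
R(f) \;=\; \mathbb{E}_{(x,y)\sim P}\bigl[\,L(f(x), y)\,\bigr]
     \;=\; \int L(f(x), y)\, dP(x, y)
```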

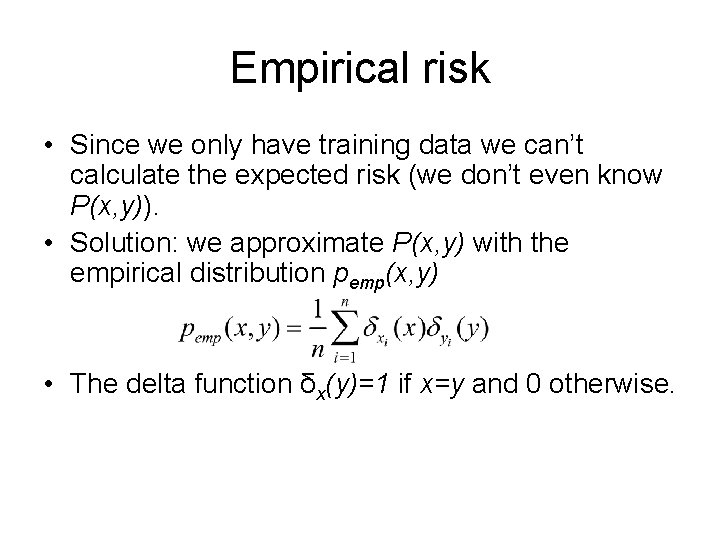

Empirical risk
• Since we only have training data, we can’t calculate the expected risk (we don’t even know P(x, y)).
• Solution: we approximate P(x, y) with the empirical distribution p_emp(x, y).
• The delta function δ_x(y) = 1 if x = y and 0 otherwise.
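A common way to write the empirical distribution, assuming n training pairs (x_i, y_i) and the delta function as defined above, is the following. The index i and the sample size n are notation assumed for this sketch.

```latex
p_{\mathrm{emp}}(x, y) \;=\; \frac{1}{n} \sum_{i=1}^{n} \delta_{x_i}(x)\, \delta_{y_i}(y)
```

This places mass 1/n on each observed training pair and zero mass everywhere else.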

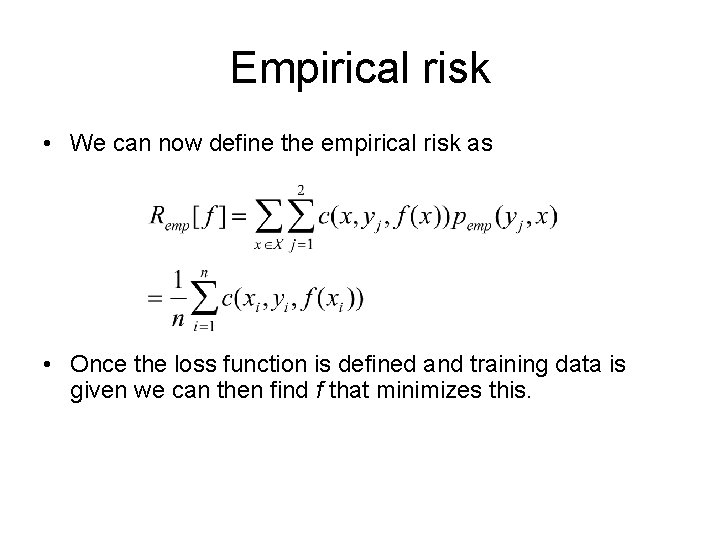

Empirical risk
• We can now define the empirical risk as the average loss over the training data.
• Once the loss function is defined and the training data is given, we can find the f that minimizes this.
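A minimal sketch of the empirical risk as an average loss, R_emp(f) = (1/n) Σ L(f(x_i), y_i). The 0/1 loss, the toy data set, and the threshold classifier are all hypothetical choices made for illustration; they do not come from the slides.

```python
def zero_one_loss(prediction, label):
    """Return 1 for a misclassification and 0 for a correct prediction."""
    return 0 if prediction == label else 1


def empirical_risk(f, data, loss=zero_one_loss):
    """Average loss of classifier f over the (x, y) pairs in data."""
    return sum(loss(f(x), y) for x, y in data) / len(data)


# Hypothetical toy training set of (x, y) pairs with labels in {-1, +1}.
train = [(-2.0, -1), (-1.0, -1), (-0.5, 1), (0.5, 1), (1.5, 1), (2.0, -1)]


def threshold_classifier(x):
    """Predict +1 when x is positive, otherwise -1."""
    return 1 if x > 0 else -1


# Two of the six points are misclassified, so the empirical risk is 2/6.
risk = empirical_risk(threshold_classifier, train)
print(risk)
```

Minimizing the empirical risk then means searching over candidate classifiers f for the one with the smallest value of `empirical_risk(f, train)`.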

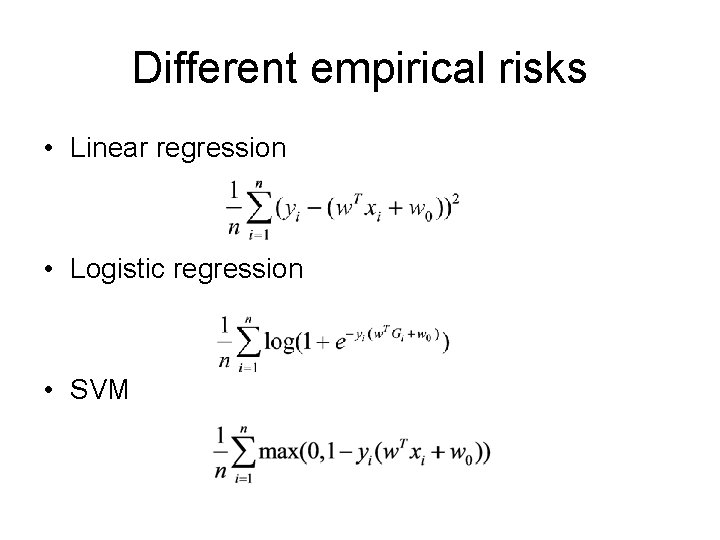

Different empirical risks
• Linear regression
• Logistic regression
• SVM
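The three methods differ in the loss plugged into the empirical risk. The sketch below uses the textbook choices (squared loss, logistic loss, hinge loss) with labels in {-1, +1} and a one-dimensional linear score w·x + b. These forms are assumptions, since the slide's formulas are in the image, and the regularization term that usually accompanies the SVM objective is left out.

```python
import math


def squared_loss(score, y):
    """Loss used by linear regression (least squares)."""
    return (score - y) ** 2


def logistic_loss(score, y):
    """Loss used by logistic regression with labels in {-1, +1}."""
    return math.log(1.0 + math.exp(-y * score))


def hinge_loss(score, y):
    """Loss used by the SVM with labels in {-1, +1}."""
    return max(0.0, 1.0 - y * score)


def empirical_risk(w, b, data, loss):
    """Average loss of the linear score w * x + b over the data."""
    return sum(loss(w * x + b, y) for x, y in data) / len(data)


# Hypothetical one-dimensional data and parameters, for illustration only.
data = [(-2.0, -1), (-1.0, -1), (1.0, 1), (2.0, 1)]
w, b = 1.0, 0.0

risks = {
    "squared": empirical_risk(w, b, data, squared_loss),
    "logistic": empirical_risk(w, b, data, logistic_loss),
    "hinge": empirical_risk(w, b, data, hinge_loss),
}
print(risks)
```

The same data and the same f give three different risk values, which is why the three methods can prefer different classifiers.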

Does it make sense to optimize empirical risk?
• Does R_emp(f) approach R(f) as we increase the sample size?
• Remember that f is our classifier. We are asking whether the empirical error of f approaches the expected error of f with more samples.
• Yes, according to the law of large numbers: the mean of a random sample approaches the true mean as the sample size increases.
• But how fast does it converge?
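The law of large numbers can be checked with a small simulation. This sketch assumes a fixed classifier whose true error rate is 0.3 (a made-up value) and models each test of the classifier as an independent coin flip with that error probability.

```python
import random


def simulate_gap(n, true_risk=0.3, seed=0):
    """Return |R_emp - R| for n simulated i.i.d. error indicators."""
    rng = random.Random(seed)
    errors = sum(1 for _ in range(n) if rng.random() < true_risk)
    return abs(errors / n - true_risk)


# Gap between empirical and expected risk for increasing sample sizes.
gaps = {n: simulate_gap(n) for n in (10, 100, 1_000, 100_000)}
for n, gap in gaps.items():
    print(n, gap)
```

The gap is random, so it need not shrink at every step, but it becomes small once n is large.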

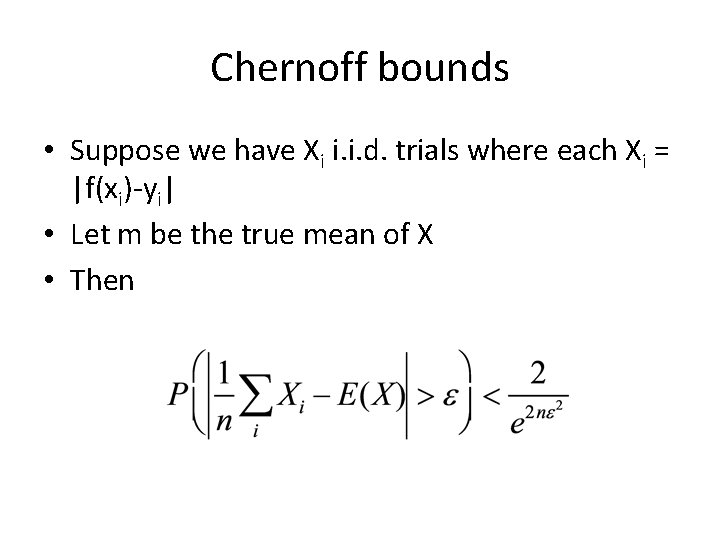

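Chernoff bounds
• Suppose we have i.i.d. trials X_i, where each X_i = |f(x_i) − y_i|.
• Let m be the true mean of X.
• Then the probability that the sample mean of the X_i deviates from m by a fixed amount decreases exponentially in the number of trials.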
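One widely used form of this bound for trials taking values in [0, 1] is P(|(1/n) Σ X_i − m| ≥ ε) ≤ 2·exp(−2nε²). The sketch below assumes that form (the constants in the slide's image may differ) and uses it to ask how many samples are needed for a target accuracy ε and failure probability δ, both of which are illustrative names.

```python
import math


def chernoff_bound(n, eps):
    """Upper bound 2 * exp(-2 * n * eps^2) on P(|sample mean - m| >= eps), capped at 1."""
    return min(1.0, 2.0 * math.exp(-2.0 * n * eps ** 2))


def samples_needed(eps, delta):
    """Smallest n for which the bound above is at most delta."""
    return math.ceil(math.log(2.0 / delta) / (2.0 * eps ** 2))


# Samples needed so that the empirical mean is within 0.05 of m
# with probability at least 0.95, under the assumed form of the bound.
n = samples_needed(eps=0.05, delta=0.05)
print(n, chernoff_bound(n, 0.05))
```

The required n grows like 1/ε², so halving the tolerated deviation roughly quadruples the number of samples.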

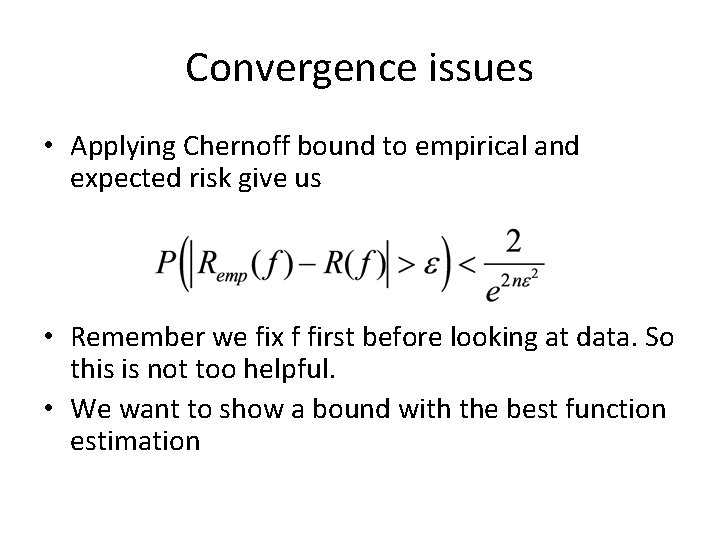

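Convergence issues
• Applying the Chernoff bound to the empirical and expected risk gives us a bound on how far R_emp(f) can be from R(f) for a single f.
• Remember that we fix f first, before looking at the data. So this is not too helpful: the classifier we actually use is chosen after seeing the training data.
• We want a bound that holds for the best function estimated from the data.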
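The following sketch illustrates why a bound for a fixed f does not carry over to an f chosen from the data. It is a constructed example, not taken from the slides: labels are fair coin flips, so every classifier has expected risk 0.5, and each hypothetical "classifier" is just a table of random predictions.

```python
import random

rng = random.Random(0)
n, num_classifiers = 50, 1000

# Labels are fair coin flips, so no classifier can beat expected risk 0.5.
labels = [rng.choice((0, 1)) for _ in range(n)]


def empirical_risk(predictions, labels):
    """Fraction of points where the prediction differs from the label."""
    return sum(abs(p - y) for p, y in zip(predictions, labels)) / len(labels)


# Each hypothetical classifier is a fixed table of random predictions.
risks = []
for _ in range(num_classifiers):
    predictions = [rng.choice((0, 1)) for _ in range(n)]
    risks.append(empirical_risk(predictions, labels))

first_risk = risks[0]   # classifier fixed before looking at the data
best_risk = min(risks)  # classifier chosen after looking at the data
print(first_risk, best_risk)
```

The classifier picked by minimizing the empirical risk looks much better than 0.5 on the training data even though its expected risk is still 0.5, so its empirical risk is a biased estimate.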

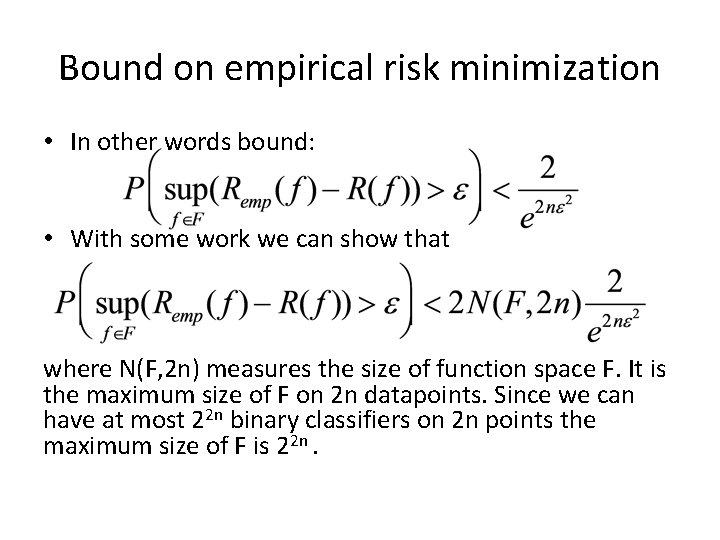

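Bound on empirical risk minimization
• In other words, bound the gap between expected and empirical risk uniformly over all functions in F.
• With some work we can show such a bound in terms of N(F, 2n), which measures the size of the function space F: it is the maximum number of distinct labelings that functions in F can produce on 2n data points.
• Since we can have at most 2^(2n) binary classifiers on 2n points, N(F, 2n) is at most 2^(2n).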
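One standard form of such a uniform bound is P(sup over f in F of |R(f) − R_emp(f)| > ε) ≤ 2·N(F, 2n)·exp(−nε²/4). The sketch below assumes this form, and the constants in the slide's image may differ. It takes ln N(F, 2n) as input to avoid overflow, and the restricted class of 1000 labelings is a made-up example.

```python
import math


def uniform_bound(log_size, n, eps):
    """min(1, 2 * N * exp(-n * eps^2 / 4)), with N passed as log_size = ln N(F, 2n)."""
    exponent = math.log(2.0) + log_size - n * eps ** 2 / 4.0
    # A non-negative exponent means the bound is at least 1, i.e. vacuous.
    return 1.0 if exponent >= 0 else math.exp(exponent)


n, eps = 10_000, 0.1

# Unrestricted function space: N(F, 2n) = 2^(2n), so ln N = 2n * ln 2.
unrestricted = uniform_bound(2 * n * math.log(2.0), n, eps)

# Hypothetical restricted space that realizes at most 1000 labelings.
restricted = uniform_bound(math.log(1000.0), n, eps)

print(unrestricted, restricted)
```

With the maximal size 2^(2n) the bound never drops below 1, so it says nothing; it only becomes useful when F is restricted enough that N(F, 2n) grows more slowly than the exponential term shrinks.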

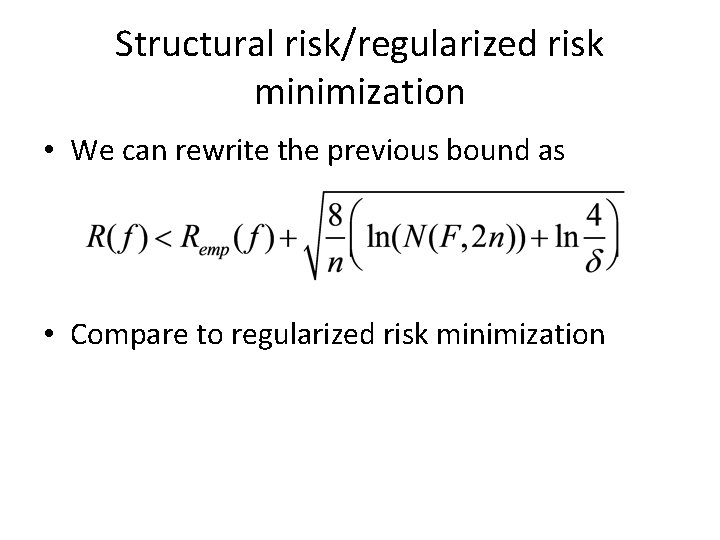

Structural risk/regularized risk minimization
• We can rewrite the previous bound as an upper bound on the expected risk.
• Compare to regularized risk minimization.
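As a sketch of the rewriting step, assume the uniform bound has the form P(sup over f in F of |R(f) − R_emp(f)| > ε) ≤ 2·N(F, 2n)·exp(−nε²/4); the exact constants in the slides may differ. Setting the right-hand side equal to a failure probability δ and solving for ε gives the first two lines below. The third line is the usual regularized risk, where the regularizer Ω and the weight λ are notation assumed here.

```latex
\begin{align*}
\delta &= 2\,N(F, 2n)\,\exp\!\left(-\frac{n\varepsilon^{2}}{4}\right)
\;\Longrightarrow\;
\varepsilon = \sqrt{\frac{4}{n}\Bigl(\log\bigl(2\,N(F, 2n)\bigr) - \log\delta\Bigr)} \\
R(f) &\le R_{\mathrm{emp}}(f)
  + \sqrt{\frac{4}{n}\Bigl(\log\bigl(2\,N(F, 2n)\bigr) - \log\delta\Bigr)}
  \quad \text{with probability at least } 1 - \delta \\
R_{\mathrm{reg}}(f) &= R_{\mathrm{emp}}(f) + \lambda\,\Omega(f)
\end{align*}
```

Both expressions add a penalty to the empirical risk: in the bound it grows with the size of the function space, and in regularized risk minimization it grows with the complexity of f as measured by Ω.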
