
Bottom-up Parsing

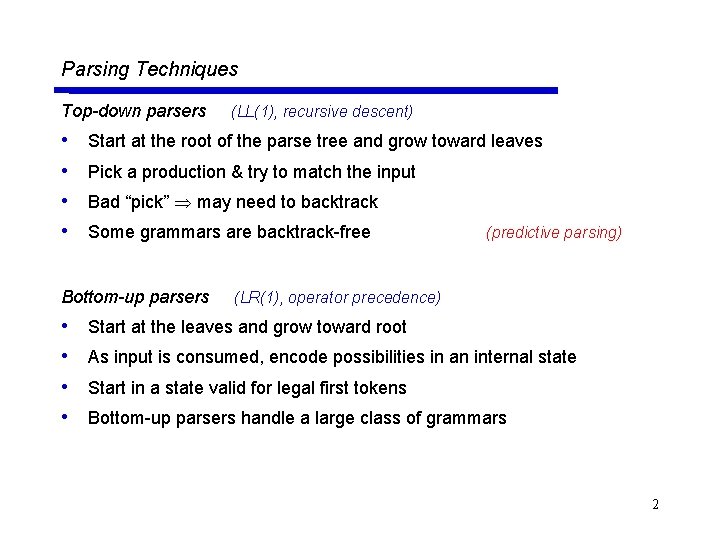

Parsing Techniques

Top-down parsers (LL(1), recursive descent)
• Start at the root of the parse tree and grow toward leaves
• Pick a production & try to match the input
• Bad “pick” may need to backtrack
• Some grammars are backtrack-free (predictive parsing)

Bottom-up parsers (LR(1), operator precedence)
• Start at the leaves and grow toward root
• As input is consumed, encode possibilities in an internal state
• Start in a state valid for legal first tokens
• Bottom-up parsers handle a large class of grammars

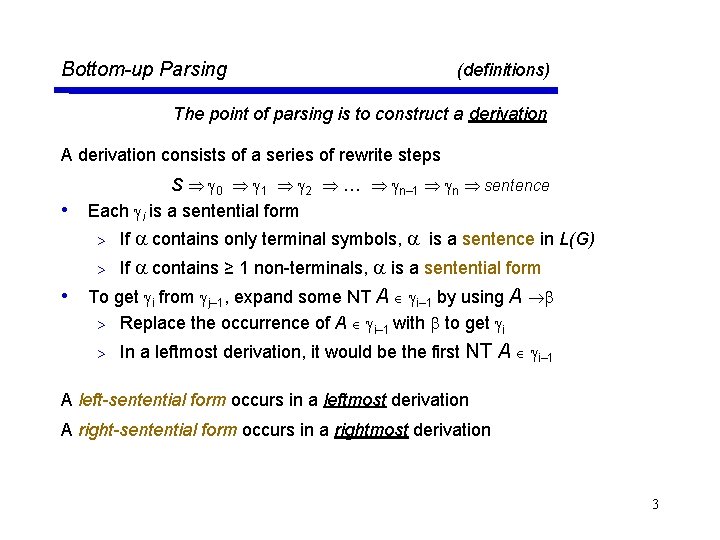

Bottom-up Parsing (definitions)

The point of parsing is to construct a derivation

A derivation consists of a series of rewrite steps

S ⇒ γ₀ ⇒ γ₁ ⇒ γ₂ ⇒ … ⇒ γₙ₋₁ ⇒ γₙ ⇒ sentence

• Each γᵢ is a sentential form
  > If γ contains only terminal symbols, γ is a sentence in L(G)
  > If γ contains ≥ 1 non-terminals, γ is a sentential form
• To get γᵢ from γᵢ₋₁, expand some NT A ∈ γᵢ₋₁ by using A → β
  > Replace the occurrence of A ∈ γᵢ₋₁ with β to get γᵢ
  > In a leftmost derivation, it would be the first NT A ∈ γᵢ₋₁

A left-sentential form occurs in a leftmost derivation
A right-sentential form occurs in a rightmost derivation

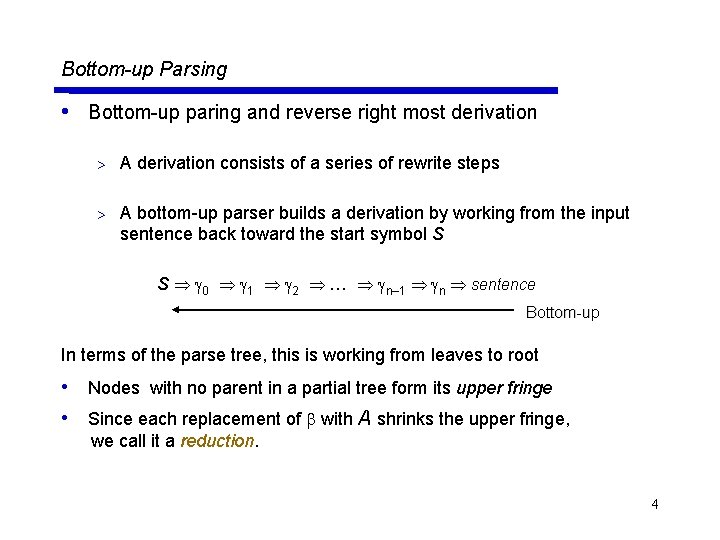

Bottom-up Parsing

• Bottom-up parsing and reverse rightmost derivation
  > A derivation consists of a series of rewrite steps
  > A bottom-up parser builds a derivation by working from the input sentence back toward the start symbol S

S ⇒ γ₀ ⇒ γ₁ ⇒ γ₂ ⇒ … ⇒ γₙ₋₁ ⇒ γₙ ⇒ sentence (bottom-up: read right to left)

In terms of the parse tree, this is working from leaves to root
• Nodes with no parent in a partial tree form its upper fringe
• Since each replacement of β with A shrinks the upper fringe, we call it a reduction.

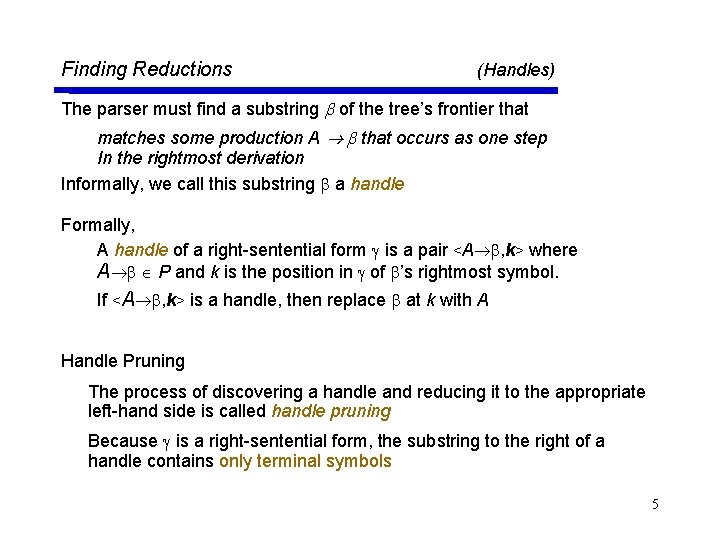

Finding Reductions (Handles)

The parser must find a substring β of the tree’s frontier that matches some production A → β that occurs as one step in the rightmost derivation

Informally, we call this substring β a handle

Formally,
A handle of a right-sentential form γ is a pair <A → β, k> where A → β ∈ P and k is the position in γ of β’s rightmost symbol.
If <A → β, k> is a handle, then replace β at k with A

Handle Pruning
The process of discovering a handle and reducing it to the appropriate left-hand side is called handle pruning

Because γ is a right-sentential form, the substring to the right of a handle contains only terminal symbols

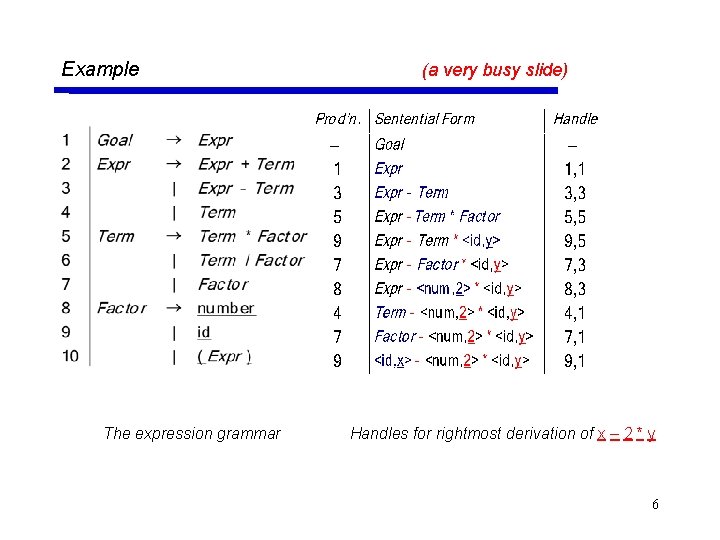

Example

The expression grammar (a very busy slide)

Handles for rightmost derivation of x – 2 * y
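Handle pruning for x – 2 * y can be replayed mechanically. The sketch below is illustrative only: the grammar on the slide is an image, so the productions here assume the classic left-recursive expression grammar (Goal → Expr, Expr → Expr – Term | Term, Term → Term * Factor | Factor, Factor → id | num). The helper `reduce_handle` and the `HANDLES` list are hypothetical names, not part of the slides. Each handle is a triple (A, β, k) with k the 1-based position of β's rightmost symbol.

```python
# Hypothetical helper: apply one handle <A -> beta, k> to a right-sentential form.
def reduce_handle(form, lhs, rhs, k):
    """Replace beta, whose rightmost symbol is at 1-based position k, with A."""
    start = k - len(rhs)  # 0-based index of beta's leftmost symbol
    if start < 0 or form[start:k] != rhs:
        raise ValueError(f"{lhs} -> {' '.join(rhs)} does not end at position {k}")
    return form[:start] + [lhs] + form[k:]


# Assumed handle sequence for x - 2 * y (tokens shown by category: id, num).
HANDLES = [
    ("Factor", ["id"], 1),
    ("Term", ["Factor"], 1),
    ("Expr", ["Term"], 1),
    ("Factor", ["num"], 3),
    ("Term", ["Factor"], 3),
    ("Factor", ["id"], 5),
    ("Term", ["Term", "*", "Factor"], 5),
    ("Expr", ["Expr", "-", "Term"], 3),
    ("Goal", ["Expr"], 1),
]


def prune(form):
    """Return every sentential form visited while pruning the handles in order."""
    trace = [list(form)]
    for lhs, rhs, k in HANDLES:
        form = reduce_handle(form, lhs, rhs, k)
        trace.append(form)
    return trace


TRACE = prune(["id", "-", "num", "*", "id"])
for sentential_form in TRACE:
    print(" ".join(sentential_form))
```

Read bottom to top, the printed forms are the rightmost derivation of the sentence; everything to the right of each handle is still made of terminals.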

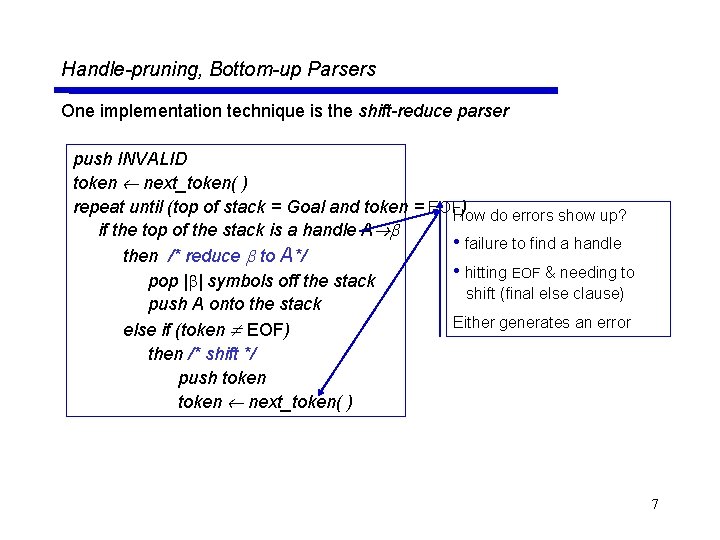

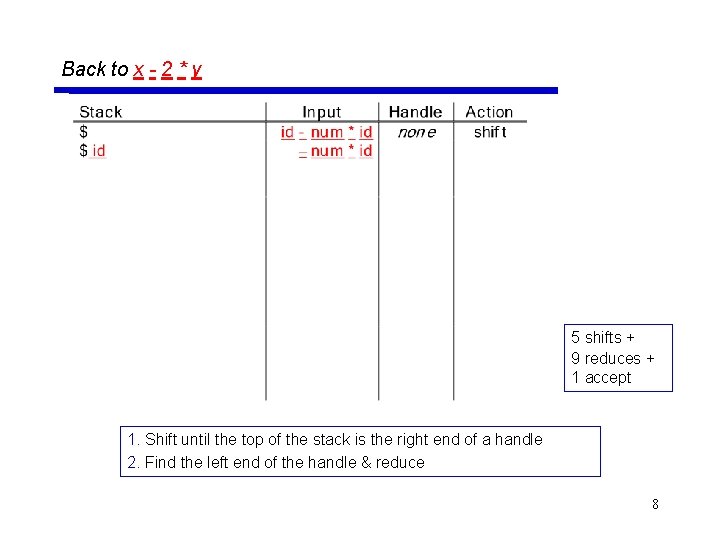

Handle-pruning, Bottom-up Parsers

One implementation technique is the shift-reduce parser

    push INVALID
    token ← next_token( )
    repeat until (top of stack = Goal and token = EOF)
        if the top of the stack is a handle A → β
            then /* reduce β to A */
                pop |β| symbols off the stack
                push A onto the stack
        else if (token ≠ EOF)
            then /* shift */
                push token
                token ← next_token( )
        else /* out of input with no handle on the stack */
            report an error

How do errors show up?
• failure to find a handle
• hitting EOF & needing to shift (final else clause)
Either generates an error

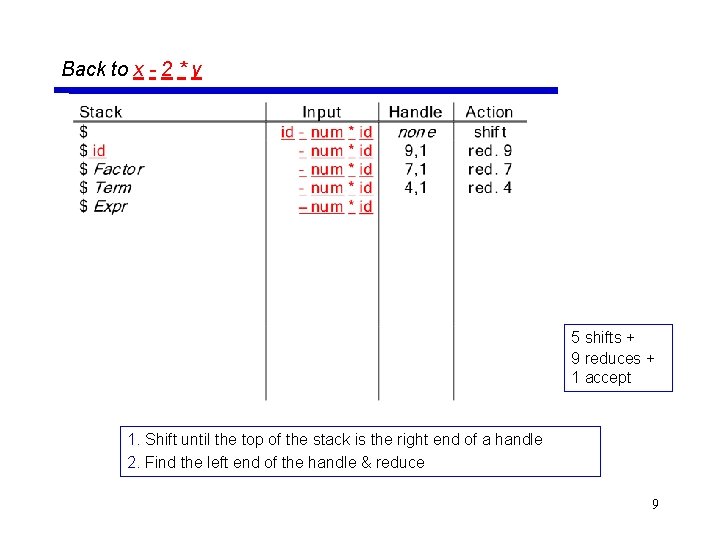

Back to x – 2 * y

5 shifts + 9 reduces + 1 accept

1. Shift until the top of the stack is the right end of a handle
2. Find the left end of the handle & reduce

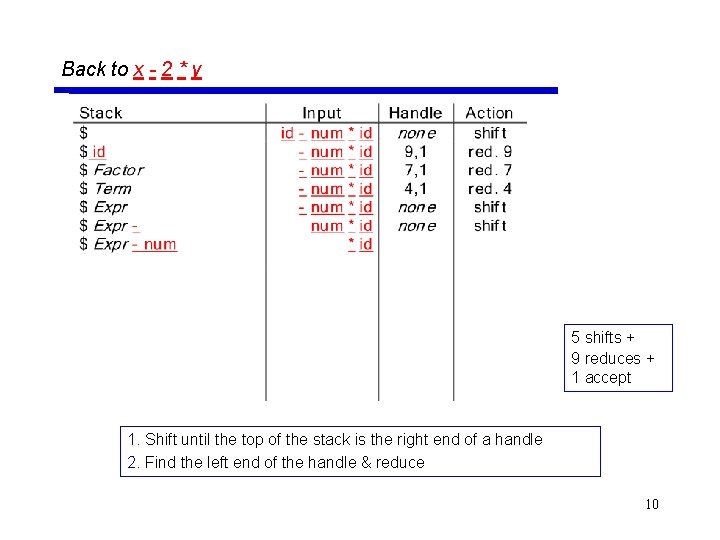


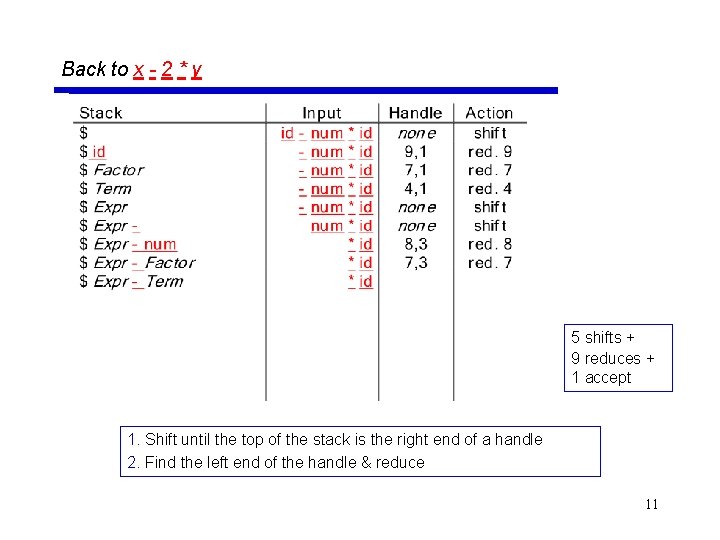


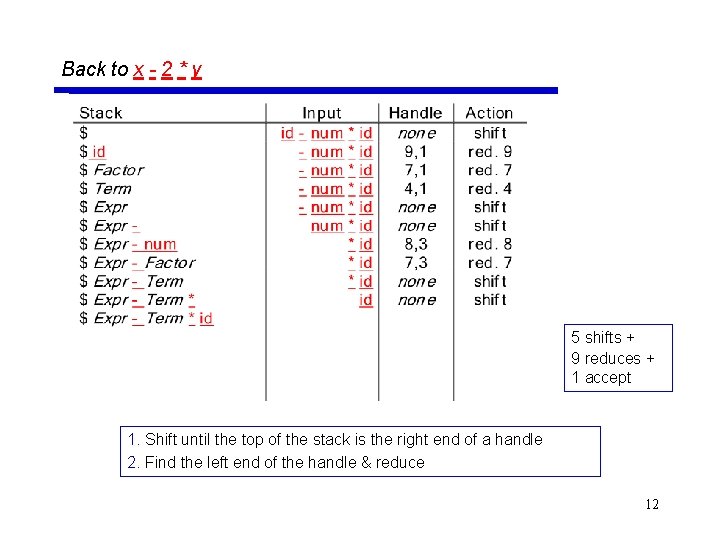


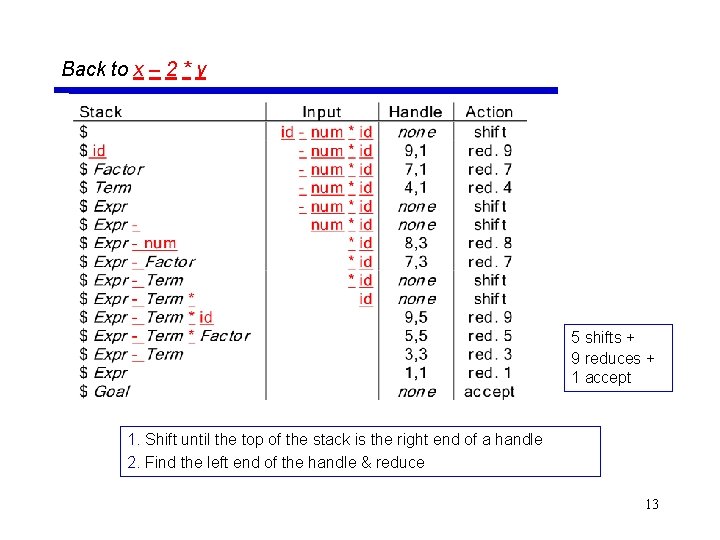


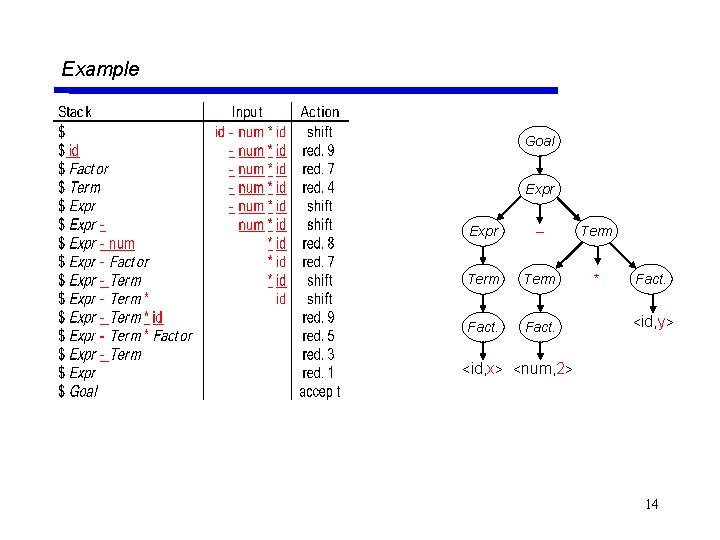

Example

Parse tree for x – 2 * y (figure): interior nodes Goal, Expr, Term, Fact.; leaves <id, x>, –, <num, 2>, *, <id, y>

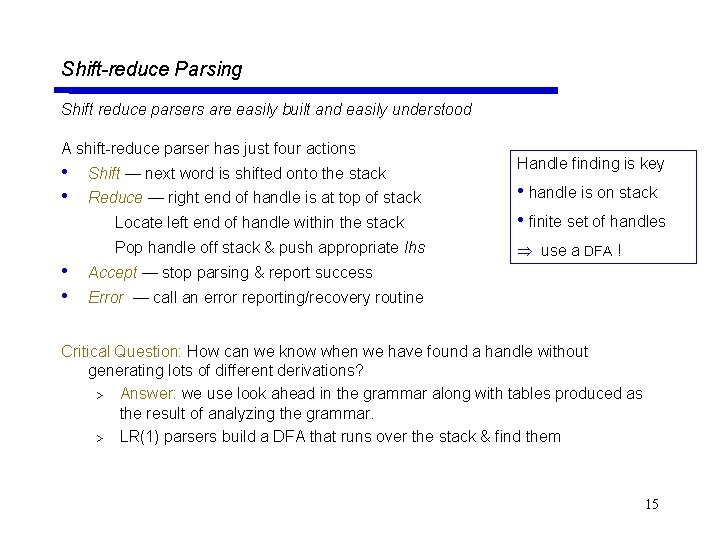

Shift-reduce Parsing

Shift-reduce parsers are easily built and easily understood

A shift-reduce parser has just four actions
• Shift: next word is shifted onto the stack
• Reduce: right end of handle is at top of stack
  > Locate left end of handle within the stack
  > Pop handle off stack & push appropriate lhs
• Accept: stop parsing & report success
• Error: call an error reporting/recovery routine

Handle finding is key
• handle is on stack
• finite set of handles, so use a DFA!

Critical Question: How can we know when we have found a handle without generating lots of different derivations?
  > Answer: we use lookahead in the grammar along with tables produced as the result of analyzing the grammar.
  > LR(1) parsers build a DFA that runs over the stack & finds the handles

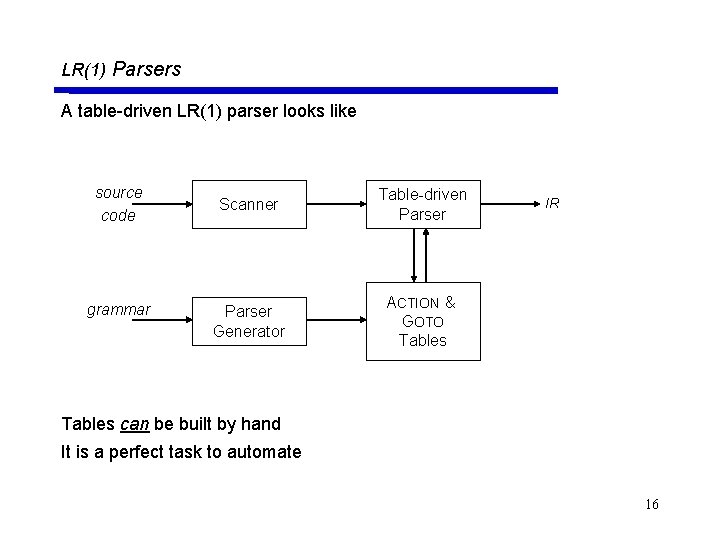

LR(1) Parsers

A table-driven LR(1) parser looks like

source code → Scanner → Table-driven Parser → IR

grammar → Parser Generator → ACTION & GOTO Tables → Table-driven Parser

Tables can be built by hand
It is a perfect task to automate

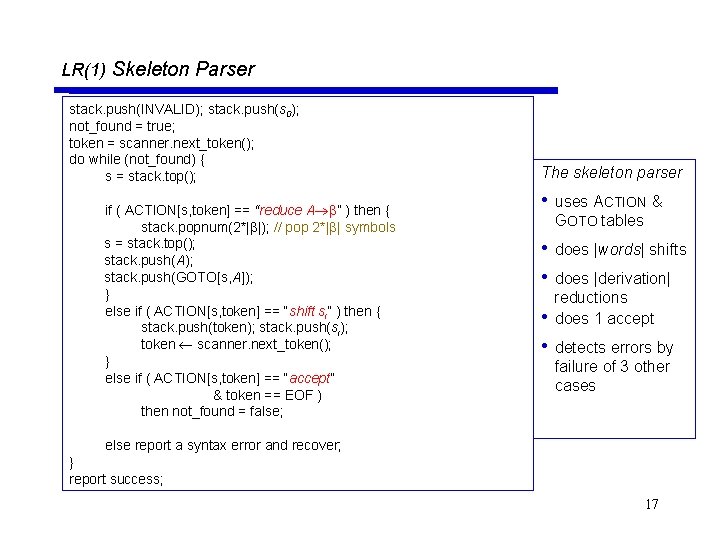

LR(1) Skeleton Parser

    stack.push(INVALID);
    stack.push(s0);
    not_found = true;
    token = scanner.next_token();
    do while (not_found) {
        s = stack.top();
        if ( ACTION[s, token] == “reduce A → β” ) then {
            stack.popnum(2*|β|);   // pop 2*|β| symbols
            s = stack.top();
            stack.push(A);
            stack.push(GOTO[s, A]);
        }
        else if ( ACTION[s, token] == “shift si” ) then {
            stack.push(token);
            stack.push(si);
            token ← scanner.next_token();
        }
        else if ( ACTION[s, token] == “accept” & token == EOF )
            then not_found = false;
        else report a syntax error and recover;
    }
    report success;

The skeleton parser
• uses ACTION & GOTO tables
• does |words| shifts
• does |derivation| reductions
• does 1 accept
• detects errors by failure of 3 other cases
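The skeleton can be exercised with a small, hand-filled pair of tables. The following is a minimal sketch, not the deck's own code: the `ACTION` and `GOTO` dictionaries are transcribed by hand from the item sets S0 to S8 that the deck lists for the right-recursive expression grammar (Goal → Expr, Expr → Term – Expr | Term, Term → Factor * Term | Factor, Factor → ident), and the production numbering and the `parse` function are assumptions made for illustration.

```python
EOF = "EOF"

# Assumed production numbering: number -> (lhs, length of rhs).
PRODS = {
    1: ("Expr", 3),    # Expr   -> Term - Expr
    2: ("Expr", 1),    # Expr   -> Term
    3: ("Term", 3),    # Term   -> Factor * Term
    4: ("Term", 1),    # Term   -> Factor
    5: ("Factor", 1),  # Factor -> ident
}

# Hand-transcribed tables; a missing entry means "syntax error".
ACTION = {
    (0, "ident"): ("shift", 4),
    (1, EOF): ("accept",),
    (2, "-"): ("shift", 5), (2, EOF): ("reduce", 2),
    (3, "*"): ("shift", 6), (3, "-"): ("reduce", 4), (3, EOF): ("reduce", 4),
    (4, "*"): ("reduce", 5), (4, "-"): ("reduce", 5), (4, EOF): ("reduce", 5),
    (5, "ident"): ("shift", 4),
    (6, "ident"): ("shift", 4),
    (7, EOF): ("reduce", 1),
    (8, "-"): ("reduce", 3), (8, EOF): ("reduce", 3),
}
GOTO = {
    (0, "Expr"): 1, (0, "Term"): 2, (0, "Factor"): 3,
    (5, "Expr"): 7, (5, "Term"): 2, (5, "Factor"): 3,
    (6, "Term"): 8, (6, "Factor"): 3,
}


def parse(tokens):
    """Return the production numbers in reduction order, or raise SyntaxError."""
    words = list(tokens) + [EOF]
    pos = 0
    stack = ["INVALID", 0]          # alternating symbol, state (as on the slide)
    reductions = []
    while True:
        state, token = stack[-1], words[pos]
        act = ACTION.get((state, token))
        if act is None:
            raise SyntaxError(f"unexpected {token!r} in state {state}")
        if act[0] == "shift":
            stack += [token, act[1]]
            pos += 1
        elif act[0] == "reduce":
            lhs, n = PRODS[act[1]]
            del stack[-2 * n:]      # pop 2*|beta| entries
            stack += [lhs, GOTO[(stack[-1], lhs)]]
            reductions.append(act[1])
        else:                       # accept
            return reductions


print(parse(["ident", "-", "ident", "*", "ident"]))
```

The returned list is the reverse rightmost derivation: the parser performs one shift per word and one reduction per derivation step before accepting.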

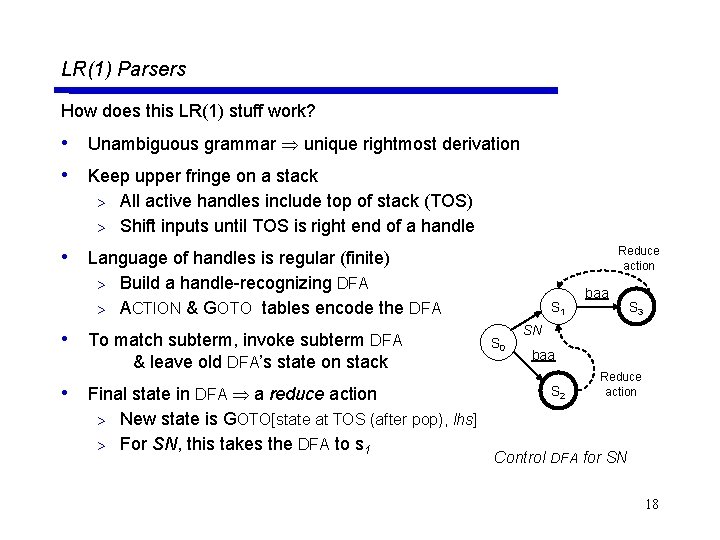

LR(1) Parsers

How does this LR(1) stuff work?
• Unambiguous grammar ⇒ unique rightmost derivation
• Keep upper fringe on a stack
  > All active handles include top of stack (TOS)
  > Shift inputs until TOS is right end of a handle
• Language of handles is regular (finite)
  > Build a handle-recognizing DFA
  > ACTION & GOTO tables encode the DFA
• To match subterm, invoke subterm DFA & leave old DFA’s state on stack
• Final state in DFA ⇒ a reduce action
  > New state is GOTO[state at TOS (after pop), lhs]
  > For SN, this takes the DFA to s1

Control DFA for SN (figure): states s0 to s3, with transitions on baa and SN; the states reached on baa (s2 and s3) carry reduce actions

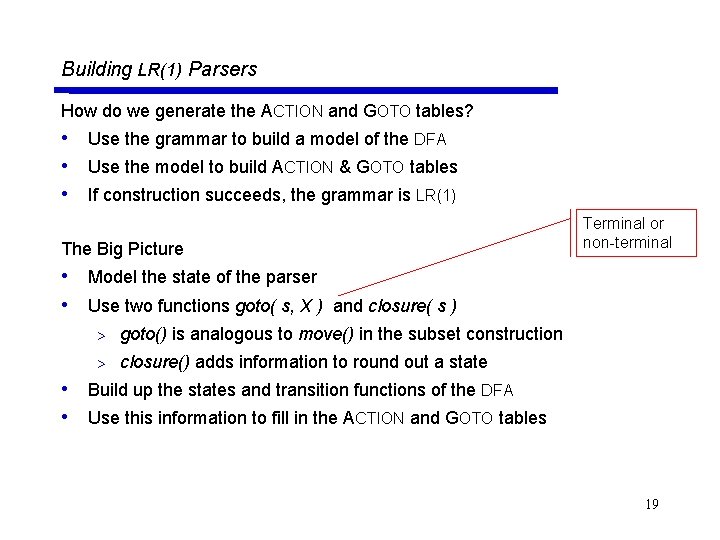

Building LR(1) Parsers

How do we generate the ACTION and GOTO tables?
• Use the grammar to build a model of the DFA
• Use the model to build ACTION & GOTO tables
• If construction succeeds, the grammar is LR(1)

The Big Picture
• Model the state of the parser
• Use two functions goto( s, X ) and closure( s ), where X is a terminal or non-terminal
  > goto() is analogous to move() in the subset construction
  > closure() adds information to round out a state
• Build up the states and transition functions of the DFA
• Use this information to fill in the ACTION and GOTO tables

![LR(k) items](http://slidetodoc.com/presentation_image/33513da83fd22dc1be00e6feee9708d0/image-20.jpg)

LR(k) items

An LR(k) item is a pair [P, δ], where
• P is a production A → β with a • at some position in the rhs
• δ is a lookahead string of length ≤ k (words or EOF)

The • in an item indicates the position of the top of the stack

[A → • βγ, a] means that the input seen so far is consistent with the use of A → βγ immediately after the symbol on top of the stack

[A → β • γ, a] means that the input seen so far is consistent with the use of A → βγ at this point in the parse, and that the parser has already recognized β.

[A → βγ •, a] means that the parser has seen βγ, and that a lookahead symbol of a is consistent with reducing to A.

The table construction algorithm uses items to represent valid configurations of an LR(1) parser

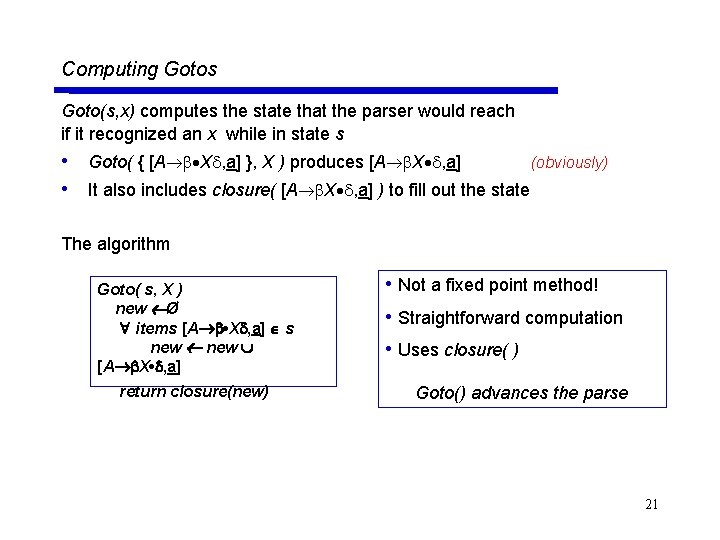

Computing Gotos

Goto(s, X) computes the state that the parser would reach if it recognized an X while in state s
• Goto( { [A → β • X δ, a] }, X ) produces [A → β X • δ, a] (obviously)
• It also includes closure( [A → β X • δ, a] ) to fill out the state

The algorithm

    Goto( s, X )
        new ← Ø
        ∀ items [A → β • X δ, a] ∈ s
            new ← new ∪ [A → β X • δ, a]
        return closure(new)

• Not a fixed point method!
• Straightforward computation
• Uses closure( )

Goto() advances the parse

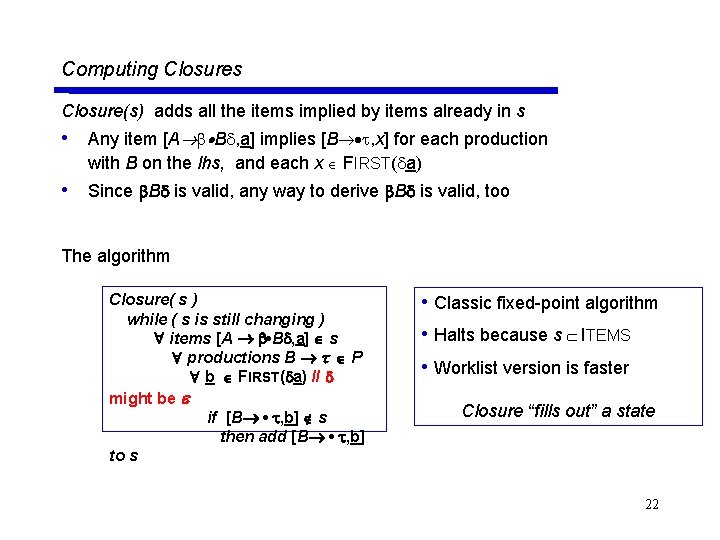

Computing Closures

Closure(s) adds all the items implied by items already in s
• Any item [A → β • B δ, a] implies [B → • τ, x] for each production with B on the lhs, and each x ∈ FIRST(δa)
• Since βBδ is valid, any way to derive βBδ is valid, too

The algorithm

    Closure( s )
        while ( s is still changing )
            ∀ items [A → β • B δ, a] ∈ s
                ∀ productions B → τ ∈ P
                    ∀ b ∈ FIRST(δa)    // δ might be ε
                        if [B → • τ, b] ∉ s
                            then add [B → • τ, b] to s

• Classic fixed-point algorithm
• Halts because s ⊂ ITEMS
• Worklist version is faster

Closure “fills out” a state
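The two functions translate fairly directly into code. What follows is a sketch under stated assumptions rather than a reference implementation: items are modelled as tuples `(lhs, rhs, dot, lookahead)`, the grammar is the deck's right-recursive expression grammar, and `first_sets` / `first_of` are hypothetical helpers that assume the grammar has no ε-productions (so FIRST(δa) only needs the first symbol of δ, or a when δ is empty).

```python
EOF = "EOF"
GRAMMAR = {  # nonterminal -> list of right-hand sides
    "Goal": [("Expr",)],
    "Expr": [("Term", "-", "Expr"), ("Term",)],
    "Term": [("Factor", "*", "Term"), ("Factor",)],
    "Factor": [("ident",)],
}


def first_sets(grammar):
    """FIRST of every nonterminal by fixed-point iteration (no epsilon rules)."""
    first = {nt: set() for nt in grammar}
    changed = True
    while changed:
        changed = False
        for nt, alternatives in grammar.items():
            for rhs in alternatives:
                head = rhs[0]
                new = first[head] if head in grammar else {head}
                if not new <= first[nt]:
                    first[nt] |= new
                    changed = True
    return first


FIRST = first_sets(GRAMMAR)


def first_of(delta, lookahead):
    """FIRST(delta a) under the no-epsilon assumption."""
    if not delta:
        return {lookahead}
    return FIRST[delta[0]] if delta[0] in GRAMMAR else {delta[0]}


def closure(items):
    """Add every item implied by the items in the set, until nothing changes."""
    s = set(items)
    changed = True
    while changed:  # "while s is still changing"
        changed = False
        for _, rhs, dot, la in list(s):
            if dot == len(rhs) or rhs[dot] not in GRAMMAR:
                continue  # dot at the right end, or in front of a terminal
            for tau in GRAMMAR[rhs[dot]]:
                for b in first_of(rhs[dot + 1:], la):
                    item = (rhs[dot], tau, 0, b)
                    if item not in s:
                        s.add(item)
                        changed = True
    return frozenset(s)


def goto(s, x):
    """Advance the dot over x in every item that allows it, then close the set."""
    moved = {(lhs, rhs, dot + 1, la)
             for lhs, rhs, dot, la in s
             if dot < len(rhs) and rhs[dot] == x}
    return closure(moved)


S0 = closure({("Goal", ("Expr",), 0, EOF)})
print(len(S0), "items in s0")
print(sorted(goto(S0, "Term")))
```

Returning a `frozenset` makes each state hashable, which is convenient when states are later collected into a set or used as dictionary keys.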

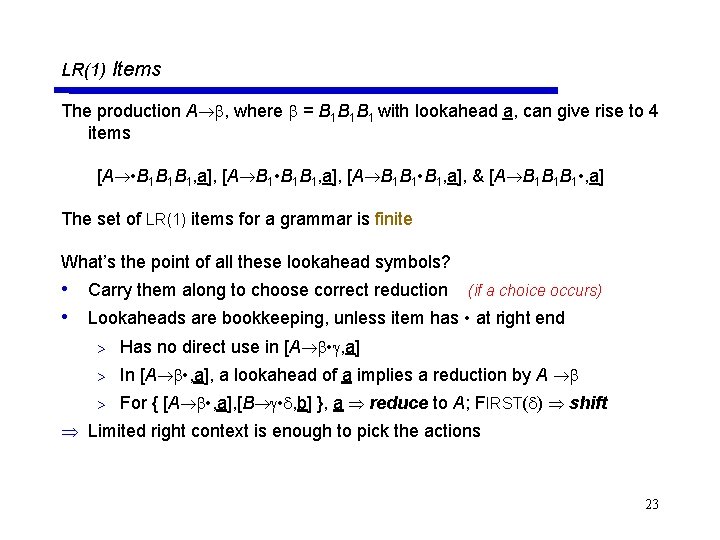

LR(1) Items

The production A → β, where β = B₁B₂B₃ with lookahead a, can give rise to 4 items

[A → • B₁B₂B₃, a], [A → B₁ • B₂B₃, a], [A → B₁B₂ • B₃, a], & [A → B₁B₂B₃ •, a]

The set of LR(1) items for a grammar is finite

What’s the point of all these lookahead symbols?
• Carry them along to choose correct reduction (if a choice occurs)
• Lookaheads are bookkeeping, unless item has • at right end
  > Has no direct use in [A → β • γ, a]
  > In [A → β •, a], a lookahead of a implies a reduction by A → β
  > For { [A → β •, a], [B → γ • δ, b] }, a ⇒ reduce to A; FIRST(δ) ⇒ shift

Limited right context is enough to pick the actions
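As a small illustration of why a production with three right-hand-side symbols yields four items, the sketch below enumerates every dot position. The tuple layout `(lhs, rhs, dot, lookahead)` and the helper names `items_for` and `show` are assumptions made for this example.

```python
def items_for(lhs, rhs, lookahead):
    """One item per dot position: before each symbol and after the last one."""
    return [(lhs, rhs, dot, lookahead) for dot in range(len(rhs) + 1)]


def show(item):
    """Render an item in the [A → α • β, a] notation used on the slides."""
    lhs, rhs, dot, lookahead = item
    body = list(rhs[:dot]) + ["•"] + list(rhs[dot:])
    return f"[{lhs} → {' '.join(body)}, {lookahead}]"


ITEMS = items_for("A", ("B1", "B2", "B3"), "a")
for item in ITEMS:
    print(show(item))
```

Because a grammar has finitely many productions, dot positions, and lookahead symbols, the full set of LR(1) items is finite as well.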

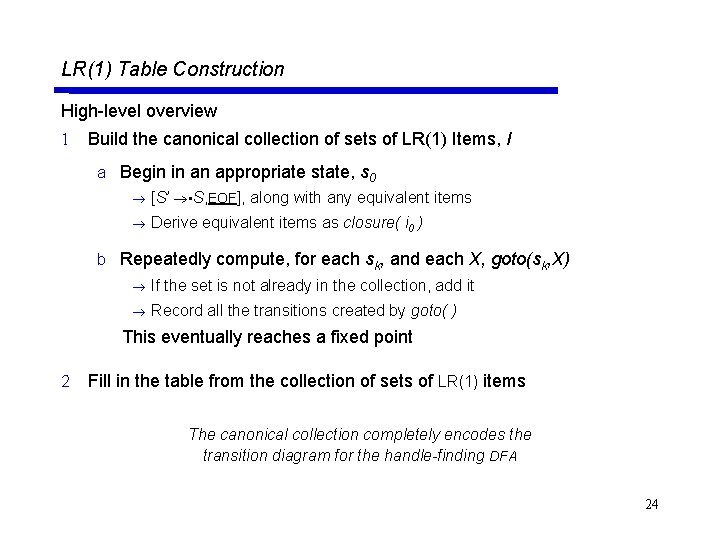

LR(1) Table Construction

High-level overview

1 Build the canonical collection of sets of LR(1) Items, I
  a Begin in an appropriate state, s0
    > [S’ → • S, EOF], along with any equivalent items
    > Derive equivalent items as closure( s0 )
  b Repeatedly compute, for each sk, and each X, goto(sk, X)
    > If the set is not already in the collection, add it
    > Record all the transitions created by goto( )
    This eventually reaches a fixed point
2 Fill in the table from the collection of sets of LR(1) items

The canonical collection completely encodes the transition diagram for the handle-finding DFA

![Building the Canonical Collection](http://slidetodoc.com/presentation_image/33513da83fd22dc1be00e6feee9708d0/image-25.jpg)

Building the Canonical Collection

Start from s0 = closure( [S’ → • S, EOF] )
Repeatedly construct new states, until all are found

The algorithm

    s0 ← closure( [S’ → • S, EOF] )
    S ← { s0 }
    k ← 1
    while ( S is still changing )
        ∀ sj ∈ S and ∀ x ∈ ( T ∪ NT )
            sk ← goto(sj, x)
            record sj → sk on x
            if sk ∉ S then
                S ← S ∪ sk
                k ← k + 1

• Fixed-point computation
• Loop adds to S
• S ⊆ 2^ITEMS, so S is finite
• Worklist version is faster
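The construction can be sketched as a worklist loop over numbered states. This is an illustrative version, not the deck's code: it assumes the right-recursive expression grammar used in the deck's example, models items as `(lhs, rhs, dot, lookahead)` tuples, and uses a deliberately simple `first` helper that is only valid for grammars without ε-productions or left recursion. The order of `SYMBOLS` is chosen so that the state numbers come out as in the deck's example.

```python
EOF = "EOF"
GRAMMAR = {
    "Goal": [("Expr",)],
    "Expr": [("Term", "-", "Expr"), ("Term",)],
    "Term": [("Factor", "*", "Term"), ("Factor",)],
    "Factor": [("ident",)],
}
SYMBOLS = ["Expr", "Term", "Factor", "ident", "-", "*"]  # NT and T


def first(sym):
    """FIRST(sym); assumes no epsilon-productions and no left recursion."""
    if sym not in GRAMMAR:
        return {sym}
    return set().union(*(first(rhs[0]) for rhs in GRAMMAR[sym]))


def closure(items):
    s, work = set(items), list(items)
    while work:
        _, rhs, dot, la = work.pop()
        if dot < len(rhs) and rhs[dot] in GRAMMAR:
            rest = rhs[dot + 1:]
            for b in (first(rest[0]) if rest else {la}):
                for tau in GRAMMAR[rhs[dot]]:
                    item = (rhs[dot], tau, 0, b)
                    if item not in s:
                        s.add(item)
                        work.append(item)
    return frozenset(s)


def goto(s, x):
    return closure({(l, r, d + 1, a) for l, r, d, a in s
                    if d < len(r) and r[d] == x})


def build_collection(start_item):
    """Return (states, transitions); a state's list index is its number."""
    s0 = closure({start_item})
    states, number, transitions = [s0], {s0: 0}, {}
    j = 0
    while j < len(states):          # states appended below are visited later
        for x in SYMBOLS:
            sk = goto(states[j], x)
            if not sk:
                continue            # no item in sj has the dot in front of x
            if sk not in number:
                number[sk] = len(states)
                states.append(sk)
            transitions[(j, x)] = number[sk]
        j += 1
    return states, transitions


STATES, TRANSITIONS = build_collection(("Goal", ("Expr",), 0, EOF))
for (j, x), k in sorted(TRANSITIONS.items()):
    print(f"s{j} --{x}--> s{k}")
```

Skipping empty goto sets is a shortcut relative to the pseudocode, which ranges over every symbol; an empty set simply means no transition is recorded.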

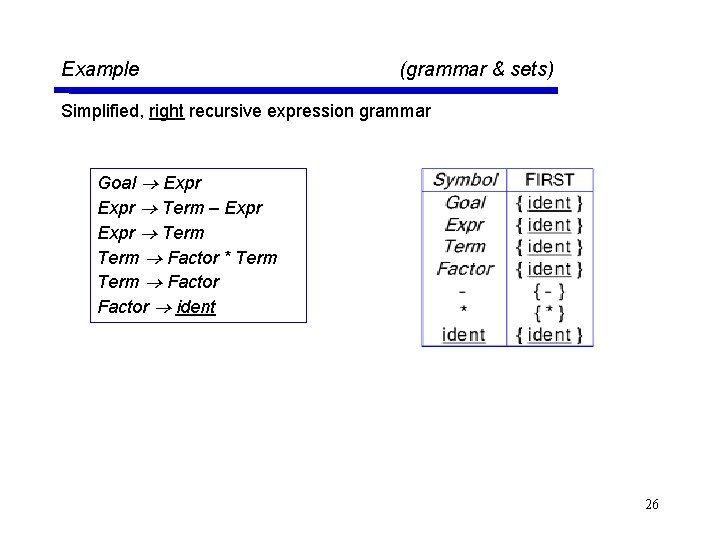

Example (grammar & sets)

Simplified, right recursive expression grammar

    Goal   → Expr
    Expr   → Term – Expr
           | Term
    Term   → Factor * Term
           | Factor
    Factor → ident

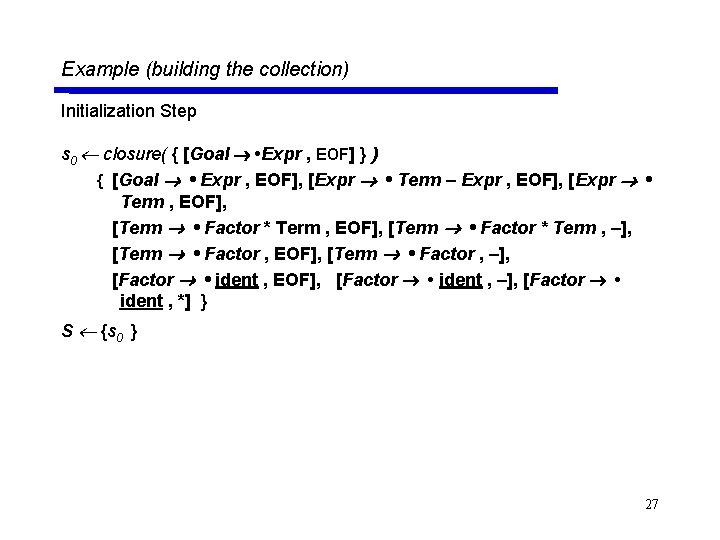

Example (building the collection)

Initialization Step

s0 ← closure( { [Goal → • Expr, EOF] } )
   = { [Goal → • Expr, EOF], [Expr → • Term – Expr, EOF], [Expr → • Term, EOF], [Term → • Factor * Term, EOF], [Term → • Factor * Term, –], [Term → • Factor, EOF], [Term → • Factor, –], [Factor → • ident, EOF], [Factor → • ident, –], [Factor → • ident, *] }

S ← { s0 }

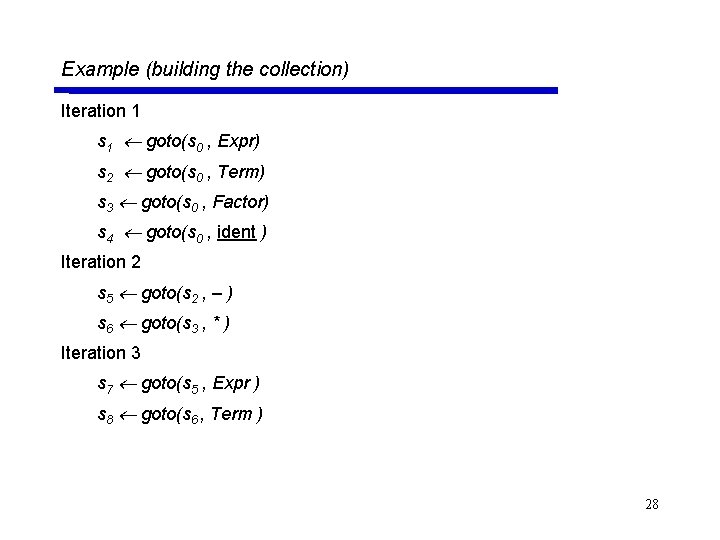

Example (building the collection)

Iteration 1
    s1 ← goto(s0, Expr)
    s2 ← goto(s0, Term)
    s3 ← goto(s0, Factor)
    s4 ← goto(s0, ident)

Iteration 2
    s5 ← goto(s2, –)
    s6 ← goto(s3, *)

Iteration 3
    s7 ← goto(s5, Expr)
    s8 ← goto(s6, Term)

![Example (Summary)](http://slidetodoc.com/presentation_image/33513da83fd22dc1be00e6feee9708d0/image-29.jpg)

Example (Summary)

S0 : { [Goal → • Expr, EOF], [Expr → • Term – Expr, EOF], [Expr → • Term, EOF], [Term → • Factor * Term, EOF], [Term → • Factor * Term, –], [Term → • Factor, EOF], [Term → • Factor, –], [Factor → • ident, EOF], [Factor → • ident, –], [Factor → • ident, *] }

S1 : { [Goal → Expr •, EOF] }

S2 : { [Expr → Term • – Expr, EOF], [Expr → Term •, EOF] }

S3 : { [Term → Factor • * Term, EOF], [Term → Factor • * Term, –], [Term → Factor •, EOF], [Term → Factor •, –] }

S4 : { [Factor → ident •, EOF], [Factor → ident •, –], [Factor → ident •, *] }

S5 : { [Expr → Term – • Expr, EOF], [Expr → • Term – Expr, EOF], [Expr → • Term, EOF], [Term → • Factor * Term, –], [Term → • Factor, –], [Term → • Factor * Term, EOF], [Term → • Factor, EOF], [Factor → • ident, *], [Factor → • ident, –], [Factor → • ident, EOF] }

![Example (Summary)](http://slidetodoc.com/presentation_image/33513da83fd22dc1be00e6feee9708d0/image-30.jpg)

Example (Summary)

S6 : { [Term → Factor * • Term, EOF], [Term → Factor * • Term, –], [Term → • Factor * Term, EOF], [Term → • Factor * Term, –], [Term → • Factor, EOF], [Term → • Factor, –], [Factor → • ident, EOF], [Factor → • ident, –], [Factor → • ident, *] }

S7 : { [Expr → Term – Expr •, EOF] }

S8 : { [Term → Factor * Term •, EOF], [Term → Factor * Term •, –] }

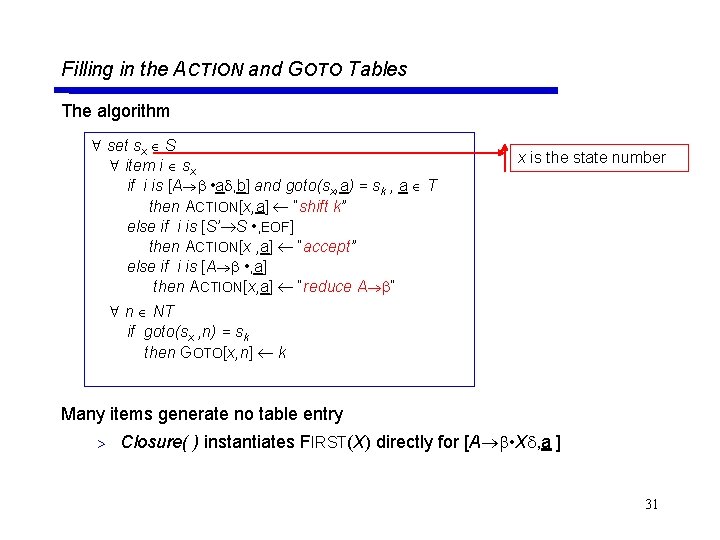

Filling in the ACTION and GOTO Tables

The algorithm

    ∀ set sx ∈ S
        ∀ item i ∈ sx
            if i is [A → β • a γ, b] and goto(sx, a) = sk, a ∈ T
                then ACTION[x, a] ← “shift k”
            else if i is [S’ → S •, EOF]
                then ACTION[x, EOF] ← “accept”
            else if i is [A → β •, a]
                then ACTION[x, a] ← “reduce A → β”
        ∀ n ∈ NT
            if goto(sx, n) = sk
                then GOTO[x, n] ← k

x is the state number

Many items generate no table entry
  > Closure( ) instantiates FIRST(X) directly for [A → β • X δ, a]
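Putting the pieces together, the table-filling step can be sketched end to end. This is a compact illustration under assumptions, not a definitive generator: it rebuilds the canonical collection for the deck's right-recursive expression grammar, uses `(lhs, rhs, dot, lookahead)` tuples for items, relies on a `first` helper that is only valid without ε-productions or left recursion, and reports any doubly defined ACTION entry as a conflict by raising `ValueError`. All function names are hypothetical.

```python
EOF, GOAL = "EOF", "Goal"
GRAMMAR = {
    "Goal": [("Expr",)],
    "Expr": [("Term", "-", "Expr"), ("Term",)],
    "Term": [("Factor", "*", "Term"), ("Factor",)],
    "Factor": [("ident",)],
}
SYMBOLS = ["Expr", "Term", "Factor", "ident", "-", "*"]


def first(sym):
    """FIRST(sym); assumes no epsilon-productions and no left recursion."""
    if sym not in GRAMMAR:
        return {sym}
    return set().union(*(first(rhs[0]) for rhs in GRAMMAR[sym]))


def closure(items):
    s, work = set(items), list(items)
    while work:
        _, rhs, dot, la = work.pop()
        if dot < len(rhs) and rhs[dot] in GRAMMAR:
            rest = rhs[dot + 1:]
            for b in (first(rest[0]) if rest else {la}):
                for tau in GRAMMAR[rhs[dot]]:
                    item = (rhs[dot], tau, 0, b)
                    if item not in s:
                        s.add(item)
                        work.append(item)
    return frozenset(s)


def goto(s, x):
    return closure({(l, r, d + 1, a) for l, r, d, a in s
                    if d < len(r) and r[d] == x})


def build_collection():
    states, trans, j = [closure({(GOAL, GRAMMAR[GOAL][0], 0, EOF)})], {}, 0
    while j < len(states):
        for x in SYMBOLS:
            sk = goto(states[j], x)
            if sk:
                if sk not in states:
                    states.append(sk)
                trans[(j, x)] = states.index(sk)
        j += 1
    return states, trans


def fill_tables(states, trans):
    action, goto_table = {}, {}

    def put(key, entry):
        # Two different entries for one (state, terminal) pair is a conflict.
        if action.setdefault(key, entry) != entry:
            raise ValueError(f"conflict at {key}: {action[key]} vs {entry}")

    for x, s in enumerate(states):
        for lhs, rhs, dot, la in s:
            if dot < len(rhs):
                if rhs[dot] not in GRAMMAR:      # [A -> beta . a gamma, b]
                    put((x, rhs[dot]), ("shift", trans[(x, rhs[dot])]))
            elif lhs == GOAL:                    # [S' -> S ., EOF]
                put((x, la), ("accept",))
            else:                                # [A -> beta ., a]
                put((x, la), ("reduce", lhs, rhs))
    for (x, sym), k in trans.items():
        if sym in GRAMMAR:
            goto_table[(x, sym)] = k
    return action, goto_table


ACTION, GOTO = fill_tables(*build_collection())
for key in sorted(ACTION):
    print(key, ACTION[key])
```

Items with the dot in front of a nonterminal produce no ACTION entry, which is why many items leave the table untouched; for this grammar no conflict is raised, so the construction succeeds.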

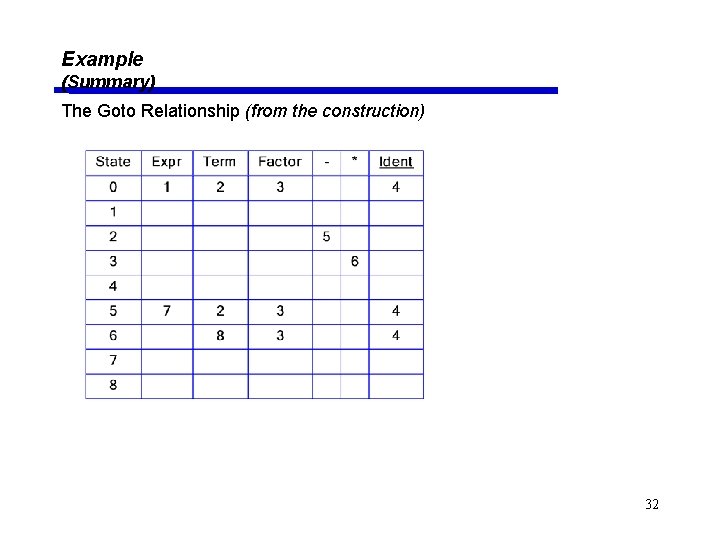

Example (Summary)

The Goto Relationship (from the construction)

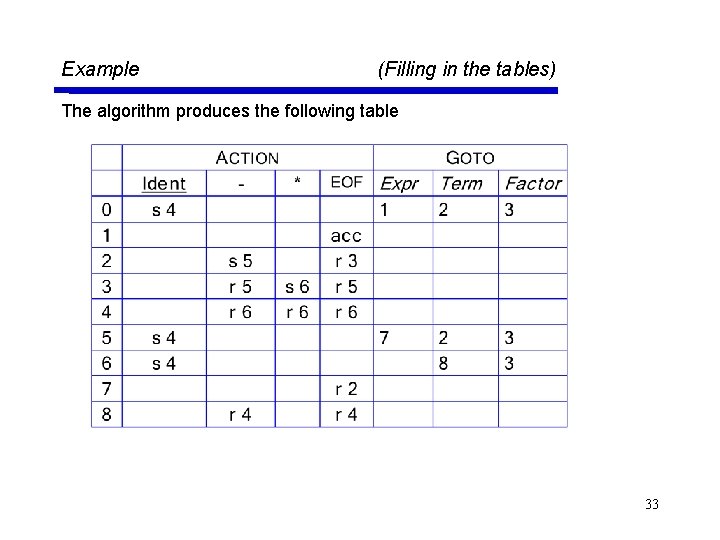

Example (Filling in the tables)

The algorithm produces the following table

![What can go wrong?](http://slidetodoc.com/presentation_image/33513da83fd22dc1be00e6feee9708d0/image-34.jpg)

What can go wrong?

What if set s contains [A → β • a γ, b] and [B → β •, a] ?
• First item generates “shift”, second generates “reduce”
• Both define ACTION[s, a]: cannot do both actions
• This is a fundamental ambiguity, called a shift/reduce conflict
• Modify the grammar to eliminate it (if-then-else)
• Shifting will often resolve it correctly

What if set s contains [A → γ •, a] and [B → γ •, a] ?
• Each generates “reduce”, but with a different production
• Both define ACTION[s, a]: cannot do both reductions
• This is a fundamental ambiguity, called a reduce/reduce conflict
• Modify the grammar to eliminate it (PL/I’s overloading of (. . . ))

In either case, the grammar is not LR(1)

LR(k) versus LL(k) (Top-down Recursive Descent)

Finding Reductions

LR(k): Each reduction in the parse is detectable with
1. the complete left context,
2. the reducible phrase, itself, and
3. the k terminal symbols to its right

LL(k): Parser must select the production to apply based on
1. the complete left context
2. the next k terminals

Thus, LR(k) examines more context

“… in practice, programming languages do not actually seem to fall in the gap between LL(1) languages and deterministic languages”
J. J. Horning, “LR Grammars and Analysers”, in Compiler Construction, An Advanced Course, Springer-Verlag, 1976

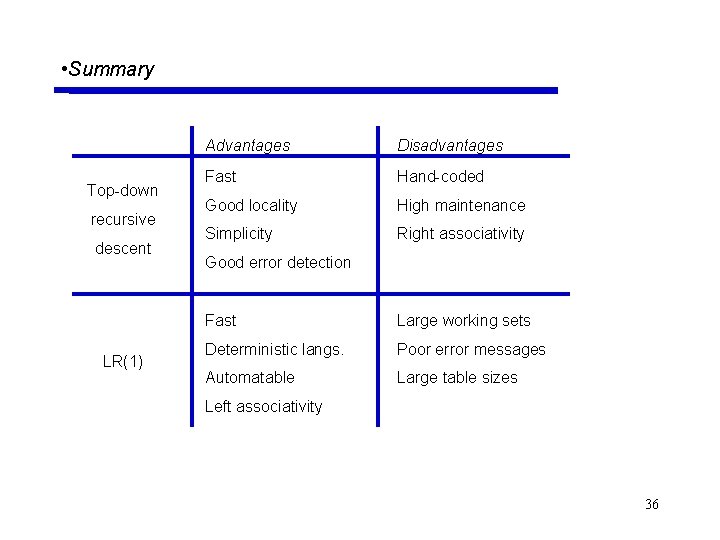

Summary

| | Advantages | Disadvantages |
|---|---|---|
| Top-down recursive descent | Fast; Good locality; Simplicity; Good error detection | Hand-coded; High maintenance; Right associativity |
| LR(1) | Fast; Deterministic langs.; Automatable; Left associativity | Large working sets; Poor error messages; Large table sizes |
