
Bootstrapping Feature-Rich Dependency Parsers with Entropic Priors
David A. Smith and Jason Eisner
Johns Hopkins University
EMNLP-CoNLL, 29 June 2007

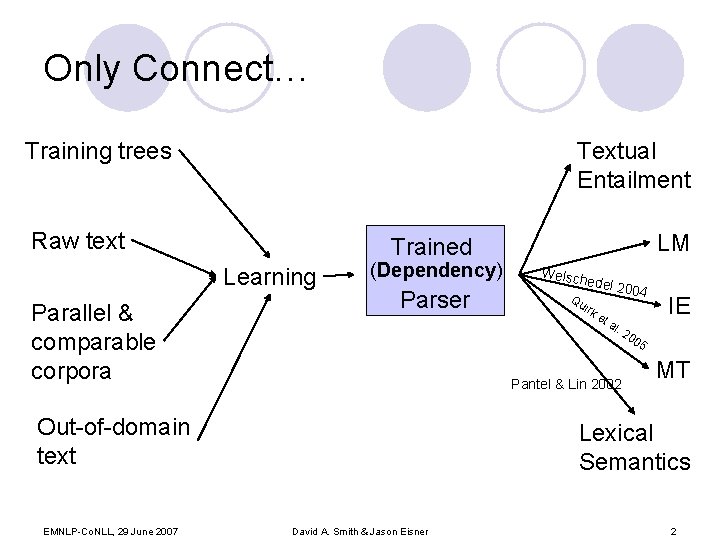

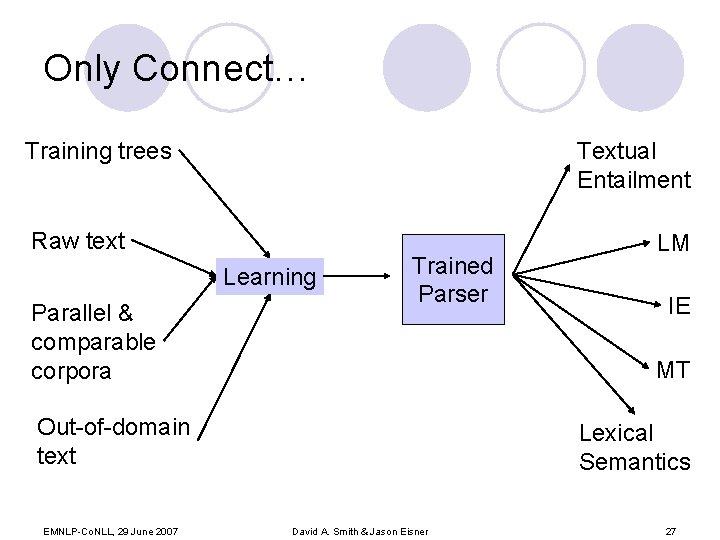

Only Connect…
- Inputs to learning: training trees, raw text, parallel & comparable corpora, out-of-domain text
- Learning → trained (dependency) parser
- Downstream uses: textual entailment, IE, MT, LM, lexical semantics
- Cited on the slide: Weischedel 2004; Quirk et al. 2005; Pantel & Lin 2002

Outline: Bootstrapping Parsers
- What kind of parser should we train?
- How should we train it semi-supervised?
- Does it work? (initial experiments)
- How can we incorporate other knowledge?

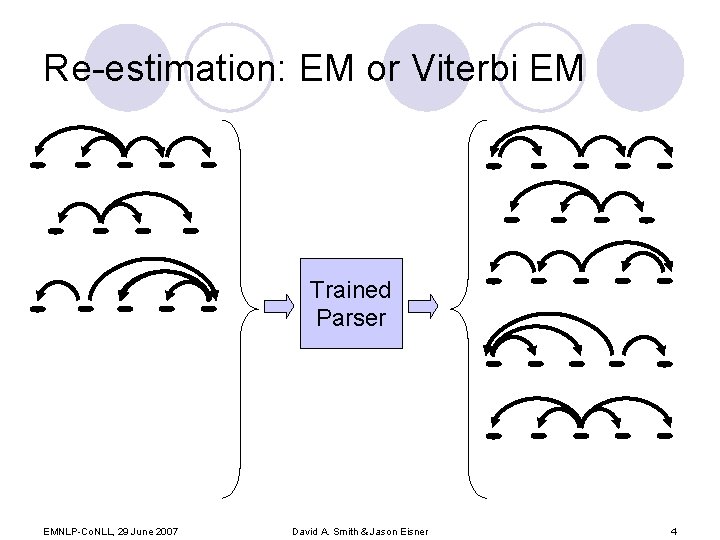

Re-estimation: EM or Viterbi EM
- Diagram label: Trained Parser

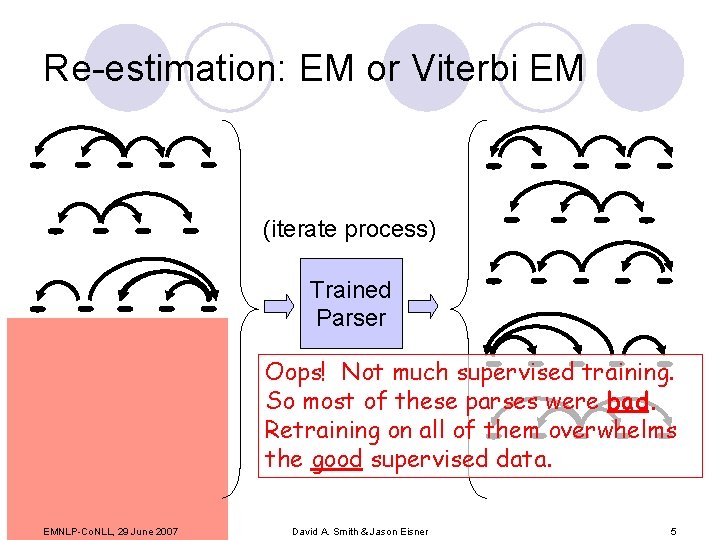

Re-estimation: EM or Viterbi EM (iterate process)
- Oops! Not much supervised training, so most of these parses were bad.
- Retraining on all of them overwhelms the good supervised data.

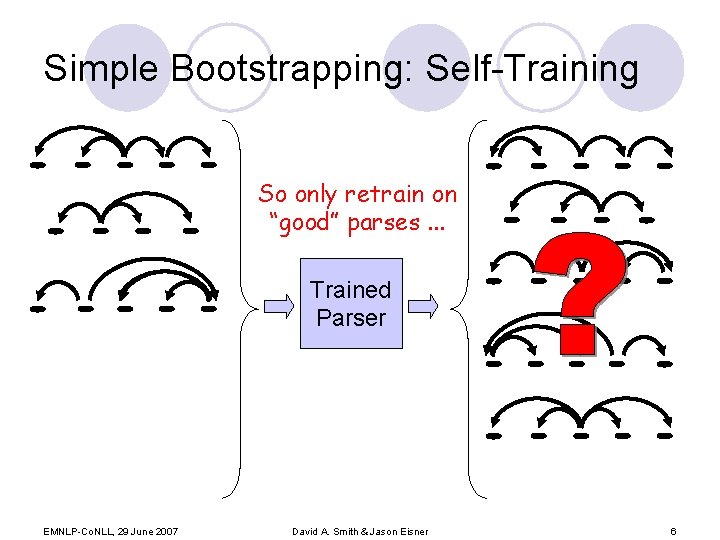

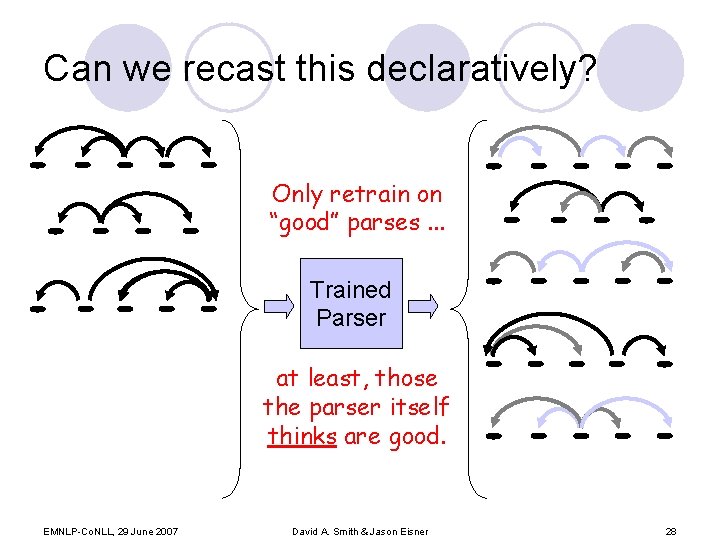

Simple Bootstrapping: Self-Training
- So only retrain on “good” parses...

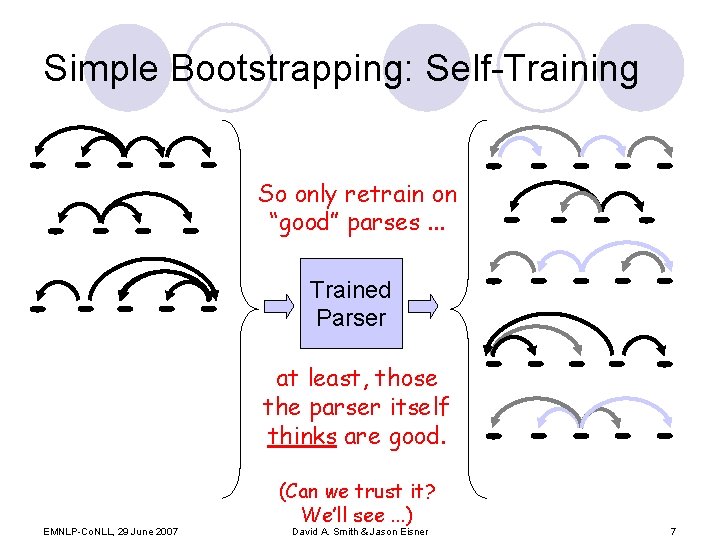

Simple Bootstrapping: Self-Training
- So only retrain on “good” parses... at least, those the parser itself thinks are good.
- (Can we trust it? We’ll see...)
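The self-training loop described on this slide can be sketched as follows. This is a minimal illustration, not the authors' code: `self_train`, `train`, `parse_with_confidence`, and the toy "model" (a vocabulary set) are all hypothetical names, and the fixed confidence threshold is an assumption.

```python
from typing import Callable, List, Sequence, Tuple

Example = Tuple[Sequence[str], str]  # (sentence, parse); the parse is opaque here


def self_train(train: Callable, parse_with_confidence: Callable,
               seed: List[Example], unlabeled: List[Sequence[str]],
               threshold: float, rounds: int = 3):
    """Retrain only on parses that the current model itself is confident about."""
    model = train(list(seed))
    for _ in range(rounds):
        confident = []
        for sentence in unlabeled:
            parse, confidence = parse_with_confidence(model, sentence)
            if confidence >= threshold:
                confident.append((sentence, parse))
        if not confident:
            break  # nothing trustworthy to add
        # The seed data is always kept; low-confidence parses are ignored.
        model = train(list(seed) + confident)
    return model


# Toy stand-ins (assumptions): the "model" is just the set of words seen so far,
# and confidence is the fraction of a sentence's words that the model knows.
def toy_train(examples):
    return {word for sentence, _ in examples for word in sentence}


def toy_parse(model, sentence):
    known = sum(1 for word in sentence if word in model)
    return "PARSE", known / len(sentence)


toy_model = self_train(toy_train, toy_parse,
                       seed=[(("a", "b"), "PARSE")],
                       unlabeled=[("a", "b", "c"), ("x", "y")],
                       threshold=0.6)
```

In the toy run, the sentence that mostly overlaps the seed vocabulary is adopted and teaches the model the new word `c`, while the sentence with no known words is never used for retraining.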

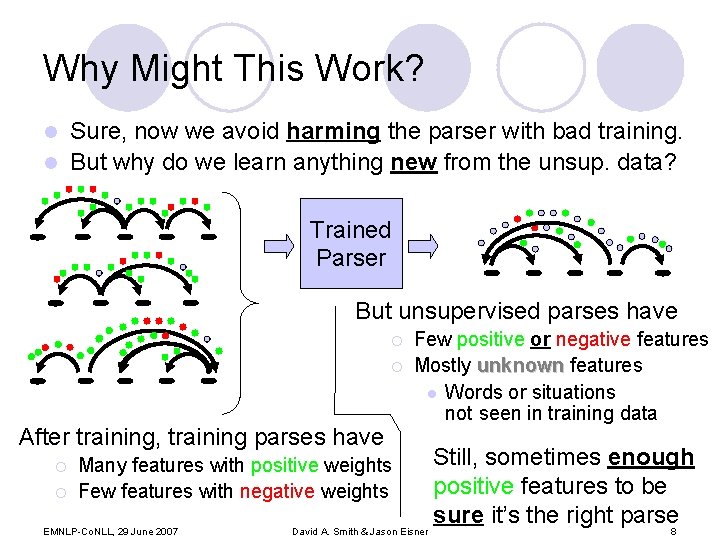

Why Might This Work?
- Sure, now we avoid harming the parser with bad training.
- But why do we learn anything new from the unsupervised data?
- After training, training parses have
  - Many features with positive weights
  - Few features with negative weights
- But unsupervised parses have
  - Few positive or negative features
  - Mostly unknown features (words or situations not seen in training data)
- Still, sometimes enough positive features to be sure it’s the right parse

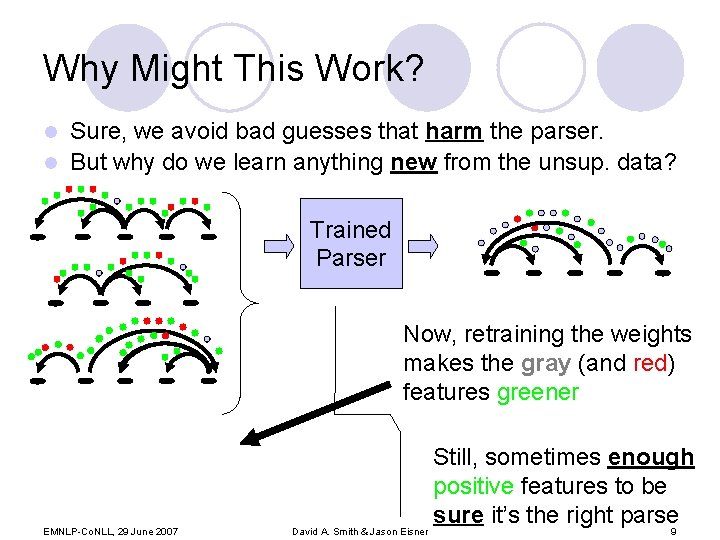

Why Might This Work?
- Sure, we avoid bad guesses that harm the parser.
- But why do we learn anything new from the unsupervised data?
- Still, sometimes enough positive features to be sure it’s the right parse
- Now, retraining the weights makes the gray (and red) features greener

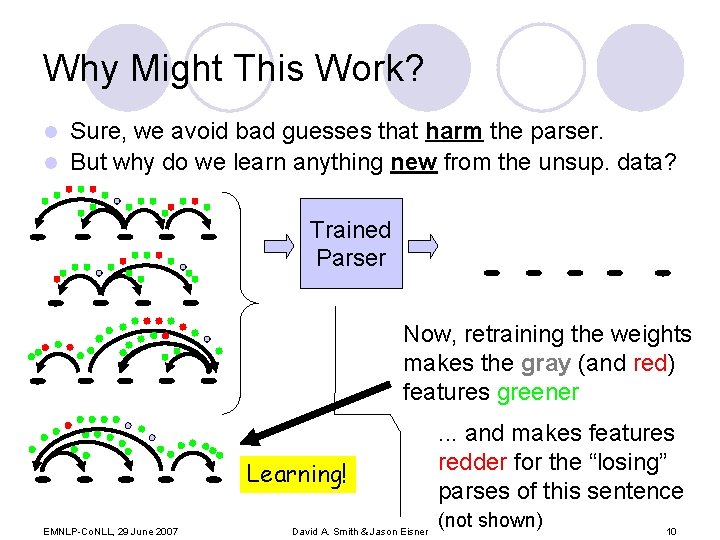

Why Might This Work?
- Sure, we avoid bad guesses that harm the parser.
- But why do we learn anything new from the unsupervised data?
- Still, sometimes enough positive features to be sure it’s the right parse
- Now, retraining the weights makes the gray (and red) features greener
- ...and makes features redder for the “losing” parses of this sentence (not shown)
- Learning!

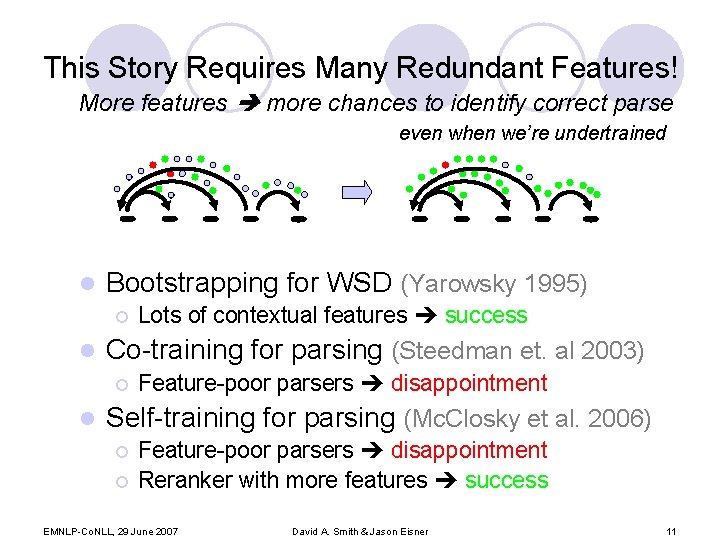

This Story Requires Many Redundant Features!
More features → more chances to identify correct parse even when we’re undertrained
- Bootstrapping for WSD (Yarowsky 1995)
  - Lots of contextual features → success
- Co-training for parsing (Steedman et al. 2003)
  - Feature-poor parsers → disappointment
- Self-training for parsing (McClosky et al. 2006)
  - Feature-poor parsers → disappointment
  - Reranker with more features → success

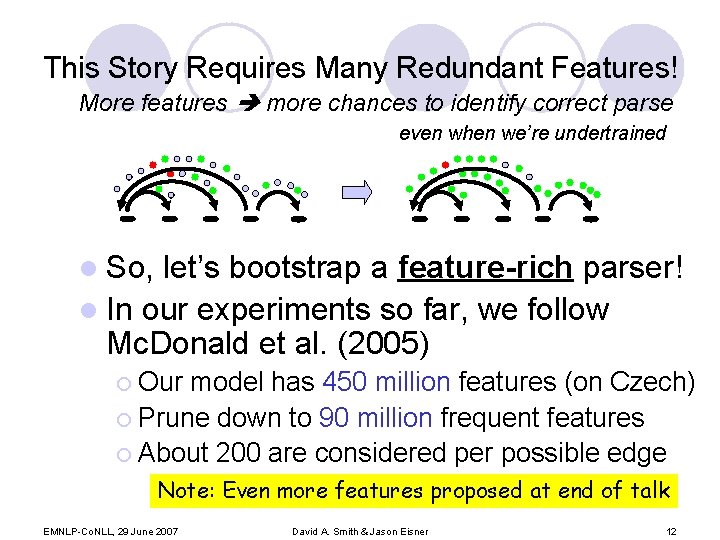

This Story Requires Many Redundant Features!
More features → more chances to identify correct parse even when we’re undertrained
- So, let’s bootstrap a feature-rich parser!
- In our experiments so far, we follow McDonald et al. (2005)
  - Our model has 450 million features (on Czech)
  - Prune down to 90 million frequent features
  - About 200 are considered per possible edge
- Note: Even more features proposed at end of talk

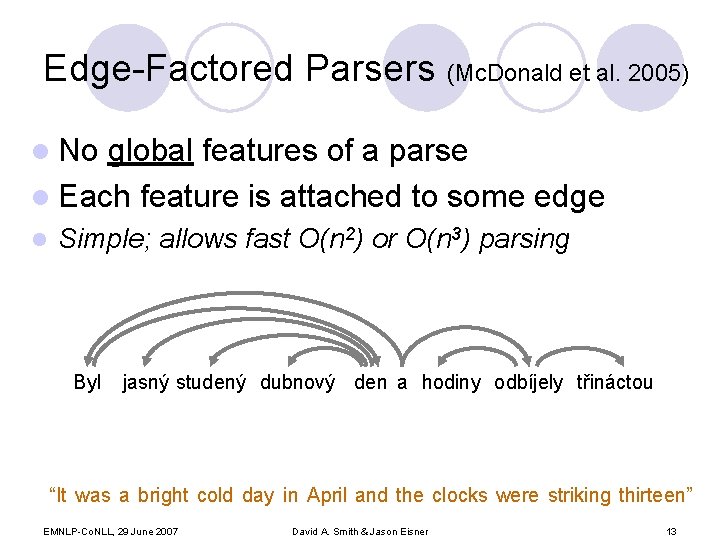

Edge-Factored Parsers (McDonald et al. 2005)
- No global features of a parse
- Each feature is attached to some edge
- Simple; allows fast O(n²) or O(n³) parsing

Byl jasný studený dubnový den a hodiny odbíjely třináctou
“It was a bright cold day in April and the clocks were striking thirteen”

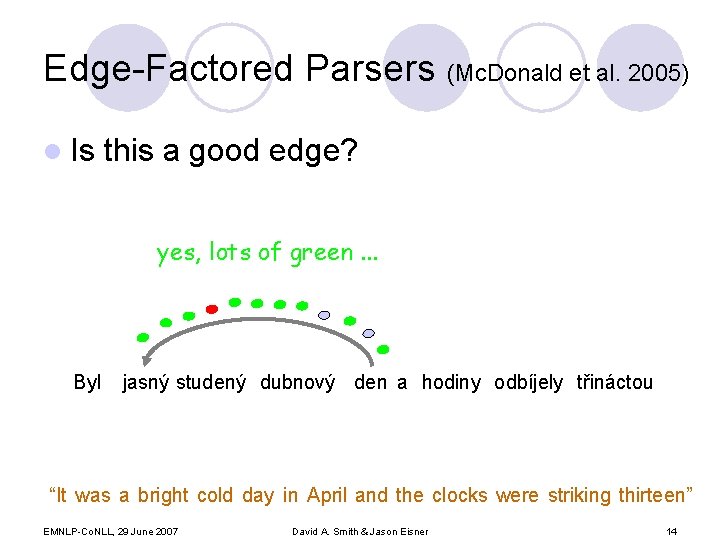

Edge-Factored Parsers (McDonald et al. 2005)
- Is this a good edge? Yes, lots of green...

Byl jasný studený dubnový den a hodiny odbíjely třináctou
“It was a bright cold day in April and the clocks were striking thirteen”

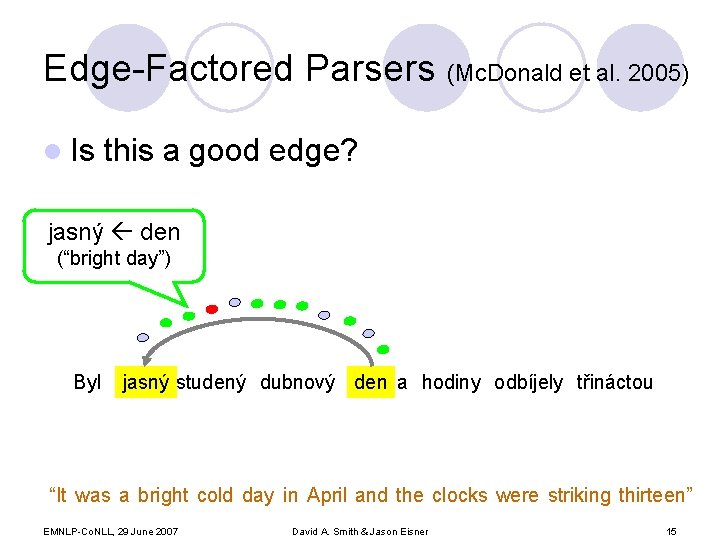

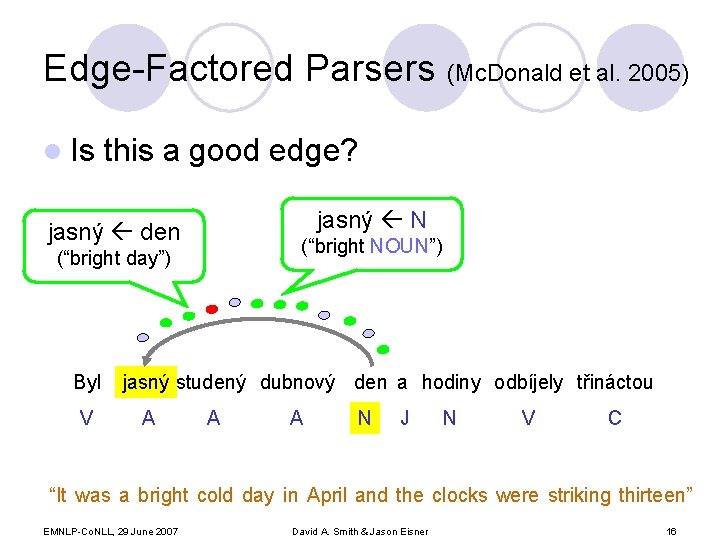

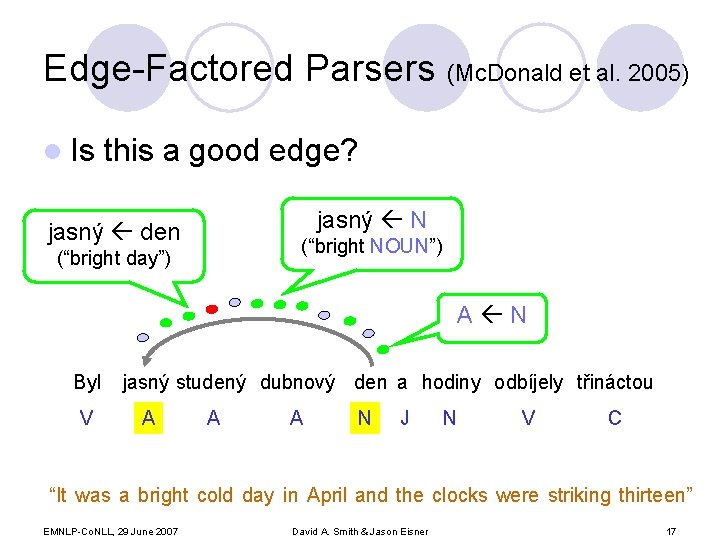

Edge-Factored Parsers (McDonald et al. 2005)
- Is this a good edge?
  - jasný den (“bright day”)

Byl jasný studený dubnový den a hodiny odbíjely třináctou
“It was a bright cold day in April and the clocks were striking thirteen”

Edge-Factored Parsers (McDonald et al. 2005)
- Is this a good edge?
  - jasný den (“bright day”)
  - jasný N (“bright NOUN”)

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
“It was a bright cold day in April and the clocks were striking thirteen”

Edge-Factored Parsers (McDonald et al. 2005)
- Is this a good edge?
  - jasný den (“bright day”)
  - jasný N (“bright NOUN”)
  - A N

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
“It was a bright cold day in April and the clocks were striking thirteen”

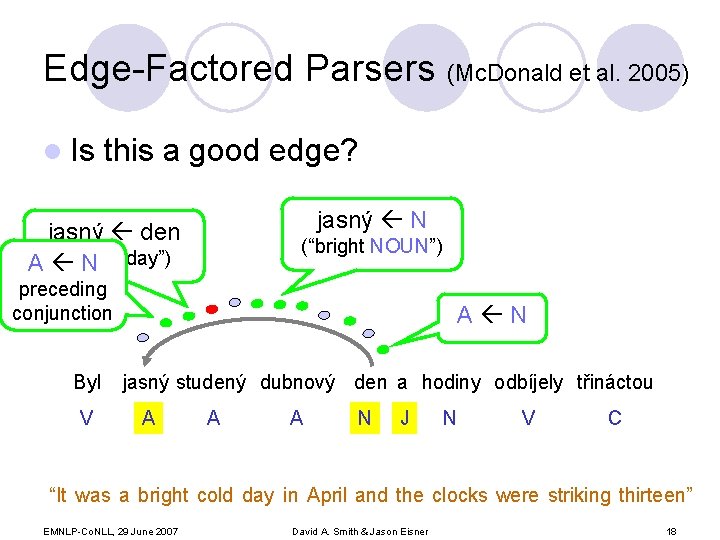

Edge-Factored Parsers (McDonald et al. 2005)
- Is this a good edge?
  - jasný den (“bright day”)
  - jasný N (“bright NOUN”)
  - A N
  - A N preceding conjunction

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
“It was a bright cold day in April and the clocks were striking thirteen”

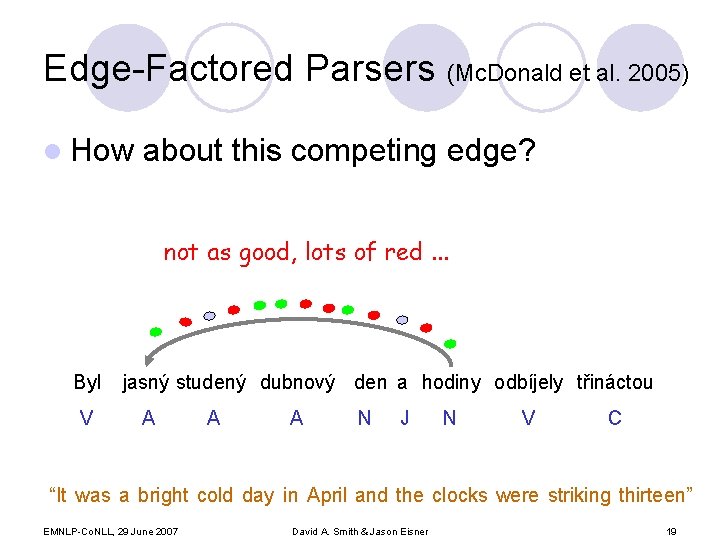

Edge-Factored Parsers (McDonald et al. 2005)
- How about this competing edge? Not as good, lots of red...

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
“It was a bright cold day in April and the clocks were striking thirteen”

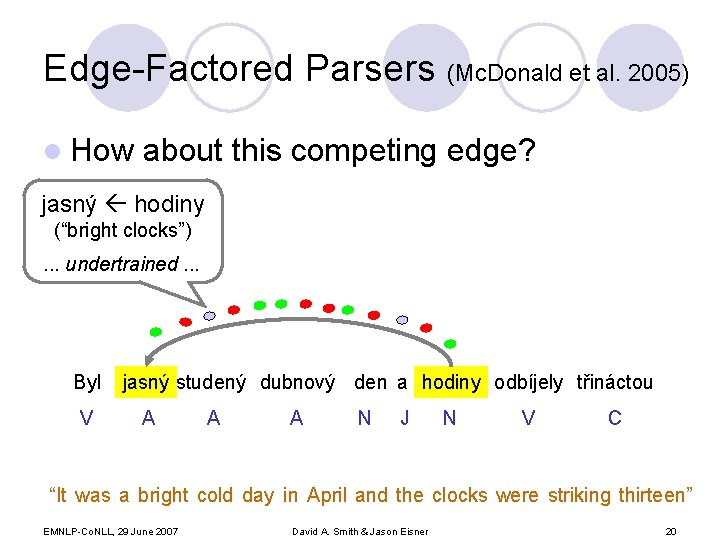

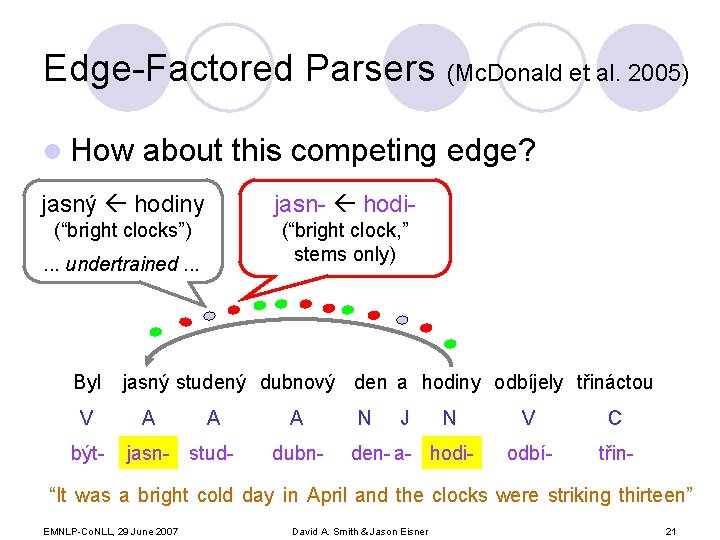

Edge-Factored Parsers (McDonald et al. 2005)
- How about this competing edge?
  - jasný hodiny (“bright clocks”) ...undertrained...

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
“It was a bright cold day in April and the clocks were striking thirteen”

Edge-Factored Parsers (McDonald et al. 2005)
- How about this competing edge?
  - jasný hodiny (“bright clocks”) ...undertrained...
  - jasn- hodi- (“bright clock,” stems only)

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
Stems: být- jasn- stud- dubn- den- a- hodi- odbí- třin-
“It was a bright cold day in April and the clocks were striking thirteen”

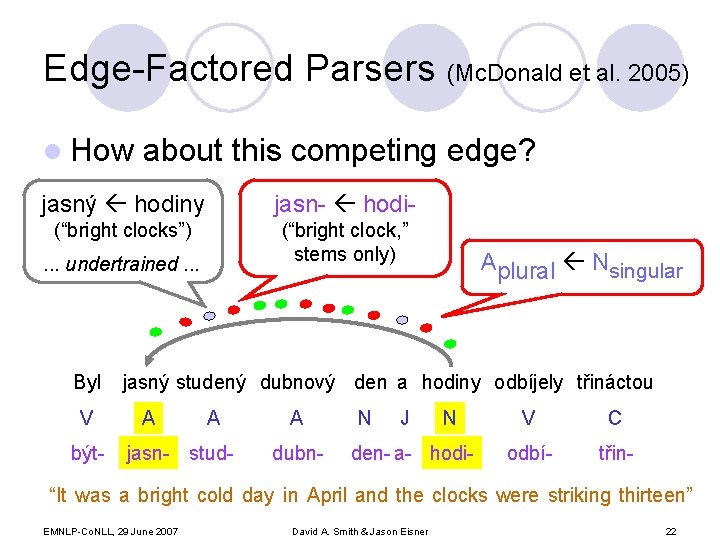

Edge-Factored Parsers (McDonald et al. 2005)
- How about this competing edge?
  - jasný hodiny (“bright clocks”) ...undertrained...
  - jasn- hodi- (“bright clock,” stems only)
  - A(plural) N(singular)

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
Stems: být- jasn- stud- dubn- den- a- hodi- odbí- třin-
“It was a bright cold day in April and the clocks were striking thirteen”

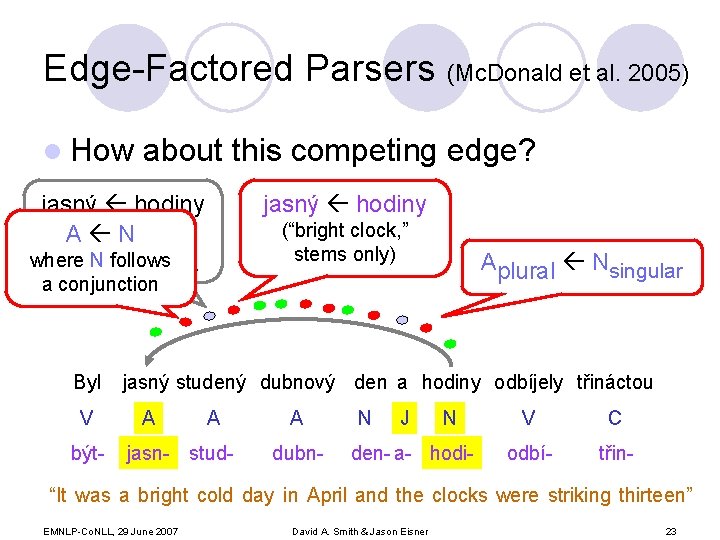

Edge-Factored Parsers (McDonald et al. 2005)
- How about this competing edge?
  - jasný hodiny (“bright clocks”) ...undertrained...
  - (“bright clock,” stems only)
  - A(plural) N(singular)
  - A N where N follows a conjunction

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
Stems: být- jasn- stud- dubn- den- a- hodi- odbí- třin-
“It was a bright cold day in April and the clocks were striking thirteen”

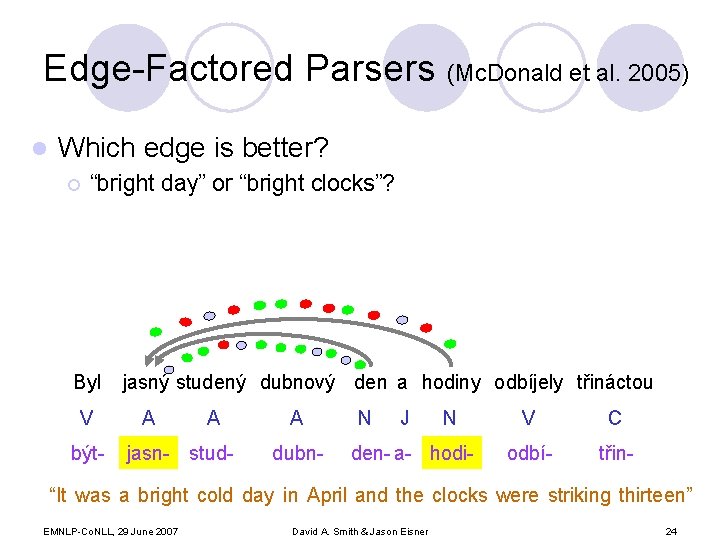

Edge-Factored Parsers (McDonald et al. 2005)
- Which edge is better?
  - “bright day” or “bright clocks”?

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
Stems: být- jasn- stud- dubn- den- a- hodi- odbí- třin-
“It was a bright cold day in April and the clocks were striking thirteen”

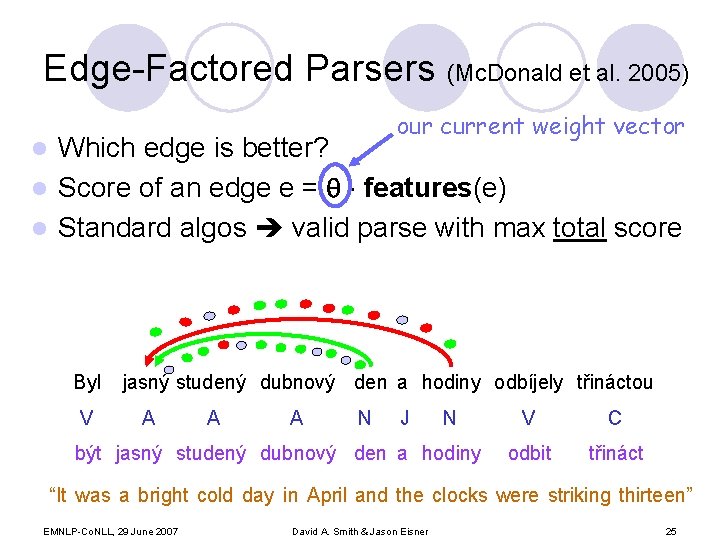

Edge-Factored Parsers (McDonald et al. 2005)
- Which edge is better?
- Score of an edge e = θ · features(e), where θ is our current weight vector
- Standard algos → valid parse with max total score

Byl/V jasný/A studený/A dubnový/A den/N a/J hodiny/N odbíjely/V třináctou/C
Lemmas: být jasný studený dubnový den a hodiny odbit třináct
“It was a bright cold day in April and the clocks were striking thirteen”

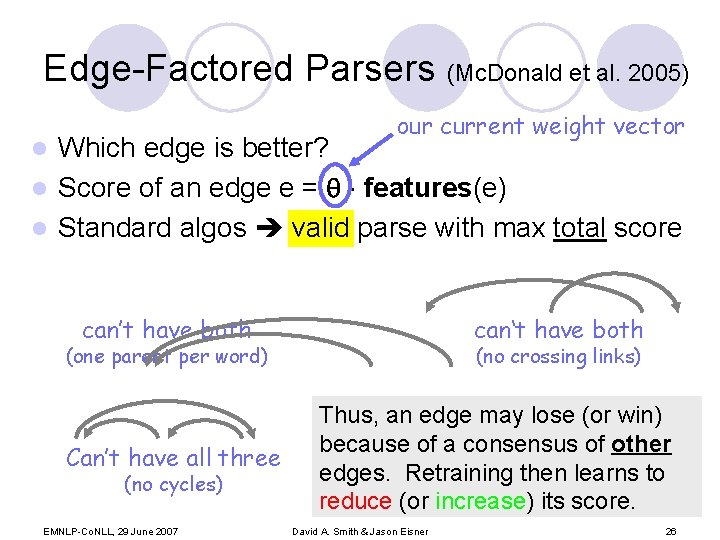

Edge-Factored Parsers (McDonald et al. 2005)
- Which edge is better?
- Score of an edge e = θ · features(e), where θ is our current weight vector
- Standard algos → valid parse with max total score
  - Can’t have both (one parent per word)
  - Can’t have both (no crossing links)
  - Can’t have all three (no cycles)
- Thus, an edge may lose (or win) because of a consensus of other edges. Retraining then learns to reduce (or increase) its score.
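The edge score and the three structural constraints named on this slide can be sketched in a few lines. This is an illustration under stated assumptions: the feature names and weights are invented, the head-array encoding (`heads[i]` is the parent of word `i`, `-1` marks the root) is a common convention rather than something the slides specify, and requiring exactly one root is an extra assumption. The search for the best-scoring tree itself is not shown.

```python
from typing import Dict, Iterable, List


def edge_score(weights: Dict[str, float], features: Iterable[str]) -> float:
    """Dot product of the weight vector with an edge's binary features.

    Features missing from the weight vector (unseen in training) contribute 0.
    """
    return sum(weights.get(name, 0.0) for name in features)


def is_tree(heads: List[int]) -> bool:
    """One parent per word is built into the encoding; check root and cycles."""
    if sum(1 for h in heads if h == -1) != 1:  # assumption: exactly one root
        return False
    for start in range(len(heads)):
        seen = set()
        node = start
        while node != -1:
            if node in seen:
                return False  # walked back onto our own path: a cycle
            seen.add(node)
            node = heads[node]
    return True


def is_projective(heads: List[int]) -> bool:
    """True if no two edges cross when drawn above the sentence."""
    spans = [(min(h, m), max(h, m)) for m, h in enumerate(heads) if h != -1]
    return not any(a < c < b < d for a, b in spans for c, d in spans)


# Hypothetical weights for a few of the features discussed on the slides.
toy_weights = {"jasný_den": 1.5, "A_N": 0.5, "A_plural_N_singular": -2.0}
good_edge = edge_score(toy_weights, ["jasný_den", "A_N", "never_seen"])
bad_edge = edge_score(toy_weights, ["A_N", "A_plural_N_singular"])
```

With these made-up weights the “bright day” edge scores higher than the competing edge, and the validity checks reject head arrays that contain a cycle or crossing links.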

Only Connect…
- Inputs to learning: training trees, raw text, parallel & comparable corpora, out-of-domain text
- Learning → trained parser
- Downstream uses: textual entailment, IE, MT, LM, lexical semantics

Can we recast this declaratively?
- Only retrain on “good” parses... at least, those the parser itself thinks are good.

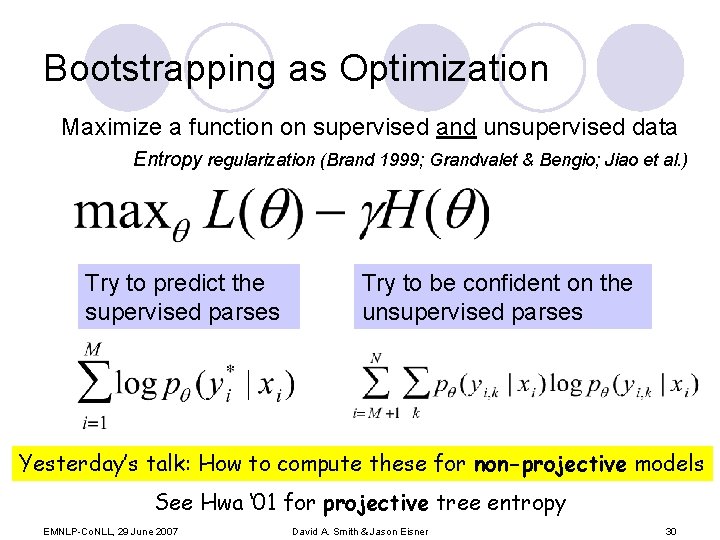

Bootstrapping as Optimization
- Maximize a function on supervised and unsupervised data
  - Try to predict the supervised parses
  - Try to be confident on the unsupervised parses
- Entropy regularization (Brand 1999; Grandvalet & Bengio; Jiao et al.)
- Yesterday’s talk: How to compute these for non-projective models
- See Hwa ’01 for projective tree entropy
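The slide's formula did not survive extraction. A generic entropy-regularized objective of the kind described by the two "try to" bullets would look like the following; the notation is assumed here (supervised pairs $(x_i, y_i^*)$, unsupervised sentences $x_j$, and a trade-off weight $\gamma$) and may differ from the slide's.

```latex
\max_{\theta}\;
  \underbrace{\sum_{i \in \mathrm{sup}} \log p_\theta(y_i^* \mid x_i)}_{\text{predict the supervised parses}}
  \;-\;
  \gamma \underbrace{\sum_{j \in \mathrm{unsup}} H\big(p_\theta(\cdot \mid x_j)\big)}_{\text{be confident on the unsupervised parses}},
\qquad
H\big(p_\theta(\cdot \mid x_j)\big) = -\sum_{y} p_\theta(y \mid x_j)\,\log p_\theta(y \mid x_j)
```

The sum over $y$ ranges over all parses of $x_j$, which is why computing the entropy term needs the dynamic-programming or matrix-tree machinery the slide points to.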

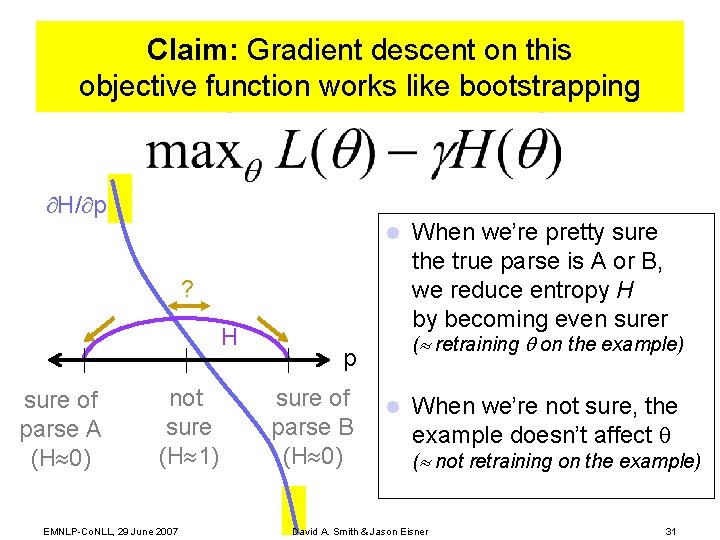

Claim: Gradient descent on this objective function works like bootstrapping
- Plot: entropy H and its gradient against p, from “sure of parse A” (H ≈ 0) through “not sure” (H ≈ 1) to “sure of parse B” (H ≈ 0)
- When we’re pretty sure the true parse is A or B, we reduce entropy H by becoming even surer (≈ retraining θ on the example)
- When we’re not sure, the example doesn’t affect θ (≈ not retraining on the example)
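The claim can be checked numerically in the simplest possible case. The sketch below assumes only two candidate parses, A and B, with p = P(A) = sigmoid(z) for a single logit z; that parameterization and the function names are assumptions made for illustration, not the parser's actual model.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def binary_entropy_bits(p: float) -> float:
    """Entropy in bits of a two-parse distribution (p, 1 - p)."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


def entropy_gradient_wrt_logit(z: float) -> float:
    """dH/dz in bits when p = sigmoid(z).

    Chain rule: dH/dp = log2((1 - p) / p) = -z / ln 2 and dp/dz = p * (1 - p).
    """
    p = sigmoid(z)
    return -z * p * (1.0 - p) / math.log(2.0)


def entropy_descent_step(z: float, learning_rate: float = 1.0) -> float:
    """One gradient-descent step on the entropy of this single example."""
    return z - learning_rate * entropy_gradient_wrt_logit(z)
```

At z = 0 (p = 0.5, H = 1 bit) the gradient is exactly zero, so an example the model is unsure about leaves the parameter alone. At z = 2 (p ≈ 0.88) the step pushes z further from zero, so the model becomes surer of the parse it already prefers. In this two-parse case the gradient also fades again once p is extremely close to 0 or 1.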

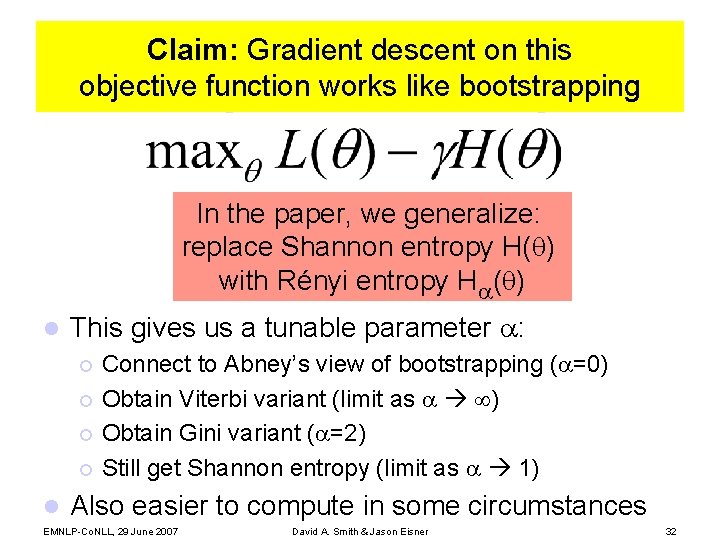

Claim: Gradient descent on this objective function works like bootstrapping
- In the paper, we generalize: replace Shannon entropy H(·) with Rényi entropy H_α(·)
- This gives us a tunable parameter α:
  - Connect to Abney’s view of bootstrapping (α = 0)
  - Obtain Viterbi variant (limit as α → ∞)
  - Obtain Gini variant (α = 2)
  - Still get Shannon entropy (limit as α → 1)
- Also easier to compute in some circumstances
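For reference, the standard definition of Rényi entropy is H_α(p) = log(Σ p^α) / (1 − α). The sketch below evaluates it on an explicit list of parse probabilities, which is an assumption made for illustration: a real parser would have to sum over exponentially many parses rather than enumerate them.

```python
import math
from typing import Sequence


def renyi_entropy(probs: Sequence[float], alpha: float) -> float:
    """Rényi entropy (in nats) of a distribution given as a list of probabilities."""
    support = [p for p in probs if p > 0.0]
    if alpha == 1.0:
        # Limit as alpha -> 1: Shannon entropy.
        return -sum(p * math.log(p) for p in support)
    if math.isinf(alpha):
        # Limit as alpha -> infinity: only the single best ("Viterbi") parse matters.
        return -math.log(max(support))
    # alpha = 0 gives log(number of parses); alpha = 2 gives -log(sum of p^2).
    return math.log(sum(p ** alpha for p in support)) / (1.0 - alpha)


toy_parse_probs = [0.5, 0.25, 0.25]
by_alpha = {a: renyi_entropy(toy_parse_probs, a) for a in (0.0, 1.0, 2.0, math.inf)}
```

On the toy distribution the four special cases come out as log 3, 1.5 · log 2, −log 0.375, and log 2 respectively, decreasing as α grows.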

Experimental Questions
- Are confident parses (or edges) actually good for retraining?
- Does bootstrapping help accuracy?
- What is being learned?

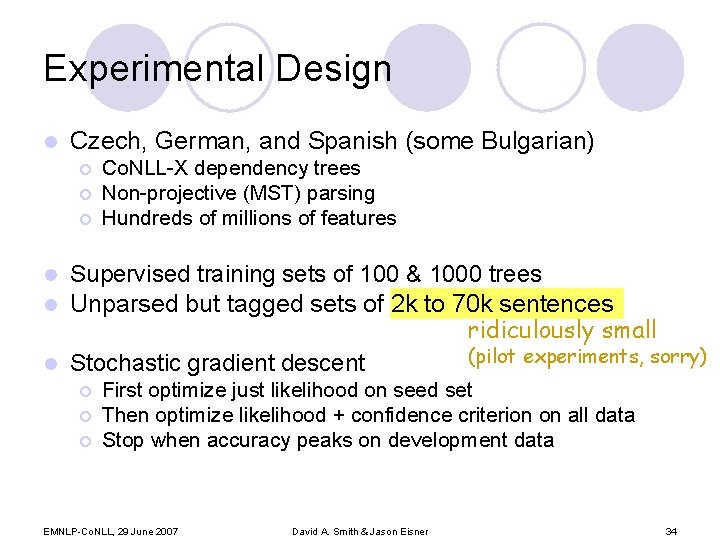

Experimental Design
- Czech, German, and Spanish (some Bulgarian)
  - CoNLL-X dependency trees
  - Non-projective (MST) parsing
  - Hundreds of millions of features
- Supervised training sets of 100 & 1000 trees
- Unparsed but tagged sets of 2k to 70k sentences
- Callout on the data sizes: ridiculously small (pilot experiments, sorry)
- Stochastic gradient descent
  - First optimize just likelihood on seed set
  - Then optimize likelihood + confidence criterion on all data
  - Stop when accuracy peaks on development data
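The two-stage schedule in the last bullet group can be illustrated on a toy problem. Everything below is an assumption made for the sketch: a one-parameter logistic model p(y=1|x) = sigmoid(w·x) stands in for the parser, negative Shannon entropy stands in for the confidence criterion, `gamma` is a hypothetical trade-off weight, and a fixed number of passes replaces stopping at peak development accuracy.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_two_stage(labeled: List[Tuple[float, int]], unlabeled: List[float],
                    gamma: float = 1.0, lr: float = 0.1, passes: int = 200):
    """Return (w after stage 1, w after stage 2) for p(y=1|x) = sigmoid(w * x)."""
    w = 0.0
    # Stage 1: stochastic gradient ascent on the seed-set log-likelihood only.
    for _ in range(passes):
        for x, y in labeled:
            w += lr * (y - sigmoid(w * x)) * x
    w_seed = w
    # Stage 2: likelihood on the seed set plus confidence on the unlabeled data.
    for _ in range(passes):
        for x, y in labeled:
            w += lr * (y - sigmoid(w * x)) * x
        for x in unlabeled:
            z = w * x
            p = sigmoid(z)
            # Gradient of the negative entropy w.r.t. w is z * p * (1 - p) * x.
            w += lr * gamma * z * p * (1.0 - p) * x
    return w_seed, w


w_seed, w_final = train_two_stage(labeled=[(2.0, 1), (-2.0, 0)],
                                  unlabeled=[3.0, -3.0, 0.5])
```

Starting stage 2 from the seed-trained weight matters: the confidence term only sharpens whatever decision the model already leans toward, so it needs a sensible starting point.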

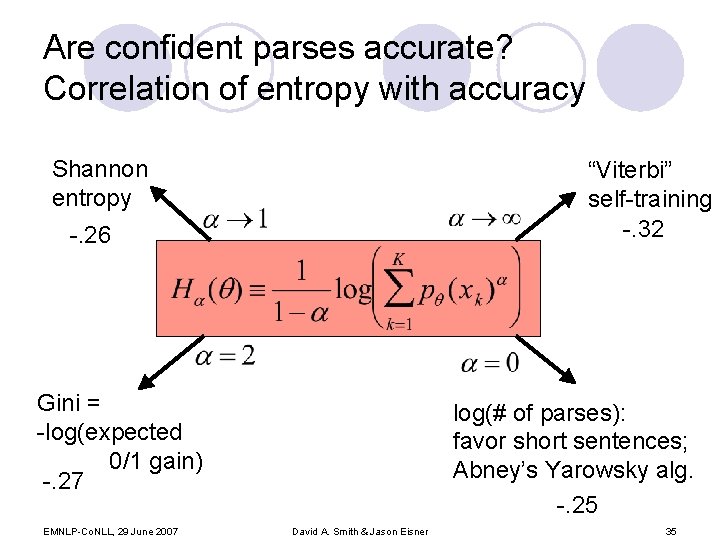

Are confident parses accurate?
Correlation of entropy with accuracy
- Shannon entropy; “Viterbi” self-training: −.32, −.26
- Gini = −log(expected 0/1 gain): −.27
- log(# of parses): −.25 (favors short sentences; Abney’s Yarowsky algorithm)

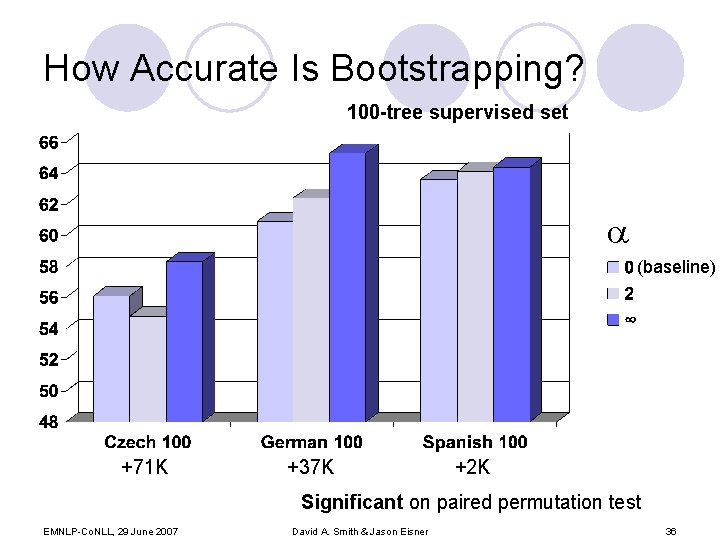

How Accurate Is Bootstrapping?
- Chart: 100-tree supervised set (baseline) compared with bars labeled +2K, +37K, and +71K
- Significant on paired permutation test
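A paired permutation test of the general kind named on this slide can be sketched as follows. The slide does not say which units were paired or how many permutations were drawn, so the per-sentence scores, the Monte Carlo approximation with `trials` random sign flips, and the add-one p-value estimate are all assumptions of this sketch.

```python
import random
from typing import Sequence


def paired_permutation_test(scores_a: Sequence[float], scores_b: Sequence[float],
                            trials: int = 2000, seed: int = 0) -> float:
    """Two-sided p-value for the difference between two paired score lists.

    Under the null hypothesis the two systems are interchangeable, so each
    per-item difference is equally likely to carry either sign.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("score lists must be paired item by item")
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(sum(diffs))
    rng = random.Random(seed)
    at_least_as_extreme = 0
    for _ in range(trials):
        total = sum(d if rng.random() < 0.5 else -d for d in diffs)
        if abs(total) >= observed - 1e-12:
            at_least_as_extreme += 1
    # Add-one smoothing keeps the estimate away from an impossible p = 0.
    return (at_least_as_extreme + 1) / (trials + 1)


same = paired_permutation_test([0.7, 0.8, 0.9], [0.7, 0.8, 0.9])
better = paired_permutation_test([1.0] * 20, [0.0] * 20)
```

Identical systems give a p-value of 1, while a system that wins on every one of 20 items gives a p-value far below 0.05.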

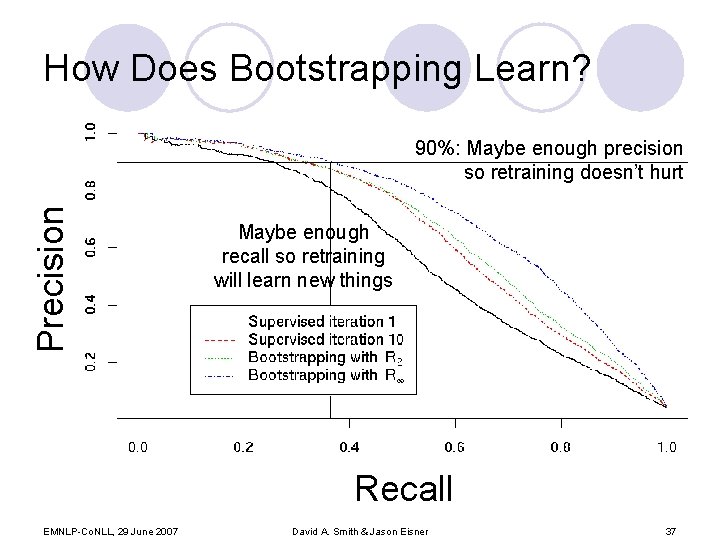

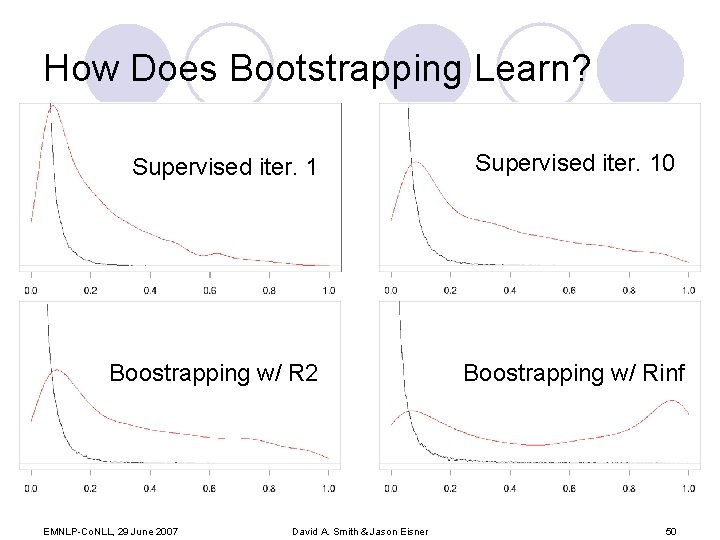

How Does Bootstrapping Learn?
- Plot axes: precision vs. recall
- 90%: Maybe enough precision so retraining doesn’t hurt
- Maybe enough recall so retraining will learn new things

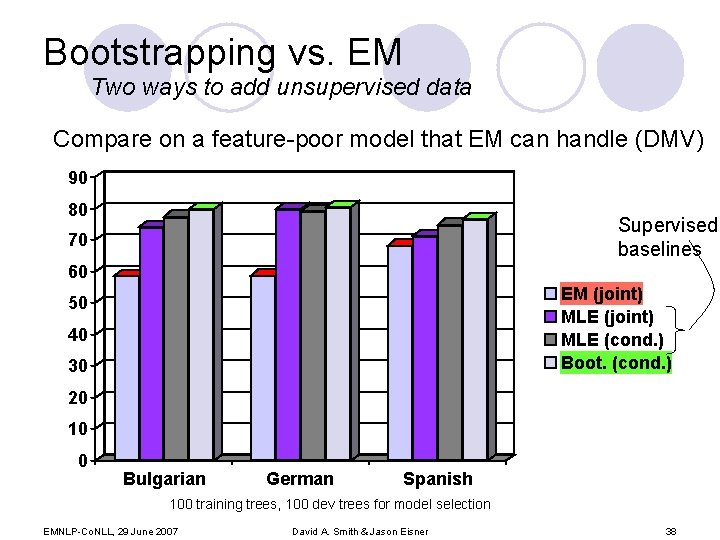

Bootstrapping vs. EM
- Two ways to add unsupervised data
- Compare on a feature-poor model that EM can handle (DMV)
- Bar chart by language (Bulgarian, German, Spanish); legend: supervised baselines, EM (joint), MLE (cond.), Boot. (cond.)
- 100 training trees, 100 dev trees for model selection

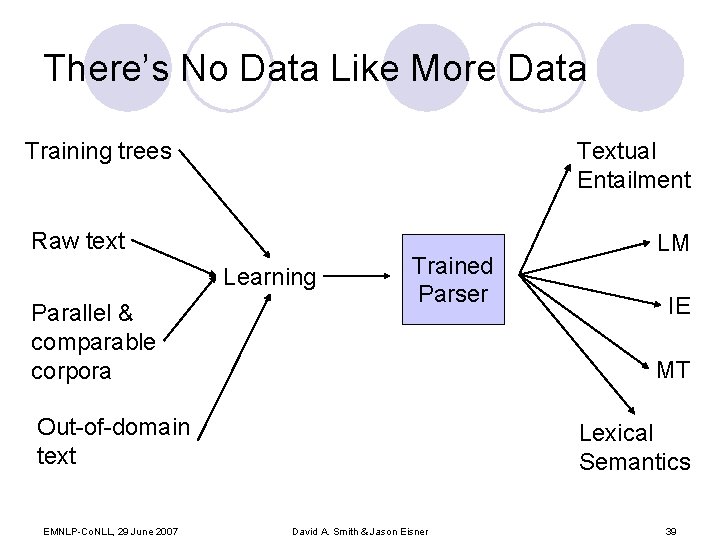

There’s No Data Like More Data
- Inputs to learning: training trees, raw text, parallel & comparable corpora, out-of-domain text
- Learning → trained parser
- Downstream uses: textual entailment, IE, MT, LM, lexical semantics

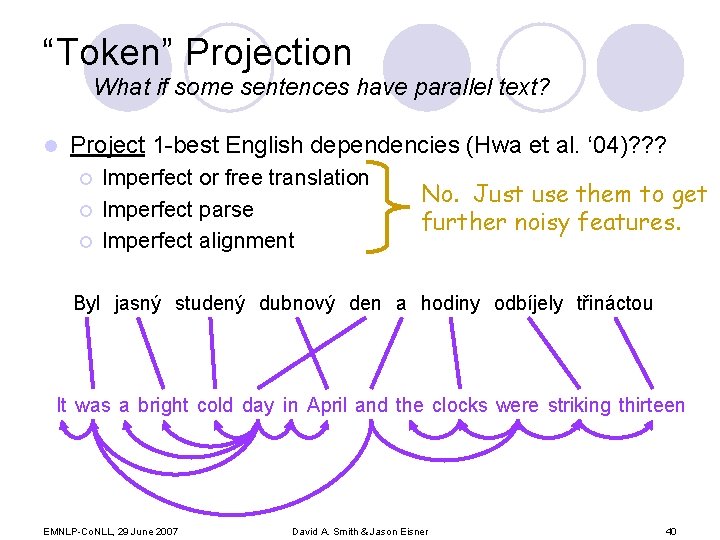

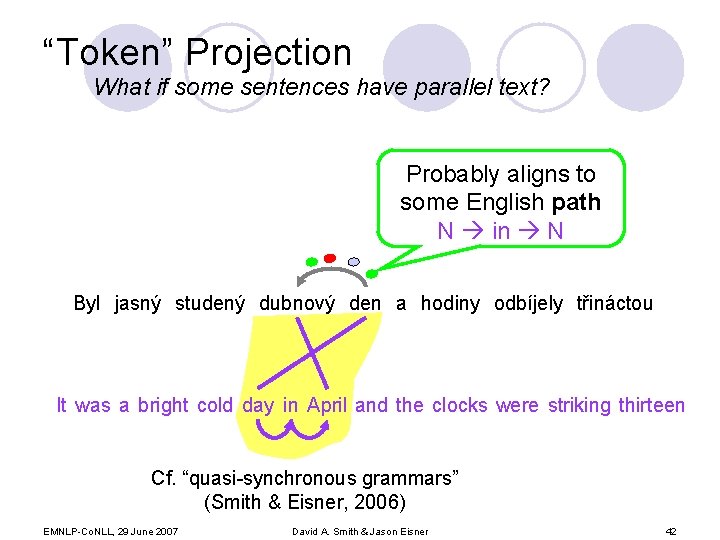

“Token” Projection
- What if some sentences have parallel text?
- Project 1-best English dependencies (Hwa et al. ’04)???
  - Imperfect or free translation
  - Imperfect parse
  - Imperfect alignment
- No. Just use them to get further noisy features.

Byl jasný studený dubnový den a hodiny odbíjely třináctou
It was a bright cold day in April and the clocks were striking thirteen

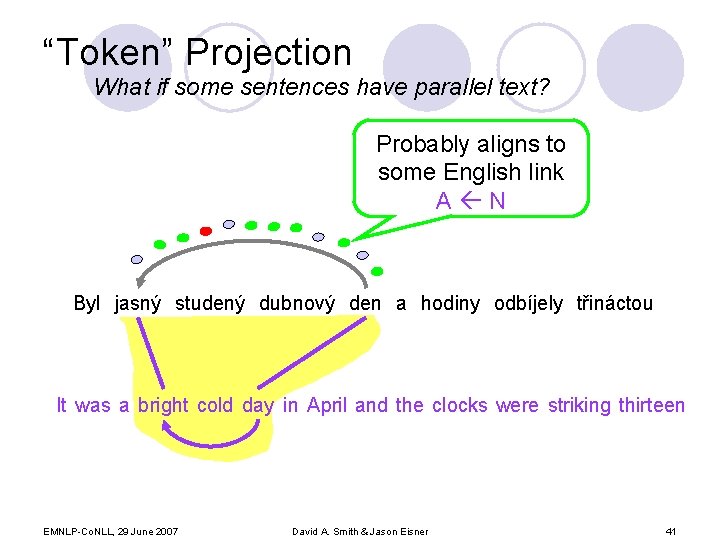

“Token” Projection
- What if some sentences have parallel text?
- A N: probably aligns to some English link

Byl jasný studený dubnový den a hodiny odbíjely třináctou
It was a bright cold day in April and the clocks were striking thirteen

“Token” Projection
- What if some sentences have parallel text?
- N in N: probably aligns to some English path
- Cf. “quasi-synchronous grammars” (Smith & Eisner, 2006)

Byl jasný studený dubnový den a hodiny odbíjely třináctou
It was a bright cold day in April and the clocks were striking thirteen
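One way to turn an aligned English parse into a noisy feature on a candidate Czech edge is sketched below. The function name, the feature strings, the word alignment, and the partial English parse are all hypothetical, and the sketch only follows upward paths in the English tree (from the aligned modifier to the aligned head), which is a simplification.

```python
from typing import Dict, Optional


def projection_feature(cz_head: int, cz_mod: int,
                       alignment: Dict[int, int],
                       en_heads: Dict[int, Optional[int]]) -> str:
    """Name a feature describing how a Czech edge maps onto the English parse.

    alignment maps Czech word index -> English word index.
    en_heads maps English word index -> parent index (None for the root).
    """
    en_head = alignment.get(cz_head)
    en_mod = alignment.get(cz_mod)
    if en_head is None or en_mod is None:
        return "en_unaligned"
    node, distance = en_mod, 0
    # Climb from the aligned modifier toward the root, looking for the aligned head.
    while node is not None and distance <= len(en_heads):
        if node == en_head:
            if distance == 0:
                return "en_same_word"
            return "en_link" if distance == 1 else f"en_path_{distance}"
        node = en_heads.get(node)
        distance += 1
    return "en_no_path"


# Hypothetical English parse of "It was a bright cold day in April" (indices 0-7).
toy_en_heads = {0: 1, 1: None, 2: 5, 3: 5, 4: 5, 5: 1, 6: 5, 7: 6}
# Hypothetical alignment for "Byl jasný studený dubnový den" (indices 0-4).
toy_alignment = {0: 1, 1: 3, 2: 4, 3: 7, 4: 5}

link = projection_feature(4, 1, toy_alignment, toy_en_heads)   # den -> jasný
path = projection_feature(4, 3, toy_alignment, toy_en_heads)   # den -> dubnový
wrong = projection_feature(1, 4, toy_alignment, toy_en_heads)  # jasný -> den
```

Because the result is only a feature, a wrong alignment or a free translation costs little: the learner decides how much weight a string such as `en_link` deserves.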

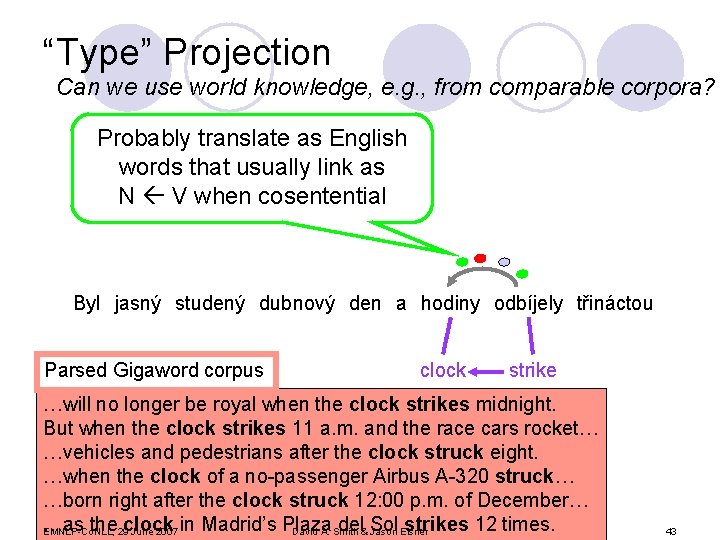

“Type” Projection
- Can we use world knowledge, e.g., from comparable corpora?
- Probably translate as English words that usually link as N V when cosentential

Byl jasný studený dubnový den a hodiny odbíjely třináctou

Parsed Gigaword corpus: clock, strike
- …will no longer be royal when the clock strikes midnight.
- But when the clock strikes 11 a.m. and the race cars rocket…
- …vehicles and pedestrians after the clock struck eight.
- …when the clock of a no-passenger Airbus A-320 struck…
- …born right after the clock struck 12:00 p.m. of December…
- …as the clock in Madrid’s Plaza del Sol strikes 12 times.

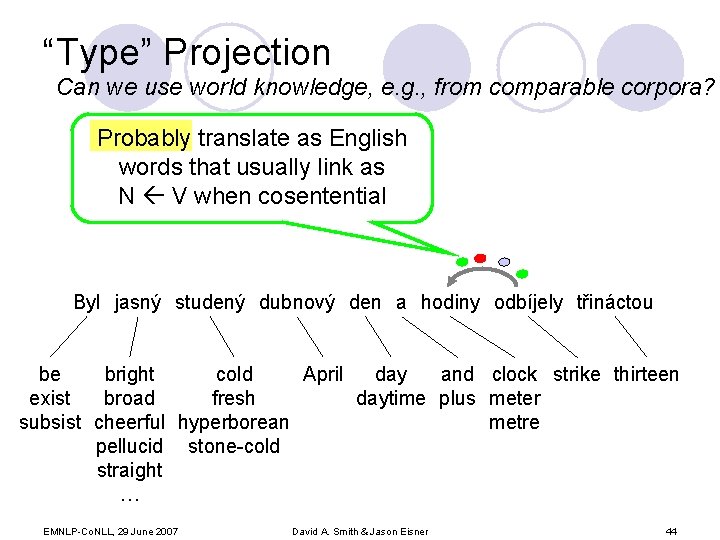

“Type” Projection
- Can we use world knowledge, e.g., from comparable corpora?
- Probably translate as English words that usually link as N V when cosentential

Byl jasný studený dubnový den a hodiny odbíjely třináctou

Candidate English translations shown under the Czech words: be, cold, April, day, and, clock, strike, thirteen, bright, exist, fresh, daytime, plus, meter, broad, subsist, cheerful, hyperborean, metre, pellucid, stone-cold, straight, …

Conclusions
- Declarative view of bootstrapping as entropy minimization
- Improvements in parser accuracy with feature-rich models
- Easily added features from alternative data sources, e.g. comparable text
- In future: consider also the WSD decision list learner: is it important for learning robust feature weights?

Thanks
- Noah Smith
- Keith Hall
- The Anonymous Reviewers
- Ryan McDonald for making his code available

Extra slides …

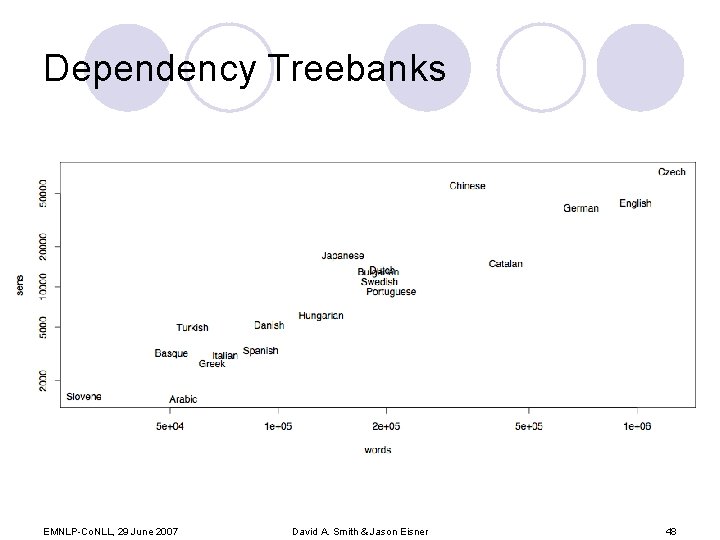

Dependency Treebanks

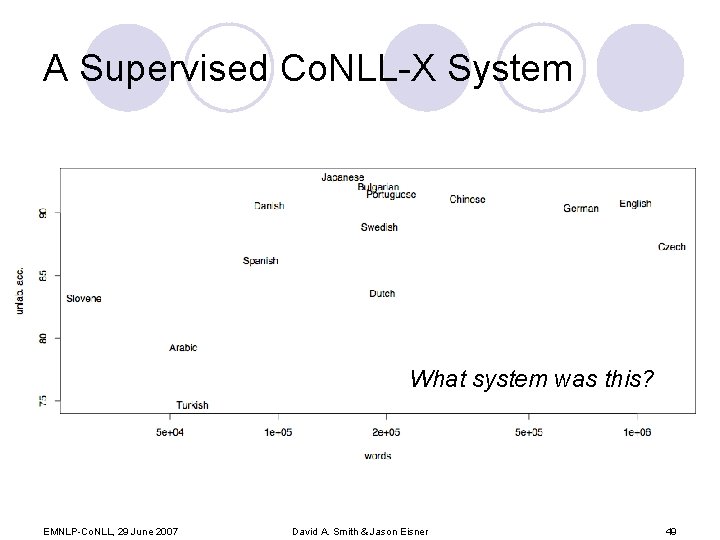

A Supervised CoNLL-X System
- What system was this?

How Does Bootstrapping Learn?
- Panels: Supervised iter. 1; Supervised iter. 10; Bootstrapping w/ R2; Bootstrapping w/ R∞

![How Does Bootstrapping Learn?](http://slidetodoc.com/presentation_image_h2/18cd899795d726d51c993cd35e1fb614/image-50.jpg)

How Does Bootstrapping Learn?

| Updated | M feat. | Acc. [%] |
|---|---|---|
| all | 15.5 | 64.3 |
| none | 0 | 60.9 |
| seed | 1.4 | 64.1 |
| non-seed | 14.1 | 44.7 |
| non-lexical | 3.5 | 64.4 |
| lexical | 12.0 | 59.9 |
| non-bilexical | 2.9 | 61.0 |
| bilexical | 12.6 | 64.4 |

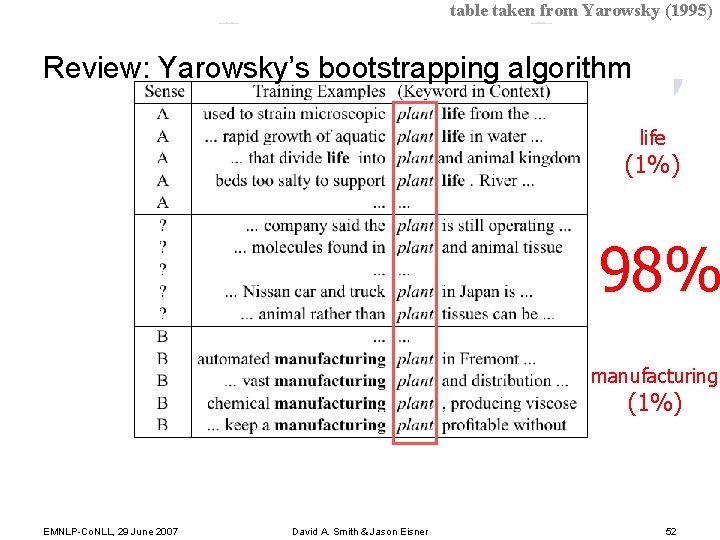

Review: Yarowsky’s bootstrapping algorithm
- Target word: plant
- Seed senses: life (1%), manufacturing (1%); remaining 98%
- Table taken from Yarowsky (1995)

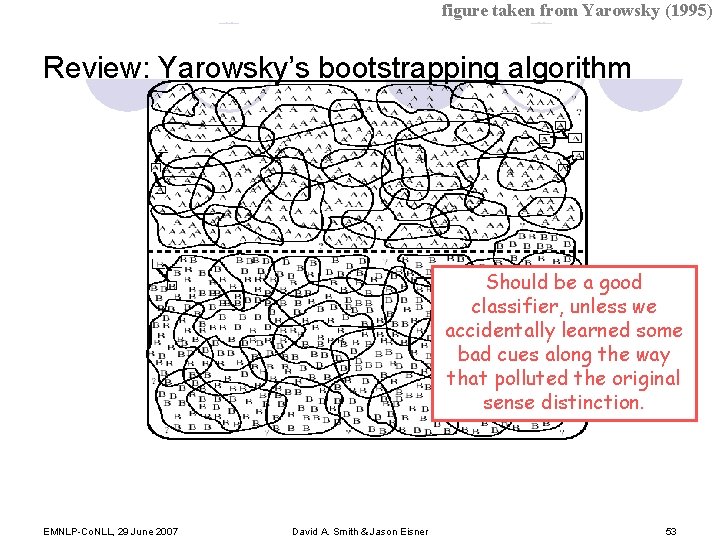

Review: Yarowsky’s bootstrapping algorithm
- Should be a good classifier, unless we accidentally learned some bad cues along the way that polluted the original sense distinction.
- Figure taken from Yarowsky (1995)

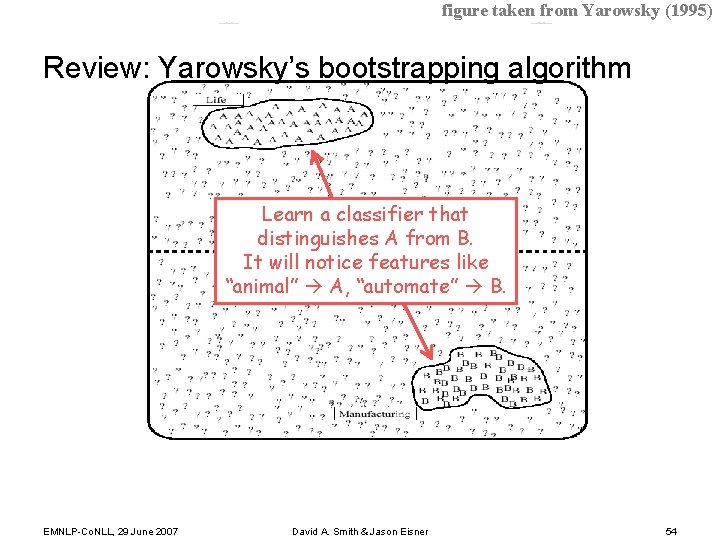

Review: Yarowsky’s bootstrapping algorithm
- Learn a classifier that distinguishes A from B.
- It will notice features like “animal” → A, “automate” → B.
- Figure taken from Yarowsky (1995)

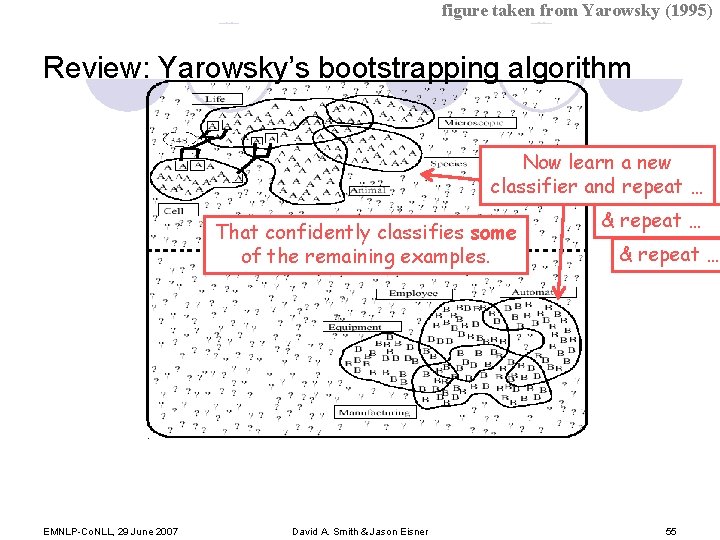

Review: Yarowsky’s bootstrapping algorithm
- That confidently classifies some of the remaining examples.
- Now learn a new classifier and repeat … & repeat …
- Figure taken from Yarowsky (1995)

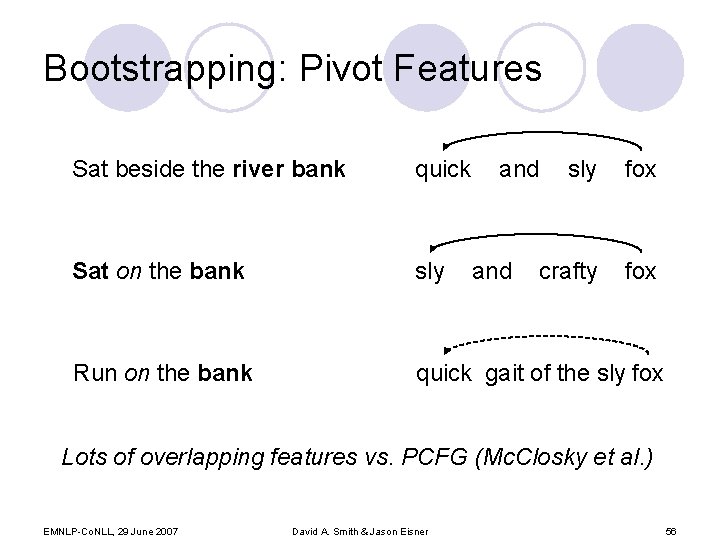

Bootstrapping: Pivot Features
- Sat beside the river bank; Sat on the bank; Run on the bank
- quick and; sly; quick gait of the sly fox; and sly fox; crafty fox
- Lots of overlapping features vs. PCFG (McClosky et al.)

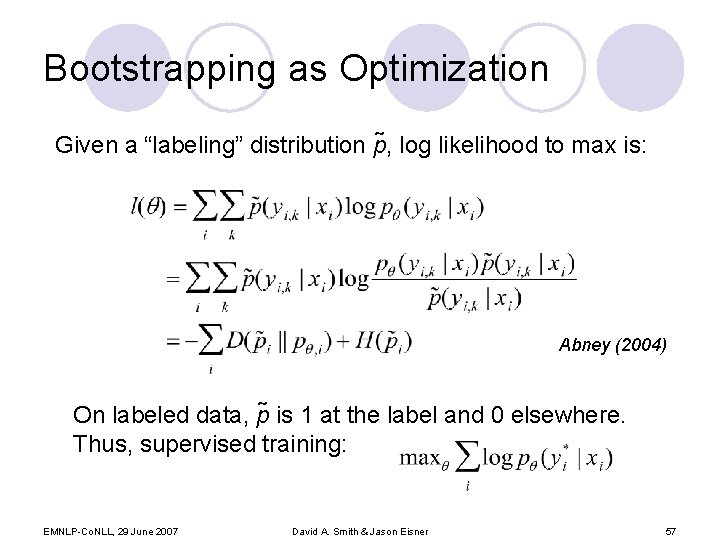

Bootstrapping as Optimization
- Given a “labeling” distribution p̃, log likelihood to max is: (Abney 2004)
- On labeled data, p̃ is 1 at the label and 0 elsewhere. Thus, supervised training:
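The two formulas on this slide were images and are not preserved. Under assumed notation (inputs $x_i$, candidate labels or parses $y$, model $p_\theta$, labeling distribution $\tilde p$, gold label $y_i^*$), a log-likelihood of the kind described would read as follows; this is a reconstruction sketch, not the slide's exact formula.

```latex
% Log-likelihood under a "labeling" distribution \tilde{p}:
\ell(\theta) \;=\; \sum_{i} \sum_{y} \tilde{p}(y \mid x_i)\, \log p_\theta(y \mid x_i)

% On labeled data \tilde{p}(y \mid x_i) = 1 if y = y_i^* and 0 otherwise,
% so the inner sum collapses to the usual supervised objective:
\ell_{\mathrm{sup}}(\theta) \;=\; \sum_{i} \log p_\theta(y_i^* \mid x_i)
```

Every term with $y \neq y_i^*$ is multiplied by zero, which is the step that takes the first line to the second.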

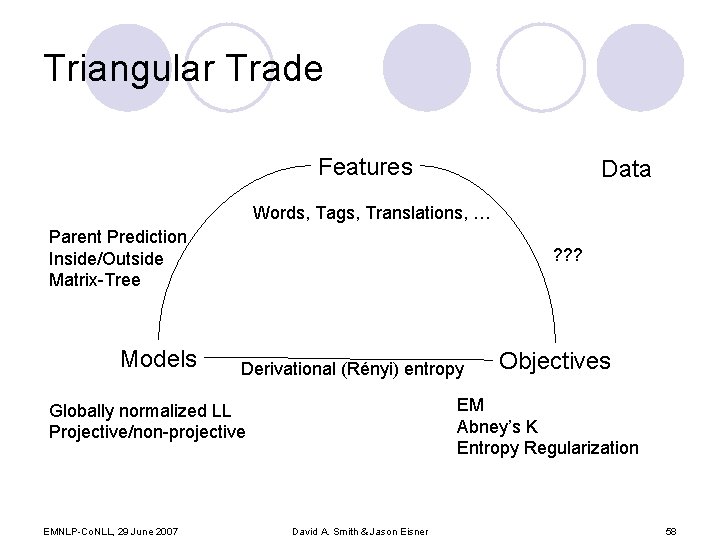

Triangular Trade
- Corner labels: Features, Data, Models, Objectives
- Items shown: Words, Tags, Translations, …; Parent Prediction; Inside/Outside; Matrix-Tree; ???; Derivational (Rényi) entropy; EM; Abney’s K; Entropy Regularization; Globally normalized LL; Projective/non-projective
