
BIPS Computational Research Division

Tuning Sparse Matrix Vector Multiplication for multi-core SMPs

Samuel Williams (1,2), Richard Vuduc (3), Leonid Oliker (1,2), John Shalf (2), Katherine Yelick (1,2), James Demmel (1,2)

(1) University of California Berkeley
(2) Lawrence Berkeley National Laboratory
(3) Georgia Institute of Technology

samw@cs.berkeley.edu

Overview

- Multicore is the de facto performance solution for the next decade
- Examined the Sparse Matrix Vector Multiplication (SpMV) kernel
  - Important HPC kernel
  - Memory intensive
  - Challenging for multicore
- Present two autotuned threaded implementations:
  - Pthread, cache-based implementation
  - Cell local store-based implementation
- Benchmarked performance across 4 diverse multicore architectures
  - Intel Xeon (Clovertown)
  - AMD Opteron
  - Sun Niagara 2
  - IBM Cell Broadband Engine
- Compare with a leading MPI implementation (PETSc) with an autotuned serial kernel (OSKI)

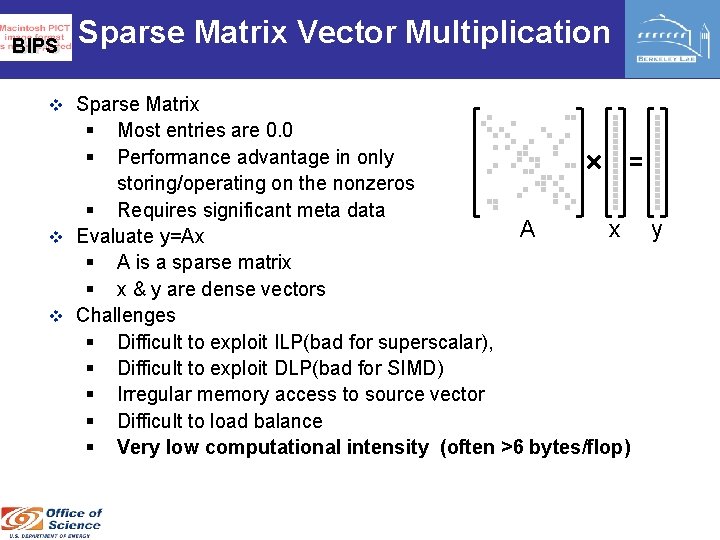

Sparse Matrix Vector Multiplication

- Sparse matrix
  - Most entries are 0.0
  - Performance advantage in only storing/operating on the nonzeros
  - Requires significant metadata
- Evaluate y = Ax
  - A is a sparse matrix
  - x and y are dense vectors
- Challenges
  - Difficult to exploit ILP (bad for superscalar)
  - Difficult to exploit DLP (bad for SIMD)
  - Irregular memory access to source vector
  - Difficult to load balance
  - Very low computational intensity (often >6 bytes/flop)
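As an illustration of the kernel and of where the 6 bytes/flop figure can come from, here is a minimal sketch in Python (the slides describe C implementations; the function names, the 8-byte value and 4-byte index sizes, and the 3x3 example matrix are assumptions made for this example). Each nonzero costs one multiply and one add but requires loading a value and a column index, so the matrix alone accounts for about 6 bytes per flop before vector and row-pointer traffic is counted.

```python
def spmv_csr(row_ptr, col_idx, values, x):
    """Compute y = A*x for a matrix A stored in compressed sparse row (CSR) form."""
    n_rows = len(row_ptr) - 1
    y = [0.0] * n_rows
    for i in range(n_rows):
        acc = 0.0
        # Nonzeros of row i occupy positions row_ptr[i] .. row_ptr[i+1]-1.
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[col_idx[k]]  # indirect, irregular read of x
        y[i] = acc
    return y


def bytes_per_flop(value_bytes=8, index_bytes=4):
    """Matrix traffic per flop, ignoring vector and row-pointer traffic.

    The default sizes (double-precision values, 32-bit indices) are assumptions.
    """
    flops_per_nonzero = 2  # one multiply and one add
    return (value_bytes + index_bytes) / flops_per_nonzero


# Hypothetical 3x3 example: [[4, 0, 1], [0, 2, 0], [3, 0, 5]]
row_ptr = [0, 2, 3, 5]
col_idx = [0, 2, 1, 0, 2]
values = [4.0, 1.0, 2.0, 3.0, 5.0]
y = spmv_csr(row_ptr, col_idx, values, [1.0, 2.0, 3.0])
```

The inner loop shows the two difficulties listed above: row lengths vary, which limits ILP and SIMD, and `x[col_idx[k]]` is a data-dependent gather.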

Test Suite

- Dataset (Matrices)
- Multicore SMPs

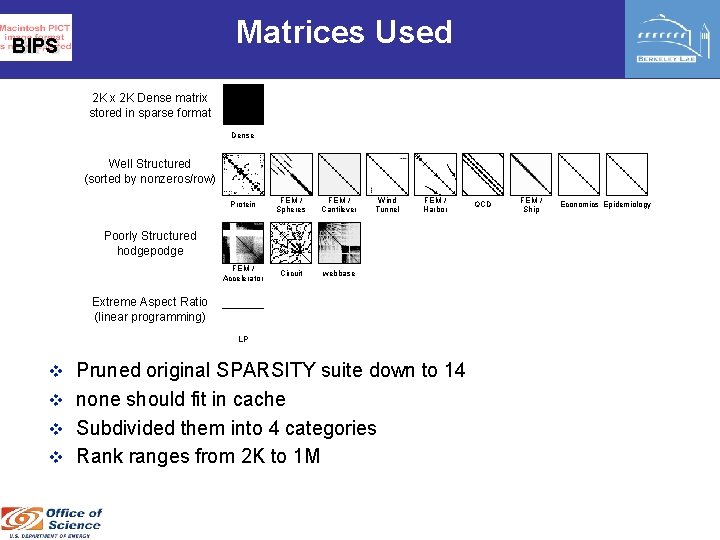

Matrices Used

- Pruned original SPARSITY suite down to 14
- None should fit in cache
- Subdivided them into 4 categories:
  - Dense (2K x 2K dense matrix stored in sparse format)
  - Well structured (sorted by nonzeros/row)
  - Poorly structured hodgepodge
  - Extreme aspect ratio (linear programming)
- Rank ranges from 2K to 1M
- Matrices: Dense, Protein, FEM/Spheres, FEM/Cantilever, Wind Tunnel, FEM/Harbor, QCD, FEM/Ship, Economics, Epidemiology, FEM/Accelerator, Circuit, webbase, LP

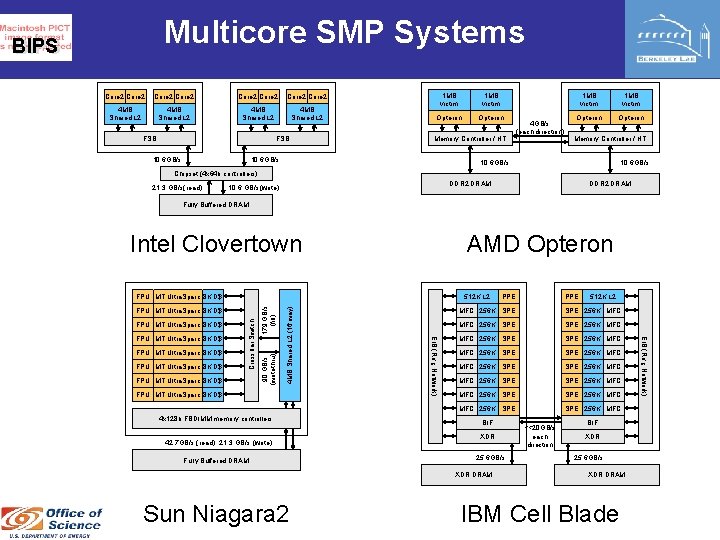

Multicore SMP Systems

[Figure: block diagrams of the four systems]

- Intel Clovertown: pairs of Core2 cores sharing a 4 MB L2; FSBs at 10.6 GB/s each; chipset with 4 x 64b controllers; 21.3 GB/s (read) and 10.6 GB/s (write) to Fully Buffered DRAM
- AMD Opteron: Opteron cores with 1 MB victim caches; a memory controller / HT per socket; 10.6 GB/s to DDR2 DRAM per socket; 4 GB/s (each direction) over HT
- Sun Niagara 2: multithreaded UltraSparc cores, each with an FPU and an 8 KB D$; crossbar switch at 90 GB/s (writethru) and 179 GB/s (fill); 4 MB shared L2 (16 way); 4 x 128b FBDIMM memory controllers; 42.7 GB/s (read) and 21.3 GB/s (write) to Fully Buffered DRAM
- IBM Cell Blade: a PPE with 512 KB L2 plus SPEs (256 KB each, with MFCs) on the EIB (ring network); XDR DRAM at 25.6 GB/s; BIF at <<20 GB/s each direction

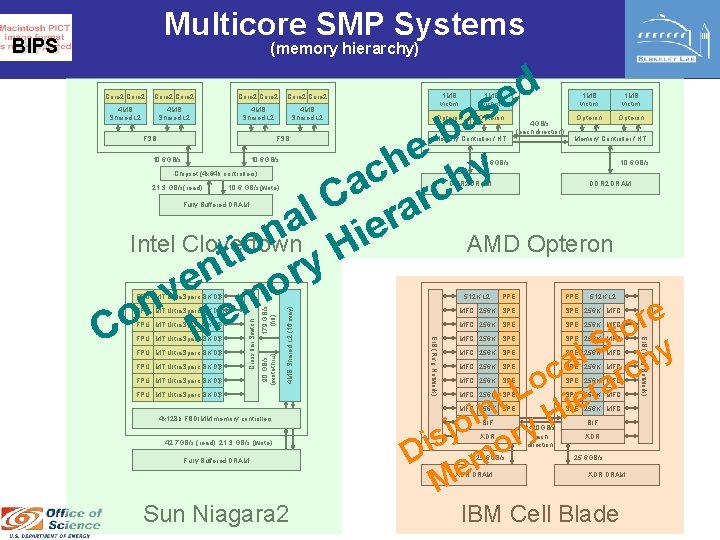

Multicore SMP Systems (memory hierarchy)

[Figure: block diagrams of Intel Clovertown, AMD Opteron, Sun Niagara 2, and IBM Cell Blade, with the systems labeled either "Conventional Cache-based Memory Hierarchy" or "Disjoint Local Store Memory Hierarchy"]

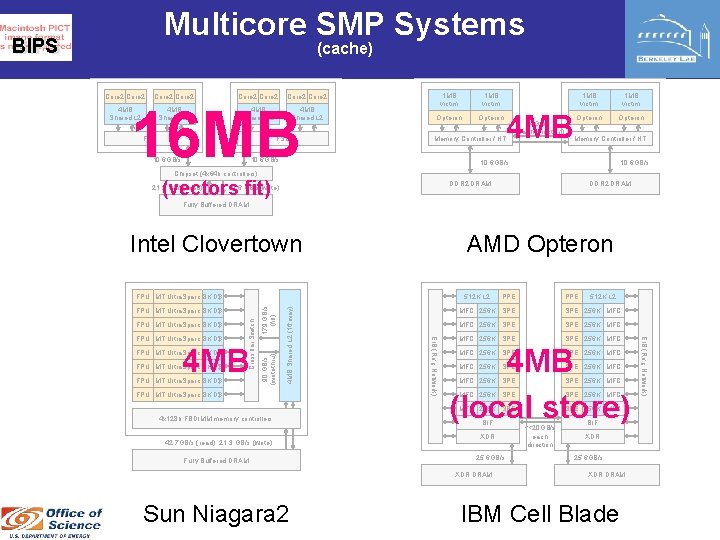

Multicore SMP Systems (cache)

[Figure: block diagrams of the four systems, annotated with total on-chip storage]

- Intel Clovertown: 16 MB
- AMD Opteron: 4 MB
- Sun Niagara 2: 4 MB
- IBM Cell Blade: 4 MB (local store)
- Slide annotation: (vectors fit)

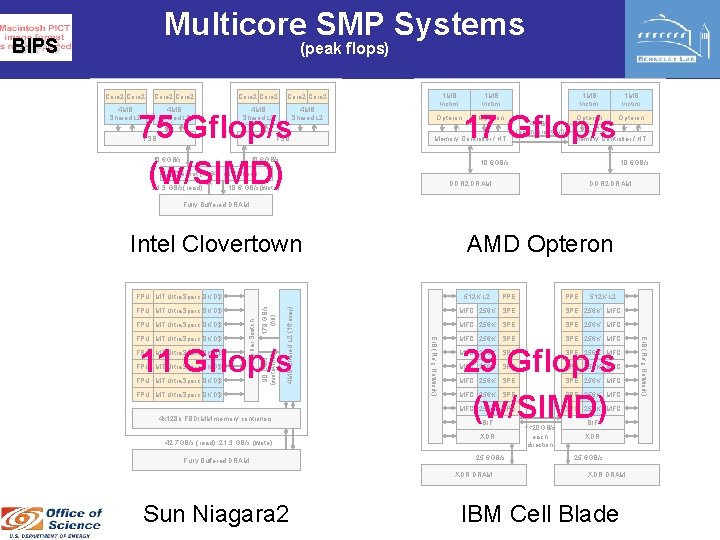

Multicore SMP Systems (peak flops)

[Figure: block diagrams of the four systems, annotated with peak floating-point rates]

- Intel Clovertown: 75 Gflop/s (w/SIMD)
- AMD Opteron: 17 Gflop/s
- Sun Niagara 2: 11 Gflop/s
- IBM Cell Blade: 29 Gflop/s (w/SIMD)

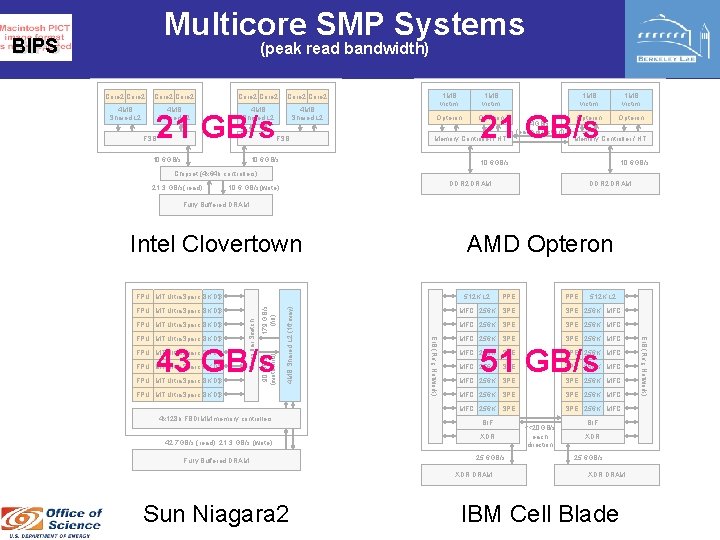

Multicore SMP Systems (peak read bandwidth)

[Figure: block diagrams of the four systems, annotated with peak read bandwidth]

- Intel Clovertown: 21 GB/s
- AMD Opteron: 21 GB/s
- Sun Niagara 2: 43 GB/s
- IBM Cell Blade: 51 GB/s

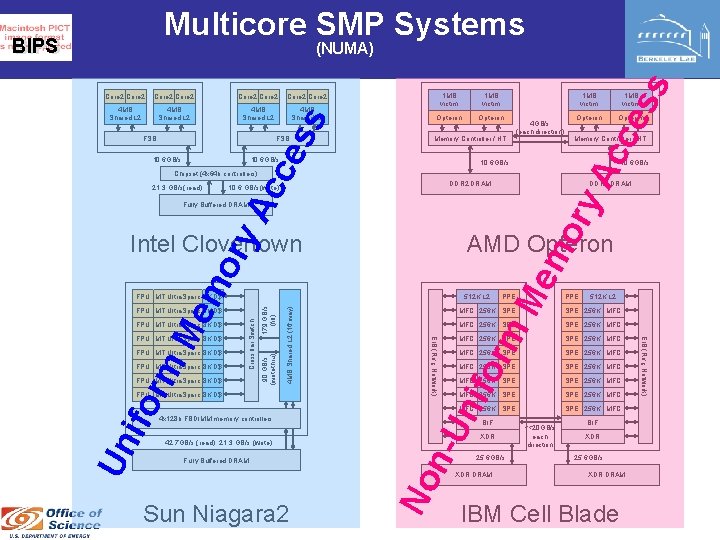

Multicore SMP Systems (NUMA)

[Figure: block diagrams of Intel Clovertown, AMD Opteron, Sun Niagara 2, and IBM Cell Blade, with the systems labeled either "Uniform Memory Access" or "Non-Uniform Memory Access (NUMA)"]

Naïve Implementation

- For cache-based machines
- Included a median performance number

Vanilla C Performance

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2]

- Vanilla C implementation
- Matrix stored in CSR (compressed sparse row)
- Explored compiler options; only the best is presented here

Pthread Implementation

- Optimized for multicore/threading
- A variety of shared memory programming models are acceptable (not just Pthreads)
- More colors = more optimizations = more work

Parallelization

- Matrix partitioned by rows and balanced by the number of nonzeros
- SPMD-like approach
- A barrier() is called before and after the SpMV kernel
- Each submatrix stored separately in CSR
- Load balancing can be challenging
- Number of threads explored in powers of 2 (in paper)
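The row partitioning described above can be sketched as follows. This is an illustrative Python version under assumed names (`partition_rows_by_nnz`, `nnz_per_thread`), not the authors' code: it walks the CSR row pointer and cuts contiguous row ranges at roughly equal nonzero counts.

```python
def partition_rows_by_nnz(row_ptr, n_threads):
    """Split rows into n_threads contiguous ranges with roughly equal nonzeros.

    Returns n_threads + 1 row boundaries; thread t owns rows
    bounds[t] .. bounds[t+1]-1.
    """
    n_rows = len(row_ptr) - 1
    total_nnz = row_ptr[-1]
    bounds = [0]
    row = 0
    for t in range(1, n_threads):
        target = total_nnz * t / n_threads
        # Advance to the first row boundary at or past the target nonzero count.
        while row < n_rows and row_ptr[row] < target:
            row += 1
        bounds.append(row)
    bounds.append(n_rows)
    return bounds


def nnz_per_thread(row_ptr, bounds):
    """Nonzeros assigned to each thread for the given row boundaries."""
    return [row_ptr[bounds[t + 1]] - row_ptr[bounds[t]]
            for t in range(len(bounds) - 1)]


# Hypothetical 8-row matrix whose first row holds half of the 16 nonzeros.
row_ptr = [0, 8, 9, 10, 11, 12, 13, 14, 16]
two_way = partition_rows_by_nnz(row_ptr, 2)
four_way = partition_rows_by_nnz(row_ptr, 4)
four_way_nnz = nnz_per_thread(row_ptr, four_way)
```

With two threads the split is even (8 nonzeros each), but with four threads the single heavy row cannot be divided, so one thread receives no work. This is one way to see why load balancing by rows can be challenging.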

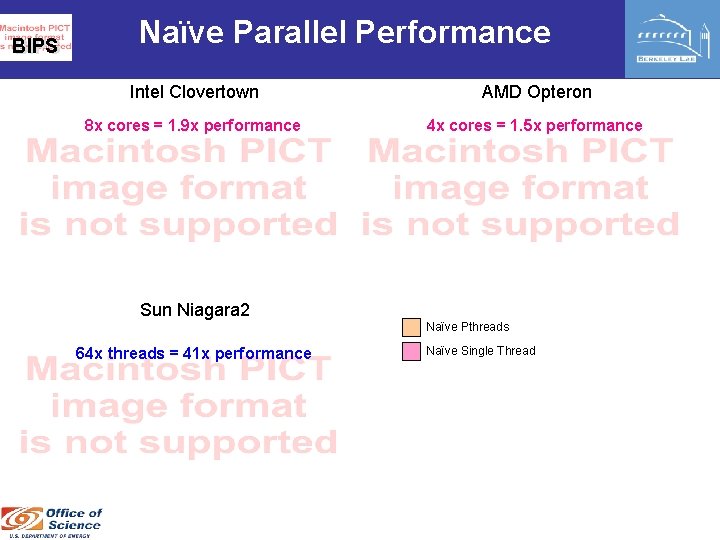

Naïve Parallel Performance

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: Naïve Pthreads, Naïve Single Thread]

Naïve Parallel Performance

- Intel Clovertown: 8x cores = 1.9x performance
- AMD Opteron: 4x cores = 1.5x performance
- Sun Niagara 2: 64x threads = 41x performance

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: Naïve Pthreads, Naïve Single Thread]
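The scaling figures above can be restated as parallel efficiency, that is, speedup divided by the number of cores or threads. The short calculation below uses only the numbers on this slide; the helper name `parallel_efficiency` is an assumption of this sketch.

```python
def parallel_efficiency(speedup, n_workers):
    """Fraction of ideal linear scaling achieved."""
    return speedup / n_workers


# (speedup, cores or threads) as quoted on the slide
results = {
    "Intel Clovertown": (1.9, 8),
    "AMD Opteron": (1.5, 4),
    "Sun Niagara 2": (41.0, 64),
}
efficiency = {name: parallel_efficiency(s, n) for name, (s, n) in results.items()}
```

This gives roughly 24% for Clovertown, 38% for Opteron, and 64% for Niagara 2.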

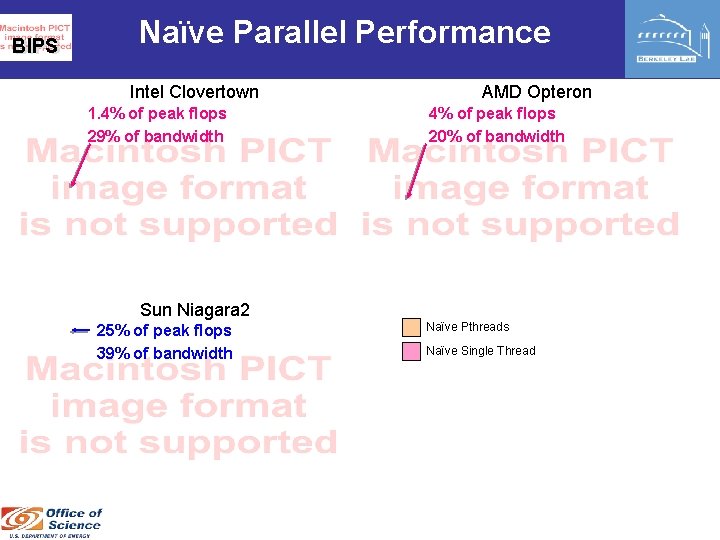

Naïve Parallel Performance

- Intel Clovertown: 1.4% of peak flops, 29% of bandwidth
- AMD Opteron: 4% of peak flops, 20% of bandwidth
- Sun Niagara 2: 25% of peak flops, 39% of bandwidth

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: Naïve Pthreads, Naïve Single Thread]

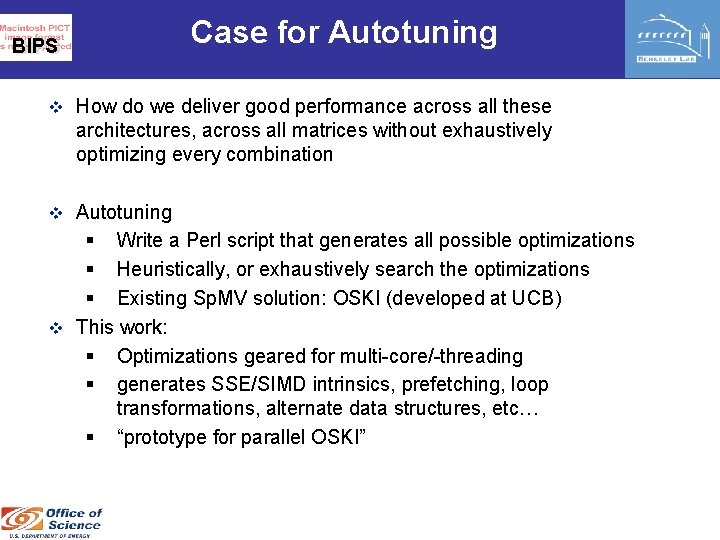

Case for Autotuning

- How do we deliver good performance across all these architectures and all matrices without exhaustively optimizing every combination?
- Autotuning
  - Write a Perl script that generates all possible optimizations
  - Heuristically or exhaustively search the optimizations
  - Existing SpMV solution: OSKI (developed at UCB)
- This work:
  - Optimizations geared for multi-core/-threading
  - Generates SSE/SIMD intrinsics, prefetching, loop transformations, alternate data structures, etc.
  - "Prototype for parallel OSKI"
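The search step of autotuning can be sketched as "run every generated variant on the actual matrix and keep the fastest". The example below is a simplified Python stand-in, not the Perl generator described in the slides: the two hand-written kernel variants and the `autotune` helper are assumptions made for illustration.

```python
import time


def spmv_simple(row_ptr, col_idx, values, x):
    """Baseline CSR kernel: one accumulator per row."""
    y = []
    for i in range(len(row_ptr) - 1):
        acc = 0.0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


def spmv_unrolled2(row_ptr, col_idx, values, x):
    """Variant with the inner loop unrolled by two (a loop transformation)."""
    y = []
    for i in range(len(row_ptr) - 1):
        k, end = row_ptr[i], row_ptr[i + 1]
        acc0 = acc1 = 0.0
        while k + 1 < end:
            acc0 += values[k] * x[col_idx[k]]
            acc1 += values[k + 1] * x[col_idx[k + 1]]
            k += 2
        if k < end:  # leftover nonzero when the row length is odd
            acc0 += values[k] * x[col_idx[k]]
        y.append(acc0 + acc1)
    return y


def autotune(variants, args, trials=3):
    """Exhaustive search: time every variant and return the fastest one's name."""
    best_name, best_time = None, float("inf")
    for name, kernel in variants.items():
        for _ in range(trials):
            start = time.perf_counter()
            kernel(*args)
            elapsed = time.perf_counter() - start
            if elapsed < best_time:
                best_name, best_time = name, elapsed
    return best_name


variants = {"simple": spmv_simple, "unrolled2": spmv_unrolled2}
matrix = ([0, 2, 3, 5], [0, 2, 1, 0, 2], [4.0, 1.0, 2.0, 3.0, 5.0])
x = [1.0, 2.0, 3.0]
best = autotune(variants, matrix + (x,))
```

A heuristic search would replace the exhaustive loop with a rule that prunes the variant list before timing, which matters once the number of generated variants is large.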

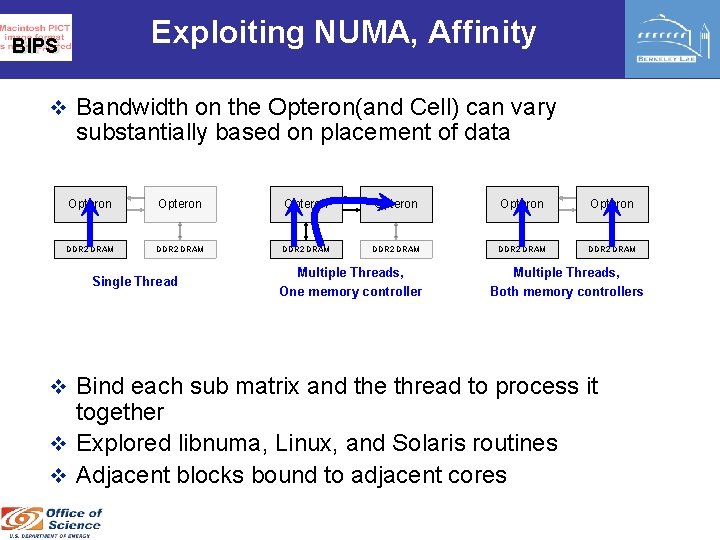

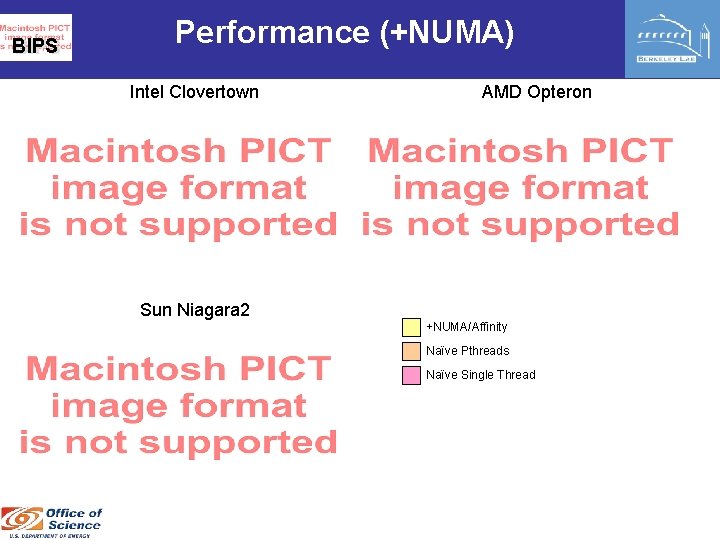

Exploiting NUMA, Affinity

- Bandwidth on the Opteron (and Cell) can vary substantially based on placement of data

[Figure: two Opteron sockets, each with its own DDR2 DRAM, shown for three cases: single thread; multiple threads, one memory controller; multiple threads, both memory controllers]

- Bind each submatrix and the thread that processes it together
- Explored libnuma, Linux, and Solaris routines
- Adjacent blocks bound to adjacent cores

Performance (+NUMA)

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +NUMA/Affinity, Naïve Pthreads, Naïve Single Thread]

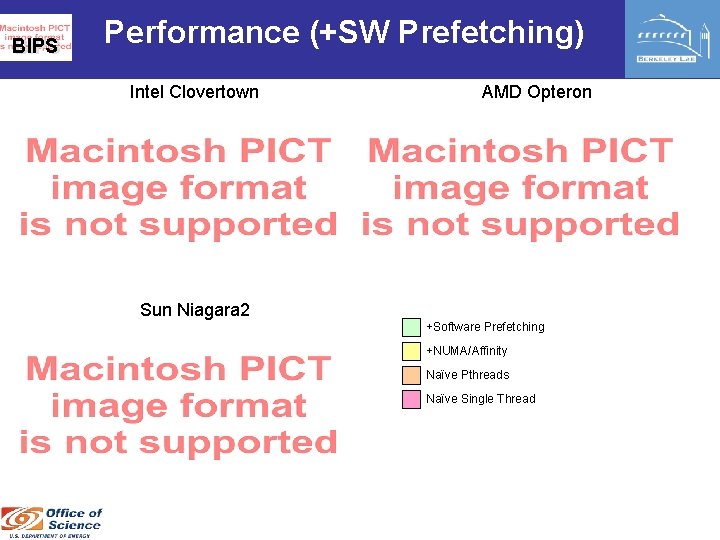

Performance (+SW Prefetching)

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +Software Prefetching, +NUMA/Affinity, Naïve Pthreads, Naïve Single Thread]

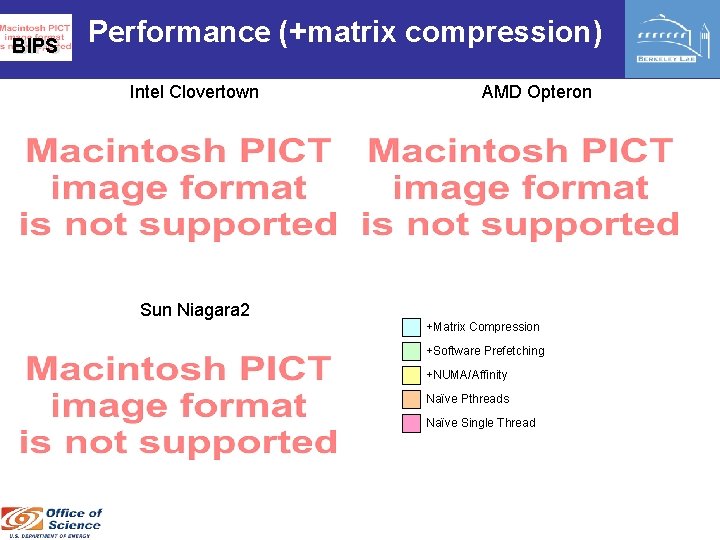

Matrix Compression

- For memory bound kernels, minimizing memory traffic should maximize performance
- Compress the metadata
  - Exploit structure to eliminate metadata
- Heuristic: select the compression that minimizes the matrix size:
  - power of 2 register blocking
  - CSR/COO format
  - 16b/32b indices
  - etc.
- Side effect: matrix may be minimized to the point where it fits entirely in cache
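The "minimize the matrix size" heuristic can be sketched as a footprint comparison across candidate register block sizes. This Python example is a simplified illustration with assumed names and an assumed cost model (8-byte values, one column index per block, row pointers ignored); it is not the authors' implementation.

```python
def blocked_footprint(coords, n_cols, r, c, value_bytes=8):
    """Bytes to store the matrix in r x c register blocks.

    Every block that contains at least one nonzero is stored in full, so
    explicit zeros are counted. Row-pointer storage is ignored (an assumption).
    """
    blocks = {(i // r, j // c) for i, j in coords}
    n_block_cols = -(-n_cols // c)  # ceiling division
    # 16-bit indices suffice when every block-column index fits in 16 bits.
    index_bytes = 2 if n_block_cols <= 2 ** 16 else 4
    return len(blocks) * (r * c * value_bytes + index_bytes)


def choose_blocking(coords, n_cols, sizes=(1, 2, 4)):
    """Pick the power-of-two block shape with the smallest footprint."""
    candidates = [(r, c) for r in sizes for c in sizes]
    return min(candidates, key=lambda rc: blocked_footprint(coords, n_cols, *rc))


# Hypothetical 4x4 matrix made of two dense 2x2 blocks on the diagonal.
block_diag = [(0, 0), (0, 1), (1, 0), (1, 1), (2, 2), (2, 3), (3, 2), (3, 3)]
# Hypothetical 4x4 diagonal matrix: blocking only adds explicit zeros.
diagonal = [(i, i) for i in range(4)]

best_block_diag = choose_blocking(block_diag, n_cols=4)
best_diagonal = choose_blocking(diagonal, n_cols=4)
```

For the block-diagonal example, 2 x 2 blocking stores one index per four values and wins; for the diagonal example, any blocking pads with zeros, so unblocked 1 x 1 storage is smallest.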

Performance (+matrix compression)

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +Matrix Compression, +Software Prefetching, +NUMA/Affinity, Naïve Pthreads, Naïve Single Thread]

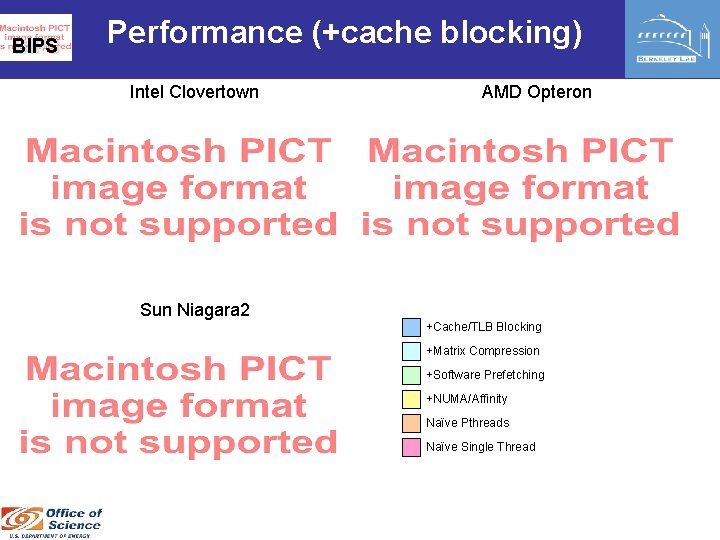

Cache and TLB Blocking

- Accesses to the matrix and destination vector are streaming
- But access to the source vector can be random
- Reorganize matrix (and thus access pattern) to maximize reuse
- Applies equally to TLB blocking (caching PTEs)
- Heuristic: block destination, then keep adding more columns as long as the number of source vector cache lines (or pages) touched is less than the cache (or TLB). Apply all previous optimizations individually to each cache block.
- Search: neither, cache & TLB
- Better locality at the expense of confusing the hardware prefetchers
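One possible reading of the column-growing heuristic, for a single band of destination rows, is sketched below in Python. The function name, the parameters `line_elems` (vector elements per cache line) and `max_lines` (cache lines budgeted for the source vector), and the example coordinates are all assumptions; the sketch also uses "at most" rather than "less than" the budget.

```python
def cache_block_columns(coords, row_lo, row_hi, n_cols, line_elems=8, max_lines=4):
    """Split the columns of one row band into cache blocks.

    Each block spans consecutive columns and touches at most max_lines
    distinct cache lines of the source vector x. Only lines that are
    actually referenced by a nonzero count against the budget.
    """
    touched = sorted({j // line_elems for i, j in coords if row_lo <= i < row_hi})
    blocks = []
    for start in range(0, len(touched), max_lines):
        group = touched[start:start + max_lines]
        col_lo = group[0] * line_elems
        col_hi = min((group[-1] + 1) * line_elems, n_cols)
        blocks.append((col_lo, col_hi))  # half-open column range
    return blocks


# Hypothetical nonzero coordinates (row, column) in a 1000-column matrix.
coords = [(0, 0), (0, 3), (1, 40), (1, 41), (0, 100),
          (1, 200), (1, 201), (0, 900), (5, 500)]
blocks = cache_block_columns(coords, row_lo=0, row_hi=2, n_cols=1000,
                             line_elems=8, max_lines=2)
```

Because only touched lines are counted, a block can span a wide column range in a very sparse region and a narrow one in a dense region. The same routine would model TLB blocking by setting `line_elems` to the number of vector elements per page.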

Performance (+cache blocking)

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +Cache/TLB Blocking, +Matrix Compression, +Software Prefetching, +NUMA/Affinity, Naïve Pthreads, Naïve Single Thread]

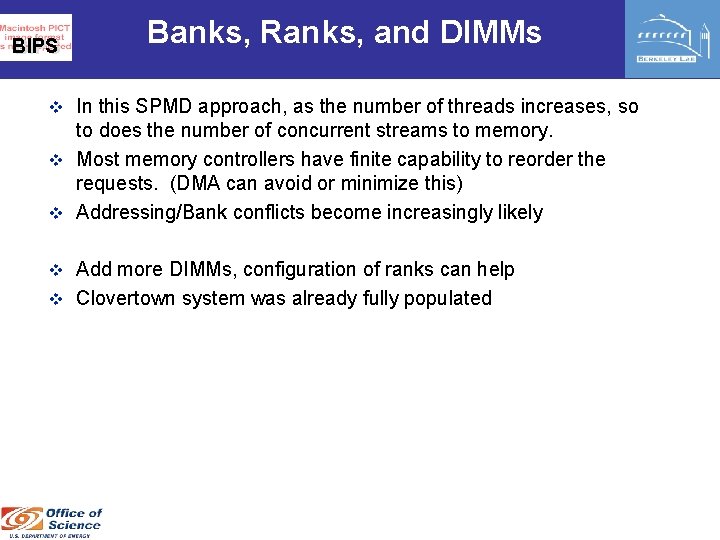

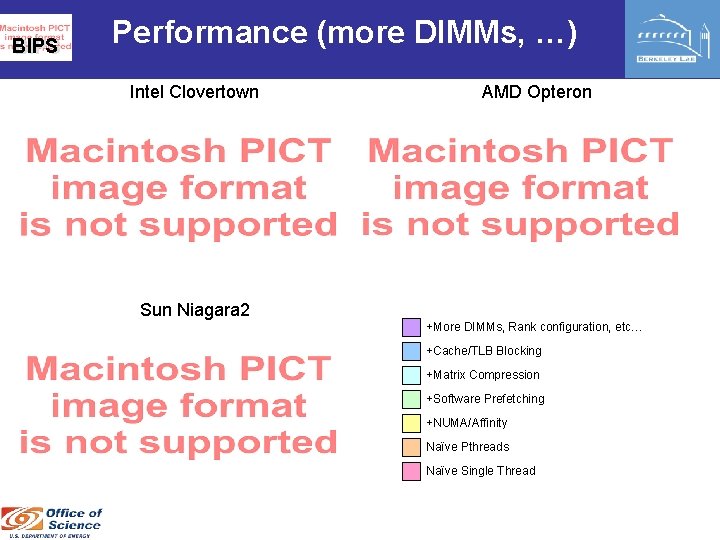

Banks, Ranks, and DIMMs

- In this SPMD approach, as the number of threads increases, so too does the number of concurrent streams to memory
- Most memory controllers have a finite capability to reorder requests (DMA can avoid or minimize this)
- Addressing/bank conflicts become increasingly likely
- Adding more DIMMs and changing the configuration of ranks can help
- Clovertown system was already fully populated

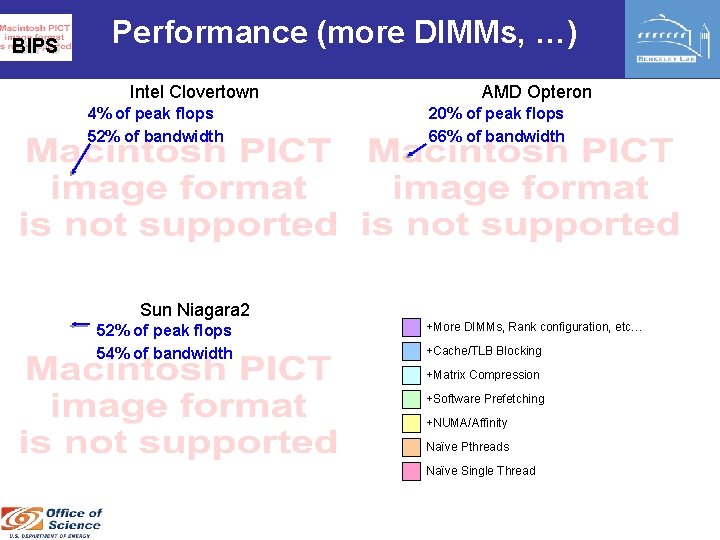

Performance (more DIMMs, …)

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +More DIMMs, Rank configuration, etc.; +Cache/TLB Blocking; +Matrix Compression; +Software Prefetching; +NUMA/Affinity; Naïve Pthreads; Naïve Single Thread]

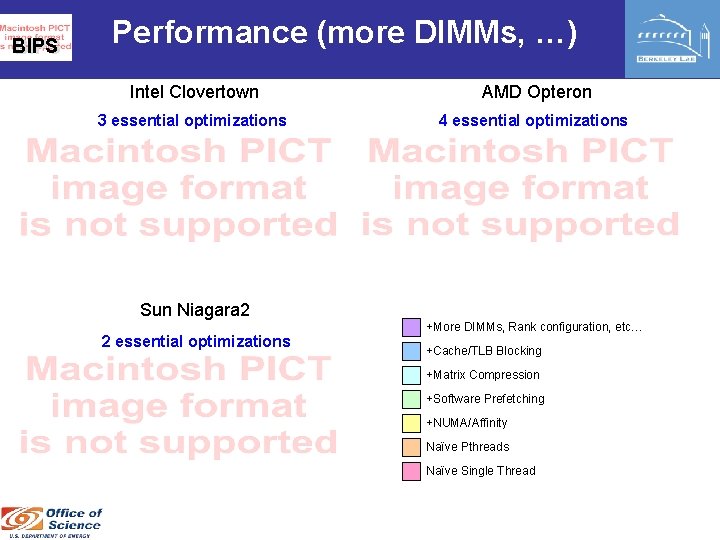

Performance (more DIMMs, …)

- Intel Clovertown: 4% of peak flops, 52% of bandwidth
- AMD Opteron: 20% of peak flops, 66% of bandwidth
- Sun Niagara 2: 52% of peak flops, 54% of bandwidth

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +More DIMMs, Rank configuration, etc.; +Cache/TLB Blocking; +Matrix Compression; +Software Prefetching; +NUMA/Affinity; Naïve Pthreads; Naïve Single Thread]

Performance (more DIMMs, …)

- Intel Clovertown: 3 essential optimizations
- AMD Opteron: 4 essential optimizations
- Sun Niagara 2: 2 essential optimizations

[Figure: performance charts for Intel Clovertown, AMD Opteron, and Sun Niagara 2. Legend: +More DIMMs, Rank configuration, etc.; +Cache/TLB Blocking; +Matrix Compression; +Software Prefetching; +NUMA/Affinity; Naïve Pthreads; Naïve Single Thread]

Cell Implementation

- Comments
- Performance

Cell Implementation

- No vanilla C implementation (aside from the PPE)
- Even SIMDized double precision is extremely weak
  - Scalar double precision is unbearable
  - Minimum register blocking is 2 x 1 (SIMDizable)
  - Can increase memory traffic by 66%
- Cache blocking optimization is transformed into local store blocking
  - Spatial and temporal locality is captured by software when the matrix is optimized
  - In essence, the high bits of column indices are grouped into DMA lists
- No branch prediction
  - Replace branches with conditional operations
- In some cases, what were optional optimizations on cache-based machines are requirements for correctness on Cell
- Despite the performance, Cell is still handicapped by double precision
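One accounting that is consistent with the 66% figure, assuming 8-byte values and 4-byte column indices (sizes not stated on the slide), compares unblocked CSR with the worst case of 2 x 1 blocking, where every block holds one true nonzero and one explicit zero. The helper name and the `fill_ratio` parameter are assumptions of this sketch.

```python
def bytes_per_true_nonzero(r, c, fill_ratio, value_bytes=8, index_bytes=4):
    """Matrix bytes moved per true nonzero for r x c register blocking.

    fill_ratio = stored entries (including explicit zeros) / true nonzeros.
    Row pointers and vector traffic are ignored.
    """
    stored_per_block = r * c
    blocks_per_nonzero = fill_ratio / stored_per_block
    return blocks_per_nonzero * (stored_per_block * value_bytes + index_bytes)


csr_bytes = bytes_per_true_nonzero(1, 1, fill_ratio=1.0)    # value + index
worst_2x1 = bytes_per_true_nonzero(2, 1, fill_ratio=2.0)    # two values + index
increase = worst_2x1 / csr_bytes - 1.0
```

Under these assumptions the traffic grows from 12 to 20 bytes per true nonzero, an increase of about two thirds. When 2 x 1 blocks are mostly full, the same formula shows blocking reducing traffic instead.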

Performance

[Figure: performance charts for Intel Clovertown, AMD Opteron, Sun Niagara 2, and IBM Cell Broadband Engine]

Performance

- IBM Cell Broadband Engine: 39% of peak flops, 89% of bandwidth

[Figure: performance charts for Intel Clovertown, AMD Opteron, Sun Niagara 2, and IBM Cell Broadband Engine]

Multicore MPI Implementation

- This is the default approach to programming multicore

Multicore MPI Implementation

- Used PETSc with shared memory MPICH
- Used OSKI (developed at UCB) to optimize each thread
- = Highly optimized MPI

[Figure: performance charts for Intel Clovertown and AMD Opteron. Legend: Naïve Single Thread, MPI (autotuned), Pthreads (autotuned)]

Summary

Median Performance & Efficiency

- Used digital power meter to measure sustained system power
  - FBDIMM drives up Clovertown and Niagara 2 power
  - Right: sustained MFlop/s / sustained Watts
- Default approach (MPI) achieves very low performance and efficiency

Summary

- Paradoxically, the most complex/advanced architectures required the most tuning and delivered the lowest performance
- Most machines achieved less than 50-60% of DRAM bandwidth
- Niagara 2 delivered both very good performance and productivity
- Cell delivered very good performance and efficiency
  - 90% of memory bandwidth
  - High power efficiency
  - Easily understood performance
  - Extra traffic = lower performance (future work can address this)
- Multicore-specific autotuned implementation significantly outperformed a state-of-the-art MPI implementation
  - Matrix compression geared towards multicore
  - NUMA
  - Prefetching

Acknowledgments

- UC Berkeley
  - RADLab Cluster (Opterons)
  - PSI cluster (Clovertowns)
- Sun Microsystems
  - Niagara 2
- Forschungszentrum Jülich
  - Cell blade cluster

Questions?
