BioSec Multimodal Biometric Database in Text-Dependent Speaker Recognition

BioSec Multimodal Biometric Database in Text-Dependent Speaker Recognition
D. T. Toledano, D. Hernández-López, C. Esteve-Elizalde, J. Fiérrez, J. Ortega-García, D. Ramos and J. Gonzalez-Rodriguez
ATVS, Universidad Autonoma de Madrid, Spain
LREC 2008, Marrakesh, Morocco, 28-30 May 2008

Outline
1. Introduction and Goals
2. Databases for Text-Dependent Speaker Recognition
3. BioSec and Related Databases
4. Experiments with YOHO and BioSec Baseline
   4.1. Text-Dependent SR Based on Phonetic HMMs
   4.2. YOHO and BioSec Experimental Protocols
   4.3. Results with YOHO and BioSec Baseline
5. Conclusions

1. Introduction and Goals
Text-Independent Speaker Recognition
- Unknown lexical content
- Research driven by yearly NIST SRE evaluations and databases
Text-Dependent Speaker Recognition
- Lexical content of the test utterance is known by the system
- No competitive evaluations by NIST
- Less research and fewer standard benchmarks
- Password set by the user or text prompted by the system
- YOHO is probably the best-known benchmark
- Newer databases are available, but results are difficult to compare
Goals
- Study BioSec as a benchmark for text-dependent speaker recognition
- Compare results on BioSec and YOHO with the same method

2. Databases for Text-Dependent Speaker Recognition
- YOHO (Campbell & Higgins, 1994): speech
  - Clean microphone speech, 138 speakers, 24 utterances x 4 sessions for enrollment, 4 utterances x 10 sessions for test (e.g. “12-34-56”)
  - Best-known benchmark
- XM2VTS (Messer et al. 1999): speech, face
  - Clean microphone speech, 295 subjects, 4 sessions
- BIOMET (Garcia-Salicetti et al. 2003): speech, face, fingerprint, hand, signature
  - Clean microphone speech, 130 subjects, 3 sessions
- BANCA (Bailly-Baillière et al. 2003): speech, face
  - Clean and noisy microphone speech, 208 subjects, 12 sessions
- MYIDEA (Dumas et al. 2005): speech, face, fingerprint, signature, hand geometry, handwriting
  - BIOMET + BANCA contents for speech, 104 subjects, 3 sessions
- MIT Mobile Device Speaker Verification (Park and Hazen, 2006): speech
  - Mobile devices, realistic noisy conditions
- M3 (Meng et al. 2006): speech, face, fingerprint
  - Microphone speech (3 devices), 39 subjects, 3 sessions (+108 single session)
- MBioID (Dessimoz et al. 2007): speech, face, iris, fingerprint, signature
  - Clean microphone speech, 120 subjects, 2 sessions

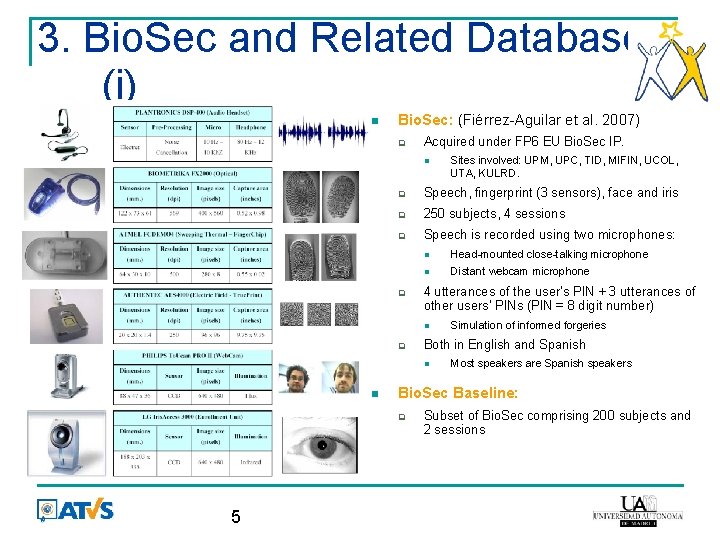

3. BioSec and Related Databases (i)
BioSec (Fiérrez-Aguilar et al. 2007)
- Acquired under the FP6 EU BioSec IP
- Sites involved: UPM, UPC, TID, MIFIN, UCOL, UTA, KULRD
- Speech, fingerprint (3 sensors), face and iris
- 250 subjects, 4 sessions
- Speech is recorded using two microphones:
  - Head-mounted close-talking microphone
  - Distant webcam microphone
- 4 utterances of the user’s PIN + 3 utterances of other users’ PINs (PIN = 8-digit number)
  - Simulation of informed forgeries
  - Both in English and Spanish
- Most speakers are Spanish speakers
BioSec Baseline
- Subset of BioSec comprising 200 subjects and 2 sessions

3. BioSec and Related Databases (ii)
BioSecurID
- Speech, iris, face, handwriting, fingerprint, hand geometry and keystroking
- Microphone speech in a realistic office-like scenario
- 400 subjects
BioSecure
- Three scenarios: Internet, office-like and mobile
- 1000 subjects (Internet), 700 subjects (office-like and mobile)
BioSecure and BioSecurID share subjects with BioSec, which allows long-term studies
BioSec has several other advantages over YOHO:
- Multimodal, multilingual (Spanish/English), multichannel (close-talking and webcam)
- Same lexical content for target trials, which allows simulation of informed forgeries
But it also has a clear disadvantage:
- It is harder to compare results on BioSec

4. Experiments with YOHO and BioSec
Goals:
- Study BioSec Baseline as a benchmark for text-dependent SR
- Compare the difficulty of YOHO and BioSec Baseline
Goals achieved through:
- Common text-dependent speaker recognition method
- Clear evaluation protocols
- Analysis of results for different conditions

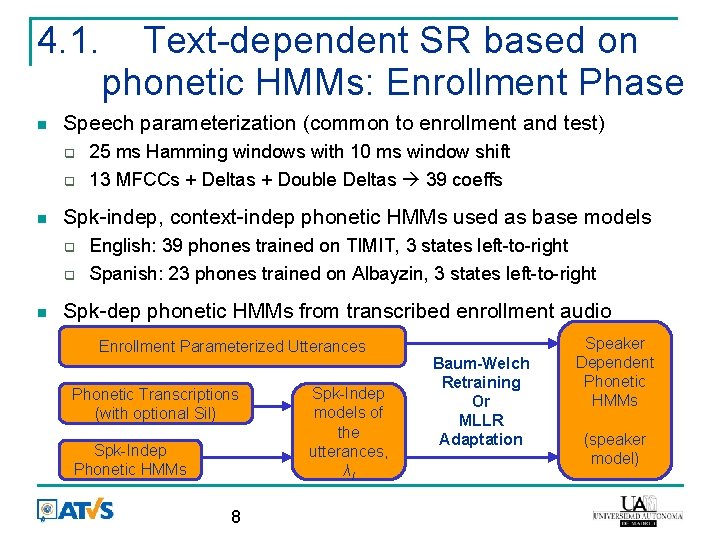

4.1. Text-Dependent SR Based on Phonetic HMMs: Enrollment Phase
Speech parameterization (common to enrollment and test)
- 25 ms Hamming windows with 10 ms window shift
- 13 MFCCs + Deltas + Double Deltas (39 coefficients)
Speaker-independent, context-independent phonetic HMMs used as base models
- English: 39 phones trained on TIMIT, 3 states, left-to-right
- Spanish: 23 phones trained on Albayzin, 3 states, left-to-right
Speaker-dependent phonetic HMMs from transcribed enrollment audio
- Inputs: parameterized enrollment utterances, phonetic transcriptions (with optional Sil), speaker-independent phonetic HMMs
- Speaker-independent models of the utterances, λI
- Baum-Welch retraining or MLLR adaptation
- Output: speaker-dependent phonetic HMMs (speaker model)
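The parameterization above can be illustrated with a minimal sketch of the framing and of how 13 static coefficients become 39. This is not the authors' front end: the 8 kHz sampling rate, the regression-based delta formula with a window of 2 frames, and the function names are assumptions made for illustration, and the static vectors are placeholders rather than real MFCCs.

```python
def frame_starts(num_samples, sample_rate, win_ms=25.0, shift_ms=10.0):
    """Start sample of every full 25 ms window taken every 10 ms."""
    win = int(round(sample_rate * win_ms / 1000.0))
    shift = int(round(sample_rate * shift_ms / 1000.0))
    if num_samples < win:
        return []
    return list(range(0, num_samples - win + 1, shift))


def deltas(frames, width=2):
    """Regression deltas over +/- width frames (assumed formula, edges replicated)."""
    n = len(frames)
    denom = 2 * sum(k * k for k in range(1, width + 1))
    out = []
    for t in range(n):
        row = []
        for d in range(len(frames[t])):
            num = 0.0
            for k in range(1, width + 1):
                nxt = frames[min(t + k, n - 1)][d]
                prv = frames[max(t - k, 0)][d]
                num += k * (nxt - prv)
            row.append(num / denom)
        out.append(row)
    return out


def stack_features(static):
    """Concatenate static, delta and double-delta coefficients per frame."""
    d1 = deltas(static)
    d2 = deltas(d1)
    return [s + a + b for s, a, b in zip(static, d1, d2)]


# One second of audio at an assumed 8 kHz sampling rate.
starts = frame_starts(num_samples=8000, sample_rate=8000)
# Placeholder 13-dimensional static vectors (a linear ramp, not real MFCCs).
static = [[float(t)] * 13 for t in range(len(starts))]
features = stack_features(static)
print(len(starts), len(features[0]))
```

With these assumed settings each window is 200 samples long with an 80-sample shift, and every frame ends up with 13 x 3 = 39 coefficients.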

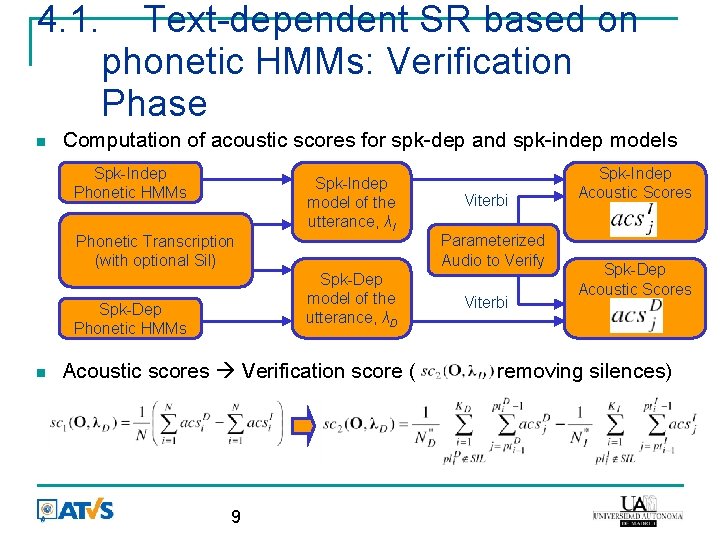

4.1. Text-Dependent SR Based on Phonetic HMMs: Verification Phase
Computation of acoustic scores for speaker-dependent and speaker-independent models
- Phonetic transcription (with optional Sil) + speaker-dependent phonetic HMMs: speaker-dependent model of the utterance, λD
- Phonetic transcription (with optional Sil) + speaker-independent phonetic HMMs: speaker-independent model of the utterance, λI
- Viterbi alignment of the parameterized audio to verify against λD and λI: speaker-dependent and speaker-independent acoustic scores
- Verification score computed from both acoustic scores (removing silences)
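One plausible way to combine the two sets of acoustic scores is sketched below. The slide does not spell out the formula, so the log-likelihood ratio, the per-frame normalization, the `sil` label and the `verification_score` name are all assumptions; only the removal of silence segments is taken from the slide.

```python
def verification_score(segments, silence_label="sil"):
    """Average per-frame log-likelihood ratio over non-silence segments.

    segments: list of (phone, n_frames, loglik_dep, loglik_indep), where the
    two log-likelihoods come from Viterbi alignment against the
    speaker-dependent and speaker-independent utterance models.
    """
    total_llr = 0.0
    total_frames = 0
    for phone, n_frames, ll_dep, ll_indep in segments:
        if phone == silence_label:
            continue  # silences carry no speaker information
        total_llr += ll_dep - ll_indep
        total_frames += n_frames
    if total_frames == 0:
        raise ValueError("no non-silence frames to score")
    return total_llr / total_frames


# Hypothetical alignment output for a short utterance (made-up numbers).
segments = [
    ("sil", 20, -900.0, -880.0),
    ("u", 10, -500.0, -520.0),
    ("n", 15, -700.0, -730.0),
    ("o", 25, -1200.0, -1250.0),
    ("sil", 30, -1300.0, -1290.0),
]
score = verification_score(segments)
print(score)
```

A higher score means the speaker-dependent model explains the speech frames better than the speaker-independent one; the claim would be accepted when the score exceeds a threshold.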

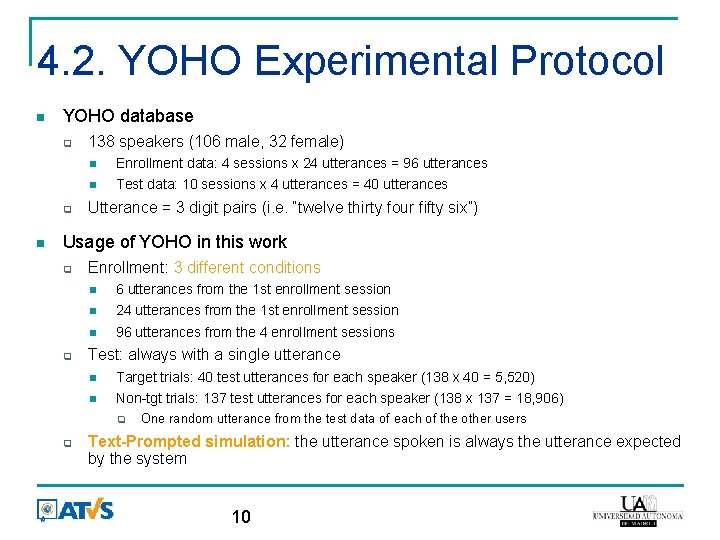

4.2. YOHO Experimental Protocol
YOHO database
- 138 speakers (106 male, 32 female)
- Enrollment data: 4 sessions x 24 utterances = 96 utterances
- Test data: 10 sessions x 4 utterances = 40 utterances
- Utterance = 3 digit pairs (e.g. “twelve thirty-four fifty-six”)
Usage of YOHO in this work
- Enrollment: 3 different conditions
  - 6 utterances from the 1st enrollment session
  - 24 utterances from the 1st enrollment session
  - 96 utterances from the 4 enrollment sessions
- Test: always with a single utterance
  - Target trials: 40 test utterances for each speaker (138 x 40 = 5,520)
  - Non-target trials: 137 test utterances for each speaker (138 x 137 = 18,906)
    - One random utterance from the test data of each of the other users
- Text-prompted simulation: the utterance spoken is always the utterance expected by the system
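The trial counts of the YOHO protocol can be checked by enumerating the trials. The sketch below is only a bookkeeping illustration: the tuple layout `(model speaker, test speaker, test utterance index)`, the function name and the fixed random seed are assumptions, not part of the original protocol description.

```python
import random


def yoho_trials(num_speakers=138, test_utts_per_speaker=40, seed=0):
    """Enumerate target and non-target trials as (model, test_speaker, utt)."""
    rng = random.Random(seed)
    target, non_target = [], []
    for model in range(num_speakers):
        # Target trials: every test utterance of the speaker against own model.
        for utt in range(test_utts_per_speaker):
            target.append((model, model, utt))
        # Non-target trials: one random test utterance from each other speaker.
        for other in range(num_speakers):
            if other != model:
                utt = rng.randrange(test_utts_per_speaker)
                non_target.append((model, other, utt))
    return target, non_target


target, non_target = yoho_trials()
print(len(target), len(non_target))  # 5520 18906
```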

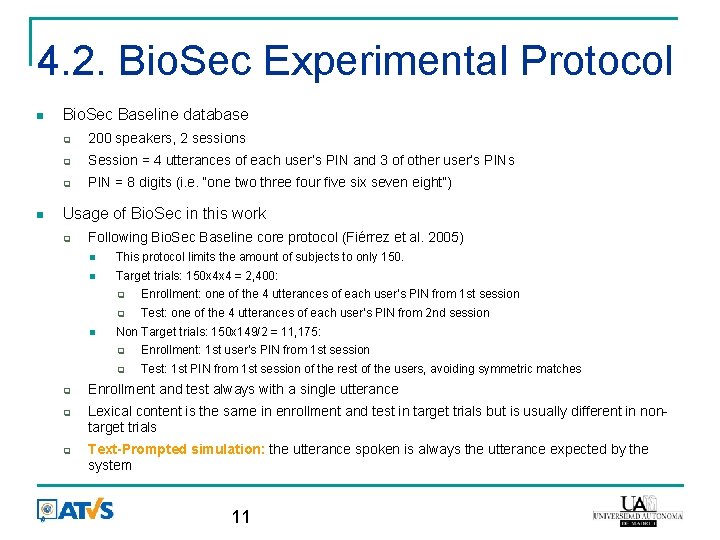

4.2. BioSec Experimental Protocol
BioSec Baseline database
- 200 speakers, 2 sessions
- Session = 4 utterances of each user’s PIN and 3 utterances of other users’ PINs
- PIN = 8 digits (e.g. “one two three four five six seven eight”)
Usage of BioSec in this work
- Following the BioSec Baseline core protocol (Fiérrez et al. 2005)
  - This protocol limits the number of subjects to 150
- Target trials: 150 x 4 x 4 = 2,400
  - Enrollment: one of the 4 utterances of each user’s PIN from the 1st session
  - Test: one of the 4 utterances of each user’s PIN from the 2nd session
- Non-target trials: 150 x 149 / 2 = 11,175
  - Enrollment: first utterance of the user’s PIN from the 1st session
  - Test: first PIN utterance from the 1st session of each of the other users, avoiding symmetric matches
- Enrollment and test always with a single utterance
- Lexical content is the same in enrollment and test in target trials, but is usually different in non-target trials
- Text-prompted simulation: the utterance spoken is always the utterance expected by the system
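The BioSec Baseline trial counts follow directly from the protocol and can be reproduced with a short enumeration. This is a sketch for checking the arithmetic only; the tuple layout `(enrollment user, enrollment utterance, test user, test utterance)` and the function name are illustrative assumptions.

```python
from itertools import combinations, product


def biosec_trials(num_users=150, utts_per_session=4):
    """Enumerate trials as (enroll_user, enroll_utt, test_user, test_utt)."""
    # Target: each session-1 PIN utterance against each session-2 PIN utterance.
    target = [
        (user, enroll_utt, user, test_utt)
        for user in range(num_users)
        for enroll_utt, test_utt in product(range(utts_per_session), repeat=2)
    ]
    # Non-target: first utterance of each unordered pair of distinct users,
    # which avoids symmetric matches (A vs B is kept, B vs A is not).
    non_target = [(a, 0, b, 0) for a, b in combinations(range(num_users), 2)]
    return target, non_target


target, non_target = biosec_trials()
print(len(target), len(non_target))  # 2400 11175
```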

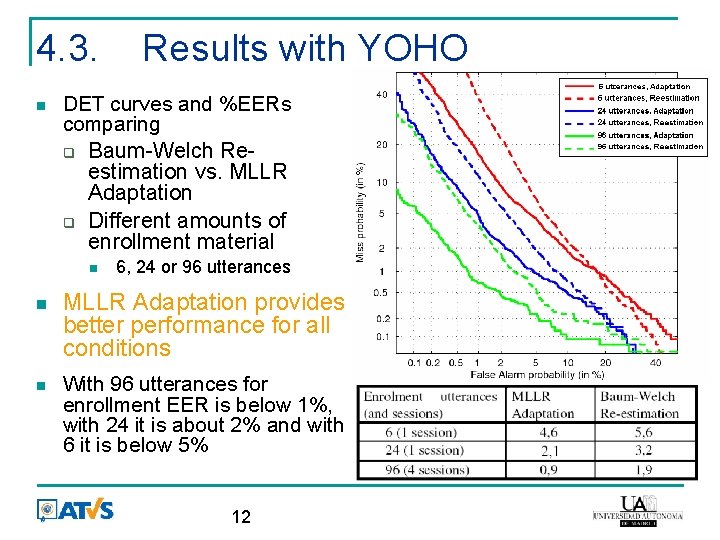

4.3. Results with YOHO
DET curves and %EERs comparing:
- Baum-Welch re-estimation vs. MLLR adaptation
- Different amounts of enrollment material: 6, 24 or 96 utterances
MLLR adaptation provides better performance for all conditions
With 96 utterances for enrollment the EER is below 1%, with 24 it is about 2% and with 6 it is below 5%
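For readers less familiar with the metric, the EER is the operating point where the miss rate on target trials equals the false-acceptance rate on non-target trials. The sketch below approximates it by sweeping a threshold over the observed scores; it is a generic illustration, not the scoring tool used for these results, and the example scores are made up.

```python
from bisect import bisect_left


def equal_error_rate(target_scores, non_target_scores):
    """Approximate EER in percent; a trial is accepted when score >= threshold."""
    tgt = sorted(target_scores)
    non = sorted(non_target_scores)
    best_gap, eer = None, None
    for thr in sorted(set(tgt) | set(non)):
        miss = bisect_left(tgt, thr) / len(tgt)              # targets rejected
        fa = (len(non) - bisect_left(non, thr)) / len(non)   # impostors accepted
        gap = abs(miss - fa)
        if best_gap is None or gap < best_gap:
            # Report the mean of the two error rates at the closest crossing.
            best_gap, eer = gap, 100.0 * (miss + fa) / 2.0
    return eer


# Made-up scores: higher means more likely to be the claimed speaker.
target_scores = [2.0, 1.5, 0.9, 0.4, 1.1]
non_target_scores = [0.1, 0.5, -0.3, 1.0, 0.2]
eer = equal_error_rate(target_scores, non_target_scores)
print(eer)
```

With few trials the two error rates may never be exactly equal, which is why the sketch averages them at the threshold where they are closest.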

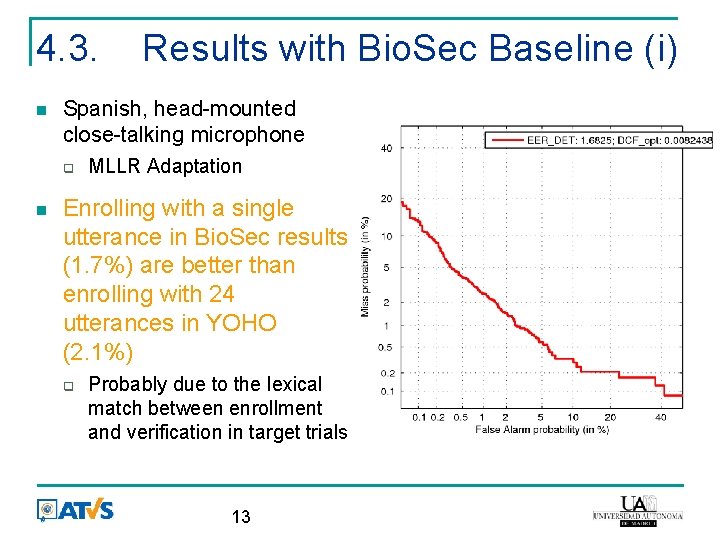

4.3. Results with BioSec Baseline (i)
Spanish, head-mounted close-talking microphone, MLLR adaptation
Enrolling with a single utterance in BioSec gives better results (1.7% EER) than enrolling with 24 utterances in YOHO (2.1% EER)
- Probably due to the lexical match between enrollment and verification in target trials

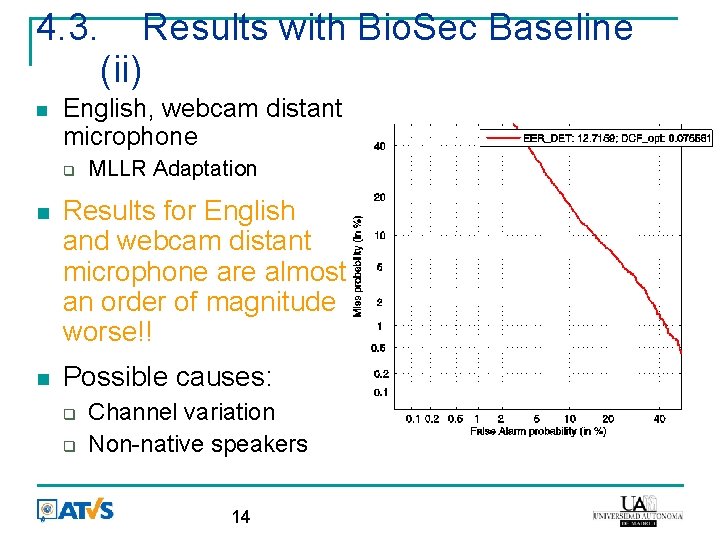

4.3. Results with BioSec Baseline (ii)
English, webcam distant microphone, MLLR adaptation
Results for English and webcam distant microphone are almost an order of magnitude worse
Possible causes:
- Channel variation
- Non-native speakers

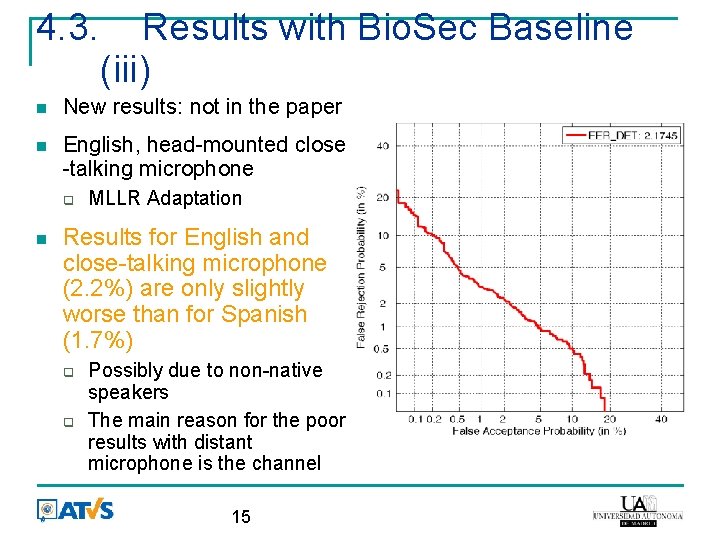

4.3. Results with BioSec Baseline (iii)
New results: not in the paper
English, head-mounted close-talking microphone, MLLR adaptation
Results for English and close-talking microphone (2.2%) are only slightly worse than for Spanish (1.7%)
- Possibly due to non-native speakers
The main reason for the poor results with the distant microphone is the channel

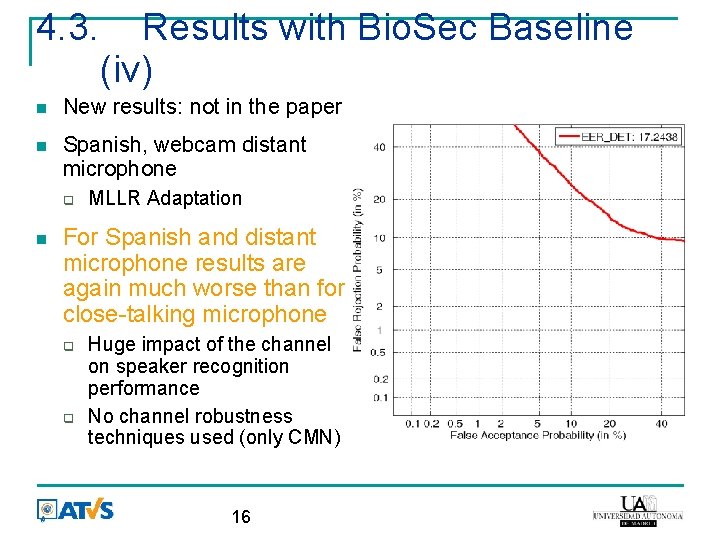

4.3. Results with BioSec Baseline (iv)
New results: not in the paper
Spanish, webcam distant microphone, MLLR adaptation
For Spanish and distant microphone, results are again much worse than for close-talking microphone
- Huge impact of the channel on speaker recognition performance
- No channel robustness techniques used (only CMN)

5. Conclusions
We have studied BioSec Baseline as a benchmark for text-dependent speaker recognition
We have tried to facilitate comparison of results between YOHO and BioSec Baseline by evaluating the same method on both corpora
For close-talking microphone, results on BioSec are much better than results on YOHO
- Probably due to the lexical match in enrollment and verification
For distant webcam microphone, results on BioSec are much worse than results on YOHO
- Due to the channel variation
- No channel robustness techniques used (only CMN)

Thanks!

- Slides: 18