
Binary Trees, Binary Search Trees, and AVL Trees: The Most Beautiful Data Structures in the World

This material was originally a PowerPoint slideshow with extensive animation, meant to be viewed in slideshow mode.

Copyright 2007 by M. S. Jaffe. All rights reserved

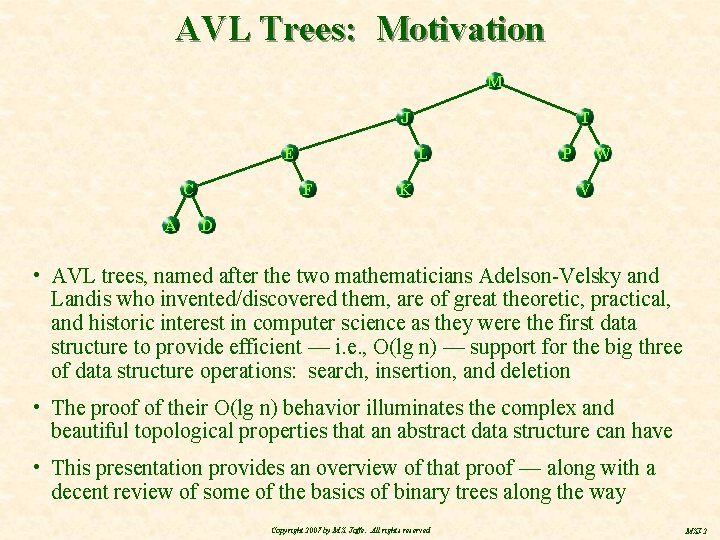

AVL Trees: Motivation

• AVL trees, named after Adelson-Velsky and Landis, the two mathematicians who invented/discovered them, are of great theoretical, practical, and historic interest in computer science, as they were the first data structure to provide efficient (i.e., O(lg n)) support for the big three of data structure operations: search, insertion, and deletion
• The proof of their O(lg n) behavior illuminates the complex and beautiful topological properties that an abstract data structure can have
• This presentation provides an overview of that proof, along with a decent review of some of the basics of binary trees along the way

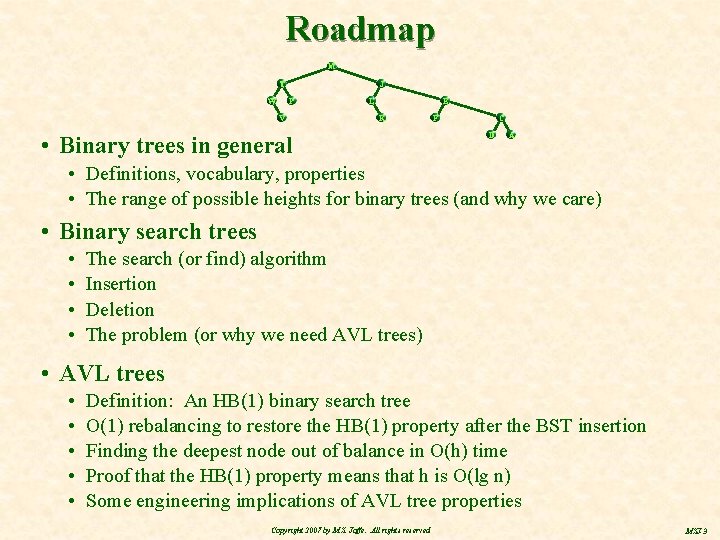

Roadmap

• Binary trees in general
  • Definitions, vocabulary, properties
  • The range of possible heights for binary trees (and why we care)
• Binary search trees
  • The search (or find) algorithm
  • Insertion
  • Deletion
  • The problem (or why we need AVL trees)
• AVL trees
  • Definition: An HB(1) binary search tree
  • O(1) rebalancing to restore the HB(1) property after the BST insertion
  • Finding the deepest node out of balance in O(h) time
  • Proof that the HB(1) property means that h is O(lg n)
  • Some engineering implications of AVL tree properties

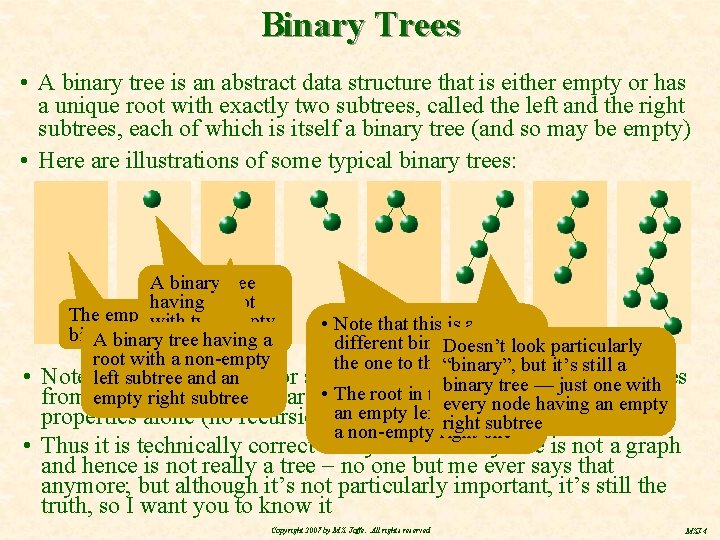

Binary Trees

• A binary tree is an abstract data structure that is either empty or has a unique root with exactly two subtrees, called the left and the right subtrees, each of which is itself a binary tree (and so may be empty)
• Here are illustrations of some typical binary trees:
  • The empty binary tree
  • A binary tree having a root with two empty subtrees
  • A binary tree having a root with a non-empty left subtree and an empty right subtree
  • Note that this is a different binary tree than the one to the left: the root in this one has an empty left subtree but a non-empty right one
  • Doesn’t look particularly “binary”, but it’s still a binary tree, just one in which every node has an empty subtree
• Note that the definition for a binary tree is recursive, unlike trees from graph theory, which are defined on graph theoretic properties alone (no recursion)
• Thus it is technically correct to say that a binary tree is not a graph and hence is not really a tree; no one but me ever says that anymore, and although it’s not particularly important, it’s still the truth, so I want you to know it

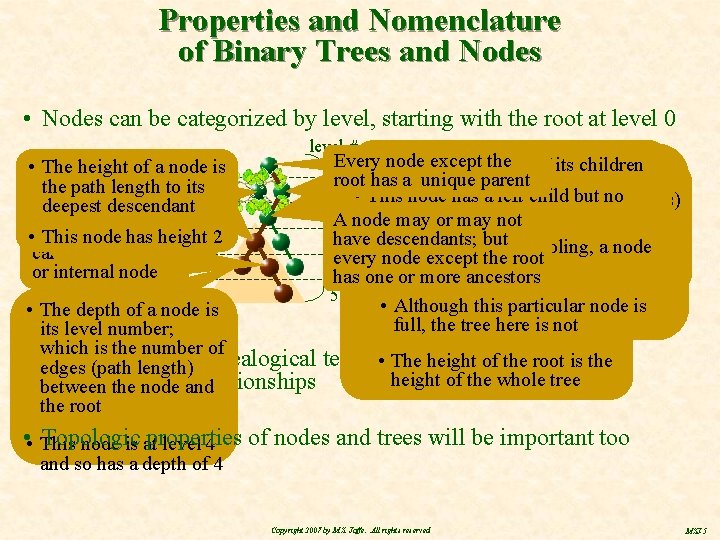

Properties and Nomenclature of Binary Trees and Nodes

• We’ll often use genealogical terminology when referring to nodes and their relationships
  • A node is the parent of its children
  • Every node except the root has a unique parent
  • A sibling of a node is a node with the same parent
  • A node may or may not have descendants, but every node except the root has one or more ancestors
  • The left (or right) child of a given node plus all that child’s descendants are collectively referred to as the left (or right) descendants of the original node
• A node with no descendants is called an external or leaf node
• A node with descendants is called an interior or internal node
• A node with two children is said to be full
• A full tree is one where all internal nodes are full, so there are no nodes with just one child; everybody is either a leaf or full
• Nodes can be categorized by level, starting with the root at level 0
• The depth of a node is its level number, which is the number of edges (path length) between the node and the root
• The height of a node is the length of the path to its deepest descendant
• Two less obvious consequences of this definition:
  • The height of any leaf is 0
  • The height of the root is the height of the whole tree
• The height of a binary tree is the depth of its deepest node
• Topologic properties of nodes and trees will be important too
  • There are many additional topologic properties (also called “shape” properties) that we’ll be defining and working with, such as the relationship between a binary tree’s height and its number of nodes, which we’ll examine next

Figure annotations (levels 0 through 5, h = 5):
• This node has height 2
• This node has a left child but no right child
• This node also has a sibling, a node with the same parent
• The highlighted subtree contains the left descendants of this node
• Although this particular node is full, the tree here is not
• This node is at level 4 and so has a depth of 4
• The deepest node is at level 5, so this binary tree has a height of 5
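The recursive definition and the height and depth vocabulary above can be sketched in a few lines of Python. This is an illustrative sketch only: the names `Node`, `height`, `depth`, and `is_full` are assumptions, not from the slides, and giving the empty tree a height of -1 is a common convention chosen here so that a leaf comes out with height 0.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """A non-empty binary tree: a root key plus two (possibly empty) subtrees."""
    key: str
    left: Optional["Node"] = None    # None stands for the empty binary tree
    right: Optional["Node"] = None


def height(node: Optional[Node]) -> int:
    """Length (in edges) of the path to the deepest descendant.

    Assumed convention: the empty tree has height -1, so a leaf has height 0.
    """
    if node is None:
        return -1
    return 1 + max(height(node.left), height(node.right))


def depth(root: Optional[Node], key: str, level: int = 0) -> Optional[int]:
    """Level number of the first node found holding `key`, or None if absent.

    A plain tree walk: no ordering of the keys is assumed here.
    """
    if root is None:
        return None
    if root.key == key:
        return level
    found = depth(root.left, key, level + 1)
    return found if found is not None else depth(root.right, key, level + 1)


def is_full(node: Node) -> bool:
    """A node is full when it has two children."""
    return node.left is not None and node.right is not None


# A small hand-built tree: M has children J and T; J has only a left child, E.
tree = Node("M", Node("J", Node("E")), Node("T"))
```

Here `height(tree)` is 2 and `depth(tree, "E")` is 2; the root is full, but node J is not, so the tree as a whole is not a full tree.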

Roadmap

• Binary trees in general
  • Definitions, vocabulary, properties
  • The range of possible heights for binary trees (and why we care ;-)
• Binary search trees
• AVL trees

Range of Possible Heights for Binary Trees (and Why We Care)

• The height and number of nodes in a binary tree are obviously related
• For any given number of nodes, n, there is a minimum and a maximum possible height for a binary tree which contains that number of nodes
  • e.g., a binary tree with 5 nodes can’t have height 0 or height 100
• Conversely, for any given height, h, there is a minimum and maximum number of nodes possible for that height
  • e.g., a binary tree with height 2 can’t have 1 node or 50 nodes in it
• We’re very interested in the minimum and maximum possible heights for a binary tree because when we get to search trees (soon) it will be easy to see that search and insertion performance are O(h); the tougher question will be, what are the limitations on h as a function of n?
  • Large h leads to poor performance
  • Small h will result in good performance

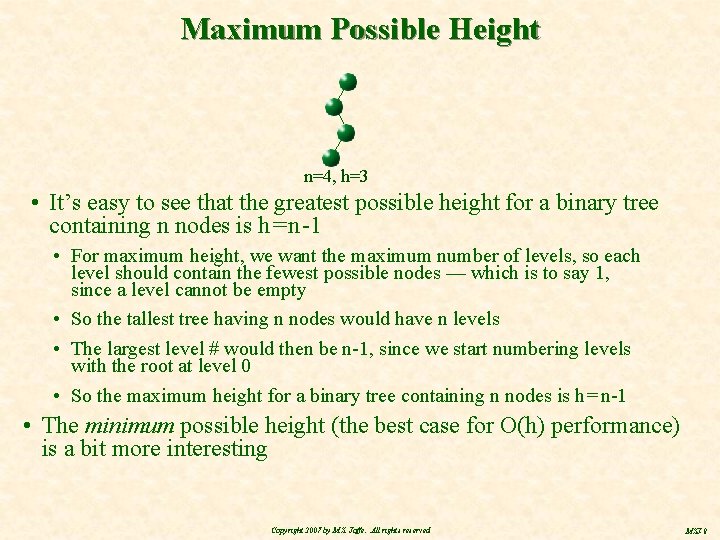

Maximum Possible Height

Figure: a maximally “stretched out” binary tree with n = 4 and h = 3.

• It’s easy to see that the greatest possible height for a binary tree containing n nodes is h = n-1
• For maximum height, we want the maximum number of levels, so each level should contain the fewest possible nodes, which is to say 1, since a level cannot be empty
• So the tallest tree having n nodes would have n levels
• The largest level # would then be n-1, since we start numbering levels with the root at level 0
• So the maximum height for a binary tree containing n nodes is h = n-1
• The minimum possible height (the best case for O(h) performance) is a bit more interesting

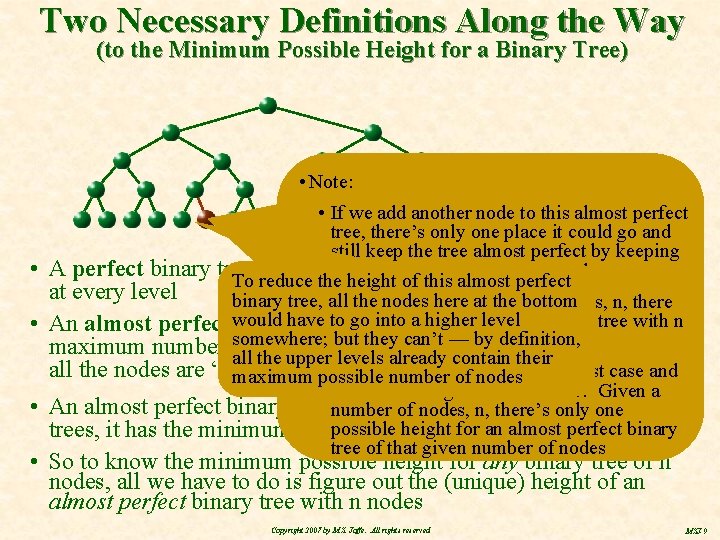

Two Necessary Definitions Along the Way (to the Minimum Possible Height for a Binary Tree)

• A perfect binary tree has the maximum possible number of nodes at every level
• An almost perfect (also known as complete) binary tree has the maximum number of nodes at each level except the lowest, where all the nodes are “left justified”
• An almost perfect binary tree is the most compact of all binary trees; it has the minimum possible height for its number of nodes
  • To reduce the height of this almost perfect binary tree, all the nodes here at the bottom level would have to go into a higher level somewhere; but they can’t, because by definition all the upper levels already contain their maximum possible number of nodes
• Note:
  • If we add another node to this almost perfect tree, there’s only one place it could go and still keep the tree almost perfect by keeping the bottom level all left justified
  • So for any given number of nodes, n, there is only one almost perfect tree with n nodes: the shape is unique
  • Since the shape is unique, the best case and worst case heights are the same: Given a number of nodes, n, there’s only one possible height for an almost perfect binary tree of that given number of nodes
• So to know the minimum possible height for any binary tree of n nodes, all we have to do is figure out the (unique) height of an almost perfect binary tree with n nodes

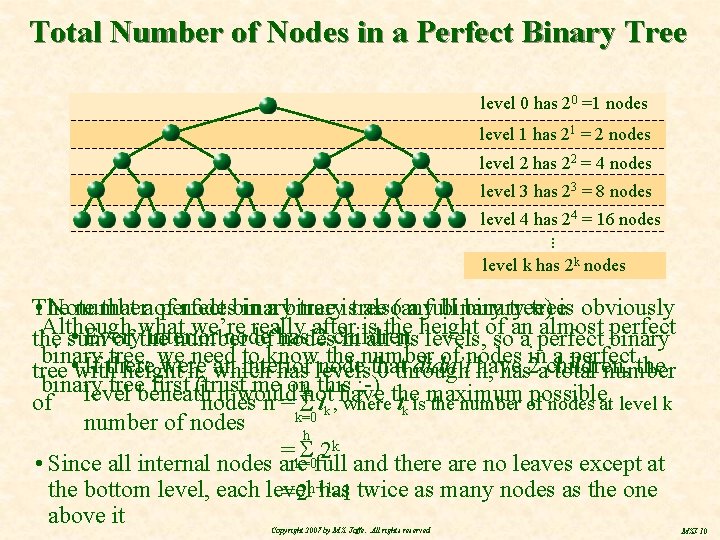

Total Number of Nodes in a Perfect Binary Tree

• Although what we’re really after is the height of an almost perfect binary tree, we need to know the number of nodes in a perfect binary tree first (trust me on this ;-)
• Note that a perfect binary tree is also a full binary tree
  • Every interior node has 2 children
  • If there were an interior node that didn’t have 2 children, the level beneath it would not have the maximum possible number of nodes
• Since all internal nodes are full and there are no leaves except at the bottom level, each level has twice as many nodes as the one above it
  • level 0 has $2^0 = 1$ node
  • level 1 has $2^1 = 2$ nodes
  • level 2 has $2^2 = 4$ nodes
  • level 3 has $2^3 = 8$ nodes
  • level 4 has $2^4 = 16$ nodes
  • ...
  • level k has $2^k$ nodes
• The number of nodes in a binary tree (any binary tree) is obviously the sum of the number of nodes in all its levels, so a perfect binary tree with height h, which has levels 0 through h, has a total number of nodes

$$n = \sum_{k=0}^{h} l_k = \sum_{k=0}^{h} 2^k = 2^{h+1} - 1$$

where $l_k$ is the number of nodes at level k
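As a quick sanity check on the closed form, the level-by-level sum can be compared against $2^{h+1} - 1$ for small heights. A minimal sketch; the function name `perfect_tree_nodes` is an assumption made for illustration.

```python
def perfect_tree_nodes(h: int) -> int:
    """Total nodes in a perfect binary tree of height h, summed level by level."""
    return sum(2 ** k for k in range(h + 1))  # levels 0 through h


# The level-by-level sum agrees with the closed form 2^(h+1) - 1.
for h in range(12):
    assert perfect_tree_nodes(h) == 2 ** (h + 1) - 1

counts = [perfect_tree_nodes(h) for h in range(5)]  # heights 0..4
```

For heights 0 through 4 the counts are 1, 3, 7, 15, and 31.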

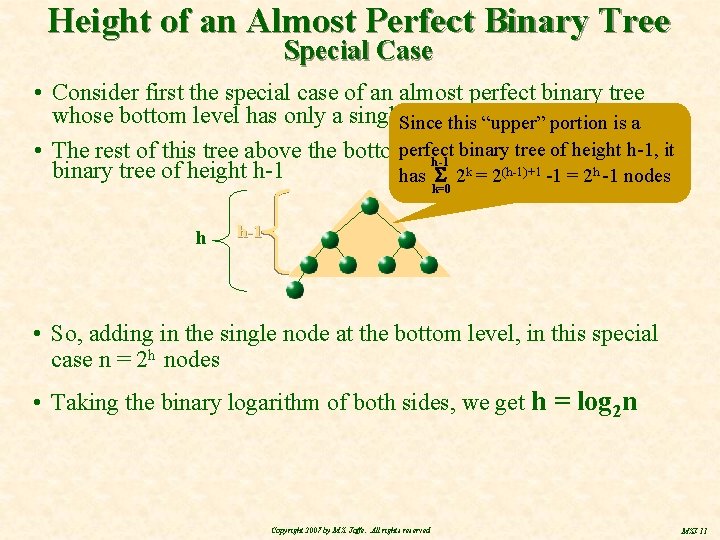

Height of an Almost Perfect Binary Tree: Special Case

• Consider first the special case of an almost perfect binary tree of height h whose bottom level has only a single node in it
• The rest of this tree above the bottom level is obviously a perfect binary tree of height h-1
• Since this “upper” portion is a perfect binary tree of height h-1, it has

$$\sum_{k=0}^{h-1} 2^k = 2^{(h-1)+1} - 1 = 2^h - 1$$

nodes
• So, adding in the single node at the bottom level, in this special case $n = 2^h$ nodes
• Taking the binary logarithm of both sides, we get $h = \log_2 n$

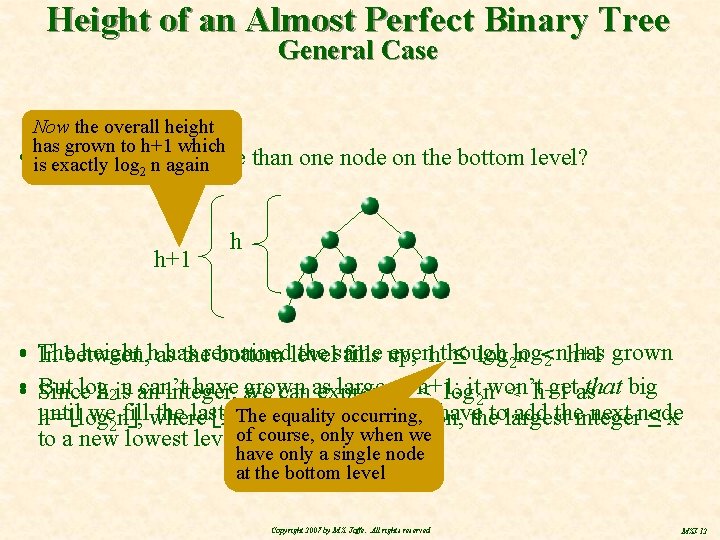

Height of an Almost Perfect Binary Tree: General Case

• Suppose there’s more than one node on the bottom level?
• The height h has remained the same even though $\log_2 n$ has grown
• But $\log_2 n$ can’t have grown as large as h+1; it won’t get that big until we fill the last level completely and have to add the next node to a new lowest level
• At that point the overall height has grown to h+1, which is exactly $\log_2 n$ again
• In between, as the bottom level fills up, $h \le \log_2 n < h+1$
• Since h is an integer, we can express $h \le \log_2 n < h+1$ as $h = \lfloor \log_2 n \rfloor$, where $\lfloor x \rfloor$ is the “floor” function, the largest integer ≤ x
  • The equality $h = \log_2 n$ occurs, of course, only when we have only a single node at the bottom level
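The argument above can be checked numerically by filling an almost perfect tree level by level and comparing the resulting height with the floor of the binary logarithm. This is only a sketch: the names `almost_perfect_height` and `floor_log2` are assumptions, and `int.bit_length` is used for an exact integer floor of log2 rather than floating-point logarithms.

```python
def almost_perfect_height(n: int) -> int:
    """Height of the (unique) almost perfect binary tree with n >= 1 nodes.

    Fills levels top-down: level k holds at most 2^k nodes, and a new level
    is started only when every level above it is completely full.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    level, capacity, remaining = 0, 1, n
    while remaining > capacity:      # current level is full, nodes are left over
        remaining -= capacity
        capacity *= 2                # next level can hold twice as many nodes
        level += 1
    return level


def floor_log2(n: int) -> int:
    """Exact floor(log2(n)) for a positive integer n."""
    return n.bit_length() - 1


heights = {n: almost_perfect_height(n) for n in range(1, 17)}
```

With 7 nodes the height is 2 (a perfect tree); adding an eighth node starts a new bottom level and the height becomes 3, which is exactly log2 of 8.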

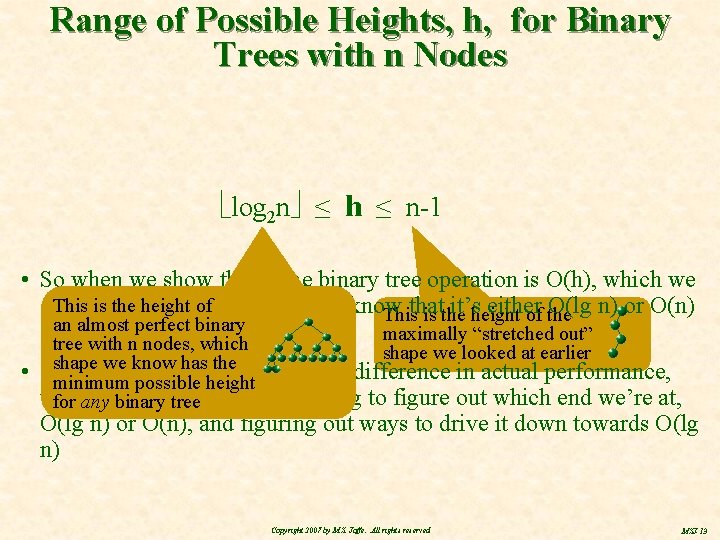

Range of Possible Heights, h, for Binary Trees with n Nodes

$$\lfloor \log_2 n \rfloor \le h \le n-1$$

• The lower bound is the height of the almost perfect binary tree with n nodes, which shape we know has the minimum possible height for any binary tree
• The upper bound is the height of the maximally “stretched out” shape we looked at earlier
• So when we show that some binary tree operation is O(h), which we can often do rather easily, we’ll know that it’s either O(lg n) or O(n) or somewhere in between
• Since that can make a pretty big difference in actual performance, the hard work will come in trying to figure out which end we’re at, O(lg n) or O(n), and figuring out ways to drive it down towards O(lg n)

Roadmap

• Binary trees in general
• Binary search trees
  • Definitions and the search (or find) algorithm
  • Insertion
  • Deletion
  • The problem (or why we need AVL trees)
• AVL trees

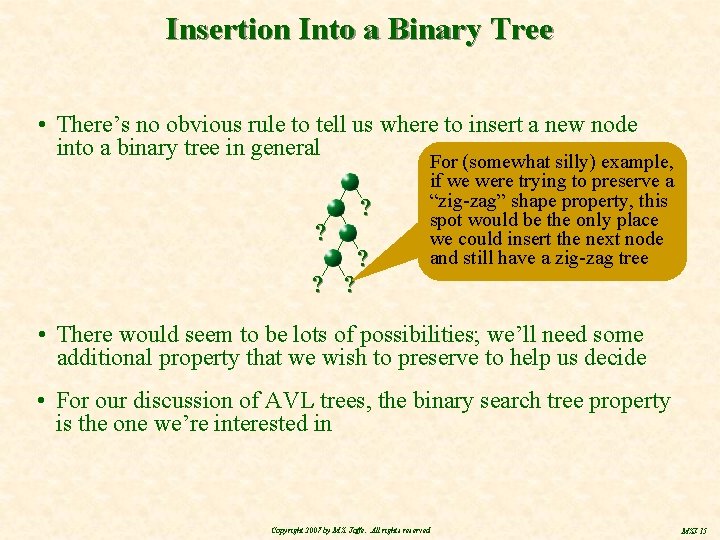

Insertion Into a Binary Tree

• There’s no obvious rule to tell us where to insert a new node into a binary tree in general
• There would seem to be lots of possibilities; we’ll need some additional property that we wish to preserve to help us decide
  • For a (somewhat silly) example, if we were trying to preserve a “zig-zag” shape property, there would be only one spot where we could insert the next node and still have a zig-zag tree
• For our discussion of AVL trees, the binary search tree property is the one we’re interested in

Binary Search Trees

• A binary search tree (or BST) is a binary tree where each node contains some totally ordered* key value and all the nodes obey the BST property: The key values in all the left descendants of a given node are less than the key value of that given node; the key values of its right descendants are greater
• First figure: this binary tree is a BST
• Second figure: here’s a binary tree with at least one node that does not obey the BST property; so this binary tree is not a BST
  • The key value in one node causes an ancestor of that node to fail the BST property, although the node’s own parent is happy enough
• Note that the problem is not between parent and child but between more distant, but still ancestral, relations: all the descendants of a node must have the correct key relationship with it, not just its children

* Values are totally ordered iff, for any two keys $k_1$, $k_2$, exactly one of three relationships must be true: $k_1 < k_2$, or $k_1 = k_2$, or $k_1 > k_2$
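The point that the BST property constrains all descendants, not just children, can be made concrete with two checkers. The sketch below is illustrative only: `is_bst` and `children_ok` are hypothetical names, and the small `bad` tree is an invented arrangement, not necessarily the one drawn on the slide.

```python
class Node:
    def __init__(self, key, left=None, right=None):
        self.key, self.left, self.right = key, left, right


def is_bst(node, low=None, high=None) -> bool:
    """True if every key lies strictly between the bounds set by ALL its ancestors."""
    if node is None:
        return True
    if low is not None and node.key <= low:
        return False
    if high is not None and node.key >= high:
        return False
    # Left descendants must stay below this key, right descendants above it.
    return is_bst(node.left, low, node.key) and is_bst(node.right, node.key, high)


def children_ok(node) -> bool:
    """Weaker check: compares each node only with its own children."""
    if node is None:
        return True
    if node.left is not None and node.left.key >= node.key:
        return False
    if node.right is not None and node.right.key <= node.key:
        return False
    return children_ok(node.left) and children_ok(node.right)


good = Node("M", Node("F", None, Node("K")), Node("S"))
# Hypothetical bad tree: 'L' is a fine left child for 'S' (L < S), but it sits
# in the right subtree of 'M' even though L < M.
bad = Node("M", Node("F"), Node("S", Node("L"), Node("T")))
```

In `bad`, every parent is “happy enough” with its own children, yet the tree is not a BST because node L is on the wrong side of its more distant ancestor M.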

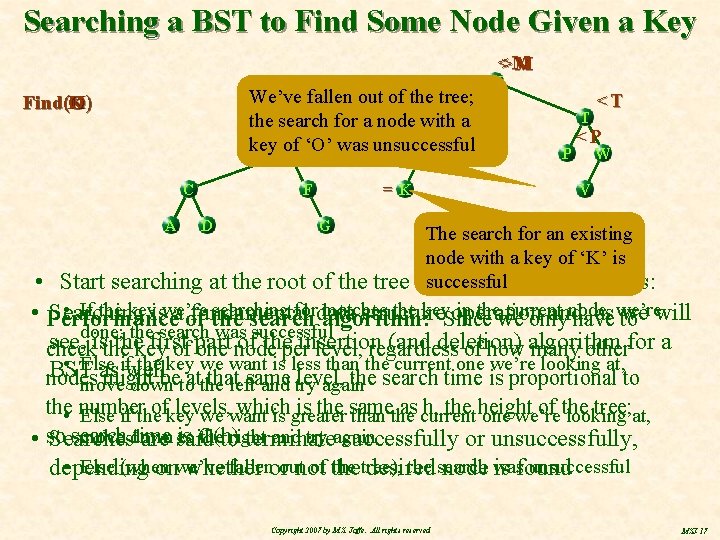

Searching a BST to Find Some Node Given a Key

• Searching is a fundamental data structure operation and, as we will see, is the first part of the insertion (and deletion) algorithm for a BST as well
• Start searching at the root of the tree and then loop as follows:
  • If the key we’re searching for matches the key in the current node, we’re done; the search was successful
  • Else if the key we want is less than the current one we’re looking at, move down to the left and try again
  • Else if the key we want is greater than the current one we’re looking at, move down to the right and try again
  • Else (when we’ve fallen out of the tree), the search is unsuccessful
• Searches are said to terminate successfully or unsuccessfully, depending on whether or not the desired node was found
• Examples from the figure:
  • Find(K): K < M, K > J, K < L, K = K; the search for an existing node with a key of ‘K’ is successful
  • Find(O): O > M, O < T, O < P; we’ve fallen out of the tree, so the search for a node with a key of ‘O’ was unsuccessful
• Performance of the search algorithm: Since we only have to check the key of one node per level, regardless of how many other nodes might be at that same level, the search time is proportional to the number of levels, which is h+1 for a tree of height h; so search time is O(h)
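The search loop described above might look like the following in Python. A minimal sketch under stated assumptions: `Node` and `find` are illustrative names, and the tree is the slides’ running example wired up by hand rather than built by insertion.

```python
from typing import Optional


class Node:
    def __init__(self, key, left=None, right=None):
        self.key, self.left, self.right = key, left, right


def find(root: Optional[Node], key: str) -> Optional[Node]:
    """Return the node holding `key`, or None if the search falls out of the tree."""
    node = root
    while node is not None:
        if key == node.key:
            return node                      # successful search
        # One comparison per level: go left for smaller keys, right for larger.
        node = node.left if key < node.key else node.right
    return None                              # fell out of the tree: unsuccessful


# The running example tree from the slides, built by hand.
root = Node("M",
            Node("J",
                 Node("E", Node("C", Node("A"), Node("D")), Node("F")),
                 Node("L", Node("K"))),
            Node("T", Node("P"), Node("W", Node("V"))))
```

`find(root, "K")` visits M, J, L, and K and succeeds; `find(root, "O")` visits M, T, and P, then falls out of the tree and returns `None`.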

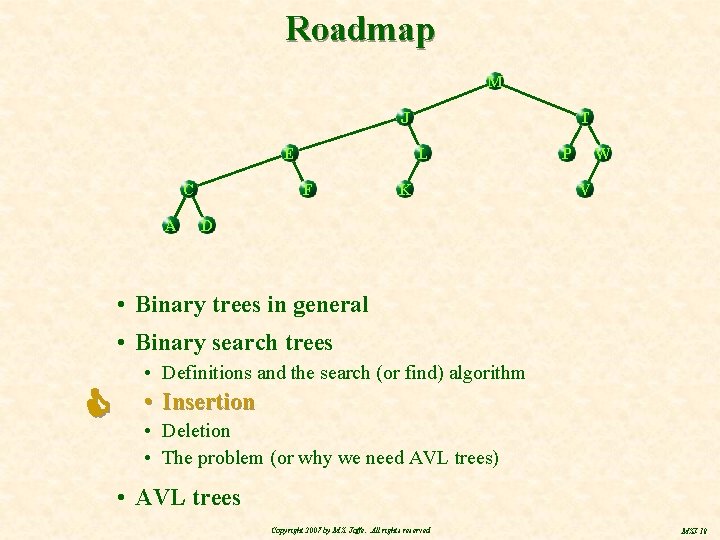

Roadmap

• Binary trees in general
• Binary search trees
  • Definitions and the search (or find) algorithm
  • Insertion
  • Deletion
  • The problem (or why we need AVL trees)
• AVL trees

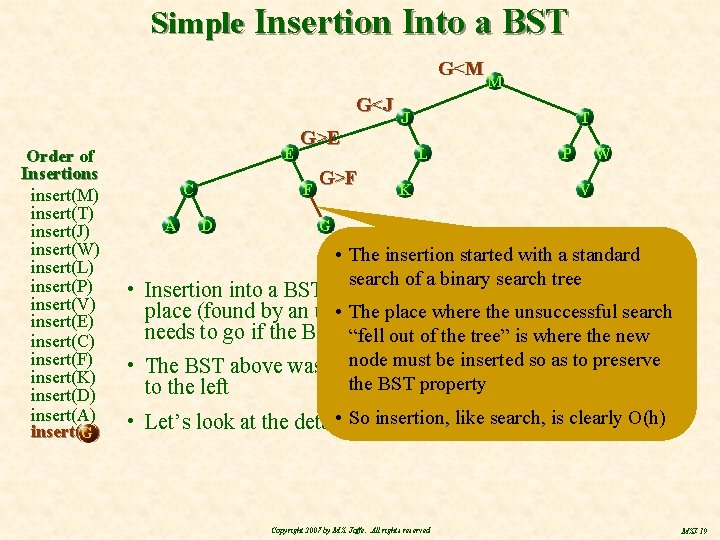

Simple Insertion Into a BST

Order of insertions: insert(M), insert(T), insert(J), insert(W), insert(L), insert(P), insert(V), insert(E), insert(C), insert(F), insert(K), insert(D), insert(A), insert(G)

• Insertion into a BST involves searching the tree for the empty place (found by an unsuccessful search) where the new node needs to go if the BST property is to be preserved
• The BST in the figure was built by the sequence of insertions shown above
• Let’s look at the details of the next insertion, insert(G): G < M, G < J, G > E, G > F
  • The insertion started with a standard search of a binary search tree
  • The place where the unsuccessful search “fell out of the tree” is where the new node must be inserted so as to preserve the BST property
• So insertion, like search, is clearly O(h)
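Insertion as “an unsuccessful search followed by attaching the new node where the search fell out” can be sketched as below. The names `Node`, `insert`, and `inorder` are assumptions for illustration, and silently ignoring a duplicate key is a choice made for this sketch; the slides do not say what to do with duplicates.

```python
class Node:
    def __init__(self, key):
        self.key, self.left, self.right = key, None, None


def insert(root, key):
    """Insert `key` where an unsuccessful search falls out of the tree; return the root."""
    new = Node(key)
    if root is None:
        return new
    node = root
    while True:
        if key < node.key:
            if node.left is None:        # the search fell out here
                node.left = new
                return root
            node = node.left
        elif key > node.key:
            if node.right is None:       # the search fell out here
                node.right = new
                return root
            node = node.right
        else:
            return root                  # duplicate key: ignored (assumption)


def inorder(node):
    """Keys in left-root-right order; sorted if and only if the BST property holds."""
    if node is None:
        return []
    return inorder(node.left) + [node.key] + inorder(node.right)


root = None
for k in "MTJWLPVECFKDAG":               # the order of insertions from the slide
    root = insert(root, k)
```

After the loop, G ends up as the right child of F, reached through M, J, and E exactly as in the slide’s comparisons.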

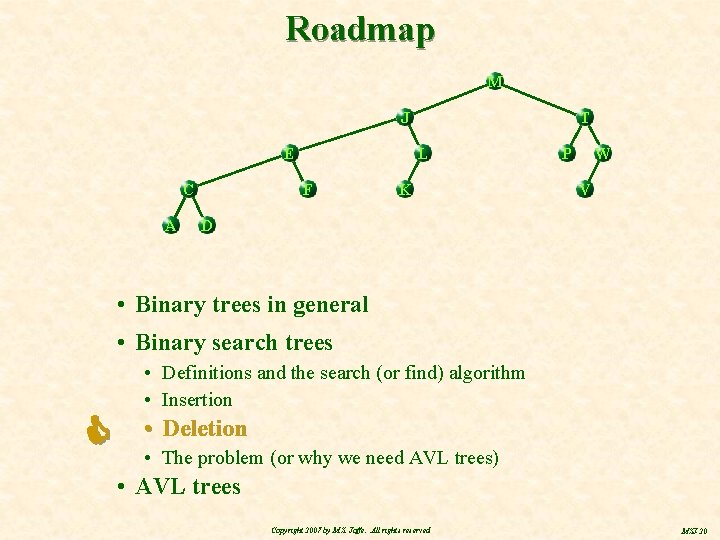

Roadmap

• Binary trees in general
• Binary search trees
  • Definitions and the search (or find) algorithm
  • Insertion
  • Deletion
  • The problem (or why we need AVL trees)
• AVL trees

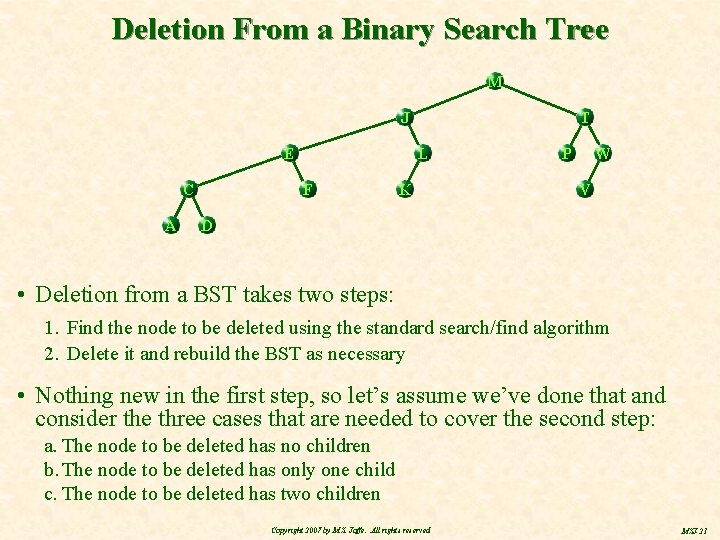

Deletion From a Binary Search Tree

• Deletion from a BST takes two steps:
  1. Find the node to be deleted using the standard search/find algorithm
  2. Delete it and rebuild the BST as necessary
• Nothing new in the first step, so let’s assume we’ve done that and consider the three cases that are needed to cover the second step:
  a. The node to be deleted has no children
  b. The node to be deleted has only one child
  c. The node to be deleted has two children

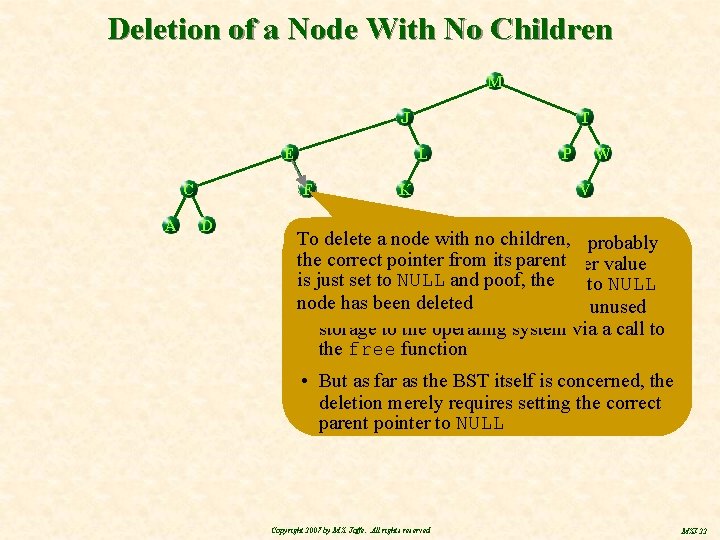

Deletion of a Node With No Children

• To delete a node with no children, the correct pointer from its parent is just set to NULL and poof, the node has been deleted
• As a programming matter, you’d probably want to have saved that old pointer value somewhere first, before setting it to NULL, so you could, for example, return unused storage to the operating system via a call to the free function
• But as far as the BST itself is concerned, the deletion merely requires setting the correct parent pointer to NULL

Deletion of a Node With Only One Child

Let’s delete node ‘L’. Figure labels: parent of deleted node (‘J’); node to be deleted (‘L’); only child of node to be deleted, the child to be promoted (‘K’); descendants (if any) of promoted child (k1, k2).

• To delete a node with only one child, the child is “promoted” to replace its deleted parent
• Only one link needs to change to delete the desired node and promote its child
• All the promoted child’s descendants (if any) move up with it and so will be unaffected by the deletion of one of their ancestors
• Note that the BST property is preserved by the promotion: No left or right ancestor-descendant relationships are altered anywhere
• Specifically, the now promoted child and all its descendants (if any) remain to the same side of the parent of the now deleted node as they were before the deletion
• In this example, node ‘K’ and its descendants were to the right of node ‘J’ before the deletion of node ‘L’ and they are to the right of node ‘J’ after the deletion and their subsequent promotion

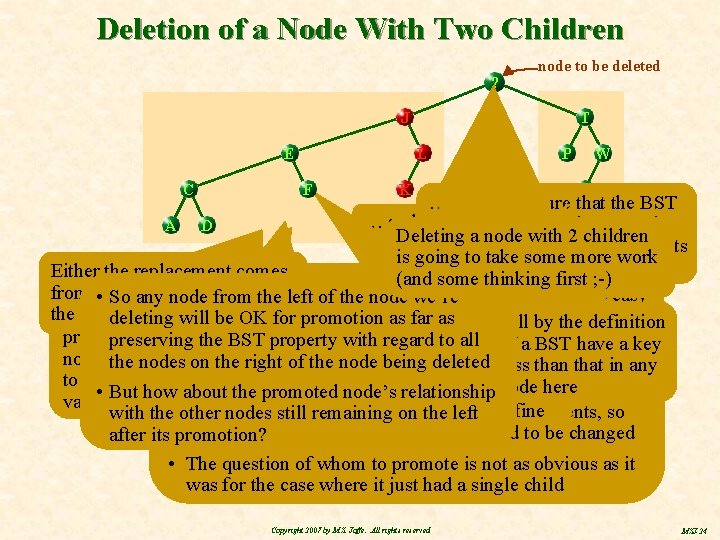

Deletion of a Node With Two Children

Deleting a node with 2 children is going to take some more work (and some thinking first ;-)

• Once we have found the node to be deleted, we have easy access to its descendants but, unless we’ve done something special, we have no access to its ancestors (if any; here, for example, we’ll delete the root)
• So what we want to do is replace the data in the node being deleted with data from one of its descendants, so none of the deleted node’s ancestors need to be changed
• The question of whom to promote is not as obvious as it was for the case where it just had a single child
• Either the replacement comes from somewhere on the left of the node we’re deleting or it comes from somewhere on the right. Let’s look at the case where it comes from the left; the other case is just the reverse
• Any node from the left of the node we’re deleting will be OK for promotion as far as preserving the BST property with regard to the nodes (if any) on the right of the node being deleted: by the definition of a BST, whichever node we choose from the left will have a key less than any of the nodes on the right
• But how about the promoted node’s relationship with the other nodes still remaining on the left after its promotion?
  • If, for example, we choose to promote node ‘F’, we will have destroyed our BST property in the left subtree: nodes ‘J’, ‘K’, and ‘L’ are greater than ‘F’ but would be to its left
• So in order to preserve the BST property, the node we choose to “promote” has to be the one having the largest key value in the left subtree, so as to ensure that the BST property holds between the newly promoted node and its left descendants
• There are two choices for where to find the replacement node to promote (the largest key in the left subtree or, in the reverse case, the smallest key in the right subtree); either one will work just fine, actually

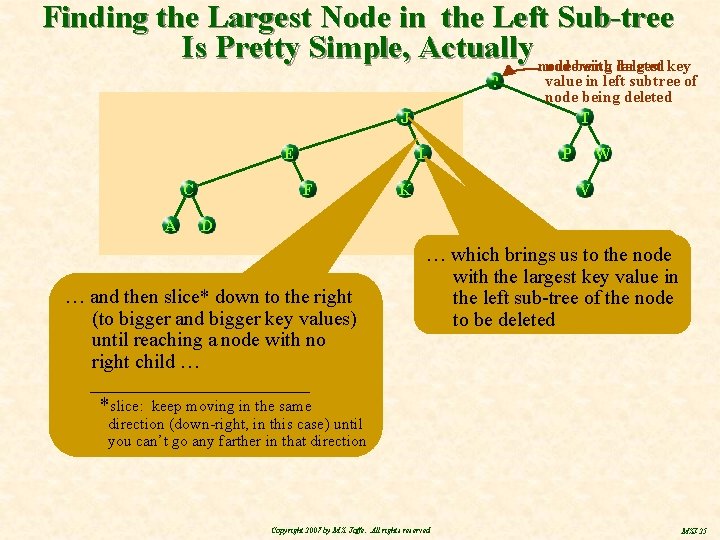

Finding the Largest Node in the Left Sub-tree Is Pretty Simple, Actually

• Move down to the left once (to get to the root of the left sub-tree of the node to be deleted) …
• … and then slice* down to the right (to bigger and bigger key values) until reaching a node with no right child …
• … which brings us to the node with the largest key value in the left subtree of the node being deleted

* slice: keep moving in the same direction (down-right, in this case) until you can’t go any farther in that direction

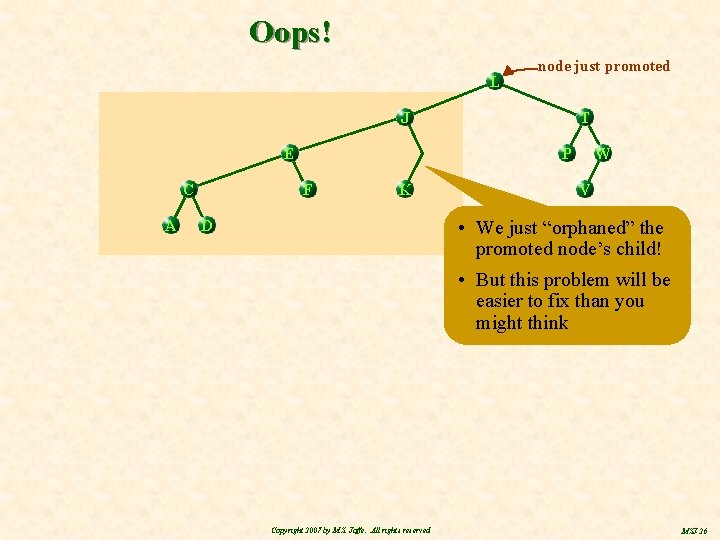

Oops!

Figure: node ‘L’ has just been promoted to the root.

• We just “orphaned” the promoted node’s child!
• But this problem will be easier to fix than you might think

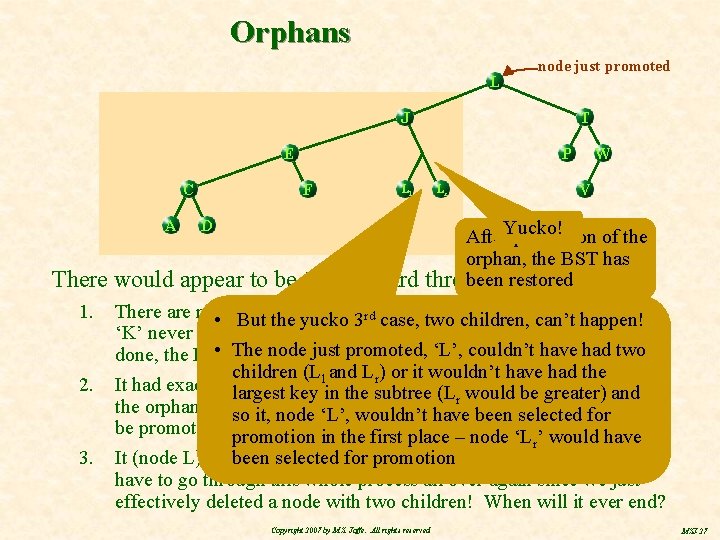

Orphans

There would appear to be the standard three possibilities:

1. There are no orphans; it (node ‘L’) actually had no children (node ‘K’ never existed), in which case after promoting node ‘L’ we’re all done, the BST property holds everywhere
2. It had exactly one child, as in the example shown here; in which case the orphaned child (node ‘K’) and its descendants (if any) can be promoted a single level, as we saw on an earlier chart
3. It (node ‘L’) had two children (Ll to the left and Lr to the right) and we have to go through this whole process all over again since we just effectively deleted a node with two children! When will it ever end? Yucko!

• But the yucko 3rd case, two children, can’t happen!
• The node just promoted, ‘L’, couldn’t have had two children (Ll and Lr) or it wouldn’t have had the largest key in the subtree (Lr would be greater) and so it, itself, node ‘L’, wouldn’t have been selected for promotion in the first place; node ‘Lr’ would have been selected for promotion. Q.E.D.
• After promotion of the orphan, the BST has been restored

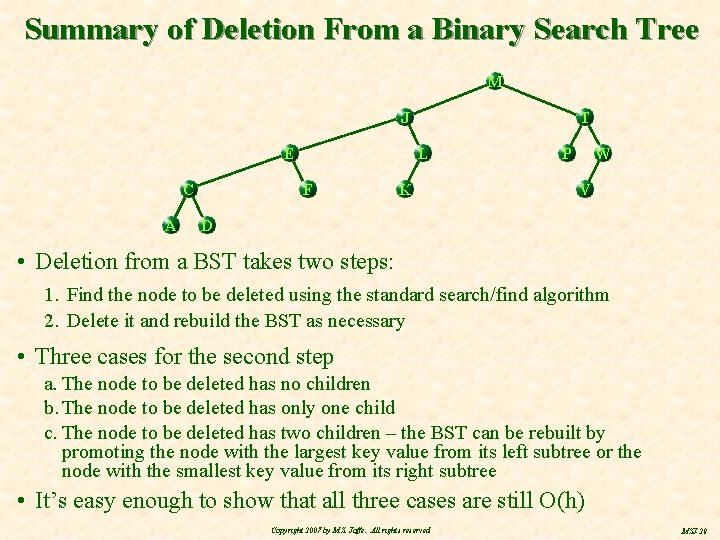

Summary of Deletion From a Binary Search Tree

• Deletion from a BST takes two steps:
  1. Find the node to be deleted using the standard search/find algorithm
  2. Delete it and rebuild the BST as necessary
• Three cases for the second step:
  a. The node to be deleted has no children
  b. The node to be deleted has only one child
  c. The node to be deleted has two children: the BST can be rebuilt by promoting the node with the largest key value from its left subtree or the node with the smallest key value from its right subtree
• It’s easy enough to show that all three cases are still O(h)
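The three deletion cases can be put together in one short routine. This is a sketch under stated assumptions, not the author’s implementation: the names `Node`, `insert`, `build`, `delete`, and `inorder` are illustrative, the two-children case always promotes the largest key from the left subtree (the slides note the smallest key from the right subtree would work equally well), and the promotion is done by copying the key into the deleted node’s position.

```python
class Node:
    def __init__(self, key):
        self.key, self.left, self.right = key, None, None


def insert(root, key):
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    return root


def build(keys):
    root = None
    for k in keys:
        root = insert(root, k)
    return root


def inorder(node):
    return [] if node is None else inorder(node.left) + [node.key] + inorder(node.right)


def delete(root, key):
    """Delete `key` from the BST rooted at `root`; return the (possibly new) root."""
    if root is None:
        return None                          # key not found: nothing to do
    if key < root.key:
        root.left = delete(root.left, key)
    elif key > root.key:
        root.right = delete(root.right, key)
    else:
        # Cases a and b: zero or one child, so promote the child (or None).
        if root.left is None:
            return root.right
        if root.right is None:
            return root.left
        # Case c: go left once, then "slice" right to the largest key.
        parent, pred = root, root.left
        while pred.right is not None:
            parent, pred = pred, pred.right
        root.key = pred.key                  # promote the predecessor's data
        # pred has no right child, so its left child (the possible orphan)
        # is promoted a single level.
        if parent is root:
            parent.left = pred.left
        else:
            parent.right = pred.left
    return root


root = delete(build("MTJWLPVECFKDA"), "M")   # delete the root, as on the slides
```

Deleting M promotes L to the root, and the orphaned K moves up to become the right child of J, matching the “Oops!” and “Orphans” slides.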

Roadmap

• Binary trees in general
• Binary search trees
  • Definitions and the search (or find) algorithm
  • Insertion
  • Deletion
  • The problem (or why we need AVL trees)
• AVL trees

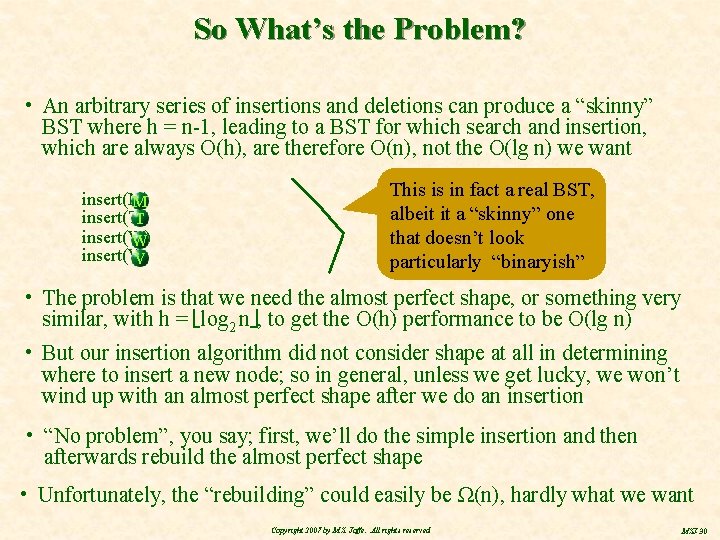

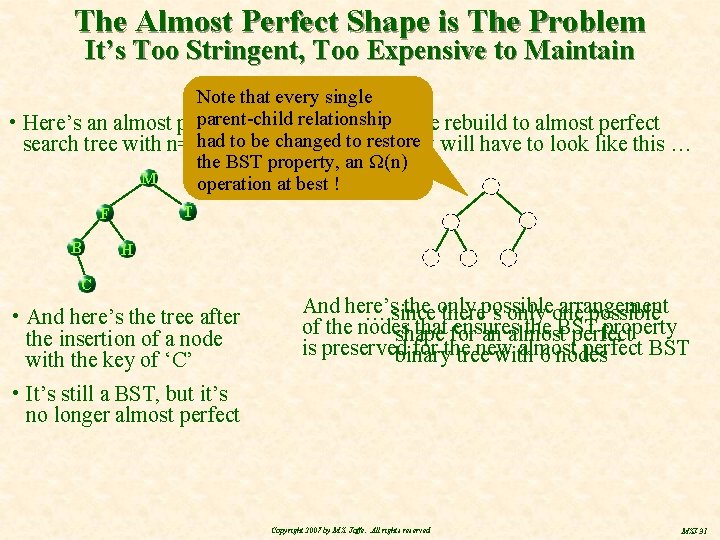

So What’s the Problem?

• An arbitrary series of insertions and deletions can produce a “skinny” BST where h = n-1, leading to a BST for which search and insertion, which are always O(h), are therefore O(n), not the O(lg n) we want
  • Example: insert(M), insert(T), insert(W), insert(V)
  • This is in fact a real BST, albeit a “skinny” one that doesn’t look particularly “binaryish”
• The problem is that we need the almost perfect shape, or something very similar, with $h = \lfloor \log_2 n \rfloor$, to get the O(h) performance to be O(lg n)
• But our insertion algorithm did not consider shape at all in determining where to insert a new node; so in general, unless we get lucky, we won’t wind up with an almost perfect shape after we do an insertion
• “No problem”, you say; first, we’ll do the simple insertion and then afterwards rebuild the almost perfect shape
• Unfortunately, the “rebuilding” could easily be Ω(n), hardly what we want
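How easily a plain BST degenerates can be seen by measuring heights after different insertion orders. An illustrative sketch; `Node`, `insert`, `build`, and `height` are assumed names, and the sorted-key sequence is an invented example alongside the slide’s M, T, W, V sequence.

```python
class Node:
    def __init__(self, key):
        self.key, self.left, self.right = key, None, None


def insert(root, key):
    """Plain BST insertion: no attention is paid to the shape of the tree."""
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    return root


def build(keys):
    root = None
    for k in keys:
        root = insert(root, k)
    return root


def height(node):
    """Edges on the longest downward path; the empty tree is given -1 (assumed convention)."""
    return -1 if node is None else 1 + max(height(node.left), height(node.right))


slide_example = height(build("MTWV"))            # the four insertions from the slide
sorted_keys = height(build("ABCDEFGHIJ"))        # keys arriving in sorted order
mixed_order = height(build("MTJWLPVECFKDAG"))    # the earlier 14-key example
```

The slide’s four insertions give h = 3 = n-1, and ten keys in sorted order give h = 9 = n-1, while the 14-key example happens to come out with h = 4, close to the minimum of 3 for 14 nodes.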

The Almost Perfect Shape Is the Problem: It’s Too Stringent, Too Expensive to Maintain

• Here’s an almost perfect binary search tree with n=5 nodes (keys M, T, F, B, H)
• And here’s the tree after the insertion of a node with the key of ‘C’
• It’s still a BST, but it’s no longer almost perfect
• After we rebuild to restore the almost perfect shape, it will have to look like this … since there’s only one possible shape for an almost perfect binary tree with 6 nodes
• And here’s the only possible arrangement of the nodes that ensures the BST property is preserved for the new almost perfect BST
• Note that every single parent-child relationship had to be changed to restore the BST property, an Ω(n) operation at best!

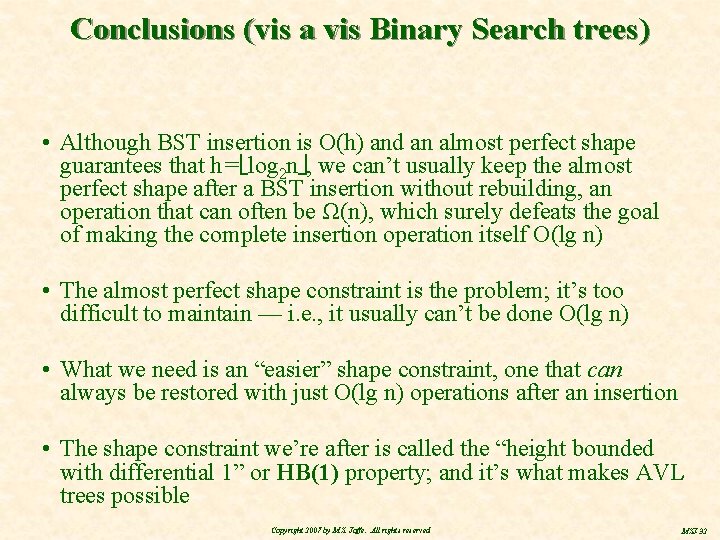

Conclusions (vis-à-vis Binary Search Trees)

• Although BST insertion is O(h) and an almost perfect shape guarantees that $h = \lfloor \log_2 n \rfloor$, we can’t usually keep the almost perfect shape after a BST insertion without rebuilding, an operation that can often be Ω(n), which surely defeats the goal of making the complete insertion operation itself O(lg n)
• The almost perfect shape constraint is the problem; it’s too difficult to maintain, i.e., it usually can’t be done in O(lg n)
• What we need is an “easier” shape constraint, one that can always be restored with just O(lg n) operations after an insertion
• The shape constraint we’re after is called the “height bounded with differential 1” or HB(1) property; and it’s what makes AVL trees possible

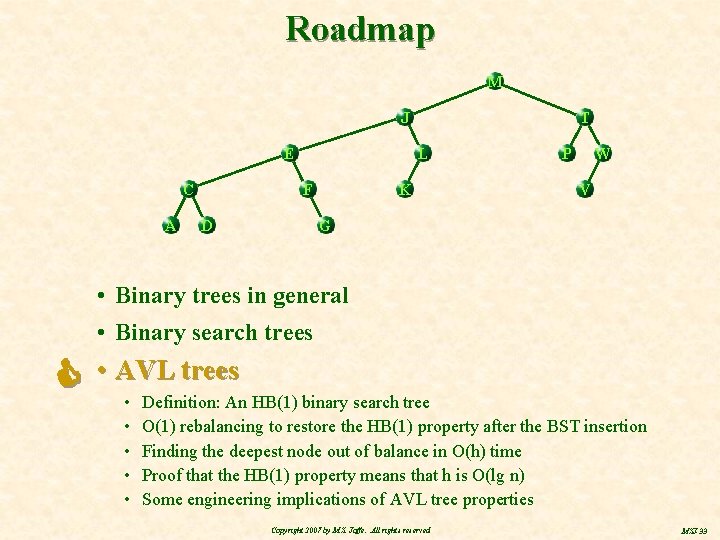

Roadmap

• Binary trees in general
• Binary search trees
• AVL trees
  • Definition: An HB(1) binary search tree
  • O(1) rebalancing to restore the HB(1) property after the BST insertion
  • Finding the deepest node out of balance in O(h) time
  • Proof that the HB(1) property means that h is O(lg n)
  • Some engineering implications of AVL tree properties

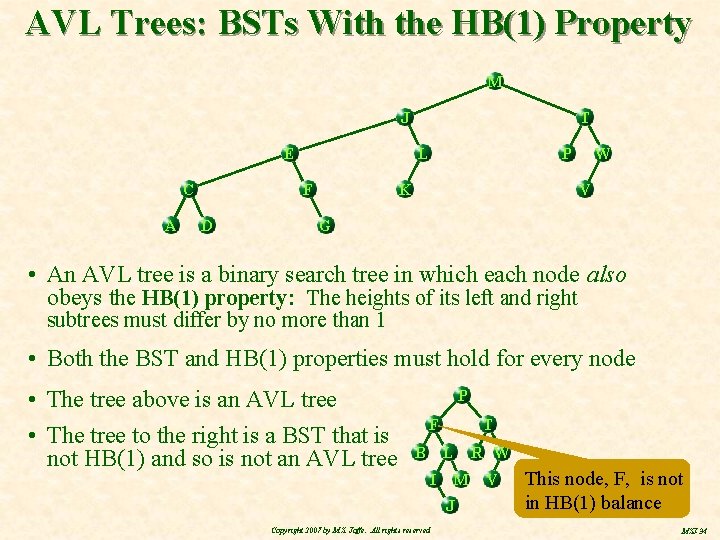

AVL Trees: BSTs With the HB(1) Property

• An AVL tree is a binary search tree in which each node also obeys the HB(1) property: The heights of its left and right subtrees must differ by no more than 1
• Both the BST and HB(1) properties must hold for every node
• The first tree in the figure is an AVL tree
• The second tree in the figure is a BST that is not HB(1) and so is not an AVL tree
  • Its node ‘F’ is not in HB(1) balance
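The HB(1) property can be checked for every node in a single bottom-up pass. The sketch below is illustrative: `check_hb1` is a hypothetical name, the empty subtree is given height -1 by assumption, and the test trees are built with a plain BST `insert` using the slides’ insertion sequence.

```python
class Node:
    def __init__(self, key):
        self.key, self.left, self.right = key, None, None


def insert(root, key):
    """Plain BST insertion (no rebalancing)."""
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    return root


def check_hb1(node):
    """Return (every node is in HB(1) balance, height of this subtree).

    Assumed convention: an empty subtree has height -1.
    """
    if node is None:
        return True, -1
    left_ok, left_h = check_hb1(node.left)
    right_ok, right_h = check_hb1(node.right)
    balanced_here = abs(left_h - right_h) <= 1   # the HB(1) test for this node
    return left_ok and right_ok and balanced_here, 1 + max(left_h, right_h)


root = None
for k in "MTJWLPVECFKDAG":       # the AVL tree from the slides
    root = insert(root, k)
before = check_hb1(root)         # (balanced?, height) before the extra insertion

root = insert(root, "B")         # a further plain BST insertion
after = check_hb1(root)
```

The 14-key tree passes the check with height 4; after a plain BST insertion of B the height is 5 and the check fails, because the subtrees of J now differ in height by 2.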

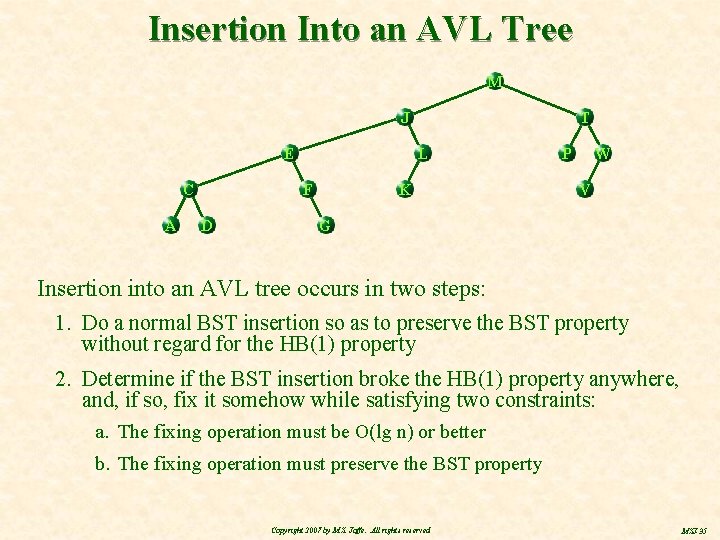

Insertion Into an AVL Tree

Insertion into an AVL tree occurs in two steps:

1. Do a normal BST insertion so as to preserve the BST property without regard for the HB(1) property
2. Determine if the BST insertion broke the HB(1) property anywhere, and, if so, fix it somehow while satisfying two constraints:
  a. The fixing operation must be O(lg n) or better
  b. The fixing operation must preserve the BST property

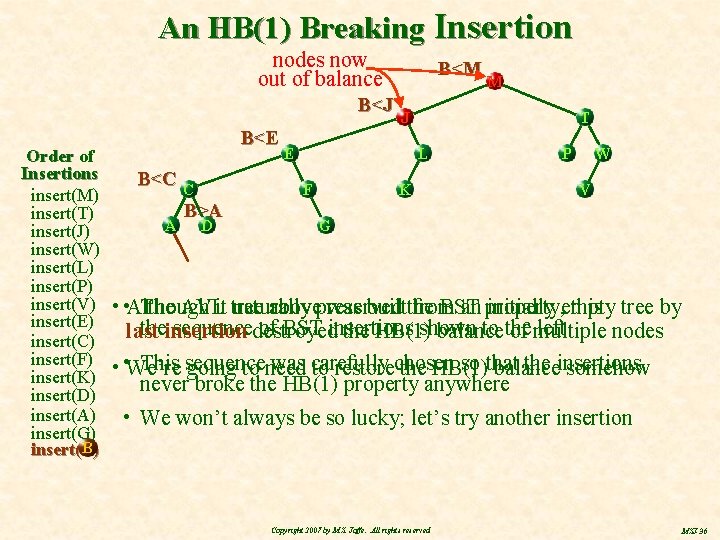

An HB(1) Breaking Insertion

Order of insertions: insert(M), insert(T), insert(J), insert(W), insert(L), insert(P), insert(V), insert(E), insert(C), insert(F), insert(K), insert(D), insert(A), insert(G), then insert(B)

• The AVL tree in the figure was built from an initially empty tree by the sequence of BST insertions shown above (up to insert(G))
• This sequence was carefully chosen so that the insertions never broke the HB(1) property anywhere
• We won’t always be so lucky; let’s try another insertion, insert(B): B < M, B < J, B < E, B < C, B > A
• Although it naturally preserved the BST property, this last insertion destroyed the HB(1) balance of multiple nodes
• We’re going to need to restore the HB(1) balance somehow

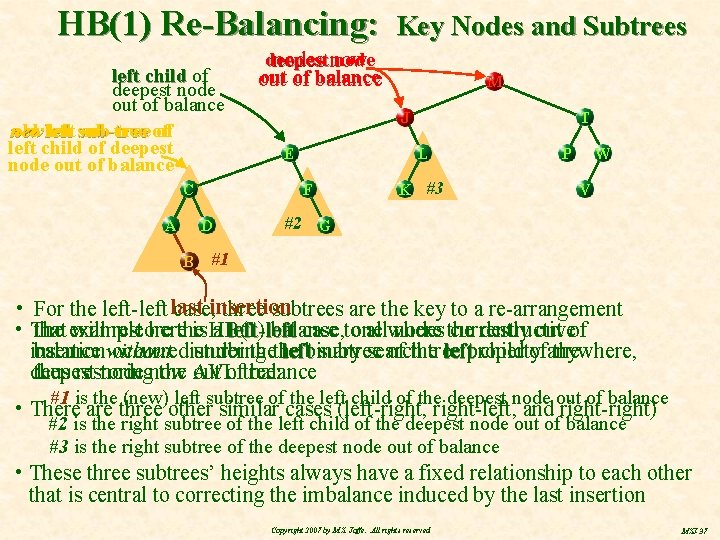

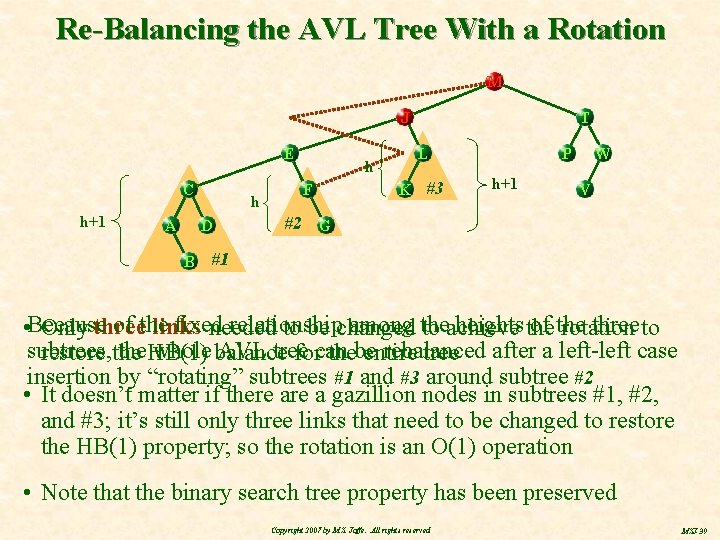

HB(1) Re-Balancing: Key Nodes and Subtrees

Figure labels: nodes now out of balance (‘M’ and ‘J’); deepest node out of balance (‘J’); left child of deepest node out of balance (‘E’); subtrees #1, #2, and #3.

• The example here is a left-left case, one where the destructive insertion occurred under the left subtree of the left child of the deepest node now out of balance
• There are three other similar cases (left-right, right-left, and right-right)
• For the left-left case, three subtrees are the key to a re-arrangement that will restore the HB(1) balance to all nodes currently out of balance without disturbing the binary search tree property anywhere, thus restoring the AVL tree:
  • #1 is the (new) left subtree of the left child of the deepest node out of balance
  • #2 is the right subtree of the left child of the deepest node out of balance
  • #3 is the right subtree of the deepest node out of balance
• These three subtrees’ heights always have a fixed relationship to each other that is central to correcting the imbalance induced by the last insertion

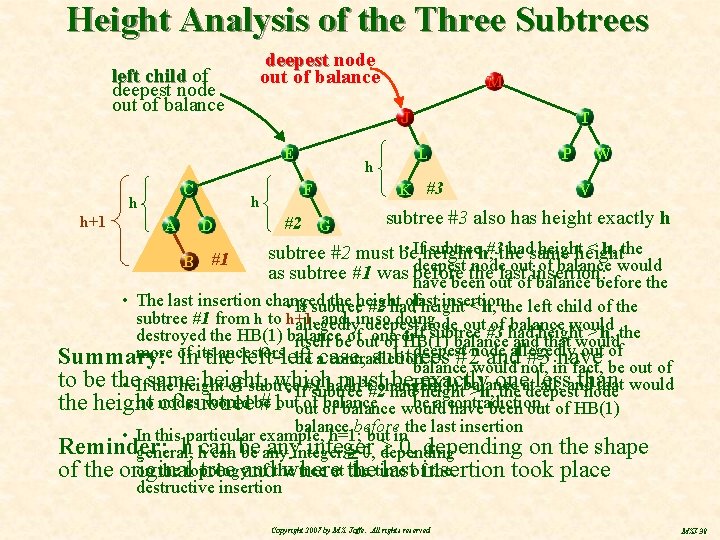

Height Analysis of the Three Subtrees

Figure: subtree #1 (under ‘C’) has height h+1; subtree #2 (under ‘F’) and subtree #3 (under ‘L’) each have height h.

• The last insertion changed the height of subtree #1 from h to h+1, and, in so doing, destroyed the HB(1) balance of one or more of its ancestors
  • If the height of subtree #1 hadn’t changed, no nodes would be out of balance
• Subtree #2 must be height h, the same as subtree #1 was before the last insertion:
  • If subtree #2 had height < h, the left child of the allegedly deepest node out of balance would itself be out of HB(1) balance and that would be a contradiction
  • If subtree #2 had height > h, then either that left child would have been out of HB(1) balance before the last insertion (height h+2 or more) or its own height would not have changed (height h+1), in which case none of its ancestors could have gone out of balance
• Subtree #3 also has height exactly h:
  • If subtree #3 had height < h, the deepest node out of balance would have been out of balance before the last insertion
  • If subtree #3 had height > h, the allegedly deepest node out of balance would not, in fact, be out of balance at all, and that would be a contradiction
• Summary: In the left-left case, subtrees #2 and #3 have to be the same height, which must be exactly one less than the height of subtree #1
• In this particular example, h=1; but in general, h can be any integer ≥ 0, depending on the shape of the original tree and where the last (destructive) insertion took place

Re-Balancing the AVL Tree With a Rotation

• Because of the fixed relationship among the heights of the three subtrees, the whole AVL tree can be rebalanced after a left-left case insertion by “rotating” subtrees #1 and #3 around subtree #2
• Only three links needed to be changed to achieve the rotation to restore the HB(1) balance for the entire tree
• It doesn’t matter if there are a gazillion nodes in subtrees #1, #2, and #3; it’s still only three links that need to be changed to restore the HB(1) property; so the rotation is an O(1) operation
• Note that the binary search tree property has been preserved
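The three link changes for the left-left case can be sketched as a single right rotation. This is a minimal illustration under assumptions: `rotate_right` is a conventional name rather than one from the slides, the tree is built with plain BST insertions, and the deepest node out of balance (J) is located by hand here rather than by an algorithm.

```python
class Node:
    def __init__(self, key):
        self.key, self.left, self.right = key, None, None


def insert(root, key):
    """Plain BST insertion (no rebalancing)."""
    if root is None:
        return Node(key)
    if key < root.key:
        root.left = insert(root.left, key)
    elif key > root.key:
        root.right = insert(root.right, key)
    return root


def check_hb1(node):
    """Return (all nodes in HB(1) balance, height); empty subtree height is -1."""
    if node is None:
        return True, -1
    lok, lh = check_hb1(node.left)
    rok, rh = check_hb1(node.right)
    return lok and rok and abs(lh - rh) <= 1, 1 + max(lh, rh)


def inorder(node):
    return [] if node is None else inorder(node.left) + [node.key] + inorder(node.right)


def rotate_right(z):
    """Left-left fix: z is the deepest node out of balance; returns the new subtree root."""
    y = z.left            # left child of the deepest node out of balance
    z.left = y.right      # link 1: subtree #2 becomes z's left subtree
    y.right = z           # link 2: z (with #2 and #3) becomes y's right child
    return y              # link 3 is made by the caller: parent now points at y


root = None
for k in "MTJWLPVECFKDAGB":               # the insertion of B unbalances J and M
    root = insert(root, k)
unbalanced_before = not check_hb1(root)[0]

root.left = rotate_right(root.left)       # J is the left child of the root M
```

After the rotation E is the left child of M, J is the right child of E, and F (subtree #2) is the left child of J; the in-order key sequence is unchanged, so the BST property is preserved.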

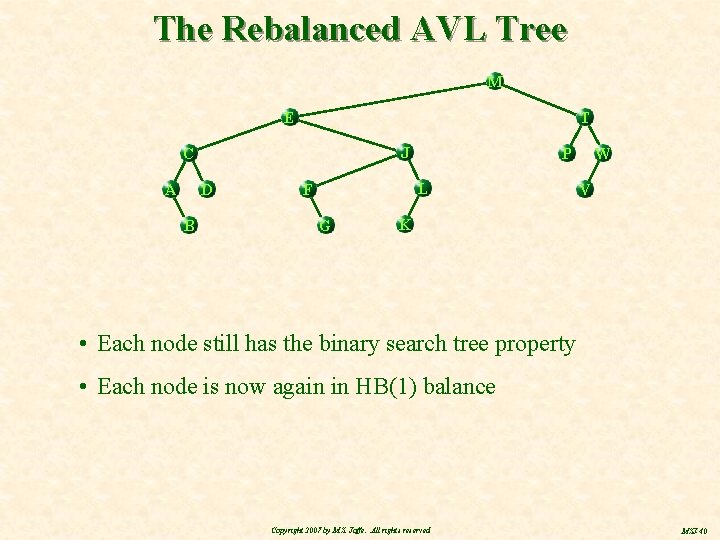

The Rebalanced AVL Tree

[Figure: the rebalanced tree. E is now the left child of M; C and J are the children of E; subtrees #2 and #3 hang under J.]

• Each node still has the binary search tree property
• Each node is now again in HB(1) balance

Summary So Far

• The example showed the rotation for the left-left case: the insertion that destroyed the HB(1) balance somewhere was at the bottom of the left subtree of the left child of the deepest node that wound up out of balance
• The right-right case is the mirror image of the left-left case
• The left-right and right-left cases each require two rotations to rebalance the tree, not just one, but each rotation is still a fixed number of steps, i.e., they're still O(1)
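The slides do not show the two-rotation cases, so the following is only a sketch of the standard left-right double rotation, with hypothetical names (`Node`, `rotate_left`, `rotate_right`, `rebalance_left_right`). The right-left case is assumed to be the mirror image.

```python
class Node:
    """A bare binary search tree node (hypothetical helper for this sketch)."""
    def __init__(self, key, left=None, right=None):
        self.key = key
        self.left = left
        self.right = right


def rotate_right(z):
    """Single rotation used for the left-left case; returns the new subtree root."""
    y = z.left
    z.left = y.right
    y.right = z
    return y


def rotate_left(z):
    """Mirror image of rotate_right, used for the right-right case."""
    y = z.right
    z.right = y.left
    y.left = z
    return y


def rebalance_left_right(z):
    """Left-right case: the insertion went into the RIGHT subtree of z's LEFT child.

    The first rotation turns the shape into a left-left case;
    the second rotation is the ordinary left-left fix.
    """
    z.left = rotate_left(z.left)
    return rotate_right(z)
```

Each call changes a fixed number of links, so the double rotation is still O(1).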

So …

• After an O(h) BST insertion into an HB(1) binary search tree, the HB(1) balance can be restored, while preserving the binary search tree property, with at most two O(1) rotations
• Doing the rotation(s) is just O(1), but how do we find the deepest node out of balance, which is the key to the rotation?

Roadmap

• Binary trees in general
• Binary search trees
• AVL trees
  • Definition: An HB(1) binary search tree
  • O(1) rebalancing to restore the HB(1) property after the BST insertion
  • Finding the deepest node out of balance in O(h) time
  • Proof that the HB(1) property means that h is O(lg n)
  • Some engineering implications of AVL tree properties

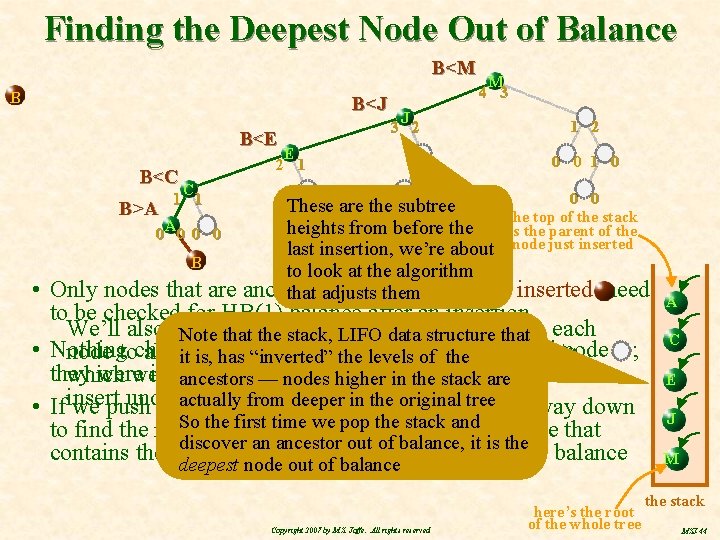

Finding the Deepest Node Out of Balance

[Figure: inserting B into the example tree. The comparisons on the way down are B<M, B<J, B<E, B<C, B>A. Each node is annotated with its two subtree heights from before the insertion. The stack, from top to bottom, holds A, C, E, J, M.]

• Only nodes that are ancestors of the node just inserted need to be checked for HB(1) balance after an insertion
  • Nothing has changed in any subtree of any non-ancestral node; they were in balance before, so they're still in balance now
• If we push onto a stack the nodes we check on the way down to find the insertion point, we'll have a data structure that contains the only nodes we need to check for HB(1) balance
  • The top of the stack is the parent of the node just inserted; the bottom of the stack is the root of the whole tree
• Note that the stack, LIFO data structure that it is, "inverted" the levels of the ancestors: nodes higher in the stack are actually deeper in the original tree
  • So the first time we pop the stack and discover an ancestor out of balance, it is the deepest node out of balance
• We'll also need two extra fields in each node to allow us to keep track of its subtrees' heights, which we'll use to determine balance if and when we insert underneath it
  • The heights shown in the figure are the subtree heights from before the last insertion; we're about to look at the algorithm that adjusts them
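Building the stack on the way down can be sketched as follows. The names `Node` and `insert_with_stack` are assumptions made for this illustration, a plain Python list stands in for the stack, and sending duplicate keys to the right is an arbitrary choice the slides do not specify.

```python
class Node:
    """A bare binary search tree node (hypothetical helper for this sketch)."""
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None


def insert_with_stack(root, key):
    """Standard BST insertion that also records every ancestor visited.

    Returns (root, stack). stack[-1], the top of the stack, is the parent
    of the new node; stack[0] is the root of the whole tree.
    """
    new = Node(key)
    stack = []
    if root is None:
        return new, stack            # the new node has no ancestors to check
    cur = root
    while True:
        stack.append(cur)            # push each node compared against on the way down
        if key < cur.key:
            if cur.left is None:
                cur.left = new
                break
            cur = cur.left
        else:                        # assumption: equal keys go to the right
            if cur.right is None:
                cur.right = new
                break
            cur = cur.right
    return root, stack
```

For the example tree, inserting B leaves the stack holding M, J, E, C, A from bottom to top, so popping visits the ancestors from the deepest one upward.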

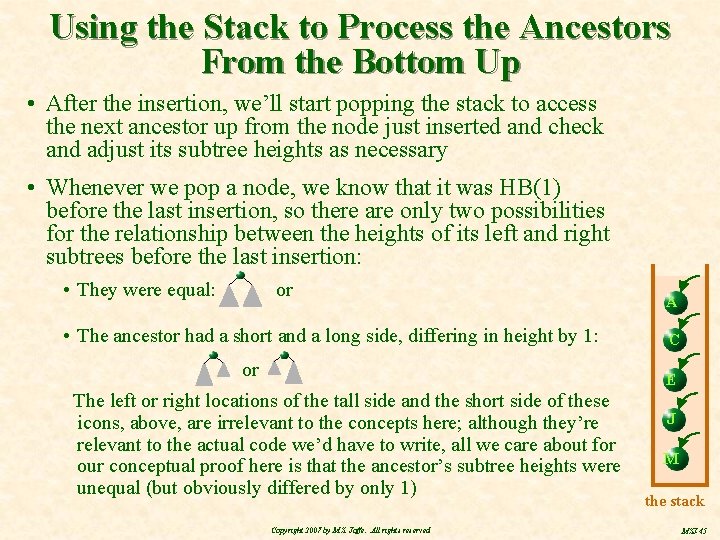

Using the Stack to Process the Ancestors From the Bottom Up

[Figure: the stack, from top to bottom: A, C, E, J, M.]

• After the insertion, we'll start popping the stack to access the next ancestor up from the node just inserted, and check and adjust its subtree heights as necessary
• Whenever we pop a node, we know that it was HB(1) before the last insertion, so there are only two possibilities for the relationship between the heights of its left and right subtrees before the last insertion:
  • They were equal, or
  • The ancestor had a short side and a long side, differing in height by 1
• Whether the tall side is on the left or the right is irrelevant to the concepts here; although it is relevant to the actual code we'd have to write, all we care about for our conceptual proof is that the ancestor's subtree heights were unequal (but obviously differed by only 1)

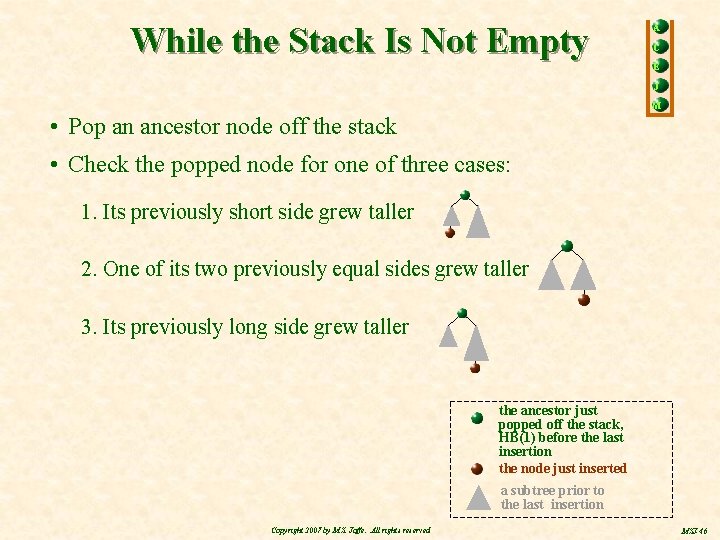

While the Stack Is Not Empty

• Pop an ancestor node off the stack
• Check the popped node for one of three cases:
  1. Its previously short side grew taller
  2. One of its two previously equal sides grew taller
  3. Its previously long side grew taller

[Figure legend: the ancestor just popped off the stack, which was HB(1) before the last insertion; the node just inserted; a subtree as it was prior to the last insertion.]

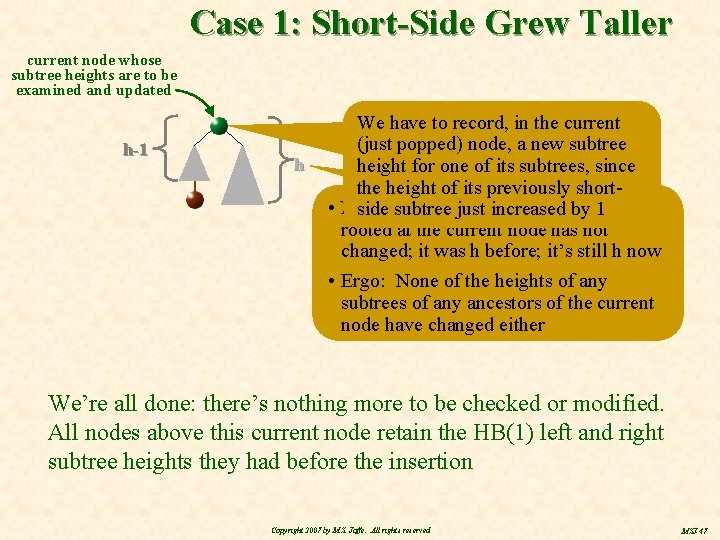

Case 1: Short-Side Grew Taller

[Figure: the current node, whose subtree heights are to be examined and updated; its short side grows from h-1 to h.]

• We have to record, in the current (just popped) node, a new subtree height for one of its subtrees, since the height of its previously short side subtree just increased by 1
• Note that the overall height of the tree rooted at the current node has not changed; it was h before, and it's still h now
• Ergo: None of the heights of any subtrees of any ancestors of the current node have changed either
• We're all done: there's nothing more to be checked or modified. All nodes above this current node retain the HB(1) left and right subtree heights they had before the insertion

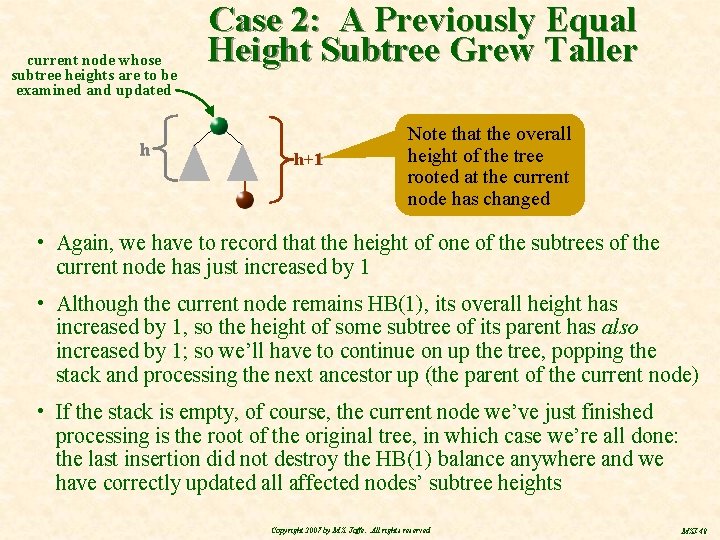

Case 2: A Previously Equal Height Subtree Grew Taller

[Figure: the current node, whose subtree heights are to be examined and updated; one of its two equal sides grows from h to h+1. Note that the overall height of the tree rooted at the current node has changed.]

• Again, we have to record that the height of one of the subtrees of the current node has just increased by 1
• Although the current node remains HB(1), its overall height has increased by 1, so the height of some subtree of its parent has also increased by 1; so we'll have to continue on up the tree, popping the stack and processing the next ancestor up (the parent of the current node)
• If the stack is empty, of course, the current node we've just finished processing is the root of the original tree, in which case we're all done: the last insertion did not destroy the HB(1) balance anywhere and we have correctly updated all affected nodes' subtree heights

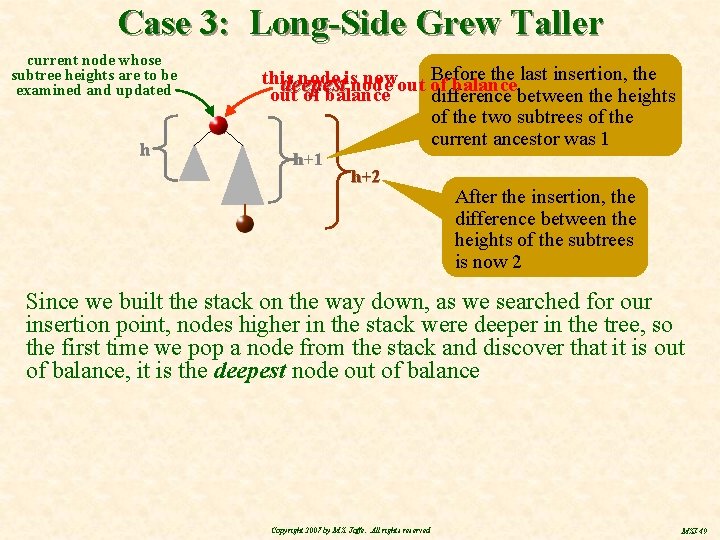

Case 3: Long-Side Grew Taller

[Figure: the current node, whose subtree heights are to be examined and updated; its short side has height h and its long side has grown to h+2. This node is now the deepest node out of balance.]

• Before the last insertion, the difference between the heights of the two subtrees of the current ancestor was 1
• After the insertion, the difference between the heights of the subtrees is now 2
• Since we built the stack on the way down, as we searched for our insertion point, nodes higher in the stack were deeper in the tree, so the first time we pop a node from the stack and discover that it is out of balance, it is the deepest node out of balance

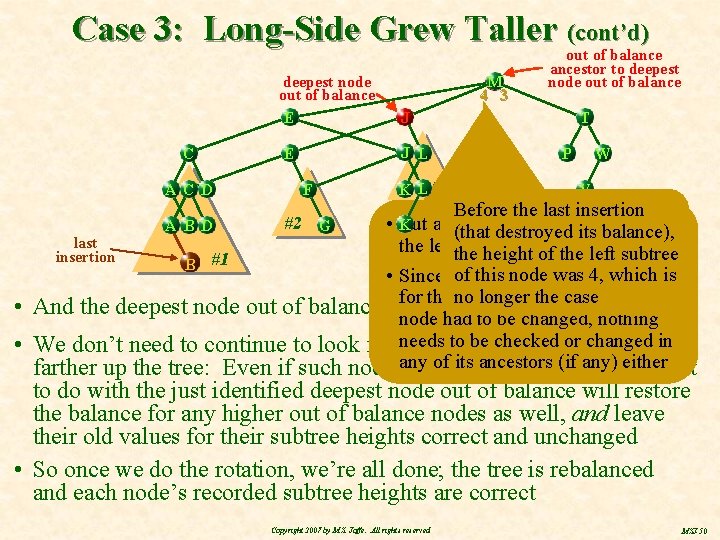

Case 3: Long-Side Grew Taller (cont'd)

[Figure: the example tree, with J marked as the deepest node out of balance and M marked as an ancestor of the deepest node out of balance; subtrees #1, #2, and #3 are labeled.]

• And the deepest node out of balance is the only one we care about!
• We don't need to continue to look for other nodes out of balance farther up the tree: even if such nodes exist, the rotation we're about to do with the just identified deepest node out of balance will restore the balance for any higher out of balance nodes as well, and leave their old values for their subtree heights correct and unchanged
• Consider M, an ancestor of the deepest node out of balance:
  • Before the last insertion (that destroyed its balance), the height of the left subtree of this node was 4, which is temporarily no longer the case
  • But after the rotation, this height for the left subtree is correct again
  • Since neither of the subtree heights for this node had to be changed, nothing needs to be checked or changed in any of its ancestors (if any) either
• So once we do the rotation, we're all done; the tree is rebalanced and each node's recorded subtree heights are correct
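The pop-and-check loop with its three cases might look like the sketch below. Everything named here (`Node`, `left_h`, `right_h`, `insert`, `find_deepest_unbalanced`) is an assumption made for illustration. Recorded subtree heights count levels, so an empty subtree is 0 and a single node is 1. The rotation itself, and the height updates for the nodes it moves, are deliberately left out.

```python
class Node:
    """BST node with two extra fields for its recorded subtree heights."""
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.left_h = 0     # assumption: empty subtree = 0, single node = 1
        self.right_h = 0


def insert(root, key):
    """Plain BST insertion; returns (root, stack of ancestors, new node)."""
    new, stack, cur = Node(key), [], root
    while cur is not None:
        stack.append(cur)
        cur = cur.left if key < cur.key else cur.right
    if not stack:
        return new, stack, new
    parent = stack[-1]
    if key < parent.key:
        parent.left = new
    else:
        parent.right = new
    return root, stack, new


def find_deepest_unbalanced(stack, new):
    """Pop ancestors bottom-up, updating recorded heights.

    Returns the deepest node out of balance, or None if the tree is still HB(1).
    """
    child = new
    while stack:
        node = stack.pop()
        grew_left = node.left is child
        grown = node.left_h if grew_left else node.right_h
        other = node.right_h if grew_left else node.left_h
        if grown > other:
            # Case 3: the long side grew. Heights are left as recorded;
            # the rotation (not shown) must fix the nodes it moves.
            return node
        if grew_left:
            node.left_h += 1
        else:
            node.right_h += 1
        if grown < other:
            return None     # Case 1: the short side grew; nothing above changes
        child = node        # Case 2: equal sides; this node got taller, keep going
    return None
```

The loop pops at most one node per level, so it does O(h) work before either stopping or handing a node to the O(1) rotation.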

Summary of AVL Trees So Far

• The search of, and simple BST insertion into, an AVL tree are, for all practical purposes, the same algorithm: the standard BST search/insertion algorithm, which is O(h)
• Since we only check one node at each level during the insertion, the depth of the stack we use to hold nodes whose subtree heights need to be checked will never exceed the height of the original tree, so adjusting the subtree heights and finding the deepest node out of balance (if any) will be O(h)
• Once we find the deepest node out of balance, doing the necessary rotation(s) to restore the HB(1) balance is just O(1)
• Overall, we know that search, insertion (including re-balancing), and deletion* are all O(h)

* The analysis for deletion is slightly more complex, but the end result is the same: O(h)

Next Step

• We need to know the mathematical relationship between n and h induced by the HB(1) property to determine the "real" O-notation for AVL tree operations
• We know they're all O(h), of course, but what we want is a function f(n) that provides an upper bound for the maximum, or worst case, height h of an HB(1) tree in terms of n, the number of nodes; then we'd know that AVL tree operations are O(f(n))
• For almost perfect binary trees, for example, the almost perfect shape forces h ≤ log₂ n
• HB(1) trees may not be quite that good, but as long as the upper bound is still O(lg n), we'll be happy

Roadmap

• Binary trees in general
• Binary search trees
• AVL trees
  • Definition: An HB(1) binary search tree
  • O(1) rebalancing to restore the HB(1) property after the BST insertion
  • Finding the deepest node out of balance in O(h) time
  • Proof that the HB(1) property means that h is O(lg n)
  • Some engineering implications of AVL tree properties

What's the Worst Case Height for an HB(1) Tree as a Function of Its Number of Nodes?

• We're looking for an upper bound on h for the worst case: the stringiest, most stretched out AVL tree possible, having the maximum height for its number of nodes, n
• We know that with no shape constraints at all, all we can say is that h ≤ n-1, which means operations on shape-unconstrained binary search trees could be O(n)
• We know that for an almost perfect binary tree, h = ⌊log₂ n⌋, so h ≤ log₂ n, but we also know that only the search operation is O(h) for an almost perfect tree; rebuilding the almost perfect shape after an insertion (or deletion) could be Ω(n), which is why we're looking at the HB(1) property
• We hope that the HB(1) constraint will allow us to do better than h ≤ n-1, even if it's not as good as the almost perfect h ≤ log₂ n itself
• We don't need an exact equation (one exists, but the math is tougher); as long as h < c·log₂ n for some constant c, we'll be happy, since h will still be O(lg n) and all our O(h) AVL operations will therefore be O(lg n)
• So h < c·log₂ n for HB(1) trees is what we hope to prove

Outline of the Proof

• First, the longest part: we'll derive a lower bound, call it m(h), for the minimum number of nodes in an HB(1) tree as a function of its height, h, so that we know for any HB(1) tree of height h, n ≥ m(h)
• Once we have an m(h) for the minimum number of nodes, we'll just apply its inverse, m⁻¹, to both sides of the inequality (m is increasing, so the inequality is preserved), giving us m⁻¹(n) ≥ m⁻¹(m(h)), or m⁻¹(n) ≥ h, which tells us that h ≤ m⁻¹(n), the upper bound on the height that we're really after
• We've already seen this notion of an "inverse" function
  • For ordinary binary trees, for example, n ≥ h+1 and therefore h ≤ n-1, since adding 1 and subtracting 1 are inverse operations
• Since exponentiation and logarithm are inverse functions and we want m⁻¹(n) to be c·log₂ n, look for an exponential function of h in the n ≥ m(h) lower bound for the number of nodes; when we see that, we'll be very close to the end of our proof
• We already know that all our AVL operations are O(h), so once we get h ≤ m⁻¹(n), we'll know AVL operations are O(m⁻¹(n))

n=m(h), the Worst Case for an HB(1) Tree

• Define m(h) as the minimum number of nodes for an HB(1) tree of height h
  • If an HB(1) tree has fewer than m(h) nodes, it must have height < h
  • Conversely, h is the maximum height for an HB(1) tree with m(h) nodes; if its height is greater than h, it has to have n > m(h) nodes
• For comparison purposes, consider a similar function mᵤ(h) for totally unconstrained binary trees, where, for example, mᵤ(3) = 4
  • An ordinary binary tree of height 3 must have at least 4 nodes; if it has fewer than 4 nodes, it can't have height 3; its height must be less than that
  • Contrariwise, with 4 nodes, it can't get any taller than h=3; if it has h > 3, it has to have more than 4 nodes

Illustration of an m(3) HB(1) Tree

• Here's an HB(1) tree with n=7 nodes and height h=3
• This tree is as "stringy" as the HB(1) property will let it be
• n=7 is the minimum number of nodes for an HB(1) tree with h=3 (proof by animated example ;-)
  • There's no way to eliminate any node (to reduce n from 7 to 6) and still keep this tree an HB(1) tree of height 3
  • Remove an interior node and it's not even a binary tree any more
  • So let's look at trying to eliminate some leaf node:
    • Remove one leaf … and a node becomes out of balance
    • Remove another leaf … and the same node becomes out of balance again
    • Remove the remaining leaf … and although the tree is still HB(1), it no longer has height 3
• h=3 is the maximum (worst case) height for an HB(1) tree with n=7
  • Contrariwise, there's no way to rearrange 7 nodes to gain extra height and still keep every node HB(1)
  • To keep n=7 but increase the height, some existing node would have to be moved to a new level underneath the currently deepest node
  • Again, moving an interior node destroys the binary tree completely, so let's look only at the other leaves:
    • Try moving one leaf … and a node becomes out of balance
    • Move another leaf instead … and three nodes become out of balance
• So for HB(1) trees, m(3) = 7

The HB(1) "Spring"

• The HB(1) property is thus like a spring that resists being stretched too far
  • Without the spring, we could "stretch" 7 nodes out to a worst case height of 6
  • But the HB(1) spring will prevent any attempt to stretch 7 nodes out beyond a height of 3
• We can add more nodes to this HB(1) tree with m(3) nodes, but until we've added enough to get to n ≥ m(4), there's still no way to get it to stretch out to h = 4 while remaining HB(1)
• In other words, not just for n = m(h), but for m(h) ≤ n < m(h+1), h is the greatest possible height for an HB(1) tree of n nodes
• Now we need to know quantitatively just how strong the HB(1) spring is: As a function of n, how small can it keep h so that m(h) ≤ n < m(h+1)?

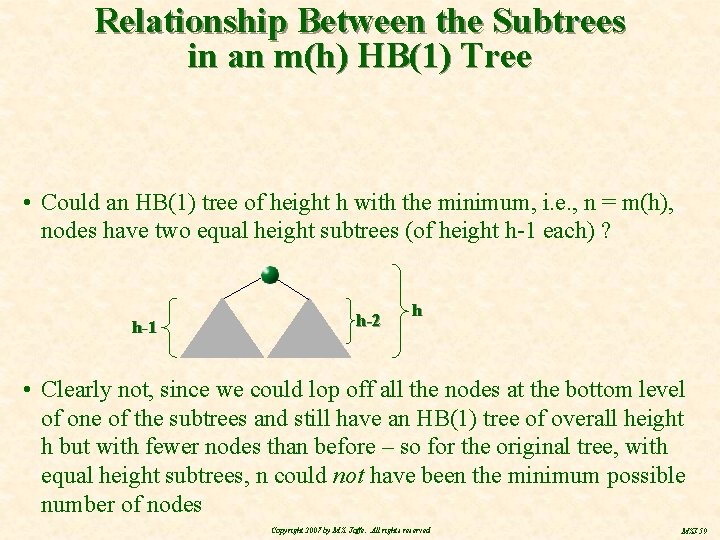

Relationship Between the Subtrees in an m(h) HB(1) Tree

• Could an HB(1) tree of height h with the minimum number of nodes, i.e., n = m(h), have two equal height subtrees (of height h-1 each)?
• Clearly not, since we could lop off all the nodes at the bottom level of one of the subtrees and still have an HB(1) tree of overall height h but with fewer nodes than before; so for the original tree, with equal height subtrees, n could not have been the minimum possible number of nodes

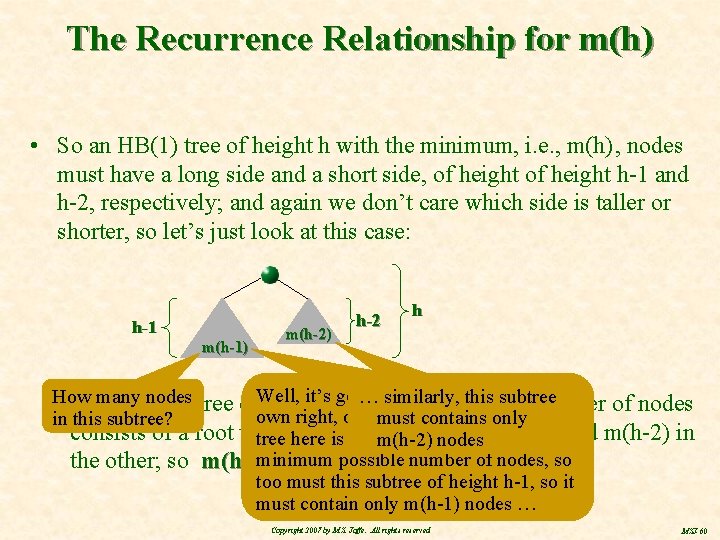

The Recurrence Relationship for m(h)

[Figure: a tree of height h whose root has one subtree of height h-1 containing m(h-1) nodes and another subtree of height h-2 containing m(h-2) nodes.]

• So an HB(1) tree of height h with the minimum number of nodes, i.e., m(h), must have a long side and a short side, of height h-1 and h-2 respectively; and again we don't care which side is taller or shorter, so let's just look at this one case
• How many nodes are in the taller subtree? Well, it's got to be an HB(1) tree in its own right, of course, and if the overall tree is to have the absolute minimum possible number of nodes, so too must this subtree of height h-1, so it must contain only m(h-1) nodes
• Similarly, the shorter subtree, of height h-2, must contain only m(h-2) nodes
• So an HB(1) tree of height h with the minimum number of nodes consists of a root with m(h-1) nodes in one subtree and m(h-2) in the other; so m(h) = 1 + m(h-1) + m(h-2)
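The recurrence is easy to evaluate directly. The sketch below is illustrative only: the function name `m` mirrors the slides' notation, the base cases m(0) = 1 and m(1) = 2 are the two obvious starting cases, and the memoization via `functools.lru_cache` is an implementation choice made here.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def m(h: int) -> int:
    """Minimum number of nodes in an HB(1) tree of height h."""
    if h == 0:
        return 1    # a single node
    if h == 1:
        return 2    # a root with one child
    # a root, plus a minimal subtree of height h-1, plus a minimal one of height h-2
    return 1 + m(h - 1) + m(h - 2)


if __name__ == "__main__":
    for h in range(8):
        print(h, m(h))
```

Calling `m(3)` returns 7, matching the seven-node illustration of a minimal HB(1) tree of height 3.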

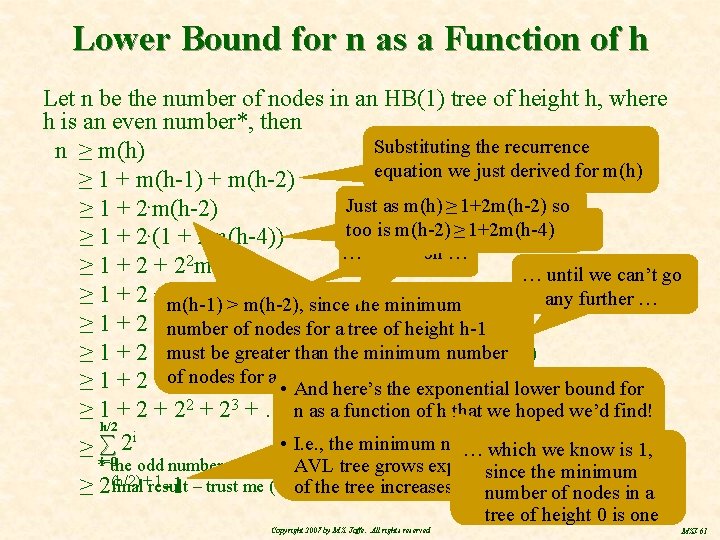

Lower Bound for n as a Function of h

Let n be the number of nodes in an HB(1) tree of height h, where h is an even number*. Then:

$$
\begin{aligned}
n &\ge m(h) \\
  &= 1 + m(h-1) + m(h-2) \\
  &\ge 1 + 2\,m(h-2) \\
  &\ge 1 + 2\,\bigl(1 + 2\,m(h-4)\bigr) = 1 + 2 + 2^2\,m(h-4) \\
  &\ge 1 + 2 + 2^2 + 2^3\,m(h-6) \\
  &\;\;\vdots \\
  &\ge 1 + 2 + 2^2 + \dots + 2^{k-1} + 2^k\,m(h-2k) \\
  &\;\;\vdots \\
  &\ge 1 + 2 + 2^2 + \dots + 2^{h/2-1} + 2^{h/2}\,m(0) \\
  &= 1 + 2 + 2^2 + \dots + 2^{h/2} \\
  &= \sum_{i=0}^{h/2} 2^i = 2^{(h/2)+1} - 1
\end{aligned}
$$

• The second line substitutes the recurrence equation we just derived for m(h)
• The third line uses m(h-1) > m(h-2), since the minimum number of nodes for a tree of height h-1 must be greater than the minimum number of nodes for a (shorter) tree of height h-2
• Just as m(h) ≥ 1 + 2·m(h-2), so too is m(h-2) ≥ 1 + 2·m(h-4), and m(h-4) ≥ 1 + 2·m(h-6), and so on, until we can't go any further since we've reached m(0), which we know is 1, since the minimum number of nodes in a tree of height 0 is one
• And here's the exponential lower bound for n as a function of h that we hoped we'd find! I.e., the minimum number of nodes in an AVL tree grows exponentially as the height of the tree increases

* The odd number case is slightly more complicated but it doesn't alter the final result; trust me (or do the math yourself ;-)

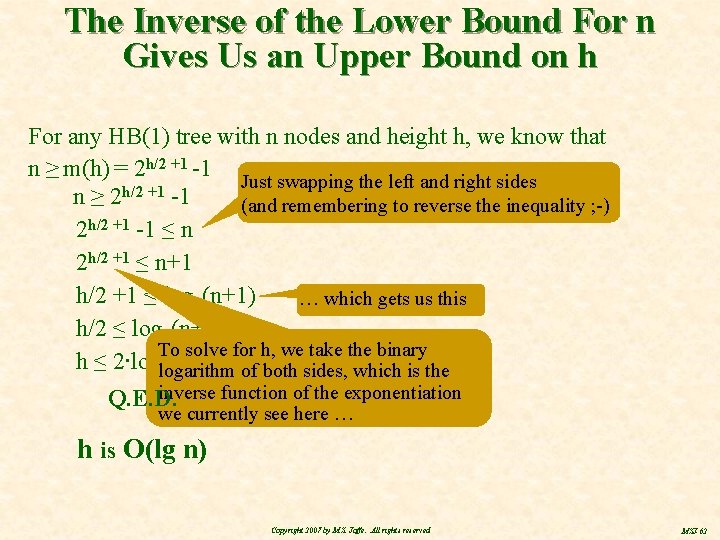

The Inverse of the Lower Bound For n Gives Us an Upper Bound on h

For any HB(1) tree with n nodes and height h, we know that

$$
n \ge m(h) \ge 2^{(h/2)+1} - 1
$$

Just swapping the left and right sides (and remembering to reverse the inequality ;-) gives

$$
2^{(h/2)+1} - 1 \le n \quad\Longrightarrow\quad 2^{(h/2)+1} \le n + 1
$$

To solve for h, we take the binary logarithm of both sides, which is the inverse function of the exponentiation we currently see here:

$$
\begin{aligned}
\tfrac{h}{2} + 1 &\le \log_2(n+1) \\
\tfrac{h}{2} &\le \log_2(n+1) - 1 \\
h &\le 2\log_2(n+1) - 2
\end{aligned}
$$

Q.E.D.: h is O(lg n)
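The two bounds can be spot-checked numerically. This is a sanity check under stated assumptions, not a proof: the helper names `m`, `lower_bound_holds`, and `height_bound` are invented here, m(h) is computed iteratively from m(0) = 1 and m(1) = 2, and the exponential bound is tested in an exact integer form to avoid floating-point rounding.

```python
import math


def m(h: int) -> int:
    """Minimum number of nodes in an HB(1) tree of height h (iterative form)."""
    a, b = 1, 2                 # m(0), m(1)
    for _ in range(h):
        a, b = b, 1 + a + b     # slide the window: m(k), m(k+1) -> m(k+1), m(k+2)
    return a


def lower_bound_holds(h: int) -> bool:
    """Check m(h) >= 2^(h/2 + 1) - 1 exactly.

    Squaring both sides of m(h) + 1 >= 2^(h/2 + 1) gives the
    integer-only test (m(h) + 1)^2 >= 2^(h + 2).
    """
    return (m(h) + 1) ** 2 >= 2 ** (h + 2)


def height_bound(n: int) -> float:
    """The upper bound on h derived on the slide: 2*log2(n+1) - 2."""
    return 2 * math.log2(n + 1) - 2


if __name__ == "__main__":
    for h in range(0, 20, 2):
        print(h, m(h), lower_bound_holds(h), round(height_bound(m(h)), 2))
```

In this check the bound also holds for odd h, which is consistent with the footnote that the odd case does not alter the final result.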

We're Done! (Almost ;-)

Since the AVL tree operations we looked at were all O(h) and we now know that h is O(lg n), we have our dynamic data structure, the HB(1) binary search tree, or AVL tree, which can be searched, inserted into, and deleted from, all in O(lg n) time

Roadmap

• Binary trees in general
• Binary search trees
• AVL trees
  • Definition: An HB(1) binary search tree
  • O(1) rebalancing to restore the HB(1) property after the BST insertion
  • Finding the deepest node out of balance in O(h) time
  • Proof that the HB(1) property means that h is O(lg n)
  • Some engineering implications of AVL tree properties

Small Ratio Between Worst and Best Case Performance for AVL Trees

• Since we've just proved that for an HB(1) tree h ≤ 2·log₂(n+1) - 2, even in the worst case an HB(1) tree will never be more than twice the height of a best case HB(1) tree with the same number of nodes, namely ⌊log₂ n⌋
• It's actually possible to get a tighter upper bound for the height of an HB(1) tree and show that h < 1.44…·log₂ n + c, so in reality the worst case height penalty for relaxing the almost perfect shape constraint to an HB(1) constraint is under 45%, but:
  • The math to prove the tighter bound is more complex than what we did here (think Fibonacci numbers and the golden ratio)
  • From the standpoint of complexity classes, there's no real point to the extra work; O(lg n) is O(lg n), the constants don't matter
• But remember the tighter upper bound (1.44…) and let's look at the relationship between height and number of nodes in tabular numeric form and see what it means for software systems engineering
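The 1.44… constant can be illustrated numerically, though not proved this way. The sketch assumes the recurrence m(h) = 1 + m(h-1) + m(h-2) with m(0) = 1 and m(1) = 2, and uses the known identity that m(h) + 1 follows the Fibonacci recurrence, which is where the golden ratio comes in. The names `m`, `height_ratio`, `PHI`, and `LIMIT` are invented here.

```python
import math


def m(h: int) -> int:
    """Minimum number of nodes in an HB(1) tree of height h (iterative form)."""
    a, b = 1, 2                 # m(0), m(1)
    for _ in range(h):
        a, b = b, 1 + a + b
    return a


def height_ratio(h: int) -> float:
    """Worst case height divided by log2 of the node count, for n = m(h), h >= 1."""
    return h / math.log2(m(h))  # math.log2 accepts arbitrarily large Python ints


PHI = (1 + math.sqrt(5)) / 2    # the golden ratio
LIMIT = 1 / math.log2(PHI)      # roughly 1.4404, the constant quoted as 1.44...


if __name__ == "__main__":
    for h in (10, 100, 1000):
        print(h, height_ratio(h))
    print("limit", LIMIT)
```

In this experiment the ratio creeps up toward the limit from below as h grows, which fits the claim that the worst case height penalty stays under 45%.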

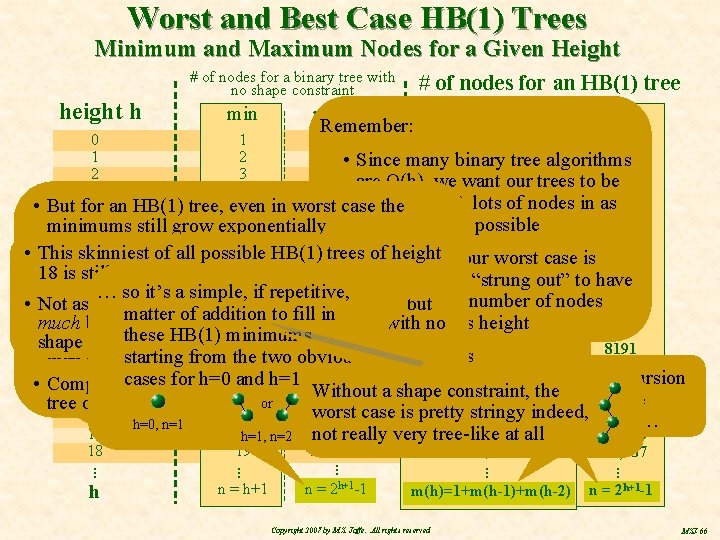

Worst and Best Case HB(1) Trees: Minimum and Maximum Nodes for a Given Height

| h | min # of nodes, no shape constraint (n = h+1) | min # of nodes, HB(1) tree (m(h)) | max # of nodes, any binary tree or HB(1) tree (n = 2^(h+1) - 1) |
|---|---|---|---|
| 0 | 1 | 1 | 1 |
| 1 | 2 | 2 | 3 |
| 2 | 3 | 4 | 7 |
| 3 | 4 | 7 | 15 |
| 4 | 5 | 12 | 31 |
| 5 | 6 | 20 | 63 |
| 6 | 7 | 33 | 127 |
| 7 | 8 | 54 | 255 |
| 8 | 9 | 88 | 511 |
| 9 | 10 | 143 | 1023 |
| 10 | 11 | 232 | 2047 |
| 11 | 12 | 376 | 4095 |
| 12 | 13 | 609 | 8191 |
| 13 | 14 | 986 | 16,383 |
| 14 | 15 | 1596 | 32,767 |
| 15 | 16 | 2583 | 65,535 |
| 16 | 17 | 4180 | 131,071 |
| 17 | 18 | 6764 | 262,143 |
| 18 | 19 | 10,945 | 524,287 |

• Remember: Since many binary tree algorithms are O(h), we want our trees to be very "bushy", with lots of nodes in as little height as possible
• Contrariwise, our worst case is when a tree is "strung out" to have the minimum number of nodes possible for its height
• The maximum, n = 2^(h+1) - 1, is the relationship we derived earlier, the maximum for any binary tree at all: it is the number of nodes in a perfect binary tree. Since a perfect binary tree is HB(1), it is also the max for HB(1) trees
• Without any shape constraint, the unconstrained tree's worst case, n = h+1, is pretty stringy indeed, not really very tree-like at all
• The HB(1) minimums come from the recursion we derived earlier, m(h) = 1 + m(h-1) + m(h-2), starting from the two obvious cases, h=0, n=1 and h=1, n=2; so it's a simple, if repetitive, matter of addition to fill in these minimums
• But for an HB(1) tree, even in the worst case the minimums still grow exponentially
• Our old friend the HB(1) spring keeps the tree from getting very strung out, forcing it to have lots of nodes in relationship to its height: not as many as a perfect binary tree, but lots, many more than if there were no HB(1) constraint
• Compare the minimum of n=10,945 for an HB(1) tree of h=18 with n=19 for an unconstrained tree
• The skinniest of all possible HB(1) trees of height 18 is still pretty bushy: not as bushy as an almost perfect binary tree, but nonetheless much bushier than the skinniest binary tree with no shape constraints whatsoever

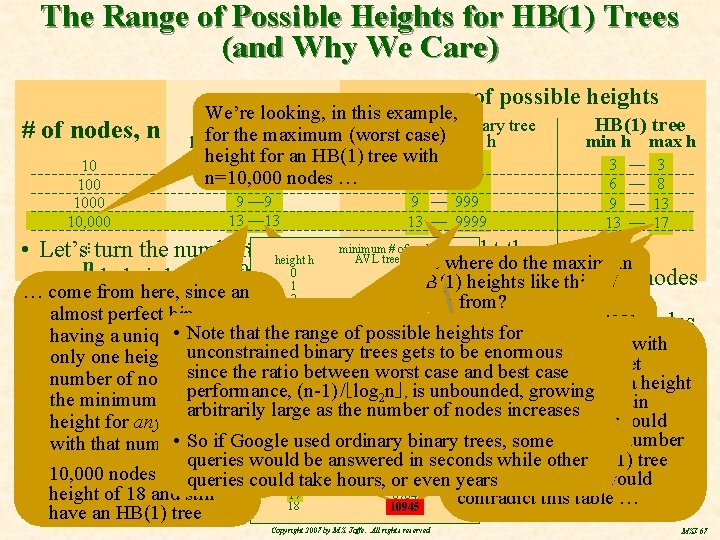

The Range of Possible Heights for HB(1) Trees (and Why We Care)

• Let's turn the numbers around so as to highlight the range of possible heights an HB(1) tree can have for a given number of nodes
• Let's look at how the range of heights varies as the number of nodes increases by factors of 10

| # of nodes, n | unconstrained binary tree: min h to max h (⌊log₂ n⌋ to n-1) | almost perfect binary tree: h (⌊log₂ n⌋) | HB(1) tree: min h to max h |
|---|---|---|---|
| 10 | 3 to 9 | 3 | 3 to 3 |
| 100 | 6 to 99 | 6 | 6 to 8 |
| 1000 | 9 to 999 | 9 | 9 to 13 |
| 10,000 | 13 to 9999 | 13 | 13 to 17 |

• It's pretty easy to see where most of these numbers come from:
  • An almost perfect binary tree, having a unique shape, has only one height for a given number of nodes, and it is always the minimum height for any binary tree with that number of nodes
  • The minimum heights for unconstrained trees and for HB(1) trees come from the same place, since an almost perfect binary tree is also an unconstrained binary tree and also an HB(1) tree
  • The unconstrained maximums come from the super stretched out shape with only 1 node per level, so h = n-1
• But where do the maximum heights for HB(1) trees come from? There is an exact formula, but it's complicated and so is its derivation. Instead, let's just do some thinking based on an extract from the table of minimum node counts m(h) on the previous slide, where m(17) = 6764 and m(18) = 10,945
  • We're looking, in this example, for the maximum (worst case) height for an HB(1) tree with n=10,000 nodes
  • Note that n=10,000 is between the two entries for heights 17 and 18
  • So the worst case for an HB(1) tree with 10,000 nodes must be h=17, not h=18; the HB(1) spring won't let us stretch 10,000 nodes out to a height of 18 and still have an HB(1) tree
  • If an HB(1) tree with 10,000 nodes could get stretched out to a height of h=18 and still remain HB(1), then 10,945 would not be the minimum number of nodes for an HB(1) tree with h=18, which would contradict the table
• The HB(1) spring is doing its job; HB(1) trees stay nice and bushy, with lots of nodes crammed into not much more than the bare minimum possible height
• Note that the range of possible heights for unconstrained binary trees gets to be enormous, since the ratio between worst case and best case, (n-1) / ⌊log₂ n⌋, is unbounded, growing arbitrarily large as the number of nodes increases
• But, as noted earlier (although we didn't prove it), the worst case height of an HB(1) tree is always less than 45% greater than its best case; so an AVL tree will always provide pretty close to optimum performance, regardless of its topology or how many nodes it contains
• Data structures whose best case performance is fantastic but whose worst case performance, even if unlikely, is really awful usually can't be used in real-time systems, which certainly includes systems with a significant amount of human-computer interaction
  • So if Google used ordinary binary trees, some queries would be answered in seconds while other queries could take hours, or even years
• Since AVL trees not only offer really good, O(lg n), performance, but do so with very little variation between best case and worst case, they are an important asset for systems engineers concerned with consistently predictable systems performance
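The "between two table entries" argument translates into a short search. This sketch is an illustration under stated assumptions: `min_height`, `max_unconstrained_height`, and `max_hb1_height` are names invented here, and the HB(1) maximum is taken to be the largest h with m(h) ≤ n, using m(0) = 1, m(1) = 2, and m(h) = 1 + m(h-1) + m(h-2).

```python
def min_height(n: int) -> int:
    """Height of an almost perfect binary tree with n >= 1 nodes: floor(log2 n)."""
    return n.bit_length() - 1


def max_unconstrained_height(n: int) -> int:
    """One node per level: the most stretched out binary tree possible."""
    return n - 1


def max_hb1_height(n: int) -> int:
    """Largest h such that m(h) <= n, i.e. the worst case HB(1) height for n nodes."""
    if n < 1:
        raise ValueError("n must be at least 1")
    h = 0
    prev, cur = 1, 2            # invariant: prev = m(h), cur = m(h + 1)
    while cur <= n:             # m(h + 1) still fits, so height h + 1 is reachable
        prev, cur = cur, 1 + cur + prev
        h += 1
    return h


if __name__ == "__main__":
    for n in (10, 100, 1000, 10_000):
        print(n, min_height(n), max_unconstrained_height(n), max_hb1_height(n))
```

For n = 10,000 the loop stops at h = 17 because m(18) = 10,945 is already larger than n, which is the same reasoning the slide applies to the table.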

Now We're Done!

Having shown that binary trees, particularly AVL trees, are the most beautiful data structures in the universe, and practical too. I doubt that they are much used by Google, for which there are actually even better structures available (not as beautiful though ;-), but AVL trees are certainly heavily used in lots of other places
