BIG DATA TECHNOLOGIES LECTURE 3: ALGORITHM PARALLELIZATION
Assoc. Prof. Marc FRÎNCU, Ph.D. Habil., marc.frincu@e-uvt.ro

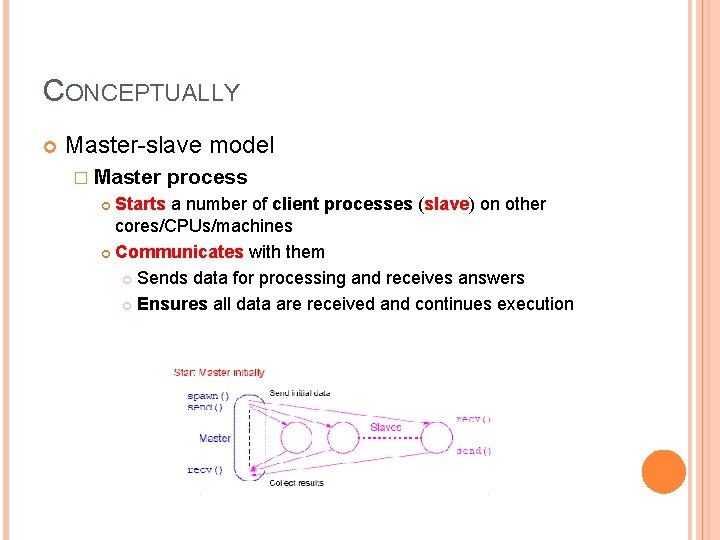

CONCEPTUALLY
- Master-slave model
- The master process:
  - Starts a number of client (slave) processes on other cores/CPUs/machines
  - Communicates with them
  - Sends data for processing and receives answers
  - Ensures all data are received and continues execution

CAN WE PARALLELIZE THE ALGORITHM?
- Code
  - Understand the sequential code (if it exists)
  - Identify critical points
    - Where are the computationally heavy lines of code? Use profiling
    - Parallelize code only where intense computation is found (Amdahl's law)
  - Where are the bottlenecks? Are there areas of slow code?
    - I/O
  - Use parallel optimized code
    - IBM ESSL, Intel MKL, AMD ACML, etc.
  - If many parallel versions of the same algorithm exist, ALL must be studied!
- Data
  - Any data dependencies? Can they be removed?
  - Can data be partitioned?
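Amdahl's law, mentioned on the slide above, bounds the speedup by the fraction of the run time that stays sequential. The sketch below is only an illustration: the function name and the 90% figure are assumptions chosen for the example, not values from the lecture.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of the run time can be parallelized."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Assumed example: a program that is 90% parallelizable, on a growing number of workers.
for n in (1, 2, 10, 100, 1000):
    print(f"{n:5d} workers -> speedup {amdahl_speedup(0.9, n):.2f}")
```

Under this assumption the speedup never reaches 10, however many workers are added, which is why profiling should come before parallelizing.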

EXAMPLES
- Potential energy of each molecule; find the minimum-energy configuration
  - Each computation can be done in parallel
  - The search for the minimum energy can also be parallelized (parallel search)
- Fibonacci
  - F(n) depends on F(n-1) and F(n-2)
  - Cannot be parallelized as written: each term needs the two terms before it
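The minimum-energy example can be sketched as a chunked parallel search. Everything named here is hypothetical: `potential_energy` is a toy stand-in for the real per-molecule computation, and threads are used only to keep the sketch self-contained (in CPython they will not speed up CPU-bound work; a process pool or MPI would be used in practice).

```python
from concurrent.futures import ThreadPoolExecutor


def potential_energy(configuration: float) -> float:
    """Hypothetical stand-in for an expensive energy computation."""
    return (configuration - 3.0) ** 2 + 1.0


def chunk_minimum(chunk):
    """Evaluate every configuration in one chunk; return its (energy, configuration) minimum."""
    return min((potential_energy(c), c) for c in chunk)


def parallel_minimum(configurations, workers=4):
    """Split the configurations into chunks, search each chunk in parallel, then combine."""
    size = max(1, (len(configurations) + workers - 1) // workers)
    chunks = [configurations[i:i + size] for i in range(0, len(configurations), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_minima = list(pool.map(chunk_minimum, chunks))
    return min(partial_minima)  # final reduction over the per-chunk minima


energy, best = parallel_minimum([x * 0.5 for x in range(20)])
print(f"minimum energy {energy} at configuration {best}")
```

Both steps from the slide appear: the energy evaluations are independent, and the search is a reduction over per-chunk minima.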

AUTOMATIC VS. MANUAL PARALLELIZATION
- Parallelizing code is complex, iterative and error prone; it takes time to find an efficient solution
- Some compilers can parallelize code (pre-processing)
  - Automated
    - The compiler identifies parallel code sections as well as bottleneck areas
    - Offers an analysis of the benefits of parallelization
    - The targets are the iterative statements (do, for)
  - Programmer oriented
    - Uses compilation directives and execution flags
    - The programmer is in charge of finding the parallel sections
    - Mostly used on shared memory devices
    - OpenMP
- BUT
  - Performance can degrade
  - Less flexible than manual parallelization
  - Limited to certain code sections (loops)
  - Not all sequential code is parallelizable as is

DESIGNING PARALLEL ALGORITHMS
- Partitioning
- Communication
- Synchronization
- Data dependencies
- Load balancing
- Granularity
- I/O
- Debugging
- Performance analysis and optimization

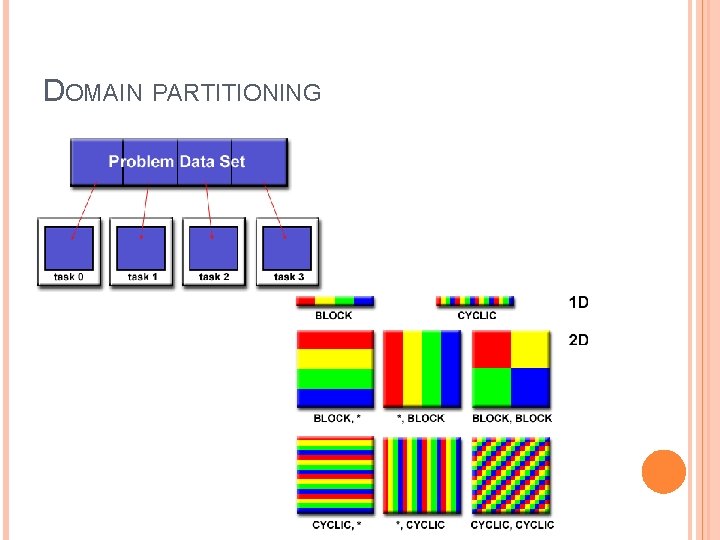

PROBLEM AND DATA PARTITIONING
- The first step in any parallel problem is to partition it so that it can be handled by multiple parallel processes
  - Data partitioning (domain)
  - Algorithm partitioning (functional)

DOMAIN PARTITIONING

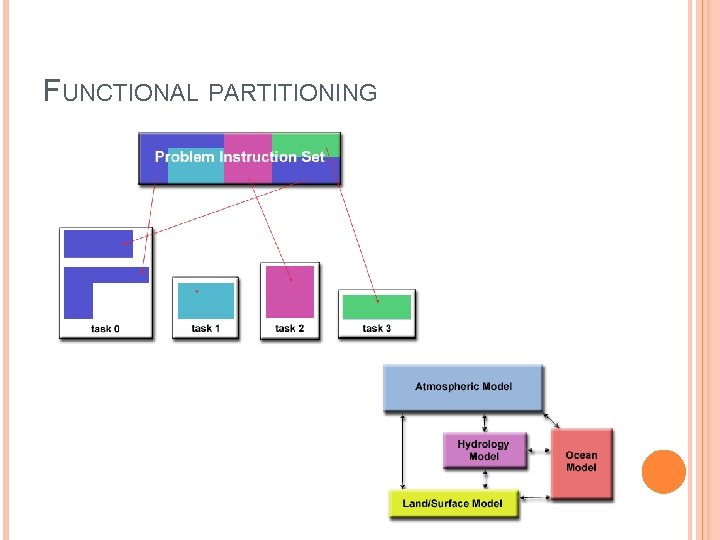

FUNCTIONAL PARTITIONING

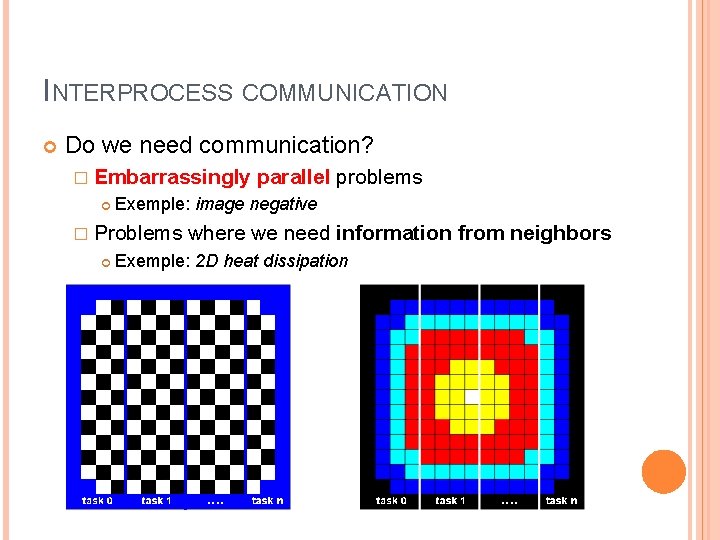

INTERPROCESS COMMUNICATION
- Do we need communication?
  - Embarrassingly parallel problems need none
    - Example: image negative
  - Problems where we need information from neighbors
    - Example: 2D heat dissipation
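The image negative is embarrassingly parallel because every pixel is transformed without looking at any other pixel. A minimal sketch, assuming an 8-bit grayscale image stored as a list of rows (the representation and function names are illustrative choices):

```python
from concurrent.futures import ThreadPoolExecutor


def negate_row(row):
    """Negate one row of 8-bit pixels; needs no data from any other row."""
    return [255 - pixel for pixel in row]


def negative(image, workers=4):
    """Process the rows independently; no communication between tasks is required."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(negate_row, image))


print(negative([[0, 255], [10, 245]]))
```

A heat-dissipation step would not fit this pattern, because each cell needs the current values of its neighbors, which may live on another task.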

INTERPROCESS COMMUNICATION
- Communication overhead
  - Resources are used to store and send data
  - Communication requires synchronization: a process will wait for the other to send data
  - Network limitations
- Latency vs. bandwidth
  - Latency: time needed to send information from point A to point B (microseconds)
  - Bandwidth: quantity of information sent per time unit (MB/s, GB/s)
  - Many small messages make latency dominant: pack them together into a single message
- Communication visibility
  - In MPI, messages are visible and under the programmer's control
  - The data-parallel model hides communication details (MapReduce)
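The advice to pack small messages follows from a simple cost model in which every message pays the latency once, plus its size divided by the bandwidth. The numbers below (50 microseconds, 1 GB/s) are assumed for illustration and do not describe any particular network.

```python
def transfer_time(message_bytes, latency_s, bandwidth_bytes_per_s):
    """Simple cost model: latency once per message, plus size / bandwidth."""
    return latency_s + message_bytes / bandwidth_bytes_per_s


def total_time(n_messages, bytes_per_message, latency_s, bandwidth_bytes_per_s):
    """Total time to send n_messages of equal size one after another."""
    return n_messages * transfer_time(bytes_per_message, latency_s, bandwidth_bytes_per_s)


LATENCY = 50e-6    # assumed: 50 microseconds per message
BANDWIDTH = 1e9    # assumed: 1 GB/s

many_small = total_time(10_000, 100, LATENCY, BANDWIDTH)  # 10,000 messages of 100 bytes
one_large = total_time(1, 1_000_000, LATENCY, BANDWIDTH)  # the same 1 MB as one message
print(f"many small messages: {many_small * 1e3:.2f} ms")
print(f"one packed message:  {one_large * 1e3:.2f} ms")
```

In this model the same megabyte takes several hundred times longer as small messages, almost all of it latency.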

INTERPROCESS COMMUNICATION
- Synchronous vs. asynchronous
  - Synchronous communication blocks the code: one task must wait for the other to finish and send its data
  - Asynchronous execution assumes that a task executes independently of the others (non-blocking communication)

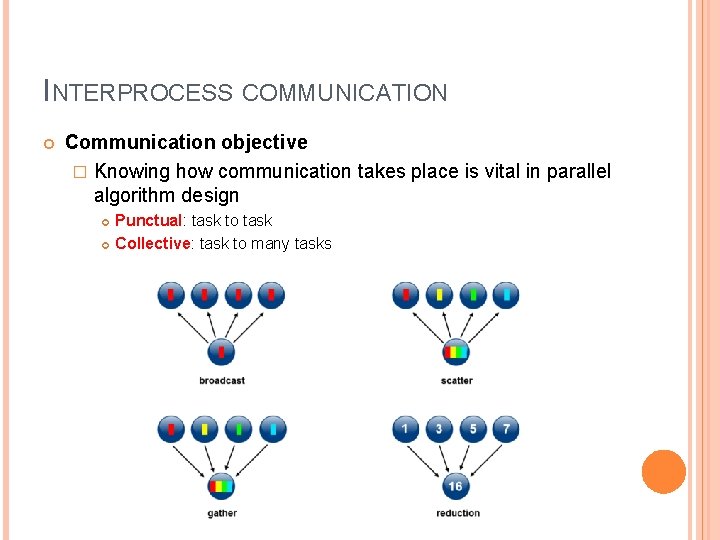

INTERPROCESS COMMUNICATION
- Communication scope
  - Knowing how communication takes place is vital in parallel algorithm design
  - Point-to-point: task to task
  - Collective: task to many tasks

INTERPROCESS COMMUNICATION
- Efficiency of communication
  - An MPI implementation may perform differently depending on the hardware architecture used
  - Asynchronous communication may improve the execution of the parallel algorithm
  - Type and properties of the network
  - Overhead and complexity

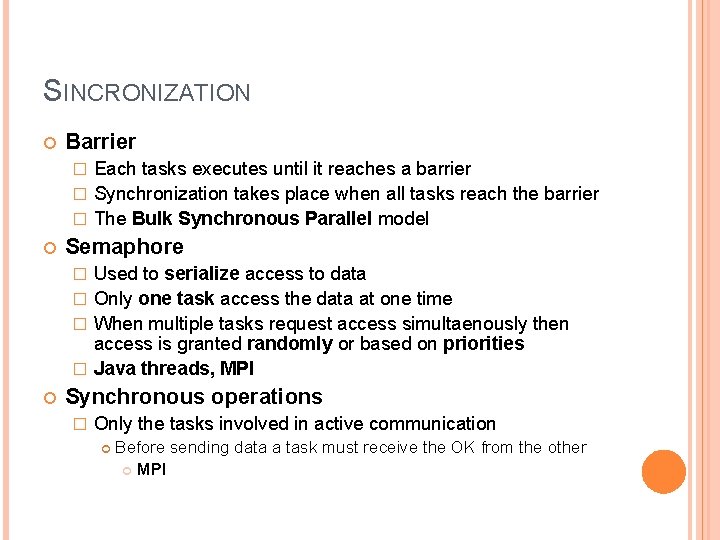

SYNCHRONIZATION
- Barrier
  - Each task executes until it reaches a barrier
  - Synchronization takes place when all tasks reach the barrier
  - The Bulk Synchronous Parallel model
- Semaphore
  - Used to serialize access to data
  - Only one task accesses the data at a time
  - When multiple tasks request access simultaneously, access is granted randomly or based on priorities
  - Java threads, MPI
- Synchronous operations
  - Involve only the tasks taking part in the communication
  - Before sending data, a task must receive the OK from the other
  - MPI
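The barrier and the semaphore can be sketched with Python's standard `threading` module. This is an assumed illustration rather than code from the lecture; a `Lock` plays the role of a binary semaphore, and the task bodies are toy computations.

```python
import threading

N_TASKS = 4
barrier = threading.Barrier(N_TASKS)  # all tasks must arrive before any continues
lock = threading.Lock()               # binary semaphore protecting the shared total
partial = [0] * N_TASKS
total = 0
seen_by_task = [None] * N_TASKS


def task(task_id):
    global total
    # Phase 1: local computation; each task writes only its own slot.
    partial[task_id] = sum(range(task_id * 100, (task_id + 1) * 100))
    barrier.wait()                    # synchronization point between the two phases
    # Phase 2: every partial result is guaranteed to be ready here.
    seen_by_task[task_id] = sum(partial)
    with lock:                        # only one task updates the total at a time
        total += partial[task_id]


threads = [threading.Thread(target=task, args=(i,)) for i in range(N_TASKS)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(total, seen_by_task)
```

Without the barrier a task could read `partial` before the others had written it; without the lock, concurrent updates to `total` could be lost.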

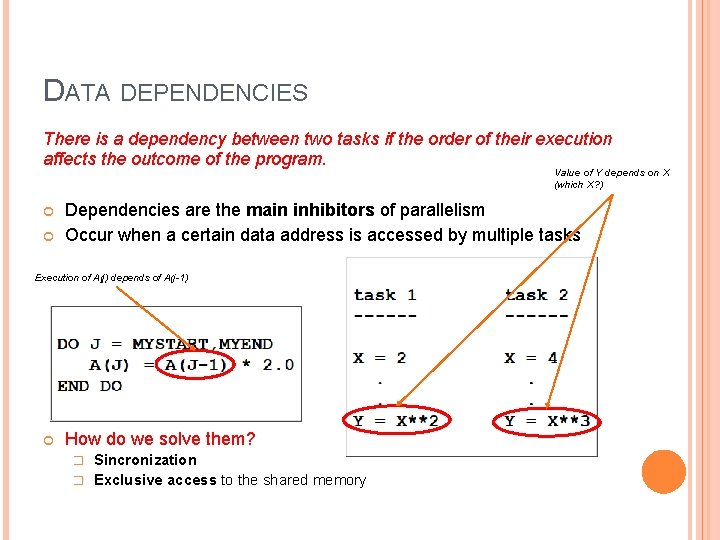

DATA DEPENDENCIES
- There is a dependency between two tasks if the order of their execution affects the outcome of the program
  - The value of Y depends on X (which X?)
  - The execution of A(j) depends on A(j-1)
- Dependencies are the main inhibitors of parallelism
  - They occur when a certain data address is accessed by multiple tasks
- How do we solve them?
  - Synchronization
  - Exclusive access to the shared memory
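The A(j) / A(j-1) case is a loop-carried dependency. The sketch below simulates, sequentially and with hypothetical helper names, what happens when tasks process chunks of such a loop without exchanging any data.

```python
def independent_update(values):
    """b[j] depends only on a[j]: iterations can run in any order, on any task."""
    return [2 * v for v in values]


def dependent_update(values):
    """a[j] = a[j-1] + a[j]: each iteration needs the result of the previous one."""
    result = list(values)
    for j in range(1, len(result)):
        result[j] = result[j - 1] + result[j]
    return result


def naive_chunked(update, values, n_chunks):
    """Simulate tasks that each process one chunk without talking to the others."""
    size = (len(values) + n_chunks - 1) // n_chunks
    out = []
    for start in range(0, len(values), size):
        out.extend(update(values[start:start + size]))
    return out


data = [1, 2, 3, 4, 5, 6]
# No dependency: splitting the work gives the same answer as the sequential run.
print(naive_chunked(independent_update, data, 2) == independent_update(data))
# Loop-carried dependency: the second chunk misses the running value from the first.
print(naive_chunked(dependent_update, data, 2) == dependent_update(data))
```

The second comparison fails because the task handling the second chunk never receives the last value computed by the first, which is exactly the information that communication or synchronization would have to supply.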

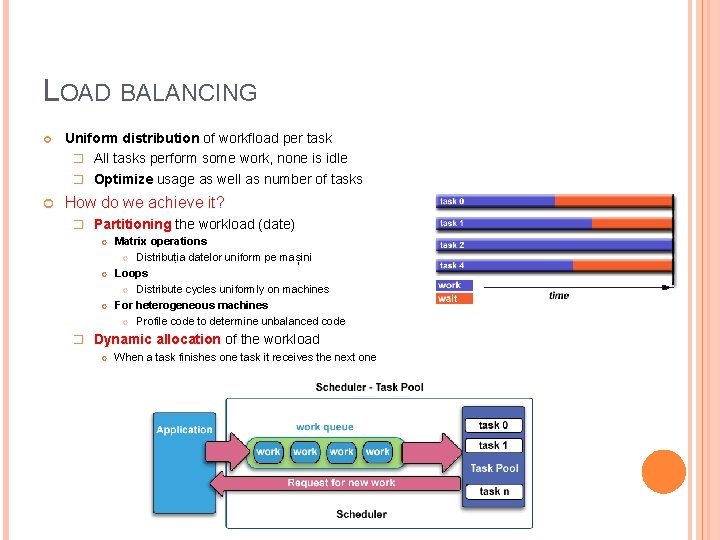

LOAD BALANCING
- Uniform distribution of the workload per task
  - All tasks perform some work, none is idle
  - Optimizes usage as well as the number of tasks
- How do we achieve it?
  - Partition the workload (data)
    - Matrix operations: distribute the data uniformly across machines
    - Loops: distribute the iterations uniformly across machines
    - For heterogeneous machines: profile the code to find imbalances
  - Dynamic allocation of the workload
    - When a worker finishes one task it receives the next one
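Dynamic allocation is commonly implemented with a shared work queue. The following is a minimal sketch under assumed names and toy task costs; it only shows the scheduling pattern, not a tuned implementation.

```python
import queue
import threading


def run_dynamic(task_costs, n_workers):
    """Workers pull the next task as soon as they finish the previous one."""
    tasks = queue.Queue()
    for task_id, cost in enumerate(task_costs):
        tasks.put((task_id, cost))

    done_by = [[] for _ in range(n_workers)]  # task ids completed by each worker

    def worker(worker_id):
        while True:
            try:
                task_id, cost = tasks.get_nowait()
            except queue.Empty:
                return                         # no work left
            sum(range(cost))                   # stand-in for real work of varying cost
            done_by[worker_id].append(task_id)

    threads = [threading.Thread(target=worker, args=(w,)) for w in range(n_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return done_by


assignment = run_dynamic([50_000, 10, 10, 10, 50_000, 10, 10, 10], n_workers=3)
print(assignment)
```

Which worker ends up with which task varies from run to run; the point is that a worker stuck on an expensive task does not hold up the cheap ones, which a static split of the list could not guarantee.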

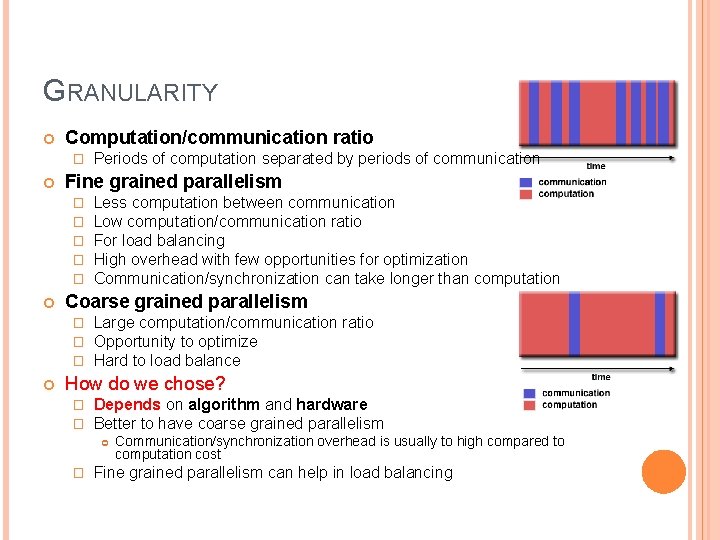

GRANULARITY
- Computation/communication ratio
- Fine-grained parallelism
  - Less computation between communication events
  - Low computation/communication ratio
  - Helps load balancing
  - High overhead with few opportunities for optimization
  - Communication/synchronization can take longer than computation
- Coarse-grained parallelism
  - Periods of computation separated by periods of communication
  - High computation/communication ratio
  - More opportunity to optimize
  - Hard to load balance
- How do we choose?
  - Depends on the algorithm and the hardware
  - Coarse-grained parallelism is usually better: communication/synchronization overhead is usually too high compared to the computation cost
  - Fine-grained parallelism can help with load balancing

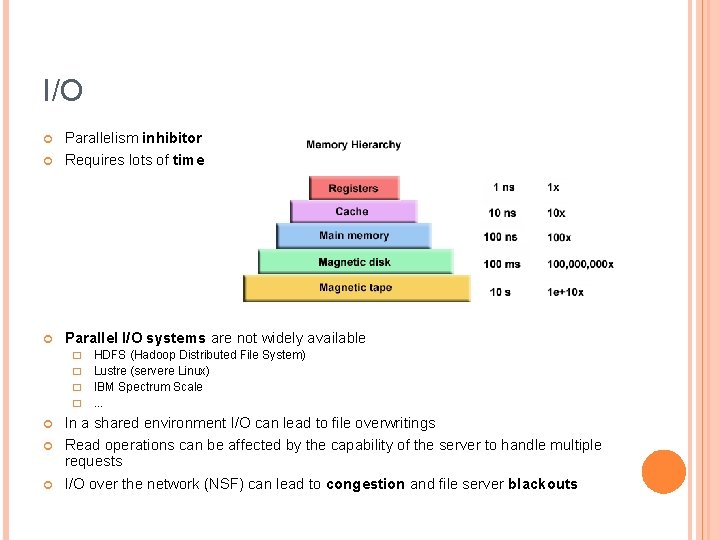

I/O
- Parallelism inhibitor
  - Requires lots of time
- Parallel I/O systems are not widely available
  - HDFS (Hadoop Distributed File System)
  - Lustre (Linux servers)
  - IBM Spectrum Scale
  - ...
- In a shared environment, I/O can lead to files being overwritten
- Read operations can be affected by the server's capacity to handle multiple requests
- I/O over the network (NFS) can lead to congestion and file server blackouts

DEBUGGING
- Can be costly if code complexity is high
- Many applications
  - TotalView
  - DDT
  - Inspector (Intel)

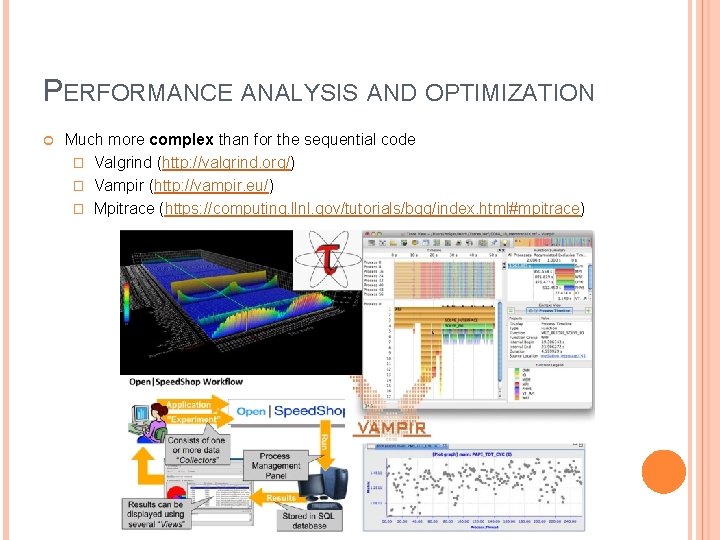

PERFORMANCE ANALYSIS AND OPTIMIZATION
- Much more complex than for sequential code
  - Valgrind (http://valgrind.org/)
  - Vampir (http://vampir.eu/)
  - Mpitrace (https://computing.llnl.gov/tutorials/bgq/index.html#mpitrace)

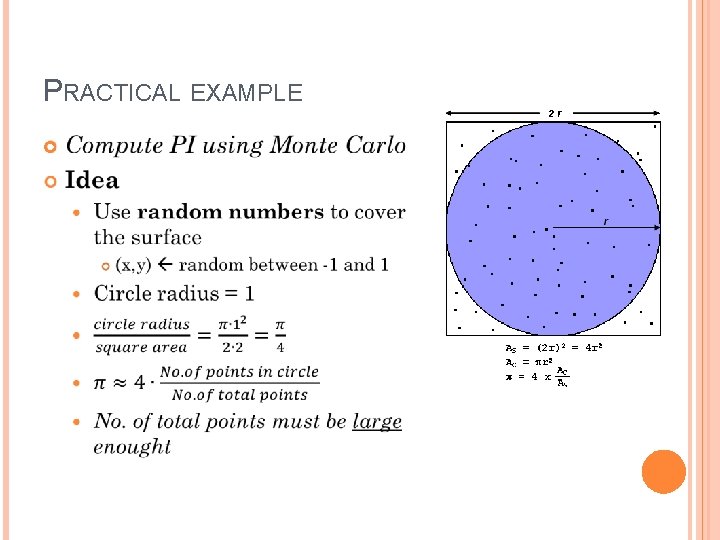

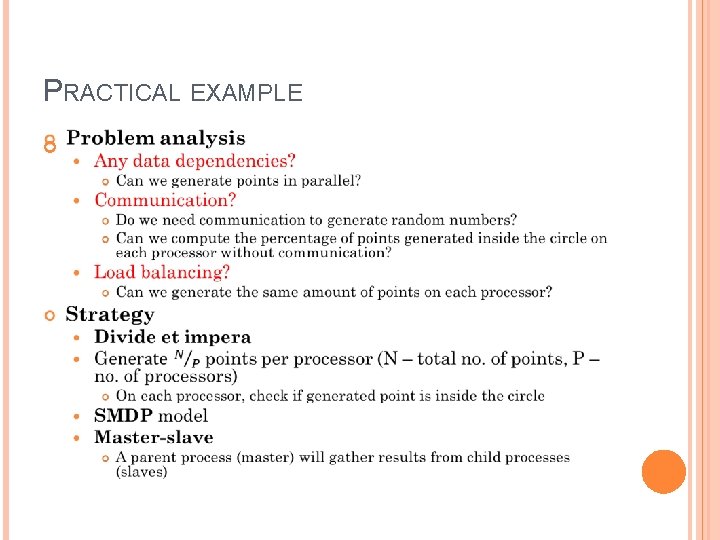

PRACTICAL EXAMPLE

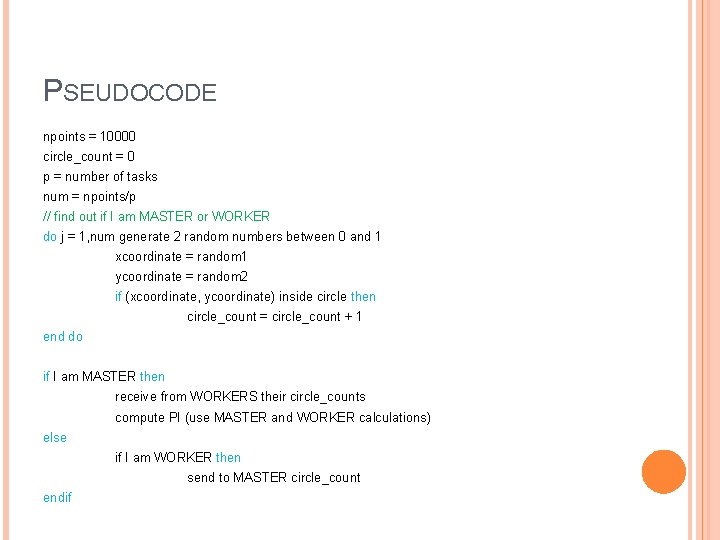

PSEUDOCODE

    npoints = 10000
    circle_count = 0
    p = number of tasks
    num = npoints/p

    // find out if I am MASTER or WORKER

    do j = 1, num
        generate 2 random numbers between 0 and 1
        xcoordinate = random1
        ycoordinate = random2
        if (xcoordinate, ycoordinate) inside circle then
            circle_count = circle_count + 1
    end do

    if I am MASTER then
        receive from WORKERS their circle_counts
        compute PI (use MASTER and WORKER calculations)
    else if I am WORKER then
        send to MASTER circle_count
    endif
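The pseudocode can be tried out with a thread pool standing in for the worker tasks. This is a sketch under stated assumptions: the function names and per-task seeds are illustrative, and unlike the pseudocode the master here only combines the counts instead of also throwing points itself.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def count_in_circle(num_points, seed):
    """Worker: throw num_points random points at the unit square, count those inside the circle."""
    rng = random.Random(seed)  # private generator, so tasks do not share random state
    hits = 0
    for _ in range(num_points):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            hits += 1
    return hits


def estimate_pi(npoints=100_000, tasks=4):
    """Master: split the points, collect each worker's circle count, compute pi."""
    num = npoints // tasks
    with ThreadPoolExecutor(max_workers=tasks) as pool:
        counts = list(pool.map(count_in_circle, [num] * tasks, range(tasks)))
    return 4.0 * sum(counts) / (num * tasks)


print(estimate_pi())
```

Giving each task its own generator keeps the workers from sharing random-number state. The only communication is the final collection of one integer per worker, which makes this a coarse-grained problem.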

APIS FOR PARALLEL SHARED AND DISTRIBUTED MEMORY ALGORITHMS
- Shared memory
  - OpenMP
- Distributed memory
  - Unified Parallel C
  - MPI
- GPUs
  - CUDA
- Distributed computing
  - MapReduce
  - Data flows: Storm, Spark
  - Graphs: Giraph, GraphX

OPENMP
- Shared memory model
- Requires minimal modifications of the sequential code
- The specification is implemented in the compiler

      g++ program.cpp -fopenmp -o program

- Fork-join model
  - When execution reaches an OpenMP parallel construct, a team of threads is created and executes in parallel (fork)
  - The threads merge at the end of the parallel region (join)

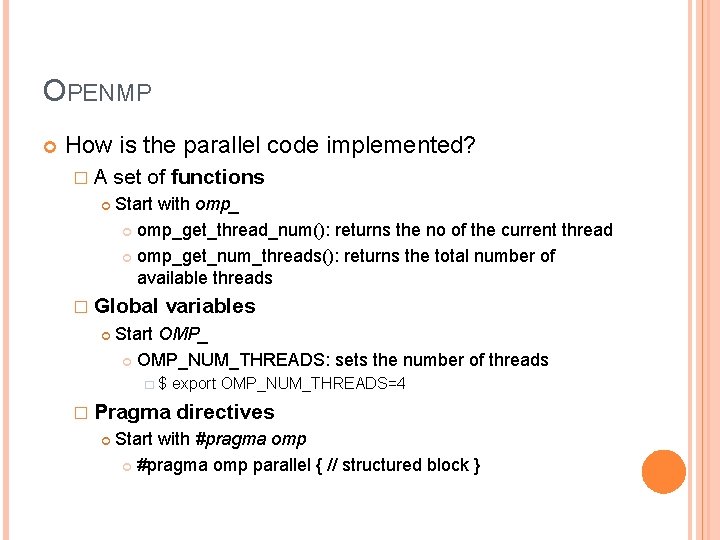

OPENMP
- How is the parallel code implemented?
  - A set of functions, whose names start with omp_
    - omp_get_thread_num(): returns the number of the current thread
    - omp_get_num_threads(): returns the total number of available threads
  - Environment variables, whose names start with OMP_
    - OMP_NUM_THREADS: sets the number of threads

          $ export OMP_NUM_THREADS=4

  - Pragma directives, which start with #pragma omp

        #pragma omp parallel
        {
            // structured block
        }

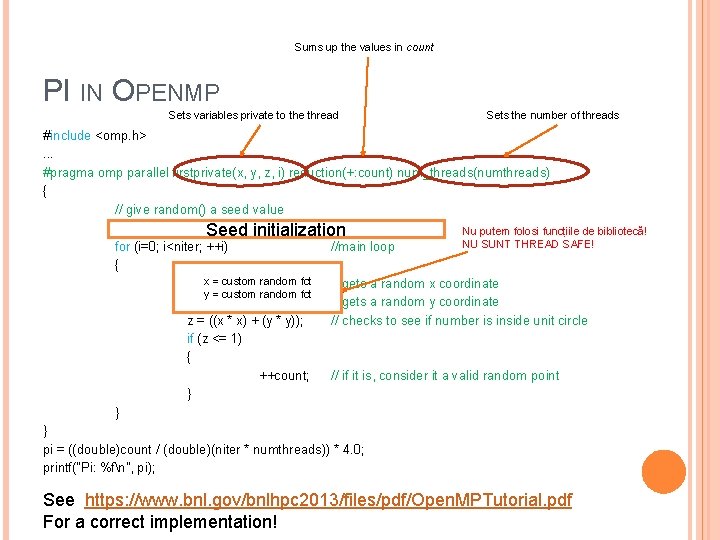

PI IN OPENMP
- reduction(+:count) sums up the values in count
- firstprivate(x, y, z, i) sets variables private to the thread
- num_threads(numthreads) sets the number of threads
- We cannot use the library random functions: they are NOT THREAD SAFE! Replace the drand48() calls with a custom random function.

      #include <omp.h>
      ...
      #pragma omp parallel firstprivate(x, y, z, i) reduction(+:count) num_threads(numthreads)
      {
          // seed initialization: give the random generator a seed value
          srand48((int)time(NULL) ^ omp_get_thread_num());
          for (i = 0; i < niter; ++i) // main loop
          {
              x = (double)drand48(); // gets a random x coordinate (use a custom random function)
              y = (double)drand48(); // gets a random y coordinate (use a custom random function)
              z = ((x * x) + (y * y)); // checks to see if number is inside unit circle
              if (z <= 1)
              {
                  ++count; // if it is, consider it a valid random point
              }
          }
      }
      pi = ((double)count / (double)(niter * numthreads)) * 4.0;
      printf("Pi: %f\n", pi);

See https://www.bnl.gov/bnlhpc2013/files/pdf/OpenMPTutorial.pdf for a correct implementation!

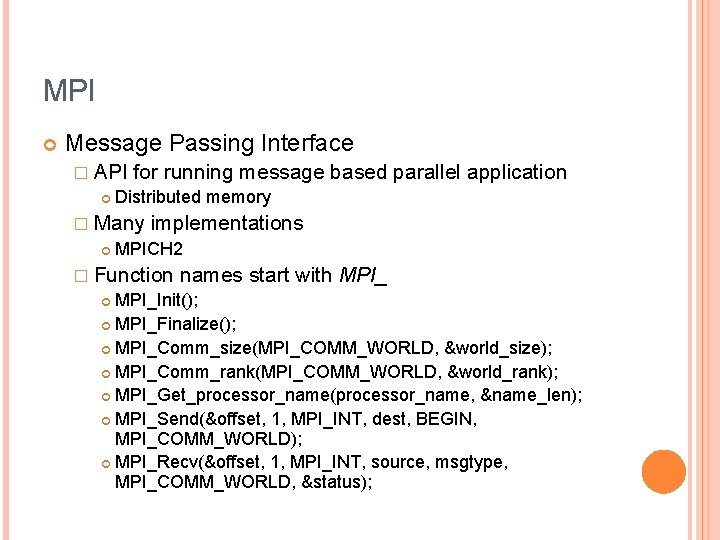

MPI
- Message Passing Interface
  - API for running message-based parallel applications
  - Distributed memory
- Many implementations
  - MPICH2
- Function names start with MPI_

      MPI_Init();
      MPI_Finalize();
      MPI_Comm_size(MPI_COMM_WORLD, &world_size);
      MPI_Comm_rank(MPI_COMM_WORLD, &world_rank);
      MPI_Get_processor_name(processor_name, &name_len);
      MPI_Send(&offset, 1, MPI_INT, dest, BEGIN, MPI_COMM_WORLD);
      MPI_Recv(&offset, 1, MPI_INT, source, msgtype, MPI_COMM_WORLD, &status);

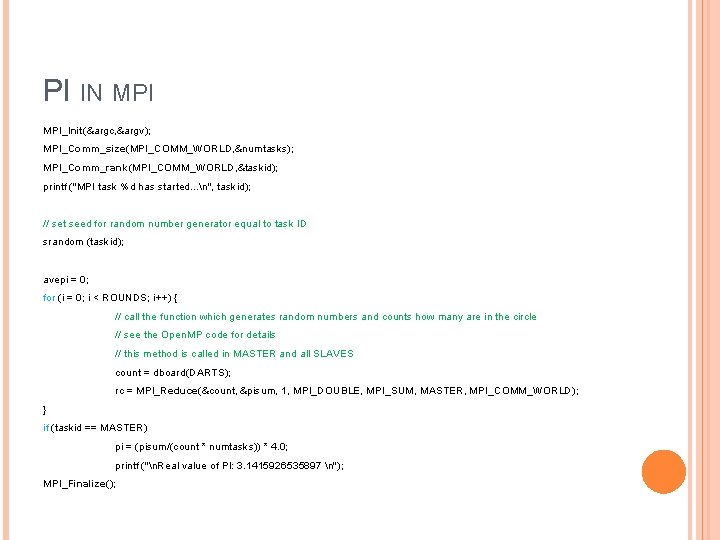

PI IN MPI

    MPI_Init(&argc, &argv);
    MPI_Comm_size(MPI_COMM_WORLD, &numtasks);
    MPI_Comm_rank(MPI_COMM_WORLD, &taskid);
    printf("MPI task %d has started...\n", taskid);
    // set seed for random number generator equal to task ID
    srandom(taskid);
    avepi = 0;
    for (i = 0; i < ROUNDS; i++) {
        // call the function which generates random numbers and counts how many are in the circle
        // see the OpenMP code for details
        // this method is called in MASTER and all SLAVES
        count = dboard(DARTS);
        // count and pisum must be declared as double to match MPI_DOUBLE
        rc = MPI_Reduce(&count, &pisum, 1, MPI_DOUBLE, MPI_SUM, MASTER, MPI_COMM_WORLD);
    }
    if (taskid == MASTER) {
        // pisum holds the hits summed over all tasks; each task generated DARTS points
        pi = (pisum / ((double)DARTS * numtasks)) * 4.0;
        printf("\nReal value of PI: 3.1415926535897 \n");
    }
    MPI_Finalize();

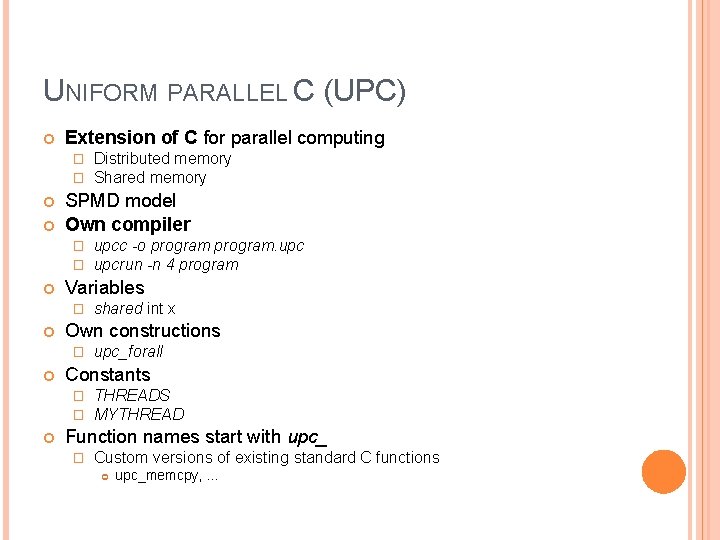

UNIFIED PARALLEL C (UPC)
- Extension of C for parallel computing
  - SPMD model
  - Distributed memory, shared memory
- Own compiler

      upcc -o program ...
      upcrun -n 4 program

- Variables
  - shared int x
- Constants
  - THREADS
  - MYTHREAD
- Own constructs
  - upc_forall
- Function names start with upc_
  - Custom versions of existing standard C functions: upc_memcpy, ...

PI IN UPC

    shared int count[THREADS];
    upc_forall (j = 0; j < THREADS; ++j; j) // main loop
    {
        for (i = 0; i < niter; i++)
        {
            x = (double)drand48(); // gets a random x coordinate
            y = (double)drand48(); // gets a random y coordinate
            z = ((x * x) + (y * y)); // checks to see if number is inside unit circle
            if (z <= 1)
            {
                ++count[MYTHREAD]; // if it is, consider it a valid random point
            }
        }
    }
    upc_barrier; // ensure all is done
    if (MYTHREAD == 0)
    {
        for (j = 0; j < THREADS; ++j)
            countHit += count[j];
        pi = ((double)countHit / (double)(niter * THREADS)) * 4.0;
        printf("Pi: %f\n", pi);
    }

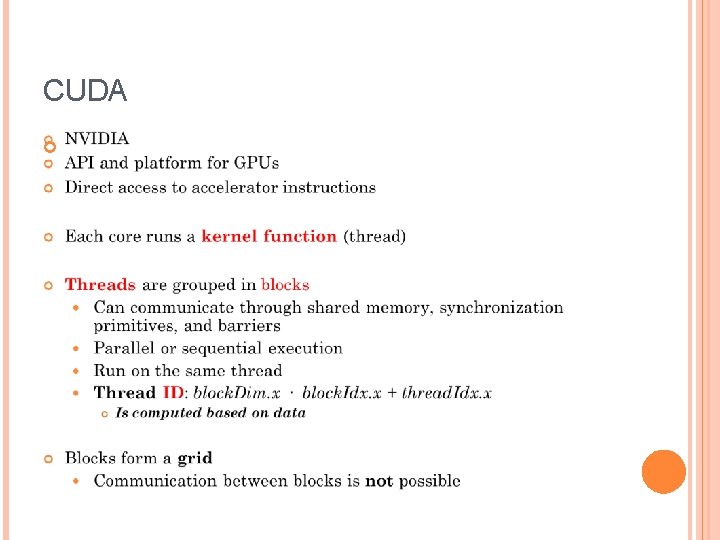

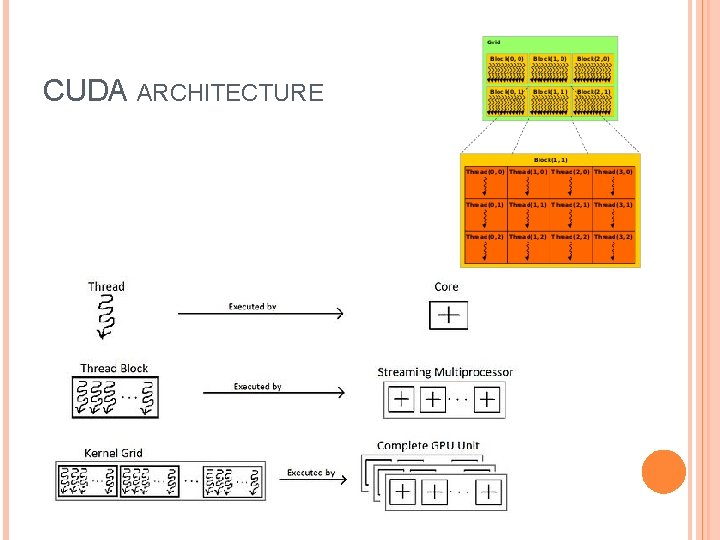

CUDA

CUDA ARCHITECTURE

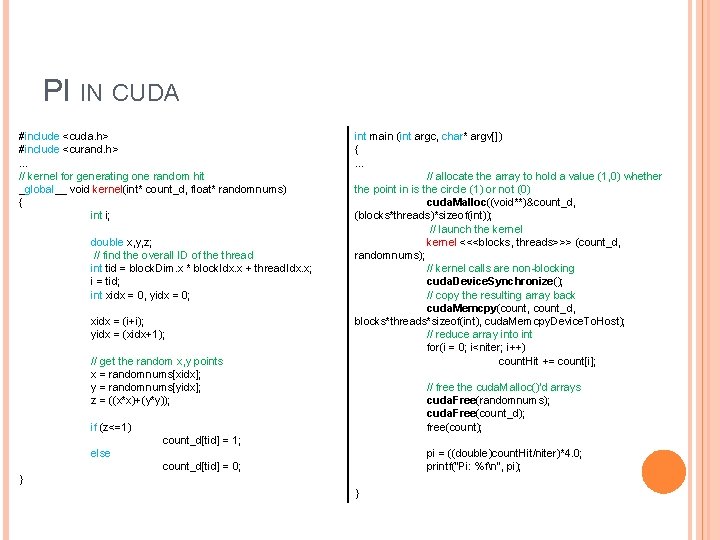

PI IN CUDA

    #include <cuda.h>
    #include <curand.h>
    ...
    // kernel for generating one random hit
    __global__ void kernel(int* count_d, float* randomnums)
    {
        int i;
        double x, y, z;
        // find the overall ID of the thread
        int tid = blockDim.x * blockIdx.x + threadIdx.x;
        i = tid;
        int xidx = 0, yidx = 0;
        xidx = (i + i);
        yidx = (xidx + 1);
        // get the random x, y points
        x = randomnums[xidx];
        y = randomnums[yidx];
        z = ((x * x) + (y * y));
        if (z <= 1)
            count_d[tid] = 1;
        else
            count_d[tid] = 0;
    }

    int main(int argc, char* argv[])
    {
        …
        // allocate the array holding, per point, whether it is in the circle (1) or not (0)
        cudaMalloc((void**)&count_d, (blocks * threads) * sizeof(int));
        // launch the kernel
        kernel<<<blocks, threads>>>(count_d, randomnums);
        // kernel calls are non-blocking
        cudaDeviceSynchronize();
        // copy the resulting array back
        cudaMemcpy(count, count_d, blocks * threads * sizeof(int), cudaMemcpyDeviceToHost);
        // reduce array into int
        for (i = 0; i < niter; i++)
            countHit += count[i];
        // free the cudaMalloc()'d arrays
        cudaFree(randomnums);
        cudaFree(count_d);
        free(count);
        pi = ((double)countHit / niter) * 4.0;
        printf("Pi: %f\n", pi);
    }

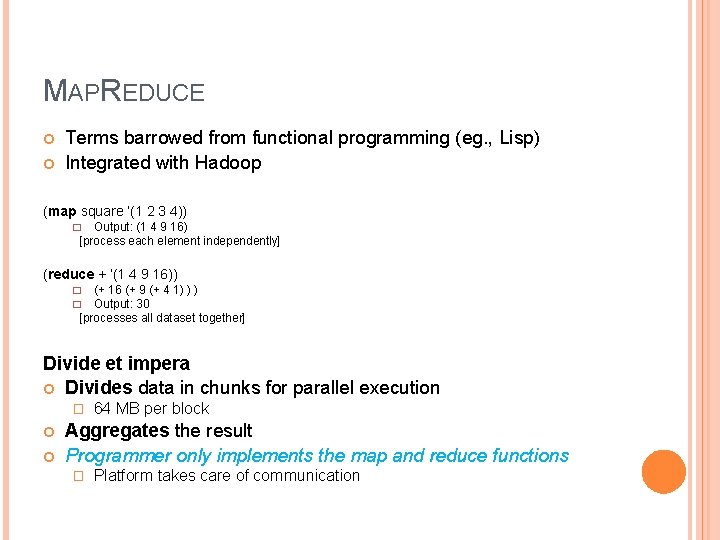

MAPREDUCE
- Terms borrowed from functional programming (e.g., Lisp)
  - (map square '(1 2 3 4))
    - Output: (1 4 9 16) [processes each element independently]
  - (reduce + '(1 4 9 16)), i.e. (+ 16 (+ 9 (+ 4 1)))
    - Output: 30 [processes the whole dataset together]
- Integrated with Hadoop
- Divide et impera
  - Divides data into chunks for parallel execution (64 MB per block)
  - Aggregates the results
- The programmer only implements the map and reduce functions
  - The platform takes care of communication
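The two Lisp expressions on the slide have direct counterparts in most languages. A small Python rendering, given only as an illustration of the two primitives (the variable names are arbitrary):

```python
from functools import reduce
from operator import add


def square(x):
    return x * x


squares = list(map(square, [1, 2, 3, 4]))  # each element is processed independently
sum_of_squares = reduce(add, squares)      # the whole collection is folded into one value
print(squares, sum_of_squares)
```

The map step has no dependencies between elements, so it can be spread over many machines; the reduce step is where results are brought together.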

MAPREDUCE MODEL
- Map(k, v) -> (k', v')
- Reduce(k', v'[]) -> (k'', v'')
- Input -> Map -> Shuffle (MR system) -> Reduce -> Output
- User-defined functions

      void map(String key, String value) {
          // do work
          // emit (key, value) pairs to reducers
      }

      void reduce(String key, Iterator values) {
          // for each key, iterate through all values
          // aggregate results
          // emit final result
      }
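The Input, Map, Shuffle, Reduce, Output pipeline can be imitated in a single process to see what each stage contributes. Word counting is used here as an assumed example (it is not taken from the slides), and `map_fn`, `shuffle` and `reduce_fn` are hypothetical names; in Hadoop the shuffle is done by the platform, not by user code.

```python
from collections import defaultdict


def map_fn(key, value):
    """Map(k, v) -> list of (k', v'): emit (word, 1) for every word in one line."""
    return [(word.lower(), 1) for word in value.split()]


def shuffle(pairs):
    """Group all intermediate values by key; a real system does this between nodes."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_fn(key, values):
    """Reduce(k', [v']) -> (k'', v''): sum the counts for one word."""
    return key, sum(values)


def map_reduce(records):
    """Run map over every record, shuffle by key, then reduce each group."""
    intermediate = [pair for k, v in records for pair in map_fn(k, v)]
    return dict(reduce_fn(k, vs) for k, vs in shuffle(intermediate).items())


lines = [(0, "big data big ideas"), (1, "data flows")]
print(map_reduce(lines))
```

Each `map_fn` call touches one record and each `reduce_fn` call touches one key, so both stages can be distributed; only the shuffle needs data to move between workers.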

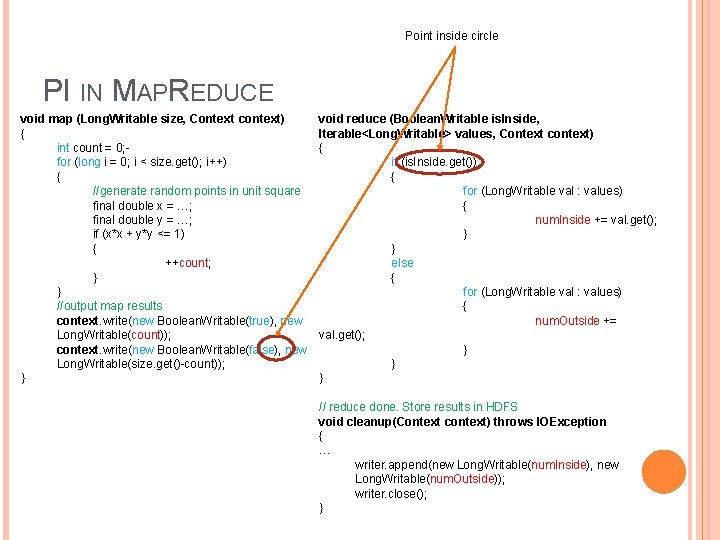

PI IN MAPREDUCE

    void map(LongWritable size, Context context) {
        int count = 0;
        for (long i = 0; i < size.get(); i++) {
            // generate random points in unit square
            final double x = …;
            final double y = …;
            if (x*x + y*y <= 1) { // point inside circle
                ++count;
            }
        }
        // output map results
        context.write(new BooleanWritable(true), new LongWritable(count));
        context.write(new BooleanWritable(false), new LongWritable(size.get() - count));
    }

    void reduce(BooleanWritable isInside, Iterable<LongWritable> values, Context context) {
        if (isInside.get()) {
            for (LongWritable val : values) {
                numInside += val.get();
            }
        } else {
            for (LongWritable val : values) {
                numOutside += val.get();
            }
        }
    }

    // reduce done. Store results in HDFS
    void cleanup(Context context) throws IOException {
        …
        writer.append(new LongWritable(numInside), new LongWritable(numOutside));
        writer.close();
    }

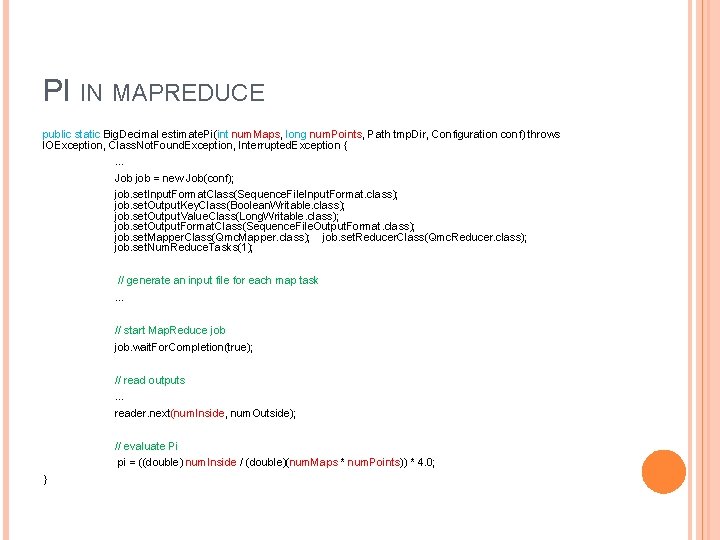

PI IN MAPREDUCE

    public static BigDecimal estimatePi(int numMaps, long numPoints, Path tmpDir, Configuration conf)
            throws IOException, ClassNotFoundException, InterruptedException {
        …
        Job job = new Job(conf);
        job.setInputFormatClass(SequenceFileInputFormat.class);
        job.setOutputKeyClass(BooleanWritable.class);
        job.setOutputValueClass(LongWritable.class);
        job.setOutputFormatClass(SequenceFileOutputFormat.class);
        job.setMapperClass(QmcMapper.class);
        job.setReducerClass(QmcReducer.class);
        job.setNumReduceTasks(1);
        // generate an input file for each map task
        …
        // start MapReduce
        job.waitForCompletion(true);
        // read outputs
        …
        reader.next(numInside, numOutside);
        // evaluate Pi
        pi = ((double) numInside / (double)(numMaps * numPoints)) * 4.0;
    }

LECTURE SOURCES
- https://computing.llnl.gov/tutorials/parallel_comp/#Designing
- http://jcsites.juniata.edu/faculty/rhodes/smui/parex.htm
- http://mpitutorial.com/tutorials/mpi-hello-world/
- http://upc.gwu.edu/tutorials/UPC-SC05.pdf
- https://github.com/facebookarchive/hadoop-20/blob/master/src/examples/org/apache/hadoop/examples/PiEstimator.java

NEXT LECTURE
- Algorithm scalability
  - Hardware + data + algorithm
