BIG DATA TECHNOLOGIES LECTURE 2 ARCHITECTURES AND SYSTEMS

BIG DATA TECHNOLOGIES LECTURE 2: ARCHITECTURES AND SYSTEMS FOR PARALLEL AND DISTRIBUTED COMPUTING Assoc. Prof. Marc FRÎNCU, Phd. Habil. marc. frincu@e-uvt. ro

WHAT IS A PARALLEL ARCHITECTURE ”A collection of processing elements that cooperate to solve large problems fast” – Almasi and Gottlieb, 1989 ARM 11 (2002 -2005) � 90% of the embedded systems is based on ARM processors � � Pipeline in 8 steps 8 in-flight instructions (out-of-order) Intel Pentium 4 (2000 -2008) � � � 32. 000 Intel Xeon CPUs with 12 cores 48. 000 Intel Xeon Phi CPUs with 57 cores 34 petaflops Sunway Taihulight � � � Quad core 2 threads per core GPU Tianhe-2 (2013) � � � 124 in-flight instructions Pipeline in 31 steps Superscalar: in processor instruction level parallelism Intel Skylake (august 2015) � � � Rasberry Pi First place in the world in November 2017 40. 960 SW 26010 CPUs with 256 cores 125 petaflops Google Cloud � � Cluster farms 10 Tbps bandwidth (US-Japan)

MOTIVATION The performance of sequential systems is limited Computation/data transfer through logic gates, memory devices � Latency <> 0 � Assuming an ideal environment we still have the limitation due to the light speed Many applications requires performance Nuclear reaction modelling � Climate modelling � Big Data � Google: 40. 000 searches/min Facebook: 1 billion users every day Technological breakthrough � What do we do with so many transistors?

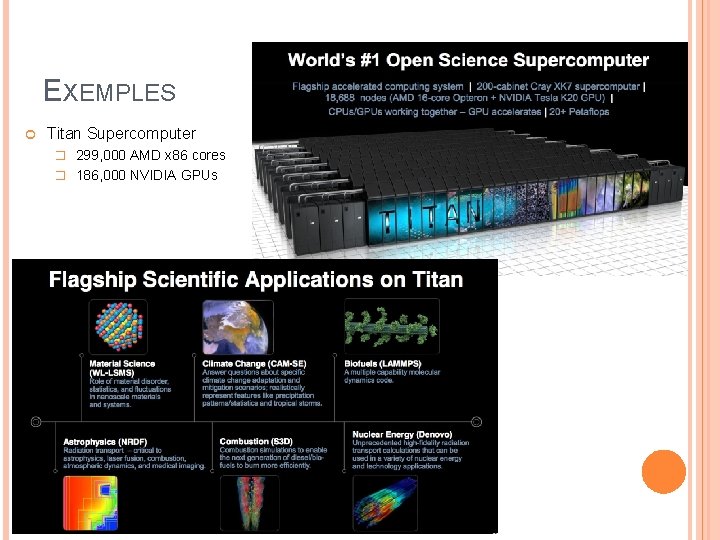

EXEMPLES Titan Supercomputer � 299, 000 AMD x 86 cores � 186, 000 NVIDIA GPUs

TRANSISTORS VS. SPEED More transistors pee chip equals more speed � Electronic devices that act as switches built to act as logical gates � The speed of each operation is given by the time required by each transistor to stop without causing any errors A small transistor will stop/start faster Exemple: 3 Ghz = 3 billion ops/sec � Increase density to increase speed Intel P 4: 170 milllion transistors Intel 15 -core Xeon IVY Bridge: 4. 3 billion On average, each year the processing power increased by 60%

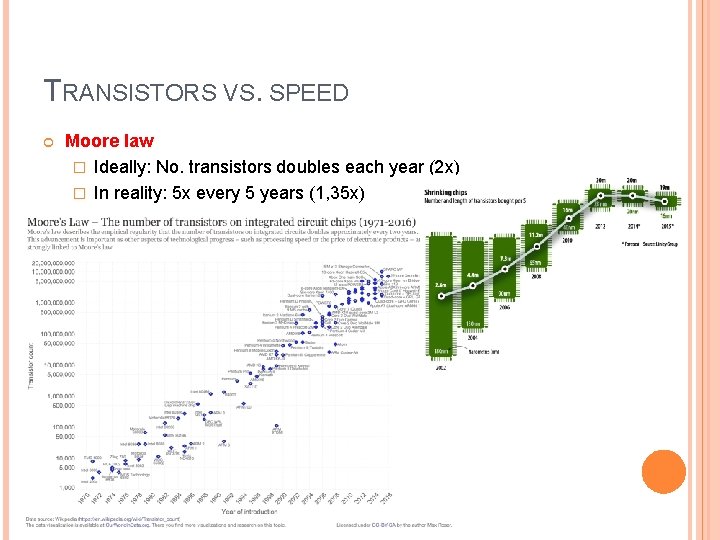

TRANSISTORS VS. SPEED Moore law � Ideally: No. transistors doubles each year (2 x) � In reality: 5 x every 5 years (1, 35 x)

TRANSISTORS VS. SPEED Why can’t we increase speed forever? � Size of chip = constant � But, density of transistors increases 2018: 10 nm Intel Cannonlake � Dennard’s scalability The power (V) needed to operate the transistors = constant even if the number of transistors per chip increases But this is no longer true (as of 2006)! The transistors is are becoming so small that their integrity breaks and they leak current The faster we turn off/on a transistor the more heat it generates For 8, 5 -9 GHz we require liquid nitrogen!

HOW DO WE REDUCE THE POWER CONSUMPTION? Frequency ∝ voltage 2 Gate energy ∝ voltage Executing the same number of cycles at a lower voltage and speed power economy Example � Task with deadline of 100 ms � Method #1: 50 ms at full speed then 50 ms idle � Method #2: 100 ms at frequency/2 and voltage/2 Energy requirement: energy = voltage/4 4 x cheaper

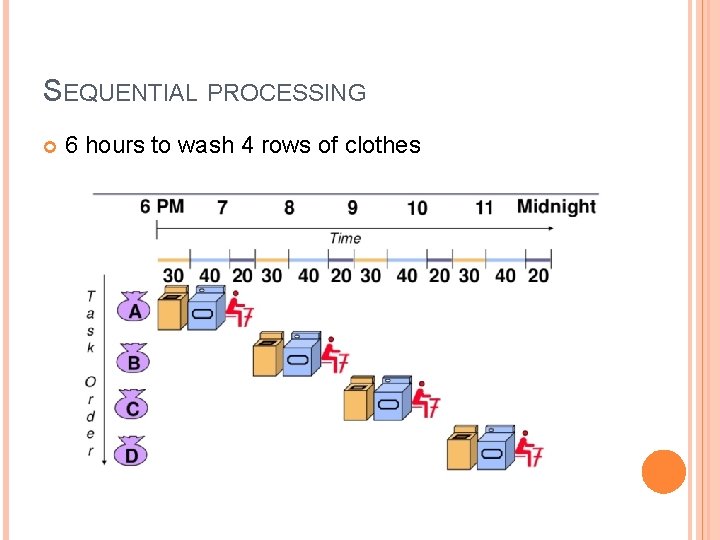

SEQUENTIAL PROCESSING 6 hours to wash 4 rows of clothes

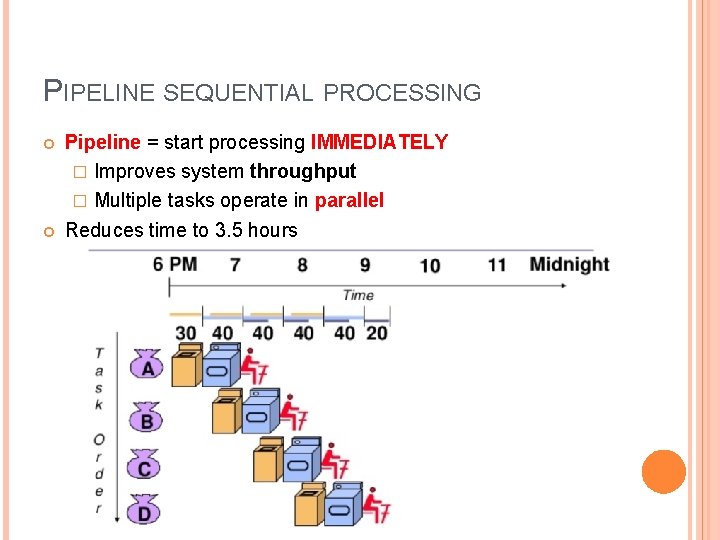

PIPELINE SEQUENTIAL PROCESSING Pipeline = start processing IMMEDIATELY � Improves system throughput � Multiple tasks operate in parallel Reduces time to 3. 5 hours

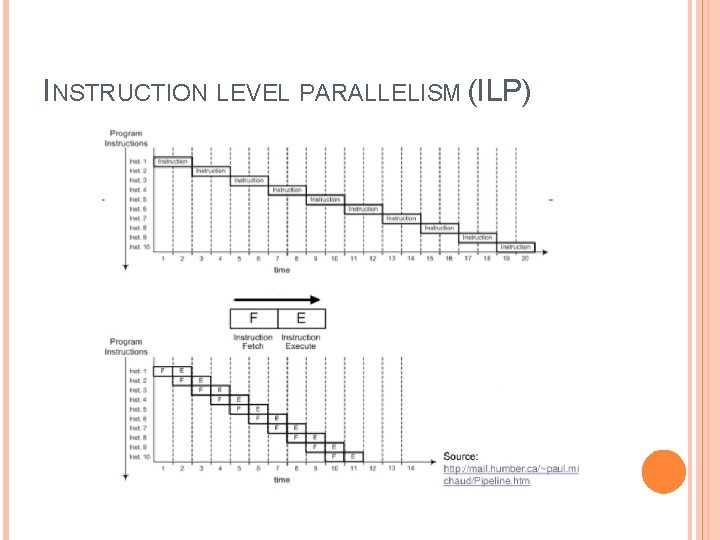

INSTRUCTION LEVEL PARALLELISM (ILP)

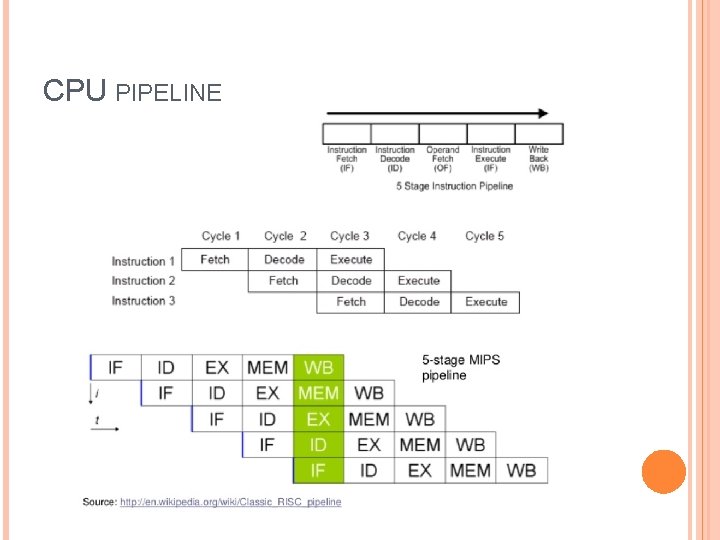

CPU PIPELINE

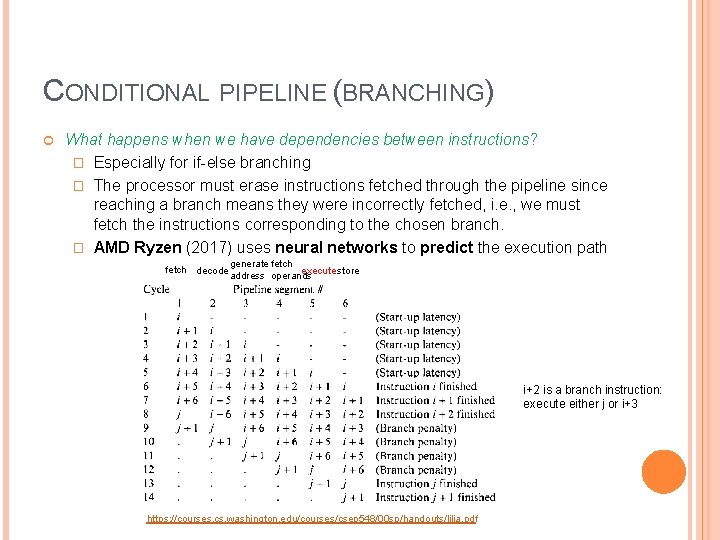

CONDITIONAL PIPELINE (BRANCHING) What happens when we have dependencies between instructions? � Especially for if-else branching � The processor must erase instructions fetched through the pipeline since reaching a branch means they were incorrectly fetched, i. e. , we must fetch the instructions corresponding to the chosen branch. � AMD Ryzen (2017) uses neural networks to predict the execution path fetch decode generate fetch execute store address operands i+2 is a branch instruction: execute either j or i+3 https: //courses. cs. washington. edu/courses/csep 548/00 sp/handouts/lilja. pdf

SUPERSCALAR ARCHITECTURES Execute more than one instruction per CPU cycle Send multiple instructions to redundant units � Hyperthreading (Intel) � Example � Intel P 4 One instruction (thread) processes integers (ALU unit for integers) another processes floating point numbers (ALU unit for floats) The OS thinks it deals with 2 processors Is accomplished by combining a series of shared, replicated or partitioned resources: Registers � Arithmetic units � Cache memory �

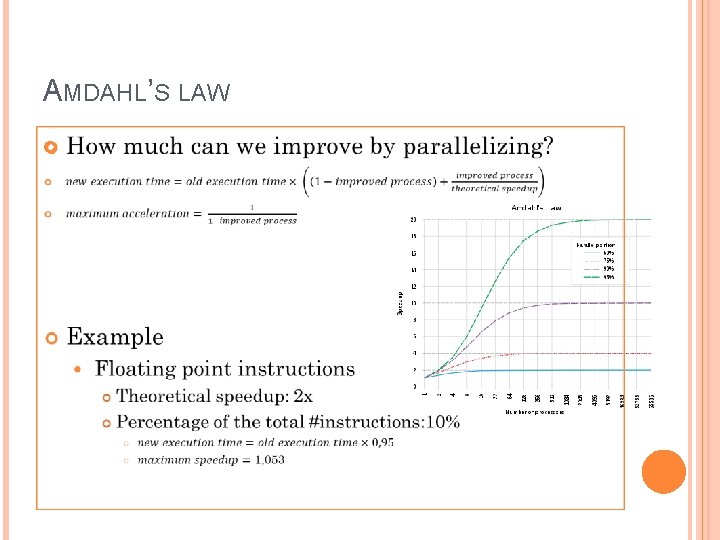

AMDAHL’S LAW

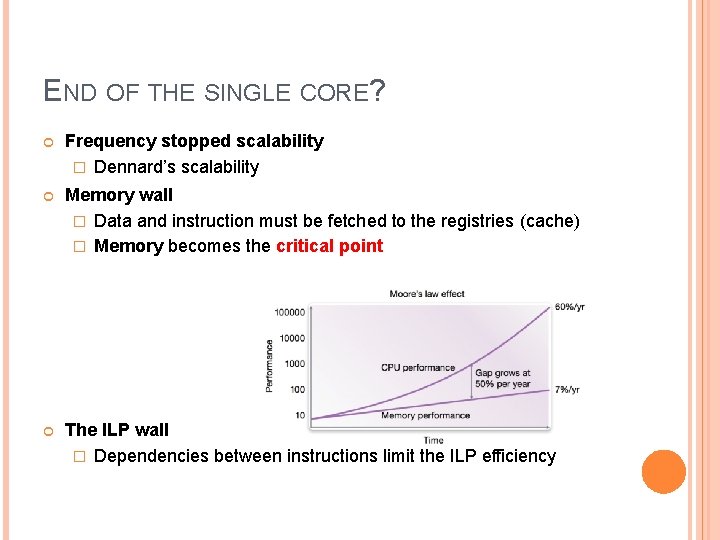

END OF THE SINGLE CORE? Frequency stopped scalability � Dennard’s scalability Memory wall � Data and instruction must be fetched to the registries (cache) � Memory becomes the critical point The ILP wall � Dependencies between instructions limit the ILP efficiency

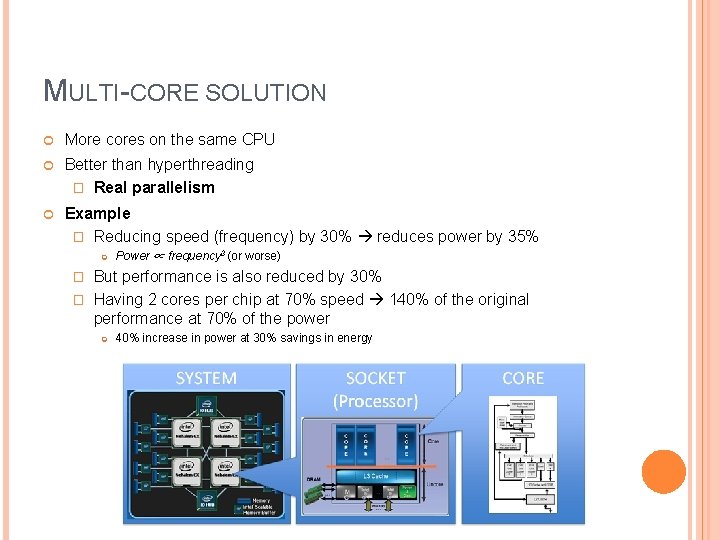

MULTI-CORE SOLUTION More cores on the same CPU Better than hyperthreading � Real parallelism Example � Reducing speed (frequency) by 30% reduces power by 35% Power ∝ frequency 3 (or worse) But performance is also reduced by 30% � Having 2 cores per chip at 70% speed 140% of the original performance at 70% of the power � 40% increase in power at 30% savings in energy

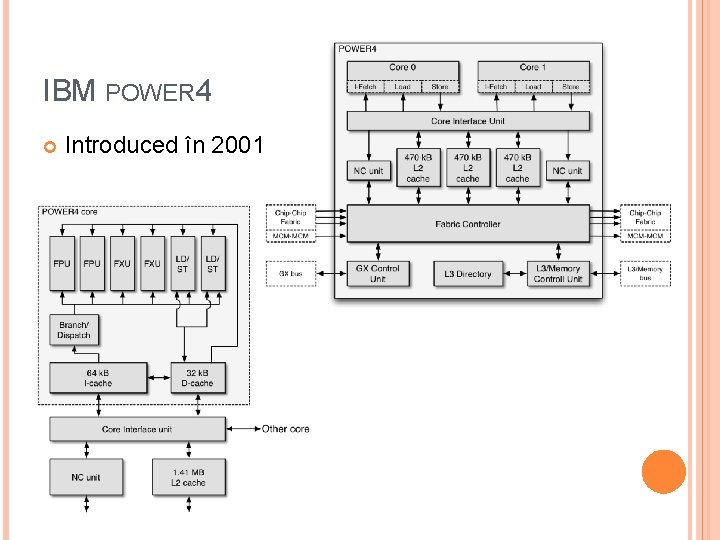

IBM POWER 4 Introduced în 2001

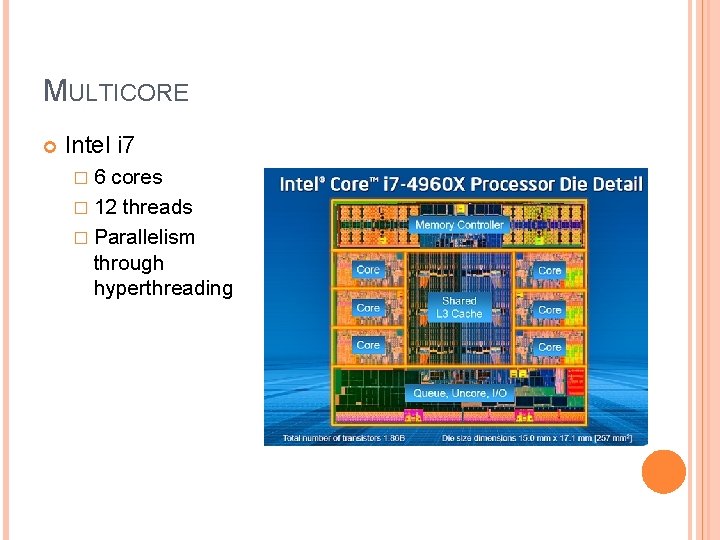

MULTICORE Intel i 7 � 6 cores � 12 threads � Parallelism through hyperthreading

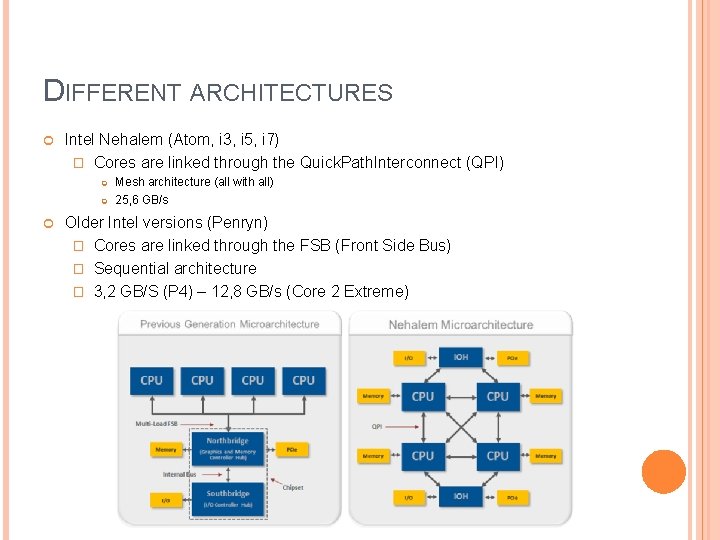

DIFFERENT ARCHITECTURES Intel Nehalem (Atom, i 3, i 5, i 7) � Cores are linked through the Quick. Path. Interconnect (QPI) Mesh architecture (all with all) 25, 6 GB/s Older Intel versions (Penryn) � Cores are linked through the FSB (Front Side Bus) � Sequential architecture � 3, 2 GB/S (P 4) – 12, 8 GB/s (Core 2 Extreme)

AMD INFINITY FABRIC Hyper. Transport protocol 30 -50 GB/s 512 GB/s for GPU Vega Mesh network Network on a chip, clustering � Link between GPUs and So. C � CCIX standard: accelerators, FPGA-uri

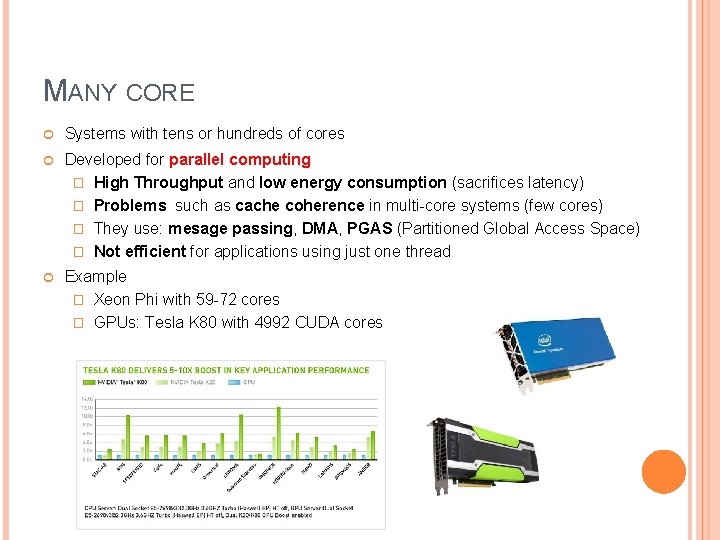

MANY CORE Systems with tens or hundreds of cores Developed for parallel computing � High Throughput and low energy consumption (sacrifices latency) � Problems such as cache coherence in multi-core systems (few cores) � They use: mesage passing, DMA, PGAS (Partitioned Global Access Space) � Not efficient for applications using just one thread Example � Xeon Phi with 59 -72 cores � GPUs: Tesla K 80 with 4992 CUDA cores

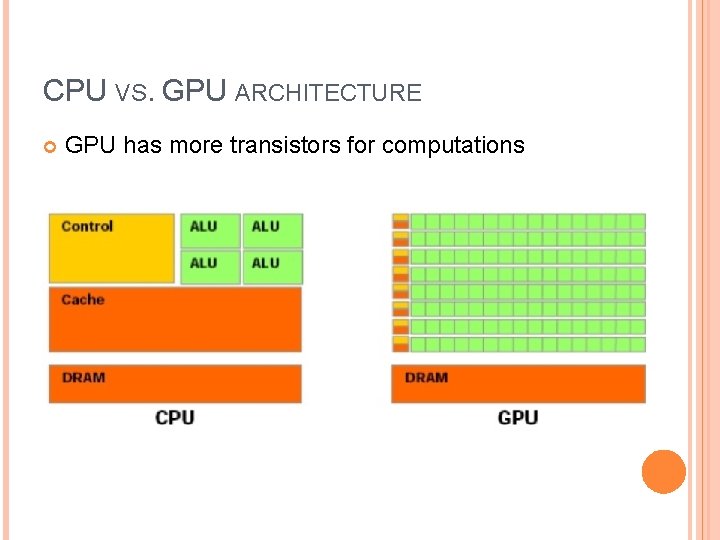

CPU VS. GPU ARCHITECTURE GPU has more transistors for computations

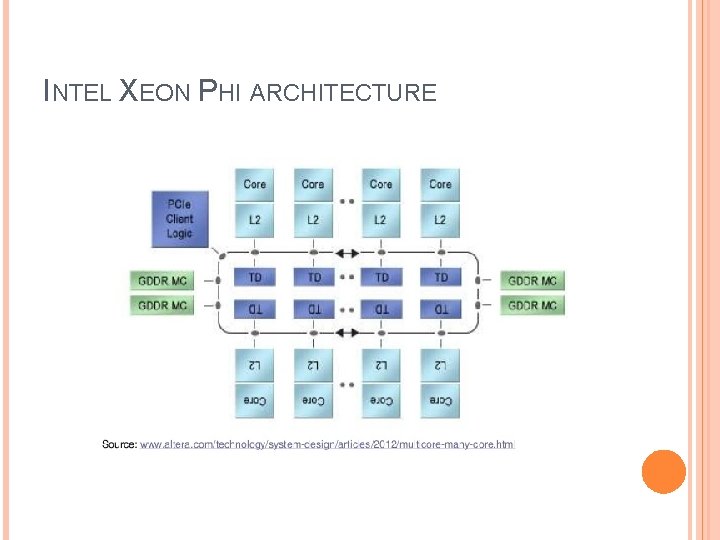

INTEL XEON PHI ARCHITECTURE

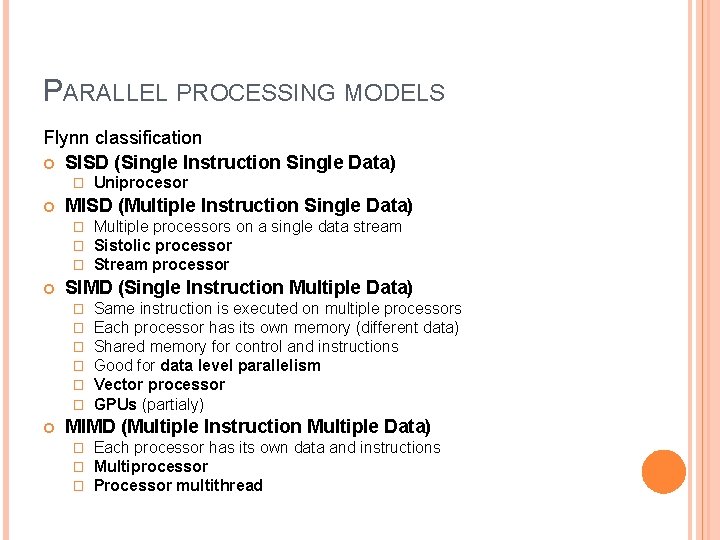

PARALLEL PROCESSING MODELS Flynn classification SISD (Single Instruction Single Data) � MISD (Multiple Instruction Single Data) � � � Multiple processors on a single data stream Sistolic processor Stream processor SIMD (Single Instruction Multiple Data) � � � Uniprocesor Same instruction is executed on multiple processors Each processor has its own memory (different data) Shared memory for control and instructions Good for data level parallelism Vector processor GPUs (partialy) MIMD (Multiple Instruction Multiple Data) � � � Each processor has its own data and instructions Multiprocessor Processor multithread

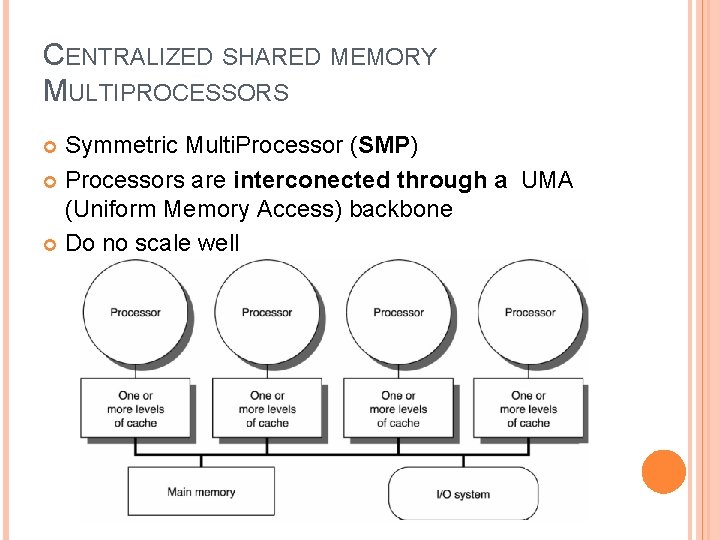

CENTRALIZED SHARED MEMORY MULTIPROCESSORS Symmetric Multi. Processor (SMP) Processors are interconected through a UMA (Uniform Memory Access) backbone Do no scale well

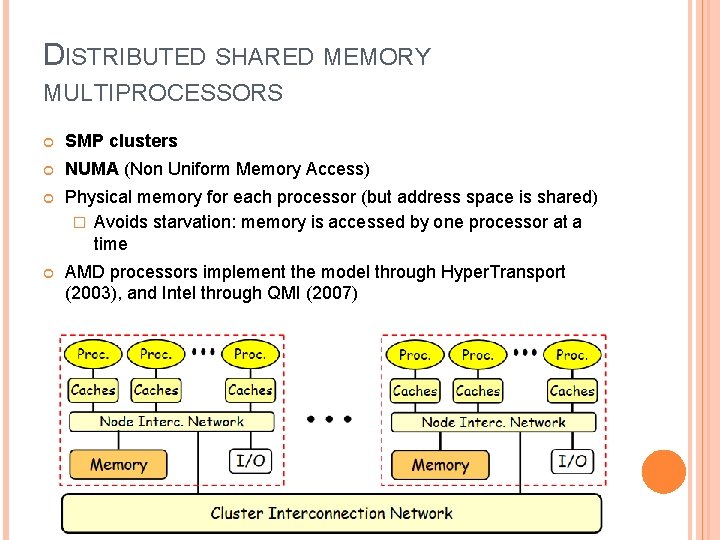

DISTRIBUTED SHARED MEMORY MULTIPROCESSORS SMP clusters NUMA (Non Uniform Memory Access) Physical memory for each processor (but address space is shared) � Avoids starvation: memory is accessed by one processor at a time AMD processors implement the model through Hyper. Transport (2003), and Intel through QMI (2007)

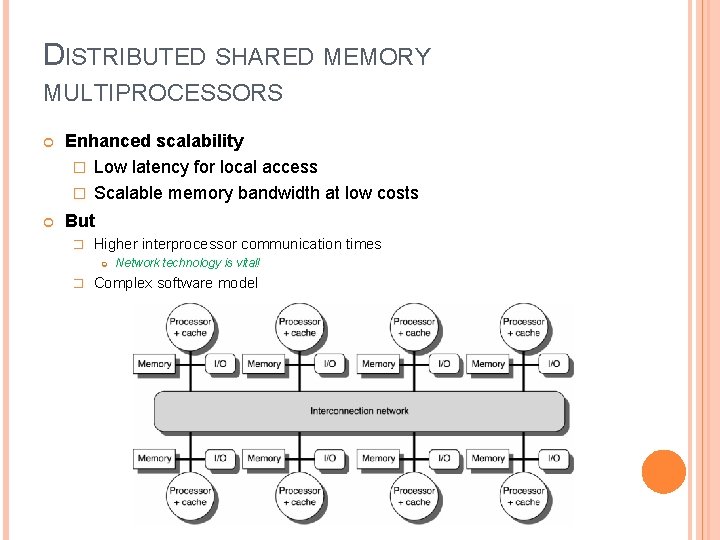

DISTRIBUTED SHARED MEMORY MULTIPROCESSORS Enhanced scalability � Low latency for local access � Scalable memory bandwidth at low costs But � Higher interprocessor communication times � Network technology is vital! Complex software model

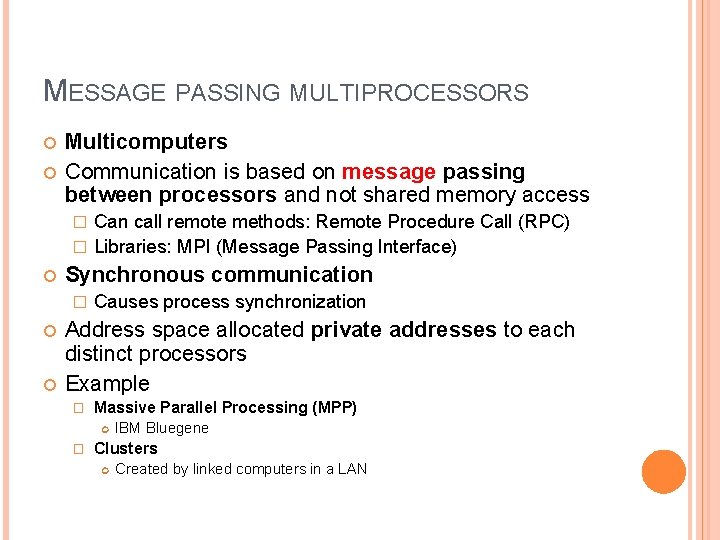

MESSAGE PASSING MULTIPROCESSORS Multicomputers Communication is based on message passing between processors and not shared memory access Can call remote methods: Remote Procedure Call (RPC) � Libraries: MPI (Message Passing Interface) � Synchronous communication � Causes process synchronization Address space allocated private addresses to each distinct processors Example � Massive Parallel Processing (MPP) � IBM Bluegene Clusters Created by linked computers in a LAN

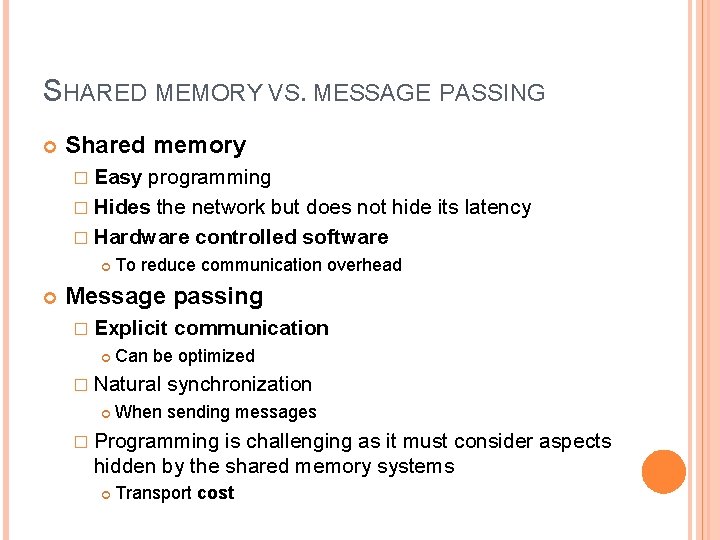

SHARED MEMORY VS. MESSAGE PASSING Shared memory � Easy programming � Hides the network but does not hide its latency � Hardware controlled software To reduce communication overhead Message passing � Explicit communication Can be optimized � Natural synchronization When sending messages � Programming is challenging as it must consider aspects hidden by the shared memory systems Transport cost

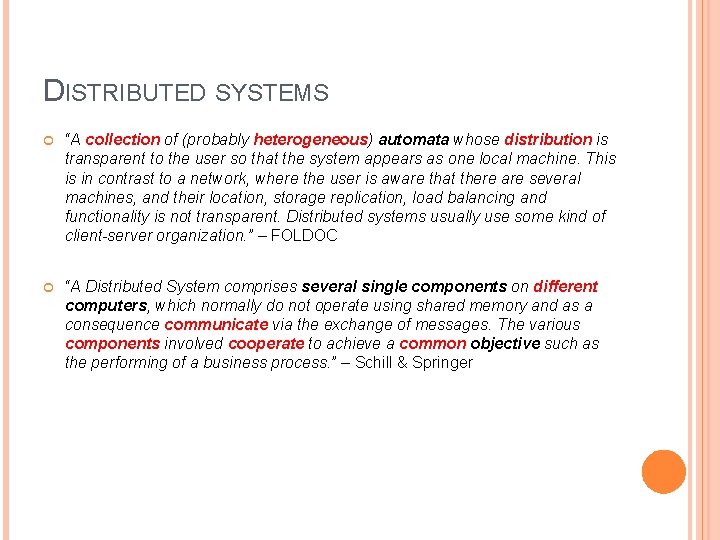

DISTRIBUTED SYSTEMS “A collection of (probably heterogeneous) automata whose distribution is transparent to the user so that the system appears as one local machine. This is in contrast to a network, where the user is aware that there are several machines, and their location, storage replication, load balancing and functionality is not transparent. Distributed systems usually use some kind of client-server organization. ” – FOLDOC “A Distributed System comprises several single components on different computers, which normally do not operate using shared memory and as a consequence communicate via the exchange of messages. The various components involved cooperate to achieve a common objective such as the performing of a business process. ” – Schill & Springer

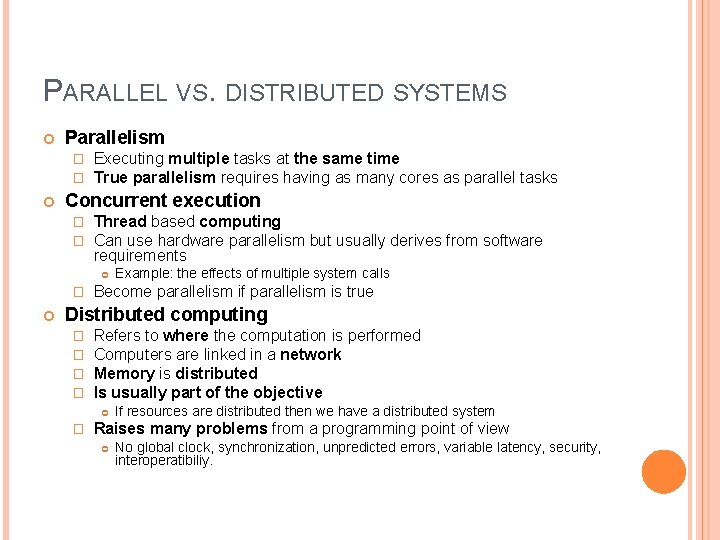

PARALLEL VS. DISTRIBUTED SYSTEMS Parallelism � � Executing multiple tasks at the same time True parallelism requires having as many cores as parallel tasks Concurrent execution � � Thread based computing Can use hardware parallelism but usually derives from software requirements � Example: the effects of multiple system calls Become parallelism if parallelism is true Distributed computing � � Refers to where the computation is performed Computers are linked in a network Memory is distributed Is usually part of the objective � If resources are distributed then we have a distributed system Raises many problems from a programming point of view No global clock, synchronization, unpredicted errors, variable latency, security, interoperatibiliy.

DISTRIBUTED SYSTEMS MODELS Miniccomputer Workstation Server workstation Processor pool Cluster Grid Cloud

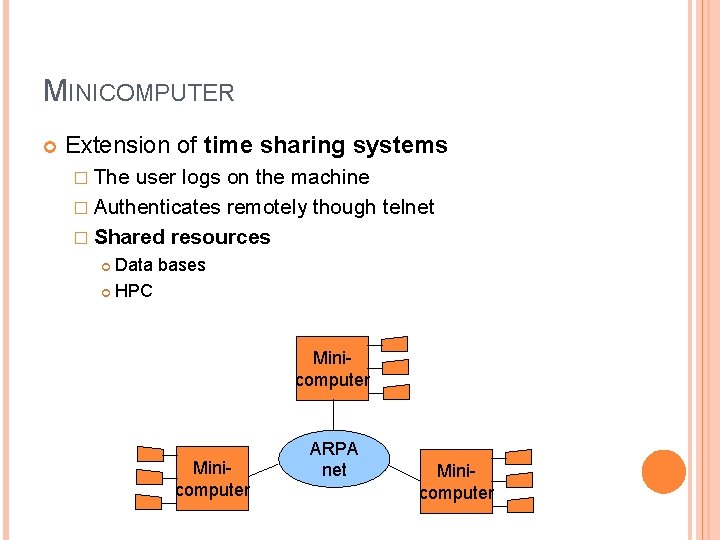

MINICOMPUTER Extension of time sharing systems � The user logs on the machine � Authenticates remotely though telnet � Shared resources Data bases HPC Minicomputer ARPA net Minicomputer

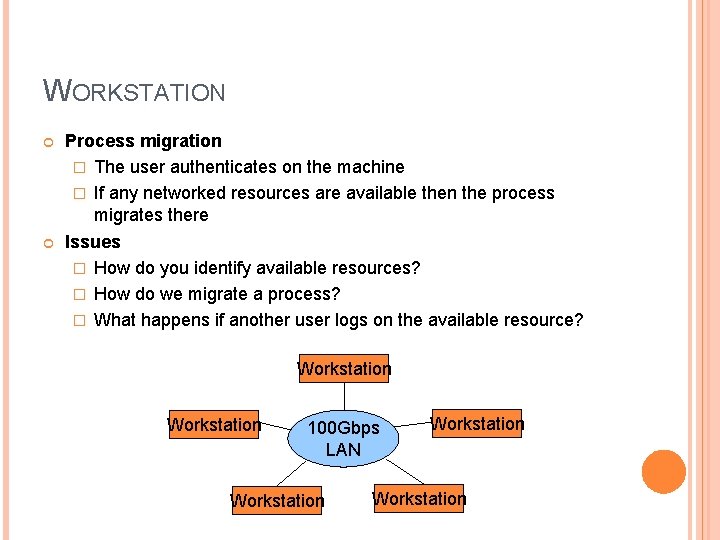

WORKSTATION Process migration � The user authenticates on the machine � If any networked resources are available then the process migrates there Issues � How do you identify available resources? � How do we migrate a process? � What happens if another user logs on the available resource? Workstation 100 Gbps LAN Workstation

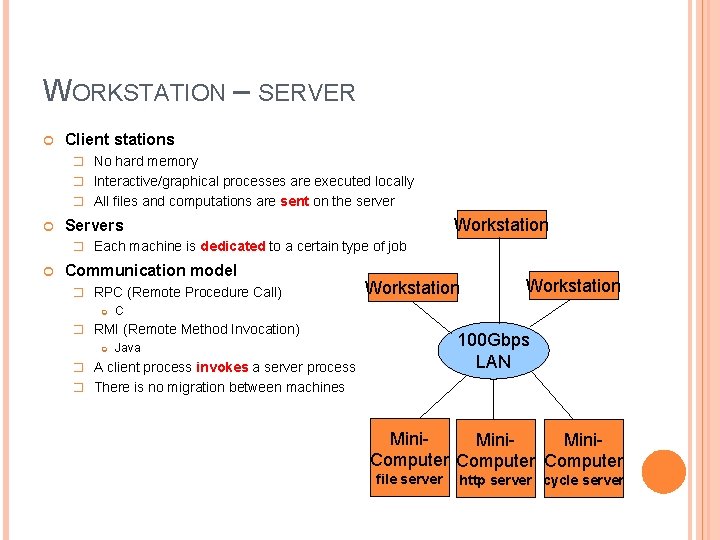

WORKSTATION – SERVER Client stations � No hard memory � Interactive/graphical processes are executed locally � All files and computations are sent on the server Workstation Servers � Each machine is dedicated to a certain type of job Communication model � RPC (Remote Procedure Call) Workstation C � RMI (Remote Method Invocation) Workstation 100 Gbps LAN Java � A client process invokes a server process � There is no migration between machines Mini. Computer file server http server cycle server

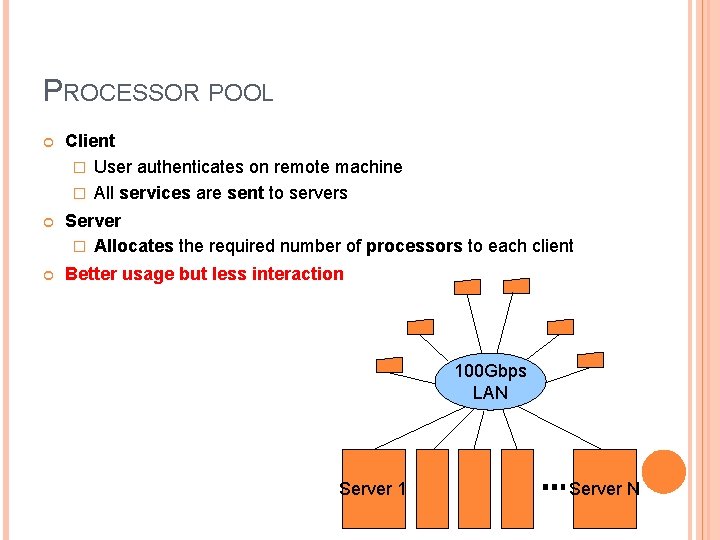

PROCESSOR POOL Client � User authenticates on remote machine � All services are sent to servers Server � Allocates the required number of processors to each client Better usage but less interaction 100 Gbps LAN Server 1 Server N

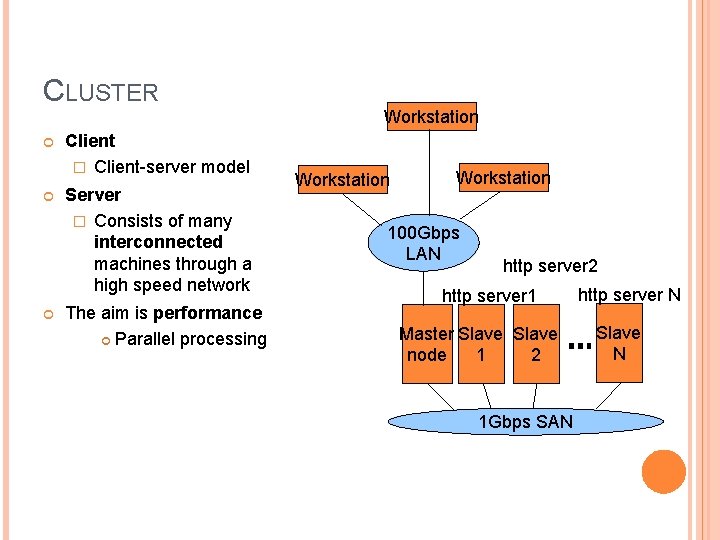

CLUSTER Client � Client-server model Server � Consists of many interconnected machines through a high speed network The aim is performance Parallel processing Workstation 100 Gbps LAN http server 2 http server 1 Master Slave node 1 2 1 Gbps SAN http server N Slave N

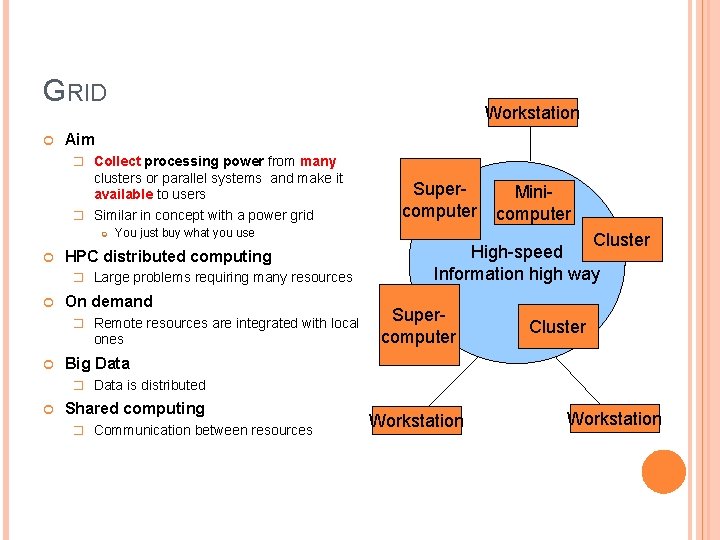

GRID Workstation Aim � Collect processing power from many clusters or parallel systems and make it available to users � Similar in concept with a power grid You just buy what you use HPC distributed computing � Large problems requiring many resources On demand � Remote resources are integrated with local ones Supercomputer Minicomputer Cluster High-speed Information high way Supercomputer Cluster Big Data � Data is distributed Shared computing � Communication between resources Workstation

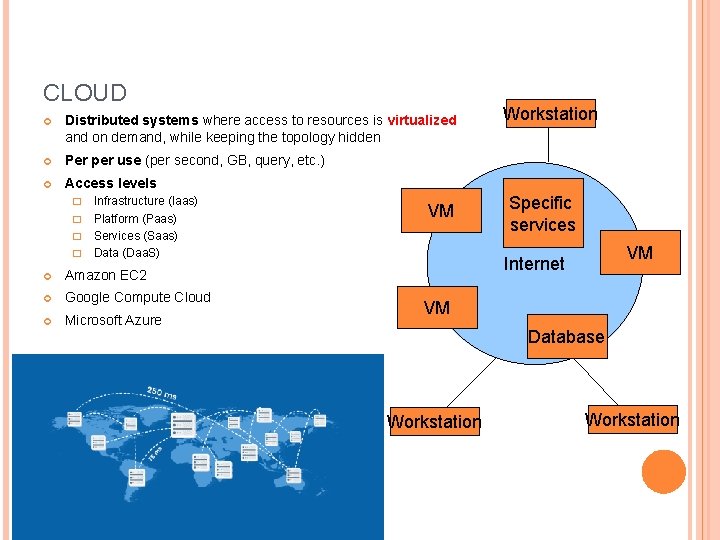

CLOUD Distributed systems where access to resources is virtualized and on demand, while keeping the topology hidden Per per use (per second, GB, query, etc. ) Access levels Infrastructure (Iaas) � Platform (Paas) � Services (Saas) � Data (Daa. S) � Amazon EC 2 Google Compute Cloud Microsoft Azure VM Workstation Specific services VM Internet VM Database Workstation

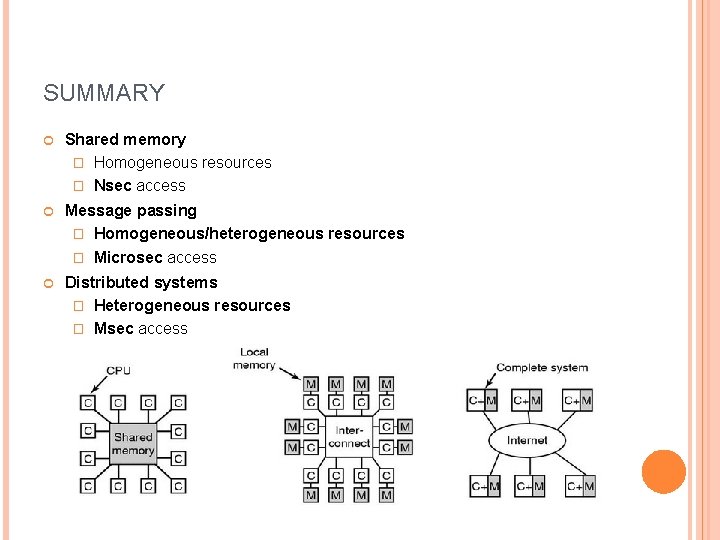

SUMMARY Shared memory � Homogeneous resources � Nsec access Message passing � Homogeneous/heterogeneous resources � Microsec access Distributed systems � Heterogeneous resources � Msec access

SOURCES http: //slideplayer. com/slide/5704113/ https: //www. comsol. com/blogs/havent-cpu-clock-speedsincreased-last-years/ http: //www. inf. ed. ac. uk/teaching/courses/pa/Notes/lectur e 01 -intro. pdf https: //www. slideshare. net/Dilum. Bandara/11 performance-enhancements http: //wgropp. cs. illinois. edu/courses/cs 598 s 16/lectures/lecture 15. pdf http: //www. ni. com/white-paper/11266/en/ https: //www. cs. virginia. edu/~skadron/cs 433_s 09_proces sors/arm 11. pdf http: //www. csie. nuk. edu. tw/~wuch/course/eef 011/4 p/eef 011 -6. pdf

NEXT LECTURE Parallelizing algorithms APIs and platforms for parallel and distributed computing � Open. MP � MPI � Uniform Parallel C � CUDA � Hadoop

- Slides: 43