
Big Data in Performance Engineering

Rachna Trivedi, Performance Test Analyst, Quintiles IMS

Abstract

Big data is a collection of large datasets that cannot be processed using traditional computing techniques. Big data relates to data creation, storage, retrieval and analysis that is remarkable in terms of volume, variety, and velocity. Any big data project involves processing huge volumes of structured and unstructured data, with the work distributed across multiple nodes so that the job completes in less time. At times, performance degrades because of poor design and architecture. Some of the areas where performance issues can occur are imbalance in input splits, redundant shuffles and sorts, and moving most of the aggregation computation into the reduce phase. Performance testing is conducted by setting up a huge volume of data in a test environment. This whitepaper focuses on performance engineering concepts in Big Data: how performance engineering can be achieved, the solutions available, and the various tools and technologies involved.
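As an illustration of the input-split imbalance mentioned in the abstract, the following is a minimal sketch of one way to quantify it. The function name `split_skew` and the sample sizes are hypothetical; the paper does not prescribe a specific metric.

```python
from statistics import mean
from typing import Sequence


def split_skew(split_sizes: Sequence[int]) -> float:
    """Ratio of the largest input split to the mean split size.

    1.0 means perfectly balanced splits; larger values mean one task
    receives disproportionately more data than the average task.
    """
    if not split_sizes:
        raise ValueError("split_sizes must not be empty")
    average = mean(split_sizes)
    if average == 0:
        raise ValueError("all splits are empty")
    return max(split_sizes) / average


if __name__ == "__main__":
    # Hypothetical split sizes in megabytes, for illustration only.
    print(split_skew([128, 128, 128, 128]))
    print(split_skew([300, 50, 50, 0]))
```

A ratio well above 1.0 suggests that the slowest task, not the cluster size, is likely to dominate job completion time.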

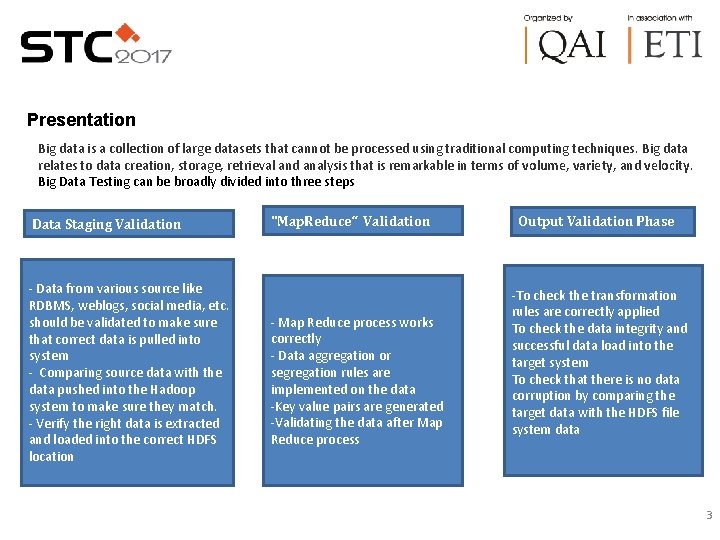

Presentation

Big data is a collection of large datasets that cannot be processed using traditional computing techniques. Big data relates to data creation, storage, retrieval and analysis that is remarkable in terms of volume, variety, and velocity.

Big Data testing can be broadly divided into three steps:

Data Staging Validation
- Validate data from various sources such as RDBMS, weblogs, and social media to make sure that the correct data is pulled into the system.
- Compare the source data with the data pushed into the Hadoop system to make sure they match.
- Verify that the right data is extracted and loaded into the correct HDFS location.

MapReduce Validation
- Verify that the MapReduce process works correctly.
- Verify that data aggregation or segregation rules are implemented on the data.
- Verify that key-value pairs are generated.
- Validate the data after the MapReduce process.

Output Validation Phase
- Check that the transformation rules are correctly applied.
- Check data integrity and successful data load into the target system.
- Check that there is no data corruption by comparing the target data with the HDFS file system data.
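The staging check that compares source data with the data loaded into Hadoop can be sketched as follows. This is a minimal, in-memory illustration under stated assumptions: records are plain strings, and the helper names `fingerprint` and `staging_matches` are hypothetical. A real check would stream records from the source system and from HDFS rather than from Python lists.

```python
import hashlib
from typing import Iterable, Tuple

# Digests are summed modulo 2**256 so the result does not depend on record order.
_MODULUS = 2 ** 256


def fingerprint(records: Iterable[str]) -> Tuple[int, int]:
    """Return (record count, order-independent checksum) for the records."""
    count = 0
    total = 0
    for record in records:
        digest = hashlib.sha256(record.encode("utf-8")).digest()
        total = (total + int.from_bytes(digest, "big")) % _MODULUS
        count += 1
    return count, total


def staging_matches(source: Iterable[str], target: Iterable[str]) -> bool:
    """True when source and target hold the same records, in any order."""
    return fingerprint(source) == fingerprint(target)


if __name__ == "__main__":
    source_rows = ["1,alice", "2,bob", "3,carol"]   # e.g. rows exported from an RDBMS
    loaded_rows = ["3,carol", "1,alice", "2,bob"]   # e.g. lines read back from HDFS
    print(staging_matches(source_rows, loaded_rows))
```

Comparing a count together with a checksum catches both missing records and altered records without requiring the two sides to be sorted first.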

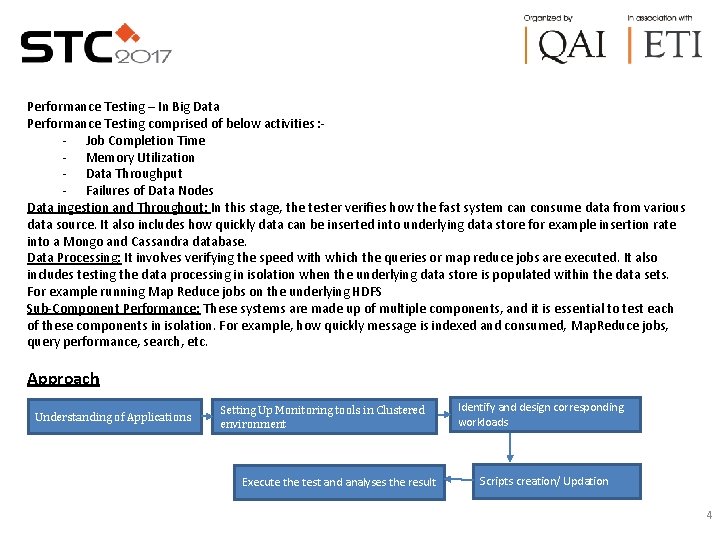

Performance Testing in Big Data

Performance testing comprises the following activities:
- Job completion time
- Memory utilization
- Data throughput
- Failures of data nodes

Data ingestion and throughput: In this stage, the tester verifies how fast the system can consume data from various data sources. It also covers how quickly data can be inserted into the underlying data store, for example the insertion rate into a MongoDB or Cassandra database.

Data processing: This involves verifying the speed at which queries or MapReduce jobs are executed. It also includes testing the data processing in isolation once the underlying data store is populated with the data sets, for example by running MapReduce jobs on the underlying HDFS.

Sub-component performance: These systems are made up of multiple components, and it is essential to test each of them in isolation. Examples include how quickly a message is indexed and consumed, MapReduce jobs, query performance, and search.

Approach
- Understand the applications
- Set up monitoring tools in the clustered environment
- Execute the test and analyze the results
- Identify and design the corresponding workloads
- Create and update scripts
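The ingestion-rate measurement described above can be sketched as a small timing harness. This is a minimal sketch, not a tool from the paper: `measure_ingestion` is a hypothetical helper, and `write` stands in for whatever client call inserts one record into the data store under test.

```python
import time
from typing import Callable, Dict, Iterable


def measure_ingestion(records: Iterable[bytes],
                      write: Callable[[bytes], object]) -> Dict[str, float]:
    """Push every record through `write` and report basic throughput figures."""
    count = 0
    total_bytes = 0
    start = time.perf_counter()
    for record in records:
        write(record)  # assumed to be a blocking insert into the data store
        count += 1
        total_bytes += len(record)
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return {
        "records": count,
        "bytes": total_bytes,
        "elapsed_s": elapsed,
        "records_per_s": rate,
    }


if __name__ == "__main__":
    sink = []  # in-memory stand-in for a real data store client
    payload = [b"x" * 100 for _ in range(10_000)]
    stats = measure_ingestion(payload, sink.append)
    print(stats)
```

Swapping `sink.append` for a real insert call makes the same harness usable against an actual store; the reported figures then include network and server time as well.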

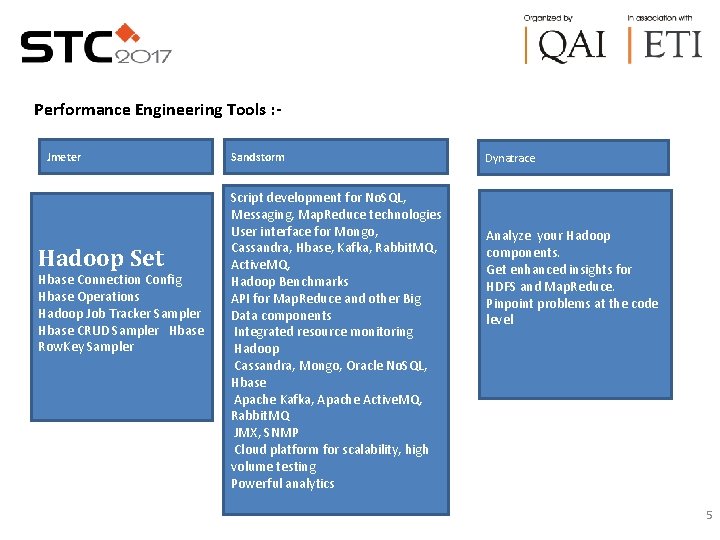

Performance Engineering Tools

JMeter (Hadoop Set)
- HBase Connection Config
- Hadoop Job Tracker Sampler
- HBase CRUD Sampler
- HBase RowKey Sampler

Sandstorm
- Script development for NoSQL, messaging, and MapReduce technologies
- User interface for MongoDB, Cassandra, HBase, Kafka, RabbitMQ, ActiveMQ
- Hadoop benchmarks
- HBase operations
- API for MapReduce and other Big Data components
- Integrated resource monitoring: Hadoop, Cassandra, MongoDB, Oracle NoSQL, HBase; Apache Kafka, Apache ActiveMQ, RabbitMQ; JMX, SNMP
- Cloud platform for scalability and high-volume testing
- Powerful analytics

Dynatrace
- Analyze your Hadoop components.
- Get enhanced insights for HDFS and MapReduce.
- Pinpoint problems at the code level.

Performance Testing Challenges

Diverse set of technologies: As seen above, each sub-component involved in a big data application belongs to a different technology. While each needs to be tested in isolation, no single tool supports all of the technologies. This is unlike web applications, where the technology may differ but the underlying communication is over HTTP. Here, the communication mechanism varies from component to component.

Unavailability of specific tools: No single tool can cater to every technology. For example, database testing tools for NoSQL might not be a fit for message queues. Similarly, custom tools and utilities will be required to test MapReduce jobs.

Test scripting: There are no record-and-playback mechanisms for such systems. A high degree of scripting is required to design test cases and scenarios.

References & Appendix
- Big Data Technologies
- Big Data in Performance Engineering
- Big Data - Performance Engineering Approach
- Big Data - Performance Engineering Tools
- Big Data - Performance Testing Challenges

Author Biography

Rachna Trivedi is an experienced, delivery-focused technology specialist in performance testing solutions with close to 12 years of testing experience. She currently works as a Performance Lead at Quintiles IMS Health Technologies. She brings diverse technology solution experience in performance engineering for software products, bottleneck identification and diagnostics, and profiling and tuning of application and database servers. Her recent focus has been on cloud computing and big data. She delivered more than 300 projects in 3 years at IMS Health and spent 4 years on focused delivery for the FIFA client at Tech Mahindra at different client locations. She participated in STC in 2015, 2016 and 2017. Rachna is also an active contributor to performance engineering forums.

Thank You!

- Slides: 9