
Bibliometric Indicators: Why do we need more than one?
Gianluca Setti
Department of Engineering, University of Ferrara
gianluca.setti@unife.it
First Meeting of GEV 09, Rome, 05/10/2015

Outline
1. Overview of journal bibliometric indicators
2. Show that the "quality" of a journal, as measured by journal bibliometric indicators, is a multidimensional concept that cannot be captured by any single indicator
3. Show that bibliometric indicators should not be misused by giving them "more significance than they have":
   a) the impact of an individual paper cannot be measured by the impact of the journal in which it appeared
   b) there is no strong correlation between the Impact Factor of a journal and its selectivity (rejection rate)
   c) the Impact Factor of a journal is not a good proxy for the probability that an individual paper will be highly cited
4. Highlight that the misuse of journal bibliometric indicators has undesired consequences for the behavior of editors and individuals
06-Jun-21

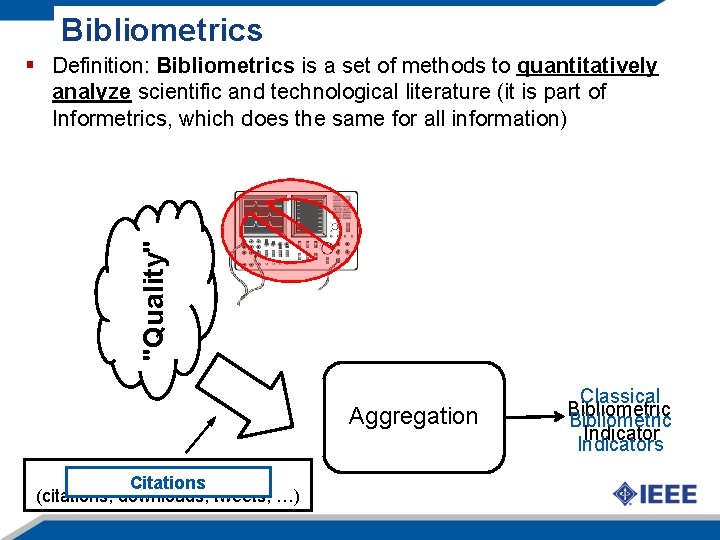

Bibliometrics
§ Definition: bibliometrics is a set of methods to quantitatively analyze scientific and technological literature (it is part of informetrics, which does the same for all information)
[Figure: citations (citations, downloads, tweets, …) are aggregated into classical bibliometric indicators, which serve as a proxy of "quality"]

Journal Bibliometric Indicators, i.e., …numbers, numbers…
Many bibliometric indicators exist, each aiming to measure "journal quality"; they should:
1. Give a result that corresponds to the technical quality of the papers published in that journal: Nature, Science or Proceedings of the IEEE and the "Journal of Obscurity" should have very different values of the indicator
2. Be "fair" when applied to different areas: different areas/communities may have different citation practices (e.g., long/short reference lists)
3. Be immune to external manipulation: it should be very difficult to artificially manipulate its value

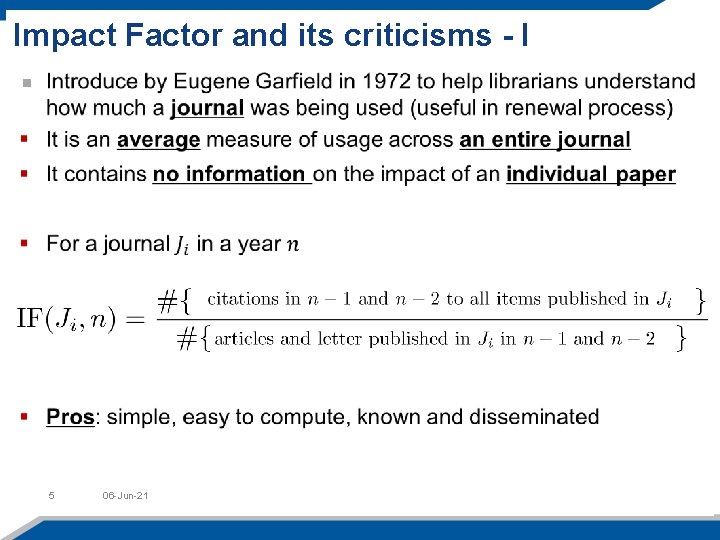

Impact Factor and its criticisms - I

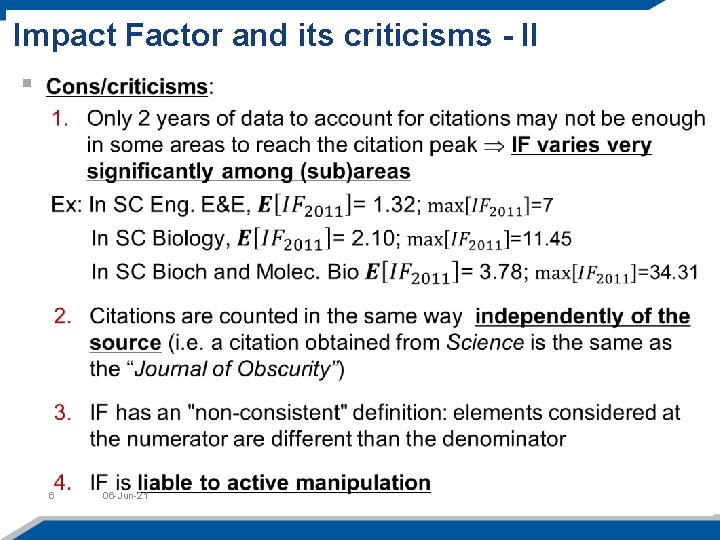

Impact Factor and its criticisms - II

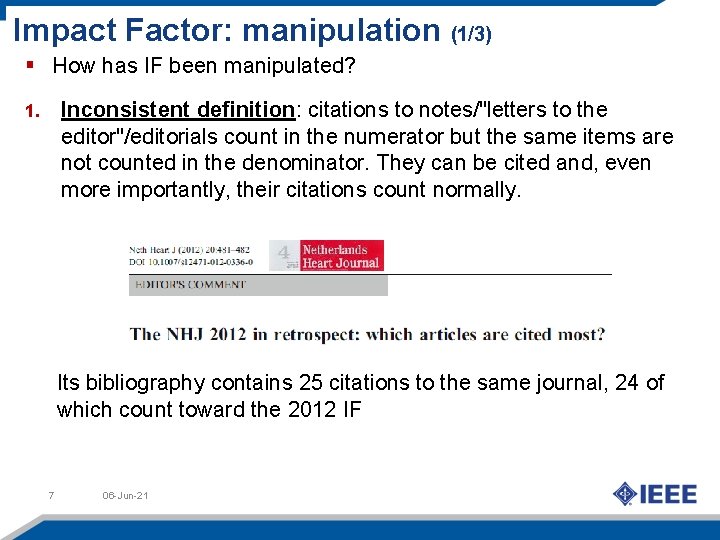

Impact Factor: manipulation (1/3)
§ How has the IF been manipulated?
§ Inconsistent definition: citations to notes, "letters to the editor" and editorials count in the numerator, but the same items are not counted in the denominator. These items can be cited and, even more importantly, their citations count normally.
1. Its bibliography contains 25 citations to the same journal, 24 of which count toward the 2012 IF

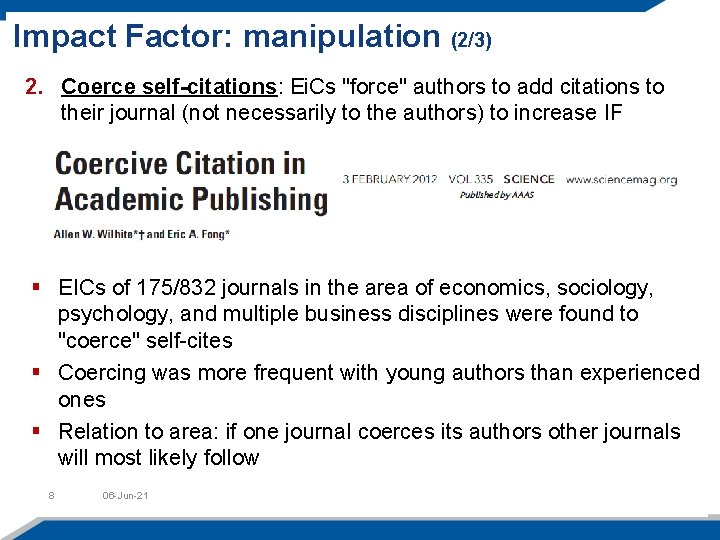
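To make the numerator/denominator inconsistency concrete, here is a minimal sketch of the standard two-year IF (citations received in year Y by items published in Y-1 and Y-2, divided by the citable items published in those two years). The `Item` record, the `count_non_citable` switch and all the counts are hypothetical, chosen only to show how citations to editorials inflate the ratio.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    """One published item of a hypothetical journal."""
    pub_year: int
    citable: bool  # True for articles/reviews; False for editorials, letters, notes
    citations_by_year: dict = field(default_factory=dict)  # citing year -> count


def impact_factor(items, year, count_non_citable=True):
    """Two-year IF for `year`.

    Numerator: citations received in `year` by items published in the two
    previous years. With count_non_citable=True, citations to editorials,
    letters and notes are included, mirroring the inconsistency above.
    Denominator: only the citable items from the same two years.
    """
    window = (year - 1, year - 2)
    in_window = [it for it in items if it.pub_year in window]
    numerator = sum(
        it.citations_by_year.get(year, 0)
        for it in in_window
        if it.citable or count_non_citable
    )
    denominator = sum(1 for it in in_window if it.citable)
    return numerator / denominator


# Hypothetical journal: three research articles and one editorial.
items = [
    Item(2010, True, {2012: 4}),
    Item(2011, True, {2012: 2}),
    Item(2011, True, {2012: 0}),
    Item(2011, False, {2012: 6}),  # editorial: cited, but absent from the denominator
]

print(impact_factor(items, 2012))                           # inconsistent counting
print(impact_factor(items, 2012, count_non_citable=False))  # consistent counting
```

In this toy case the six citations to a single editorial double the ratio, even though the editorial never enters the denominator.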

Impact Factor: manipulation (2/3)
2. Coerced self-citations: EiCs "force" authors to add citations to their journal (not necessarily to the authors' own papers) to increase the IF
§ The EiCs of 175 of 832 journals in economics, sociology, psychology, and multiple business disciplines were found to "coerce" self-citations
§ Coercion was more frequent with young authors than with experienced ones
§ Relation to area: if one journal coerces its authors, other journals will most likely follow

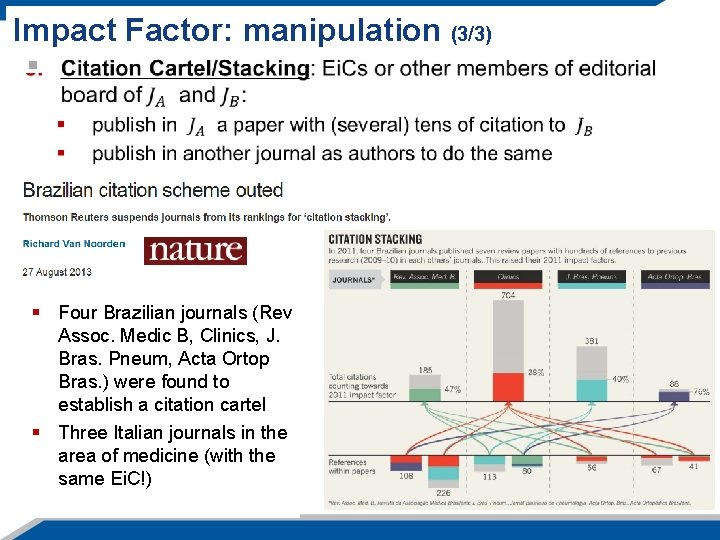

Impact Factor: manipulation (3/3)
§ Four Brazilian journals (Rev Assoc. Medic B, Clinics, J. Bras. Pneum, Acta Ortop Bras.) were found to have established a citation cartel
§ Three Italian journals in the area of medicine (with the same EiC!)

What is wrong with this conference paper?

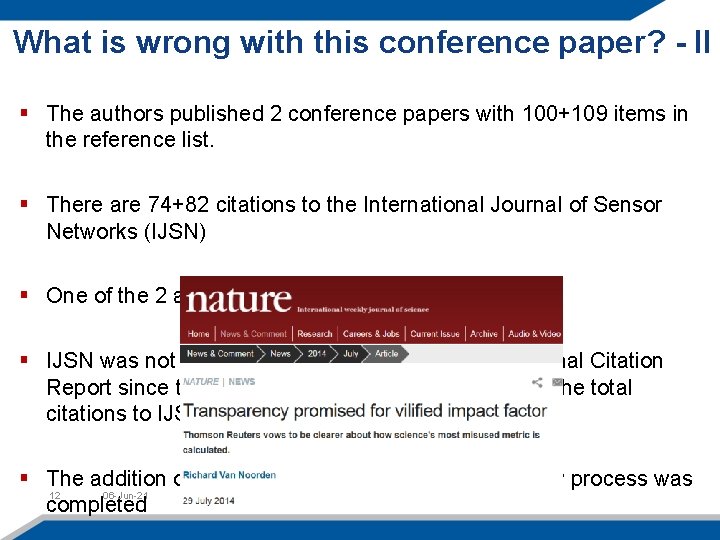

What is wrong with this conference paper? - II
§ The authors published 2 conference papers with 100 + 109 items in their reference lists.
§ Of these, 74 + 82 are citations to the International Journal of Sensor Networks (IJSN).
§ One of the 2 authors is the EiC of IJSN.
§ IJSN was not included by Thomson in the 2013 Journal Citation Reports, since the above citations account for 82% of the total citations to IJSN.
§ The citations were added after the review process was completed.

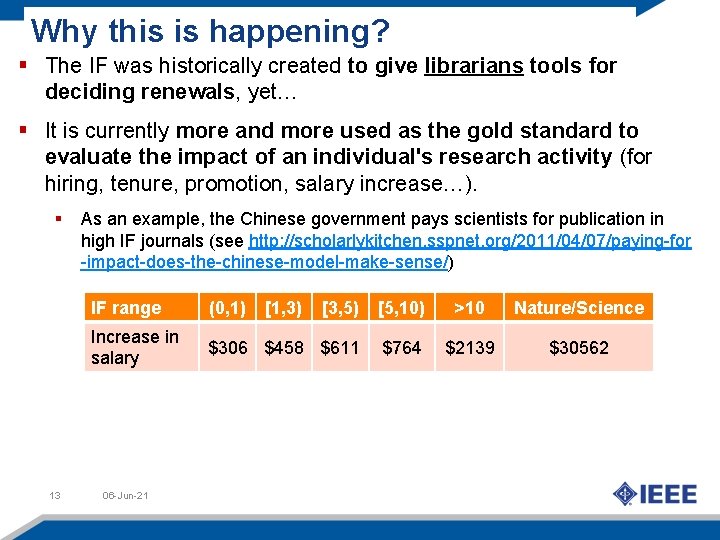

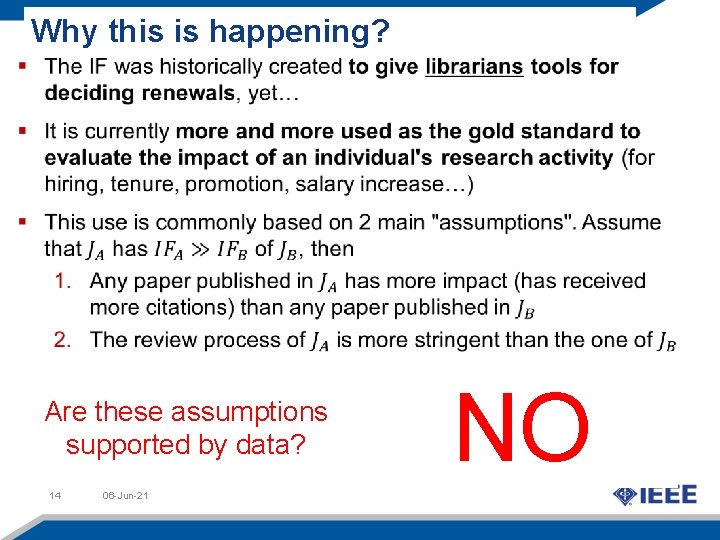
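As a quick check on the figures for these two conference papers, the share of their combined reference lists that points to IJSN is:

```latex
\frac{74 + 82}{100 + 109} = \frac{156}{209} \approx 0.746
```

That is, roughly three out of every four references in the two papers cite the journal edited by one of the authors.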

Why is this happening?
§ The IF was historically created to give librarians a tool for deciding subscription renewals, yet…
§ It is increasingly used as the gold standard to evaluate the impact of an individual's research activity (for hiring, tenure, promotion, salary increase…).
§ As an example, the Chinese government pays scientists for publication in high-IF journals (see http://scholarlykitchen.sspnet.org/2011/04/07/paying-for-impact-does-the-chinese-model-make-sense/):

| IF range | (0, 1) | [1, 3) | [3, 5) | [5, 10) | >10 | Nature/Science |
|---|---|---|---|---|---|---|
| Increase in salary | $306 | $458 | $611 | $764 | $2139 | $30562 |

Why is this happening?
Are these assumptions supported by data? NO

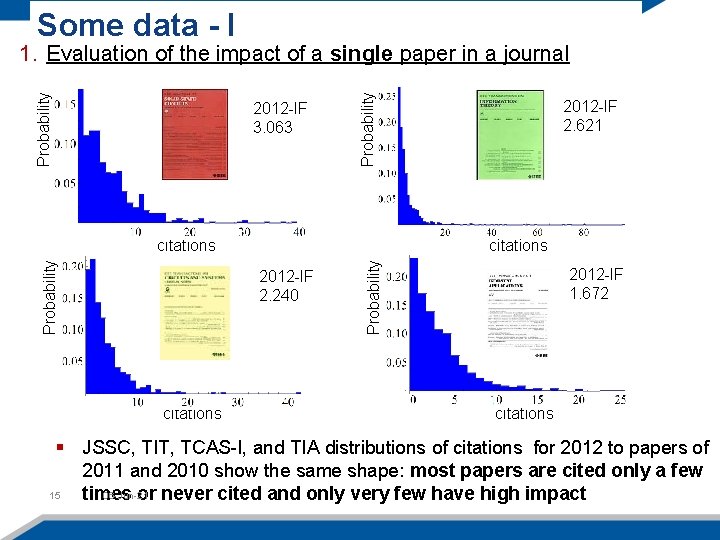

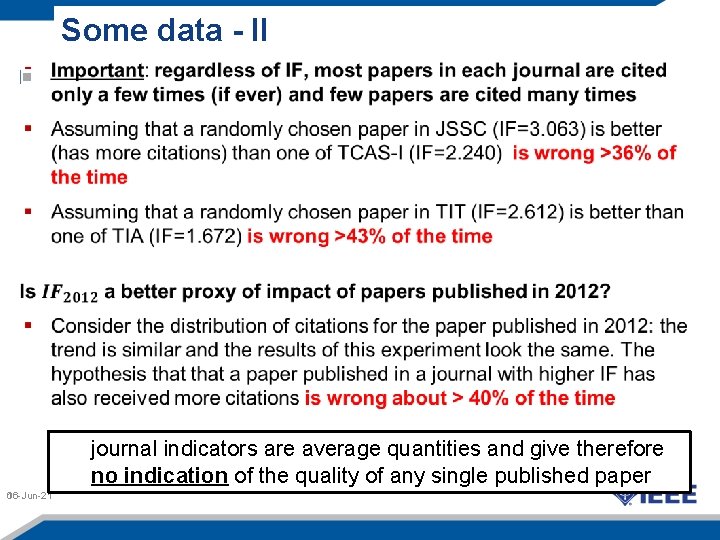

Some data - I
1. Evaluation of the impact of a single paper in a journal
[Figure: probability vs. number of citations for four journals (2012 IF 3.063, 2.240, 2.621 and 1.672)]
§ For JSSC, TIT, TCAS-I, and TIA, the distributions of citations received in 2012 by papers published in 2011 and 2010 show the same shape: most papers are cited only a few times or never, and only very few have high impact

Some data - II
§ Journal indicators are average quantities and therefore give no indication of the quality of any single published paper

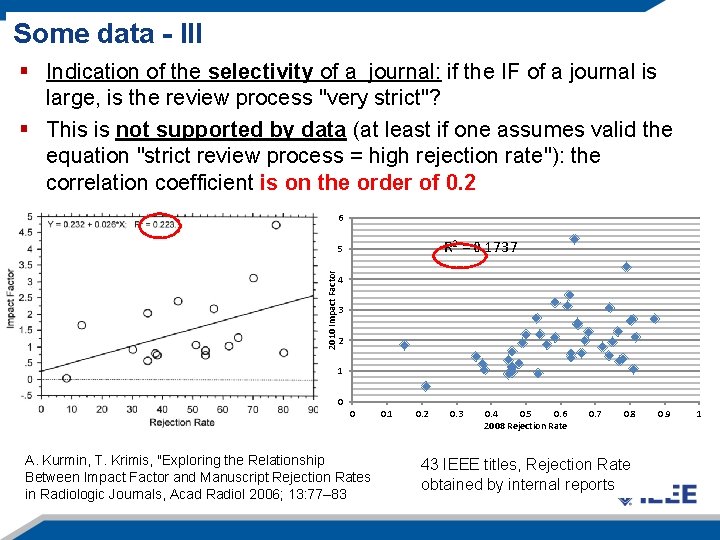
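A small illustration of why an average says little about a single paper: with a skewed citation distribution like the ones above, the mean (the quantity an IF-like indicator reports) sits far above what a typical paper receives. The citation counts below are hypothetical.

```python
import statistics

# Hypothetical citation counts for ten papers in one journal window:
# most are cited rarely, one is cited heavily (the skewed shape seen above).
citations = [0, 0, 0, 1, 1, 1, 2, 2, 3, 50]

mean = statistics.mean(citations)      # what an IF-like average reports
median = statistics.median(citations)  # what a "typical" paper receives
share_at_or_above_mean = sum(c >= mean for c in citations) / len(citations)

print(f"mean={mean}, median={median}, share>=mean={share_at_or_above_mean}")
```

Here only one paper in ten reaches the journal average, which is driven almost entirely by that single paper.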

Some data - III
§ Indication of the selectivity of a journal: if the IF of a journal is large, is its review process "very strict"?
§ This is not supported by data (at least if one accepts the equation "strict review process = high rejection rate"): the coefficient of determination is only on the order of 0.2 (R² = 0.1737, i.e., a correlation coefficient of about 0.42)
[Figure: 2010 Impact Factor (0 to 6) vs. 2008 rejection rate (0 to 1) for 43 IEEE titles, rejection rates obtained from internal reports; R² = 0.1737]
A. Kurmis, T. Kurmis, "Exploring the Relationship Between Impact Factor and Manuscript Rejection Rates in Radiologic Journals," Acad Radiol 2006; 13: 77–83

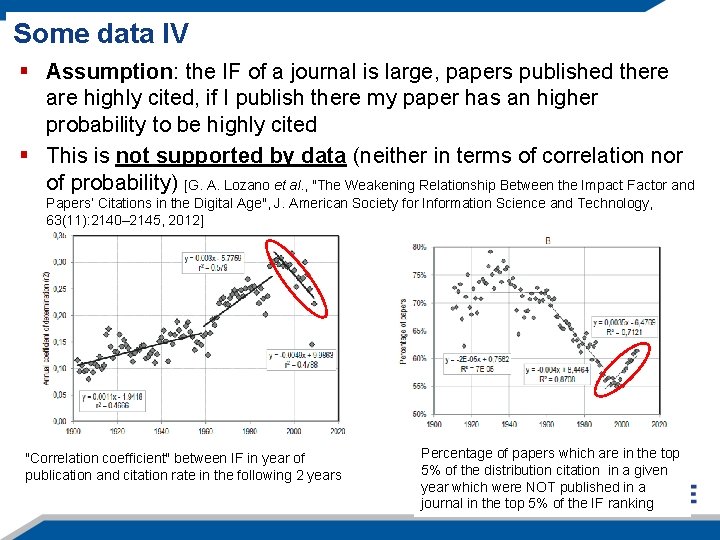
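Since R² and the correlation coefficient are easy to confuse, the sketch below computes the Pearson correlation coefficient r from scratch and recalls that, for a simple least-squares line, R² = r². The (rejection rate, IF) pairs are hypothetical and only exercise the function; the 0.1737 value is the one reported on the slide.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# For a simple least-squares line, R^2 equals r^2, so the reported
# R^2 = 0.1737 corresponds to |r| of about 0.42.
r_from_slide = math.sqrt(0.1737)

# Hypothetical (rejection rate, IF) pairs, only to exercise the function.
rejection = [0.2, 0.4, 0.5, 0.6, 0.8]
impact = [1.0, 3.0, 1.5, 2.0, 2.5]
r = pearson_r(rejection, impact)

print(f"|r| implied by R^2 = 0.1737: {r_from_slide:.3f}")
print(f"toy data: r = {r:.3f}, R^2 = {r * r:.3f}")
```

Either way, an R² below 0.2 means rejection rate accounts for less than a fifth of the variance in IF.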

Some data - IV
§ Assumption: if the IF of a journal is large, papers published there are highly cited; so if I publish there, my paper has a higher probability of being highly cited
§ This is not supported by data (neither in terms of correlation nor of probability) [G. A. Lozano et al., "The Weakening Relationship Between the Impact Factor and Papers’ Citations in the Digital Age," J. American Society for Information Science and Technology, 63(11): 2140–2145, 2012]
[Figure, left: "correlation coefficient" between the IF in the year of publication and the citation rate in the following 2 years. Figure, right: percentage of papers in the top 5% of the citation distribution in a given year that were NOT published in a journal in the top 5% of the IF ranking]
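The second quantity in the Lozano et al. figure can be sketched as follows: take the top-q most-cited papers and count how many did not appear in a top-q journal by IF. This is a simplified reading, assuming one IF per journal and a ranking taken only over the journals in the sample; the paper tuples and the q = 0.2 used in the example are hypothetical (the study uses the top 5%).

```python
def share_top_cited_outside_top_if(papers, q=0.05):
    """papers: list of (journal_name, journal_if, citations), one per paper.

    Returns the fraction of the top-q most-cited papers that were NOT
    published in a journal within the top-q of the IF ranking (ranking taken
    over the journals appearing in `papers`).
    """
    n_top_papers = max(1, round(q * len(papers)))
    top_cited = sorted(papers, key=lambda p: p[2], reverse=True)[:n_top_papers]

    journal_if = {name: jif for name, jif, _ in papers}
    n_top_journals = max(1, round(q * len(journal_if)))
    ranked = sorted(journal_if, key=journal_if.get, reverse=True)
    top_journals = set(ranked[:n_top_journals])

    outside = sum(1 for name, _, _ in top_cited if name not in top_journals)
    return outside / n_top_papers


# Hypothetical sample: 10 papers in 5 journals.
papers = [
    ("J1", 8.0, 5), ("J1", 8.0, 28),
    ("J2", 4.0, 30), ("J2", 4.0, 1),
    ("J3", 2.5, 3), ("J3", 2.5, 25),
    ("J4", 1.2, 0), ("J4", 1.2, 2),
    ("J5", 0.8, 4), ("J5", 0.8, 1),
]
print(share_top_cited_outside_top_if(papers, q=0.2))
```

In this toy sample, half of the most-cited papers come from outside the highest-IF journal.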

Why is this happening?
§ While the IF was historically created to help librarians, it is misused to evaluate individuals' research activity (for hiring, tenure, promotion…)
§ This unintended use made the IF a target rather than a measure and created incentives for its manipulation. From an evaluation viewpoint: “when a measure becomes a target, it ceases to be a good measure” - Goodhart’s law (from D. Arnold, K. Fowler, "Nefarious Numbers", Notices of the AMS, vol. 58, no. 3, pp. 434-437)
§ According to a 2013 Nature article by Richard Van Noorden, the EiCs of the 4 journals involved in a citation cartel created it because "In Brazil, an agency in the education ministry, called CAPES, evaluates graduate programmes in part by the impact factors of the journals in which students publish research"

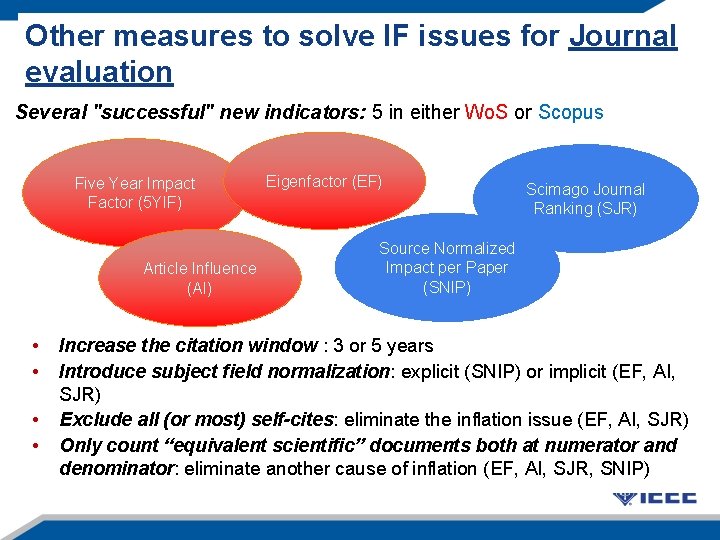

Other measures to solve IF issues for journal evaluation
§ Several "successful" new indicators, 5 of them in either WoS or Scopus:
• Five Year Impact Factor (5YIF)
• Article Influence (AI)
• Eigenfactor (EF)
• Scimago Journal Ranking (SJR)
• Source Normalized Impact per Paper (SNIP)
§ To solve the IF's technical issues, they:
• Increase the citation window: 3 or 5 years
• Introduce subject field normalization: explicit (SNIP) or implicit (EF, AI, SJR)
• Exclude all (or most) self-cites: eliminate the inflation issue (EF, AI, SJR)
• Only count "equivalent scientific" documents in both the numerator and the denominator: eliminate another cause of inflation (EF, AI, SJR, SNIP)
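Two of these fixes, a longer citation window and the exclusion of self-citations, can be expressed as parameters of one IF-style ratio. The sketch below is not the definition of any specific commercial indicator: `window=5` approximates the five-year IF, and `exclude_self` only drops journal self-citations from the numerator. The journal name "J" and all counts are hypothetical.

```python
def windowed_impact(citations, citable_items, journal, year, window=2,
                    exclude_self=False):
    """IF-style ratio for `journal` in `year`.

    citations: iterable of (citing_journal, citing_year, cited_pub_year)
        tuples, one per citation received by `journal`.
    citable_items: dict pub_year -> number of citable items of `journal`.
    window=2 gives the classic IF; window=5 gives the five-year IF.
    exclude_self=True drops journal self-citations from the numerator.
    """
    pub_years = range(year - window, year)  # e.g. 2010..2011 for year=2012, window=2
    numerator = sum(
        1
        for citing, citing_year, pub_year in citations
        if citing_year == year
        and pub_year in pub_years
        and not (exclude_self and citing == journal)
    )
    denominator = sum(citable_items.get(y, 0) for y in pub_years)
    return numerator / denominator


# Hypothetical journal "J": 10 citable items per year, citations received in 2012.
citable = {2007: 10, 2008: 10, 2009: 10, 2010: 10, 2011: 10}
cites = (
    [("Other", 2012, 2011)] * 20
    + [("Other", 2012, 2010)] * 10
    + [("J", 2012, 2011)] * 30  # self-citations of journal "J"
    + [("Other", 2012, 2008)] * 10
    + [("Other", 2012, 2007)] * 10
)
for window in (2, 5):
    for exclude_self in (False, True):
        value = windowed_impact(cites, citable, "J", 2012, window, exclude_self)
        print(f"window={window}, exclude_self={exclude_self}: {value:.2f}")
```

With these numbers the two-year ratio halves (3.00 to 1.50) once self-citations are removed, while the five-year window dilutes the effect of a recent burst.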

Popularity vs Prestige
§ An important distinction is between indicators measuring popularity and indicators measuring prestige:
1. Popularity indicators are based on an algebraic formula and count citations directly, independently of their source (IF, 5YIF, SNIP)
2. Prestige indicators are based on a recursive formula and weight each citation according to its source (EF, AI, SJR)
§ They evaluate different aspects of journal impact: at the very minimum, one needs to use both popularity (e.g., IF, 5YIF) and prestige (e.g., AI, SJR) indicators

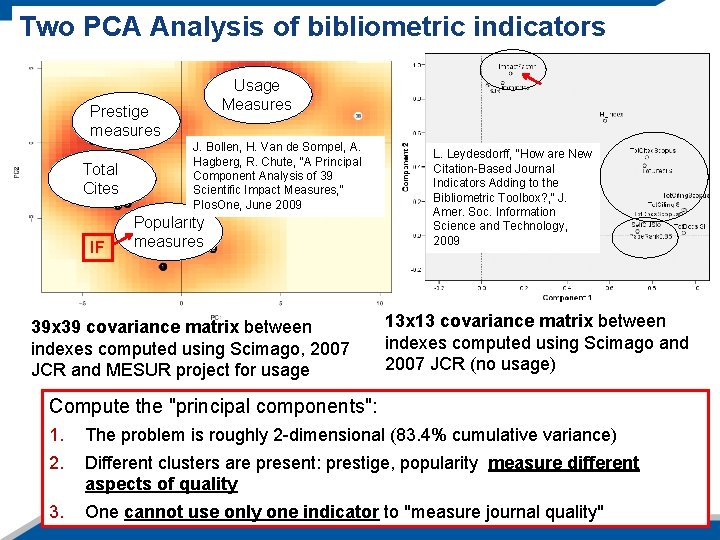

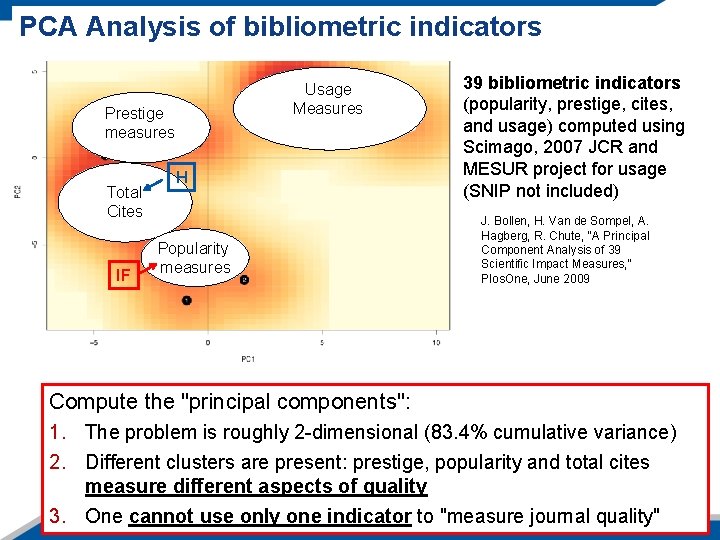
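The difference between the two families can be seen on a toy citation graph. Below, popularity is the raw count of citations received, while prestige is the stationary distribution of a damped random walk over citations, computed by power iteration. This is a generic PageRank-style sketch: the damping factor 0.85 and the uniform teleportation are assumptions, not the exact recipe of EF, AI or SJR, and the four journals are hypothetical.

```python
def popularity(cites):
    """Raw citations received by each journal: column sums of `cites`,
    where cites[i][j] is the number of citations from journal i to journal j."""
    n = len(cites)
    return [sum(cites[i][j] for i in range(n)) for j in range(n)]


def prestige(cites, alpha=0.85, iterations=200):
    """Stationary distribution of a damped random walk on the citation graph
    (PageRank-style power iteration with uniform teleportation).
    Assumes every journal cites at least one other journal."""
    n = len(cites)
    given = [sum(row) for row in cites]  # total citations given by each journal
    pi = [1.0 / n] * n
    for _ in range(iterations):
        pi = [
            (1 - alpha) / n
            + alpha * sum(pi[i] * cites[i][j] / given[i] for i in range(n))
            for j in range(n)
        ]
    return pi


# Hypothetical journals A, B, C, D (no self-citations on the diagonal).
cites = [
    [0, 10, 0, 0],   # A cites B
    [10, 0, 0, 0],   # B cites A
    [10, 0, 0, 0],   # C cites A
    [0, 0, 10, 0],   # D cites C; nobody cites D
]
pop = popularity(cites)
pres = prestige(cites)
for name, p, s in zip("ABCD", pop, pres):
    print(f"{name}: citations={p}, prestige={s:.3f}")
```

B and C receive the same 10 citations, so a popularity indicator cannot tell them apart. B's citations come from A, which is itself highly cited, so B's prestige is about six times C's.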

Two PCA analyses of bibliometric indicators
§ J. Bollen, H. Van de Sompel, A. Hagberg, R. Chute, "A Principal Component Analysis of 39 Scientific Impact Measures," PLoS ONE, June 2009: 39 × 39 covariance matrix between indexes computed using Scimago, the 2007 JCR and the MESUR project (for usage)
§ L. Leydesdorff, "How are New Citation-Based Journal Indicators Adding to the Bibliometric Toolbox?," J. Amer. Soc. Information Science and Technology, 2009: 13 × 13 covariance matrix between indexes computed using Scimago and the 2007 JCR (no usage)
[Figure: indicators projected on the first two principal components, with clusters labeled usage measures, prestige measures, popularity measures, total cites and IF]
Compute the "principal components":
1. The problem is roughly 2-dimensional (83.4% cumulative variance)
2. Different clusters are present: prestige and popularity measure different aspects of quality
3. One cannot use only one indicator to "measure journal quality"

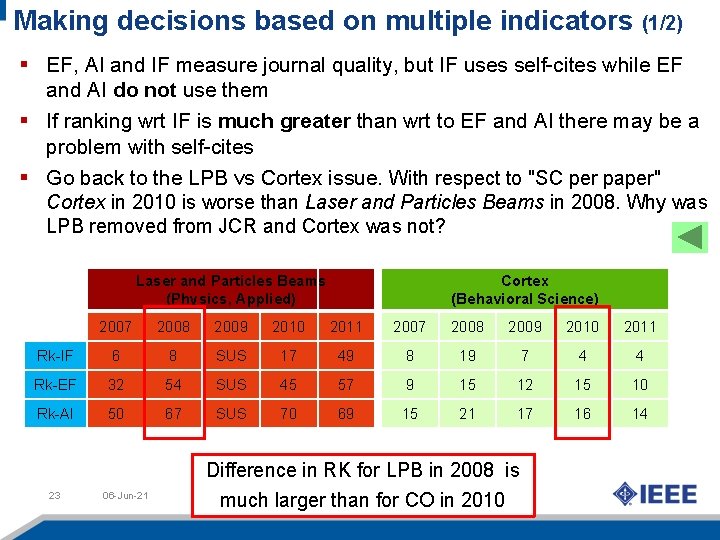

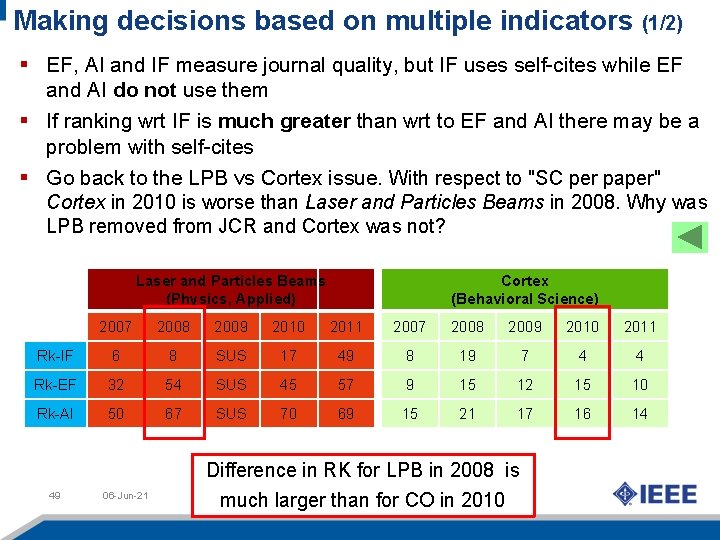
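The "roughly 2-dimensional" conclusion comes from the share of variance carried by each principal component. A minimal sketch of that computation follows: it standardizes each indicator and takes the eigenvalues of their covariance matrix. The data are synthetic (two hypothetical latent factors), and the preprocessing choices here may differ from those in the cited studies.

```python
import numpy as np


def explained_variance_ratio(X):
    """PCA on standardized indicators.

    X has shape (n_journals, n_indicators). Returns the share of total
    variance carried by each principal component, largest first.
    """
    Z = (X - X.mean(axis=0)) / X.std(axis=0)
    eigenvalues = np.linalg.eigvalsh(np.cov(Z, rowvar=False))
    eigenvalues = np.sort(eigenvalues)[::-1]
    return eigenvalues / eigenvalues.sum()


# Synthetic data: 200 journals, 6 indicators. Three indicators track a latent
# "popularity" factor and three a latent "prestige" factor, plus small noise.
rng = np.random.default_rng(0)
popularity = rng.normal(size=200)
prestige = rng.normal(size=200)
X = np.column_stack(
    [popularity + 0.1 * rng.normal(size=200) for _ in range(3)]
    + [prestige + 0.1 * rng.normal(size=200) for _ in range(3)]
)

ratios = explained_variance_ratio(X)
cumulative = np.cumsum(ratios)
print("variance share per component:", np.round(ratios, 3))
print("cumulative:", np.round(cumulative, 3))
```

Because the indicators were built from two independent factors, the first two components carry nearly all the variance. A single indicator would capture only one of the two.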

Making decisions based on multiple indicators (1/2)
§ EF, AI and IF measure journal quality, but the IF includes self-cites while EF and AI do not
§ If a journal ranks much higher by IF than by EF and AI, there may be a problem with self-cites
§ Go back to the LPB vs Cortex issue. With respect to self-cites (SC) per paper, Cortex in 2010 is worse than Laser and Particle Beams in 2008. Why was LPB removed from the JCR and Cortex was not?

Laser and Particle Beams (LPB, Physics, Applied) and Cortex (CO, Behavioral Science), rank positions:

| Rank | 2007 | 2008 | 2009 | 2010 | 2011 |
|---|---|---|---|---|---|
| LPB Rk-IF | 6 | 8 | SUS | 17 | 49 |
| LPB Rk-EF | 32 | 54 | SUS | 45 | 57 |
| LPB Rk-AI | 50 | 67 | SUS | 70 | 69 |
| CO Rk-IF | 8 | 19 | 7 | 4 | 4 |
| CO Rk-EF | 9 | 15 | 12 | 15 | 10 |
| CO Rk-AI | 15 | 21 | 17 | 16 | 14 |

The difference in rank for LPB in 2008 is much larger than for CO in 2010

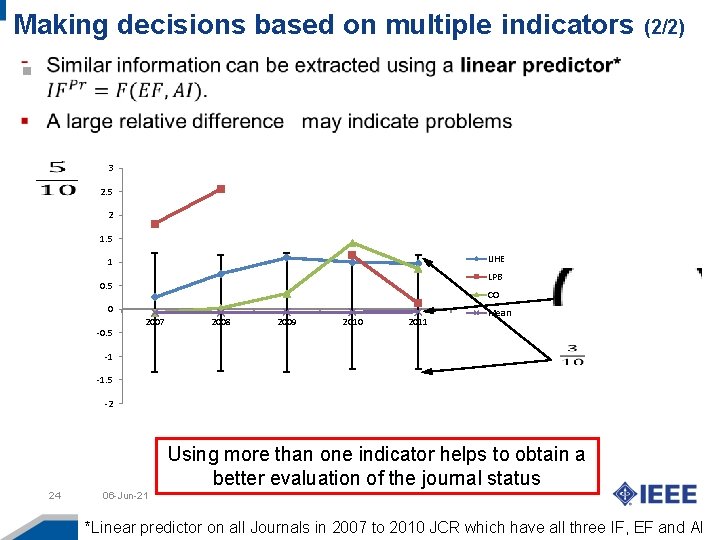

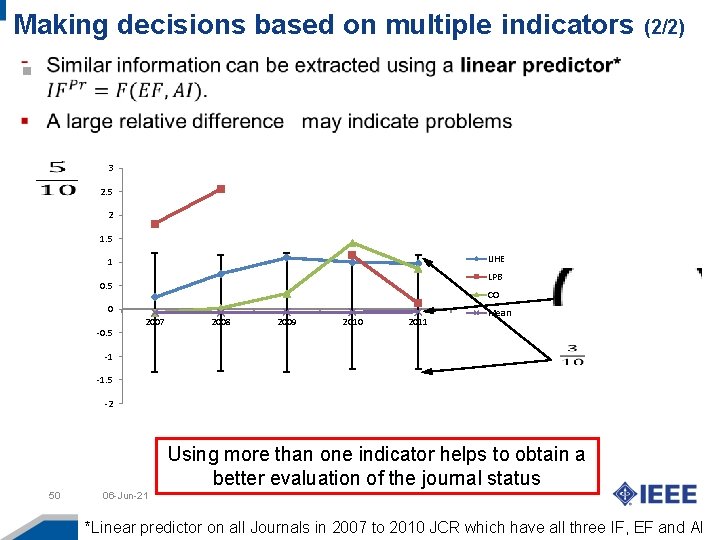
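The rank comparison for LPB and Cortex can be written as a simple check: how many positions better a journal ranks by IF than by EF and AI in a given year. The rank values are taken from the LPB/Cortex table on the slide above, with `None` standing for the "SUS" year. Any threshold for calling a gap "large" would be a human judgment call and is deliberately not encoded.

```python
# Rank positions (1 = best) from the LPB vs Cortex table; None marks "SUS".
ranks = {
    "LPB": {
        "IF": {2007: 6, 2008: 8, 2009: None, 2010: 17, 2011: 49},
        "EF": {2007: 32, 2008: 54, 2009: None, 2010: 45, 2011: 57},
        "AI": {2007: 50, 2008: 67, 2009: None, 2010: 70, 2011: 69},
    },
    "Cortex": {
        "IF": {2007: 8, 2008: 19, 2009: 7, 2010: 4, 2011: 4},
        "EF": {2007: 9, 2008: 15, 2009: 12, 2010: 15, 2011: 10},
        "AI": {2007: 15, 2008: 21, 2009: 17, 2010: 16, 2011: 14},
    },
}


def rank_gaps(journal, year):
    """Positions gained by ranking on IF instead of EF or AI.

    Large positive gaps hint that the IF may be inflated (e.g., by
    self-citations), since EF and AI ignore self-cites.
    """
    r = ranks[journal]
    if r["IF"][year] is None:
        return None
    return {ind: r[ind][year] - r["IF"][year] for ind in ("EF", "AI")}


print("LPB 2008:", rank_gaps("LPB", 2008))
print("Cortex 2010:", rank_gaps("Cortex", 2010))
```

LPB in 2008 ranks 46 and 59 positions better by IF than by EF and AI. Cortex in 2010 gains only 11 and 12 positions, which is the asymmetry the slide points out.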

Making decisions based on multiple indicators (2/2)
[Figure: yearly values from 2007 to 2011 (vertical axis from -2 to 3) for IJHE, LPB and CO, together with the mean]*
§ Using more than one indicator helps to obtain a better evaluation of the journal status
*Linear predictor on all journals in the 2007 to 2010 JCR that have all three of IF, EF and AI

Several organizations took positions against bibliometric misuse
§ Several other research agencies and professional organizations in the areas of Physics, Medical Sciences and Biology took positions against the misuse and abuse of bibliometrics

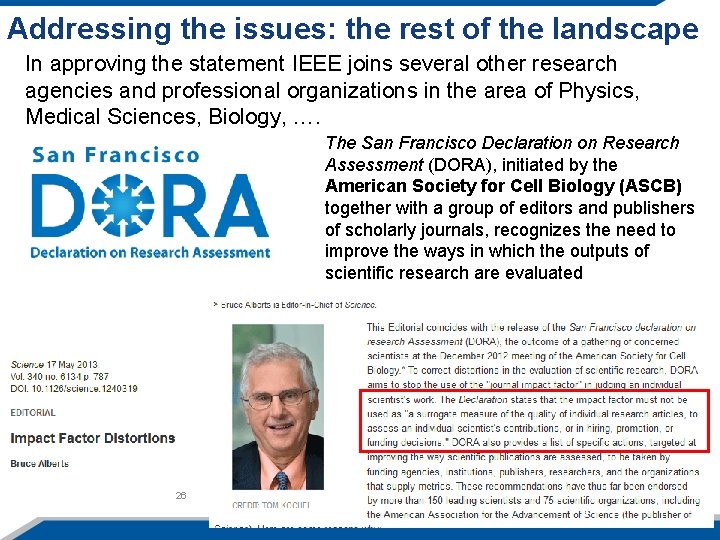

Addressing the issues: the rest of the landscape
§ In approving the statement, IEEE joins several other research agencies and professional organizations in the areas of Physics, Medical Sciences, Biology, …
§ The San Francisco Declaration on Research Assessment (DORA), initiated by the American Society for Cell Biology (ASCB) together with a group of editors and publishers of scholarly journals, recognizes the need to improve the ways in which the outputs of scientific research are evaluated

Addressing the issues: the rest of the landscape

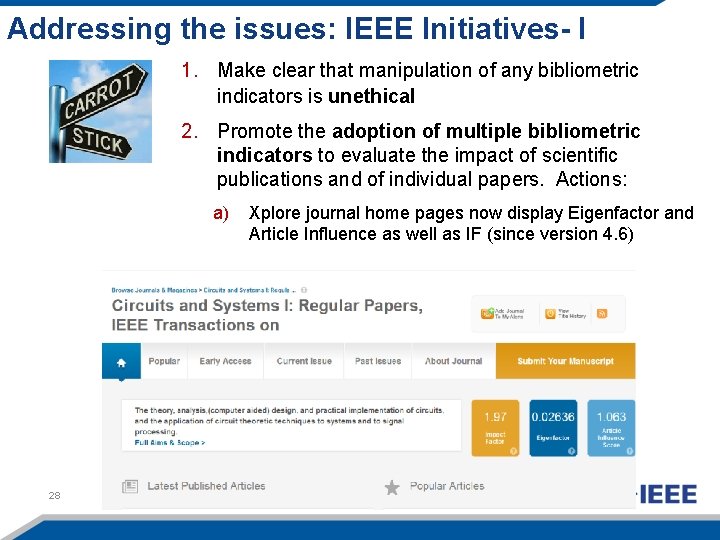

Addressing the issues: IEEE Initiatives - I
1. Make clear that manipulation of any bibliometric indicator is unethical
2. Promote the adoption of multiple bibliometric indicators to evaluate the impact of scientific publications and of individual papers. Actions:
   a) Xplore journal home pages now display the Eigenfactor and the Article Influence as well as the IF (since version 4.6)

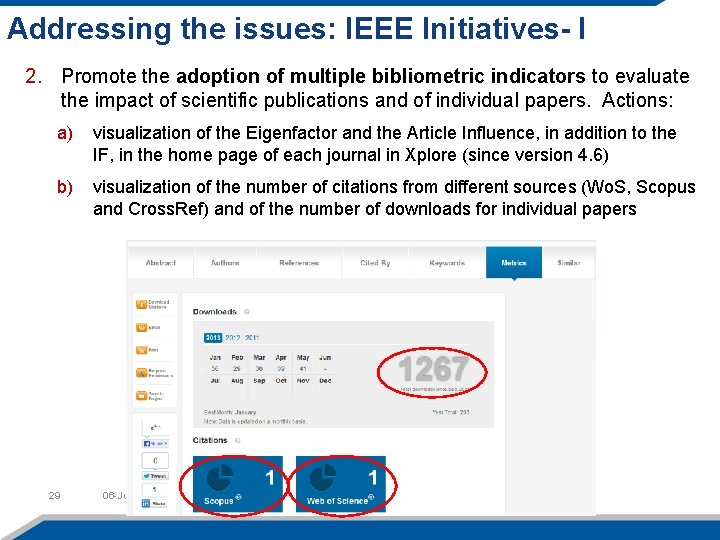

Addressing the issues: IEEE Initiatives - I
2. Promote the adoption of multiple bibliometric indicators to evaluate the impact of scientific publications and of individual papers. Actions:
   a) display of the Eigenfactor and the Article Influence, in addition to the IF, on the home page of each journal in Xplore (since version 4.6)
   b) display of the number of citations from different sources (WoS, Scopus and CrossRef) and of the number of downloads for individual papers

Addressing the issues: IEEE Initiatives - II
3. Educate the community on the significance of all bibliometric indicators and their proper use. Actions:
   a) panel discussions at the 2013, 2014 and 2015 IEEE EiC meetings
   b) presentations on this subject at major IEEE conferences and other venues (NSF, ECEDHA, …)
   c) preparation of white papers and articles on this subject to be distributed to the IEEE community
DOI: 10.1109/ACCESS.2013.2261115

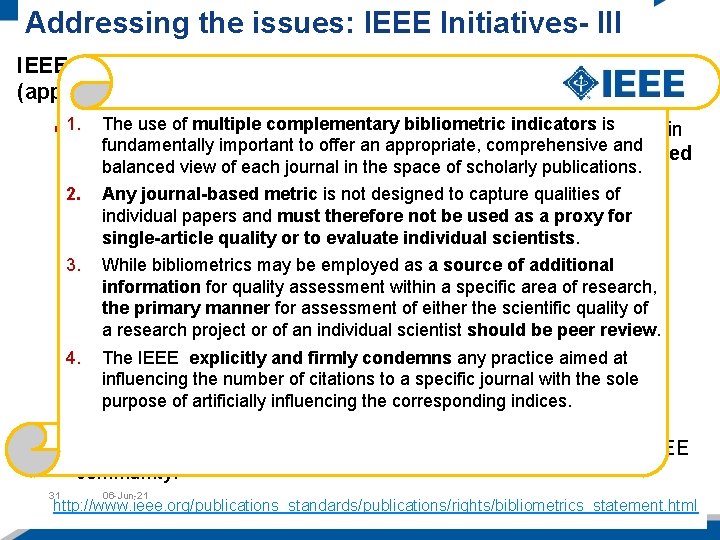

Addressing the issues: IEEE Initiatives - III
IEEE position statement on the correct use of bibliometrics (approved by the BoD in 09/2013):
1. The use of multiple complementary bibliometric indicators is fundamentally important to offer an appropriate, comprehensive and balanced view of each journal in the space of scholarly publications.
2. Any journal-based metric is not designed to capture qualities of individual papers and must therefore not be used as a proxy for single-article quality or to evaluate individual scientists.
3. While bibliometrics may be employed as a source of additional information for quality assessment within a specific area of research, the primary manner for assessment of either the scientific quality of a research project or of an individual scientist should be peer review.
4. The IEEE explicitly and firmly condemns any practice aimed at influencing the number of citations to a specific journal with the sole purpose of artificially influencing the corresponding indices.
§ IEEE joins several professional and scientific institutions (but none in the area of Engineering)
§ It is important to stress that bibliometrics cannot be used (alone) to obtain an automatic evaluation of a single researcher's "scientific quality"
§ A web page was created to make the statement available to the IEEE community: http://www.ieee.org/publications_standards/publications/rights/bibliometrics_statement.html

Concluding Remarks
1. Many journal bibliometric indicators exist, each aiming to measure a journal's scientific impact, and they measure it in different ways
2. One cannot use a single indicator (neither the IF nor any other) to measure journal impact. At the very least, one needs to use:
   a) one popularity indicator (e.g., the IF or the 5YIF)
   b) one prestige indicator (e.g., the AI or the SJR)
3. The use of multiple indicators provides a much more accurate evaluation of a journal’s impact and can also reveal existing anomalies (manipulations)
4. Journal bibliometrics cannot be employed as the sole measure of the quality of single papers or to evaluate single scientists

Backup Slides

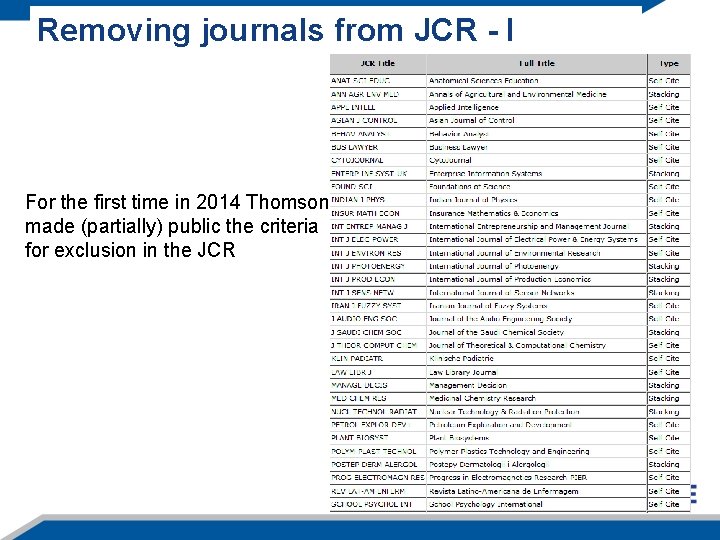

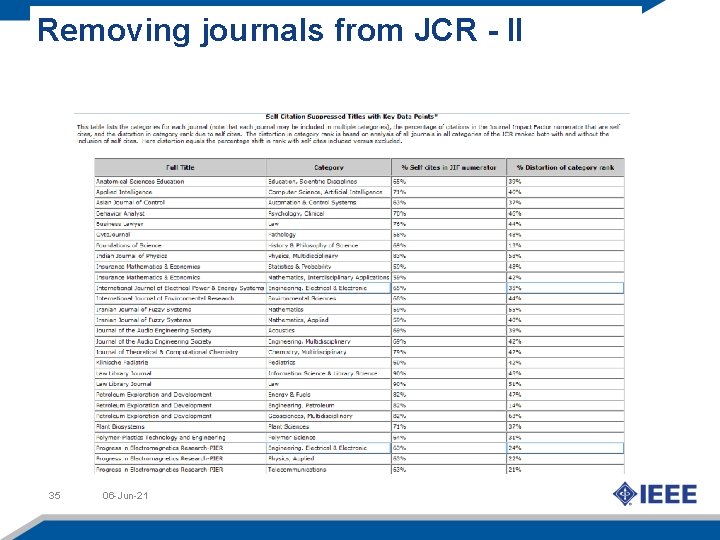

Removing journals from JCR - I
§ In 2014, for the first time, Thomson made the criteria for exclusion from the JCR (partially) public

Removing journals from JCR - II

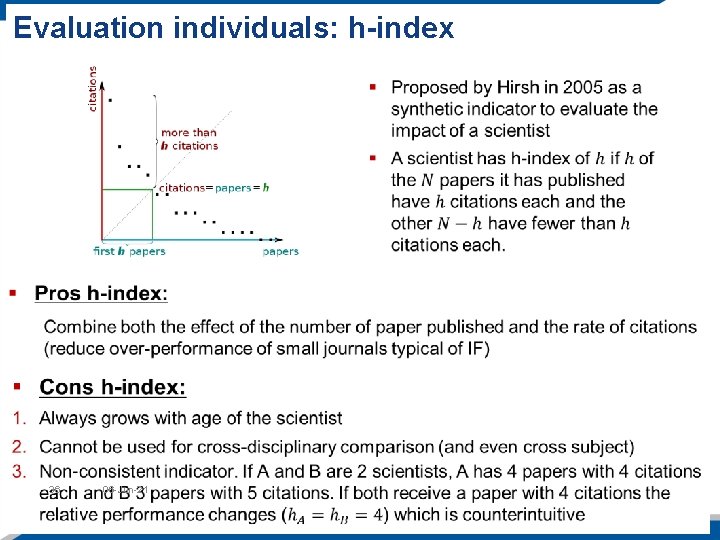

Evaluating individuals: h-index

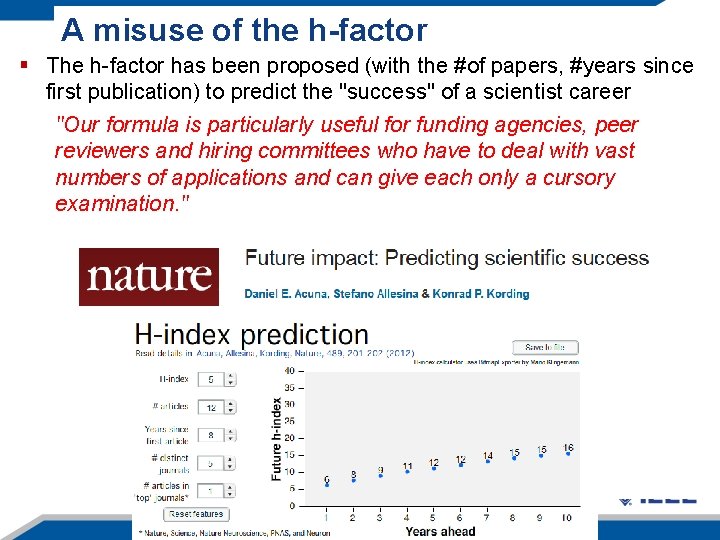
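For reference, the h-index of a set of papers is the largest h such that h of the papers have at least h citations each. A minimal sketch follows; the citation lists in the usage line are hypothetical.

```python
def h_index(citations):
    """Largest h such that at least h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for position, count in enumerate(ranked, start=1):
        if count >= position:
            h = position
        else:
            break
    return h


print(h_index([10, 8, 5, 4, 3]), h_index([25, 8, 5, 3, 3]))
```

The second list contains a paper with 25 citations, yet its h-index is lower. The single number hides how citations are distributed across a scientist's papers.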

A misuse of the h-factor
§ The h-factor has been proposed (together with the number of papers and the number of years since the first publication) to predict the "success" of a scientist's career:
"Our formula is particularly useful for funding agencies, peer reviewers and hiring committees who have to deal with vast numbers of applications and can give each only a cursory examination."

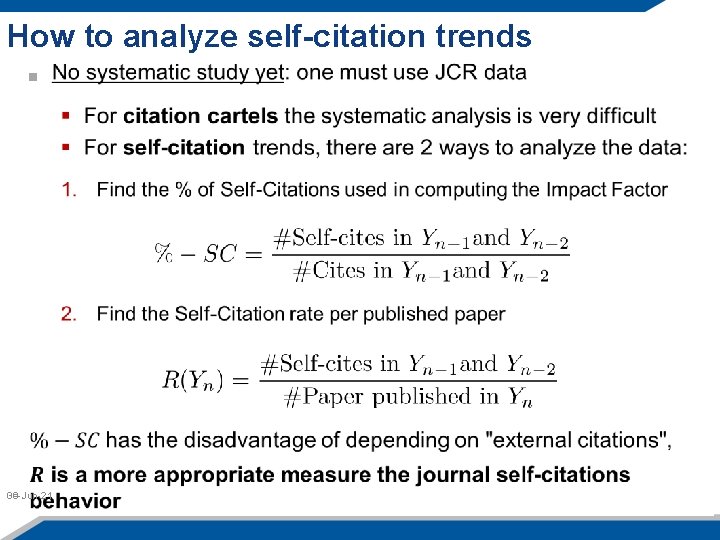

How to analyze self-citation trends

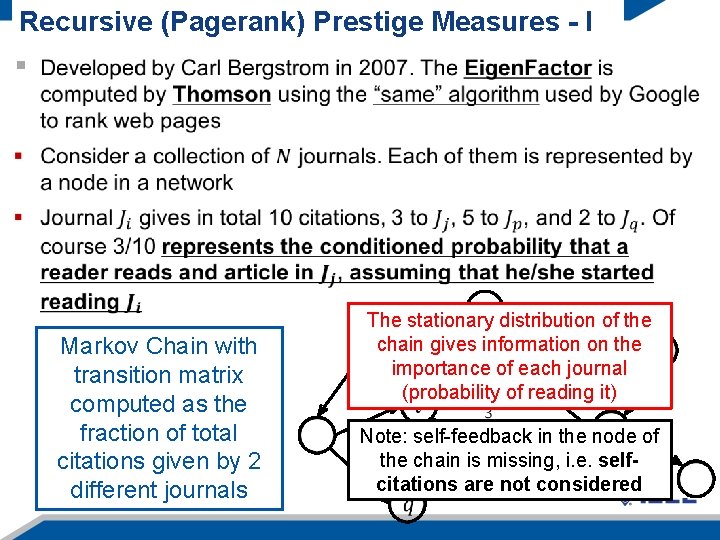
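One simple way to follow self-citation trends is to track, year by year, the fraction of a journal's received citations that come from the journal itself, and to flag sudden jumps for human review. This is only a sketch: the yearly counts are hypothetical, and the 0.15 jump threshold is an arbitrary illustrative parameter, not a JCR criterion.

```python
def self_citation_shares(received):
    """received: dict year -> (self_citations, total_citations) for one journal.
    Returns dict year -> fraction of received citations that are self-citations."""
    return {
        year: (self_cites / total if total else 0.0)
        for year, (self_cites, total) in sorted(received.items())
    }


def flag_jumps(shares, max_increase=0.15):
    """Years in which the self-citation share rose by more than `max_increase`
    (a hypothetical threshold) with respect to the previous year."""
    years = sorted(shares)
    return [
        curr for prev, curr in zip(years, years[1:])
        if shares[curr] - shares[prev] > max_increase
    ]


# Hypothetical counts for one journal: (self-citations, total citations).
received = {2008: (30, 300), 2009: (60, 320), 2010: (200, 400)}
shares = self_citation_shares(received)
print(shares)
print(flag_jumps(shares))
```

A flagged year is a prompt to look more closely, in the spirit of the "Don'ts" below, and should not be read as an automatic verdict.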

Recursive (PageRank) Prestige Measures - I
§ Markov chain whose transition matrix is computed as the fraction of the citations given by each journal that go to each other journal
§ The stationary distribution of the chain gives information on the importance of each journal (probability of reading it)
Note: self-feedback in the nodes of the chain is missing, i.e., self-citations are not considered

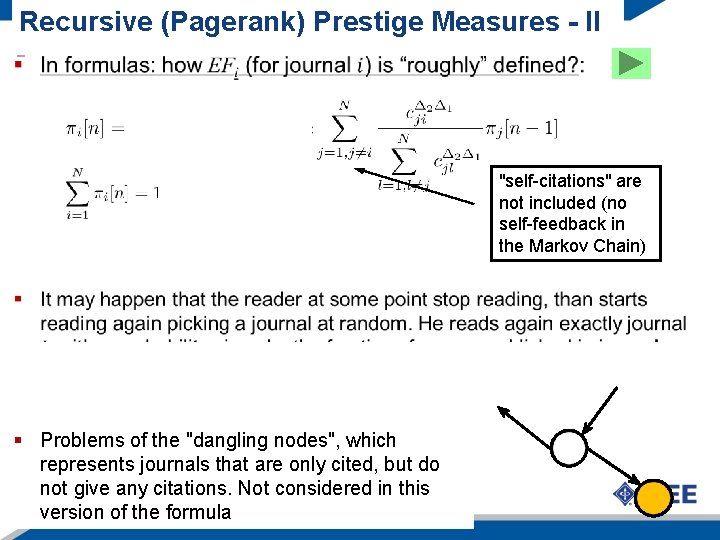

Recursive (PageRank) Prestige Measures - II
§ "Self-citations" are not included (no self-feedback in the Markov chain)
§ Problem of the "dangling nodes", which represent journals that are only cited but do not give any citations. Not considered in this version of the formula

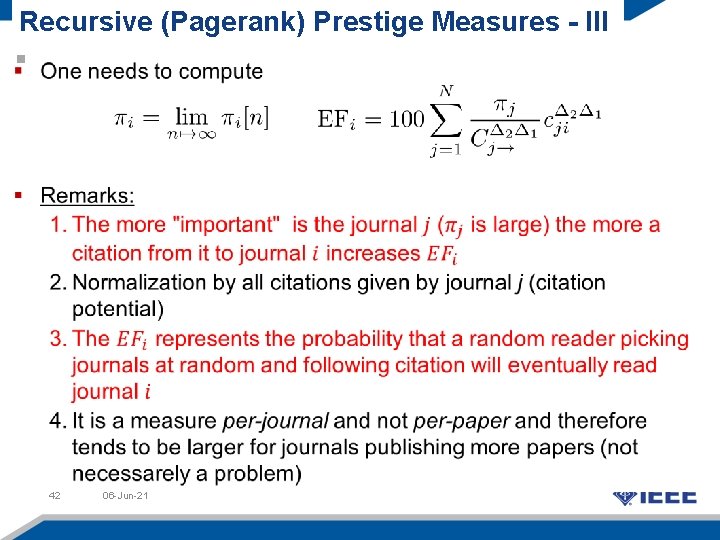
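Building the transition matrix of such a chain from raw citation counts takes two steps: drop the diagonal (self-citations) and normalize each row by the citations the journal gives. A dangling row, one with no outgoing citations left, must then be patched somehow. The uniform redistribution over the other journals used here is an assumed fix for illustration; published indicators handle dangling nodes in their own ways. The counts are hypothetical.

```python
def transition_matrix(cites):
    """Row-stochastic matrix of a citation Markov chain.

    cites[i][j] = citations from journal i to journal j (n >= 2 journals).
    Self-citations (the diagonal) are dropped, so no node has self-feedback.
    A journal that gives no citations to other journals (a "dangling node")
    is given, as an assumed fix, a uniform row over the other journals.
    """
    n = len(cites)
    matrix = []
    for i in range(n):
        row = [0 if j == i else cites[i][j] for j in range(n)]
        given = sum(row)
        if given == 0:
            matrix.append([0.0 if j == i else 1.0 / (n - 1) for j in range(n)])
        else:
            matrix.append([c / given for c in row])
    return matrix


# Hypothetical counts; journal 2 only cites itself, so it becomes dangling.
cites = [
    [5, 3, 1],
    [2, 0, 2],
    [0, 0, 7],
]
for row in transition_matrix(cites):
    print(row)
```

The stationary distribution can then be obtained from this matrix by power iteration, usually with some damping so that the chain converges.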

Recursive (PageRank) Prestige Measures - III

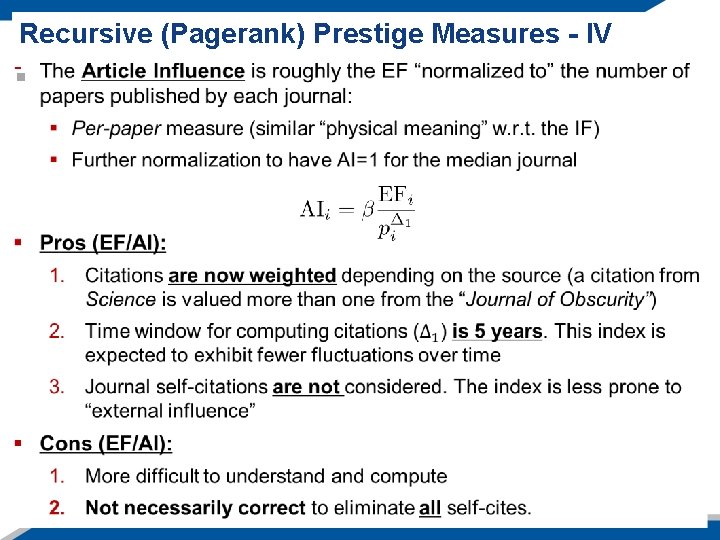

Recursive (PageRank) Prestige Measures - IV

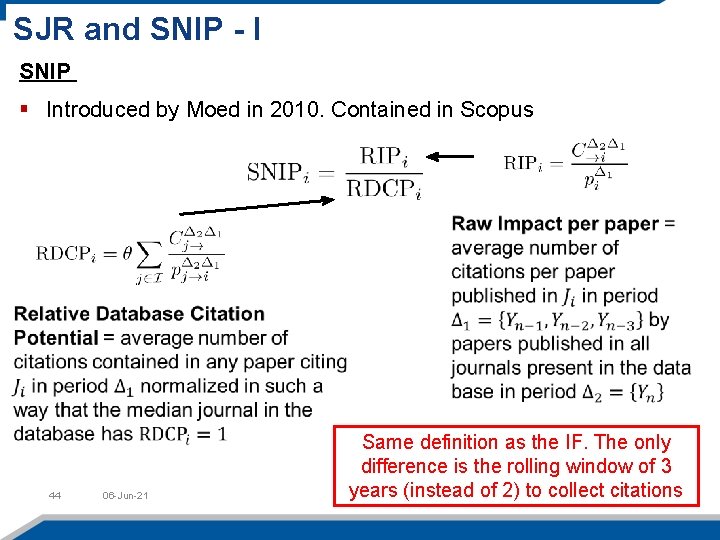

SJR and SNIP - I
SNIP
§ Introduced by Moed in 2010. Contained in Scopus
§ Same definition as the IF. The only difference is the rolling window of 3 years (instead of 2) to collect citations
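The subject-field normalization behind SNIP can be sketched as dividing the IF-like "raw impact per paper" (3-year window) by a relative citation potential of the journal's field. The sketch assumes Moed's 2010 formulation: the journal's database citation potential (DCP) divided by the median DCP over all journals. The function name and all numbers are hypothetical, and details of how Scopus computes DCP are not modeled.

```python
from statistics import median


def snip(raw_impact_per_paper, dcp, all_dcps):
    """Field-normalized impact in the spirit of Moed's 2010 SNIP.

    raw_impact_per_paper: IF-like ratio with a 3-year citation window.
    dcp: "database citation potential" of the journal's subject field,
         i.e., how many references papers in that field typically contain.
    all_dcps: DCP values of all journals in the database.
    """
    relative_dcp = dcp / median(all_dcps)
    return raw_impact_per_paper / relative_dcp


# Hypothetical values: two journals with the same raw impact per paper,
# one in a field with long reference lists, one with short lists.
all_dcps = [5.0, 10.0, 10.0, 20.0, 40.0]  # median = 10.0
print(snip(4.0, 20.0, all_dcps))  # long reference lists -> lower SNIP
print(snip(4.0, 5.0, all_dcps))   # short reference lists -> higher SNIP
```

The same raw impact is worth less in a field where papers cite a lot. This matches the IF vs SNIP comparison that follows, where the biology titles drop much more than the engineering one.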

SJR and SNIP - II …

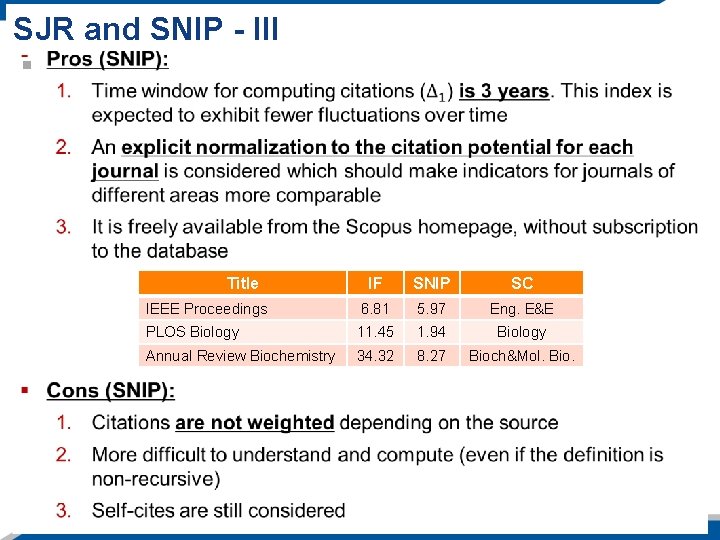

SJR and SNIP - III

| Title | IF | SNIP | Subject category |
|---|---|---|---|
| IEEE Proceedings | 6.81 | 5.97 | Eng. E&E |
| PLOS Biology | 11.45 | 1.94 | Biology |
| Annual Review Biochemistry | 34.32 | 8.27 | Bioch&Mol. Bio. |

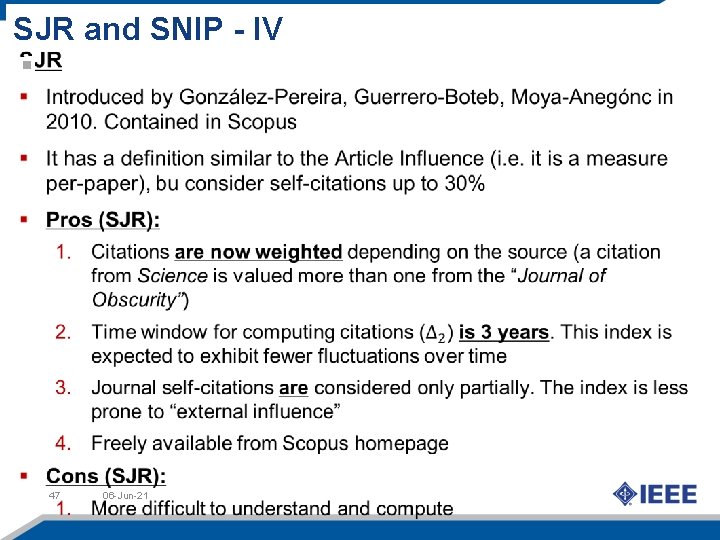

SJR and SNIP - IV

PCA Analysis of bibliometric indicators
[Figure: indicators projected on the first two principal components, with clusters labeled usage measures, prestige measures, popularity measures, total cites, IF and H]
§ 39 bibliometric indicators (popularity, prestige, cites, and usage) computed using Scimago, the 2007 JCR and the MESUR project for usage (SNIP not included)
J. Bollen, H. Van de Sompel, A. Hagberg, R. Chute, "A Principal Component Analysis of 39 Scientific Impact Measures," PLoS ONE, June 2009
Compute the "principal components":
1. The problem is roughly 2-dimensional (83.4% cumulative variance)
2. Different clusters are present: prestige, popularity and total cites measure different aspects of quality
3. One cannot use only one indicator to "measure journal quality"



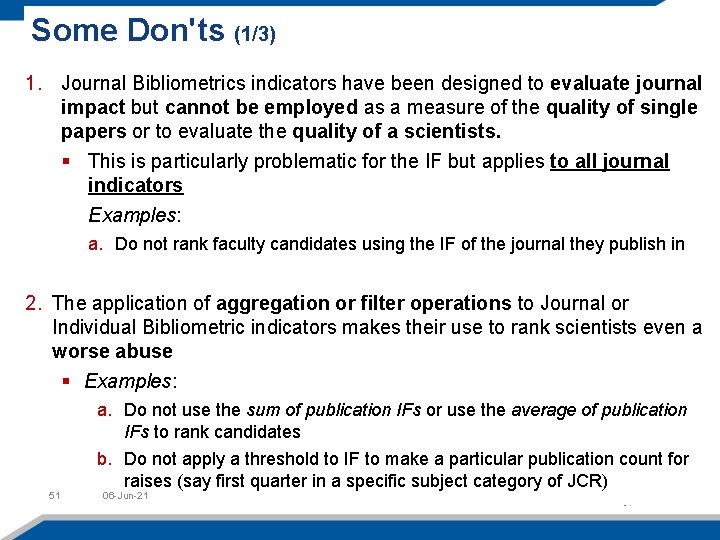

Some Don'ts (1/3)
1. Journal bibliometric indicators have been designed to evaluate journal impact, but cannot be employed as a measure of the quality of single papers or to evaluate the quality of scientists.
§ This is particularly problematic for the IF but applies to all journal indicators
§ Examples:
   a. Do not rank faculty candidates using the IF of the journals they publish in
2. Applying aggregation or filter operations to journal or individual bibliometric indicators makes their use to rank scientists an even worse abuse
§ Examples:
   a. Do not use the sum or the average of publication IFs to rank candidates
   b. Do not apply a threshold to the IF to make a particular publication count for raises (say, first quartile in a specific subject category of the JCR)

Some Don'ts (2/3)
3. Avoid applying filters to citation indicators as well if the goal is an automatic evaluation of the candidate. If filters are set, they should assist human judgment and not replace it! (citations are easy to manipulate)
§ Examples:
   a. Do not require a minimum value of h to allow a scientist to apply for a grant
   b. Do not require a minimum number of citations to get a promotion
4. Avoid ranking faculty/scientists according to "one/multiple bibliometric parameter(s)": it is legitimate to rank candidates who have been short-listed, e.g., for a job position, according to relevant criteria, but ranking should not be based merely on bibliometrics

Some Do's
1. Many journal bibliometric indicators exist, each aiming to measure a journal's scientific impact, and they measure it in different ways
§ One cannot use a single indicator (neither the IF nor any other) to measure journal impact. At the very least, one needs to use:
   a. One popularity indicator (e.g., the IF or the 5YIF)
   b. One prestige indicator (e.g., the AI)
§ The use of multiple indicators provides a much more accurate evaluation of a journal’s impact and can also reveal existing anomalies
2. Individual bibliometric indicators are statistical quantities: if the faculty/candidates have a sufficiently large publication output, citation analysis can be used (with caution!!) as an additional source of information for evaluation
§ Examples:
   a. Different career progression dynamics may (will) exist
   b. Benchmarking is fundamental, especially for multidisciplinary research
   c. Read the contribution and apply your own judgment!!

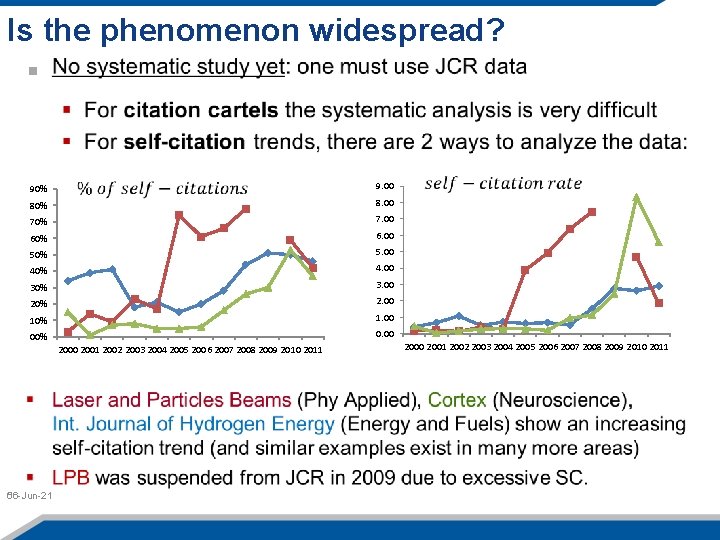

Is the phenomenon widespread?
[Figure: yearly series from 2000 to 2011; left axis from 0% to 90%, right axis from 0.00 to 9.00]
