Biased Random Walk based Social Regularization for Word Embeddings

Ziqian Zeng*, Xin Liu*, and Yangqiu Song
The Hong Kong University of Science and Technology
(* Equal contribution)

Outline

- Socialized Word Embeddings
- Motivation (Problems of SWE and Solutions)
- Algorithm
- Random Walk Methods
- Experiments

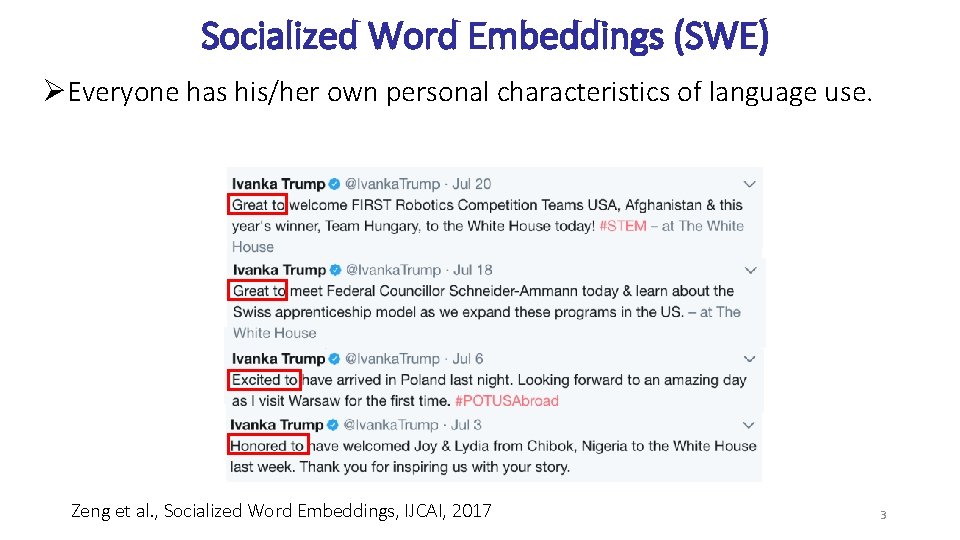

Socialized Word Embeddings (SWE)

- Everyone has his/her own personal characteristics of language use.

Zeng et al., Socialized Word Embeddings, IJCAI, 2017.

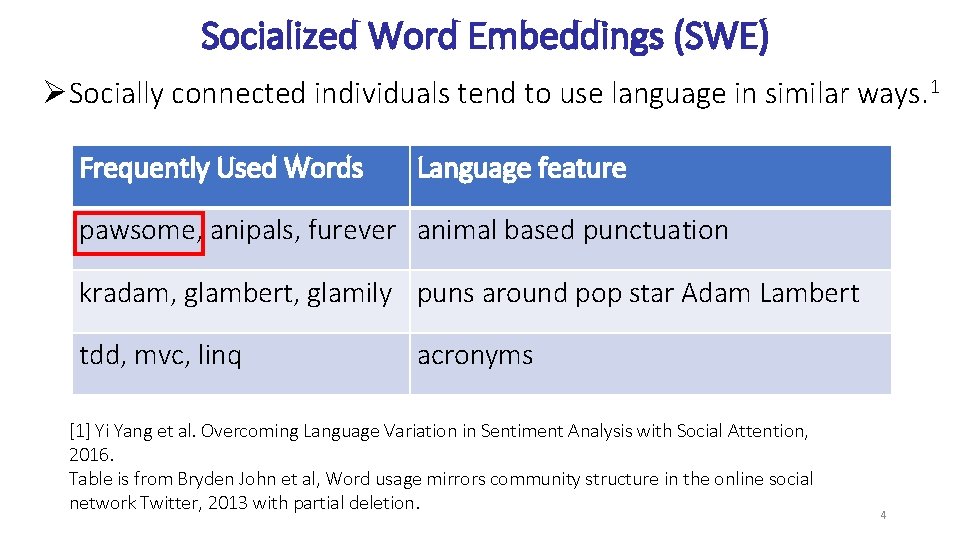

Socialized Word Embeddings (SWE)

- Socially connected individuals tend to use language in similar ways [1].

| Frequently used words | Language feature |
| --- | --- |
| pawsome, anipals, furever | animal based punctuation |
| kradam, glambert, glamily | puns around pop star Adam Lambert |
| tdd, mvc, linq | acronyms |

The table is taken, with partial deletion, from Bryden et al., Word usage mirrors community structure in the online social network Twitter, 2013.

[1] Yi Yang et al., Overcoming Language Variation in Sentiment Analysis with Social Attention, 2016.

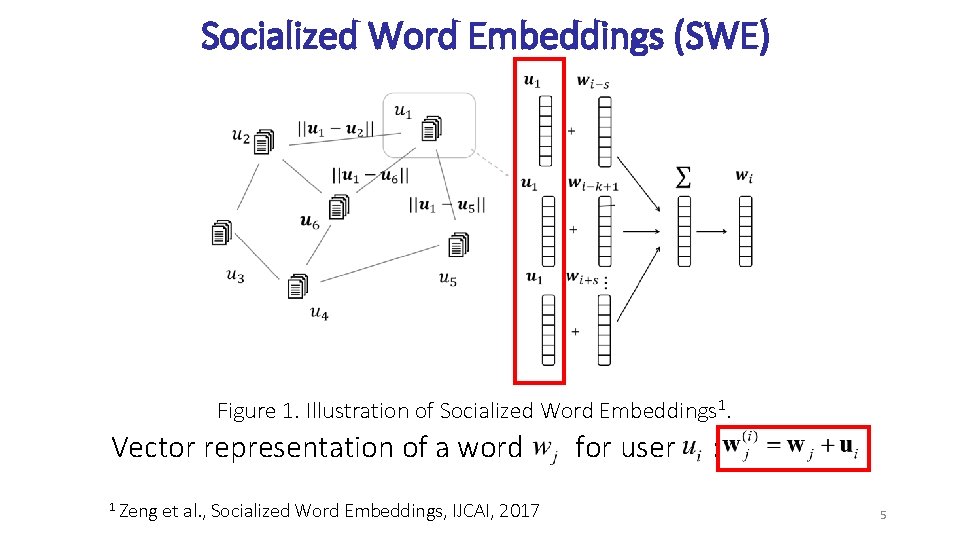

Socialized Word Embeddings (SWE)

Vector representation of a word for a user [1]: (formula in the slide image)

Figure 1. Illustration of Socialized Word Embeddings.

[1] Zeng et al., Socialized Word Embeddings, IJCAI, 2017.

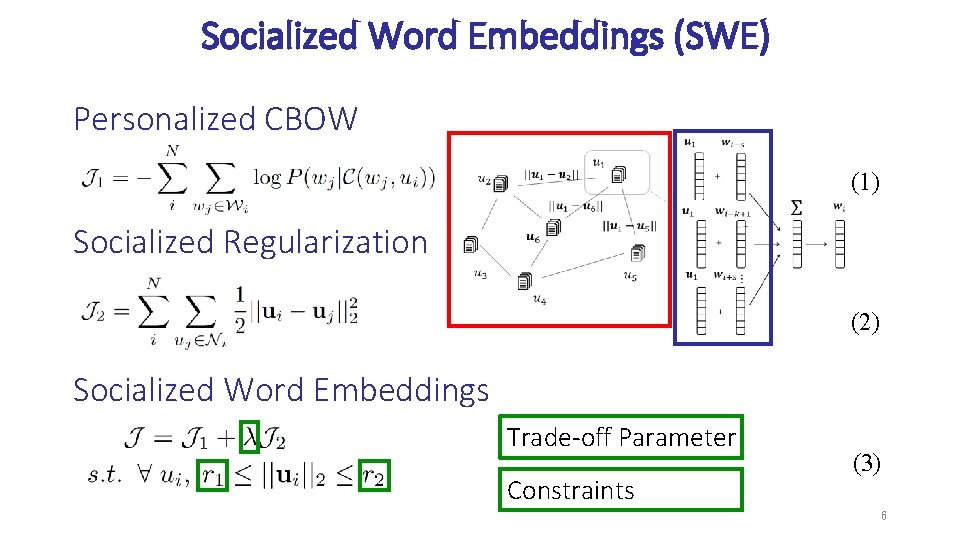

Socialized Word Embeddings (SWE)

- Personalized CBOW: equation (1)
- Socialized Regularization: equation (2)
- Socialized Word Embeddings: equation (3), which combines the two with a trade-off parameter, subject to constraints

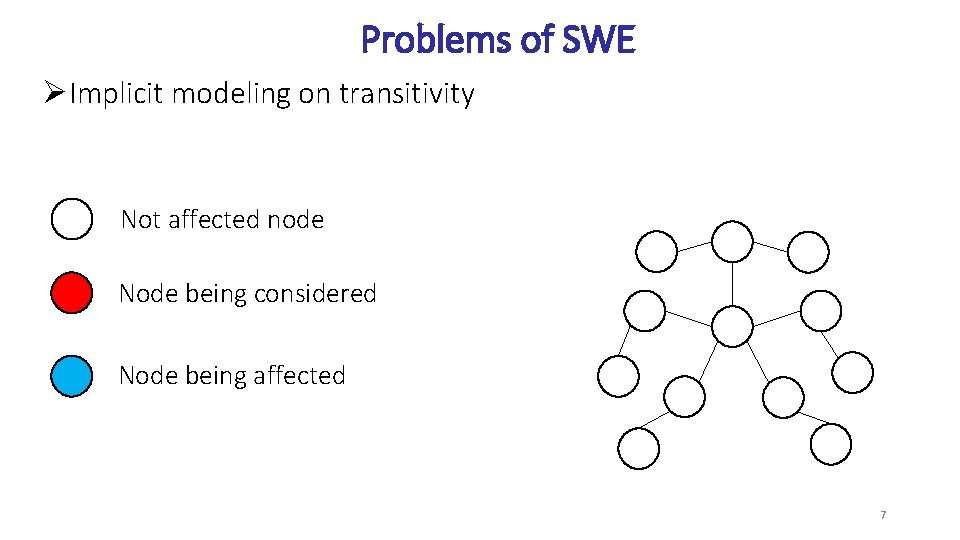

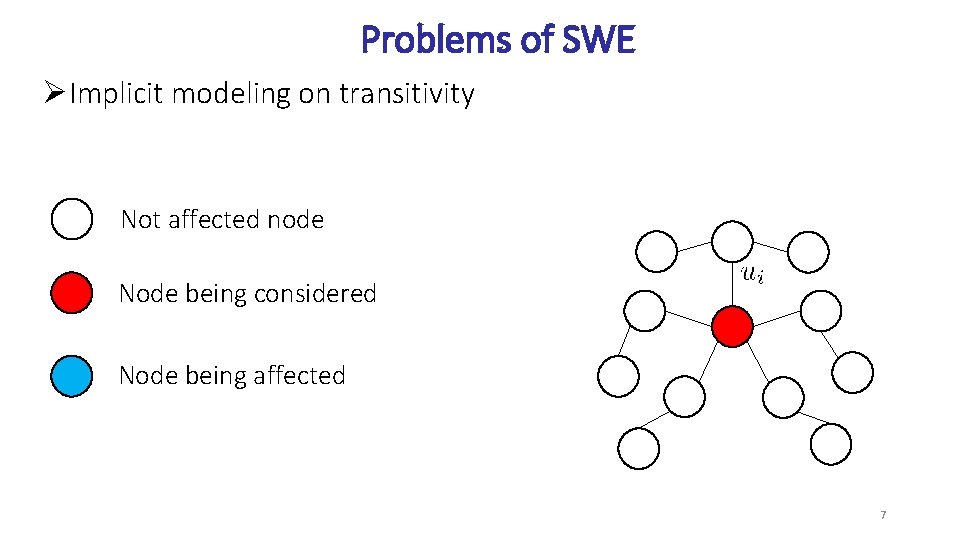

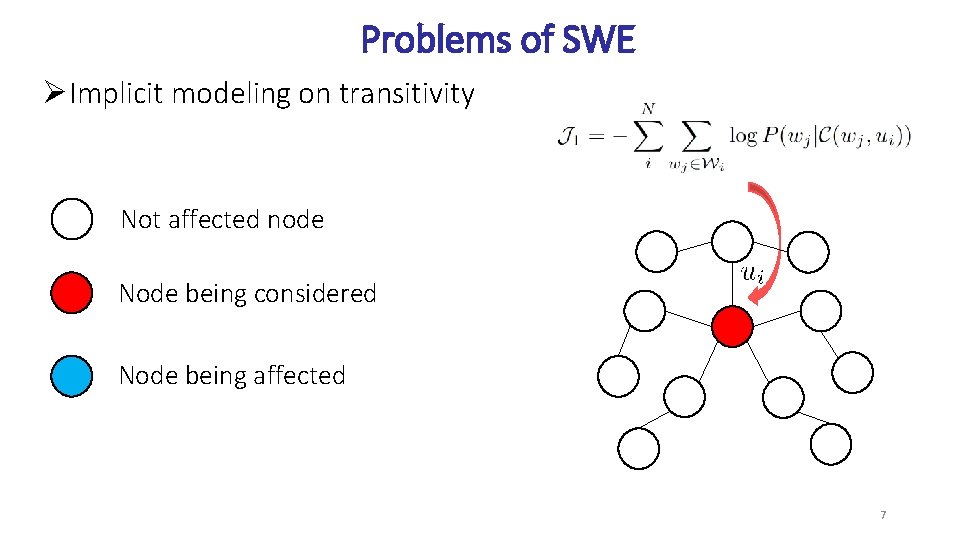
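As a rough illustration of the socialized regularization term (2), the sketch below assumes the form given in the SWE paper: a sum of half squared Euclidean distances between each user's vector and the vectors of that user's friends. The names `social_regularization`, `user_vecs`, and `friends` are hypothetical, the toy vectors are made up, and the equation on the slide remains the reference.

```python
def social_regularization(user_vecs, friends):
    """Sum of 0.5 * ||u_i - u_j||^2 over every user i and each friend j of i.

    Assumed form of the regularizer; not taken from the slide text.
    """
    total = 0.0
    for i, neighbors in friends.items():
        for j in neighbors:
            total += 0.5 * sum(
                (a - b) ** 2 for a, b in zip(user_vecs[i], user_vecs[j])
            )
    return total


# Toy data: three users with 2-dimensional vectors (illustrative only).
user_vecs = {"A": [0.0, 0.0], "B": [1.0, 0.0], "C": [0.0, 2.0]}
friends = {"A": ["B", "C"], "B": ["A"], "C": ["A"]}
loss = social_regularization(user_vecs, friends)
```

A smaller value means that friends have more similar user vectors, which is the behavior the trade-off parameter in (3) would weigh against the personalized CBOW loss.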

Problems of SWE

- Implicit modeling of transitivity

Figure legend: not affected node, node being considered, node being affected.

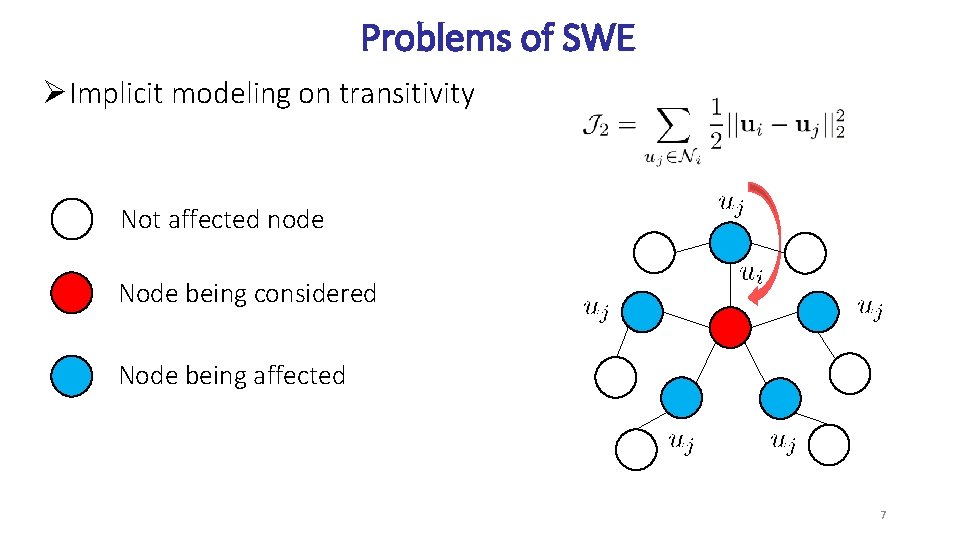

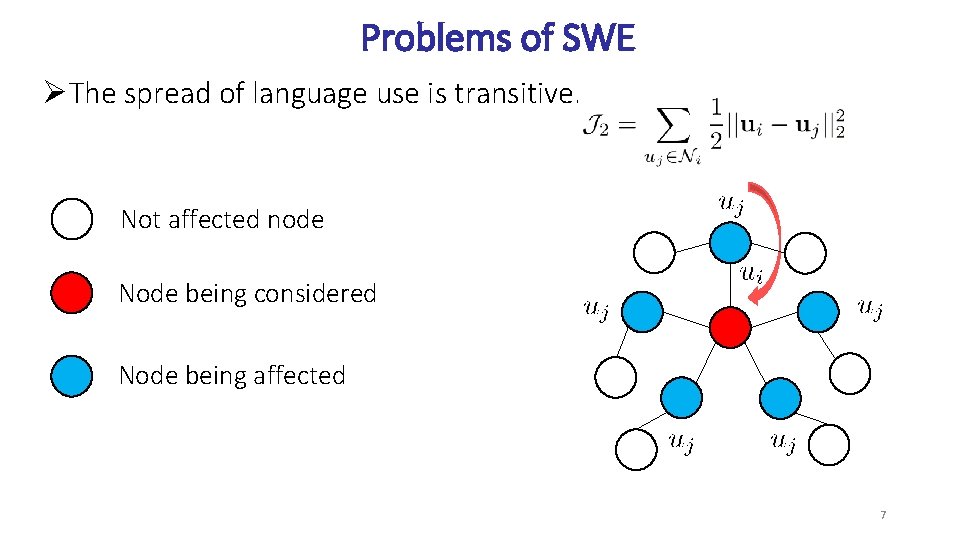

Problems of SWE

- The spread of language use is transitive.

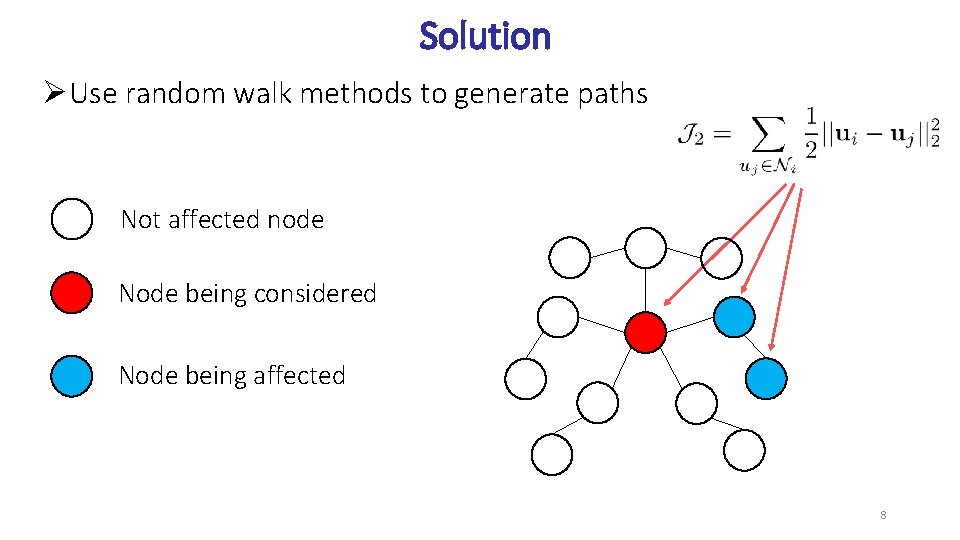

Solution

- Use random walk methods to generate paths.

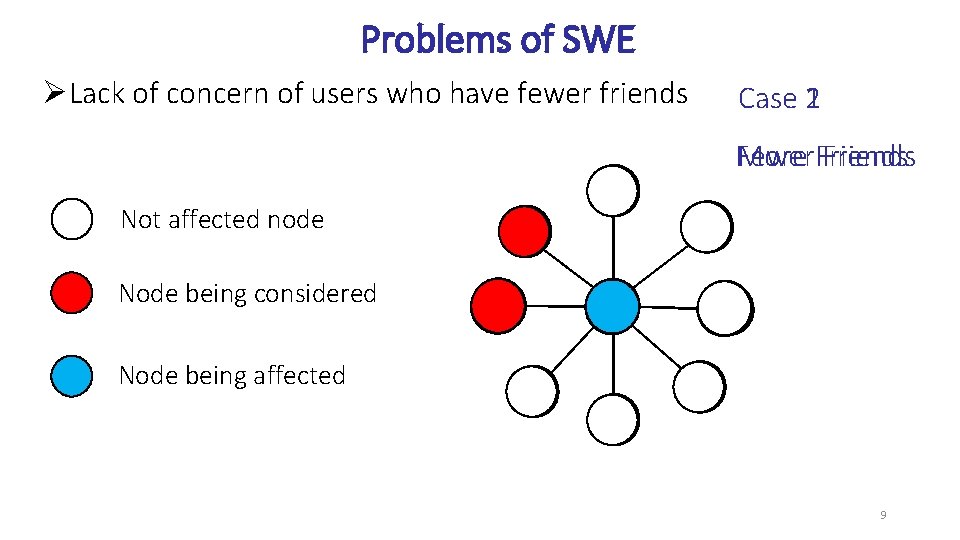

Problems of SWE

- Users who have fewer friends receive too little attention.

Cases 1 and 2 in the figures compare a user with more friends and a user with fewer friends.

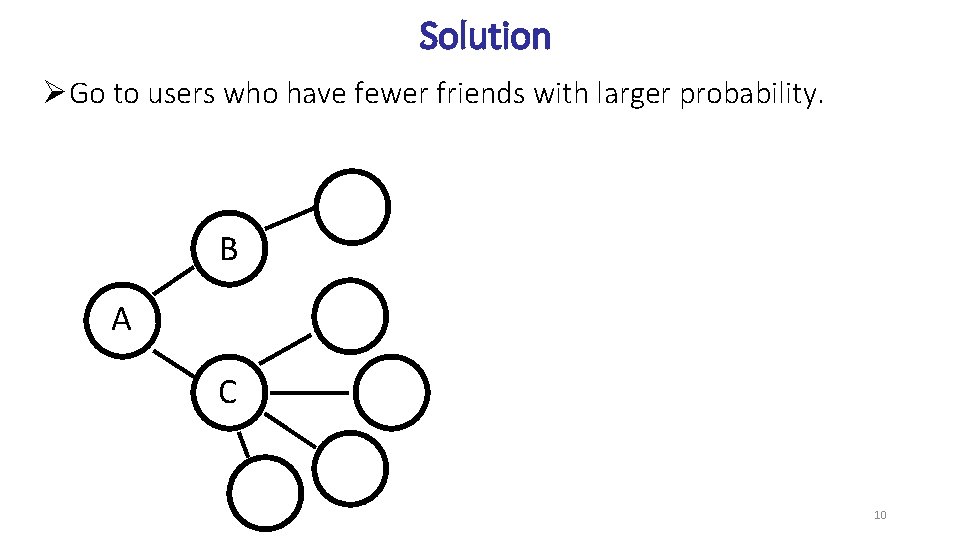

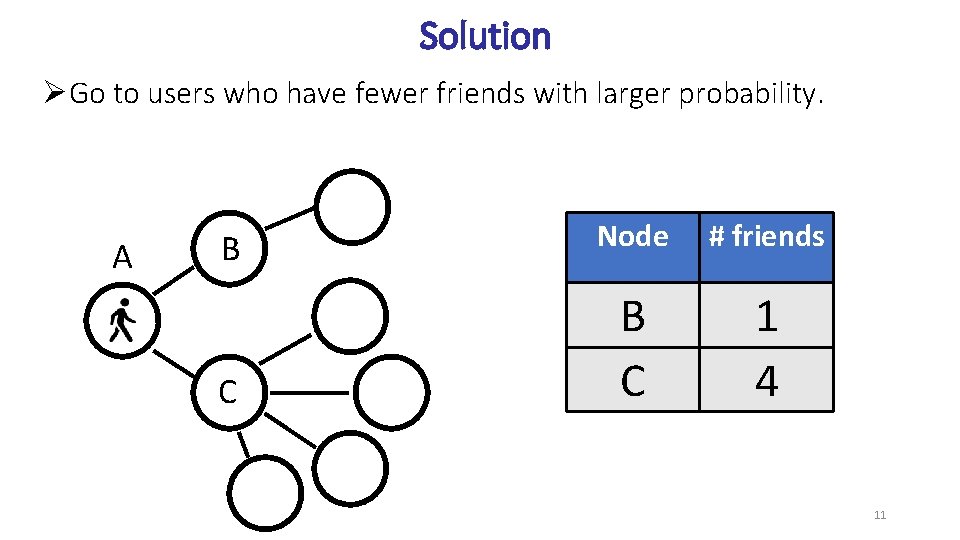

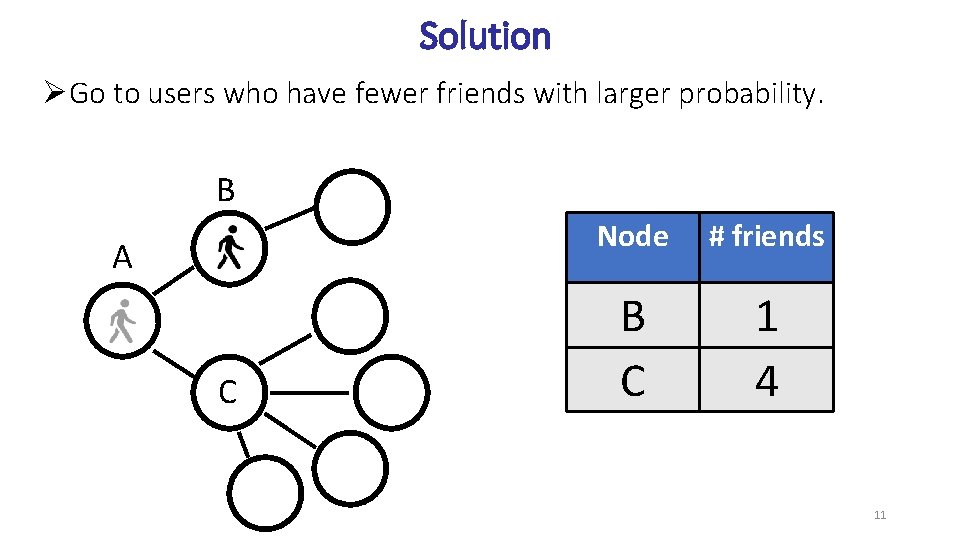

Solution

- Go to users who have fewer friends with larger probability.

Example with nodes A, B, and C:

| Node | # friends |
| --- | --- |
| B | 1 |
| C | 4 |

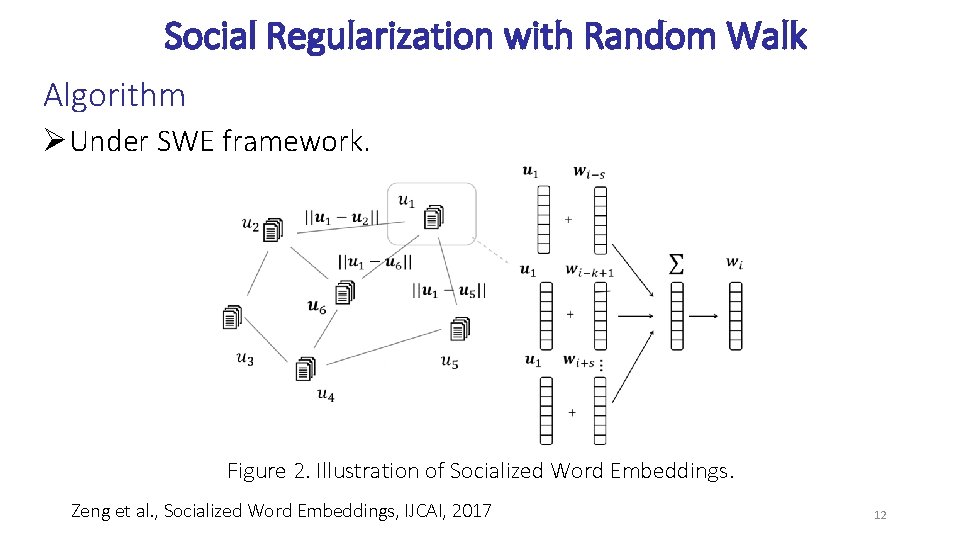

Social Regularization with Random Walk Algorithm

- Under the SWE framework.

Figure 2. Illustration of Socialized Word Embeddings. Zeng et al., Socialized Word Embeddings, IJCAI, 2017.

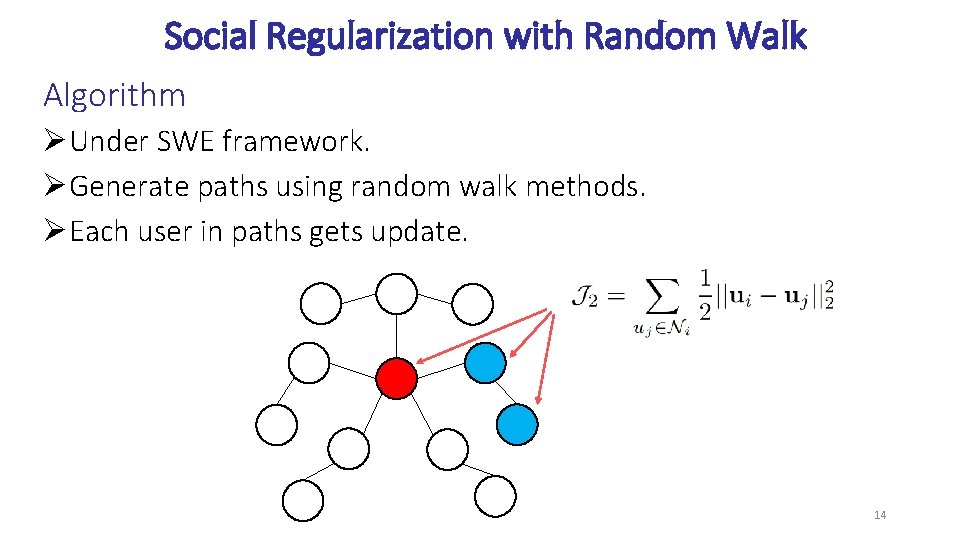

Social Regularization with Random Walk Algorithm

- Under the SWE framework.
- Generate paths using random walk methods.
- Each user on a path gets updated.

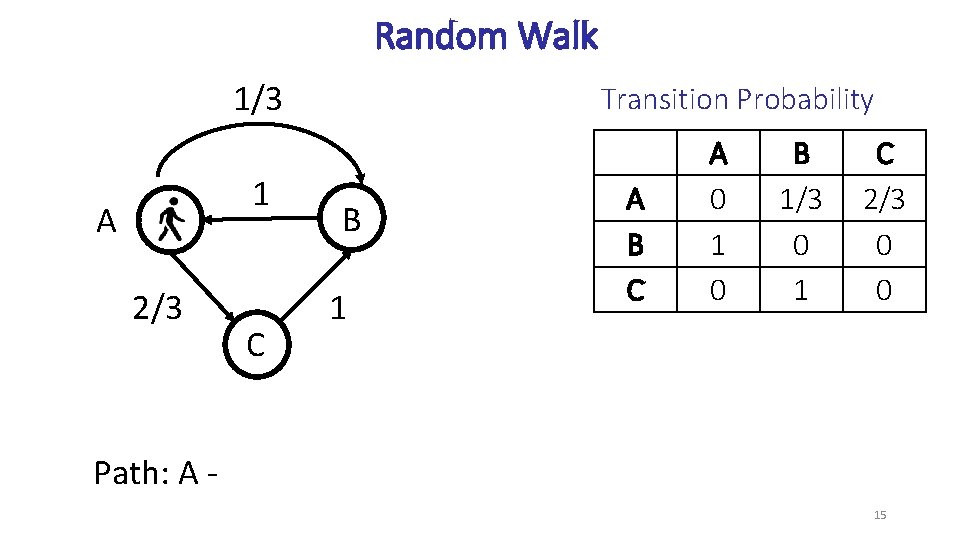

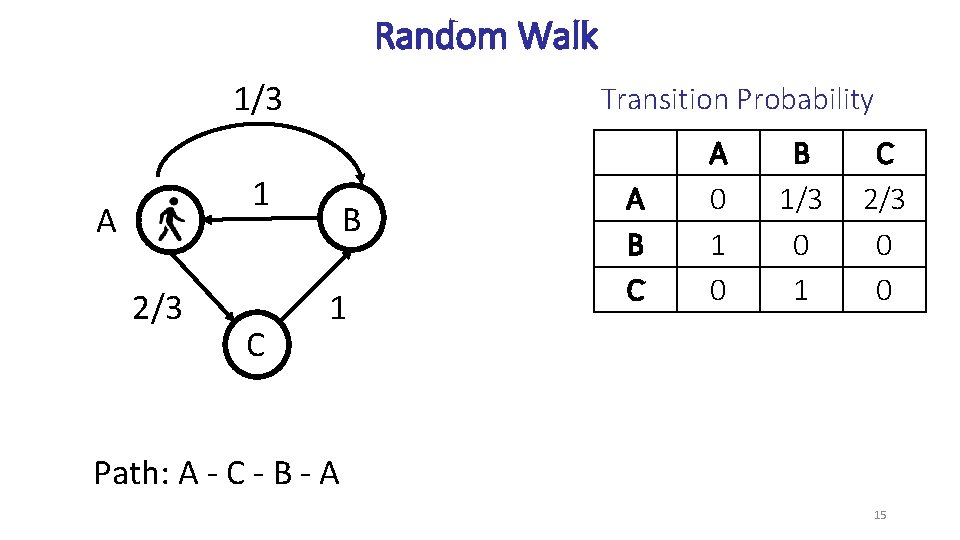
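The idea that each user on a path gets updated can be sketched as follows. This is only an assumption about what an update might look like: every user on a sampled path takes one gradient step on 0.5 * ||u_source - u_j||^2, which pulls that user's vector toward the source user's vector. The function name `update_along_path`, the learning rate `lr`, and the choice to leave the source vector unchanged are all hypothetical and are not taken from the slides.

```python
def update_along_path(user_vecs, source, path, lr=0.1):
    """Move each user on the path one gradient step toward the source user.

    Assumed per-user objective: 0.5 * ||u_source - u_j||^2 (hypothetical).
    The source user's own vector is left unchanged in this sketch.
    """
    src = user_vecs[source]
    for j in path:
        if j == source:
            continue
        # Gradient of the objective with respect to u_j is (u_j - u_source).
        user_vecs[j] = [v - lr * (v - s) for v, s in zip(user_vecs[j], src)]
    return user_vecs


# Toy data: user A is the node being considered; the path is A - C - B.
user_vecs = {"A": [0.0, 0.0], "B": [1.0, 0.0], "C": [0.0, 2.0]}
updated = update_along_path(user_vecs, source="A", path=["A", "C", "B"], lr=0.5)
```

Because the path can reach users who are not direct friends of the source, updates of this kind model transitivity explicitly rather than only through one-hop neighbors.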

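Random Walk

Transition probability (each column is the current node, each row the next node):

| | A | B | C |
| --- | --- | --- | --- |
| A | 0 | 1 | 0 |
| B | 1/3 | 0 | 1 |
| C | 2/3 | 0 | 0 |

From A the walker moves to B with probability 1/3 or to C with probability 2/3; from B it moves to A, and from C it moves to B, each with probability 1.

Path: A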

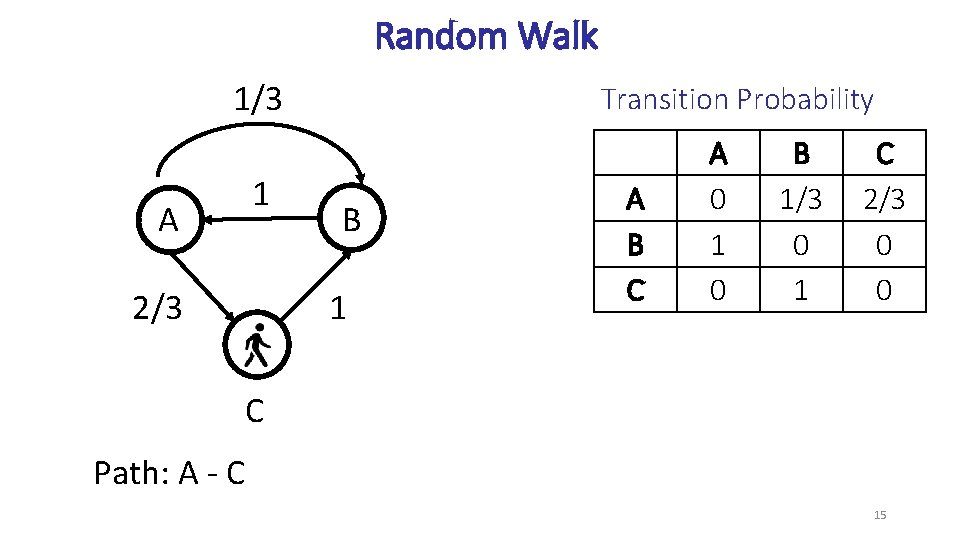

Random Walk

The path grows one step at a time:

- Path: A - C
- Path: A - C - B
- Path: A - C - B - A

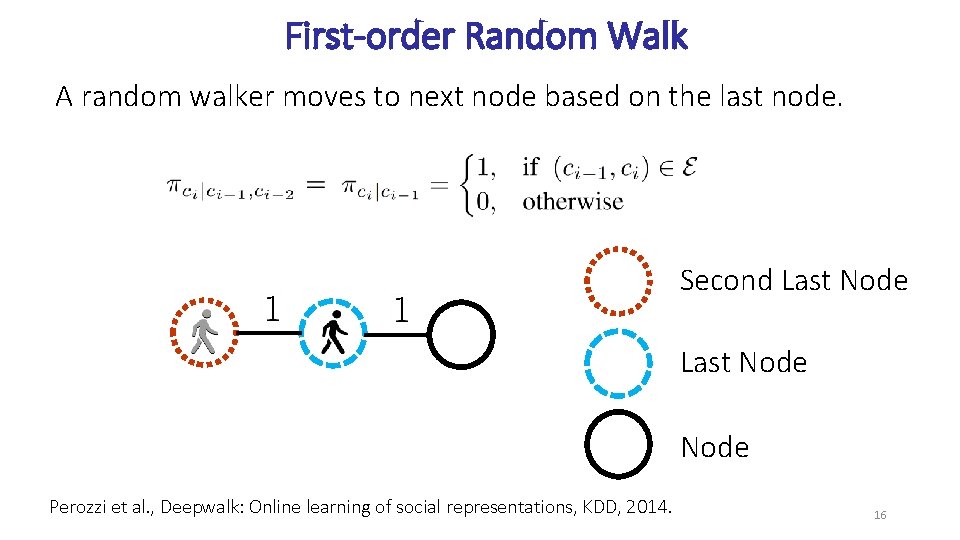
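The walk on the three-node example can be reproduced with a short sampler. The sketch below reads the slide's matrix column by column (current node to next node); the names `TRANSITIONS` and `random_walk` and the fixed seed are illustrative choices, not part of the original slides.

```python
import random
from fractions import Fraction

# TRANSITIONS[current][next] = probability, read column by column
# from the transition matrix on the slide.
TRANSITIONS = {
    "A": {"B": Fraction(1, 3), "C": Fraction(2, 3)},
    "B": {"A": Fraction(1)},
    "C": {"B": Fraction(1)},
}


def random_walk(start, length, rng):
    """Return a path of `length` nodes, sampling each step from TRANSITIONS."""
    path = [start]
    while len(path) < length:
        options = TRANSITIONS[path[-1]]
        nodes = list(options)
        weights = [float(options[n]) for n in nodes]
        path.append(rng.choices(nodes, weights=weights, k=1)[0])
    return path


path = random_walk("A", 4, random.Random(0))
print(" - ".join(path))
```

The path A - C - B - A from the slides is one possible outcome; a walk that leaves A through B instead is also valid, with probability 1/3.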

First-order Random Walk

A random walker moves to the next node based on the last node.

Perozzi et al., DeepWalk: Online learning of social representations, KDD, 2014.

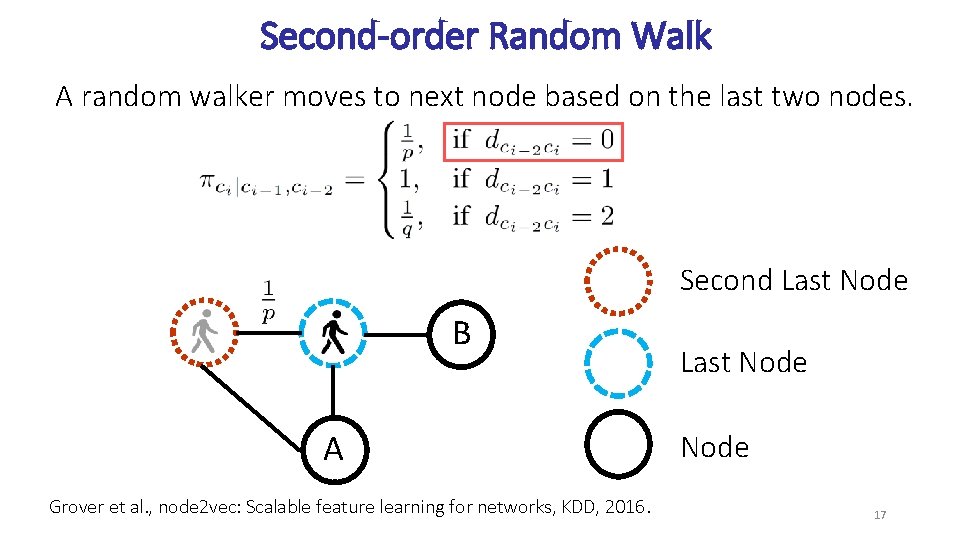

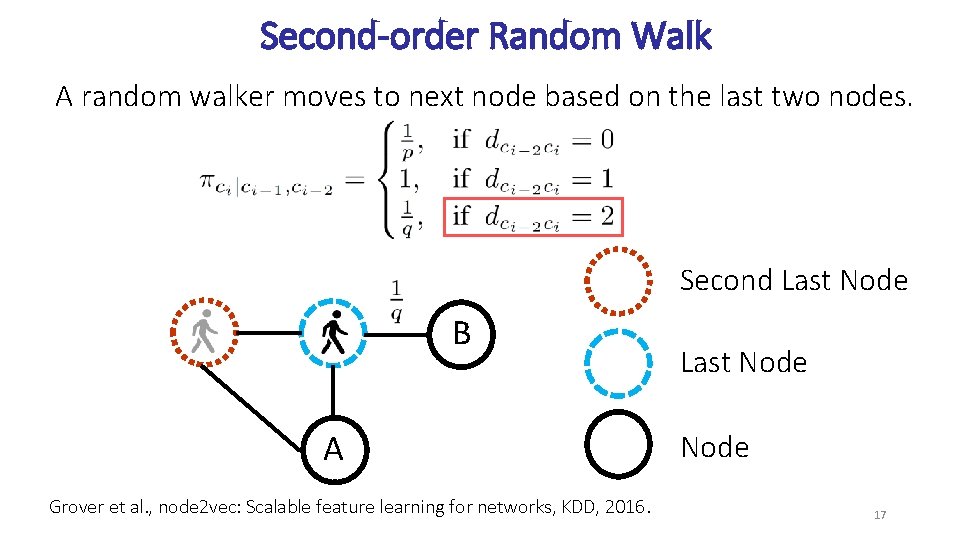

Second-order Random Walk

A random walker moves to the next node based on the last two nodes.

Figure labels: second-last node, last node (nodes A and B).

Grover et al., node2vec: Scalable feature learning for networks, KDD, 2016.
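A second-order step in the style of node2vec can be sketched as below, assuming an unweighted graph. The unnormalized weight of a candidate depends on its distance from the second-last node: 1/p for returning to it, 1 for a common neighbor, and 1/q for moving farther away. The toy graph, the function names, and the values of `p` and `q` are illustrative assumptions.

```python
import random


def node2vec_weights(graph, prev, cur, p=1.0, q=1.0):
    """Unnormalized node2vec weights for each neighbor of `cur`.

    `prev` is the second-last node and `cur` is the last node.
    """
    weights = {}
    for nxt in graph[cur]:
        if nxt == prev:
            weights[nxt] = 1.0 / p  # return to the second-last node
        elif nxt in graph[prev]:
            weights[nxt] = 1.0      # stays at distance 1 from the second-last node
        else:
            weights[nxt] = 1.0 / q  # moves to distance 2 from the second-last node
    return weights


def second_order_step(graph, prev, cur, rng, p=1.0, q=1.0):
    """Sample the next node in proportion to the node2vec weights."""
    weights = node2vec_weights(graph, prev, cur, p, q)
    nodes = list(weights)
    return rng.choices(nodes, weights=[weights[n] for n in nodes], k=1)[0]


# Toy undirected graph: the walker came from B and is now at A.
graph = {
    "A": {"B", "C", "D"},
    "B": {"A", "C"},
    "C": {"A", "B"},
    "D": {"A"},
}
weights = node2vec_weights(graph, prev="B", cur="A", p=2.0, q=4.0)
next_node = second_order_step(graph, "B", "A", random.Random(0), p=2.0, q=4.0)
```

A first-order walk is the special case in which the weights ignore `prev` entirely.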

Biased Second-order Random Walk

A random walker moves to the next node based on the last two nodes.

Biased Second-order Random Walk

The formula on this slide is annotated with three quantities: the number of friends of the node, the average number of friends, and a hyper-parameter.

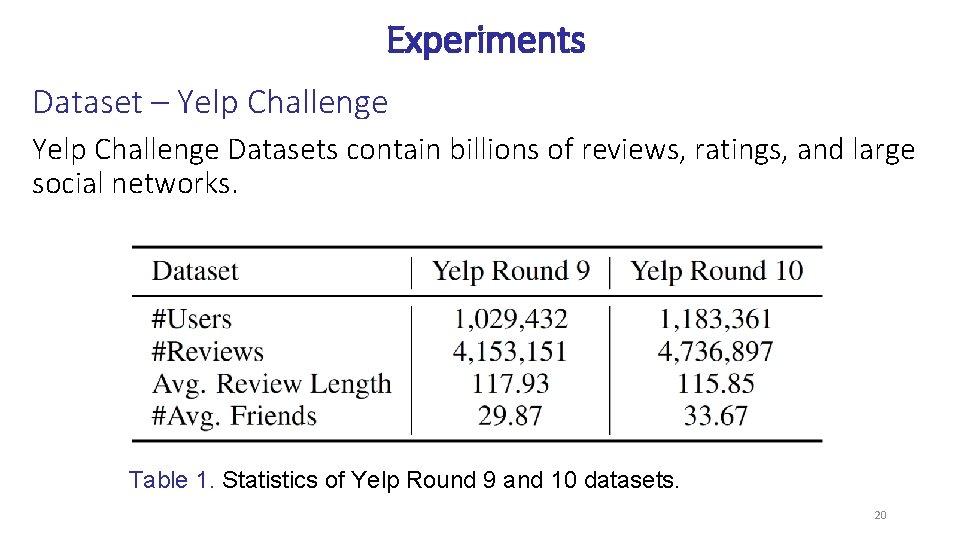
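The exact bias formula is in the slide image and is not reproduced here. Purely as a hypothetical illustration of "go to users who have fewer friends with larger probability", the sketch below weights each candidate by (average number of friends / number of friends of the candidate) raised to a hyper-parameter `beta`. This functional form, the name `biased_probabilities`, and the value `avg_friends=2.5` are assumptions, not the authors' definition.

```python
def biased_probabilities(candidates, num_friends, avg_friends, beta=1.0):
    """Normalize hypothetical weights (avg_friends / num_friends[x]) ** beta.

    Candidates with fewer friends than average get a weight above 1;
    beta = 0 recovers a uniform choice.
    """
    weights = {x: (avg_friends / num_friends[x]) ** beta for x in candidates}
    total = sum(weights.values())
    return {x: w / total for x, w in weights.items()}


# Friend counts from the earlier example: B has 1 friend, C has 4.
num_friends = {"B": 1, "C": 4}
probs = biased_probabilities(["B", "C"], num_friends, avg_friends=2.5, beta=1.0)
```

In a full biased second-order walk, a factor of this kind would presumably be combined with the second-order weights before normalization.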

Experiments

Dataset: the Yelp Challenge Datasets contain billions of reviews, ratings, and large social networks.

Table 1. Statistics of Yelp Round 9 and 10 datasets.

Sentiment Classification

- Two phenomena of language use help the sentiment analysis task.
- Words such as “good” can indicate different sentiment ratings depending on the author [1].

[1] Yang et al., Overcoming Language Variation in Sentiment Analysis with Social Attention, TACL, 2017.

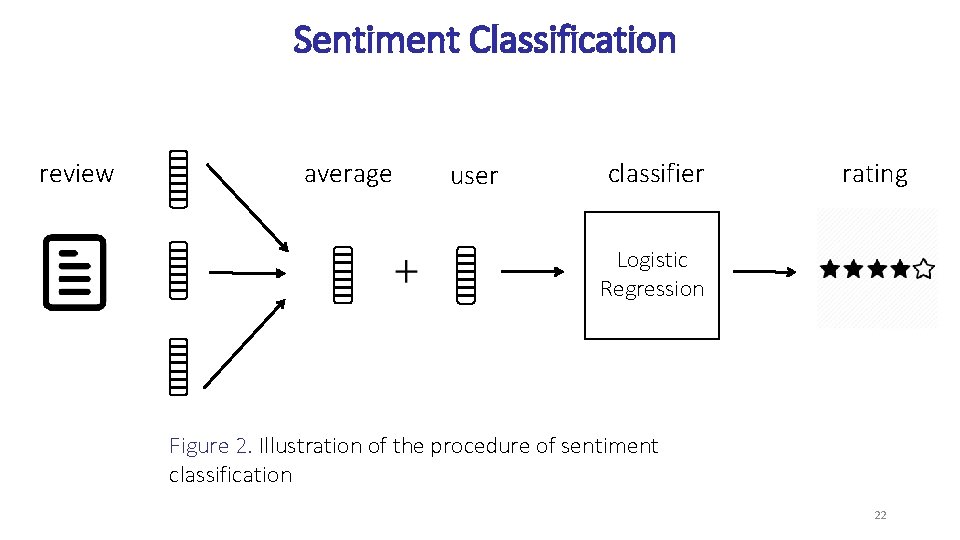

Sentiment Classification

Figure elements: review, user, average, classifier (Logistic Regression), rating.

Figure 2. Illustration of the procedure of sentiment classification.

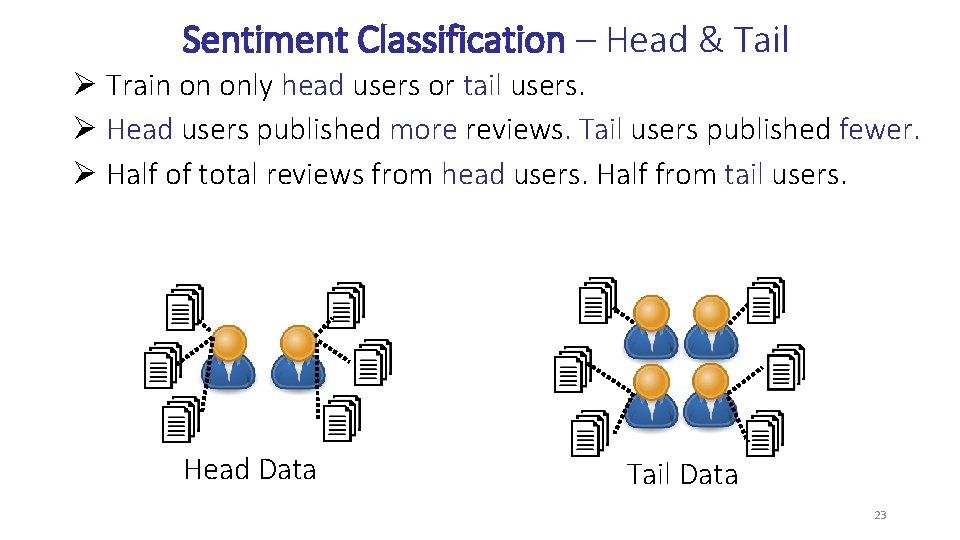
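The feature step of this pipeline might look like the sketch below. It assumes, following the SWE paper, that a word's user-specific vector is the word vector plus the user vector, and that a review is represented by the average of these vectors before being passed to a logistic regression classifier. The function name `review_features` and the toy vectors are hypothetical, and the classifier itself is omitted.

```python
def review_features(tokens, user, word_vecs, user_vecs):
    """Average the user-specific vectors (word vector + user vector) of known tokens.

    Tokens without a word vector are skipped; a review with no known
    tokens maps to the zero vector.
    """
    u = user_vecs[user]
    known = [word_vecs[t] for t in tokens if t in word_vecs]
    if not known:
        return [0.0] * len(u)
    personalized = [[w_k + u_k for w_k, u_k in zip(w, u)] for w in known]
    return [sum(col) / len(personalized) for col in zip(*personalized)]


# Toy 2-dimensional embeddings (illustrative only).
word_vecs = {"good": [1.0, 0.0], "food": [0.0, 1.0]}
user_vecs = {"alice": [0.5, -0.5]}
features = review_features(["good", "food", "unseen"], "alice", word_vecs, user_vecs)
```

The resulting feature vector would be the input to the classifier that predicts the rating.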

Sentiment Classification – Head & Tail

- Train on only head users or only tail users.
- Head users published more reviews; tail users published fewer.
- Half of the total reviews come from head users and half from tail users.

Figure labels: Head Data, Tail Data.

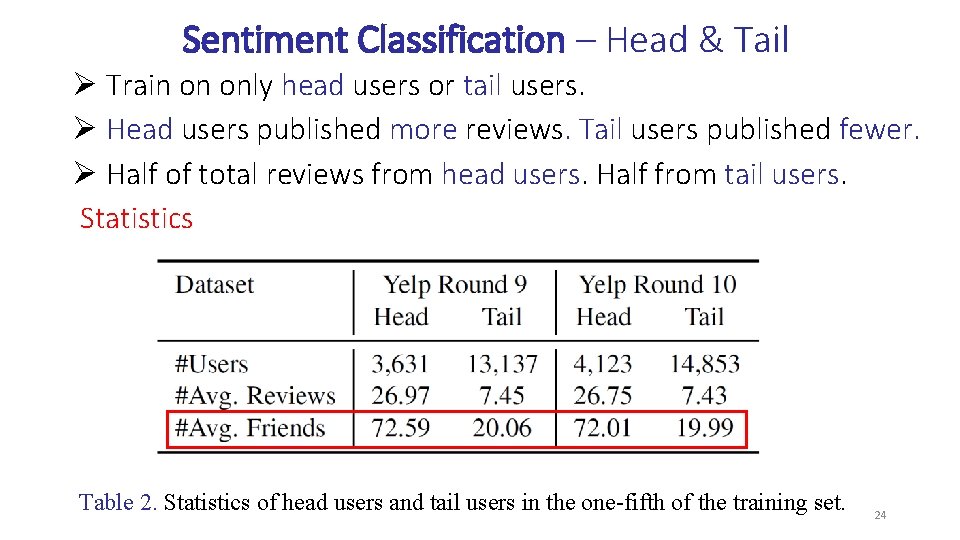

Sentiment Classification – Head & Tail

Table 2. Statistics of head users and tail users in one-fifth of the training set.

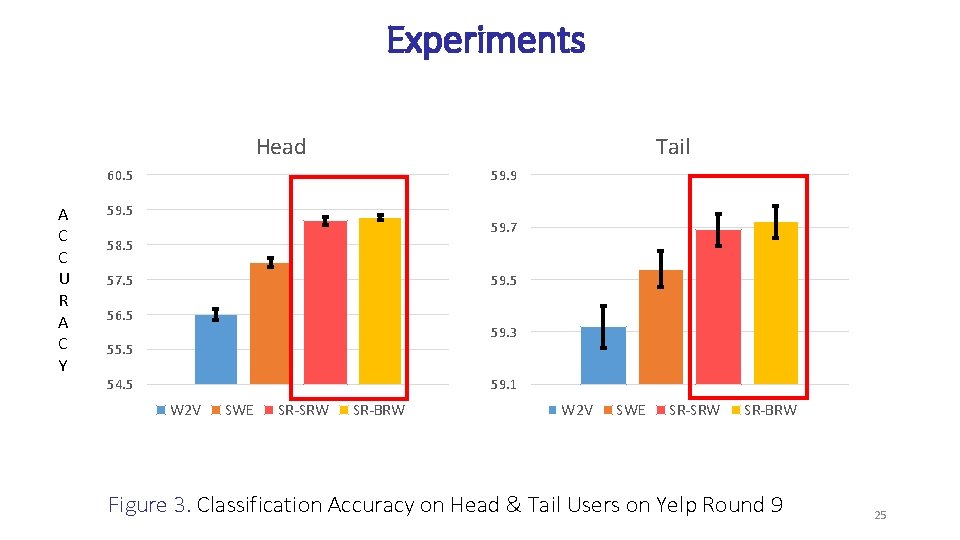
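One way to build such a split is sketched below, assuming that users are ranked by review count and that the most active users are assigned to the head until they cover about half of all reviews. The function name `split_head_tail`, the tie-breaking by user id, and the toy counts are hypothetical; the slides do not state the exact procedure.

```python
def split_head_tail(review_counts):
    """Split users so that the most active ones cover about half of all reviews."""
    total = sum(review_counts.values())
    # Most active users first; ties are broken by user id for determinism.
    ranked = sorted(review_counts, key=lambda u: (-review_counts[u], u))
    head, covered = [], 0
    for user in ranked:
        if covered >= total / 2:
            break
        head.append(user)
        covered += review_counts[user]
    head_set = set(head)
    tail = [u for u in ranked if u not in head_set]
    return head, tail


# Toy review counts per user (illustrative only).
review_counts = {"u1": 50, "u2": 30, "u3": 10, "u4": 5, "u5": 5}
head, tail = split_head_tail(review_counts)
```

With skewed counts like these, a small number of head users accounts for the same number of reviews as many tail users.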

Experiments

Figure 3. Classification accuracy on head and tail users on Yelp Round 9 (panels: Head, Tail; y-axis: accuracy; methods: W2V, SWE, SR-SRW, SR-BRW).

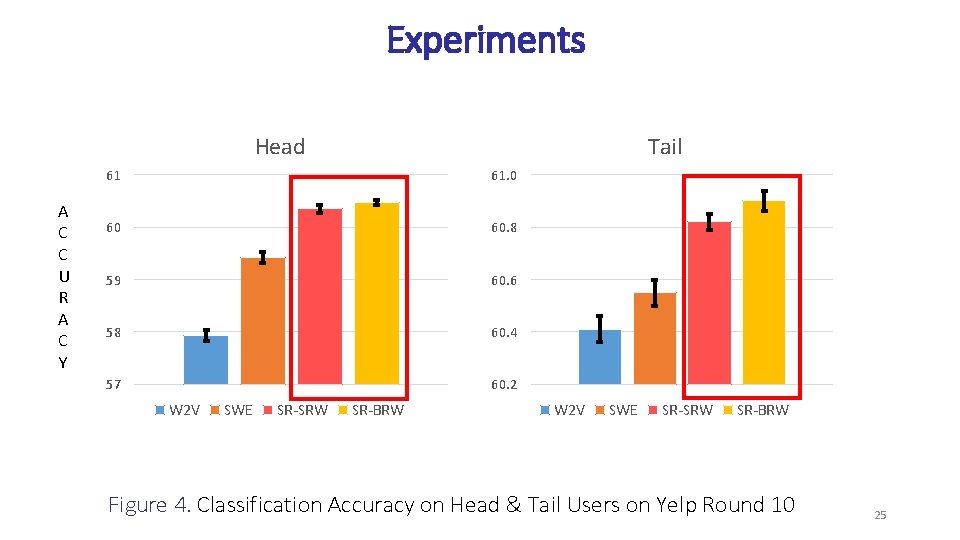

Experiments

Figure 4. Classification accuracy on head and tail users on Yelp Round 10 (panels: Head, Tail; y-axis: accuracy; methods: W2V, SWE, SR-SRW, SR-BRW).

Conclusion

- Explicitly model the transitivity.
- Sample more users who have fewer friends.

Thank You
