Beyond k-Anonymity Arik Friedman November 2008 Seminar in Databases (236826)

Outline

- Recap: privacy and k-anonymity
- l-diversity (beyond k-anonymity)
- t-closeness (beyond k-anonymity and l-diversity)
- Privacy?

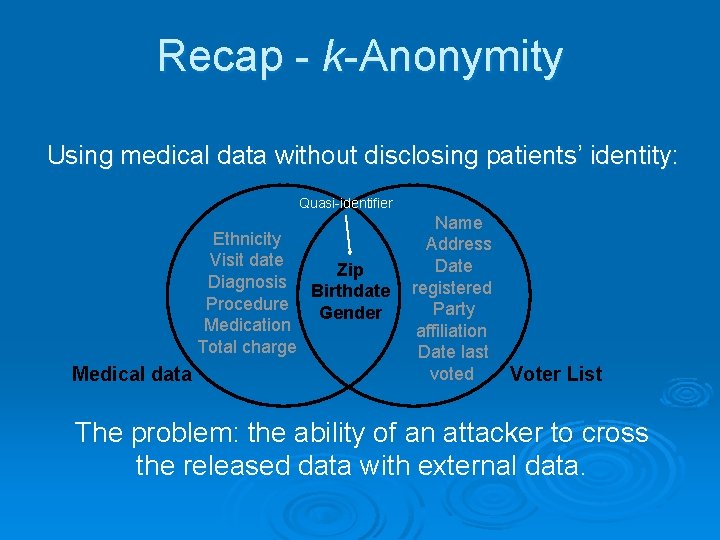

Recap - k-Anonymity

Using medical data without disclosing patients' identity:

- Medical data: Ethnicity, Visit date, Diagnosis, Procedure, Medication, Total charge
- Voter list: Name, Address, Date registered, Party affiliation, Date last voted
- Quasi-identifier (attributes that appear in both): Zip, Birthdate, Gender

The problem: an attacker can cross the released data with external data.

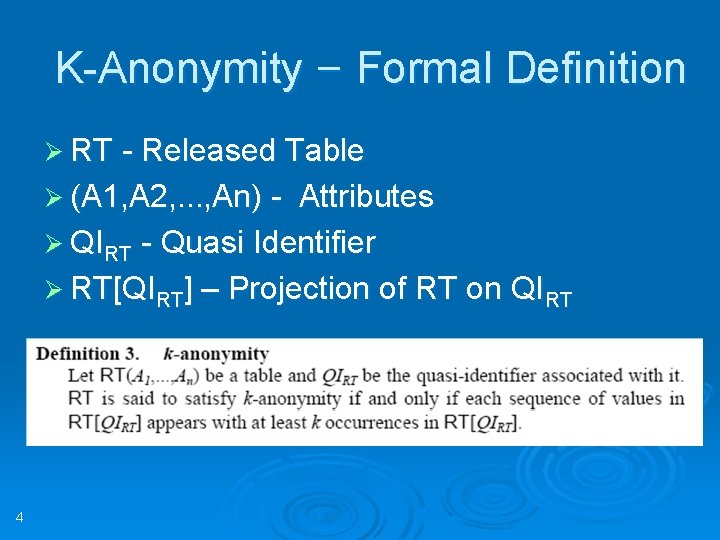

K-Anonymity – Formal Definition

- $RT$: released table
- $(A_1, A_2, \ldots, A_n)$: attributes
- $QI_{RT}$: quasi-identifier
- $RT[QI_{RT}]$: projection of $RT$ on $QI_{RT}$
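The definition itself appears only in the slide image. Under the usual reading of k-anonymity (every combination of quasi-identifier values in $RT[QI_{RT}]$ occurs at least k times), a check could be sketched as follows. The column names and the toy rows are hypothetical and chosen only for illustration.

```python
from collections import Counter

def is_k_anonymous(rows, quasi_identifier, k):
    """True if every quasi-identifier value combination occurs at least k times."""
    # Project each row onto the quasi-identifier and count identical projections.
    counts = Counter(tuple(row[a] for a in quasi_identifier) for row in rows)
    return all(c >= k for c in counts.values())

# Toy released table (hypothetical): two groups of four rows each.
released = (
    [{"zip": "130**", "age": "<30", "condition": c}
     for c in ["Heart Disease", "Heart Disease", "Viral Infection", "Viral Infection"]]
    + [{"zip": "1485*", "age": ">=40", "condition": c}
       for c in ["Cancer", "Heart Disease", "Viral Infection", "Viral Infection"]]
)

print(is_k_anonymous(released, ("zip", "age"), 4))  # every group has 4 rows
print(is_k_anonymous(released, ("zip", "age"), 5))  # no group has 5 rows
```

Note that the check looks only at the quasi-identifier columns; the sensitive column plays no role, which is the gap the following slides exploit.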

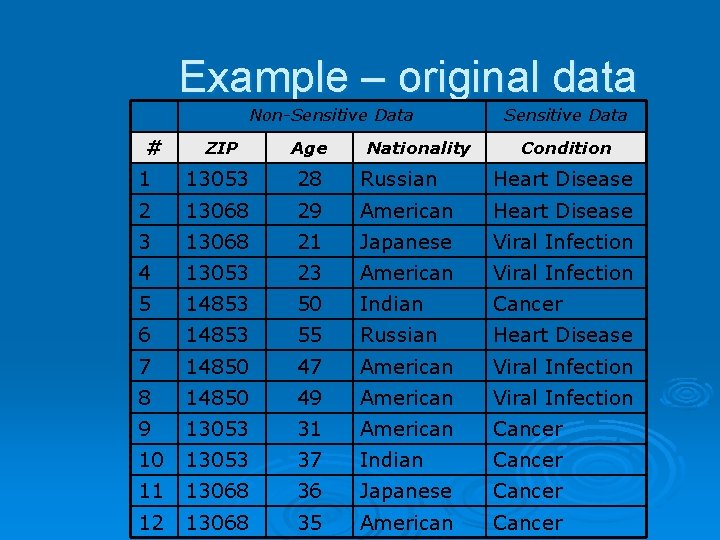

Example – original data

Non-sensitive data: ZIP, Age, Nationality. Sensitive data: Condition.

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 13053 | 28 | Russian | Heart Disease |
| 2 | 13068 | 29 | American | Heart Disease |
| 3 | 13068 | 21 | Japanese | Viral Infection |
| 4 | 13053 | 23 | American | Viral Infection |
| 5 | 14853 | 50 | Indian | Cancer |
| 6 | 14853 | 55 | Russian | Heart Disease |
| 7 | 14850 | 47 | American | Viral Infection |
| 8 | 14850 | 49 | American | Viral Infection |
| 9 | 13053 | 31 | American | Cancer |
| 10 | 13053 | 37 | Indian | Cancer |
| 11 | 13068 | 36 | Japanese | Cancer |
| 12 | 13068 | 35 | American | Cancer |

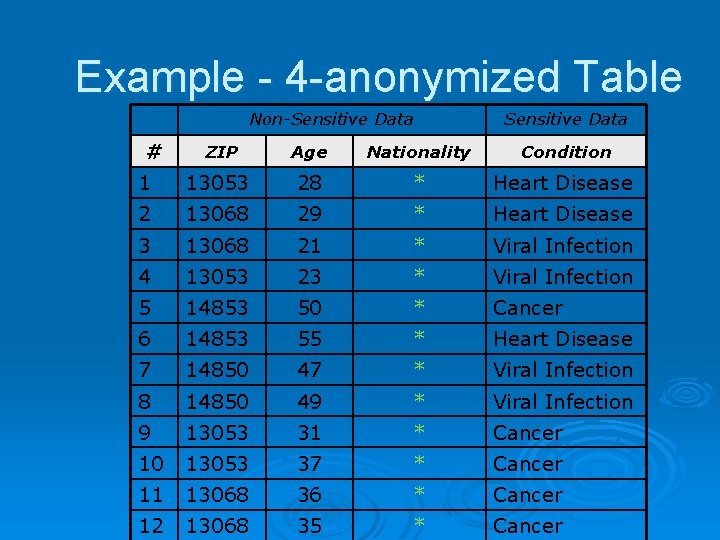

Example - 4-anonymized Table

Step 1: Nationality is suppressed.

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 13053 | 28 | * | Heart Disease |
| 2 | 13068 | 29 | * | Heart Disease |
| 3 | 13068 | 21 | * | Viral Infection |
| 4 | 13053 | 23 | * | Viral Infection |
| 5 | 14853 | 50 | * | Cancer |
| 6 | 14853 | 55 | * | Heart Disease |
| 7 | 14850 | 47 | * | Viral Infection |
| 8 | 14850 | 49 | * | Viral Infection |
| 9 | 13053 | 31 | * | Cancer |
| 10 | 13053 | 37 | * | Cancer |
| 11 | 13068 | 36 | * | Cancer |
| 12 | 13068 | 35 | * | Cancer |

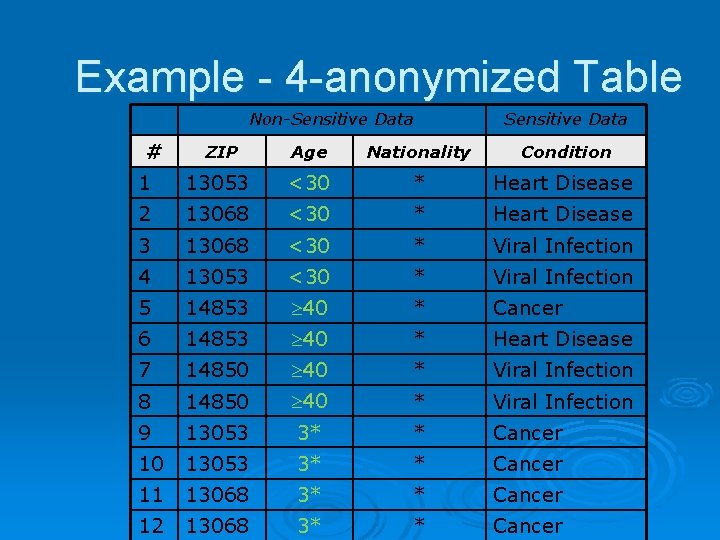

Example - 4-anonymized Table

Step 2: Age is generalized.

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 13053 | <30 | * | Heart Disease |
| 2 | 13068 | <30 | * | Heart Disease |
| 3 | 13068 | <30 | * | Viral Infection |
| 4 | 13053 | <30 | * | Viral Infection |
| 5 | 14853 | ≥40 | * | Cancer |
| 6 | 14853 | ≥40 | * | Heart Disease |
| 7 | 14850 | ≥40 | * | Viral Infection |
| 8 | 14850 | ≥40 | * | Viral Infection |
| 9 | 13053 | 3* | * | Cancer |
| 10 | 13053 | 3* | * | Cancer |
| 11 | 13068 | 3* | * | Cancer |
| 12 | 13068 | 3* | * | Cancer |

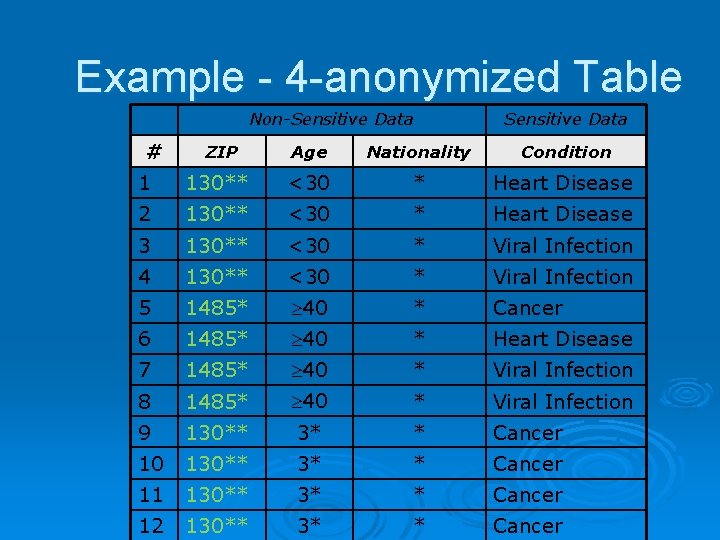

Example - 4-anonymized Table

Step 3: ZIP is generalized.

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 130** | <30 | * | Heart Disease |
| 2 | 130** | <30 | * | Heart Disease |
| 3 | 130** | <30 | * | Viral Infection |
| 4 | 130** | <30 | * | Viral Infection |
| 5 | 1485* | ≥40 | * | Cancer |
| 6 | 1485* | ≥40 | * | Heart Disease |
| 7 | 1485* | ≥40 | * | Viral Infection |
| 8 | 1485* | ≥40 | * | Viral Infection |
| 9 | 130** | 3* | * | Cancer |
| 10 | 130** | 3* | * | Cancer |
| 11 | 130** | 3* | * | Cancer |
| 12 | 130** | 3* | * | Cancer |

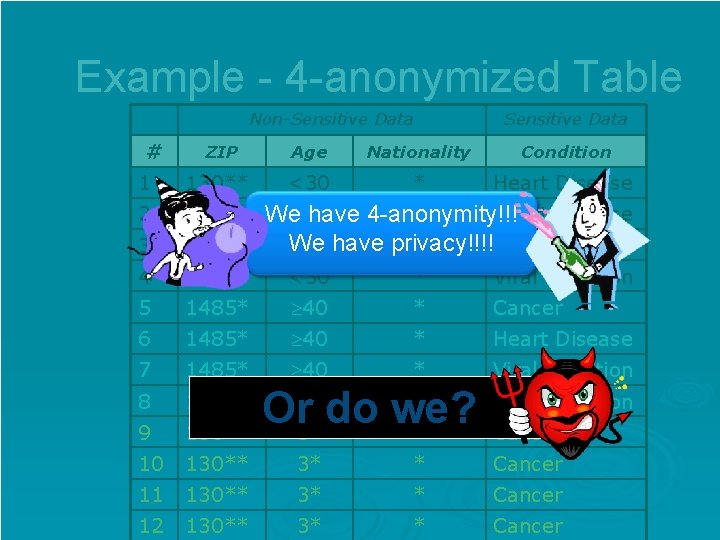

Example - 4-anonymized Table

The table is the same as on the previous slide: ZIP and Age are generalized, Nationality is suppressed, and each combination of quasi-identifier values covers four records.

We have 4-anonymity!!! We have privacy!!!!

Or do we?

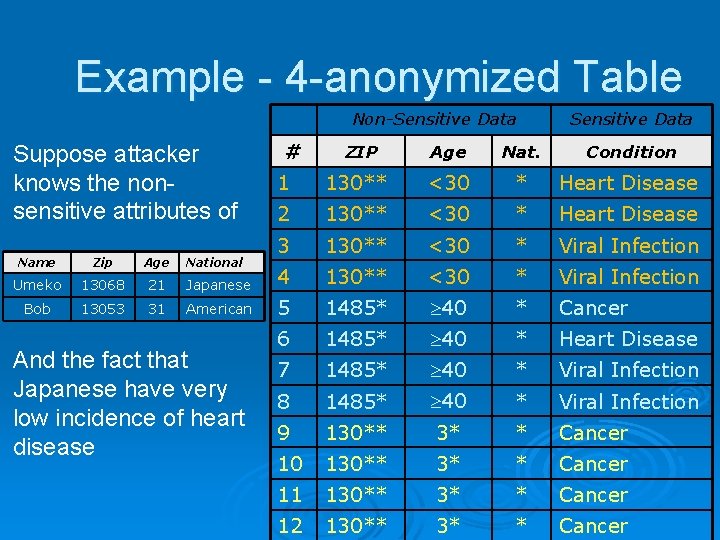

Example - 4-anonymized Table

Suppose the attacker knows the non-sensitive attributes of two individuals:

| Name | Zip | Age | Nationality |
|---|---|---|---|
| Umeko | 13068 | 21 | Japanese |
| Bob | 13053 | 31 | American |

And the fact that Japanese have a very low incidence of heart disease.

The released data is the 4-anonymized table from the previous slides.

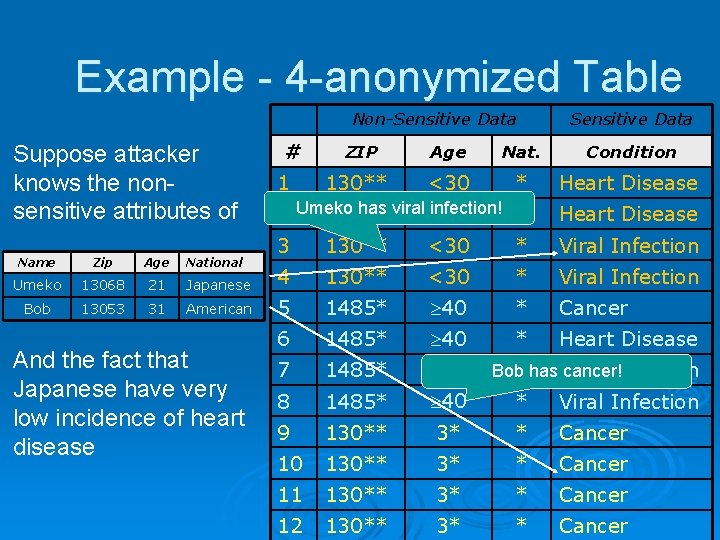

Example - 4-anonymized Table

Suppose the attacker knows the non-sensitive attributes of Umeko (Zip 13068, Age 21, Japanese) and Bob (Zip 13053, Age 31, American), and the fact that Japanese have a very low incidence of heart disease. Matching them against the 4-anonymized table:

- Umeko falls in the block with ZIP 130** and Age <30 (rows 1-4: Heart Disease, Heart Disease, Viral Infection, Viral Infection). Heart disease is unlikely for her, so Umeko has a viral infection!
- Bob falls in the block with ZIP 130** and Age 3* (rows 9-12: all Cancer), so Bob has cancer!

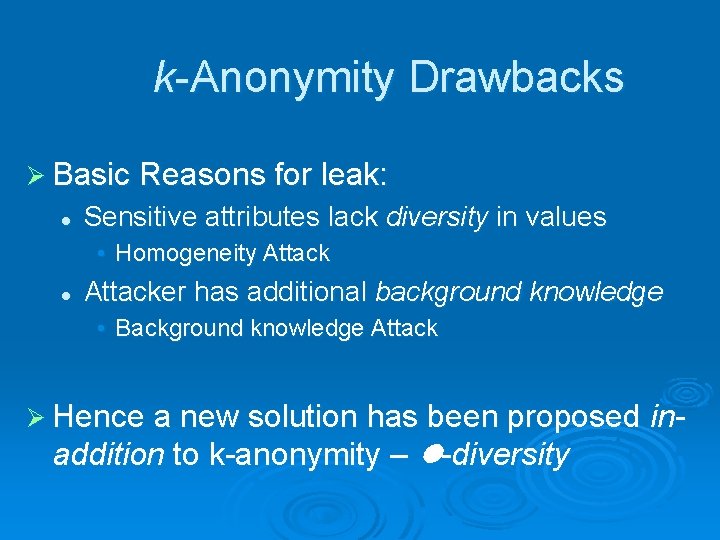

k-Anonymity Drawbacks

- Basic reasons for the leak:
  - Sensitive attributes lack diversity in values: homogeneity attack
  - Attacker has additional background knowledge: background knowledge attack
- Hence a new solution has been proposed in addition to k-anonymity: l-diversity

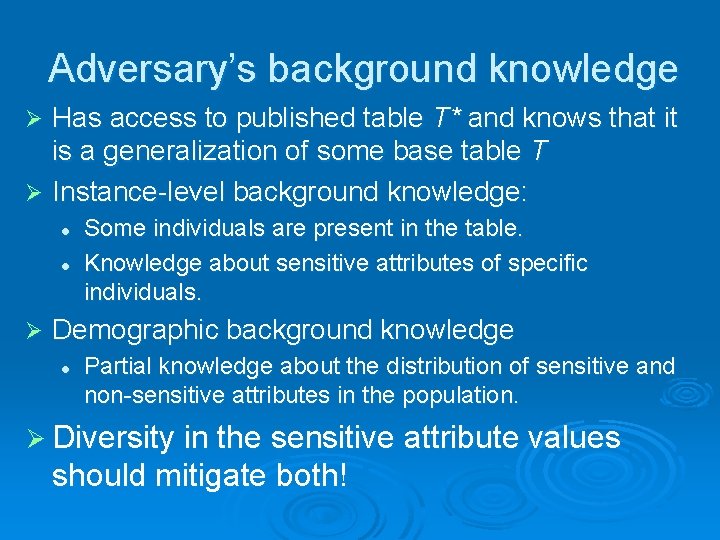

Adversary’s background knowledge

- Has access to the published table T* and knows that it is a generalization of some base table T
- Instance-level background knowledge:
  - Some individuals are present in the table.
  - Knowledge about sensitive attributes of specific individuals.
- Demographic background knowledge:
  - Partial knowledge about the distribution of sensitive and non-sensitive attributes in the population.
- Diversity in the sensitive attribute values should mitigate both!

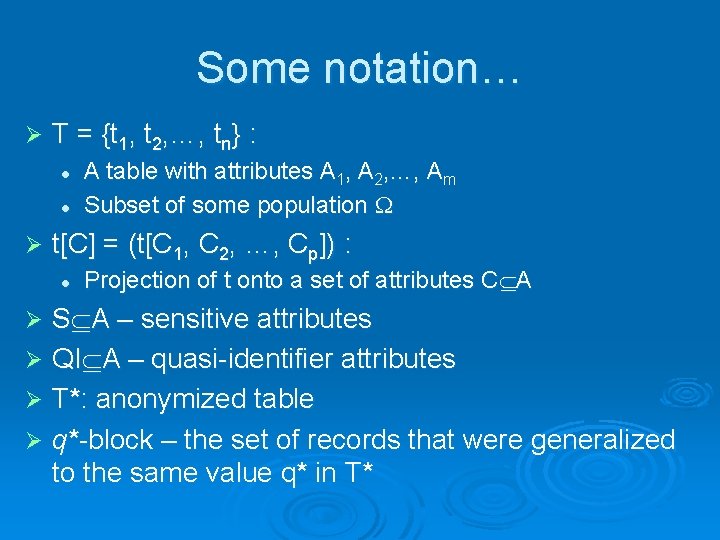

Some notation…

- $T = \{t_1, t_2, \ldots, t_n\}$:
  - A table with attributes $A_1, A_2, \ldots, A_m$
  - Subset of some population
- $t[C] = (t[C_1], t[C_2], \ldots, t[C_p])$: projection of $t$ onto a set of attributes $C \subseteq A$
- $S \subseteq A$: sensitive attributes
- $QI \subseteq A$: quasi-identifier attributes
- $T^*$: anonymized table
- q*-block: the set of records that were generalized to the same value q* in T*

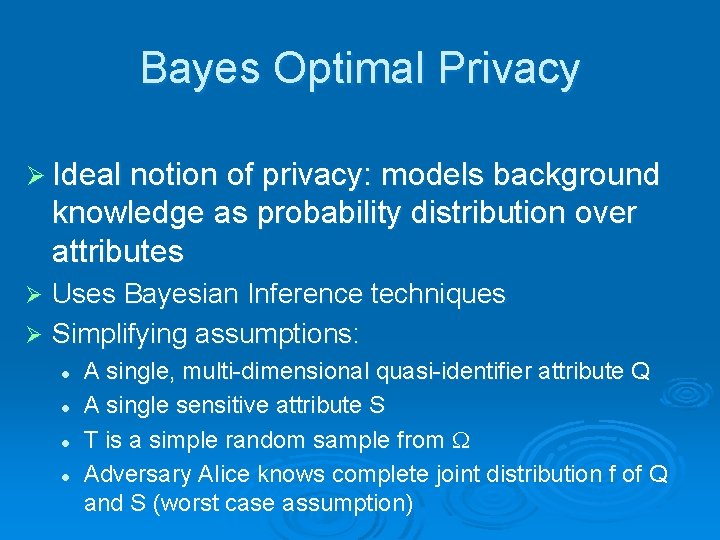

Bayes Optimal Privacy

- Ideal notion of privacy: models background knowledge as a probability distribution over the attributes
- Uses Bayesian inference techniques
- Simplifying assumptions:
  - A single, multi-dimensional quasi-identifier attribute Q
  - A single sensitive attribute S
  - T is a simple random sample from the population
  - Adversary Alice knows the complete joint distribution f of Q and S (worst-case assumption)

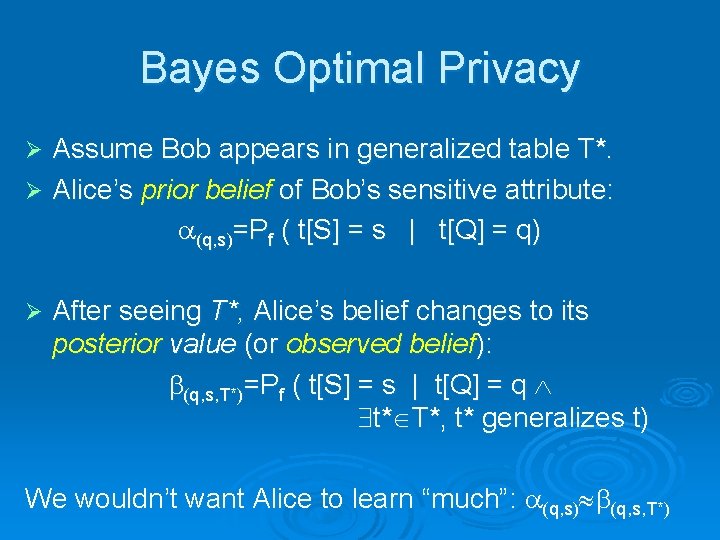

Bayes Optimal Privacy

Assume Bob appears in the generalized table T*.

- Alice’s prior belief about Bob’s sensitive attribute:
  $\alpha_{(q,s)} = P_f(t[S] = s \mid t[Q] = q)$
- After seeing T*, Alice’s belief changes to its posterior value (or observed belief):
  $\beta_{(q,s,T^*)} = P_f(t[S] = s \mid t[Q] = q \wedge \exists t^* \in T^*,\ t^* \text{ generalizes } t)$
- We wouldn’t want Alice to learn “much”: $\alpha_{(q,s)} \approx \beta_{(q,s,T^*)}$

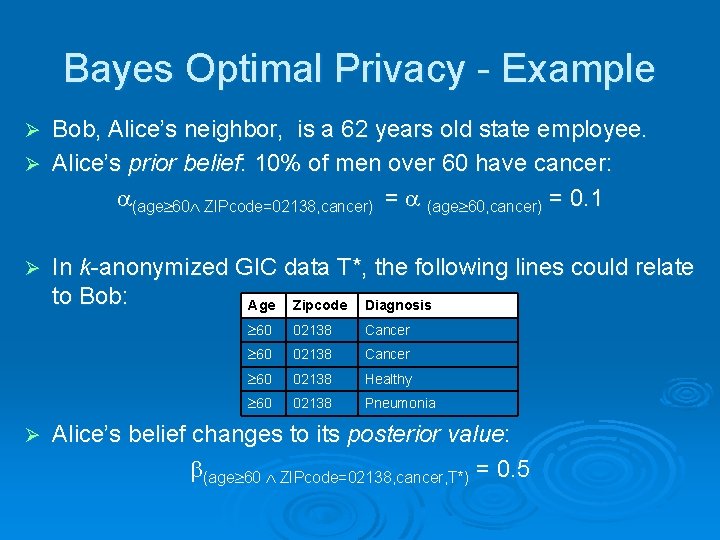

Bayes Optimal Privacy - Example

- Bob, Alice’s neighbor, is a 62-year-old state employee.
- Alice’s prior belief: 10% of men over 60 have cancer:
  $\alpha_{(\text{age} \ge 60 \,\wedge\, \text{ZIPcode} = 02138,\ \text{cancer})} = \alpha_{(\text{age} \ge 60,\ \text{cancer})} = 0.1$
- In the k-anonymized GIC data T*, the following lines could relate to Bob:

| Age | Zipcode | Diagnosis |
|---|---|---|
| ≥60 | 02138 | Cancer |
| ≥60 | 02138 | Healthy |
| ≥60 | 02138 | Pneumonia |

- Alice’s belief changes to its posterior value:
  $\beta_{(\text{age} \ge 60 \,\wedge\, \text{ZIPcode} = 02138,\ \text{cancer},\ T^*)} = 0.5$

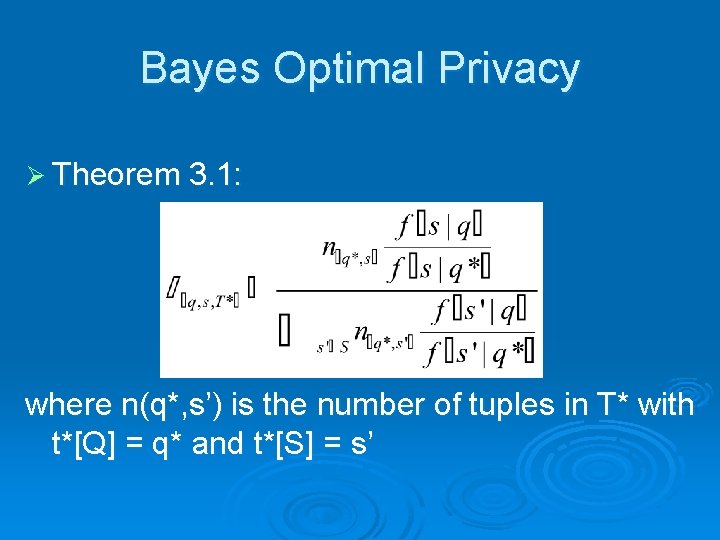

Bayes Optimal Privacy

- Theorem 3.1 gives Alice’s posterior belief in terms of f and the counts $n(q^*, s')$, where $n(q^*, s')$ is the number of tuples $t^* \in T^*$ with $t^*[Q] = q^*$ and $t^*[S] = s'$.
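For reference, Theorem 3.1 as stated in the l-diversity paper listed in the bibliography can be written as below. Here $\beta_{(q,s,T^*)}$ denotes Alice’s posterior belief, $q^*$ is the generalized value of $q$ in T*, and $f(s \mid q^*)$ is the conditional probability of the sensitive value given that the quasi-identifier generalizes to $q^*$. This notation follows the paper rather than the extracted slide text.

$$
\beta_{(q,s,T^*)} = \frac{n(q^*, s)\,\dfrac{f(s \mid q)}{f(s \mid q^*)}}{\displaystyle\sum_{s' \in S} n(q^*, s')\,\dfrac{f(s' \mid q)}{f(s' \mid q^*)}}
$$

The posterior therefore depends both on the counts of sensitive values in Bob’s q*-block and on how Alice’s background distribution f reweights them.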

Privacy principles

- Positive disclosure: the adversary can correctly identify the value of a sensitive attribute: $\exists q, s$ such that the posterior belief $\beta_{(q,s,T^*)} > 1 - \delta$ for a given $\delta$
- Negative disclosure: the adversary can correctly eliminate the value of a sensitive attribute: $\beta_{(q,s,T^*)} < \epsilon$ for a given $\epsilon$, and $\exists t \in T$ such that $t[Q] = q$ but $t[S] \ne s$

Privacy principles

- Note that not all positive and negative disclosures are bad
  - If Alice already knew that Bob has cancer, there is not much one can do!
- Uninformative principle: there should not be a large difference between the prior and posterior beliefs

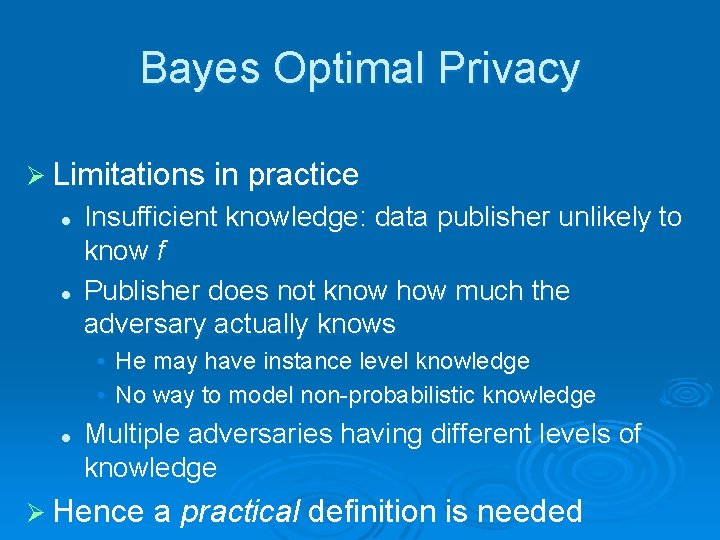

Bayes Optimal Privacy

- Limitations in practice:
  - Insufficient knowledge: the data publisher is unlikely to know f
  - The publisher does not know how much the adversary actually knows
    - The adversary may have instance-level knowledge
    - No way to model non-probabilistic knowledge
  - Multiple adversaries with different levels of knowledge
- Hence a practical definition is needed

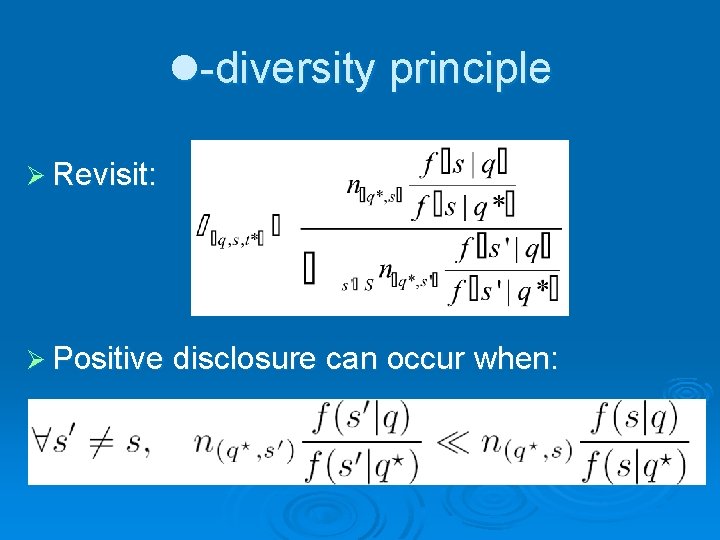

l-diversity principle

- Revisit:
- Positive disclosure can occur when:

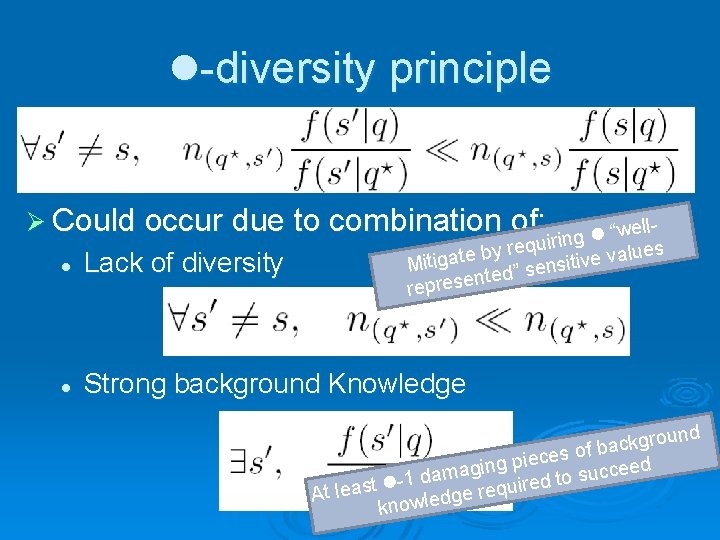

l-diversity principle

- Could occur due to a combination of:
  - Lack of diversity: mitigate by requiring “l well represented” sensitive values
  - Strong background knowledge: at least l-1 damaging pieces of background knowledge are required to succeed

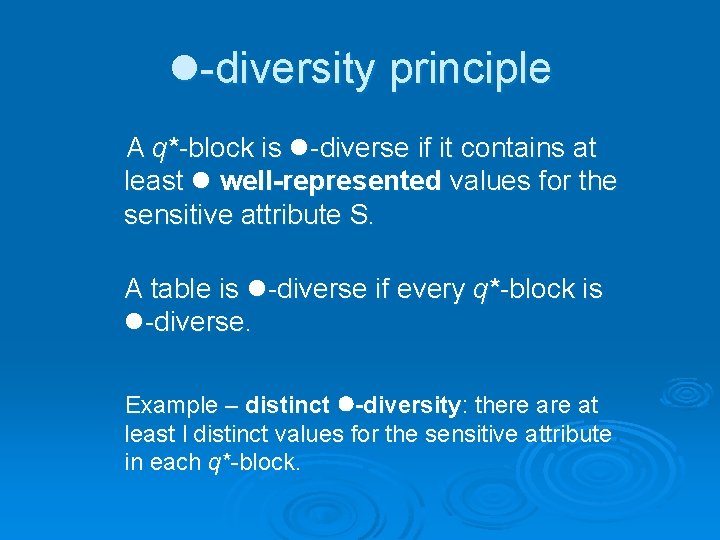

l-diversity principle

A q*-block is l-diverse if it contains at least l well-represented values for the sensitive attribute S. A table is l-diverse if every q*-block is l-diverse.

Example – distinct l-diversity: there are at least l distinct values for the sensitive attribute in each q*-block.
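Distinct l-diversity, the simplest reading of "well-represented", can be checked by counting distinct sensitive values per q*-block. The sketch below is an illustration rather than code from the talk; the column names are hypothetical, and the rows reproduce the 4-anonymized example table from the earlier slides.

```python
from collections import defaultdict

def distinct_l(rows, quasi_identifier, sensitive):
    """Largest l for which the table is distinct l-diverse (table must be non-empty)."""
    blocks = defaultdict(set)
    for row in rows:
        key = tuple(row[a] for a in quasi_identifier)  # the q*-block of this row
        blocks[key].add(row[sensitive])
    # The table is only as diverse as its least diverse q*-block.
    return min(len(values) for values in blocks.values())

def block(zip_code, age, conditions):
    return [{"zip": zip_code, "age": age, "condition": c} for c in conditions]

table = (
    block("130**", "<30", ["Heart Disease", "Heart Disease",
                           "Viral Infection", "Viral Infection"])
    + block("1485*", ">=40", ["Cancer", "Heart Disease",
                              "Viral Infection", "Viral Infection"])
    + block("130**", "3*", ["Cancer", "Cancer", "Cancer", "Cancer"])
)

print(distinct_l(table, ("zip", "age"), "condition"))
```

On this table the result is 1: the block with Age 3* contains only Cancer, which is exactly the homogeneity that exposed Bob.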

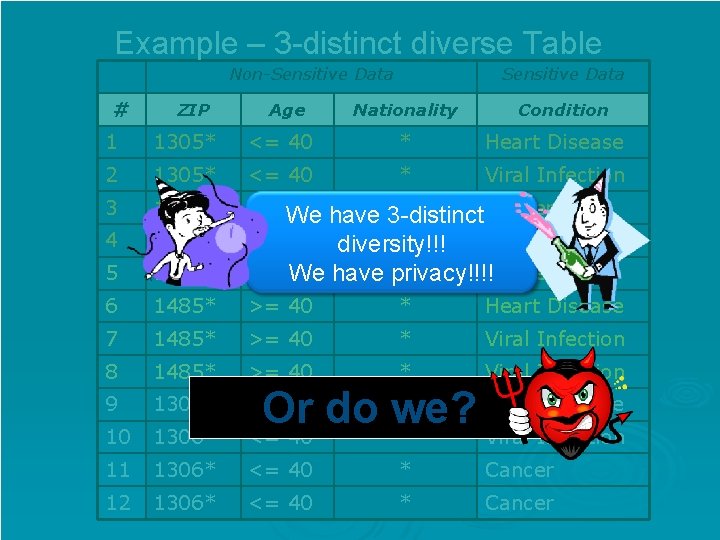

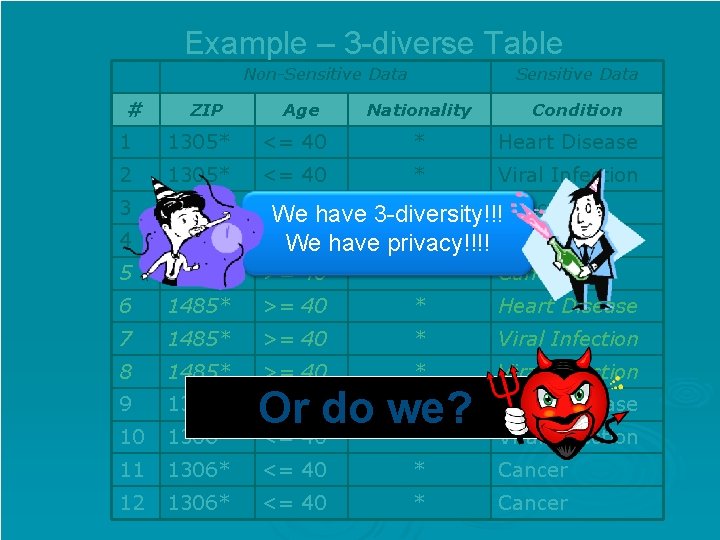

Example – 3-distinct diverse Table

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 1305* | ≤ 40 | * | Heart Disease |
| 2 | 1305* | ≤ 40 | * | Viral Infection |
| 3 | 1305* | ≤ 40 | * | Cancer |
| 4 | 1305* | ≤ 40 | * | Cancer |
| 5 | 1485* | ≥ 40 | * | Cancer |
| 6 | 1485* | ≥ 40 | * | Heart Disease |
| 7 | 1485* | ≥ 40 | * | Viral Infection |
| 8 | 1485* | ≥ 40 | * | Viral Infection |
| 9 | 1306* | ≤ 40 | * | Heart Disease |
| 10 | 1306* | ≤ 40 | * | Viral Infection |
| 11 | 1306* | ≤ 40 | * | Cancer |
| 12 | 1306* | ≤ 40 | * | Cancer |

We have 3-distinct diversity!!! We have privacy!!!!

Or do we?

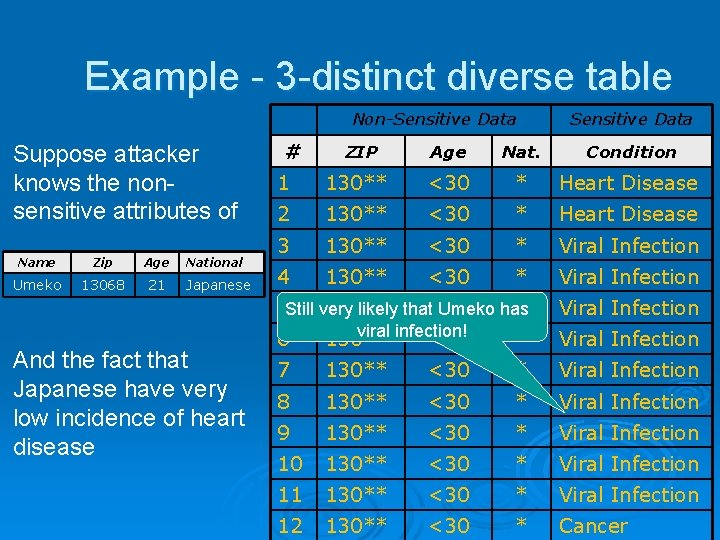

Example - 3-distinct diverse table

Suppose the attacker knows the non-sensitive attributes of:

| Name | Zip | Age | Nationality |
|---|---|---|---|
| Umeko | 13068 | 21 | Japanese |

And the fact that Japanese have a very low incidence of heart disease.

| # | ZIP | Age | Nat. | Condition |
|---|---|---|---|---|
| 1 | 130** | <30 | * | Heart Disease |
| 2 | 130** | <30 | * | Heart Disease |
| 3 | 130** | <30 | * | Viral Infection |
| 4 | 130** | <30 | * | Viral Infection |
| 5 | 130** | <30 | * | Viral Infection |
| 6 | 130** | <30 | * | Viral Infection |
| 7 | 130** | <30 | * | Viral Infection |
| 8 | 130** | <30 | * | Viral Infection |
| 9 | 130** | <30 | * | Viral Infection |
| 10 | 130** | <30 | * | Viral Infection |
| 11 | 130** | <30 | * | Viral Infection |
| 12 | 130** | <30 | * | Cancer |

Still very likely that Umeko has a viral infection!

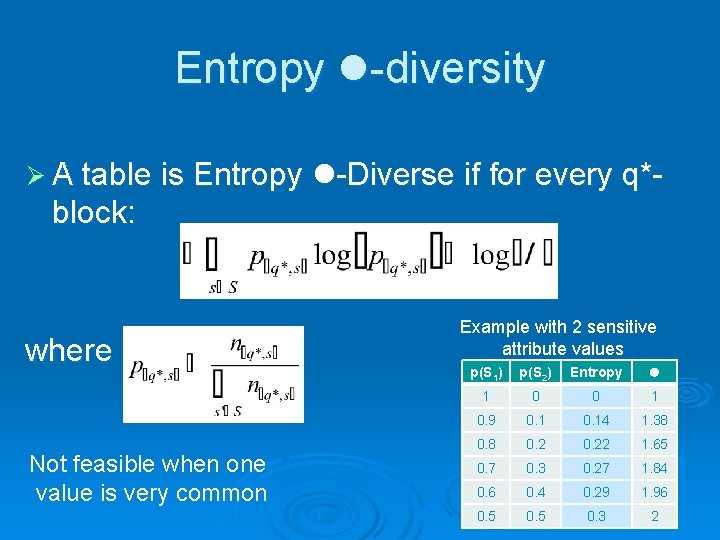

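Entropy l-diversity

- A table is entropy l-diverse if for every q*-block:
  $-\sum_{s \in S} p(q^*, s) \log p(q^*, s) \ge \log(l)$
  where $p(q^*, s)$ is the fraction of tuples in the q*-block with sensitive value $s$.
- Not feasible when one value is very common

Example with 2 sensitive attribute values (the entropy column uses base-10 logarithms):

| p(S1) | p(S2) | Entropy | l |
|---|---|---|---|
| 1 | 0 | 0 | 1 |
| 0.9 | 0.1 | 0.14 | 1.38 |
| 0.8 | 0.2 | 0.22 | 1.65 |
| 0.7 | 0.3 | 0.27 | 1.84 |
| 0.6 | 0.4 | 0.29 | 1.96 |
| 0.5 | 0.5 | 0.3 | 2 |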
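The l column of the example can be reproduced by exponentiating the entropy of a block's sensitive values. The following is a minimal sketch under the assumption that a block is entropy l-diverse exactly when this value is at least l; the function name `entropy_l` is made up for the illustration. Natural logarithms are used here, which changes the entropy figure but not l, as long as the same base is used for the exponentiation.

```python
import math
from collections import Counter

def entropy_l(sensitive_values):
    """exp(Shannon entropy) of the sensitive values in one q*-block.

    The block satisfies entropy l-diversity for every l up to this value.
    """
    counts = Counter(sensitive_values)
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return math.exp(entropy)

# Blocks of ten records with two sensitive values, as in the example table.
for n_first in (10, 9, 8, 7, 6, 5):
    values = ["S1"] * n_first + ["S2"] * (10 - n_first)
    print(n_first / 10, round(entropy_l(values), 2))
```

A block with a single sensitive value yields l = 1, and a 50/50 split yields l = 2, which is the maximum for two values.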

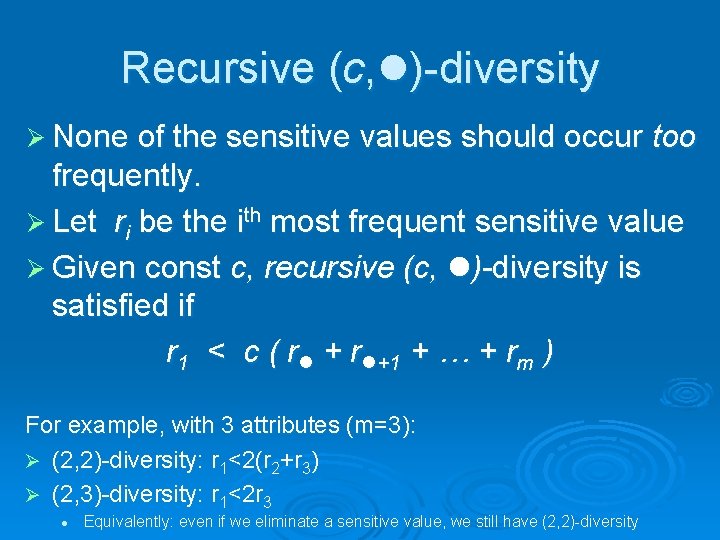

Recursive (c, l)-diversity

- None of the sensitive values should occur too frequently.
- Let $r_i$ be the number of occurrences of the i-th most frequent sensitive value in the q*-block
- Given a constant c, recursive (c, l)-diversity is satisfied if $r_1 < c\,(r_l + r_{l+1} + \dots + r_m)$

For example, with 3 sensitive values (m = 3):

- (2, 2)-diversity: $r_1 < 2(r_2 + r_3)$
- (2, 3)-diversity: $r_1 < 2 r_3$
  - Equivalently: even if we eliminate a sensitive value, we still have (2, 2)-diversity
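The inequality above translates almost directly into code. This is a sketch for a single q*-block, assuming the block is given as a list of sensitive values; the function name is hypothetical.

```python
from collections import Counter

def is_recursive_cl_diverse(sensitive_values, c, l):
    """Check r_1 < c * (r_l + r_{l+1} + ... + r_m) for one q*-block."""
    # r[0] is the count of the most frequent value, r[1] the next, and so on.
    r = sorted(Counter(sensitive_values).values(), reverse=True)
    if len(r) < l:
        return False  # fewer than l distinct values: the tail sum is empty
    return r[0] < c * sum(r[l - 1:])

block = ["Cancer", "Cancer", "Heart Disease", "Viral Infection"]  # r = [2, 1, 1]
print(is_recursive_cl_diverse(block, c=2, l=2))  # 2 < 2 * (1 + 1)
print(is_recursive_cl_diverse(block, c=2, l=3))  # 2 < 2 * 1 does not hold
```

Raising c loosens the requirement, while raising l tightens it because fewer of the rare values remain in the tail sum.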

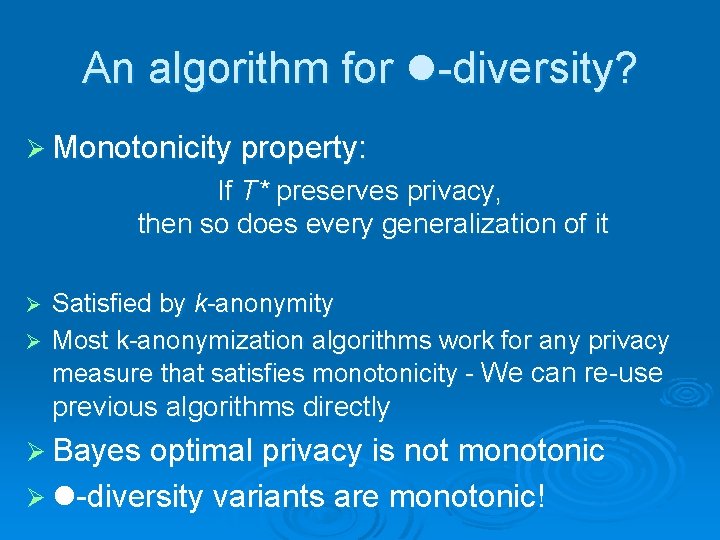

An algorithm for l-diversity?

- Monotonicity property: if T* preserves privacy, then so does every generalization of it
  - Satisfied by k-anonymity
- Most k-anonymization algorithms work for any privacy measure that satisfies monotonicity, so we can re-use previous algorithms directly
- Bayes optimal privacy is not monotonic
- l-diversity variants are monotonic!

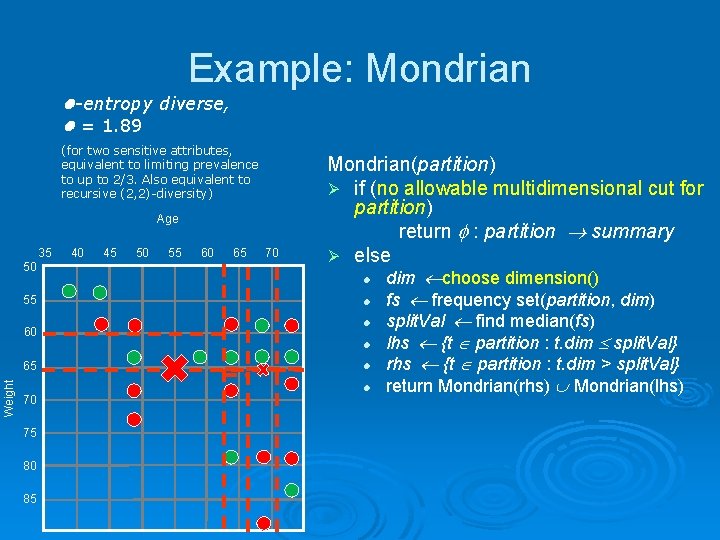

Example: Mondrian

l-entropy diverse, l = 1.89 (for two sensitive values, equivalent to limiting prevalence to at most 2/3; also equivalent to recursive (2, 2)-diversity).

[Figure: records plotted by Age and Weight, partitioned by Mondrian cuts]

Mondrian(partition)

- if (no allowable multidimensional cut for partition): return φ: partition → summary
- else
  - dim ← choose_dimension()
  - fs ← frequency_set(partition, dim)
  - splitVal ← find_median(fs)
  - lhs ← {t ∈ partition : t.dim ≤ splitVal}
  - rhs ← {t ∈ partition : t.dim > splitVal}
  - return Mondrian(rhs) ∪ Mondrian(lhs)
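The pseudocode can be turned into a small runnable sketch. Several details below are assumptions made for the illustration and are not taken from the slide: the dimension heuristic (widest value range first, with a fallback to the remaining dimensions), the use of the lower median, the record layout, and the toy data. The privacy test is passed in as a predicate, which reflects the point that any monotonic measure can be plugged in.

```python
import math
from collections import Counter

def entropy_l(partition, sensitive="s"):
    """exp(Shannon entropy) of the sensitive values in one partition."""
    counts = Counter(t[sensitive] for t in partition)
    total = sum(counts.values())
    return math.exp(-sum(c / total * math.log(c / total) for c in counts.values()))

def mondrian(partition, dims, is_allowed):
    """Split recursively at the median; return the list of final partitions."""
    def spread(dim):
        values = [t[dim] for t in partition]
        return max(values) - min(values)

    # choose_dimension: widest range first (assumed heuristic); other
    # dimensions are tried if that cut is not allowable.
    for dim in sorted(dims, key=spread, reverse=True):
        values = sorted(t[dim] for t in partition)
        split_val = values[(len(values) - 1) // 2]  # lower median
        lhs = [t for t in partition if t[dim] <= split_val]
        rhs = [t for t in partition if t[dim] > split_val]
        # A cut is allowable only if both sides still satisfy the privacy test.
        if lhs and rhs and is_allowed(lhs) and is_allowed(rhs):
            return mondrian(lhs, dims, is_allowed) + mondrian(rhs, dims, is_allowed)
    return [partition]  # no allowable cut: this partition becomes one q*-block

# Hypothetical records: (age, weight, sensitive value).
raw = [(35, 50, "A"), (40, 80, "B"), (45, 55, "B"), (50, 75, "A"),
       (55, 60, "A"), (60, 70, "B"), (65, 65, "A"), (70, 85, "B")]
records = [{"age": a, "weight": w, "s": s} for a, w, s in raw]

blocks = mondrian(records, ("age", "weight"), lambda p: entropy_l(p) >= 1.89)
for b in blocks:
    print([(t["age"], t["weight"], t["s"]) for t in b])
```

Swapping the predicate for `lambda p: len(p) >= k` would give plain k-anonymity with the same partitioning code.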

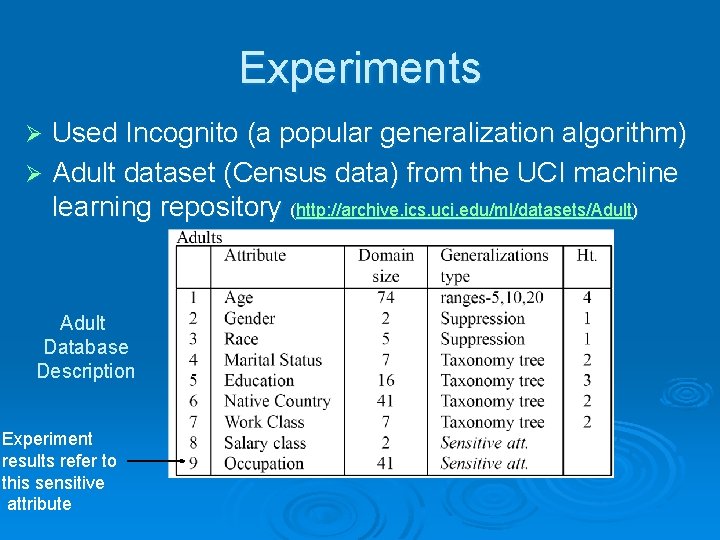

Experiments

- Used Incognito (a popular generalization algorithm)
- Adult dataset (Census data) from the UCI machine learning repository (http://archive.ics.uci.edu/ml/datasets/Adult)
- Adult Database Description: experiment results refer to this sensitive attribute

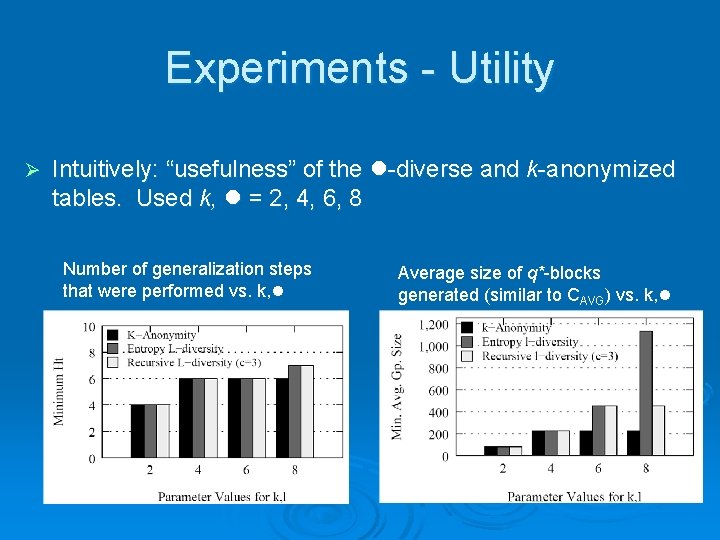

Experiments - Utility

- Intuitively: “usefulness” of the l-diverse and k-anonymized tables.
- Used k, l = 2, 4, 6, 8
- Number of generalization steps that were performed vs. k, l
- Average size of q*-blocks generated (similar to $C_{AVG}$) vs. k, l

Example – 3-diverse Table

| # | ZIP | Age | Nationality | Condition |
|---|---|---|---|---|
| 1 | 1305* | ≤ 40 | * | Heart Disease |
| 2 | 1305* | ≤ 40 | * | Viral Infection |
| 3 | 1305* | ≤ 40 | * | Cancer |
| 4 | 1305* | ≤ 40 | * | Cancer |
| 5 | 1485* | ≥ 40 | * | Cancer |
| 6 | 1485* | ≥ 40 | * | Heart Disease |
| 7 | 1485* | ≥ 40 | * | Viral Infection |
| 8 | 1485* | ≥ 40 | * | Viral Infection |
| 9 | 1306* | ≤ 40 | * | Heart Disease |
| 10 | 1306* | ≤ 40 | * | Viral Infection |
| 11 | 1306* | ≤ 40 | * | Cancer |
| 12 | 1306* | ≤ 40 | * | Cancer |

We have 3-diversity!!! We have privacy!!!!

Or do we?

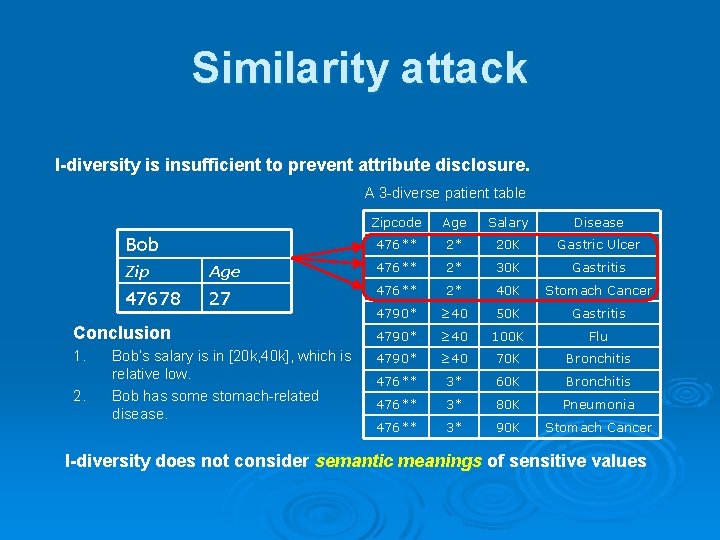

Similarity attack

l-diversity is insufficient to prevent attribute disclosure.

What the attacker knows about Bob:

| Zip | Age |
|---|---|
| 47678 | 27 |

A 3-diverse patient table:

| Zipcode | Age | Salary | Disease |
|---|---|---|---|
| 476** | 2* | 20K | Gastric Ulcer |
| 476** | 2* | 30K | Gastritis |
| 476** | 2* | 40K | Stomach Cancer |
| 4790* | ≥40 | 50K | Gastritis |
| 4790* | ≥40 | 100K | Flu |
| 4790* | ≥40 | 70K | Bronchitis |
| 476** | 3* | 60K | Bronchitis |
| 476** | 3* | 80K | Pneumonia |
| 476** | 3* | 90K | Stomach Cancer |

Conclusion:

1. Bob’s salary is in [20K, 40K], which is relatively low.
2. Bob has some stomach-related disease.

l-diversity does not consider the semantic meanings of sensitive values.

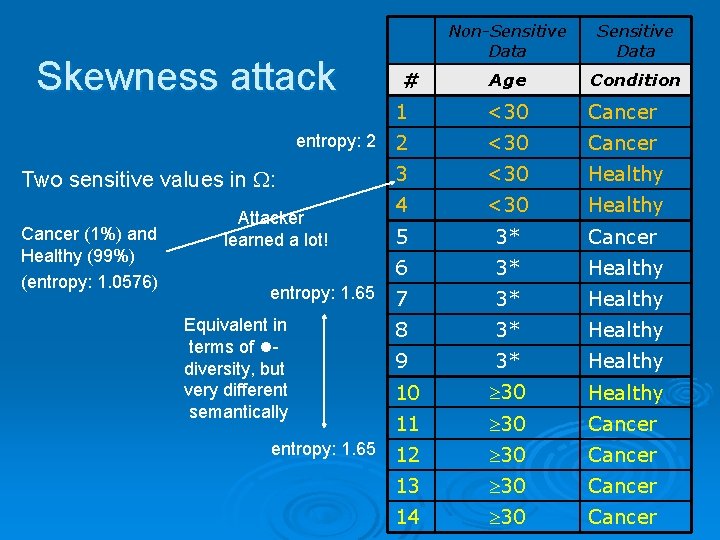

Skewness attack

Two sensitive values in the population: Cancer (1%) and Healthy (99%) (entropy l: 1.0576).

| # | Age | Condition |
|---|---|---|
| 1 | <30 | Cancer |
| 2 | <30 | Cancer |
| 3 | <30 | Healthy |
| 4 | <30 | Healthy |
| 5 | 3* | Cancer |
| 6 | 3* | Healthy |
| 7 | 3* | Healthy |
| 8 | 3* | Healthy |
| 9 | 3* | Healthy |
| 10 | 30 | Healthy |
| 11 | 30 | Cancer |
| 12 | 30 | Cancer |
| 13 | 30 | Cancer |
| 14 | 30 | Cancer |

- Rows 1-4: entropy l = 2
- Rows 5-9: entropy l = 1.65
- Rows 10-14: entropy l = 1.65

Attacker learned a lot! The last two blocks are equivalent in terms of l-diversity, but very different semantically.

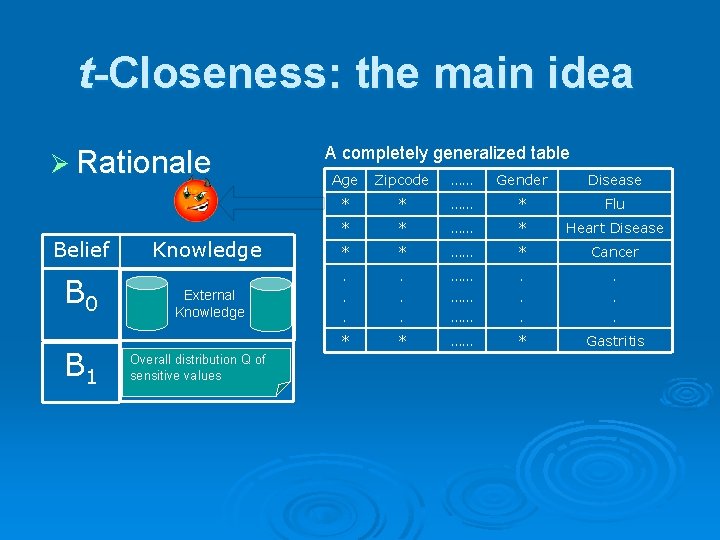

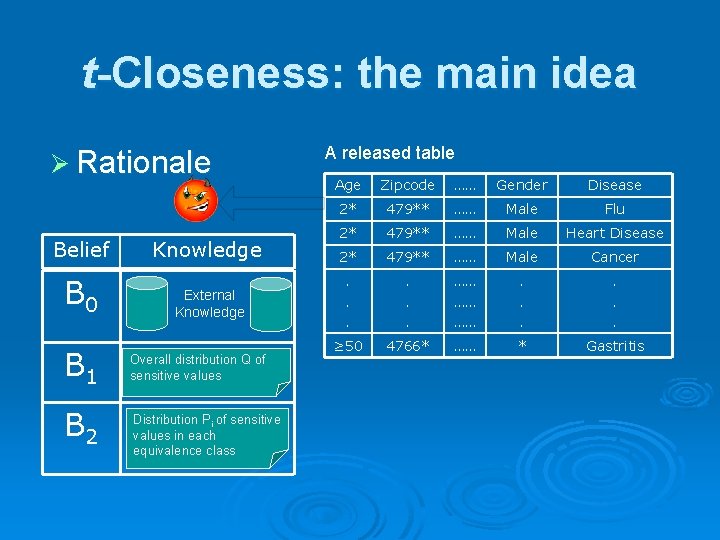

t-Closeness: the main idea

Rationale:

| Belief | Knowledge |
|---|---|
| B0 | External knowledge |
| B1 | Overall distribution Q of sensitive values |

A completely generalized table:

| Age | Zipcode | … | Gender | Disease |
|---|---|---|---|---|
| * | * | … | * | Flu |
| * | * | … | * | Heart Disease |
| * | * | … | * | Cancer |
| … | … | … | … | … |
| * | * | … | * | Gastritis |

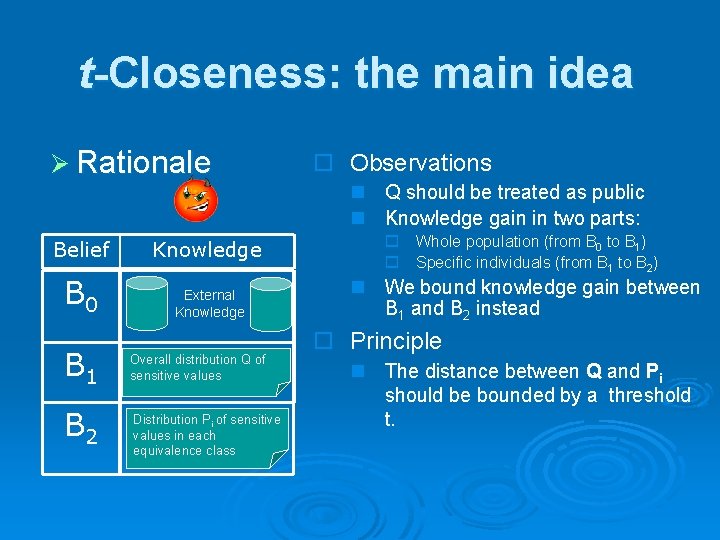

t-Closeness: the main idea

Rationale:

| Belief | Knowledge |
|---|---|
| B0 | External knowledge |
| B1 | Overall distribution Q of sensitive values |
| B2 | Distribution Pi of sensitive values in each equivalence class |

A released table:

| Age | Zipcode | … | Gender | Disease |
|---|---|---|---|---|
| 2* | 479** | … | Male | Flu |
| 2* | 479** | … | Male | Heart Disease |
| 2* | 479** | … | Male | Cancer |
| … | … | … | … | … |
| ≥50 | 4766* | … | * | Gastritis |

t-Closeness: the main idea

Rationale:

| Belief | Knowledge |
|---|---|
| B0 | External knowledge |
| B1 | Overall distribution Q of sensitive values |
| B2 | Distribution Pi of sensitive values in each equivalence class |

- Observations
  - Q should be treated as public
  - Knowledge gain comes in two parts:
    - About the whole population (from B0 to B1)
    - About specific individuals (from B1 to B2)
  - We bound the knowledge gain between B1 and B2 instead
- Principle
  - The distance between Q and Pi should be bounded by a threshold t.

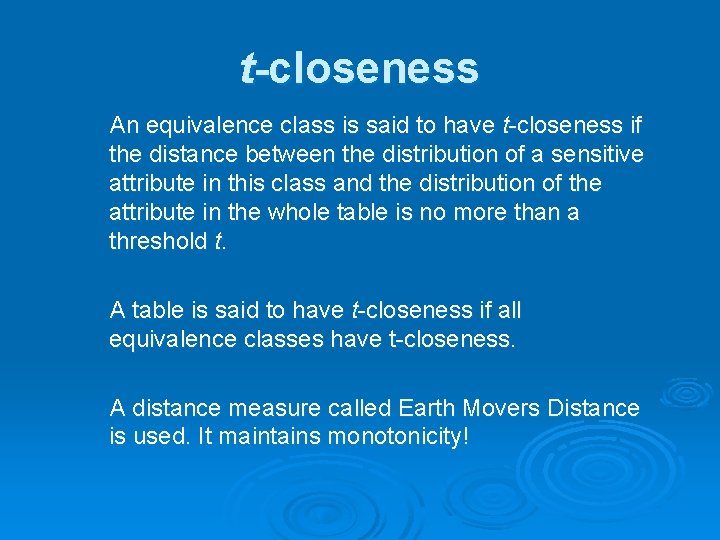

t-closeness

An equivalence class is said to have t-closeness if the distance between the distribution of a sensitive attribute in this class and the distribution of the attribute in the whole table is no more than a threshold t. A table is said to have t-closeness if all equivalence classes have t-closeness.

The distance measure used is the Earth Mover’s Distance (EMD). It maintains monotonicity!

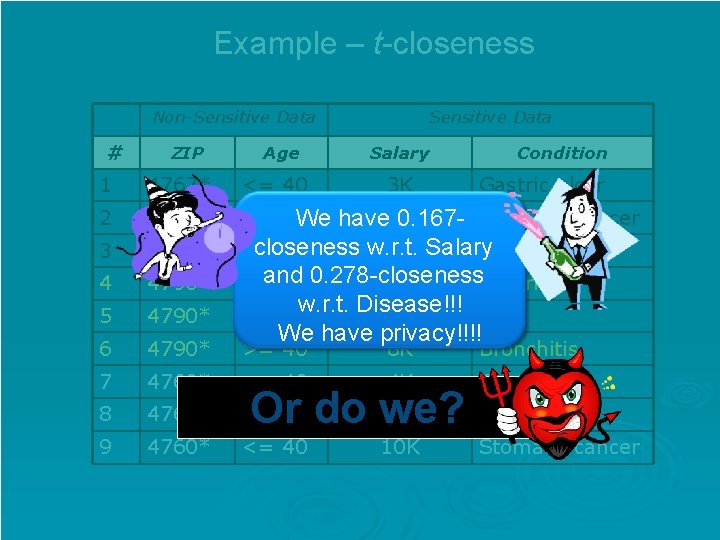

Example – t-closeness

| # | ZIP | Age | Salary | Condition |
|---|---|---|---|---|
| 1 | 4767* | ≤ 40 | 3K | Gastric ulcer |
| 2 | 4767* | ≤ 40 | 5K | Stomach cancer |
| 3 | 4767* | ≤ 40 | 9K | Pneumonia |
| 4 | 4790* | ≥ 40 | 6K | Gastritis |
| 5 | 4790* | ≥ 40 | 11K | Flu |
| 6 | 4790* | ≥ 40 | 8K | Bronchitis |
| 7 | 4760* | ≤ 40 | 4K | Gastritis |
| 8 | 4760* | ≤ 40 | 7K | Bronchitis |
| 9 | 4760* | ≤ 40 | 10K | Stomach cancer |

We have 0.167-closeness w.r.t. Salary and 0.278-closeness w.r.t. Disease!!! We have privacy!!!!

Or do we?
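The 0.167 figure for Salary can be reproduced with the ordered-distance form of the Earth Mover’s Distance that the t-closeness paper uses for numerical attributes: with $r_i = p_i - q_i$ over the m sorted attribute values, the distance is $\frac{1}{m-1}\sum_{i=1}^{m}\left|\sum_{j=1}^{i} r_j\right|$. The slides do not spell this formula out, so treat the sketch as an assumption-laden illustration; the function name is made up, and the Disease figure (which relies on a value hierarchy) is not covered.

```python
from collections import Counter

def ordered_emd(class_values, all_values):
    """EMD between a class and the whole table for an ordered attribute.

    Assumes at least two distinct values in all_values (m >= 2).
    """
    domain = sorted(set(all_values))
    p, q = Counter(class_values), Counter(all_values)
    n_p, n_q = len(class_values), len(all_values)
    cumulative = 0.0  # running sum of r_j = p_j - q_j
    total = 0.0
    for value in domain:
        cumulative += p[value] / n_p - q[value] / n_q
        total += abs(cumulative)
    return total / (len(domain) - 1)

# Salaries (in K) per equivalence class, copied from the example table.
salaries = {"4767*": [3, 5, 9], "4790*": [6, 11, 8], "4760*": [4, 7, 10]}
all_salaries = [s for values in salaries.values() for s in values]

distances = {z: ordered_emd(v, all_salaries) for z, v in salaries.items()}
t = max(distances.values())  # the table has t-closeness for this t
print({z: round(d, 3) for z, d in distances.items()}, round(t, 3))
```

The table-level t is the maximum over the equivalence classes, since every class must stay within the threshold.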

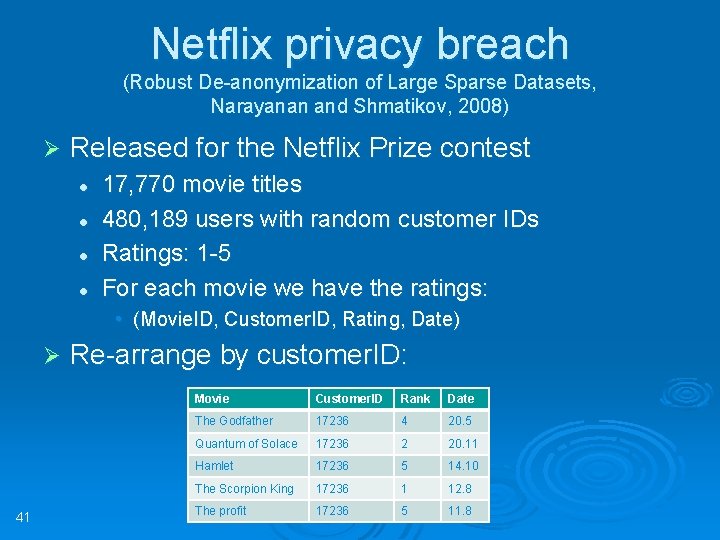

Netflix privacy breach

(Robust De-anonymization of Large Sparse Datasets, Narayanan and Shmatikov, 2008)

- Released for the Netflix Prize contest:
  - 17,770 movie titles
  - 480,189 users with random customer IDs
  - Ratings: 1-5
  - For each movie we have the ratings: (MovieID, CustomerID, Rating, Date)
- Re-arrange by CustomerID:

| Movie | CustomerID | Rank | Date |
|---|---|---|---|
| The Godfather | 17236 | 4 | 20.5 |
| Quantum of Solace | 17236 | 2 | 20.11 |
| Hamlet | 17236 | 5 | 14.10 |
| The Scorpion King | 17236 | 1 | 12.8 |
| The profit | 17236 | 5 | 11.8 |

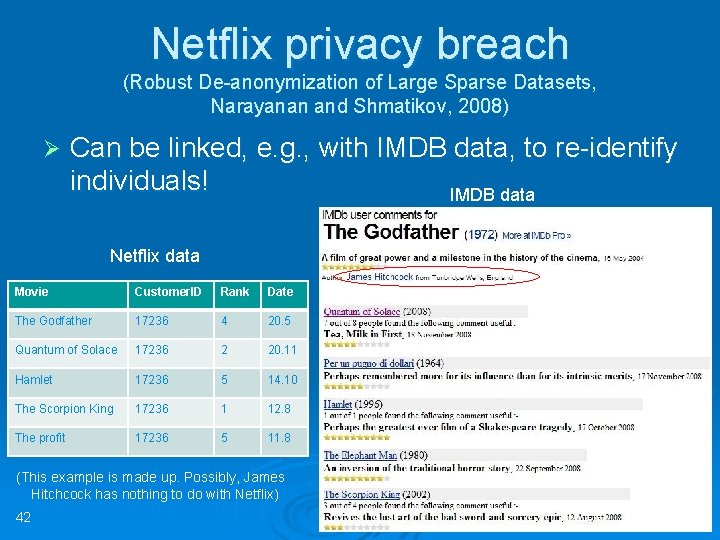

Netflix privacy breach

(Robust De-anonymization of Large Sparse Datasets, Narayanan and Shmatikov, 2008)

- Can be linked, e.g., with IMDB data, to re-identify individuals!

Netflix data:

| Movie | CustomerID | Rank | Date |
|---|---|---|---|
| The Godfather | 17236 | 4 | 20.5 |
| Quantum of Solace | 17236 | 2 | 20.11 |
| Hamlet | 17236 | 5 | 14.10 |
| The Scorpion King | 17236 | 1 | 12.8 |
| The profit | 17236 | 5 | 11.8 |

(This example is made up. Possibly, James Hitchcock has nothing to do with Netflix.)

Epilogue

“You have zero privacy anyway. Get over it.”
Scott McNealy (Sun CEO, January 1999)

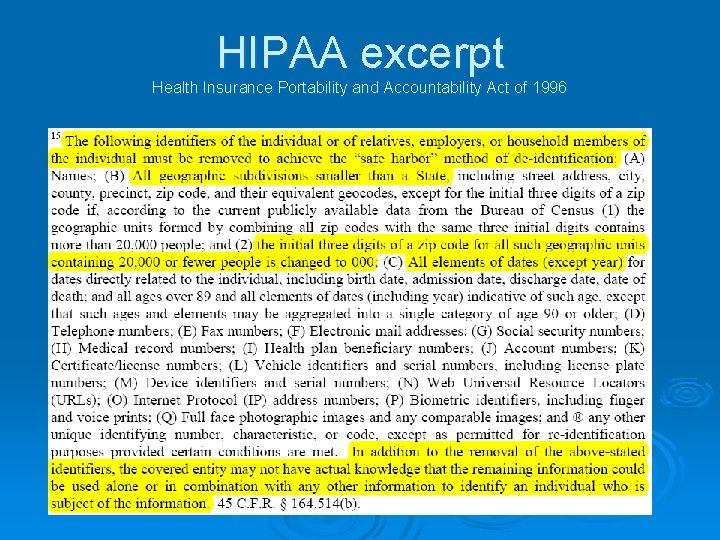

HIPAA excerpt Health Insurance Portability and Accountability Act of 1996

Thank you!

Bibliography

- “Mondrian Multidimensional k-Anonymity”, K. LeFevre, D. J. DeWitt, R. Ramakrishnan, 2006
- “l-Diversity: Privacy Beyond k-Anonymity”, A. Machanavajjhala, J. Gehrke, D. Kifer, 2006
- “t-Closeness: Privacy Beyond k-Anonymity and l-Diversity”, N. Li, T. Li, S. Venkatasubramanian, 2006
- Presentations:
  - “Privacy In Databases”, B. Aditya Prakash
  - “K-Anonymity and Other Cluster-Based Methods”, Ge Ruan
