Beyond Intuitive: Designing Human Interaction with Intelligent Systems

Alan Blackwell, Professor of Interdisciplinary Design

Overview
- Background: Interaction with Machine Learning
  - R230 module from MPhil in Advanced Computer Science (with Advait Sarkar, MSR)
  - Practical experimental class requiring individual original research studies
  - Specialist lectures in theories for intelligent user interfaces
- A little background to theory and methods in HCI research
- New perspective 1: Mixed initiative interaction
- New perspective 2: Program synthesis
- Theory, practical applications, empirical research
- Coda: Coda (intelligent tools for Africa, and African AI)

R230 course objective: “deliver 4th wave of AI”
- Four waves according to Hassabis (and with variants, many others):
  - First wave (GOFAI): Expert systems & symbolic reasoning
  - Second wave: Statistical inference
  - Third wave: Deep learning
- Our approach:
  - Fourth wave: Intelligent tools as advanced HCI
  - Including: Visualisation, Programming, Labelling, Explanation
  - Practical HCI course: Build, measure and observe

Human-Computer Interaction (HCI) - Three waves
- First wave (1980s): Efficient design for humans to achieve well-defined functions
  - Theory from Human Factors, Ergonomics and Cognitive Science / AI
- Second wave (1990s): AI models did not result in more usable machines, leading to a backlash!
  - Theory from Anthropology, Sociology and Work Psychology
- Third wave (2000s): Ubiquitous and mobile computing prioritises user experience, not efficiency
  - Theory from Art, Philosophy and Design

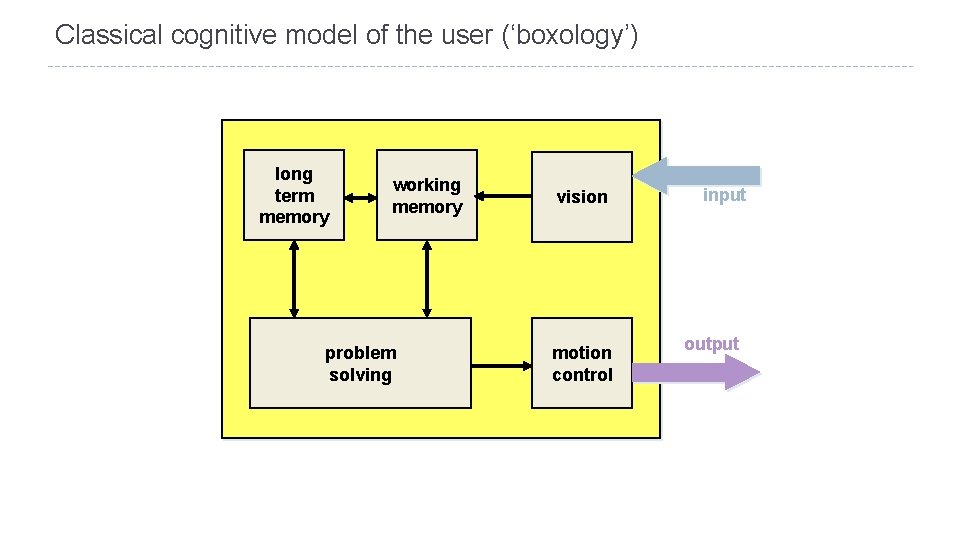

Classical cognitive model of the user (‘boxology’)
Diagram labels: long term memory, working memory, problem solving, vision, motion control, input, output

Classical engineering models of human I/O, memory, CPU
- Seeks “impedance match” of computer with computational user model
- Extend principles of human factors and ergonomics
  - Psychophysical perception
  - Speed and accuracy of movement at keystroke level
  - Reaction and decision time
- Include working memory capacity
  - 7 ± 2 ‘chunks’
  - Single visual scene
- GOFAI-planner style
  - Goals, Operators, Methods, Selection
- Are intelligent interfaces a matter of ‘cognitive ergonomics’?
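To make the keystroke-level style of modelling concrete, here is a minimal sketch that predicts task time by summing per-operator estimates. The operator times are commonly quoted textbook estimates associated with Card, Moran and Newell's Keystroke-Level Model; they, the operator string notation, and the example task are illustrative assumptions, not material from the course.

```python
# Keystroke-level sketch: predict expert task time by summing
# per-operator time estimates. Values are commonly quoted estimates,
# used here as assumptions for illustration only.
OPERATOR_SECONDS = {
    "K": 0.28,  # press a key or button (average typist)
    "P": 1.10,  # point with a mouse at a target
    "H": 0.40,  # move hands between keyboard and mouse
    "M": 1.35,  # mental preparation
}


def klm_time(operators: str) -> float:
    """Return predicted seconds for an operator sequence such as 'MHPK'."""
    return sum(OPERATOR_SECONDS[op] for op in operators)


# Hypothetical task: think, reach for mouse, point at a field, click,
# return to keyboard, think, type three characters.
task = "MHPKHMKKK"
print(f"Predicted time: {klm_time(task):.2f} s")
```

A model like this assumes a known, error-free sequence of actions, which is exactly the assumption questioned in the slides that follow.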

Classical HCI: The problem of learning
- Classical models assumed users would be made to read the manual
- In contrast, discretionary usage systems require exploratory learning models because users can (and do) walk away
- Focus on minimal instruction, immediate progress toward user goals
- Now taken for granted (but only after long battle with usability advocates)
- Cognitive walkthrough review methods allowed system designers to anticipate usability problems, based on model of situated learning rather than cognitive model of planning

Classical HCI: Wicked problems and the sticky problem of viscosity
- Many problems are “wicked” (Rittel & Webber)
  - Have no definitive formulation, stopping rule, or potential for iteration
- Deciding what to do is often harder than doing it
- But HCI models assume a ‘correct’ sequence of actions
  - Problem solving, planning, knowledge representation
- How do you change your mind if something goes wrong?
  - Many systems make it hard to change your mind

State of the art in intelligent user interfaces

Established paradigms of interacting with ML
- Perfect information games (toy worlds, chess, go, videogames)
  - Not particularly interesting – they ignore problems of context and goal formation
- Recommender systems
  - Once a major research area, now familiar - Amazon, Spotify etc
- Dialogue models: diagnostics, FAQ retrieval, interactive query refinement
  - An early example was “metaFAQ” from Cambridge company Transversal
  - But also familiar – consider usage of Google results, autocomplete, image search
- Programming by example / program synthesis
- Human-in-the-loop automation
- Turing tests – but what is the (wicked?) objective function?

Themes at Intelligent User Interfaces (IUI) conference
- Interactive labelling
- Information retrieval
- Information visualisation
- Recommender systems
- Personalisation
- Gesture recognition
- Tutoring systems
- User state recognition
- Trust

Themes in ACM TiiS (Transactions on Interactive Intelligent Systems)
- Creative arts applications
- Brain and body measurement
- Models of trust and persuasion
- Visual analytics
- Gaze and gesture interaction
- Recommender and query systems
- Affect and robot interaction

Themes of CHI 2016 workshop on Interaction with ML
- Gesture tracking
- Debugging
- ‘User state’ detection, including brain activity
- Interpreting topic models & sense-making
- Interactive visualisation / analytics
- Creativity – art and music
- Crowd-assisted data mining

New perspective 1: Mixed Initiative Interaction

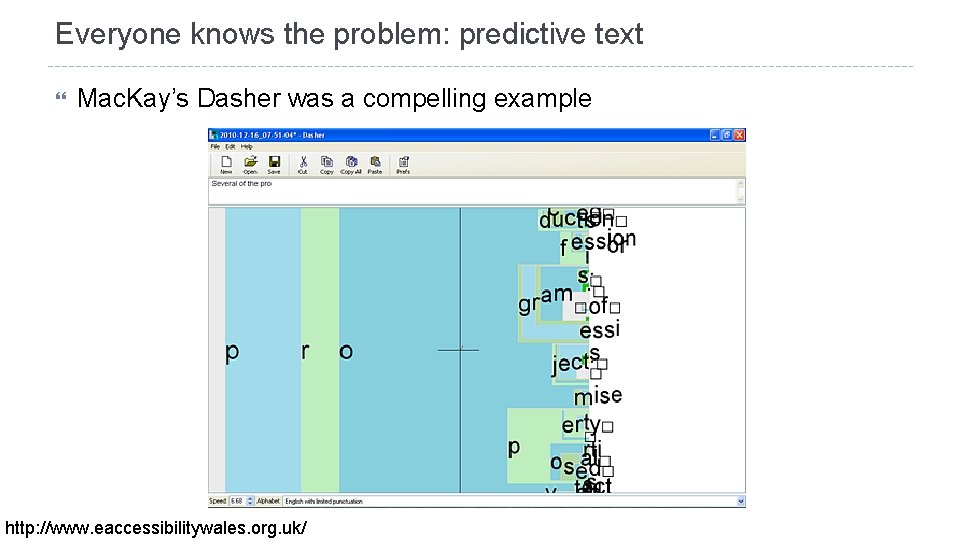

Everyone knows the problem: predictive text
- MacKay’s Dasher was a compelling example
- http://www.eaccessibilitywales.org.uk/

Principles of Mixed-Initiative User Interfaces
- Classic paper by Eric Horvitz: Principles of mixed-initiative user interfaces. In Proceedings of CHI 1999, pp. 159-166.
- Advocates elegant coupling of automated services with direct manipulation
- Autonomous actions should be taken only when an agent believes that they will have greater expected value than inaction for the user.

How to add value with automation
- Consider uncertainty about user’s goals
- Consider status of user’s attention in timing services
  - Weigh the cost/benefit of deferring action to a time when action will be less distracting
- Infer ideal action in light of costs, benefits, and uncertainties
- Employ dialog to resolve key uncertainties
  - Consider costs of bothering user needlessly
- Allow efficient direct invocation and termination
- Minimise cost of poor guesses about action and timing

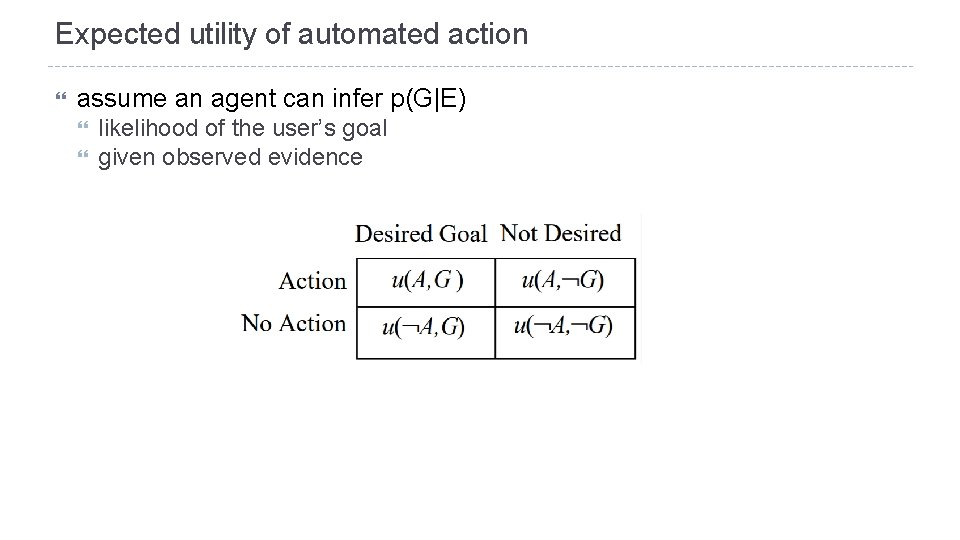

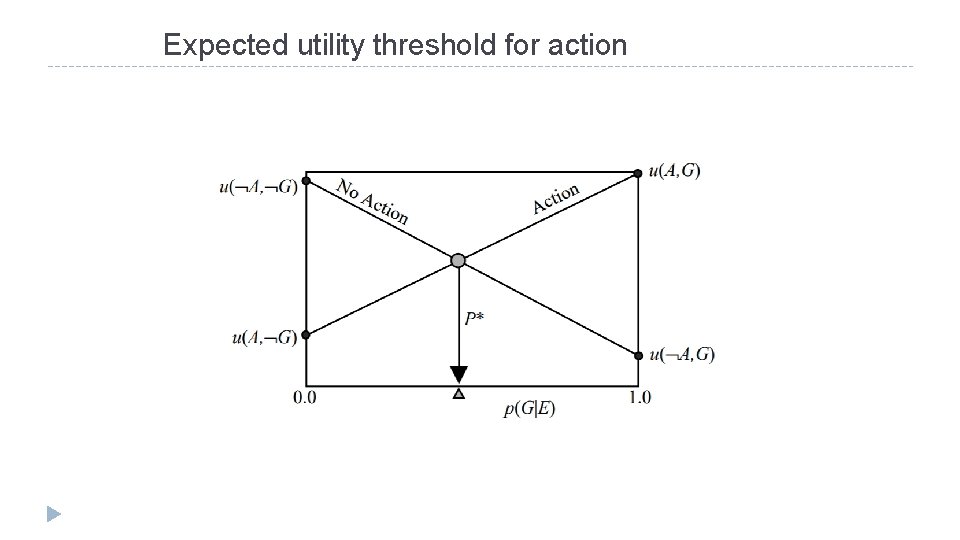

Expected utility of automated action
- Assume an agent can infer p(G|E): the likelihood of the user’s goal given observed evidence

Expected utility threshold for action
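The expected-utility comparison and its threshold can be sketched as follows. This is a minimal illustration, assuming the usual two-outcome formulation in which the user either has the goal G or does not; the `Utilities` class, the function names, and the utility values are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass


@dataclass
class Utilities:
    """Hypothetical utilities for acting (A) or not, with or without goal G."""
    act_goal: float      # u(A, G): automation helps a user who wanted it
    act_no_goal: float   # u(A, not G): automation intrudes on a user who did not
    wait_goal: float     # u(not A, G): user wanted help but got none
    wait_no_goal: float  # u(not A, not G): correctly left alone


def eu_act(p_goal: float, u: Utilities) -> float:
    """Expected utility of acting, given p(G|E)."""
    return p_goal * u.act_goal + (1.0 - p_goal) * u.act_no_goal


def eu_wait(p_goal: float, u: Utilities) -> float:
    """Expected utility of not acting, given p(G|E)."""
    return p_goal * u.wait_goal + (1.0 - p_goal) * u.wait_no_goal


def threshold(u: Utilities) -> float:
    """p(G|E) at which acting and not acting have equal expected utility.

    Assumes acting is better when the goal holds and worse when it does not,
    so the denominator is positive.
    """
    gain = u.act_goal - u.wait_goal        # benefit of acting if G holds
    loss = u.wait_no_goal - u.act_no_goal  # cost of acting if G does not hold
    return loss / (gain + loss)


u = Utilities(act_goal=1.0, act_no_goal=-1.0, wait_goal=0.0, wait_no_goal=0.5)
for p in (0.3, 0.9):
    decision = "act" if eu_act(p, u) > eu_wait(p, u) else "do not act"
    print(f"p(G|E)={p}: {decision} (threshold {threshold(u):.2f})")
```

Raising the cost of a wrong guess (a more negative `act_no_goal`) pushes the threshold up, so the agent needs more evidence before acting autonomously.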

What does this look like if text entry becomes text commands?
- User: This machine can do a whole bunch of stuff. What is most likely to make it do the right stuff?
- Machine: I know how to do several things. I wonder which one the user wants me to do?
- User: I think the machine has made a mistake
- Machine: I think the user has made a mistake

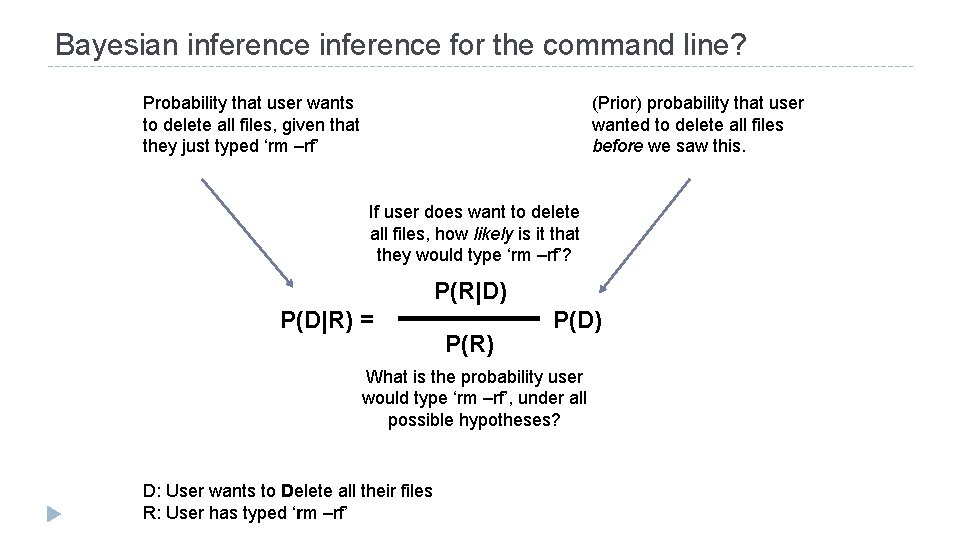

Bayesian inference for the command line?
- D: User wants to Delete all their files
- R: User has typed ‘rm -rf’

$$P(D \mid R) = \frac{P(R \mid D)\,P(D)}{P(R)}$$

- P(D|R): probability that user wants to delete all files, given that they just typed ‘rm -rf’
- P(D): (prior) probability that user wanted to delete all files before we saw this
- P(R|D): if user does want to delete all files, how likely is it that they would type ‘rm -rf’?
- P(R): what is the probability user would type ‘rm -rf’, under all possible hypotheses?
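A worked instance of this Bayesian reading of the command line, as a minimal sketch. It collapses “all possible hypotheses” into just two (the user wants to delete everything, or does not), and every probability below is a made-up number for illustration.

```python
def posterior(prior_d: float, p_r_given_d: float, p_r_given_not_d: float) -> float:
    """Return P(D|R) by Bayes' rule.

    P(R) is expanded over two hypotheses, D and not-D, which is a
    simplifying assumption of this sketch.
    """
    p_r = p_r_given_d * prior_d + p_r_given_not_d * (1.0 - prior_d)
    return p_r_given_d * prior_d / p_r


# Hypothetical values, not measured data:
prior_d = 0.001          # P(D): users rarely want to delete all their files
p_r_given_d = 0.5        # P(R|D): if they do, 'rm -rf' is a likely command
p_r_given_not_d = 0.01   # P(R|not D): typed by mistake or with another intent

p = posterior(prior_d, p_r_given_d, p_r_given_not_d)
print(f"P(D|R) = {p:.3f}")
```

With a low prior, the posterior stays small even when the command is a strong signal, which is the kind of estimate a mixed-initiative system would compare against its threshold for acting.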

… but we don’t want this!

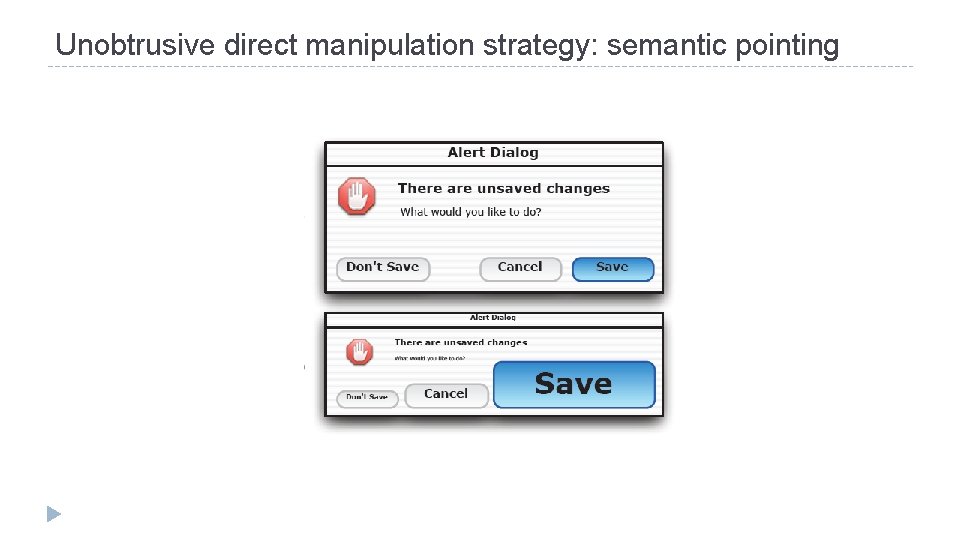

Unobtrusive direct manipulation strategy: semantic pointing

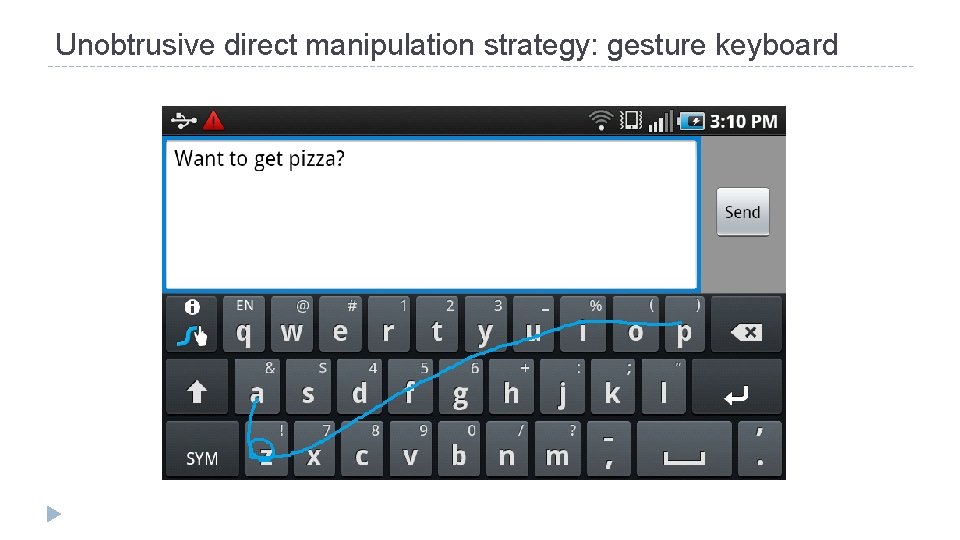

Unobtrusive direct manipulation strategy: gesture keyboard

Research questions: Agency and Control

The golden rules of HCI: rule #7
- “Support an internal locus of control”
- This rule is based on the observation that: “Users strongly desire the sense that they are in charge of the system and that the system responds to their actions.”
- Shneiderman, B. & Plaisant, C. 2009. Designing the User Interface: Strategies for Effective Human-Computer Interaction.

The experience of agency is defined as:
- The experience of controlling one’s own actions and, through this control, affecting the external world.
- It is the experience of ourselves as agents that allows us to instinctively say: “I did that”
- Haggard, P. & Tsakiris, M., The Experience of Agency: Feelings, Judgments, and Responsibility. Current Directions in Psychological Science, 2009.

When experience of agency fails
- Passivity phenomena in schizophrenia
- People feel that their actions - and sometimes their thoughts and emotions - are not under their own control. Rather they are under the control of some external force or agent.
- e.g. a patient with schizophrenia says: “It is my hand and arm that move, and my fingers pick up the pen, but I don’t control them.”
- Mellor, C. S., First rank symptoms of schizophrenia. Br J Psychiatry, 1970.

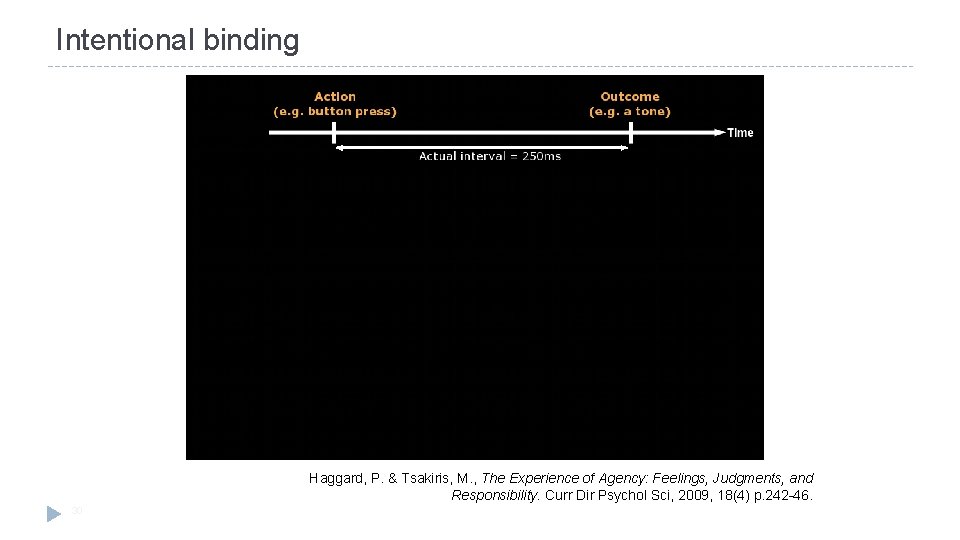

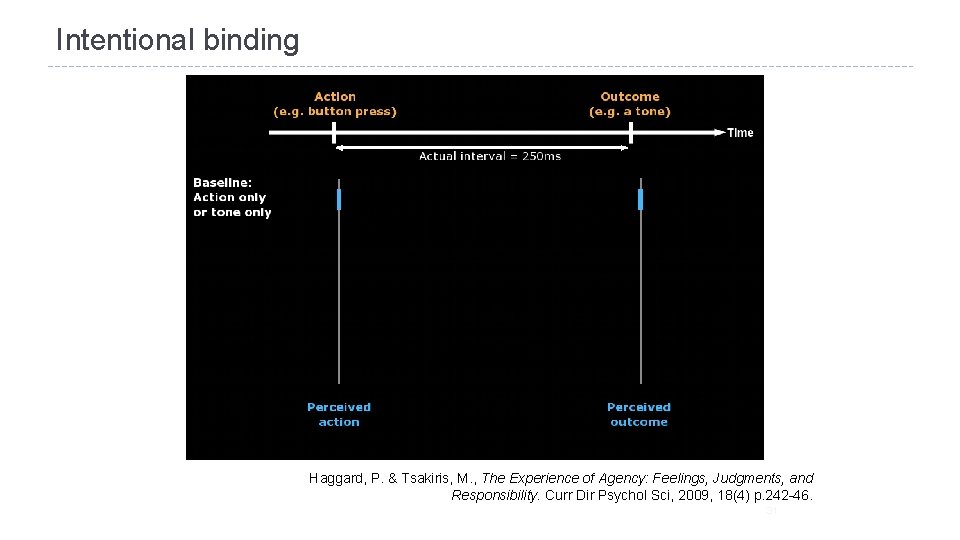

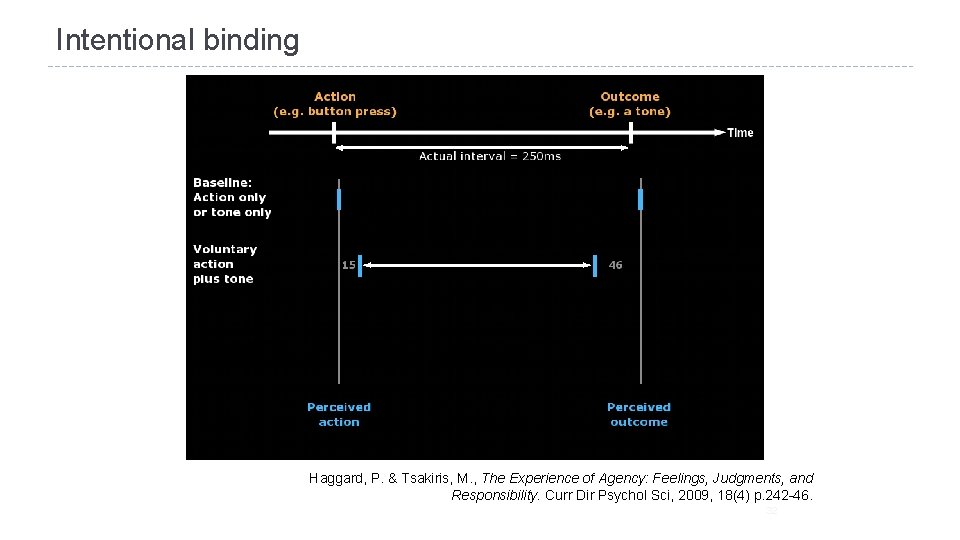

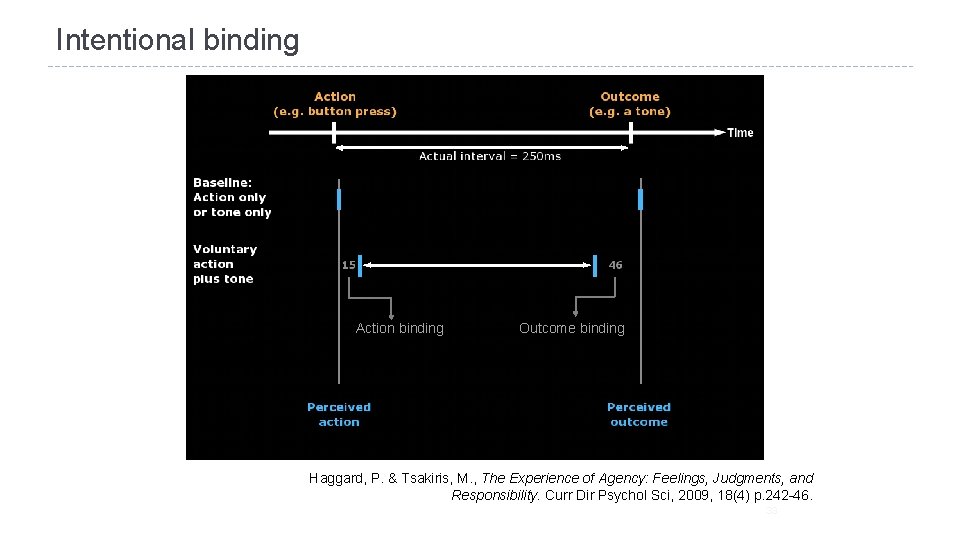

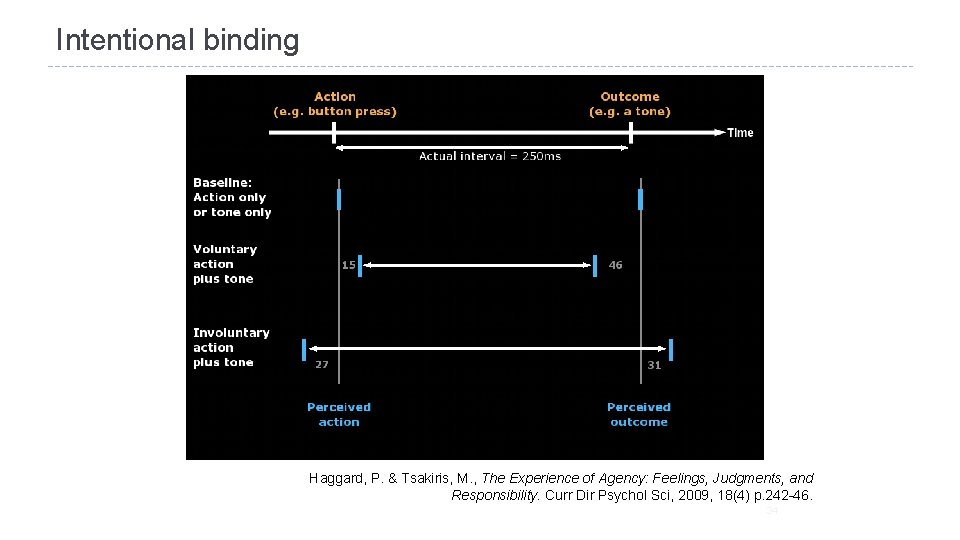

From psychiatric observation to empirical science
- We need some kind of metric to measure experience of agency
- We can use the “intentional binding” effect: An illusion that distorts perception of time, for events that result from one’s own actions

Intentional binding
- Haggard, P. & Tsakiris, M., The Experience of Agency: Feelings, Judgments, and Responsibility. Curr Dir Psychol Sci, 2009, 18(4), pp. 242-46.

Intentional binding: action binding and outcome binding

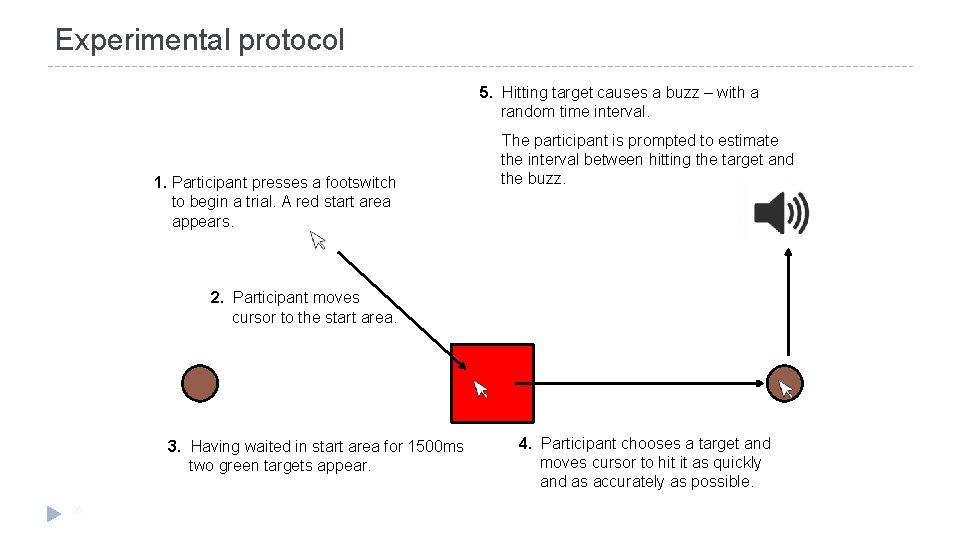

Experimental protocol
1. Participant presses a footswitch to begin a trial. A red start area appears.
2. Participant moves cursor to the start area.
3. After the participant has waited in the start area for 1500 ms, two green targets appear.
4. Participant chooses a target and moves cursor to hit it as quickly and as accurately as possible.
5. Hitting target causes a buzz – with a random time interval.
6. The participant is prompted to estimate the interval between hitting the target and the buzz.

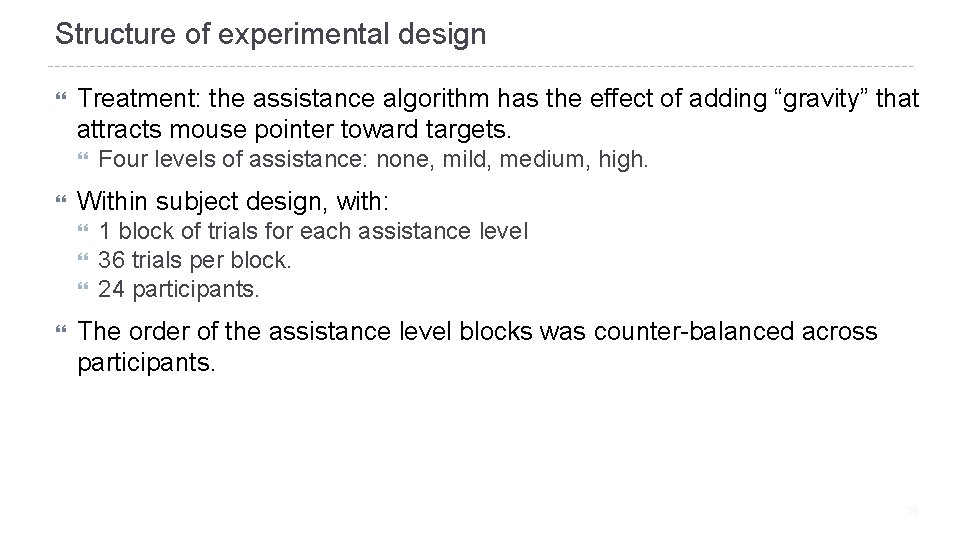

Structure of experimental design
- Treatment: the assistance algorithm has the effect of adding “gravity” that attracts mouse pointer toward targets.
- Within-subject design, with:
  - Four levels of assistance: none, mild, medium, high
  - 1 block of trials for each assistance level
  - 36 trials per block
  - 24 participants
- The order of the assistance level blocks was counterbalanced across participants.
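One way to counterbalance block order for this design can be sketched as below. The slides state only that order was counterbalanced; assigning each of the 24 participants a distinct permutation of the four levels (4! = 24) is an assumption of this sketch, and a Latin square would be another reasonable reading.

```python
from itertools import permutations

LEVELS = ("none", "mild", "medium", "high")
N_PARTICIPANTS = 24
TRIALS_PER_BLOCK = 36


def block_orders(levels=LEVELS, n_participants=N_PARTICIPANTS):
    """Assign each participant one ordering of the assistance levels.

    With four levels there are 4! = 24 orderings, so 24 participants
    can each receive a different one (full counterbalancing, assumed here).
    """
    orders = list(permutations(levels))
    return {pid: orders[pid % len(orders)] for pid in range(n_participants)}


schedule = block_orders()
print("Participant 0 block order:", schedule[0])
print("Trials per participant:", len(LEVELS) * TRIALS_PER_BLOCK)
```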

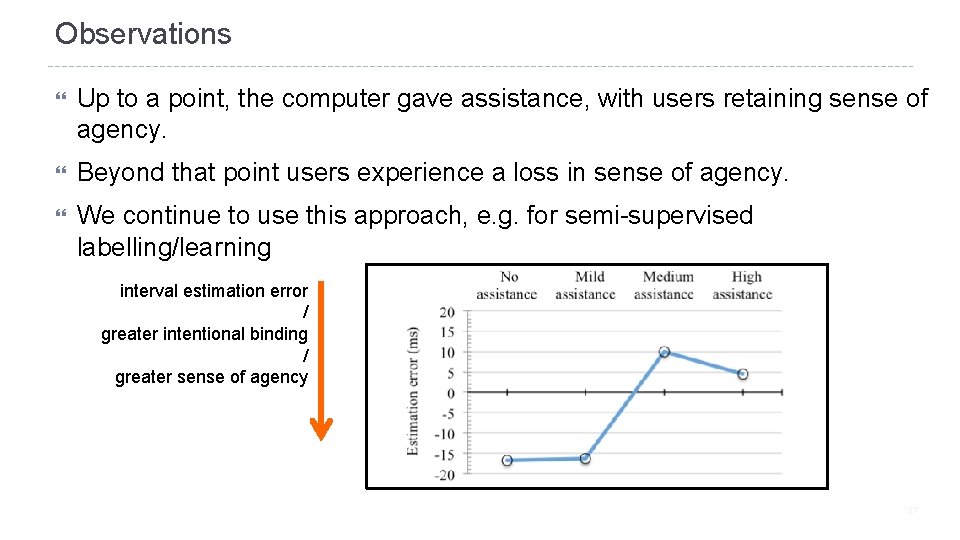

Observations
- Up to a point, the computer gave assistance, with users retaining sense of agency.
- Beyond that point users experience a loss in sense of agency.
- We continue to use this approach, e.g. for semi-supervised labelling/learning
- Chart labels: interval estimation error; greater intentional binding; greater sense of agency
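The agency measure used in this kind of study can be sketched as a mean interval estimation error per assistance level. The trial data below are invented, and the sign convention (estimated minus actual, so that underestimation is negative and is read as stronger binding) is an assumption of this sketch rather than a detail taken from the slides.

```python
from statistics import mean


def estimation_errors(trials):
    """Mean interval estimation error (estimated - actual, in ms) per level.

    trials: iterable of (assistance_level, actual_ms, estimated_ms).
    More negative values mean the interval felt shorter than it was,
    which this sketch treats as stronger intentional binding.
    """
    by_level = {}
    for level, actual_ms, estimated_ms in trials:
        by_level.setdefault(level, []).append(estimated_ms - actual_ms)
    return {level: mean(errors) for level, errors in by_level.items()}


# Made-up trials for illustration only.
trials = [
    ("none", 400, 310), ("none", 700, 640),
    ("high", 400, 420), ("high", 700, 690),
]
for level, error in estimation_errors(trials).items():
    print(f"{level}: mean estimation error {error} ms")
```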

New perspective 2: Program Synthesis

Principles of program synthesis, from HCI perspective
- The user experience of ML-based synthesis:
  - The user says: “Here is an example of what I want to do”
  - Followed by: “You do the rest”
  - System response: “OK, I’ll do others the same way”
- How does it know what “others” are?
- How does it know what “the same way” is?
- Usability issues:
  - How to specify applicability?
  - How to control generalisation?
  - How to understand what was inferred?
  - How to modify the synthesised program?

Classic programming by example
- Keyboard macros – demo in Emacs
- Get a plain text file containing semi-structured text
- <Ctrl+x> ( starts macro recording
- Perhaps search for context, cut and paste, add text …
- Remember to go to known location (e.g. start of next line)
- <Ctrl+x> ) ends recording
- <Ctrl+x> e plays back once
- <ESC> 1 0 0 <Ctrl+x> e repeats 100 times

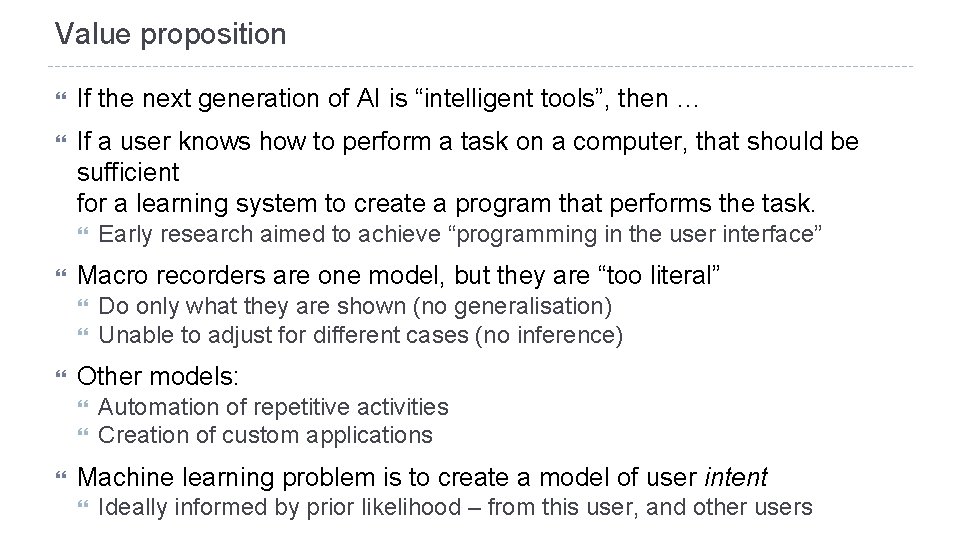

Value proposition
- If the next generation of AI is “intelligent tools”, then …
- If a user knows how to perform a task on a computer, that should be sufficient for a learning system to create a program that performs the task.
- Macro recorders are one model, but they are “too literal”
  - Do only what they are shown (no generalisation)
  - Unable to adjust for different cases (no inference)
- Other models: Early research aimed to achieve “programming in the user interface”
  - Automation of repetitive activities
  - Creation of custom applications
- Machine learning problem is to create a model of user intent
  - Ideally informed by prior likelihood – from this user, and other users
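The sense in which a macro recorder is “too literal” can be shown with a small sketch. The macro format, the `replay` function, and the sample lines are all hypothetical; the point is only that the recorded steps are replayed exactly, with no generalisation or inference.

```python
def record_macro():
    """A 'recorded' macro: literal edit steps from one demonstration.

    Hypothetically, the user deleted the six characters 'From: ' at the
    start of the first line.
    """
    return [("delete_chars", 6)]


def replay(macro, line: str) -> str:
    """Replay the recorded steps on a line exactly as they were shown."""
    for op, arg in macro:
        if op == "delete_chars":
            line = line[arg:]
    return line


macro = record_macro()
print(repr(replay(macro, "From: alice")))  # the demonstrated case
print(repr(replay(macro, "Sender: bob")))  # a case the macro cannot adjust to
```

The second line loses the wrong characters because the macro knows “delete six characters”, not “delete the header label”, which is the gap a model of user intent is meant to close.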

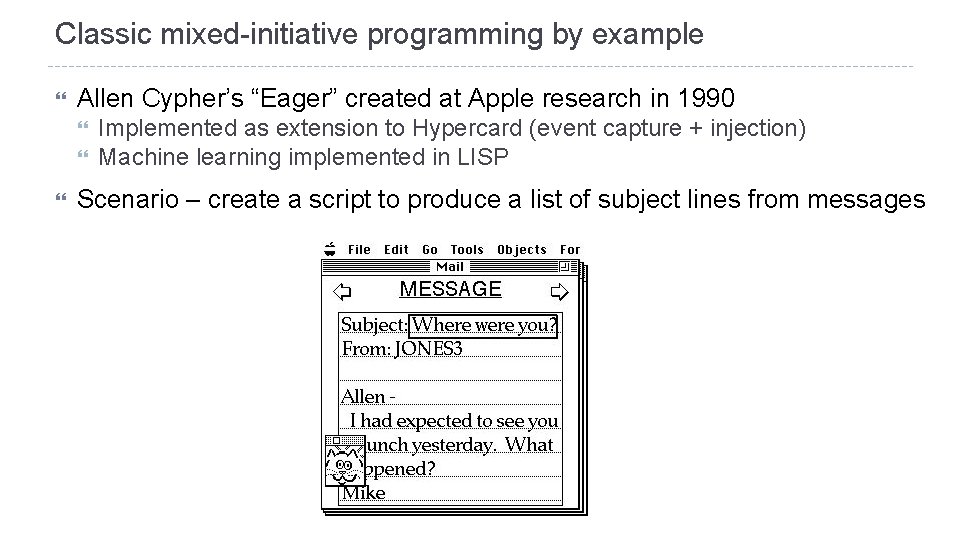

Classic mixed-initiative programming by example
- Allen Cypher’s “Eager” created at Apple research in 1990
- Implemented as extension to HyperCard (event capture + injection)
- Machine learning implemented in LISP
- Scenario – create a script to produce a list of subject lines from messages

Research questions: Abstraction and Attention

Why is the generalisation step so significant?
- Generalisation from examples is fundamental to mental abstraction
- So program synthesis requires the user to conceptualise their problem in an abstract way
  - Repetition of concrete instances (i.e. direct manipulation) does not require abstraction
  - Any automated action (i.e. programming) does require abstraction
- Programming by example is a strategy for achieving this …
  - … the user can become comfortable with individual cases, while …
  - … the system formulates abstractions at the same time the user does.
- Essential that user & system can “discuss” what they are concluding:
  - So is this what you want me to do?
  - No, here is a case where you should do something else.
  - Oh, I see, so like this?

The Attention Investment model of abstraction use
- Programming is not like direct manipulation, so the standard rules of usability (Shneiderman’s direct manipulation principles) do not apply:
  - Incremental action
  - Fully visible state
  - Immediate feedback
  - Easily reversible actions
- Making abstractions is cognitively hard, because actions take place in the future, and they apply to multiple potential contexts.
- Automating repetitive actions does save time and (mental) effort
- But formulating and refining abstractions costs time and mental effort!
- What leads a user to approach their tasks in this way?
  - Richard Potter’s “Just In Time Programming”
  - Rosson and Carroll’s “Paradox of the Active User”
  - Bainbridge’s “Ironies of Automation”
  - Burnett’s “Surprise, Explain, Reward” (cf. mixed-initiative design strategies, including Clippy)

A typical application: FlashFill for Excel
- Original work by Sumit Gulwani (MSR Redmond)
- “Synthesises a program from input-output examples”
- Automating String Processing in Spreadsheets using Input-Output Examples. Proceedings of POPL 2011
- https://www.microsoft.com/en-us/research/publication/automating-string-processing-spreadsheets-using-input-output-examples/
- How do you choose the examples? How do you know what will happen?
- Using this ‘program’ as a component of a larger system is still a research topic
- Live Demo (requires Excel 2013/16)
  - Paste a list of semi-structured text data into the left column
  - Type an example transform result in top cell to the right, then <Enter>
  - Press <Ctrl+E>
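To illustrate the idea of synthesising a program from input-output examples, here is a deliberately tiny brute-force sketch. It is not the algorithm from the POPL 2011 paper and does not use any Microsoft API; the three-part program form (delimiter, index, case transform), the candidate sets, and the sample strings are all assumptions made for the example.

```python
from itertools import product

# A toy search space: split on a delimiter, pick a part, adjust its case.
DELIMITERS = (" ", ",", "@", ":", "-")
INDICES = (0, 1, -1)
TRANSFORMS = {
    "identity": lambda s: s,
    "upper": str.upper,
    "lower": str.lower,
}


def run(program, text):
    """Apply a (delimiter, index, transform) program; None if it does not apply."""
    delimiter, index, transform = program
    parts = text.split(delimiter)
    if not -len(parts) <= index < len(parts):
        return None
    return TRANSFORMS[transform](parts[index])


def synthesise(examples):
    """Return every candidate program consistent with all (input, output) examples."""
    candidates = product(DELIMITERS, INDICES, TRANSFORMS)
    return [p for p in candidates if all(run(p, i) == o for i, o in examples)]


one_example = [("alice@example.org", "alice")]
two_examples = one_example + [("Bob@Example.org", "bob")]
print(len(synthesise(one_example)), "programs fit one example")
print(synthesise(two_examples), "fits both examples")
```

A single example leaves more than one consistent program, so the user cannot be sure what will happen on new rows; adding a second example narrows the candidates. This mirrors the two usability questions on the slide: how to choose examples, and how to know what was inferred.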

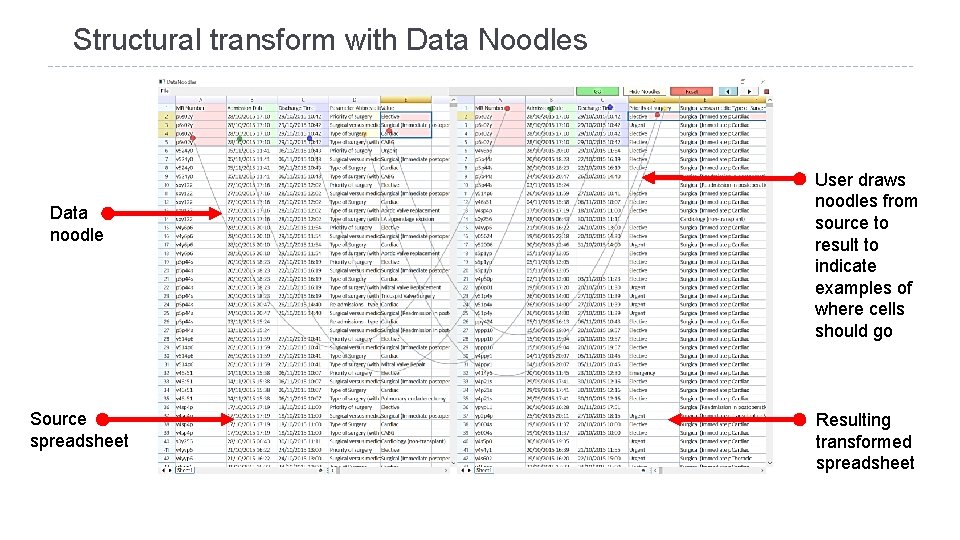

Structural transform with Data Noodles
- User draws noodles from source to result to indicate examples of where cells should go
- Figure labels: source spreadsheet; data noodle; resulting transformed spreadsheet

Data Noodles synthesis of table transformations
- Interactive transformation paradigm
- Search procedure uses off-the-shelf program synthesis toolkit
  - PROSE SDK from Gulwani team at MSR Redmond
  - Directed search for fold/unfold transforms that will achieve the demonstrated result
- Custom-built front-end
  - The “spreadsheet” is purely for familiarity of presentation
  - No actual spreadsheet calculation is performed
- Drag-and-drop target previews allow user to anticipate inference
- Noodles preserve and visualise the demonstrated actions
  - Allow reasoning about causality from example to synthesised program
  - Potentially support modification/correction of examples

Coda: Coda

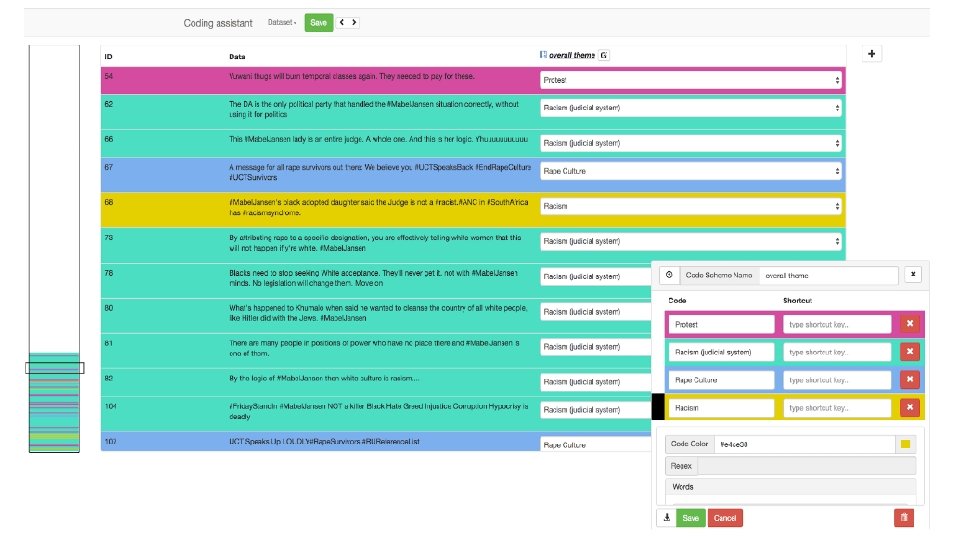

Coda: Coda
- Mixed initiative interface created for Africa’s Voices Foundation
- Somali translators classify SMS messages relating to public health
- Semi-automated labels are negotiated through varying shades of the category colours
- We’ve studied agency in the rhythm of interaction with this kind of interface

What next? 2019/20 – co-constructing AI in Africa
- Collaboration with AI researchers and students in Namibia, Kenya, Ethiopia, Uganda, South Africa
- Collaboration with Vikash Mansinghka (MIT)
  - Open source probabilistic computing stack: BayesDB + Venture language + Gen modelling
- Supporting innovation and entrepreneurship in “data poor” societies
- In association with
