BEYOND CLASSICAL SEARCH
Instructor: Kris Hauser
http://cs.indiana.edu/~hauserk

AGENDA
- Local search, optimization
- Branch and bound search
- Online search

LOCAL SEARCH
- Light-memory search methods
- No search tree; only the current state is represented!
- Applicable to problems where the path is irrelevant (e.g., 8-queens)
  - For other problems, entire paths must be encoded in the state
- Many similarities with optimization techniques

IDEA: MINIMIZE h(N)
...because $h(G) = 0$ for any goal G
An optimization problem!

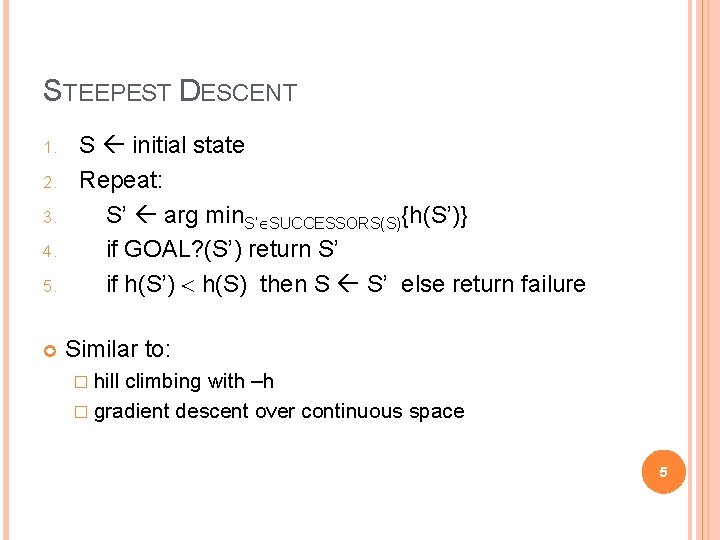
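For 8-queens, one natural choice of h(N), assumed here for illustration (the min-conflicts slides count attackers of a single queen instead), is the number of queen pairs that attack each other: it is 0 exactly at a goal, which is what turns the search into a minimization. A minimal Python sketch; `attacking_pairs` is a hypothetical helper name:

```python
from itertools import combinations
from typing import Sequence


def attacking_pairs(rows: Sequence[int]) -> int:
    """Hypothetical h(N) for n-queens: number of queen pairs that attack each other.

    rows[c] is the row of the queen in column c (one queen per column),
    so only same-row and same-diagonal conflicts are possible.
    """
    return sum(
        1
        for (c1, r1), (c2, r2) in combinations(enumerate(rows), 2)
        if r1 == r2 or abs(r1 - r2) == abs(c1 - c2)
    )


# A known 8-queens solution: h = 0, so it is a goal.
print(attacking_pairs([0, 4, 7, 5, 2, 6, 1, 3]))  # 0
# All queens on row 0: all C(8, 2) = 28 pairs attack each other.
print(attacking_pairs([0] * 8))  # 28
```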

STEEPEST DESCENT
    $S \leftarrow$ initial state
    Repeat:
        $S' \leftarrow \arg\min_{S' \in \mathrm{SUCCESSORS}(S)} h(S')$
        if GOAL?$(S')$ return $S'$
        if $h(S') < h(S)$ then $S \leftarrow S'$ else return failure
Similar to:
- hill climbing with $-h$
- gradient descent over continuous space

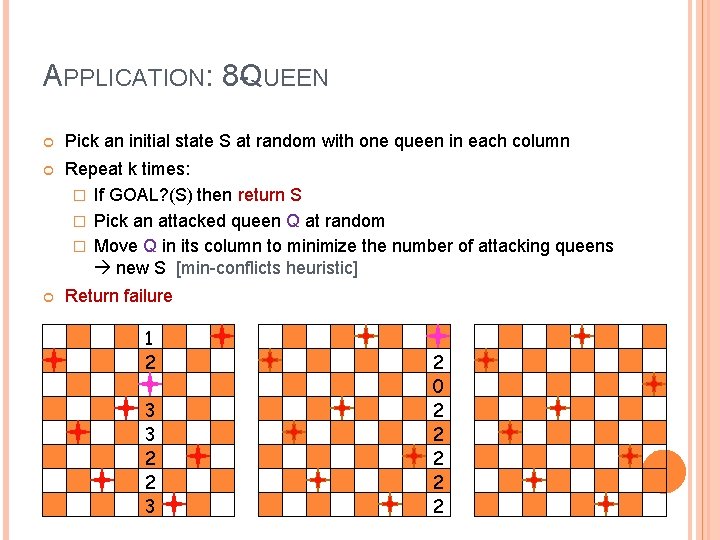
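The steepest-descent loop above can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the initial goal test and the `max_steps` bound are additions not on the slide, every state is assumed to have at least one successor, and the 1-D landscape `H` with its `neighbors` function is hypothetical.

```python
from typing import Callable, Iterable, List, Optional, TypeVar

State = TypeVar("State")


def steepest_descent(
    start: State,
    successors: Callable[[State], Iterable[State]],
    h: Callable[[State], float],
    is_goal: Callable[[State], bool],
    max_steps: int = 10_000,
) -> Optional[State]:
    """Steepest descent on h; returns a goal state, or None for failure.

    Assumes every state has at least one successor (min() needs a non-empty iterable).
    """
    s = start
    if is_goal(s):  # added check: the pseudocode only tests successors
        return s
    for _ in range(max_steps):  # added safety bound on the "Repeat" loop
        best = min(successors(s), key=h)  # S' <- arg min of h over SUCCESSORS(S)
        if is_goal(best):
            return best
        if h(best) < h(s):
            s = best  # strictly better successor: move there
        else:
            return None  # local minimum or plateau: failure
    return None


# Hypothetical 1-D landscape: states are indices 0..6, neighbors differ by 1.
H = [3, 2, 1, 2, 3, 1, 0]


def neighbors(i: int) -> List[int]:
    return [j for j in (i - 1, i + 1) if 0 <= j < len(H)]


print(steepest_descent(4, neighbors, lambda i: H[i], lambda i: H[i] == 0))  # 6
print(steepest_descent(0, neighbors, lambda i: H[i], lambda i: H[i] == 0))  # None
```

From index 0 the search descends to index 2 (h = 1), whose neighbors both have h = 2, so it reports failure even though a goal exists: the local-minimum problem discussed below.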

APPLICATION: 8-QUEENS
    Pick an initial state S at random with one queen in each column
    Repeat k times:
        If GOAL?(S) then return S
        Pick an attacked queen Q at random
        Move Q within its column to minimize the number of attacking queens → new S [min-conflicts heuristic]
    Return failure

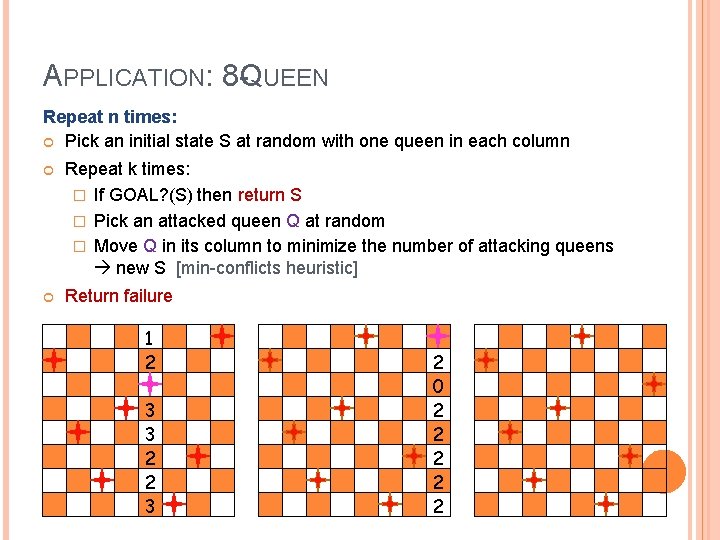

APPLICATION: 8-QUEENS
    Repeat n times:
        Pick an initial state S at random with one queen in each column
        Repeat k times:
            If GOAL?(S) then return S
            Pick an attacked queen Q at random
            Move Q within its column to minimize the number of attacking queens → new S [min-conflicts heuristic]
    Return failure

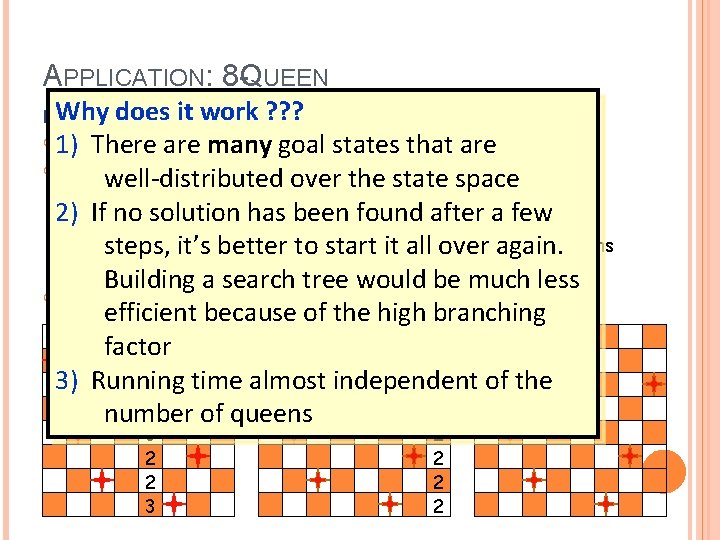
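The restart version of min-conflicts can be sketched in Python as below. Assumptions not on the slide: queens are stored as a list `rows` (`rows[c]` is the row of the queen in column c), ties among equally good rows are broken at random, the outer "Repeat n times" count is called `restarts` to avoid a clash with the number of queens `n`, and all function names and default parameter values are hypothetical.

```python
import random
from typing import List, Optional


def conflicts(rows: List[int], col: int, row: int) -> int:
    """Number of queens, other than the one in column `col`, attacking square (row, col)."""
    return sum(
        1
        for c, r in enumerate(rows)
        if c != col and (r == row or abs(r - row) == abs(c - col))
    )


def min_conflicts(n: int, k: int, rng: random.Random) -> Optional[List[int]]:
    """One run: random start, then at most k min-conflicts moves."""
    rows = [rng.randrange(n) for _ in range(n)]  # one queen per column
    for _ in range(k):
        attacked = [c for c in range(n) if conflicts(rows, c, rows[c]) > 0]
        if not attacked:  # GOAL?(S): no queen is attacked
            return rows
        col = rng.choice(attacked)  # pick an attacked queen Q at random
        scores = [conflicts(rows, col, r) for r in range(n)]
        best = min(scores)
        # Move Q within its column to a row with the fewest attackers (random tie-break).
        rows[col] = rng.choice([r for r in range(n) if scores[r] == best])
    return None


def solve_queens(n: int = 8, restarts: int = 50, k: int = 100, seed: int = 0) -> Optional[List[int]]:
    """Outer loop: restart min-conflicts from a fresh random state up to `restarts` times."""
    rng = random.Random(seed)
    for _ in range(restarts):
        rows = min_conflicts(n, k, rng)
        if rows is not None:
            return rows
    return None


print(solve_queens(8))
```

Restarting with a fresh random state, rather than continuing a stuck run, is what the "Repeat n times" loop adds over the previous slide.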

APPLICATION: 8-QUEENS
Why does it work?
1) There are many goal states that are well-distributed over the state space.
2) If no solution has been found after a few steps, it's better to start all over again. Building a search tree would be much less efficient because of the high branching factor.
3) Running time is almost independent of the number of queens.

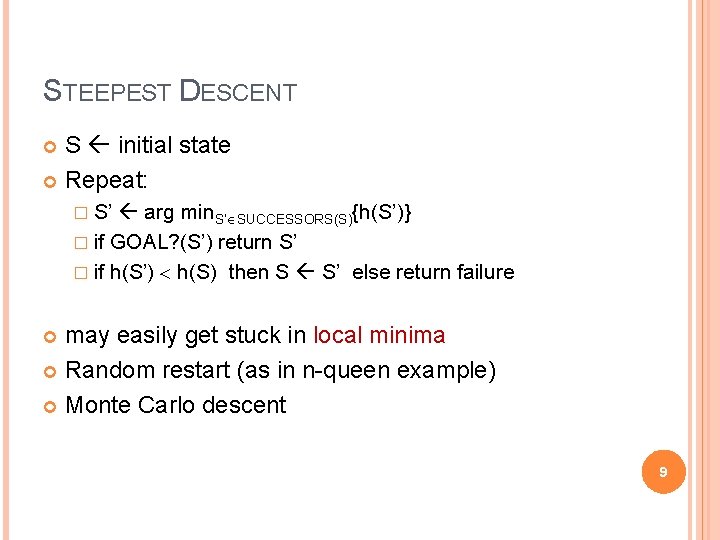

STEEPEST DESCENT
Steepest descent may easily get stuck in local minima. Remedies:
- Random restart (as in the n-queens example)
- Monte Carlo descent

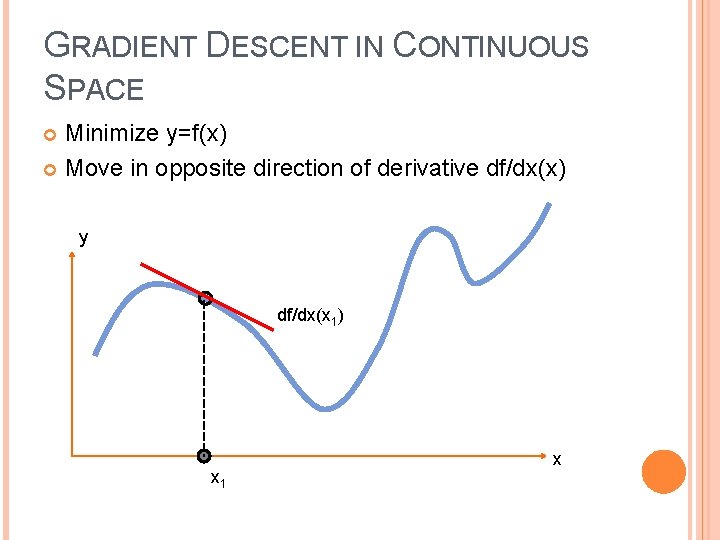

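GRADIENT DESCENT IN CONTINUOUS SPACE
Minimize y = f(x)
Move in the opposite direction of the derivative df/dx(x)
[Figure: curve y = f(x) with successive iterates x1, x2, x3; each step moves against the slope df/dx at the current iterate]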

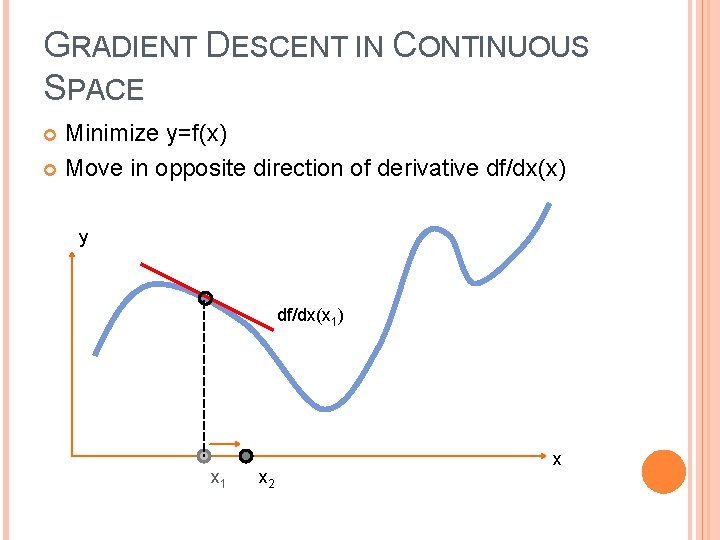


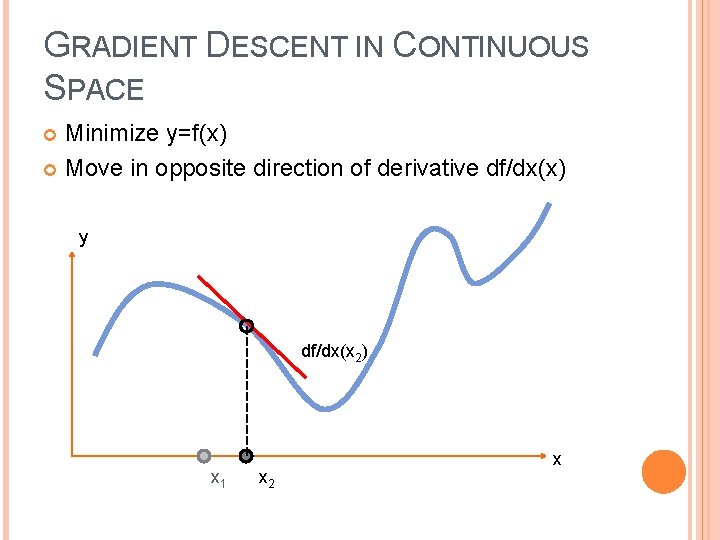

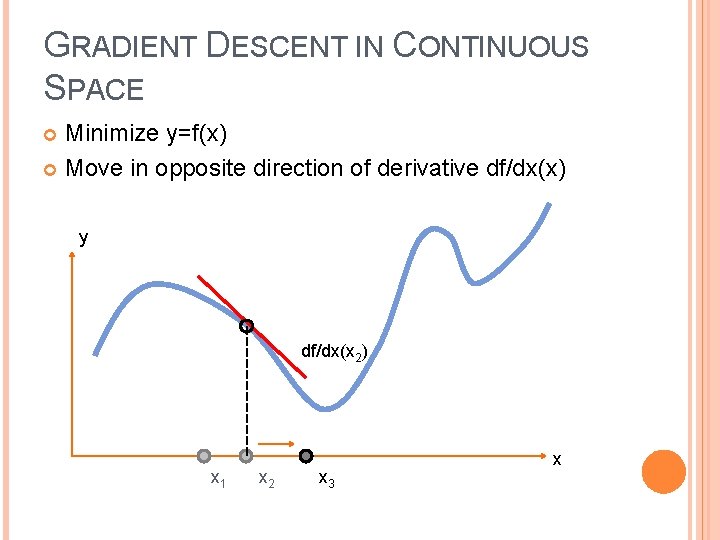


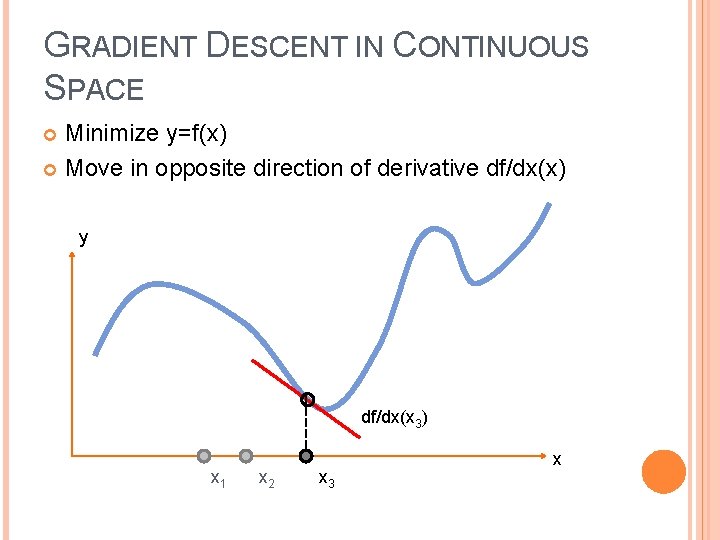

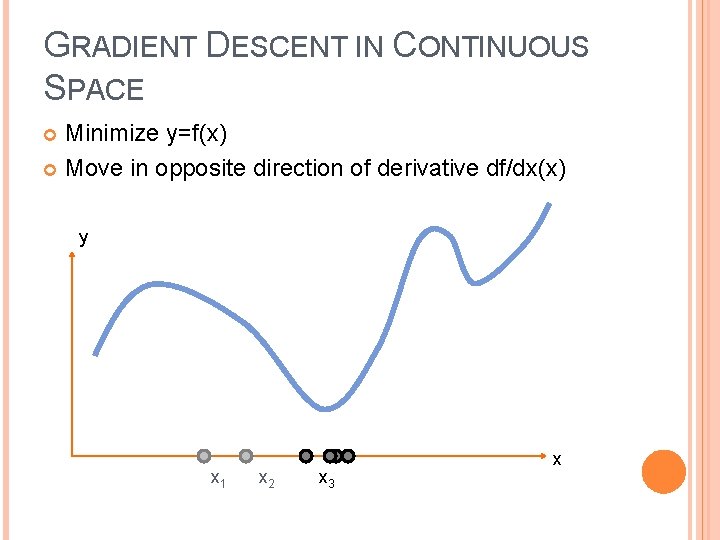


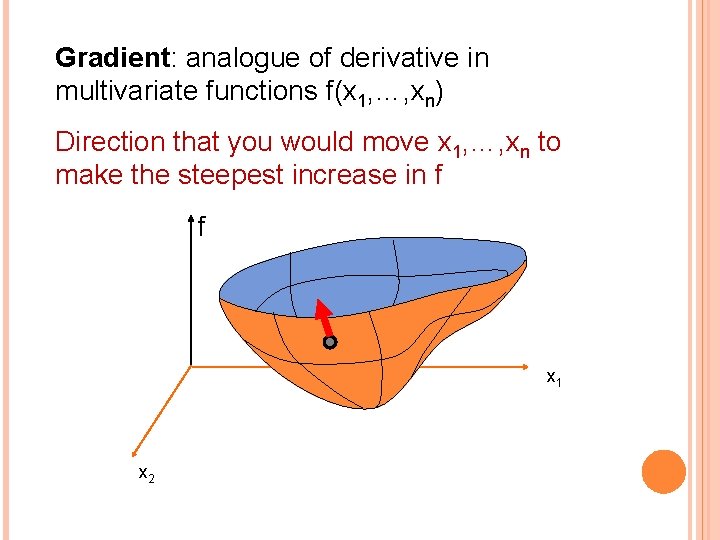
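To make the picture concrete, here is a minimal Python sketch of 1-D gradient descent with a fixed step size. The slides do not fix a step size; `step = 0.1`, the iteration count, and the example function f(x) = (x - 3)^2 are assumptions chosen for illustration.

```python
from typing import Callable, List


def gradient_descent_1d(df: Callable[[float], float], x1: float,
                        step: float = 0.1, n_iters: int = 50) -> List[float]:
    """Return the iterates x1, x2, ... of 1-D gradient descent, given df = f'."""
    xs = [x1]
    for _ in range(n_iters):
        # Move against the derivative: x_{k+1} = x_k - step * f'(x_k)
        xs.append(xs[-1] - step * df(xs[-1]))
    return xs


# f(x) = (x - 3)^2 has derivative f'(x) = 2 (x - 3) and its minimum at x = 3.
xs = gradient_descent_1d(lambda x: 2 * (x - 3), x1=0.0)
print(xs[:3])   # x1, x2, x3
print(xs[-1])   # close to 3
```

The first iterates are 0, 0.6 and 1.08 (up to floating-point rounding): each step shrinks the distance to the minimizer by a factor of 0.8, because the derivative shrinks as the slope flattens.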

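Gradient: the analogue of the derivative for multivariate functions $f(x_1, \ldots, x_n)$. It is the direction in which you would move $x_1, \ldots, x_n$ to make the steepest increase in $f$: $\nabla f = \left(\frac{\partial f}{\partial x_1}, \ldots, \frac{\partial f}{\partial x_n}\right)$.
[Figure: surface of f over the x1, x2 plane]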

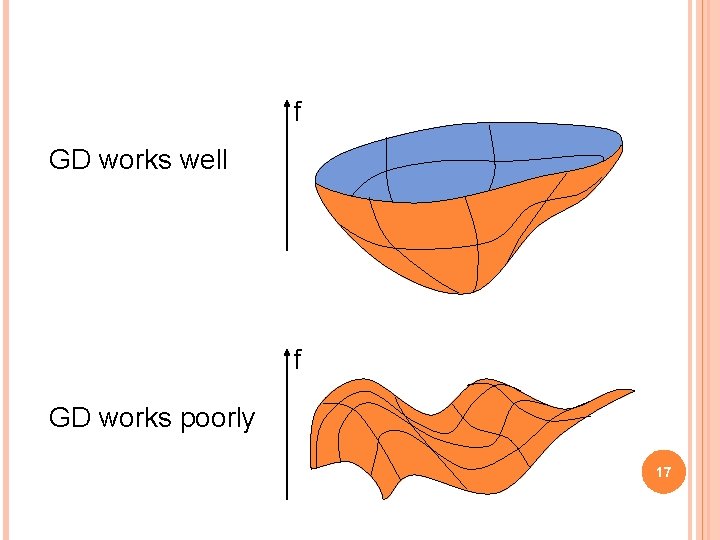
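One way to see what the gradient contains is to estimate each partial derivative numerically. The minimal Python sketch below uses central differences, a technique chosen here for illustration (not from the slides); the step `eps` and the example function are assumptions.

```python
from typing import Callable, List, Sequence


def numerical_gradient(f: Callable[[Sequence[float]], float],
                       x: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Central-difference estimate of (df/dx_1, ..., df/dx_n) at x."""
    grad = []
    for i in range(len(x)):
        x_plus = list(x)
        x_minus = list(x)
        x_plus[i] += eps
        x_minus[i] -= eps
        grad.append((f(x_plus) - f(x_minus)) / (2 * eps))
    return grad


def f(x: Sequence[float]) -> float:
    # f(x1, x2) = x1^2 + 3 x2, whose exact gradient is (2 x1, 3).
    return x[0] ** 2 + 3 * x[1]


g = numerical_gradient(f, [1.0, 2.0])
print(g)  # approximately [2.0, 3.0]
# A small step along g increases f; a small step against g decreases it.
print(f([1.0 + 0.01 * g[0], 2.0 + 0.01 * g[1]]) > f([1.0, 2.0]))  # True
print(f([1.0 - 0.01 * g[0], 2.0 - 0.01 * g[1]]) < f([1.0, 2.0]))  # True
```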

[Figure: left, a function f on which GD works well; right, a function f on which GD works poorly]

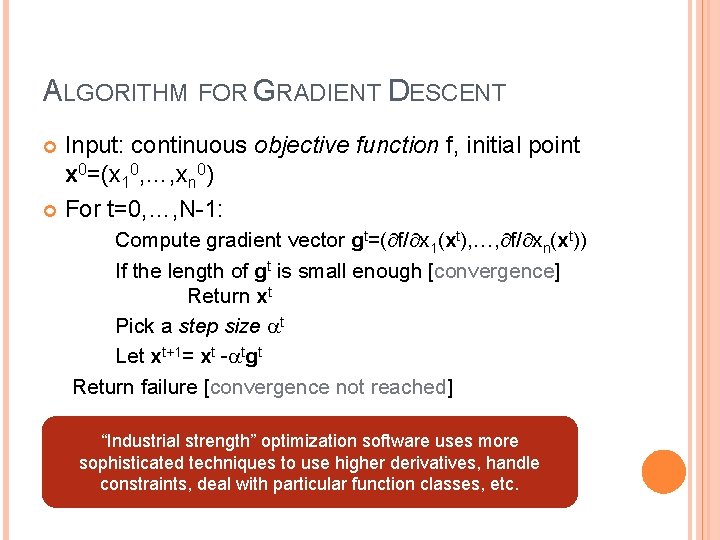

ALGORITHM FOR GRADIENT DESCENT
Input: continuous objective function $f$, initial point $x_0 = (x_1^0, \ldots, x_n^0)$
    For $t = 0, \ldots, N-1$:
        Compute the gradient vector $g_t = \left(\frac{\partial f}{\partial x_1}(x_t), \ldots, \frac{\partial f}{\partial x_n}(x_t)\right)$
        If the length of $g_t$ is small enough [convergence]: return $x_t$
        Pick a step size $\alpha_t$
        Let $x_{t+1} = x_t - \alpha_t g_t$
    Return failure [convergence not reached]
“Industrial strength” optimization software uses more sophisticated techniques to use higher derivatives, handle constraints, deal with particular function classes, etc.

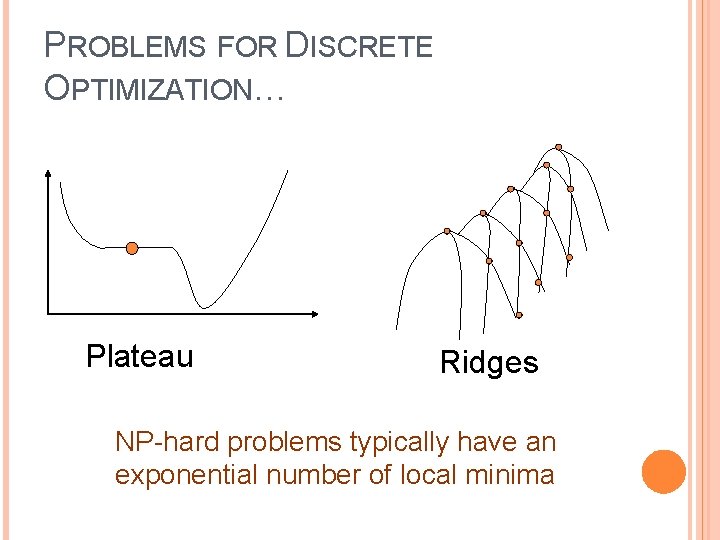
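A minimal Python sketch of the algorithm above, assuming a constant step size $\alpha_t$ = `step` and the Euclidean length of $g_t$ as the convergence test; the tolerance, the iteration limit, and the example function are illustrative choices, not from the slides.

```python
import math
from typing import Callable, List, Optional

Vector = List[float]


def gradient_descent(grad: Callable[[Vector], Vector], x0: Vector, step: float,
                     tol: float = 1e-8, N: int = 10_000) -> Optional[Vector]:
    """Gradient descent with a constant step size alpha_t = step.

    Returns x_t once the length of g_t is below tol, or None (failure)
    if N iterations pass without convergence.
    """
    x = list(x0)
    for _ in range(N):
        g = grad(x)  # g_t = (df/dx_1(x_t), ..., df/dx_n(x_t))
        if math.sqrt(sum(gi * gi for gi in g)) < tol:  # convergence test
            return x
        x = [xi - step * gi for xi, gi in zip(x, g)]  # x_{t+1} = x_t - alpha_t g_t
    return None  # convergence not reached


def grad_f(x: Vector) -> Vector:
    # Gradient of f(x1, x2) = (x1 - 1)^2 + 10 (x2 + 2)^2, whose minimum is at (1, -2).
    return [2 * (x[0] - 1), 20 * (x[1] + 2)]


print(gradient_descent(grad_f, [0.0, 0.0], step=0.05))  # close to [1.0, -2.0]
print(gradient_descent(grad_f, [0.0, 0.0], step=0.1))   # None
```

The second call shows why picking the step size matters: with step = 0.1 the x2 update becomes x2 ← -x2 - 4, so x2 bounces between 0 and -4 and the gradient never becomes small.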

PROBLEMS FOR DISCRETE OPTIMIZATION…
- Plateaus
- Ridges
- NP-hard problems typically have an exponential number of local minima

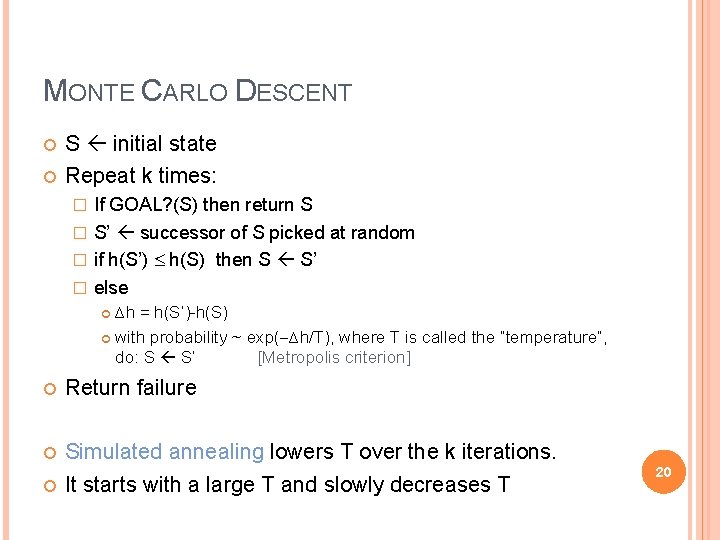

MONTE CARLO DESCENT
    $S \leftarrow$ initial state
    Repeat k times:
        If GOAL?$(S)$ then return $S$
        $S' \leftarrow$ successor of $S$ picked at random
        if $h(S') < h(S)$ then $S \leftarrow S'$
        else
            $\Delta h = h(S') - h(S)$
            with probability $\sim \exp(-\Delta h / T)$, where $T$ is called the “temperature”, do: $S \leftarrow S'$ [Metropolis criterion]
    Return failure
Simulated annealing lowers $T$ over the $k$ iterations: it starts with a large $T$ and slowly decreases it.
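A minimal Python sketch of Monte Carlo descent as written above, with the temperature passed in as a schedule so the same function also covers simulated annealing. The landscape `bumpy_h`, the move function `step`, the constant temperature 2.0 and the iteration budget are hypothetical. Steepest descent started at 0 on this landscape would fail at once, since h(1) = 74 > h(0) = 70, whereas the Metropolis criterion occasionally accepts such uphill moves.

```python
import math
import random
from typing import Callable, Optional, TypeVar

State = TypeVar("State")


def monte_carlo_descent(
    start: State,
    random_successor: Callable[[State, random.Random], State],
    h: Callable[[State], float],
    is_goal: Callable[[State], bool],
    k: int,
    temperature: Callable[[int], float],
    rng: random.Random,
) -> Optional[State]:
    """Monte Carlo descent with the Metropolis criterion; None means failure.

    temperature(i) is T at iteration i: a constant gives plain Monte Carlo
    descent, a decreasing schedule gives simulated annealing.
    """
    s = start
    for i in range(k):
        if is_goal(s):
            return s
        s_new = random_successor(s, rng)  # S' <- successor of S picked at random
        if h(s_new) < h(s):
            s = s_new  # downhill move: always accepted
        else:
            dh = h(s_new) - h(s)
            # Metropolis criterion: accept with probability exp(-dh / T)
            if rng.random() < math.exp(-dh / temperature(i)):
                s = s_new
    return None


# Hypothetical bumpy landscape on 0..99: every multiple of 7 below 70 is a
# local minimum, and the unique goal (h = 0) is x = 70.
def bumpy_h(x: int) -> int:
    return abs(x - 70) + (0 if x % 7 == 0 else 5)


def step(x: int, rng: random.Random) -> int:
    return min(99, max(0, x + rng.choice((-1, 1))))  # move left or right, clipped


result = monte_carlo_descent(
    0, step, bumpy_h, lambda x: x == 70, k=100_000,
    temperature=lambda i: 2.0, rng=random.Random(1),
)
print(result)
```

Passing a decreasing schedule such as `temperature=lambda i: 5.0 * 0.999 ** i` turns this into simulated annealing; a schedule that cools too quickly can freeze the search in a local minimum before it reaches the goal.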

“PARALLEL” LOCAL SEARCH TECHNIQUES
They perform several local searches concurrently, but not independently:
- Beam search
- Genetic algorithms
- Tabu search
- Ant colony/particle swarm optimization

EMPIRICAL SUCCESSES OF LOCAL SEARCH
- Satisfiability (SAT)
- Vertex Cover
- Traveling salesman problem
- Planning & scheduling
- Many others…

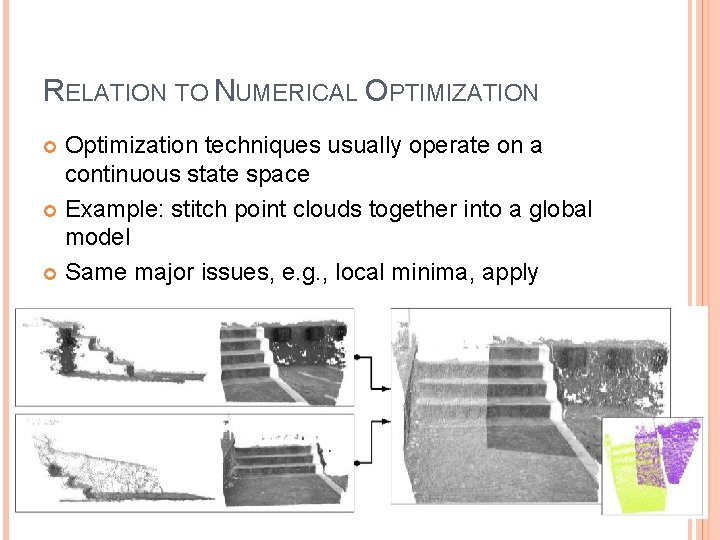

RELATION TO NUMERICAL OPTIMIZATION
- Optimization techniques usually operate on a continuous state space
  - Example: stitching point clouds together into a global model
- The same major issues, e.g., local minima, apply

DEALING WITH IMPERFECT KNOWLEDGE

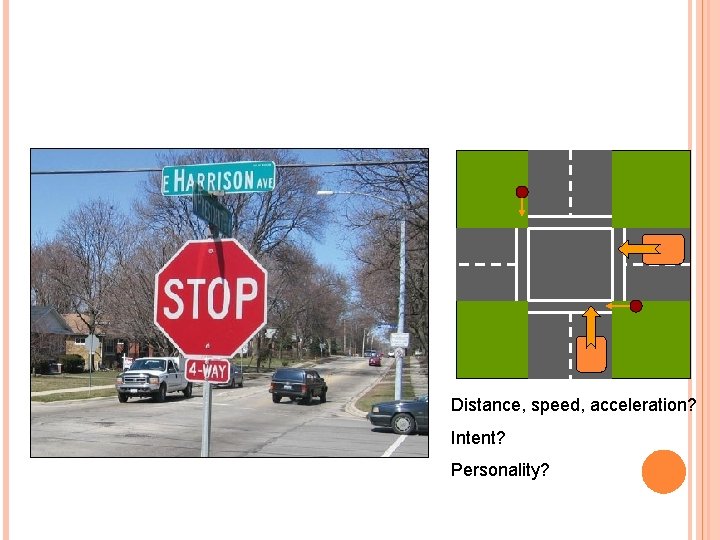

Classical search assumes that:
- World states are perfectly observable: the current state is exactly known
- Action representations are perfect: states are exactly predicted
How can an agent cope with adversaries, uncertainty, and imperfect information?

Distance, speed, acceleration? Intent? Personality?

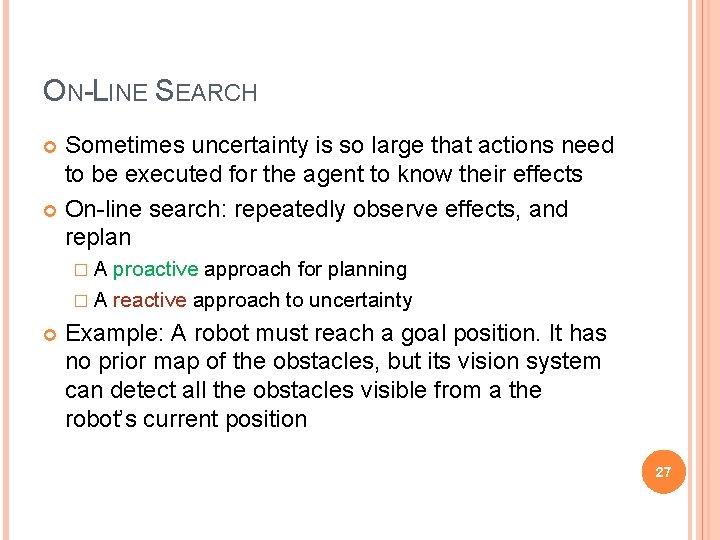

ON-LINE SEARCH
Sometimes uncertainty is so large that actions need to be executed for the agent to know their effects.
On-line search: repeatedly observe effects, and replan
- A proactive approach for planning
- A reactive approach to uncertainty
Example: A robot must reach a goal position. It has no prior map of the obstacles, but its vision system can detect all the obstacles visible from the robot’s current position.

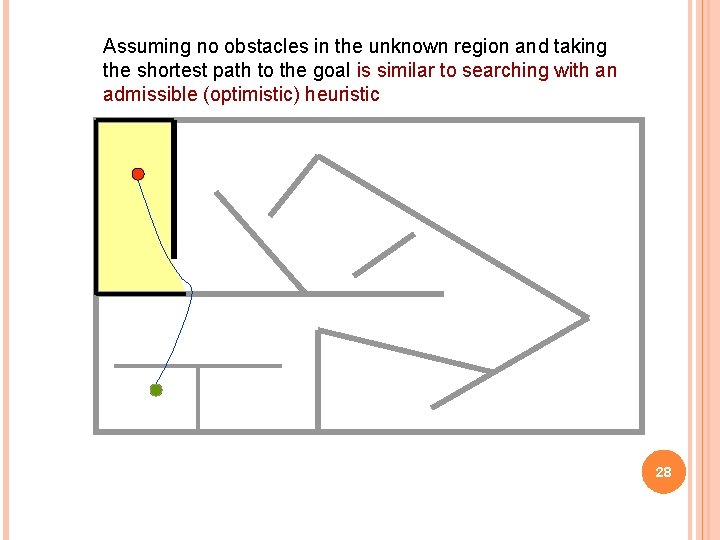

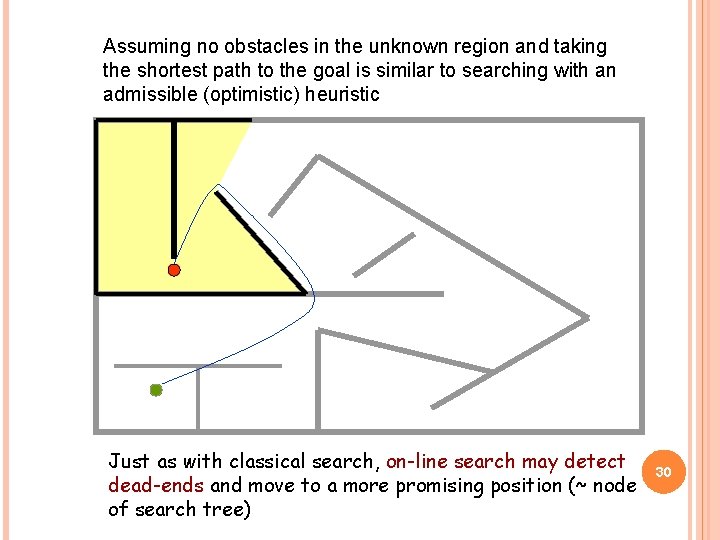

Assuming no obstacles in the unknown region and taking the shortest path to the goal is similar to searching with an admissible (optimistic) heuristic.

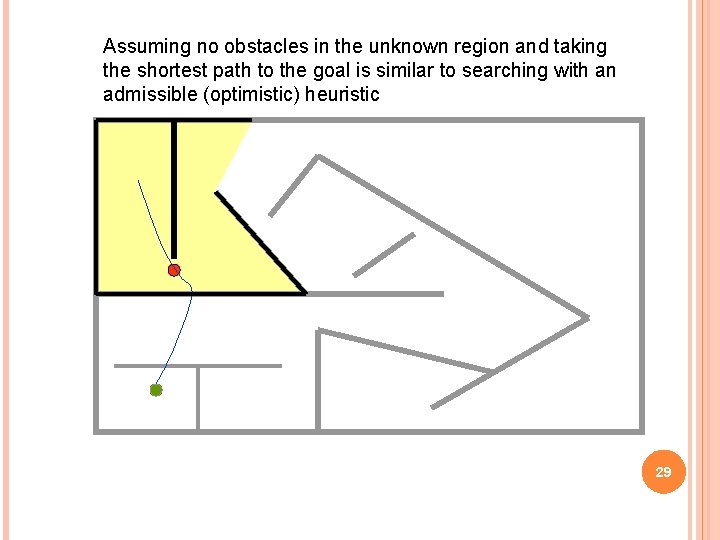


Just as with classical search, on-line search may detect dead ends and move to a more promising position (~ a node of the search tree).
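The optimistic replanning loop can be sketched in Python on a 4-connected grid. Everything concrete here is an assumption for illustration: the grid, the sensor (which reveals every obstacle in the 8 cells around the robot), and the function names. The sketch replans from scratch with breadth-first search after every step, treating unknown cells as free; it is not D*, which repairs its previous plan incrementally instead of replanning from scratch.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]


def bfs_path(start: Cell, goal: Cell, width: int, height: int,
             blocked: Set[Cell]) -> Optional[List[Cell]]:
    """Shortest 4-connected path avoiding `blocked`; unknown cells count as free."""
    parent: Dict[Cell, Optional[Cell]] = {start: None}
    frontier = deque([start])
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if (0 <= nxt[0] < width and 0 <= nxt[1] < height
                    and nxt not in blocked and nxt not in parent):
                parent[nxt] = cell
                frontier.append(nxt)
    return None


def online_navigate(start: Cell, goal: Cell, width: int, height: int,
                    true_obstacles: Set[Cell], max_moves: int = 1000
                    ) -> Optional[List[Cell]]:
    """Observe, replan under the free-space assumption, take one step, repeat."""
    known: Set[Cell] = set()  # obstacles observed so far
    pos, trajectory = start, [start]
    for _ in range(max_moves):
        # Hypothetical sensor: reveals every obstacle in the 8 cells around pos.
        known |= {o for o in true_obstacles
                  if max(abs(o[0] - pos[0]), abs(o[1] - pos[1])) <= 1}
        if pos == goal:
            return trajectory
        plan = bfs_path(pos, goal, width, height, known)
        if plan is None:
            return None  # even the optimistic map has no path: goal unreachable
        pos = plan[1]  # execute only the first action, then observe and replan
        trajectory.append(pos)
    return None


# 5x5 grid; a wall at x = 2 with a single gap at y = 4.
wall = {(2, 0), (2, 1), (2, 2), (2, 3)}
path = online_navigate((0, 0), (4, 0), 5, 5, wall)
print(path)
```

Because the next cell of every plan lies within sensor range, the robot never steps into an obstacle; it only discovers, as it walks along the wall, that its optimistic shortest paths were blocked, which mirrors the dead-end detection described above.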

D* ALGORITHM FOR MOBILE ROBOTS
Tony Stentz

REAL-TIME REPLANNING AMONG UNPREDICTABLY MOVING OBSTACLES

NEXT CLASS
- Uncertain and partially observable environments
- Game playing
- Read R&N 5.1-4
- HW 1 due at end of next class
