BERKELEY INSTITUTE FOR PERFORMANCE STUDIES Performance Understanding Prediction

Performance Understanding, Prediction, and Tuning at the Berkeley Institute for Performance Studies (BIPS)
Katherine Yelick, BIPS Director
Lawrence Berkeley National Laboratory and U.C. Berkeley, EECS Dept.
National Science Foundation
Berkeley Institute for Performance Studies, Computational Research Division

Challenges to Performance
Two trends in High End Computing:
• Increasingly complicated systems
  • Multiple forms of parallelism
  • Many levels of memory hierarchy
  • Complex systems software in between
• Increasingly sophisticated algorithms
  • Unstructured meshes and sparse matrices
  • Adaptivity in time and space
  • Multi-physics models lead to hybrid approaches
• Conclusion: Deep understanding of performance at all levels is important

BIPS Institute Goals
• Bring together researchers on all aspects of performance engineering
• Use performance understanding to:
  • Improve application performance
  • Compare architectures for application suitability
  • Influence the design of processors, networks and compilers
  • Identify algorithmic needs

BIPS Approaches
• Benchmarking and Analysis
  • Measure performance
  • Identify opportunities for improvements in software, hardware, and algorithms
• Modeling
  • Predict performance on future machines
  • Understand performance limits
• Tuning
  • Improve performance
  • By hand or with automatic self-tuning tools

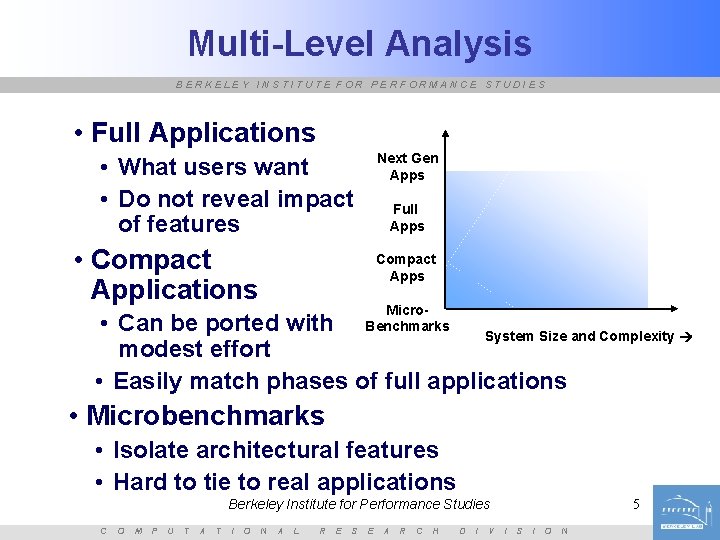

Multi-Level Analysis
• Full Applications
  • What users want
  • Do not reveal impact of features
• Compact Applications
  • Can be ported with modest effort
  • Easily match phases of full applications
• Microbenchmarks
  • Isolate architectural features
  • Hard to tie to real applications
[Figure: benchmark levels (Microbenchmarks, Compact Apps, Full Apps, Next Gen Apps) arranged by system size and complexity]

Projects Within BIPS
• Application evaluation on vector processors
• APEX: Application Performance Characterization Benchmarking
• BeBOP: Berkeley Benchmarking and Optimization Group
• Architectural probes for alternative architectures
• LAPACK: Linear Algebra Package
• PERC: Performance Engineering Research Center
• Top500
• ViVA: Virtual Vector Architectures

Application Evaluation of Vector Systems
• Two vector architectures:
  • The Japanese Earth Simulator
  • The Cray X1
• Comparison to “commodity”-based systems
  • IBM SP, Power4
  • SGI Altix
• Ongoing study of DOE applications
  • CACTUS: Astrophysics, 100,000 lines, grid based
  • PARATEC: Material Science, 50,000 lines, Fourier space
  • LBMHD: Plasma Physics, 1,500 lines, grid based
  • GTC: Magnetic Fusion, 5,000 lines, particle based
  • MADCAP: Cosmology, 5,000 lines, dense lin. alg.
• Work by L. Oliker, J. Borrill, A. Canning, J. Carter, J. Shalf, H. Shan

APEX-MAP Benchmark
• Goal: Quantify the effects of temporal and spatial locality
• Focus on memory system and network performance
• Graphs over temporal and spatial locality axes
  • Show performance valleys/cliffs

Application Kernel Benchmarks
• Microbenchmarks are good for:
  • Identifying architecture/compiler bottlenecks
  • Optimization opportunities
• Application benchmarks are good for:
  • Machine selection for specific apps
• In between: Benchmarks to capture important behavior in real applications
  • Sparse matrices: SPMV benchmark
  • Stencil operations: Stencil probe
  • Possible future: sorting, narrow datatype ops, …

Sparse Matrix Vector Multiply (SPMV)
• Sparse matrix algorithms
  • Increasingly important in applications
  • Challenge memory systems: poor locality
  • Many matrices have structure, e.g., dense subblocks, that can be exploited
• Benchmarking SPMV
  • NAS CG and SciMark use a random matrix
  • Not reflective of most real problems
• Benchmark challenge:
  • Ship real matrices: cumbersome & inflexible
  • Build “realistic” synthetic matrices
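As a point of reference for the kernel being benchmarked, here is a minimal sketch of SpMV over compressed sparse row (CSR) storage, the baseline format named later in the deck. The function name `spmv_csr` and the small example matrix are illustrative assumptions, not code from BIPS. The indirect load `x[col_idx[k]]` is the access that gives the kernel its poor locality.

```python
def spmv_csr(row_ptr, col_idx, values, x):
    """Compute y = A*x for a matrix A stored in CSR form.

    row_ptr[i]..row_ptr[i+1] delimits the nonzeros of row i;
    col_idx and values hold their column indices and values.
    """
    n_rows = len(row_ptr) - 1
    y = [0.0] * n_rows
    for i in range(n_rows):
        acc = 0.0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            # Indirect access into x: the source of irregular memory traffic.
            acc += values[k] * x[col_idx[k]]
        y[i] = acc
    return y


# Hypothetical 3x3 example: [[4, 0, 1], [0, 2, 0], [3, 0, 5]]
row_ptr = [0, 2, 3, 5]
col_idx = [0, 2, 1, 0, 2]
values = [4.0, 1.0, 2.0, 3.0, 5.0]
y = spmv_csr(row_ptr, col_idx, values, [1.0, 2.0, 3.0])
```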

Importance of Using Blocked Matrices
[Figure: speedup of best-case blocked matrix vs. unblocked]

Generating Blocked Matrices
• Our approach: Uniformly distributed random structure, each nonzero an r×c block
  • Collect data for r and c from 1 to 12
• Validation: Can our random matrices simulate “typical” matrices?
  • 44 matrices from various applications
    • 1: Dense matrix in sparse format
    • 2-17: Finite-Element-Method (FEM) matrices
      – 2-9: single block size, 10-17: multiple block sizes
    • 18-44: non-FEM
• Summarization: Weighted by occurrence in test suite (ongoing)
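The generation step might look roughly like the sketch below, which places dense r-by-c blocks at uniformly random block columns in each block row. The function name, the fixed `blocks_per_row` parameter, and the choice to align blocks on an r-by-c grid are all assumptions made for illustration; the slide does not specify these details.

```python
import random


def random_blocked_pattern(n_block_rows, n_block_cols, blocks_per_row, r, c, seed=0):
    """Return sorted (row, col) coordinates of a synthetic blocked sparsity pattern.

    Each block row receives `blocks_per_row` dense r-by-c blocks at
    distinct, uniformly chosen block columns (assumed placement rule).
    """
    rng = random.Random(seed)
    entries = set()
    for bi in range(n_block_rows):
        # sample() draws without replacement, so blocks in a row never overlap.
        for bj in rng.sample(range(n_block_cols), blocks_per_row):
            for di in range(r):
                for dj in range(c):
                    entries.add((bi * r + di, bj * c + dj))
    return sorted(entries)


# Hypothetical sizes: 4 block rows, 5 block columns, two 2x3 blocks per block row.
pattern = random_blocked_pattern(4, 5, 2, r=2, c=3)
```

Sweeping `r` and `c` from 1 to 12, as the slide describes, would then amount to calling this generator once per block size.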

Itanium 2 prediction

UltraSPARC III prediction

Sample summary results (Apple G5, 1.8 GHz)

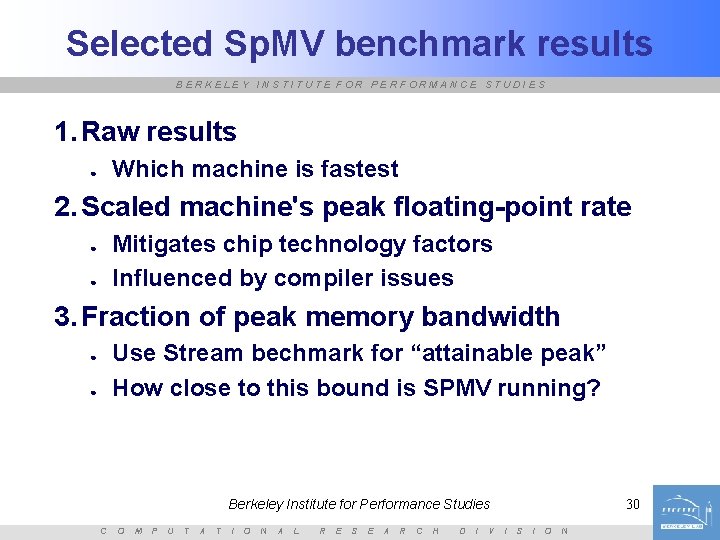

Selected SpMV benchmark results
1. Raw results
  • Which machine is fastest
2. Scaled by machine's peak floating-point rate
  • Mitigates chip technology factors
  • Influenced by compiler issues
3. Fraction of peak memory bandwidth
  • Use Stream benchmark for “attainable peak”
  • How close to this bound is SPMV running?
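The second and third normalizations can be sketched as simple ratios. The function names are hypothetical, and the traffic model (12 bytes per nonzero for an 8-byte value plus a 4-byte column index, two flops per nonzero, vector traffic ignored) is an assumption rather than the model used in the benchmark. The first example reuses the T3P figures quoted elsewhere in this deck (532 Mflops against a 3.6 GFlop peak); the bandwidth inputs are made-up numbers.

```python
def fraction_of_peak_flops(mflops, peak_mflops):
    """Measured rate as a fraction of the machine's peak floating-point rate."""
    return mflops / peak_mflops


def fraction_of_stream_bandwidth(mflops, stream_mb_per_s, bytes_per_nonzero=12.0):
    """Estimated SpMV memory traffic as a fraction of STREAM bandwidth.

    Assumes 2 flops per nonzero and `bytes_per_nonzero` bytes of matrix
    data per nonzero (an illustrative traffic model).
    """
    nonzeros_per_s = mflops * 1e6 / 2.0
    mb_per_s = nonzeros_per_s * bytes_per_nonzero / 1e6
    return mb_per_s / stream_mb_per_s


peak_fraction = fraction_of_peak_flops(532.0, 3600.0)
bw_fraction = fraction_of_stream_bandwidth(500.0, 4000.0)  # hypothetical inputs
```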




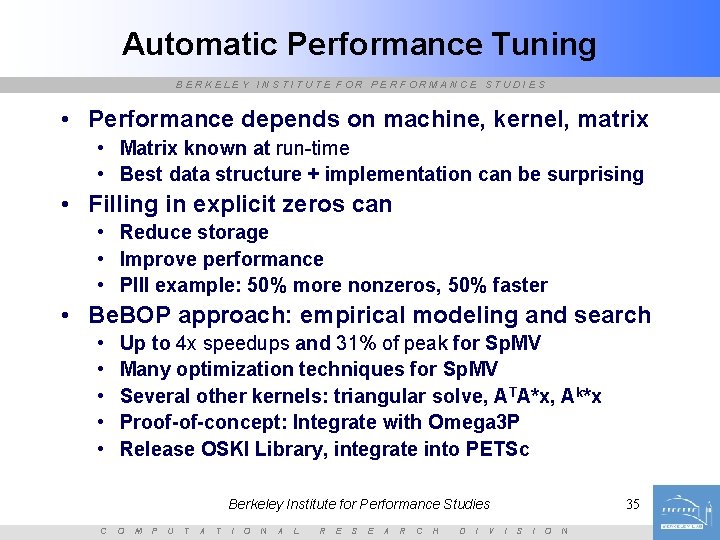

Automatic Performance Tuning
• Performance depends on machine, kernel, matrix
  • Matrix known at run-time
  • Best data structure + implementation can be surprising
• Filling in explicit zeros can
  • Reduce storage
  • Improve performance
  • PIII example: 50% more nonzeros, 50% faster
• BeBOP approach: empirical modeling and search
  • Up to 4x speedups and 31% of peak for SpMV
  • Many optimization techniques for SpMV
  • Several other kernels: triangular solve, A^T*A*x, A^k*x
  • Proof-of-concept: Integrate with Omega3P
  • Release OSKI Library, integrate into PETSc
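The cost of filling in explicit zeros can be expressed as a fill ratio: stored entries after blocking divided by true nonzeros. The sketch below computes it for a given r-by-c blocking; the function name and the example pattern are illustrative assumptions, and blocks are assumed to be aligned on an r-by-c grid. The example is chosen so the ratio is 1.5, matching the "50% more nonzeros" case on the slide.

```python
def fill_ratio(nonzeros, r, c):
    """Stored entries (including explicit zeros) per true nonzero for r-by-c blocking."""
    # Every block touched by at least one nonzero is stored densely.
    blocks = {(i // r, j // c) for i, j in nonzeros}
    return len(blocks) * r * c / len(nonzeros)


# Hypothetical 4x4 pattern: one full 2x2 block and two half-full ones.
nonzeros = [(0, 0), (0, 1), (1, 0), (1, 1),  # full block
            (2, 2), (3, 3),                  # diagonal of a second block
            (0, 2), (1, 3)]                  # diagonal of a third block
ratio = fill_ratio(nonzeros, 2, 2)
```

A tuner in this style would weigh such a ratio against the measured speed of each blocked kernel, since a higher fill can still pay off when the blocked code runs fast enough.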

Summary of Optimizations
• Optimizations for SpMV (numbers shown are maximums)
  • Register blocking (RB): up to 4x
  • Variable block splitting: 2.1x over CSR, 1.8x over RB
  • Diagonals: 2x
  • Reordering to create dense structure + splitting: 2x
  • Symmetry: 2.8x
  • Cache blocking: 6x
  • Multiple vectors (SpMM): 7x
• Sparse triangular solve
  • Hybrid sparse/dense data structure: 1.8x
• Higher-level kernels
  • A*A^T*x, A^T*A*x: 4x
  • A^2*x: 2x over CSR, 1.5x
• Future: automatic tuning for vectors
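To make register blocking concrete, the sketch below multiplies a matrix stored as dense r-by-c blocks (a block-CSR layout). Names and the example data are assumptions for illustration; a tuned implementation would fully unroll the two inner loops for each fixed (r, c) so the block's slice of x and y stays in registers.

```python
def spmv_bcsr(brow_ptr, bcol_idx, blocks, x, r, c):
    """Compute y = A*x where A is stored as dense r-by-c blocks.

    brow_ptr/bcol_idx index blocks the way CSR indexes scalars;
    blocks[k] holds r*c values in row-major order (explicit zeros included).
    """
    n_brows = len(brow_ptr) - 1
    y = [0.0] * (n_brows * r)
    for bi in range(n_brows):
        for k in range(brow_ptr[bi], brow_ptr[bi + 1]):
            x0 = bcol_idx[k] * c  # one index lookup serves r*c values
            block = blocks[k]
            for di in range(r):
                acc = 0.0
                for dj in range(c):
                    acc += block[di * c + dj] * x[x0 + dj]
                y[bi * r + di] += acc
    return y


# Hypothetical 4x4 matrix with two 2x2 blocks; the second stores explicit zeros.
brow_ptr = [0, 1, 2]
bcol_idx = [0, 1]
blocks = [[1.0, 2.0, 3.0, 4.0], [5.0, 0.0, 0.0, 6.0]]
y = spmv_bcsr(brow_ptr, bcol_idx, blocks, [1.0, 1.0, 1.0, 1.0], 2, 2)
```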

Architectural Probes
• Understanding memory system performance
• Interaction with processor architecture:
  • Number of registers
  • Arithmetic units (parallelism)
  • Prefetching
  • Cache size, structure, policies
• APEX-MAP: memory and network system
• Sqmat: processor features included

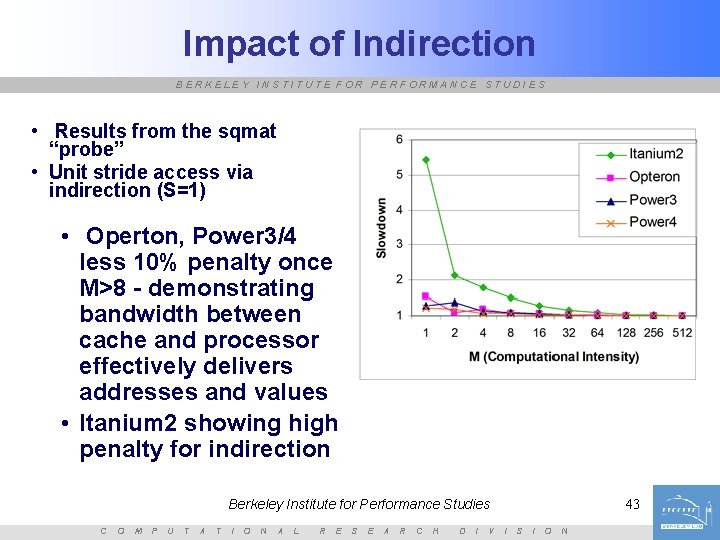

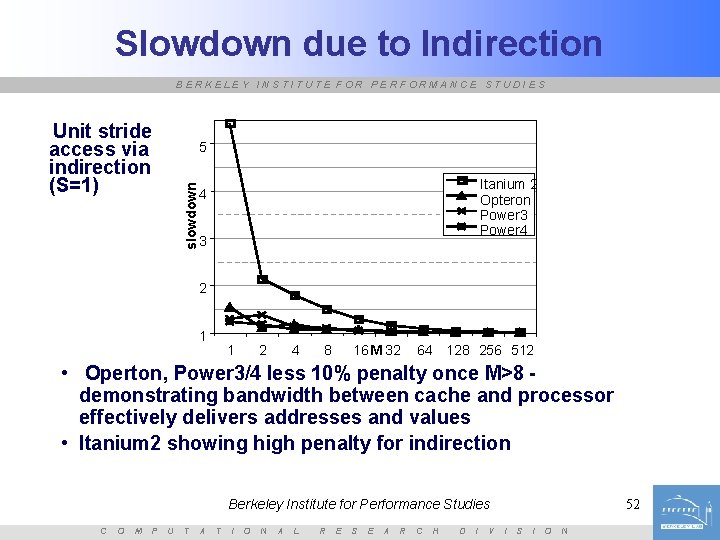

Impact of Indirection
• Results from the Sqmat “probe”
• Unit stride access via indirection (S=1)
• Opteron, Power3/4: less than 10% penalty once M>8, demonstrating that bandwidth between cache and processor effectively delivers addresses and values
• Itanium 2 shows a high penalty for indirection

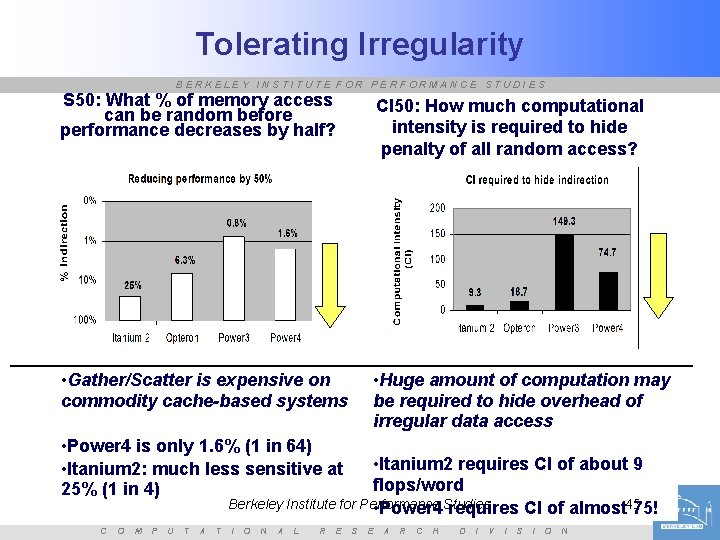

Tolerating Irregularity
• S50 (Penalty for random access)
  • S is the length of each unit stride run
  • Start with S=∞ (indirect unit stride)
  • How large must S be to achieve at least 50% of this performance?
  • All done for a fixed computational intensity
• CI50 (Hide random access penalty using high computational intensity)
  • CI is computational intensity, controlled by number of squarings (M) per matrix
  • Start with M=1, S=∞
  • At S=1 (every access random), how large must M be to achieve 50% of this performance?
• For both, lower numbers are better
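Both metrics reduce to the same search over a table of measurements: find the smallest parameter value whose performance reaches half of the baseline run. The sketch below assumes the measurements are already available as a dictionary; the function name and all numbers are made up for illustration and are not results from the probe.

```python
def threshold50(perf_by_param, baseline):
    """Smallest parameter value reaching at least 50% of baseline performance.

    For S50 the parameter is the stanza length S; for CI50 it is the
    number of squarings M measured at S=1. Returns None if never reached.
    """
    for p in sorted(perf_by_param):
        if perf_by_param[p] >= 0.5 * baseline:
            return p
    return None


# Hypothetical measurements (arbitrary performance units), baseline = 100.
perf_by_stanza = {1: 10.0, 2: 20.0, 4: 45.0, 8: 60.0, 16: 90.0}
s50 = threshold50(perf_by_stanza, baseline=100.0)
```

With an S50 in hand, the fraction of accesses that may be random is 1/S50, which is how a figure such as "1 in 64" corresponds to roughly 1.6%.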

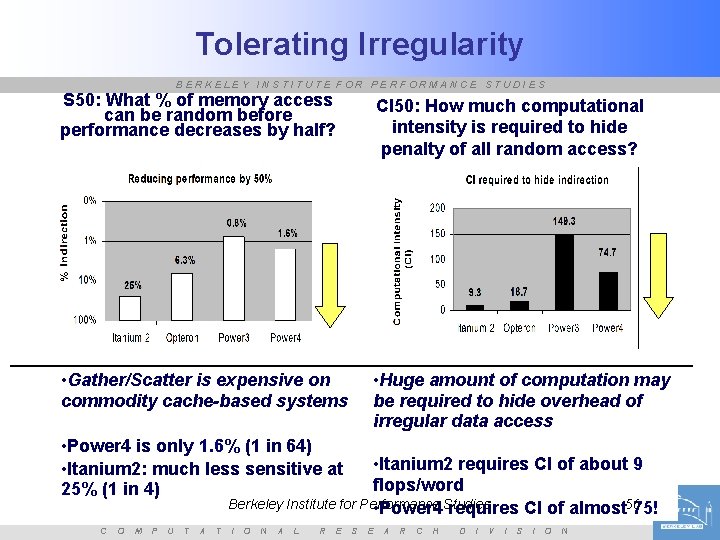

Tolerating Irregularity
• S50: What % of memory access can be random before performance decreases by half?
• CI50: How much computational intensity is required to hide penalty of all random access?
• Gather/Scatter is expensive on commodity cache-based systems
  • Power4 is only 1.6% (1 in 64)
  • Itanium 2: much less sensitive at 25% (1 in 4)
• Huge amount of computation may be required to hide overhead of irregular data access
  • Itanium 2 requires CI of about 9 flops/word
  • Power4 requires CI of almost 75!

Memory System Observations
• Caches are important
  • Important gap has moved: between L3/memory, not L1/L2
• Prefetching increasingly important
  • Limited and finicky
  • Effect may overwhelm cache optimizations if blocking increases non-unit stride access
• Sparse codes: matrix volume is key factor
  • Not the indirect loads

Ongoing Vector Investigation
• How much hardware support for vector-like performance?
  • Can small changes to a conventional processor get this effect?
  • Role of compilers/software
  • Related to Power5 effort
• Latency hiding in software
  • Prefetch engines easily confused
• Sparse matrix (random) and grid-based (strided) applications are target
• Currently investigating simulator tools and any emerging hardware

Summary
• High level goals:
  • Understand future HPC architecture options that are commercially viable
  • Can minimal hardware extensions improve effectiveness for scientific applications?
• Various technologies
  • Current, future, academic
• Various performance analysis techniques
  • Application level benchmarks
  • Application kernel benchmarks (SPMV, stencil)
  • Architectural probes
  • Performance modeling and prediction

People within BIPS
• Jonathan Carter, Kaushik Datta, James Demmel, Joe Gebis, Paul Hargrove, Parry Husbands, Shoaib Kamil, Bill Kramer, Rajesh Nishtala, Leonid Oliker, John Shalf, Hongzhang Shan, Horst Simon, David Skinner, Erich Strohmaier, Rich Vuduc, Mike Welcome, Sam Williams, Katherine Yelick
• And many collaborators outside Berkeley Lab/Campus

End of Slides

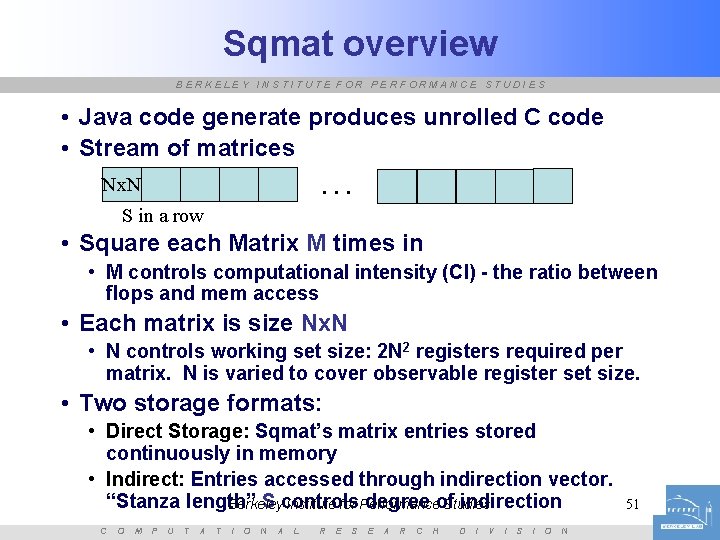

Sqmat overview
• Java code generator produces unrolled C code
• Stream of matrices
  • Square each matrix M times
  • M controls computational intensity (CI): the ratio between flops and memory accesses
  • Each matrix is of size N×N
  • N controls working set size: 2N^2 registers required per matrix. N is varied to cover observable register set size.
• Two storage formats:
  • Direct storage: Sqmat’s matrix entries stored contiguously in memory
  • Indirect: entries accessed through an indirection vector. “Stanza length” S controls degree of indirection
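The probe's structure can be illustrated with a small, untuned sketch: gather each N-by-N matrix through an index vector, square it M times, and scatter it back. This is not the generated C code described on the slide; all names are hypothetical, and the way `make_index` shuffles whole stanzas is an assumed interpretation of stanza length S. Passing the identity index `list(range(len(data)))` corresponds to direct storage.

```python
import random


def square(mat, n):
    """Return mat*mat for an n-by-n matrix given as a list of rows."""
    return [[sum(mat[i][k] * mat[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def make_index(num_entries, stanza, seed=0):
    """Indirection vector made of unit-stride runs of length `stanza`,
    with the order of the runs shuffled (assumed layout)."""
    starts = list(range(0, num_entries, stanza))
    random.Random(seed).shuffle(starts)
    return [p for s in starts for p in range(s, min(s + stanza, num_entries))]


def sqmat(data, index, n, m):
    """Square each n-by-n matrix m times, reaching entries through `index`."""
    size = n * n
    out = list(data)
    for base in range(0, len(index), size):
        slots = index[base:base + size]
        mat = [[data[slots[i * n + j]] for j in range(n)] for i in range(n)]
        for _ in range(m):  # larger m means more flops per word loaded
            mat = square(mat, n)
        for i in range(n):
            for j in range(n):
                out[slots[i * n + j]] = mat[i][j]
    return out


# Two hypothetical 2x2 matrices (2*I and 3*I), direct storage, M=2.
result = sqmat([2, 0, 0, 2, 3, 0, 0, 3], list(range(8)), n=2, m=2)
```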

Slowdown due to Indirection
[Figure: slowdown (1 to 5) versus M (1 to 512) for unit stride access via indirection (S=1) on Itanium 2, Opteron, Power3, and Power4]
• Opteron, Power3/4: less than 10% penalty once M>8, demonstrating that bandwidth between cache and processor effectively delivers addresses and values
• Itanium 2 shows a high penalty for indirection

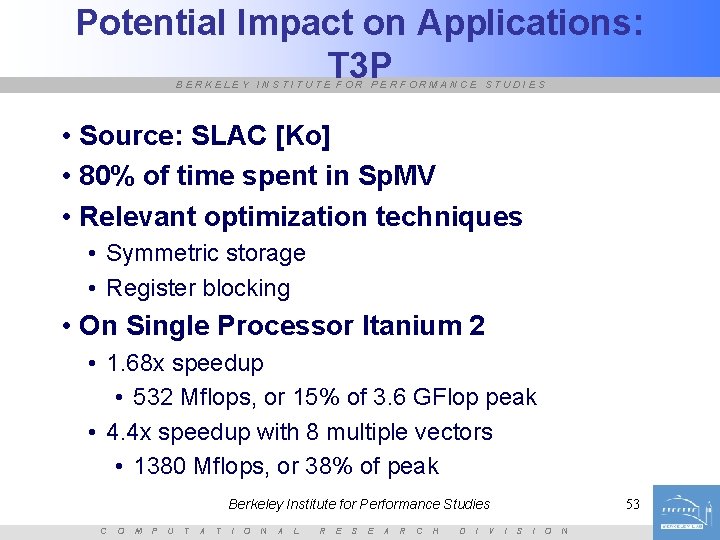

Potential Impact on Applications: T3P
• Source: SLAC [Ko]
• 80% of time spent in SpMV
• Relevant optimization techniques
  • Symmetric storage
  • Register blocking
• On single-processor Itanium 2
  • 1.68x speedup
    • 532 Mflops, or 15% of 3.6 GFlop peak
  • 4.4x speedup with 8 multiple vectors
    • 1380 Mflops, or 38% of peak

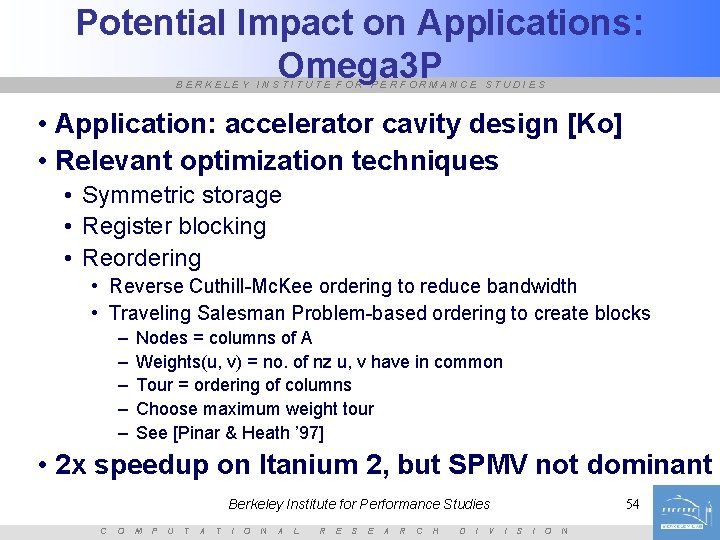

Potential Impact on Applications: Omega3P
• Application: accelerator cavity design [Ko]
• Relevant optimization techniques
  • Symmetric storage
  • Register blocking
  • Reordering
    • Reverse Cuthill-McKee ordering to reduce bandwidth
    • Traveling Salesman Problem-based ordering to create blocks
      – Nodes = columns of A
      – Weights(u, v) = no. of nonzeros u, v have in common
      – Tour = ordering of columns
      – Choose maximum weight tour
      – See [Pinar & Heath ’97]
• 2x speedup on Itanium 2, but SPMV not dominant
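The graph behind the TSP-based ordering can be sketched as follows: each column is a node, and the weight between two columns is the number of rows in which both have a nonzero. The tour construction shown here is a plain greedy nearest-neighbour heuristic, swapped in for illustration only; it is not the method of [Pinar & Heath ’97], and all names and data are hypothetical.

```python
def column_rows(nonzeros, n_cols):
    """For each column, the set of rows holding a nonzero."""
    rows = [set() for _ in range(n_cols)]
    for i, j in nonzeros:
        rows[j].add(i)
    return rows


def weight(rows, u, v):
    """Number of rows in which columns u and v both have a nonzero."""
    return len(rows[u] & rows[v])


def greedy_tour(rows):
    """Column ordering that repeatedly appends the unvisited column
    sharing the most rows with the last one (illustrative heuristic)."""
    tour = [0]
    remaining = set(range(1, len(rows)))
    while remaining:
        last = tour[-1]
        # sorted() makes tie-breaking deterministic: lowest column index wins.
        nxt = max(sorted(remaining), key=lambda v: weight(rows, last, v))
        tour.append(nxt)
        remaining.remove(nxt)
    return tour


# Hypothetical pattern: columns 0 and 2 share rows 0-1, columns 1 and 3 share rows 2-3.
nonzeros = [(0, 0), (1, 0), (0, 2), (1, 2), (2, 1), (3, 1), (2, 3), (3, 3)]
rows = column_rows(nonzeros, 4)
tour = greedy_tour(rows)
```

Placing columns with many shared rows next to each other is what creates the dense blocks that register blocking can then exploit.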



Emerging Architectures
• General purpose processors are badly suited for data-intensive ops
  • Large caches not useful if re-use is low
  • Low memory bandwidth, especially for irregular patterns
  • Superscalar methods of increasing ILP inefficient
  • Power consumption
• Research architectures
  • Berkeley IRAM: Vector and PIM chip
  • Stanford Imagine: Stream processor
  • ISI Diva: PIM with conventional processor

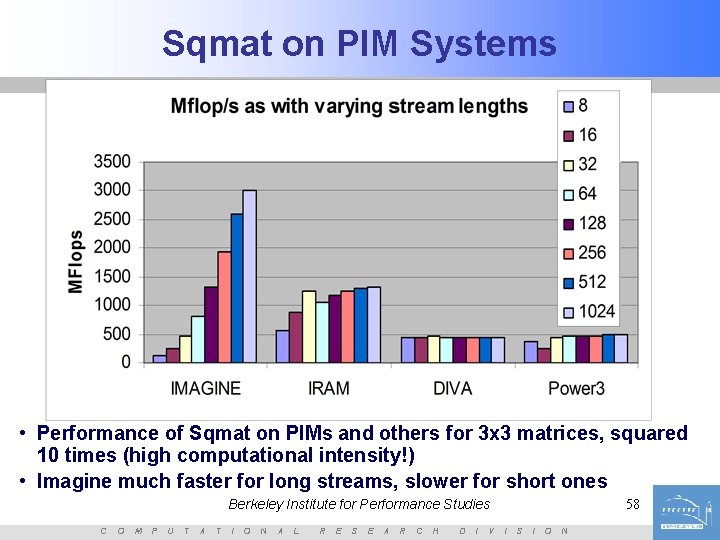

Sqmat on PIM Systems
• Performance of Sqmat on PIMs and others for 3x3 matrices, squared 10 times (high computational intensity!)
• Imagine much faster for long streams, slower for short ones

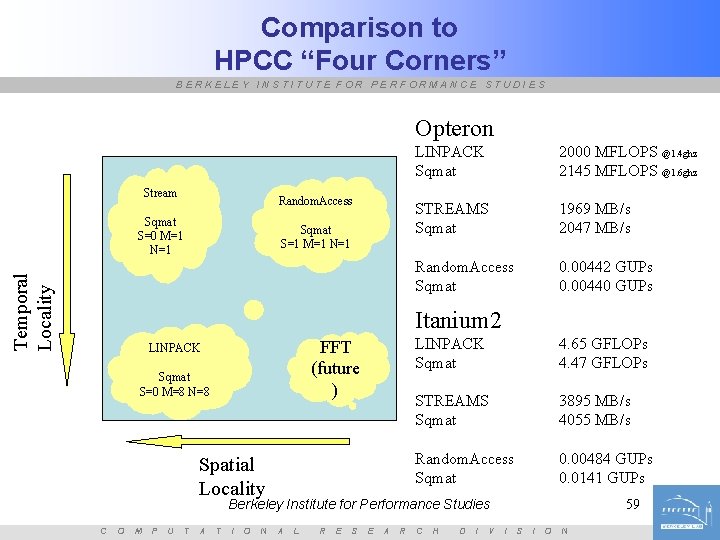

Comparison to HPCC “Four Corners”
[Figure: HPCC benchmarks placed on temporal locality vs. spatial locality axes, each paired with a Sqmat configuration]
• Stream: Sqmat S=0, M=1, N=1
• RandomAccess: Sqmat S=1, M=1, N=1
• LINPACK: Sqmat S=0, M=8, N=8
• FFT: (future)
Opteron
• LINPACK: 2000 MFLOPS @ 1.4 GHz; Sqmat: 2145 MFLOPS @ 1.6 GHz
• STREAMS: 1969 MB/s; Sqmat: 2047 MB/s
• RandomAccess: 0.00442 GUPs; Sqmat: 0.00440 GUPs
Itanium 2
• LINPACK: 4.65 GFLOPs; Sqmat: 4.47 GFLOPs
• STREAMS: 3895 MB/s; Sqmat: 4055 MB/s
• RandomAccess: 0.00484 GUPs; Sqmat: 0.0141 GUPs

- Slides: 39