Benchmarking Performance in Low-Resource Translation: Results for African & Indian Languages

Dr. Kevin Duh, Dr. Matt Post, Dr. Paul McNamee

JHU HLTCOE

Research Question for this talk

How does state-of-the-art Neural Machine Translation (NMT) compare with Statistical Machine Translation (SMT) in low-resource scenarios?

Evaluate on African & Indian languages:
- Little bilingual text (bitext), so MT is challenging to develop
- Fewer linguists than for major languages, so MT has potential for impact

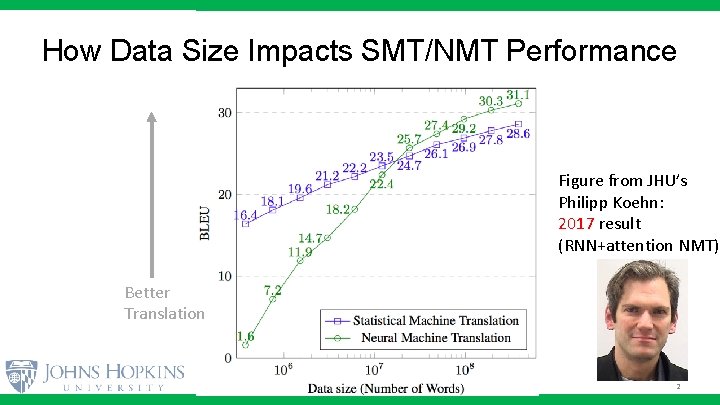

How Data Size Impacts SMT/NMT Performance

Figure from JHU’s Philipp Koehn: 2017 result (RNN+attention NMT). Axis annotation: better translation.

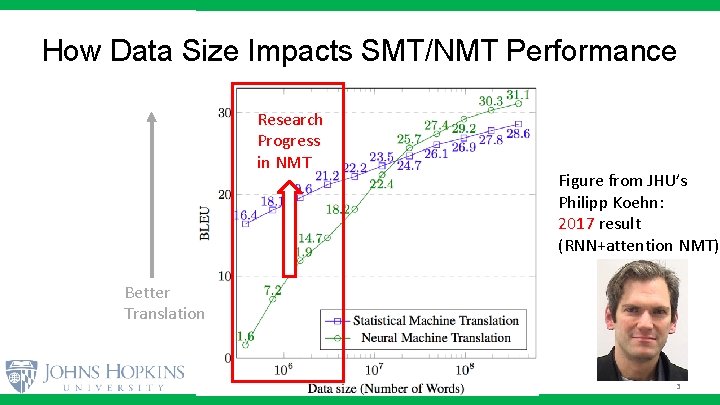

How Data Size Impacts SMT/NMT Performance

Research Progress in NMT. Figure from JHU’s Philipp Koehn: 2017 result (RNN+attention NMT). Axis annotation: better translation.

Outline
1. Motivation: Importance of Low-Resource
2. Brief Explanation of Neural Machine Translation (NMT)
3. African languages Benchmark
4. Indian languages Benchmark

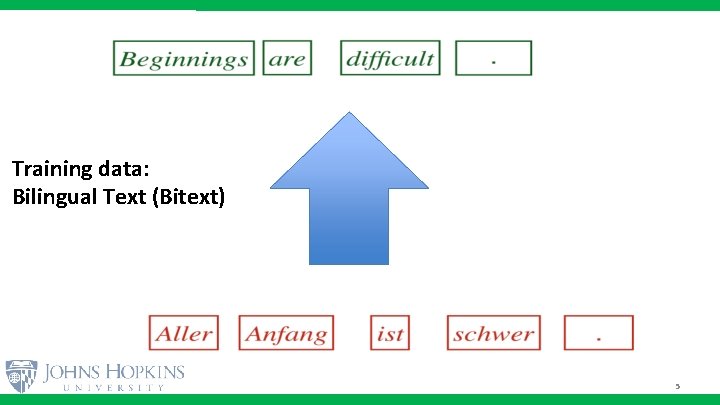

Training data: Bilingual Text (Bitext)

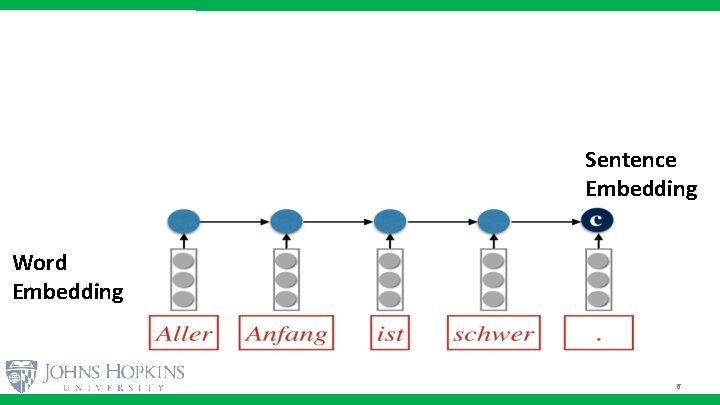

Sentence Embedding, Word Embedding

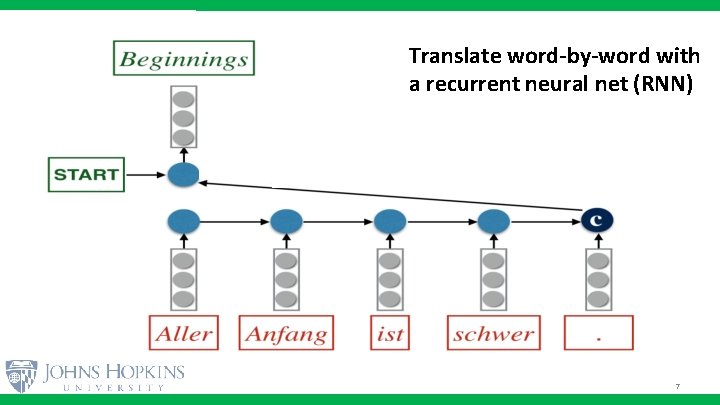

Translate word-by-word with a recurrent neural net (RNN)

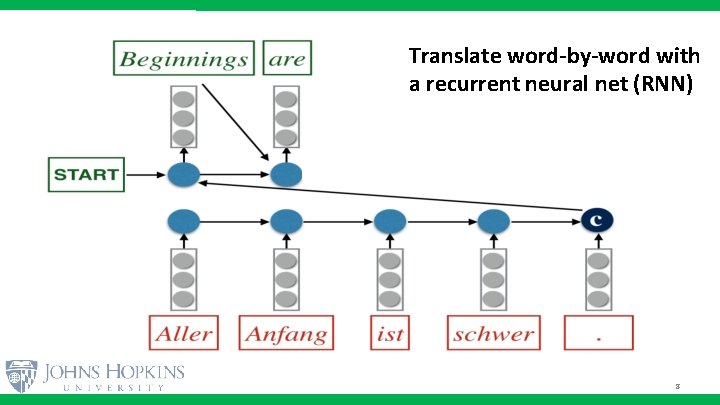

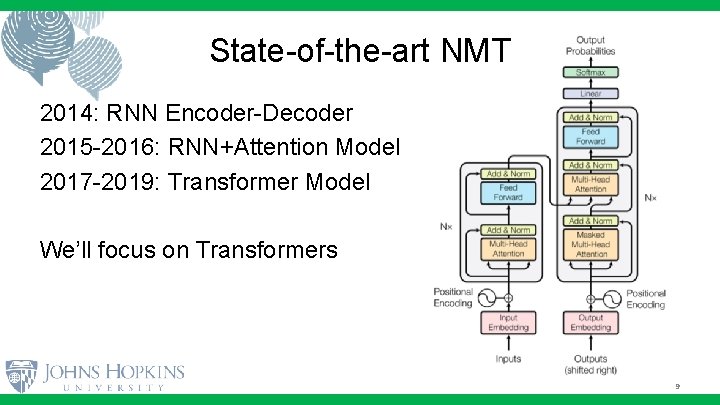

State-of-the-art NMT
- 2014: RNN Encoder-Decoder
- 2015-2016: RNN+Attention Model
- 2017-2019: Transformer Model

We’ll focus on Transformers.

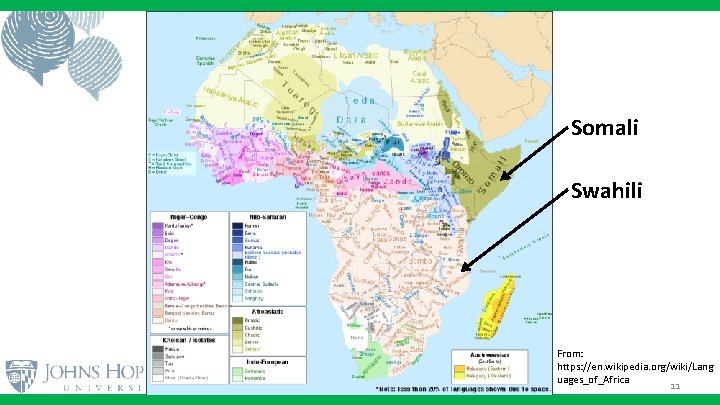

Somali, Swahili (From: https://en.wikipedia.org/wiki/Languages_of_Africa)

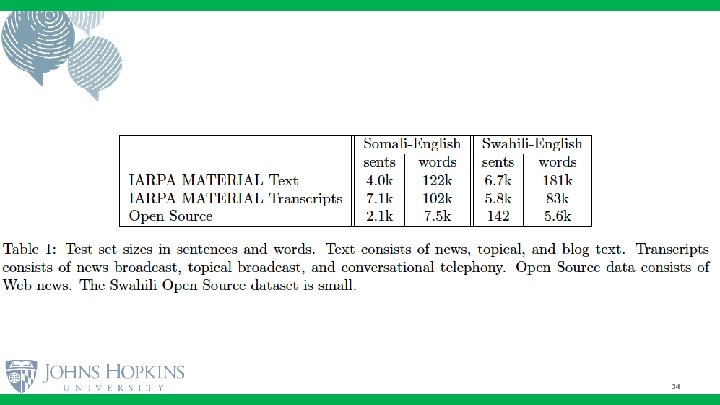

Experiment Setup (version 1)

Compare best SMT and best NMT performance on:
- Somali-to-English
- Swahili-to-English

Bitext from IARPA MATERIAL:
- Only 24k sentences for training (~800k words)
- Focus on text. Matched condition (unlike SCALE’18)

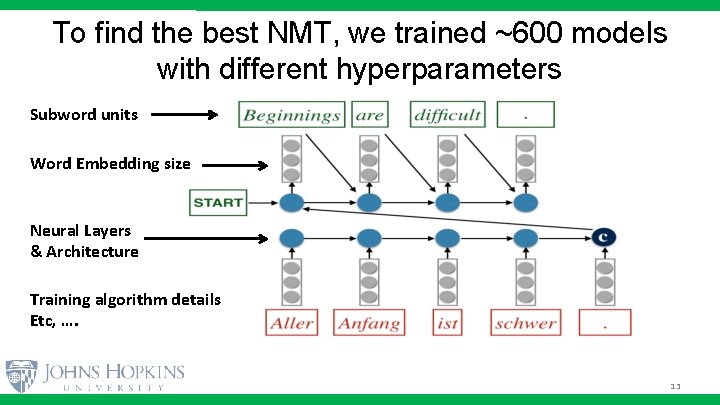

To find the best NMT, we trained ~600 models with different hyperparameters:
- Subword units
- Word embedding size
- Neural layers & architecture
- Training algorithm details
- Etc.

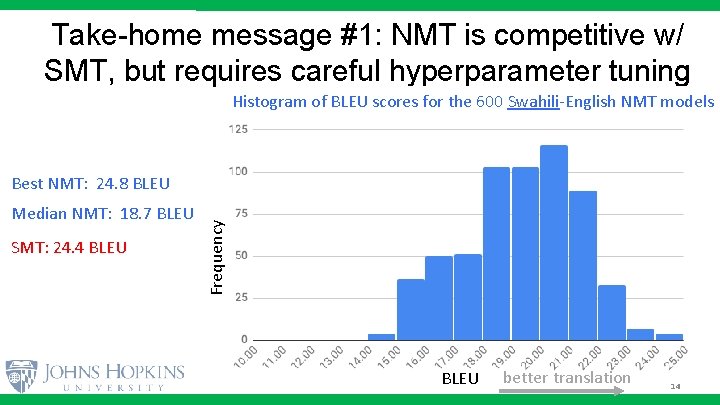

Take-home message #1: NMT is competitive w/ SMT, but requires careful hyperparameter tuning

Histogram of BLEU scores for the 600 Swahili-English NMT models (axes: BLEU, where higher is better translation, vs. frequency):
- Median NMT: 18.7 BLEU
- SMT: 24.4 BLEU
- Best NMT: 24.8 BLEU

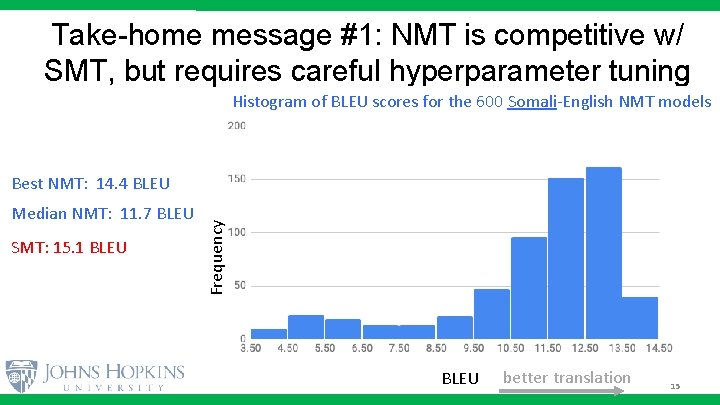

Take-home message #1: NMT is competitive w/ SMT, but requires careful hyperparameter tuning

Histogram of BLEU scores for the 600 Somali-English NMT models (axes: BLEU, where higher is better translation, vs. frequency):
- Median NMT: 11.7 BLEU
- SMT: 15.1 BLEU
- Best NMT: 14.4 BLEU

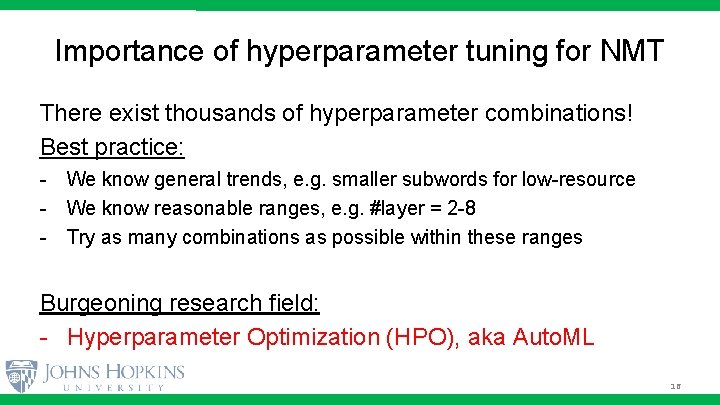

Importance of hyperparameter tuning for NMT

There exist thousands of hyperparameter combinations!

Best practice:
- We know general trends, e.g. smaller subwords for low-resource
- We know reasonable ranges, e.g. #layers = 2-8
- Try as many combinations as possible within these ranges

Burgeoning research field:
- Hyperparameter Optimization (HPO), aka AutoML
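
The "try as many combinations as possible within reasonable ranges" practice can be sketched as a simple random search. This is a minimal illustration, not the setup used in the talk: only the layer range (2-8) comes from the slide, the other ranges are assumed values, and `train_and_score` is a hypothetical callback standing in for training one NMT model and returning its development-set BLEU.

```python
import itertools
import random

# Hypothetical search space. Only the layer range (2-8) comes from the slide;
# every other value is an illustrative assumption.
SEARCH_SPACE = {
    "num_layers": [2, 4, 6, 8],
    "subword_merges": [1000, 2000, 4000, 8000, 16000],
    "embedding_size": [256, 512, 1024],
    "learning_rate": [0.0003, 0.0006, 0.001],
}


def all_configs(space):
    """Enumerate every combination in the search space as a dict."""
    names = list(space)
    return [dict(zip(names, values)) for values in itertools.product(*space.values())]


def sample_configs(space, n, seed=0):
    """Draw up to n distinct configurations uniformly at random."""
    rng = random.Random(seed)
    configs = all_configs(space)
    return rng.sample(configs, min(n, len(configs)))


def search(space, n, train_and_score, seed=0):
    """Score n sampled configurations and return (best_score, best_config)."""
    scored = [(train_and_score(cfg), cfg) for cfg in sample_configs(space, n, seed)]
    return max(scored, key=lambda pair: pair[0])
```

Even this small assumed space contains 4 x 5 x 3 x 3 = 180 combinations, which shows how quickly the number of candidate models grows as more hyperparameters are added.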

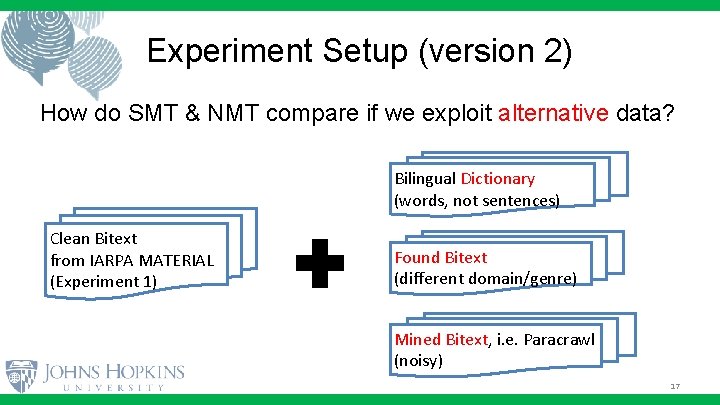

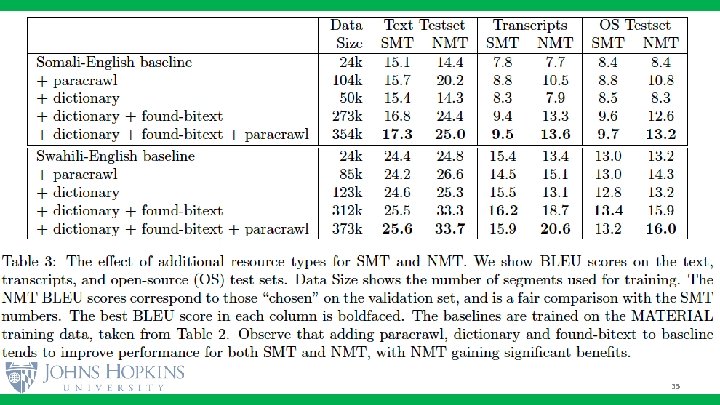

Experiment Setup (version 2)

How do SMT & NMT compare if we exploit alternative data?
- Clean Bitext from IARPA MATERIAL (Experiment 1)
- Bilingual Dictionary (words, not sentences)
- Found Bitext (different domain/genre)
- Mined Bitext, i.e. Paracrawl (noisy)

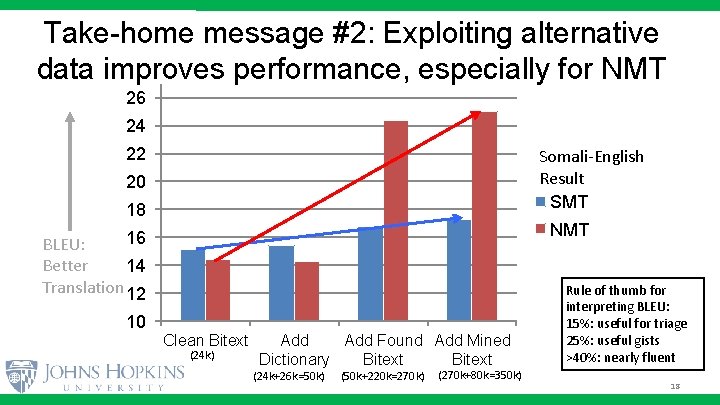

Take-home message #2: Exploiting alternative data improves performance, especially for NMT

Figure: Somali-English result, SMT vs. NMT. Vertical axis: BLEU (higher is better translation). Horizontal axis, cumulative training data:
- Clean Bitext (24k)
- Add Dictionary (24k+26k=50k)
- Add Found Bitext (50k+220k=270k)
- Add Mined Bitext (270k+80k=350k)

Rule of thumb for interpreting BLEU:
- 15%: useful for triage
- 25%: useful gists
- >40%: nearly fluent
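
As a small worked example, the rule of thumb and the cumulative data sizes on this slide can be expressed in a few lines of Python. The function names are illustrative, and treating each threshold as a lower bound for its label is an assumption; the slide only gives the three reference points.

```python
from itertools import accumulate


def interpret_bleu(bleu):
    """Map a BLEU score (0-100 scale) to the slide's rule-of-thumb label."""
    # Assumption: each threshold is treated as a lower bound for its label.
    if bleu > 40:
        return "nearly fluent"
    if bleu >= 25:
        return "useful gists"
    if bleu >= 15:
        return "useful for triage"
    return "below the triage threshold"


# Somali-English data added at each step, in thousands of sentences (from the slide).
ADDED_K = [
    ("Clean Bitext", 24),
    ("Dictionary", 26),
    ("Found Bitext", 220),
    ("Mined Bitext", 80),
]


def cumulative_sizes(added):
    """Return (name, running total) pairs for the data sources, in order."""
    names = [name for name, _ in added]
    totals = accumulate(size for _, size in added)
    return list(zip(names, totals))
```

Under this reading, the best Swahili-English NMT score reported earlier (24.8 BLEU) falls in the "useful for triage" band, while the best Somali-English NMT score on clean bitext alone (14.4 BLEU) sits just below it.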

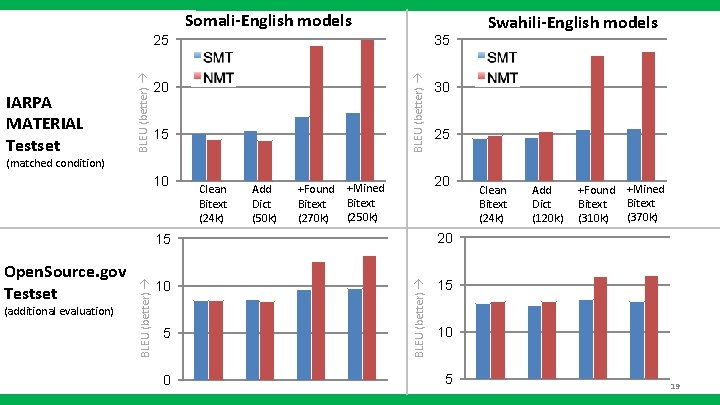

Figure: BLEU (higher is better) for Somali-English models and Swahili-English models on two test sets: the IARPA MATERIAL test set (matched condition) and the OpenSource.gov test set (additional evaluation).
- Somali-English training data: Clean Bitext (24k), Add Dict (50k), +Found Bitext (270k), +Mined Bitext (250k)
- Swahili-English training data: Clean Bitext (24k), Add Dict (120k), +Found Bitext (310k), +Mined Bitext (370k)

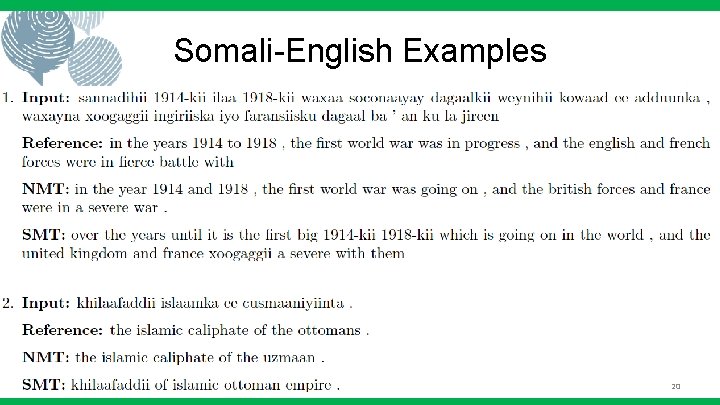

Somali-English Examples

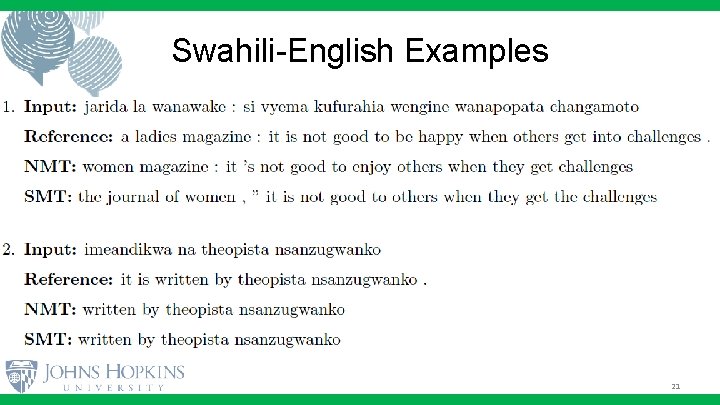

Swahili-English Examples

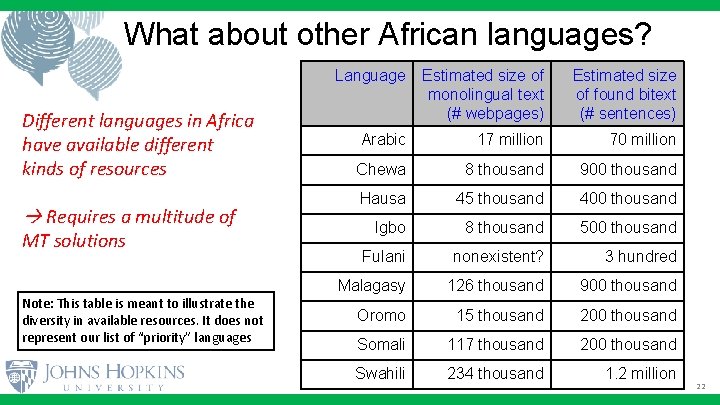

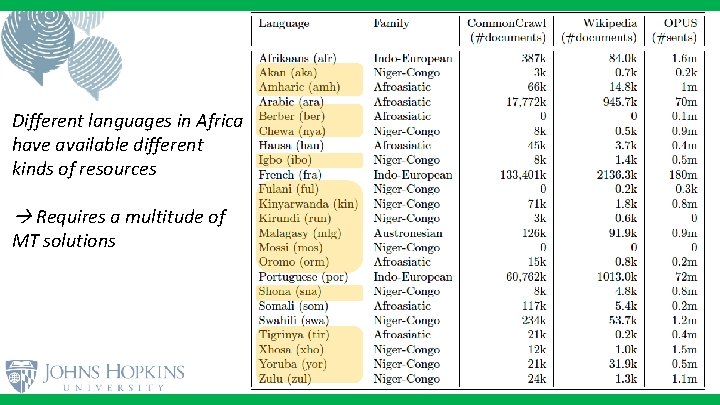

What about other African languages?

Different languages in Africa have different kinds of resources available, which requires a multitude of MT solutions.

Note: This table is meant to illustrate the diversity in available resources. It does not represent our list of “priority” languages.

| Language | Estimated size of monolingual text (# webpages) | Estimated size of found bitext (# sentences) |
| --- | --- | --- |
| Arabic | 17 million | 70 million |
| Chewa | 8 thousand | 900 thousand |
| Hausa | 45 thousand | 400 thousand |
| Igbo | 8 thousand | 500 thousand |
| Fulani | nonexistent? | 3 hundred |
| Malagasy | 126 thousand | 900 thousand |
| Oromo | 15 thousand | 200 thousand |
| Somali | 117 thousand | 200 thousand |
| Swahili | 234 thousand | 1.2 million |

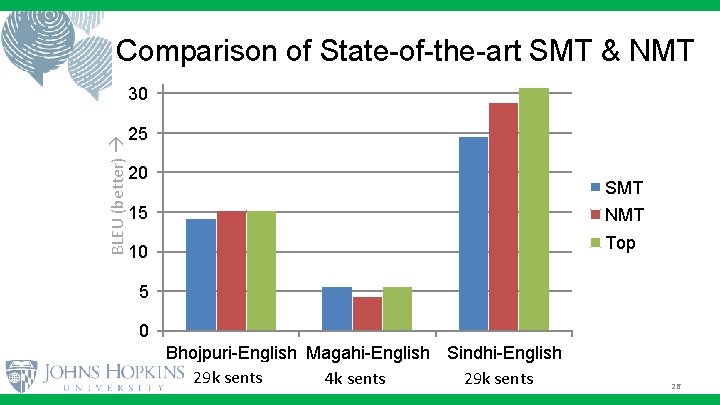

LoResMT'19 workshop at MT Summit
- Shared task in 4 languages (3 Indian + Latvian): Bhojpuri, Magahi, Sindhi
- Serendipitously at the same time as African experiments

Sindhi, Bhojpuri, Magahi (From: https://en.wikipedia.org/wiki/Languages_of_India)

Comparison of State-of-the-art SMT & NMT

Figure: BLEU (higher is better) per language pair. Legend: SMT, NMT, Top. Training data sizes:
- Bhojpuri-English (bho-eng): 29k sents
- Magahi-English (mag-eng): 4k sents
- Sindhi-English (snd-eng): 29k sents

Future Work in Low-Resource MT

Better models and training algorithms:
- Morphological and syntactic models
- Robust training objectives, unsupervised objectives
- Synthetic Data Augmentation (next slide)

Better mined bitext:
- (next talk)

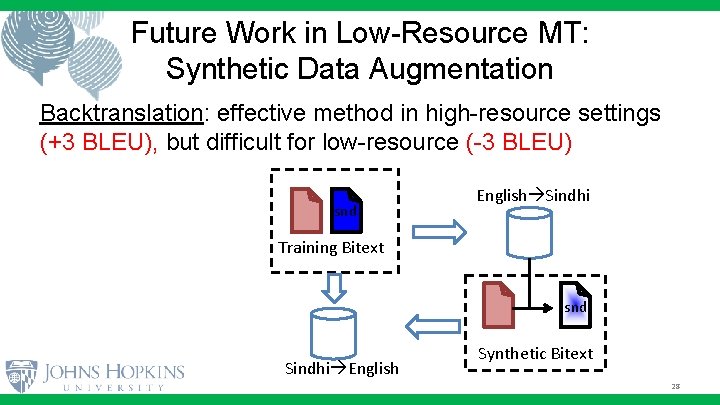

Future Work in Low-Resource MT: Synthetic Data Augmentation

Backtranslation: effective method in high-resource settings (+3 BLEU), but difficult for low-resource (-3 BLEU).

Diagram labels: Training Bitext (Sindhi, English), Synthetic Bitext (Sindhi, English), snd.
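
For readers unfamiliar with backtranslation, the data flow can be sketched as follows. This is a generic illustration of the technique under stated assumptions, not the pipeline from the talk: `reverse_translate` is a hypothetical English-to-Sindhi model trained on the original bitext, and the toy stand-in below only tags words so the example runs without a real model.

```python
from typing import Callable, List, Tuple

Bitext = List[Tuple[str, str]]  # (source sentence, target sentence)


def backtranslate(monolingual_target: List[str],
                  reverse_translate: Callable[[str], str]) -> Bitext:
    """Pair each real target sentence with a machine-generated source sentence."""
    return [(reverse_translate(target), target) for target in monolingual_target]


def augment(training_bitext: Bitext,
            monolingual_target: List[str],
            reverse_translate: Callable[[str], str]) -> Bitext:
    """Return the original bitext followed by the synthetic bitext."""
    return list(training_bitext) + backtranslate(monolingual_target, reverse_translate)


def toy_reverse_translate(sentence: str) -> str:
    """Placeholder for a real English-to-Sindhi model: tags each word with 'snd:'."""
    return " ".join("snd:" + word for word in sentence.split())
```

The quality of the synthetic source side depends entirely on the reverse model, which is one plausible reason the method can hurt when that model is itself trained on very little data.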

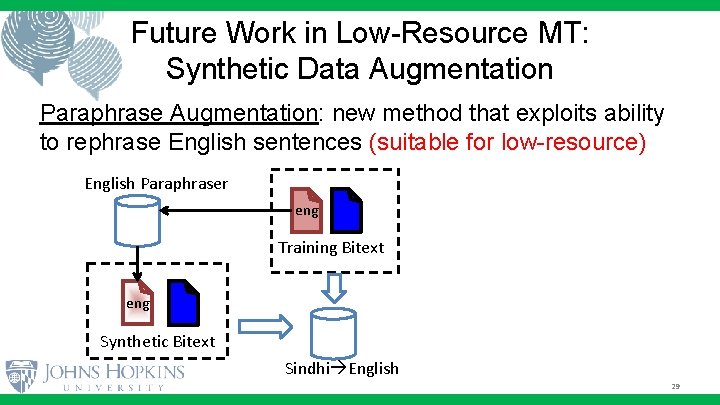

Future Work in Low-Resource MT: Synthetic Data Augmentation

Paraphrase Augmentation: new method that exploits the ability to rephrase English sentences (suitable for low-resource).

Diagram labels: English Paraphraser, Training Bitext (Sindhi, eng), Synthetic Bitext (Sindhi, English).
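
One plausible reading of the diagram is that each English target sentence is rephrased and every rephrasing is paired with the original Sindhi source. The sketch below follows that reading as an assumption; the slide does not give implementation details, and `paraphrase` is a hypothetical callback. The toy paraphraser uses a tiny made-up rule table purely so the example runs.

```python
from typing import Callable, List, Tuple

Bitext = List[Tuple[str, str]]  # (source sentence, English target sentence)


def paraphrase_augment(training_bitext: Bitext,
                       paraphrase: Callable[[str], List[str]]) -> Bitext:
    """Add (source, rephrased target) pairs for every paraphrase of each target."""
    synthetic = [
        (source, rewritten)
        for source, target in training_bitext
        for rewritten in paraphrase(target)
        if rewritten != target  # skip paraphrases identical to the original
    ]
    return list(training_bitext) + synthetic


def toy_paraphrase(sentence: str) -> List[str]:
    """Placeholder for a real English paraphraser, using a made-up rule table."""
    rules = {"thank you": "thanks", "big": "large"}
    return [sentence.replace(old, new) for old, new in rules.items() if old in sentence]
```

Unlike backtranslation, this approach needs no model that generates the low-resource language, since only the English side is rewritten.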

Conclusions
- Benchmark SMT vs NMT in Low-Resource languages
- Take-Home #1: NMT is competitive with SMT, but NMT hyperparameter tuning is essential
- Take-Home #2: NMT benefits more from additional data, so improving the data pipeline is an important research goal

Questions? Comments?

asante (Swahili), mahadsanid (Somali), dhanvaad (Bhojpuri), dhenjewaad (Magahi), mehrbani (Sindhi)

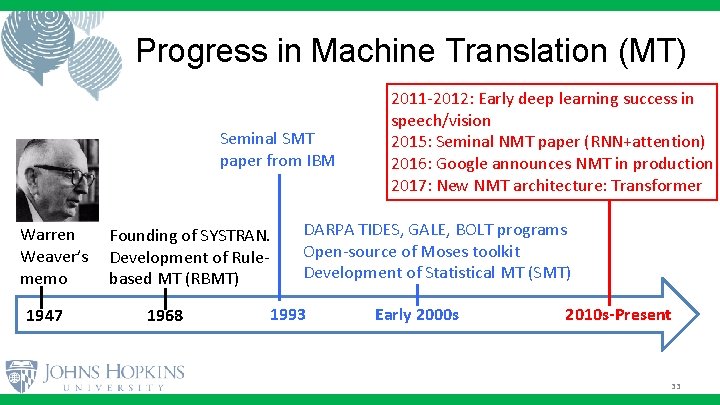

Progress in Machine Translation (MT)
- 1947: Warren Weaver’s memo
- 1968: Founding of SYSTRAN. Development of Rule-based MT (RBMT)
- 1993: Seminal SMT paper from IBM. Development of Statistical MT (SMT)
- Early 2000s: DARPA TIDES, GALE, BOLT programs. Open-source release of the Moses toolkit
- 2010s-Present:
  - 2011-2012: Early deep learning success in speech/vision
  - 2015: Seminal NMT paper (RNN+attention)
  - 2016: Google announces NMT in production
  - 2017: New NMT architecture: Transformer
