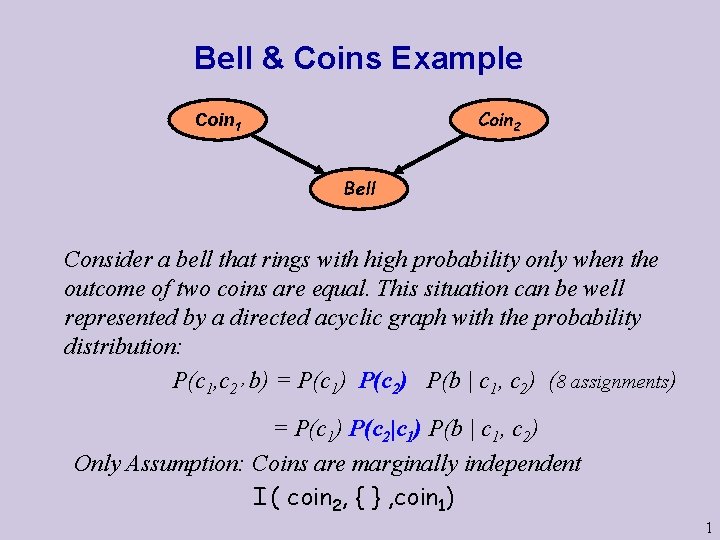


Bell & Coins Example

Consider a bell that rings with high probability only when the outcomes of two coins are equal. This situation is well represented by a directed acyclic graph (Coin 1 → Bell ← Coin 2) with the probability distribution (over 8 assignments):

$P(c_1, c_2, b) = P(c_1)\,P(c_2 \mid c_1)\,P(b \mid c_1, c_2) = P(c_1)\,P(c_2)\,P(b \mid c_1, c_2)$

Only assumption: the coins are marginally independent, I(coin 2, { }, coin 1).
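To make the independence claim concrete, the sketch below enumerates all 8 assignments and checks that the coins are marginally independent yet become dependent once the bell is observed. The numbers are assumptions for illustration and do not come from the slide: fair coins, and a bell that rings with probability 0.9 when the coins agree and 0.1 otherwise.

```python
from itertools import product

# Assumed parameters (not from the slides): fair coins, noisy bell.
P_C1 = {0: 0.5, 1: 0.5}
P_C2 = {0: 0.5, 1: 0.5}


def p_bell(b, c1, c2):
    """P(b | c1, c2): the bell rings (b=1) mostly when the coins agree."""
    ring = 0.9 if c1 == c2 else 0.1
    return ring if b == 1 else 1.0 - ring


# Joint over the 8 assignments: P(c1, c2, b) = P(c1) P(c2) P(b | c1, c2).
joint = {
    (c1, c2, b): P_C1[c1] * P_C2[c2] * p_bell(b, c1, c2)
    for c1, c2, b in product((0, 1), repeat=3)
}


def prob(pred):
    """Total probability of the assignments satisfying pred(c1, c2, b)."""
    return sum(p for assignment, p in joint.items() if pred(*assignment))


# Marginal independence: P(c1=1, c2=1) equals P(c1=1) P(c2=1).
p_both = prob(lambda c1, c2, b: c1 == 1 and c2 == 1)
p_first = prob(lambda c1, c2, b: c1 == 1)
p_second = prob(lambda c1, c2, b: c2 == 1)
marginally_independent = abs(p_both - p_first * p_second) < 1e-12

# Conditioning on the bell ringing couples the two coins.
p_ring = prob(lambda c1, c2, b: b == 1)
p_both_ring = prob(lambda c1, c2, b: c1 == 1 and c2 == 1 and b == 1) / p_ring
p_first_ring = prob(lambda c1, c2, b: c1 == 1 and b == 1) / p_ring
p_second_ring = prob(lambda c1, c2, b: c2 == 1 and b == 1) / p_ring
dependent_given_bell = abs(p_both_ring - p_first_ring * p_second_ring) > 1e-6
```

Under these assumed numbers both flags come out true, which matches the slide: the only independence built into the model is the marginal one between the coins.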

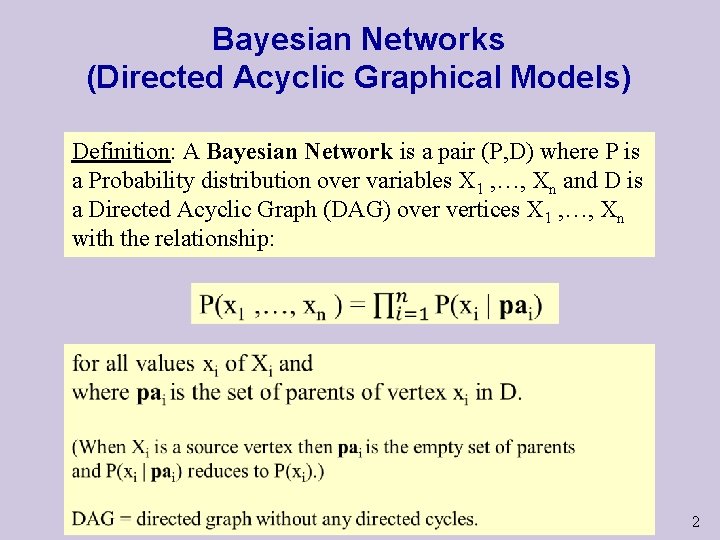

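Bayesian Networks (Directed Acyclic Graphical Models)

Definition: A Bayesian network is a pair (P, D) where P is a probability distribution over variables $X_1, \dots, X_n$ and D is a directed acyclic graph (DAG) over vertices $X_1, \dots, X_n$ with the relationship

$P(x_1, \dots, x_n) = \prod_{i=1}^{n} P(x_i \mid pa_i)$

for all values $x_i$ of $X_i$, where $pa_i$ is the set of parents of vertex $X_i$ in D.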

Bayesian Networks (BNs)

Examples to model diseases, symptoms, and risk factors:
- One variable for all diseases (values are diseases) (Draw it)
- One variable per disease (values are True/False) (Draw it)
- Naïve Bayesian networks versus bipartite BNs (details)
- Adding risk factors (Draw it)

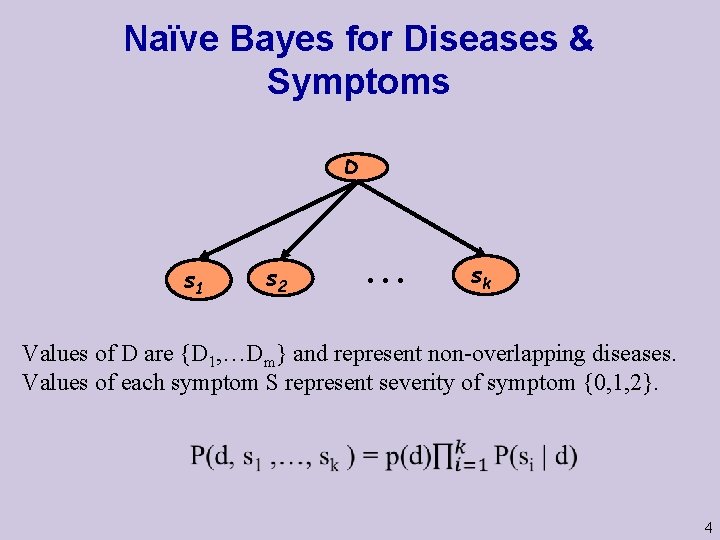

Naïve Bayes for Diseases & Symptoms

Nodes: D, with an edge to each symptom $s_1, s_2, \dots, s_k$.

Values of D are $\{D_1, \dots, D_m\}$ and represent non-overlapping diseases. Values of each symptom S represent the severity of the symptom: {0, 1, 2}.
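Written out, the naïve Bayes structure corresponds to the following factorization. This is a sketch in the notation of these slides, with lowercase letters standing for values of the corresponding variables:

```latex
P(d, s_1, \dots, s_k) = P(d) \prod_{i=1}^{k} P(s_i \mid d)
```

In words, each symptom is assumed independent of all other symptoms once the disease is known.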

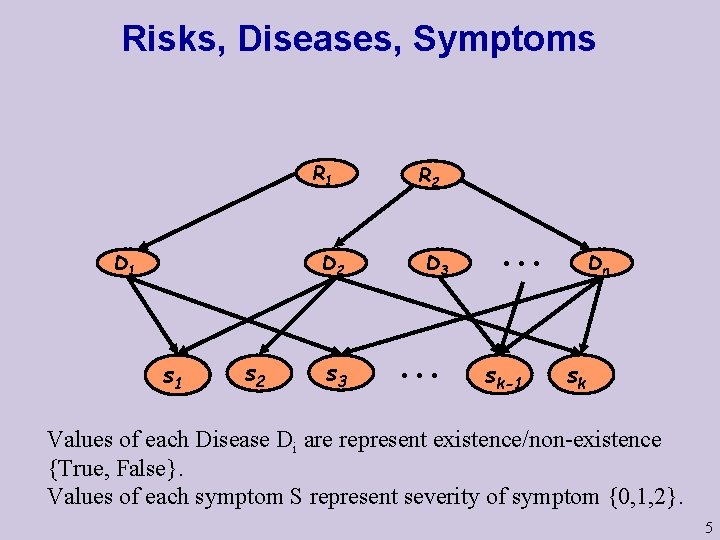

Risks, Diseases, Symptoms

Nodes: risk factors $R_1, R_2$; diseases $D_1, \dots, D_n$; symptoms $s_1, \dots, s_k$.

Values of each disease $D_i$ represent existence/non-existence: {True, False}. Values of each symptom S represent the severity of the symptom: {0, 1, 2}.

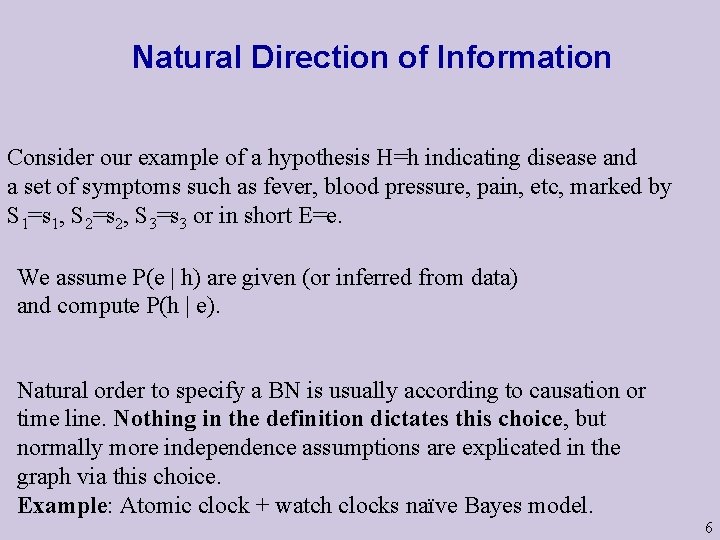

Natural Direction of Information

Consider our example of a hypothesis H = h indicating a disease and a set of symptoms such as fever, blood pressure, pain, etc., marked by $S_1 = s_1, S_2 = s_2, S_3 = s_3$, or in short E = e. We assume the P(e | h) are given (or inferred from data) and compute P(h | e).

The natural order in which to specify a BN is usually according to causation or time line. Nothing in the definition dictates this choice, but normally more independence assumptions are made explicit in the graph via this choice.

Example: atomic clock + watch clocks naïve Bayes model.
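The step from P(e | h) to P(h | e) is Bayes' rule. A minimal sketch under naïve Bayes assumptions follows; the disease names, priors, and likelihood tables are hypothetical numbers chosen only for illustration, and `posterior` is a hypothetical helper name.

```python
# Hypothetical prior P(h) over three mutually exclusive hypotheses.
PRIOR = {"flu": 0.1, "cold": 0.3, "healthy": 0.6}

# Hypothetical likelihoods P(symptom = severity | h), severity in {0, 1, 2}.
LIKELIHOOD = {
    "fever": {
        "flu": [0.1, 0.3, 0.6],
        "cold": [0.6, 0.3, 0.1],
        "healthy": [0.95, 0.04, 0.01],
    },
    "pain": {
        "flu": [0.2, 0.4, 0.4],
        "cold": [0.5, 0.4, 0.1],
        "healthy": [0.9, 0.08, 0.02],
    },
}


def posterior(evidence):
    """Return P(h | e) for every h, where evidence maps symptom -> severity."""
    unnormalized = {}
    for h, p in PRIOR.items():
        # Naive Bayes: multiply the prior by each symptom's likelihood.
        for symptom, severity in evidence.items():
            p *= LIKELIHOOD[symptom][h][severity]
        unnormalized[h] = p
    total = sum(unnormalized.values())  # this is P(e)
    return {h: p / total for h, p in unnormalized.items()}


post = posterior({"fever": 2, "pain": 1})
most_likely = max(post, key=post.get)
```

The model is specified in the causal direction (disease to symptoms), while the query runs in the opposite, diagnostic direction.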

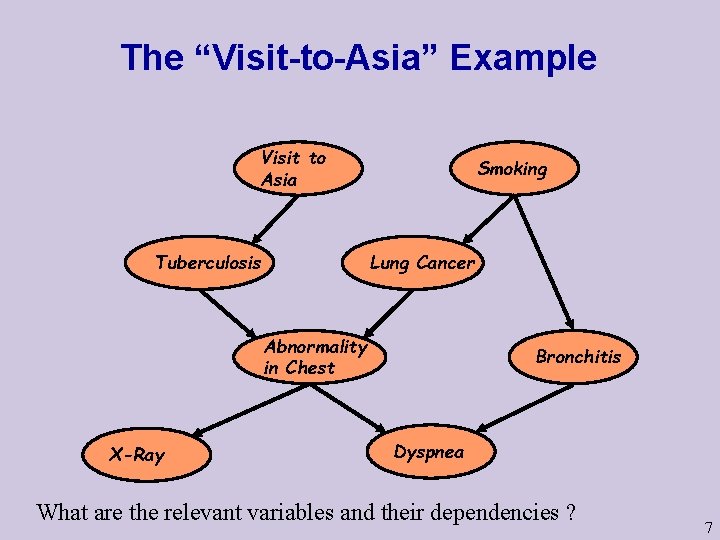

The “Visit-to-Asia” Example

Variables: Visit to Asia, Tuberculosis, Smoking, Lung Cancer, Abnormality in Chest, X-Ray, Bronchitis, Dyspnea.

What are the relevant variables and their dependencies?

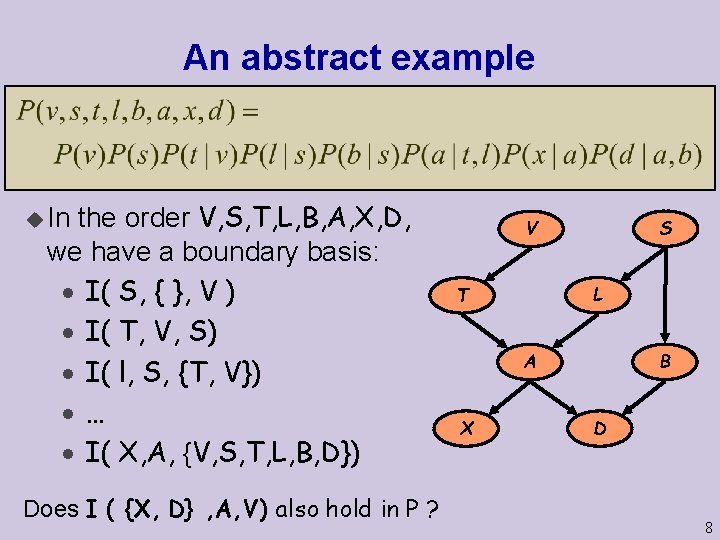

An abstract example

In the order V, S, T, L, B, A, X, D, we have a boundary basis:
- I(S, { }, V)
- I(T, V, S)
- I(L, S, {T, V})
- …
- I(X, A, {V, S, T, L, B, D})

Does I({X, D}, A, V) also hold in P?

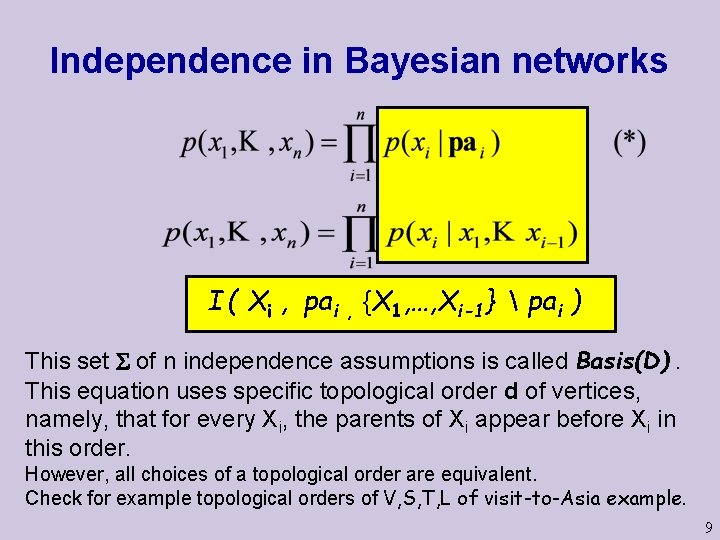

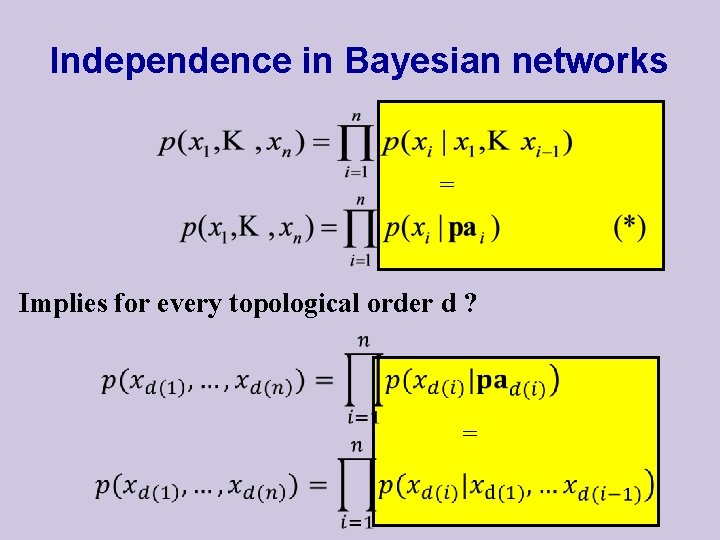

Independence in Bayesian networks

$I(X_i, pa_i, \{X_1, \dots, X_{i-1}\} \setminus pa_i)$

This set of n independence assumptions is called Basis(D).

This equation uses a specific topological order d of the vertices, namely, one in which, for every $X_i$, the parents of $X_i$ appear before $X_i$. However, all choices of a topological order are equivalent. Check, for example, the topological orders of V, S, T, L in the Visit-to-Asia example.
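Basis(D) can be listed mechanically from the parent sets and a topological order. The sketch below assumes the standard Visit-to-Asia edge set (V→T, S→L, S→B, T→A, L→A, A→X, A→D, B→D); `PARENTS`, `is_topological`, and `basis` are hypothetical names introduced for illustration.

```python
# Parent sets of the Visit-to-Asia DAG (standard structure, assumed here).
PARENTS = {
    "V": [], "S": [], "T": ["V"], "L": ["S"], "B": ["S"],
    "A": ["T", "L"], "X": ["A"], "D": ["A", "B"],
}


def is_topological(order, parents):
    """True if every vertex appears exactly once, after all of its parents."""
    seen = set()
    for x in order:
        if x in seen or not set(parents[x]) <= seen:
            return False
        seen.add(x)
    return len(seen) == len(parents)


def basis(order, parents):
    """Return the triples (X_i, pa_i, predecessors of X_i minus pa_i)."""
    if not is_topological(order, parents):
        raise ValueError("order is not a topological order of the DAG")
    triples = []
    for i, x in enumerate(order):
        pa = frozenset(parents[x])
        rest = frozenset(order[:i]) - pa
        triples.append((x, pa, rest))
    return triples


basis_1 = basis(list("VSTLBAXD"), PARENTS)
basis_2 = basis(list("VSTLBADX"), PARENTS)
```

The individual triples depend on the chosen order. For example, the triple for X is I(X, A, {V, S, T, L, B}) when X precedes D and I(X, A, {V, S, T, L, B, D}) when D precedes X, even though the two bases entail the same set of independence statements.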

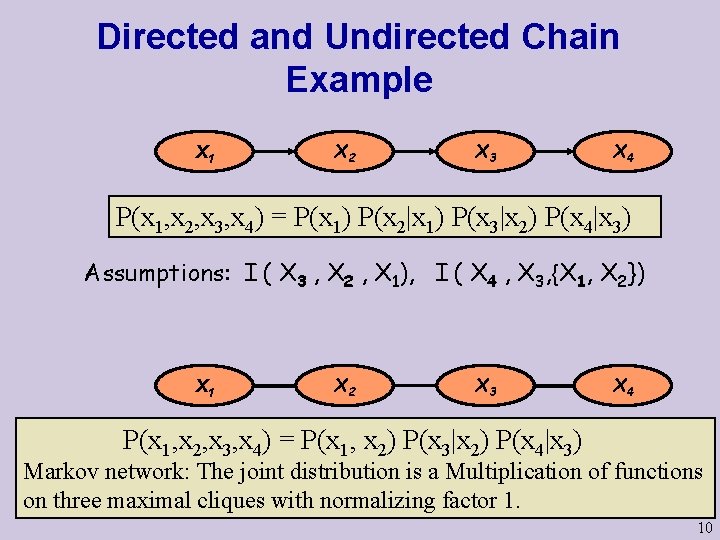

Directed and Undirected Chain Example

Directed chain: $X_1 \to X_2 \to X_3 \to X_4$

$P(x_1, x_2, x_3, x_4) = P(x_1)\,P(x_2 \mid x_1)\,P(x_3 \mid x_2)\,P(x_4 \mid x_3)$

Assumptions: $I(X_3, X_2, X_1)$, $I(X_4, X_3, \{X_1, X_2\})$

Undirected chain: $X_1 - X_2 - X_3 - X_4$

$P(x_1, x_2, x_3, x_4) = P(x_1, x_2)\,P(x_3 \mid x_2)\,P(x_4 \mid x_3)$

Markov network: the joint distribution is a product of functions on the three maximal cliques, with normalizing factor 1.
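The link between the two factorizations can be spelled out as follows. The clique functions $g_1, g_2, g_3$ are names introduced here for illustration; the slide only states that three such functions exist.

```latex
\begin{aligned}
P(x_1, x_2, x_3, x_4)
  &= P(x_1)\,P(x_2 \mid x_1)\,P(x_3 \mid x_2)\,P(x_4 \mid x_3) \\
  &= \underbrace{P(x_1, x_2)}_{g_1(x_1, x_2)}\;
     \underbrace{P(x_3 \mid x_2)}_{g_2(x_2, x_3)}\;
     \underbrace{P(x_4 \mid x_3)}_{g_3(x_3, x_4)}
\end{aligned}
```

Each $g_j$ is non-negative and depends only on the variables of one maximal clique of the undirected chain, $\{X_1, X_2\}$, $\{X_2, X_3\}$, or $\{X_3, X_4\}$, and the product already sums to 1, which is why no further normalization is needed.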

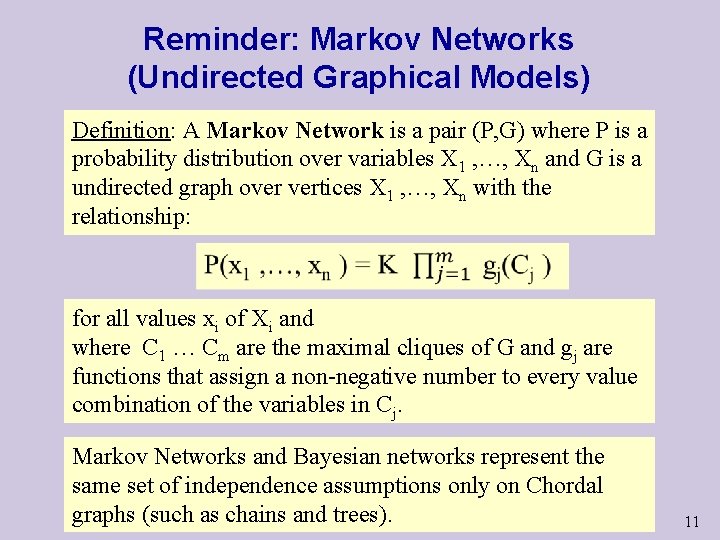

Reminder: Markov Networks (Undirected Graphical Models)

Definition: A Markov network is a pair (P, G) where P is a probability distribution over variables $X_1, \dots, X_n$ and G is an undirected graph over vertices $X_1, \dots, X_n$ with the relationship

$P(x_1, \dots, x_n) = K \prod_{j=1}^{m} g_j(c_j)$

for all values $x_i$ of $X_i$, where $C_1, \dots, C_m$ are the maximal cliques of G, $c_j$ is the part of $(x_1, \dots, x_n)$ belonging to the variables in $C_j$, the $g_j$ are functions that assign a non-negative number to every value combination of the variables in $C_j$, and K is a normalizing constant.

Markov networks and Bayesian networks represent the same set of independence assumptions only on chordal graphs (such as chains and trees).

From Separation in UGs To d-Separation in DAGs

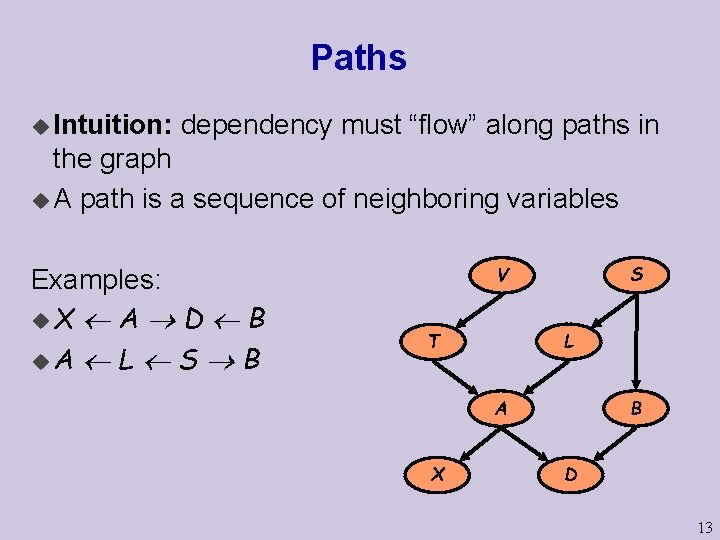

Paths

- Intuition: dependency must “flow” along paths in the graph.
- A path is a sequence of neighboring variables.

Examples:
- X - A - D - B
- A - L - S - B

Path blockage

Every path is classified given the evidence:
- active: creates a dependency between the end nodes
- blocked: does not create a dependency between the end nodes

Evidence means the assignment of a value to a subset of nodes.

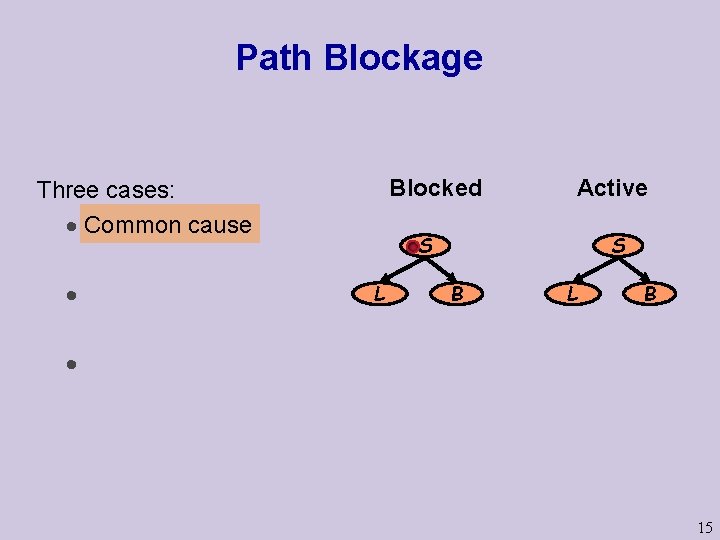

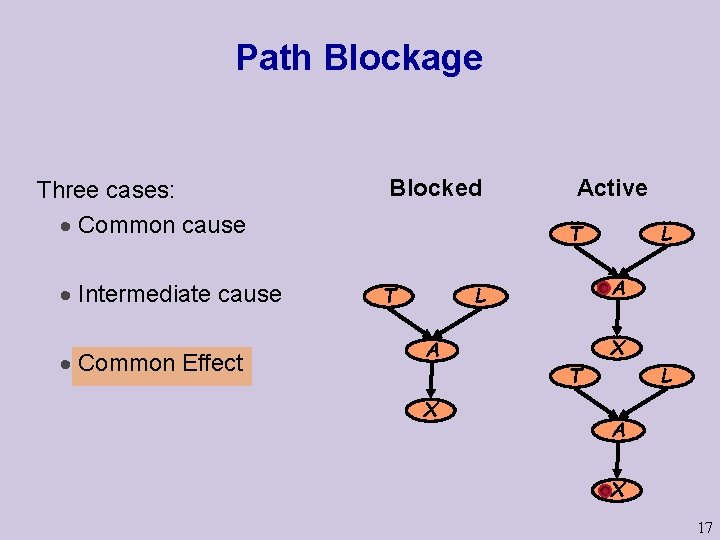

Path Blockage

Three cases:
- Common cause: L ← S → B. The path is blocked when S is in the evidence and active otherwise.

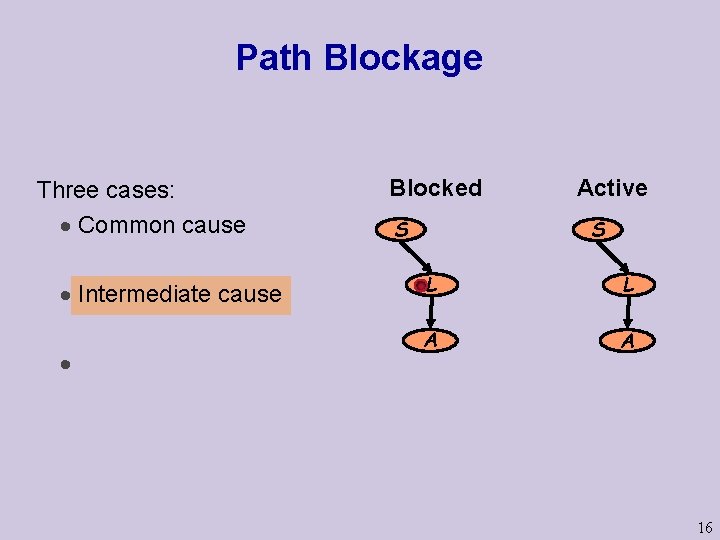

Path Blockage

Three cases:
- Common cause
- Intermediate cause: S → L → A. The path is blocked when L is in the evidence and active otherwise.

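Path Blockage

Three cases:
- Common cause
- Intermediate cause
- Common effect: T → A ← L, with A → X. The path is blocked when neither A nor its descendant X is in the evidence, and active when A or X is in the evidence.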

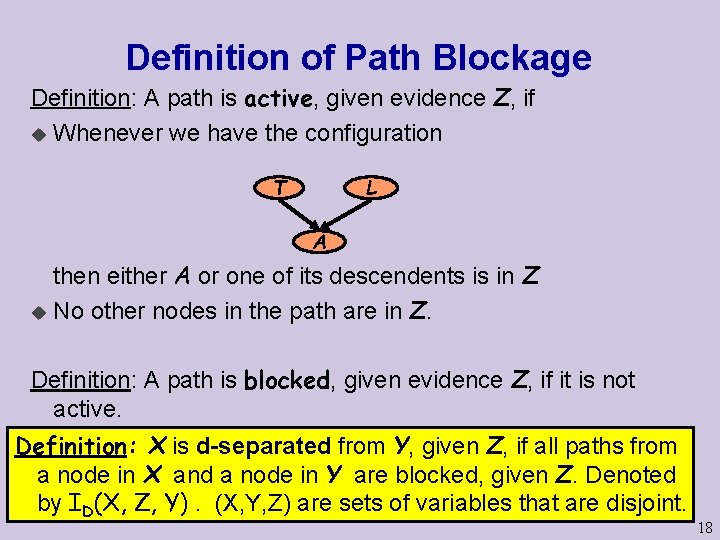

Definition of Path Blockage

Definition: A path is active, given evidence Z, if
- whenever the path contains the configuration T → A ← L, either A or one of its descendants is in Z, and
- no other nodes in the path are in Z.

Definition: A path is blocked, given evidence Z, if it is not active.

Definition: X is d-separated from Y, given Z, if all paths between a node in X and a node in Y are blocked, given Z. This is denoted by $I_D(X, Z, Y)$, where X, Y, and Z are disjoint sets of variables.

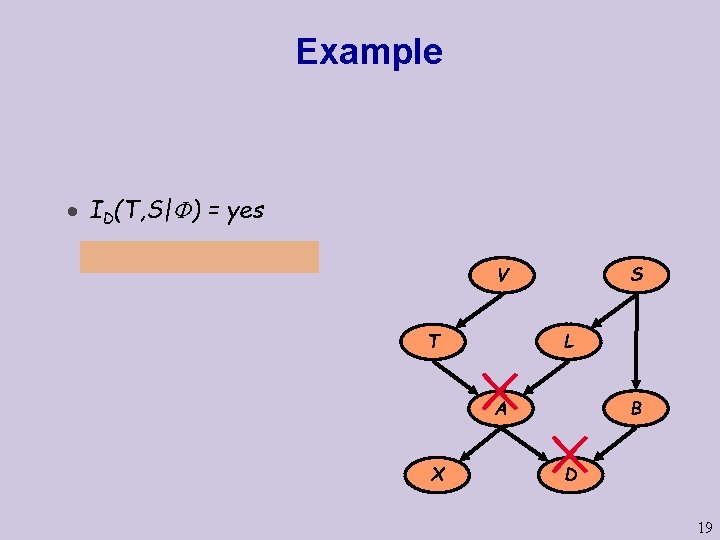

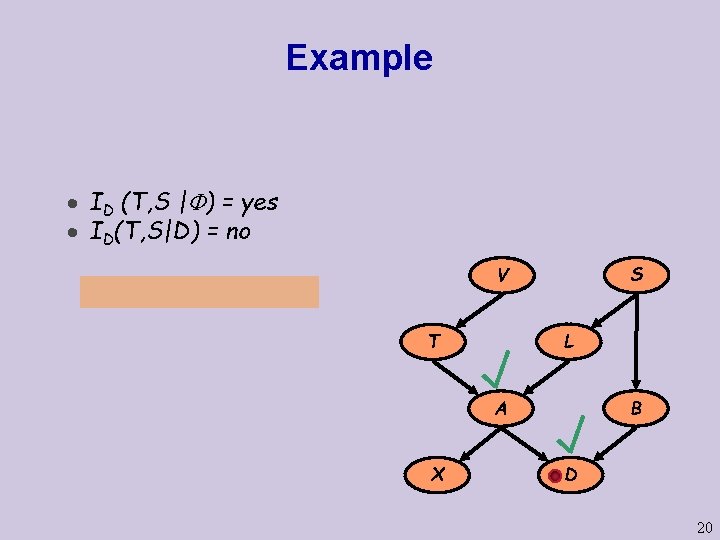

Example

- $I_D(T, S \mid \{\})$ = yes

Example

- $I_D(T, S \mid \{\})$ = yes
- $I_D(T, S \mid D)$ = no

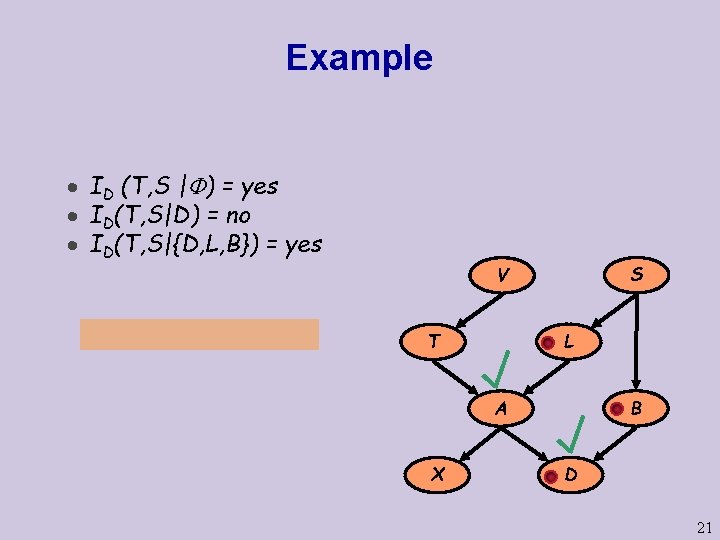

Example

- $I_D(T, S \mid \{\})$ = yes
- $I_D(T, S \mid D)$ = no
- $I_D(T, S \mid \{D, L, B\})$ = yes
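These answers can be checked mechanically on a graph this small. The following is a minimal sketch that enumerates every simple path and applies the path-blockage definition directly. It assumes the standard Visit-to-Asia edge set (V→T, S→L, S→B, T→A, L→A, A→X, A→D, B→D), and all helper names are hypothetical. Path enumeration is exponential in general, so this is only suitable for toy networks.

```python
# Parent sets of the Visit-to-Asia DAG (standard structure, assumed here).
PARENTS = {
    "V": [], "S": [], "T": ["V"], "L": ["S"], "B": ["S"],
    "A": ["T", "L"], "X": ["A"], "D": ["A", "B"],
}


def children(node):
    return [c for c, pa in PARENTS.items() if node in pa]


def descendants(node):
    """All vertices reachable from node by following edges forward."""
    found, stack = set(), [node]
    while stack:
        for c in children(stack.pop()):
            if c not in found:
                found.add(c)
                stack.append(c)
    return found


def simple_paths(src, dst, path=None):
    """Yield every path from src to dst that ignores edge direction
    and never revisits a vertex."""
    path = path or [src]
    if src == dst:
        yield list(path)
        return
    for nxt in set(PARENTS[src]) | set(children(src)):
        if nxt not in path:
            yield from simple_paths(nxt, dst, path + [nxt])


def is_active(path, z):
    """Apply the definition of an active path given evidence set z."""
    for prev, node, nxt in zip(path, path[1:], path[2:]):
        common_effect = prev in PARENTS[node] and nxt in PARENTS[node]
        if common_effect:
            # Needs the node itself or one of its descendants in z.
            if node not in z and not (descendants(node) & z):
                return False
        elif node in z:
            # Any other interior node in z blocks the path.
            return False
    return True


def d_separated(xs, zs, ys):
    """True if every path between xs and ys is blocked given zs."""
    z = set(zs)
    return not any(
        is_active(p, z) for x in xs for y in ys for p in simple_paths(x, y)
    )


answer_empty = d_separated({"T"}, set(), {"S"})           # yes
answer_d = d_separated({"T"}, {"D"}, {"S"})               # no
answer_dlb = d_separated({"T"}, {"D", "L", "B"}, {"S"})   # yes
```

Observing D activates both T → A ← L ← S (D is a descendant of the common effect A) and T → A → D ← B ← S; adding L and B to the evidence blocks each of these paths at an intermediate node.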

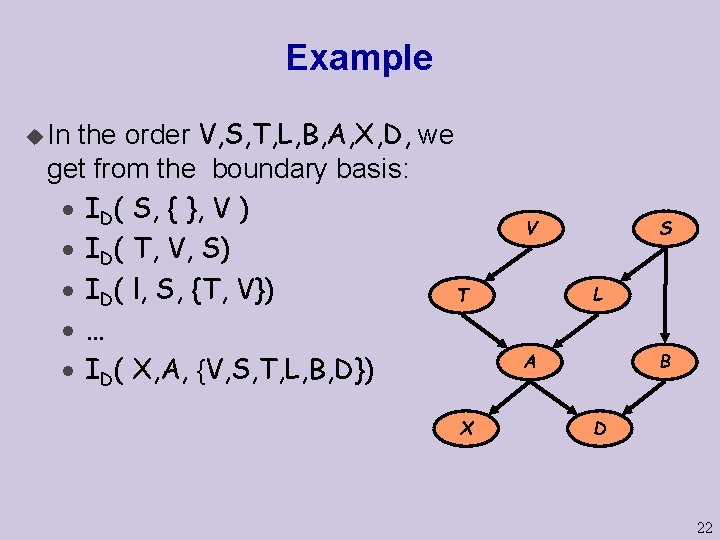

Example

In the order V, S, T, L, B, A, X, D, we get from the boundary basis:
- $I_D(S, \{\}, V)$
- $I_D(T, V, S)$
- $I_D(L, S, \{T, V\})$
- …
- $I_D(X, A, \{V, S, T, L, B, D\})$

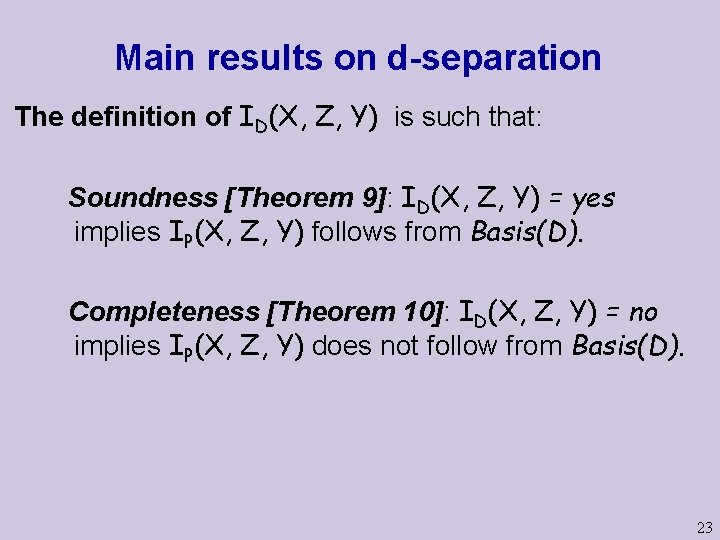

Main results on d-separation

The definition of $I_D(X, Z, Y)$ is such that:
- Soundness [Theorem 9]: $I_D(X, Z, Y)$ = yes implies that $I_P(X, Z, Y)$ follows from Basis(D).
- Completeness [Theorem 10]: $I_D(X, Z, Y)$ = no implies that $I_P(X, Z, Y)$ does not follow from Basis(D).

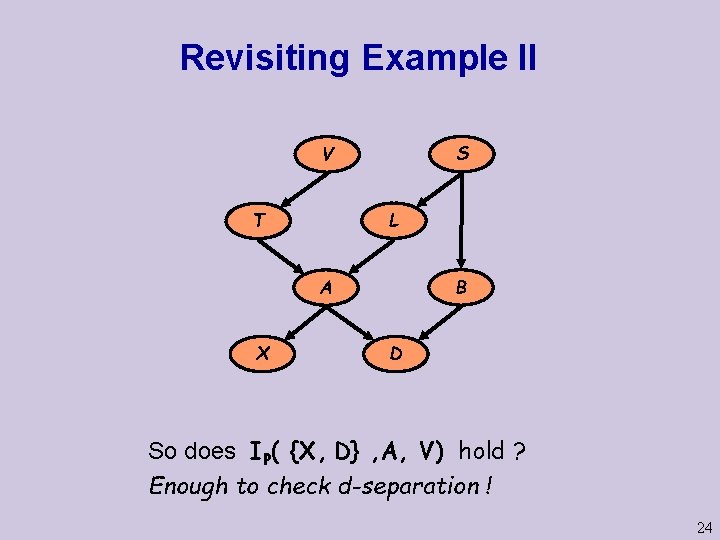

Revisiting Example II

So does $I_P(\{X, D\}, A, V)$ hold? Enough to check d-separation!
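One way to answer the question, assuming the standard Visit-to-Asia edge set (V→T, S→L, S→B, T→A, L→A, A→X, A→D, B→D), is to look for a single active path between V and {X, D} given A. The path below appears to be one:

```latex
V \rightarrow T \rightarrow A \leftarrow L \leftarrow S \rightarrow B \rightarrow D
```

On this path A is a common effect of T and L and is in the evidence, so the path is not blocked there, while T, L, S, and B are not common effects on the path and are not in the evidence. Under the assumed edges the path is therefore active, so $I_D(\{X, D\}, A, V)$ = no, and by completeness the independence does not follow from Basis(D).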

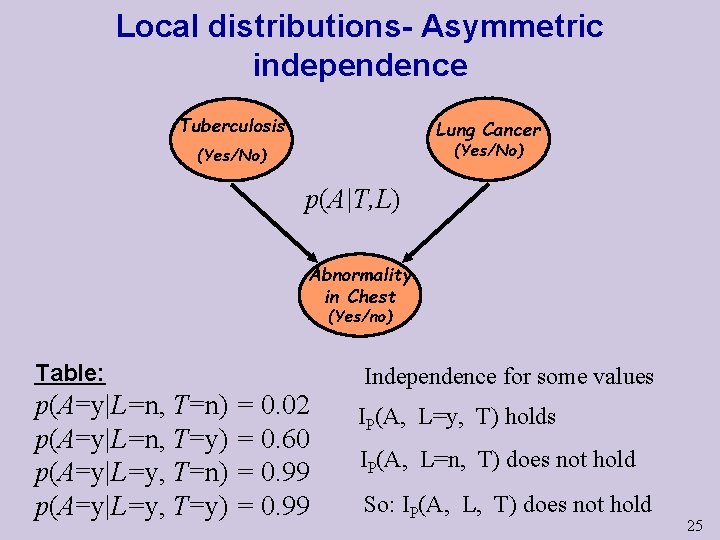

Local distributions: Asymmetric independence

Nodes: Tuberculosis, Lung Cancer (Yes/No) → Abnormality in Chest (Yes/No), with local distribution p(A | T, L).

Table:

| L | T | $p(A = y \mid L, T)$ |
|---|---|---|
| n | n | 0.02 |
| n | y | 0.60 |
| y | n | 0.99 |
| y | y | 0.99 |

Independence for some values:
- $I_P(A, L = y, T)$ holds
- $I_P(A, L = n, T)$ does not hold

So $I_P(A, L, T)$ does not hold.
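The value-specific check can be written in a few lines. The sketch below uses the four probabilities listed on the slide; the names `P_A_YES` and `independent_of_t_given` are hypothetical.

```python
# p(A = y | L, T) from the slide; keys are (L, T).
P_A_YES = {
    ("n", "n"): 0.02,
    ("n", "y"): 0.60,
    ("y", "n"): 0.99,
    ("y", "y"): 0.99,
}


def independent_of_t_given(l_value):
    """True if p(A | L = l_value, T) is the same for both values of T.

    A is binary, so comparing p(A = y | ...) is sufficient.
    """
    return P_A_YES[(l_value, "n")] == P_A_YES[(l_value, "y")]


holds_for_l_yes = independent_of_t_given("y")
holds_for_l_no = independent_of_t_given("n")
# I_P(A, L, T) requires independence for every value of L.
holds_for_all = holds_for_l_yes and holds_for_l_no
```

This kind of independence lives in the numbers of the local distribution rather than in the graph, so d-separation cannot detect it.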

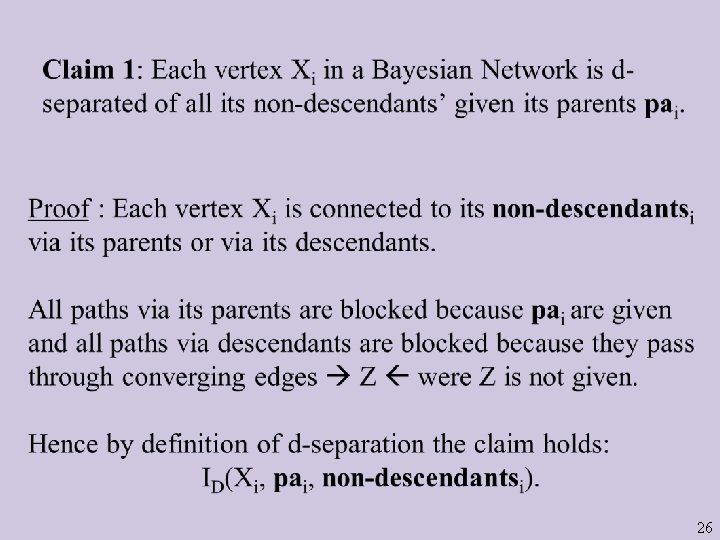


Independence in Bayesian networks: does the implication hold for every topological order d?

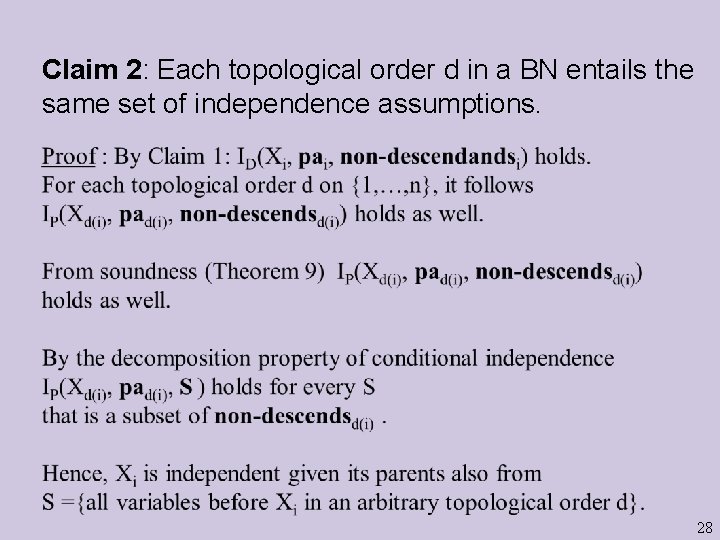

Claim 2: Each topological order d in a BN entails the same set of independence assumptions.

Extension of the Markov Chain Property

For Markov chains we assume Basis(D): $I_P(X_i, X_{i-1}, \{X_1, \dots, X_{i-2}\})$

Due to soundness of d-separation (Theorem 9) we also get: $I_P(X_i, \{X_{i-1}, X_{i+1}\}, \{X_1, \dots, X_{i-2}, X_{i+2}, \dots, X_n\})$

Definition: A Markov blanket of a variable X with respect to a probability distribution P is a set of variables B(X) such that $I_P(X, B(X), \text{all other variables})$.

Chain example: $B(X_i) = \{X_{i-1}, X_{i+1}\}$

Markov Blankets in BNs

Proof: Consequence of soundness of d-separation (Theorem 9).
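The statement being proved is not present in the extracted slide text. The sketch below assumes the standard result that, in a Bayesian network, a Markov blanket of X consists of its parents, its children, and its children's other parents. The function name `markov_blanket` and the example graphs are introduced here for illustration.

```python
def markov_blanket(x, parents):
    """Parents, children, and children's other parents of x.

    `parents` maps every vertex of the DAG to the list of its parents.
    """
    kids = {c for c, pa in parents.items() if x in pa}
    co_parents = {p for c in kids for p in parents[c]}
    return (set(parents[x]) | kids | co_parents) - {x}


# Directed chain X1 -> X2 -> X3 -> X4.
CHAIN = {"X1": [], "X2": ["X1"], "X3": ["X2"], "X4": ["X3"]}

# Visit-to-Asia DAG (standard structure, assumed here).
ASIA = {
    "V": [], "S": [], "T": ["V"], "L": ["S"], "B": ["S"],
    "A": ["T", "L"], "X": ["A"], "D": ["A", "B"],
}

blanket_x2 = markov_blanket("X2", CHAIN)
blanket_a = markov_blanket("A", ASIA)
```

For the chain this reproduces $B(X_i) = \{X_{i-1}, X_{i+1}\}$. For A in the Visit-to-Asia graph it gives {T, L, X, D, B}, where B enters only because it shares the child D with A.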
