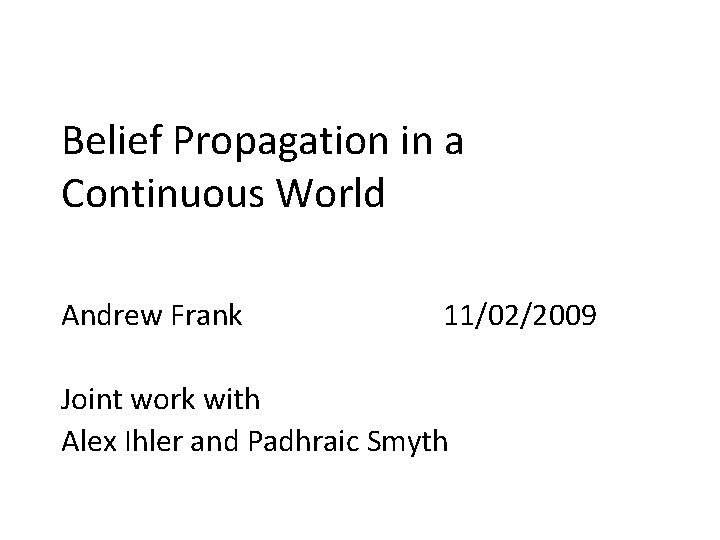

Belief Propagation in a Continuous World

Andrew Frank, 11/02/2009

Joint work with Alex Ihler and Padhraic Smyth

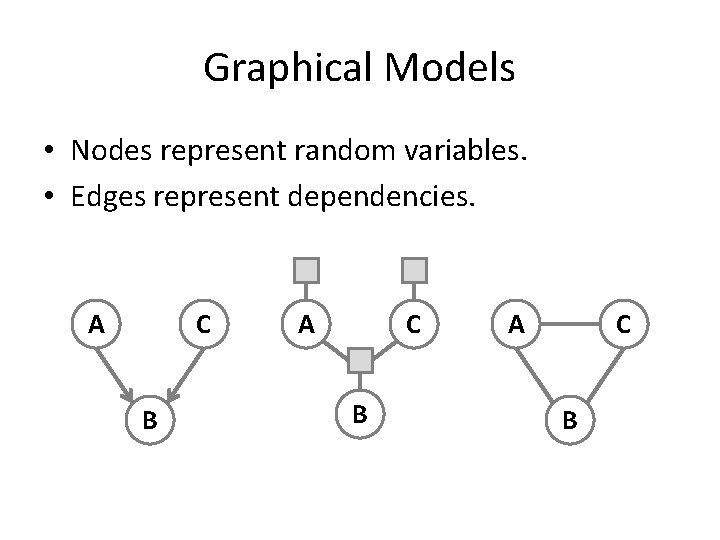

Graphical Models

• Nodes represent random variables.
• Edges represent dependencies.

[Figure: example graph over nodes A, B, C.]

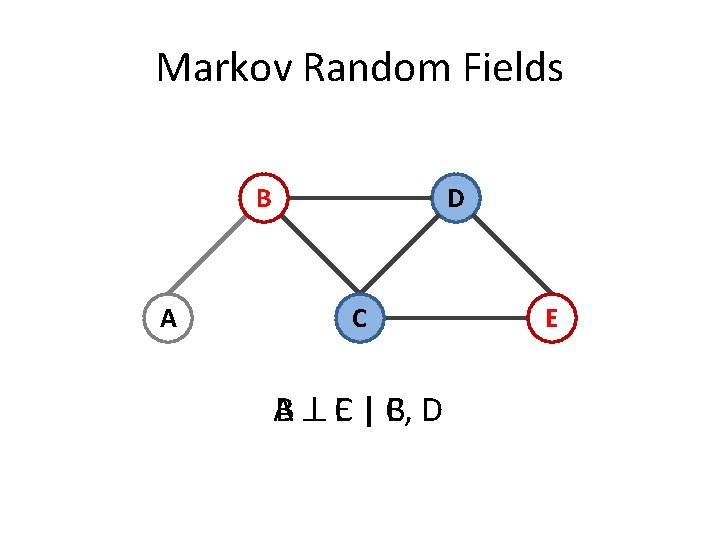

Markov Random Fields

[Figure: undirected graph over nodes A, B, C, D, E, annotated with conditional independence statements among the nodes.]

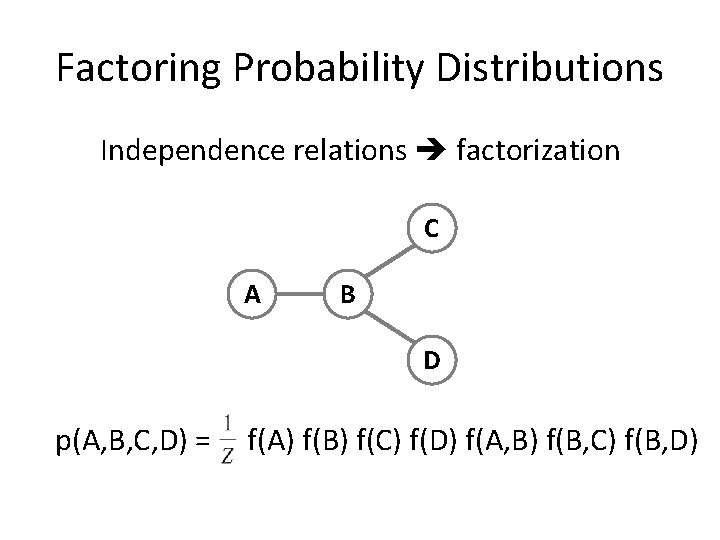

Factoring Probability Distributions

Independence relations lead to a factorization:

[Figure: graph over nodes A, B, C, D.]

$$p(A, B, C, D) = \frac{1}{Z}\, f(A)\, f(B)\, f(C)\, f(D)\, f(A, B)\, f(B, C)\, f(B, D)$$
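A minimal sketch of this factorization, assuming binary variables and made-up factor tables (none of the numbers come from the slides). It multiplies the unary and pairwise factors for every joint assignment and divides by a normalizing constant `Z` so that the result sums to one.

```python
from itertools import product

# Hypothetical factor tables over binary variables (values are illustrative only).
names = ["A", "B", "C", "D"]
unary = {"A": [1.0, 2.0], "B": [1.0, 1.0], "C": [3.0, 1.0], "D": [1.0, 1.5]}
pair = {
    ("A", "B"): [[2.0, 1.0], [1.0, 2.0]],
    ("B", "C"): [[1.0, 3.0], [3.0, 1.0]],
    ("B", "D"): [[2.0, 1.0], [1.0, 1.0]],
}

def unnormalized(assign):
    """Product of all unary and pairwise factors for one joint assignment."""
    value = 1.0
    for v, table in unary.items():
        value *= table[assign[v]]
    for (u, v), table in pair.items():
        value *= table[assign[u]][assign[v]]
    return value

# Enumerate all 2^4 joint assignments and normalize.
states = [dict(zip(names, xs)) for xs in product([0, 1], repeat=len(names))]
Z = sum(unnormalized(s) for s in states)
joint = {tuple(s[n] for n in names): unnormalized(s) / Z for s in states}

print("Z =", Z)
print("total probability =", sum(joint.values()))
```

Enumeration like this only works for tiny models; its cost grows exponentially with the number of variables, which is what motivates message passing.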

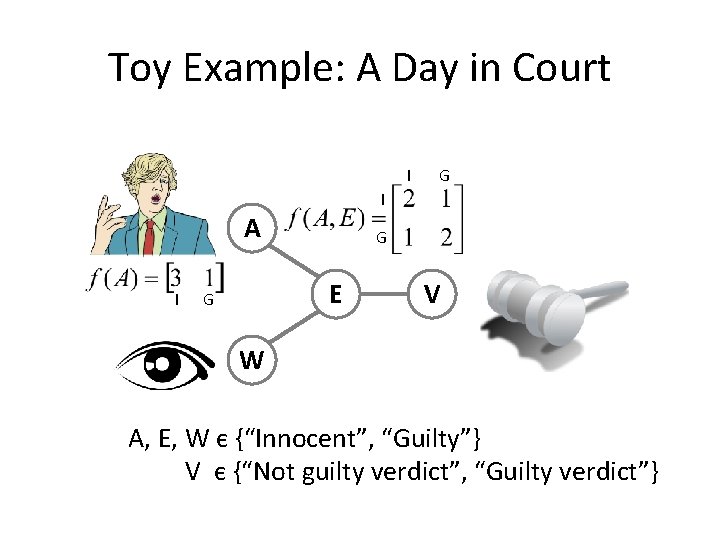

Toy Example: A Day in Court

[Figure: graph over nodes A, E, W, V, with factor tables indexed by I (innocent) and G (guilty).]

A, E, W ∈ {“Innocent”, “Guilty”}

V ∈ {“Not guilty verdict”, “Guilty verdict”}

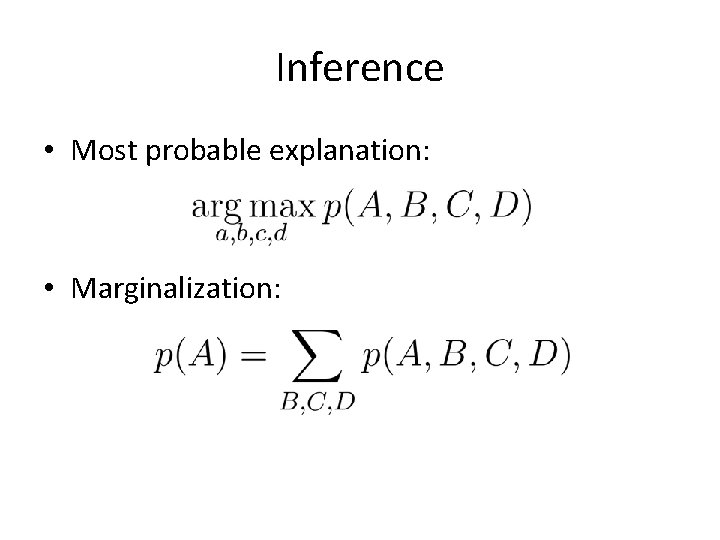

Inference

• Most probable explanation: $x^* = \arg\max_x p(x)$
• Marginalization: $p(x_i) = \sum_{x \setminus x_i} p(x)$
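As a brute-force illustration of these two inference tasks, the sketch below builds a small hypothetical joint distribution over three binary variables (the weights are invented for the example) and computes one marginal and the most probable joint assignment by enumeration.

```python
from itertools import product

# Hypothetical joint over three binary variables (x0, x1, x2); weights are illustrative.
weights = {xs: 1.0 + xs[0] + 2 * xs[1] * xs[2] for xs in product([0, 1], repeat=3)}
total = sum(weights.values())
p = {xs: w / total for xs, w in weights.items()}

def marginal(p, i):
    """Marginalization: sum the joint over every variable except variable i."""
    out = [0.0, 0.0]
    for xs, prob in p.items():
        out[xs[i]] += prob
    return out

def most_probable_explanation(p):
    """Most probable explanation: the joint assignment with the highest probability."""
    return max(p, key=p.get)

print(marginal(p, 0))
print(most_probable_explanation(p))
```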

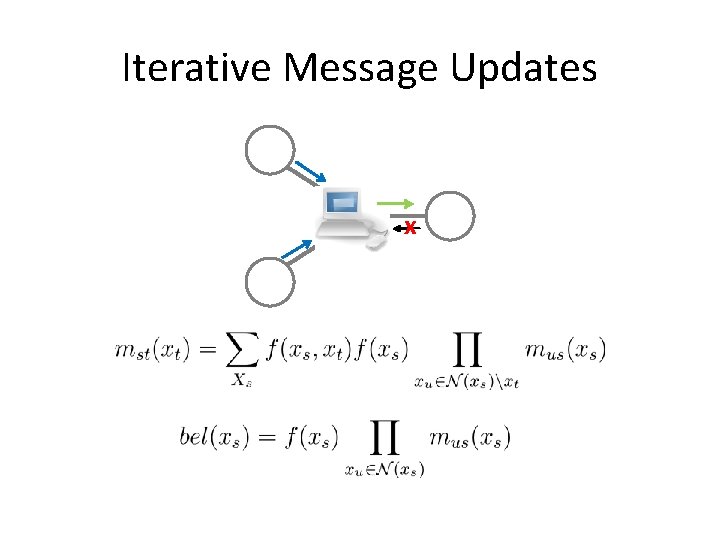

Iterative Message Updates

Belief Propagation

[Figure: the court graph with messages $m_{AE}(E)$ and $m_{WE}(E)$ sent into E, and $m_{EV}(V)$ sent from E to V.]
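A sketch of the three messages on the court example, assuming the tree structure implied by the message names (A and W connect to E, and E connects to V). Every factor value is hypothetical, since the slide's tables are not available in the text. The result is checked against brute-force enumeration.

```python
from itertools import product

# States: 0 = "Innocent" / "Not guilty verdict", 1 = "Guilty" / "Guilty verdict".
# All factor values are hypothetical stand-ins for the slide's tables.
f_A = [0.5, 0.5]
f_W = [0.3, 0.7]
f_AE = [[0.9, 0.1], [0.2, 0.8]]    # indexed f_AE[a][e]
f_WE = [[0.8, 0.2], [0.3, 0.7]]    # indexed f_WE[w][e]
f_EV = [[0.95, 0.05], [0.1, 0.9]]  # indexed f_EV[e][v]

def normalize(values):
    s = sum(values)
    return [x / s for x in values]

# Leaf-to-E messages: sum out the sender's variable.
m_AE = [sum(f_A[a] * f_AE[a][e] for a in (0, 1)) for e in (0, 1)]
m_WE = [sum(f_W[w] * f_WE[w][e] for w in (0, 1)) for e in (0, 1)]
# E multiplies its incoming messages, then sums itself out to send to V.
m_EV = [sum(m_AE[e] * m_WE[e] * f_EV[e][v] for e in (0, 1)) for v in (0, 1)]
belief_V = normalize(m_EV)

# Brute-force marginal of V for comparison.
brute_V = [0.0, 0.0]
for a, w, e, v in product((0, 1), repeat=4):
    brute_V[v] += f_A[a] * f_W[w] * f_AE[a][e] * f_WE[w][e] * f_EV[e][v]
brute_V = normalize(brute_V)

print(belief_V, brute_V)
```

On a tree such as this one, the message-passing answer matches enumeration exactly.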

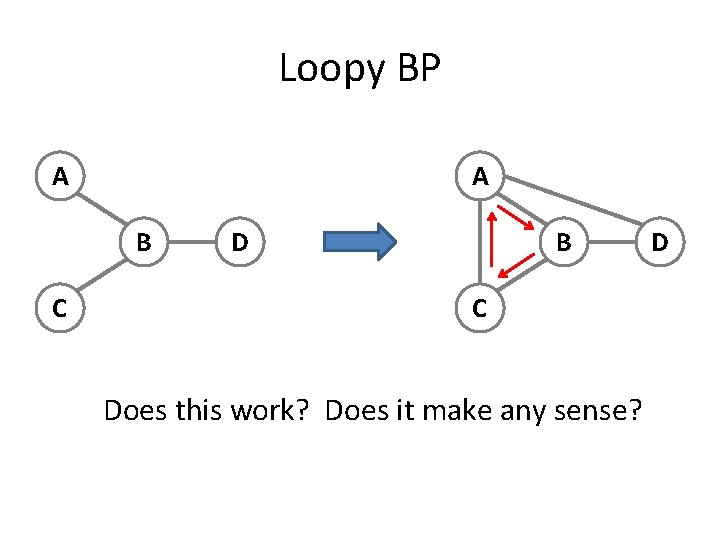

Loopy BP

[Figure: a graph containing a cycle, over nodes A, B, C, D.]

Does this work? Does it make any sense?
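One way to probe these questions empirically is to run BP on a small graph with a cycle and compare against exact marginals. The sketch below assumes a hypothetical 4-cycle A-B-C-D-A with invented factor values and a fixed number of parallel message sweeps; it is not the graph from the slide.

```python
from itertools import product

# Hypothetical 4-cycle over binary variables; all factor values are illustrative.
nodes = ["A", "B", "C", "D"]
edges = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "A")]
unary = {"A": [1.0, 2.0], "B": [1.0, 1.0], "C": [1.0, 1.0], "D": [1.5, 1.0]}
coupling = [[2.0, 1.0], [1.0, 2.0]]  # same symmetric table on every edge

neighbors = {n: [] for n in nodes}
for u, v in edges:
    neighbors[u].append(v)
    neighbors[v].append(u)

def normalize(values):
    s = sum(values)
    return [x / s for x in values]

def run_loopy_bp(n_sweeps=50):
    """Parallel sum-product updates; messages[(u, v)] is a function of v's state."""
    messages = {(u, v): [0.5, 0.5] for u in nodes for v in neighbors[u]}
    for _ in range(n_sweeps):
        new = {}
        for (u, v) in messages:
            out = []
            for xv in (0, 1):
                total = 0.0
                for xu in (0, 1):
                    incoming = 1.0
                    for w in neighbors[u]:
                        if w != v:  # exclude the message that came from the recipient
                            incoming *= messages[(w, u)][xu]
                    total += unary[u][xu] * coupling[xu][xv] * incoming
                out.append(total)
            new[(u, v)] = normalize(out)
        messages = new
    return messages

def belief(n, messages):
    b = list(unary[n])
    for w in neighbors[n]:
        b = [b[x] * messages[(w, n)][x] for x in (0, 1)]
    return normalize(b)

def exact_marginal(n):
    idx = nodes.index(n)
    out = [0.0, 0.0]
    for xs in product((0, 1), repeat=len(nodes)):
        weight = 1.0
        for name, x in zip(nodes, xs):
            weight *= unary[name][x]
        for u, v in edges:
            weight *= coupling[xs[nodes.index(u)]][xs[nodes.index(v)]]
        out[xs[idx]] += weight
    return normalize(out)

messages = run_loopy_bp()
for n in nodes:
    print(n, belief(n, messages), exact_marginal(n))
```

With couplings this weak the beliefs should land close to the exact marginals, but unlike the tree case they are approximations, not guaranteed matches.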

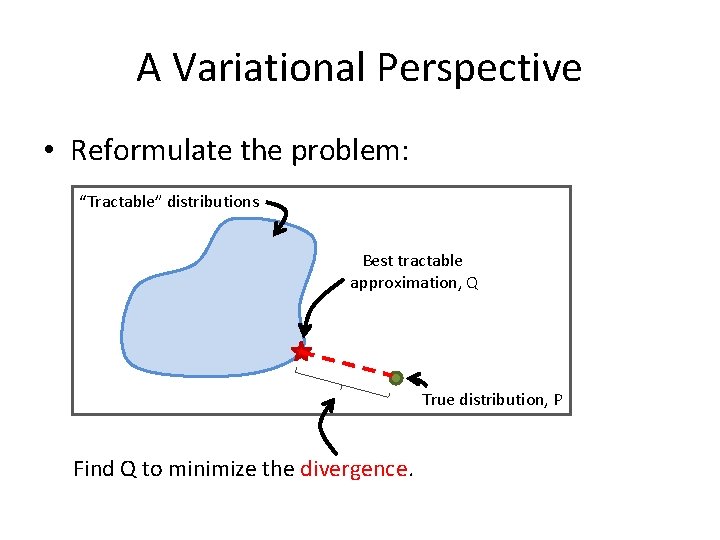

A Variational Perspective

• Reformulate the problem: find Q to minimize the divergence.

[Figure: the set of “tractable” distributions, showing the true distribution, P, and the best tractable approximation, Q.]

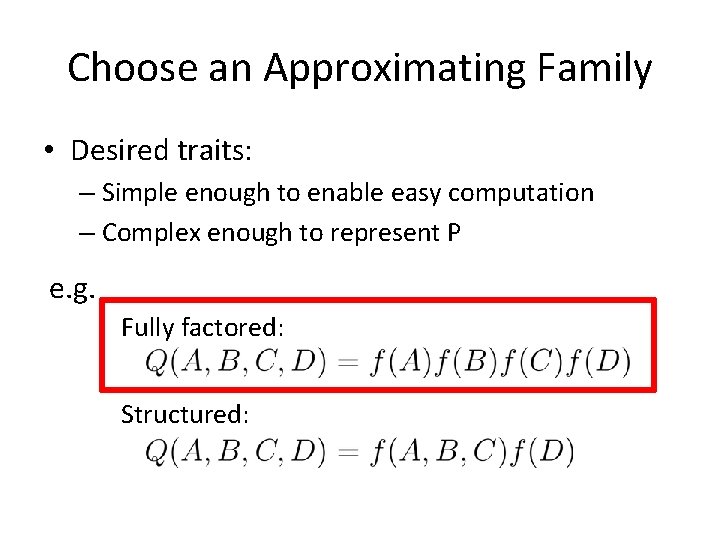

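Choose an Approximating Family

• Desired traits:
  – Simple enough to enable easy computation
  – Complex enough to represent P

e.g. fully factored, $Q(X_1, \dots, X_n) = f(X_1)\, f(X_2) \cdots f(X_n)$, or structured, where Q retains some dependencies between variables.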

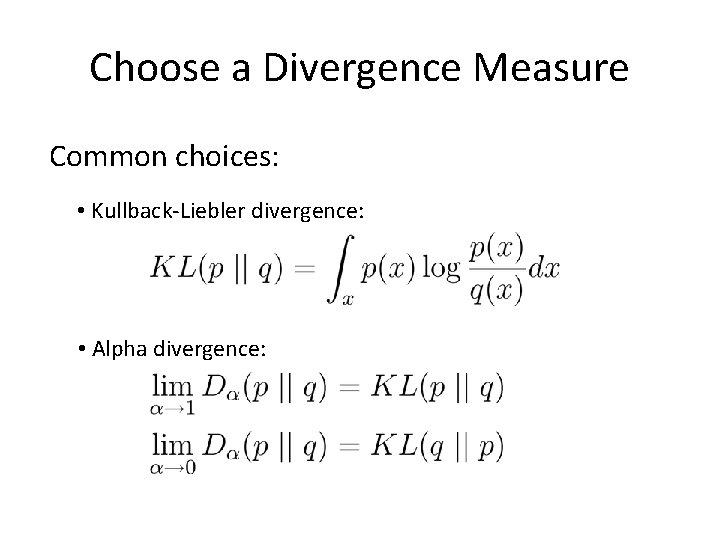

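Choose a Divergence Measure

Common choices:

• Kullback-Leibler divergence: $KL(p \,\|\, q) = \int p(x) \log \frac{p(x)}{q(x)}\, dx$
• Alpha divergence: $D_\alpha(p \,\|\, q) = \frac{1}{\alpha(1-\alpha)} \int \left[ \alpha\, p(x) + (1-\alpha)\, q(x) - p(x)^{\alpha}\, q(x)^{1-\alpha} \right] dx$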
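A small numerical sketch of the two divergences for discrete distributions. It assumes the alpha-divergence definition used in Minka's technical report, D_α(p‖q) = Σ [α p + (1−α) q − p^α q^(1−α)] / (α(1−α)), under which α → 1 recovers KL(p‖q) and α → 0 recovers KL(q‖p). The distributions `p` and `q` are arbitrary examples.

```python
import math

def kl(p, q):
    """Kullback-Leibler divergence KL(p || q) for discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def alpha_divergence(p, q, alpha):
    """Alpha divergence (Minka's convention, assumed here); undefined at alpha = 0 or 1."""
    if alpha in (0.0, 1.0):
        raise ValueError("use the KL limits at alpha = 0 or alpha = 1")
    total = sum(
        alpha * pi + (1 - alpha) * qi - (pi ** alpha) * (qi ** (1 - alpha))
        for pi, qi in zip(p, q)
    )
    return total / (alpha * (1 - alpha))

p = [0.7, 0.2, 0.1]  # arbitrary example distributions
q = [0.5, 0.3, 0.2]

print("alpha near 1:", alpha_divergence(p, q, 1 - 1e-6), "KL(p||q):", kl(p, q))
print("alpha near 0:", alpha_divergence(p, q, 1e-6), "KL(q||p):", kl(q, p))
print("alpha = 0.5 :", alpha_divergence(p, q, 0.5))
```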

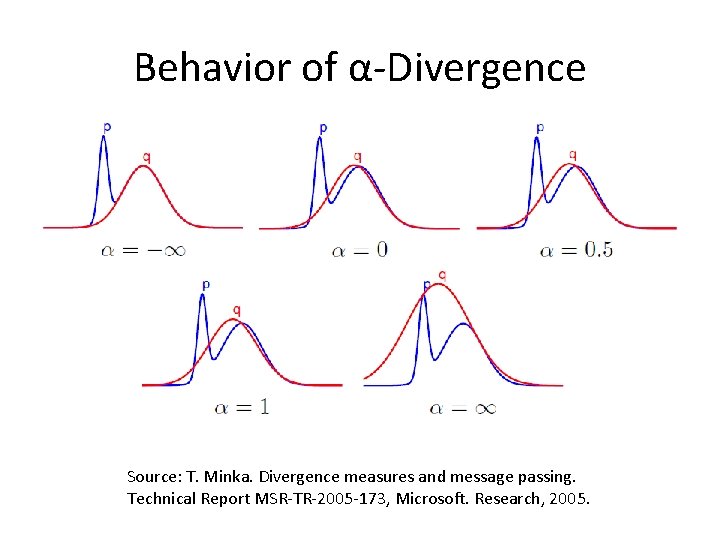

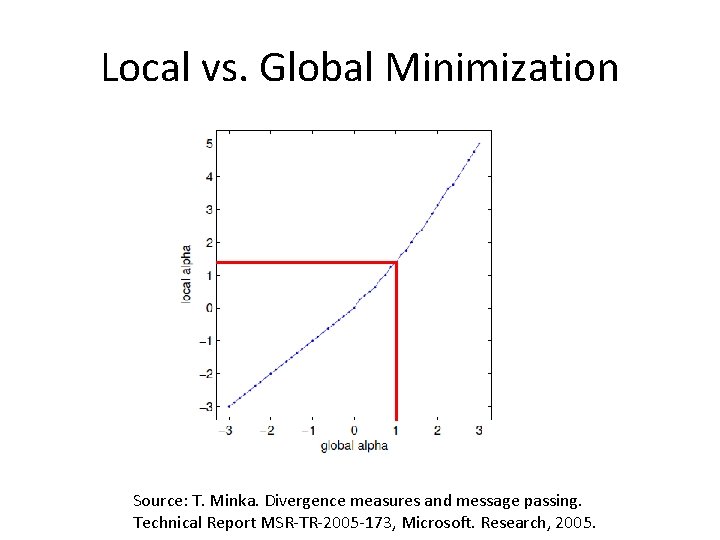

Behavior of α-Divergence

Source: T. Minka. Divergence measures and message passing. Technical Report MSR-TR-2005-173, Microsoft Research, 2005.

Resulting Algorithms

Assuming a fully-factored form of Q, $Q(X_1, X_2, \dots, X_n) = f(X_1)\, f(X_2) \cdots f(X_n)$, we get…*

• Mean field, α = 0
• Belief propagation, α = 1
• Tree-reweighted BP, α ≥ 1

* By minimizing “local divergence”.

Local vs. Global Minimization

Source: T. Minka. Divergence measures and message passing. Technical Report MSR-TR-2005-173, Microsoft Research, 2005.

Applications

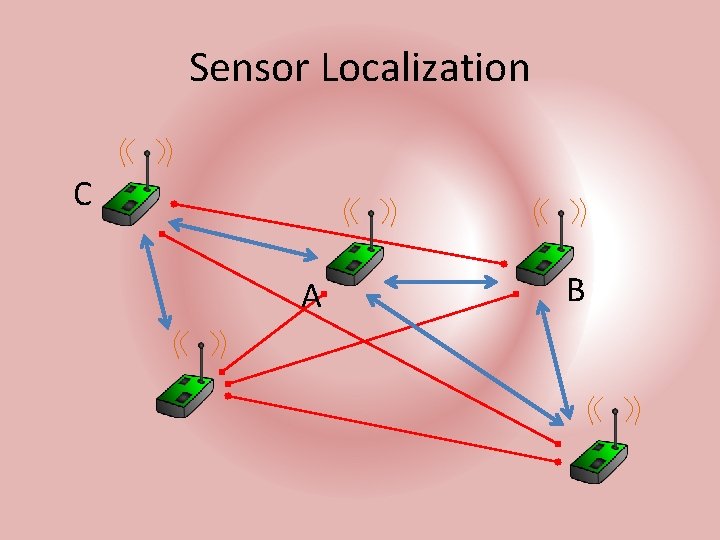

Sensor Localization

[Figure: sensor network with nodes A, B, C.]

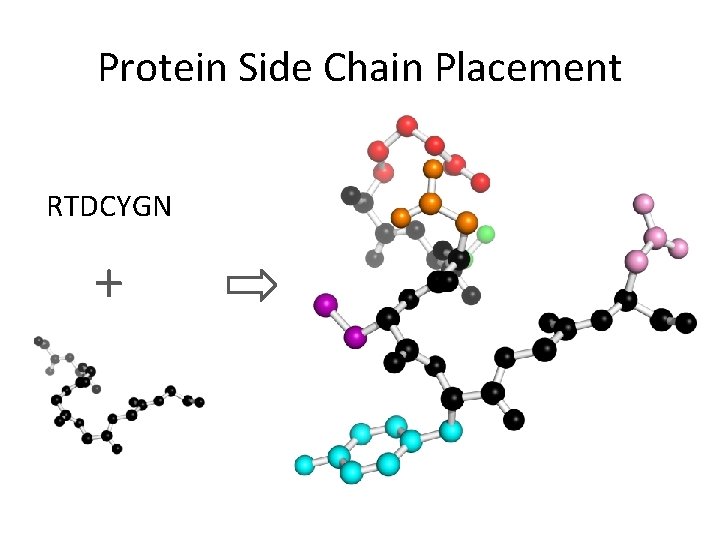

Protein Side Chain Placement

[Figure: the sequence RTDCYGN.]

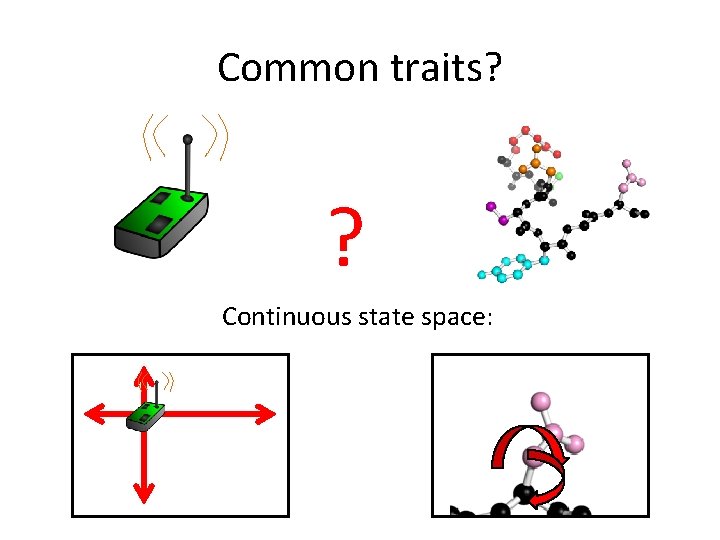

Common traits?

Continuous state space.

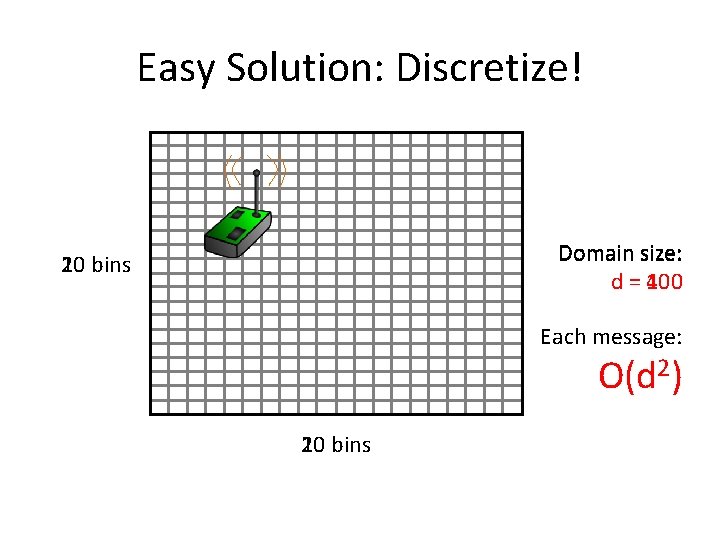

Easy Solution: Discretize!

[Figure: a 2-D domain discretized into 10 × 10 bins and into 20 × 20 bins.]

• Domain size: d = 100 for 10 × 10 bins, d = 400 for 20 × 20 bins
• Each message: $O(d^2)$
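The arithmetic behind the discretization cost can be sketched as follows, assuming a 2-D domain with the same number of bins on each axis. The function names are hypothetical; `discrete_message` shows where the quadratic cost comes from: every one of the receiver's d states sums over all d sender states.

```python
def discretization_cost(bins_per_axis, n_dims=2):
    """Return (d, d*d): the domain size and the number of pairwise table entries."""
    d = bins_per_axis ** n_dims
    return d, d * d

def discrete_message(pair_table, sender_weights):
    """One discrete message: for each receiver state j, sum over all sender states i."""
    d = len(sender_weights)
    return [sum(pair_table[i][j] * sender_weights[i] for i in range(d)) for j in range(d)]

for bins in (10, 20):
    d, entries = discretization_cost(bins)
    print(f"{bins} bins per axis -> d = {d}, work per message = {entries}")
```

Doubling the resolution per axis multiplies d by 4 and the per-message work by 16, which is the motivation for a particle-based alternative.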

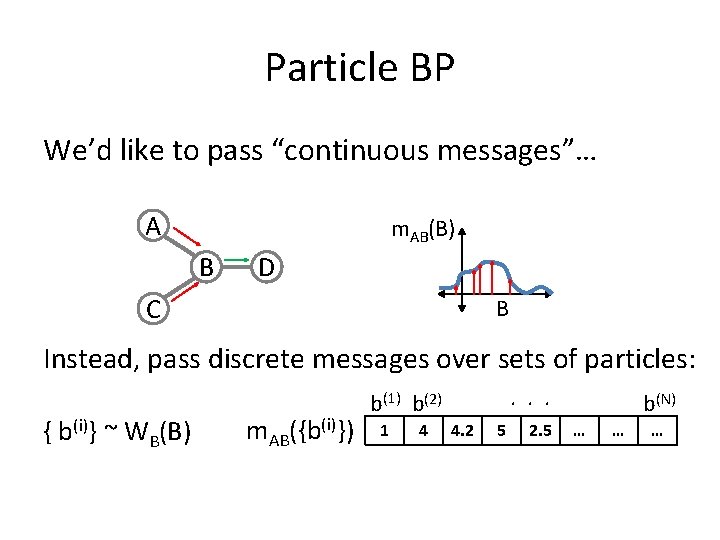

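Particle BP

We’d like to pass “continuous messages”, such as $m_{AB}(B)$…

[Figure: graph over nodes A, B, C, D with the message $m_{AB}(B)$ sent from A to B.]

Instead, pass discrete messages over sets of particles:

$$\{b^{(i)}\} \sim W_B(B), \qquad m_{AB}(\{b^{(i)}\})$$

[Figure: table of message values at the particles $b^{(1)}, b^{(2)}, \dots, b^{(N)}$.]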

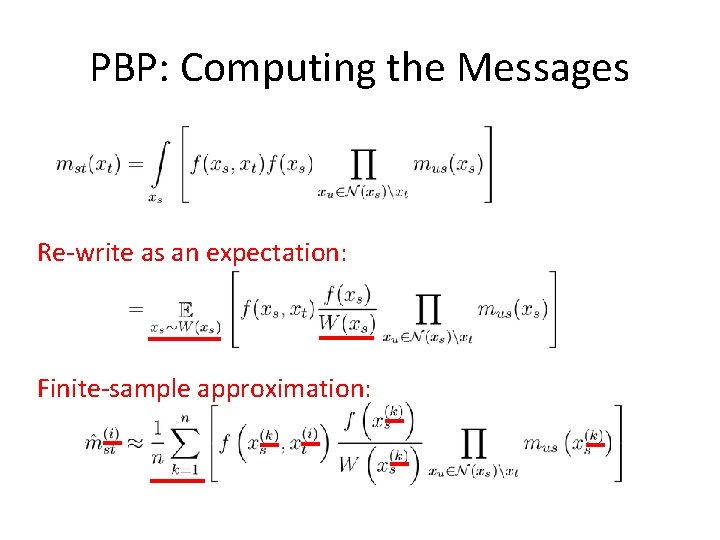

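PBP: Computing the Messages

• Re-write the message integral as an expectation under the proposal distribution.
• Replace the expectation with a finite-sample approximation over the particles.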
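A sketch of this importance-sampling step under stated assumptions: the message is taken to have the form m(x_s) = ∫ f(x_s, x_t) g(x_t) dx_t, where g stands in for the sender's local factor times its other incoming messages. Rewriting it as E_W[f(x_s, x_t) g(x_t) / W(x_t)] and averaging over particles x_t ~ W gives the estimate. The Gaussian choices for `pair_factor`, `local_term`, and the proposal are hypothetical, picked so that a closed-form answer exists for comparison.

```python
import math
import random

PROPOSAL_STD = 2.0  # hypothetical proposal W = Normal(0, 2^2)

def pair_factor(xs, xt):
    """Hypothetical pairwise factor f(x_s, x_t)."""
    return math.exp(-0.5 * (xs - xt) ** 2)

def local_term(xt):
    """Stand-in for the sender's local factor times incoming messages: a standard normal density."""
    return math.exp(-0.5 * xt * xt) / math.sqrt(2 * math.pi)

def proposal_pdf(xt):
    z = xt / PROPOSAL_STD
    return math.exp(-0.5 * z * z) / (PROPOSAL_STD * math.sqrt(2 * math.pi))

def draw_particles(n, rng):
    return [rng.gauss(0.0, PROPOSAL_STD) for _ in range(n)]

def message_estimate(xs, particles):
    """Finite-sample approximation: average of f * g / W over the particles."""
    total = sum(pair_factor(xs, xt) * local_term(xt) / proposal_pdf(xt) for xt in particles)
    return total / len(particles)

def message_exact(xs):
    """Closed form for these particular Gaussian choices."""
    return math.exp(-xs * xs / 4.0) / math.sqrt(2.0)

rng = random.Random(0)
particles = draw_particles(20000, rng)
estimate = message_estimate(0.5, particles)
exact = message_exact(0.5)
print(estimate, exact)
```

The estimate's variance depends on how well the proposal covers the integrand, which is why the choice of proposal matters.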

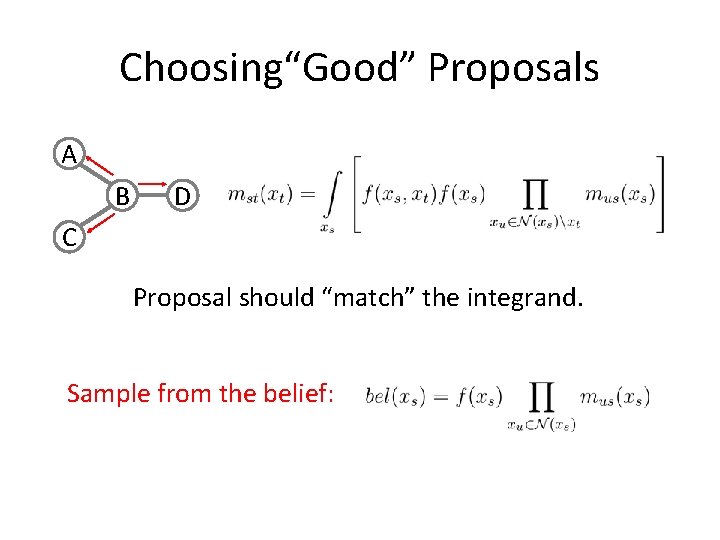

Choosing “Good” Proposals

[Figure: graph over nodes A, B, C, D.]

The proposal should “match” the integrand. Sample from the belief.

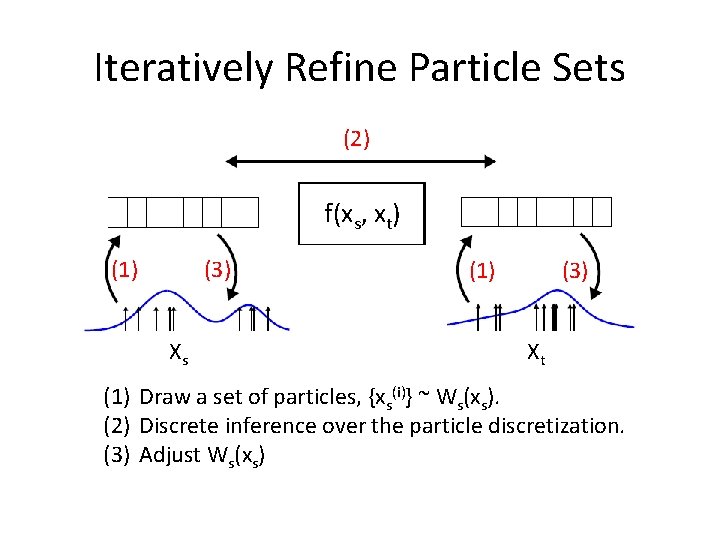

Iteratively Refine Particle Sets

[Figure: nodes $X_s$ and $X_t$ joined by the factor $f(x_s, x_t)$, annotated with steps (1), (2), and (3).]

(1) Draw a set of particles, $\{x_s^{(i)}\} \sim W_s(x_s)$.
(2) Run discrete inference over the particle discretization.
(3) Adjust $W_s(x_s)$.
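The three steps can be sketched on a hypothetical two-node model with Gaussian potentials, chosen so the exact marginal means (−1/3 and +1/3) are known. Two simplifications are assumptions of this sketch and not the method from the slides: the proposals are restricted to Gaussians, and step (3) moment-matches a slightly inflated Gaussian to the weighted particles as a stand-in for sampling from the belief.

```python
import math
import random

def gauss_pdf(x, mu, sd):
    z = (x - mu) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2 * math.pi))

# Hypothetical two-node model: unary potentials and a symmetric pairwise factor.
def psi_s(x):
    return math.exp(-0.5 * (x + 1.0) ** 2)

def psi_t(x):
    return math.exp(-0.5 * (x - 1.0) ** 2)

def f(xs, xt):
    return math.exp(-0.5 * (xs - xt) ** 2)

def weighted_beliefs(own, own_psi, own_prop, other, other_psi, other_prop):
    """Step (2): discrete BP over the particles; returns normalized belief weights for `own`."""
    w_other = [other_psi(y) / gauss_pdf(y, *other_prop) for y in other]
    beliefs = []
    for x in own:
        # Importance-weighted message from the neighbor, evaluated at particle x.
        msg = sum(f(x, y) * w for y, w in zip(other, w_other)) / len(other)
        beliefs.append(own_psi(x) / gauss_pdf(x, *own_prop) * msg)
    total = sum(beliefs)
    return [b / total for b in beliefs]

def weighted_mean(particles, weights):
    return sum(w * x for x, w in zip(particles, weights))

def fit_proposal(particles, weights, inflate=1.5):
    """Step (3): moment-match a Gaussian to the weighted particles (a simplification)."""
    mu = weighted_mean(particles, weights)
    var = sum(w * (x - mu) ** 2 for x, w in zip(particles, weights))
    return mu, max(inflate * math.sqrt(var), 1e-3)

def run_pbp(n_particles=500, n_iters=4, seed=0):
    rng = random.Random(seed)
    prop_s, prop_t = (0.0, 3.0), (0.0, 3.0)  # broad initial proposals (mean, std)
    mean_s = mean_t = None
    for _ in range(n_iters):
        xs = [rng.gauss(*prop_s) for _ in range(n_particles)]  # step (1)
        xt = [rng.gauss(*prop_t) for _ in range(n_particles)]
        b_s = weighted_beliefs(xs, psi_s, prop_s, xt, psi_t, prop_t)  # step (2)
        b_t = weighted_beliefs(xt, psi_t, prop_t, xs, psi_s, prop_s)
        mean_s, mean_t = weighted_mean(xs, b_s), weighted_mean(xt, b_t)
        prop_s, prop_t = fit_proposal(xs, b_s), fit_proposal(xt, b_t)  # step (3)
    return mean_s, mean_t

mean_s, mean_t = run_pbp()
print(mean_s, mean_t)
```

Because the model has only two nodes, a single message in each direction is exact for the particle discretization; a larger graph would iterate the embedded discrete algorithm in step (2).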

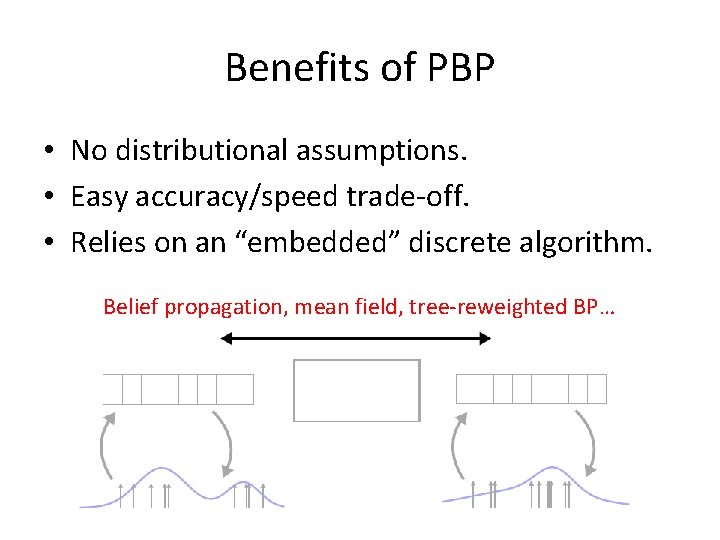

Benefits of PBP

• No distributional assumptions.
• Easy accuracy/speed trade-off.
• Relies on an “embedded” discrete algorithm: belief propagation, mean field, tree-reweighted BP…

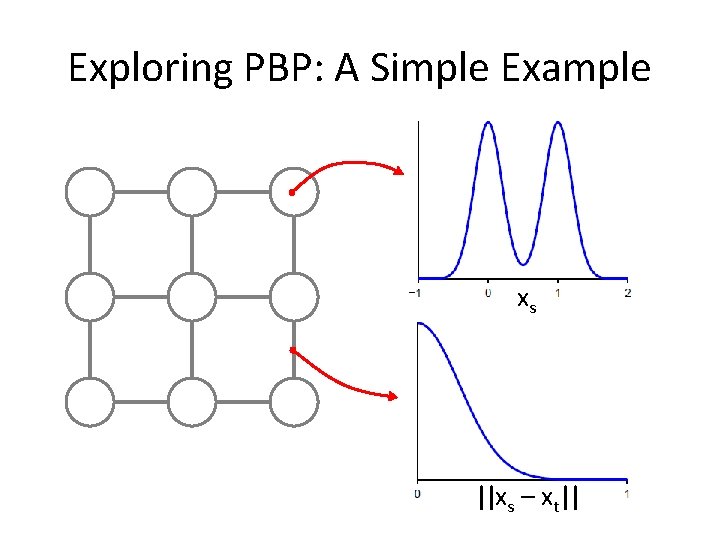

Exploring PBP: A Simple Example

[Figure: plots with axes $x_s$ and $\|x_s - x_t\|$.]

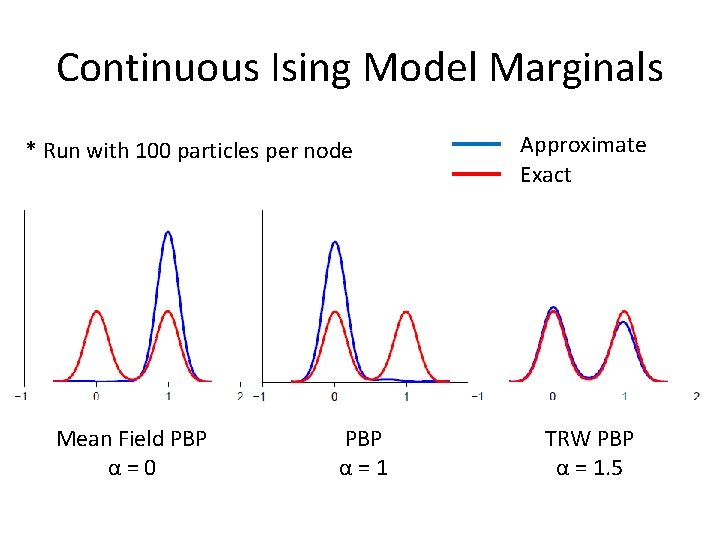

Continuous Ising Model Marginals

[Figure: approximate vs. exact marginals in three panels: Mean Field PBP (α = 0), PBP (α = 1), and TRW PBP (α = 1.5).]

* Run with 100 particles per node

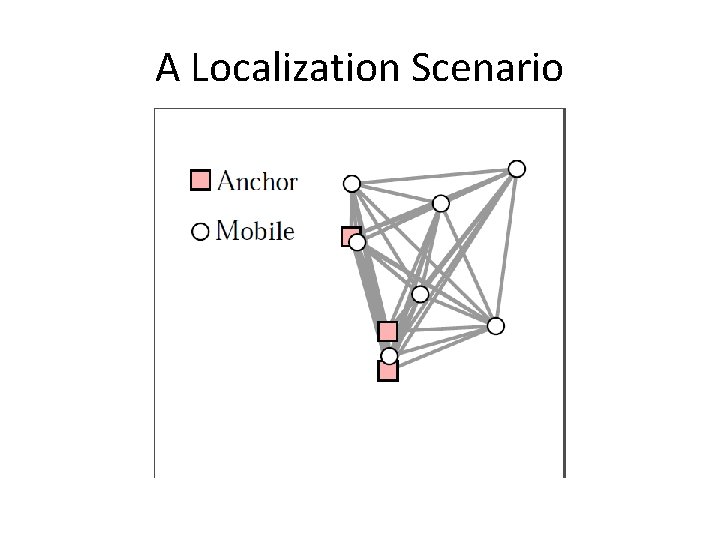

A Localization Scenario

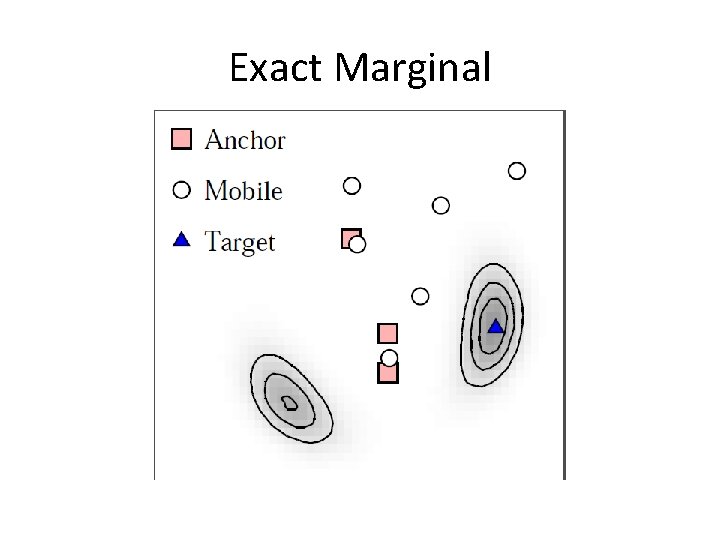

Exact Marginal

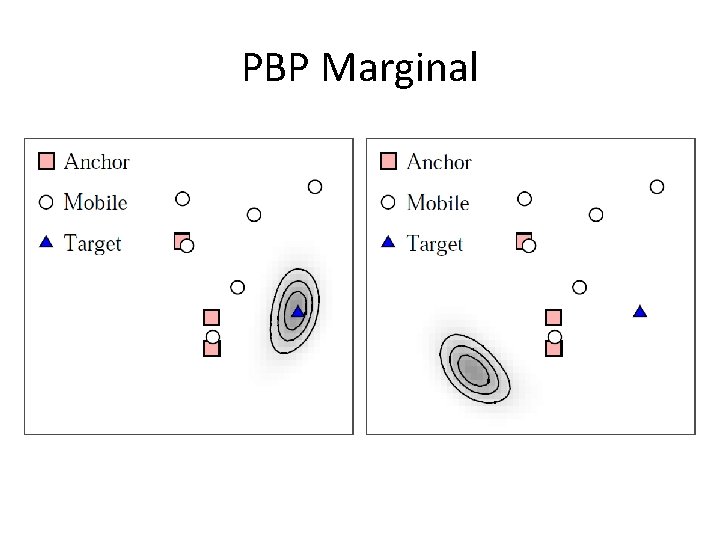

PBP Marginal

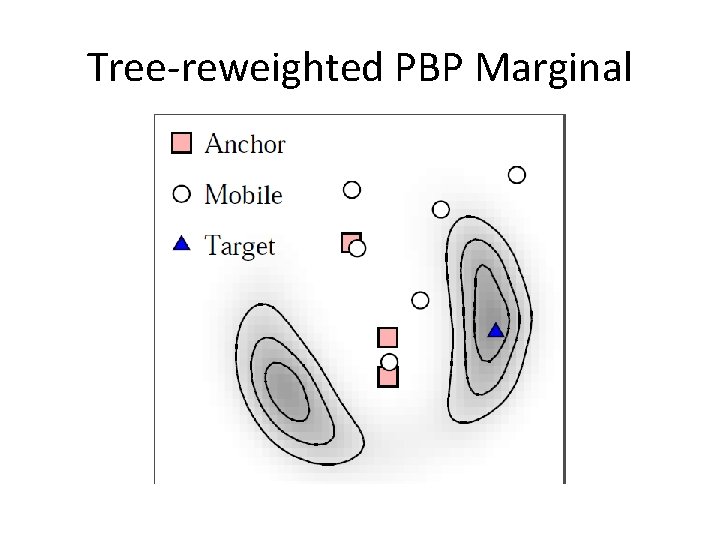

Tree-reweighted PBP Marginal

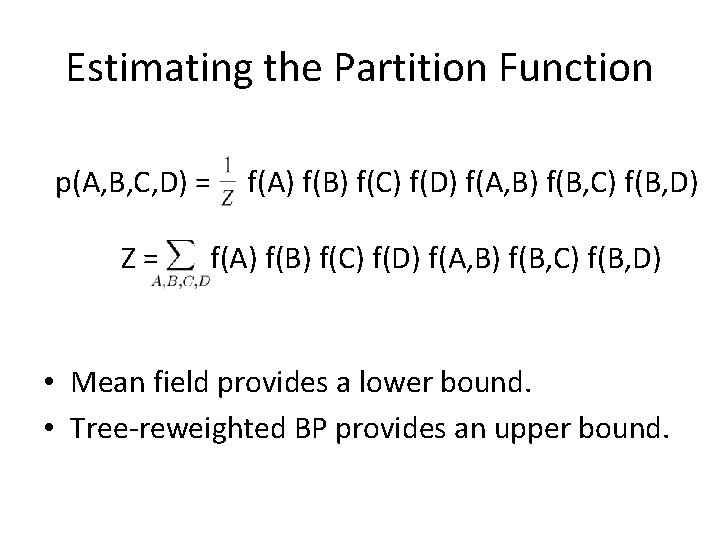

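Estimating the Partition Function

$$p(A, B, C, D) = \frac{1}{Z}\, f(A)\, f(B)\, f(C)\, f(D)\, f(A, B)\, f(B, C)\, f(B, D)$$

$$Z = \sum_{A, B, C, D} f(A)\, f(B)\, f(C)\, f(D)\, f(A, B)\, f(B, C)\, f(B, D)$$

(For continuous variables the sum becomes an integral.)

• Mean field provides a lower bound.
• Tree-reweighted BP provides an upper bound.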
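The lower-bound claim can be checked numerically on a tiny discrete model. The sketch below assumes binary variables and invented factor tables, computes log Z by enumeration, runs naive mean-field coordinate ascent, and evaluates the mean-field objective E_q[log of the factor product] + H(q), which never exceeds log Z. The tree-reweighted upper bound is not implemented here.

```python
import math
from itertools import product

# Hypothetical factor tables over binary variables (values are illustrative only).
names = ["A", "B", "C", "D"]
unary = {"A": [1.0, 2.0], "B": [1.0, 1.0], "C": [3.0, 1.0], "D": [1.0, 1.5]}
pair = {
    ("A", "B"): [[2.0, 1.0], [1.0, 2.0]],
    ("B", "C"): [[1.0, 3.0], [3.0, 1.0]],
    ("B", "D"): [[2.0, 1.0], [1.0, 1.0]],
}

def weight(assign):
    value = 1.0
    for v, table in unary.items():
        value *= table[assign[v]]
    for (u, v), table in pair.items():
        value *= table[assign[u]][assign[v]]
    return value

# Exact log partition function by enumeration.
log_Z = math.log(sum(weight(dict(zip(names, xs))) for xs in product((0, 1), repeat=4)))

def mean_field(n_sweeps=100):
    """Naive mean field: coordinate ascent on a fully factored q."""
    q = {n: [0.5, 0.5] for n in names}
    for _ in range(n_sweeps):
        for n in names:
            logits = []
            for x in (0, 1):
                val = math.log(unary[n][x])
                for (u, v), table in pair.items():
                    if u == n:
                        val += sum(q[v][y] * math.log(table[x][y]) for y in (0, 1))
                    elif v == n:
                        val += sum(q[u][y] * math.log(table[y][x]) for y in (0, 1))
                logits.append(val)
            m = max(logits)
            exps = [math.exp(l - m) for l in logits]
            q[n] = [e / sum(exps) for e in exps]
    return q

def lower_bound(q):
    """Expected log factor product under q, plus the entropy of q."""
    val = 0.0
    for n in names:
        val += sum(q[n][x] * math.log(unary[n][x]) for x in (0, 1))
        val -= sum(qx * math.log(qx) for qx in q[n] if qx > 0)
    for (u, v), table in pair.items():
        val += sum(q[u][x] * q[v][y] * math.log(table[x][y]) for x in (0, 1) for y in (0, 1))
    return val

q = mean_field()
bound = lower_bound(q)
print("log Z =", log_Z, "mean-field lower bound =", bound)
```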

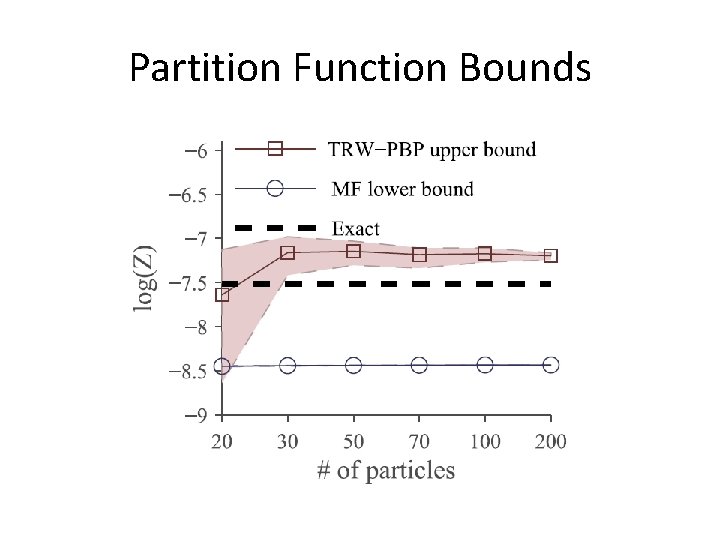

Partition Function Bounds

Conclusions

• BP and related algorithms are useful!
• Particle BP lets you handle continuous RVs.
• Extensions to BP can work with PBP, too.

Thank You!
