Bayesian Networks What is the likelihood of X

Bayesian Networks What is the likelihood of X given evidence E? i. e. P(X|E) = ?

Issues • Representational Power – allows for unknown, uncertain information • Inference – Question: What is Probability of X if E is true. – Processing: in general, exponential • Acquisition or Learning – network: human input – probabilities: data+ learning

Bayesian Network • • Directed Acyclic Graph Nodes are RV’s Edges denote dependencies Root nodes = nodes without predecessors – prior probability table • Non-root nodes – conditional probabilites for all predecessors

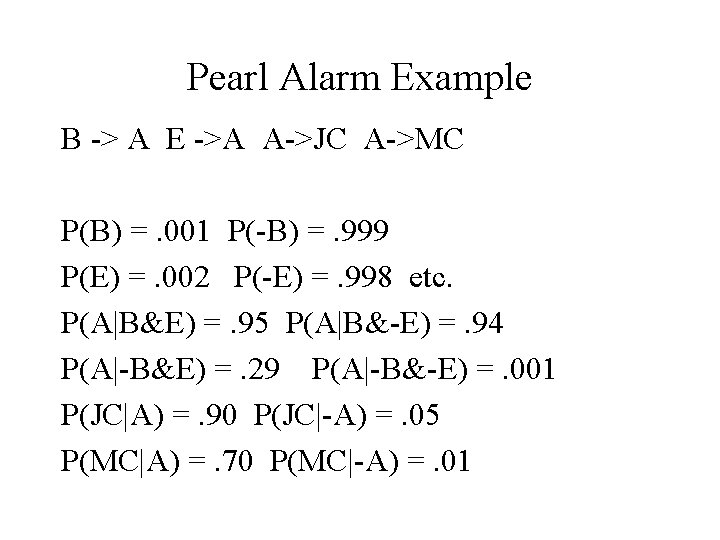

Pearl Alarm Example B -> A E ->A A->JC A->MC P(B) =. 001 P(-B) =. 999 P(E) =. 002 P(-E) =. 998 etc. P(A|B&E) =. 95 P(A|B&-E) =. 94 P(A|-B&E) =. 29 P(A|-B&-E) =. 001 P(JC|A) =. 90 P(JC|-A) =. 05 P(MC|A) =. 70 P(MC|-A) =. 01

Joint Probability yields all • Event = fully specified values for RVs. • Prob of event: P(x 1, x 2, . . xn) = P(x 1|Parents(X 1)*. . P(xn|Parents(Xn)) • E. g. P(j&m&a&-b&-e) = P(j|a)*P(m|a)*P(a|-b^-e)*P(-b)*P(-e) =. 9*. 7*. 001*. 999*. . 998 =. 00062. • Do this for all events and then sum as needed. • Yield exact probability

Summing out • P(b) from full joint = sum over all events with b = true (marginalization) – Silly: must be. 001. • P(MC|JC) = linked by alarm, sum still too much but not so apparent • Need to Answer: what RVs are independent of others depending on the evidence. • We’re skipping: Market Blankets

Probability Calculation Cost • For example, P( 5 boolean variables) requires 2^5 entries. In general 2^n. • For Bayes net, only need tables for all conditional probabilities and priors. • If max k inputs to a node, and n RVs, then need n*2^k table entries. • Computation can be reduced, but difficult.

Approximate Inference • Simple Sampling • Use Bayes. Network as a generative model • Eg. generate 10000 examples, via topological order. • Generates examples with appropriate distribution. • Now use examples to estimate probabilities.

Sampling -> probabilities • • • Generate examples with proper probability density. Use the ordering of the nodes to construct events. Finally use counting to yield an estimate of the exact probability.

Estimate P(JC|MC) 1. Do a large number of times 1. Use prior tables to compute “root events” 2. Say b = f and e = g 3. Use conditional tables to compute internal nodes values (IN ORDER) 4. Say a = false 5. Say JC = false and MC = true 2. Count the number of appropriate events 3. Note many entries irrelevant: In this case only if MC = true is event considered. Markov Chain Monte Carlo will only construct appropriate events.

Confidence of Estimate • Given n examples and k are heads. • How many examples needed to be 99% certain that k/n is within. 01 of the true p. • From statistic: Mean = np, Variance = npq • For confidence of. 99, t = 3. 25 (table) • 3. 25*sqrt(pq/N) <. 01 => N >6, 400. • Correct probabilities not needed: just correct ordering.

Applications • Bayesian Networks extended to decision theory. – decision nodes have actions attached – value nodes indicate expect utility • Pathfinder (heckerman): medical diagnosis – adds utility theory (decision theory) – some actions specific tests – 60 disease, 130 features • Research Arena

- Slides: 12