Bayesian Model Selection in Factorial Designs

- Seminal work is by Box and Meyer.
- Intuitive formulation and analytical approach, but the devil is in the details!
- We look at the simplifying assumptions as we step through Box and Meyer's approach.
- One of the hottest areas in statistics for several years.

Model Domain
- A $2^{k-p}$ (fractional) factorial design has $n = 2^{k-p}$ runs and therefore $n - 1$ estimable effects, so there are up to $2^{n-1}$ candidate models, denoted as a set $\{M_l\}$.
- To simplify later calculations, we usually assume that the only active effects are main effects, two-way effects, or three-way effects.
  - This assumption is already in place for low-resolution fractional factorials.

Active Effects in Models
- Each $M_l$ denotes a set of active effects (both main effects and interactions) in a hierarchical model.
- We will use $X_{ik} = 1$ for the high level of effect $k$ and $X_{ik} = -1$ for the low level of effect $k$.
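The -1/+1 coding can be made concrete with a small sketch. The helper name `coded_design` and the `max_order` cutoff are illustrative assumptions, not part of the original slides; the code builds the main-effect and interaction columns of a full $2^k$ factorial, where each interaction column is the product of its parent main-effect columns.

```python
from itertools import combinations, product

def coded_design(factors, max_order=2):
    """Return (effect_names, rows) for a full 2^k factorial in -1/+1 coding.

    Hypothetical helper for illustration: columns are main effects and
    interactions up to `max_order` factors.
    """
    runs = list(product([-1, 1], repeat=len(factors)))
    # Each effect is a tuple of factor indices, e.g. (0, 1) for AB.
    effects = [c for order in range(1, max_order + 1)
               for c in combinations(range(len(factors)), order)]
    names = ["".join(factors[j] for j in e) for e in effects]
    rows = []
    for run in runs:
        row = []
        for e in effects:
            value = 1
            for j in e:          # interaction level = product of factor levels
                value *= run[j]
            row.append(value)
        rows.append(row)
    return names, rows

names, X = coded_design(["A", "B", "C", "D"], max_order=2)
print(names)   # effect labels in column order
print(X[0])    # coded levels for the first run
```

With four factors and `max_order=2` this gives 16 runs and 10 mutually orthogonal effect columns (4 main effects and 6 two-way interactions).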

Normal Linear Model
- We will assume that, given model $M$, the response variables follow a linear model with normal errors: $Y_i \sim N(X_i'\beta, \sigma^2)$.
- $X_i$ and $\beta$ are model-specific, but we will use a saturated model in what follows.

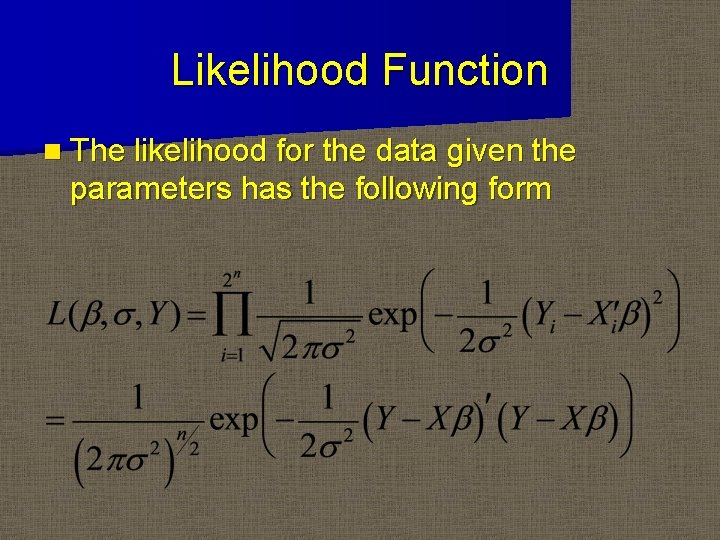

Likelihood Function
- The likelihood for the data given the parameters has the following form:
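For the normal linear model with $n$ independent observations, the likelihood takes the standard Gaussian form. It is written here with $\beta$ for the regression coefficients and $\sigma$ for the error standard deviation; the $\pi$ in this expression is the numerical constant, not a prior probability.

```latex
L(\beta, \sigma \mid y)
  = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi\sigma^2}}
    \exp\left\{ -\frac{(y_i - X_i'\beta)^2}{2\sigma^2} \right\}
  = (2\pi\sigma^2)^{-n/2}
    \exp\left\{ -\frac{1}{2\sigma^2} \sum_{i=1}^{n} (y_i - X_i'\beta)^2 \right\}
```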

Bayesian Paradigm
- Unlike in classical inference, we assume the parameters, $\Theta$, are random variables with a prior distribution, $f_\Theta(\theta)$, rather than fixed unknown constants.
- In classical inference, we estimate $\theta$ by maximizing the likelihood $L(\theta \mid y)$.

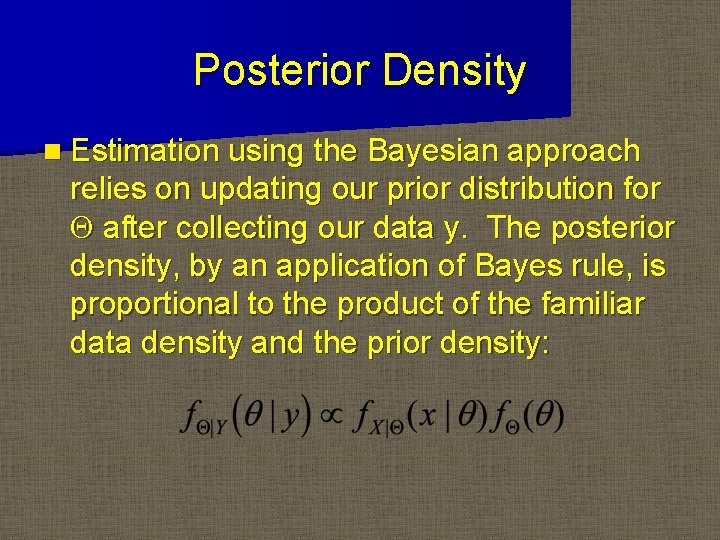

Posterior Density
- Estimation using the Bayesian approach relies on updating our prior distribution for $\Theta$ after collecting our data $y$. The posterior density, by an application of Bayes' rule, is proportional to the product of the familiar data density and the prior density:

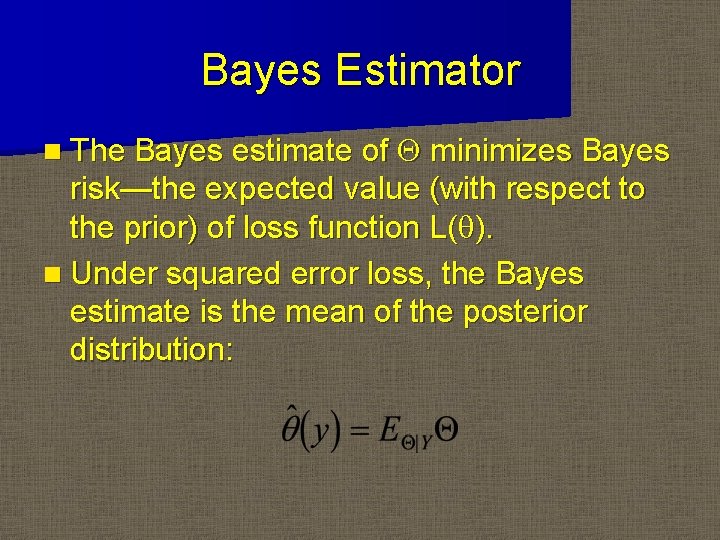
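Written out, and assuming the notation $f(y \mid \theta)$ for the data density (a label the text does not fix), the proportionality reads as follows. The denominator is the normalizing constant, which does not depend on $\theta$.

```latex
f(\theta \mid y)
  = \frac{f(y \mid \theta)\, f_\Theta(\theta)}
         {\int f(y \mid t)\, f_\Theta(t)\, dt}
  \;\propto\; f(y \mid \theta)\, f_\Theta(\theta)
```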

Bayes Estimator
- The Bayes estimator of $\Theta$ minimizes the Bayes risk: the expected value (with respect to the prior) of the loss function $L(\theta)$.
- Under squared error loss, the Bayes estimate is the mean of the posterior distribution:
- The Bayes estimate of

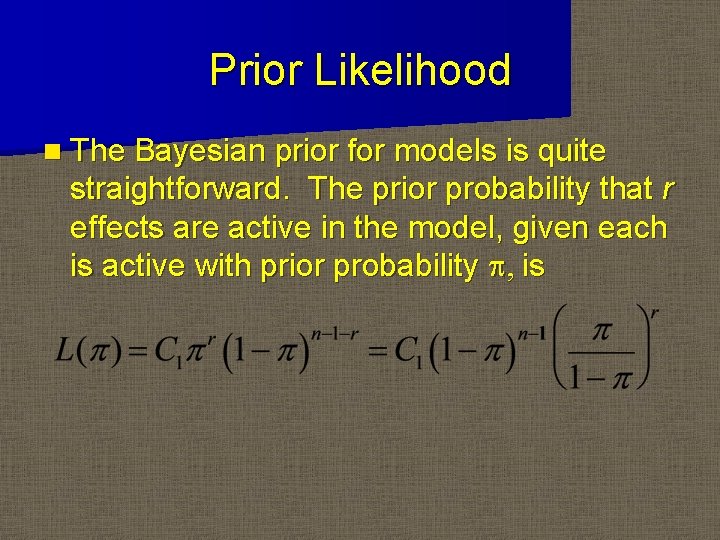
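A short sketch of why squared error loss leads to the posterior mean, using $a$ for a candidate estimate and $\hat{\theta}$ for the minimizer (both symbols are assumptions introduced here). The posterior expected loss splits into a variance term that does not depend on $a$ and a squared distance that vanishes at the posterior mean.

```latex
E\left[(\Theta - a)^2 \mid y\right]
  = \operatorname{Var}(\Theta \mid y) + \left(E[\Theta \mid y] - a\right)^2
\quad\Longrightarrow\quad
\hat{\theta} = E[\Theta \mid y] = \int \theta\, f(\theta \mid y)\, d\theta
```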

Prior Likelihood
- The Bayesian prior for models is quite straightforward. The prior probability that $r$ effects are active in the model, given each is active with prior probability $p$, is:

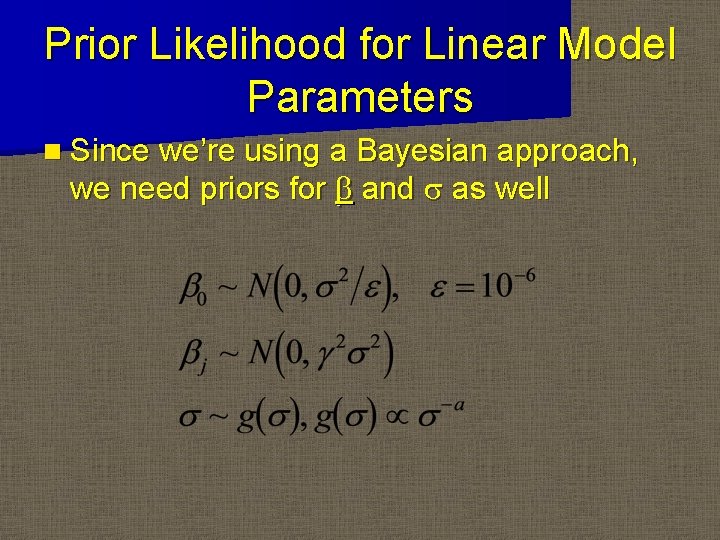
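A minimal sketch of this prior, assuming effects are active independently of one another. The function names and the values `m = 15` and `p = 0.25` are hypothetical choices for illustration. Two quantities are easy to confuse, so both are shown: the probability of one specific model with $r$ active effects, and the probability that exactly $r$ effects are active in any combination.

```python
from math import comb

def prior_model_probability(r, m, p):
    """Prior probability of ONE specific model with r of m candidate effects active,
    assuming each effect is independently active with probability p."""
    return p ** r * (1 - p) ** (m - r)

def prior_count_probability(r, m, p):
    """Prior probability that exactly r of the m effects are active (any subset)."""
    return comb(m, r) * prior_model_probability(r, m, p)

m, p = 15, 0.25   # hypothetical: 15 candidate effects, each active with probability 0.25
print(prior_model_probability(3, m, p))
print(prior_count_probability(3, m, p))
```

Because $p < 0.5$, each additional active effect multiplies a model's prior probability by $p/(1-p) < 1$, which is how the prior favors sparse models.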

Prior Likelihood for Linear Model Parameters
- Since we're using a Bayesian approach, we need priors for $\beta$ and $\sigma$ as well.

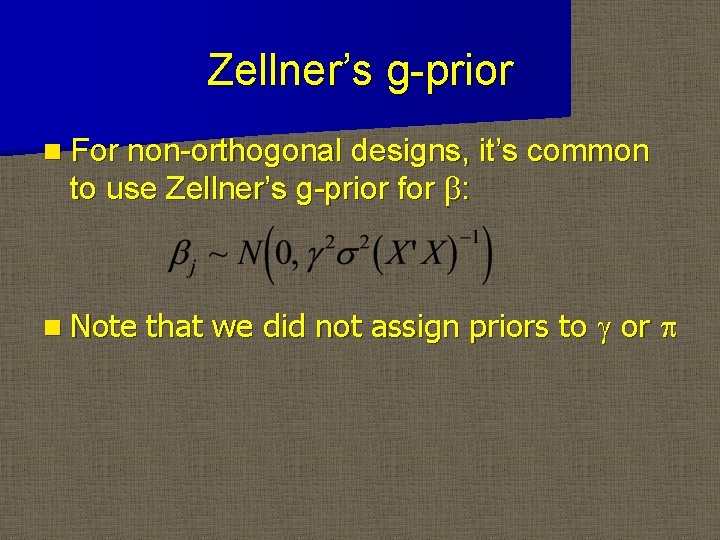

Zellner's g-prior
- For non-orthogonal designs, it's common to use Zellner's g-prior for $\beta$:
- Note that we did not assign priors to $g$ or $p$.

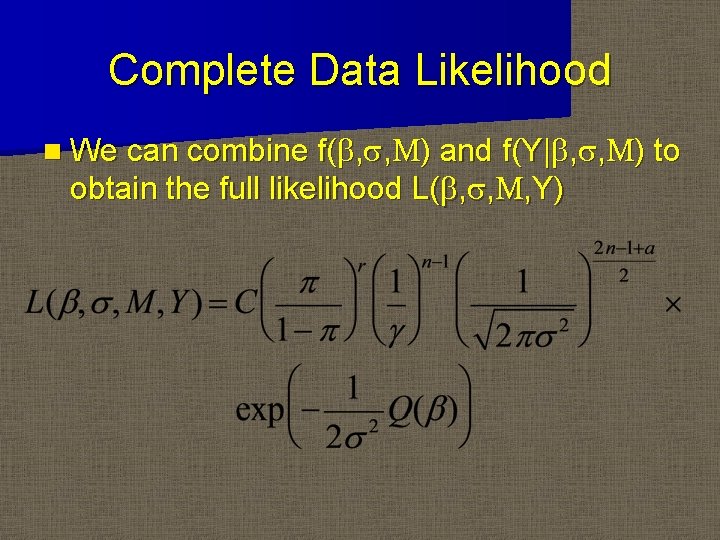
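For reference, the usual textbook form of Zellner's g-prior is shown below; the version on the slide may differ in centering or parameterization. Here $X$ is the design matrix of the model under consideration, and the prior covariance is the sampling covariance of the least-squares estimate scaled by $g$.

```latex
\beta \mid \sigma^2, M \;\sim\; N\!\left(0,\; g\,\sigma^2 (X'X)^{-1}\right)
```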

Complete Data Likelihood
- We can combine $f(\beta, \sigma, M)$ and $f(Y \mid \beta, \sigma, M)$ to obtain the full likelihood $L(\beta, \sigma, M, Y)$.

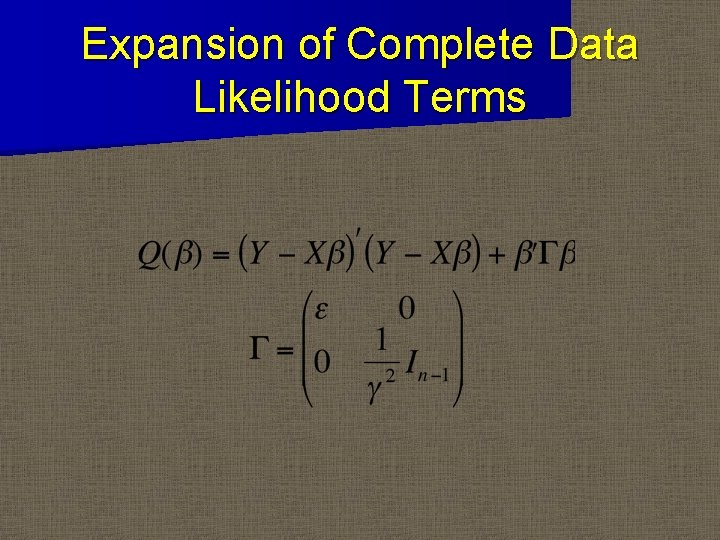

Expansion of Complete Data Likelihood Terms

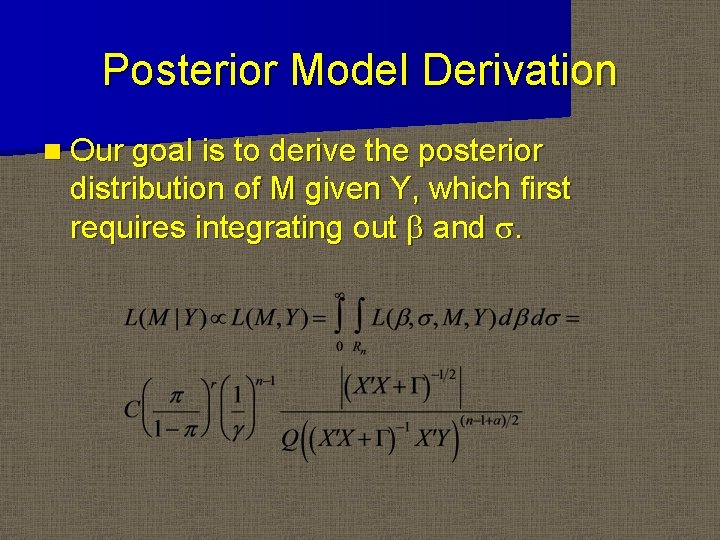

Posterior Model Derivation
- Our goal is to derive the posterior distribution of $M$ given $Y$, which first requires integrating out $\beta$ and $\sigma$.

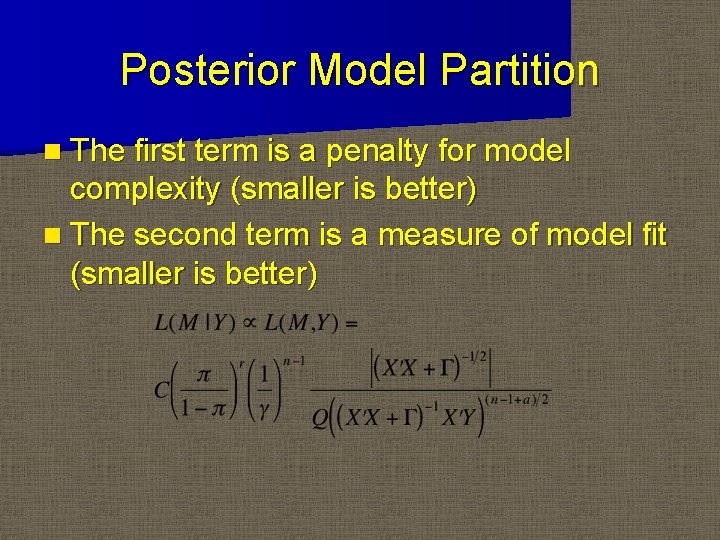
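In symbols, the marginalization amounts to the following sketch, where the integration ranges assume $\beta$ runs over all real vectors and $\sigma > 0$:

```latex
f(M \mid Y) \;\propto\; \int_{0}^{\infty} \!\! \int L(\beta, \sigma, M, Y)\; d\beta\, d\sigma
```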

Posterior Model Partition
- The first term is a penalty for model complexity (smaller is better).
- The second term is a measure of model fit (smaller is better).

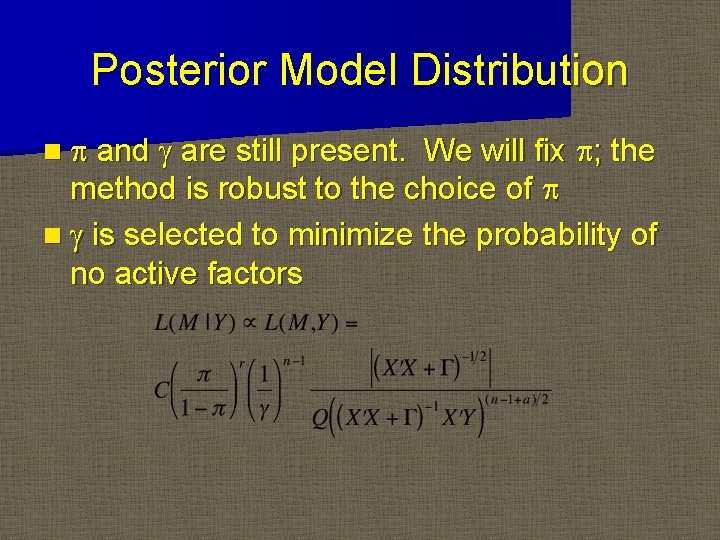

Posterior Model Distribution
- $p$ and $g$ are still present. We will fix $p$; the method is robust to the choice of $p$.
- $g$ is selected to minimize the probability of no active factors.

Posterior Model Selection
- With $L(M \mid Y)$ in hand, we can evaluate $P(M_i \mid Y)$ for all $M_i$ and any prior choice of $p$, provided the number of $M_i$ is not burdensome.
- This is in part why we assume the eligible $M_i$ include only lower-order effects.
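Exhaustive evaluation can be sketched as follows. This is not Box and Meyer's exact posterior: as a stand-in it uses the standard closed-form g-prior Bayes factor against the intercept-only model, $(1+g)^{(n-1-r)/2} / [1 + g(1-R^2)]^{(n-1)/2}$, which assumes a flat prior on the intercept and a prior on $\sigma^2$ proportional to $1/\sigma^2$. The function name, the default $g = n$, the value $p = 0.25$, and the toy responses are all hypothetical. The shortcut for $R^2$ relies on the effect columns being orthogonal -1/+1 contrasts.

```python
from itertools import combinations, product
from math import exp, log

def enumerate_posteriors(columns, y, p=0.25, g=None):
    """Posterior probability of every subset of effects (orthogonal -1/+1 columns).

    Assumed stand-in for L(M|Y): g-prior Bayes factor vs. the intercept-only
    model, times an independent-effects prior p^r (1-p)^(m-r).
    """
    n = len(y)
    g = float(n) if g is None else g            # assumption: default g = n
    ybar = sum(y) / n
    sst = sum((v - ybar) ** 2 for v in y)
    # With orthogonal columns, each effect adds (x'y)^2 / n to the regression SS.
    ss = {name: sum(x * v for x, v in zip(col, y)) ** 2 / n
          for name, col in columns.items()}
    names = list(columns)
    log_post = {}
    for r in range(len(names) + 1):
        for model in combinations(names, r):
            r2 = sum(ss[e] for e in model) / sst
            log_bf = (0.5 * (n - 1 - r) * log(1 + g)
                      - 0.5 * (n - 1) * log(1 + g * (1 - r2)))
            log_prior = r * log(p) + (len(names) - r) * log(1 - p)
            log_post[model] = log_bf + log_prior
    top = max(log_post.values())                # subtract max for numerical stability
    weights = {m: exp(v - top) for m, v in log_post.items()}
    total = sum(weights.values())
    return {m: w / total for m, w in weights.items()}

# Toy 2^3 example with made-up responses: A and B are truly active.
runs = list(product([-1, 1], repeat=3))
noise = [0.3, -0.2, -0.1, 0.4, -0.3, 0.1, 0.2, -0.4]   # fixed illustrative noise
y = [10 + 3 * a - 2 * b + e for (a, b, c), e in zip(runs, noise)]
columns = {
    "A": [a for a, b, c in runs], "B": [b for a, b, c in runs],
    "C": [c for a, b, c in runs], "AB": [a * b for a, b, c in runs],
    "AC": [a * c for a, b, c in runs], "BC": [b * c for a, b, c in runs],
}
post = enumerate_posteriors(columns, y)
best = max(post, key=post.get)
print(best, round(post[best], 3))
```

Six candidate effects give only 64 models, so enumeration is trivial here. The count doubles with every added effect, which is the practical reason for restricting attention to lower-order effects.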

Selection Criteria
- Greedy search or MCMC algorithms are used to select models when they cannot be enumerated.
- Selection criteria include the Bayes factor and the Schwarz criterion (also known as the Bayesian Information Criterion, BIC).
- Refer to the R package BMA and its `bic.glm` function for fitting more general models.

Marginal Selection
- For each effect, we sum the probabilities for all $M_i$ that contain that effect and obtain a marginal posterior probability for that effect.
- These marginal probabilities are relatively robust to the choice of $p$.
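The marginalization step is a simple sum over models. The sketch below assumes models are represented as tuples of effect names mapped to posterior probabilities; the function name and the toy posterior are hypothetical, with numbers chosen only for illustration.

```python
def marginal_effect_probabilities(model_probs):
    """Sum posterior model probabilities over all models containing each effect."""
    marginals = {}
    for model, prob in model_probs.items():
        for effect in model:
            marginals[effect] = marginals.get(effect, 0.0) + prob
    return marginals

# Hypothetical posterior over the four models built from two effects.
toy_posterior = {(): 0.05, ("A",): 0.25, ("B",): 0.10, ("A", "B"): 0.60}
marginals = marginal_effect_probabilities(toy_posterior)
print(marginals)
```

In this toy case the marginal for A is 0.25 + 0.60 and the marginal for B is 0.10 + 0.60. An effect can therefore have a high marginal probability even when no single model containing it dominates.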

Case Study
- Violin data* ($2^4$ factorial design with n=11 replications)
- Response: Decibels
- Factors
  - A: Pressure (Low/High)
  - B: Placement (Near/Far)
  - C: Angle (Low/High)
  - D: Speed (Low/High)

*Carla Padgett, STAT 706 taught by Don Edwards

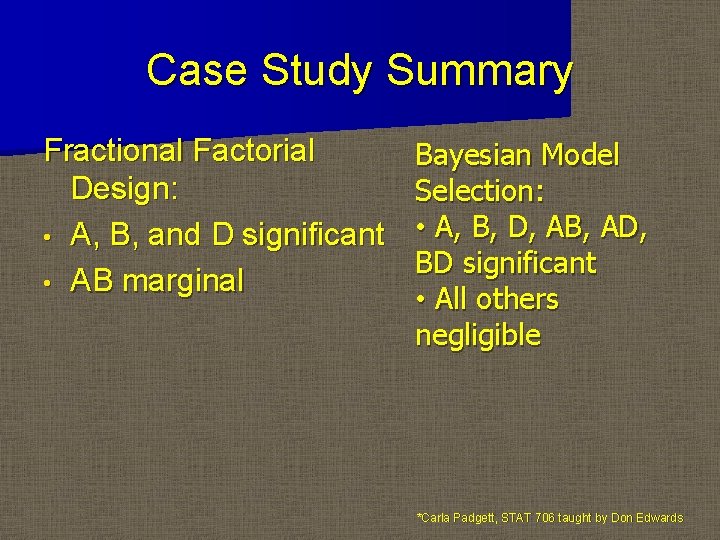

Case Study Summary
- Fractional Factorial Design:
  - A, B, and D significant
  - AB marginal
- Bayesian Model Selection:
  - A, B, D, AB, AD, BD significant
  - All others negligible

*Carla Padgett, STAT 706 taught by Don Edwards
