
Bayesian Methods: What they are and how they fit into Forensic Science

Outline
• Bayes’ Rule
• Bayesian Statistics (Briefly!)
  • Conjugates
  • General parametric using BUGS/MC software
• Bayesian Networks
  • Some software: GeNIe, SamIam, Hugin, gR R-packages
• Bayesian Hypothesis Testing
• The “Bayesian Framework” in Forensic Science
  • Likelihood Ratios with Bayesian Network software

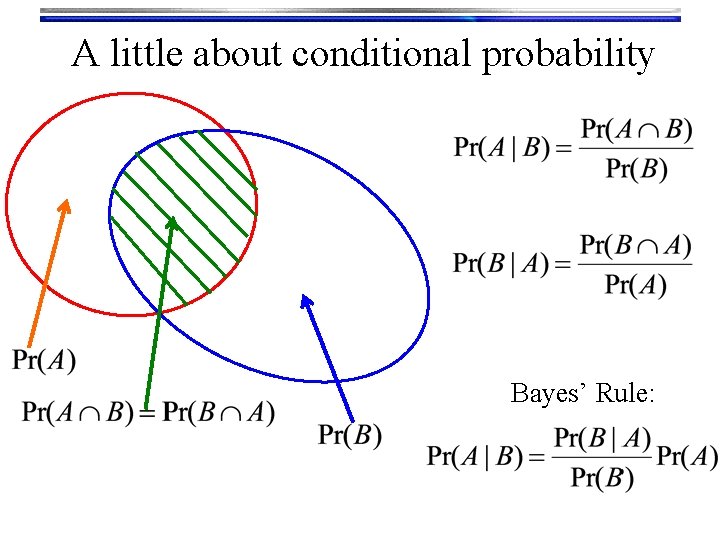

A little about conditional probability. Bayes’ Rule:

$$\Pr(A \mid B) = \frac{\Pr(B \mid A)\,\Pr(A)}{\Pr(B)}$$
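A quick numerical illustration of Bayes’ Rule may help. The sketch below is hypothetical: A and B are generic events and the three input probabilities are made-up numbers, not forensic data. The denominator Pr(B) is expanded with the law of total probability.

```python
def bayes_rule(p_b_given_a: float, p_a: float, p_b_given_not_a: float) -> float:
    """Return Pr(A | B) from Pr(B | A), Pr(A) and Pr(B | not A)."""
    # Law of total probability: Pr(B) = Pr(B|A)Pr(A) + Pr(B|not A)Pr(not A)
    p_b = p_b_given_a * p_a + p_b_given_not_a * (1.0 - p_a)
    return p_b_given_a * p_a / p_b


# Hypothetical inputs: a rare event A (1%) and an observation B that is
# very likely under A (99%) but also occurs 5% of the time without A.
print(round(bayes_rule(0.99, 0.01, 0.05), 4))  # 0.1667
```

Even with Pr(B | A) = 0.99, Pr(A | B) is only about 1/6 here, because A was rare to begin with.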

Probability
• Frequency: ratio of the number of observations of interest ($n_i$) to the total number of observations ($N$)
• It is EMPIRICAL!
• Probability (frequentist): frequency of observation $i$ in the limit of a very large number of observations, $\Pr(i) = \lim_{N \to \infty} n_i / N$

Probability
• Belief: a “Bayesian’s” interpretation of probability
• The probability of an observation (outcome, event) is a “measure of the state of knowledge” (Jaynes)
• Bayesian probabilities reflect degree of belief and can be assigned to any statement
• Beliefs (probabilities) can be updated in light of new evidence (data) via Bayes’ theorem

Bayesian Statistics
• The basic Bayesian philosophy:
  Prior Knowledge × Data = Updated Knowledge (a better understanding of the world)
  Prior × Data = Posterior
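The “Prior × Data = Posterior” recipe can be sketched on a discrete grid of parameter values. This is a hypothetical example (estimating a proportion θ from k = 7 successes in n = 10 trials with a flat prior), not an analysis from these slides; dividing by the sum is what turns “Prior × Data” into a proper posterior.

```python
from math import comb


def grid_posterior(prior, grid, k, n):
    """Prior x Data = Posterior on a discrete grid of parameter values."""
    # Binomial likelihood of k successes in n trials for each candidate theta
    likelihood = [comb(n, k) * t**k * (1 - t) ** (n - k) for t in grid]
    unnormalised = [p * lik for p, lik in zip(prior, likelihood)]
    total = sum(unnormalised)  # plays the role of Pr(data)
    return [u / total for u in unnormalised]


grid = [i / 100 for i in range(101)]
prior = [1 / len(grid)] * len(grid)  # flat prior: no preference for any theta
posterior = grid_posterior(prior, grid, k=7, n=10)
best = grid[posterior.index(max(posterior))]
print(best)  # posterior mode: 0.7
```

Swapping in a non-flat prior changes only the `prior` list; the update step stays the same.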

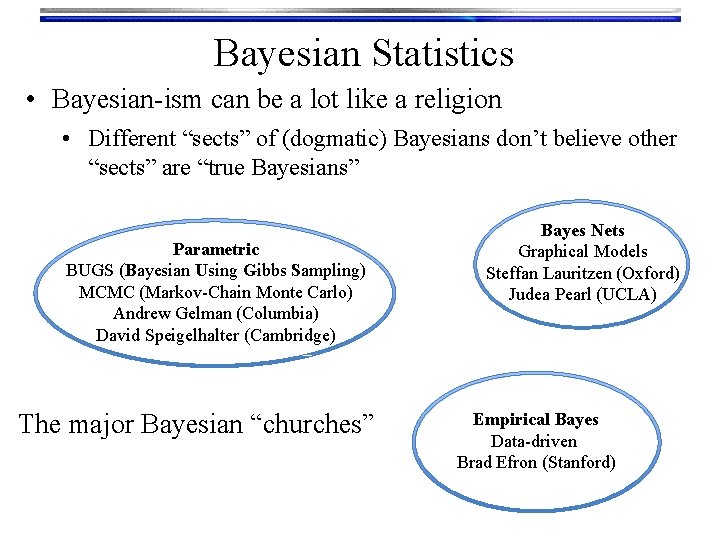

Bayesian Statistics
• Bayesian-ism can be a lot like a religion
• Different “sects” of (dogmatic) Bayesians don’t believe other “sects” are “true Bayesians”
The major Bayesian “churches”:
• Parametric: BUGS (Bayesian inference Using Gibbs Sampling), MCMC (Markov-Chain Monte Carlo); Andrew Gelman (Columbia), David Spiegelhalter (Cambridge)
• Bayes Nets / Graphical Models: Steffen Lauritzen (Oxford), Judea Pearl (UCLA)
• Empirical Bayes / Data-driven: Brad Efron (Stanford)

Bayesian Statistics
• What’s a Bayesian…?
  • Someone who adheres ONLY to the belief interpretation of probability?
  • Someone who uses Bayesian methods?
  • Someone who only uses Bayesian methods?
  • Usually likes to beat up on frequentist methodology…

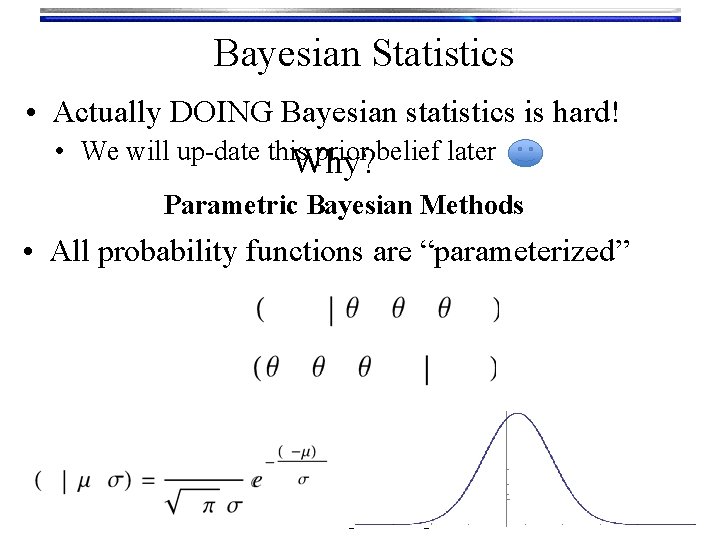

Bayesian Statistics
• Actually DOING Bayesian statistics is hard! Why?
• Parametric Bayesian methods:
  • All probability functions are “parameterized”
  • We will update this prior belief later

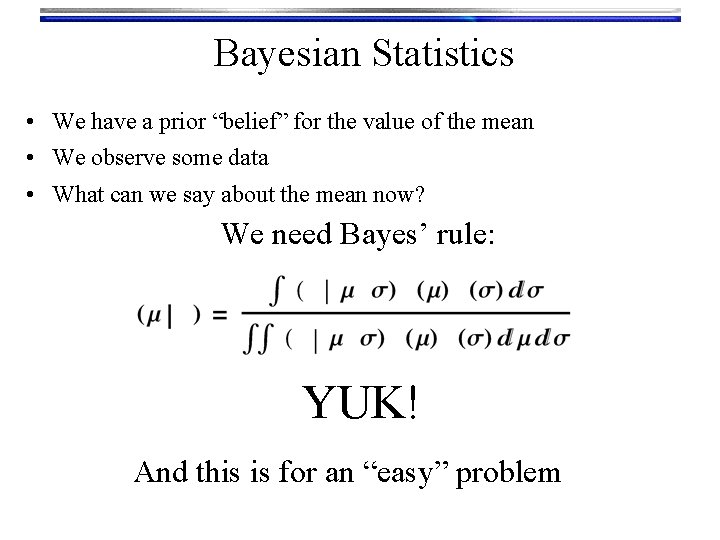

Bayesian Statistics
• We have a prior “belief” for the value of the mean
• We observe some data
• What can we say about the mean now? We need Bayes’ rule:

$$p(\mu \mid x) = \frac{p(x \mid \mu)\, p(\mu)}{\int p(x \mid \mu)\, p(\mu)\, d\mu}$$

• YUK! And this is for an “easy” problem
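To see why the denominator is the hard part, here is a brute-force sketch for a Normal mean with known σ and a Normal prior N(μ0, τ0²). The prior, σ, the grid limits and the data values are all hypothetical choices; a one-parameter grid is feasible, but the same approach quickly becomes impractical with many parameters.

```python
import math


def normal_pdf(x: float, mean: float, sd: float) -> float:
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def posterior_mean_by_integration(data, sigma, mu0, tau0, lo=-10.0, hi=10.0, n=20001):
    """Posterior mean of mu, evaluating Bayes' rule (including its denominator) on a grid."""
    h = (hi - lo) / (n - 1)
    grid = [lo + i * h for i in range(n)]
    # Numerator of Bayes' rule: likelihood of all observations times the prior density
    unnormalised = [
        math.prod(normal_pdf(x, mu, sigma) for x in data) * normal_pdf(mu, mu0, tau0)
        for mu in grid
    ]
    evidence = sum(unnormalised) * h  # Riemann-sum approximation of the integral
    return sum(mu * p for mu, p in zip(grid, unnormalised)) * h / evidence


data = [1.2, 0.8, 1.5, 1.1, 0.9]  # hypothetical observations
result = posterior_mean_by_integration(data, sigma=1.0, mu0=0.0, tau0=1.0)
print(result)
```

For this particular prior and likelihood the exact posterior mean is 5.5/6 ≈ 0.9167, which the grid reproduces closely.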

Bayesian Statistics
• So what can we do?
  • Until ~1990, get lucky…
  • Sometimes we can work out the integrals by hand
  • Sometimes you get posteriors that have the same form as the priors (conjugacy)
• Now there is software to evaluate the integrals. Some free options:
  • MCMC: WinBUGS, OpenBUGS, JAGS
  • HMC: Stan
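The two escape routes above can be compared on the same toy problem (a Normal mean with known σ and a Normal prior; all numbers hypothetical). The conjugate update gives the posterior in closed form; a random-walk Metropolis sampler, the simplest MCMC algorithm, approximates it using only the unnormalised posterior. This is a plain-Python teaching sketch, not how WinBUGS, JAGS or Stan work internally; the step size, chain length and burn-in are arbitrary choices.

```python
import math
import random


def log_posterior(mu, data, sigma, mu0, tau0):
    """Unnormalised log posterior: the awkward normalising integral is never needed."""
    log_lik = -0.5 * sum((x - mu) ** 2 for x in data) / sigma**2
    log_prior = -0.5 * (mu - mu0) ** 2 / tau0**2
    return log_lik + log_prior


def metropolis(data, sigma, mu0, tau0, n_samples=20000, step=0.5, seed=1):
    """Random-walk Metropolis sampler for the mean mu."""
    rng = random.Random(seed)
    mu = mu0
    current = log_posterior(mu, data, sigma, mu0, tau0)
    samples = []
    for _ in range(n_samples):
        proposal = mu + rng.gauss(0.0, step)  # symmetric proposal
        candidate = log_posterior(proposal, data, sigma, mu0, tau0)
        # Accept with probability min(1, posterior ratio)
        if candidate >= current or rng.random() < math.exp(candidate - current):
            mu, current = proposal, candidate
        samples.append(mu)
    return samples


def conjugate_posterior(data, sigma, mu0, tau0):
    """Closed-form Normal-Normal update with known sigma; returns (mean, sd)."""
    precision = 1 / tau0**2 + len(data) / sigma**2
    mean = (mu0 / tau0**2 + sum(data) / sigma**2) / precision
    return mean, math.sqrt(1 / precision)


data = [1.2, 0.8, 1.5, 1.1, 0.9]  # hypothetical observations
draws = metropolis(data, sigma=1.0, mu0=0.0, tau0=1.0)[2000:]  # drop burn-in
mcmc_mean = sum(draws) / len(draws)
exact_mean, exact_sd = conjugate_posterior(data, sigma=1.0, mu0=0.0, tau0=1.0)
print(mcmc_mean, exact_mean)
```

The sampler’s average should land close to the conjugate answer; with non-conjugate models only the sampler route remains available.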

Bayesian Networks
• A “scenario” is represented by a joint probability function
  • It contains variables relevant to a situation, which represent uncertain information
  • It contains “dependencies” between variables that describe how they influence each other
• A graphical way to represent the joint probability function is with nodes and directed lines (arrows)
  • This is called a Bayesian Network (Pearl)

Bayesian Networks
• A (very!!) simple example (Wikipedia):
  • What is the probability the grass is wet?
  • Influenced by the possibility of rain
  • Influenced by the possibility of sprinkler action
  • Sprinkler action is influenced by the possibility of rain
• Construct the joint probability function to answer questions about this scenario:
  • Pr(Grass Wet, Rain, Sprinkler) = Pr(Grass Wet | Rain, Sprinkler) × Pr(Sprinkler | Rain) × Pr(Rain)

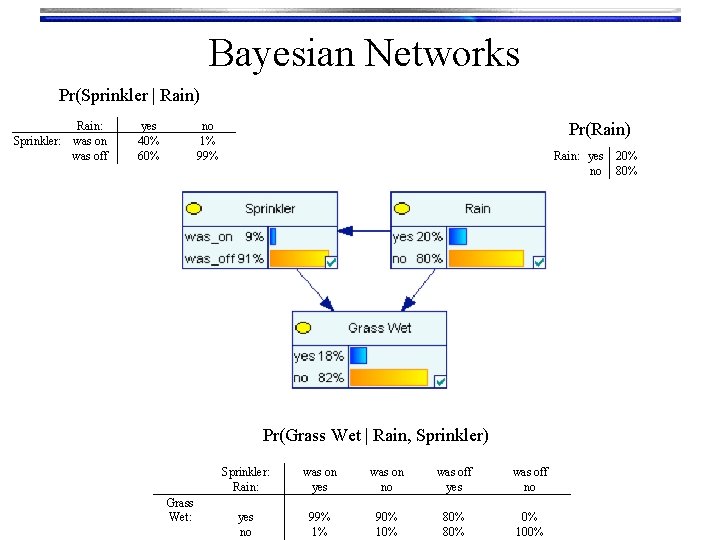

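Bayesian Networks

Pr(Rain)

| Rain: yes | Rain: no |
|---|---|
| 20% | 80% |

Pr(Sprinkler | Rain)

| Rain | Sprinkler: was on | Sprinkler: was off |
|---|---|---|
| yes | 1% | 99% |
| no | 40% | 60% |

Pr(Grass Wet | Rain, Sprinkler)

| Sprinkler | Rain | Grass Wet: yes | Grass Wet: no |
|---|---|---|---|
| was on | yes | 99% | 1% |
| was on | no | 90% | 10% |
| was off | yes | 80% | 20% |
| was off | no | 0% | 100% |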
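Because the network factorises as Pr(Grass Wet | Sprinkler, Rain) × Pr(Sprinkler | Rain) × Pr(Rain), the three tables are enough to answer questions by brute-force enumeration. A minimal sketch in plain Python (not any of the BN packages listed later; the dictionary layout is our own choice):

```python
from itertools import product

P_RAIN = {True: 0.2, False: 0.8}
P_SPRINKLER_GIVEN_RAIN = {True: {True: 0.01, False: 0.99},   # keyed [rain][sprinkler]
                          False: {True: 0.4, False: 0.6}}
P_WET_GIVEN = {(True, True): 0.99, (True, False): 0.9,       # keyed (sprinkler, rain)
               (False, True): 0.8, (False, False): 0.0}


def joint(wet, sprinkler, rain):
    """Pr(Grass Wet, Sprinkler, Rain) factorised along the network's arrows."""
    p_wet = P_WET_GIVEN[(sprinkler, rain)]
    return ((p_wet if wet else 1 - p_wet)
            * P_SPRINKLER_GIVEN_RAIN[rain][sprinkler]
            * P_RAIN[rain])


# Marginal Pr(Grass Wet = yes): sum the joint over the unobserved nodes
p_grass_wet = sum(joint(True, s, r) for s, r in product([True, False], repeat=2))
print(round(p_grass_wet, 5))  # 0.44838
```

Enumeration is exponential in the number of nodes; the BN packages use smarter propagation algorithms, but the answers agree for small networks like this one.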

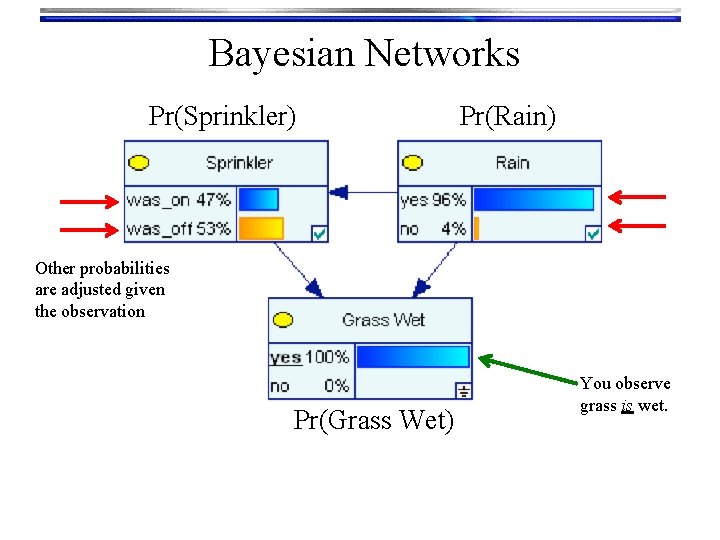

Bayesian Networks
• You observe that the grass is wet, so Pr(Grass Wet = yes) is set to 100%
• The other probabilities, Pr(Sprinkler) and Pr(Rain), are adjusted given the observation
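The adjustment after observing wet grass can be reproduced by enumeration: keep only the states where Grass Wet = yes and renormalise. This sketch reuses the sprinkler tables from the Wikipedia example; the code layout is our own.

```python
from itertools import product

P_RAIN = {True: 0.2, False: 0.8}
P_SPRINKLER_GIVEN_RAIN = {True: {True: 0.01, False: 0.99},   # keyed [rain][sprinkler]
                          False: {True: 0.4, False: 0.6}}
P_WET_GIVEN = {(True, True): 0.99, (True, False): 0.9,       # keyed (sprinkler, rain)
               (False, True): 0.8, (False, False): 0.0}


def posterior_given_wet():
    """Return (Pr(Rain | Grass Wet), Pr(Sprinkler on | Grass Wet)) by enumeration."""
    # Joint probability of each (sprinkler, rain) state together with Grass Wet = yes
    weights = {
        (s, r): P_WET_GIVEN[(s, r)] * P_SPRINKLER_GIVEN_RAIN[r][s] * P_RAIN[r]
        for s, r in product([True, False], repeat=2)
    }
    evidence = sum(weights.values())  # Pr(Grass Wet = yes)
    p_rain = sum(w for (s, r), w in weights.items() if r) / evidence
    p_sprinkler = sum(w for (s, r), w in weights.items() if s) / evidence
    return p_rain, p_sprinkler


p_rain, p_sprinkler = posterior_given_wet()
print(round(p_rain, 4), round(p_sprinkler, 4))  # 0.3577 0.6467
```

Observing wet grass raises Pr(Rain) from 0.2 to about 0.358 and Pr(Sprinkler on) from 0.322 to about 0.647.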

Bayesian Networks
• Areas where Bayesian Networks are used:
  • Medical recommendation/diagnosis: IBM/Watson, Massachusetts General Hospital/DXplain
  • Image processing
  • Business decision support: Boeing, Intel, United Technologies, Oracle, Philips
  • Information search algorithms and on-line recommendation engines
  • Space vehicle diagnostics: NASA
  • Search and rescue planning: US Military
• Requires software. Some free options:
  • GeNIe (University of Pittsburgh)
  • SamIam (UCLA)
  • Hugin (free only for a few nodes)
  • gR R-packages

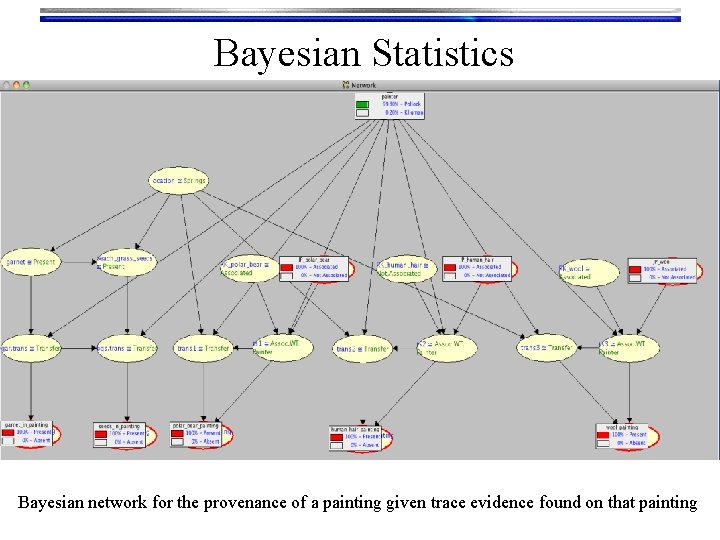

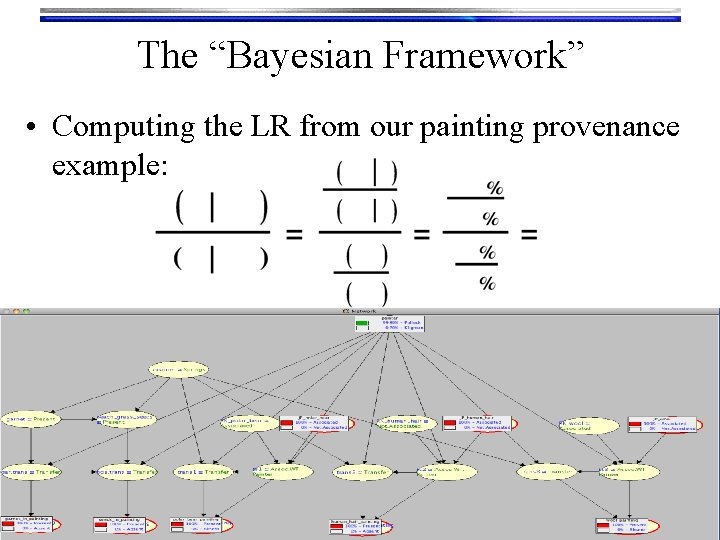

Bayesian Statistics
Bayesian network for the provenance of a painting, given trace evidence found on that painting

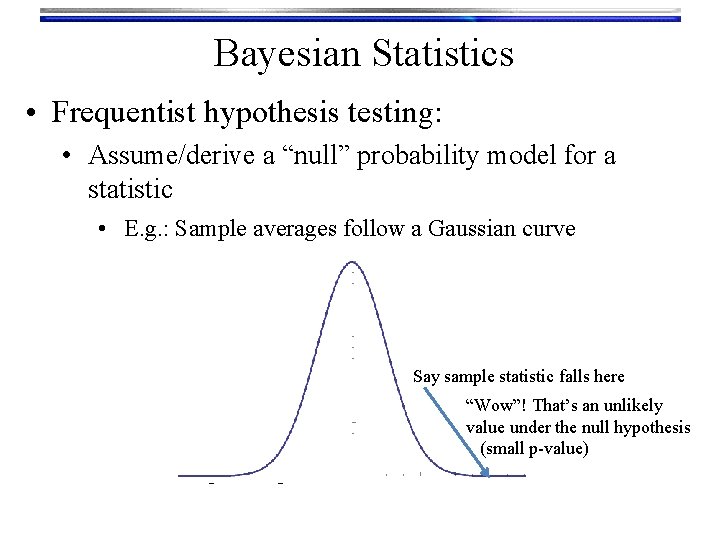

Bayesian Statistics
• Frequentist hypothesis testing:
  • Assume/derive a “null” probability model for a statistic
  • E.g.: sample averages follow a Gaussian curve
• Say the sample statistic falls here: “Wow!” That’s an unlikely value under the null hypothesis (small p-value)
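The frequentist recipe can be sketched as a two-sided z-test for a sample average, assuming the population standard deviation σ is known. The numbers below are hypothetical.

```python
import math
from statistics import NormalDist


def z_test(sample_mean, null_mean, sigma, n):
    """Two-sided p-value for a sample average under a Gaussian null model."""
    z = (sample_mean - null_mean) / (sigma / math.sqrt(n))
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


z, p = z_test(sample_mean=10.6, null_mean=10.0, sigma=1.5, n=25)  # hypothetical
print(round(z, 2), round(p, 4))  # 2.0 0.0455
```

Note that only the null model enters the calculation; no alternative is ever specified.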

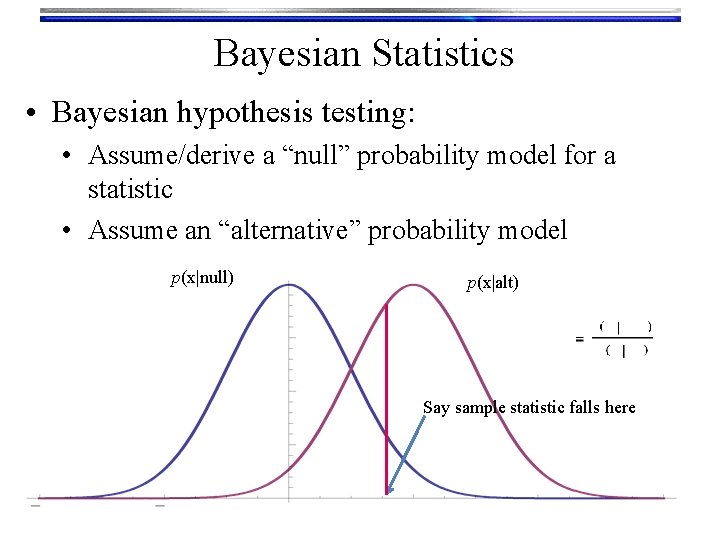

Bayesian Statistics
• Bayesian hypothesis testing:
  • Assume/derive a “null” probability model for a statistic, p(x|null)
  • Assume an “alternative” probability model, p(x|alt)
• Say the sample statistic falls here: compare p(x|null) with p(x|alt)
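Unlike the p-value, the Bayesian comparison evaluates the observed statistic under both models. A minimal sketch, assuming (hypothetically) Gaussian null and alternative models with different means:

```python
import math
from statistics import NormalDist

null_model = NormalDist(mu=0.0, sigma=1.0)  # p(x | null), hypothetical
alt_model = NormalDist(mu=3.0, sigma=1.0)   # p(x | alt), hypothetical


def density_ratio(x):
    """How much more probable the observed statistic is under 'alt' than under 'null'."""
    return alt_model.pdf(x) / null_model.pdf(x)


print(density_ratio(2.0))  # equals exp(1.5), about 4.48
```

For two simple hypotheses like these, this ratio is the likelihood ratio (Bayes factor) discussed in the following slides; a value of 1 (here at x = 1.5) favours neither model.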

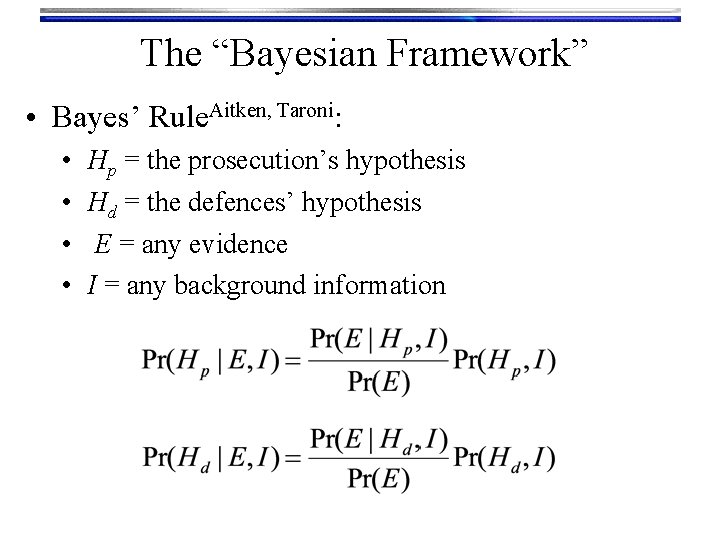

The “Bayesian Framework”
• Bayes’ Rule (Aitken, Taroni):

$$\Pr(H_p \mid E, I) = \frac{\Pr(E \mid H_p, I)\,\Pr(H_p \mid I)}{\Pr(E \mid I)}$$

  • $H_p$ = the prosecution’s hypothesis
  • $H_d$ = the defence’s hypothesis
  • $E$ = any evidence
  • $I$ = any background information

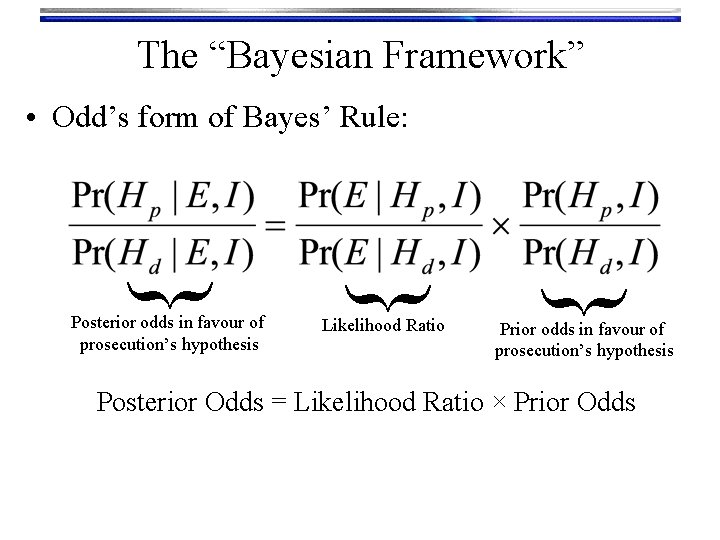

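The “Bayesian Framework”
• Odds form of Bayes’ Rule:

$$\underbrace{\frac{\Pr(H_p \mid E, I)}{\Pr(H_d \mid E, I)}}_{\text{posterior odds}} = \underbrace{\frac{\Pr(E \mid H_p, I)}{\Pr(E \mid H_d, I)}}_{\text{likelihood ratio}} \times \underbrace{\frac{\Pr(H_p \mid I)}{\Pr(H_d \mid I)}}_{\text{prior odds}}$$

• Posterior odds in favour of the prosecution’s hypothesis = Likelihood Ratio × Prior odds in favour of the prosecution’s hypothesis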
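The odds form is simple arithmetic once probabilities are converted to odds. The sketch below assumes $H_p$ and $H_d$ are exhaustive, so that $\Pr(H_d) = 1 - \Pr(H_p)$; the prior and the LR are hypothetical numbers.

```python
def prob_to_odds(p: float) -> float:
    return p / (1.0 - p)


def odds_to_prob(odds: float) -> float:
    return odds / (1.0 + odds)


def update_odds(prior_prob_hp: float, likelihood_ratio: float) -> float:
    """Posterior Pr(Hp | E, I) via posterior odds = LR x prior odds (Hd = not Hp assumed)."""
    posterior_odds = likelihood_ratio * prob_to_odds(prior_prob_hp)
    return odds_to_prob(posterior_odds)


# Hypothetical: prior Pr(Hp) = 0.01 (odds 1:99), evidence with LR = 1000
print(round(update_odds(0.01, 1000.0), 4))  # 0.9099
```

An LR of 1 leaves the prior unchanged, whatever the prior is.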

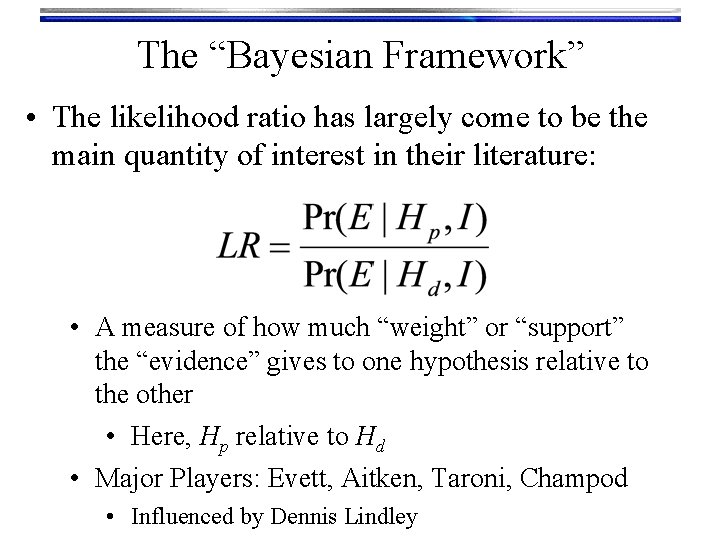

The “Bayesian Framework”
• The likelihood ratio has largely become the main quantity of interest in the forensic “Bayesian Framework” literature:
  • A measure of how much “weight” or “support” the “evidence” gives to one hypothesis relative to the other
  • Here, $H_p$ relative to $H_d$
• Major players: Evett, Aitken, Taroni, Champod
  • Influenced by Dennis Lindley

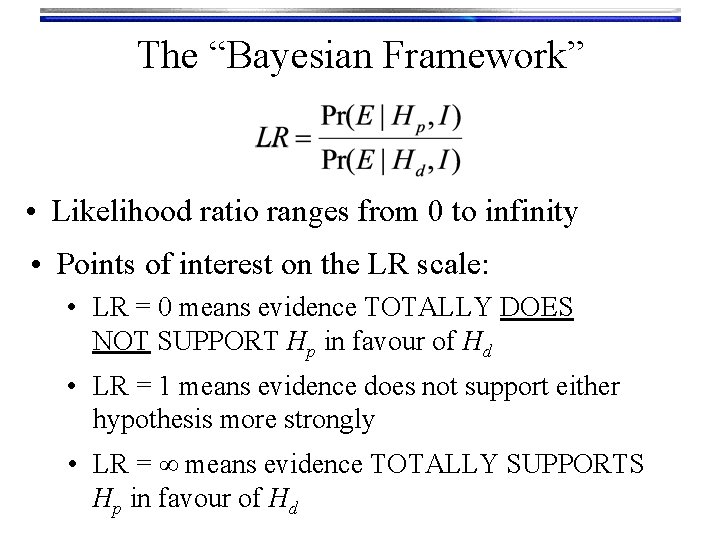

The “Bayesian Framework”
• The likelihood ratio ranges from 0 to infinity
• Points of interest on the LR scale:
  • LR = 0: the evidence is impossible under $H_p$; it gives NO support at all to $H_p$ relative to $H_d$
  • LR = 1: the evidence does not support either hypothesis more strongly
  • LR = ∞: the evidence is impossible under $H_d$; it TOTALLY SUPPORTS $H_p$ relative to $H_d$

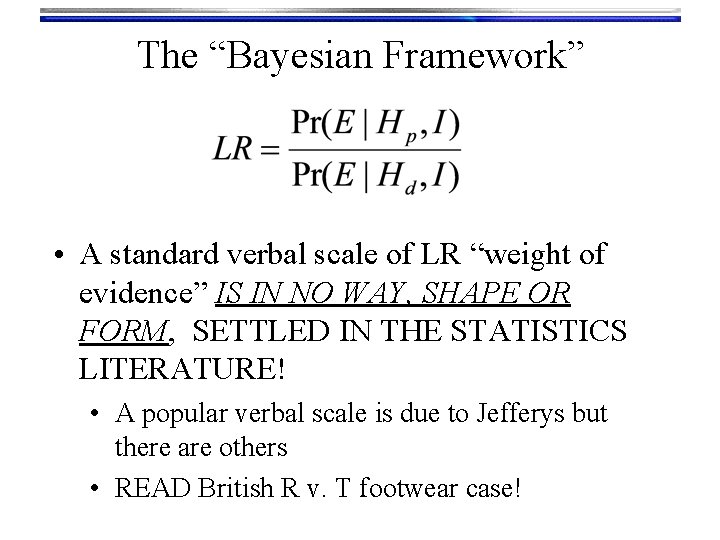

The “Bayesian Framework”
• A standard verbal scale for the LR “weight of evidence” IS IN NO WAY, SHAPE OR FORM, SETTLED IN THE STATISTICS LITERATURE!
• A popular verbal scale is due to Jeffreys, but there are others
• READ the British R v T footwear case!

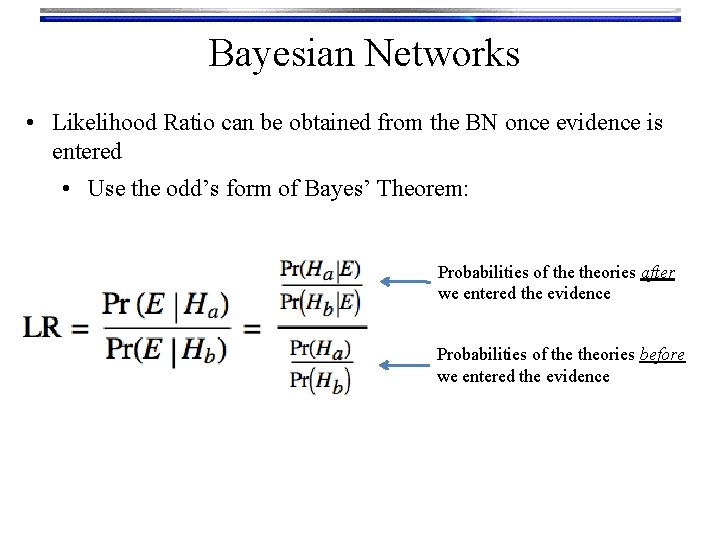

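Bayesian Networks
• The likelihood ratio can be obtained from the BN once the evidence is entered
• Use the odds form of Bayes’ Theorem:

$$\text{LR} = \frac{\Pr(H_p \mid E, I) \,/\, \Pr(H_d \mid E, I)}{\Pr(H_p \mid I) \,/\, \Pr(H_d \mid I)}$$

  • Numerator: probabilities of the theories after we entered the evidence
  • Denominator: probabilities of the theories before we entered the evidence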
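As a toy check of this recipe, the sprinkler network can stand in for a casework network, with the hypothetical mapping $H_p$ = “it rained”, $H_d$ = “it did not rain” and $E$ = “the grass is wet”. The LR obtained from the before/after probabilities should match the direct definition Pr(E | Hp) / Pr(E | Hd).

```python
from itertools import product

P_RAIN = {True: 0.2, False: 0.8}
P_SPRINKLER_GIVEN_RAIN = {True: {True: 0.01, False: 0.99},   # keyed [rain][sprinkler]
                          False: {True: 0.4, False: 0.6}}
P_WET_GIVEN = {(True, True): 0.99, (True, False): 0.9,       # keyed (sprinkler, rain)
               (False, True): 0.8, (False, False): 0.0}


def pr_rain_given_wet():
    """Pr(Rain | Grass Wet) by enumerating the network's joint distribution."""
    joint = {(s, r): P_WET_GIVEN[(s, r)] * P_SPRINKLER_GIVEN_RAIN[r][s] * P_RAIN[r]
             for s, r in product([True, False], repeat=2)}
    return sum(v for (s, r), v in joint.items() if r) / sum(joint.values())


def lr_from_bn(prior_hp, posterior_hp):
    """LR = posterior odds / prior odds, assuming Hd is the complement of Hp."""
    return (posterior_hp / (1 - posterior_hp)) / (prior_hp / (1 - prior_hp))


lr = lr_from_bn(P_RAIN[True], pr_rain_given_wet())
# Cross-check against the direct definition Pr(E | Hp) / Pr(E | Hd)
pr_wet_given_rain = sum(P_WET_GIVEN[(s, True)] * P_SPRINKLER_GIVEN_RAIN[True][s]
                        for s in (True, False))
pr_wet_given_no_rain = sum(P_WET_GIVEN[(s, False)] * P_SPRINKLER_GIVEN_RAIN[False][s]
                           for s in (True, False))
print(round(lr, 4), round(pr_wet_given_rain / pr_wet_given_no_rain, 4))  # 2.2275 2.2275
```

Because the LR is the ratio of posterior to prior odds, any BN software that reports node probabilities before and after evidence entry is enough to compute it.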

The “Bayesian Framework” • Computing the LR from our painting provenance example:

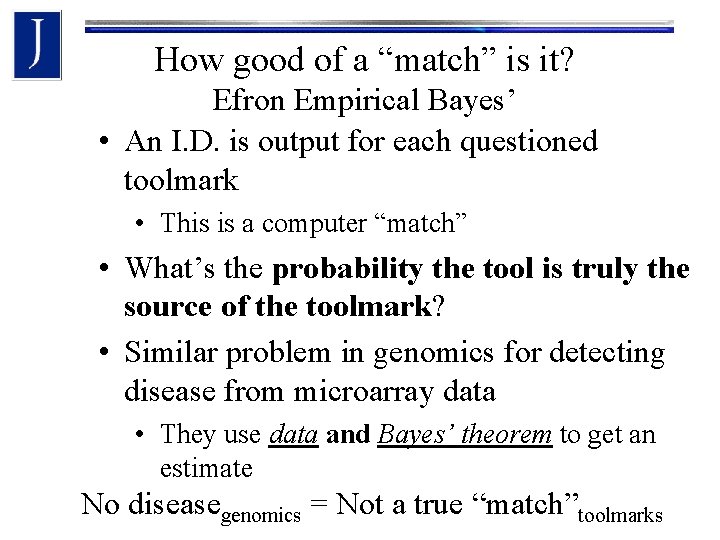

How good a “match” is it? Efron’s Empirical Bayes
• An I.D. is output for each questioned toolmark
  • This is a computer “match”
• What’s the probability the tool is truly the source of the toolmark?
• A similar problem arises in genomics when detecting disease from microarray data
  • They use data and Bayes’ theorem to get an estimate
  • “No disease” (genomics) corresponds to “not a true match” (toolmarks)

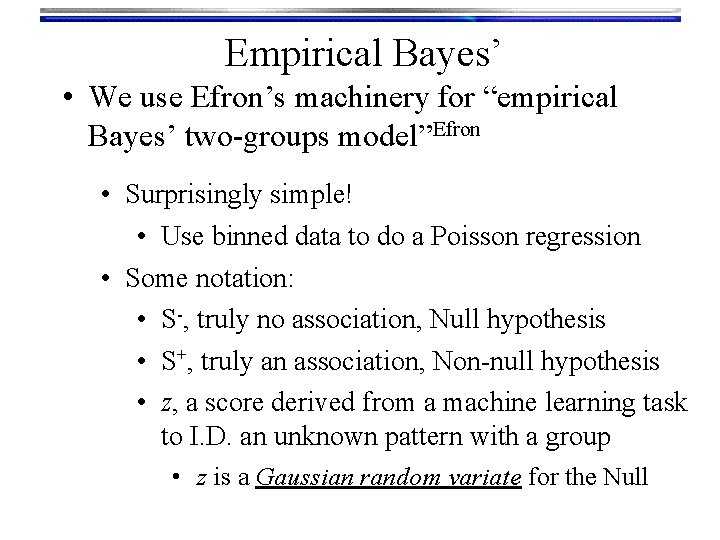

Empirical Bayes
• We use Efron’s machinery for the “empirical Bayes two-groups model” (Efron)
  • Surprisingly simple!
  • Use binned data to do a Poisson regression
• Some notation:
  • $S^-$: truly no association (null hypothesis)
  • $S^+$: truly an association (non-null hypothesis)
  • $z$: a score derived from a machine learning task to I.D. an unknown pattern with a group
  • $z$ is a Gaussian random variate under the null
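A minimal sketch of the “binned data + Poisson regression” step, assuming NumPy is available: histogram the z-scores, then fit log(expected count) as a polynomial in the bin centre by iteratively reweighted least squares (this is often called Lindsey’s method). Efron’s `locfdr` R package uses a natural-spline basis and additional machinery; the polynomial degree, bin count and simulated data here are hypothetical choices.

```python
import numpy as np


def lindsey_density(z, n_bins=50, degree=5, n_iter=50, tol=1e-10):
    """Estimate the mixture density f(z) by Poisson regression on histogram counts."""
    counts, edges = np.histogram(z, bins=n_bins)
    mids = 0.5 * (edges[:-1] + edges[1:])
    width = edges[1] - edges[0]
    # Polynomial basis in standardised bin centres (keeps the fit well conditioned)
    x = (mids - mids.mean()) / mids.std()
    X = np.vander(x, degree + 1, increasing=True)
    # Poisson GLM with log link, fitted by iteratively reweighted least squares (IRLS)
    beta = np.zeros(degree + 1)
    beta[0] = np.log(counts.mean())
    for _ in range(n_iter):
        eta = X @ beta
        mu = np.exp(eta)
        working = eta + (counts - mu) / mu  # working response
        XtW = X.T * mu                      # Poisson/log-link weights are mu
        new_beta = np.linalg.solve(XtW @ X, XtW @ working)
        converged = np.max(np.abs(new_beta - beta)) < tol
        beta = new_beta
        if converged:
            break
    expected_counts = np.exp(X @ beta)
    # Expected count per bin / (N * bin width) gives a density estimate
    return mids, expected_counts / (len(z) * width)


# Hypothetical two-groups data: 90% null z ~ N(0, 1), 10% non-null z ~ N(3, 1)
rng = np.random.default_rng(0)
z = np.concatenate([rng.normal(0.0, 1.0, 9000), rng.normal(3.0, 1.0, 1000)])
mids, f_hat = lindsey_density(z)
```

`f_hat` estimates the mixture density $f(z)$; combined with the null density and an estimate of the null proportion it yields the local false discovery rate.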

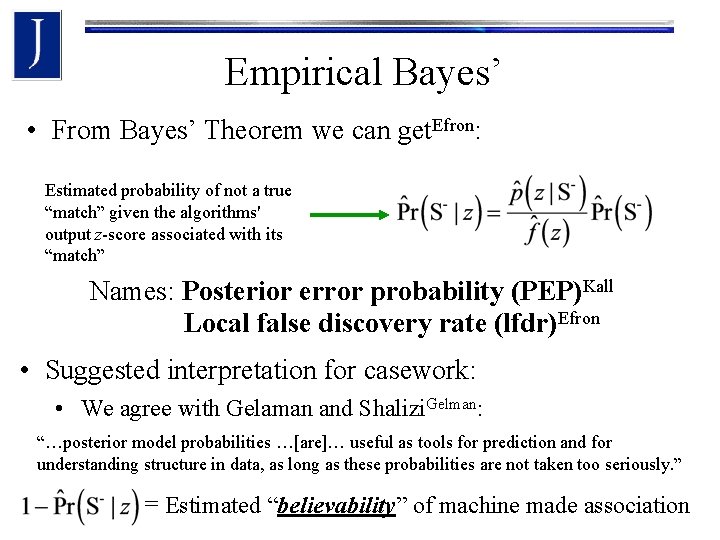

Empirical Bayes
• From Bayes’ Theorem we can get (Efron):

$$\widehat{\Pr}(S^- \mid z) = \frac{\hat{\pi}_0\, \hat{f}_0(z)}{\hat{f}(z)}$$

  where $\hat{\pi}_0$ is the estimated prior probability of $S^-$, $\hat{f}_0$ the estimated null z-density and $\hat{f}$ the estimated mixture density of all z-scores
  • This is the estimated probability of not a true “match”, given the z-score the algorithm associated with its “match”
  • Names: posterior error probability (PEP) (Käll); local false discovery rate (lfdr) (Efron)
• Suggested interpretation for casework:
  • 1 − lfdr = estimated “believability” of a machine-made association
  • We agree with Gelman and Shalizi (Gelman): “…posterior model probabilities …[are]… useful as tools for prediction and for understanding structure in data, as long as these probabilities are not taken too seriously.”
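Given the estimated pieces, the lfdr and the “believability” 1 − lfdr are one line each. This sketch assumes the theoretical null $f_0 = N(0, 1)$ and, for illustration only, plugs in a known hypothetical mixture where a fitted $\hat{f}$ would normally go. Because estimated ratios can exceed 1, the sketch clips the lfdr at 1.

```python
from statistics import NormalDist

F0 = NormalDist(0.0, 1.0)  # theoretical null: z ~ N(0, 1) under S-


def local_fdr(z, pi0, mixture_density):
    """Estimated Pr(S- | z) = pi0 * f0(z) / f(z), clipped at 1."""
    return min(1.0, pi0 * F0.pdf(z) / mixture_density(z))


# Hypothetical mixture standing in for a fitted f(z): 90% S-, 10% S+ centred at z = 3
pi0 = 0.9
f1 = NormalDist(3.0, 1.0)


def f(z):
    return pi0 * F0.pdf(z) + (1 - pi0) * f1.pdf(z)


lfdr = local_fdr(3.0, pi0, f)
believability = 1 - lfdr  # estimated Pr(S+ | z): believability of the machine "match"
print(round(lfdr, 4), round(believability, 4))  # 0.0909 0.9091
```

At z = 0 the same mixture gives an lfdr close to 1, i.e. a score there carries almost no believability.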

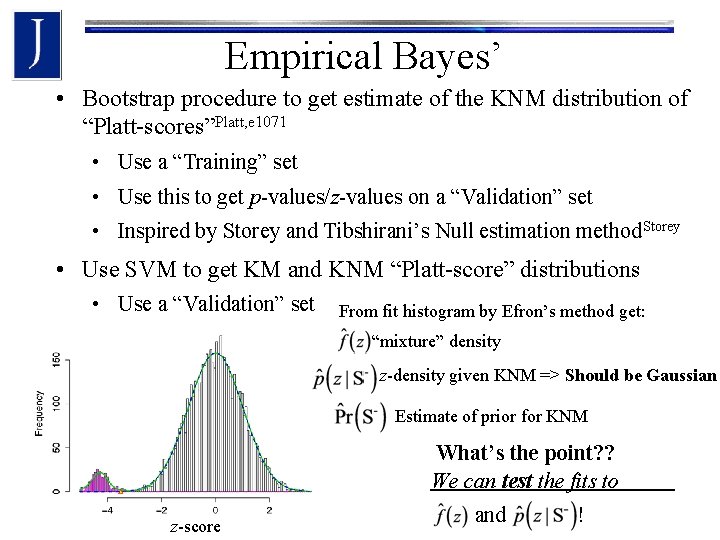

Empirical Bayes
• Use an SVM to get KM (known match) and KNM (known non-match) “Platt-score” distributions (Platt; e1071)
• Use a “Training” set
  • Bootstrap procedure to get an estimate of the KNM distribution of “Platt-scores”
  • Inspired by Storey and Tibshirani’s null estimation method (Storey)
• Use a “Validation” set
  • Use the KNM estimate to get p-values/z-values on the “Validation” set
• From the fit histogram, by Efron’s method get:
  • the “mixture” density
  • the z-density given KNM => should be Gaussian
  • an estimate of the prior for KNM
• What’s the point? We can test the fits to the z-scores and …!
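The step from scores to z-values can be sketched as follows: an upper-tail empirical p-value against the KNM scores, then $z = \Phi^{-1}(1 - p)$, so that under the null $z$ is approximately standard Gaussian. This simplified, hypothetical sketch leaves out the SVM, the Platt scaling and the bootstrap; the sign convention (large scores map to large positive z) and the (k + 1)/(m + 2) adjustment that keeps p strictly between 0 and 1 are our own assumptions.

```python
from bisect import bisect_left
from statistics import NormalDist


def scores_to_z(knm_scores, validation_scores):
    """Map validation-set scores to z-values using an empirical KNM (null) distribution."""
    null_sorted = sorted(knm_scores)
    m = len(null_sorted)
    std_normal = NormalDist()
    z_values = []
    for s in validation_scores:
        # Number of KNM scores at least as large as s (upper-tail count)
        k = m - bisect_left(null_sorted, s)
        p = (k + 1) / (m + 2)  # kept away from 0 and 1 so the inverse CDF is finite
        z_values.append(std_normal.inv_cdf(1 - p))  # large scores -> large positive z
    return z_values


knm = [i / 1000 for i in range(1000)]  # hypothetical KNM scores, roughly uniform on [0, 1)
z = scores_to_z(knm, [0.5, 2.0, -1.0])
```

A validation score in the middle of the KNM distribution maps to z near 0, while scores beyond every KNM score map to the extreme z the sample size allows (about ±3.1 here).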

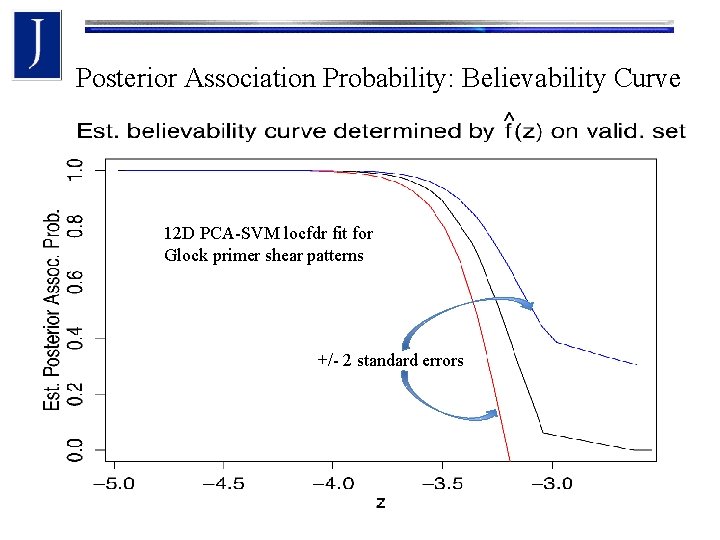

Posterior Association Probability: Believability Curve
12-D PCA-SVM locfdr fit for Glock primer shear patterns, ±2 standard errors

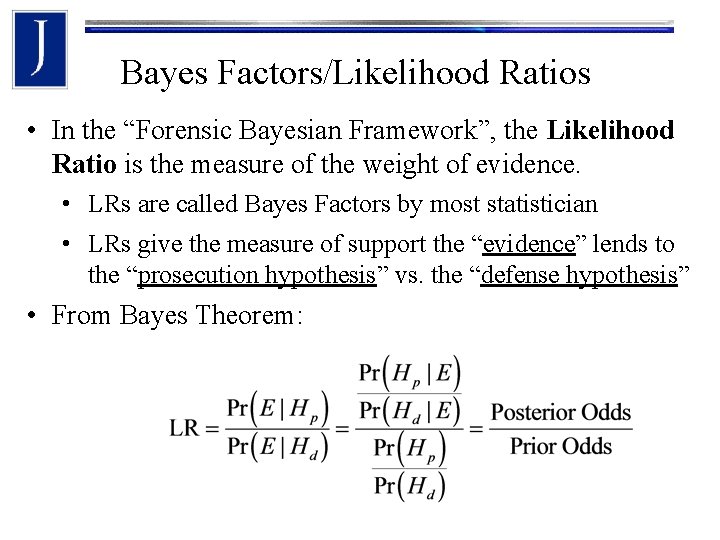

Bayes Factors/Likelihood Ratios
• In the “Forensic Bayesian Framework”, the likelihood ratio is the measure of the weight of evidence
• LRs are called Bayes Factors by most statisticians
• LRs give the measure of support the “evidence” lends to the “prosecution hypothesis” vs. the “defense hypothesis”
• From Bayes’ Theorem:

$$\text{LR} = \frac{\Pr(E \mid H_p)}{\Pr(E \mid H_d)} = \frac{\Pr(H_p \mid E) \,/\, \Pr(H_d \mid E)}{\Pr(H_p) \,/\, \Pr(H_d)}$$

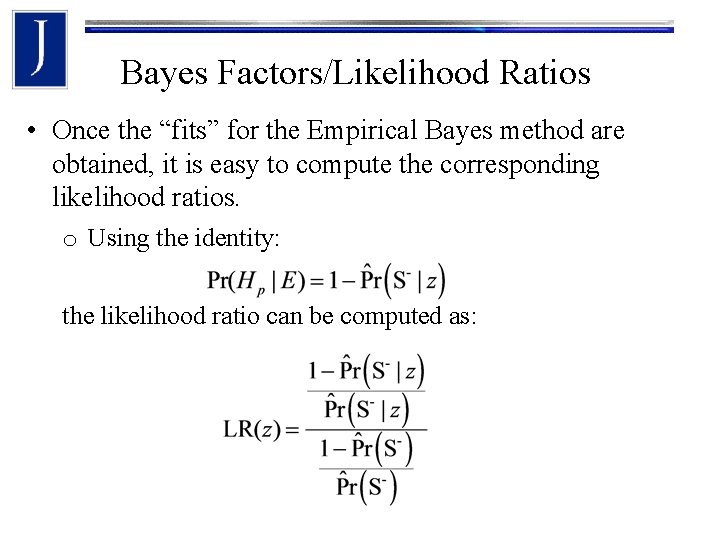

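Bayes Factors/Likelihood Ratios
• Once the “fits” for the Empirical Bayes method are obtained, it is easy to compute the corresponding likelihood ratios
  o Using the identity $\Pr(S^+ \mid z) = 1 - \Pr(S^- \mid z) = 1 - \widehat{\text{lfdr}}(z)$, with prior $\Pr(S^+) = 1 - \hat{\pi}_0$, the likelihood ratio for $S^+$ vs. $S^-$ can be computed as:

$$\text{LR}(z) = \frac{1 - \widehat{\text{lfdr}}(z)}{\widehat{\text{lfdr}}(z)} \times \frac{\hat{\pi}_0}{1 - \hat{\pi}_0}$$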
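In code, the LR is posterior odds divided by prior odds, with $S^+$ (true association) playing the role of $H_p$. A minimal sketch; the lfdr and π0 values are hypothetical, and the lfdr must lie strictly between 0 and 1 (a clipped lfdr of 1 gives LR = 0, and lfdr = 0 would divide by zero).

```python
def lr_from_lfdr(lfdr: float, pi0: float) -> float:
    """LR for S+ (true association) vs S- from lfdr(z) and the prior pi0 = Pr(S-).

    LR = posterior odds / prior odds
       = [(1 - lfdr) / lfdr] / [(1 - pi0) / pi0]
    """
    posterior_odds = (1.0 - lfdr) / lfdr
    prior_odds = (1.0 - pi0) / pi0
    return posterior_odds / prior_odds


# Hypothetical fitted values at one z-score: lfdr = 0.05 with pi0 = 0.9
print(round(lr_from_lfdr(0.05, 0.9), 2))  # 171.0
```

When lfdr equals π0 the data have not moved the odds, and the LR is exactly 1.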

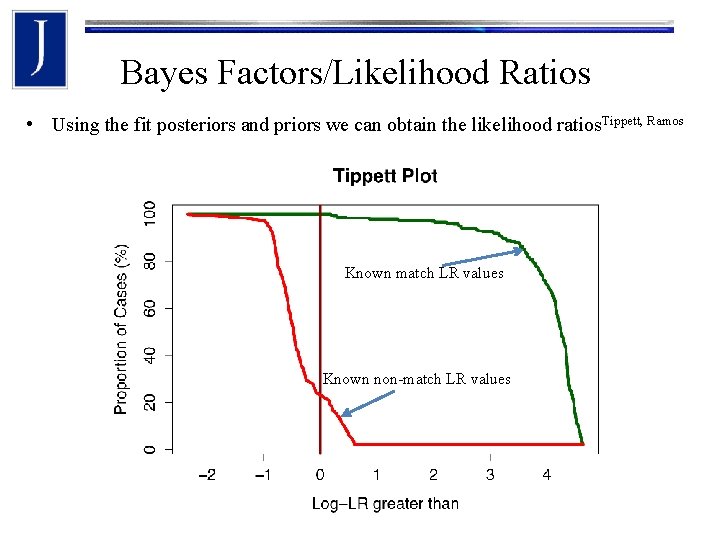

Bayes Factors/Likelihood Ratios
• Using the fit posteriors and priors, we can obtain the likelihood ratios (Tippett; Ramos)
• (Figure panels: known match LR values; known non-match LR values)
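LR values for known matches and known non-matches are commonly summarised with Tippett plots: for each threshold, the proportion of LRs in each ground-truth group that exceed it. A minimal sketch with made-up LRs (not results from this study); in practice the thresholds usually run over a log10(LR) axis.

```python
def tippett_curve(lr_values, thresholds):
    """Proportion of LRs strictly greater than each threshold (one Tippett curve)."""
    n = len(lr_values)
    return [sum(lr > t for lr in lr_values) / n for t in thresholds]


# Hypothetical LRs (not results from this study)
km_lrs = [50.0, 200.0, 0.5, 1000.0]   # known-match comparisons
knm_lrs = [0.01, 0.2, 3.0, 0.05]      # known-non-match comparisons
thresholds = [0.1, 1.0, 10.0, 100.0]

print(tippett_curve(km_lrs, thresholds))   # [1.0, 0.75, 0.75, 0.5]
print(tippett_curve(knm_lrs, thresholds))  # [0.5, 0.25, 0.0, 0.0]
```

Reading the curves at LR = 1 gives the rates of misleading evidence: here 25% of known matches have LR < 1 and 25% of known non-matches have LR > 1.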

Acknowledgements
• Professor Chris Saunders (SDSU)
• Professor Christophe Champod (Lausanne)
• Alan Zheng (NIST)
• Research Team: Dr. Martin Baiker; Ms. Helen Chan; Ms. Julie Cohen; Mr. Peter Diaczuk; Dr. Peter De Forest; Mr. Antonio Del Valle; Ms. Carol Gambino; Dr. James Hamby; Ms. Alison Hartwell, Esq.; Dr. Thomas Kubic, Esq.; Ms. Loretta Kuo; Ms. Frani Kammerman; Dr. Brooke Kammrath; Mr. Chris Luckie; Off. Patrick McLaughlin; Dr. Linton Mohammed; Mr. Nicholas Petraco; Dr. Dale Purcel; Ms. Stephanie Pollut; Dr. Peter Pizzola; Dr. Graham Rankin; Dr. Jacqueline Speir; Dr. Peter Shenkin; Ms. Rebecca Smith; Mr. Chris Singh; Mr. Peter Tytell; Ms. Elizabeth Willie; Ms. Melodie Yu; Dr. Peter Zoon
