Bayesian Learning for Models of Human Speech Perception

Mark Hasegawa-Johnson, University of Illinois at Urbana-Champaign

1. Review of Psychological Results

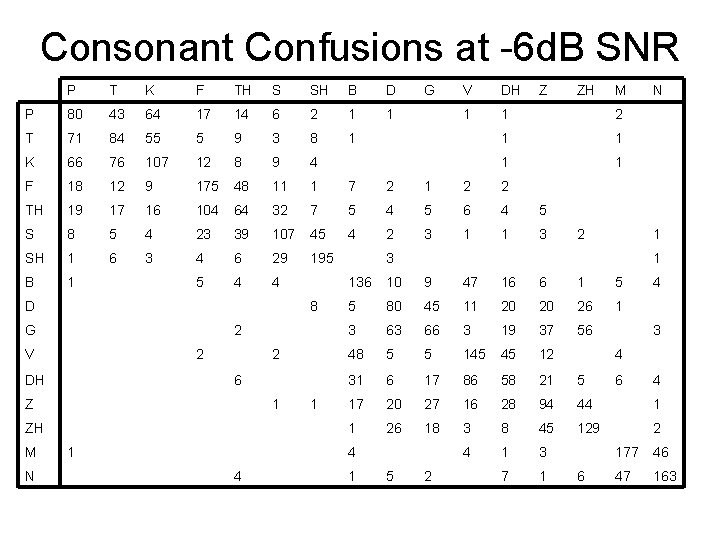

1. A. Independence of Distinctive Feature Errors (Miller and Nicely, 1955; Allen, 2003)

- Experimental Method:
  - Subjects listen to nonsense syllables mixed with noise (white noise or BPF)
  - Subjects write the consonant they hear
- Results:

Consonant Confusions at -6 dB SNR (confusion matrix of spoken consonant by reported consonant, over P, T, K, F, TH, S, SH, B, D, G, V, DH, Z, ZH, M, N)
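The independence claim can be checked directly on a confusion matrix: if errors on two distinctive features are independent, the probability of receiving both features correctly should be close to the product of the per-feature probabilities. The sketch below is a minimal illustration of that check. The four-consonant subset, the two-feature coding, and all counts are hypothetical values invented for the example (constructed so that independence holds exactly); they are not taken from Miller and Nicely's data.

```python
# Hypothetical two-feature coding of four stop consonants (an assumption
# made for this sketch, not the full Miller and Nicely feature system).
VOICED = {"P": False, "T": False, "B": True, "D": True}
PLACE = {"P": "labial", "T": "alveolar", "B": "labial", "D": "alveolar"}

# Invented confusion counts: COUNTS[spoken][heard], 100 trials per row.
COUNTS = {
    "P": {"P": 54, "T": 36, "B": 6, "D": 4},
    "T": {"P": 36, "T": 54, "B": 4, "D": 6},
    "B": {"P": 6, "T": 4, "B": 54, "D": 36},
    "D": {"P": 4, "T": 6, "B": 36, "D": 54},
}


def feature_accuracy(counts, *features):
    """Fraction of trials on which every listed feature was heard correctly."""
    total = 0
    correct = 0
    for spoken, row in counts.items():
        for heard, n in row.items():
            total += n
            if all(f[spoken] == f[heard] for f in features):
                correct += n
    return correct / total


p_voicing = feature_accuracy(COUNTS, VOICED)
p_place = feature_accuracy(COUNTS, PLACE)
p_both = feature_accuracy(COUNTS, VOICED, PLACE)

# Under independence, p_both should be close to p_voicing * p_place.
print(p_voicing, p_place, p_both, p_voicing * p_place)
```

On real data the two quantities will not match exactly, so a statistical test of independence would be the natural next step.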

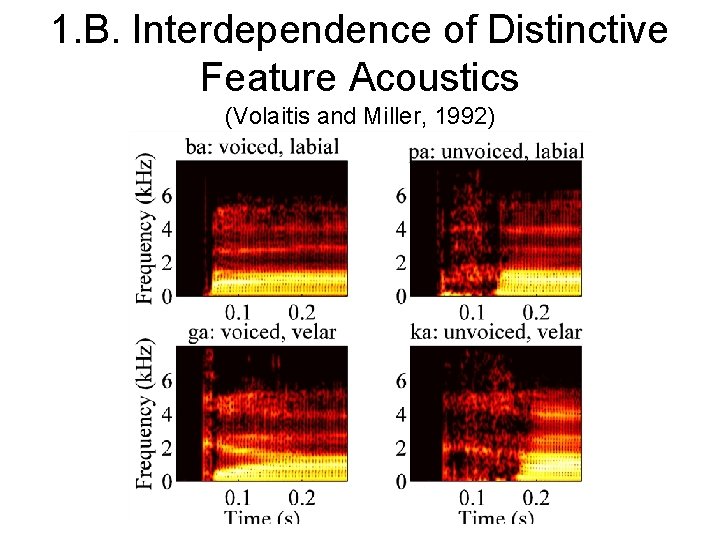

1. B. Interdependence of Distinctive Feature Acoustics (Volaitis and Miller, 1992)

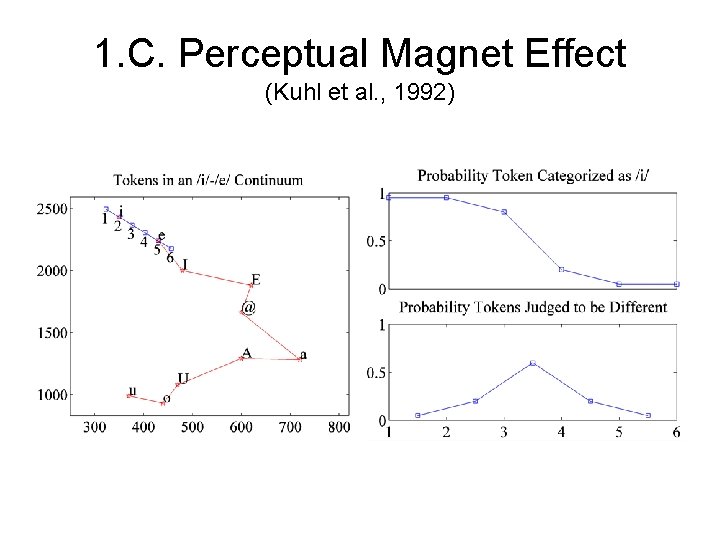

1. C. Perceptual Magnet Effect (Kuhl et al., 1992)

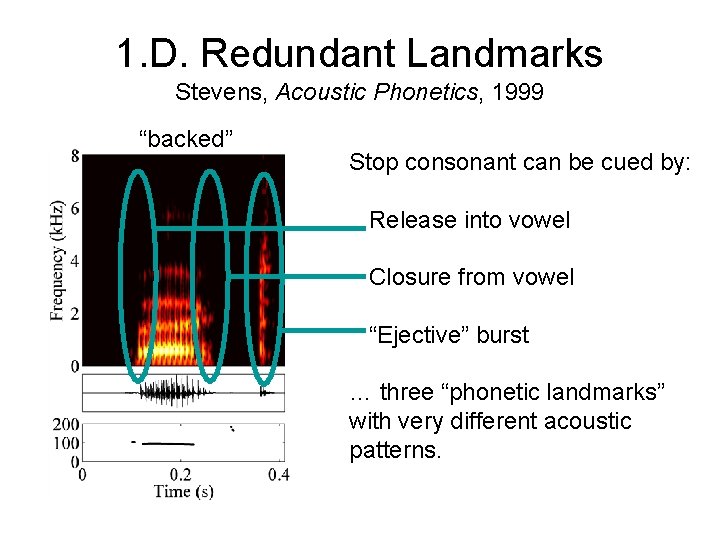

1. D. Redundant Landmarks (Stevens, Acoustic Phonetics, 1999)

Example: “backed”. The stop consonant can be cued by:

- Release into vowel
- Closure from vowel
- “Ejective” burst

… three “phonetic landmarks” with very different acoustic patterns.

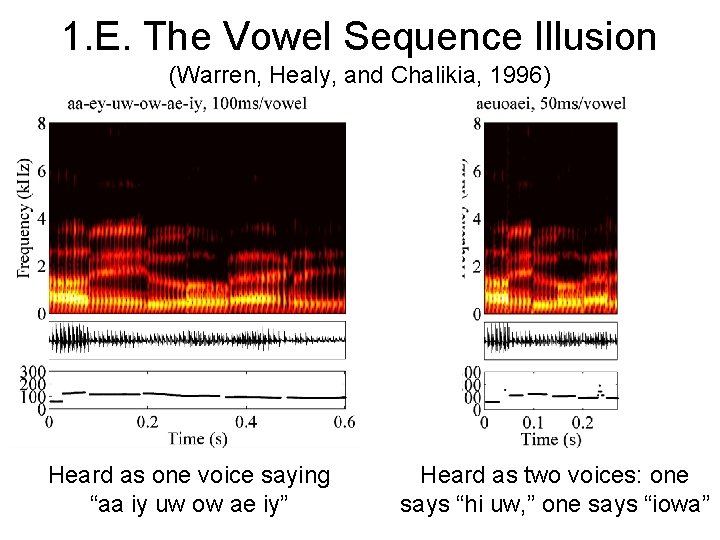

1. E. The Vowel Sequence Illusion (Warren, Healy, and Chalikia, 1996)

- Heard as one voice saying “aa iy uw ow ae iy”
- Heard as two voices: one says “hi uw,” one says “iowa”

2. A Mathematical Model

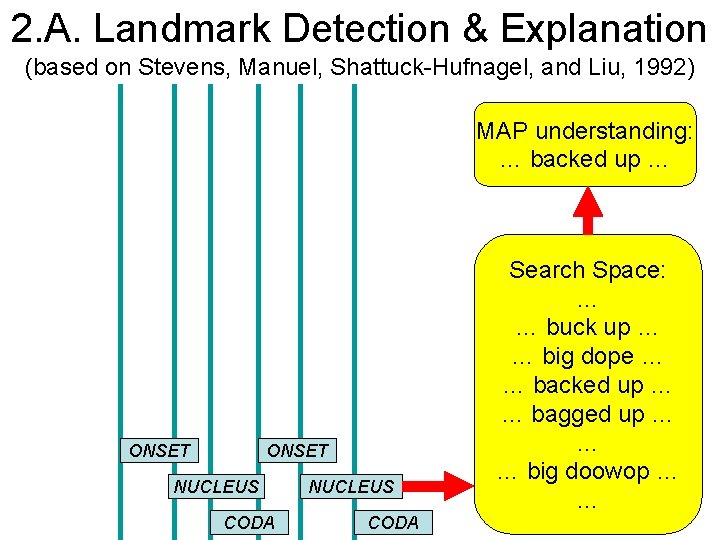

2. A. Landmark Detection & Explanation (based on Stevens, Manuel, Shattuck-Hufnagel, and Liu, 1992)

- Landmarks: ONSET, NUCLEUS, CODA
- Search Space: … buck up … big dope … backed up … bagged up … big doowop …
- MAP understanding: … backed up …
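MAP understanding means choosing the candidate W in the search space that maximizes the posterior P(W | O), which by Bayes' rule is the candidate that maximizes P(O | W) P(W). The sketch below shows that selection step in the log domain. The candidate phrases come from the slide; every score is a hypothetical number invented for illustration, and the function name `map_understanding` is likewise an assumption of this sketch.

```python
# Hypothetical log-probabilities, invented purely for illustration.
LOG_PRIOR = {          # log P(W)
    "buck up": -3.0,
    "big dope": -6.0,
    "backed up": -2.5,
    "bagged up": -4.0,
    "big doowop": -9.0,
}
LOG_LIKELIHOOD = {     # log P(O | W) for the observed landmarks O
    "buck up": -5.0,
    "big dope": -7.0,
    "backed up": -4.0,
    "bagged up": -4.5,
    "big doowop": -8.0,
}


def map_understanding(log_prior, log_likelihood):
    """Return the candidate W maximizing log P(W) + log P(O | W)."""
    return max(log_prior, key=lambda w: log_prior[w] + log_likelihood[w])


best = map_understanding(LOG_PRIOR, LOG_LIKELIHOOD)
print(best)
```

With these invented scores the sketch selects "backed up". In a real system the search space is far too large to enumerate, so the maximization would be carried out by a dynamic-programming search rather than a flat `max`.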

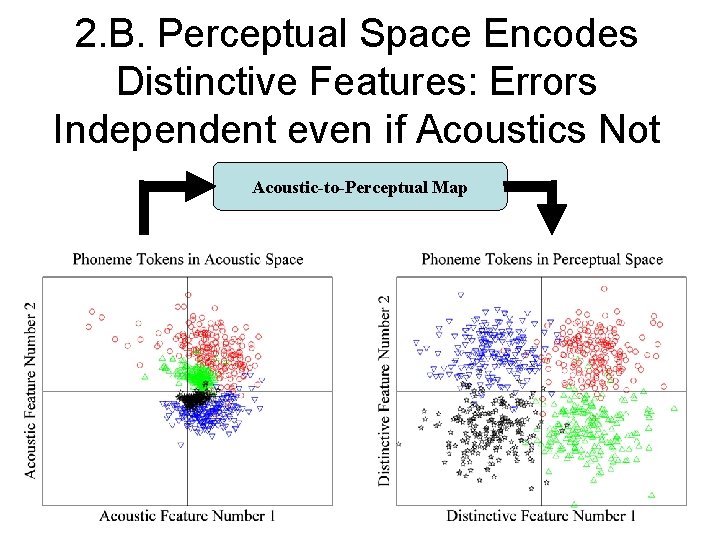

2. B. Perceptual Space Encodes Distinctive Features: Errors Are Independent Even if Acoustics Are Not

Acoustic-to-Perceptual Map

Problem Formulation: Acoustic-to-Perceptual Mapping

3. A Machine Learning Model

3. A. Acoustic-to-Perceptual Transform, Option #1: ML Learning (Omar & Hasegawa-Johnson, Trans. SP, in press)

3. A. Acoustic-to-Perceptual Transform, Option #2: Implied by SVM (Niyogi & Burges, tech report 2002)

3. B. Irregularly Sampled Observations, Option #1: Baum-Welch (Hasegawa-Johnson, tech report 2003)
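For orientation, the sketch below shows textbook Baum-Welch re-estimation for a discrete-observation HMM, using scaled forward and backward passes. It is not the method of the cited tech report. It assumes, purely for illustration, that each detected landmark contributes one discrete symbol, so the observation sequence is indexed by landmark rather than by a fixed frame clock. All parameter values and the observation sequence are hypothetical.

```python
import math


def forward_backward(obs, pi, A, B):
    """Scaled forward-backward pass; returns (gamma, xi, log-likelihood)."""
    T, N = len(obs), len(pi)
    alpha = [[0.0] * N for _ in range(T)]
    scale = [0.0] * T
    alpha[0] = [pi[i] * B[i][obs[0]] for i in range(N)]
    scale[0] = sum(alpha[0])
    alpha[0] = [a / scale[0] for a in alpha[0]]
    for t in range(1, T):
        for j in range(N):
            alpha[t][j] = B[j][obs[t]] * sum(
                alpha[t - 1][i] * A[i][j] for i in range(N))
        scale[t] = sum(alpha[t])
        alpha[t] = [a / scale[t] for a in alpha[t]]
    beta = [[1.0] * N for _ in range(T)]
    for t in range(T - 2, -1, -1):
        for i in range(N):
            beta[t][i] = sum(
                A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j]
                for j in range(N)) / scale[t + 1]
    # gamma[t][i]: posterior of state i at step t; xi[t][i][j]: posterior
    # of the transition i -> j between steps t and t + 1.
    gamma = [[alpha[t][i] * beta[t][i] for i in range(N)] for t in range(T)]
    xi = [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j]
            / scale[t + 1] for j in range(N)] for i in range(N)]
          for t in range(T - 1)]
    return gamma, xi, sum(math.log(s) for s in scale)


def baum_welch_step(obs, pi, A, B):
    """One EM update; returns new (pi, A, B) and the old log-likelihood."""
    gamma, xi, log_lik = forward_backward(obs, pi, A, B)
    T, N, M = len(obs), len(pi), len(B[0])
    new_pi = gamma[0][:]
    new_A = [[sum(xi[t][i][j] for t in range(T - 1))
              / sum(gamma[t][i] for t in range(T - 1))
              for j in range(N)] for i in range(N)]
    new_B = [[sum(gamma[t][i] for t in range(T) if obs[t] == k)
              / sum(gamma[t][i] for t in range(T))
              for k in range(M)] for i in range(N)]
    return new_pi, new_A, new_B, log_lik


# Hypothetical landmark-symbol sequence and initial parameters.
obs = [0, 1, 2, 0, 1, 2, 0, 0, 1, 2]
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.5, 0.3, 0.2], [0.1, 0.4, 0.5]]

log_likelihoods = []
for _ in range(10):
    pi, A, B, log_lik = baum_welch_step(obs, pi, A, B)
    log_likelihoods.append(log_lik)
print(log_likelihoods)
```

Because each step is an EM update, the recorded log-likelihood should never decrease from one iteration to the next, which is a convenient sanity check on any implementation.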

3. B. Irregularly Sampled Observations, Option #2: Label-Sequence SVM (based on Altun & Hofmann, EUROSPEECH 2003)

Conclusions

- Proposed: a machine learning model consistent with five psychophysical results.
- Characteristics of the model:
  - Front-end processor detects syllable onsets, nuclei, codas (“acoustic landmarks”).
  - Acoustic-to-perceptual mapping (explicit or implied) classifies distinctive feature content of onset, nucleus, and coda of each syllable.
- Experimental tests in progress
