Bayesian cognitive modelling

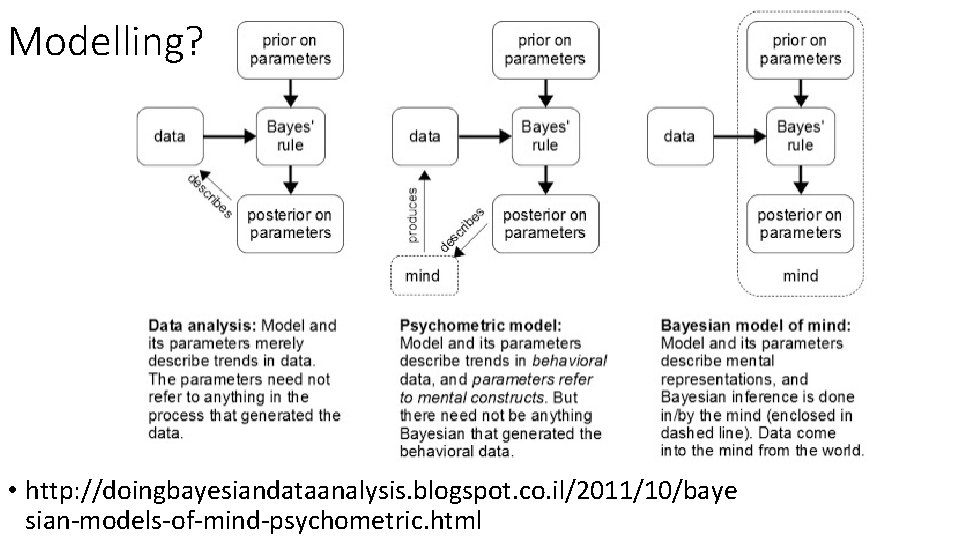

Modelling?
• http://doingbayesiandataanalysis.blogspot.co.il/2011/10/bayesian-models-of-mind-psychometric.html

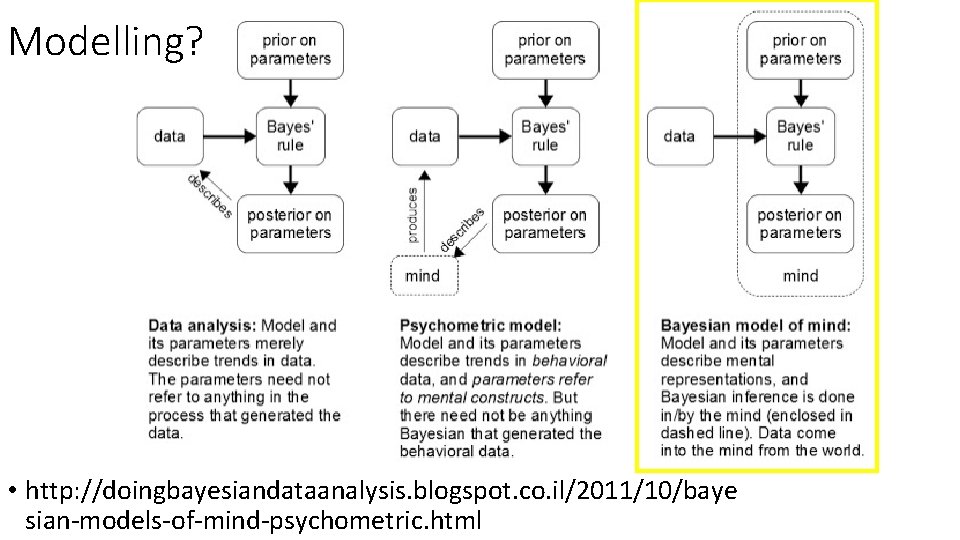

The Bayesian brain hypothesis
1. Take a cognitive task, or a more general cognitive or social function.
2. Build a normative model describing how an optimal Bayesian mechanism would perform the task.
3. Generate predictions and compare the normative model with the actual data.

What functions can be Bayesian?
• Sequential updating (e.g. learning tasks)
• The need to invert generative models (i.e. to infer causes from data, e.g. perception)
• Integrating information under uncertainty (e.g. cue integration)
• Functions involving predictions and prediction errors

Today
• The normal/normal model (known variance)
• The simple Kalman filter model
• The normal/normal-inverse gamma model (unknown variance)

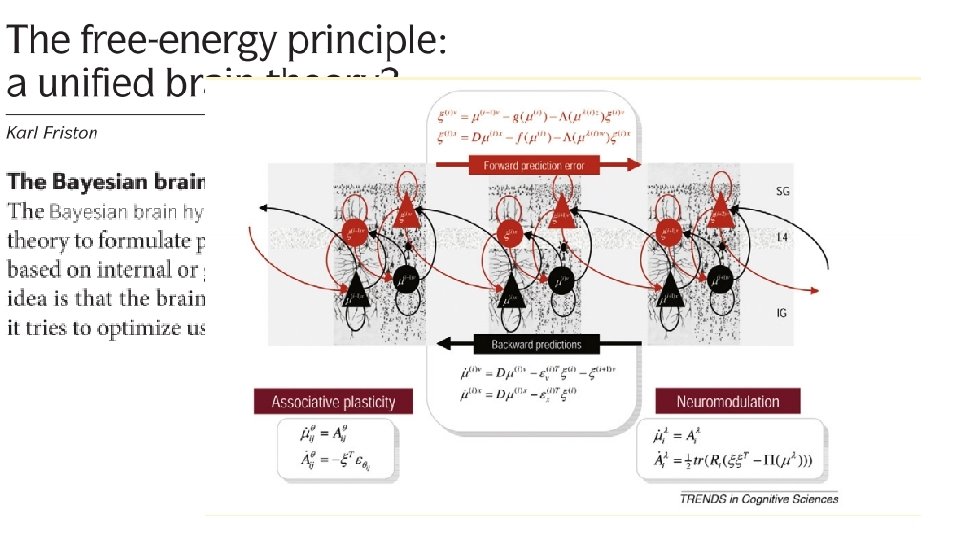

Computational basis
• We talked about MCMC sampling for Bayesian data analysis.
• Bayesian-brain type models usually rely on ‘conjugate priors’ instead.
• This is the classical (pre-computer) approach to Bayesian inference: a mathematical closed-form solution.
• For some likelihood functions there are prior distributions that yield a posterior of a known form, with algebraically derived parameters.
• E.g. for a normal likelihood with known variance, a normal prior on the mean produces a normal posterior.
• More limited than MCMC, but much faster.
• https://en.wikipedia.org/wiki/Conjugate_prior
• A third, mathematically more involved approach is variational Bayesian approximation.
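To make the closed-form idea concrete, here is a minimal sketch (not taken from the slides) that checks the conjugate normal posterior against a brute-force grid approximation of prior times likelihood. The function names, the example data and the grid bounds are illustrative assumptions.

```python
import math

def normal_pdf(x, mean, var):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2 * math.pi * var)

def conjugate_posterior(prior_mean, prior_var, data, noise_var):
    """Closed-form posterior over the mean of normal data with known variance."""
    n = len(data)
    post_var = 1.0 / (1.0 / prior_var + n / noise_var)
    post_mean = post_var * (prior_mean / prior_var + sum(data) / noise_var)
    return post_mean, post_var

def grid_posterior(prior_mean, prior_var, data, noise_var,
                   lo=-10.0, hi=10.0, steps=4001):
    """Numerical posterior: evaluate prior * likelihood on a grid and normalise."""
    width = (hi - lo) / (steps - 1)
    grid = [lo + i * width for i in range(steps)]
    weights = []
    for mu in grid:
        w = normal_pdf(mu, prior_mean, prior_var)
        for x in data:
            w *= normal_pdf(x, mu, noise_var)
        weights.append(w)
    total = sum(weights)
    mean = sum(m * w for m, w in zip(grid, weights)) / total
    var = sum((m - mean) ** 2 * w for m, w in zip(grid, weights)) / total
    return mean, var

data = [1.2, 0.7, 1.9, 1.4]  # made-up observations
closed = conjugate_posterior(0.0, 4.0, data, 1.0)
numeric = grid_posterior(0.0, 4.0, data, 1.0)
print("closed form:", closed)
print("grid       :", numeric)
```

The two results should agree to several decimal places, but the closed form needs only a handful of arithmetic operations, which is the speed advantage the slide refers to.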

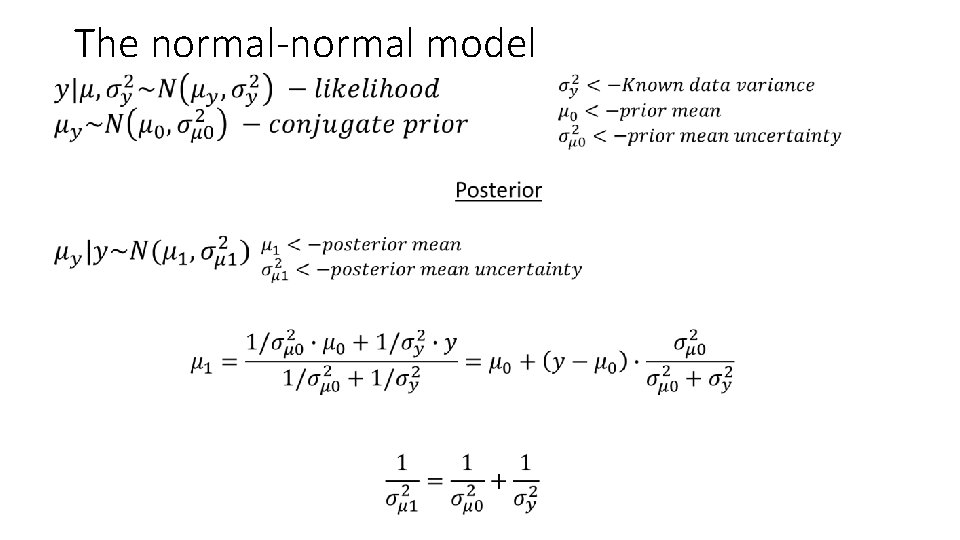

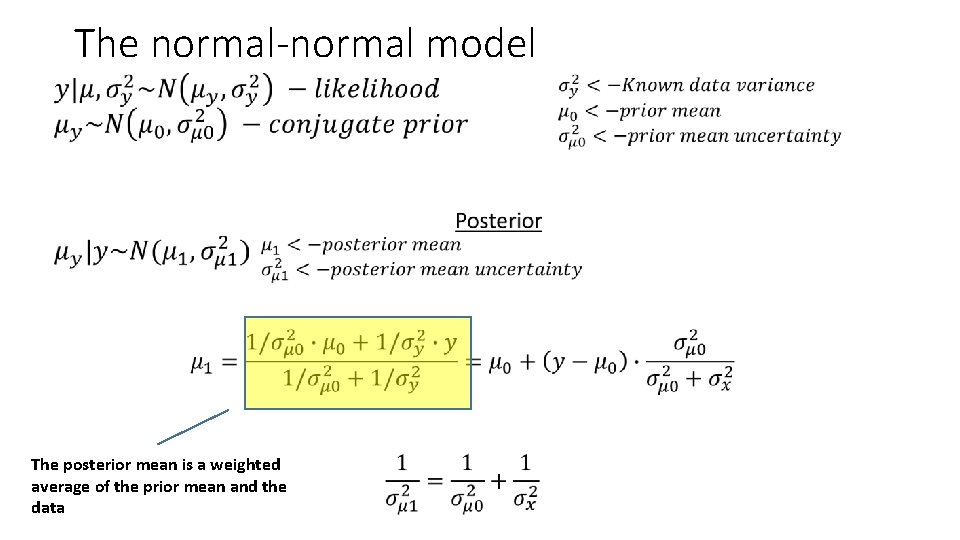

The normal-normal model

The normal-normal model
• The posterior mean is a weighted average of the prior mean and the data
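For reference, the standard textbook form of this result can be written as follows. The notation is an assumption, since the slide's own equations are not recoverable from the text: a prior mean and variance $\mu_0, \sigma_0^2$, a known observation variance $\sigma^2$, and $N$ observations with sample mean $\bar{x}$.

```latex
\begin{aligned}
\mu &\sim \mathcal{N}(\mu_0, \sigma_0^2), \qquad
x_i \mid \mu \sim \mathcal{N}(\mu, \sigma^2), \quad i = 1, \dots, N \\
\frac{1}{\sigma_N^2} &= \frac{1}{\sigma_0^2} + \frac{N}{\sigma^2} \\
\mu_N &= w\,\mu_0 + (1 - w)\,\bar{x}, \qquad
w = \frac{1/\sigma_0^2}{1/\sigma_0^2 + N/\sigma^2}
\end{aligned}
```

The weight $w$ on the prior is the share of the total precision that the prior contributes, so the posterior mean always lies between the prior mean and the sample mean.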

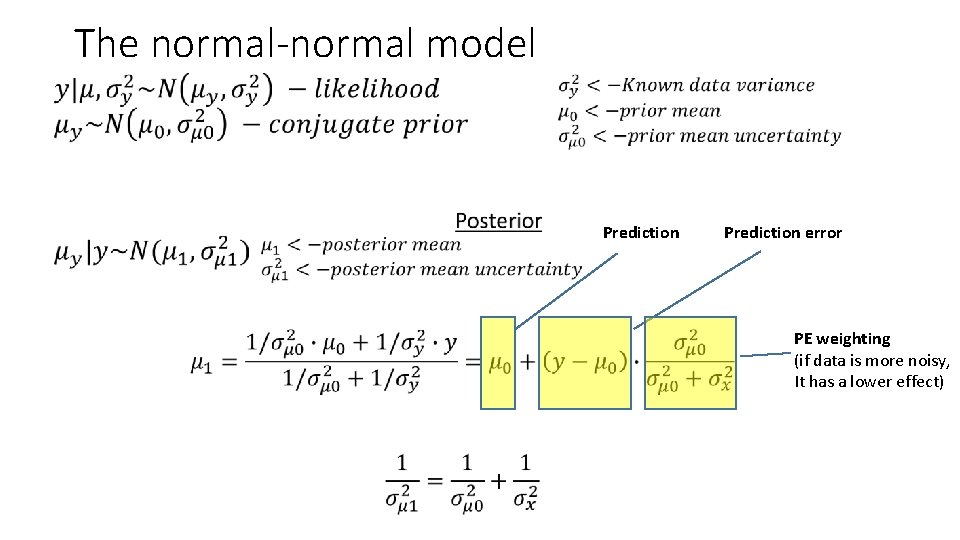

The normal-normal model
• Prediction error (PE) weighting: the noisier the data, the smaller its effect on the estimate
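The same update can be written in prediction-error form. The sketch below is an illustration under assumed names (`pe_update`, `learning_rate`), not code from the slides; it shows that a noisier observation receives a smaller learning rate.

```python
def pe_update(prior_mean, prior_var, observation, noise_var):
    """One normal-normal update written as a prediction-error correction."""
    learning_rate = prior_var / (prior_var + noise_var)  # weight on the PE
    prediction_error = observation - prior_mean
    post_mean = prior_mean + learning_rate * prediction_error
    post_var = (1.0 - learning_rate) * prior_var
    return post_mean, post_var, learning_rate

# Same prior and same observation, two different noise levels.
reliable = pe_update(prior_mean=0.0, prior_var=1.0, observation=2.0, noise_var=1.0)
noisy = pe_update(prior_mean=0.0, prior_var=1.0, observation=2.0, noise_var=4.0)
print("reliable data:", reliable)
print("noisy data   :", noisy)
```

With the reliable observation the estimate moves halfway to the data (learning rate 0.5); with the noisy one it moves only a fifth of the way (learning rate 0.2).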

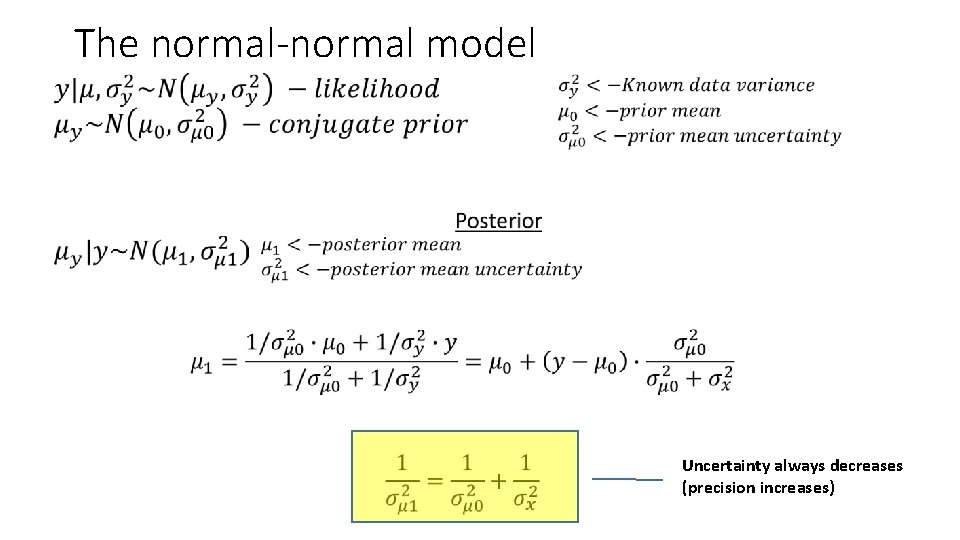

The normal-normal model
• Uncertainty always decreases (precision increases)

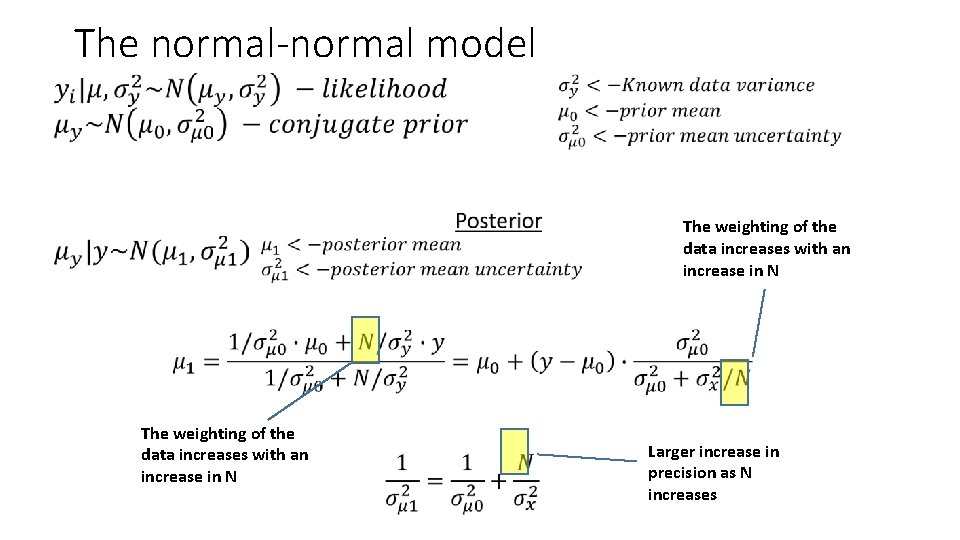

The normal-normal model
• The weighting of the data increases as N increases
• The larger N is, the larger the increase in precision
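A short sketch of how the data weight and the posterior precision grow with N, assuming a prior variance of 1 and an observation variance of 4 (both values, and the function name, are chosen only for illustration).

```python
def data_weight_and_precision(n, prior_var, noise_var):
    """Weight given to the sample mean, and posterior precision, after n observations."""
    prior_precision = 1.0 / prior_var
    data_precision = n / noise_var
    post_precision = prior_precision + data_precision
    return data_precision / post_precision, post_precision

results = [data_weight_and_precision(n, prior_var=1.0, noise_var=4.0)
           for n in (1, 4, 16, 64)]
for n, (weight, precision) in zip((1, 4, 16, 64), results):
    print(f"N={n:3d}  data weight={weight:.3f}  posterior precision={precision:.2f}")
```

Because precisions add, every extra observation raises the posterior precision, so the posterior variance can only shrink under this model.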

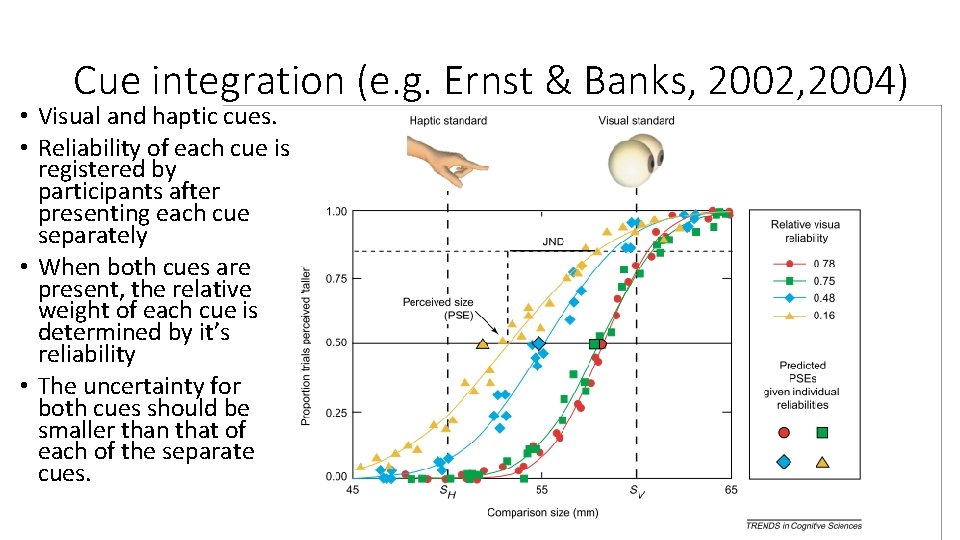

Cue integration (e.g. Ernst & Banks, 2002, 2004)
• Visual and haptic cues.
• Participants register the reliability of each cue when the cues are presented separately.
• When both cues are present, the relative weight of each cue is determined by its reliability.
• The uncertainty of the combined estimate should be smaller than that of either cue alone.
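The reliability-weighted combination described above can be sketched as follows, taking reliability to be the inverse of each cue's variance. The function name and the numbers are hypothetical and are not data from the cited studies.

```python
def integrate_cues(visual_estimate, visual_var, haptic_estimate, haptic_var):
    """Combine two independent Gaussian cues, weighting each by its reliability."""
    visual_reliability = 1.0 / visual_var
    haptic_reliability = 1.0 / haptic_var
    total_reliability = visual_reliability + haptic_reliability
    visual_weight = visual_reliability / total_reliability
    combined = visual_weight * visual_estimate + (1.0 - visual_weight) * haptic_estimate
    combined_var = 1.0 / total_reliability
    return combined, combined_var, visual_weight

# Hypothetical size judgement: vision says 55 (variance 1), touch says 50 (variance 4).
combined, combined_var, visual_weight = integrate_cues(55.0, 1.0, 50.0, 4.0)
print(combined, combined_var, visual_weight)
```

Here vision gets a weight of 0.8, the combined estimate is 54, and the combined variance (0.8) is below both single-cue variances.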

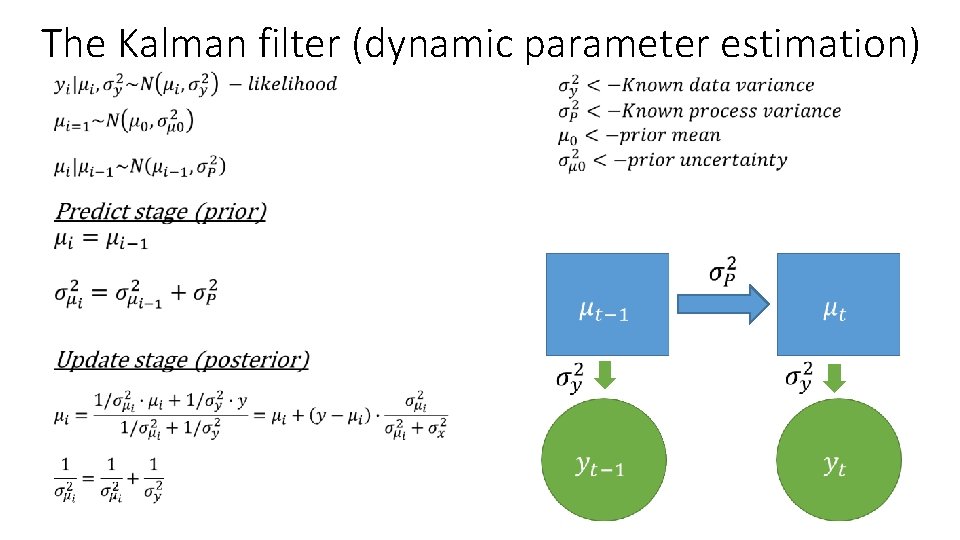

The Kalman filter (dynamic parameter estimation)

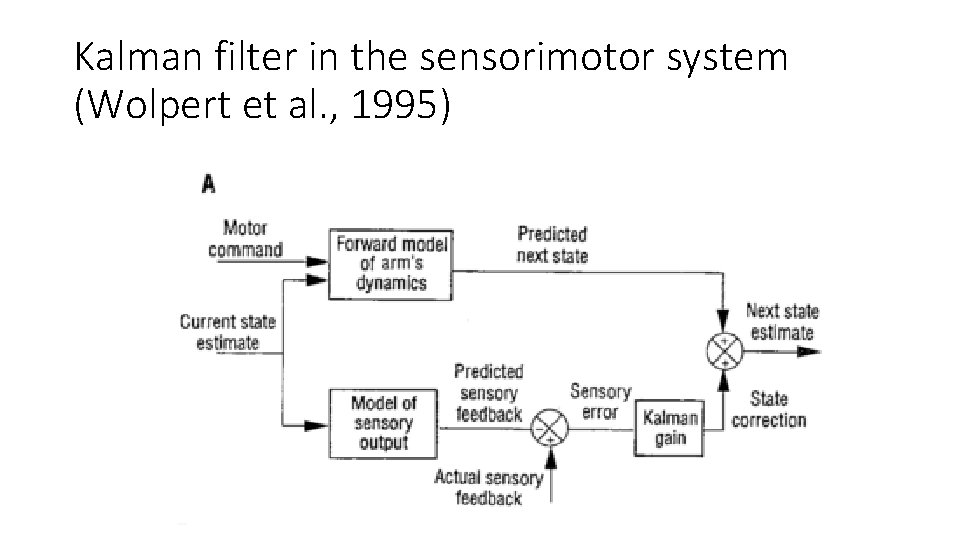

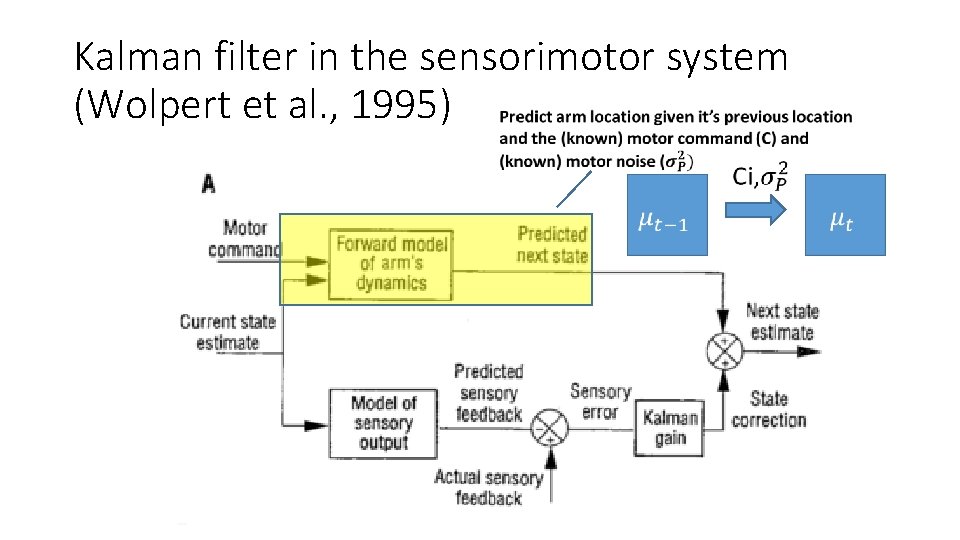

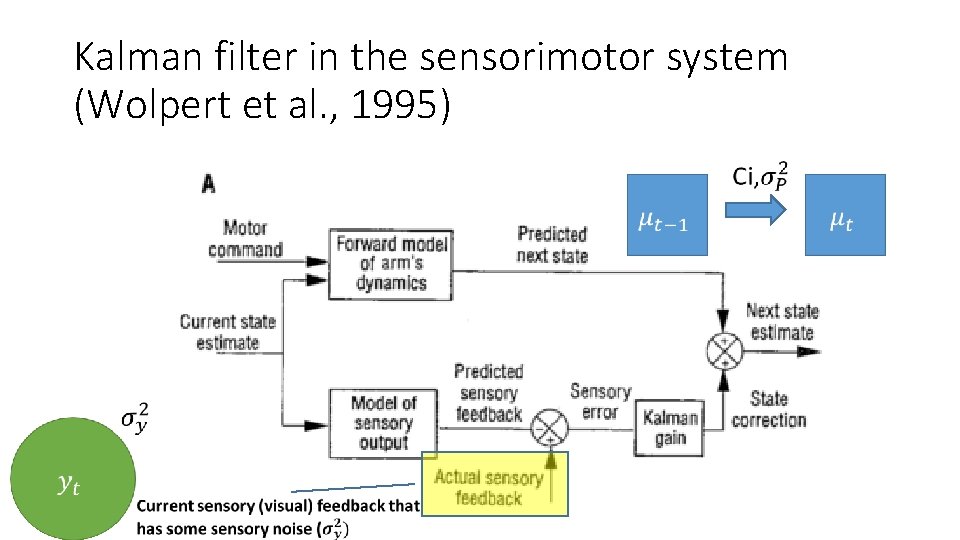
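As a minimal sketch of a simple one-dimensional Kalman filter, assume the hidden quantity follows a random walk with process variance `process_var` and is observed with noise variance `obs_var`. This generative model and all names are assumptions for illustration; the slide's own equations are not recoverable from the text.

```python
def kalman_step(mean, var, observation, process_var, obs_var):
    """One predict-and-update cycle of a 1-D random-walk Kalman filter."""
    # Predict: the state may have drifted, so uncertainty grows.
    pred_mean = mean
    pred_var = var + process_var
    # Update: correct the prediction by a gain-weighted prediction error.
    gain = pred_var / (pred_var + obs_var)
    new_mean = pred_mean + gain * (observation - pred_mean)
    new_var = (1.0 - gain) * pred_var
    return new_mean, new_var, gain

mean, var = 0.0, 10.0
gains = []
for observation in [1.0, 1.2, 0.8, 1.1] * 10:  # made-up observations
    mean, var, gain = kalman_step(mean, var, observation,
                                  process_var=1.0, obs_var=1.0)
    gains.append(gain)
print(round(gains[0], 3), round(gains[-1], 3))
```

Unlike the steady-state normal-normal model, the gain does not shrink toward zero: with these settings it settles near 0.618, so the filter keeps responding to new prediction errors.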

Kalman filter in the sensorimotor system (Wolpert et al., 1995)

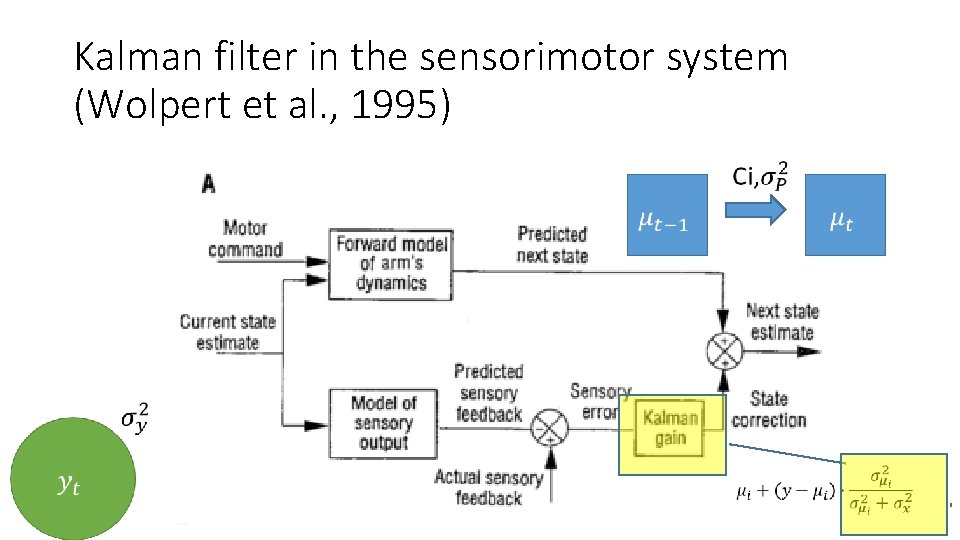

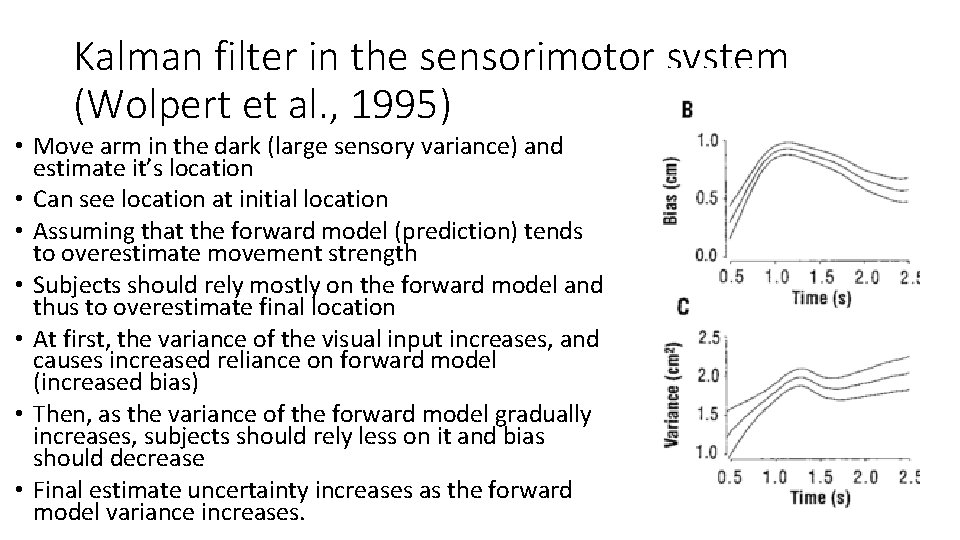

Kalman filter in the sensorimotor system (Wolpert et al., 1995)
• Subjects move their arm in the dark (large sensory variance) and estimate its location.
• The arm is visible at its initial location.
• Assume that the forward model (prediction) tends to overestimate movement strength.
• Subjects should then rely mostly on the forward model and thus overestimate the final location.
• At first, the variance of the visual input increases, which increases reliance on the forward model (increased bias).
• Then, as the variance of the forward model gradually increases, subjects should rely less on it and the bias should decrease.
• The uncertainty of the final estimate increases as the forward model variance increases.

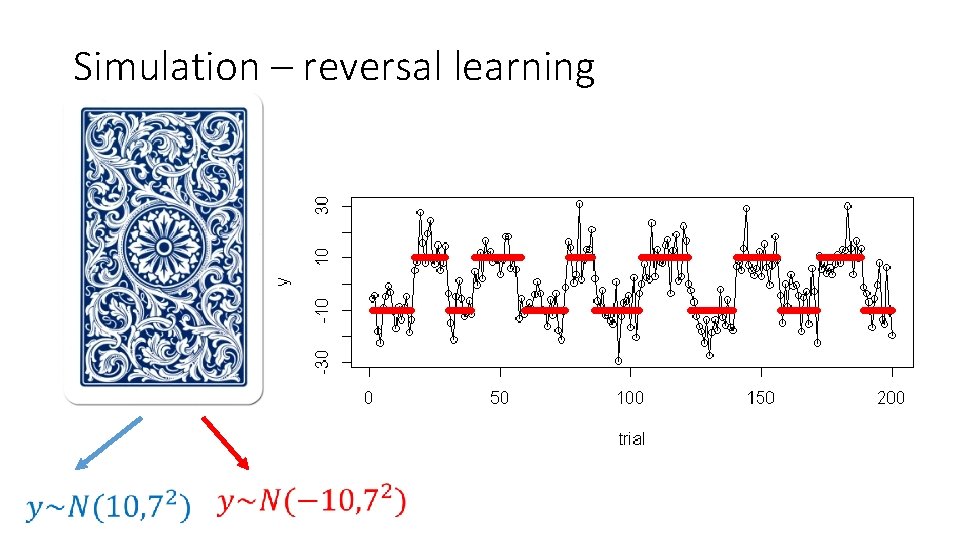

Simulation – reversal learning

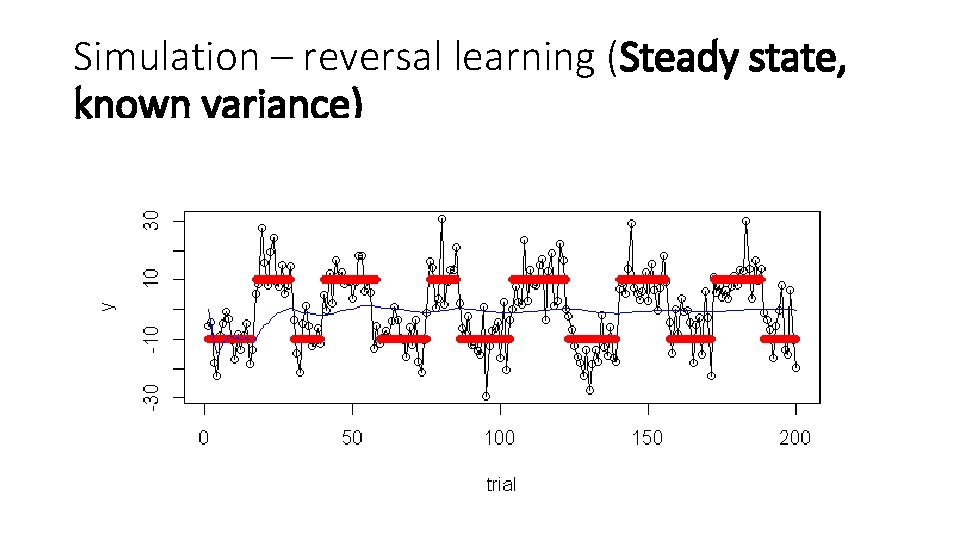

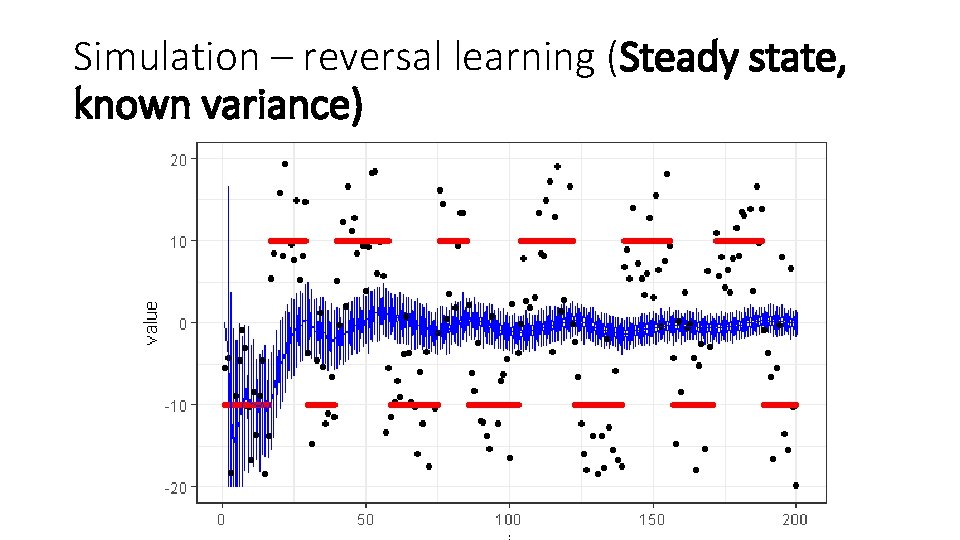

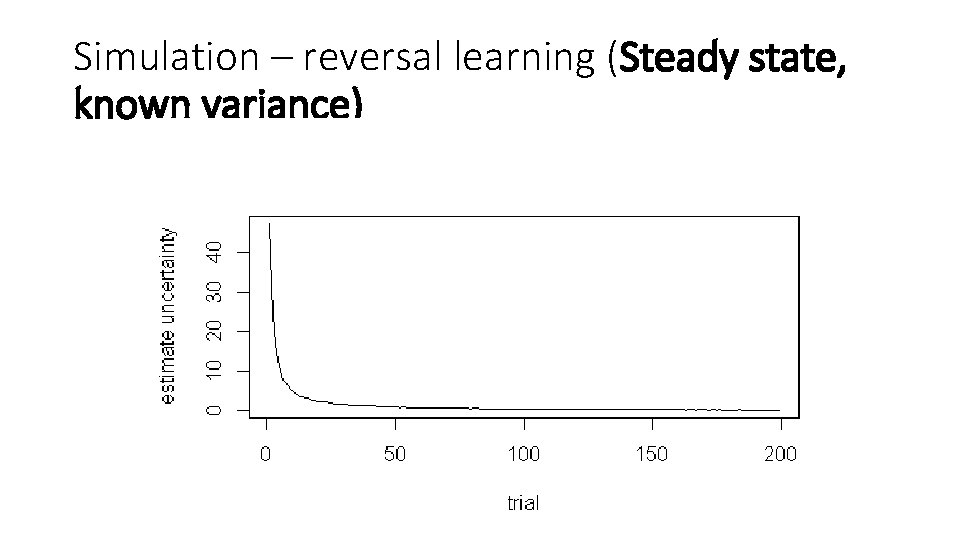

Simulation – reversal learning (Steady state, known variance)

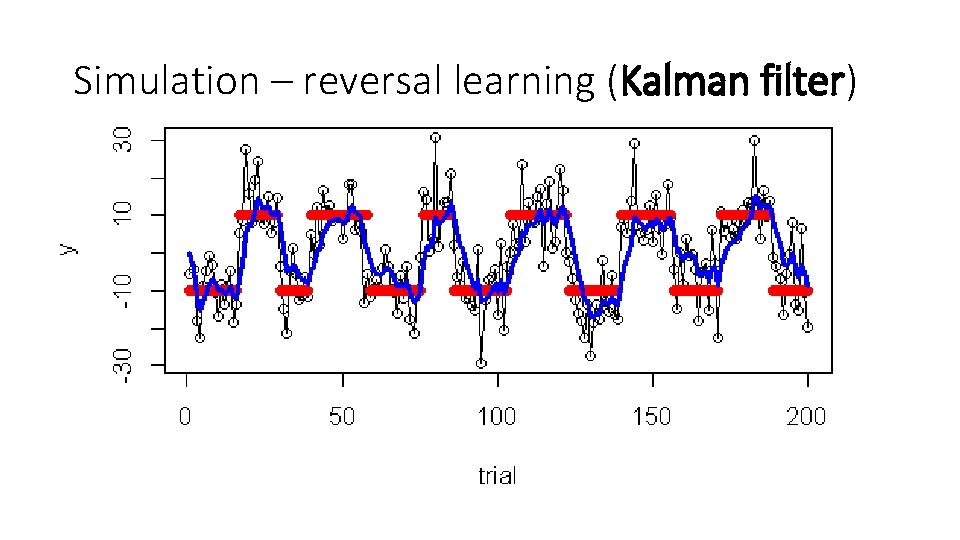

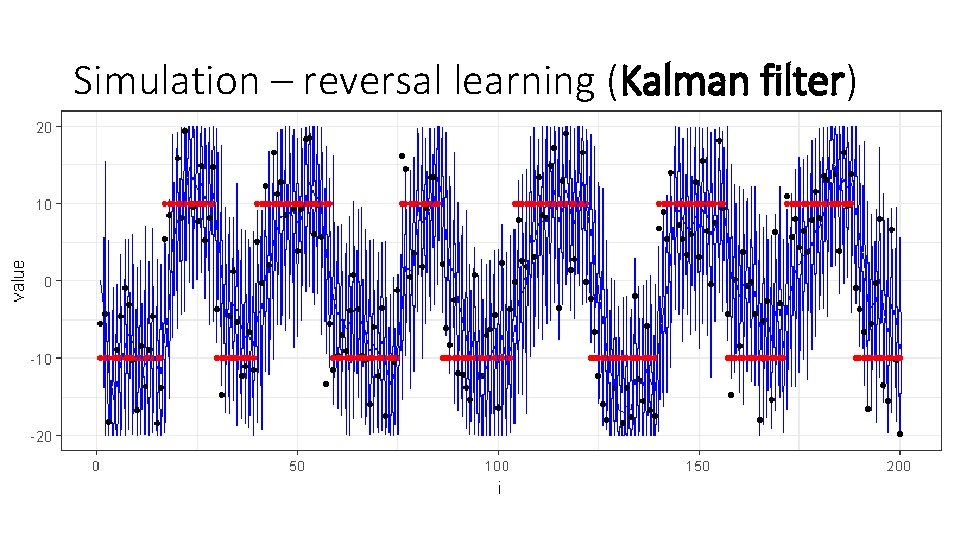

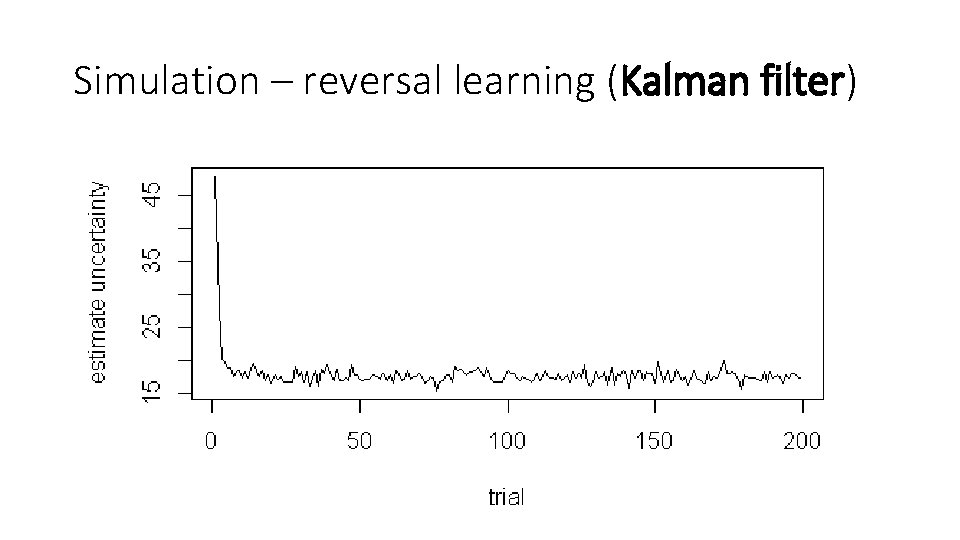

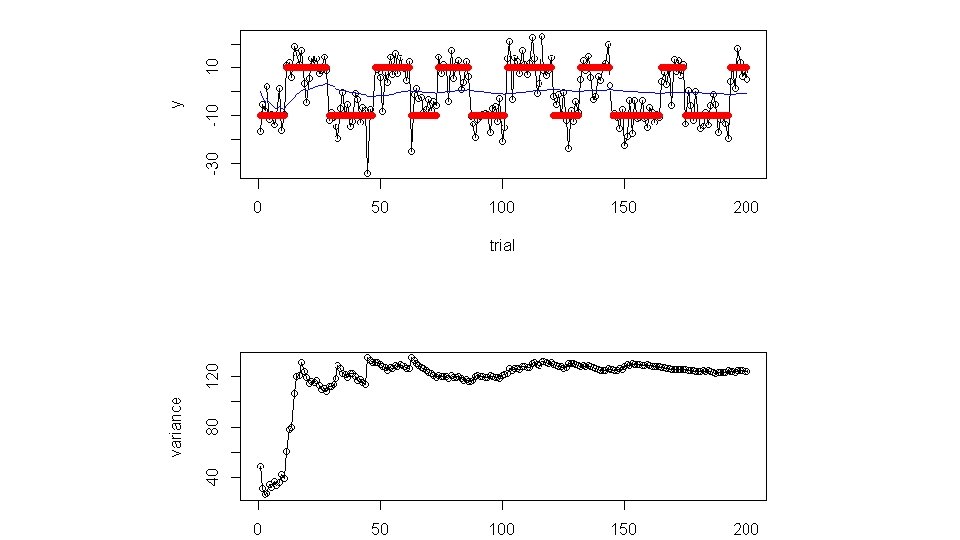
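A simulation of this kind can be sketched as below. The task design is an assumption made for illustration (outcomes with mean +1 for 50 trials, then mean -1 for 50 trials, unit noise); the slides' actual simulation settings are not given in the text.

```python
import random

def steady_state_learner(observations, prior_mean=0.0, prior_var=1.0, noise_var=1.0):
    """Normal-normal updating that assumes the true mean never changes."""
    mean, var = prior_mean, prior_var
    estimates, learning_rates = [], []
    for y in observations:
        rate = var / (var + noise_var)   # shrinks as evidence accumulates
        mean = mean + rate * (y - mean)
        var = (1.0 - rate) * var
        estimates.append(mean)
        learning_rates.append(rate)
    return estimates, learning_rates

def reversal_task(n_per_phase=50, noise_sd=1.0, seed=0):
    """Outcomes with mean +1, then mean -1 after the reversal (hypothetical design)."""
    rng = random.Random(seed)
    means = [1.0] * n_per_phase + [-1.0] * n_per_phase
    return [rng.gauss(m, noise_sd) for m in means]

observations = reversal_task()
estimates, rates = steady_state_learner(observations)
print("estimate just before reversal:", round(estimates[49], 2))
print("estimate at the end          :", round(estimates[-1], 2))
print("final learning rate          :", round(rates[-1], 4))
```

Because the learning rate falls roughly as 1/N, the learner is slow to respond after the reversal: its final estimate ends up close to the average of all outcomes rather than near the new mean.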

Simulation – reversal learning (Kalman filter)

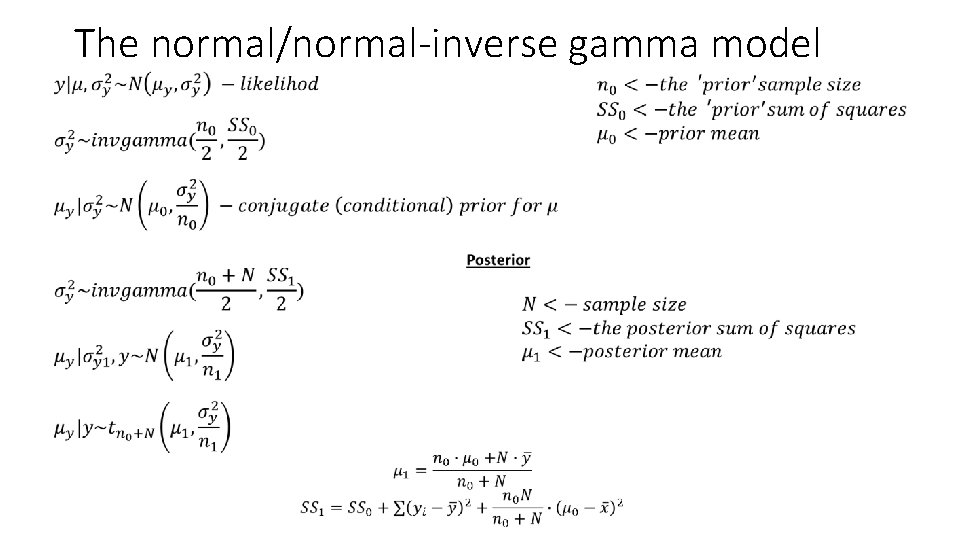
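The Kalman-filter version of the simulation can be sketched in the same way. The task design (mean +1 for 50 trials, then -1 for 50 trials) and the process variance of 0.01 are assumptions chosen for illustration, not values from the slides.

```python
import random

def kalman_learner(observations, process_var, prior_mean=0.0, prior_var=1.0,
                   obs_var=1.0):
    """Random-walk Kalman filter; process_var=0 recovers the steady-state model."""
    mean, var = prior_mean, prior_var
    estimates, gains = [], []
    for y in observations:
        pred_var = var + process_var          # allow for drift in the true mean
        gain = pred_var / (pred_var + obs_var)
        mean = mean + gain * (y - mean)
        var = (1.0 - gain) * pred_var
        estimates.append(mean)
        gains.append(gain)
    return estimates, gains

rng = random.Random(0)
true_means = [1.0] * 50 + [-1.0] * 50        # hypothetical reversal at trial 51
observations = [rng.gauss(m, 1.0) for m in true_means]

kalman_estimates, kalman_gains = kalman_learner(observations, process_var=0.01)
static_estimates, static_gains = kalman_learner(observations, process_var=0.0)
print("Kalman final estimate      :", round(kalman_estimates[-1], 2))
print("steady-state final estimate:", round(static_estimates[-1], 2))
print("final gains                :", round(kalman_gains[-1], 3), round(static_gains[-1], 3))
```

Because the assumed drift keeps the gain from decaying to zero, the Kalman learner keeps tracking after the reversal, whereas the steady-state learner with the same data stays near the overall average.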

The normal/normal-inverse gamma model

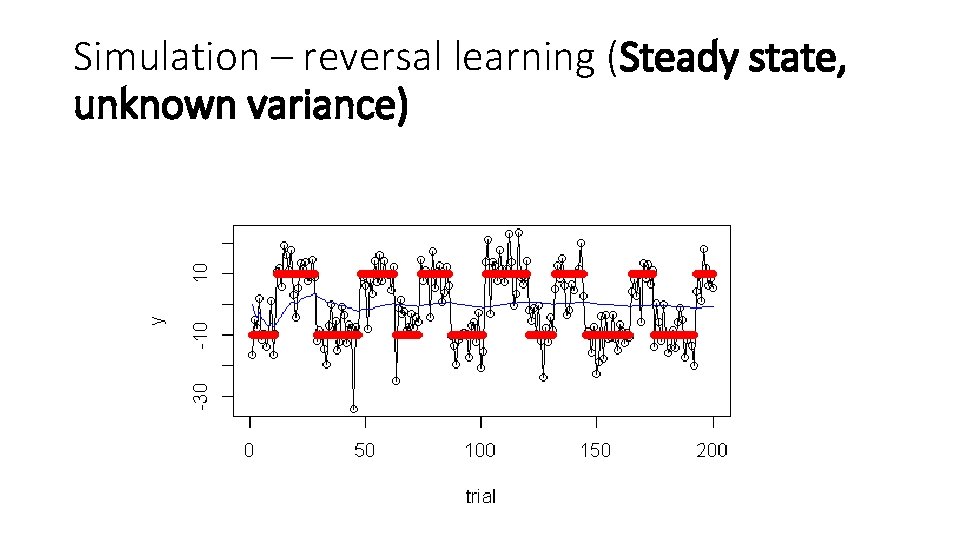

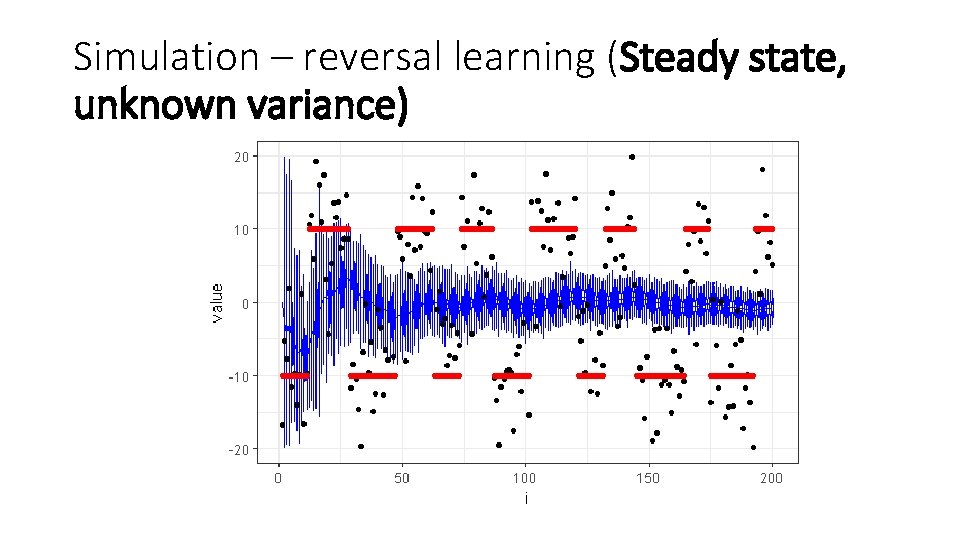

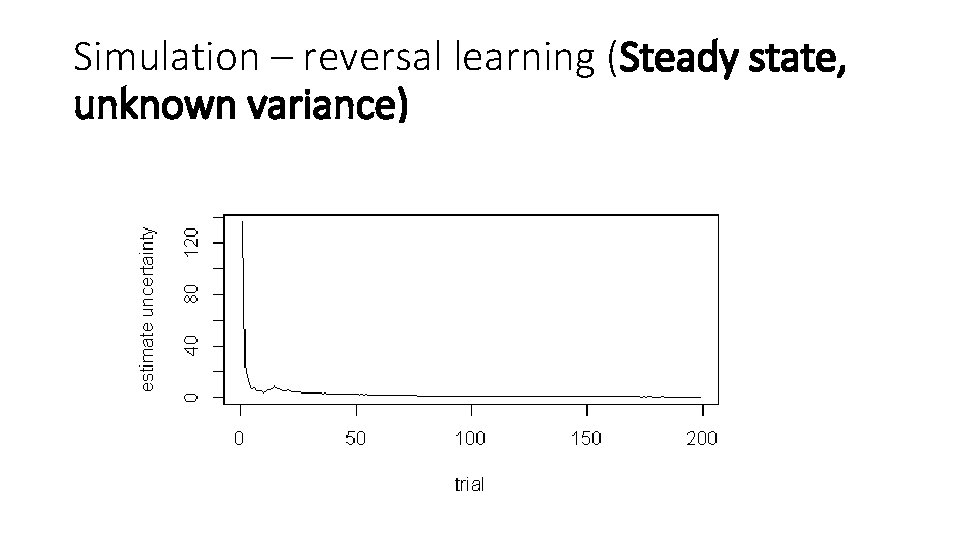
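When the variance is unknown, the conjugate prior over the mean and variance jointly is the normal-inverse gamma distribution. The sketch below uses one common textbook parameterisation (`mu`, `kappa`, `alpha`, `beta`), which is an assumption and may differ from the notation on the slides.

```python
def nig_update(mu, kappa, alpha, beta, x):
    """Update normal-inverse gamma parameters with a single observation x.

    Assumed model: mean | variance ~ Normal(mu, variance / kappa),
                   variance ~ Inverse-Gamma(alpha, beta).
    """
    new_kappa = kappa + 1.0
    new_mu = (kappa * mu + x) / new_kappa
    new_alpha = alpha + 0.5
    new_beta = beta + kappa * (x - mu) ** 2 / (2.0 * new_kappa)
    return new_mu, new_kappa, new_alpha, new_beta

params = (0.0, 1.0, 2.0, 2.0)   # illustrative prior: mu, kappa, alpha, beta
for x in [1.0, 3.0]:            # made-up observations
    params = nig_update(*params, x)

mu, kappa, alpha, beta = params
expected_variance = beta / (alpha - 1.0)   # posterior mean of the variance (alpha > 1)
print("posterior mean estimate    :", round(mu, 4))
print("posterior expected variance:", round(expected_variance, 4))
```

A large prediction error increases `beta`, so a surprising outcome raises the estimated variance as well as shifting the estimated mean.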

Simulation – reversal learning (Steady state, unknown variance)

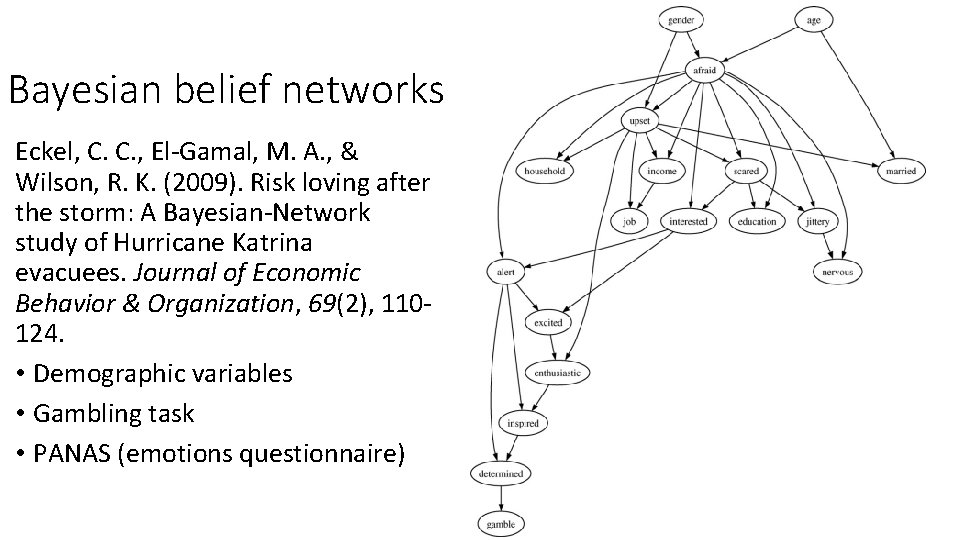

Bayesian belief networks
Eckel, C. C., El-Gamal, M. A., & Wilson, R. K. (2009). Risk loving after the storm: A Bayesian-Network study of Hurricane Katrina evacuees. Journal of Economic Behavior & Organization, 69(2), 110–124.
• Demographic variables
• Gambling task
• PANAS (emotions questionnaire)
