
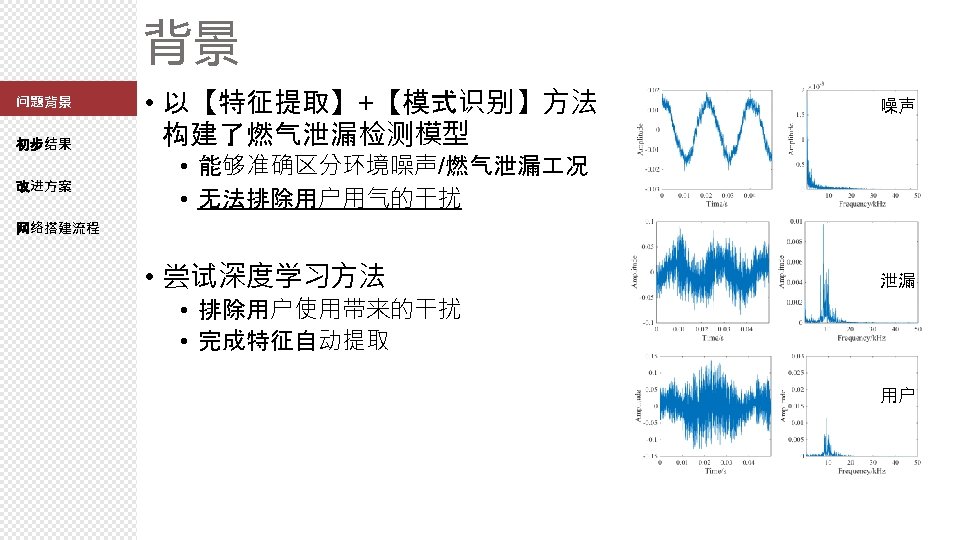

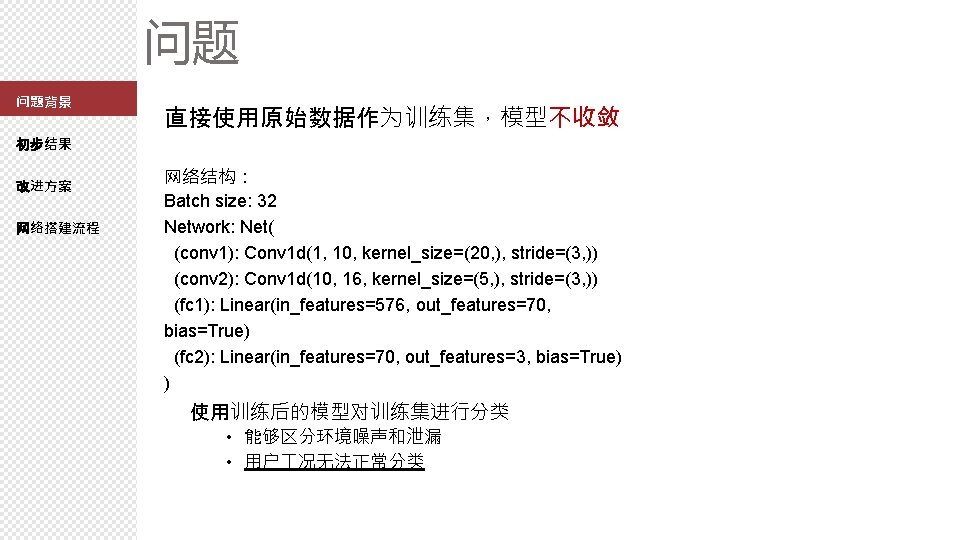

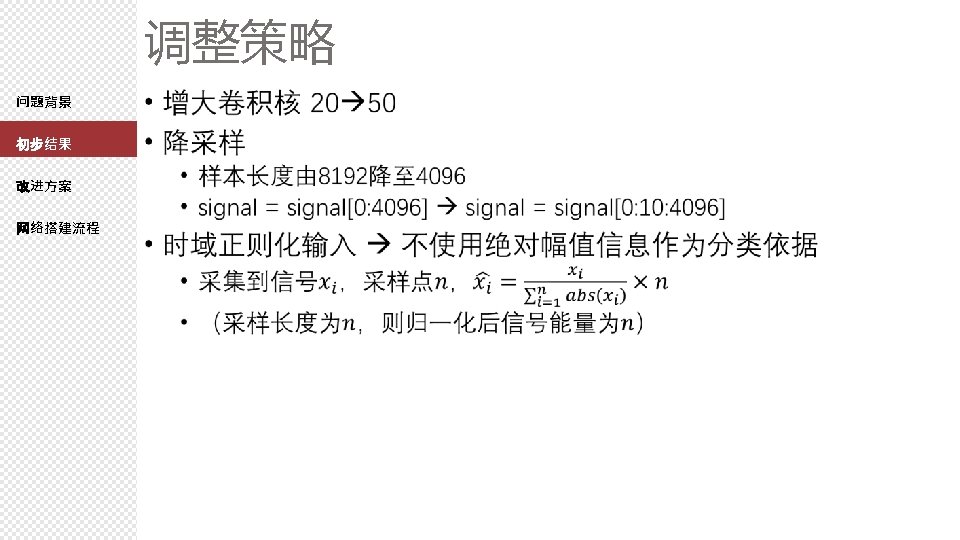

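Problem

Outline: Problem background · Preliminary results · Improvement plan · Network construction workflow

When the raw data is used directly as the training set, the model does not converge.

Network structure:

    Batch size: 32
    Network: Net(
      (conv1): Conv1d(1, 10, kernel_size=(20,), stride=(3,))
      (conv2): Conv1d(10, 16, kernel_size=(5,), stride=(3,))
      (fc1): Linear(in_features=576, out_features=70, bias=True)
      (fc2): Linear(in_features=70, out_features=3, bias=True)
    )

Classifying the training set with the trained model:
• Ambient noise and leaks can be told apart.
• The user case cannot be classified correctly.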
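The `in_features=576` of `fc1` follows from the output lengths of the two convolutions. The sketch below works through that arithmetic in plain Python. It assumes no padding, no dilation, and no pooling between the layers (the printout does not show the forward pass, so this is an assumption). The input length of 350 samples is hypothetical, chosen only because it reproduces 576; the slides do not state the actual window length.

```python
def conv1d_out_len(length, kernel_size, stride):
    """Output length of a 1-D convolution with no padding and dilation 1."""
    return (length - kernel_size) // stride + 1


def flattened_features(length, layers, out_channels):
    """Apply each (kernel_size, stride) pair in turn, then flatten channels x length."""
    for kernel_size, stride in layers:
        length = conv1d_out_len(length, kernel_size, stride)
    return out_channels * length


# Hypothetical input length of 350; conv1 = (20, 3), conv2 = (5, 3), 16 output channels.
features = flattened_features(350, [(20, 3), (5, 3)], out_channels=16)
print(features)  # 576
```

Under the same assumptions, any input length from 347 to 355 gives 576, so the printout alone does not pin down the window length.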

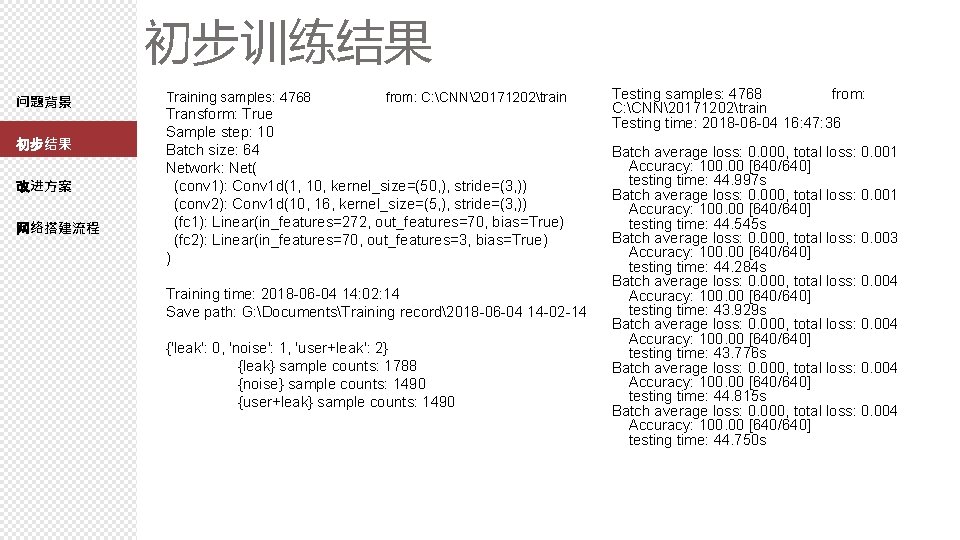

Preliminary training results

    Training samples: 4768 from: C: CNN20171202train
    Transform: True
    Sample step: 10
    Batch size: 64
    Network: Net(
      (conv1): Conv1d(1, 10, kernel_size=(50,), stride=(3,))
      (conv2): Conv1d(10, 16, kernel_size=(5,), stride=(3,))
      (fc1): Linear(in_features=272, out_features=70, bias=True)
      (fc2): Linear(in_features=70, out_features=3, bias=True)
    )
    Training time: 2018-06-04 14:02:14
    Save path: G: DocumentsTraining record2018-06-04 14-02-14
    {'leak': 0, 'noise': 1, 'user+leak': 2}
    {leak} sample counts: 1788
    {noise} sample counts: 1490
    {user+leak} sample counts: 1490

    Testing samples: 4768 from: C: CNN20171202train
    Testing time: 2018-06-04 16:47:36
    Batch average loss: 0.000, total loss: 0.001
    Accuracy: 100.00 [640/640] testing time: 44.997 s
    Batch average loss: 0.000, total loss: 0.001
    Accuracy: 100.00 [640/640] testing time: 44.545 s
    Batch average loss: 0.000, total loss: 0.003
    Accuracy: 100.00 [640/640] testing time: 44.284 s
    Batch average loss: 0.000, total loss: 0.004
    Accuracy: 100.00 [640/640] testing time: 43.929 s
    Batch average loss: 0.000, total loss: 0.004
    Accuracy: 100.00 [640/640] testing time: 43.776 s
    Batch average loss: 0.000, total loss: 0.004
    Accuracy: 100.00 [640/640] testing time: 44.815 s
    Batch average loss: 0.000, total loss: 0.004
    Accuracy: 100.00 [640/640] testing time: 44.750 s

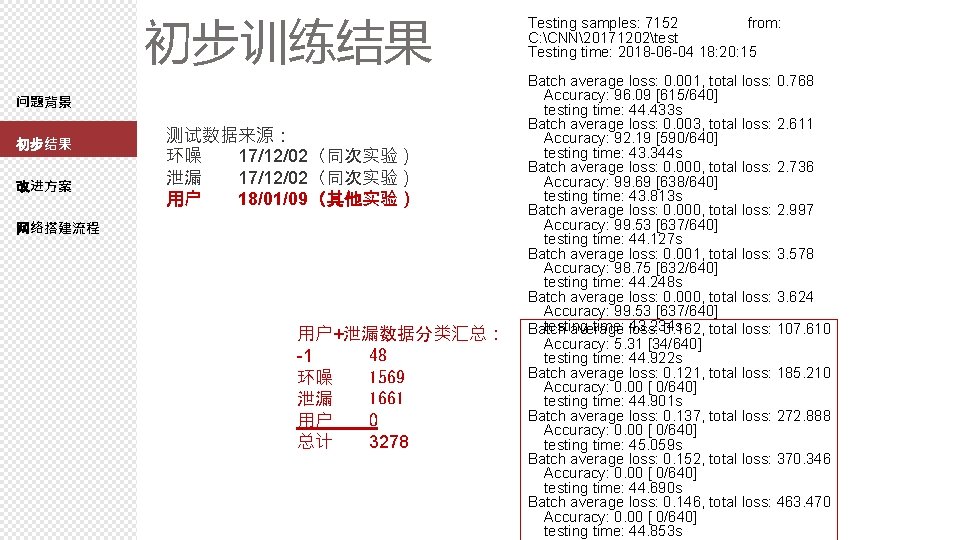

Preliminary training results

Test data sources:

| Class | Date | Experiment |
|---|---|---|
| Ambient noise | 17/12/02 | same experiment |
| Leak | 17/12/02 | same experiment |
| User | 18/01/09 | another experiment |

Classification summary for the user+leak data:

| Predicted class | Count |
|---|---|
| -1 | 48 |
| Ambient noise | 1569 |
| Leak | 1661 |
| User | 0 |
| Total | 3278 |

    Testing samples: 7152 from: C: CNN20171202test
    Testing time: 2018-06-04 18:20:15
    Batch average loss: 0.001, total loss: 0.768
    Accuracy: 96.09 [615/640] testing time: 44.433 s
    Batch average loss: 0.003, total loss: 2.611
    Accuracy: 92.19 [590/640] testing time: 43.344 s
    Batch average loss: 0.000, total loss: 2.736
    Accuracy: 99.69 [638/640] testing time: 43.813 s
    Batch average loss: 0.000, total loss: 2.997
    Accuracy: 99.53 [637/640] testing time: 44.127 s
    Batch average loss: 0.001, total loss: 3.578
    Accuracy: 98.75 [632/640] testing time: 44.248 s
    Batch average loss: 0.000, total loss: 3.624
    Accuracy: 99.53 [637/640] testing time: 43.234 s
    Batch average loss: 0.162, total loss: 107.610
    Accuracy: 5.31 [34/640] testing time: 44.922 s
    Batch average loss: 0.121, total loss: 185.210
    Accuracy: 0.00 [ 0/640] testing time: 44.901 s
    Batch average loss: 0.137, total loss: 272.888
    Accuracy: 0.00 [ 0/640] testing time: 45.059 s
    Batch average loss: 0.152, total loss: 370.346
    Accuracy: 0.00 [ 0/640] testing time: 44.690 s
    Batch average loss: 0.146, total loss: 463.470
    Accuracy: 0.00 [ 0/640] testing time: 44.853 s
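The classification summary above can be checked with a short tally. This sketch only reuses the four counts from the slide. The English label names are translations, and the meaning of the `-1` class is not stated in the source, so it is kept as an opaque label.

```python
# Predicted-class counts for the user+leak test data, copied from the slide.
predicted_counts = {"-1": 48, "noise": 1569, "leak": 1661, "user": 0}

total = sum(predicted_counts.values())

# Share of user+leak samples assigned to each predicted class.
shares = {label: count / total for label, count in predicted_counts.items()}

for label, share in shares.items():
    print(f"{label:>5}: {predicted_counts[label]:5d}  ({share:.1%})")
print(f"total: {total}")
```

The counts add up to the stated total of 3278, and no sample is assigned to the user class, which is consistent with the 0.00 accuracy reported for the last batches in the log.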

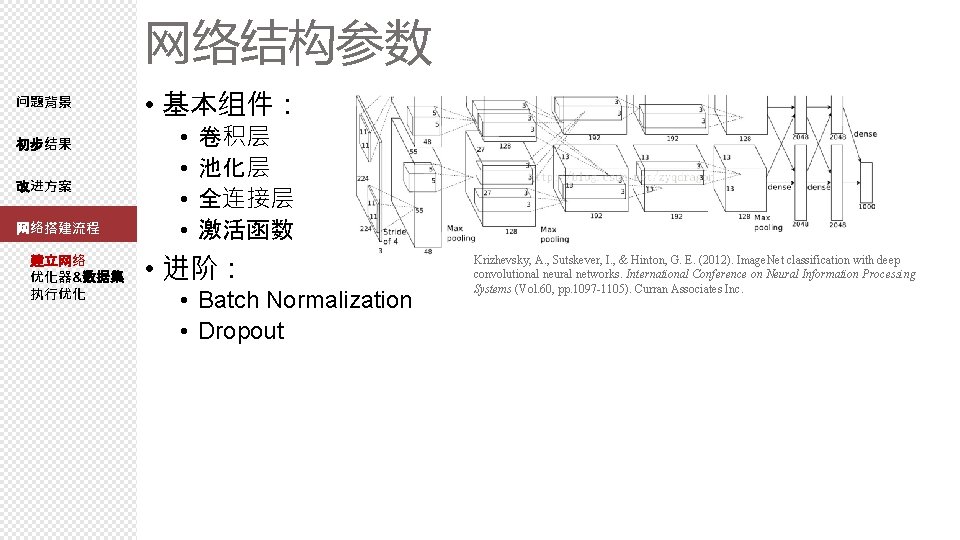

Network structure parameters

Network construction workflow: Build the network · Optimizer & dataset · Run optimization

• Basic components:
  • Convolutional layer
  • Pooling layer
  • Fully connected layer
  • Activation function
• Advanced:
  • Batch Normalization
  • Dropout

Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). ImageNet classification with deep convolutional neural networks. International Conference on Neural Information Processing Systems (Vol. 60, pp. 1097-1105). Curran Associates Inc.
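As an illustration of how the components listed above fit together, here is a minimal PyTorch sketch. It is not the network used in the slides: the class name `SketchNet`, the input length of 350, the pooling size, and the dropout rate are all assumptions made for the example. Only the two `Conv1d` layer shapes and the 70/3 fully connected sizes are borrowed from the earlier printout.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def conv_len(length, kernel_size, stride):
    """Output length of an unpadded 1-D convolution or pooling layer."""
    return (length - kernel_size) // stride + 1


class SketchNet(nn.Module):
    """Hypothetical 1-D CNN: conv -> BN -> ReLU (x2) -> pool -> FC -> dropout -> FC."""

    def __init__(self, input_length=350, num_classes=3, p_drop=0.5):
        super().__init__()
        self.conv1 = nn.Conv1d(1, 10, kernel_size=20, stride=3)
        self.bn1 = nn.BatchNorm1d(10)
        self.conv2 = nn.Conv1d(10, 16, kernel_size=5, stride=3)
        self.bn2 = nn.BatchNorm1d(16)
        self.pool = nn.MaxPool1d(kernel_size=2)  # stride defaults to kernel_size
        self.drop = nn.Dropout(p_drop)

        # Work out the flattened feature count from the layer arithmetic.
        length = conv_len(input_length, 20, 3)
        length = conv_len(length, 5, 3)
        length = conv_len(length, 2, 2)
        self.fc1 = nn.Linear(16 * length, 70)
        self.fc2 = nn.Linear(70, num_classes)

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = self.pool(F.relu(self.bn2(self.conv2(x))))
        x = x.flatten(start_dim=1)
        x = self.drop(F.relu(self.fc1(x)))
        return self.fc2(x)  # raw class scores (logits)


model = SketchNet()
model.eval()  # use running BN statistics and disable dropout for inference
with torch.no_grad():
    logits = model(torch.randn(4, 1, 350))
print(logits.shape)
```

Batch Normalization and Dropout behave differently in training and evaluation, so switching between `model.train()` and `model.eval()` matters once they are added.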

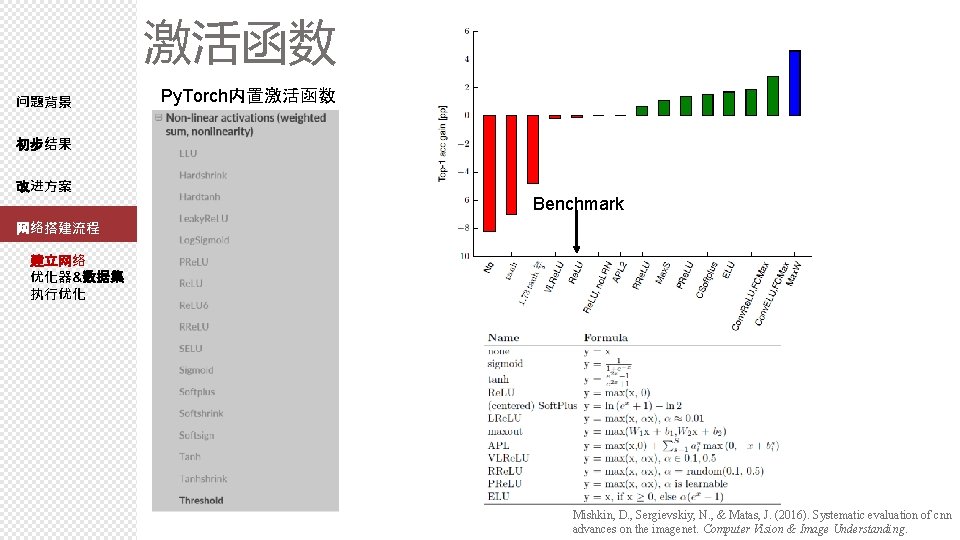

Activation functions

PyTorch built-in activation functions

Benchmark

Mishkin, D., Sergievskiy, N., & Matas, J. (2016). Systematic evaluation of CNN advances on the ImageNet. Computer Vision & Image Understanding.
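To make the comparison concrete, the sketch below applies a handful of PyTorch's built-in activation modules to the same small tensor. The selection of functions and the input values are arbitrary choices for illustration; they are not the set benchmarked in the cited paper.

```python
import torch
import torch.nn as nn

x = torch.tensor([-2.0, -0.5, 0.0, 0.5, 2.0])

# A few of the activation modules that ship with torch.nn.
activations = {
    "ReLU": nn.ReLU(),
    "LeakyReLU": nn.LeakyReLU(negative_slope=0.01),
    "ELU": nn.ELU(alpha=1.0),
    "Tanh": nn.Tanh(),
    "Sigmoid": nn.Sigmoid(),
}

outputs = {name: fn(x) for name, fn in activations.items()}
for name, y in outputs.items():
    print(f"{name:>9}: {y.tolist()}")
```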

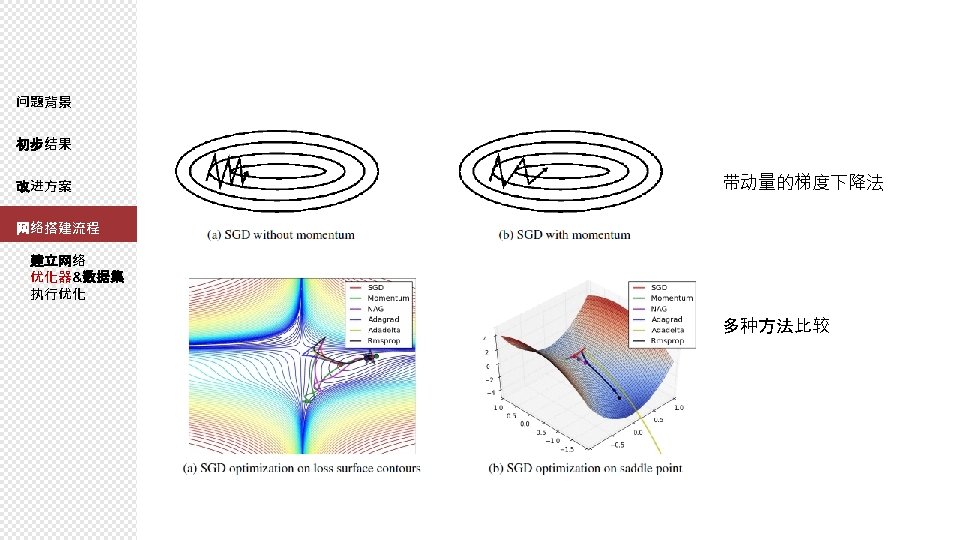

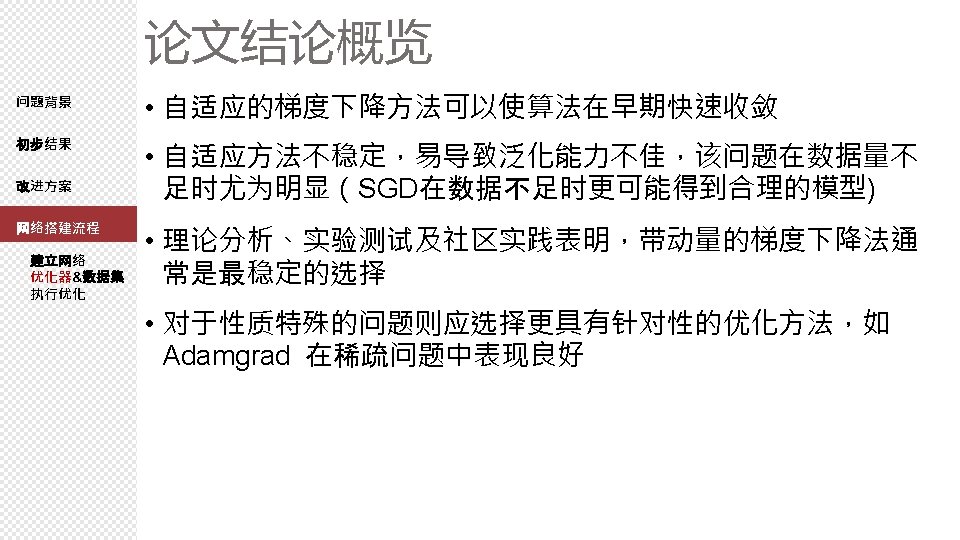

Choosing an optimization method

PyTorch built-in optimizers:
• Adadelta
• Adagrad
• Adam
• SparseAdam
• Adamax
• ASGD
• LBFGS
• RMSprop
• Rprop
• SGD

• Basic method: gradient descent
• Upgraded variants:
  • Adaptive gradient descent (adaptive learning rate)
  • Gradient descent with momentum (helps, to some extent, to avoid getting stuck in local optima)

• The Marginal Value of Adaptive Gradient Methods in Machine Learning: https://arxiv.org/pdf/1705.08292.pdf
• An Overview of Gradient Descent Optimization Algorithms: https://arxiv.org/pdf/1609.04747.pdf
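The difference between plain gradient descent and the momentum variant can be sketched in a few lines of plain Python. The toy objective f(x) = x², the learning rate, and the momentum coefficient are assumptions chosen for illustration. The velocity update shown is one common formulation, not a claim about what the slides' training code used.

```python
def grad(x):
    """Gradient of the toy objective f(x) = x**2."""
    return 2.0 * x


def gradient_descent(x, lr=0.1, steps=500):
    """Plain gradient descent: step against the current gradient."""
    for _ in range(steps):
        x -= lr * grad(x)
    return x


def momentum_descent(x, lr=0.1, momentum=0.9, steps=500):
    """Gradient descent with momentum: step along a decaying sum of past gradients."""
    velocity = 0.0
    for _ in range(steps):
        velocity = momentum * velocity + grad(x)
        x -= lr * velocity
    return x


x_plain = gradient_descent(5.0)
x_momentum = momentum_descent(5.0)
print(x_plain, x_momentum)
```

Both runs approach the minimum at 0. On this convex toy problem momentum brings no benefit; its accumulated velocity is what can carry the iterate through flat regions or shallow local minima on harder loss surfaces.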

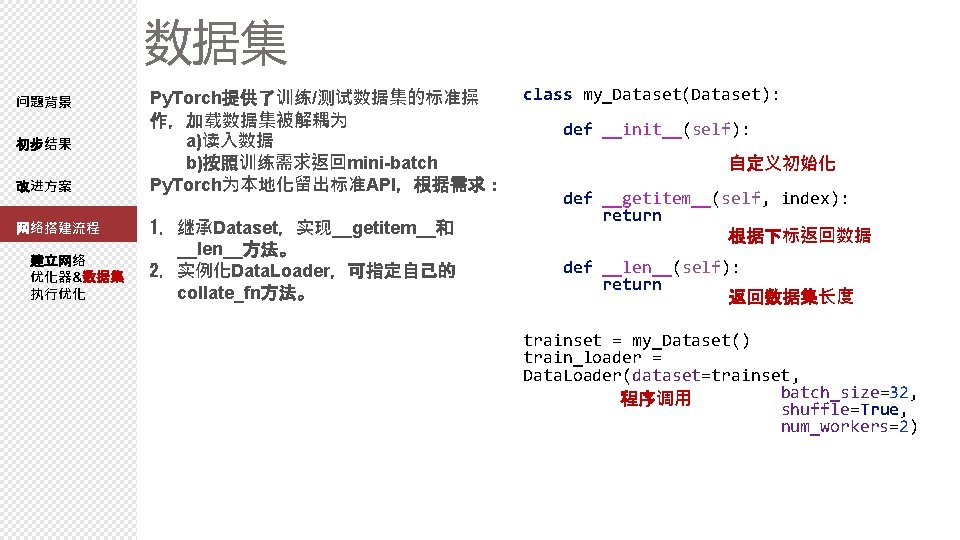

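Datasets

PyTorch provides standard handling for training/test datasets. Loading a dataset is decoupled into
a) reading the data in, and
b) returning mini-batches as training requires.

PyTorch exposes a standard API for custom data. As needed:
1. Subclass Dataset and implement the __getitem__ and __len__ methods.
2. Instantiate DataLoader; you can pass your own collate_fn.

    import numpy as np
    from torch.utils.data import Dataset, DataLoader

    class my_Dataset(Dataset):
        def __init__(self, path):
            # Custom initialization. The file argument is cut off on the slide,
            # so `path` is a hypothetical parameter, and the column split
            # (features first, label last) is an assumption.
            xy = np.loadtxt(path, delimiter=',')
            self.x_data = xy[:, :-1]
            self.y_data = xy[:, -1]
            self.len = xy.shape[0]

        def __getitem__(self, index):
            # Return the sample and label at the given index.
            return self.x_data[index], self.y_data[index]

        def __len__(self):
            # Return the dataset length.
            return self.len

    # Calling code (`path` points at your own data file)
    trainset = my_Dataset(path)
    train_loader = DataLoader(dataset=trainset, batch_size=32,
                              shuffle=True, num_workers=2)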
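The second step, passing your own `collate_fn`, is easiest to see with samples of unequal length, which the default collation cannot stack. The following is a minimal sketch under stated assumptions: `ToySignalDataset` and `pad_collate` are hypothetical names, the data is random, and zero-padding is just one possible batching policy.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class ToySignalDataset(Dataset):
    """Hypothetical in-memory dataset of variable-length 1-D signals."""

    def __init__(self, lengths, num_classes=3):
        generator = torch.Generator().manual_seed(0)
        self.signals = [torch.randn(n, generator=generator) for n in lengths]
        self.labels = [i % num_classes for i in range(len(lengths))]

    def __getitem__(self, index):
        return self.signals[index], self.labels[index]

    def __len__(self):
        return len(self.signals)


def pad_collate(batch):
    """Zero-pad every signal to the longest one in the batch, then stack."""
    signals, labels = zip(*batch)
    max_len = max(s.shape[0] for s in signals)
    padded = torch.zeros(len(signals), 1, max_len)  # (batch, channel, length)
    for i, s in enumerate(signals):
        padded[i, 0, : s.shape[0]] = s
    return padded, torch.tensor(labels)


dataset = ToySignalDataset(lengths=[300, 350, 320, 340, 310])
loader = DataLoader(dataset, batch_size=2, shuffle=False, collate_fn=pad_collate)

batches = list(loader)
for signals, labels in batches:
    print(tuple(signals.shape), labels.tolist())
```

The `(batch, channel, length)` layout is chosen so that a batch can be fed straight into an `nn.Conv1d` layer with one input channel.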

torchvision.datasets.mnist: a usage example from the PyTorch source. From https://pytorch.org/docs/stable/torchvision/datasets.html#mnist

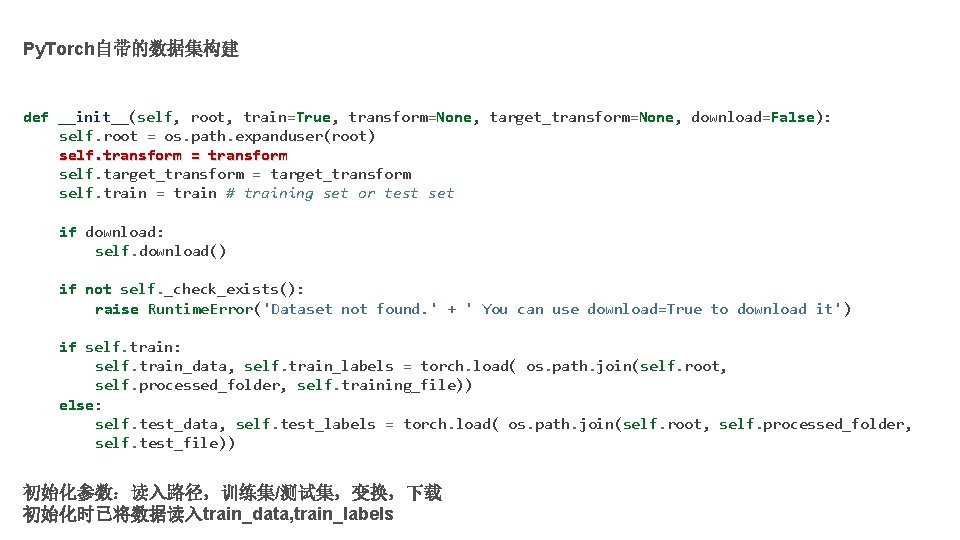

Dataset construction built into PyTorch

Initialization parameters: root path, training/test set, transforms, download.

    def __init__(self, root, train=True, transform=None, target_transform=None, download=False):
        self.root = os.path.expanduser(root)
        self.transform = transform
        self.target_transform = target_transform
        self.train = train  # training set or test set

        if download:
            self.download()

        if not self._check_exists():
            raise RuntimeError('Dataset not found.' +
                               ' You can use download=True to download it')

        if self.train:
            self.train_data, self.train_labels = torch.load(
                os.path.join(self.root, self.processed_folder, self.training_file))
        else:
            self.test_data, self.test_labels = torch.load(
                os.path.join(self.root, self.processed_folder, self.test_file))

By the end of initialization the data has already been read into train_data and train_labels (or test_data and test_labels for the test set).

![__getitem__ slide](http://slidetodoc.com/presentation_image_h2/f4612b864c68cb82cbdc8cc6b55af435/image-21.jpg)

__getitem__ returns the data and label at the given index; if a transform is set, it is applied and the transformed data is returned.

    def __getitem__(self, index):
        if self.train:
            img, target = self.train_data[index], self.train_labels[index]
        else:
            img, target = self.test_data[index], self.test_labels[index]

        img = Image.fromarray(img.numpy(), mode='L')

        if self.transform is not None:
            img = self.transform(img)

        if self.target_transform is not None:
            target = self.target_transform(target)

        return img, target

    def __len__(self):
        if self.train:
            return len(self.train_data)
        else:
            return len(self.test_data)
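The same `__getitem__` / `__len__` protocol can be tried without torch or image files. The class below is a hypothetical stand-in, not part of torchvision. It mirrors the transform and target_transform hooks and reuses the label mapping from the training log.

```python
class InMemoryDataset:
    """Hypothetical stand-in that follows the indexing protocol shown above."""

    def __init__(self, data, labels, transform=None, target_transform=None):
        if len(data) != len(labels):
            raise ValueError("data and labels must have the same length")
        self.data = data
        self.labels = labels
        self.transform = transform
        self.target_transform = target_transform

    def __getitem__(self, index):
        sample, target = self.data[index], self.labels[index]
        if self.transform is not None:
            sample = self.transform(sample)
        if self.target_transform is not None:
            target = self.target_transform(target)
        return sample, target

    def __len__(self):
        return len(self.data)


# Label mapping as printed in the training log.
class_to_idx = {"leak": 0, "noise": 1, "user+leak": 2}

ds = InMemoryDataset(
    data=[[1, 2], [3, 4], [5, 6]],
    labels=["leak", "noise", "user+leak"],
    transform=lambda values: [2 * v for v in values],  # toy transform
    target_transform=class_to_idx.get,                 # name -> integer label
)

print(len(ds))  # 3
print(ds[1])    # ([6, 8], 1)
```

Because the object supports `len()` and integer indexing, a loader can draw any index in `range(len(ds))` and get back a transformed sample with its integer label.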

torchvision.transforms

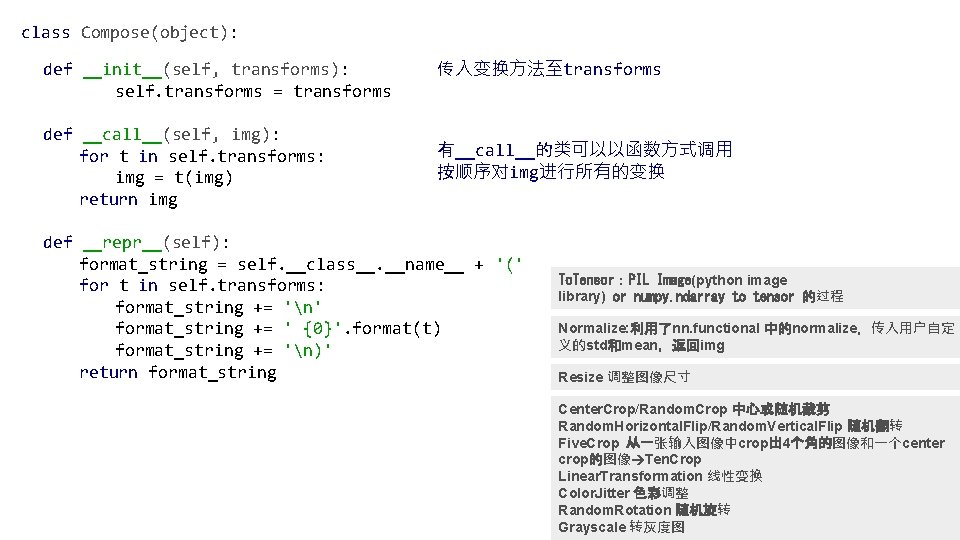

    class Compose(object):
        def __init__(self, transforms):
            # The transform callables are passed in as `transforms`.
            self.transforms = transforms

        def __call__(self, img):
            # A class that defines __call__ can be invoked like a function.
            # Apply every transform to img, in order.
            for t in self.transforms:
                img = t(img)
            return img

        def __repr__(self):
            format_string = self.__class__.__name__ + '('
            for t in self.transforms:
                format_string += '\n'
                format_string += '    {0}'.format(t)
            format_string += '\n)'
            return format_string

• ToTensor: converts a PIL Image (Python Imaging Library) or numpy.ndarray to a tensor
• Normalize: calls normalize from torchvision.transforms.functional with the user-supplied mean and std and returns the normalized image tensor
• Resize: resizes the image
• CenterCrop / RandomCrop: center or random crop
• RandomHorizontalFlip / RandomVerticalFlip: random flip
• FiveCrop: crops the four corners and the center from one input image
• TenCrop: the five crops plus their flipped versions
• LinearTransformation: linear transformation
• ColorJitter: color adjustment
• RandomRotation: random rotation
• Grayscale: converts to grayscale
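Because `Compose` only requires that each element be callable, its behaviour can be demonstrated without images. The sketch below repeats the core of the class and chains two toy transforms; `scale` and `shift` are hypothetical helpers invented for this example and are not part of torchvision.

```python
class Compose(object):
    """Minimal copy of the chaining logic shown above."""

    def __init__(self, transforms):
        self.transforms = transforms

    def __call__(self, img):
        for t in self.transforms:
            img = t(img)
        return img


def scale(factor):
    """Hypothetical transform factory: multiply every value by `factor`."""
    return lambda values: [v * factor for v in values]


def shift(offset):
    """Hypothetical transform factory: add `offset` to every value."""
    return lambda values: [v + offset for v in values]


scale_then_shift = Compose([scale(2), shift(-1)])
shift_then_scale = Compose([shift(-1), scale(2)])

print(scale_then_shift([1, 2, 3]))  # [1, 3, 5]
print(shift_then_scale([1, 2, 3]))  # [0, 2, 4]
```

The two pipelines give different results, which shows that the order of the list matters. With the real transforms this is why `ToTensor` is normally placed before `Normalize`, since `Normalize` operates on tensors.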

- Slides: 26