Basics of MapReduce Programming Model By J. H. Wang Oct. 18, 2019

Outline
• Introduction
• MapReduce Basics

References
• Jimmy Lin and Chris Dyer, “Data-Intensive Text Processing with MapReduce”, Ch. 1-3

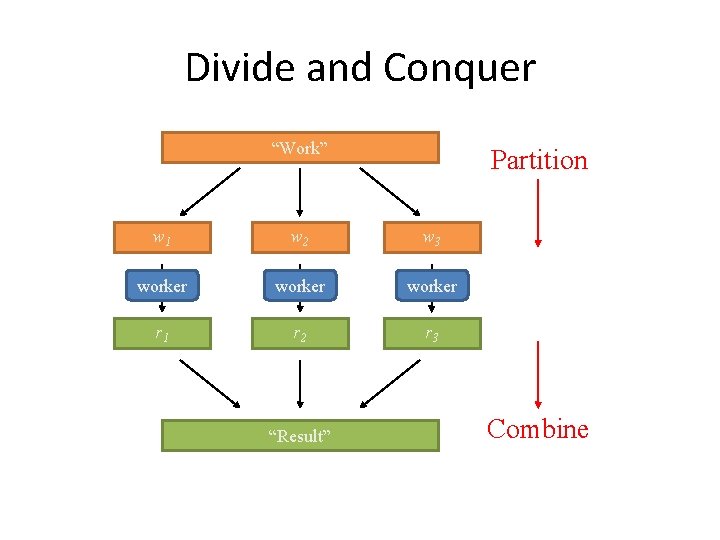

Divide and Conquer (figure): the “Work” is partitioned into w1, w2, w3; each part goes to a worker, producing partial results r1, r2, r3, which are combined into the “Result”.

MapReduce Programming Model
• Functional programming roots
– Map and fold
• Mappers and reducers
• Execution framework
• Combiners and partitioners
• Distributed file system

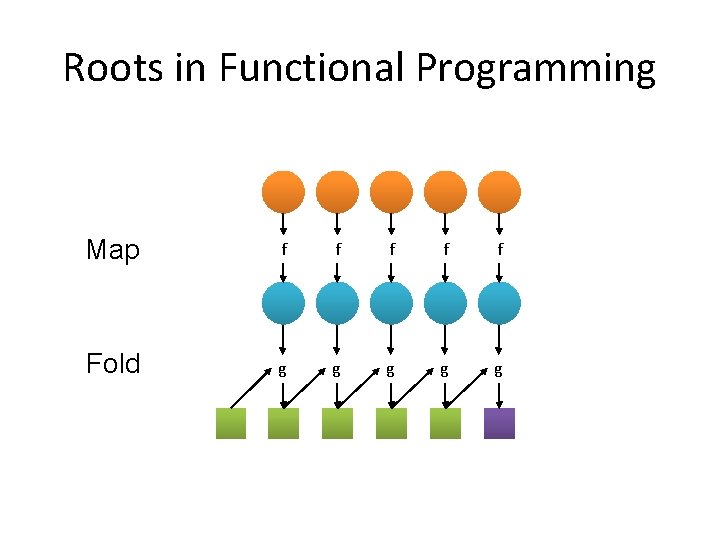

Roots in Functional Programming (figure): Map applies a function f to each element; Fold aggregates the elements with a function g.
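As a minimal sketch of the two primitives, the following uses Java streams. The class and method names (`MapFoldDemo`, `mapSquares`, `foldSum`) are made up for illustration, and squaring and summing are just stand-ins for f and g.

```java
import java.util.List;
import java.util.stream.Collectors;

final class MapFoldDemo {
    // Map: apply f (here, squaring) to every element independently.
    static List<Integer> mapSquares(List<Integer> xs) {
        return xs.stream().map(x -> x * x).collect(Collectors.toList());
    }

    // Fold: combine the elements with g (here, addition), starting from 0.
    static int foldSum(List<Integer> xs) {
        return xs.stream().reduce(0, Integer::sum);
    }

    public static void main(String[] args) {
        List<Integer> squares = mapSquares(List.of(1, 2, 3, 4));
        System.out.println(squares);          // [1, 4, 9, 16]
        System.out.println(foldSum(squares)); // 30
    }
}
```

Because each application of f is independent, the map step can run in parallel; the fold step is where results are brought together.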

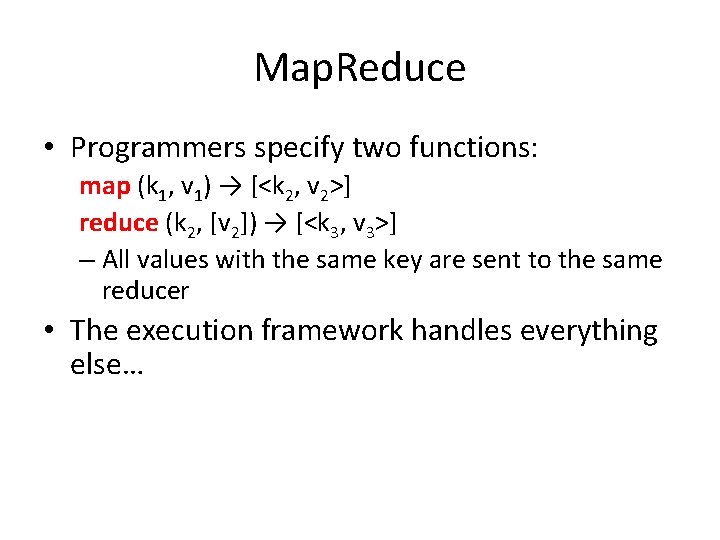

MapReduce
• Programmers specify two functions:
map (k1, v1) → [<k2, v2>]
reduce (k2, [v2]) → [<k3, v3>]
– All values with the same key are sent to the same reducer
• The execution framework handles everything else…

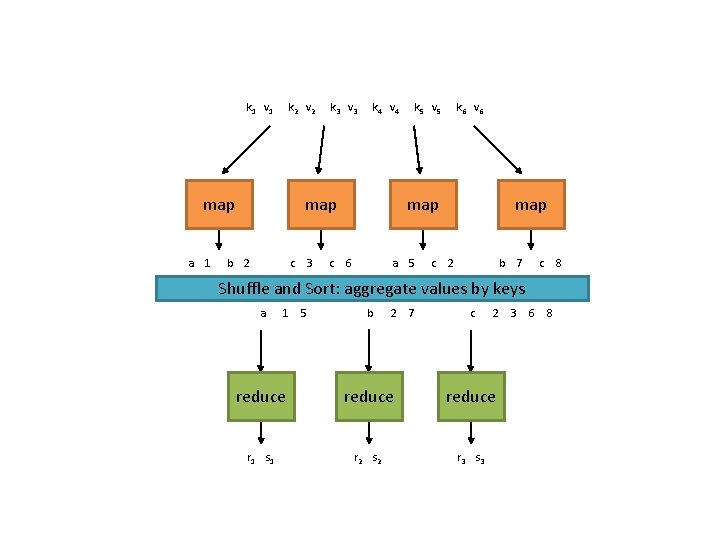

Figure: four map tasks consume the input pairs (k1, v1) … (k6, v6) and emit (a, 1), (b, 2); (c, 3), (c, 6); (a, 5), (c, 2); (b, 7), (c, 8). Shuffle and Sort aggregates values by keys: a → [1, 5], b → [2, 7], c → [2, 3, 6, 8]. Three reduce tasks then emit (r1, s1), (r2, s2), (r3, s3).
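The grouping step in the figure can be imitated in plain Java. This is only an in-memory sketch, not how Hadoop implements the shuffle; `ShuffleSortDemo` and its methods are hypothetical names, and summation is an assumed reduce function since the figure leaves it abstract.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class ShuffleSortDemo {
    // Group intermediate (key, value) pairs by key; the TreeMap keeps keys sorted.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            grouped.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return grouped;
    }

    // Assumed reduce function: sum all values that share a key.
    static int reduceSum(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // The eight pairs emitted by the four map tasks in the figure.
    static List<Map.Entry<String, Integer>> figurePairs() {
        return List.of(
            Map.entry("a", 1), Map.entry("b", 2), Map.entry("c", 3), Map.entry("c", 6),
            Map.entry("a", 5), Map.entry("c", 2), Map.entry("b", 7), Map.entry("c", 8));
    }

    public static void main(String[] args) {
        Map<String, List<Integer>> grouped = shuffle(figurePairs());
        System.out.println(grouped); // {a=[1, 5], b=[2, 7], c=[3, 6, 2, 8]}
        grouped.forEach((k, vs) -> System.out.println(k + " -> " + reduceSum(vs)));
    }
}
```

Values for a key appear here in arrival order, whereas the figure lists them sorted; only the keys are kept in sorted order in this sketch.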

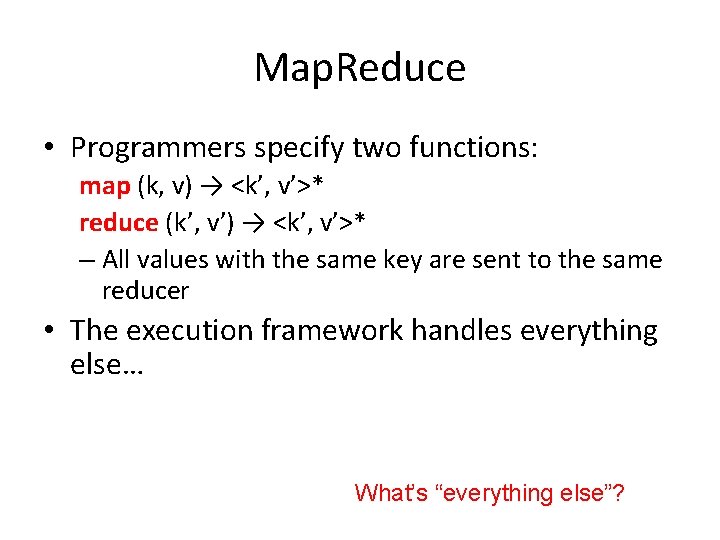

MapReduce
• Programmers specify two functions:
map (k, v) → <k’, v’>*
reduce (k’, v’) → <k’, v’>*
– All values with the same key are sent to the same reducer
• The execution framework handles everything else…
What’s “everything else”?

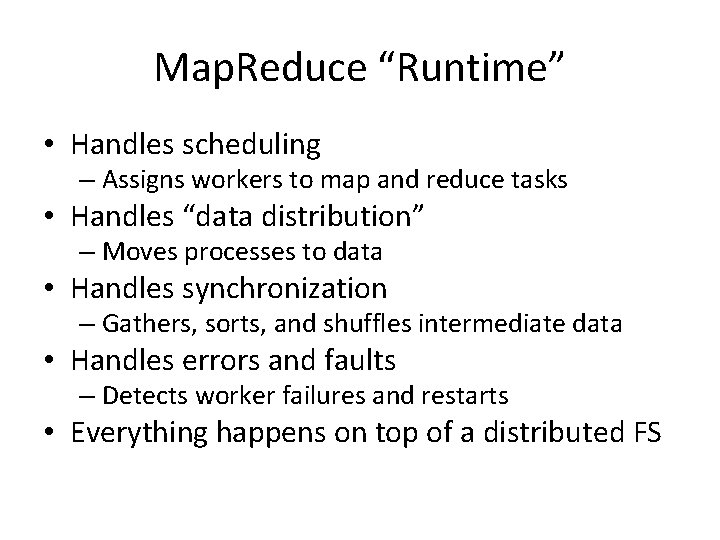

MapReduce “Runtime”
• Handles scheduling
– Assigns workers to map and reduce tasks
• Handles “data distribution”
– Moves processes to data
• Handles synchronization
– Gathers, sorts, and shuffles intermediate data
• Handles errors and faults
– Detects worker failures and restarts
• Everything happens on top of a distributed FS

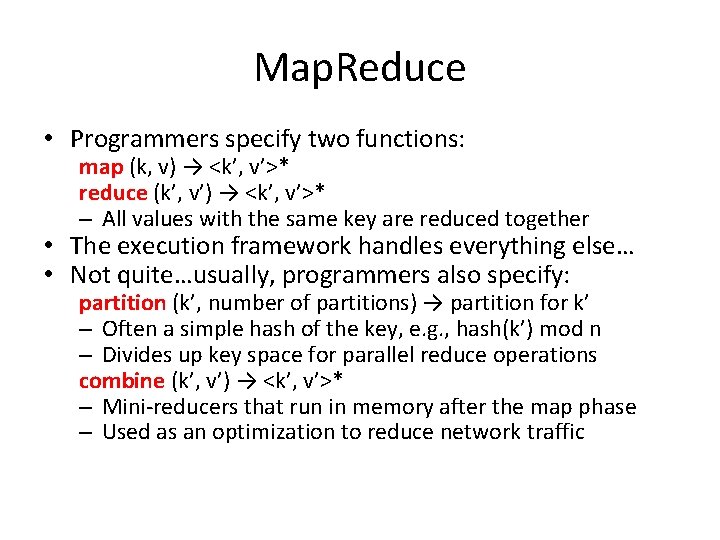

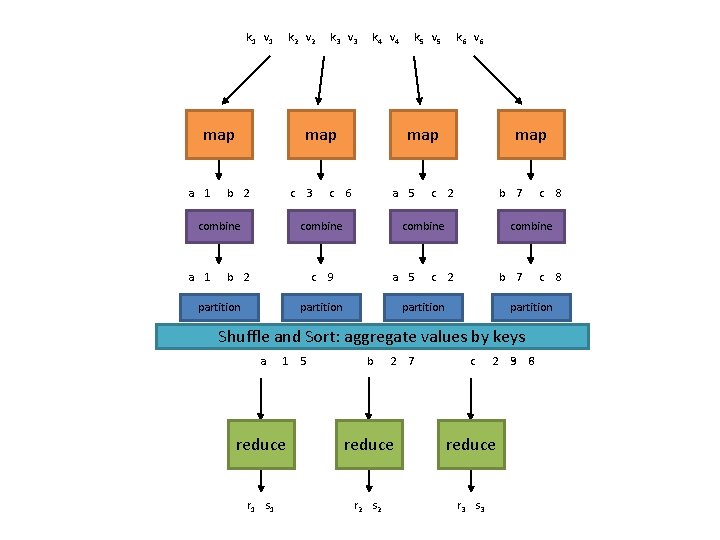

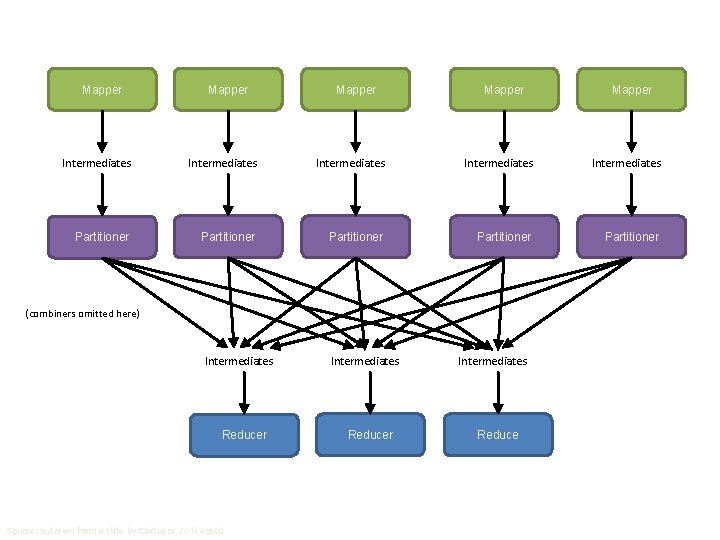

MapReduce
• Programmers specify two functions:
map (k, v) → <k’, v’>*
reduce (k’, v’) → <k’, v’>*
– All values with the same key are reduced together
• The execution framework handles everything else…
• Not quite…usually, programmers also specify:
partition (k’, number of partitions) → partition for k’
– Often a simple hash of the key, e.g., hash(k’) mod n
– Divides up key space for parallel reduce operations
combine (k’, v’) → <k’, v’>*
– Mini-reducers that run in memory after the map phase
– Used as an optimization to reduce network traffic
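The hash(k’) mod n rule can be sketched in a few lines of plain Java. `HashPartitionDemo` is a hypothetical name, and the sketch assumes string keys; masking the sign bit keeps the result non-negative even when `hashCode()` is negative.

```java
final class HashPartitionDemo {
    // partition(k', n) = hash(k') mod n, with the sign bit cleared first
    // so that negative hash codes never yield a negative partition number.
    static int partition(String key, int numPartitions) {
        return (key.hashCode() & Integer.MAX_VALUE) % numPartitions;
    }

    public static void main(String[] args) {
        for (String key : new String[] {"a", "b", "c"}) {
            System.out.println(key + " -> partition " + partition(key, 3));
        }
        // a -> partition 1
        // b -> partition 2
        // c -> partition 0
    }
}
```

Since the partition depends only on the key, every value for a given key lands at the same reducer, which is what the reduce step requires.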

Figure: the same four map tasks as before, now each followed by a combine and a partition step. The combiner merges one map task's output locally, so (c, 3) and (c, 6) become (c, 9); the partitioner then assigns each key to a reducer. Shuffle and Sort aggregates values by keys: a → [1, 5], b → [2, 7], c → [2, 9, 8]. Three reduce tasks emit (r1, s1), (r2, s2), (r3, s3).
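A combiner's local aggregation might look like the sketch below, assuming the reduce operation is a sum (which is associative and commutative, so partial sums are safe). `CombinerDemo` and `combine` are illustrative names, not Hadoop API.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class CombinerDemo {
    // Merge the pairs emitted by ONE map task, summing values per key,
    // so fewer pairs have to cross the network during the shuffle.
    static Map<String, Integer> combine(List<Map.Entry<String, Integer>> mapOutput) {
        Map<String, Integer> local = new LinkedHashMap<>();
        for (Map.Entry<String, Integer> kv : mapOutput) {
            local.merge(kv.getKey(), kv.getValue(), Integer::sum);
        }
        return local;
    }

    public static void main(String[] args) {
        // Output of the second map task in the figure: (c, 3), (c, 6).
        System.out.println(combine(List.of(Map.entry("c", 3), Map.entry("c", 6)))); // {c=9}
    }
}
```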

Two more details…
• Barrier between map and reduce phases
– But we can begin copying intermediate data earlier
• Keys arrive at each reducer in sorted order
– No enforced ordering across reducers

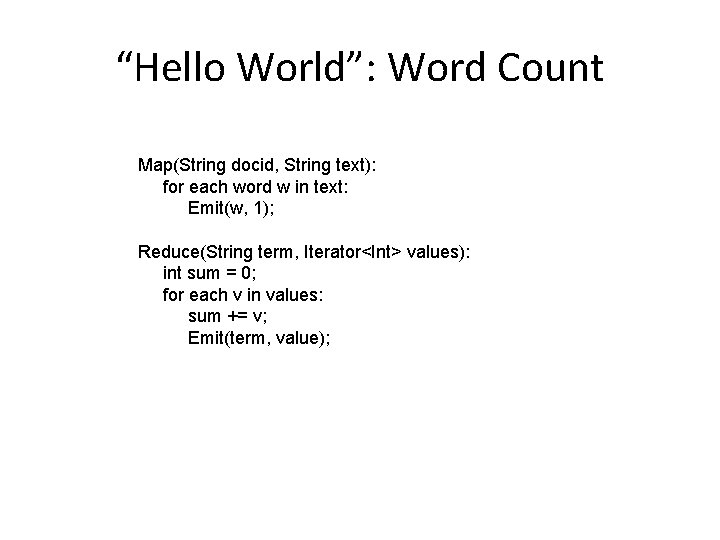

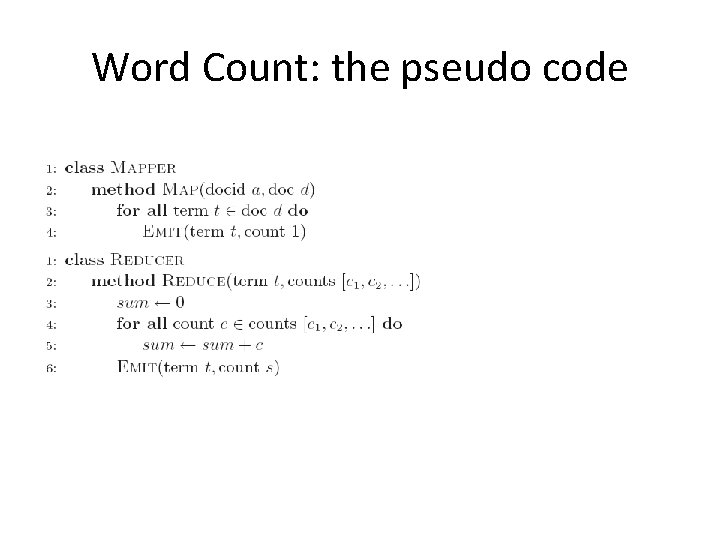

“Hello World”: Word Count
Map(String docid, String text):
    for each word w in text:
        Emit(w, 1);
Reduce(String term, Iterator<Int> values):
    int sum = 0;
    for each v in values:
        sum += v;
    Emit(term, sum);
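To see the pseudocode run end to end without a cluster, here is a single-process simulation in plain Java. It is only a sketch: `WordCountSim` and its methods are hypothetical, splitting on whitespace is an assumed tokenization, and the in-memory grouping stands in for the framework's shuffle.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

final class WordCountSim {
    // Map: emit (w, 1) for each whitespace-separated word in the text.
    static List<Map.Entry<String, Integer>> map(String docid, String text) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String w : text.trim().split("\\s+")) {
            if (!w.isEmpty()) {
                out.add(Map.entry(w, 1));
            }
        }
        return out;
    }

    // Reduce: sum the counts collected for one term.
    static int reduce(String term, List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Stand-in for the framework: run map on every document, group by key, reduce.
    static Map<String, Integer> run(Map<String, String> docs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, String> doc : docs.entrySet()) {
            for (Map.Entry<String, Integer> kv : map(doc.getKey(), doc.getValue())) {
                grouped.computeIfAbsent(kv.getKey(), k -> new ArrayList<>()).add(kv.getValue());
            }
        }
        Map<String, Integer> counts = new TreeMap<>();
        grouped.forEach((term, values) -> counts.put(term, reduce(term, values)));
        return counts;
    }

    public static void main(String[] args) {
        System.out.println(run(Map.of("d1", "a b a", "d2", "b c"))); // {a=2, b=2, c=1}
    }
}
```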

MapReduce can refer to…
• The programming model
• The execution framework (aka “runtime”)
• The specific implementation
Usage is usually clear from context!

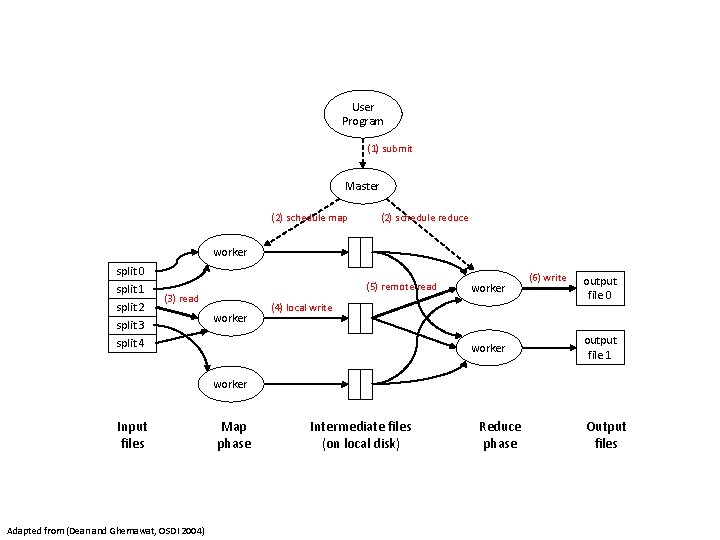

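MapReduce execution overview (figure, adapted from Dean and Ghemawat, OSDI 2004): (1) the user program submits the job to the master; (2) the master schedules map and reduce tasks on workers; (3) map workers read the input splits (split 0 to split 4); (4) map workers write intermediate files to local disk; (5) reduce workers read the intermediate files remotely; (6) reduce workers write the output files (output file 0, output file 1). Stages, left to right: input files, map phase, intermediate files (on local disk), reduce phase, output files.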

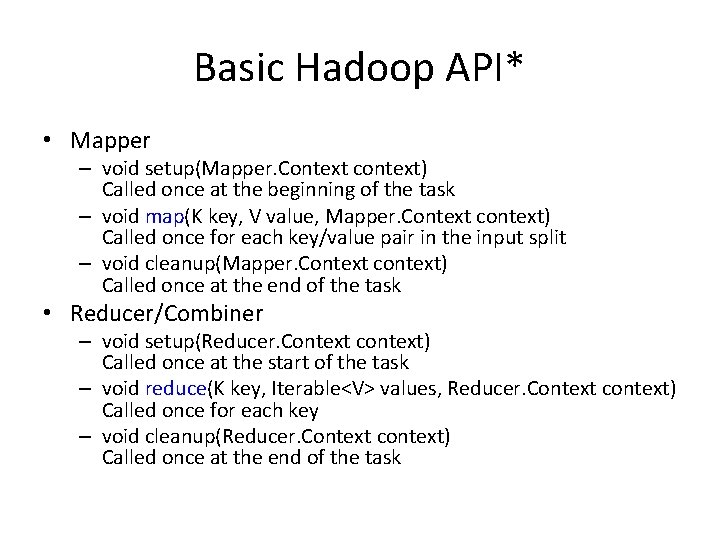

Basic Hadoop API*
• Mapper
– void setup(Mapper.Context context)
Called once at the beginning of the task
– void map(K key, V value, Mapper.Context context)
Called once for each key/value pair in the input split
– void cleanup(Mapper.Context context)
Called once at the end of the task
• Reducer/Combiner
– void setup(Reducer.Context context)
Called once at the start of the task
– void reduce(K key, Iterable<V> values, Reducer.Context context)
Called once for each key
– void cleanup(Reducer.Context context)
Called once at the end of the task
*Note that there are two versions of the A

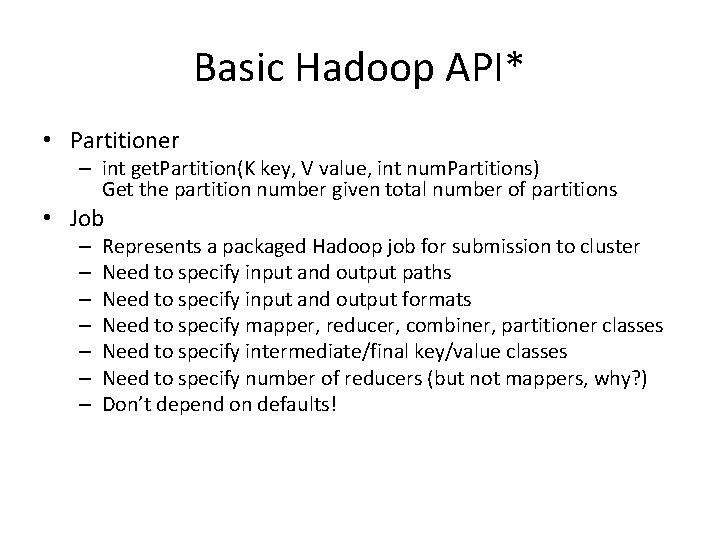

Basic Hadoop API
• Partitioner
– int getPartition(K key, V value, int numPartitions)
Get the partition number given total number of partitions
• Job
– Represents a packaged Hadoop job for submission to cluster
– Need to specify input and output paths
– Need to specify input and output formats
– Need to specify mapper, reducer, combiner, partitioner classes
– Need to specify intermediate/final key/value classes
– Need to specify number of reducers (but not mappers, why?)
Don’t depend on defaults!

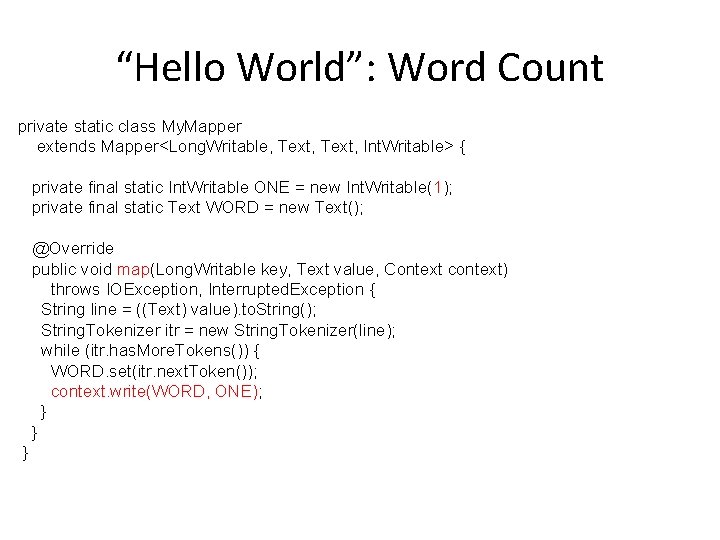

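“Hello World”: Word Count

    // Imports needed at the top of the enclosing file:
    import java.io.IOException;
    import java.util.StringTokenizer;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    // Emits (word, 1) for every token of every input line.
    private static class MyMapper extends Mapper<LongWritable, Text, Text, IntWritable> {
        // Reused for every record to avoid allocating new objects.
        private final static IntWritable ONE = new IntWritable(1);
        private final static Text WORD = new Text();

        @Override
        public void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            String line = value.toString();
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                WORD.set(itr.nextToken());
                context.write(WORD, ONE);
            }
        }
    }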

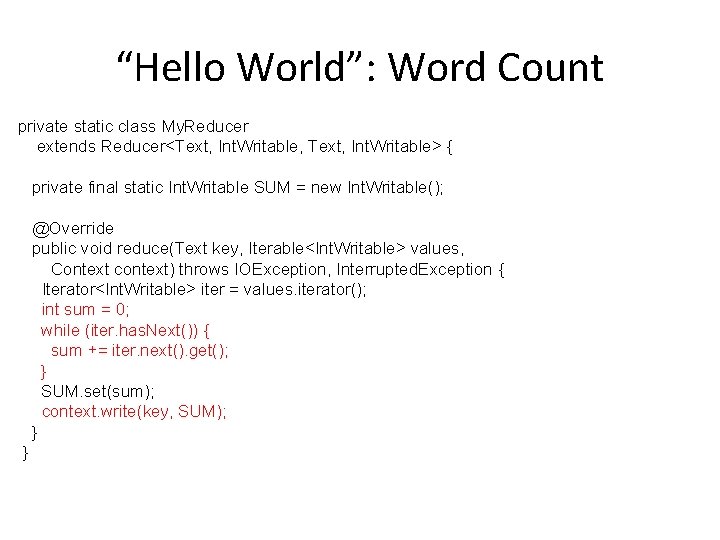

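“Hello World”: Word Count

    // Imports needed at the top of the enclosing file:
    import java.io.IOException;
    import java.util.Iterator;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    // Sums the counts for each word and emits (word, total).
    private static class MyReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        // Reused for every key to avoid allocating new objects.
        private final static IntWritable SUM = new IntWritable();

        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            Iterator<IntWritable> iter = values.iterator();
            int sum = 0;
            while (iter.hasNext()) {
                sum += iter.next().get();
            }
            SUM.set(sum);
            context.write(key, SUM);
        }
    }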

Word Count: the pseudo code

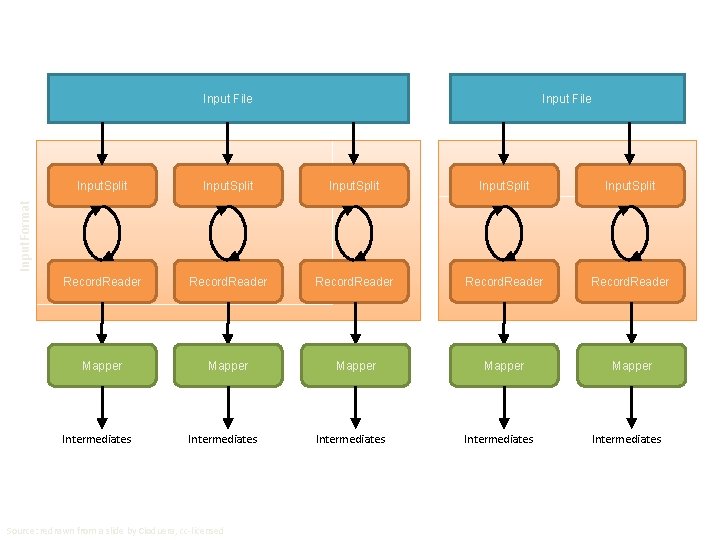

Figure (input side): an InputFormat divides each input file into InputSplits; a RecordReader reads each InputSplit and passes its records to a Mapper, which produces intermediates. Source: redrawn from a slide by Cloudera, cc-licensed

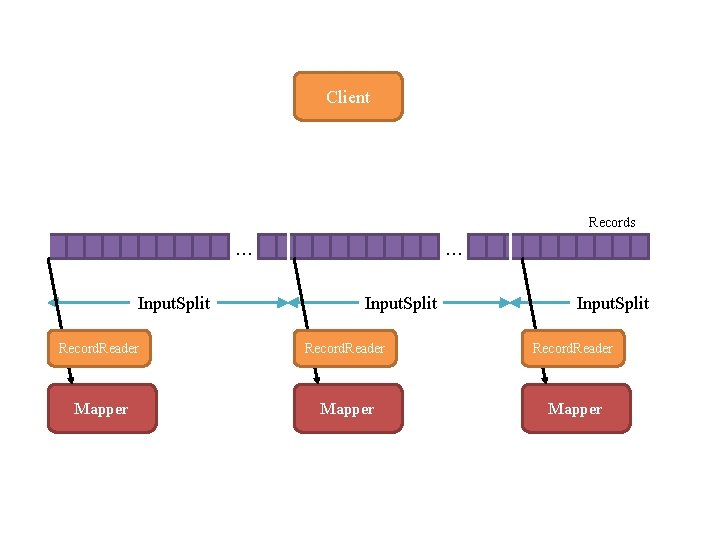

Figure labels: Client, Records, …, InputSplit, RecordReader, Mapper

Figure (map to reduce): each Mapper emits intermediates; a Partitioner assigns them to Reducers (combiners omitted here); each Reducer collects its intermediates and runs reduce. Source: redrawn from a slide by Cloudera, cc-licensed

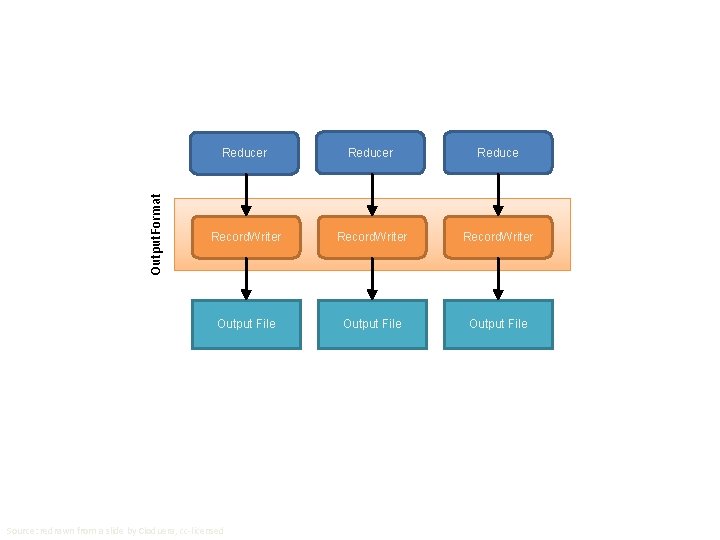

Figure (output side): each Reducer passes its reduce output to a RecordWriter, supplied by the OutputFormat, which writes an output file. Source: redrawn from a slide by Cloudera, cc-licensed

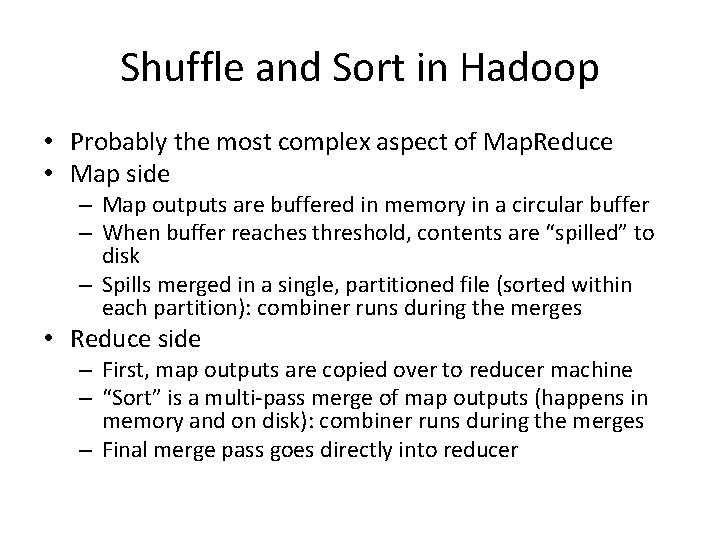

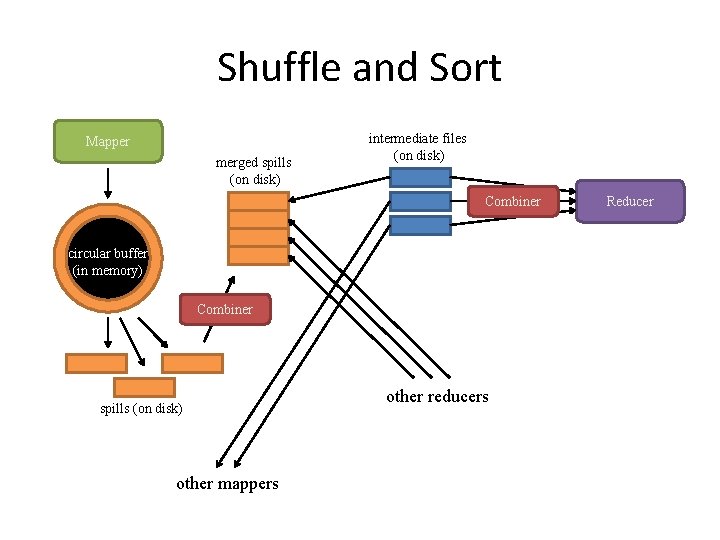

Shuffle and Sort in Hadoop
• Probably the most complex aspect of MapReduce
• Map side
– Map outputs are buffered in memory in a circular buffer
– When buffer reaches threshold, contents are “spilled” to disk
– Spills merged into a single, partitioned file (sorted within each partition): combiner runs during the merges
• Reduce side
– First, map outputs are copied over to reducer machine
– “Sort” is a multi-pass merge of map outputs (happens in memory and on disk): combiner runs during the merges
– Final merge pass goes directly into reducer

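Shuffle and Sort (figure): the Mapper writes into a circular buffer (in memory); the buffer is spilled to disk with the Combiner applied; the spills (on disk) are merged, again with the Combiner applied, into merged spills, i.e. the intermediate files (on disk); the Reducer fetches its share of these files together with output from other mappers, while other reducers fetch theirs.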
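The map-side spill-and-merge idea can be sketched in plain Java. This is a deliberately simplified model, not Hadoop's implementation: in-memory lists stand in for disk files, partitions and the combiner are left out, and `SpillMergeDemo`, `collect`, and `mergeSpills` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.PriorityQueue;

final class SpillMergeDemo {
    private final int threshold;                                  // buffer size that triggers a spill
    private final List<String> buffer = new ArrayList<>();        // stands in for the in-memory buffer
    private final List<List<String>> spills = new ArrayList<>();  // stands in for spill files on disk

    SpillMergeDemo(int threshold) {
        this.threshold = threshold;
    }

    // Buffer one map output key; spill once the threshold is reached.
    void collect(String key) {
        buffer.add(key);
        if (buffer.size() >= threshold) {
            spill();
        }
    }

    // Sort the buffered keys and store them as one sorted run.
    private void spill() {
        if (buffer.isEmpty()) {
            return;
        }
        List<String> run = new ArrayList<>(buffer);
        Collections.sort(run);
        spills.add(run);
        buffer.clear();
    }

    // Flush what is left, then k-way merge all sorted runs into one sorted list.
    List<String> mergeSpills() {
        spill();
        // Each heap entry is {spill index, position within that spill}.
        PriorityQueue<int[]> heads = new PriorityQueue<>(
            (x, y) -> spills.get(x[0]).get(x[1]).compareTo(spills.get(y[0]).get(y[1])));
        for (int i = 0; i < spills.size(); i++) {
            heads.add(new int[] {i, 0});
        }
        List<String> merged = new ArrayList<>();
        while (!heads.isEmpty()) {
            int[] head = heads.poll();
            List<String> run = spills.get(head[0]);
            merged.add(run.get(head[1]));
            if (head[1] + 1 < run.size()) {
                heads.add(new int[] {head[0], head[1] + 1});
            }
        }
        return merged;
    }

    int spillCount() {
        return spills.size();
    }

    public static void main(String[] args) {
        SpillMergeDemo demo = new SpillMergeDemo(3);
        for (String key : new String[] {"c", "a", "b", "c", "a", "c", "b"}) {
            demo.collect(key);
        }
        System.out.println(demo.mergeSpills()); // [a, a, b, b, c, c, c]
        System.out.println(demo.spillCount());  // 3
    }
}
```

The reduce-side “sort” follows the same merge pattern, applied to the sorted outputs copied from many mappers.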

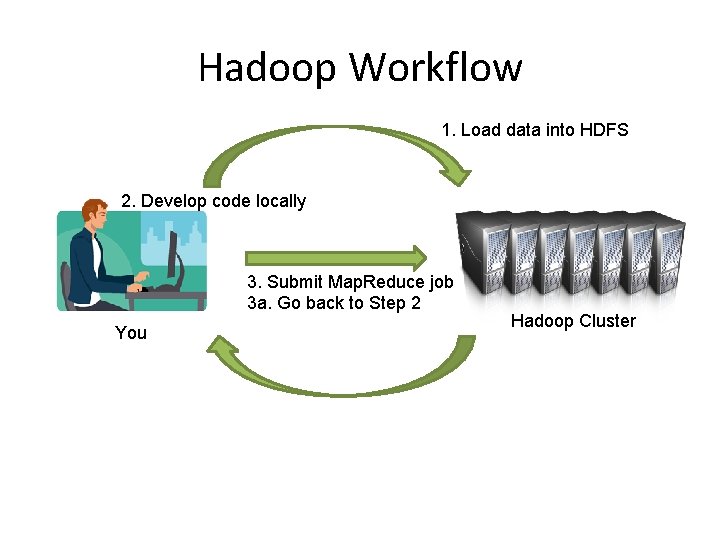

Hadoop Workflow (between you and the Hadoop cluster)
1. Load data into HDFS
2. Develop code locally
3. Submit MapReduce job
3a. Go back to Step 2
4. Retrieve data from HDFS

Recommended Workflow
• Here’s how I work:
– Develop code in local development environment on host machine
– Build distribution on host machine
– Check out copy of code on VM
– Copy (i.e., scp) jars over to VM (in same directory structure)
– Run job on VM
– Iterate
– …
– Commit code on host machine and push
– Pull from inside VM, verify
• Avoid using the UI of the VM
– Directly ssh into the VM

Debugging Hadoop
• First, take a deep breath
• Start small, start locally
• Build incrementally

Code Execution Environments
• Different ways to run code:
– Plain Java
– Local (standalone) mode
– Pseudo-distributed mode
– Fully-distributed mode
• Learn what’s good for what

Hadoop Debugging Strategies
• Good ol’ System.out.println
– Learn to use the webapp to access logs
– Logging preferred over System.out.println
– Be careful how much you log!
• Fail on success
– Throw RuntimeExceptions and capture state
• Programming is still programming
– Use Hadoop as the “glue”
– Implement core functionality outside mappers and reducers
– Independently test (e.g., unit testing)
– Compose (tested) components in mappers and reducers
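One way to keep core functionality outside the mapper is to put it in a plain Java class with no Hadoop types, as sketched below. `Tokenizer` and its lowercase, alphanumeric-only splitting rule are assumptions chosen for illustration; a mapper would simply call `Tokenizer.tokenize(value.toString())` and emit each token.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

final class Tokenizer {
    // Pure function with no Hadoop dependencies, so it can be tested in plain Java.
    static List<String> tokenize(String line) {
        List<String> tokens = new ArrayList<>();
        for (String t : line.toLowerCase(Locale.ROOT).split("[^a-z0-9]+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    public static void main(String[] args) {
        // A tiny check that runs locally, long before any job is submitted.
        List<String> got = tokenize("Hello, World! hello");
        if (!got.equals(List.of("hello", "world", "hello"))) {
            throw new AssertionError("unexpected tokens: " + got);
        }
        System.out.println(got); // [hello, world, hello]
    }
}
```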

Thanks for Your Attention!
