Basics of Data Compression Paolo Ferragina Dipartimento di Informatica Università di Pisa

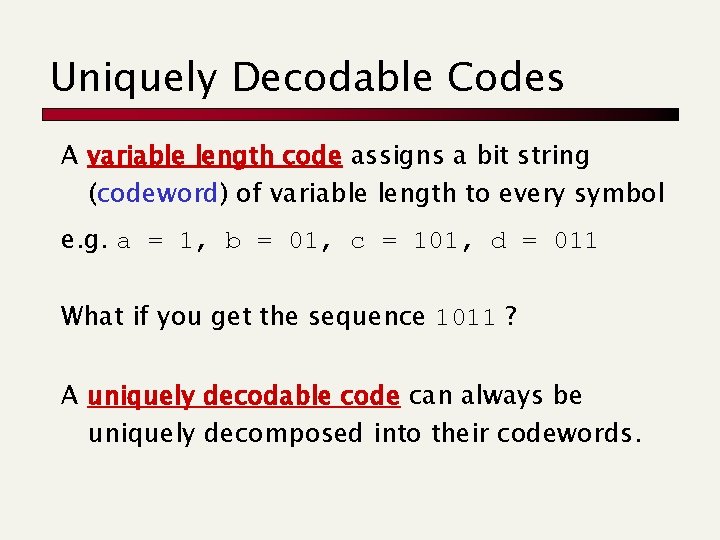

Uniquely Decodable Codes. A variable-length code assigns a bit string (codeword) of variable length to every symbol, e.g. a = 1, b = 01, c = 101, d = 011. What if you get the sequence 1011? It parses as 1|011 = ad, as 101|1 = ca, or as 1|01|1 = aba, so this code is ambiguous. A uniquely decodable code is one whose encoded sequences can always be uniquely decomposed into codewords.
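The ambiguity of 1011 can be checked mechanically. The sketch below, with a hypothetical helper name `decompositions`, enumerates every way to split a bit string into codewords of the example code; it is a brute-force illustration, not an efficient test for unique decodability.

```python
def decompositions(bits, code):
    """Return every way to split `bits` into codewords of `code`."""
    if not bits:
        return [[]]
    results = []
    for symbol, word in code.items():
        if bits.startswith(word):
            # Consume one codeword, then split the remainder recursively.
            for rest in decompositions(bits[len(word):], code):
                results.append([symbol] + rest)
    return results

# The code from the slide: not uniquely decodable.
code = {"a": "1", "b": "01", "c": "101", "d": "011"}
parses = decompositions("1011", code)
for parse in parses:
    print("".join(parse))
```

More than one printed parse means the sequence is ambiguous under this code.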

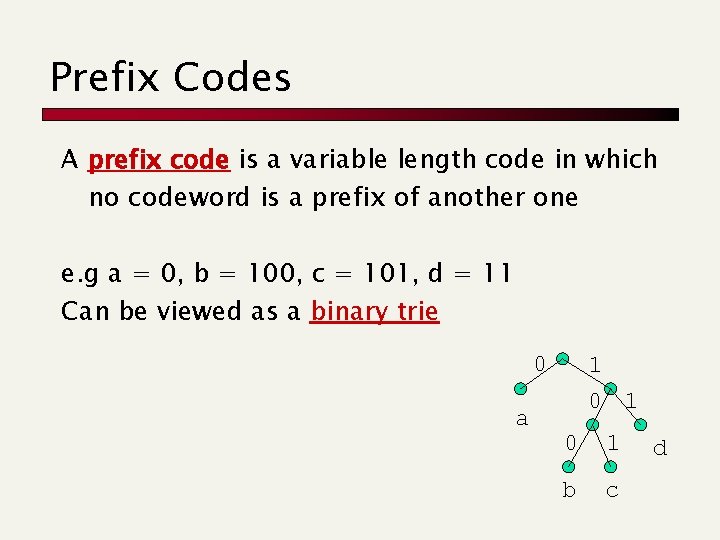

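Prefix Codes. A prefix code is a variable-length code in which no codeword is a prefix of another one, e.g. a = 0, b = 100, c = 101, d = 11. It can be viewed as a binary trie: from the root, edge 0 leads to leaf a; edge 1 leads to an internal node whose 1-edge is leaf d and whose 0-edge leads to a node with leaves b (edge 0) and c (edge 1).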

![Average Length](http://slidetodoc.com/presentation_image/611b2c7f3e1c49b75302aa96d0999cb5/image-4.jpg)

Average Length. For a code C with codeword lengths L[s], the average length is defined as $L_a(C) = \sum_{s} p(s) \cdot L[s]$. Example: p(A) = .7 with codeword 0, and p(B) = p(C) = p(D) = .1 with 3-bit codewords of the form 1--, give La = .7 * 1 + .3 * 3 = 1.6 bits (Huffman achieves 1.5 bits). We say that a prefix code C is optimal if, for all prefix codes C', La(C) ≤ La(C').
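The two averages quoted above can be reproduced with a few lines. The concrete codewords below are assumptions: the slide only gives the lengths (1 bit for A, 3 bits for the others), and the second table is one possible Huffman code for these probabilities.

```python
def average_length(p, code):
    """Weighted average of codeword lengths: sum of p(s) * L[s]."""
    return sum(p[s] * len(code[s]) for s in p)

p = {"A": 0.7, "B": 0.1, "C": 0.1, "D": 0.1}

# Hypothetical codewords matching the lengths on the slide (0 and 1--).
fixed = {"A": "0", "B": "100", "C": "101", "D": "110"}
# One possible Huffman code for the same probabilities.
huff = {"A": "0", "B": "10", "C": "110", "D": "111"}

print(round(average_length(p, fixed), 3))
print(round(average_length(p, huff), 3))
```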

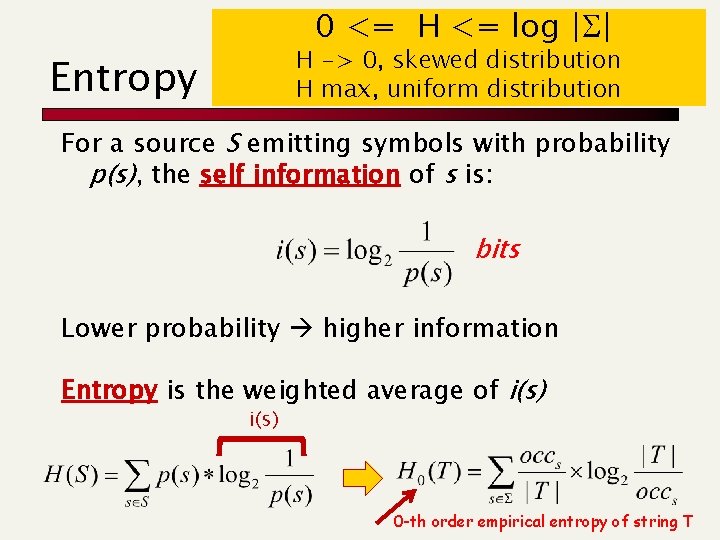

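Entropy (Shannon, 1948). For a source S emitting symbols with probability p(s), the self-information of s is $i(s) = \log_2 \frac{1}{p(s)}$ bits: lower probability means higher information. Entropy is the weighted average of i(s): $H(S) = \sum_{s} p(s) \log_2 \frac{1}{p(s)}$. It satisfies $0 \le H \le \log_2 |\Sigma|$, where Σ is the alphabet: H → 0 for a skewed distribution, and H is maximum for the uniform distribution. The 0-th order empirical entropy of a string T uses the empirical frequencies occ(s)/|T| in place of p(s): $H_0(T) = \sum_{s} \frac{occ(s)}{|T|} \log_2 \frac{|T|}{occ(s)}$.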
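As a small numeric check of these definitions, the following sketch computes the entropy of a distribution and the 0-th order empirical entropy of a string. The function names are illustrative choices, not taken from the slides.

```python
import math
from collections import Counter

def entropy(probabilities):
    """H = sum of p * log2(1/p), skipping zero-probability symbols."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)

def empirical_entropy(text):
    """0-th order empirical entropy: p(s) is estimated as occ(s) / |T|."""
    n = len(text)
    return sum((occ / n) * math.log2(n / occ) for occ in Counter(text).values())

print(round(entropy([0.7, 0.1, 0.1, 0.1]), 2))   # skewed distribution
print(entropy([0.25, 0.25, 0.25, 0.25]))         # uniform: log2 of alphabet size
print(empirical_entropy("aaaa"))                 # a single symbol carries no information
```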

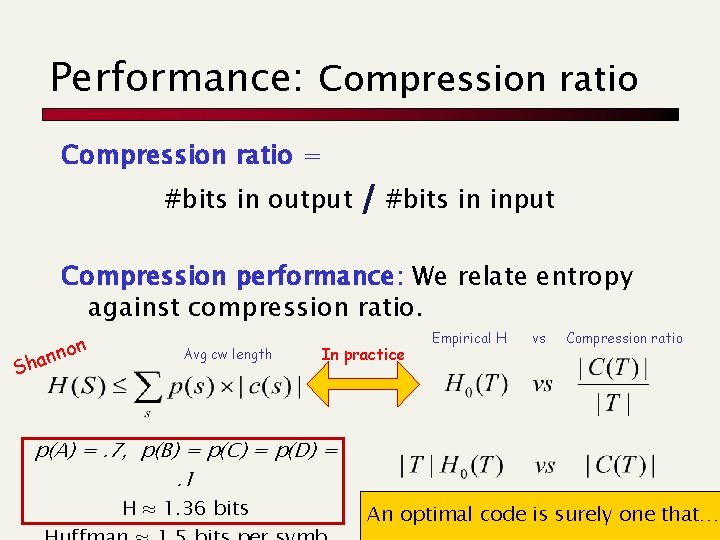

Performance: Compression ratio. Compression ratio = #bits in output / #bits in input. Compression performance: we relate entropy to compression ratio. Shannon: entropy H vs. average codeword length. In practice: empirical H vs. compression ratio. Example: p(A) = .7, p(B) = p(C) = p(D) = .1 gives H ≈ 1.36 bits. An optimal code is surely one that…

Index construction: Compression of postings. Reading 5.3 and a paper.

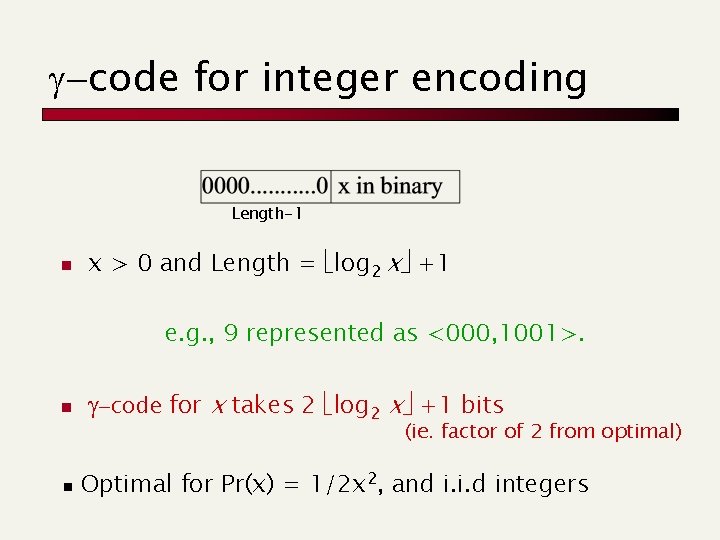

γ-code for integer encoding. The γ-code of x > 0 is (Length − 1) zeros followed by x in binary, where Length = ⌊log2 x⌋ + 1; e.g., 9 is represented as <000, 1001>. The γ-code for x takes 2⌊log2 x⌋ + 1 bits (i.e., a factor of 2 from optimal). It is optimal for Pr(x) = 1/(2x²) and i.i.d. integers.

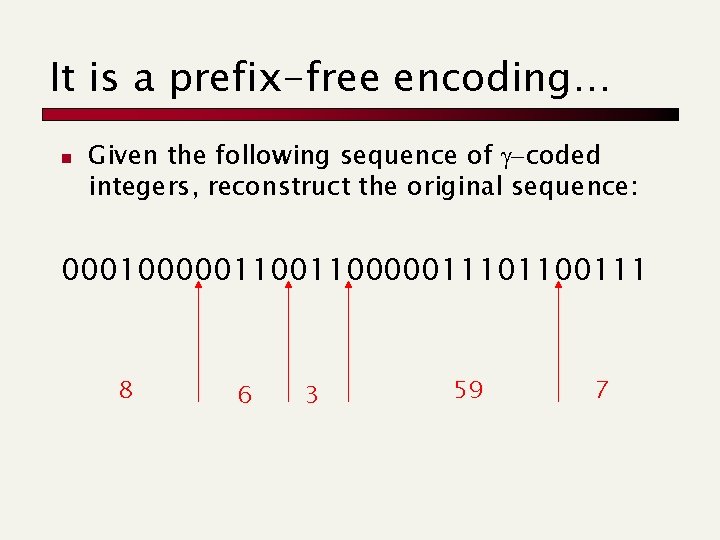

It is a prefix-free encoding… Given the following sequence of γ-coded integers, reconstruct the original sequence: 0001000001100110000011101100111. Answer: 8, 6, 3, 59, 7.
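A minimal sketch of gamma (γ) coding on bit strings, assuming the usual definition (Length − 1 zeros followed by the binary representation of x). Bits are kept as Python strings of '0' and '1' for readability; a real implementation would pack them.

```python
def gamma_encode(x):
    """Gamma code: (Length - 1) zeros, then x in binary."""
    if x <= 0:
        raise ValueError("gamma codes are defined for x > 0 only")
    binary = bin(x)[2:]
    return "0" * (len(binary) - 1) + binary

def gamma_decode(bits):
    """Decode a concatenation of gamma codes into a list of integers."""
    values, i = [], 0
    while i < len(bits):
        zeros = 0
        while bits[i] == "0":          # unary part: Length - 1
            zeros += 1
            i += 1
        values.append(int(bits[i:i + zeros + 1], 2))
        i += zeros + 1
    return values

stream = "".join(gamma_encode(x) for x in [8, 6, 3, 59, 7])
print(stream)
print(gamma_decode(stream))
```

Because no codeword is a prefix of another, the decoder needs no separators between consecutive integers.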

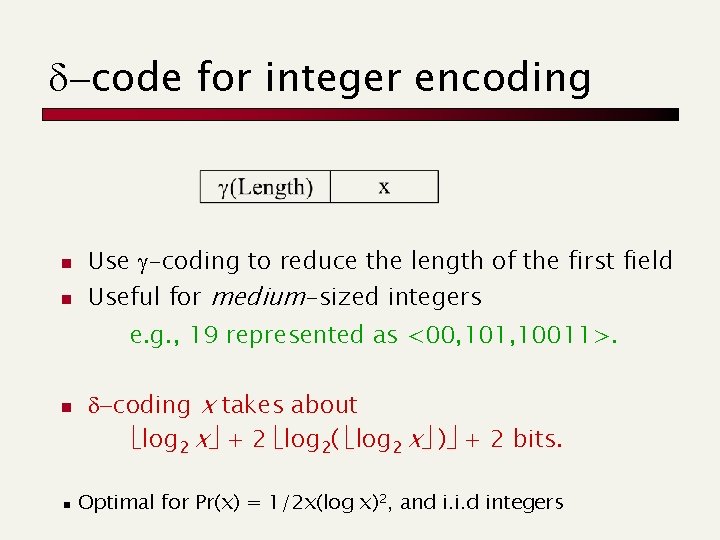

δ-code for integer encoding. Use γ-coding to reduce the length of the first field: the δ-code of x is the γ-code of Length followed by x in binary. Useful for medium-sized integers; e.g., 19 is represented as <00, 101, 10011>. δ-coding x takes about log2 x + 2 log2(log2 x) + 2 bits. It is optimal for Pr(x) = 1/(2x (log x)²) and i.i.d. integers.
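The example for 19 can be reproduced with the sketch below. It follows the variant shown on the slide, where the full binary representation of x is written after the gamma-coded length; note that this is an assumption drawn from the example, and that other presentations of Elias delta drop the leading 1 bit of x.

```python
def gamma_encode(x):
    """Gamma code: (Length - 1) zeros, then x in binary."""
    binary = bin(x)[2:]
    return "0" * (len(binary) - 1) + binary

def delta_encode(x):
    """Delta code (slide variant): gamma(Length), then x in binary."""
    if x <= 0:
        raise ValueError("delta codes are defined for x > 0 only")
    binary = bin(x)[2:]
    return gamma_encode(len(binary)) + binary

def delta_decode(bits):
    """Decode a concatenation of delta codes into a list of integers."""
    values, i = [], 0
    while i < len(bits):
        zeros = 0
        while bits[i] == "0":                    # unary part of gamma(Length)
            zeros += 1
            i += 1
        length = int(bits[i:i + zeros + 1], 2)   # Length, gamma-decoded
        i += zeros + 1
        values.append(int(bits[i:i + length], 2))
        i += length
    return values

print(delta_encode(19))
print(delta_decode(delta_encode(19) + delta_encode(1) + delta_encode(1000)))
```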

![Variable-byte codes](http://slidetodoc.com/presentation_image/611b2c7f3e1c49b75302aa96d0999cb5/image-11.jpg)

Variable-byte codes [10.2 bits per TREC12]. We wish to get very fast (de)compression, so we byte-align. Given the binary representation of an integer:

- Prepend 0s to the front, to get a multiple-of-7 number of bits.
- Form groups of 7 bits each.
- Tag each group with one extra bit: 0 for the last group, 1 for the other groups.

E.g., v = 2^14 + 1 has binary(v) = 100000000000001, which is encoded as 10000001 10000000 00000001. Note: we waste 1 bit per byte, and on average 4 bits in the first byte. But it is a prefix code, and it also encodes the value 0!
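The tagging scheme can be sketched as follows. Placing the tag in the most significant bit of each byte is an assumption of this sketch; the slide only states that a tag bit is attached to every 7-bit group.

```python
def vbyte_encode(v):
    """Split v into 7-bit groups; tag the last group with 0, the others with 1."""
    if v < 0:
        raise ValueError("only non-negative integers are supported")
    groups = [v & 0x7F]
    v >>= 7
    while v > 0:
        groups.append(v & 0x7F)
        v >>= 7
    groups.reverse()                       # most significant group first
    return bytes([g | 0x80 for g in groups[:-1]] + [groups[-1]])

def vbyte_decode(data):
    """Decode a concatenation of variable-byte codes."""
    values, current = [], 0
    for byte in data:
        current = (current << 7) | (byte & 0x7F)
        if byte & 0x80 == 0:               # tag 0 marks the last group
            values.append(current)
            current = 0
    return values

encoded = vbyte_encode(2**14 + 1)
print(" ".join(f"{b:08b}" for b in encoded))
print(vbyte_decode(vbyte_encode(0) + encoded + vbyte_encode(127)))
```

Decoding touches whole bytes only, which is where the speed comes from compared with bit-level codes.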

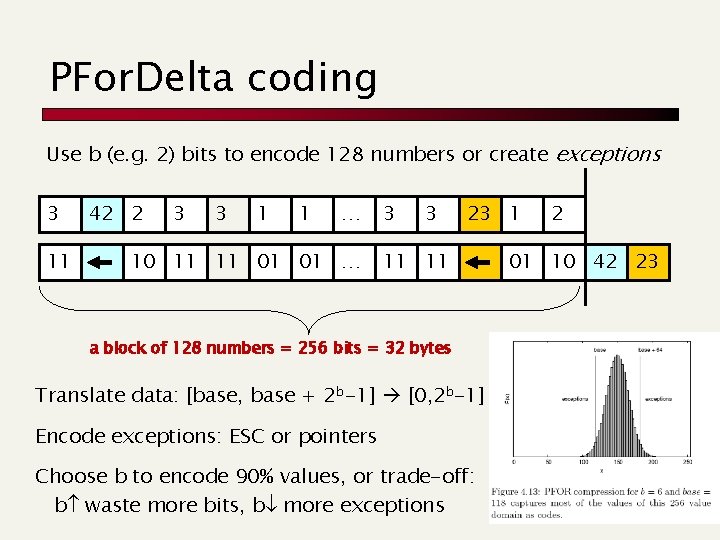

PForDelta coding. Use b (e.g. 2) bits to encode each of 128 numbers, or create exceptions. A block of 128 numbers then takes 256 bits = 32 bytes.

[Figure: the block 3 42 2 3 3 1 1 … 3 3 23 1 2 is coded with 2 bits per number (3 → 11, 2 → 10, 1 → 01); 42 and 23 do not fit and are stored as exceptions.]

- Translate data: [base, base + 2^b − 1] → [0, 2^b − 1].
- Encode exceptions: ESC or pointers.
- Choose b to encode 90% of the values, or trade off: a larger b wastes more bits, a smaller b gives more exceptions.
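A simplified sketch of the idea, not the actual PForDelta layout: it picks the smallest b that covers a chosen fraction of the (base-translated) values and records the rest as (position, value) exceptions, i.e. the "pointers" option. The function names, the `coverage` parameter and the exception format are all assumptions made for illustration, and values are kept in Python lists rather than packed into bits.

```python
import math

def pfor_encode(block, coverage=0.9):
    """Return (base, b, slots, exceptions) for one block of integers."""
    base = min(block)
    shifted = [v - base for v in block]           # translate to [0, ...]
    ranked = sorted(shifted)
    # Smallest value bound such that at least `coverage` of the block fits.
    cutoff = ranked[max(0, math.ceil(coverage * len(ranked)) - 1)]
    b = max(1, cutoff.bit_length())
    slots, exceptions = [], []
    for pos, v in enumerate(shifted):
        if v < (1 << b):
            slots.append(v)                       # fits in b bits
        else:
            slots.append(0)                       # placeholder slot
            exceptions.append((pos, v))           # stored separately
    return base, b, slots, exceptions

def pfor_decode(base, b, slots, exceptions):
    values = list(slots)
    for pos, v in exceptions:
        values[pos] = v                           # patch exceptions back in
    return [v + base for v in values]

block = [3, 42, 2, 3, 3, 1, 1, 3, 3, 23, 1, 2]
base, b, slots, exceptions = pfor_encode(block, coverage=0.8)
print(b, exceptions)
```

On this short block a coverage of 0.8 is used so that both large values become exceptions; with 128 numbers per block the 90% rule from the slide plays the same role.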

Index construction: Compression of documents. Reading: Managing Gigabytes, pp. 21-36, 52-56, 74-79.

Raw docs are needed

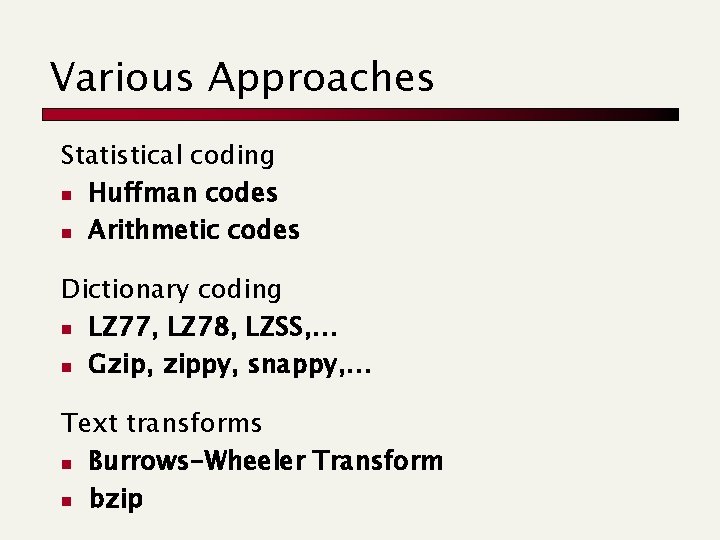

Various Approaches.

- Statistical coding: Huffman codes, Arithmetic codes.
- Dictionary coding: LZ77, LZ78, LZSS, …; Gzip, zippy, snappy, …
- Text transforms: Burrows-Wheeler Transform; bzip.

Document Compression: Huffman coding

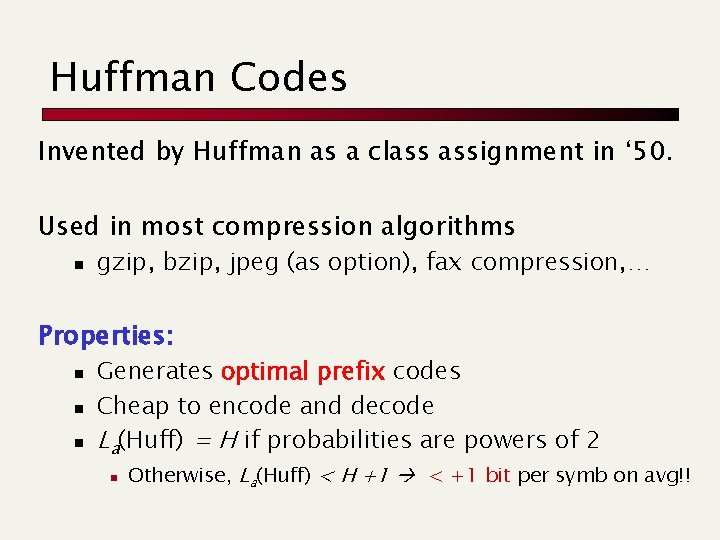

Huffman Codes. Invented by Huffman as a class assignment in '50. Used in most compression algorithms: gzip, bzip, jpeg (as option), fax compression, …

Properties:

- Generates optimal prefix codes.
- Cheap to encode and decode.
- La(Huff) = H if probabilities are powers of 2; otherwise, La(Huff) < H + 1, i.e. less than +1 bit per symbol on average!

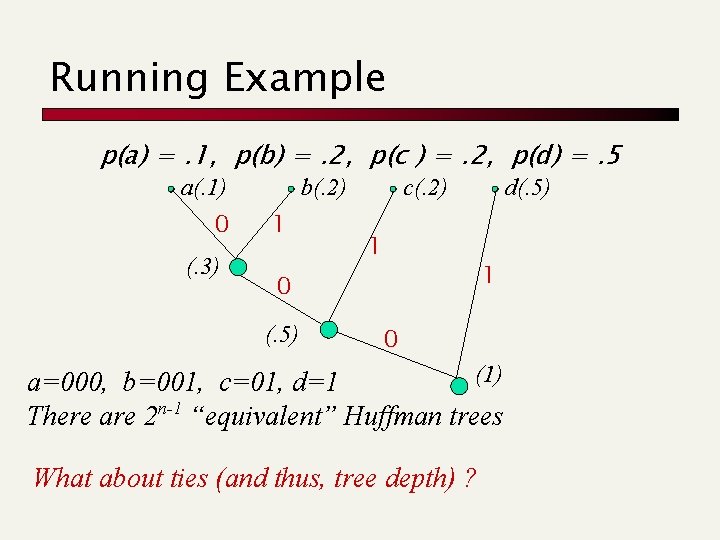

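Running Example. p(a) = .1, p(b) = .2, p(c) = .2, p(d) = .5. Merge a(.1) and b(.2) into a node of weight (.3); merge (.3) and c(.2) into (.5); merge (.5) and d(.5) into the root (1). Labeling the edges gives a = 000, b = 001, c = 01, d = 1. There are 2^(n−1) "equivalent" Huffman trees, obtained by swapping the 0/1 labels at the n − 1 internal nodes. What about ties (and thus, tree depth)?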
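The greedy merging in the running example can be sketched with a priority queue. This is a minimal illustration, assuming ties are broken by insertion order (an arbitrary choice; the slide leaves tie-breaking open), so the bit labels may differ from the slide while the codeword lengths of a and d, and the average length, stay the same.

```python
import heapq
from itertools import count

def huffman_code(probs):
    """Build a Huffman code by repeatedly merging the two lightest subtrees."""
    tiebreak = count()   # unique counter so equal weights never compare dicts
    heap = [(p, next(tiebreak), {s: ""}) for s, p in probs.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        p0, _, left = heapq.heappop(heap)
        p1, _, right = heapq.heappop(heap)
        # Prepend one bit to every codeword in each merged subtree.
        merged = {s: "0" + w for s, w in left.items()}
        merged.update({s: "1" + w for s, w in right.items()})
        heapq.heappush(heap, (p0 + p1, next(tiebreak), merged))
    return heap[0][2]

probs = {"a": 0.1, "b": 0.2, "c": 0.2, "d": 0.5}
code = huffman_code(probs)
avg = sum(probs[s] * len(code[s]) for s in probs)
print(code, round(avg, 2))
```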

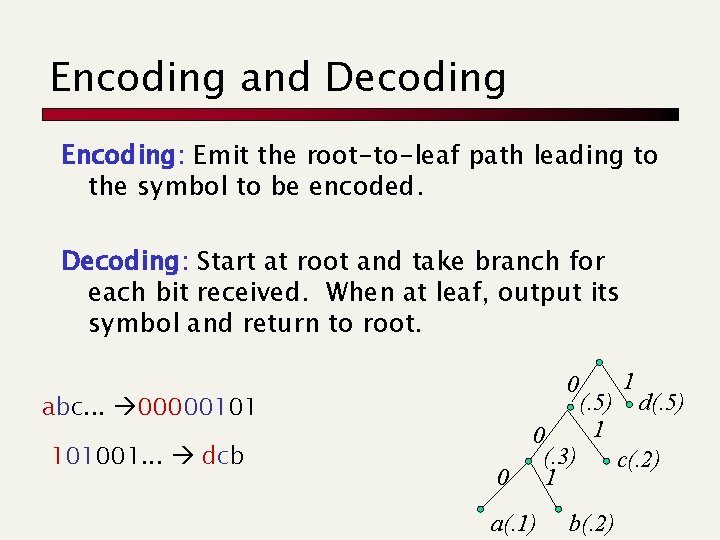

Encoding and Decoding. Encoding: emit the root-to-leaf path leading to the symbol to be encoded. Decoding: start at the root and take the branch for each bit received; when at a leaf, output its symbol and return to the root. With the tree of the running example (a = 000, b = 001, c = 01, d = 1): abc… → 00000101…, and 101001… → dcb…
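Both directions can be sketched with the code table of the running example. The trie is represented here as nested dictionaries, which is only one possible choice.

```python
CODE = {"a": "000", "b": "001", "c": "01", "d": "1"}

def encode(text, code):
    """Concatenate the root-to-leaf path (codeword) of every symbol."""
    return "".join(code[s] for s in text)

def decode(bits, code):
    """Walk the binary trie bit by bit; emit a symbol at each leaf."""
    root = {}
    for symbol, word in code.items():
        node = root
        for bit in word[:-1]:
            node = node.setdefault(bit, {})
        node[word[-1]] = symbol            # leaves hold the symbol itself
    out, node = [], root
    for bit in bits:
        node = node[bit]
        if isinstance(node, str):          # reached a leaf
            out.append(node)
            node = root                    # return to the root
    return "".join(out)

print(encode("abc", CODE))
print(decode("101001", CODE))
```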

Huffman in practice. The compressed file of n symbols consists of:

- Preamble: tree encoding + symbols in leaves.
- Body: compressed text of n symbols.

Preamble = Θ(|Σ| log |Σ|) bits. Body is at least nH and at most nH + n bits. The extra +n is bad for very skewed distributions, namely ones for which H → 0. Example: p(a) = 1/n, p(b) = (n−1)/n.

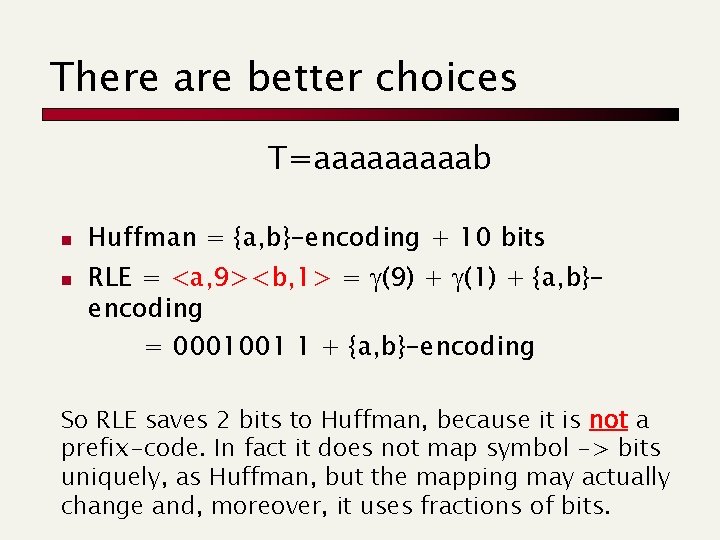

There are better choices. T = aaaaaaaaab (nine a's followed by one b).

- Huffman = {a, b}-encoding + 10 bits.
- RLE = <a, 9><b, 1> = γ(9) + γ(1) + {a, b}-encoding = 0001001 1 + {a, b}-encoding.

So RLE saves 2 bits with respect to Huffman, because it is not a prefix code. In fact it does not map symbol → bits uniquely, as Huffman does, but the mapping may actually change and, moreover, it uses fractions of bits.
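The bit counts can be verified with a short sketch, taking T as nine a's followed by one b, as the runs <a, 9><b, 1> indicate. Only the run lengths are gamma-coded here; the order of the symbols is assumed to be carried by the {a, b}-encoding preamble, which both methods pay for.

```python
from itertools import groupby

def gamma_encode(x):
    """Gamma code: (Length - 1) zeros, then x in binary."""
    binary = bin(x)[2:]
    return "0" * (len(binary) - 1) + binary

def rle_gamma(text):
    """Gamma-code the length of every maximal run of equal symbols."""
    return "".join(gamma_encode(len(list(run))) for _, run in groupby(text))

T = "a" * 9 + "b"
huffman_bits = len(T)            # two symbols: Huffman spends 1 bit per symbol
rle_bits = len(rle_gamma(T))
print(rle_gamma(T), huffman_bits - rle_bits)
```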

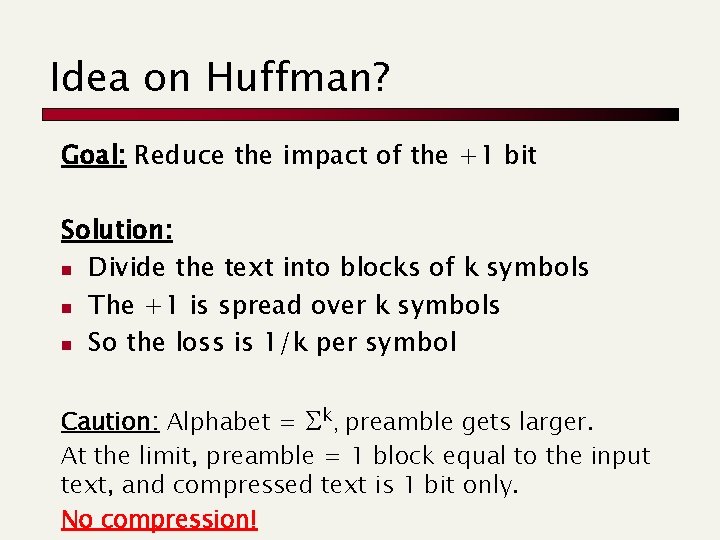

Idea on Huffman? Goal: reduce the impact of the +1 bit. Solution:

- Divide the text into blocks of k symbols.
- The +1 is spread over k symbols.
- So the loss is 1/k per symbol.

Caution: Alphabet = Σ^k, so the preamble gets larger. At the limit, the preamble is 1 block equal to the input text, and the compressed text is 1 bit only. No compression!

Document Compression: Arithmetic coding

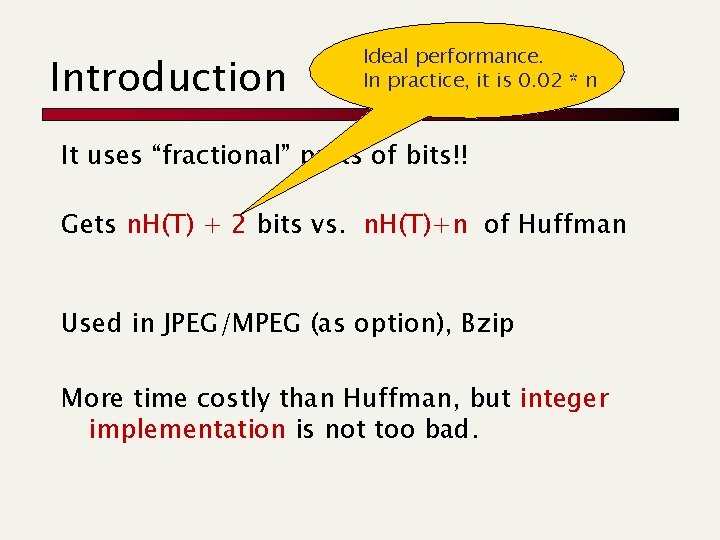

Introduction. It uses "fractional" parts of bits! Gets nH(T) + 2 bits vs. nH(T) + n of Huffman; this is the ideal performance, and in practice it is 0.02 * n. Used in JPEG/MPEG (as option), bzip. More time-costly than Huffman, but the integer implementation is not too bad.

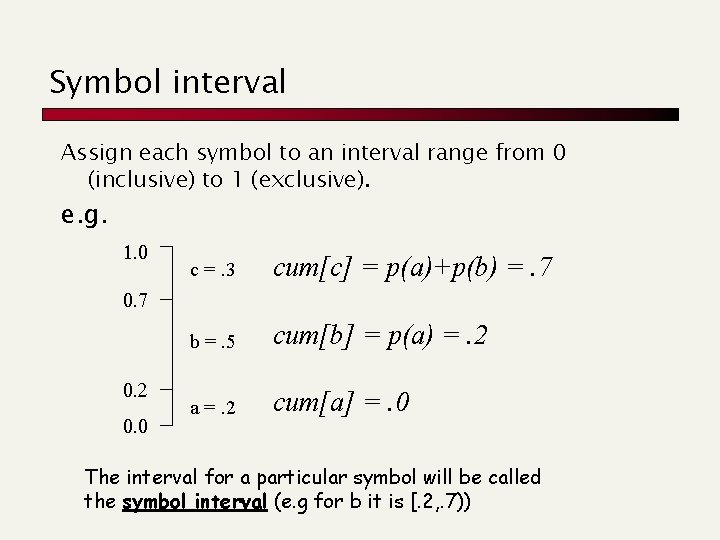

Symbol interval. Assign each symbol an interval within the range from 0 (inclusive) to 1 (exclusive), e.g. with p(a) = .2, p(b) = .5, p(c) = .3: cum[a] = .0, cum[b] = p(a) = .2, cum[c] = p(a) + p(b) = .7, so a gets [0, .2), b gets [.2, .7) and c gets [.7, 1.0). The interval for a particular symbol will be called the symbol interval (e.g. for b it is [.2, .7)).

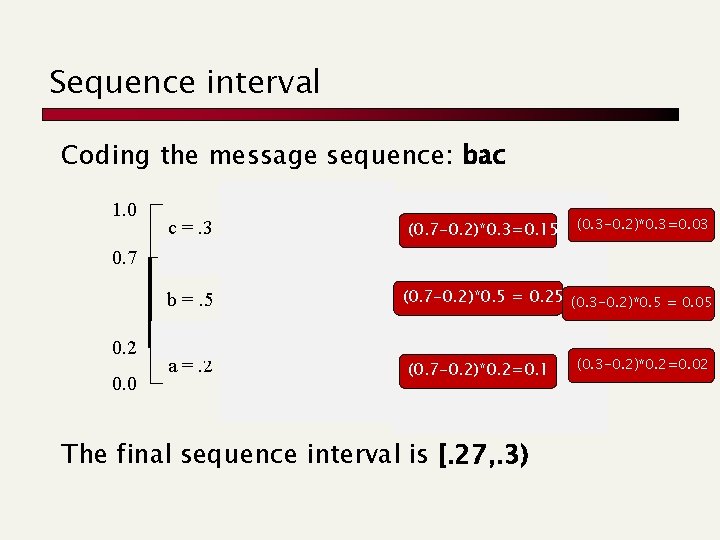

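Sequence interval. Coding the message sequence: bac.

- Start from [0, 1.0). Symbol b selects [.2, .7).
- Split [.2, .7): a gets (0.7 − 0.2) * 0.2 = 0.1, i.e. [.2, .3); b gets (0.7 − 0.2) * 0.5 = 0.25, i.e. [.3, .55); c gets (0.7 − 0.2) * 0.3 = 0.15, i.e. [.55, .7). Symbol a selects [.2, .3).
- Split [.2, .3): a gets (0.3 − 0.2) * 0.2 = 0.02, i.e. [.2, .22); b gets (0.3 − 0.2) * 0.5 = 0.05, i.e. [.22, .27); c gets (0.3 − 0.2) * 0.3 = 0.03, i.e. [.27, .3). Symbol c selects [.27, .3).

The final sequence interval is [.27, .3).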

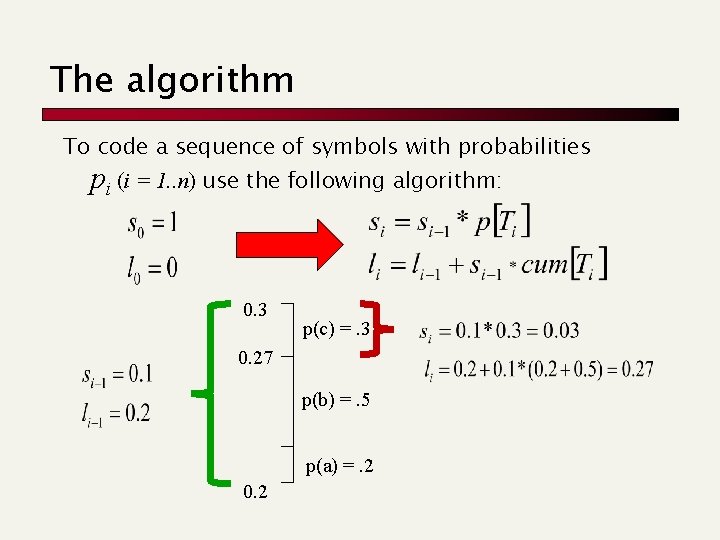

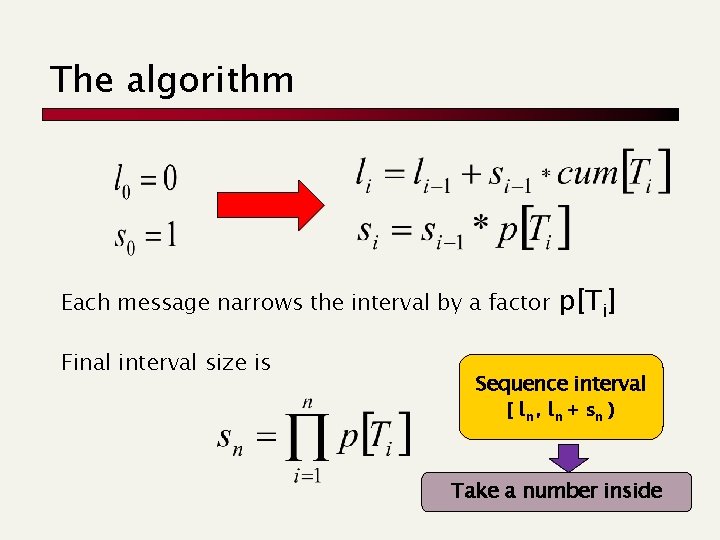

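The algorithm. To code a sequence of symbols T_1 … T_n with probabilities p(T_i) (i = 1..n), use the following algorithm: start with $l_0 = 0$ and $s_0 = 1$, and for each symbol set $l_i = l_{i-1} + s_{i-1} \cdot cum[T_i]$ and $s_i = s_{i-1} \cdot p(T_i)$. E.g., from the interval [.2, .3) with p(a) = .2, p(b) = .5, p(c) = .3, symbol c yields [.27, .3).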

The algorithm. Each message (symbol) narrows the interval by a factor p[T_i]. The final interval size is $s_n = \prod_{i=1}^{n} p[T_i]$. The sequence interval is $[l_n, l_n + s_n)$. Take a number inside.
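The interval narrowing can be sketched with exact rational arithmetic, which avoids floating-point rounding in this small example. The names `cumulative` and `sequence_interval` are illustrative, and the symbol order a, b, c used for the cumulative probabilities is the one from the symbol-interval example.

```python
from fractions import Fraction

P = {"a": Fraction(2, 10), "b": Fraction(5, 10), "c": Fraction(3, 10)}

def cumulative(p):
    """cum[s] = total probability of the symbols listed before s."""
    cum, total = {}, Fraction(0)
    for s in p:
        cum[s] = total
        total += p[s]
    return cum

def sequence_interval(message, p):
    """Return (low, size) of the interval [low, low + size) for `message`."""
    cum = cumulative(p)
    low, size = Fraction(0), Fraction(1)
    for s in message:
        low += size * cum[s]     # move to the start of the symbol's sub-interval
        size *= p[s]             # shrink by the symbol's probability
    return low, size

low, size = sequence_interval("bac", P)
print(float(low), float(low + size))
```

A practical coder works with fixed-width integers and renormalization instead of unbounded fractions; this sketch only mirrors the definition.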

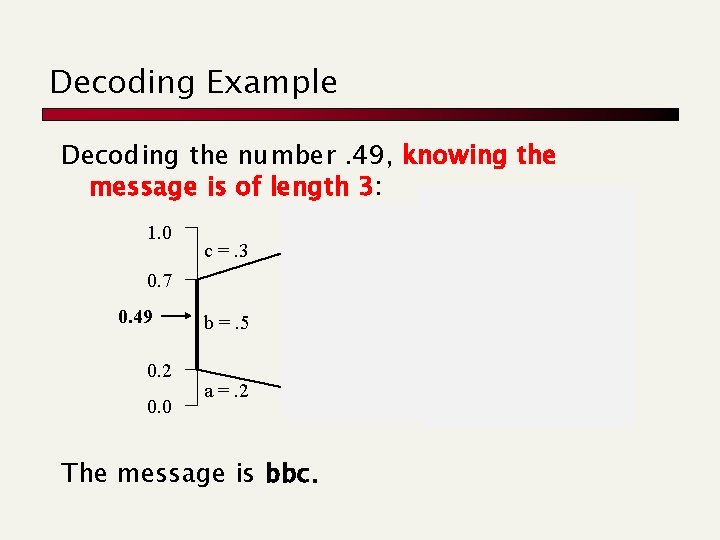

Decoding Example. Decoding the number .49, knowing the message is of length 3:

- .49 falls in [.2, .7), the interval of b.
- Split [.2, .7) into a = [.2, .3), b = [.3, .55), c = [.55, .7): .49 falls in b.
- Split [.3, .55) into a = [.3, .35), b = [.35, .475), c = [.475, .55): .49 falls in c.

The message is bbc.
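Decoding can be sketched by rescaling the number instead of the interval: find the symbol whose interval contains x, then map x back to [0, 1) and repeat. This is an equivalent formulation chosen for brevity, again with exact fractions and illustrative names.

```python
from fractions import Fraction

P = {"a": Fraction(2, 10), "b": Fraction(5, 10), "c": Fraction(3, 10)}

def cumulative(p):
    """cum[s] = total probability of the symbols listed before s."""
    cum, total = {}, Fraction(0)
    for s in p:
        cum[s] = total
        total += p[s]
    return cum

def arithmetic_decode(x, length, p):
    """Recover `length` symbols from a number x inside the sequence interval."""
    cum = cumulative(p)
    out = []
    for _ in range(length):
        for s in p:
            if cum[s] <= x < cum[s] + p[s]:
                out.append(s)
                x = (x - cum[s]) / p[s]    # rescale x to [0, 1)
                break
    return "".join(out)

print(arithmetic_decode(Fraction(49, 100), 3, P))
```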

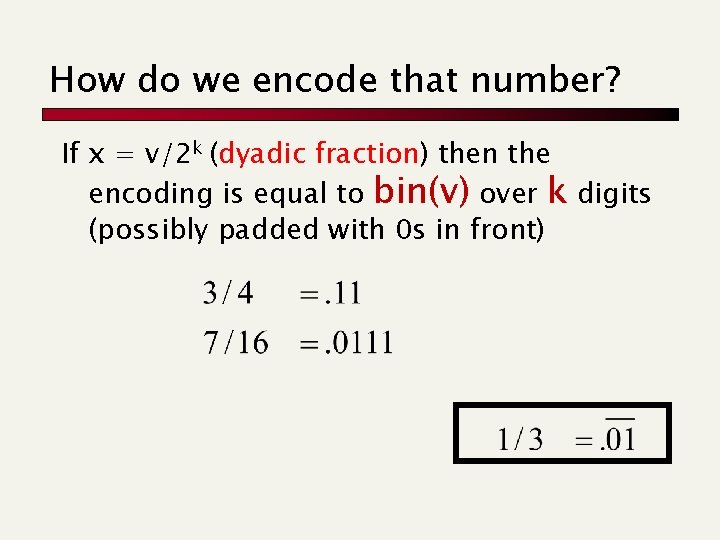

How do we encode that number? If x = v/2^k (dyadic fraction), then the encoding is equal to bin(v) over k digits (possibly padded with 0s in front).

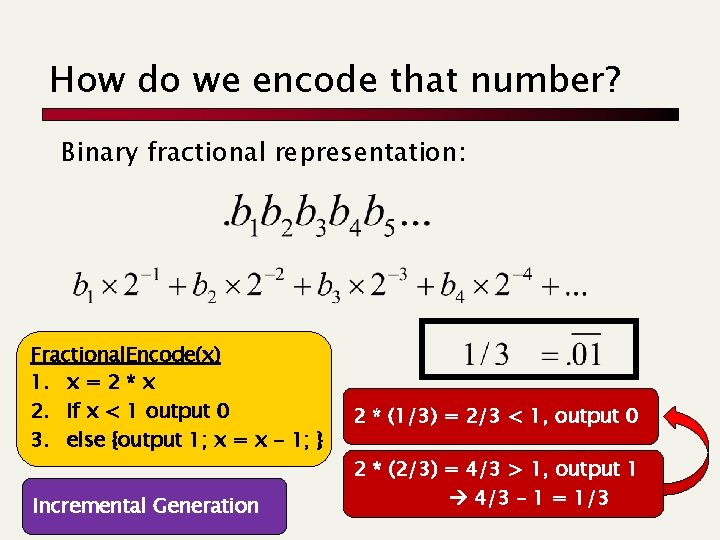

How do we encode that number? Binary fractional representation. FractionalEncode(x), repeated for each output bit:

1. x = 2 * x
2. If x < 1, output 0
3. else {output 1; x = x − 1}

Incremental generation, e.g. for x = 1/3: 2 * (1/3) = 2/3 < 1, output 0; 2 * (2/3) = 4/3 > 1, output 1; 4/3 − 1 = 1/3, and the pattern repeats.
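The three steps translate directly into a loop. The sketch below adds a `bits` parameter (an assumption, since the pseudocode does not say when to stop) and uses exact fractions so that 1/3 is represented without rounding.

```python
from fractions import Fraction

def fractional_encode(x, bits):
    """Emit the first `bits` binary digits of x, for 0 <= x < 1."""
    out = []
    for _ in range(bits):
        x = 2 * x
        if x < 1:
            out.append("0")
        else:
            out.append("1")
            x = x - 1
    return "".join(out)

print(fractional_encode(Fraction(1, 3), 6))   # periodic expansion
print(fractional_encode(Fraction(3, 8), 3))   # dyadic: bin(3) over 3 digits
```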

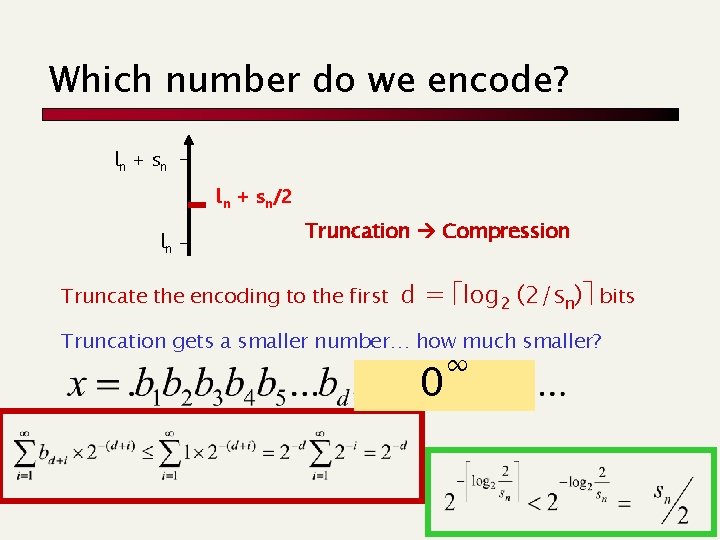

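Which number do we encode? We encode the midpoint l_n + s_n/2 of the sequence interval [l_n, l_n + s_n). Compression comes from truncation: truncate the encoding to the first d = ⌈log2(2/s_n)⌉ bits. Truncation gets a smaller number… how much smaller? The dropped bits are worth at most 2^(−d) ≤ s_n/2, so the truncated number is still at least l_n and stays inside the interval.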
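As a check of the truncation argument, the sketch below takes the interval [.27, .3) obtained for bac, encodes its midpoint, and keeps the first d bits. The function name is hypothetical, and d is computed through a floating-point logarithm, which is adequate for this example but could be off by one when 2/s_n is extremely close to a power of two.

```python
import math
from fractions import Fraction

def truncated_code(low, size):
    """Return the first d bits of the midpoint and the number they denote."""
    mid = low + size / 2
    d = math.ceil(math.log2(float(2 / size)))
    bits, x = [], mid
    for _ in range(d):                 # binary fractional expansion of mid
        x = 2 * x
        if x < 1:
            bits.append("0")
        else:
            bits.append("1")
            x = x - 1
    bits = "".join(bits)
    return bits, Fraction(int(bits, 2), 2 ** d)

low, size = Fraction(27, 100), Fraction(3, 100)   # interval of "bac"
bits, value = truncated_code(low, size)
print(bits, float(value))
```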

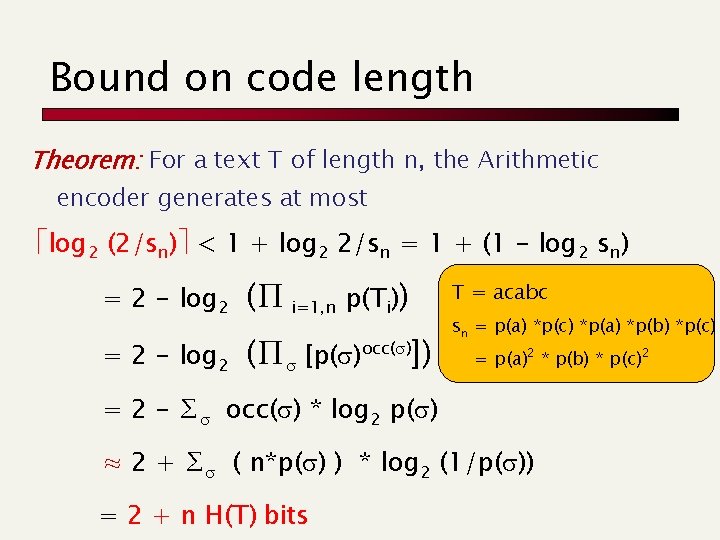

Bound on code length. Theorem: For a text T of length n, the arithmetic encoder generates at most

$$\begin{aligned}
\lceil \log_2 (2/s_n) \rceil &< 1 + \log_2 (2/s_n) = 1 + (1 - \log_2 s_n) \\
&= 2 - \log_2 \prod_{i=1}^{n} p(T_i) \\
&= 2 - \log_2 \prod_{s} p(s)^{occ(s)} \\
&= 2 - \sum_{s} occ(s) \log_2 p(s) \\
&\approx 2 + \sum_{s} (n \, p(s)) \log_2 \frac{1}{p(s)} = 2 + n H(T) \text{ bits.}
\end{aligned}$$

Example: for T = acabc, s_n = p(a) * p(c) * p(a) * p(b) * p(c) = p(a)^2 * p(b) * p(c)^2.

Document Compression: Dictionary-based compressors

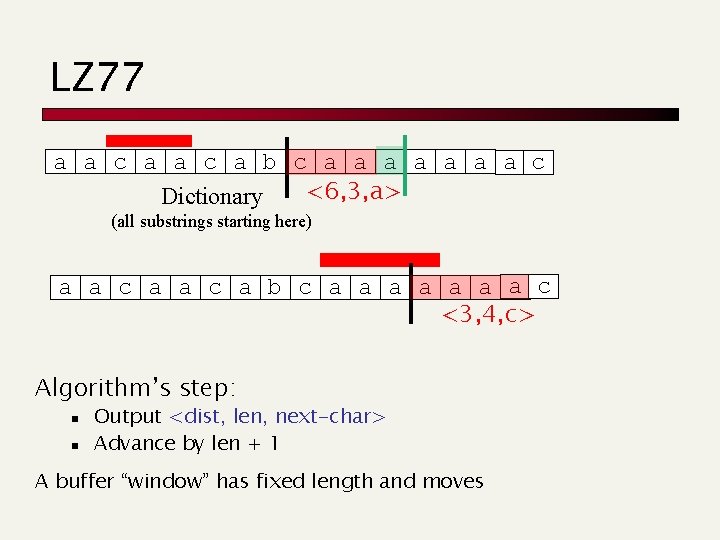

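LZ77. The dictionary consists of all substrings starting inside the window that precedes the cursor. Algorithm's step:

- Output <dist, len, next-char>.
- Advance by len + 1.

A buffer "window" has fixed length and moves.

[Figure: a text over {a, b, c} with the sliding window and the cursor; two consecutive steps output <6, 3, a> and <3, 4, c>.]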
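A brute-force sketch of the encoding step, assuming a window of the last `window` characters and a greedy choice of the longest match (ties go to the farthest match). Real implementations use hashing rather than this quadratic scan; the function name and the sample text are illustrative.

```python
def lz77_encode(text, window=6):
    """Return a list of (dist, len, next_char) triples for `text`."""
    triples, i = [], 0
    while i < len(text):
        best_len, best_dist = 0, 0
        for j in range(max(0, i - window), i):
            length = 0
            # The match may run past the cursor (overlap); stop one symbol
            # before the end so that a next-char always exists.
            while i + length < len(text) - 1 and text[j + length] == text[i + length]:
                length += 1
            if length > best_len:
                best_len, best_dist = length, i - j
        triples.append((best_dist, best_len, text[i + best_len]))
        i += best_len + 1              # advance by len + 1
    return triples

for triple in lz77_encode("aacaacabcabaaac"):
    print(triple)
```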

LZ77 Decoding. The decoder keeps the same dictionary window as the encoder: it finds the substring and inserts a copy of it. What if len > d (overlap with the text to be compressed)? E.g. seen = abcd, next codeword is (2, 9, e). Simply copy starting at the cursor: for (i = 0; i < len; i++) out[cursor+i] = out[cursor-d+i]. The output is correct: abcdcdcdcdcdce.
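The copy loop handles the overlapping case without special treatment, because each copied character is appended before it may be needed as a source. A minimal sketch, with triples in the <dist, len, next-char> form and an illustrative function name:

```python
def lz77_decode(triples):
    """Rebuild the text from (dist, len, next_char) triples."""
    out = []
    for dist, length, next_char in triples:
        start = len(out) - dist
        for k in range(length):
            # Character by character, so a copy with len > dist can read
            # symbols that this same copy has just written.
            out.append(out[start + k])
        out.append(next_char)
    return "".join(out)

# "abcd" emitted as literals, then the overlapping codeword (2, 9, e).
triples = [(0, 0, "a"), (0, 0, "b"), (0, 0, "c"), (0, 0, "d"), (2, 9, "e")]
print(lz77_decode(triples))
```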

LZ77 Optimizations used by gzip.

- LZSS: output one of the following formats: (0, position, length) or (1, char). Typically uses the second format if length < 3.
- Special greedy: possibly use a shorter match so that the next match is better.
- Hash table to speed up searches on triplets.
- Triples are coded with Huffman's code.

You find this at: www.gzip.org/zlib/

Google’s solution
