Basic linear regression and multiple regression Psych 437

Basic linear regression and multiple regression Psych 437 - Fraley

Example • Let’s say we wish to model the relationship between coffee consumption and happiness

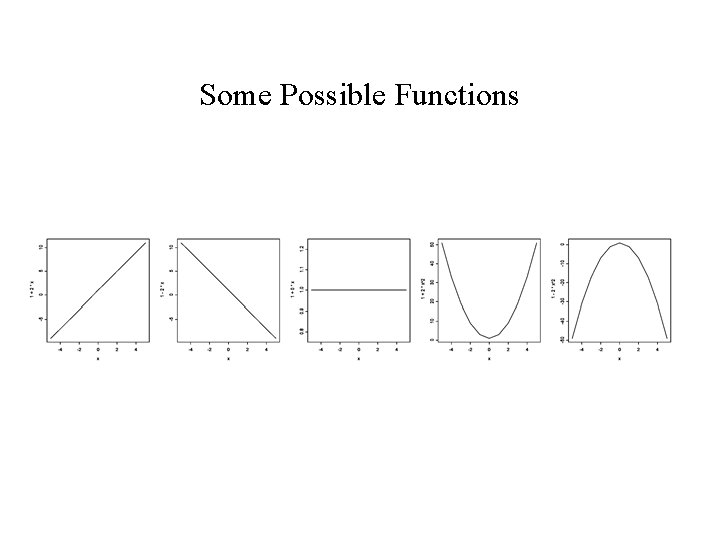

Some Possible Functions

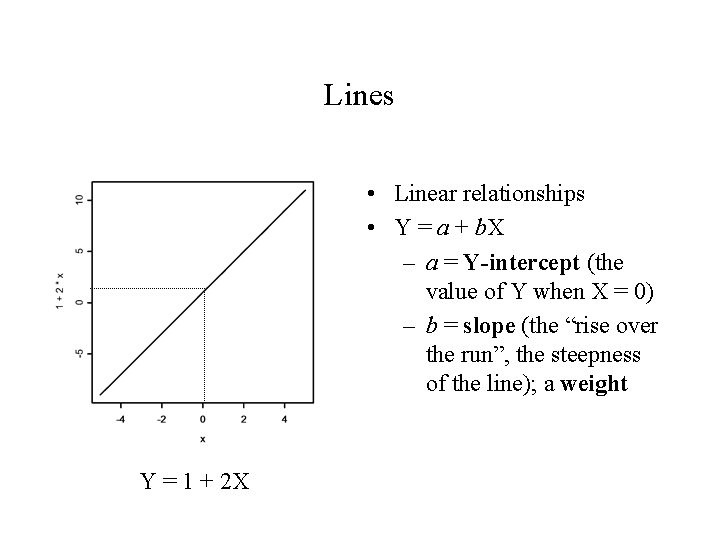

Lines • Linear relationships • Y = a + b. X – a = Y-intercept (the value of Y when X = 0) – b = slope (the “rise over the run”, the steepness of the line); a weight Y = 1 + 2 X

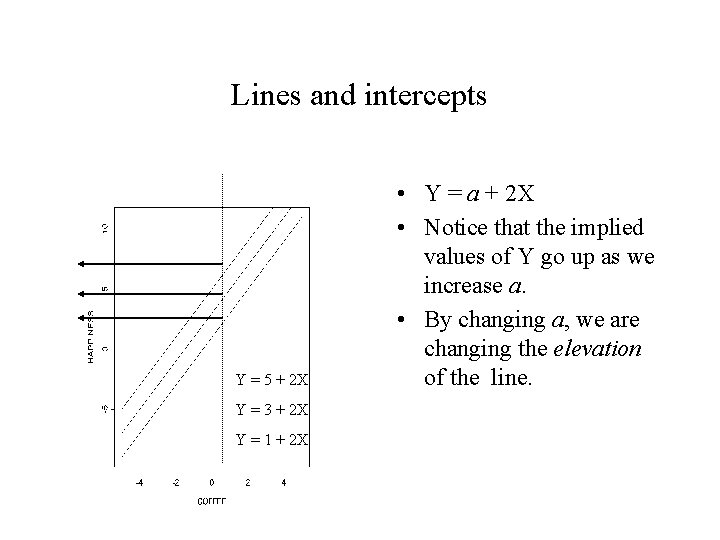

Lines and intercepts Y = 5 + 2 X Y = 3 + 2 X Y = 1 + 2 X • Y = a + 2 X • Notice that the implied values of Y go up as we increase a. • By changing a, we are changing the elevation of the line.

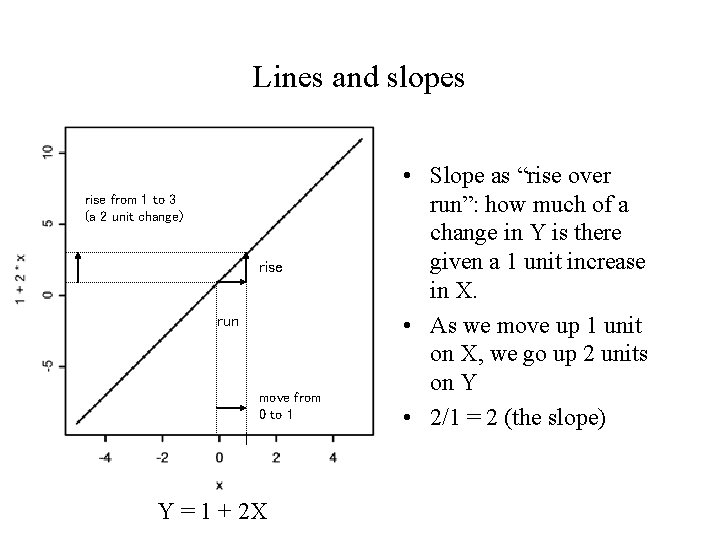

Lines and slopes rise from 1 to 3 (a 2 unit change) rise run move from 0 to 1 Y = 1 + 2 X • Slope as “rise over run”: how much of a change in Y is there given a 1 unit increase in X. • As we move up 1 unit on X, we go up 2 units on Y • 2/1 = 2 (the slope)

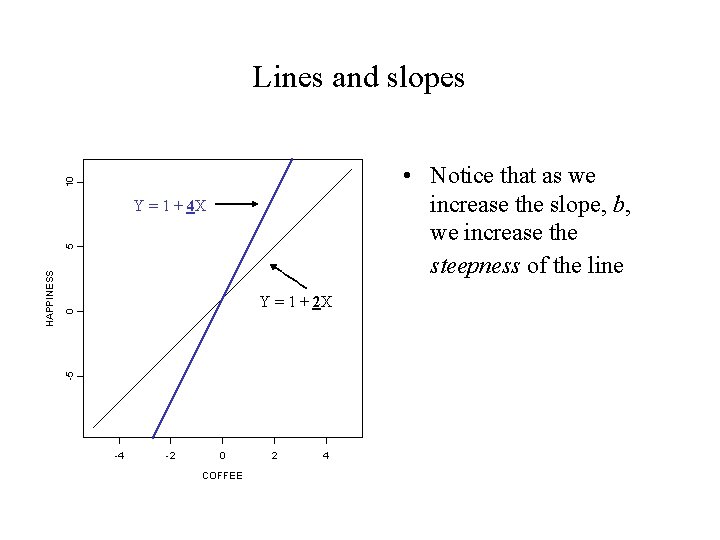

Lines and slopes 10 • Notice that as we increase the slope, b, we increase the steepness of the line 0 Y = 1 + 2 X -5 HAPPINESS 5 Y = 1 + 4 X -4 -2 0 COFFEE 2 4

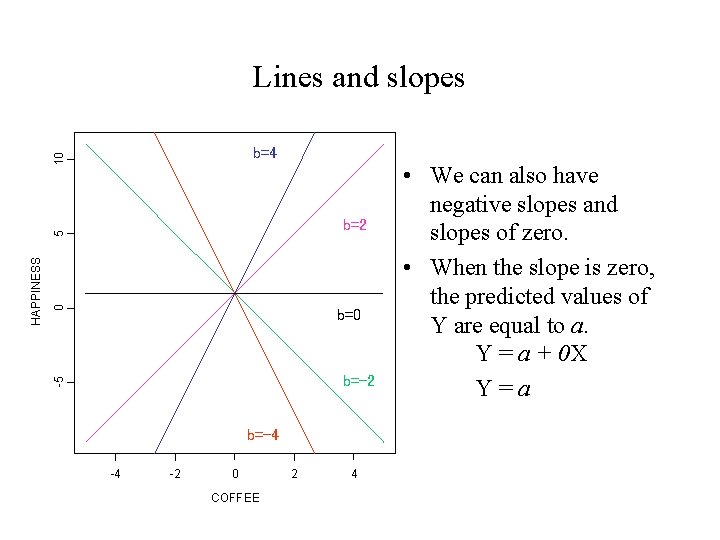

Lines and slopes 10 b=4 0 b=-2 -5 HAPPINESS 5 b=2 b=-4 -4 -2 0 COFFEE 2 4 • We can also have negative slopes and slopes of zero. • When the slope is zero, the predicted values of Y are equal to a. Y = a + 0 X Y=a

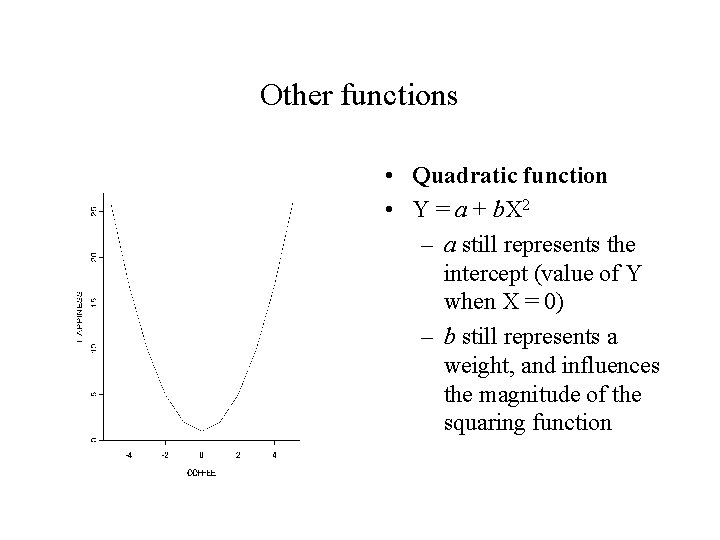

Other functions • Quadratic function • Y = a + b. X 2 – a still represents the intercept (value of Y when X = 0) – b still represents a weight, and influences the magnitude of the squaring function

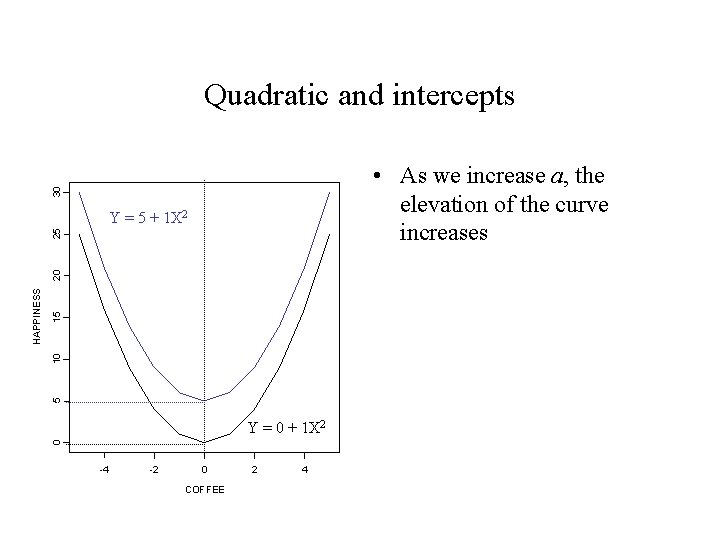

Quadratic and intercepts 30 • As we increase a, the elevation of the curve increases 15 10 5 Y = 0 + 1 X 2 0 HAPPINESS 20 25 Y = 5 + 1 X 2 -4 -2 0 COFFEE 2 4

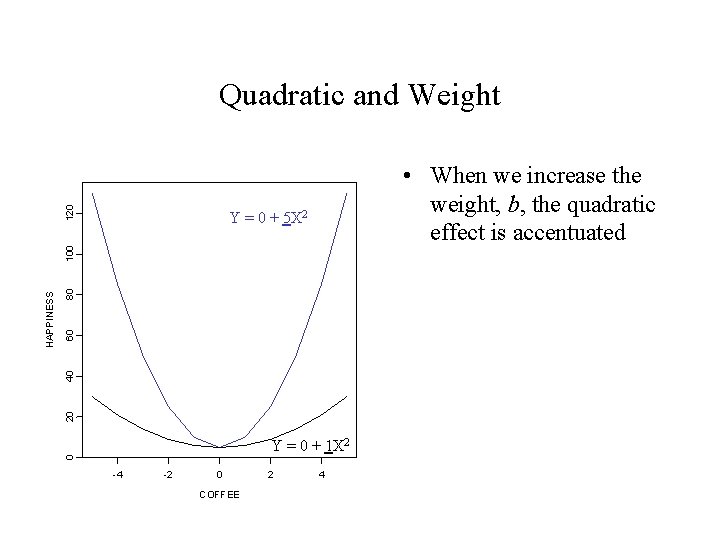

120 Quadratic and Weight • When we increase the weight, b, the quadratic effect is accentuated 80 60 40 20 Y = 0 + 1 X 2 0 HAPPINESS 100 Y = 0 + 5 X 2 -4 -2 0 COFFEE 2 4

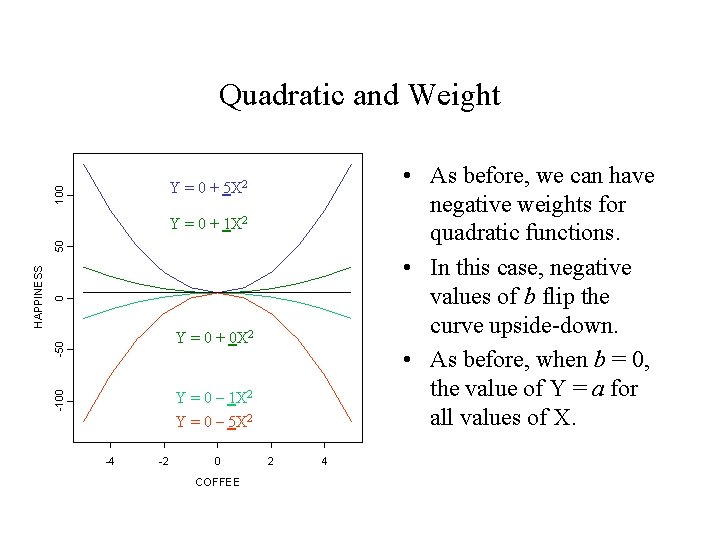

Quadratic and Weight • As before, we can have negative weights for quadratic functions. • In this case, negative values of b flip the curve upside-down. • As before, when b = 0, the value of Y = a for all values of X. 100 Y = 0 + 5 X 2 0 -50 Y = 0 + 0 X 2 Y = 0 – 1 X 2 Y = 0 – 5 X 2 -100 HAPPINESS 50 Y = 0 + 1 X 2 -4 -2 0 COFFEE 2 4

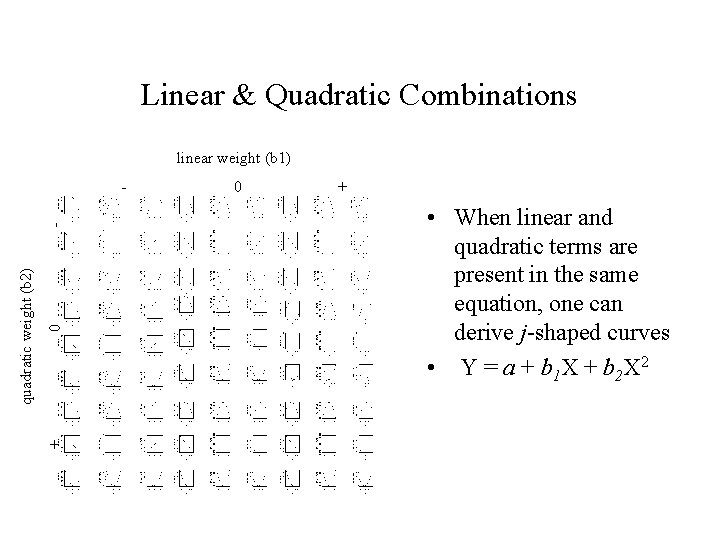

Linear & Quadratic Combinations linear weight (b 1) 0 + quadratic weight (b 2) - - 0 + • When linear and quadratic terms are present in the same equation, one can derive j-shaped curves • Y = a + b 1 X + b 2 X 2

Some terminology • When the relation between variables are expressed in this manner, we call the relevant equation(s) mathematical models • The intercept and weight values are called parameters of the model. • Although one can describe the relationship between two variables in the way we have done here, for now on we’ll assume that our models are causal models, such that the variable on the left-hand side of the equation is assumed to be caused by the variable(s) on the right-hand side.

Terminology • The values of Y in these models are often called predicted values, sometimes abbreviated as Y-hat or. Why? They are the values of Y that are implied by the specific parameters of the model.

Estimation • Up to this point, we have assumed that our models are correct. • There are two important issues we need to deal with, however: – Assuming the basic model is correct (e. g. , linear), what are the correct parameters for the model? – Is the basic form of the model correct? That is, is a linear, as opposed to a quadratic, model the appropriate model for characterizing the relationship between variables?

Estimation • The process of obtaining the correct parameter values (assuming we are working with the right model) is called parameter estimation.

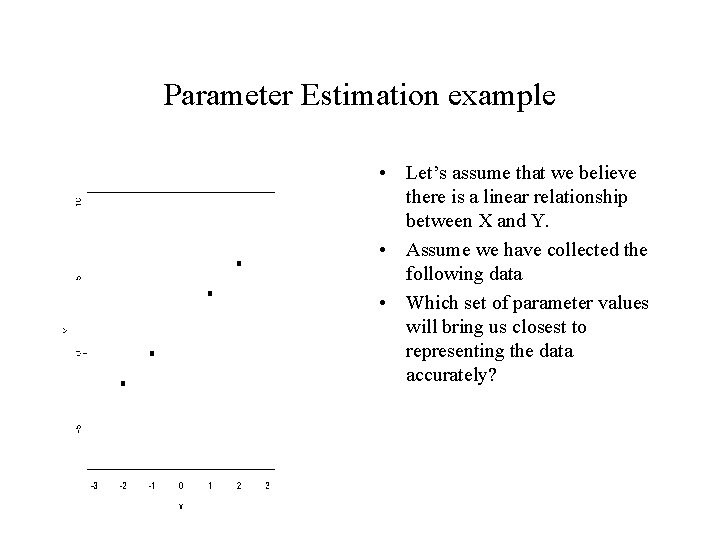

Parameter Estimation example • Let’s assume that we believe there is a linear relationship between X and Y. • Assume we have collected the following data • Which set of parameter values will bring us closest to representing the data accurately?

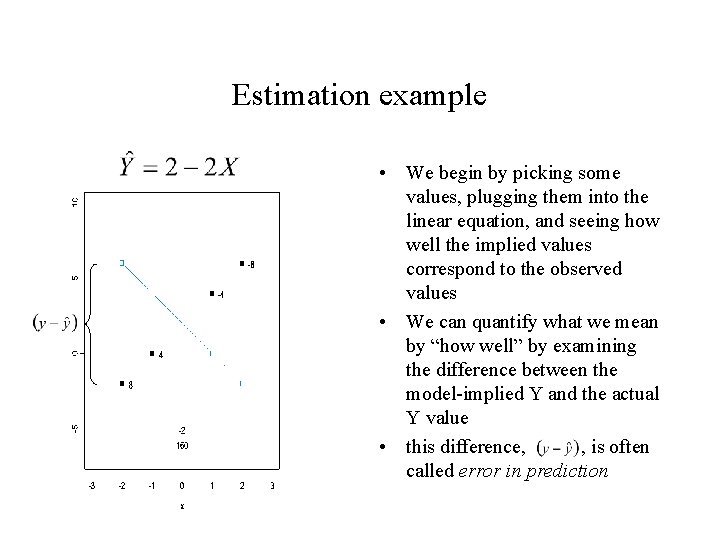

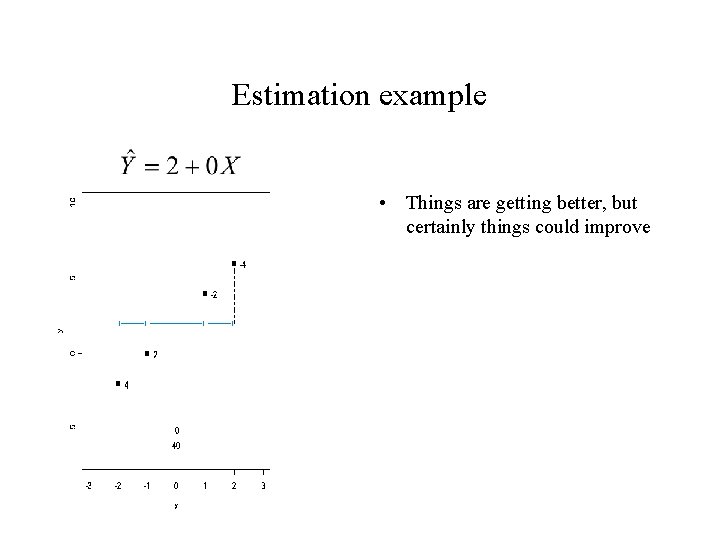

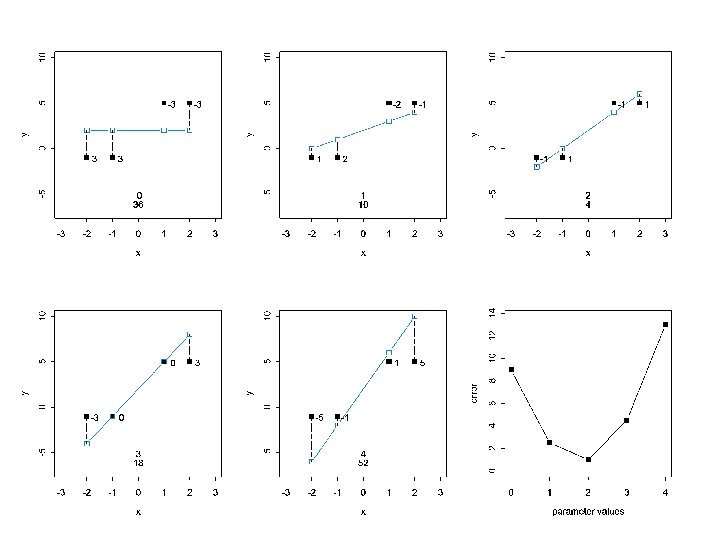

Estimation example • We begin by picking some values, plugging them into the linear equation, and seeing how well the implied values correspond to the observed values • We can quantify what we mean by “how well” by examining the difference between the model-implied Y and the actual Y value • this difference, , is often called error in prediction

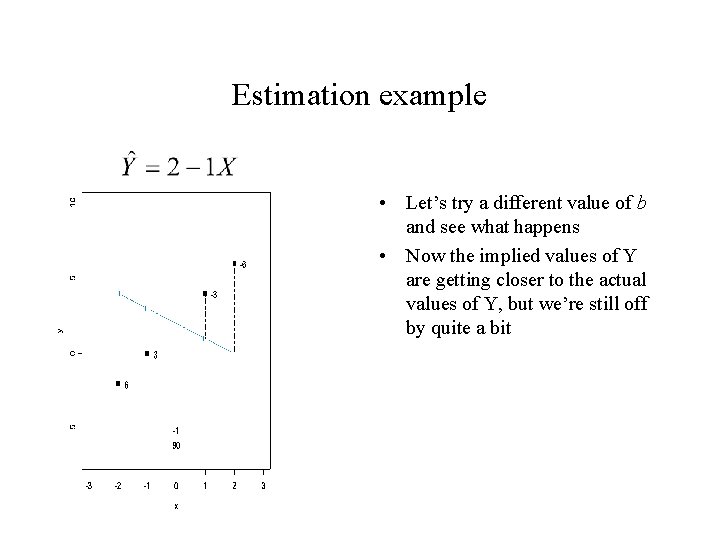

Estimation example • Let’s try a different value of b and see what happens • Now the implied values of Y are getting closer to the actual values of Y, but we’re still off by quite a bit

Estimation example • Things are getting better, but certainly things could improve

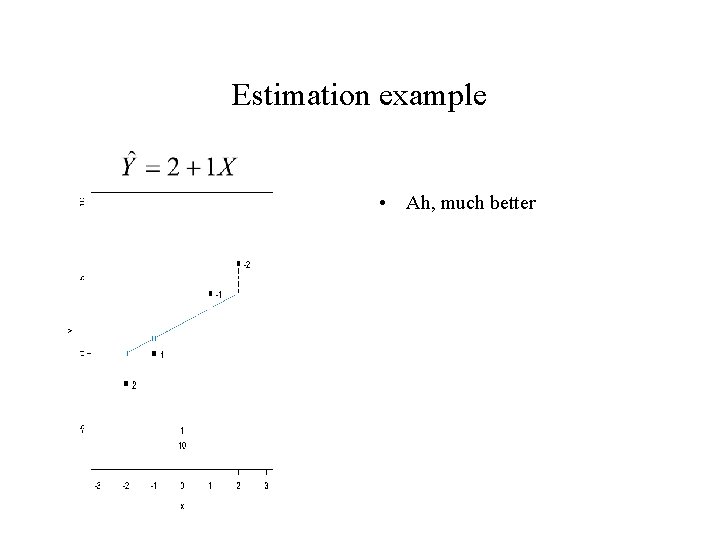

Estimation example • Ah, much better

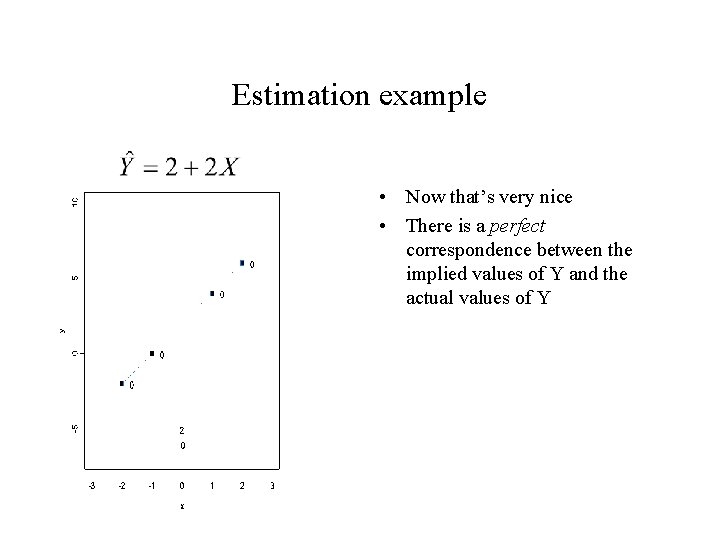

Estimation example • Now that’s very nice • There is a perfect correspondence between the implied values of Y and the actual values of Y

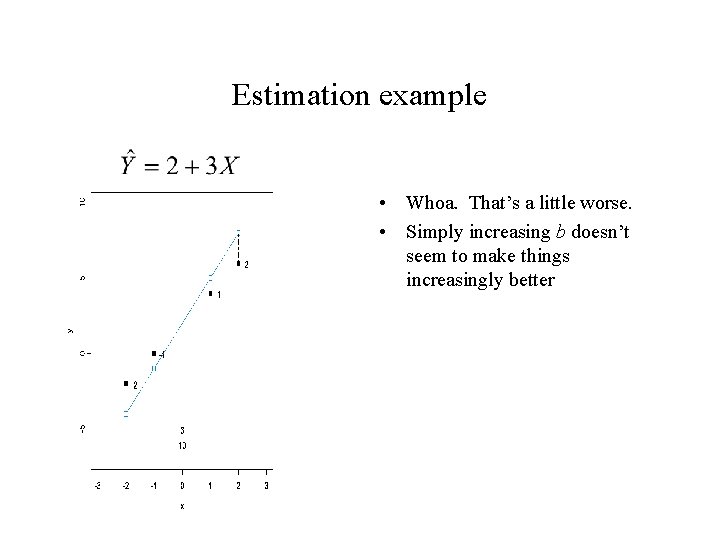

Estimation example • Whoa. That’s a little worse. • Simply increasing b doesn’t seem to make things increasingly better

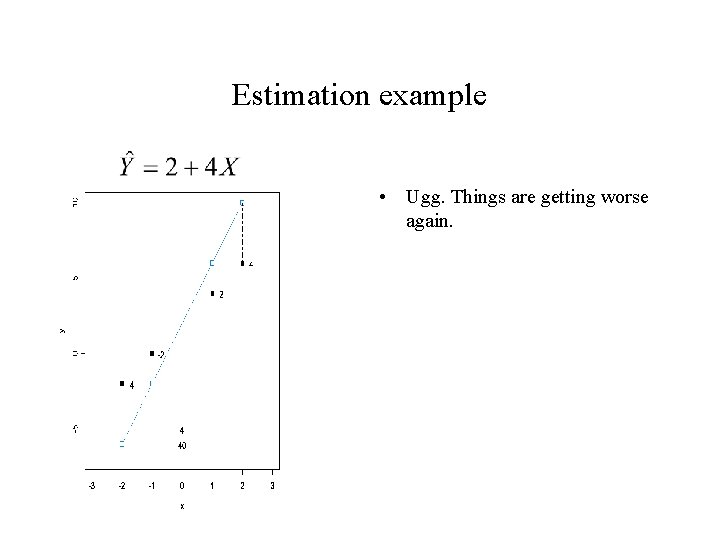

Estimation example • Ugg. Things are getting worse again.

Parameter Estimation example • Here is one way to think about what we’re doing: – We are trying to find a set of parameter values that will give us a small—the smallest—discrepancy between the predicted Y values and the actual values of Y. • How can we quantify this?

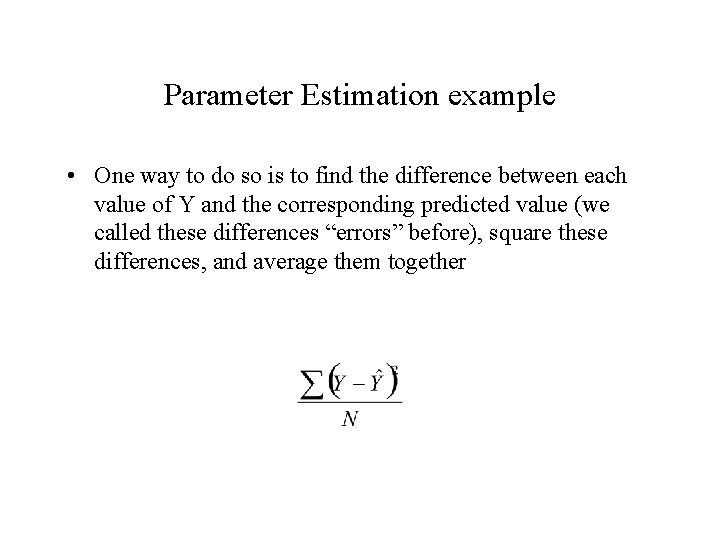

Parameter Estimation example • One way to do so is to find the difference between each value of Y and the corresponding predicted value (we called these differences “errors” before), square these differences, and average them together

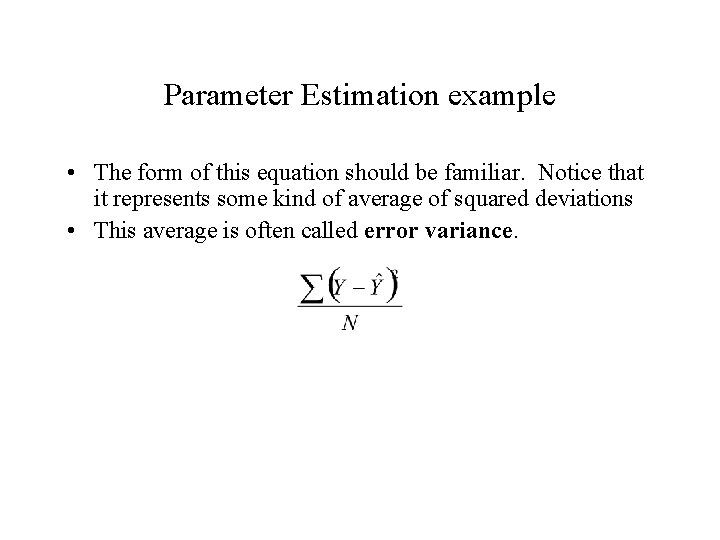

Parameter Estimation example • The form of this equation should be familiar. Notice that it represents some kind of average of squared deviations • This average is often called error variance.

Parameter Estimation example • In estimating the parameters of our model, we are trying to find a set of parameters that minimizes the error variance. In other words, we want to be as small as it possibly can be. • The process of finding this minimum value is called leastsquares estimation.

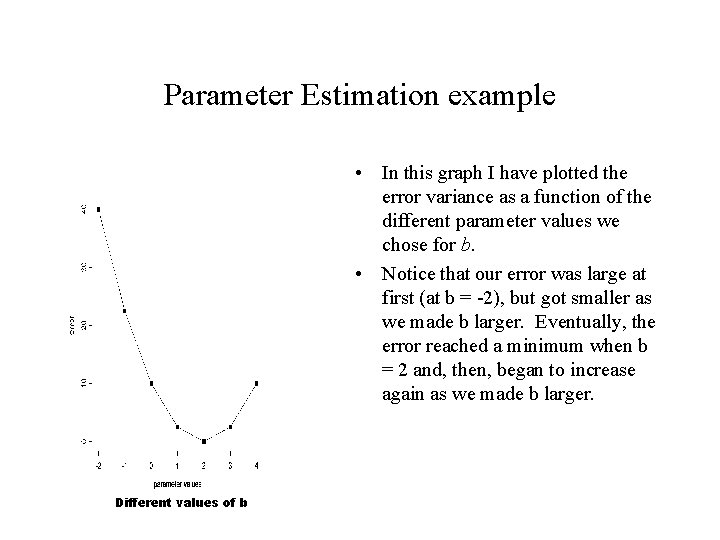

Parameter Estimation example • In this graph I have plotted the error variance as a function of the different parameter values we chose for b. • Notice that our error was large at first (at b = -2), but got smaller as we made b larger. Eventually, the error reached a minimum when b = 2 and, then, began to increase again as we made b larger. Different values of b

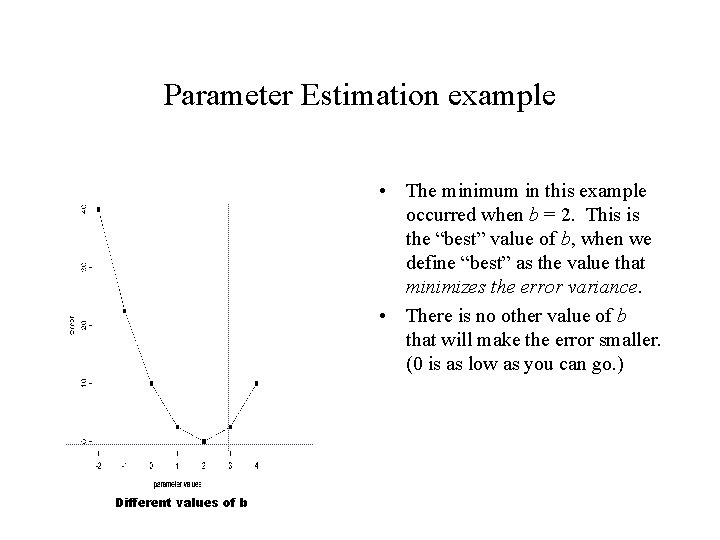

Parameter Estimation example • The minimum in this example occurred when b = 2. This is the “best” value of b, when we define “best” as the value that minimizes the error variance. • There is no other value of b that will make the error smaller. (0 is as low as you can go. ) Different values of b

Ways to estimate parameters • The method we just used is sometimes called the brute force or gradient descent method to estimating parameters. – More formally, gradient decent involves starting with viable parameter value, calculating the error using slightly different value, moving the best guess parameter value in the direction of the smallest error, then repeating this process until the error is as small as it can be. • Analytic methods – With simple linear models, the equation is so simple that brute force methods are unnecessary.

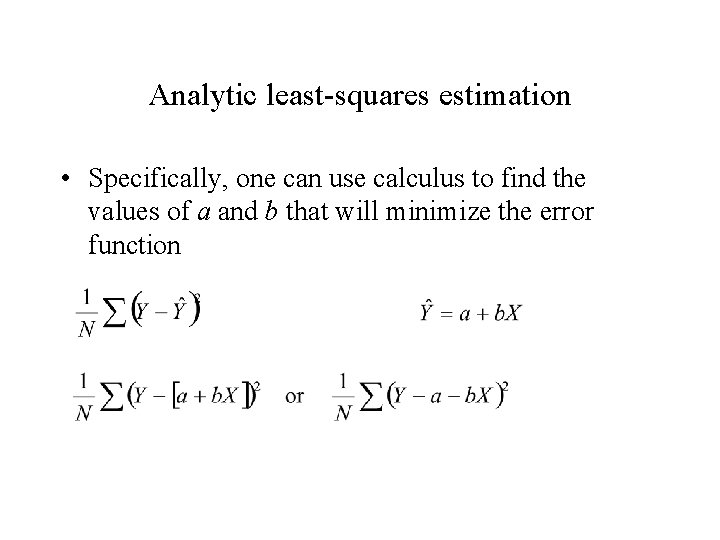

Analytic least-squares estimation • Specifically, one can use calculus to find the values of a and b that will minimize the error function

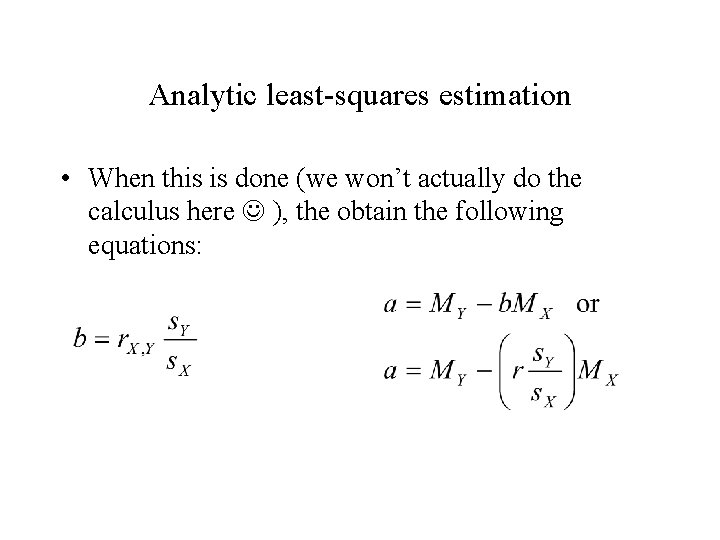

Analytic least-squares estimation • When this is done (we won’t actually do the calculus here ), the obtain the following equations:

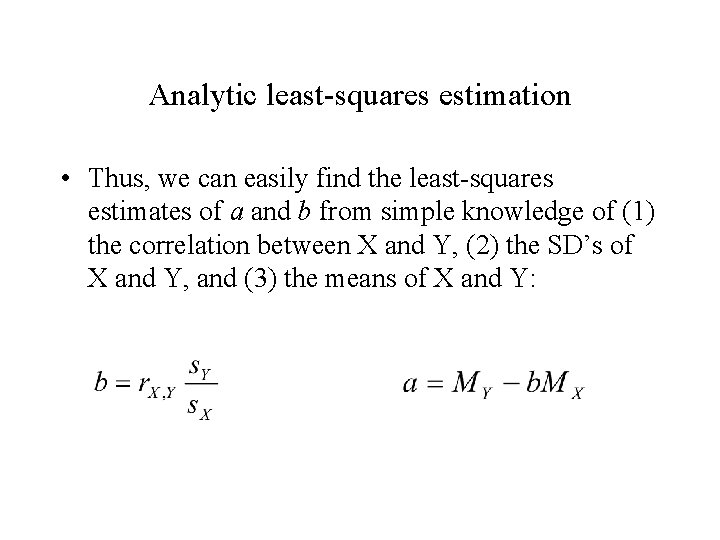

Analytic least-squares estimation • Thus, we can easily find the least-squares estimates of a and b from simple knowledge of (1) the correlation between X and Y, (2) the SD’s of X and Y, and (3) the means of X and Y:

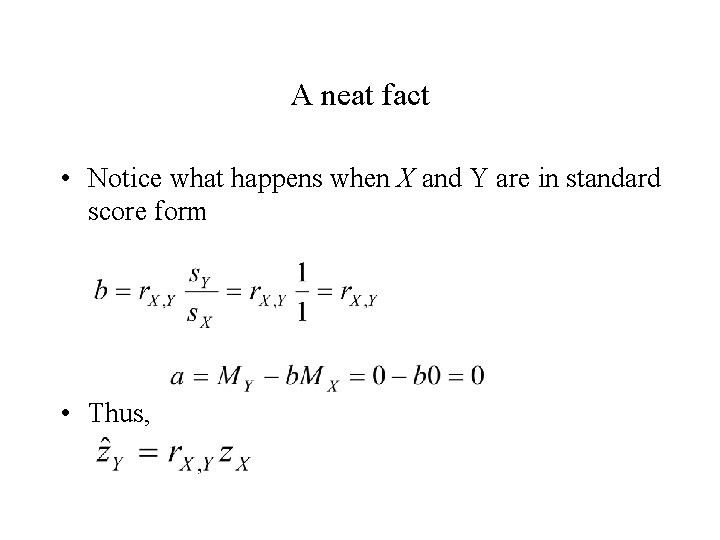

A neat fact • Notice what happens when X and Y are in standard score form • Thus,

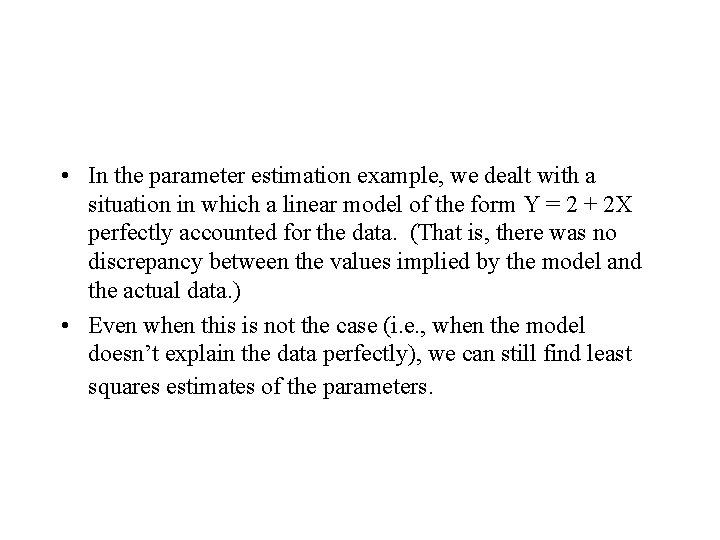

• In the parameter estimation example, we dealt with a situation in which a linear model of the form Y = 2 + 2 X perfectly accounted for the data. (That is, there was no discrepancy between the values implied by the model and the actual data. ) • Even when this is not the case (i. e. , when the model doesn’t explain the data perfectly), we can still find least squares estimates of the parameters.

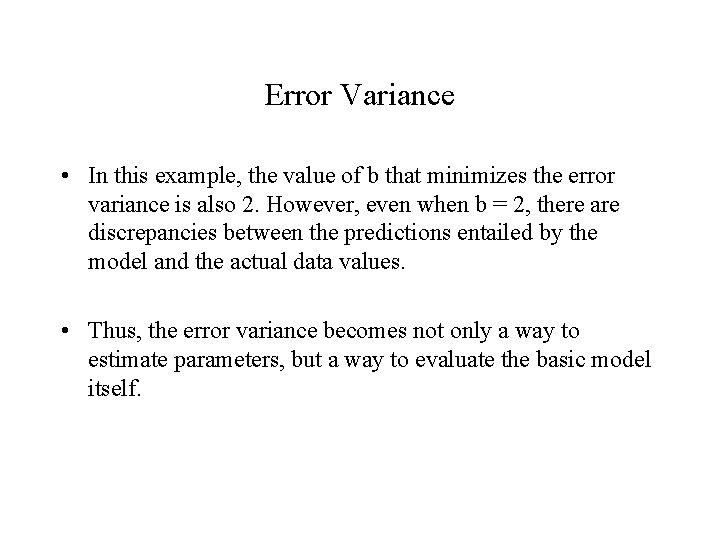

Error Variance • In this example, the value of b that minimizes the error variance is also 2. However, even when b = 2, there are discrepancies between the predictions entailed by the model and the actual data values. • Thus, the error variance becomes not only a way to estimate parameters, but a way to evaluate the basic model itself.

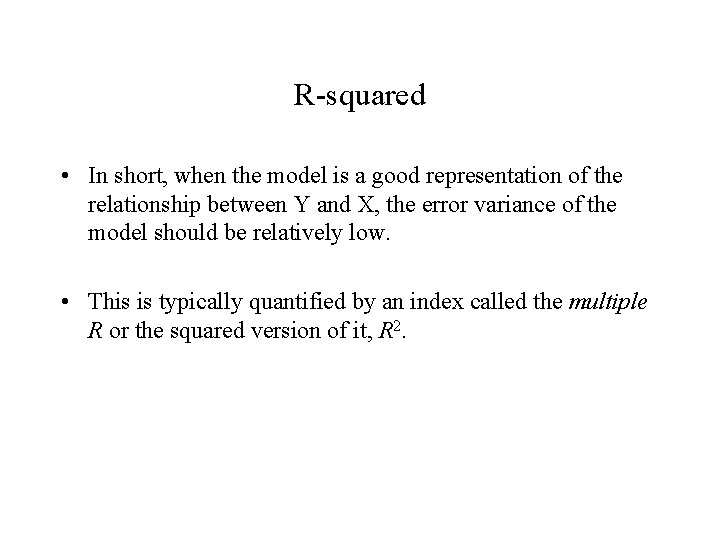

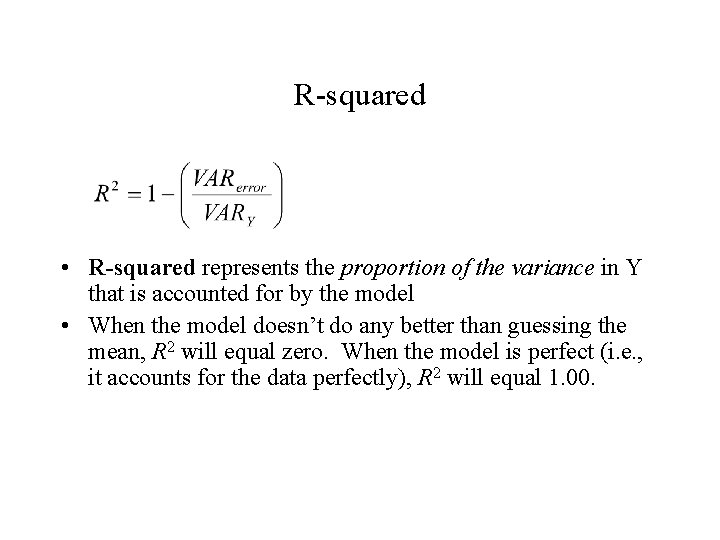

R-squared • In short, when the model is a good representation of the relationship between Y and X, the error variance of the model should be relatively low. • This is typically quantified by an index called the multiple R or the squared version of it, R 2.

R-squared • R-squared represents the proportion of the variance in Y that is accounted for by the model • When the model doesn’t do any better than guessing the mean, R 2 will equal zero. When the model is perfect (i. e. , it accounts for the data perfectly), R 2 will equal 1. 00.

Neat fact • When dealing with a simple linear model with one X, R 2 is equal to the correlation of X and Y, squared. • Why? Keep in mind that R 2 is in a standardized metric in virtue of having divided the error variance by the variance of Y. Previously, when working with standardized scores in simple linear regression equations, we found that the parameter b is equal to r. Since b is estimated via leastsquares techniques, it is directly related to R 2.

Why is R 2 useful? • R 2 is useful because it is a standard metric for interpreting model fit. – It doesn’t matter how large the variance of Y is because everything is evaluated relative to the variance of Y – Set end-points: 1 is perfect and 0 is as bad as a model can be.

Multiple Regression • In many situations in personality psychology we are interested in modeling Y not only as a function of a single X variable, but potentially many X variables. • Example: We might attempt to explain variation in academic achievement as a function of SES and maternal education.

• Y = a + b 1*SES + b 2*MATEDU • Notice that “adding” a new variable to the model is simple. This equation states that academic achievement is a function of at least two things, SES and MATEDU.

• However, what the regression coefficients now represent is not merely the change in Y expected given a 1 unit increase in X. They represent the change in Y given a 1 unit change in X assuming all the other variables in the equation equal zero. • In other words, these coefficients are kind of like partial correlations (technically, they are called semi-partial correlations). We’re statistically controlling SES when estimating the effect of MATEDU.

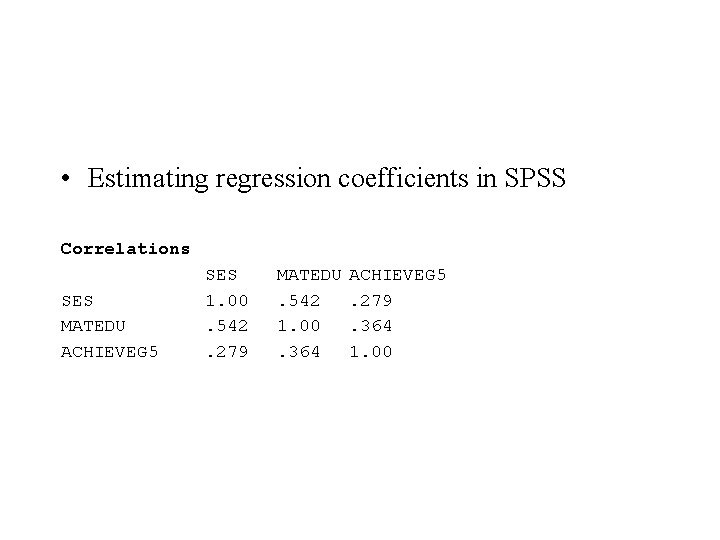

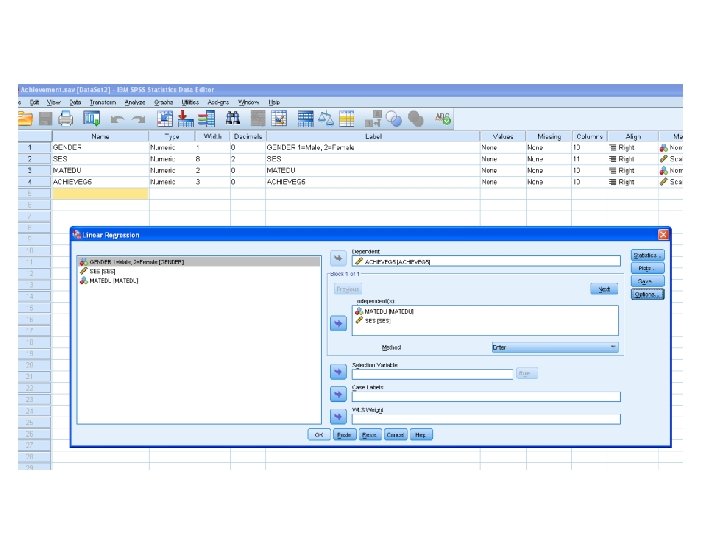

• Estimating regression coefficients in SPSS Correlations SES MATEDU ACHIEVEG 5 SES 1. 00. 542. 279 MATEDU. 542 1. 00. 364 ACHIEVEG 5. 279. 364 1. 00

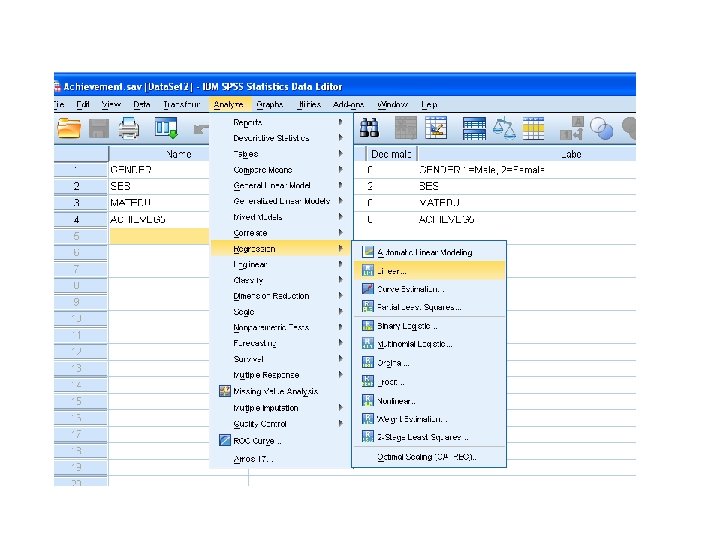

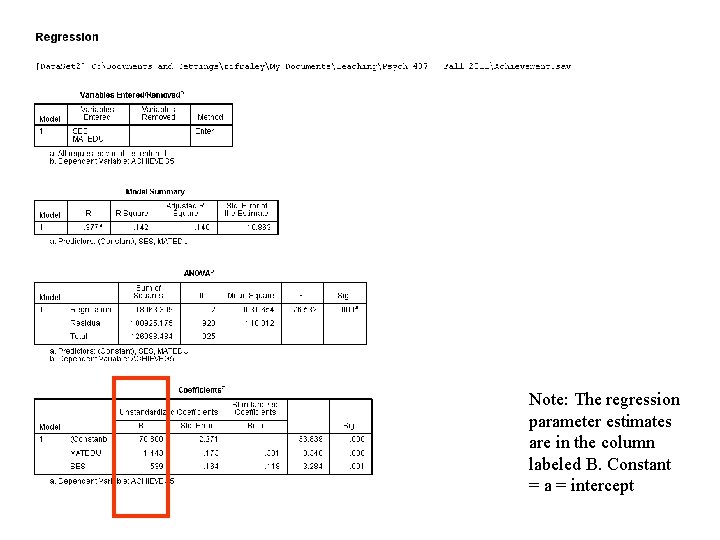

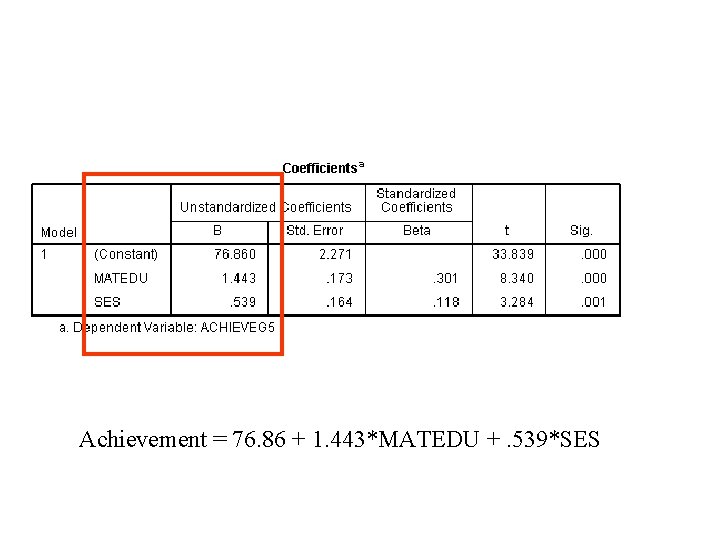

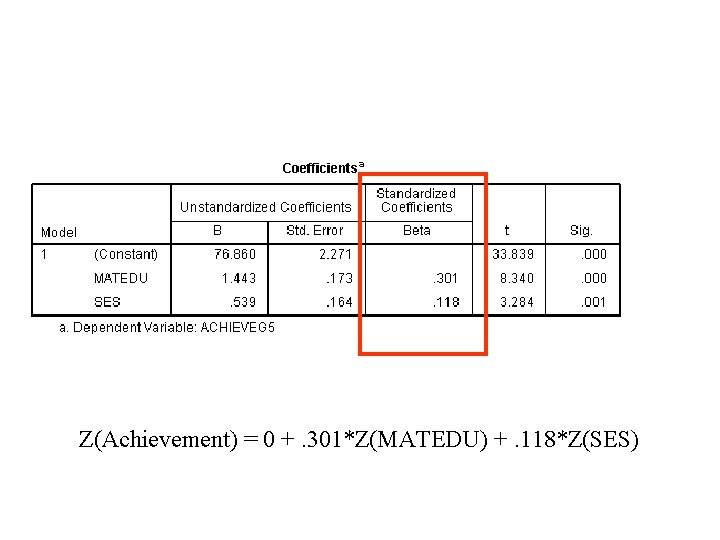

Note: The regression parameter estimates are in the column labeled B. Constant = a = intercept

Achievement = 76. 86 + 1. 443*MATEDU +. 539*SES

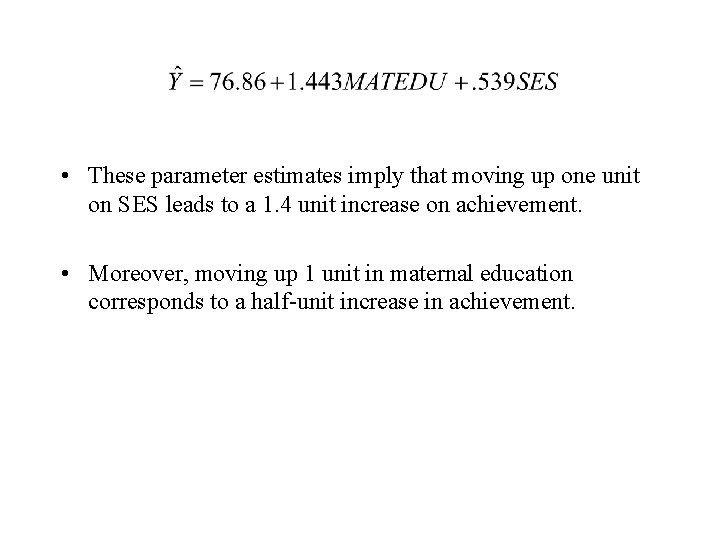

• These parameter estimates imply that moving up one unit on SES leads to a 1. 4 unit increase on achievement. • Moreover, moving up 1 unit in maternal education corresponds to a half-unit increase in achievement.

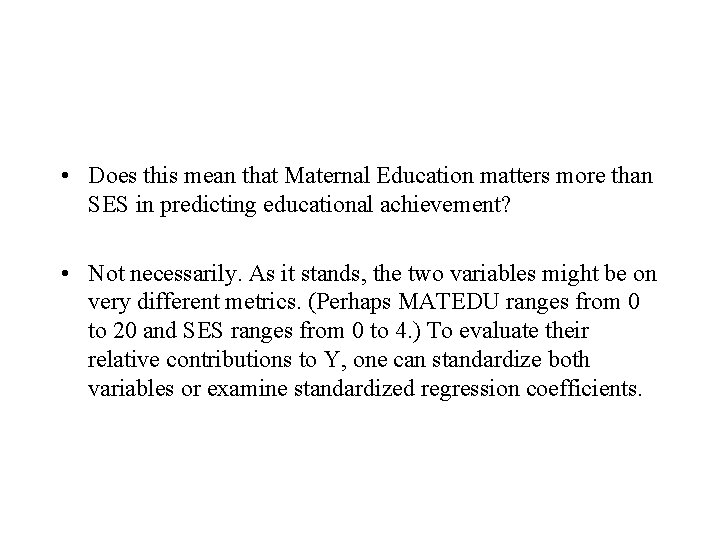

• Does this mean that Maternal Education matters more than SES in predicting educational achievement? • Not necessarily. As it stands, the two variables might be on very different metrics. (Perhaps MATEDU ranges from 0 to 20 and SES ranges from 0 to 4. ) To evaluate their relative contributions to Y, one can standardize both variables or examine standardized regression coefficients.

Z(Achievement) = 0 +. 301*Z(MATEDU) +. 118*Z(SES)

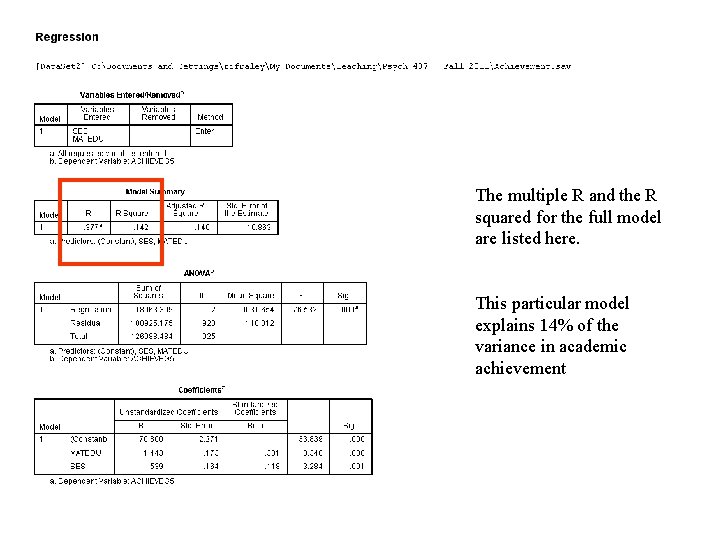

The multiple R and the R squared for the full model are listed here. This particular model explains 14% of the variance in academic achievement

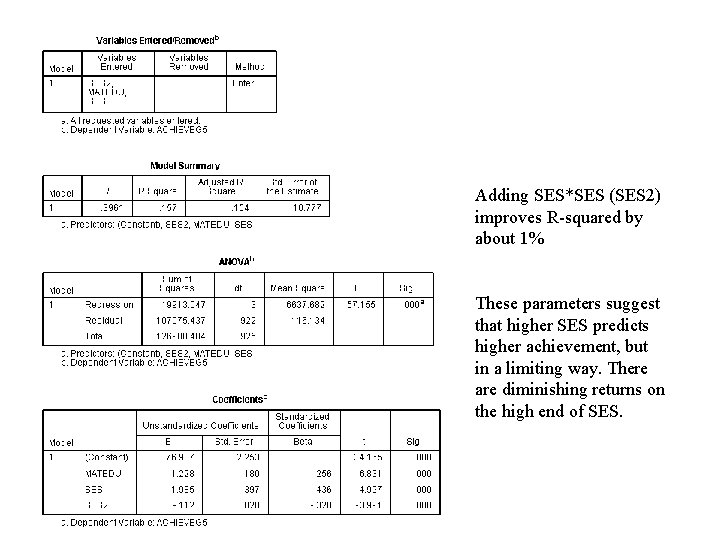

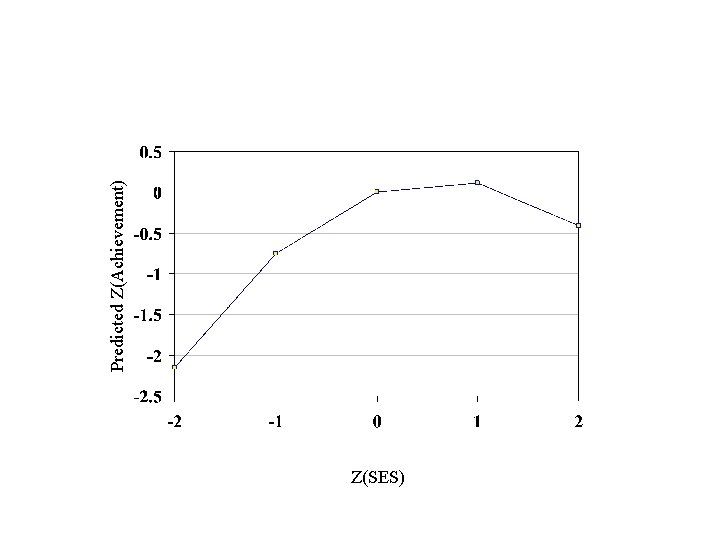

Adding SES*SES (SES 2) improves R-squared by about 1% These parameters suggest that higher SES predicts higher achievement, but in a limiting way. There are diminishing returns on the high end of SES.

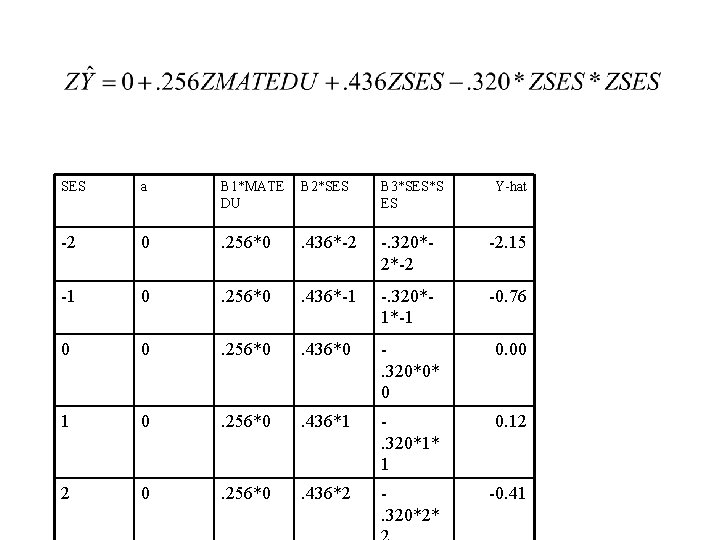

SES a B 1*MATE DU B 2*SES B 3*SES*S ES Y-hat -2 0 . 256*0 . 436*-2 -. 320*2*-2 -2. 15 -1 0 . 256*0 . 436*-1 -. 320*1*-1 -0. 76 0 0 . 256*0 . 436*0 . 320*0* 0 0. 00 1 0 . 256*0 . 436*1 . 320*1* 1 0. 12 2 0 . 256*0 . 436*2 . 320*2* -0. 41

Z(SES) Predicted Z(Achievement)

- Slides: 58