BASIC BUILDING BLOCKS Harit Desai Byzantine Generals Problem


Byzantine Generals Problem
• When a computer fails, it does so in one of two ways:
– It behaves in a well-defined manner: a component always shows a zero at its output, or simply stops executing.
– It behaves arbitrarily: it sends totally different information to the different components with which it communicates.
• The problem of reaching agreement in a system whose components can fail in an arbitrary manner is called the Byzantine generals problem.

Interactive Consistency Problem
• Each node makes its decision based on the values it receives.
• We require all non-faulty nodes to make the same decision.
• So the goal is that all non-faulty nodes get the same set of values; consensus can then be achieved.
• The difficulty is that a faulty node may send different values to different nodes.

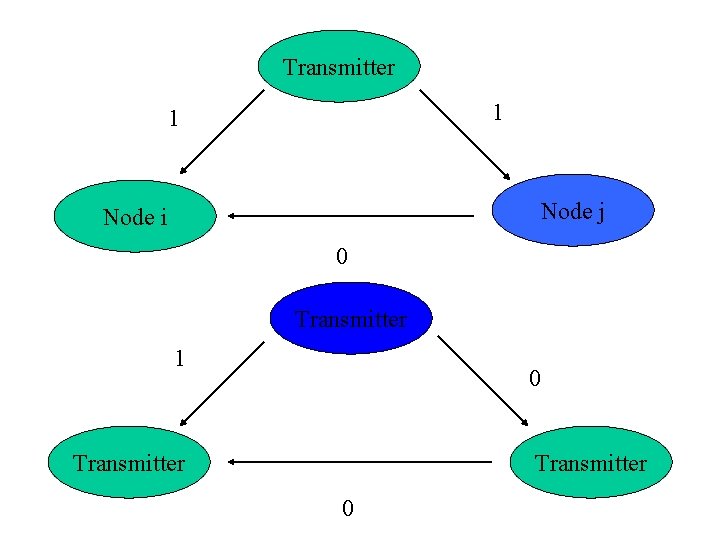

[Figure: transmitter, node i and node j exchanging the values 0 and 1]

Protocols with ordinary messages
• Requirement: n >= 3m + 1, where n = total number of nodes and m = number of faulty nodes.
• Assumptions about the message passing system:
– Every message sent by a node is delivered correctly by the message passing system to the receiver.

Assumptions (continued)
– The receiver of a message knows which node sent it.
– The absence of a message can be detected.

Interactive consistency algorithm
Algorithm ICA(0)
1) The transmitter sends its value to all the other n-1 nodes.
2) Each node uses the value it receives from the transmitter, or the default value if it receives none.

Algorithm ICA(m), m > 0
1) The transmitter sends its value to all the other n-1 nodes.
2) Let Vi be the value node i receives from the transmitter, or the default value if it receives none. Node i acts as the transmitter in algorithm ICA(m-1) to send the value Vi to each of the other n-2 nodes.
3) For each node i, let Vj be the value node i received from node j (j != i) in step 2. Node i uses the value majority(V1, V2, …, Vn-1).
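The recursion in ICA(m) can be sketched as a small simulation. This is only an illustration under stated assumptions: the fault model in `send` (a faulty sender transmits the receiver's id modulo 2), the default value 0, and the function names are all hypothetical and not part of the slides.

```python
from collections import Counter

DEFAULT = 0  # assumed default value


def majority(values):
    """Return the strict-majority value, or DEFAULT if there is none."""
    value, count = Counter(values).most_common(1)[0]
    return value if count > len(values) / 2 else DEFAULT


def send(sender, receiver, value, faulty):
    """Hypothetical fault model: a faulty sender lies in a receiver-dependent way."""
    return receiver % 2 if sender in faulty else value


def ica(m, transmitter, value, receivers, faulty):
    """Simulate ICA(m); return {node: value the node settles on}."""
    # Step 1: the transmitter sends its value to every other node.
    received = {r: send(transmitter, r, value, faulty) for r in receivers}
    if m == 0:
        return received
    # Step 2: each node j relays what it received using ICA(m-1).
    relayed = {}
    for j in receivers:
        others = [r for r in receivers if r != j]
        relayed[j] = ica(m - 1, j, received[j], others, faulty)
    # Step 3: node i takes the majority of its own value and the relayed ones.
    decision = {}
    for i in receivers:
        votes = [received[i]] + [relayed[j][i] for j in receivers if j != i]
        decision[i] = majority(votes)
    return decision


# n = 4, m = 1: a faulty transmitter (node 0) sends inconsistent values,
# yet the three non-faulty nodes still agree.
print(ica(1, 0, 1, [1, 2, 3], faulty={0}))
```

With n = 4 and m = 1 the requirement n >= 3m + 1 holds, so the non-faulty nodes agree whether the faulty node is the transmitter or one of the receivers.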

Protocol with signed messages
• Algorithm SM(m)
Initially Vi = null (the empty set).
1) The transmitter signs its value and sends it to all other nodes.
2) For each i:
(a) If node i receives a message of the form v:0 from the transmitter, then
(a1) it sets Vi to {v}, and
(a2) it sends the message v:0:i to every other node.

Algorithm SM(m), continued
(b) If node i receives a message of the form v:0:j1:j2:…:jk and v is not in Vi, then
(b1) it adds v to Vi, and
(b2) if k < m, it sends the message v:0:j1:j2:…:jk:i to every node other than j1, j2, …, jk.
3) For each i: when node i will receive no more messages, it takes choice(Vi) as the final value.
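The message flow of SM(m) can be sketched as follows. This is a toy model, not a definitive implementation: signatures are represented by a tuple of signer ids that cannot be forged, faulty relays are assumed simply to stay silent, and `choice` is assumed to return the single value in Vi or a default when Vi holds anything else. All names are hypothetical.

```python
from collections import deque

DEFAULT = "default"  # assumed fallback when V_i is not a single value


def choice(values):
    """Assumed choice function: the unique value, else DEFAULT."""
    return next(iter(values)) if len(values) == 1 else DEFAULT


def sm(m, n, first_round, silent=frozenset()):
    """Simulate SM(m) for nodes 1..n-1 with transmitter 0.

    first_round maps each receiver to the value the transmitter signed
    for it (a faulty transmitter may sign different values).
    silent is the set of faulty relays, assumed to forward nothing.
    """
    v_sets = {i: set() for i in range(1, n)}
    # Each queued message is (receiver, value, signers); signers starts with 0.
    queue = deque((i, v, (0,)) for i, v in first_round.items())
    while queue:
        i, v, signers = queue.popleft()
        if v in v_sets[i]:
            continue  # value already recorded, nothing to do
        v_sets[i].add(v)
        k = len(signers) - 1  # relay signatures after the transmitter's
        if i in silent or (k > 0 and k >= m):
            continue
        # Countersign and forward to every node that has not signed yet.
        for r in range(1, n):
            if r != i and r not in signers:
                queue.append((r, v, signers + (i,)))
    return {i: choice(s) for i, s in v_sets.items() if i not in silent}


# A faulty transmitter signs two different values; both receivers end up
# with the same set V_i and therefore make the same choice.
print(sm(1, 3, {1: "attack", 2: "retreat"}))
```

Because signatures cannot be forged in this model, agreement holds here with only three nodes and one faulty node, which the protocol with ordinary messages cannot achieve.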

Clock synchronization
• Problems
– Clocks of different nodes show different times and may be running at different speeds.
– Communication induces a delay between the sending and the receiving of a message.
– Network delays can vary.
– Clocks may be faulty (dual-faced).

Requirements of clock synchronization
• For a nonfaulty clock Ci, |dCi/dt - 1| < $, where the drift bound $ is of the order of 10^-5.
• At any time t, the values of all nonfaulty processors' clocks must be approximately equal: |Ci(t) - Cj(t)| <= b, where b is a constant.
• There is a small bound on the amount by which a nonfaulty clock is changed during resynchronization.

Synchronization protocols
• Deterministic protocols
– Clock synchronization conditions and bounds are guaranteed.
– But they require some assumptions about message delays.
• Probabilistic protocols
– Do not require any assumptions about message delays.
– But guarantee precision only with a certain probability.

Deterministic Clock Synchronization
• All clocks are initially synchronized to approximately the same value: |Ci(t0) - Cj(t0)| < b.
• Each process can communicate directly with any other process.
• If a message is sent at real time t and received at real time t', the message delay is t' - t. Every message is delivered within [& - e, & + e], for fixed & and e with & > e.

• The algorithm works in rounds.
• The ith round is triggered when a process's clock reaches Ti.
• When process j's clock reaches Ti, it broadcasts a message containing Ti.
• It also collects ith-round messages for a bounded amount of time and records their arrival times according to its local clock.
• The waiting period is chosen so that the round message of every correct process arrives within it.

Bounded waiting time
• Suppose process j's clock reaches Ti.
• Process k's clock will reach Ti within a time b of that.
• At that time k broadcasts Ti to all processes.
• The message delay is at most & + e.
• So j receives k's message at most (b + & + e) after its own clock reaches the value Ti.
• Clock rates may differ from real time by $.
• So the bounded time, measured on j's clock, within which j should receive k's message containing Ti is (1+$)(b+&+e).
• Once this time has elapsed, the process must have received messages from all non-faulty processes.
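As a quick worked example of the waiting bound, the helper below evaluates (1+$)(b+&+e). The parameter names `rho`, `beta`, `delta` and `eps` stand for $, b, & and e, and the numbers are invented purely for illustration.

```python
def round_wait(rho, beta, delta, eps):
    """Local-clock time to wait for round messages: (1 + rho)(beta + delta + eps)."""
    return (1 + rho) * (beta + delta + eps)


# Hypothetical values in seconds: drift bound 1e-5, initial skew 5 ms,
# message delay 10 ms +/- 2 ms.
wait = round_wait(rho=1e-5, beta=0.005, delta=0.010, eps=0.002)
print(f"wait for {wait * 1000:.5f} ms after the local clock reaches Ti")
```

The drift factor changes the result only in the fifth significant digit here, which shows that the bound is dominated by the initial skew and the message delay.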

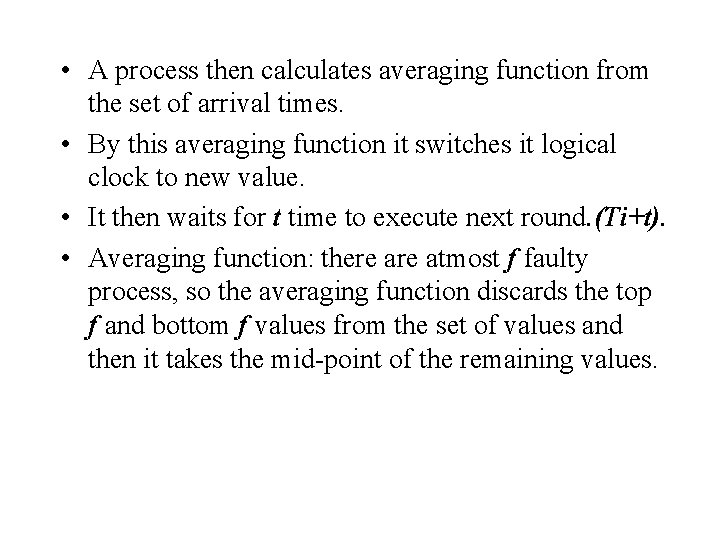

• A process then applies an averaging function to the set of arrival times.
• Using the result, it switches its logical clock to a new value.
• It then waits until Ti + t to execute the next round, where t is the period between rounds.
• Averaging function: there are at most f faulty processes, so the function discards the top f and bottom f values from the set and takes the mid-point of the remaining values.
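The averaging function described above can be sketched in a few lines. The names `reduce` and `mid` follow the slides; the explicit parameter `f` and the sample arrival times are assumptions made for the example.

```python
def reduce(values, f):
    """Discard the f lowest and f highest values and return the rest."""
    ordered = sorted(values)
    if len(ordered) <= 2 * f:
        raise ValueError("need more than 2f values to tolerate f faults")
    return ordered[f:len(ordered) - f]


def mid(values):
    """Mid-point of the range spanned by the values."""
    return (min(values) + max(values)) / 2


# Four arrival times, one of them (55.0) reported by a faulty process.
arrivals = [10.0, 10.2, 10.1, 55.0]
print(mid(reduce(arrivals, f=1)))  # close to 10.15
```

Discarding f values at each end means that any value a faulty process contributes is either thrown away or lies between two values from correct processes, so it cannot drag the mid-point outside the correct range.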

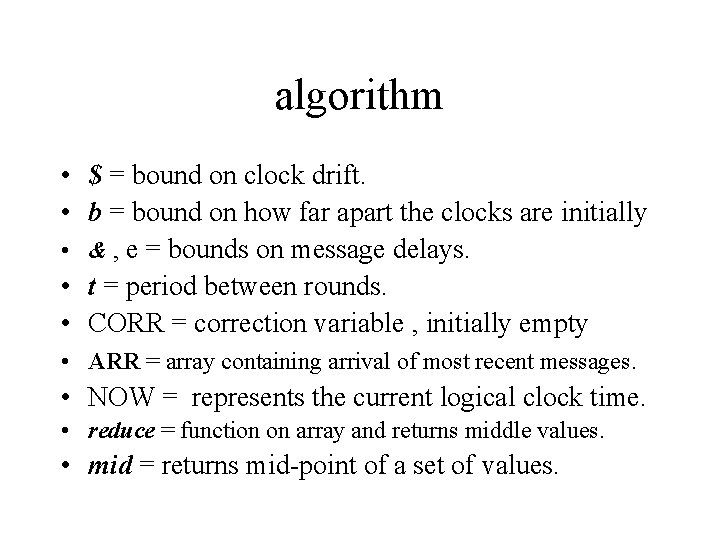

Algorithm
• $ = bound on clock drift.
• b = bound on how far apart the clocks are initially.
• &, e = bounds on message delays (every delay lies in [& - e, & + e]).
• t = period between rounds.
• CORR = correction variable, initially empty.
• ARR = array containing the arrival times of the most recent messages.
• NOW = the current logical clock time.
• reduce = function that discards the f highest and f lowest values of an array and returns the middle values.
• mid = function that returns the mid-point of a set of values.

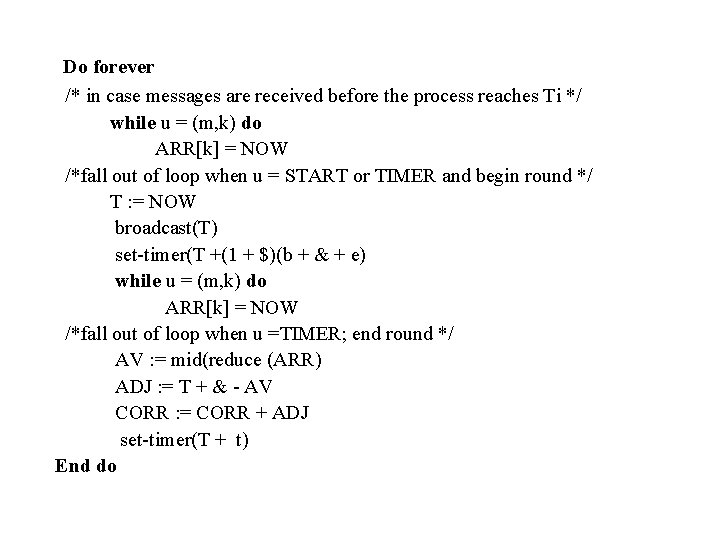

Do forever
    /* in case messages are received before the process reaches Ti */
    while u = (m, k) do ARR[k] := NOW
    /* fall out of loop when u = START or TIMER and begin round */
    T := NOW
    broadcast(T)
    set-timer(T + (1 + $)(b + & + e))
    while u = (m, k) do ARR[k] := NOW
    /* fall out of loop when u = TIMER; end round */
    AV := mid(reduce(ARR))
    ADJ := T + & - AV
    CORR := CORR + ADJ
    set-timer(T + t)
End do
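The end-of-round step of the loop above (AV, ADJ, CORR) can be sketched as a pure function. This is a minimal illustration, not the full event loop: `arr` is assumed to be a dict from sender id to local arrival time, `delta` stands for &, and `f` is the assumed number of tolerated faults.

```python
def end_of_round(T, arr, corr, delta, f):
    """Return (new CORR, ADJ) for one round.

    T     : round time Ti that every process broadcast
    arr   : {sender: arrival time on the local logical clock}
    corr  : current correction value
    delta : nominal message delay (& in the slides)
    f     : number of faulty processes tolerated (assumed parameter)
    """
    times = sorted(arr.values())
    if len(times) <= 2 * f:
        raise ValueError("need more than 2f arrival times")
    kept = times[f:len(times) - f]   # reduce: drop f lowest and f highest
    av = (kept[0] + kept[-1]) / 2    # mid: mid-point of what is left
    adj = T + delta - av             # ADJ := T + & - AV
    return corr + adj, adj


# Hypothetical round with T = 100 and & = 10 ms; sender 4 is faulty.
arr = {1: 100.012, 2: 100.008, 3: 100.010, 4: 140.0}
corr, adj = end_of_round(T=100.0, arr=arr, corr=0.0, delta=0.010, f=1)
print(f"ADJ = {adj:+.4f}")
```

A message stamped T is expected to arrive when the sender's clock reads about T + &, so the gap between that and the averaged local arrival time AV estimates how far the local clock is off; in the example the local clock is about 1 ms ahead and is set back.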

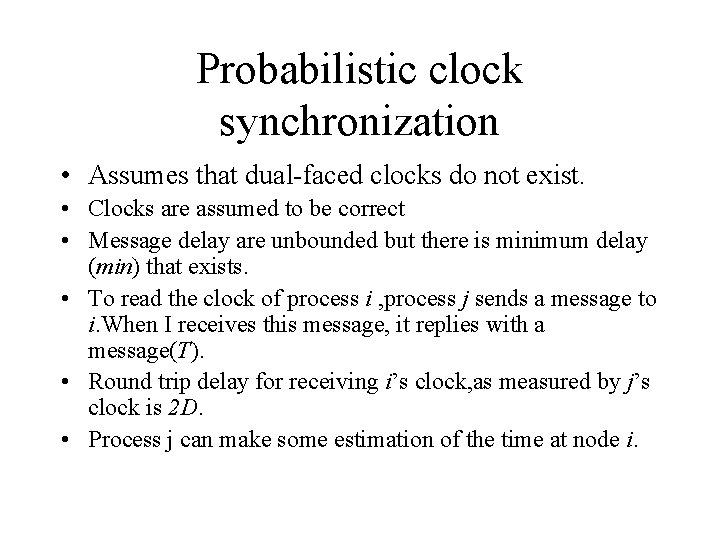

Probabilistic clock synchronization
• Assumes that dual-faced clocks do not exist.
• Clocks are assumed to be correct.
• Message delays are unbounded, but there is a minimum delay (min).
• To read the clock of process i, process j sends a message to i. When i receives this message, it replies with a message containing its clock value T.
• The round trip delay for reading i's clock, as measured by j's clock, is 2D.
• From T and 2D, process j can estimate the time at node i.

• Let t be the real time at which j receives the reply from i, and let 2d be the real-time round trip delay.
• The return message takes at least min in real time, so at time t i's clock reads at least T + min(1-$), where $ is the bound on the drift of the clocks.
• The request also takes at least min, so the maximum real-time delay of the return message is 2d - min.
• In clock time, the maximum delay is (2d - min)(1+$).
• Also, 2d <= 2D(1+$).
• Hence the maximum clock-time delay is (2D(1+$) - min)(1+$) ≈ 2D(1+2$) - min(1+$), neglecting terms in $^2.
• So j can infer that, at the time it receives the message from i, i's clock is in the range [T + min(1-$), T + 2D(1+2$) - min(1+$)].

• Now j has to select a value in this interval as its estimate of i's clock.
• The error is minimised if the mid-point is selected.
• The maximum possible error is then E = D(1+2$) - min.
• E can be taken as the precision with which j reads i's clock.
• The shorter the round trip delay, the better the precision of the reading.
• If process j wants to read the clock of another process i with a specified precision e, it must discard all reading attempts in which the round trip delay is greater than 2U, where U = (1-2$)(e+min).
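The interval, mid-point estimate and error bound derived above can be checked numerically. The sketch assumes the interval [T + min(1-$), T + 2D(1+2$) - min(1+$)]; the function names, the parameter `rho` (standing for $) and the sample numbers are hypothetical.

```python
def read_remote_clock(T, D, min_delay, rho):
    """Estimate i's clock from reply value T and half round trip D.

    Returns (estimate, max_error, (low, high)).
    """
    low = T + min_delay * (1 - rho)
    high = T + 2 * D * (1 + 2 * rho) - min_delay * (1 + rho)
    estimate = (low + high) / 2                # mid-point minimises the error
    max_error = D * (1 + 2 * rho) - min_delay  # E = D(1+2$) - min
    return estimate, max_error, (low, high)


def max_half_round_trip(precision, min_delay, rho):
    """U = (1-2$)(e+min): readings with D > U must be discarded."""
    return (1 - 2 * rho) * (precision + min_delay)


# Hypothetical reading: T = 100 s, round trip 20 ms, minimum delay 2 ms.
est, err, (low, high) = read_remote_clock(T=100.0, D=0.010, min_delay=0.002, rho=1e-5)
print(f"estimate {est:.6f} s, error at most {err * 1000:.4f} ms")
print(f"for 5 ms precision accept D up to {max_half_round_trip(0.005, 0.002, 1e-5) * 1000:.4f} ms")
```

The half-width of the interval equals E, which is why E can serve as the reading precision, and inverting E <= e for D gives the cut-off U to first order in $.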

Stable storage
• The computer system has some stable storage whose contents are preserved despite failures.
• Failures that these techniques cannot handle…
– Transient failures: these cause the disk to behave unpredictably for a short period of time.
– Bad sector: a page becomes corrupted, and the data stored in it cannot be read.
– Controller failure: the disk controller fails.
– Disk failure: the entire disk becomes unreadable.

• Undesirable results of a read(a) operation are:
– Soft read error: page a is good, but the read returns bad. This situation may not persist for long, and is caused by transient failures.
– Persistent read error: page a is good, but the read returns bad, and successive reads also return bad (bad sector).
– Undetected error: page a is bad but the read returns good, or page a is good but the read returns different data.
• Undesirable results of a write(a, d) operation are:
– Null write: page a is unchanged.
– Bad write: page a becomes (bad, d).

Decay events
• Corruption: a page goes from (good, d) to (bad, d).
• Revival: a page goes from (bad, d) to (good, d).
• Undetected error: a page changes from (s, d) to (s, d') with d <> d'.

Implementation
• Using one disk:
– CarefulRead: the read is repeated until it returns the status good, or until the page cannot be read after a certain number of tries.
– CarefulWrite: performs a write followed by a read, repeating until the read returns the status good.
– But this cannot take care of decay events.
• Stable storage is therefore represented by an ordered pair of disk pages.
– StableRead: performs a CarefulRead from one of the paired pages and, if the result is bad, performs a CarefulRead from the other.
– StableWrite: performs a CarefulWrite to one of the representative pages first. When that operation is completed, it performs a CarefulWrite to the other page.

• This takes care of the decay events.
• A crash during StableWrite may cause the two pages to differ. To handle this, a cleanup operation is performed:
Do a CarefulRead from each of the two representative pages
If both return good and the same data then
    Do nothing
Elseif one returns bad then
    Do a CarefulWrite of the data from the good page to the bad page
Elseif both return good, but different data then
    Choose either one of the pages and do a CarefulWrite of its data to the other page
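The careful and stable operations, together with the cleanup procedure, can be sketched against a toy in-memory disk. Everything here is an assumption for illustration: the `Disk` class, the retry count, and the decision to copy the first page over the second when both are good but differ.

```python
GOOD, BAD = "good", "bad"


class Disk:
    """Toy disk: each page holds a (status, data) pair."""

    def __init__(self, n_pages):
        self.pages = [(GOOD, None)] * n_pages

    def read(self, a):
        return self.pages[a]

    def write(self, a, d):
        self.pages[a] = (GOOD, d)


def careful_read(disk, a, tries=3):
    """Repeat the read until it returns good or the tries run out."""
    for _ in range(tries):
        status, data = disk.read(a)
        if status == GOOD:
            return status, data
    return BAD, None


def careful_write(disk, a, d, tries=3):
    """Write, then read back; repeat until the read-back is good."""
    for _ in range(tries):
        disk.write(a, d)
        if disk.read(a) == (GOOD, d):
            return True
    return False


def stable_read(disk, pair):
    status, data = careful_read(disk, pair[0])
    if status == BAD:
        status, data = careful_read(disk, pair[1])
    return status, data


def stable_write(disk, pair, d):
    careful_write(disk, pair[0], d)  # the first page is completed before
    careful_write(disk, pair[1], d)  # the second one is touched


def cleanup(disk, pair):
    """Make the two representative pages agree again after a crash."""
    (s0, d0), (s1, d1) = careful_read(disk, pair[0]), careful_read(disk, pair[1])
    if s0 == GOOD and s1 == GOOD:
        if d0 != d1:
            careful_write(disk, pair[1], d0)  # either direction is allowed
    elif s0 == GOOD:
        careful_write(disk, pair[1], d0)
    elif s1 == GOOD:
        careful_write(disk, pair[0], d1)


disk, pair = Disk(2), (0, 1)
stable_write(disk, pair, "x")
disk.pages[1] = (BAD, "x")       # simulate a corruption decay event
print(stable_read(disk, pair))   # still served from the first page
cleanup(disk, pair)              # repairs the second page from the first
```

The toy disk never produces transient errors, so the retry loops exit on the first pass; they are kept only to mirror the structure of CarefulRead and CarefulWrite.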

Disk shadowing
• It is a technique for maintaining a set of identical disk images on separate disk devices.
• Its primary purpose is to increase reliability and availability.
• Consider the case of two disks (mirrored disks):
– Total failure occurs only if both disks fail.
– MTTFm = (MTTF/2) * (MTTF/MTTR)

Redundant Arrays of Disks
• Data is spread over multiple disks using "bit-interleaving".
• Bit-interleaving provides high I/O performance.
• But such arrays are not reliable, since the failure of any one disk can make the entire data unavailable.
• So the disks are partitioned into groups.
– Each group has some data disks and some check disks.
– The number of check disks depends on the coding technique used.
– For example, if the check disk stores parity, one check disk is required.

• A RAID group fails only if more than one of its disks fails, that is, if a second disk fails before the first has been repaired.
– Assume that a RAID consists of only one group of disks, with G data disks and C check disks.
– If the failure and repair times of disks are exponentially distributed, then the mean time to failure of the group = (MTTF/(G+C)) * ((MTTF/(G+C-1))/MTTR)
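The group formula can be evaluated directly, and the mirrored-disk formula falls out as the special case G + C = 2. The function name and the numbers below (a 30,000 hour disk MTTF and a 1 hour MTTR) are invented for illustration.

```python
def group_mttf(mttf, mttr, data_disks, check_disks):
    """Mean time to failure of one group of G data and C check disks.

    MTTF/(G+C) is the expected time to the first disk failure;
    (MTTF/(G+C-1))/MTTR accounts for a second failure having to occur
    during the repair window of the first.
    """
    n = data_disks + check_disks
    return (mttf / n) * (mttf / (n - 1)) / mttr


# Hypothetical disk: MTTF = 30,000 h, MTTR = 1 h.
mirror = group_mttf(30_000, 1, data_disks=1, check_disks=1)
raid = group_mttf(30_000, 1, data_disks=10, check_disks=1)
print(f"mirrored pair: {mirror:.3e} h, 10+1 group: {raid:.3e} h")
```

A larger group fails sooner, both because the first failure arrives earlier and because more disks are exposed during the repair window.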
