Balancing Human Efforts and Performance of Student Response Analyzer in Dialog-based Tutors

Tejas I. Dhamecha, Smit Marvaniya, Swarnadeep Saha, Renuka Sindhgatta, and Bikram Sengupta

IBM Research-India

{tidhamecha, smarvani, swarnads, renuka.sr, bsengupt}@in.ibm.com

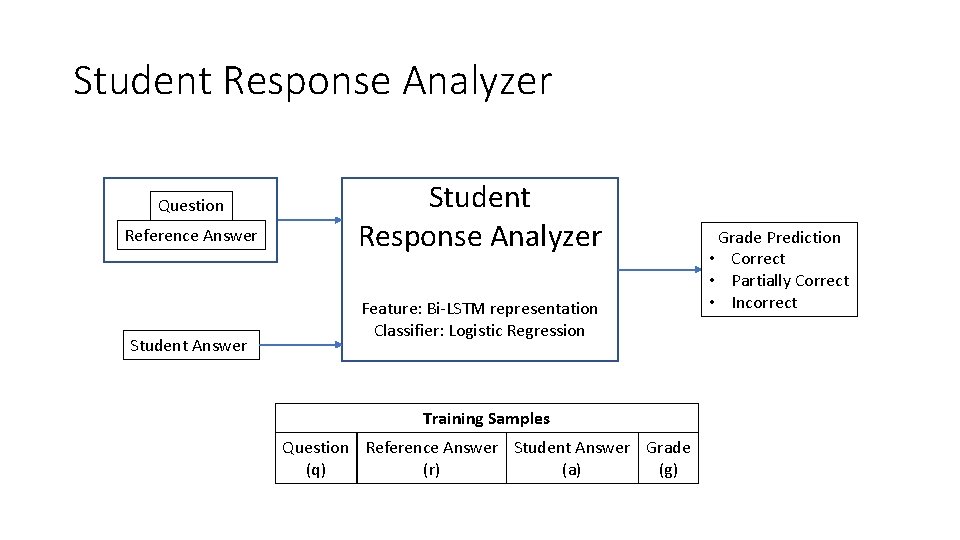

Student Response Analyzer

- Inputs: Question, Reference Answer
- Feature: Bi-LSTM representation
- Classifier: Logistic Regression
- Training samples: Question (q), Reference Answer (r), Student Answer (a), Grade (g)
- Grade prediction: Correct, Partially Correct, or Incorrect
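
To make the feature-then-classifier structure concrete, here is a minimal sketch of a three-class analyzer trained on (q, r, a, g) samples. It is not the authors' implementation: the `featurize` function is a hypothetical token-overlap stand-in for the Bi-LSTM representation, and the small softmax regression trained by per-sample gradient descent stands in for an off-the-shelf logistic regression. All names (`Sample`, `featurize`, `train`, `predict`) are assumptions made for illustration.

```python
import math
from dataclasses import dataclass

GRADES = ["correct", "partially correct", "incorrect"]


@dataclass
class Sample:
    question: str          # q
    reference_answer: str  # r
    student_answer: str    # a
    grade: str             # g, one of GRADES (ignored at prediction time)


def featurize(sample):
    """Hypothetical stand-in for the Bi-LSTM representation.

    Returns a bias term plus the fraction of reference-answer tokens
    that also occur in the student answer.
    """
    ref = set(sample.reference_answer.lower().split())
    ans = set(sample.student_answer.lower().split())
    overlap = len(ref & ans) / max(len(ref), 1)
    return [1.0, overlap]


def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def class_scores(weights, features):
    return [sum(w * x for w, x in zip(row, features)) for row in weights]


def train(samples, epochs=5000, learning_rate=0.5):
    """Multi-class logistic regression fitted by per-sample gradient descent."""
    features = [featurize(s) for s in samples]
    targets = [GRADES.index(s.grade) for s in samples]
    weights = [[0.0] * len(features[0]) for _ in GRADES]
    for _ in range(epochs):
        for x, y in zip(features, targets):
            probs = softmax(class_scores(weights, x))
            for k, row in enumerate(weights):
                error = probs[k] - (1.0 if k == y else 0.0)
                for j in range(len(row)):
                    row[j] -= learning_rate * error * x[j]
    return weights


def predict(weights, sample):
    scores = class_scores(weights, featurize(sample))
    return GRADES[scores.index(max(scores))]
```

In a real system the feature vector would come from the pretrained Bi-LSTM encoder, and only `featurize` would need to change.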

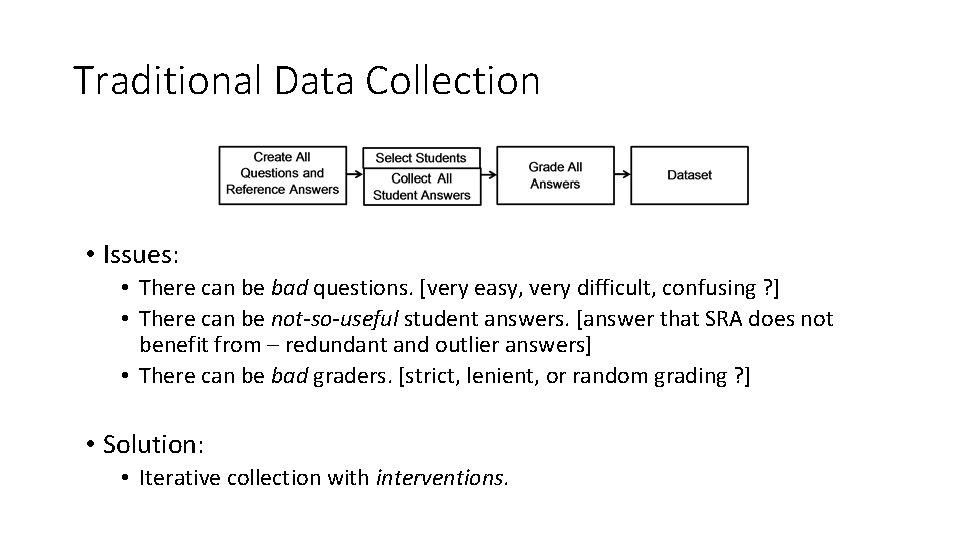

Traditional Data Collection

- Issues:
  - There can be bad questions. [very easy, very difficult, confusing?]
  - There can be not-so-useful student answers. [answers that the SRA does not benefit from: redundant and outlier answers]
  - There can be bad graders. [strict, lenient, or random grading?]
- Solution:
  - Iterative collection with interventions.

Proposed

Contributions

- An iterative data collection approach that reduces the human effort of content creation and grading.
- An automated approach to predict question difficulty.
- An approach for iteratively selecting questions and students to obtain a representative dataset.
- An approach for reducing the grading effort by filtering out some of the student answers.

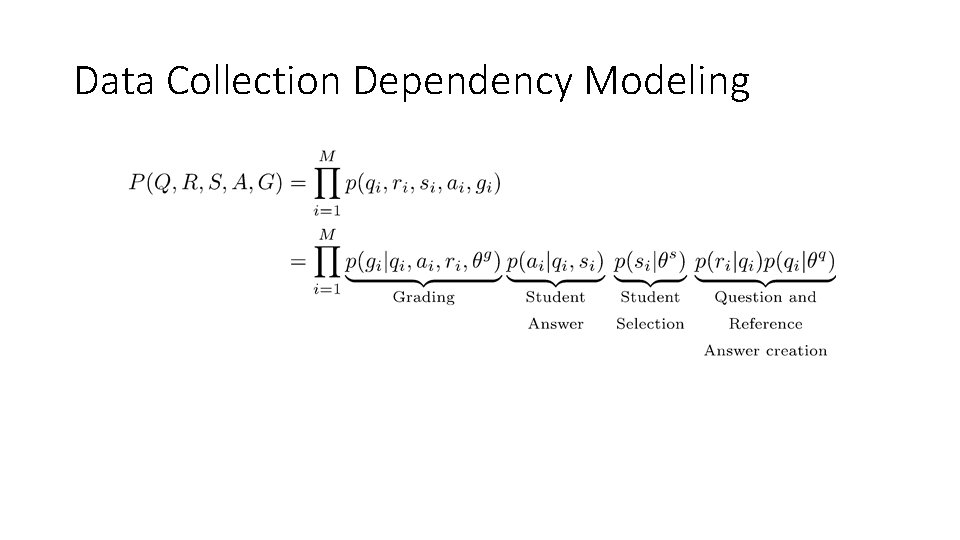

Data Collection Dependency Modeling

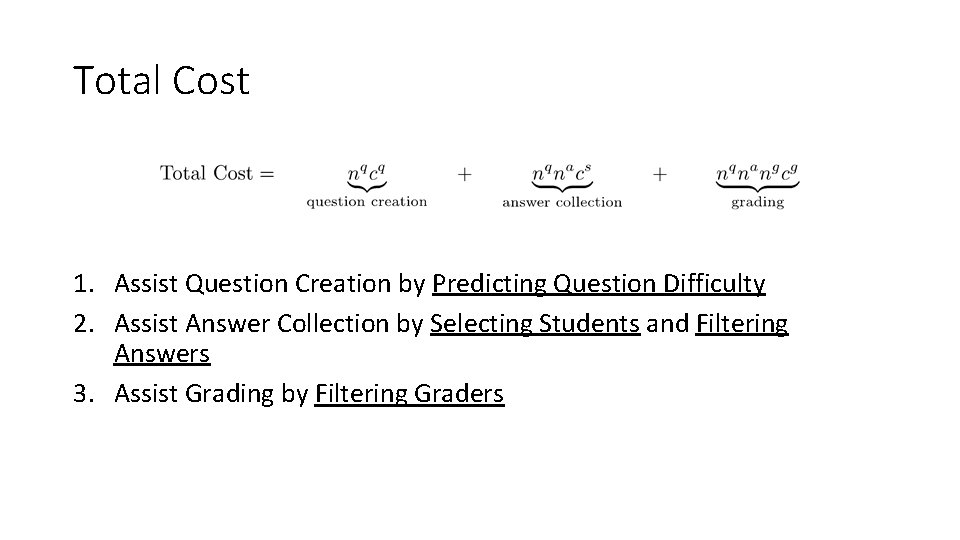

Total Cost

1. Assist Question Creation by Predicting Question Difficulty
2. Assist Answer Collection by Selecting Students and Filtering Answers
3. Assist Grading by Filtering Graders

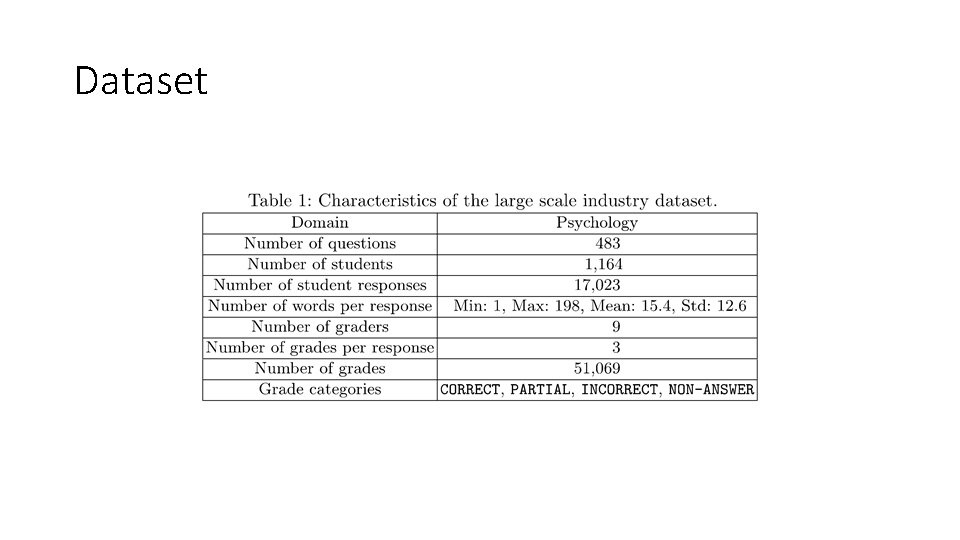

Dataset

1. Predict Question Difficulty

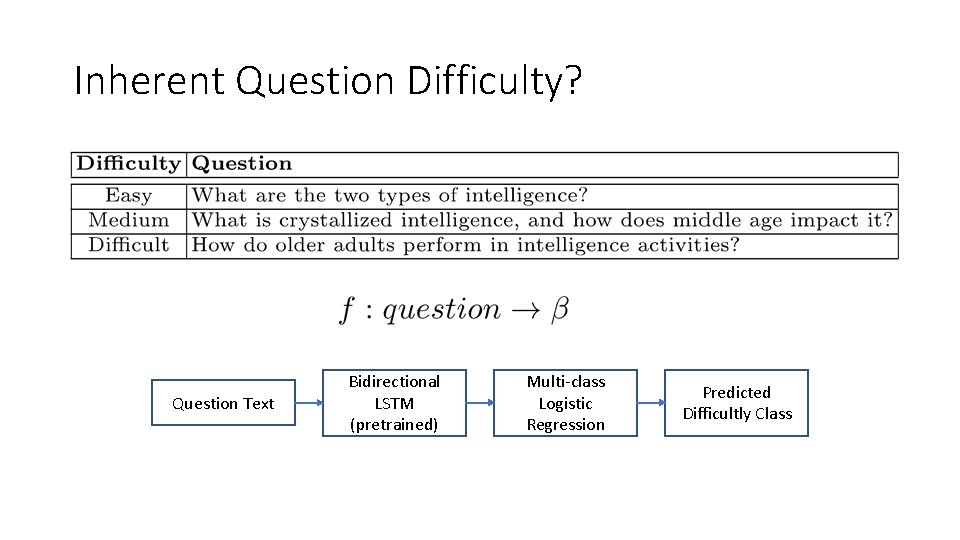

Inherent Question Difficulty?

Question Text → Bidirectional LSTM (pretrained) → Multi-class Logistic Regression → Predicted Difficulty Class

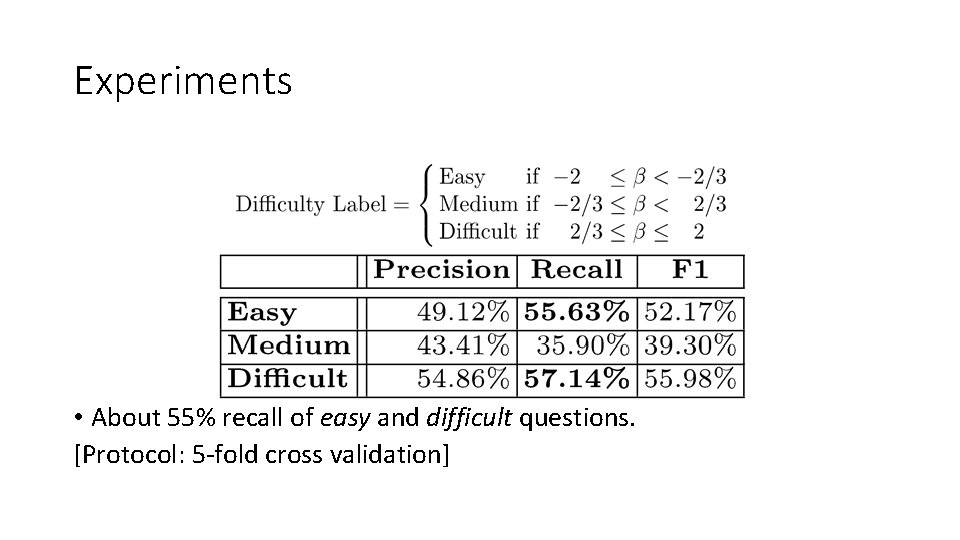

Experiments

- About 55% recall on easy and difficult questions. [Protocol: 5-fold cross-validation]
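
The evaluation protocol above can be sketched as follows: split the questions into five folds, train on four, predict on the held-out fold, and report recall per difficulty class over all held-out predictions. This is an illustrative sketch only. The round-robin fold assignment and the `fit` callback (any function that returns a text-to-label predictor) are assumptions; the slides do not specify how folds were formed.

```python
from collections import Counter


def kfold_indices(n_items, n_folds=5):
    """Yield (train_idx, test_idx); item i is held out in fold i % n_folds."""
    for fold in range(n_folds):
        test = [i for i in range(n_items) if i % n_folds == fold]
        train = [i for i in range(n_items) if i % n_folds != fold]
        yield train, test


def per_class_recall(true_labels, predicted_labels):
    """Recall of each class: correctly predicted items / items of that class."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {label: hits[label] / totals[label] for label in totals}


def cross_validated_recall(texts, labels, fit, n_folds=5):
    """fit(train_texts, train_labels) must return a function text -> label."""
    true_all, pred_all = [], []
    for train_idx, test_idx in kfold_indices(len(texts), n_folds):
        model = fit([texts[i] for i in train_idx],
                    [labels[i] for i in train_idx])
        true_all += [labels[i] for i in test_idx]
        pred_all += [model(texts[i]) for i in test_idx]
    return per_class_recall(true_all, pred_all)
```

Reporting recall per class, rather than overall accuracy, shows directly how many of the easy and difficult questions the predictor actually finds.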

2. Select Students (to Answer Selected Questions)

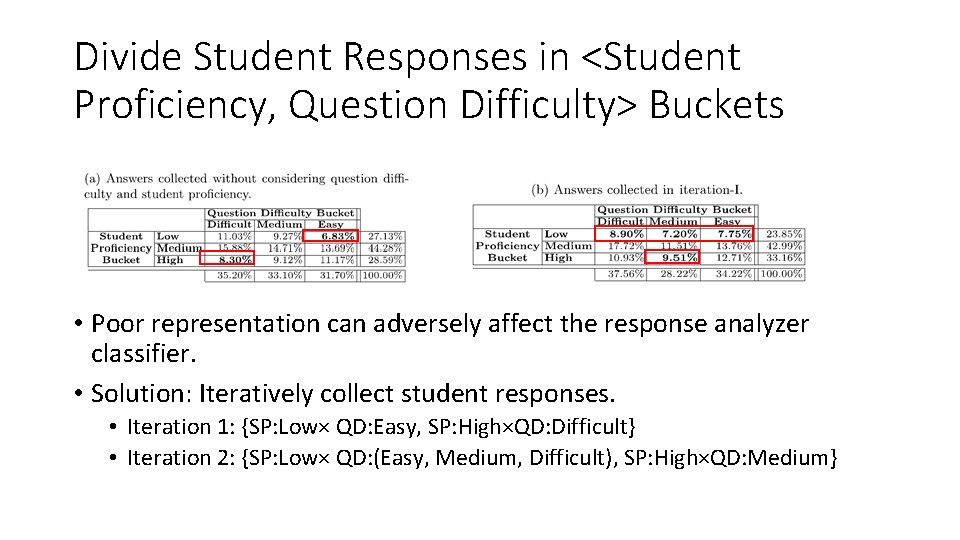

Divide Student Responses into <Student Proficiency (SP), Question Difficulty (QD)> Buckets

- Poor representation of a bucket can adversely affect the response analyzer classifier.
- Solution: Iteratively collect student responses.
  - Iteration 1: {SP: Low × QD: Easy, SP: High × QD: Difficult}
  - Iteration 2: {SP: Low × QD: (Easy, Medium, Difficult), SP: High × QD: Medium}
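
The iteration schedule above amounts to a lookup from iteration number to the set of (SP, QD) buckets to collect. A minimal sketch, assuming that proficiency and difficulty labels are already available for every student and question (how they are estimated is outside this snippet), with `ITERATION_BUCKETS` and `select_pairs` as hypothetical names:

```python
# Buckets to collect in each iteration, as (student proficiency, question difficulty).
ITERATION_BUCKETS = {
    1: {("low", "easy"), ("high", "difficult")},
    2: {("low", "easy"), ("low", "medium"), ("low", "difficult"),
        ("high", "medium")},
}


def select_pairs(students, questions, iteration):
    """Return the (student_id, question_id) pairs to collect in this iteration.

    students:  dict mapping student_id -> "low" or "high"
    questions: dict mapping question_id -> "easy", "medium", or "difficult"
    """
    wanted = ITERATION_BUCKETS[iteration]
    return [
        (student_id, question_id)
        for student_id, proficiency in sorted(students.items())
        for question_id, difficulty in sorted(questions.items())
        if (proficiency, difficulty) in wanted
    ]
```

For example, with one low-proficiency and one high-proficiency student and one question per difficulty level, iteration 1 requests two responses and iteration 2 requests four.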

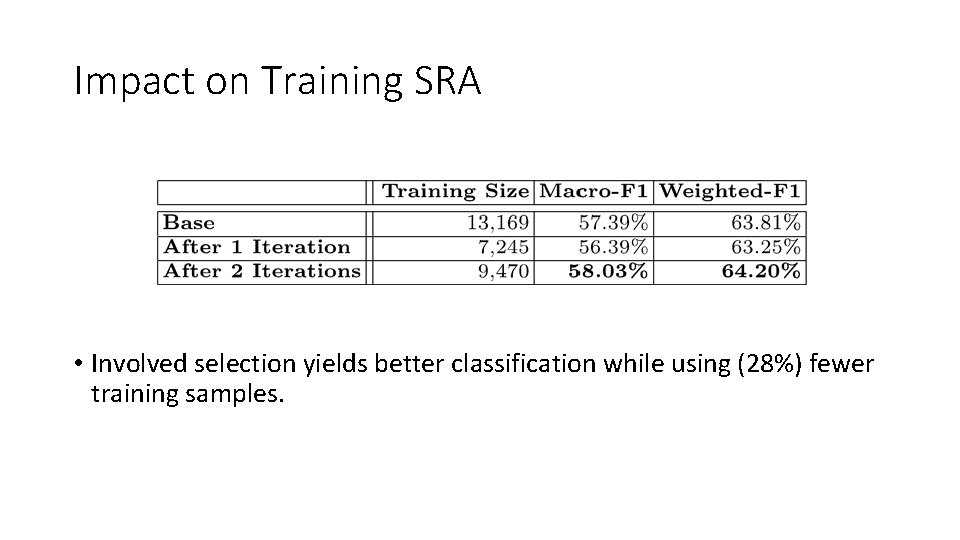

Impact on Training SRA

- Involved selection yields better classification while using 28% fewer training samples.

3. Filter Student Answers

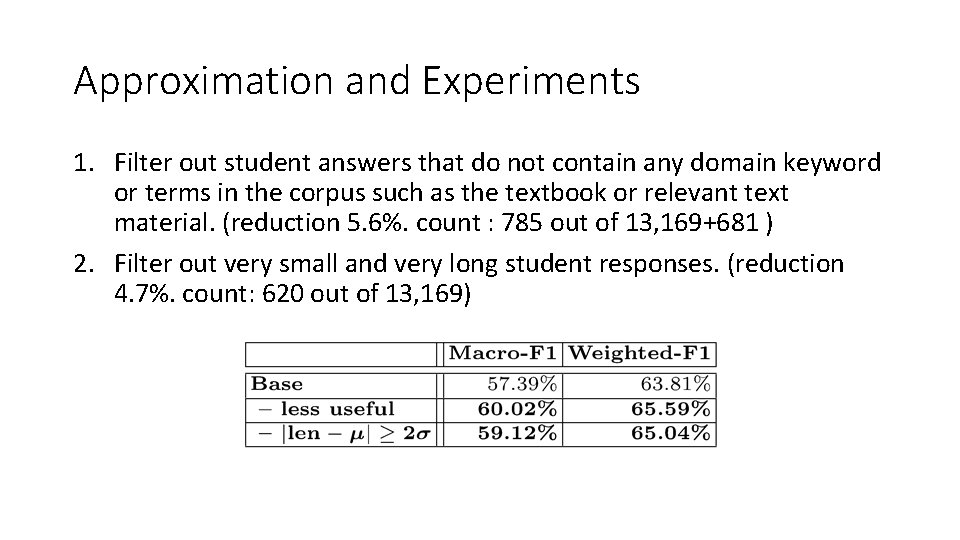

Approximation and Experiments

1. Filter out student answers that do not contain any domain keywords or terms from the corpus, such as the textbook or relevant text material. (Reduction: 5.6%; count: 785 out of 13,169 + 681)
2. Filter out very short and very long student responses. (Reduction: 4.7%; count: 620 out of 13,169)
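
The two filters above can be sketched as simple predicates over tokenized answers. This is an illustration under stated assumptions: the regular-expression tokenizer, the `domain_terms` set, and the length thresholds (`min_tokens=3`, `max_tokens=50`) are hypothetical, since the slides do not give the actual vocabulary or cut-offs.

```python
import re


def tokenize(text):
    """Lowercase word tokens; a deliberately simple, assumed tokenizer."""
    return re.findall(r"[a-z0-9']+", text.lower())


def has_domain_keyword(answer, domain_terms):
    """Filter 1: keep answers that mention at least one domain term."""
    return any(token in domain_terms for token in tokenize(answer))


def within_length(answer, min_tokens, max_tokens):
    """Filter 2: keep answers that are neither very short nor very long."""
    return min_tokens <= len(tokenize(answer)) <= max_tokens


def filter_answers(answers, domain_terms, min_tokens=3, max_tokens=50):
    """Return the answers worth grading and the fraction filtered out."""
    kept = [
        answer for answer in answers
        if has_domain_keyword(answer, domain_terms)
        and within_length(answer, min_tokens, max_tokens)
    ]
    reduction = 1 - len(kept) / len(answers) if answers else 0.0
    return kept, reduction
```

Only the answers in `kept` are sent to graders, so `reduction` is the share of grading effort saved by these filters.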

4. Filter Graders

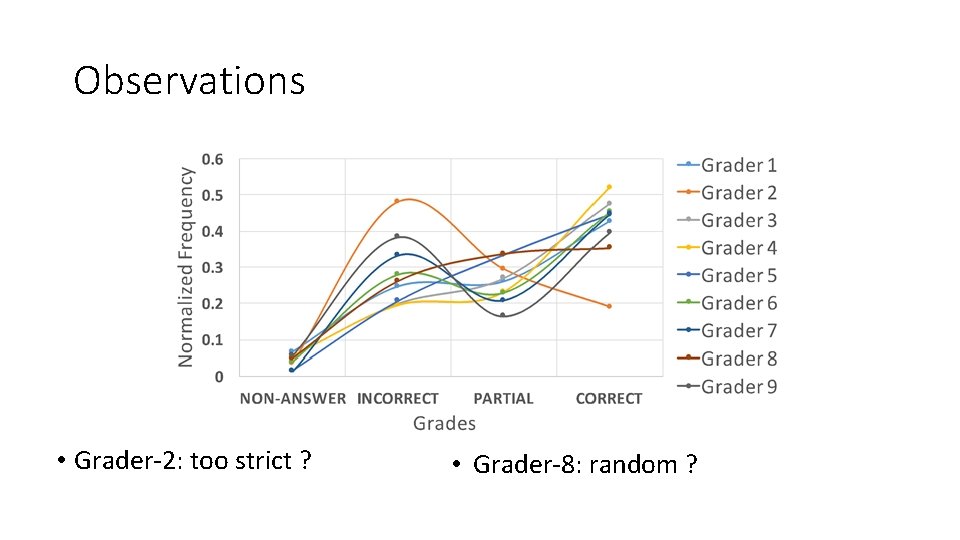

Observations

- Grader-2: too strict?
- Grader-8: random?

Inter-annotator Agreement

- Grader-2 is problematic; Grader-8 is okay.
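
One way to turn such an agreement check into a number is to compute a chance-corrected agreement score for every pair of graders and flag the grader whose average score is lowest. The slides do not name the agreement statistic used, so the choice of Cohen's kappa here is an assumption, as are the function names and the requirement that all graders label the same answers in the same order.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa between two graders who graded the same answers."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance from each grader's label frequencies.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both graders always use the same single label
    return (observed - expected) / (1 - expected)


def mean_agreement(grades_by_grader):
    """Average kappa of each grader against every other grader."""
    result = {}
    for grader, labels in grades_by_grader.items():
        others = [other for name, other in grades_by_grader.items()
                  if name != grader]
        result[grader] = sum(cohen_kappa(labels, o) for o in others) / len(others)
    return result
```

A grader with a markedly lower mean agreement than the rest is a candidate for pruning, which is then confirmed by retraining the classifier without that grader's labels.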

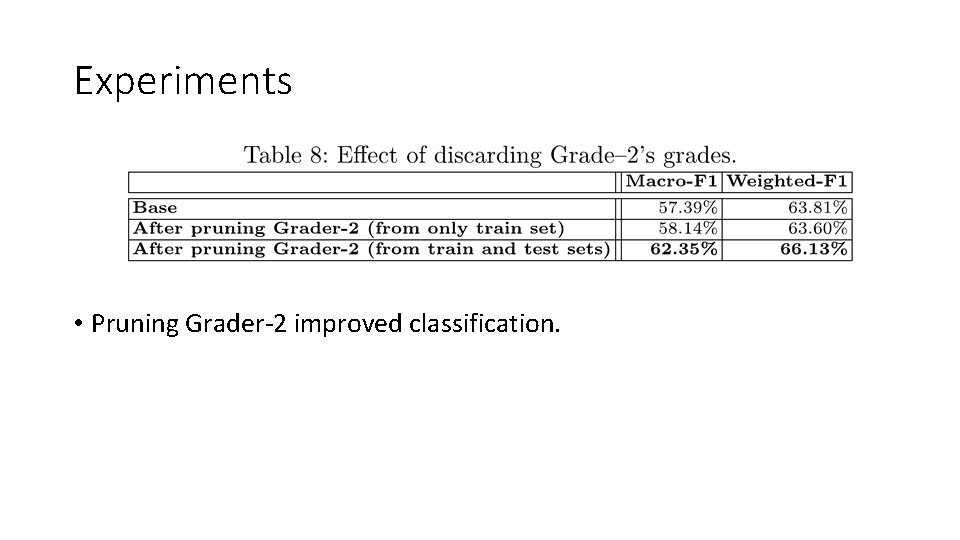

Experiments

- Pruning Grader-2 improved classification.
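
The deck reports classification quality as macro-F1, which averages the per-class F1 scores so that each grade counts equally regardless of how frequent it is. A minimal sketch of the metric, together with a hypothetical `prune_grader` helper that drops one grader's labels before retraining (the `(sample, grader_id)` record layout is an assumption):

```python
def macro_f1(true_labels, predicted_labels):
    """Unweighted mean of per-class F1 over all classes that appear."""
    classes = sorted(set(true_labels) | set(predicted_labels))
    pairs = list(zip(true_labels, predicted_labels))
    scores = []
    for cls in classes:
        tp = sum(1 for t, p in pairs if t == cls and p == cls)
        fp = sum(1 for t, p in pairs if t != cls and p == cls)
        fn = sum(1 for t, p in pairs if t == cls and p != cls)
        denominator = 2 * tp + fp + fn
        scores.append(2 * tp / denominator if denominator else 0.0)
    return sum(scores) / len(scores)


def prune_grader(graded_samples, grader_id):
    """Drop every (sample, grader_id) record produced by the given grader."""
    return [(sample, grader) for sample, grader in graded_samples
            if grader != grader_id]
```

Comparing `macro_f1` for a classifier trained on all records with one trained on the output of `prune_grader` gives the kind of before-and-after comparison described above.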

Summary and Conclusion

- Iteratively selecting students to answer certain questions reduces the answer collection cost by 28%.
- Filtering student answers saves about 10% of the grading cost.
- Pruning bad graders improves classification macro-F1 by 5%.
- Initial but encouraging results are reported for question difficulty prediction.
