
Azure Accelerated Networking: SmartNICs in the Public Cloud

CIS 800 – Lecture 3

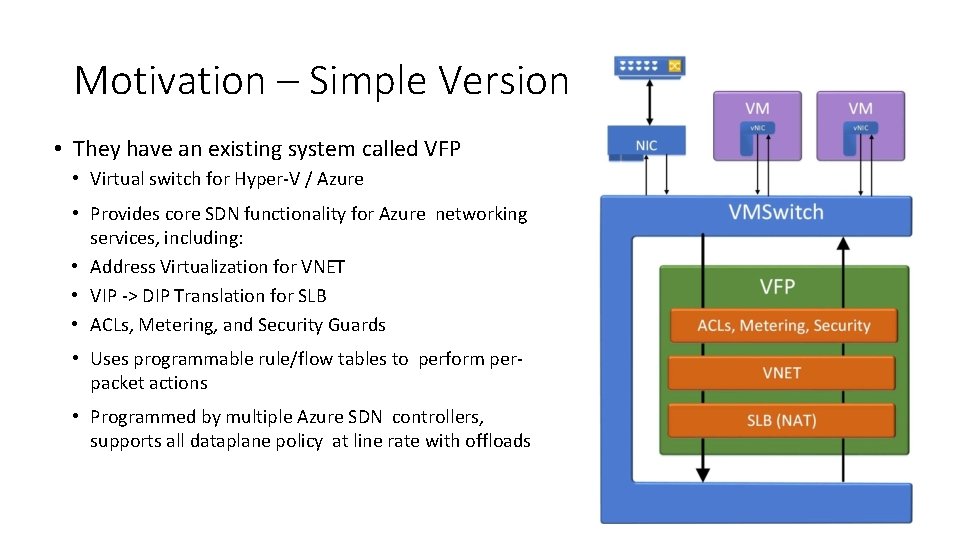

Motivation – Simple Version

- They have an existing system called VFP (Virtual Filtering Platform)
- Virtual switch for Hyper-V / Azure
- Provides core SDN functionality for Azure networking services, including:
  - Address virtualization for VNET
  - VIP -> DIP translation for SLB
  - ACLs, metering, and security guards
- Uses programmable rule/flow tables to perform per-packet actions
- Programmed by multiple Azure SDN controllers; supports all dataplane policy at line rate with offloads
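To make "programmable rule/flow tables" concrete, here is a minimal Python sketch of a match-action table with a per-flow cache. It is only an illustration of the general idea, not VFP's actual design or API: the names `Packet`, `Rule`, `FlowTable`, `acl_drop`, and `nat_to` are all hypothetical, and the IP addresses are documentation-range placeholders.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass(frozen=True)
class Packet:
    """Hypothetical packet header: just the 5-tuple."""
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    proto: str


# An action rewrites a packet, or returns None to drop it.
Action = Callable[[Packet], Optional[Packet]]


@dataclass
class Rule:
    matches: Callable[[Packet], bool]  # match condition
    action: Action                     # what to do on a match


def acl_drop(pkt: Packet) -> Optional[Packet]:
    """ACL-style action: drop the packet."""
    return None


def nat_to(dip: str) -> Action:
    """VIP -> DIP style action: rewrite the destination address."""
    def act(pkt: Packet) -> Optional[Packet]:
        return replace(pkt, dst_ip=dip)
    return act


def forward(pkt: Packet) -> Optional[Packet]:
    """Default action: pass the packet through unchanged."""
    return pkt


class FlowTable:
    """Rules are evaluated once per flow; later packets hit the flow cache."""

    def __init__(self, rules: list[Rule]) -> None:
        self.rules = rules
        self.flow_cache: dict[Packet, Action] = {}
        self.slow_path_lookups = 0

    def _lookup(self, pkt: Packet) -> Action:
        # First matching rule wins (an assumption made for this sketch).
        for rule in self.rules:
            if rule.matches(pkt):
                return rule.action
        return forward

    def process(self, pkt: Packet) -> Optional[Packet]:
        action = self.flow_cache.get(pkt)
        if action is None:
            # First packet of the flow: walk the rule list, then cache.
            self.slow_path_lookups += 1
            action = self._lookup(pkt)
            self.flow_cache[pkt] = action
        return action(pkt)


if __name__ == "__main__":
    table = FlowTable([
        Rule(lambda p: p.dst_port == 23, acl_drop),
        Rule(lambda p: p.dst_ip == "203.0.113.10", nat_to("10.0.0.5")),
    ])
    pkt = Packet("198.51.100.7", "203.0.113.10", 40000, 80, "tcp")
    print(table.process(pkt))
    print(table.process(pkt), table.slow_path_lookups)
```

The split between a rule walk on the first packet and a cached action for later packets is what makes this kind of table a candidate for offload: only the cached per-flow action has to run on every packet.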

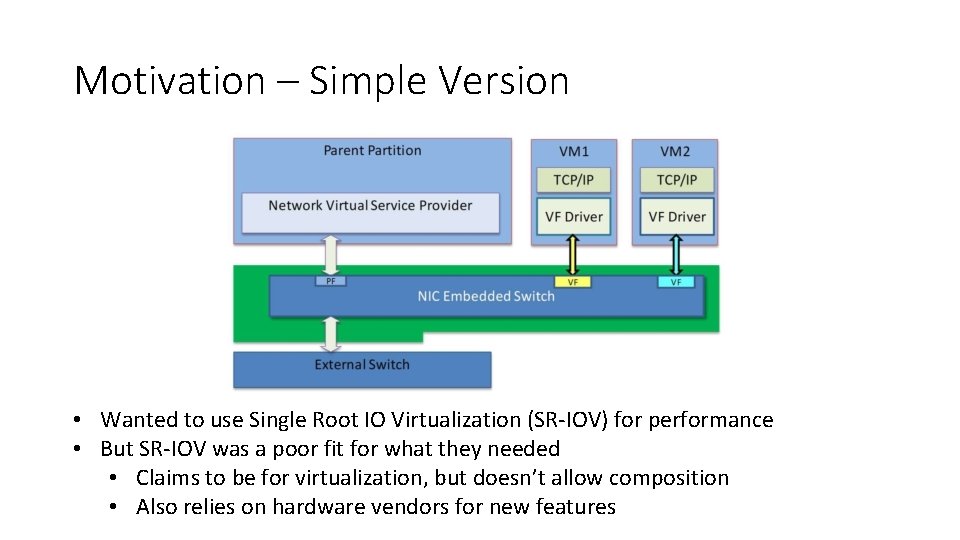

Motivation – Simple Version

- Wanted to use Single Root I/O Virtualization (SR-IOV) for performance
- But SR-IOV was a poor fit for what they needed
  - Claims to be for virtualization, but doesn’t allow composition
  - Also relies on hardware vendors for new features

Solution – Simple Version

- Let’s just do it ourselves!
- Buy some programmable FPGAs (still cheaper than CPUs)
- Hire some hardware programmers (probably already have some)

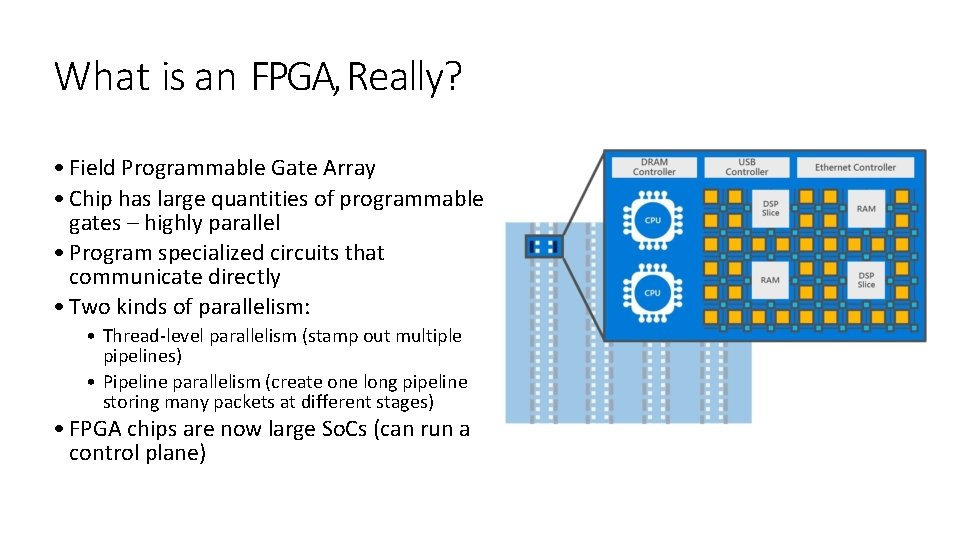

What is an FPGA, Really?

- Field Programmable Gate Array
- Chip has large quantities of programmable gates – highly parallel
- Program specialized circuits that communicate directly
- Two kinds of parallelism:
  - Thread-level parallelism (stamp out multiple pipelines)
  - Pipeline parallelism (create one long pipeline storing many packets at different stages)
- FPGA chips are now large SoCs (can run a control plane)
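The two kinds of parallelism can be compared with a small cycle-count model in Python. This is a sketch under simplifying assumptions that are not from the slides: every stage takes exactly one cycle, there are no stalls, and packets are split evenly across pipelines. The function names are made up for illustration.

```python
import math


def sequential_cycles(num_packets: int, num_stages: int) -> int:
    """One packet at a time: each packet walks all stages before the next starts."""
    return num_packets * num_stages


def pipelined_cycles(num_packets: int, num_stages: int, num_pipelines: int = 1) -> int:
    """Closed form: fill the pipeline once, then finish one packet per cycle.

    num_pipelines > 1 models thread-level parallelism (identical pipelines
    stamped out side by side, each taking an equal share of the packets).
    """
    if num_packets == 0:
        return 0
    per_pipeline = math.ceil(num_packets / num_pipelines)
    return num_stages + per_pipeline - 1


def simulate_pipeline(num_packets: int, num_stages: int) -> int:
    """Step a single pipeline cycle by cycle to cross-check the closed form."""
    stages: list = [None] * num_stages  # stages[i] holds a packet id or None
    next_packet = 0
    completed = 0
    cycles = 0
    while completed < num_packets:
        # Every packet advances one stage; a new packet enters stage 0 if any remain.
        entering = next_packet if next_packet < num_packets else None
        if entering is not None:
            next_packet += 1
        stages = [entering] + stages[:-1]
        cycles += 1
        # A packet occupying the last stage finishes during this cycle.
        if stages[-1] is not None:
            completed += 1
    return cycles


if __name__ == "__main__":
    n, s = 1000, 10
    print("sequential:", sequential_cycles(n, s))
    print("one pipeline:", pipelined_cycles(n, s), simulate_pipeline(n, s))
    print("four pipelines:", pipelined_cycles(n, s, num_pipelines=4))
```

In this model, pipelining alone brings 1000 packets through 10 stages in 1009 cycles instead of 10000, and four parallel pipelines bring that down to 259.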

Solution – Simple Version

- Let’s just do it ourselves!
- Buy some programmable FPGAs (still cheaper than CPUs)
- Hire some hardware programmers (probably already have some)
- Straightforward solution
- Mirrors trends elsewhere in the datacenter
  - Server and switch configurations are custom

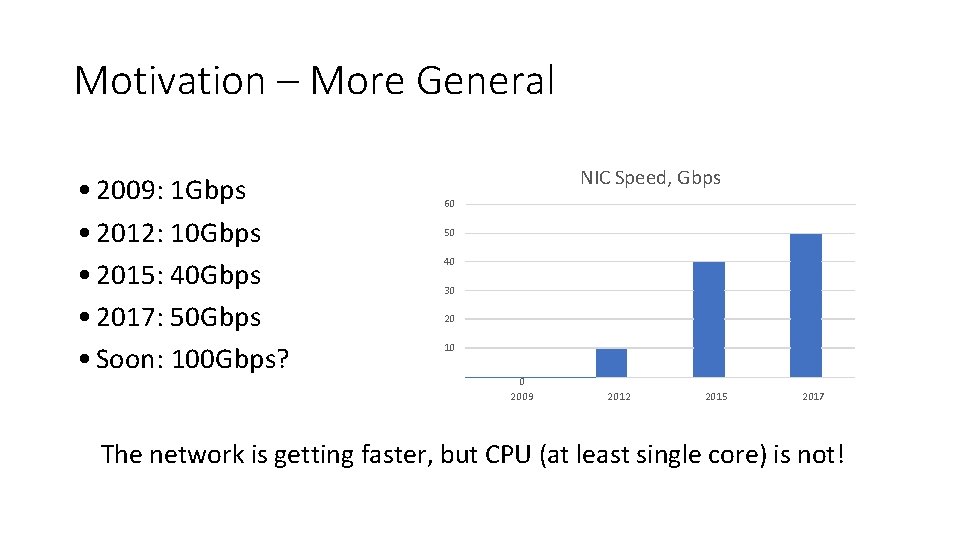

Motivation – More General

- 2009: 1 Gbps
- 2012: 10 Gbps
- 2015: 40 Gbps
- 2017: 50 Gbps
- Soon: 100 Gbps?

[Figure: NIC speed in Gbps by year, 2009 to 2017]

The network is getting faster, but the CPU (at least single-core) is not!
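One way to see why this trend hurts is to work out the time available per packet at line rate. The Python sketch below assumes worst-case minimum-size Ethernet frames (64 bytes plus 20 bytes of preamble, start-of-frame delimiter, and inter-frame gap on the wire) and a hypothetical 3 GHz core. Neither assumption comes from the slides, and real traffic has larger packets and therefore a looser budget.

```python
# Per-frame bytes on the wire beyond the frame itself:
# 7 preamble + 1 start-of-frame delimiter + 12 inter-frame gap.
WIRE_OVERHEAD_BYTES = 20

# Assumed CPU clock for the cycle budget (hypothetical, not from the slides).
ASSUMED_CPU_GHZ = 3.0


def ns_per_packet(link_gbps: float, frame_bytes: int = 64) -> float:
    """Nanoseconds between back-to-back frames at line rate.

    bits / (Gbit/s) comes out directly in nanoseconds.
    """
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return bits_on_wire / link_gbps


def cycles_per_packet(link_gbps: float, frame_bytes: int = 64,
                      cpu_ghz: float = ASSUMED_CPU_GHZ) -> float:
    """CPU cycles one core can spend per packet while keeping up with the link."""
    return ns_per_packet(link_gbps, frame_bytes) * cpu_ghz


if __name__ == "__main__":
    # NIC speeds listed on the slide, by year.
    for year, gbps in [("2009", 1), ("2012", 10), ("2015", 40),
                       ("2017", 50), ("soon", 100)]:
        print(f"{year:>5}: {gbps:>3} Gbps -> "
              f"{ns_per_packet(gbps):7.2f} ns/packet, "
              f"{cycles_per_packet(gbps):7.1f} cycles at {ASSUMED_CPU_GHZ} GHz")
```

Under these assumptions the budget shrinks from 672 ns per packet at 1 Gbps to 67.2 ns at 10 Gbps and roughly 13.4 ns at 50 Gbps, while the clock rate of a single core stays about the same.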

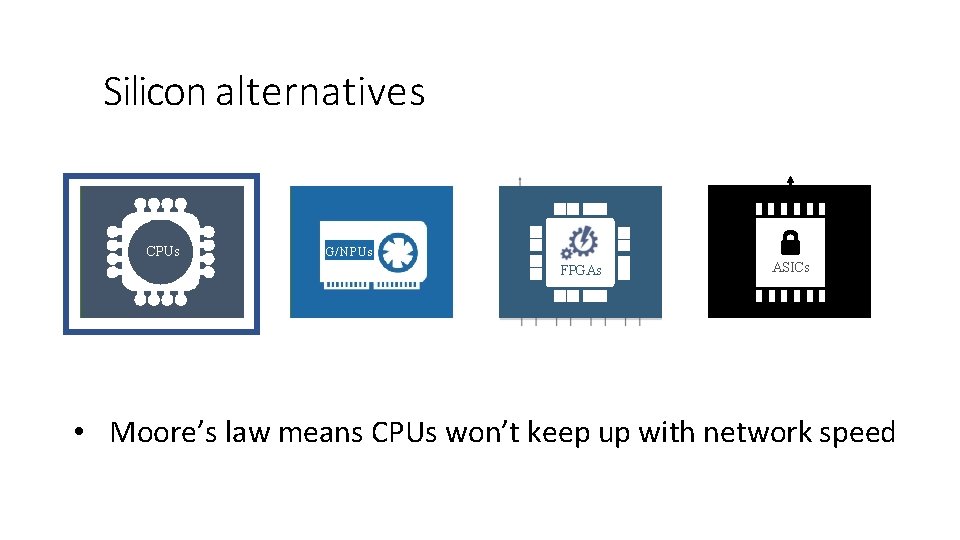

Silicon alternatives

[Figure: spectrum of silicon alternatives from flexibility to efficiency: CPUs, GPUs/NPUs, FPGAs, ASICs]

- CPUs: the slowing of Moore’s law means CPUs won’t keep up with network speed

Silicon alternatives

- GPUs are great for bulk processing, but latency is not great

Silicon alternatives

- ASICs:
  - Slow to change
  - Limited to exactly what the hardware vendor is willing to provide

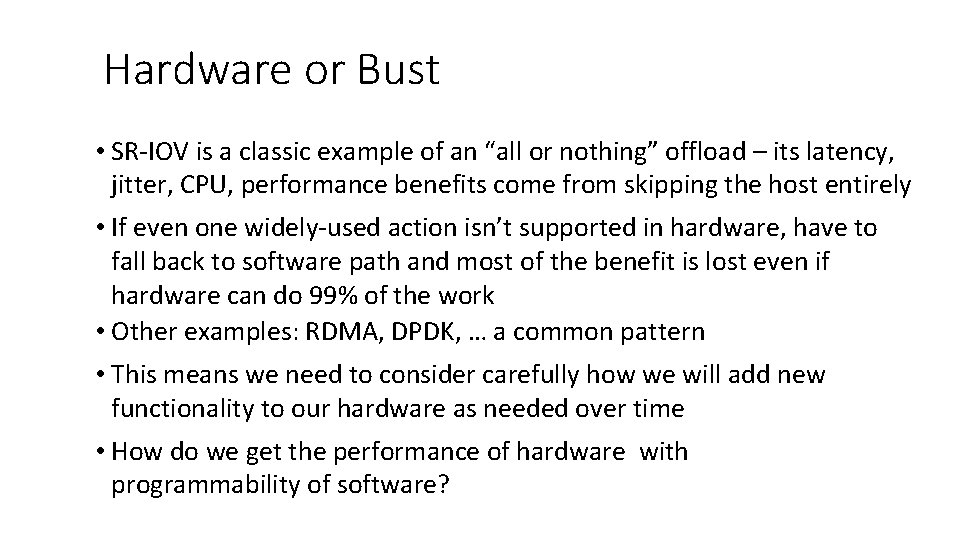

Hardware or Bust

- SR-IOV is a classic example of an “all or nothing” offload: its latency, jitter, CPU, and performance benefits come from skipping the host entirely
- If even one widely-used action isn’t supported in hardware, we have to fall back to the software path, and most of the benefit is lost even if hardware can do 99% of the work
- Other examples: RDMA, DPDK, … a common pattern
- This means we need to consider carefully how we will add new functionality to our hardware as needed over time
- How do we get the performance of hardware with the programmability of software?
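The "all or nothing" property can be illustrated with a toy model in Python: a flow takes the hardware path only if every action it needs is supported in hardware. The action names below are borrowed loosely from the VFP feature list earlier in the deck, and the flows and the supported set are invented purely for illustration.

```python
def is_offloadable(flow_actions: set[str], hw_supported: set[str]) -> bool:
    """A flow can skip the host only if ALL of its actions exist in hardware."""
    return flow_actions <= hw_supported


def offloaded_fraction(flows: list[set[str]], hw_supported: set[str]) -> float:
    """Fraction of flows that can use the hardware path."""
    if not flows:
        return 0.0
    offloaded = sum(1 for actions in flows if is_offloadable(actions, hw_supported))
    return offloaded / len(flows)


def supported_action_fraction(flows: list[set[str]], hw_supported: set[str]) -> float:
    """Fraction of distinct action types that the hardware implements."""
    all_actions = set().union(*flows) if flows else set()
    if not all_actions:
        return 0.0
    return len(all_actions & hw_supported) / len(all_actions)


if __name__ == "__main__":
    # Hypothetical hardware: three of the four action types are implemented.
    hw = {"vnet_encap", "vip_to_dip", "acl"}
    # Hypothetical workload: every flow is also metered, which hw lacks.
    flows = [
        {"vnet_encap", "acl", "metering"},
        {"vip_to_dip", "acl", "metering"},
        {"vnet_encap", "vip_to_dip", "acl", "metering"},
    ]
    print("action types in hardware:", supported_action_fraction(flows, hw))
    print("flows offloaded:", offloaded_fraction(flows, hw))
```

In this made-up workload the hardware implements three of the four action types, yet no flow can be offloaded, because the one missing action (metering) is used by all of them.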

SmartNIC – Accelerating SDN

- Slides: 15