Average-case Complexity Luca Trevisan UC Berkeley

Distributional Problem <P, D>
• P: computational problem – e.g. SAT
• D: distribution over inputs – e.g. n variables, 10n clauses
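As a concrete instance of such a distribution D, here is a minimal Python sketch that samples a random formula with n variables and 10n clauses. The clause width of 3, the function names, and the signed-integer literal encoding are assumptions made for illustration; the slide only fixes the variable and clause counts.

```python
import random

def random_3sat(n, clause_ratio=10, seed=None):
    """Sample a 3-CNF formula with n variables and clause_ratio * n clauses.

    Each clause picks 3 distinct variables uniformly at random and negates
    each one independently with probability 1/2. Literals are nonzero ints:
    +v stands for variable v, -v for its negation.
    """
    rng = random.Random(seed)
    clauses = []
    for _ in range(clause_ratio * n):
        variables = rng.sample(range(1, n + 1), 3)
        clauses.append(tuple(v if rng.random() < 0.5 else -v for v in variables))
    return clauses

def satisfies(assignment, clauses):
    """Check a truth assignment (dict: variable index -> bool) against a CNF."""
    return all(any(assignment[abs(l)] == (l > 0) for l in c) for c in clauses)
```

A distributional problem in this sense is the pair (SAT, output distribution of `random_3sat`), and "solving it on most inputs" is measured over the sampler's randomness.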

Positive Results:
• Algorithm that solves P efficiently on most inputs
– Interesting when P is a useful problem and D is a distribution arising “in practice”

Negative Results:
• If <assumption>, then no such algorithm
– P useful, D natural: guides algorithm design
– Manufactured P, D: still interesting for crypto, derandomization

Holy Grail
If there is an algorithm A that solves P efficiently on most inputs from D,
then there is an efficient worst-case algorithm for [the complexity class] P [belongs to]

Part (1) In which the Holy Grail proves elusive

The Permanent
$\mathrm{Perm}(M) := \sum_{\sigma} \prod_{i} M(i, \sigma(i))$, where $\sigma$ ranges over the permutations of $\{1, \dots, n\}$
Perm() is #P-complete
Lipton (1990): If there is an algorithm that solves Perm() efficiently on most random matrices, then there is an algorithm that solves it efficiently on all matrices (and BPP=#P)

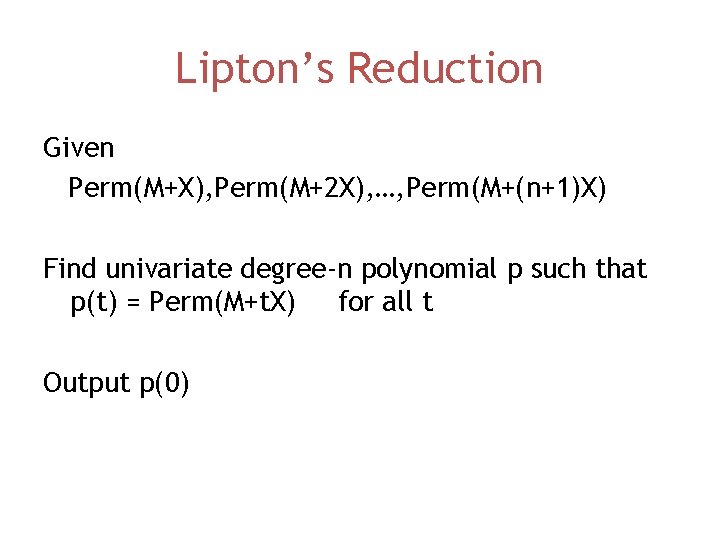

Lipton’s Reduction
Suppose operations are over a finite field of size > n
A is a good-on-average algorithm (wrong on < 1/(10(n+1)) fraction of matrices)
Given M, pick random X, compute A(M+X), A(M+2X), …, A(M+(n+1)X)
Whp these are the same as Perm(M+X), Perm(M+2X), …, Perm(M+(n+1)X)

Lipton’s Reduction
Given Perm(M+X), Perm(M+2X), …, Perm(M+(n+1)X)
Find the univariate degree-n polynomial p such that p(t) = Perm(M+tX) for all t
Output p(0)
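The two steps of the reduction can be sketched in Python over a small prime field. This is an illustration under assumptions, not the original presentation's code: the prime 101, the function names, and the brute-force `perm_mod` used as a stand-in oracle are all hypothetical, and the sketch takes the field size larger than n + 1 so that the points t = 1, …, n+1 are distinct and nonzero.

```python
import itertools
import random

P = 101  # illustrative prime; assumed larger than n + 1

def perm_mod(M, p=P):
    """Permanent by the defining sum over permutations (exponential time)."""
    n = len(M)
    total = 0
    for sigma in itertools.permutations(range(n)):
        term = 1
        for i in range(n):
            term = term * M[i][sigma[i]] % p
        total = (total + term) % p
    return total

def interpolate_at_zero(points, p=P):
    """Lagrange interpolation over F_p: value at t = 0 of the unique
    polynomial of degree < len(points) through the given (t, y) pairs."""
    result = 0
    for j, (tj, yj) in enumerate(points):
        num, den = 1, 1
        for m, (tm, _) in enumerate(points):
            if m != j:
                num = num * (-tm) % p
                den = den * (tj - tm) % p
        result = (result + yj * num * pow(den, -1, p)) % p
    return result

def lipton_reduce(M, oracle, p=P, rng=random):
    """Recover Perm(M) from oracle answers on M + tX for t = 1, ..., n+1."""
    n = len(M)
    X = [[rng.randrange(p) for _ in range(n)] for _ in range(n)]
    points = []
    for t in range(1, n + 2):
        shifted = [[(M[i][j] + t * X[i][j]) % p for j in range(n)]
                   for i in range(n)]
        points.append((t, oracle(shifted)))
    return interpolate_at_zero(points, p)
```

With an exact oracle such as `perm_mod`, `lipton_reduce(M, perm_mod)` returns `perm_mod(M)` for every choice of X, which exercises only the interpolation step. With a good-on-average oracle, each query M + tX is uniformly distributed, so a union bound over the n+1 queries gives all-correct answers with probability above 9/10.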

Improvements / Generalizations
• Can handle constant fraction of errors [Gemmell-Sudan]
• Works for PSPACE-complete, EXP-complete, … [Feigenbaum-Fortnow, Babai-Fortnow-Nisan-Wigderson]
Encode the problem as a polynomial

Strong Average-Case Hardness
• [Impagliazzo, Impagliazzo-Wigderson] Manufacture problems in E, EXP, such that
– Size-t circuit correct on ½ + 1/t fraction of inputs implies
– Size poly(t) circuit correct on all inputs
Motivation: [Nisan-Wigderson] P=BPP if there is a problem in E of exponential average-case complexity

Strong Average-Case Hardness
• [Impagliazzo, Impagliazzo-Wigderson] Manufacture problems in E, EXP, such that
– Size-t circuit correct on ½ + 1/t fraction of inputs implies
– Size poly(t) circuit correct on all inputs
Motivation: [Impagliazzo-Wigderson] P=BPP if there is a problem in E of exponential worst-case complexity

Open Question 1
• Suppose there are worst-case intractable problems in NP
• Are there average-case intractable problems?

Strong Average-Case Hardness
• [Impagliazzo, Impagliazzo-Wigderson] Manufacture problems in E, EXP, such that
– Size-t circuit correct on ½ + 1/t fraction of inputs implies
– Size poly(t) circuit correct on all inputs
• [Sudan-T-Vadhan]
– IW result can be seen as coding-theoretic
– Simpler proof by explicitly coding-theoretic ideas

Encoding Approach
• Viola proves that an error-correcting code cannot be computed in AC0
• The exponential-size error-correcting code computation is not possible in PH

Problem-specific Approaches? [Ajtai]
• Proves that there is a lattice problem such that:
– If there is an efficient average-case algorithm
– There is an efficient worst-case approximation algorithm

Ajtai’s Reduction
• Lattice Problem
– If there is an efficient average-case algorithm
– There is an efficient worst-case approximation algorithm
The approximation problem is in NP ∩ coNP, so it is not NP-hard unless NP = coNP

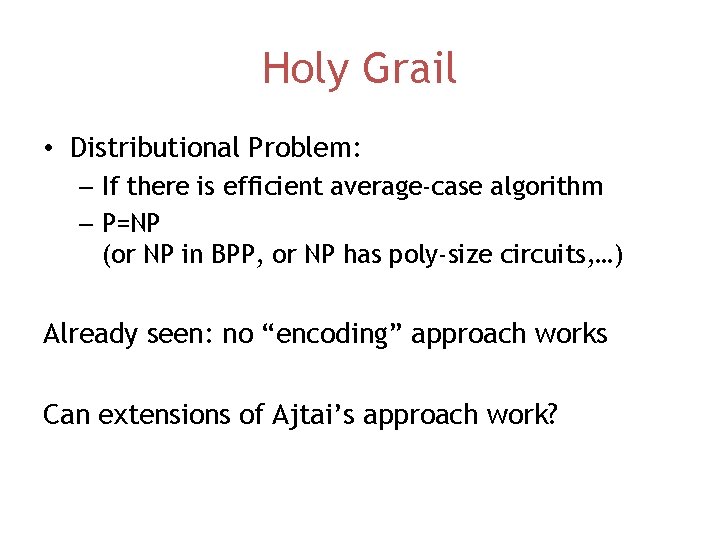

Holy Grail
• Distributional Problem:
– If there is an efficient average-case algorithm
– P=NP (or NP in BPP, or NP has poly-size circuits, …)
Already seen: no “encoding” approach works
Can extensions of Ajtai’s approach work?

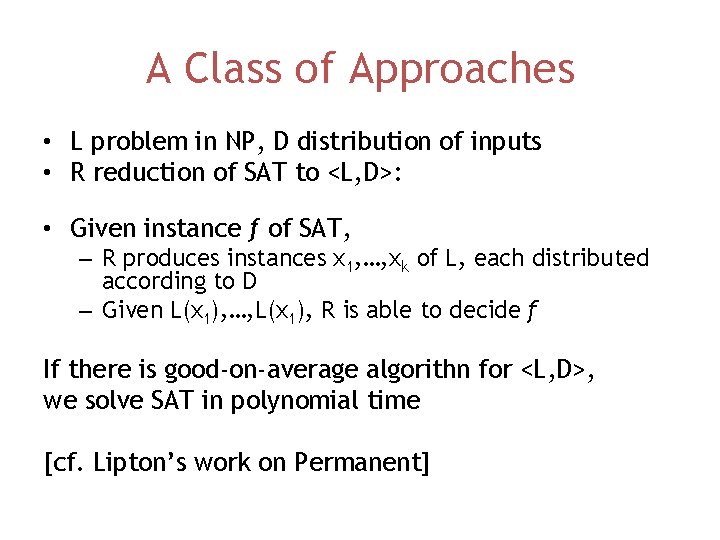

A Class of Approaches
• L problem in NP, D distribution of inputs
• R reduction of SAT to <L, D>:
• Given instance f of SAT,
– R produces instances $x_1, \dots, x_k$ of L, each distributed according to D
– Given $L(x_1), \dots, L(x_k)$, R is able to decide f
If there is a good-on-average algorithm for <L, D>, we solve SAT in polynomial time [cf. Lipton’s work on Permanent]

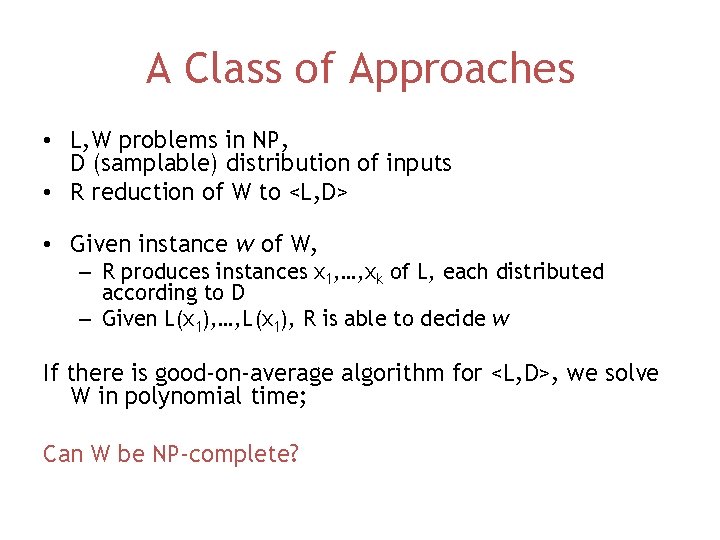

A Class of Approaches
• L, W problems in NP, D (samplable) distribution of inputs
• R reduction of W to <L, D>
• Given instance w of W,
– R produces instances $x_1, \dots, x_k$ of L, each distributed according to D
– Given $L(x_1), \dots, L(x_k)$, R is able to decide w
If there is a good-on-average algorithm for <L, D>, we solve W in polynomial time; can W be NP-complete?

A Class of Approaches
• Given instance w of W,
– R produces instances $x_1, \dots, x_k$ of L, each distributed according to D
– Given $L(x_1), \dots, L(x_k)$, R is able to decide w
Given a good-on-average algorithm for <L, D>, we solve W in polynomial time; if we have such a reduction, and W is NP-complete, we have the Holy Grail!
Feigenbaum-Fortnow: W is in “coNP”

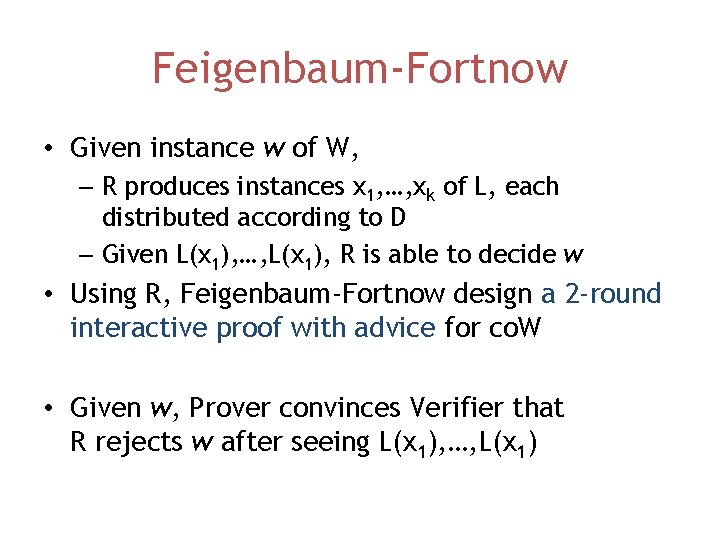

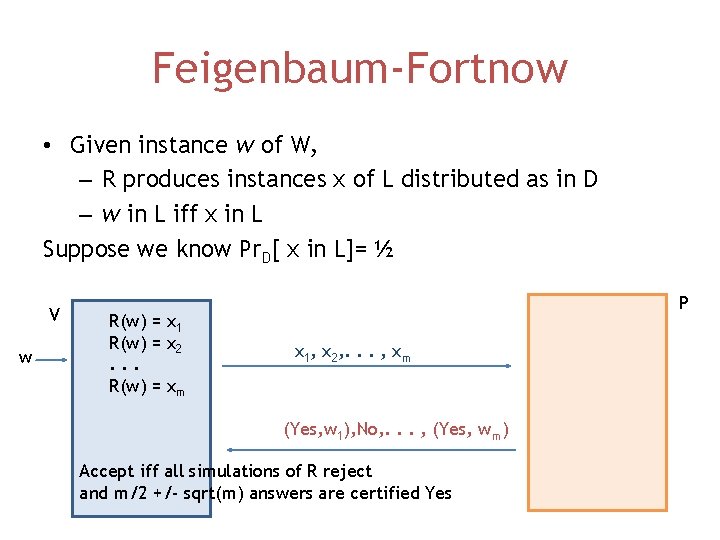

Feigenbaum-Fortnow
• Given instance w of W,
– R produces instances $x_1, \dots, x_k$ of L, each distributed according to D
– Given $L(x_1), \dots, L(x_k)$, R is able to decide w
• Using R, Feigenbaum-Fortnow design a 2-round interactive proof with advice for coW
• Given w, Prover convinces Verifier that R rejects w after seeing $L(x_1), \dots, L(x_k)$

Feigenbaum-Fortnow
• Given instance w of W,
– R produces instances x of L distributed as in D
– w in W iff x in L
Suppose we know $\Pr_D[x \in L] = ½$
• V, on input w: runs R(w) m times to get $x_1, x_2, \dots, x_m$ and sends them to P
• P: answers each $x_i$ with either (Yes, $w_i$), where $w_i$ is a certificate, or No
• V accepts iff all simulations of R reject and m/2 +/- sqrt(m) answers are certified Yes

Feigenbaum-Fortnow
• Given instance w of W, $p := \Pr[x_i \in L]$
– R produces instances $x_1, \dots, x_k$ of L, each distributed according to D
– Given $L(x_1), \dots, L(x_k)$, R is able to decide w
• V, on input w: runs R(w) m times; run j produces $x_{1j}, \dots, x_{kj}$; all km instances are sent to P
• P: answers each instance with (Yes, $w_{ij}$) or No
Accept iff
– pkm +/- sqrt(pkm) answers are YES with certificates
– R rejects in each case

Generalizations
• Bogdanov-Trevisan: arbitrary non-adaptive reductions
• Main Open Question: What happens with adaptive reductions?

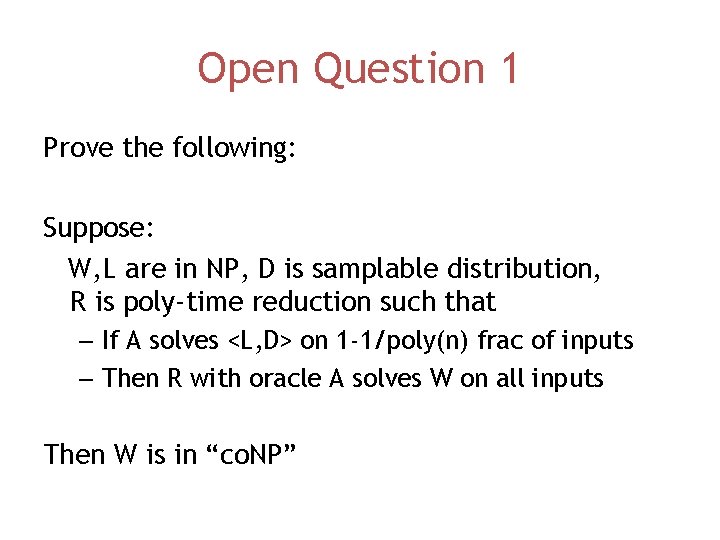

Open Question 1
Prove the following:
Suppose: W, L are in NP, D is a samplable distribution, R is a poly-time reduction such that
– If A solves <L, D> on a 1 - 1/poly(n) fraction of inputs
– Then R with oracle A solves W on all inputs
Then W is in “coNP”

By the Way
• Probably impossible by current techniques:
If NP is not contained in BPP
There is a samplable distribution D and an NP problem L
Such that <L, D> is hard on average

By the Way
• Probably impossible by current techniques:
If NP is not contained in BPP
There is a samplable distribution D and an NP problem L
Such that for every efficient A
A makes many mistakes solving L on D
• [Gutfreund-Shaltiel-Ta-Shma] Prove:
If NP is not contained in BPP
For every efficient A
There is a samplable distribution D
Such that A makes many mistakes solving SAT on D

Part (2) In which we amplify average-case complexity and we discuss a short paper

Revised Goal
• Proving “If NP contains worst-case intractable problems, then NP contains average-case intractable problems” might be impossible
• Average-case intractability comes in different quantitative degrees
• Equivalence?

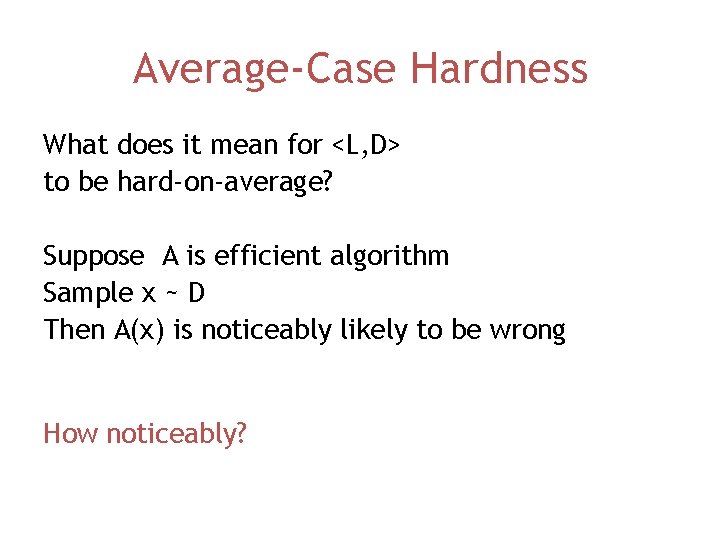

Average-Case Hardness
What does it mean for <L, D> to be hard-on-average?
Suppose A is an efficient algorithm
Sample x ~ D
Then A(x) is noticeably likely to be wrong
How noticeably?

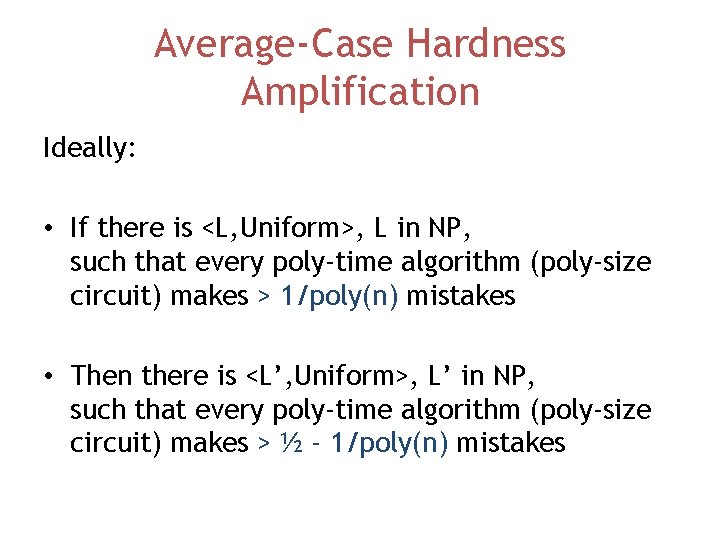

Average-Case Hardness Amplification
Ideally:
• If there is <L, Uniform>, L in NP, such that every poly-time algorithm (poly-size circuit) makes > 1/poly(n) mistakes
• Then there is <L’, Uniform>, L’ in NP, such that every poly-time algorithm (poly-size circuit) makes > ½ - 1/poly(n) mistakes

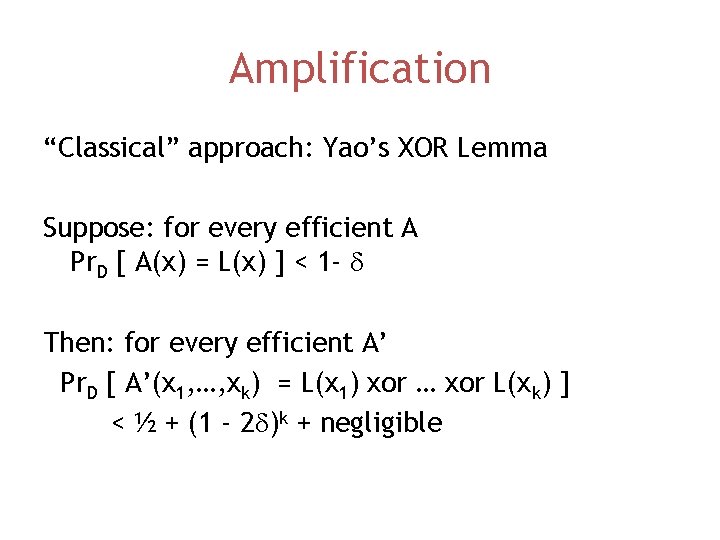

Amplification
“Classical” approach: Yao’s XOR Lemma
Suppose: for every efficient A
$\Pr_D[A(x) = L(x)] < 1 - d$
Then: for every efficient A’
$\Pr_D[A'(x_1, \dots, x_k) = L(x_1) \oplus \dots \oplus L(x_k)] < ½ + (1 - 2d)^k + \text{negligible}$

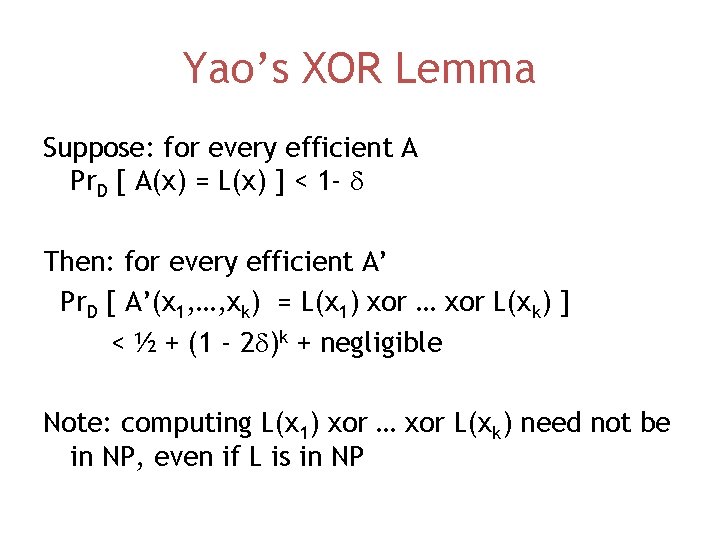

Yao’s XOR Lemma
Suppose: for every efficient A
$\Pr_D[A(x) = L(x)] < 1 - d$
Then: for every efficient A’
$\Pr_D[A'(x_1, \dots, x_k) = L(x_1) \oplus \dots \oplus L(x_k)] < ½ + (1 - 2d)^k + \text{negligible}$
Note: computing $L(x_1) \oplus \dots \oplus L(x_k)$ need not be in NP, even if L is in NP
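To get a feel for the $(1 - 2d)^k$ term, here is a minimal Python sketch of the naive strategy: guess each $L(x_i)$ separately with an algorithm that errs independently with probability d, then XOR the guesses. The XOR is right exactly when the number of errors is even, which happens with probability ½ + ½(1 - 2d)^k. Independence of the errors and the function names are assumptions made for illustration; the lemma itself is about arbitrary efficient A’, not only this strategy.

```python
from math import comb

def naive_xor_success(d, k):
    """Closed form: probability that an even number of k independent
    guesses (each wrong with probability d) are wrong."""
    return 0.5 + 0.5 * (1 - 2 * d) ** k

def naive_xor_success_by_counting(d, k):
    """Same quantity, summed directly over the even error counts."""
    return sum(comb(k, j) * d ** j * (1 - d) ** (k - j)
               for j in range(0, k + 1, 2))

if __name__ == "__main__":
    d = 0.1
    for k in (1, 5, 20, 50):
        print(k, naive_xor_success(d, k))
```

The advantage over ½ shrinks geometrically in k, which is the behaviour the lemma shows cannot be beaten by much.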

O’Donnell Approach
Suppose: for every efficient A
$\Pr_D[A(x) = L(x)] < 1 - d$
Then: for every efficient A’
$\Pr_D[A'(x_1, \dots, x_k) = g(L(x_1), \dots, L(x_k))] < ½ + \mathrm{small}(k, d)$
For a carefully chosen monotone function g
Now computing $g(L(x_1), \dots, L(x_k))$ is in NP, if L is in NP

Amplification (Circuits)
Ideally:
• If there is <L, Uniform>, L in NP, such that every poly-time algorithm (poly-size circuit) makes > 1/poly(n) mistakes
• Then there is <L’, Uniform>, L’ in NP, such that every poly-time algorithm (poly-size circuit) makes > ½ - 1/poly(n) mistakes
Achieved by [O’Donnell, Healy-Vadhan-Viola] for poly-size circuits

Amplification (Algorithms)
• If there is <L, Uniform>, L in NP, such that every poly-time algorithm makes > 1/poly(n) mistakes
• Then there is <L’, Uniform>, L’ in NP, such that every poly-time algorithm makes > ½ - 1/polylog(n) mistakes [T]
[Impagliazzo-Jaiswal-Kabanets-Wigderson]: ½ - 1/poly(n), but for $\mathrm{P}^{\mathrm{NP}}_{\|}$

Open Question 2
Prove:
• If there is <L, Uniform>, L in NP, such that every poly-time algorithm makes > 1/poly(n) mistakes
• Then there is <L’, Uniform>, L’ in NP, such that every poly-time algorithm makes > ½ - 1/poly(n) mistakes

Completeness
• Suppose we believe there is L in NP, D distribution, such that <L, D> is hard
• Can we point to a specific problem C such that <C, Uniform> is also hard?
Must put a restriction on D, otherwise the assumption is the same as P != NP

Side Note
Let K be the distribution such that x has probability proportional to $2^{-K(x)}$, where K(x) is the Kolmogorov complexity of x
Suppose A solves <L, K> on a 1 - 1/poly(n) fraction of inputs of length n
Then A solves L on all but finitely many inputs
Exercise: prove it

Completeness
• Suppose we believe there is L in NP, D samplable distribution, such that <L, D> is hard
• Can we point to a specific problem C such that <C, Uniform> is also hard?
Yes we can! [Levin, Impagliazzo-Levin]

Levin’s Completeness Result
• There is an NP problem C, such that
• If there is L in NP, D computable distribution, such that <L, D> is hard
• Then <C, Uniform> is also hard

Reduction
Need to define a reduction that preserves efficiency on average
(Note: we haven’t yet defined efficiency on average)
R is a (Karp) average-case reduction from <A, $D_A$> to <B, $D_B$> if
1. x in A iff R(x) in B
2. $R(D_A)$ is “dominated” by $D_B$: $\Pr[R(D_A) = y] < \mathrm{poly}(n) \cdot \Pr[D_B = y]$

Reduction
R is an average-case reduction from <A, $D_A$> to <B, $D_B$> if
• x in A iff R(x) in B
• $R(D_A)$ is “dominated” by $D_B$: $\Pr[R(D_A) = y] < \mathrm{poly}(n) \cdot \Pr[D_B = y]$
Suppose we have a good algorithm for <B, $D_B$>
Then the algorithm is also good for <B, $R(D_A)$>
Solving <A, $D_A$> reduces to solving <B, $R(D_A)$>

Reduction
If $\Pr[Y = y] < \mathrm{poly}(n) \cdot \Pr[D_B = y]$ and we have a good algorithm for <B, $D_B$>
Then the algorithm is also good for <B, Y>
The reduction works for any notion of average-case tractability for which the above is true.
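One way to see why domination transfers a good algorithm from $D_B$ to Y, written out as a short calculation. The set E of instances on which the algorithm for <B, $D_B$> fails is notation introduced here for illustration; it is not in the slides.

```latex
% E = set of instances y on which the algorithm for <B, D_B> fails (assumed notation)
\Pr[Y \in E] = \sum_{y \in E} \Pr[Y = y]
  \le \sum_{y \in E} \mathrm{poly}(n) \cdot \Pr[D_B = y]
  = \mathrm{poly}(n) \cdot \Pr[D_B \in E]
```

So the failure probability grows by at most a polynomial factor, and any notion of "good on average" that tolerates such a factor is preserved.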

Levin’s Completeness Result
Following the presentation of [Goldreich]
• If <BH, Uniform> is easy on average
• Then for every L in NP, every D computable distribution, <L, D> is easy on average
BH is non-deterministic Bounded Halting: given <M, x, $1^t$>, does M(x) accept within t steps?

Levin’s Completeness Result
BH, non-deterministic Bounded Halting: given <M, x, $1^t$>, does M(x) accept within t steps?
Suppose we have a good-on-average algorithm A
Want to solve <L, D>, where L is solvable by NDTM M
First try: x -> <M, x, $1^{\mathrm{poly}(n)}$>

Levin’s Completeness Result
First try: x -> <M, x, $1^{\mathrm{poly}(n)}$>
Doesn’t work: x may have an arbitrary distribution, and we need the target string to be nearly uniform (high entropy)
Second try: x -> <M’, C(x), $1^{\mathrm{poly}(n)}$>
Where C() is a near-optimal compression algorithm, and M’ recovers x from C(x), then runs M

Levin’s Completeness Result
Second try: x -> <M’, C(x), $1^{\mathrm{poly}(n)}$>
Where C() is a near-optimal compression algorithm, and M’ recovers x from C(x), then runs M
Works! Provided C(x) has length at most $O(\log n) + \log (1/\Pr_D[x])$
Possible if the cumulative distribution function of D is computable.
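How a computable cumulative distribution function yields such a compression can be sketched with a toy interval code in Python: x is encoded by the first few bits of the midpoint of its interval [F(x) - Pr[x], F(x)), which takes at most log(1/Pr[x]) + 2 bits. This is a simplified stand-in (in the style of Shannon-Fano-Elias coding) and not necessarily the exact encoding in Levin's proof; the explicit probability table and all function names are assumptions made for illustration.

```python
from fractions import Fraction
from math import floor

def build_table(dist):
    """dist: list of (x, Pr[x]) in a fixed order, probabilities as Fractions.
    Returns {x: (F(x) - Pr[x], Pr[x])}, i.e. the left end of x's interval."""
    table, running = {}, Fraction(0)
    for x, p in dist:
        table[x] = (running, p)
        running += p
    return table

def compress(x, table):
    """Encode x as the first `length` bits of the midpoint of its interval."""
    lo, p = table[x]
    length = 1
    while Fraction(1, 2 ** length) > p / 2:  # smallest length with 2^-length <= p/2
        length += 1
    mid = lo + p / 2
    return format(floor(mid * 2 ** length), "0{}b".format(length))

def decompress(code, table):
    """Role of M': find the x whose interval contains the binary fraction 0.code."""
    value = Fraction(int(code, 2), 2 ** len(code))
    for x, (lo, p) in table.items():
        if lo <= value < lo + p:
            return x
    raise ValueError("not a valid codeword")
```

Truncating the midpoint to `length` bits moves it down by less than Pr[x]/2, so the decoded value stays inside x's interval and `decompress(compress(x, table), table)` returns x. Likely inputs get short codewords, which is what makes the compressed instance close to uniform.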

Impagliazzo-Levin
Do the same but for all samplable distributions
A samplable distribution is not necessarily efficiently compressible in the coding-theory sense (e.g. the output of a PRG)
Hashing provides “non-constructive” compression

Complete Problems
• BH with Uniform distribution
• Tiling problem with Uniform distribution [Levin]
• Generalized edge-coloring [Venkatesan-Levin]
• Matrix representability [Venkatesan-Rajagopalan]
• Matrix transformation [Gurevich]
• . . .

Open Question 3
L in NP, M NDTM for L is specified by k bits
Levin’s reduction incurs a $2^k$ blow-up in the fraction of “problematic” inputs (comparable to having a $2^k$ slowdown)
Limited to problems having a non-deterministic algorithm of 5 bytes
Inherent?

More Reductions?
Still relatively few complete problems
Similar to the study of inapproximability before Papadimitriou-Yannakakis and PCP
Would be good, as in Papadimitriou-Yannakakis, to find reductions between problems that are not known to be complete but are plausibly hard

Open Question 4 (Heard from Russell Impagliazzo)
Prove that if 3SAT is hard on instances with n variables and 10n clauses, then it is also hard on instances with 12n clauses

See
• http://www.cs.berkeley.edu/~luca/average [slides, references, addendum to Bogdanov-T, coming soon]
• http://www.cs.uml.edu/~wang/acc-forum/ [average-case complexity forum]
• Impagliazzo, A personal view of average-case complexity, Structures ’95
• Goldreich, Notes on Levin’s theory of average-case complexity, ECCC TR-97-56
• Bogdanov-T., Average case complexity, F&TTCS 2(1) (2006)
