Automatically Linking Registered Clinical Trials to their Published Results with Deep Highway Networks
Standards and models for clinical trial, mobile health, and population data (S24)
Travis R. Goodwin (Presenter), Ph.D., Michael A. Skinner, MD, and Sanda M. Harabagiu, Ph.D.
The University of Texas at Dallas
Twitter: #TBICRI18

Disclosure
All authors and their spouses/partners have no relevant relationships with commercial interests to disclose.
AMIA 2018 Informatics Summit | amia.org

Learning Objectives
After participating in this session the learner should be better able to:
• automatically link clinical trials to publications reporting their results
• design and implement a Deep Highway Network
• extract features characterizing the relationship between a registered clinical trial and a published article
• design a custom, offline index of MEDLINE

Presentation Outline
1. Introduction
2. Methods
3. Experiments
4. Discussion
5. Conclusions

Introduction: History
• In 1997, Congress mandated the development of the online trial registry ClinicalTrials.gov
  • provide more convenient access to clinical trials for persons with serious medical conditions
  • make the results of clinical trials more available to health care providers
• In 2004, the International Committee of Medical Journal Editors (ICMJE) mandated the registration of trials before considering publication of trial results (De Angelis et al., 2004)
• In 2007, Congress's mandate was expanded to require the timely inclusion of clinical trial results within the registry for all sponsors of non-phase-1 human trials seeking FDA approval for a new device or drug (Congress, 2007)

Introduction: The Problem
Despite the numerous policies intended to improve the timely accessibility of clinical trial results to clinicians, several barriers still hinder effective use of these important data:
• only 13.4% of the trials reported summary results within 12 months of study completion (Anderson et al., 2015)
• only 38.3% of the registered studies reported any results at any time (Anderson et al., 2015)
• once trial results are published in peer-reviewed literature, the article citation is provided to the ClinicalTrials.gov registry in only about 23%-31% of cases (Ross et al., 2009; Huser and Cimino, 2013)
• when registered trials with no reported publications were manually reviewed, investigators were able to find relevant MEDLINE articles for 31%-45% of reviewed clinical trials (Ross et al., 2009; Huser and Cimino, 2013)
• despite the ICMJE recommendation, only about 7% of articles presenting trial results include a specific citation of the trial registry number (Huser and Cimino, 2013)
  • this hinders simple retrieval of the article with a MEDLINE search

Introduction: The Problem II
Bashir et al. (2017) conducted a systematic review of studies examining links between registered clinical trials and the publications reporting their results:
• 83% of studies required some level of manual (i.e., human) analysis
  • 19% involved strictly manual analyses, 64% involved both manual and automatic analyses, and 17% involved strictly automatic analyses
• the number of articles amenable to being automatically linked to the clinical trials they report has not increased over time
• automatic methods were only able to identify a median of 23% of articles reporting the results of registered trials
• identifying publications reporting the results of a clinical trial remains an arduous, manual task
Clearly, there is a need for the creation of robust methods to automatically link clinical trials with their results in the medical literature!

Introduction: The Approach
We present NCT Link, a system for automatically linking registered clinical trials to articles reporting their results.
Problem: It is difficult to define exact and complete criteria for determining whether a link exists between an article and a clinical trial
Solution: supervised deep learning
• incorporates state-of-the-art deep learning techniques through a specialized Deep Highway Network (DHN)
• determines the likelihood that a link exists between an article and a clinical trial by considering a variety of information (i.e., features) about the article, the trial, and the relationships (if any) between them
Our experiments demonstrate that NCT Link provides a 30%-58% improvement over the automatic methods surveyed in Bashir et al. (2017)

Introduction: The Applications
NCT Link has potential applications for:
• health care providers seeking to obtain timely access to the publications reporting the results of clinical trials
• researchers investigating selective publication and reporting of clinical trial outcomes
• study designers aiming to avoid unnecessary duplication of research efforts

Methods: What is a link?
In previous studies examining links between registered clinical trials and published articles, investigators have described different ways that a published article may be considered linked to a clinical trial.
In this work, we focus exclusively on one type of link: articles which report the results of a clinical trial
• we consider a publication to be linked to a clinical trial if and only if it reports the results of the trial
• as in Huser and Cimino (2013), we only consider links between clinical trials registered to ClinicalTrials.gov and published articles indexed by MEDLINE

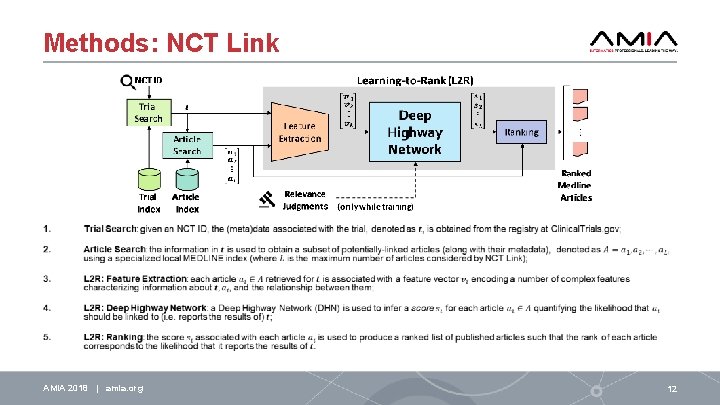

Methods: NCT Link

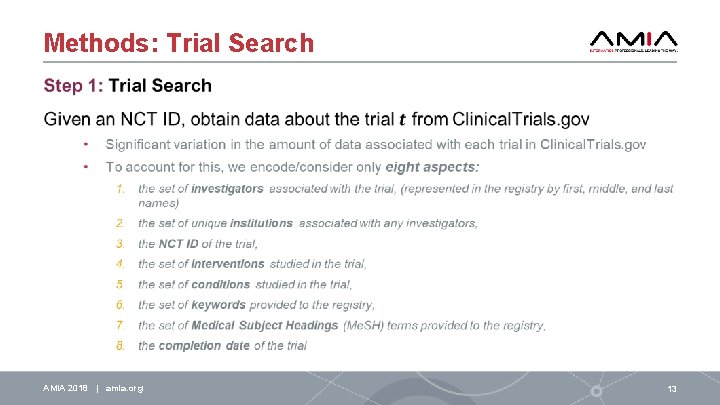

Methods: Trial Search

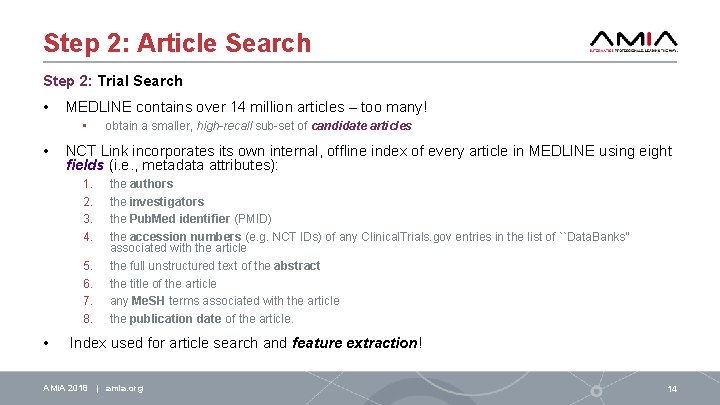

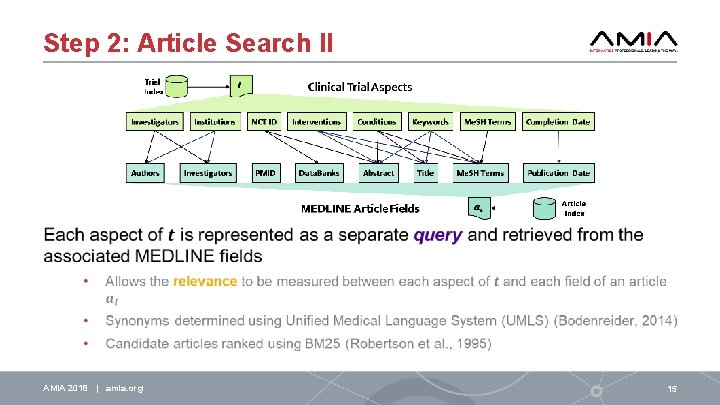

Step 2: Article Search
• MEDLINE contains over 14 million articles, too many!
  • obtain a smaller, high-recall sub-set of candidate articles
• NCT Link incorporates its own internal, offline index of every article in MEDLINE using eight fields (i.e., metadata attributes):
  1. the authors
  2. the investigators
  3. the PubMed identifier (PMID)
  4. the accession numbers (e.g., NCT IDs) of any ClinicalTrials.gov entries in the list of "DataBanks" associated with the article
  5. the full unstructured text of the abstract
  6. the title of the article
  7. any MeSH terms associated with the article
  8. the publication date of the article
Index used for article search and feature extraction!
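
The eight-field index described above could be sketched roughly as follows. This is a minimal in-memory illustration, not NCT Link's actual index: the `ArticleRecord` class, its field names, and the `OfflineIndex` helper are all hypothetical, and a real system would presumably rely on a full-text search engine rather than Python dictionaries.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class ArticleRecord:
    """One MEDLINE article with the eight indexed fields (names are hypothetical)."""
    pmid: str
    title: str
    abstract: str
    authors: List[str] = field(default_factory=list)
    investigators: List[str] = field(default_factory=list)
    accession_numbers: List[str] = field(default_factory=list)  # e.g. NCT IDs
    mesh_terms: List[str] = field(default_factory=list)
    publication_date: str = ""  # kept as a raw string in this sketch


class OfflineIndex:
    """Toy inverted index over title/abstract words and accession numbers."""

    def __init__(self) -> None:
        self.records: Dict[str, ArticleRecord] = {}
        self.by_word: Dict[str, Set[str]] = defaultdict(set)
        self.by_accession: Dict[str, Set[str]] = defaultdict(set)

    def add(self, record: ArticleRecord) -> None:
        self.records[record.pmid] = record
        for word in (record.title + " " + record.abstract).lower().split():
            self.by_word[word.strip(".,;:()")].add(record.pmid)
        for accession in record.accession_numbers:
            self.by_accession[accession.upper()].add(record.pmid)

    def candidates(self, nct_id: str, keywords: List[str]) -> Set[str]:
        """High-recall search: union of accession matches and any keyword match."""
        found = set(self.by_accession.get(nct_id.upper(), set()))
        for keyword in keywords:
            found |= self.by_word.get(keyword.lower(), set())
        return found
```

Taking the union of matches (rather than the intersection) favors recall over precision, which fits the stated goal of producing a smaller but high-recall candidate set for later scoring.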

Step 2: Article Search II

Step 3: Feature Extraction

Step 3: Feature Extraction II

Step 4: Deep Highway Network (DHN)
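
Only the title of this slide survives, so the following is a generic sketch of a highway layer in its standard formulation, y = T(x)·H(x) + (1 − T(x))·x, and not NCT Link's actual architecture. The tanh transform, the layer width, and every weight value are assumptions made purely for illustration.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
# One layer's parameters: (transform weights, transform bias, gate weights, gate bias)
LayerParams = Tuple[Matrix, Vector, Matrix, Vector]


def affine(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """Compute W x + b."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def highway_layer(x: Vector, params: LayerParams) -> Vector:
    """y = T(x) * H(x) + (1 - T(x)) * x, computed element-wise."""
    w_h, b_h, w_t, b_t = params
    h = [math.tanh(z) for z in affine(w_h, b_h, x)]  # candidate transform H(x)
    t = [sigmoid(z) for z in affine(w_t, b_t, x)]    # transform gate T(x)
    return [ti * hi + (1.0 - ti) * xi for ti, hi, xi in zip(t, h, x)]


def deep_highway(x: Vector, layers: List[LayerParams]) -> Vector:
    """Stack several highway layers; input and output widths stay equal."""
    for params in layers:
        x = highway_layer(x, params)
    return x
```

When the gate bias is strongly negative, each layer passes its input through almost unchanged, which is the property that makes deep stacks of such layers easier to train than plain feed-forward layers.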

Step 5: Ranking

Experiments: Relevance Judgments
ClinicalTrials.gov background:
• Each clinical trial in ClinicalTrials.gov was manually registered by a Study Record Manager (SRM)
• Trials may be associated with two types of publications corresponding to distinct fields in the registry:
  1. related articles: articles the SRM deemed related to the trial (typically references)
  2. result articles: articles the SRM indicated as reporting the results of the trial
To evaluate NCT Link, we randomly selected 500 clinical trials, each associated with at least one result article in the registry
• standard 3:1:1 split for training, development, and testing
Relevance judgments for all 500 trials were automatically produced using the result articles encoded for each trial.
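
As a worked illustration of the 3:1:1 split, 500 trials divide into 300 training, 100 development, and 100 test trials. The sketch below assumes a seeded shuffle and placeholder trial identifiers; the slides do not say how the random selection or split was actually implemented.

```python
import random
from typing import List, Sequence, Tuple


def split_3_1_1(trial_ids: Sequence[str], seed: int = 0) -> Tuple[List[str], List[str], List[str]]:
    """Shuffle the trials and cut them into 3/5 train, 1/5 development, 1/5 test."""
    shuffled = list(trial_ids)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility (assumption)
    n_train = len(shuffled) * 3 // 5
    n_dev = len(shuffled) // 5
    train = shuffled[:n_train]
    dev = shuffled[n_train:n_train + n_dev]
    test = shuffled[n_train + n_dev:]
    return train, dev, test


trials = [f"trial-{i:03d}" for i in range(500)]  # placeholder identifiers
train, dev, test = split_3_1_1(trials)
print(len(train), len(dev), len(test))  # 300 100 100
```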

Experiments: Relevance Judgments II

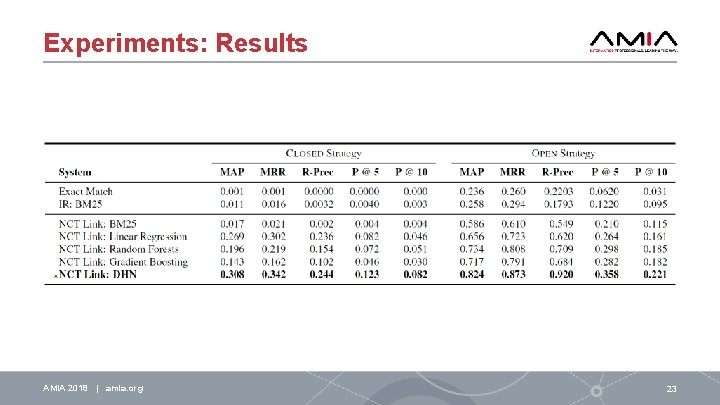

Experiments: Results

Discussion: Error Analysis
We manually analyzed the MEDLINE articles retrieved by NCT Link for 30 clinical trials in the test set and identified four main sources of error.
1. investigator and author names
  • clinical trials represented investigator names with three fields: first name, middle name, and last name
  • many journals in MEDLINE only report the authors' last names and the initials of first and sometimes middle names
  • the system incorrectly concluded that the investigator of a trial was the same as the author of a paper
    • common last names (Lin, Brown), common first initials (J, M, S, D), or missing middle initials
    • investigator missing or provided as sponsoring company
2. affiliations
  • the same institution was referenced in multiple ways
    • e.g., UCLA and University of California, Los Angeles
    • addresses were often specified with different levels of detail (street names, cities, states, country)
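
To illustrate the first error source, the sketch below reduces a three-field investigator name to the last-name-plus-initials form that many MEDLINE records use and then compares the two permissively. The function names and the example people are hypothetical, and NCT Link's actual name-matching features are not shown in these slides.

```python
from typing import Optional


def to_medline_style(first: str, middle: Optional[str], last: str) -> str:
    """Render a registry name as 'Last FM': last name followed by initials."""
    initials = first[:1].upper()
    if middle:
        initials += middle[:1].upper()
    return f"{last} {initials}"


def may_be_same_person(investigator: str, author: str) -> bool:
    """Permissive comparison: same last name and compatible initials."""
    inv_last, inv_initials = investigator.rsplit(" ", 1)
    auth_last, auth_initials = author.rsplit(" ", 1)
    if inv_last.lower() != auth_last.lower():
        return False
    # A missing middle initial on either side is treated as compatible.
    shorter, longer = sorted((inv_initials, auth_initials), key=len)
    return longer.startswith(shorter)


# Two different (made-up) people collapse to compatible strings.
investigator = to_medline_style("Jennifer", "Marie", "Brown")  # "Brown JM"
author = to_medline_style("James", None, "Brown")              # "Brown J"
print(may_be_same_person(investigator, author))  # True, a false positive
```

The false positive arises exactly as described in the error analysis: a common last name, a common first initial, and a missing middle initial leave nothing to tell the two people apart.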

Discussion: Error Analysis II
3. trial completion dates
  • some dates were given in the European fashion (day-month-year), while others used the American notation (month-day-year)
  • in some cases, only the month and the year were indicated (04 05 vs 05 04)
  • months were specified using digits (e.g., "01"), the full name (e.g., "January"), as well as a variety of abbreviations (e.g., "J", "Jan", and "Jan.")
  • years were specified in both two- and four-digit varieties (e.g., "07" and "2007")
4. incorrect data
  • result articles for a clinical trial were published before the trial's start date
    • in some cases, decades before
  • it is unclear whether incorrect citations were given, or whether there was confusion between the related articles and result articles fields in the registry
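
The date ambiguity above can be made concrete by enumerating every reading a date string admits. The sketch below is only an illustration: the list of candidate layouts is an assumed, non-exhaustive set, and month-name parsing with `strptime` depends on the process locale (English month names are assumed here).

```python
from datetime import datetime
from typing import List, Set, Tuple

# Candidate layouts (an assumed, non-exhaustive list).
FORMATS: List[str] = [
    "%d-%m-%Y",  # European: day-month-year
    "%m-%d-%Y",  # American: month-day-year
    "%m %y",     # month, then two-digit year
    "%y %m",     # two-digit year, then month
    "%B %Y",     # full month name and year
    "%b %Y",     # abbreviated month name and year
]


def possible_year_months(text: str) -> Set[Tuple[int, int]]:
    """Return every distinct (year, month) reading the string admits."""
    readings: Set[Tuple[int, int]] = set()
    for fmt in FORMATS:
        try:
            parsed = datetime.strptime(text.strip(), fmt)
        except ValueError:
            continue  # this layout does not fit the string
        readings.add((parsed.year, parsed.month))
    return readings


print(sorted(possible_year_months("04 05")))       # [(2004, 5), (2005, 4)]
print(sorted(possible_year_months("25-12-2007")))  # [(2007, 12)]
```

A string with more than one reading, such as "04 05", cannot be resolved from the text alone and would need other evidence, for example the trial's registered start date.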

Discussion: Limitations
• we only considered the clinical trials registered on ClinicalTrials.gov despite the availability of other registries
  • e.g., the World Health Organization (WHO) International Clinical Trials Registry Platform (ICTRP)
• we limited our system to articles indexed in MEDLINE and did not consider other databases
  • e.g., EMBASE or research conference proceedings
• because MEDLINE itself only provides abstracts, NCT Link did not have access to the full text of articles

Conclusions
• It is feasible to automatically infer links between registered clinical trials and MEDLINE articles
  • 30%-58% improvement over previous automatic efforts
• Learning-to-rank is able to infer better relevance criteria than standard IR approaches
• Deep learning (DHN) is able to learn useful feature combinations compared to standard ML methods
• Many opportunities for future work:
  • incorporating citation analyses to help resolve author ambiguities
  • geo-spatial reasoning about institutions
  • temporal expression normalization
  • considering other data in the registry/MEDLINE
  • considering full text for MEDLINE articles in the PubMed Open Access Subset

Acknowledgments
Research reported in this publication was supported by the National Human Genome Research Institute of the National Institutes of Health under award number 1U01HG008468. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Thank you!