Automatic Floating-Point to Fixed-Point Transformations

Automatic Floating-Point to Fixed-Point Transformations

Kyungtae Han, Alex G. Olson, Brian L. Evans

Dept. of Electrical and Computer Engineering, The University of Texas at Austin

2006 Asilomar Conference on Signals, Systems, and Computers, October 30th, 2006

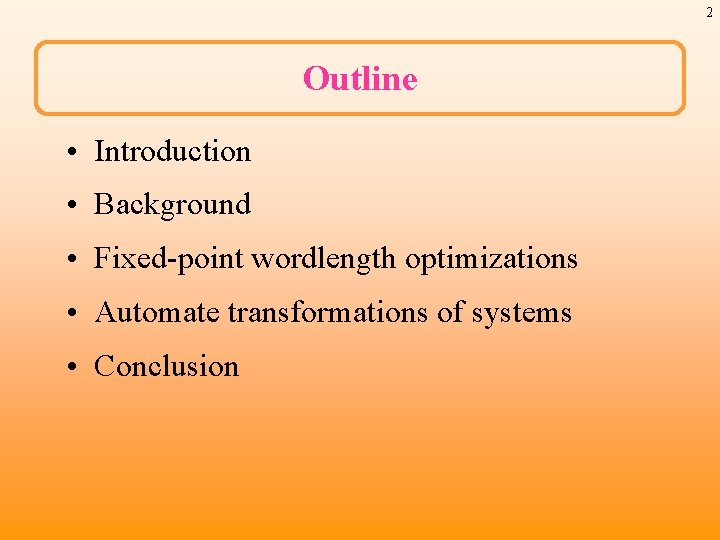

2 Outline

- Introduction
- Background
- Fixed-point wordlength optimizations
- Automating transformations of systems
- Conclusion

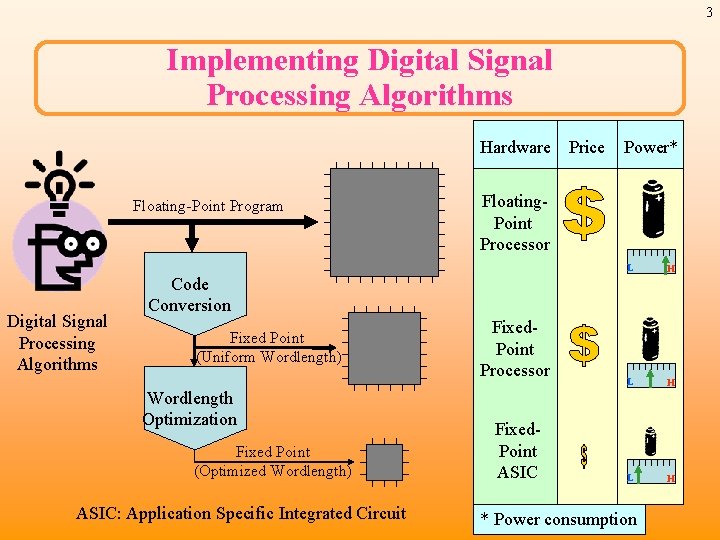

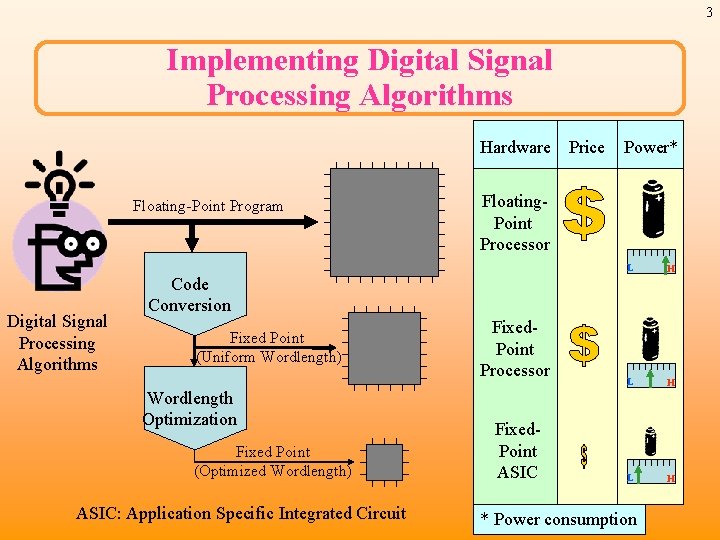

3 Implementing Digital Signal Processing Algorithms

[Figure: Digital signal processing algorithms are written as a floating-point program that runs on a floating-point processor. Code conversion yields a fixed-point program with uniform wordlength for a fixed-point processor; wordlength optimization yields a fixed-point program with optimized wordlength for a fixed-point ASIC. The three targets are compared by hardware price and power consumption, each ranging from low (L) to high (H).]

- ASIC: application-specific integrated circuit

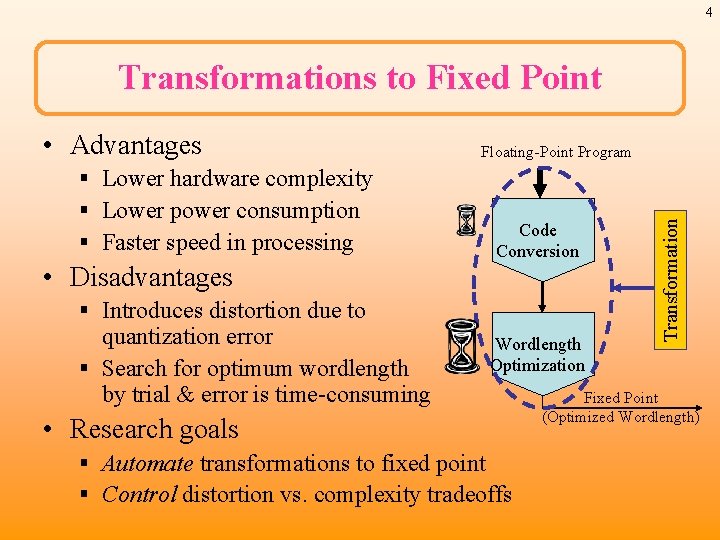

4 Transformations to Fixed Point

[Figure: floating-point program → code conversion → wordlength optimization → fixed point (optimized wordlength); the whole path is labeled "Transformation".]

- Advantages
  - Lower hardware complexity
  - Lower power consumption
  - Faster processing
- Disadvantages
  - Quantization error introduces distortion
  - Searching for the optimum wordlength by trial and error is time-consuming
- Research goals
  - Automate transformations to fixed point
  - Control distortion vs. complexity tradeoffs

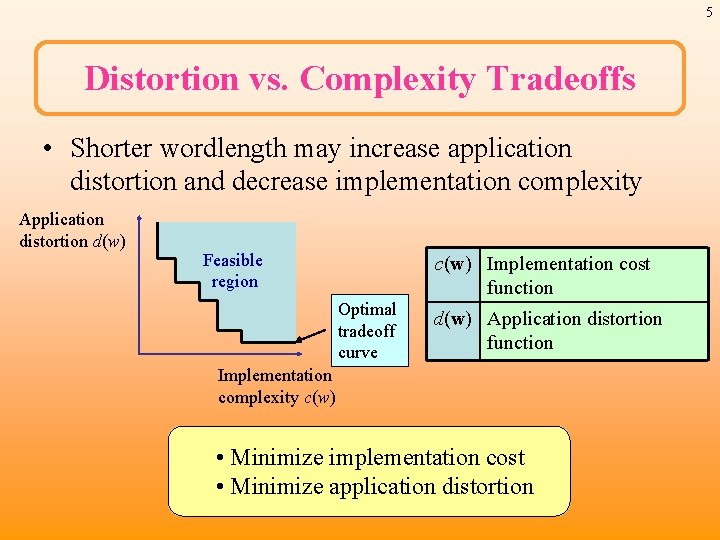

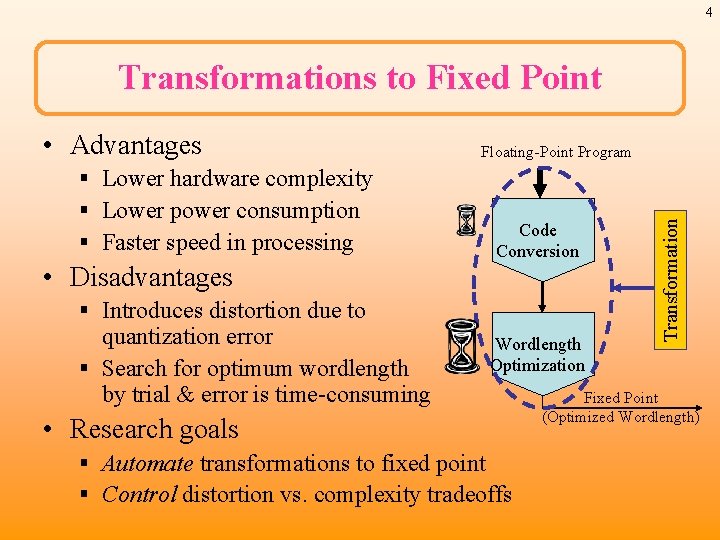

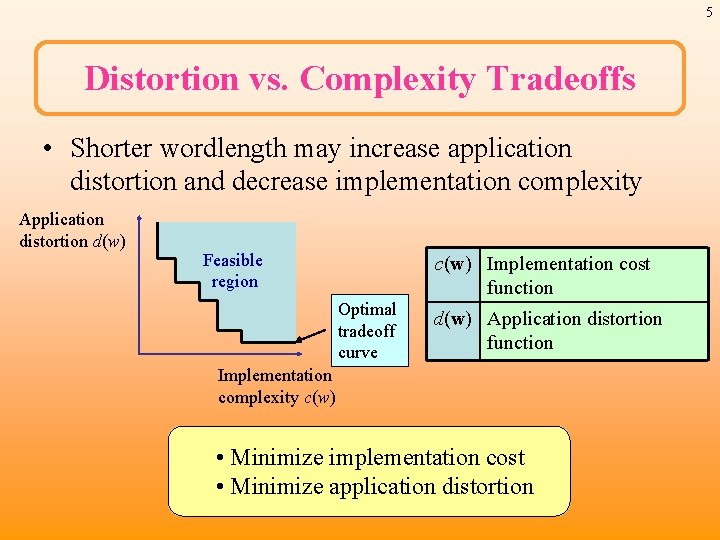

5 Distortion vs. Complexity Tradeoffs

- Shorter wordlengths may increase application distortion and decrease implementation complexity
- Minimize implementation cost
- Minimize application distortion

[Figure: application distortion d(w) plotted against implementation complexity c(w), showing the feasible region and the optimal tradeoff curve. c(w): implementation cost function; d(w): application distortion function.]

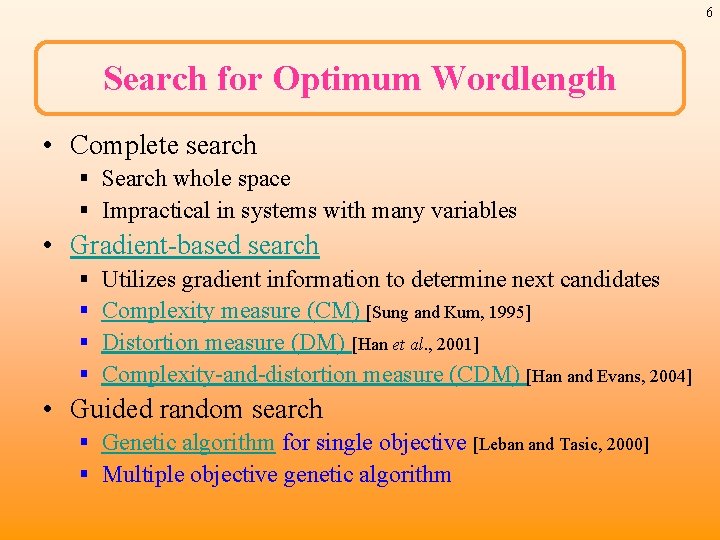

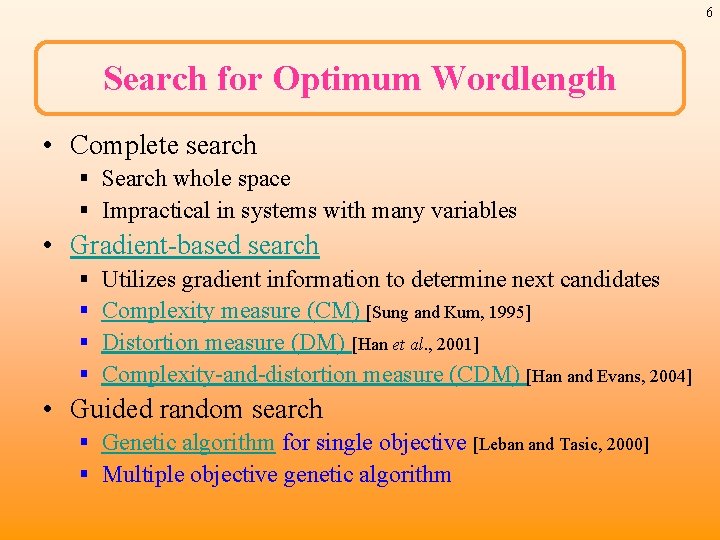

6 Search for Optimum Wordlength

- Complete search
  - Searches the whole space
  - Impractical in systems with many variables
- Gradient-based search
  - Uses gradient information to determine the next candidates
  - Complexity measure (CM) [Sung and Kum, 1995]
  - Distortion measure (DM) [Han et al., 2001]
  - Complexity-and-distortion measure (CDM) [Han and Evans, 2004]
- Guided random search
  - Genetic algorithm for a single objective [Leban and Tasic, 2000]
  - Multiple-objective genetic algorithm
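The cost of a complete search is easy to quantify. The sketch below assumes every variable independently takes one of the same number of candidate wordlengths, so the search space has `choices ** num_vars` points. With the IIR case study's seven variables and 16^7 combinations (slide 10) at an assumed 1 second per simulation, it reproduces the 8.5-year estimate.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def complete_search_cost(choices: int, num_vars: int, seconds_per_sim: float = 1.0):
    """Simulations and wall-clock years needed to try every wordlength combination."""
    simulations = choices ** num_vars     # every variable varies independently
    years = simulations * seconds_per_sim / SECONDS_PER_YEAR
    return simulations, years


if __name__ == "__main__":
    sims, years = complete_search_cost(16, 7)
    print(f"{sims:,} simulations, about {years:.1f} years")
    # 268,435,456 simulations, about 8.5 years
```

Each additional variable multiplies the cost by the number of candidate wordlengths, which is why the gradient-based and genetic searches below evaluate only a few hundred or a few thousand candidates.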

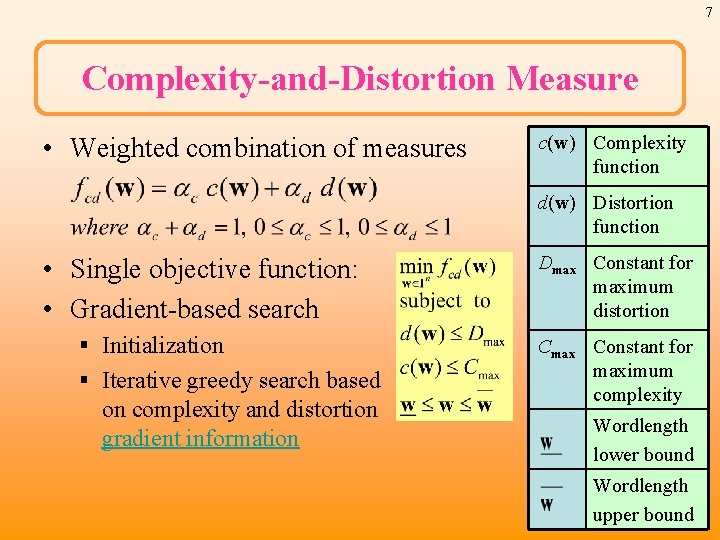

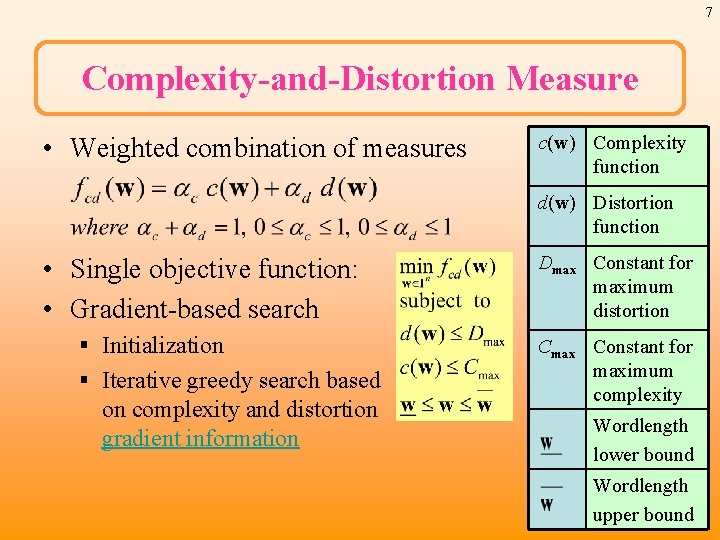

7 Complexity-and-Distortion Measure

- Weighted combination of the complexity and distortion measures into a single objective function of c(w) and d(w)
- Gradient-based search
  - Initialization
  - Iterative greedy search based on complexity and distortion gradient information

where

- c(w): complexity function
- d(w): distortion function
- Dmax: constant for maximum distortion
- Cmax: constant for maximum complexity
- Each wordlength is limited by a lower bound and an upper bound
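The exact CDM objective is not preserved in this transcript, so the sketch below rests on an assumption: it normalizes complexity by Cmax and distortion by Dmax and weights them with a hypothetical parameter `alpha`. The greedy loop starts every variable at its lower bound and, while the distortion constraint d(w) ≤ Dmax is violated, adds one bit to the variable whose increase gives the lowest weighted objective. The cost models `complexity` (total bits) and `distortion` (RMS of independent rounding-noise sources, each with variance 4^-w / 12) are hypothetical stand-ins for the FPGA area model and simulated RMS error.

```python
import math
from typing import Callable, List, Sequence

Cost = Callable[[Sequence[int]], float]


def complexity(w: Sequence[int]) -> float:
    """Hypothetical complexity model: total number of bits."""
    return float(sum(w))


def distortion(w: Sequence[int]) -> float:
    """Hypothetical distortion model: RMS of independent rounding-noise sources,
    each uniform with step 2**-wi (variance 4**-wi / 12)."""
    return math.sqrt(sum(4.0 ** -wi / 12 for wi in w))


def cdm_objective(w, c: Cost, d: Cost, c_max: float, d_max: float, alpha: float) -> float:
    # ASSUMED form of the combined measure: weighted sum of normalized objectives.
    return alpha * c(w) / c_max + (1 - alpha) * d(w) / d_max


def greedy_search(c: Cost, d: Cost, w_min: Sequence[int], w_max: Sequence[int],
                  c_max: float, d_max: float, alpha: float = 0.5) -> List[int]:
    w = list(w_min)                       # initialization: all lower bounds
    while d(w) > d_max:                   # stop once the distortion constraint holds
        best, best_score = None, math.inf
        for i in range(len(w)):
            if w[i] >= w_max[i]:
                continue                  # variable i is already at its upper bound
            cand = w.copy()
            cand[i] += 1                  # try adding one bit to variable i
            score = cdm_objective(cand, c, d, c_max, d_max, alpha)
            if score < best_score:
                best, best_score = cand, score
        if best is None:
            raise RuntimeError("Dmax cannot be met within the wordlength bounds")
        w = best
    return w


if __name__ == "__main__":
    print(greedy_search(complexity, distortion, [2, 2, 2], [16, 16, 16],
                        c_max=48, d_max=1e-3))   # [9, 9, 9]
```

Each iteration evaluates at most one candidate per variable, so the number of evaluations grows roughly linearly with the number of variables and bits added, rather than exponentially as in a complete search; the price is that a greedy path can end in a local optimum.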

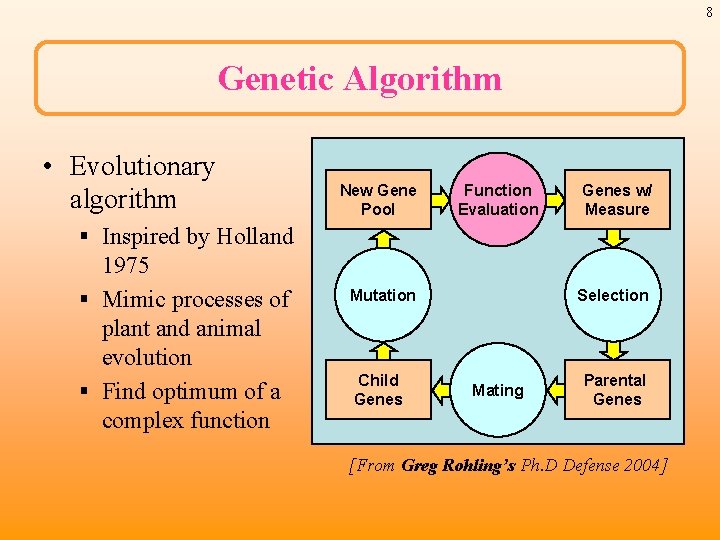

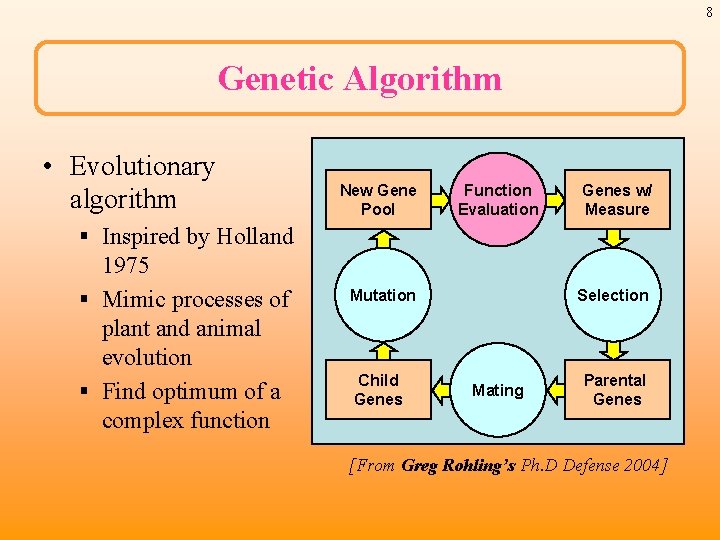

8 Genetic Algorithm

- Evolutionary algorithm
  - Inspired by Holland, 1975
  - Mimics processes of plant and animal evolution
  - Finds the optimum of a complex function

[Figure: GA cycle: new gene pool → function evaluation → genes with measure → selection → parental genes → mating → child genes → mutation → new gene pool. From Greg Rohling's Ph.D. defense, 2004.]
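A minimal single-objective sketch of the cycle in the figure, applied to wordlength vectors. The cost models (total bits; RMS of independent rounding-noise sources) are hypothetical, and so are the operator choices: a penalty for violating Dmax, binary tournament selection, one-point crossover for mating, ±1-bit mutation, and keeping the best of parents plus children as the new gene pool. A multiple-objective GA would rank genes by Pareto dominance instead of a scalar fitness.

```python
import math
import random
from typing import List

Genes = List[int]


def complexity(w: Genes) -> float:
    return float(sum(w))                  # hypothetical: total number of bits


def distortion(w: Genes) -> float:
    # hypothetical: RMS of independent rounding-noise sources
    return math.sqrt(sum(4.0 ** -wi / 12 for wi in w))


def fitness(w: Genes, d_max: float) -> float:
    """Lower is better; genes violating Dmax get a large penalty (assumed scheme)."""
    return complexity(w) + (0.0 if distortion(w) <= d_max else 1000.0)


def genetic_search(n_vars: int, w_lo: int, w_hi: int, d_max: float,
                   pop_size: int = 90, generations: int = 100,
                   p_mut: float = 0.1, seed: int = 0) -> Genes:
    if n_vars < 2:
        raise ValueError("one-point crossover needs at least two variables")
    rng = random.Random(seed)
    pop = [[rng.randint(w_lo, w_hi) for _ in range(n_vars)] for _ in range(pop_size)]

    def select() -> Genes:                # binary tournament selection
        a, b = rng.sample(pop, 2)
        return a if fitness(a, d_max) <= fitness(b, d_max) else b

    for _ in range(generations):
        children = []
        while len(children) < pop_size:
            p1, p2 = select(), select()   # parental genes
            cut = rng.randint(1, n_vars - 1)
            child = p1[:cut] + p2[cut:]   # mating: one-point crossover
            child = [min(w_hi, max(w_lo, g + rng.choice((-1, 1))))
                     if rng.random() < p_mut else g
                     for g in child]      # mutation: +/-1 bit, clamped to bounds
            children.append(child)
        # new gene pool: best pop_size genes among parents and children (elitist)
        pop = sorted(pop + children, key=lambda g: fitness(g, d_max))[:pop_size]
    return min(pop, key=lambda g: fitness(g, d_max))


if __name__ == "__main__":
    best = genetic_search(3, 2, 16, d_max=1e-3)
    print(best, complexity(best), distortion(best))
```

The default population of 90 matches the per-generation population quoted in the case studies; each generation costs `pop_size` new fitness evaluations, which is why the GA needs far more simulations than the greedy search.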

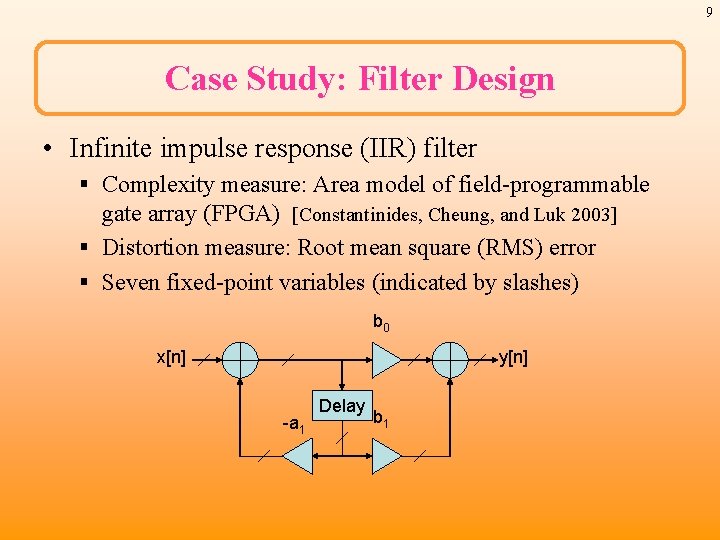

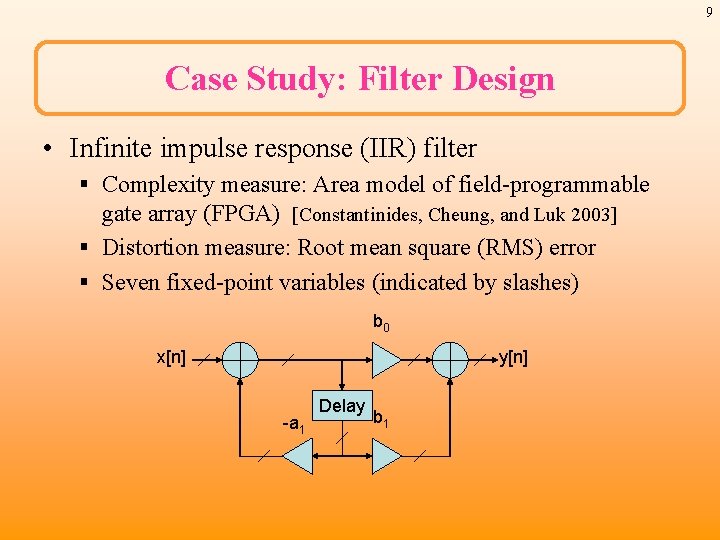

9 Case Study: Filter Design

- Infinite impulse response (IIR) filter
  - Complexity measure: area model of a field-programmable gate array (FPGA) [Constantinides, Cheung, and Luk, 2003]
  - Distortion measure: root mean square (RMS) error
  - Seven fixed-point variables (indicated by slashes in the diagram)

[Figure: first-order IIR filter with input x[n], output y[n], one delay element, and coefficients b0, b1, and -a1.]
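A sketch of how the RMS distortion measure could be obtained by simulation for a filter like the one in the figure. Because the diagram did not survive extraction, several details are assumptions: a first-order direct-form II structure (v[n] = x[n] - a1·v[n-1], y[n] = b0·v[n] + b1·v[n-1]), hypothetical coefficient values, round-to-nearest quantization with no overflow handling, and a single fractional wordlength shared by all variables instead of seven independent ones.

```python
import math
import random
from typing import List, Optional, Sequence


def quantize(x: float, fwl: int) -> float:
    """Round x to the nearest multiple of 2**-fwl (overflow is not modeled)."""
    step = 2.0 ** -fwl
    return round(x / step) * step


def iir1(x: Sequence[float], b0: float, b1: float, a1: float,
         fwl: Optional[int] = None) -> List[float]:
    """First-order IIR filter. With fwl given, coefficients and every intermediate
    result are quantized to fwl fractional bits; otherwise it runs in floating point."""
    q = (lambda v: v) if fwl is None else (lambda v: quantize(v, fwl))
    b0, b1, a1 = q(b0), q(b1), q(a1)
    state, y = 0.0, []                    # state holds v[n-1] (the delay element)
    for xn in x:
        v = q(q(xn) - q(a1 * state))      # v[n] = x[n] - a1*v[n-1]
        y.append(q(q(b0 * v) + q(b1 * state)))  # y[n] = b0*v[n] + b1*v[n-1]
        state = v
    return y


def rms_error(ref: Sequence[float], test: Sequence[float]) -> float:
    return math.sqrt(sum((r - t) ** 2 for r, t in zip(ref, test)) / len(ref))


if __name__ == "__main__":
    rng = random.Random(1)
    x = [rng.uniform(-0.5, 0.5) for _ in range(1000)]
    coeffs = dict(b0=0.2, b1=0.2, a1=-0.6)   # hypothetical, stable (pole at 0.6)
    ref = iir1(x, **coeffs)                  # floating-point reference
    for fwl in (4, 8, 12):
        print(fwl, rms_error(ref, iir1(x, **coeffs, fwl=fwl)))
```

In a wordlength search, each call to `rms_error` on a candidate is one simulation, so the number of simulations reported for each search method counts calls like this one.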

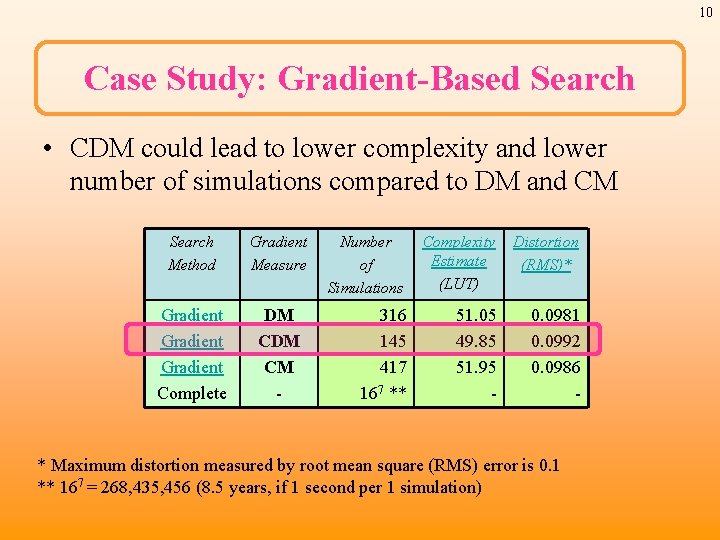

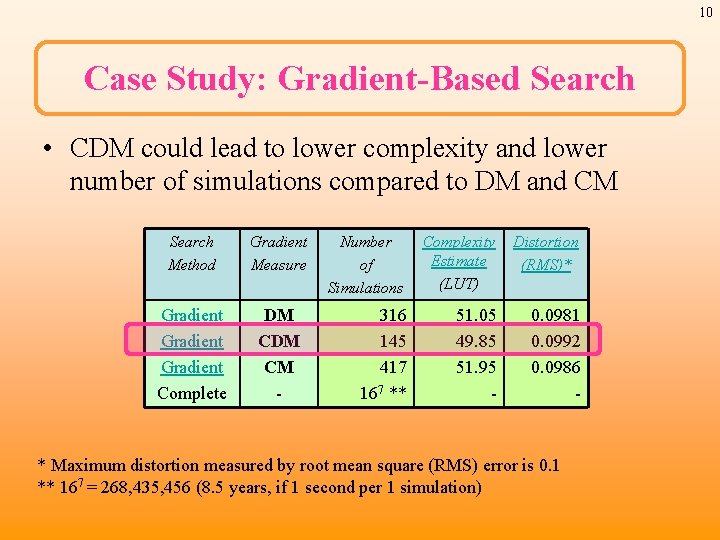

10 Case Study: Gradient-Based Search

- CDM can lead to lower complexity and fewer simulations than DM and CM

| Search method | Measure | Number of simulations | Complexity estimate (LUT) | Distortion (RMS)* |
|---|---|---|---|---|
| Gradient | DM | 316 | 51.05 | 0.0981 |
| Gradient | CDM | 145 | 49.85 | 0.0992 |
| Gradient | CM | 417 | 51.95 | 0.0986 |
| Complete | - | 16^7 ** | - | - |

\* Maximum distortion, measured by root mean square (RMS) error, is 0.1.

\*\* 16^7 = 268,435,456 simulations (8.5 years at 1 second per simulation).

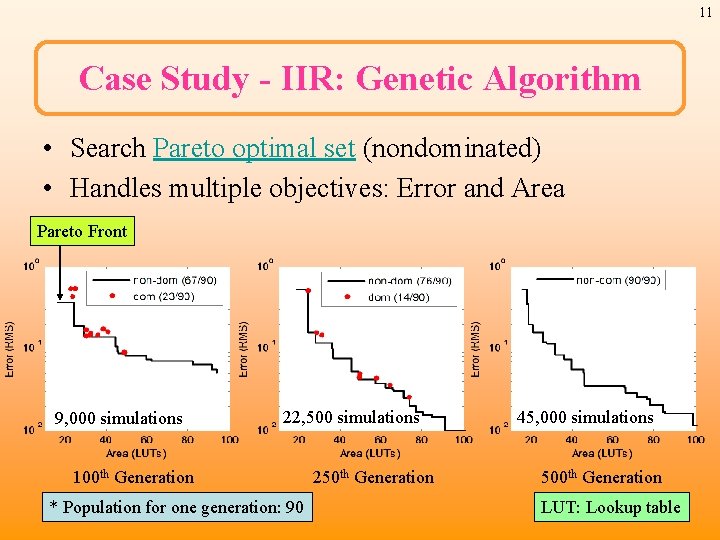

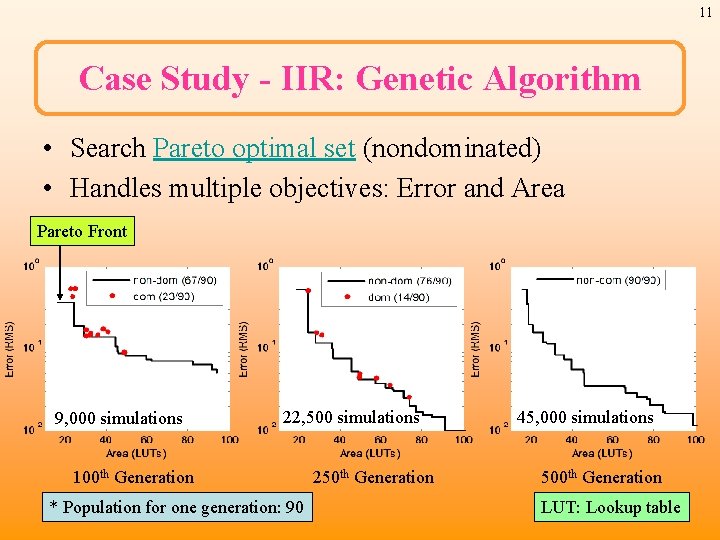

11 Case Study - IIR: Genetic Algorithm

- Searches for the Pareto optimal (nondominated) set
- Handles multiple objectives: error and area

[Figure: Pareto front after the 100th generation (9,000 simulations), the 250th generation (22,500 simulations), and the 500th generation (45,000 simulations). Population for one generation: 90. LUT: lookup table.]

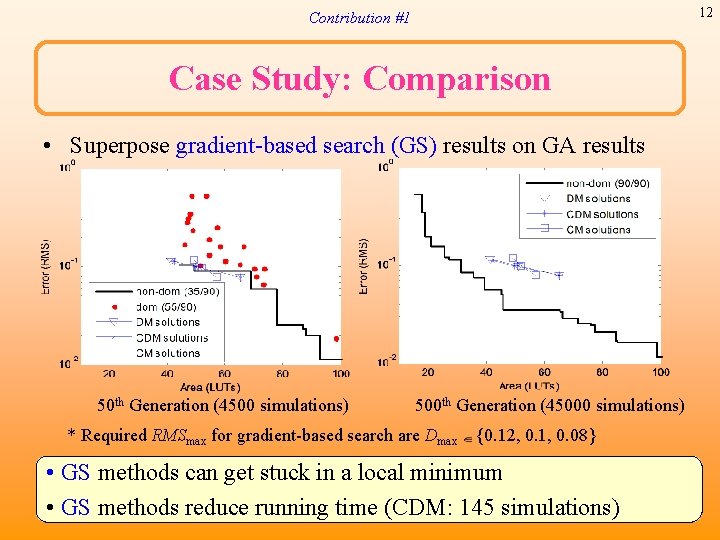

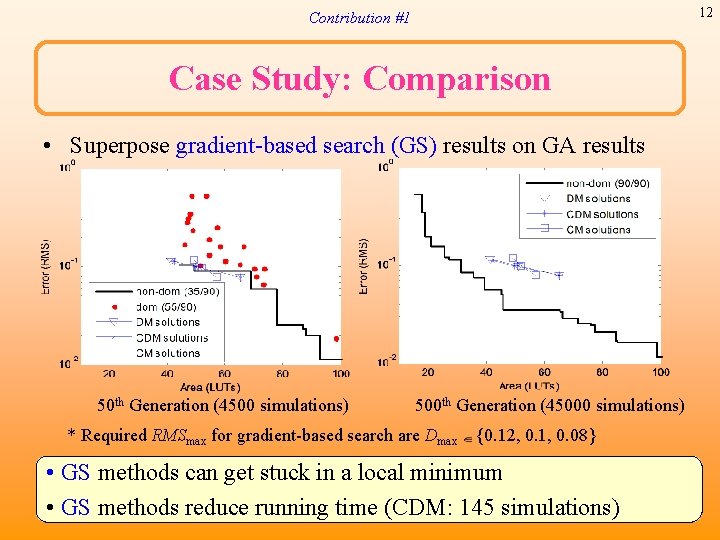

12 Case Study: Comparison (Contribution #1)

- Gradient-based search (GS) results superposed on GA results
  - [Figure panels: 50th generation (4,500 simulations); 500th generation (45,000 simulations)]
- GS methods can get stuck in a local minimum
- GS methods reduce running time (CDM: 145 simulations)

\* The gradient-based searches were run with maximum RMS error Dmax of 0.12, 0.1, and 0.08.

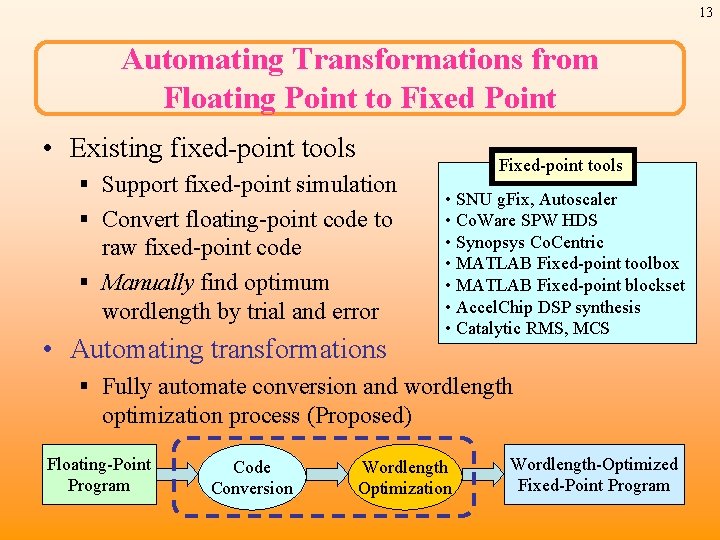

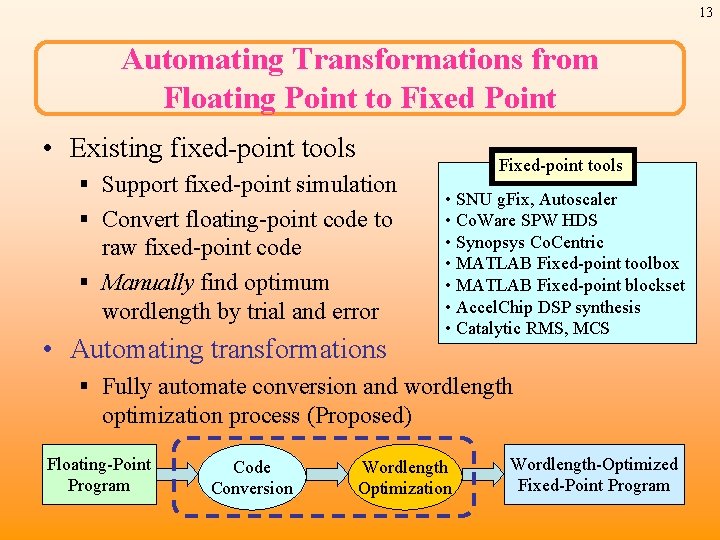

13 Automating Transformations from Floating Point to Fixed Point

- Existing fixed-point tools
  - Support fixed-point simulation
  - Convert floating-point code to raw fixed-point code
  - Leave the search for the optimum wordlength to manual trial and error
- Automating transformations (proposed)
  - Fully automate the conversion and wordlength optimization process: floating-point program → code conversion → wordlength optimization → wordlength-optimized fixed-point program

Fixed-point tools:

- SNU gFix, Autoscaler
- CoWare SPW HDS
- Synopsys CoCentric
- MATLAB Fixed-Point Toolbox
- MATLAB Fixed-Point Blockset
- AccelChip DSP Synthesis
- Catalytic RMS, MCS

![Slide 14: Code Generation for Fixed-Point Program](https://slidetodoc.com/presentation_image_h2/ad1cee5e618906f3db9dbeba336df897/image-14.jpg)

14 Code Generation for Fixed-Point Program

- Adder function in MATLAB

(a) Floating-point program for the adder

```matlab
function [c] = adder(a, b)
c = 0;
c = a + b;
```

(b) Raw fixed-point program; the type parameters are determined by designers through trial and error

```matlab
function [c] = adder_fx(a, b)
c = 0;
a = fi(a, 1, 32, 16);   % fi(value, S, WL, FWL): signed, 32-bit word, 16 fraction bits
b = fi(b, 1, 32, 16);
c = fi(c, 1, 32, 16);
c(:) = a + b;           % subscripted assignment keeps c's fixed-point type
```

(c) Converted fixed-point program for automating optimization (proposed)

```matlab
function [c] = adder_fx(a, b, numtype)
c = 0;
a = fi(a, numtype.a);   % numeric types are passed in by the caller
b = fi(b, numtype.b);
c = fi(c, numtype.c);
c(:) = a + b;
```

- fi(a, S, WL, FWL) is a constructor for a fixed-point object in the Fixed-Point Toolbox [S: signed, WL: wordlength, FWL: fraction length]
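The conversion from program (a) to program (c) is mechanical enough to automate. The toy Python generator below is a hypothetical sketch, not the released tool, and handles only this simple form: it reads the MATLAB function header, renames the function with an `_fx` suffix, adds a `numtype` argument, wraps every input and output in `fi(v, numtype.v)`, and rewrites assignments as subscripted assignments (`c(:) = ...`) so the result keeps `c`'s fixed-point type. A real converter would parse MATLAB properly instead of relying on regular expressions.

```python
import re

HEADER = re.compile(r"function\s*\[(?P<outs>[^\]]*)\]\s*=\s*(?P<name>\w+)\s*\((?P<ins>[^)]*)\)")
ZERO_INIT = re.compile(r"\w+\s*=\s*0;")


def split_names(text: str) -> list:
    return [v.strip() for v in text.split(",") if v.strip()]


def to_fixed_point(src: str) -> str:
    """Turn a tiny floating-point MATLAB function into a parameterized fixed-point one."""
    lines = [ln.strip() for ln in src.strip().splitlines() if ln.strip()]
    m = HEADER.fullmatch(lines[0])
    if m is None:
        raise ValueError("first line must look like 'function [outs] = name(ins)'")
    outs, ins = split_names(m["outs"]), split_names(m["ins"])
    body = lines[1:]
    inits = [ln for ln in body if ZERO_INIT.fullmatch(ln)]       # e.g. "c = 0;"
    others = [ln for ln in body if not ZERO_INIT.fullmatch(ln)]
    out = [f"function [{', '.join(outs)}] = {m['name']}_fx({', '.join(ins + ['numtype'])})"]
    out += inits
    out += [f"{v} = fi({v}, numtype.{v});" for v in ins + outs]   # cast every variable
    out += [re.sub(r"^(\w+)\s*=", r"\1(:) =", ln) for ln in others]
    return "\n".join(out)


if __name__ == "__main__":
    print(to_fixed_point("""
function [c] = adder(a, b)
c = 0;
c = a + b;
"""))
```

Because the wordlengths live in `numtype` rather than in literals, a search engine can call the generated function repeatedly with different numeric types, which is what makes program (c) suitable for automated optimization.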

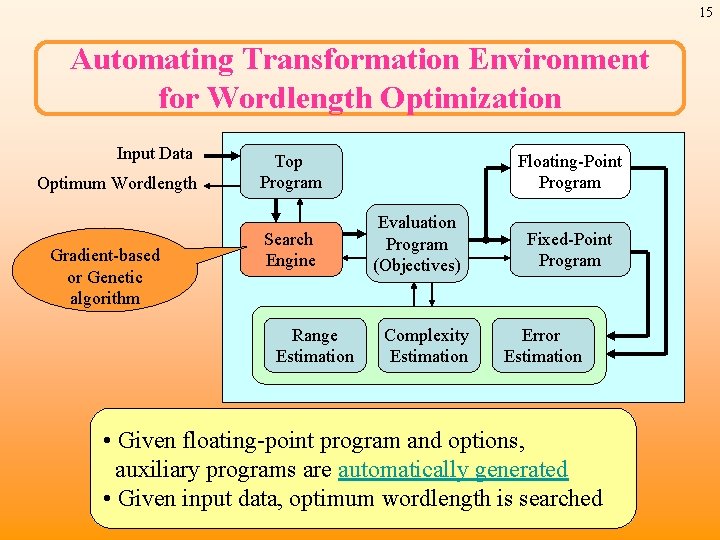

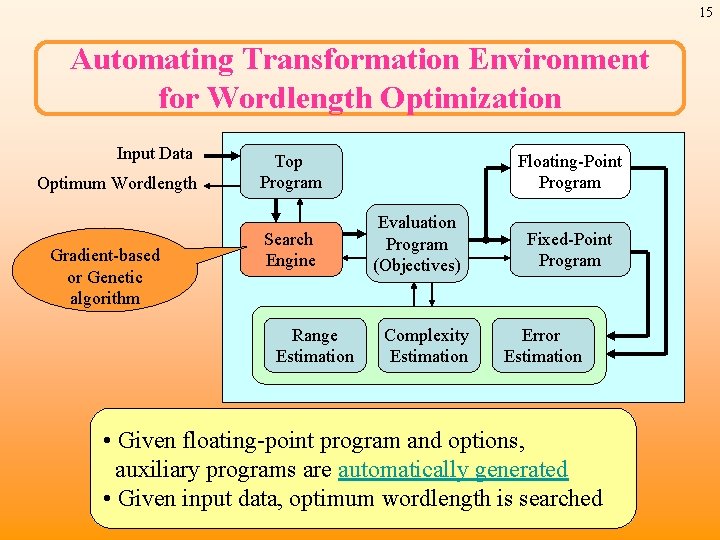

15 Automating Transformation Environment for Wordlength Optimization

[Figure: blocks labeled Input Data, Top Program, Search Engine (gradient-based or genetic algorithm), Evaluation Program (objectives), Range Estimation, Complexity Estimation, Error Estimation, Floating-Point Program, Fixed-Point Program, and Optimum Wordlength.]

- Given a floating-point program and options, auxiliary programs are generated automatically
- Given input data, the optimum wordlength is searched for

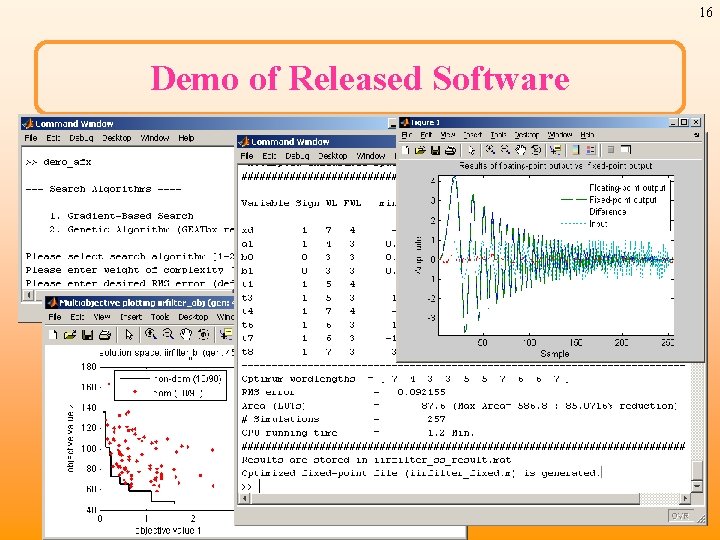

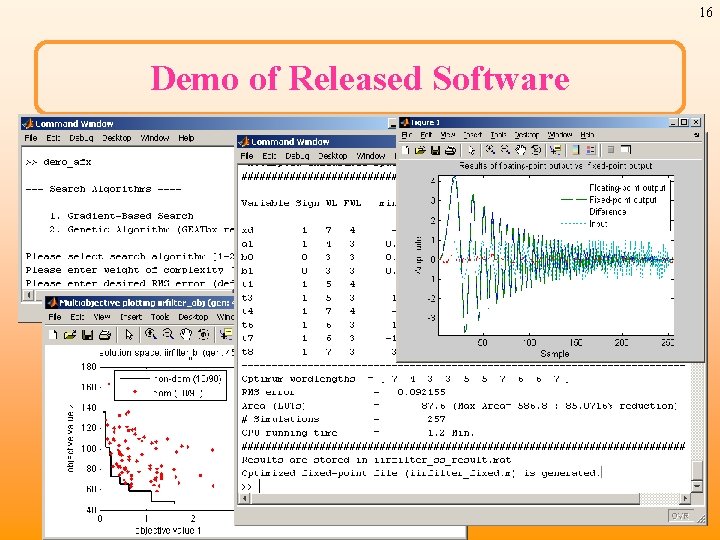

16 Demo of Released Software

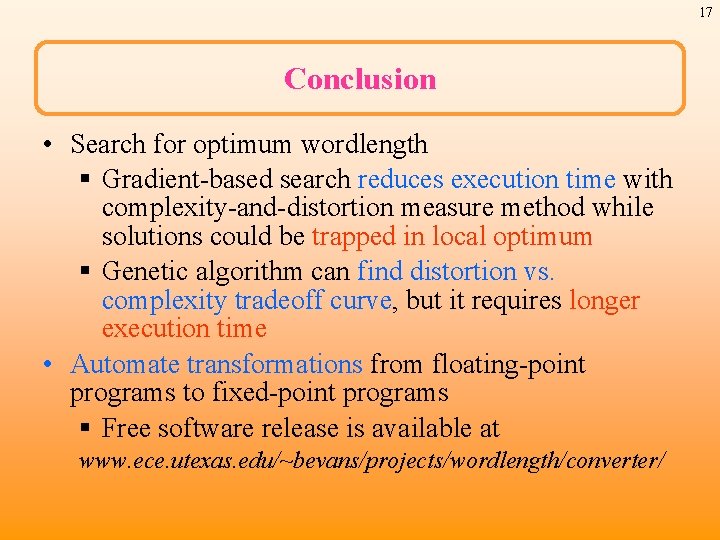

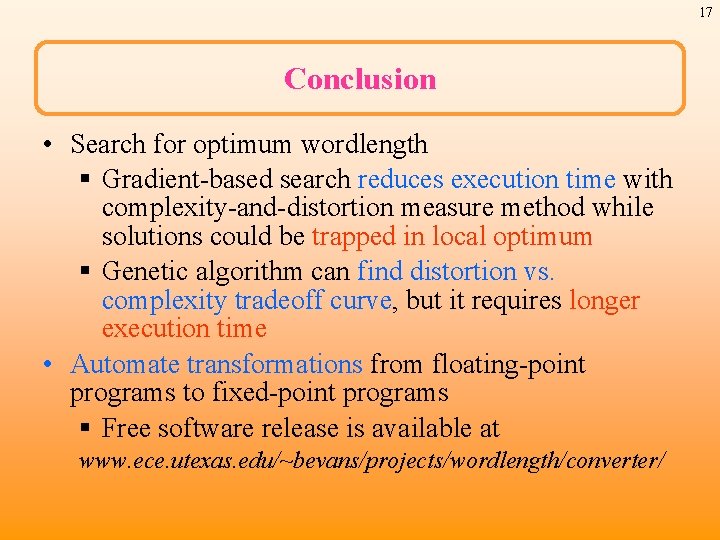

17 Conclusion

- Search for optimum wordlength
  - Gradient-based search with the complexity-and-distortion measure reduces execution time, but its solutions can be trapped in a local optimum
  - The genetic algorithm can find the distortion vs. complexity tradeoff curve, but requires a longer execution time
- Automate transformations from floating-point programs to fixed-point programs
  - A free software release is available at www.ece.utexas.edu/~bevans/projects/wordlength/converter/

18 End

19 Backup Slides

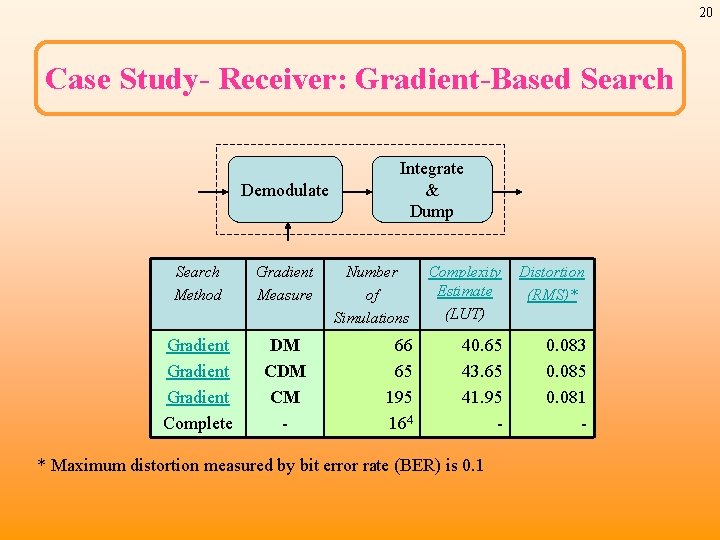

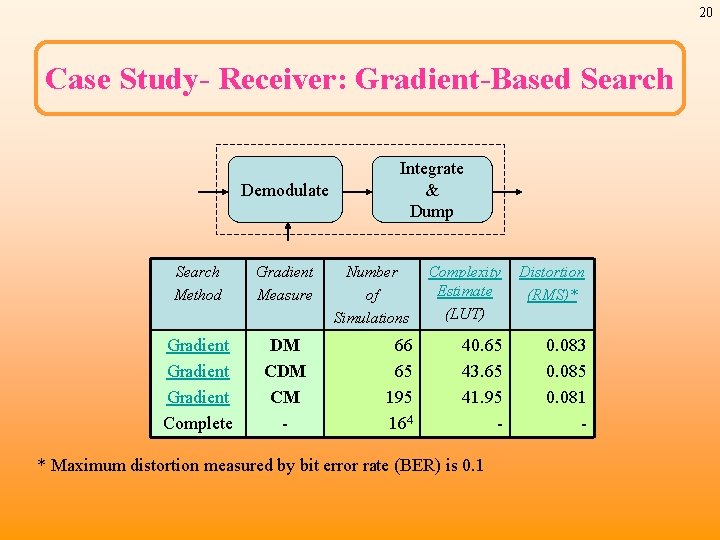

20 Case Study - Receiver: Gradient-Based Search

[Figure: receiver with demodulate and integrate-and-dump stages.]

| Search method | Measure | Number of simulations | Complexity estimate (LUT) | Distortion* |
|---|---|---|---|---|
| Gradient | DM | 66 | 40.65 | 0.083 |
| Gradient | CDM | 65 | 43.65 | 0.085 |
| Gradient | CM | 195 | 41.95 | 0.081 |
| Complete | - | 16^4 | - | - |

\* Maximum distortion, measured by bit error rate (BER), is 0.1.

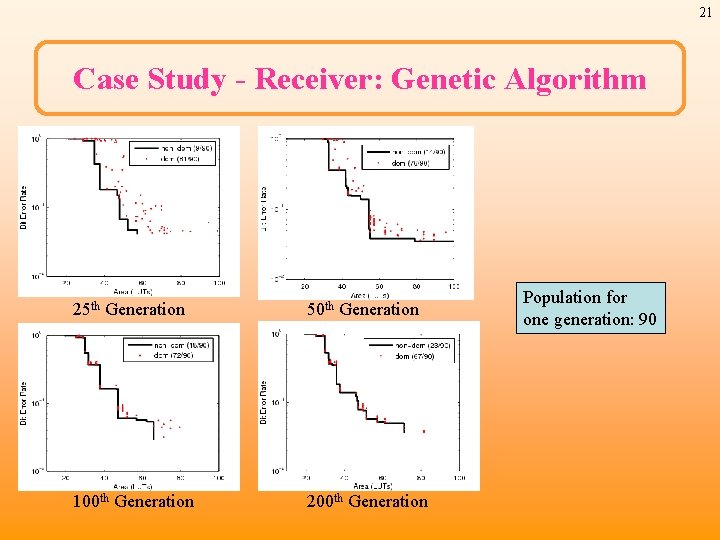

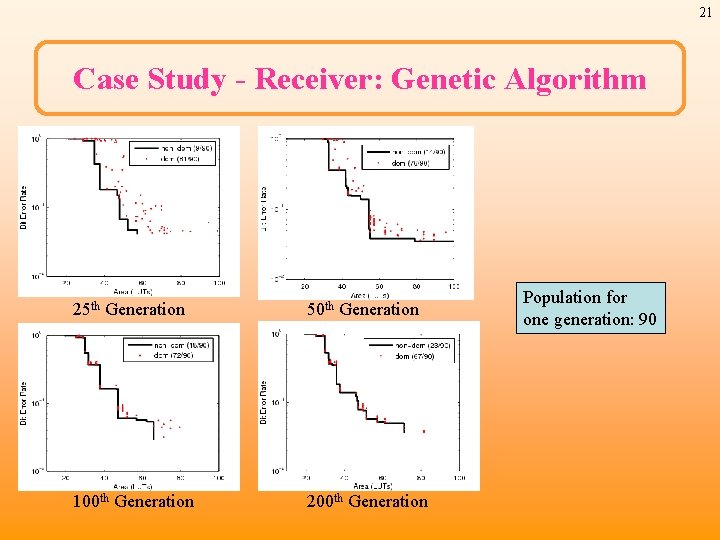

21 Case Study - Receiver: Genetic Algorithm

[Figure panels: 25th, 50th, 100th, and 200th generations. Population for one generation: 90.]

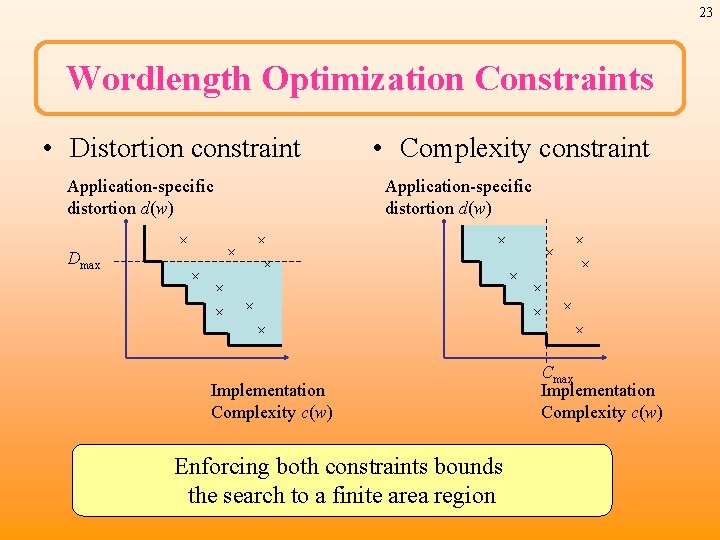

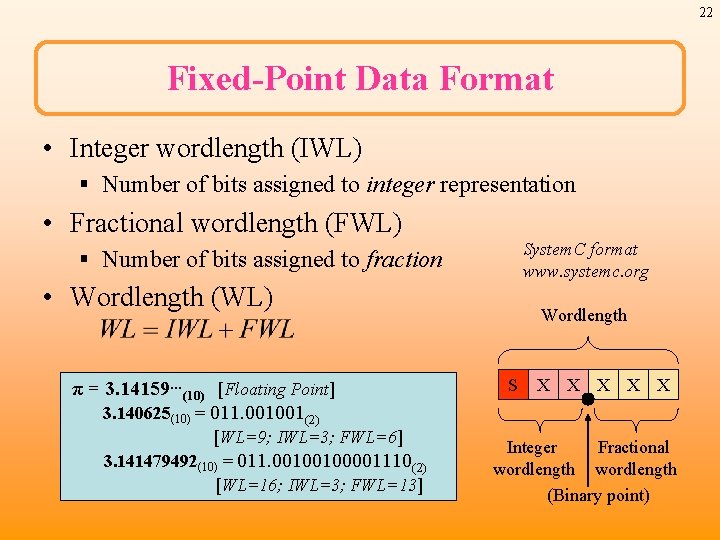

22 Fixed-Point Data Format

- Integer wordlength (IWL)
  - Number of bits assigned to the integer part, including the sign bit
- Fractional wordlength (FWL)
  - Number of bits assigned to the fraction
- Wordlength (WL) = IWL + FWL
- SystemC format (www.systemc.org)

[Figure: a word laid out as S X X X . X X ..., with the sign bit and integer bits forming the IWL, then the binary point, then the FWL fraction bits.]

Example:

- π = 3.14159...(10) [floating point]
- 3.140625(10) = 011.001001(2) [WL = 9; IWL = 3; FWL = 6]
- 3.141479492(10) = 011.0010010000111(2) [WL = 16; IWL = 3; FWL = 13]
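The two π entries can be checked with a short sketch. It assumes two's-complement encoding with the sign bit counted in the IWL, as in the S X X X layout, and truncation (rounding toward negative infinity), which is what reproduces 3.141479492 for FWL = 13; round-to-nearest would instead give 3.1416015625.

```python
import math
from typing import Tuple


def to_fixed(x: float, iwl: int, fwl: int) -> Tuple[float, str]:
    """Truncate x to two's-complement fixed point with iwl integer bits (sign bit
    included) and fwl fractional bits; return the value and its bit pattern."""
    wl = iwl + fwl
    code = math.floor(x * 2 ** fwl)                    # truncation toward -infinity
    lo, hi = -(1 << (wl - 1)), (1 << (wl - 1)) - 1
    if not lo <= code <= hi:
        raise OverflowError(f"{x} does not fit in WL={wl}, IWL={iwl}")
    bits = format(code & ((1 << wl) - 1), f"0{wl}b")   # two's-complement pattern
    return code / 2 ** fwl, f"{bits[:iwl]}.{bits[iwl:]}"


if __name__ == "__main__":
    for iwl, fwl in ((3, 6), (3, 13)):
        print(to_fixed(math.pi, iwl, fwl))
    # (3.140625, '011.001001')
    # (3.1414794921875, '011.0010010000111')
```

Adding seven fractional bits reduces the representation error from about 9.7e-4 to about 1.1e-4, illustrating the distortion vs. wordlength tradeoff the optimizer explores.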

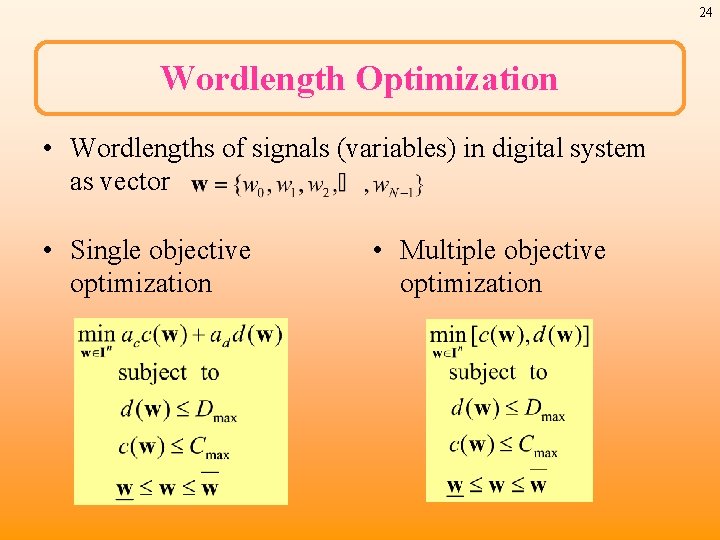

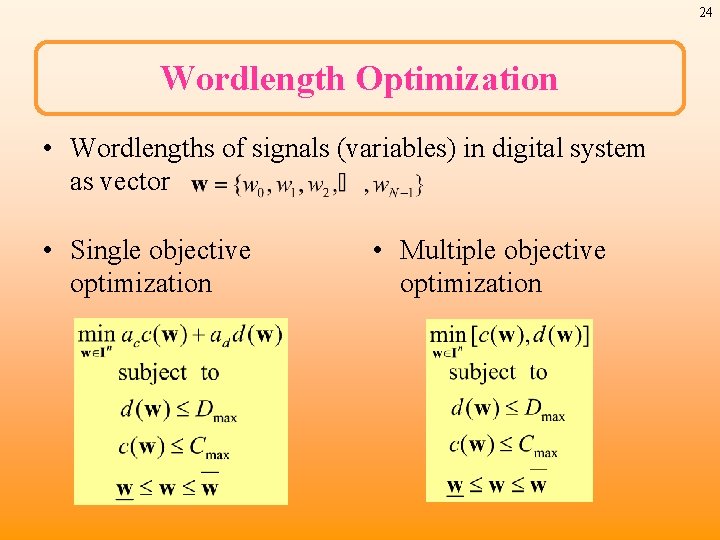

23 Wordlength Optimization Constraints

- Distortion constraint: d(w) ≤ Dmax, where d(w) is the application-specific distortion
- Complexity constraint: c(w) ≤ Cmax, where c(w) is the implementation complexity
- Enforcing both constraints bounds the search to a finite region

[Figure panels: application-specific distortion d(w) vs. implementation complexity c(w), bounded by Dmax in one panel and by Cmax in the other.]

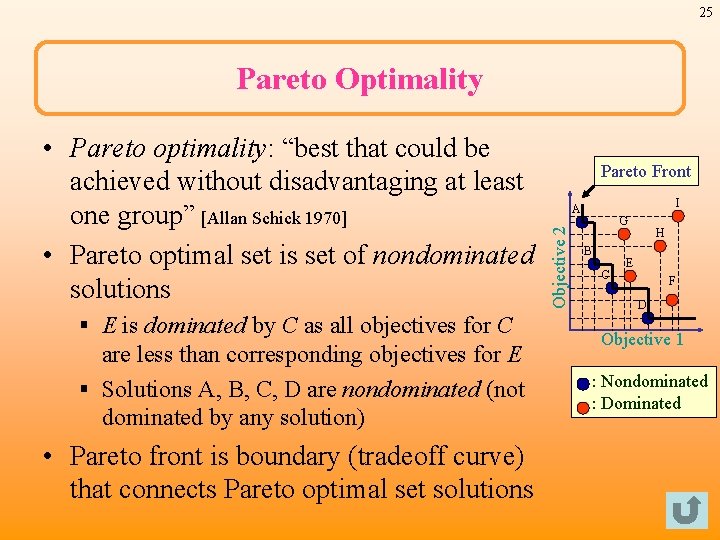

24 Wordlength Optimization

- The wordlengths of the signals (variables) in a digital system are collected into a vector w
- Single-objective optimization
- Multiple-objective optimization
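The formulas on this slide did not survive extraction. One standard way to write the two problems, assuming a vector of N wordlengths bounded elementwise by a lower bound w_L and an upper bound w_U, and the distortion bound Dmax used in the constraint slide, is:

$$
\mathbf{w} = [w_1, w_2, \ldots, w_N]^T, \qquad \mathbf{w}_L \le \mathbf{w} \le \mathbf{w}_U
$$

Single objective:

$$
\min_{\mathbf{w}} \; c(\mathbf{w}) \quad \text{subject to} \quad d(\mathbf{w}) \le D_{\max}
$$

Multiple objectives:

$$
\min_{\mathbf{w}} \; \big( c(\mathbf{w}),\; d(\mathbf{w}) \big)
$$

The single-objective form returns one point; the multiple-objective form has no single minimizer and instead yields the set of nondominated solutions.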

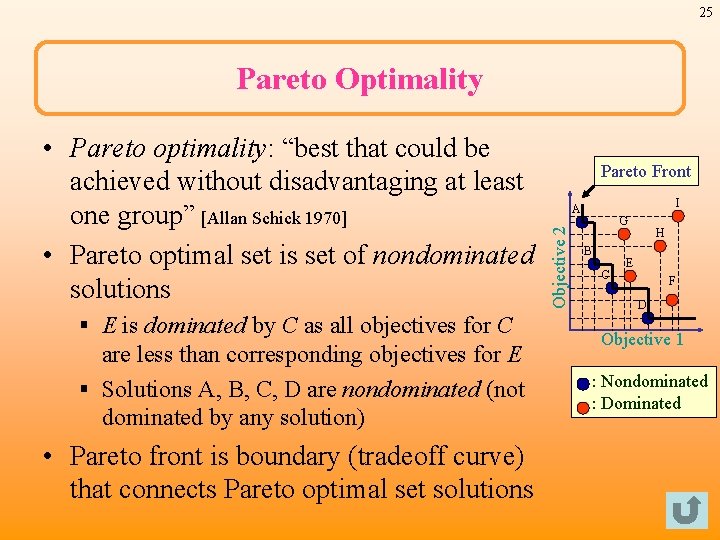

25 Pareto Optimality

- Pareto optimality: "best that could be achieved without disadvantaging at least one group" [Allan Schick, 1970]
- The Pareto optimal set is the set of nondominated solutions
  - E is dominated by C because every objective of C is less than the corresponding objective of E
  - Solutions A, B, C, and D are nondominated (not dominated by any other solution)
- The Pareto front is the boundary (tradeoff curve) that connects the solutions in the Pareto optimal set

[Figure: solutions A to I plotted against Objective 1 and Objective 2; A, B, C, and D are nondominated and lie on the Pareto front, while the remaining solutions are dominated.]
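A small sketch of the dominance test and a nondominated filter for two minimized objectives. It uses the common definition (no worse in every objective and strictly better in at least one), which is slightly weaker than the "every objective is less" wording above. The coordinates for A to I are hypothetical, chosen only so that A, B, C, and D come out nondominated as in the figure.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def dominates(p: Point, q: Point) -> bool:
    """True if p is no worse than q in every objective and strictly better in at
    least one (both objectives minimized)."""
    return all(a <= b for a, b in zip(p, q)) and any(a < b for a, b in zip(p, q))


def pareto_front(points: Dict[str, Point]) -> List[str]:
    """Names of nondominated points, ordered by the first objective."""
    front = [name for name, p in points.items()
             if not any(dominates(q, p) for other, q in points.items() if other != name)]
    return sorted(front, key=lambda name: points[name])


if __name__ == "__main__":
    # Hypothetical (objective 1, objective 2) coordinates for the figure's points.
    pts = {"A": (1, 9), "B": (2, 6), "C": (4, 4), "D": (8, 1), "E": (5, 6),
           "F": (9, 3), "G": (4, 8), "H": (6, 5), "I": (3, 10)}
    print(pareto_front(pts))                 # ['A', 'B', 'C', 'D']
    print(dominates(pts["C"], pts["E"]))     # True
```

This pairwise check costs O(n²) comparisons per population, which is affordable for a population of 90 genes per generation.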

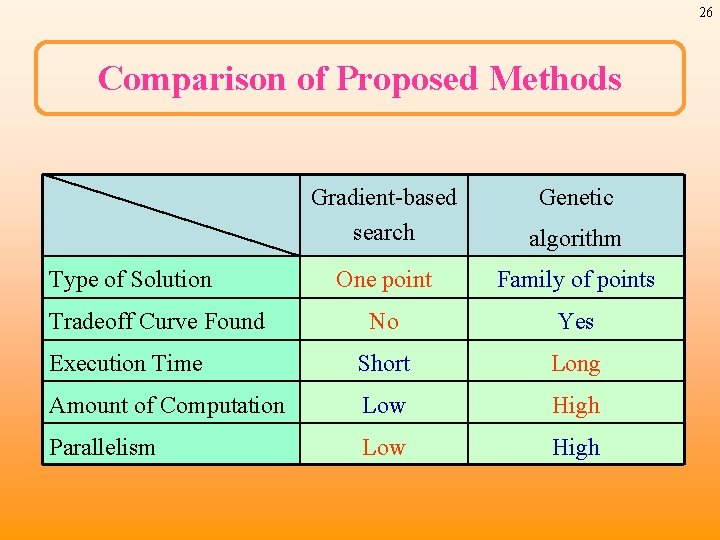

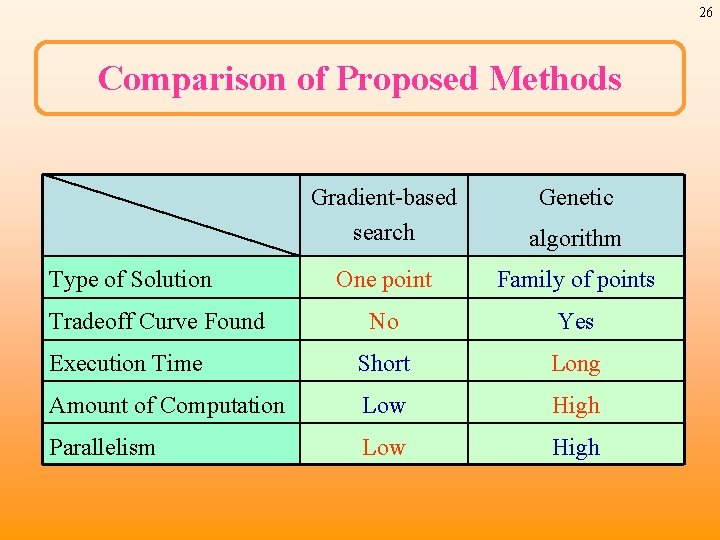

26 Comparison of Proposed Methods

| | Gradient-based search | Genetic algorithm |
|---|---|---|
| Type of solution | One point | Family of points |
| Tradeoff curve found | No | Yes |
| Execution time | Short | Long |
| Amount of computation | Low | High |
| Parallelism | Low | High |

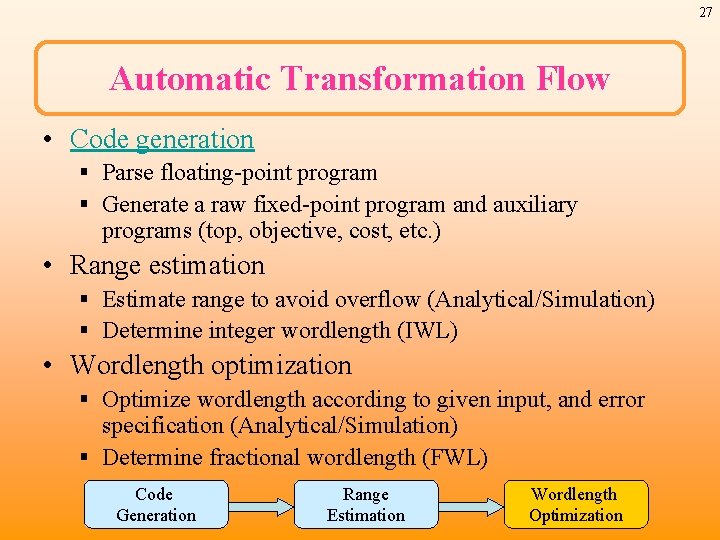

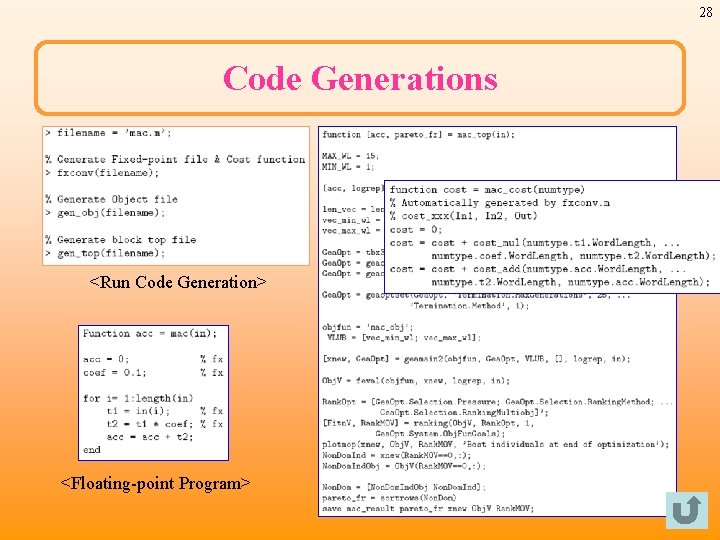

27 Automatic Transformation Flow

Code generation → range estimation → wordlength optimization

- Code generation
  - Parse the floating-point program
  - Generate a raw fixed-point program and auxiliary programs (top, objective, cost, etc.)
- Range estimation
  - Estimate the range to avoid overflow (analytical or simulation-based)
  - Determine the integer wordlength (IWL)
- Wordlength optimization
  - Optimize the wordlength for the given input and error specification (analytical or simulation-based)
  - Determine the fractional wordlength (FWL)
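Simulation-based range estimation can be sketched as follows: run the floating-point program on representative input data, record each variable's peak magnitude, and choose the smallest IWL that covers it; the FWL then follows as WL - IWL once wordlength optimization fixes WL. The sketch assumes a signed two's-complement format with the sign bit counted in the IWL, and its `guard_bits` parameter (hypothetical) adds headroom because test data may miss the true extremes.

```python
import math
from typing import Iterable


def integer_wordlength(samples: Iterable[float], signed: bool = True,
                       guard_bits: int = 0) -> int:
    """Smallest IWL whose range covers every observed sample.

    For signed data the sign bit is counted in the IWL, so IWL bits cover
    magnitudes below 2**(IWL - 1).
    """
    peak = max(abs(s) for s in samples)
    # bits needed left of the binary point for the magnitude (0 if peak < 1)
    mag_bits = max(0, math.floor(math.log2(peak)) + 1) if peak > 0 else 0
    return mag_bits + (1 if signed else 0) + guard_bits


if __name__ == "__main__":
    signal = [3 * math.sin(0.01 * n) for n in range(1000)]   # stand-in for simulated data
    print(integer_wordlength(signal))   # 3, the IWL used for pi in the data-format slide
```

Sizing the IWL from the observed range prevents overflow for the test data, leaving the wordlength search to trade only the fractional bits against distortion.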

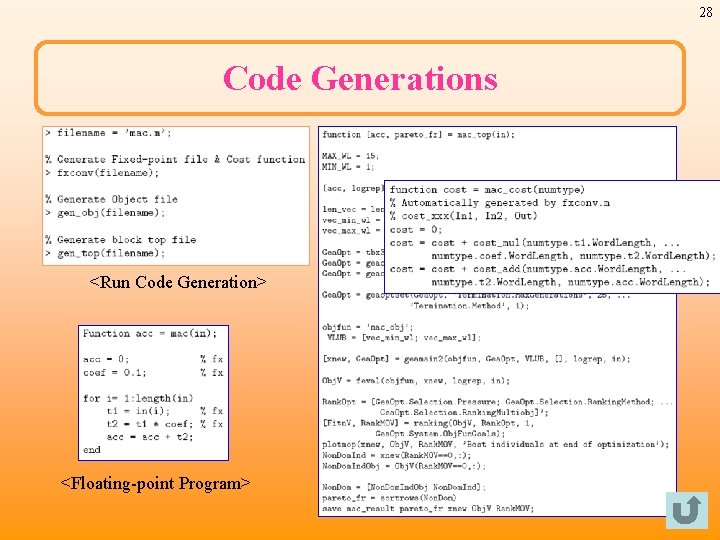

28 Code Generation

[Screenshot: running code generation ("Run Code Generation") on a floating-point program.]